This paper was presented at a colloquium entitled “Vision: From Photon to Perception,” organized by John Dowling, Lubert Stryer (chair), and Torsten Wiesel, held May 20–22, 1995, at the National Academy of Sciences in Irvine, CA.

Motion perception: Seeing and deciding

(motion perception/psychophysics/decision making/parietal cortex)

MICHAEL N. SHADLEN* AND WILLIAM T. NEWSOME†

Department of Neurobiology, Stanford University School of Medicine, Stanford, CA 94305

ABSTRACT The primate visual system offers unprecedented opportunities for investigating the neural basis of cognition. Even the simplest visual discrimination task requires processing of sensory signals, formation of a decision, and orchestration of a motor response. With our extensive knowledge of the primate visual and oculomotor systems as a base, it is now possible to investigate the neural basis of simple visual decisions that link sensation to action. Here we describe an initial study of neural responses in the lateral intraparietal area (LIP) of the cerebral cortex while alert monkeys discriminated the direction of motion in a visual display. A subset of LIP neurons carried high-level signals that may comprise a neural correlate of the decision process in our task. These signals are neither sensory nor motor in the strictest sense; rather they appear to reflect integration of sensory signals toward a decision appropriate for guiding movement. If this ultimately proves to be the case, several fascinating issues in cognitive neuroscience will be brought under rigorous physiological scrutiny.

A central goal of neuroscience is to understand the neural processes that mediate cognitive functions such as perception, memory, attention, decision making, and motor planning. For several reasons, the visual system of primates has become a leading system for investigating the neural underpinnings of cognition. Hubel and Wiesel (1), working in the primary visual cortex of monkeys and cats, made fundamental discoveries concerning the logic of cortical information processing that have influenced virtually all subsequent thinking about cortical function. Following rapidly on the heels of these discoveries, Zeki (2), Kaas (3), and Allman et al. (4) delineated a remarkable mosaic of higher visual areas that occupies up to half of the cortical surface in some species of monkeys (reviewed in ref. 5). Inspired by these landmark findings, many investigators have recently shown that visual signals can be followed to the highest levels of the central nervous system, including structures that have been implicated in sophisticated aspects of cognition. Importantly, these signals can be measured in alert monkeys during performance of simple cognitive tasks. Thus the alert monkey preparation is yielding intriguing new insights concerning the neural basis of visually based memory, visual attention, visual object recognition, and visual target selection (e.g., refs. 6–17).

This experimental and intellectual framework offers an unprecedented opportunity to investigate the neural underpinnings of cognition. We are positioned to begin realizing Vernon Mountcastle's bold vision:

Indeed there are now no logical (and I believe no insurmountable technical) barriers to the direct study of the entire chain of neural events that lead from the initial central representation of sensory stimuli, through the many sequential and parallel transformations of those neural images, to the detection and discrimination processes themselves, and to the formation of general commands for behavioral responses and detailed instructions for their motor execution. (18)

In this paper, we describe initial experiments concerning the neural basis of a simple discrimination process, one of the key integrative stages targeted by Mountcastle. Whereas systems neuroscience has achieved considerable insight concerning the physiological basis of sensory representation and motor activity, the cognitive link between sensation and action—the detection and discrimination processes themselves—remains obscure. We present neurophysiological data from the parietal lobe that may establish such a link between sensory representation and motor plan. The data were obtained while rhesus monkeys performed a two-alternative, forced choice discrimination of motion direction. Our ultimate goal is to understand how perceptual decisions are formed in the context of this visual discrimination task.

Perceptual Decisions

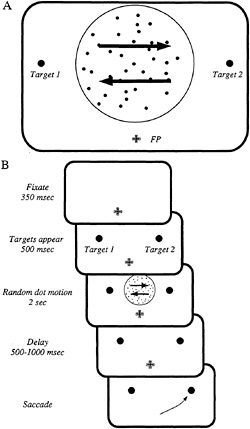

To investigate the neural basis of a simple decision process, we employed a psychophysical task that links the sensory representation of motion direction to the motor representation of saccadic eye movements. In this task, schematized in Fig. 1, a monkey is required to gaze at a fixation point (FP) and judge the direction of coherent motion in a dynamic random dot pattern that appears within a circular aperture on a video monitor. A fraction of the dots move coherently in one of two directions (arrows in Fig. 1A) while the remaining dots appear briefly at random locations, creating a masking noise. The monkey reports the direction of coherent motion by making a saccadic eye movement to one of two visual targets, each corresponding to one of the possible directions of motion. In the example of Fig. 1A, the monkey saccades to target 1 (T1) if coherent motion is leftward, and to target 2 (T2) if coherent motion is rightward.

Fig. 1B shows the sequence of events during a typical trial. The monkey gazes steadily at the fixation point for 350 msec, and two “choice” targets then appear at appropriate locations on the television monitor. After 500 msec, the random dot stimulus is presented for 2 sec, and the monkey then remembers its decision during a brief delay period that varies randomly in length from 500 msec to 1 sec. At the end of the delay period, the fixation point disappears, and the monkey indicates its decision by making a saccadic eye movement to the appropriate target.

The publication costs of this article were defrayed in part by page charge payment. This article must therefore be hereby marked “advertisement” in accordance with 18 U.S.C. §1734 solely to indicate this fact.

Abbreviations: LIP, lateral intraparietal area; SC, superior colliculus; FEF, frontal eye field; T1, target 1; T2, target 2.

* Present address: Department of Physiology and Biophysics, University of Washington School of Medicine, Box 357290, Seattle, WA 98195-7290.

† To whom reprint requests should be addressed.

FIG. 1. The psychophysical task. Two rhesus monkeys performed a single-interval, two-alternative, forced choice discrimination of motion direction. (A) The monkey judged the direction of motion of a dynamic random dot stimulus that appeared within an aperture 4–8° in diameter. In this example, the monkey made a saccadic eye movement to target 1 (T1) if leftward motion was detected; conversely, the monkey made a saccade to target 2 (T2) if rightward motion was detected. Each experiment included several stimulus conditions: two directions of motion for several nonzero coherences, plus the zero coherence condition, which does not contain a coherent direction of motion. All stimulus conditions were presented in random order until a specified number of repetitions was acquired for each condition (typically 15). The experiment was designed so that T1 fell within the movement field of the LIP neuron; T2 and the motion stimulus were placed outside the neuron's movement field. (B) The sequence of events in a discrimination trial; see text for details. Throughout the trial, the monkey maintained its gaze within a 1–2° window centered on the fixation point (FP). Failure to do so resulted in abortion of the trial and a brief time-out period. Eye movements were measured continuously at high resolution by the scleral search coil technique (19), enabling us to enforce fixation requirements and detect the monkey's choices. The monkey received a liquid reward for each correct choice.

When viewing these displays, human observers typically see weak, coherent motion flow superimposed upon a noisy substrate of twinkling dots. The discrimination can be made easy or difficult simply by increasing or decreasing the proportion of dots in coherent motion, a value that we refer to as the coherence of the motion signal. A range of coherences, chosen to span behavioral threshold, were used in our experiments, and all stimulus conditions were presented in random order.
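As an illustration only, the coherence manipulation described above can be sketched in Python. The function name `update_dots`, the unit-square field (the actual aperture was circular), the wrap-around at the field edge, and the step size are assumptions made for this sketch and are not taken from the original stimulus software. Coherence is expressed here as a fraction (0.512 for 51.2%).

```python
import random

def update_dots(dots, coherence, direction, step=0.05, rng=random):
    """Advance a random dot field by one frame (hypothetical sketch).

    dots      : list of (x, y) positions in a unit square
    coherence : fraction of dots (0.0 to 1.0) displaced coherently
    direction : +1 for rightward motion, -1 for leftward motion
    """
    new_dots = []
    for x, y in dots:
        if rng.random() < coherence:
            # Signal dot: displaced in the coherent direction,
            # wrapping around at the edge of the field.
            x = (x + direction * step) % 1.0
        else:
            # Noise dot: replotted at a random location.
            x, y = rng.random(), rng.random()
        new_dots.append((x, y))
    return new_dots
```

Lowering `coherence` toward 0 leaves fewer dots carrying the directional signal on each frame, which is how the discrimination is made harder in this sketch.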

This task offers substantial advantages for our purposes because the sensory and motor representations underlying performance are reasonably well known. The motion signals originate in large part from columns of directionally selective neurons in extrastriate visual areas MT and MST (20). This laboratory has shown that single neurons in MT and MST are remarkably sensitive to the motion signals in our displays, that inactivation of MT selectively impairs performance on this task, and that electrical stimulation of a column of directionally selective cells can bias a monkey's decisions toward the direction encoded by the stimulated column (21–27).

Motor signals that govern the monkeys' behavioral responses (saccadic eye movements) almost certainly pass through the superior colliculus (SC) and/or the frontal eye fields (FEFs). Both structures have long been known to play key roles in producing saccades (for reviews see refs. 28 and 29). Both the SC and the FEFs contain neurons that discharge just prior to saccades to well-defined regions of the visual field, termed movement fields, and simultaneous lesions of these structures eliminate most saccades (30). Electrical stimulation of either the SC or the FEFs elicits a saccade to the movement field of the stimulated neurons.

In the context of this perceptual task, therefore, we are able to state our key experimental question in a much more focused manner: how do motion signals in MT and MST influence motor structures such as SC and FEFs so as to produce correct performance on the task?

Experimental Strategy and Methods

To explore the link between sensation and action, we targeted for study a specific subset of neurons in the lateral intraparietal region (LIP) of the parietal lobe that carries high-level signals appropriate for planning saccadic eye movements. These high-level signals arise early in the initial stages of planning a saccade and are therefore likely to be linked to the decision process in a revealing manner (31–34). Anatomical data suggest that LIP is an important processing stage in the context of our task: LIP receives direct input from MT and MST and projects in turn to both FEFs and SC (5,35,36). High-level signals like those in LIP also exist in SC and FEFs, and our investigation must ultimately include all three structures (10,15,37–39). We chose to begin in LIP because of its proximity to MT and MST.

The neurons of particular interest to us have been characterized most incisively in a remembered saccade task. In this task, a saccade target appears transiently at some location in the peripheral visual field while the monkey maintains its gaze on a fixation point. The monkey must remember the location of the transiently flashed target during an ensuing delay period which can last up to several seconds. At the end of the delay period, indicated by disappearance of the fixation point, the monkey must saccade to the remembered location of the target. The neurons of interest begin firing in response to the appearance of the saccade target and maintain a steady level of discharge during the delay period until the saccade is made. These neurons are spatially selective in that the delay-period response occurs only before eye movements into the movement field. Thus the delay period activity forms a temporal “bridge” between sensory responses to the visual target and motor activity that drives the extraocular muscles at the time of the saccade.‡

‡ Different investigators have suggested that the delay period activity is related to memory of the target location, attention to a particular region of visual space, or an intention to move the eyes (10, 31, 32, 34, 37, 38). We believe that current data are insufficient to take a strong stand in favor of any of these interpretations. Despite the uncertainty, the information contained in delay-period activity is sufficient to guide the eyes to a spatial target.

In the present experiments we studied the behavior of these high-level neurons during performance on our direction discrimination task. We sought to determine whether the activity of these neurons could provide an interesting window onto the formation of the monkey's decision, which is revealed in the planning of one or the other saccadic eye movement.

We conducted electrophysiological experiments in two macaque monkeys, obtaining similar results in the two animals. LIP was identified by the characteristic visual and saccade-related activity of its neurons, and by its location on the lateral bank of the intraparietal sulcus. Single-unit activity of LIP neurons was recorded by conventional electrophysiological techniques (e.g., ref. 21). We searched specifically for neurons that were active during the delay period of a remembered saccade task. Upon finding such a neuron, we set up a psychophysical task after the design illustrated in Fig. 1. Importantly, the locations of the two saccade targets, the location of the stimulus aperture, and the axis of the motion discrimination were adjusted in each experiment according to the location of the neuron's movement field. One target, henceforth called T1, was placed in the movement field of the neuron under study, while T2 was placed well outside the movement field (often in the opposite hemifield). The stimulus aperture was positioned so that the coherent dots moved toward one or the other target on each trial. We positioned the stimulus aperture so as to minimize stimulation of any visual receptive field observed.

In using this geometry, we created a situation in which a decision in favor of one direction of motion should be reflected by an increase in firing rate of the neuron under study because its movement field would be the target of the subsequent saccade. Conversely, a decision favoring the other direction of motion, resulting in a saccade to the target outside the movement field, should decrease or exert no influence upon the neuron's firing rate.

Decision-Related Neural Activity in LIP

Fig. 2 illustrates the responses of a single LIP neuron while a monkey performed the discrimination task. The upper row of rasters and histograms shows trials that contained 51.2% coherent motion; the middle row depicts trials that contained 12.8% coherent motion; and the lower row illustrates trials that contained 0% coherent (random) motion. For each coherence, the left column shows neuronal responses when the monkey decided in favor of motion toward the movement field, resulting in a saccade to T1. The right column depicts the converse: trials on which the monkey decided in favor of motion away from the movement field, resulting in a saccade to T2. For 51.2% and 12.8% coherence, the rasters and histograms include only those trials in which the monkey discriminated the direction of motion correctly (we will consider error trials below). This included the majority of trials, since the monkey performed well at these coherences (95% and 70% correct, respectively). The lower row of rasters and histograms includes all trials at 0% coherence, since “correct” and “incorrect” are meaningless for these trials. The vertical lines on each raster demarcate the stimulus viewing period, which was followed by the delay period and the monkey's saccade (caret on each line).

Several aspects of these data are notable. First, the neuron's response on a given trial reliably indicated the direction of the upcoming saccade and thus the outcome of the monkey's decision. The neuron fired vigorously for decisions that resulted in eye movements to T1 (into the movement field), but fired weakly for decisions resulting in eye movements to T2. Furthermore, these modulations in firing rate began early in the trial (typically within 500 msec of stimulus onset) and were sustained during the delay period after the random dot stimulus disappeared. For a neuron like the one illustrated in Fig. 2, the responses indicated the monkey's decisions so reliably that an experimenter could generally predict decisions “on the fly” during an experiment simply by listening to the neuron's activity on the audio monitor.

FIG. 2. Responses of a LIP neuron during performance of the motion discrimination task. Each raster line depicts the sequence of action potentials recorded during a single trial and the time of the saccadic eye movement (caret on each line). The histogram below each raster shows the average response rate from all trials in the raster, computed within 60-msec time bins, as well as the mean (caret, on line) and standard deviation (horizontal line) of the time of the saccadic eye movement.
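The histograms of Fig. 2 give the average response rate in 60-msec bins. A minimal Python sketch of that kind of computation follows; the function name `psth`, the representation of each trial as a list of spike times in seconds, and the choice to drop spikes outside the analysis window are assumptions made for illustration, not a description of the original analysis software.

```python
def psth(trials, t_start, t_stop, bin_width=0.060):
    """Average firing rate (spikes/s) per time bin across trials.

    trials    : list of trials, each a list of spike times in seconds
    t_start   : start of the analysis window (s)
    t_stop    : end of the analysis window (s)
    bin_width : bin width in seconds (0.060 matches the 60-msec bins)
    """
    if not trials:
        raise ValueError("at least one trial is required")
    n_bins = int(round((t_stop - t_start) / bin_width))
    counts = [0] * n_bins
    for spikes in trials:
        for t in spikes:
            idx = int((t - t_start) // bin_width)
            if 0 <= idx < n_bins:  # spikes outside the window are ignored
                counts[idx] += 1
    # Convert summed counts to a mean rate: divide by trials and bin width.
    return [c / (len(trials) * bin_width) for c in counts]
```

For example, `psth([[0.01, 0.02, 0.07], [0.03]], 0.0, 0.12)` returns two values, one per 60-msec bin, with the first bin holding three of the four spikes.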

In a sense, the existence of predictive activity during the discrimination task is not surprising since we deliberately studied neurons that yielded predictive responses in the remembered saccade task. For our purposes, however, it was necessary to demonstrate that these neurons remain predictive in a fundamentally different task in which the monkey chooses among saccade targets contingent upon a visually based decision process. The critical issue now before us is to determine whether the responses of LIP neurons provide insight concerning the decision process per se or whether the predictive activity can be explained trivially as the result of purely sensory or purely motor signals.

A sensory account of predictive activity can be ruled out quickly by examining the responses at 0% coherence. In the bottom pair of rasters in Fig. 2, the visual stimulus is the same on all trials, yet the neuronal activity clearly predicts the monkey's decision in the absence of distinguishing sensory input.

At first glance, a motor hypothesis appears more likely to explain our data. All responses illustrated in the left column of Fig. 2 have one movement in common (saccade to T1) while all responses in the right column have a different movement in common (saccade to T2). Do the responses of parietal neurons in our task simply comprise a premotor signal for the saccadic eye movement? The analyses illustrated in Figs. 3 and 4 suggest that this is not the case.

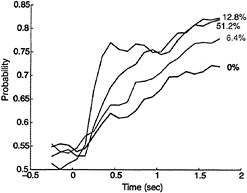

Fig. 3 shows the development of predictive activity during single behavioral trials, averaged across a selected population of 47 LIP neurons. All 47 neurons chosen for this analysis exhibited some predictive activity, but most were less flagrant than the example in Fig. 2. The quantity plotted on the ordinate (probability) may be thought of as the probability that an ideal observer could predict the monkey's eventual decision by using only neural responses gathered from an average neuron during a given 250-msec epoch during the trial. Because ours is a two-alternative forced choice task, probability values of 0.5 and 1.0 correspond to random performance and perfect performance, respectively. Thus probability values near 0.5 would indicate no predictive activity among the LIP neurons, whereas values near 1.0 would indicate perfect predictive power.

FIG. 3. The predictive power of LIP neurons improves with time and stimulus strength. The ordinate plots the probability that an ideal observer could correctly predict the monkey's choice from spike counts measured in 250-msec bins from single LIP neurons. The time axis marks the center of the 250-msec epoch relative to the onset of the visual stimulus. The calculation was made over a population of 47 neurons and employed only correct choices. Only neurons exhibiting predictive activity were included in this sample. The spike counts from each neuron in each 250-msec epoch were standardized (z-transform) and pooled to form distributions of responses sorted by stimulus condition and the monkey's choices. Probability was computed with a signal detection analysis (40) that compared distributions of spike counts obtained when the monkey chose T1 with distributions obtained when the monkey chose T2. The computation was performed independently for each coherence level, and the resulting values are plotted as a function of time for four coherence levels. Note that many LIP neurons have considerably better predictive power (probability values approaching 1.0) than the mean data illustrated in this figure.
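One common way to obtain an ideal-observer probability of this kind is the area under the receiver operating characteristic (ROC) curve, which equals the probability that a randomly drawn T1-choice response exceeds a randomly drawn T2-choice response. The Python sketch below illustrates that calculation for a single epoch and coherence. It is an assumption that this matches the exact computation of ref. 40, and the function names `zscore`, `roc_area`, and `pooled_roc`, as well as the choice to standardize each neuron across both choices before pooling, are hypothetical.

```python
from statistics import mean, pstdev

def zscore(counts):
    """Standardize spike counts (z-transform); constant input maps to zeros."""
    m, s = mean(counts), pstdev(counts)
    return [(c - m) / s if s > 0 else 0.0 for c in counts]

def roc_area(t1_counts, t2_counts):
    """Probability that a T1-choice response exceeds a T2-choice response.

    Ties count one half, so identical distributions give 0.5 (chance)
    and fully separated distributions give 1.0.
    """
    wins = 0.0
    for a in t1_counts:
        for b in t2_counts:
            if a > b:
                wins += 1.0
            elif a == b:
                wins += 0.5
    return wins / (len(t1_counts) * len(t2_counts))

def pooled_roc(neurons):
    """Pool standardized counts across neurons, then compute the ROC area.

    neurons : list of (t1_counts, t2_counts) pairs, one pair per neuron,
              all taken from the same epoch and coherence level.
    """
    pooled_t1, pooled_t2 = [], []
    for t1, t2 in neurons:
        # Assumption: each neuron is standardized over all of its trials.
        z = zscore(list(t1) + list(t2))
        pooled_t1.extend(z[:len(t1)])
        pooled_t2.extend(z[len(t1):])
    return roc_area(pooled_t1, pooled_t2)
```

Standardizing before pooling keeps neurons with high overall firing rates from dominating the pooled distributions, which is the apparent purpose of the z-transform mentioned in the legend to Fig. 3.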

The most important result in Fig. 3 is that the evolution of predictive activity in LIP differs systematically across coherence levels. For stronger coherences, predictive activity develops more quickly and achieves higher levels by the end of the stimulus period. These quantitative results confirm the impression formed by inspection of the raw data in Fig. 2: the difference in firing rates between the left and right rasters is more pronounced for 51.2% coherence than for 0% coherence. (Note that for the neuron in Fig. 2, the improved predictive power at 51.2% coherence results more from increased suppression for saccades to T2 than from increased excitation for saccades to T1. This was characteristic of many LIP neurons.)

The responses illustrated in Fig. 3 would not be expected of a motor signal whose primary business is to drive the eyes to a particular location in space. If the monkey chooses the direction toward the movement field, an accurate saccade to T1 must be made regardless of the strength of the motion signal that led to the saccade. In other words, strictly motor signals should depend only on the metrics of the planned movement, not on the strength of the sensory signal that evoked the decision to move.

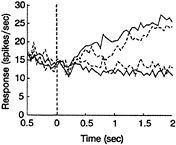

The pattern of neural responses on error trials also argues against a strictly motor interpretation of predictive activity in LIP. Fig. 4 depicts an analysis of error trials for the same 47 neurons used in Fig. 3. Only trials containing 6.4% coherent motion were incorporated in this analysis. The monkey attempted to discriminate the stimulus at this near-threshold coherence, achieving 68% correct performance. Yet enough errors were made to permit a reliable comparison of neural activity for correct choices (1239 trials) and erroneous choices (587 trials).

Fig. 4 shows average firing rates over the course of a single trial for the four conditions of interest. The monkey's decision is indicated by the color of the line: green represents saccades into the movement field (T1), whereas red represents saccades away from the movement field (T2). The line type, on the other hand, indicates whether or not the decision was correct: solid lines represent correct decisions, whereas dashed lines represent error trials. Clearly, the two green curves lie well above the two red curves by the end of the trial, showing that LIP activity predicts decisions both on error trials and on correct trials. Just as clearly, however, the two dashed curves lie closer to each other than do the two solid curves, showing that LIP neurons do not predict the decision as well on error trials as on correct trials. Again, this pattern of activity would not be expected of a strictly motor signal, since the required eye movement is the same for all trials represented by the two green curves (saccades to T1) and for all trials represented by the two red curves (saccades to T2). The primary difference between the solid curve and the dashed curve (of either color) is simply the direction of stimulus motion. Consistent with our inferences from Fig. 3, then, analysis of error trials indicates that important aspects of neural activity in LIP are influenced by the visual stimulus and cannot be characterized as purely motor.

Discussion

Our primary finding is that neurons in LIP carry signals that predict the decision a monkey will make in a two-alternative, forced choice direction discrimination task. These signals typically arise early in the trial during presentation of the random dot stimulus and are sustained during the delay period following disappearance of the stimulus. Thus, predictive activity can arise several seconds in advance of an eye movement that indicates the monkey's decision.

The data in Figs. 2–4 are suggestive of a neural process that integrates weak, slowly arriving sensory information to generate a decision. In our stimuli, the coherent motion signals are distributed randomly throughout the stimulus interval. When coherent motion is strong, a substantial amount of motion information arrives quickly and decisions can be formed earlier in the trial and with greater certainty. When coherent motion is weak, information arrives slowly and the better strategy is to integrate over a long period of time (21, 41). Even if the optimal strategy is followed, however, the monkey is apt to be less certain of its decisions at low coherences than at high coherences. Thus the dynamics expected of the decision process correspond to the dynamics of the neural signals illustrated in Fig. 3, and the certainty of the monkey's decision appears correlated with the probability level achieved by LIP neurons by the end of the stimulus period.

FIG. 4. A histogram comparing the average responses of a population of 47 neurons for correct choices (solid lines) and erroneous choices (dashed lines). The monkey's decision is indicated by the color (green for T1 choices, red for T2). The motion stimulus was 6.4% coherence toward or away from the movement field. Average responses were computed in 60-msec bins and plotted relative to stimulus onset (time 0). See text for details.

We therefore suggest that the evolution of predictive signals in LIP comprises a neural correlate of decision formation within the central nervous system. In the context of a discrimination task like ours, the decision process is simply a mechanism whereby sensory information is evaluated so as to guide selection of an appropriate motor response. To use a legal analogy, the decision process is akin to the events that occur inside a jury deliberation room. Sensory signals, in contrast, are analogous to the evidence presented in open court, while motor signals are analogous to the verdict announced after the jury has completed its deliberations. The neural events in LIP are suggestive of the process of deliberation—sifting evidence and forming a decision—as indicated by the gradual evolution of the signals over time, the dependence of the time course on stimulus strength, and the dependence of predictive activity on stimulus strength (i.e., certainty of the decision).
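The notion of integrating weak sensory evidence over time can be made concrete with a toy simulation. The Python sketch below is not a model fitted to the data and is not proposed in this paper as the mechanism; it assumes that each time step contributes a noisy evidence sample whose mean is proportional to coherence, and that the choice follows the sign of the running sum at the end of the stimulus. All names and parameter values (`simulate_trial`, `fraction_t1_choices`, `gain`, `noise_sd`, `n_steps`) are hypothetical.

```python
import random

def simulate_trial(coherence, n_steps=100, gain=1.0, noise_sd=1.0, rng=random):
    """Running sum of noisy evidence for motion toward T1.

    coherence : fraction of coherent motion (0.0 to 1.0) toward T1
    Returns the accumulated evidence after each time step; a positive
    final value is read out as a choice of T1.
    """
    total, trace = 0.0, []
    for _ in range(n_steps):
        # Each sample: a small mean drift set by coherence, plus noise.
        total += gain * coherence + rng.gauss(0.0, noise_sd)
        trace.append(total)
    return trace

def fraction_t1_choices(coherence, n_trials=500, seed=0):
    """Fraction of simulated trials ending with evidence favoring T1."""
    rng = random.Random(seed)
    hits = sum(simulate_trial(coherence, rng=rng)[-1] > 0
               for _ in range(n_trials))
    return hits / n_trials
```

In this sketch the running sum separates from zero sooner and more reliably at high coherence, whereas at 0% coherence the choice is governed by noise alone, in qualitative agreement with the dependence on stimulus strength described above.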

Practically speaking, such distinctions are difficult to make unless the accumulation of sensory information and formation of the decision can be spread out in time and cleanly isolated from execution of the motor response. If, for example, our monkeys viewed only 100% coherent motion and were allowed to make an eye movement as soon as a decision was reached, then sensory, decisional, and motor signals would be densely entangled in only a few hundred milliseconds of neural activity. Distinguishing among these signals may be virtually impossible under such conditions.

Importantly, we are not proposing that decisions in our task are actually formed in LIP. LIP may simply follow afferent signals from another structure or group of structures where decisions are initiated. We are, however, suggesting that neural signals in LIP may reflect the dynamics of decision formation and the certainty of the decision, regardless of where the decision is initiated. If so, neural activity in LIP provides a window onto the decision process that will permit interesting manipulations in future experiments. Obviously, we have not yet addressed the critical question of whether LIP plays a causal role in performance of this task. Microstimulation and inactivation techniques may allow us to investigate this possibility in future experiments.

Finally, we note that the present analyses leave several interesting questions unexplored, mostly because the population histograms in Figs. 3 and 4 may obscure interesting heterogeneity in the data. Are some cells influenced more strongly than others by sensory or motor signals? Are the firing rates of individual cells modulated smoothly, as suggested by the curves in Figs. 3 and 4, or do rates change abruptly at different times on different trials, thus yielding the smoothly increasing probability values in the population curves? These questions will be addressed in future analyses.

A Look at the Future

If the effort to identify neural substrates of a decision process is ultimately successful, a host of fascinating questions will be brought into the realm of physiological investigation. If, for example, LIP integrates motion signals to form a plan to move the eyes in our psychophysical task, a precise pattern of circuitry must exist between the direction columns in MT and MST and the movement fields of LIP neurons. In essence, LIP neurons with movement fields in a particular region of visual space should be excited by columns in MT and MST whose preferred directions point toward the movement field. Columns whose preferred directions point away from the movement field should suppress the response of the LIP neuron. The latter columns should, of course, excite LIP neurons whose movement fields are located elsewhere in space.

Realize that this is merely a restatement of the logic of the task: for the monkey to perform correctly, saccade-related neurons anywhere in the brain should be activated only when directional columns in the motion system signal a preponderance of motion toward their movement fields. Realize also that we are not implying that this circuitry must connect MT, MST, and LIP directly; motion signals could be processed through frontal cortex or other structures before activating parietal lobe neurons. The logic of the task demands, however, that such connections exist regardless of the length of the pathway. Tracing such precisely patterned connections with physiological techniques would be a major step toward identifying the circuitry underlying the decision process in our task. Experiments that combine microstimulation of MT and MST with unit recording in LIP may shed light on the circuitry connecting the structures.

Monkeys can be trained to base eye movements on a wide range of sensory signals. For example, our animals could be trained to make rightward or leftward saccades depending upon the color of the random dot pattern rather than its direction of motion. In this case, LIP may continue to contribute to the formation of oculomotor plans, but the sensory signals that differentially activate one or the other pool of LIP neurons must originate from color-sensitive neurons rather than direction-selective neurons. Thus a different, but no less precise, pattern of connections from occipital to parietal cortex would underlie the decision process.

Raising the ante a bit further, monkeys should be able to learn both the color and direction discrimination tasks, and to alternate tasks from one block of trials to the next (perhaps, even, from one trial to the next). In this situation, the effective connectivity between the occipital and parietal cortices must be flexible. One pattern of connections should operate in the color version of the task, but a very different pattern should operate during the motion version. Obviously, higher-level control signals, probably related to visual attention, must engage and disengage these connections on a fairly rapid time scale if the monkey is to perform appropriately. Development of physiological techniques for monitoring the formation and dissolution of such circuits with fast temporal resolution is a high priority for future research (e.g., refs. 42 and 43).

To conclude, systems neuroscientists have unprecedented opportunities to make significant discoveries concerning the neural basis of cognition. Though we currently fall short of Mountcastle's vision cited at the outset of this paper, the future promises substantial progress toward this goal.

We are grateful to Daniel Salzman for assistance in design of the experiments and to Jennifer Groh and Eyal Seidemann for participating in some of the experiments. These colleagues as well as Drs. John Maunsell, Brian Wandell, and Steven Wise provided helpful comments on the manuscript. We thank Judy Stein for excellent technical assistance. The work was supported by the National Eye Institute (EY05603) and by a Postdoctoral Research Fellowship for Physicians to M.N.S. from the Howard Hughes Medical Institute.

1. Hubel, D. H. & Wiesel, T. N. (1977) Proc. R. Soc. London B 198, 1–59.

2. Zeki, S. M. (1978) Nature (London) 274, 423–428.

3. Kaas, J. H. (1989) J. Cognitive Neurosci. 1, 121–135.

4. Allman, J., Jeo, R. & Sereno, M. (1994) in Aotus: The Owl Monkey, eds. Baer, J. F., Weller, R. E. & Kakoma, I. (Academic, New York), pp. 287–320.

5. Felleman, D. & Van Essen, D. (1991) Cereb. Cortex 1, 1–47.

6. Wurtz, R. H., Goldberg, M. E. & Robinson, D. L. (1980) Prog. Psychobiol. Physiol. Psychol. 9, 43–83.

7. Fuster, J. M. & Jervey, J. P. (1982) J. Neurosci. 2, 361–375.

8. Haenny, P. E., Maunsell, J. H. R. & Schiller, P. H. (1988) Exp. Brain Res. 69, 245–259.

9. Hikosaka, O. & Wurtz, R. (1983) J. Neurophysiol. 49, 1268–1284.

10. Funahashi, S., Bruce, C. & Goldman-Rakic, P. (1989) J. Neurophysiol. 61, 331–349.

11. Miyashita, Y. & Chang, H. (1988) Nature (London) 331, 68–70.

12. Miller, E. K., Li, L. & Desimone, R. (1993) J. Neurosci. 13, 1460–1478.

13. Motter, B. (1994) J. Neurosci. 14, 2178–2189.

14. Schall, J. & Hanes, D. (1993) Nature (London) 366, 467–469.

15. Glimcher, P. W. & Sparks, D. L. (1992) Nature (London) 355, 542–545.

16. Assad, J. & Maunsell, J. (1995) Nature (London) 373, 518–521.

17. Chen, L. & Wise, S. (1995) J. Neurophysiol. 73, 1101–1121.

18. Mountcastle, V. B. (1984) in Handbook of Physiology: The Nervous System, Sensory Processes, ed. Geiger, S. R. (Am. Physiol. Soc., Bethesda, MD), Vol. 3, Part 2, pp. 789–878.

19. Robinson, D. (1963) IEEE Trans. Biomed. Eng. 10, 137–145.

20. Albright, T. D., Desimone, R. & Gross, C. G. (1984) J. Neurophysiol. 51, 16–31.

21. Britten, K. H., Shadlen, M. N., Newsome, W. T. & Movshon, J. A. (1992) J. Neurosci. 12, 4745–4765.

22. Salzman, C. D., Murasugi, C. M., Britten, K. H. & Newsome, W. T. (1992) J. Neurosci. 12, 2331–2355.

23. Murasugi, C. M., Salzman, C. D. & Newsome, W. T. (1993) J. Neurosci. 13, 1719–1729.

24. Salzman, C. D. & Newsome, W. T. (1994) Science 264, 231–237.

25. Celebrini, S. & Newsome, W. T. (1994) J. Neurosci. 14, 4109–4124.

26. Celebrini, S. & Newsome, W. T. (1995) J. Neurophysiol. 73, 437–448.

27. Britten, K. H., Newsome, W. T., Shadlen, M. N., Celebrini, S. & Movshon, J. A. (1996) Vis. Neurosci., in press.

28. Sparks, D. L. (1986) Physiol. Rev. 66, 118–171.

29. Schall, J. D. (1991) in The Neural Basis of Visual Function, ed. Leventhal, A. G. (Macmillan, New York), pp. 388–442.

30. Schiller, P., True, S. & Conway, J. (1980) J. Neurophysiol. 44, 1175–1189.

31. Gnadt, J. W. & Andersen, R. A. (1988) Exp. Brain Res. 70, 216–220.

32. Barash, S., Bracewell, R. M., Fogassi, L., Gnadt, J. W. & Andersen, R. A. (1991) J. Neurophysiol. 66, 1095–1108.

33. Barash, S., Bracewell, R. M., Fogassi, L., Gnadt, J. W. & Andersen, R. A. (1991) J. Neurophysiol. 66, 1109–1124.

34. Colby, C. L., Duhamel, J. R. & Goldberg, M. E. (1993) in Progress in Brain Research, eds. Hicks, T. P., Molotchnikoff, S. & Ono, T. (Elsevier, Amsterdam), pp. 307–316.

35. Andersen, R. A., Asanuma, C., Essick, G. & Siegel, R. M. (1990) J. Comp. Neurol. 296, 65–113.

36. Boussaoud, D., Ungerleider, L. G. & Desimone, R. (1990) J. Comp. Neurol. 296, 462–495.

37. Mays, L. E. & Sparks, D. L. (1980) J. Neurophysiol. 43, 207–232.

38. Goldberg, M. E. & Bruce, C. J. (1990) J. Neurophysiol. 64, 489–508.

39. Funahashi, S., Chafee, M. & Goldman-Rakic, P. (1993) Nature (London) 365, 753–756.

40. Green, D. M. & Swets, J. A. (1966) Signal Detection Theory and Psychophysics (Wiley, New York).

41. Downing, C. J. & Movshon, J. A. (1989) Invest. Ophthalmol. Visual Sci. Suppl. 30, 72.

42. Aertsen, A. M. H. J., Gerstein, G. L., Habib, M. K. & Palm, G. (1989) J. Neurophysiol. 61, 900–917.

43. Vaadia, E., Haalman, I., Abeles, M., Bergman, H., Prut, Y., Slovin, H. & Aertsen, A. (1995) Nature (London) 373, 515–518.