2

Calibration and Validation

INTRODUCTION

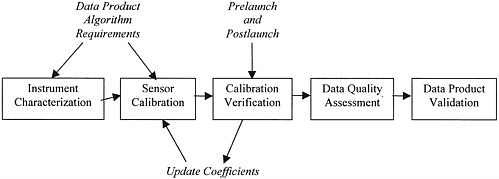

Calibration and validation should be considered as a process that encompasses the entire system, from sensor performance to the derivation of the data products. Long-term studies for documenting and understanding global climate change require not only that the remote sensing instrument be accurately characterized and calibrated, but also that the stability of the instrument characteristics and calibration be well monitored and evaluated over the life of the mission through independent measurements. Calibration has a critical role in measurements that involve several sensors in orbit either simultaneously or sequentially and perhaps not even contiguously. Finally, there is the need to validate the data products that are the raison d’être for the sensor. As illustrated in Figure 2.1, five steps are involved in the process of calibration and validation: instrument characterization, instrument calibration, calibration verification, data quality assessment, and data product validation. All five steps are necessary to add value to the data, permitting an accurate, quantitative picture rather than a merely qualitative one.

1. Instrument characterization is the measurement of various specific properties of a sensor. Most characterizations are performed before launch. They can be performed at the component or subassembly level, or at the system level. For critical characteristics the measurements should be performed before and after final assembly to reveal unpredicted sources of error. Characteristics that are expected to change owing to the rigors of launch must be remeasured on orbit unless it can be shown that the expected changes will be within the error budget allocated according to the data product requirements.

FIGURE 2.1 Calibration and validation are considered in five key steps from prelaunch sensor characterization and calibration to on-orbit data product validation.

2. Sensor calibration is carried out to determine the equivalent physical quantity (for example, radiant temperature) that stimulates the sensor to produce a specific output signal (for example, digital counts). This process determines a set of calibration coefficients, kelvin per digital count in this example. The calibration coefficients transform sensor output into physically meaningful units. The accuracy of the transformation to physical units depends on the accumulated uncertainty in the chain of measurements leading back to one of the internationally accepted units of physical measurement, the SI units. For optimum accuracy and the long-term stability of an absolute calibration, it is necessary to establish traceability to an SI unit.

However, it is more important to determine to which SI unit the measurement must be traceable and whether traceability to an SI unit is even necessary. This can be done by examining the requirements of the data product algorithm. The question of which SI unit to use is answered by determining which chain of measurements has the lowest accumulated uncertainty. Often it is the shortest measurement chain and sometimes the most convenient one. In the case of a relative measurement, that is, in the measurement of a ratio (for example, reflectance), traceability to an SI unit is meaningless. The accuracy in this case will be a function of all the uncertainties accumulated in determining the ratio of the outgoing to the incoming radiation.

The specific characterization and calibration requirements and the needed accuracy are determined by the needs of the algorithms for each data product—that is, the parameters that must be measured and the accuracy to which they must be known. This list of requirements is usually presented as a list of specifications in the contract to build the sensor. It is obvious that if the accuracy requirements are set too high, needless expense will be incurred. If they are set too low, the algorithm will not produce an acceptable data product. The optimum range should be set by sensitivity analyses of the algorithms of several key data products.

3. Calibration verification is the process that verifies the estimated accuracy of the calibration before launch and the stability of the calibration after launch. Prelaunch calibration verification could take the form of documentation of accumulated uncertainty or it could be determined by comparisons with other, similar, well-calibrated and well-documented systems. The latter method of calibration verification is preferred since one or more sources of uncertainty may have been overlooked in the calibration documentation assembled by a single laboratory. Postlaunch calibration verification refers to measurements using the on-board calibration monitoring system and vicarious calibrations. Vicarious calibrations are those obtained from on-ground measurements (ground truth), from on-orbit, nearly simultaneous comparisons to other, similar, well-calibrated sensors or, in the case of bands in the solar reflectance region, from measurements of sunlight reflected from the Moon.

4. Data quality assessment is the process of determining whether the sensor performance is impaired or whether the measurement conditions are less than optimal. Data quality assessment ensures that the algorithm will perform as expected; it is a way of identifying the impacts of changes in instrument performance on algorithm performance. Instrument degradation or a partial malfunction will affect data quality. Less-than-ideal atmospheric and/or surface conditions, and the uncertainties in decoupling atmosphere-surface interactions, will also degrade the quality of a surface or atmospheric data product. It is necessary to publish the estimated quality of the data products so that the data user will be able to reach well-informed conclusions.

5. Data product validation is the process of determining the accuracy of the data product, including quantifying the various sources of errors and bias. It may consist of comparison with other data products of known accuracy or with independent measurement of the geophysical variable represented by the product. Data product validation provides a quantitative estimate of product accuracy, facilitating the use of the products in numerical modeling and comparison of similar products from different sensors.

Another key issue for calibration and validation is consideration of mission operations factors such as orbit parameters, orbit maintenance, and launch schedules. These items, which are often as important to calibration and validation as the properties of the instruments and software, are not further elaborated on in this report, but the committee considers them important to include in developing plans for calibration and validation in integrating climate research into NPOESS operations.

The five steps leading to a fully calibrated and validated system are discussed in more detail below.

INSTRUMENT CHARACTERIZATION

Instrument characterization must be carried out before a launch, at either the component or the system level, or sometimes both, depending on how critical a specific characteristic is to the data product algorithm as determined by a sensitivity analysis. When summarized in a requirements document that specifies characterization measurements, the results of the sensitivity studies should prove to be a systematic way of accomplishing this work. Blanket specifications covering all channels lead to wasted effort, and important parameters are often overlooked. The time spent in deriving and listing characterization specifications for the parameters for individual channels will result in more efficient design, fabrication, and testing, better sensor performance, and reduced cost.

In addition, an effort should be made to address the interdependency of various instrument characteristics and to ensure balance by determining the sensitivities of the data product algorithms to combinations of instrument characteristics.
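A sensitivity analysis of the kind described above can be sketched as a simple perturbation study: the calibration of each channel is perturbed by a small relative error, one channel at a time, and the resulting change in the data product is recorded. The normalized difference index used as the "algorithm", the channel names, the reflectance values, and the 2 percent perturbation are all illustrative assumptions and are not taken from this report.

```python
def product(red: float, nir: float) -> float:
    """Hypothetical data product algorithm: a normalized difference index."""
    return (nir - red) / (nir + red)


def sensitivity(algorithm, inputs: dict, rel_error: float = 0.02) -> dict:
    """Change in the product when each input alone is scaled by (1 + rel_error)."""
    baseline = algorithm(**inputs)
    result = {}
    for name, value in inputs.items():
        perturbed = dict(inputs)
        perturbed[name] = value * (1.0 + rel_error)
        result[name] = algorithm(**perturbed) - baseline
    return result


# Illustrative channel reflectances, not measured values.
scene = {"red": 0.08, "nir": 0.40}
deltas = sensitivity(product, scene)
for channel, delta in deltas.items():
    print(f"{channel}: product change {delta:+.4f} for a 2% calibration error")
```

Channels whose perturbation barely moves the product can tolerate looser specifications, while channels with large changes are candidates for tighter, channel-specific requirements. Perturbing several inputs together would extend the same sketch to the combinations of characteristics mentioned above.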

The following short list of some of the parameters that might have to be measured is presented as a guide to specifying the characterization requirements for each channel:

- Spectral response, both in band and out of band, is the relative spectral shape of the sensor response as a function of wavelength. This is usually a critical parameter, and the out-of-band response is often poorly measured, if it is measured at all. Spectral response is normally measured at the component level, that is, by measuring the transmittance of the filters, and the out-of-band response should be measured at that stage also. Because spectral shifts may arise as a result of temperature changes or beam angle, the in-band spectral response should be measured at the end-to-end system level and at the expected operating temperature(s). The spectral transmission of interference filters has been known to be unstable (and subject to on-orbit vacuum shifts in response), so that it may be necessary to remeasure the spectral response on orbit. An example of instrumentation for on-orbit spectral response measurements is the spectroradiometric calibration assembly (SRCA) on board the Moderate-resolution Imaging Spectroradiometer (MODIS) now flying on the Earth Observing System (EOS) Terra spacecraft. Such a complex and expensive instrument may not be necessary if reflectance-based rather than radiance-based calibration is used. In this case the precise spectral shape of the band will be less critical (see Appendix C, “Solar Reflection Region Measurements”).

- Detector and electronics linearity is the ability of the sensor to accurately scale up or down from the level at which it was calibrated. This feature could be checked at the subassembly level, but it is best measured in the full system. It should be noted that it is presently common practice to check linearity by varying the calibration source output. In the case of a blackbody standard source, care must be taken to avoid the effects of spectral changes due to changes in temperature. In the case of integrating sphere sources employing multiple incandescent lamps, it is common practice to check linearity by varying the number of lamps illuminating the sphere. This is not an accurate method. A linearity-verified detector-amplifier should be used to monitor the sphere output. The linearity characterization is then based on a detector of known linearity characteristics rather than on the assumption that the several incandescent lamps have equal radiant outputs.

- Detector and electronics noise should be measured at the end-to-end system level and over the expected range of operating temperatures of the sensor on orbit.

- Polarization sensitivity should be measured at the end-to-end system level. It is critical for the short wavelength bands, where the scene is often highly polarized. In systems where a scanning mirror is employed, the reflectance of the scanning mirror may be polarization sensitive, which would change the system polarization response as a function of scan angle.

- Out-of-field-of-view sensitivity is the sensitivity of the sensor to scattered radiation. This should be tested at the end-to-end system level.

- Optical performance is the measure of the system modulation transfer function (MTF) or, equivalently, the point spread function. This function should be tested at the end-to-end system level. Measurements of individual MTFs are rarely useful. Also, since optical performance may be severely affected by launch and may degrade, it should be tested again on orbit. This can be done by the MODIS SRCA, or it can be done using ground scenes. The characterization of MTF was first demonstrated for the Landsat-4 Thematic Mapper under the NASA Landsat Instrument Data Quality Assessment (LIDQA) program in 1984.

- Detector array uniformity, often called flat-field correction, will also include measurement of the out-of-specification (bad) pixels and should be measured on the end-to-end system. The detector array can be expected to degrade, so the characterization should be repeated on orbit. Characterization of detector array uniformity can be done by averaging many cloud-filled scenes in both the solar reflective and thermal spectral regions.

- Pointing accuracy should be measured on the end-to-end system. Pointing accuracy could degrade during launch, so the instrument alignment should be characterized by the use of a library of ground control points once the sensor is in orbit.

- Band-to-band registration measurements should be done on the full system. As in the case of pointing, band-to-band registration accuracy could degrade during launch, so the measurement should be repeated once the sensor is in orbit. Again, the MODIS SRCA can do this, but the check can be made using ground data, as was demonstrated by the LIDQA project (Wrigley et al., 1984).
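As one illustration from the list above, the detector array uniformity characterization obtained by averaging many scenes can be sketched as follows. This is a minimal sketch under stated assumptions: the function name `flat_field`, the 20 percent out-of-specification tolerance, and the synthetic scene data are hypothetical, and dark-signal subtraction is assumed to have been done already.

```python
import random


def flat_field(scenes, bad_tolerance=0.2):
    """Estimate relative detector gains by averaging many scenes.

    scenes: list of scenes, each a list of dark-subtracted counts per detector.
    Returns (relative_gain, bad), where bad marks out-of-specification detectors.
    """
    n_detectors = len(scenes[0])
    mean_response = [
        sum(scene[d] for scene in scenes) / len(scenes) for d in range(n_detectors)
    ]
    # The median is used as the reference so that a few bad detectors do not bias it.
    reference = sorted(mean_response)[n_detectors // 2]
    relative_gain = [m / reference for m in mean_response]
    bad = [abs(g - 1.0) > bad_tolerance for g in relative_gain]
    return relative_gain, bad


# Synthetic test data: five detectors, one deliberately far out of specification.
random.seed(0)
true_gain = [1.00, 1.03, 0.97, 0.40, 1.01]
scenes = []
for _ in range(2000):
    brightness = random.uniform(800.0, 1000.0)  # spatially uniform, cloud-like scene
    scenes.append([brightness * g for g in true_gain])

relative_gain, bad = flat_field(scenes)
corrected_first_scene = [c / g for c, g in zip(scenes[0], relative_gain)]
```

Dividing each scene by `relative_gain` applies the flat-field correction. Repeating the estimate on orbit and comparing it with the prelaunch result would show the kind of array degradation the text anticipates.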

SENSOR CALIBRATION

It is usually through the tie-in to SI units that measurements made with different instruments and at different times can be related. As noted above, it is important to determine which type of calibration is truly needed for both short- and long-term studies. This determination, that is, which SI unit to use, is not a semantic argument. It has consequences that will affect the cost and performance of an instrument.

It should also be noted that there is a widespread misconception in the United States that traceability to reference standards of the National Institute of Standards and Technology (NIST) is the only way to ensure accuracy. The accuracy of an absolute measurement can be assessed only via a documented chain of measurements traceable to an SI unit, as described above. Often this is accomplished by utilizing an artifact standard from NIST, but this is not strictly necessary. Other national measurement institutions may have more accurate and better-documented standards to meet the same need. Other avenues to verifying accuracy should be examined to determine if reduced cost and perhaps improved performance could be achieved.
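The comparison of alternative measurement chains can be sketched numerically. The example below assumes that the uncertainty contributions of the links in a chain are independent, so that they combine in quadrature (root-sum-square); that assumption, the chain names, and the percentage values are illustrative and are not taken from this report.

```python
import math


def accumulated_uncertainty(chain):
    """Root-sum-square of per-link relative uncertainties, assuming independence."""
    return math.sqrt(sum(u * u for u in chain))


# Hypothetical measurement chains: relative standard uncertainty (percent) per link.
chains = {
    "chain_a": [0.3, 0.4],
    "chain_b": [0.1, 0.2, 0.2, 0.5],
}

totals = {name: accumulated_uncertainty(links) for name, links in chains.items()}
best = min(totals, key=totals.get)
for name, total in totals.items():
    print(f"{name}: accumulated uncertainty {total:.3f}%")
print("lowest accumulated uncertainty:", best)
```

In this made-up case the shorter chain has the lower accumulated uncertainty, but a long chain of very good links could still beat a short chain with one poor link, which is why the chains have to be documented and compared rather than assumed.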

Specific calibration requirements for particular sensor types and their data products—energy balance, scene temperature, and scene content—are examined below.

Energy Balance Measurements

Energy balance sensors are the radiation balance sensors, such as Clouds and the Earth’s Radiant Energy System (CERES), and the solar irradiance sensors, such as the Active Cavity Radiometer Irradiance Monitor (ACRIM). Each type of sensor is conceptually traceable to the fundamental unit of either temperature or electricity, respectively. The traceability presently in use for these sensors, temperature for radiation balance and electricity for solar irradiance, appears to be state of the art. Their respective measurement chains also appear to be optimal within the limits of present technology.

There has been some recent discussion, however, advocating a change in the traceability of the radiation balance sensors, from temperature to electricity, using an electrical substitution radiometer operated in a cryogenic environment at NIST. The accumulated uncertainty of the measurement chain for the proposed alternative approach, at its present state of development, is much larger than that of the traceability method now being used. If the radiation balance sensors are redesigned and improved, then changing the method of traceability should be considered. A radiation balance sensor on orbit based on the electrical substitution method, the method used in ACRIM, is now technically feasible since the advent of portable, mechanically cooled, cryogenic electrical substitution radiometers (Fox et al., 1996) and superconducting, high-sensitivity temperature sensors (Rice et al., 1998).

Thermal Region Measurements

Remote sensing instruments to determine scene temperature and scene reflectance are normally considered together since they are often functions within the same sensor; two examples are the Landsat Enhanced Thematic Mapper and MODIS. The calibration of these sensors is typically a conversion from digital counts to units of absolute radiance (radiant power per area per solid angle, within a specified spectral band). The calibration traceability is to the SI unit of temperature. The same calibration units are used in both the thermal spectral range, at wavelengths longer than about 2.5 μm, and the solar reflective range, at wavelengths shorter than 2.5 μm. Earth is the dominant radiant source in the thermal spectral range; however, it is not a significant radiant source in the solar reflective range. For a sensor operating in the thermal range, Earth is a radiant emitter, so a calibration in units of radiance traceable to the SI unit of temperature is reasonable. However, in the solar reflective spectral region, Earth is not a radiant emitter, so the basis of the calibration of those channels should be examined more closely. Since reflectance-based calibrations are not typical, the theoretical basis for this type of calibration is discussed in some detail in the next section.

For thermal spectral region sensors such as MODIS, the present method of traceability (that is, via the radiance of a near-Planckian emitter—a blackbody), which is traceable to the SI unit of temperature, is state of the art. It should be mentioned again that changing the method of traceability to the SI unit of electricity by using a cryogenic electrical substitution radiometer could result in a larger uncertainty unless significant improvements are made in this technology.

Similarly, microwave radiation sensor calibrations for active and passive systems are traceable to the SI unit of temperature, the kelvin. Microwave backscatter can be used to deduce the roughness or elevation of the surface rather than its radiometric properties. The on-orbit calibration procedure for microwave sensors for measuring scatter or altitude makes use of conventional comparisons of microwave brightness temperatures of near-Planckian sources.

Backscattered microwave radiation sensors on orbit (both active and passive) are calibrated against a hot and a cold brightness temperature source. At the low end, deep space (2.7 K) is the cold brightness temperature source.

At the high end, an on-board blackbody simulator with a calibrated temperature sensor is used as the hot brightness temperature source. The calibration of the temperature sensor is traceable to the kelvin.
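The hot and cold source comparison described above amounts to a two-point calibration, which can be sketched as follows. The sketch assumes a strictly linear sensor response between the two reference views. The count values and the 300 K hot source temperature are hypothetical, and only the 2.7 K deep-space value comes from the text.

```python
def two_point_calibration(counts_cold, counts_hot, t_cold_k, t_hot_k):
    """Return (gain, offset) such that brightness temperature = gain * counts + offset.

    Assumes the sensor responds linearly between the cold and hot reference views.
    """
    if counts_hot == counts_cold:
        raise ValueError("hot and cold views must give different counts")
    gain = (t_hot_k - t_cold_k) / (counts_hot - counts_cold)  # kelvin per count
    offset = t_cold_k - gain * counts_cold                     # kelvin at zero counts
    return gain, offset


# Illustrative numbers: deep space at 2.7 K, hypothetical on-board source at 300 K.
gain, offset = two_point_calibration(
    counts_cold=500, counts_hot=3500, t_cold_k=2.7, t_hot_k=300.0
)
scene_brightness_temperature_k = gain * 2000 + offset
print(f"gain = {gain:.4f} K/count, offset = {offset:.2f} K")
print(f"scene at 2000 counts: {scene_brightness_temperature_k:.2f} K")
```

Any departure of the real response from the straight line between the two points goes uncorrected by this scheme, so the linearity characterization remains a separate requirement.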

CALIBRATION VERIFICATION

As is noted above, prelaunch calibration verification is done by documenting the chain of measurements leading to the calibration from the SI unit or from the initial ratio measurement; the uncertainties accumulated in the chain of measurements are the measure of the accuracy of the calibration. The best method of verifying prelaunch calibration accuracy is a comparison between independent paths leading up to the same calibration. The only way for national laboratories to verify accuracy is to conduct interlaboratory measurement comparisons or to compare two different measurement methods.

The on-board calibration monitoring system, often referred to (incorrectly) as the on-board calibrators, should perhaps be called the on-board stability references. It is the function of the on-board references to maintain calibration accuracy through launch and throughout the life of the sensor on orbit. Another role of the on-board reference comes into play if a sensor fails before its replacement is launched. With stable references included in the sensors, there is still the possibility of connecting the two time series.

The stability of microwave radiation sensors, both active and passive, is tested against the on-board hot and cold brightness temperature sources. The greatest uncertainty in backscattered microwave measurements arises from the assumption of sensor linearity in scaling between the hot and cold sources and not from calibrating the temperature of the radiation sources.

If the paradigm for calibrations in the solar reflective spectral region is shifted from radiance- to reflectance-based measurements, there will be no need to maintain the calibration via an incandescent lamp, as is the current practice. Furthermore, as indicated above, wavelength and bandwidth stability will be less critical. Future sensors will not need complex and expensive lamp-based on-board calibrators such as the MODIS SRCA. Only an on-board reflectance standard will be needed, and its stability will have to be assured. The on-board calibrator system for MODIS includes a diffuser stability monitor that is referenced to the Sun and should be able to fulfill the reflectance stability monitoring role, but this has yet to be demonstrated. What has been demonstrated is the use of the Moon to monitor the stability of the diffusers on board the Sea-viewing Wide Field-of-view Sensor (SeaWiFS) (Barnes et al., 1999) and the Visible and Infrared Scanner (VIRS) (Lyu et al., 2000).

Like Earth, the Moon is not a radiant emitter. It is the ultimately stable reflectance reference, having been undisturbed for eons by the forces that alter the reflectance of Earth. However, it is a less-than-ideal reference because its reflectance is very nonuniform and changes with its position relative to that of the Sun and Earth. The current NASA-supported project to measure the Moon in all the three-body positions will eventually solve the problems associated with its nonuniformity and variability (Kieffer and Wildey, 1996). It is important that this work continue to be supported and possibly verified by measurements made at other institutions. In addition, the impact of the change in calibration paradigm from radiance to reflectance should be examined in regard to the lunar study. Certainly, one immediate result will be to eliminate the need for an absolute spectral radiance calibration, substituting a stable reflectance reference.
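Using the Moon as a stability reference can be sketched as a trend analysis. Each lunar observation is divided by the value that a lunar model predicts for the same Sun-Earth-Moon geometry, and a drift is then fitted to the resulting ratios. The model predictions are treated here as given inputs, and the linear drift, the observation dates, and all numbers are hypothetical.

```python
def linear_trend(times, values):
    """Ordinary least-squares fit of values = slope * time + intercept."""
    n = len(times)
    t_mean = sum(times) / n
    v_mean = sum(values) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    sxy = sum((t - t_mean) * (v - v_mean) for t, v in zip(times, values))
    slope = sxy / sxx
    return slope, v_mean - slope * t_mean


# Hypothetical lunar views, in days since launch and arbitrary signal units.
days = [0, 30, 60, 90, 120]
measured = [100.0, 79.6, 118.8, 88.65, 98.0]
predicted = [100.0, 80.0, 120.0, 90.0, 100.0]  # from an assumed lunar model

# Dividing by the model removes the geometry dependence; what remains is sensor change.
ratios = [m / p for m, p in zip(measured, predicted)]
slope, intercept = linear_trend(days, ratios)
print(f"response drift: {slope * 365 * 100:.2f}% per year")
```

Because only the ratio to the model matters, this kind of monitoring needs the relative variation of the Moon with geometry rather than its absolute spectral radiance, which is consistent with the change of paradigm discussed above.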

Vicarious calibration should be used as one of the methods to assure the long-term stability of a sensor and the comparability of measurements between sensors. Vicarious calibrations involve measuring the radiometric properties of a nearly ideal (i.e., uniform and spectrally unstructured) scene to verify the sensor’s characteristics and its calibration factors. Of course there are no truly ideal scenes, so a variety of near-ideal locations are needed to achieve this objective.

Independent measurements are taken for surface targets considered to be nearly uniform and spectrally unstructured. For optical systems, well-instrumented, high-reflectance targets such as White Sands (Gellman et al., 1993) or the Railroad Valley Playa (Scott et al., 1996) are commonly used vicarious calibration sites. For thermal systems, investigators use high-altitude lakes or ocean buoys as thermal vicarious calibration targets (Wan et al., 1999). Another approach for optical systems is to use cloud-top radiance statistics (Vermote and Kaufman, 1995). Present methods for vicarious calibrations report the results as absolute spectral radiance at the top of the atmosphere. However, the actual basis for the measurements on the ground is usually the reflectance of the surface (Slater et al., 1987). Since absolute radiance is obtained using the absolute values of solar spectral irradiance, little change in the methods of vicarious calibration would be needed to adapt to a reflectance-based calibration paradigm.
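The link between a reflectance and an absolute radiance can be illustrated with the commonly used relation for a Lambertian reflector, L = ρ · E0 · cos(θ) / (π · d²), where E0 is the band solar irradiance at 1 AU, θ is the solar zenith angle, and d is the Earth-Sun distance in astronomical units. The sketch below is offered under that assumption. It is not a description of the specific procedures in the cited studies, and the numerical inputs are illustrative.

```python
import math


def radiance_from_reflectance(reflectance, solar_irradiance, solar_zenith_deg,
                              earth_sun_distance_au=1.0):
    """Band radiance (W m^-2 sr^-1 um^-1) from reflectance, Lambertian assumption.

    solar_irradiance: band solar irradiance at 1 AU (W m^-2 um^-1).
    """
    cos_zenith = math.cos(math.radians(solar_zenith_deg))
    return reflectance * solar_irradiance * cos_zenith / (
        math.pi * earth_sun_distance_au ** 2
    )


def reflectance_from_radiance(radiance, solar_irradiance, solar_zenith_deg,
                              earth_sun_distance_au=1.0):
    """Inverse of radiance_from_reflectance."""
    cos_zenith = math.cos(math.radians(solar_zenith_deg))
    return math.pi * radiance * earth_sun_distance_au ** 2 / (
        solar_irradiance * cos_zenith
    )


# Illustrative inputs only.
radiance = radiance_from_reflectance(0.5, solar_irradiance=1500.0, solar_zenith_deg=30.0)
recovered = reflectance_from_radiance(radiance, solar_irradiance=1500.0, solar_zenith_deg=30.0)
print(f"radiance = {radiance:.1f}, recovered reflectance = {recovered:.3f}")
```

The solar irradiance enters only as a scale factor in the conversion, which is why reporting the result as reflectance instead of radiance would require little change to the field methods.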

DATA QUALITY ASSESSMENT

The objective of data quality assessment is to identify data that are suspect or of poor quality relative to expected instrument and algorithm performance. Automated data quality assessment is undertaken during data production, and post-run-time quality assessment is done shortly after production, usually on a sample of the data. This latter activity usually involves applying various software tools to analyze sample statistics or time series in order to find anomalies or unexpected trends in the data. Information on product quality should be appended to the product as metadata alerting the user to potential problems with the data. Quality assessment is part of the data production system and continues for the life of the instrument. Along with postlaunch calibration and characterization, it provides an understanding of changes in instrument performance and the impact on algorithm performance.

An example of data quality assessment in the EOS era is provided by the MODIS Land Group (information is available online at <http://modland.nascom.nasa.gov/QAA_WWW/qahome.html>). Most of the quality assessment will be performed automatically by the processing software during production (run time quality assessment). Operators will set production quality assessment when the product is generated; the science team and quality assessment support staff will then set quality flags based on an evaluation of a sample of the data product (science quality assessment). User feedback will be solicited as an additional and important source of quality assessment.

Following the guidelines for the EOS Core System quality assessment, information will be stored as metadata in all products. The various quality assessment flags will enable product users to identify data problems within the MODIS time series. A Web site will provide up-to-date information on data problems and remedial action being taken.

Product quality assessment provides an important input for algorithm refinement and identification of problems with instrument performance. The stored quality assessment metadata will be of considerable help in identifying data product records that are in error as a result of problem data.
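The idea of screening a time series for anomalies and attaching the result to the product as metadata can be sketched as follows. The robust outlier test (median and median absolute deviation), the threshold of 5, the flag names, and the dictionary layout are all assumptions chosen for illustration. They do not represent the EOS or MODIS quality assessment conventions.

```python
from statistics import median


def quality_flags(values, threshold=5.0):
    """Flag samples whose robust z-score exceeds the threshold as 'suspect'."""
    center = median(values)
    mad = median(abs(v - center) for v in values)
    if mad == 0:
        # Degenerate case: more than half the samples are identical.
        return ["good" if v == center else "suspect" for v in values]
    scale = 1.4826 * mad  # scales the MAD to a standard deviation for normal data
    return ["suspect" if abs(v - center) / scale > threshold else "good" for v in values]


# Hypothetical product time series with one anomalous sample.
series = [10.1, 10.0, 9.9, 10.2, 10.0, 14.5, 10.1, 9.8]
flags = quality_flags(series)

# The flags travel with the data as metadata so that users can screen the record.
product = {"data": series, "metadata": {"quality_flags": flags}}
print(product["metadata"]["quality_flags"])
```

A flag of this kind only marks data as suspect relative to expected behavior. Deciding whether the cause lies in the instrument, the algorithm, or the scene still requires the science-team review described above.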

DATA PRODUCT VALIDATION

Data product validation answers the critical question, What is the accuracy of the geophysical product over the range of environmental conditions for which it is provided? Conceptually, data product validation is simple, but in practice it is often a complex and difficult task to compare the result of independent measurements of a geophysical variable with the result obtained from the sensor on orbit. However, such comparisons need to be done for each data product or record.

Data product validation must involve more than just comparing measurements of the same quantity made in situ (at the surface) and by a satellite-borne sensor. A complete data product validation should include both field and airborne measurements. These must be backed up by laboratory measurements to ensure their validity and then compared with satellite measurements. Data product validation should begin before launch with the laboratory, field, and airborne measurements. After launch, the data product validations cannot be a one-time exercise but must be repeated periodically to ensure continued validity and to test any improvements to the algorithms. Several different groups should participate in the data product validation exercises, preferably with international representation. Finally, the data must be archived with the same attention paid to the continuous data stream from the satellite.

Data product validation can be difficult and expensive, especially when determining the data product’s accuracy over a wide range of conditions. The comparison of surface measurements with satellite measurements requires paying attention to scaling up surface point measurements to the satellite spatial resolution and taking into account the impacts of the atmosphere on the satellite measurement at the time of overpass. Quantifying the temporal and spatial error fields associated with a data product is especially important in the modeling and analysis of long time series for climate research. These assessments require a commitment to sustained field measurements over the life of the sensor.
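A minimal sketch of such a comparison follows. Point measurements falling within each satellite pixel are averaged to the pixel scale (the simplest possible scaling-up, adopted here purely as an assumption), and the bias and root-mean-square error of the satellite product are then computed. All values are hypothetical, and atmospheric effects are ignored.

```python
import math


def validation_statistics(satellite, reference):
    """Return (bias, rmse) of satellite values against reference values."""
    differences = [s - r for s, r in zip(satellite, reference)]
    bias = sum(differences) / len(differences)
    rmse = math.sqrt(sum(d * d for d in differences) / len(differences))
    return bias, rmse


# Hypothetical field point measurements, grouped by the satellite pixel they fall in.
field_points = [
    [0.21, 0.19, 0.20],
    [0.30, 0.34],
    [0.10, 0.12, 0.11, 0.11],
]
# Scale the point measurements up to pixel resolution by simple averaging.
pixel_reference = [sum(points) / len(points) for points in field_points]

satellite_product = [0.22, 0.31, 0.12]  # hypothetical retrievals for the same pixels
bias, rmse = validation_statistics(satellite_product, pixel_reference)
print(f"bias = {bias:+.4f}, rmse = {rmse:.4f}")
```

Computing these statistics separately by season, region, or surface type is one way to begin describing the temporal and spatial error fields rather than a single global number.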

Preliminary estimates of accuracy can be obtained for a particular algorithm before launch using simulation or modeling. Such estimates provide a guide to what can be expected; however, accuracy is assessed primarily after launch, once the instrument and algorithm have stabilized.

Validation of higher-order data products for parameters such as snow cover, leaf area index, aerosol optical thickness, and water vapor requires different approaches. A useful distinction can be made between continuous and discrete data products and the methods for their validation. The International Geosphere-Biosphere Program 1-km land cover validation activity represents an important pathfinding activity for validating global land cover. The land community for EOS has developed a series of validation test sites representing a broad range of land surface conditions (Justice et al., 1998), including surface reflectance, vegetation index, leaf area index, and primary productivity. These sites provide a focus for the development and testing of validation instrumentation and satellite data acquisition. Data products from MODIS such as changes in snow and ice cover, fire, and land cover/change will be validated at locations suited to specific product validation. Validation efforts will also include information collected by higher-resolution sensors (Justice et al., 1998). Protocols for validation are being developed and tested for suites of land products. The NASA-funded BigFoot program is addressing some of the problems of scaling from field measurements to satellite resolutions. The land community is starting to coordinate its international validation activities under the Committee on Earth Observation Satellites Calibration and Validation sub-working group on validation (Dowman et al., 1999). The Fluxnet program provides a coordination activity contributing to the validation of data on land productivity.

The oceans community is coordinating much of its validation activity through the Sensor Intercomparison and Merger for Biological and Interdisciplinary Oceanic Studies (SIMBIOS) program (McClain and Fargion, 1999). SIMBIOS is focused on reducing measurement errors by quantifying the uncertainties in the ocean color algorithms, with the aim of blending the data streams from ocean color missions such as SeaWiFS, MODIS, and the Ocean Color and Temperature Scanner. There are considerable advantages in international cooperation for the collection of validation data. An important consideration is that validation data be made available to the broader community.

In the past many of the assessments of error in satellite data sets were largely anecdotal. For example, it is known that the Coastal Zone Color Scanner delivered poor retrievals in coastal waters and high-latitude oceans. However, as researchers begin to accumulate long data records and as these data sets begin to influence environmental policy, it will be necessary to move from describing the errors to quantifying them. For data assimilation models, quantitative estimates of the temporal and spatial errors are essential. This level of data product validation will require sustained observations: a one-time data product validation exercise at the beginning of the mission is not sufficient.

CONCLUSIONS AND RECOMMENDATIONS

Following are the committee’s conclusions and recommendations with regard to calibration and validation:

- A continuous and effective on-board reference system is needed to verify the stability of the calibration and sensor characteristics from launch through the life of a mission. In the case of thermal sensors, there should be an on-board source that is designed for optimum stability. For the solar reflective spectral range, there should be an on-board solar diffuser. The system should be designed to allow for periodic measurements of the Moon. Data product validation and vicarious calibrations should be implemented periodically to verify the stability of the calibration and sensor characteristics.

- Radiometric characterization of the Moon should be continued and possibly expanded to include measurements made at multiple institutions in order to verify the results. If the new reflectance calibration paradigm is adopted (see Appendix C), then the objective of the lunar characterization program could be changed from measurement of the absolute spectral radiance of the Moon to measurement of the changes in the relative reflectance as a function of the phase and position of Earth, the Sun, and the Moon.

- The establishment of traceability by national measurement institutions in addition to NIST should be considered to determine if improved accuracy, reduced uncertainty in the measurement chain, and/or better documentation might be achieved, perhaps even at a lower cost.

- The results of sensitivity studies on the parameters in data product algorithms should be summarized in a requirements document that specifies the characterization measurements for each channel in a sensor. Blanket specifications covering all channels should be avoided unless justified by the sensitivity studies.

- Quality assessment should be an intrinsic part of operational data production. It involves providing metadata on product quality along with the data product, giving the user an indication of deviations from expected instrument and algorithm performance and of the long-term stability of the data product.

- Validation should be undertaken for each data product or data record to provide a quantitative estimate of the accuracy of the product over the range of environmental conditions for which the product is provided. It should involve independent correlative measurement of the geophysical variable derived from the satellite data. The guidelines and protocols that are being developed for validation will lead to more standardized measurements and will make it possible to compare the accuracy of similar products from different instruments. Validating both once the instrument calibration is established and again after any significant change to the algorithm will help establish product continuity.

- Wavelengths and bandwidths of channels in the solar spectral region should be selected to avoid absorption features of the atmosphere, if possible.

- The calibration of thermal sensing instruments such as CERES and the thermal bands of MODIS should continue to be traceable to the SI unit of temperature via Planckian radiator (blackbody) technology. The accumulated uncertainty of calibrations traceable to the fundamental unit of electricity via a cryogenic electrical substitution radiometer is at present much larger than that of calibrations traceable to temperature.
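
To illustrate the link between temperature and radiance that underlies the blackbody recommendation above, the following Python sketch evaluates the Planck function for an ideal blackbody and inverts it to recover a brightness temperature. It is a simplified, monochromatic illustration: a real calibration would integrate over each channel's measured spectral response and account for the emissivity of the on-board source. The function names and the 11 µm, 300 K example values are assumptions made for this sketch.

```python
import math

H = 6.62607015e-34    # Planck constant, J s
C = 2.99792458e8      # speed of light in vacuum, m/s
K_B = 1.380649e-23    # Boltzmann constant, J/K

C1 = 2.0 * H * C**2   # first radiation constant for radiance, W m^2 sr^-1
C2 = H * C / K_B      # second radiation constant, m K


def planck_radiance(wavelength_m, temperature_k):
    """Spectral radiance of an ideal blackbody, in W m^-2 sr^-1 m^-1."""
    x = C2 / (wavelength_m * temperature_k)
    return C1 / (wavelength_m**5 * math.expm1(x))


def brightness_temperature(wavelength_m, radiance):
    """Invert the Planck function: temperature (K) giving this radiance."""
    return C2 / (wavelength_m * math.log1p(C1 / (wavelength_m**5 * radiance)))


# Example: an assumed 300 K blackbody viewed at an assumed 11 micrometres.
wavelength = 11e-6
radiance = planck_radiance(wavelength, 300.0)
recovered = brightness_temperature(wavelength, radiance)
print(f"radiance = {radiance * 1e-6:.3f} W m^-2 sr^-1 um^-1")
print(f"recovered temperature = {recovered:.3f} K")
```

Because the only inputs are the source temperature and physical constants, the uncertainty of the resulting radiance scale follows directly from how well the temperature of the on-board source is known.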

REFERENCES

Barnes, R.A., R.E. Eplee, F.S. Patt, and C.R. McClain. 1999. “Changes in the radiometric sensitivity of SeaWiFS determined from lunar and solar-based measurements,” Appl. Opt. 38:4649-4664.

Dowman, I., J. Morisette, C. Justice, and A. Belward. 1999. “CEOS working group on calibration and validation meeting on digital elevation models and terrain parameters,” Earth Observer 11(4):19-20.

Fox, N.P., P.R. Haycock, J.E. Martin, and I. Ul-Haq. 1996. “A mechanically cooled portable cryogenic radiometer,” Metrologia 32:581-584.

Gellman, D.I., S.F. Biggar, M.C. Dinguirard, P.J. Henry, M.S. Moran, K. Thome, and P.N. Slater. 1993. “Review of SPOT-1 and -2 calibrations at White Sands from launch to present,” Proc. SPIE 1938:118-125.

Justice, C., D. Starr, D. Wickland, J. Privette, and T. Suttles. 1998. “EOS land validation coordination: An update,” Earth Observer 10(3):55-60.

Kieffer, H.H., and R.L. Wildey. 1996. “Establishing the moon as a spectral radiance standard,” J. Atmos. Oceanic Technol. 13:360-375.

Lyu, C-H., W.L. Barnes, and R.A. Barnes. 2000. “First results from the on-orbit calibrations of the Visible and Infrared Scanner for the tropical rainfall measurement mission,” J. Atmos. Oceanic Technol. (February).

McClain, C., and G.S. Fargion. 1999. SIMBIOS Project 1999 Annual Report. NASA Tech. Memo. 1999-209486, NASA Goddard Space Flight Center, Greenbelt, Md.

Rice, J.P., S.R. Lorentz, R.U. Datla, L.R. Vale, D.A. Rudman, M. Lam Chok Sing, and D. Robbes. 1998. “Active cavity absolute radiometer based on high-Tc superconductors,” Metrologia 35:289-293.

Scott, K.P., K.J. Thome, and M.R. Brownlee. 1996. “Evaluation of the Railroad Valley playa for use in vicarious calibration,” SPIE Proc., paper no. 2818-30, Denver, Colo.

Slater, P.N., S.F. Biggar, R.G. Holm, R.D. Jackson, Y. Mao, M.S. Moran, J.M Palmer, and B. Yuan. 1987. “Reflectance- and radiance-based methods for the in-flight absolute calibration of multi-spectral sensors,” Remote Sensing Environ. 22:11-37.

Vermote, E., and Y.J. Kaufman. 1995. “Absolute calibration of AVHRR visible and near infrared channels using ocean and cloud views,” Int. J. Remote Sensing 16:2317-2340.

Wan, Z., Y. Zhang, X. Ma, M.D. King, J.S. Myer, and X. Li. 1999. “Vicarious calibration of the Moderate-Resolution Imaging Spectroradiometer Airborne Simulator thermal infrared channels,” Appl. Opt. 38:6294-6306.

Wrigley, R.C., et al. 1984. “Thematic Mapper image quality: Registration, noise, and resolution,” IEEE Trans. Geosci. Remote Sensing GE-22 (May).