Software and the New Economy

The New Economy refers to a fundamental transformation in the United States economy as businesses and individuals capitalize on new technologies, new opportunities, and national investments in computing, information, and communications technologies. Use of this term reflects a growing conviction that widespread use of these technologies makes possible a sustained increase in the productivity and growth of the U.S. economy.1

Software is an encapsulation of knowledge in an executable form that allows for its repeated and automatic application to new inputs.2 It is the means by which we interact with the hardware underpinning information and communications technologies. Software is increasingly an integral and essential part of most goods and services, whether it is a handheld device, a consumer appliance, or a retailer. The United States economy today is highly dependent on software, with businesses, public utilities, and consumers among those integrated within complex software systems.

- Almost every aspect of a modern corporation’s operations is embodied in software. According to Anthony Scott of General Motors, a company’s software embeds the whole corporation’s knowledge in business processes and methods: “virtually everything we do at General Motors has been reduced in some fashion or another to software.”

- Much of our public infrastructure relies on the effective operation of software, and this dependency also creates significant vulnerabilities. As William Raduchel observed, the failure of one line of code, buried in an energy management system from General Electric, appears to have been the initial source of the electrical blackout of August 2003 that paralyzed much of the northeastern and midwestern United States.3

- Software is also redefining the consumer’s world. Microprocessors embedded in today’s automobiles require software to run, permitting major improvements in their performance, safety, and fuel economy. And new devices such as the iPod are revolutionizing how we play and manage music, as personal computing continues to extend from the desktop into our daily activities.

As software becomes more deeply embedded in most goods and services, creating reliable and robust software is becoming an even more important challenge.

Despite the pervasive use of software, and partly because of its relative immaturity, understanding the economics of software presents an extraordinary challenge. Many of the challenges relate to measurement, econometrics, and industry structure. Here, the rapidly evolving concepts and functions of software, together with its high complexity and context-dependent value, make measuring software difficult. This frustrates our understanding of the economics of software, both generally and from the standpoint of action and impact, and impedes both policy making and our ability to recognize technical progress in the field.

While the one-day workshop gathered a variety of perspectives on software, growth, measurement, and the future of the New Economy, it of course could not (and did not) cover every dimension of this complex topic. For example, workshop participants did not discuss future opportunities for leveraging software in specific application domains, a major topic in its own right that concerns the potential for software to revolutionize key sectors of the U.S. economy, including the health care industry. The focus of the meeting was on developing a better understanding of the economics of software.

Indeed, as Dale Jorgenson pointed out in introducing the National Academies conference on Software and the New Economy, “we don’t have a very clear understanding collectively of the economics of software.” Accordingly, a key goal of this conference was to expand our understanding of the economic nature of software, review how it is being measured, and consider public policies both to improve measurement of this key component of the nation’s economy and to ensure that the United States retains its lead in the design and implementation of software.

In his presentation on the economics of software, Dr. Raduchel noted that software pervades our economy and society.4 As Dr. Raduchel further pointed out, software is not merely an essential market commodity but in fact embodies the economy’s production function itself, providing a platform for innovation in all sectors of the economy. Sustaining leadership in information technology (IT) and software is therefore necessary if the United States is to compete internationally in a wide range of leading industries, from financial services, health care, and automobiles to the information technology industry itself.

MOORE’S LAW AND THE NEW ECONOMY

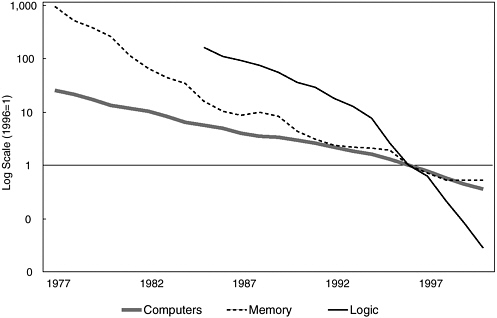

The National Academies’ conference on software in the New Economy follows two others that explored the role of semiconductors and computer components in sustaining the New Economy. The first National Academies conference in the series considered the contributions of semiconductors to the economy and the challenges associated with maintaining the industry on the trajectory anticipated by Moore’s Law. Moore’s Law anticipates the doubling of the number of transistors on a chip every 18 to 24 months. As Figure 1 reveals, Moore’s Law has set the pace for growth in the capacity of memory chips and logic chips from 1970 to 2002.5
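To make that pace concrete, the short sketch below computes the growth factor a constant doubling period implies over the 1970 to 2002 span of Figure 1. It is a back-of-the-envelope illustration that assumes the doubling period stays fixed throughout; it does not reproduce the measured data in the figure, and the function name is hypothetical.

```python
def moores_law_growth(years: float, doubling_months: float) -> float:
    """Growth factor in transistor count if the count doubles every `doubling_months`."""
    doublings = years * 12 / doubling_months
    return 2 ** doublings


span = 2002 - 1970  # 32 years, the period shown in Figure 1
for period in (18, 24):
    print(f"doubling every {period} months: about {moores_law_growth(span, period):,.0f}x")
```

With a 24-month doubling period the rule implies 16 doublings, a factor of 2^16 = 65,536; an 18-month period implies roughly a 2.6-million-fold increase, which shows how sensitive long-run projections are to the assumed period.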

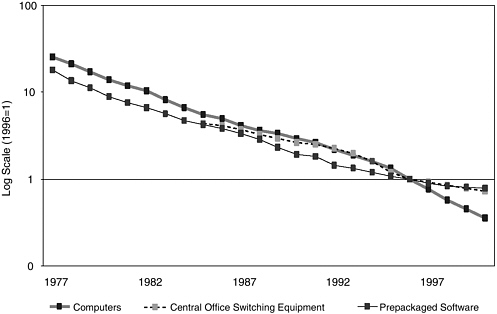

An economic corollary of Moore’s Law is the fall in the relative prices of semiconductors. Data from the Bureau of Economic Analysis (BEA), depicted in Figure 2, show that semiconductor prices declined by about 50 percent a year for logic chips and about 40 percent a year for memory chips between 1977 and 2000. This is unprecedented for a major industrial input. According to Dale Jorgenson, this increase in chip capacity and the concurrent fall in price, the “faster-cheaper” effect, created powerful incentives for firms to substitute information technology for other forms of capital, leading to the productivity increases that are the hallmark of the New Economy.6
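Because such declines compound, annual rates of this size add up to extraordinary cumulative changes. A minimal sketch, assuming the average BEA rates held constant in every year from 1977 to 2000 (a simplification of the actual series), computes the implied end-of-period price level; the function name is hypothetical.

```python
def cumulative_price_index(annual_decline: float, years: int) -> float:
    """Price level after `years`, relative to 1.0 at the start, assuming a
    constant proportional decline each year (0.5 means 50 percent a year)."""
    return (1 - annual_decline) ** years


years = 2000 - 1977  # 23 years of decline
for label, rate in (("logic chips", 0.50), ("memory chips", 0.40)):
    level = cumulative_price_index(rate, years)
    print(f"{label}: {level:.2e} of the 1977 price (about 1/{1 / level:,.0f})")
```

At a constant 50 percent a year, a price falls to 0.5^23, about one eight-millionth (1/8,388,608) of its 1977 level; at 40 percent a year it falls to roughly eight millionths of that level.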

The second National Academies conference on the New Economy, “Deconstructing the Computer,” examined how the impact of Moore’s Law and its price corollary extended from microprocessors and memory chips to high technology hardware such as computers and communications equipment. While highlighting the challenges of measuring the fast-evolving component industries, that conference also brought to light the impact of computers on economic growth based on BEA price indexes for computers (See Figure 3). These data reveal that computer prices declined at about 15 percent per year between 1977 and the new millennium, helping to diffuse modern information technology across a broad spectrum of users and applications.

FIGURE 1 Transistor density on microprocessors and memory chips.

4. See William Raduchel, “The Economics of Software,” in this volume.

5. For a review of Moore’s Law on its fortieth anniversary, see The Economist, “Moore’s Law at 40,” March 26, 2005.

6. Dale W. Jorgenson, “Information Technology and the U.S. Economy,” The American Economic Review, 91(1):1-32, 2001.

The New Economy is alive and well today. Recent figures indicate that, since the end of the last recession in 2001, information-technology-enhanced productivity growth has been running about two-tenths of a percentage point higher than in any recovery of the post-World War II period.7 The current challenge rests in developing evidence-based policies that will enable us to continue to enjoy the fruits of higher productivity in the future. It is with this aim that the Board on Science, Technology, and Economic Policy of the National Academies has undertaken a series of conferences to address the need to measure the parameters of the New Economy as an input to better policy making and to highlight the policy challenges and opportunities that the New Economy offers.

This volume reports on the third National Academies conference on the New Economy and Software.8 While software is generally believed to have become more sophisticated and more affordable over the past three decades, data to back these claims remain incomplete. BEA data show that the price of prepackaged software has declined at rates comparable to those of computer hardware and communications equipment (See Figure 3). Yet prepackaged software makes up only about 25 to 30 percent of the software market. There remain large gaps in our knowledge about custom software (such as that produced by SAP or Oracle for database management, cost accounting, and other business functions) and own-account software (which refers to special-purpose software such as airline reservation systems and digital telephone switches). There is also some uncertainty in classifying software, with the distinctions among prepackaged, custom, and own-account software often having more to do with market relationships and organizational roles than with purely technical attributes.9

In all, as Dale Jorgenson points out, there is a large gap in our understanding of the sources of growth in the New Economy.10 Consequently, a major purpose of the third National Academies conference was to draw attention to the need to address this gap in our ability to understand and measure the trends and contribution of software to the operation of the American economy.

THE NATURE OF SOFTWARE

To develop a better economic understanding of software, we first need to understand the nature of software itself. Software, comprising millions of lines of code, operates within a stack. The stack begins with the kernel, a small piece of code that talks to and manages the hardware. The kernel is usually included in the operating system, which provides the basic services and to which all programs are written. Above the operating system is middleware, which “hides” both the operating system and the window manager. In the case of desktop computers, for example, the operating system runs other small programs called services as well as specific applications such as Microsoft Word and PowerPoint. Thus, when a desktop computer functions, the entire stack is in operation. This means that the value of any part of a software stack depends on how it operates within the rest of the stack.11
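The dependency described above can be sketched as a simple model in which each layer delivers value only if every layer beneath it works. This is a deliberately simplified, linear stack: the layer names follow the text, while the `Layer` class and `usable_layers` function are hypothetical, and real stacks (with services, window managers, and many applications) form richer dependency graphs.

```python
from dataclasses import dataclass


@dataclass
class Layer:
    name: str
    works: bool = True  # does this layer function correctly on its own?


def usable_layers(stack: list[Layer]) -> list[str]:
    """Names of layers that can deliver value, listed bottom to top.

    A layer is usable only if it works and every layer beneath it is usable,
    so a fault low in the stack removes the value of everything above it.
    """
    usable = []
    for layer in stack:
        if not layer.works:
            break
        usable.append(layer.name)
    return usable


# Bottom-to-top stack following the text; names are illustrative.
stack = [Layer("kernel"), Layer("operating system"),
         Layer("middleware"), Layer("application")]
print(usable_layers(stack))  # all four layers

stack[2].works = False       # simulate a middleware fault
print(usable_layers(stack))  # ['kernel', 'operating system']
```

The application layer here may be flawless on its own, yet it contributes nothing once a layer below it fails, which is the sense in which a component’s value depends on the rest of the stack.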

The stack itself is highly complex. According to Monica Lam of Stanford University, software may be the most intricate thing that humans have learned to build. Moreover, it is not static. Software grows more complex as more and more lines of code accrue to the stack, making software engineering much more difficult than other fields of engineering. With hundreds of millions of lines of code making up the applications that run a big company, for example, and with those applications resting on middleware and operating systems that, in turn, comprise tens of millions of lines of code, the average corporate IT system today is far more complicated than the Space Shuttle, says William Raduchel.

The way software is built also adds to its complexity and cost. As Anthony Scott of GM noted, the process by which corporations build software is “somewhat analogous to the Winchester Mystery House,” where accretions to the stack over time create a complex maze that is difficult to fix or change.12 This complexity means that when a new piece of software is added to the stack and a failure appears, the failure may not indicate that anything is wrong with that piece of software per se; the new addition may instead be exposing a fault in some other piece of the stack that is being tested for the first time in conjunction with it.

Box A: According to Dr. Raduchel, a major challenge in creating a piece of code lies in figuring out how to make it “error free, robust against change, and capable of scaling reliably to incredibly high volumes while integrating seamlessly and reliably to many other software systems in real time.” Other challenges in the software engineering process include cost, schedule, capability and features, quality (dependability, reliability, security), performance, scalability and flexibility, and many others. These attributes often involve trade-offs against one another, which means that priorities must be set. In commercial software, for example, market release deadlines may be the primary driver, while for aerospace and embedded health devices, software quality may be the overriding priority.

Writing and Testing Code

Dr. Lam described the software development process as one comprising various iterative stages. (She delineated these stages for analytical clarity, although they are often executed simultaneously in modern commercial software production processes.) After getting an idea of the requirements, software engineers develop the needed architecture and algorithms. Once this high-level design is established, focus shifts to coding and testing the software. She noted that those who can write software at the kernel level are a very limited group, perhaps numbering only in the hundreds worldwide. This reflects a larger qualitative difference among software developers, where the very best are one to two orders of magnitude (up to 20 to 100 times) better than the average software developer.13 This means that a disproportionate amount of the field’s creative work is done by a surprisingly small number of people.

As a rule of thumb, producing software calls for a ratio of one designer to 10 coders to 100 testers, according to Dr. Raduchel.14 Configuring, testing, and tuning the software account for 95 to 99 percent of the cost of all software in operation. These non-linear complementarities in the production of software mean that simply adding workers to one part of the production process is not likely to make a software project finish faster.15 Further, since most of the time spent developing a software program goes to handling exceptions and fixing bugs, it is often hard to estimate software development time.16
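One way to see why adding workers to a single role need not speed a project is to treat the 1:10:100 rule of thumb as a fixed-proportions (Leontief) production function, in which output is limited by whichever role is scarcest. That modeling choice is an assumption made here for illustration, not Dr. Raduchel’s model, and it ignores other effects such as the added coordination cost of larger teams; `balanced_teams` is a hypothetical name.

```python
# Rule-of-thumb staffing ratio quoted in the text: 1 designer : 10 coders : 100 testers.
RATIO = {"designers": 1, "coders": 10, "testers": 100}


def balanced_teams(staff: dict[str, int]) -> float:
    """Number of complete 1:10:100 'teams' the staff can support.

    Assumes (for illustration) a fixed-proportions, or Leontief, production
    function: output is set by the scarcest role relative to the ratio, so any
    surplus in the other roles adds nothing.
    """
    return min(staff[role] / need for role, need in RATIO.items())


base = {"designers": 2, "coders": 20, "testers": 200}
more_coders = {**base, "coders": 40}  # double the coders, nothing else

print(balanced_teams(base))         # 2.0
print(balanced_teams(more_coders))  # 2.0: designers and testers still bind
```

Doubling the coders leaves capacity unchanged because designers and testers remain the binding constraints, a stylized version of the complementarity described above.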

The Economics of Open-source Software

Software is often developed in terms of a stack, and the basic elements of this stack can be developed on a proprietary basis or on an open or shared basis.17 According to Hal Varian, open-source software is, in general, software whose source code is freely available for use or modification by users and developers (and even hackers). By definition, open-source software differs from proprietary software, whose makers do not make the source code available. In the real world, which is always more complex, there is a wide range of access models for open-source software, and many proprietary software makers provide “open”-source access to their products but with proprietary markings.18 While open-source software is a public good, there are many motivations for writing it, he added, including (at the edge) scratching a creative itch and demonstrating skill to one’s peers. Indeed, while ideology and altruism provide some of the motivation, many firms, including IBM, make major investments in Linux and other open-source projects for solid market reasons.

While the popular idea of a distributed model of open-source development is one where spontaneous contributions from around the world are merged into a functioning product, most successful distributed open-source developments take place within pre-established or highly precedented architectures. It should thus not come as a surprise that open source has proven to be a significant and successful way of creating robust software. Linux provides a major instance where both a powerful standard and a working reference for implementation have appeared at the same time. Major companies, including Amazon.com and Google, have chosen Linux as the kernel for their software systems. Based on this kernel, these companies customize software applications to meet their particular business needs.

Dr. Varian added that a major challenge in developing open-source software is the threat of “forking” or “splintering.” Different branches of software can arise from modifications made by diverse developers in different parts of the world, and a central challenge for any open-source software project is to maintain an interchangeable and interoperable standard for use and distribution. Code forks increase adoption risk for users because the market may subsequently tip toward a different branch, and thus can diminish overall market size until adoption uncertainties are reduced. Modularity and a common designers’ etiquette, adherence to which is motivated by developers’ self-interest in avoiding the negative consequences of forking, can help overcome some of these coordination problems.

Should the building of source code by a public community be encouraged? Because source code is an incomplete representation of the information associated with a software system, some argue that giving it away is a good strategy. Interestingly, as Dr. Raduchel noted, open-source software has the potential to be much more reliable and secure than proprietary software.19 He added that the open-source software movement could also serve as an alternative and counterweight to monopoly proprietorship of code, such as by Microsoft, with the resulting competition possibly spurring better code writing.20 The experience of contributing to open source also provides important training in the art of software development, he added, helping to foster a highly specialized software labor force. Indeed, software design is a creative process at every level, from low-level code to overall system architecture. It is rarely routine, because routine activities in software are inevitably automated.

Dr. Varian suggested that software is most valuable when it can be combined, recombined, and built upon to produce a secure base upon which additional applications can in turn be built.21 The policy challenge, he noted, lies in ensuring the existence of incentives that sufficiently motivate individuals to develop robust basic software components through open-source coordination, while ensuring that, once they are built, they will be widely available at low cost so that future development is stimulated.

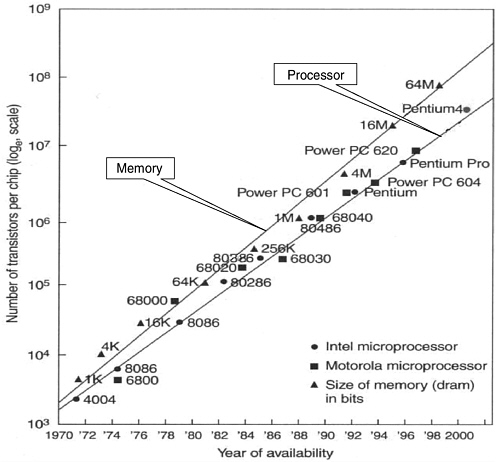

FIGURE 4 Attacks against code are growing.

SOURCE: Analysis by Symantec Security Response using data from Symantec, IDC & ICSA.

Software Vulnerability and Security—A Trillion Dollar Problem?

As software has become more complex, with a striking rise in the lines of code over the past decade, attacks against that code—in the form of both network intrusions and infection attempts—have also grown substantially22 (See Figure 4).

The perniciousness of the attacks is also on the rise. The Mydoom attack of January 28, 2004, for example, did more than infect individuals’ computers and cause acute but short-lived inconvenience. It also reset the machines’ settings, leaving ports and doorways open to future attacks.

The economic impact of such attacks is increasingly significant. According to Kenneth Walker of Sonic Wall, Mydoom and its variants infected up to half a million computers. The direct impact of the worm includes lost productivity owing to workers’ inability to access their machines, estimated at between $500 and $1,000 per machine, and the cost of technician time to fix the damage. According to one estimate cited by Mr. Walker, Mydoom’s global impact by February 1, 2004, alone was $38.5 billion. He added that the E-Commerce Times had estimated the global impact of worms and viruses in 2003 at over one trillion dollars.
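Multiplying the two figures Mr. Walker cited gives a rough sense of scale for the lost-productivity component alone, assuming the upper bound of half a million infected machines:

```python
infected_machines = 500_000          # "up to half a million computers"
loss_per_machine = (500, 1_000)      # lost productivity per machine, dollars

low, high = (infected_machines * loss for loss in loss_per_machine)
print(f"lost productivity: ${low / 1e6:,.0f} million to ${high / 1e6:,.0f} million")
```

The result, $250 million to $500 million, covers only per-machine productivity losses and omits technician time; the $38.5 billion global estimate is far larger, and the text does not break down what it includes.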

To protect against such attacks, Mr. Walker advocated a system of layered security, analogous to the software stack, that would operate at the network gateway, at the servers, and at the applications that run on those servers. Such protection, which is not free, is another indirect cost of software vulnerability that is typically borne by the consumer.
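Layered security is often motivated by a simple probability argument: an attack succeeds only if it slips past every layer. The sketch below assumes, for illustration only, that layers fail independently, and it uses hypothetical per-layer miss rates; in practice failures are often correlated (a new worm may evade several layers at once), which shrinks the benefit.

```python
from math import prod


def breach_probability(miss_rates: list[float]) -> float:
    """Probability an attack gets past every layer, assuming each layer
    misses attacks independently with the given probability."""
    return prod(miss_rates)  # an empty list gives 1.0: no layers, no protection


# Hypothetical miss rates for the gateway, server, and application layers.
layers = {"gateway": 0.10, "server": 0.20, "application": 0.30}
print(breach_probability(list(layers.values())))  # about 0.006
print(breach_probability([layers["gateway"]]))    # 0.1 with the gateway alone
```

Under these assumptions three modest layers cut the chance of a successful attack from 10 percent to well under 1 percent, though each added layer also adds to the indirect cost described above.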

Enhancing Software Reliability

Acknowledging that software will never be error free and fully secure from attack or failure, Dr. Lam suggested that the real question is not whether these vulnerabilities can be eliminated but what incentives software makers face to develop software that is more reliable.

One factor affecting software reliability is the nature of market demand for software. Some consumers—those in the market for mass client software, for example—may look to snap up the latest product or upgrade and feature add-ons, placing less emphasis on reliability. By contrast, more reliable products can typically be found in markets where consumers are more discerning, such as in the market for servers.

Software reliability is also affected by the relative ease or difficulty of creating and using metrics to gauge quality. Maintaining valid metrics can be highly challenging given the rapidly evolving and technically complex nature of software. In practice, software engineers often rely on measurements of highly indirect surrogates for quality (relating to such variables as teams, people, organizations, and processes) as well as crude size measures (such as lines of code and raw defect counts).
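The crude size measures mentioned above are easy to compute, which is part of their appeal and part of their weakness. The sketch below shows one hypothetical way to count lines of code (skipping blank lines and whole-line comments) and to normalize a raw defect count per thousand lines; the helper names and counting rules are assumptions, and different rules give different counts for the same program.

```python
def count_loc(source: str, comment_prefix: str = "#") -> int:
    """Count non-blank lines that are not whole-line comments (one crude LOC rule)."""
    return sum(
        1
        for line in source.splitlines()
        if line.strip() and not line.strip().startswith(comment_prefix)
    )


def defects_per_kloc(defects: int, loc: int) -> float:
    """Raw defect count per thousand lines of code."""
    if loc <= 0:
        raise ValueError("loc must be positive")
    return defects / (loc / 1000)


sample = """
# add up the numbers 0..9
total = 0
for x in range(10):
    total += x

print(total)
"""

print(count_loc(sample))           # 4
print(defects_per_kloc(3, 1_500))  # 2.0
```

Neither number says anything about how hard the code was to write or how thoroughly it was tested, which is why such measures are at best indirect proxies for quality.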

Other factors that can affect software reliability include the current state of liability law and the unexpected and rapid development of a computer hacker culture, which has significantly raised the complexity of software and the threshold of software reliability. While for these and other reasons it is not realistic to expect a 100 percent correct program, Dr. Lam noted that the costs and consequences of this unreliability are often passed on to the consumer.

THE CHALLENGE OF MEASURING SOFTWARE

The unique nature of software poses challenges for national accountants who are interested in data that track software costs, aggregate investment in software, and software’s impact on the economy. This is important because over the past 5 years investment in software has been about 1.8 times as large as private fixed investment in computers and peripheral equipment, and was about one-fifth of all private fixed investment in equipment and software.23 Getting a good measure of this asset, however, is difficult because of the unique characteristics of software development and marketing: software is complex; the market for software is different from that of other goods; software can be easily duplicated, often at low cost; and the service life of software is often hard to anticipate.24 Even so, representatives from the BEA and the Organisation for Economic Co-operation and Development (OECD) described important progress that is being made in developing new accounting rules and surveys to determine where investment in software is going, how much software is being produced in the United States, how much is being imported, and how much the country is exporting.

Financial Reporting and Software Data

Much of our data about software comes from information that companies report to the Securities and Exchange Commission (SEC). These companies follow the accounting standards developed by the Financial Accounting Standards Board (FASB).25 According to Shelly Luisi of the SEC, the FASB developed these accounting standards with the investor, and not a national accountant, in mind. The Board’s mission, after all, is to provide the investor with unbiased information for use in making investment and credit decisions, rather than a global view of the industry’s role in the economy.

Outlining the evolution of the FASB’s standards on software, Ms. Luisi recounted that the FASB’s 1974 Statement of Financial Accounting Standards (FAS-2) provided the first standard for capitalizing software on corporate balance sheets. FAS-2 has since been developed through further interpretations and clarifications. FASB Interpretation No. 6, for instance, recognized the development of software as R&D and drew a line between software for sale and software for operations. In 1985, FAS-86 introduced the concept of technological feasibility, seeking to identify the point at which a software project under development qualifies as an asset and thus providing guidance on when the cost of software development can be capitalized. In 1998, Statement of Position 98-1 set a different threshold for capitalizing the cost of software for internal use: capitalization can begin in the design phase, once the preliminary project stage is completed and a company commits to the project. As a result of these accounting standards, she noted, software is included as property, plant, and equipment in most financial statements rather than as an intangible asset.

Given these accounting standards, how do software companies actually recognize and report their revenue? Speaking from the perspective of software companies, Greg Beams of Ernst & Young noted that while revenue from sales of prepackaged software is generally recognized at the time of sale, more complex software systems require recurring maintenance to fix bugs and to install upgrades, which complicates revenue reporting. Faced with these multiple deliverables, software companies come up against rules requiring that they allocate value to each deliverable and then recognize revenue in accordance with the requirements for that deliverable. How these rules are put into practice leads to wide differences in when, and how much, revenue is recognized by software companies, making it difficult to interpret the revenue numbers that a particular software firm reports.
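A stylized example shows why the timing of reported revenue can differ so much. The sketch below splits a hypothetical bundled contract (a license plus three years of maintenance) in proportion to each deliverable’s standalone value, recognizes the license at delivery, and spreads maintenance evenly. It is a simplified relative-value allocation for illustration, not a statement of the actual accounting rules, which impose specific requirements on how those values are determined; all names and amounts are hypothetical.

```python
def allocate(contract_price: float, standalone: dict[str, float]) -> dict[str, float]:
    """Split a bundled price across deliverables in proportion to standalone value."""
    total = sum(standalone.values())
    return {item: contract_price * value / total for item, value in standalone.items()}


def revenue_by_year(allocation: dict[str, float], maintenance_years: int) -> list[float]:
    """Recognize the license in year 1 and spread maintenance evenly over its term."""
    yearly_maintenance = allocation["maintenance"] / maintenance_years
    schedule = [yearly_maintenance] * maintenance_years
    schedule[0] += allocation["license"]
    return schedule


# Hypothetical bundle sold at a discount to the sum of standalone values.
alloc = allocate(90_000, {"license": 80_000, "maintenance": 40_000})
print(alloc)                      # {'license': 60000.0, 'maintenance': 30000.0}
print(revenue_by_year(alloc, 3))  # [70000.0, 10000.0, 10000.0]
```

The same $90,000 contract produces $70,000 of revenue in the first year and $10,000 in each later year; a different allocation or recognition policy would shift those amounts, which is one reason reported revenue is hard to compare across firms.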

Echoing Ms. Luisi’s caveat, Mr. Beams noted that information published in software vendors’ financial statements is useful mainly to the shareholder. He acknowledged that detail is often lacking in these reports, and that distinguishing one software company’s reporting from another and aggregating such information so that it tells a meaningful story can be extremely challenging.

Gauging Private Fixed Software Investment

Although the computer entered into commercial use some four decades earlier, the BEA has recognized software as a capital investment (rather than as an intermediate expense) only since 1999. Nevertheless, Dr. Jorgenson noted that there has been much progress since then, with improved data to be available soon.

Before reporting on this progress, David Wasshausen of the BEA identified three types of software used in national accounts. Prepackaged (or shrink-wrapped) software is packaged, mass-produced software. It is available off the shelf, though increasingly replaced by online sales and downloads over the Internet. In 2003, the BEA placed business purchases of prepackaged software at around $50 billion. Custom software refers to large software systems that perform business functions such as database management, human resource management, and cost accounting.26 In 2003, the BEA estimated business purchases of custom software at almost $60 billion. Finally, own-account software refers to software systems built for a unique purpose, generally a large project such as an airline reservation system or a credit card billing system. In 2003, the BEA estimated business purchases of own-account software at about $75 billion.
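Adding the three approximate 2003 figures provides a quick consistency check against the market shares cited earlier in this summary:

```python
# Approximate BEA estimates of 2003 business software purchases, billions of dollars.
purchases = {"prepackaged": 50, "custom": 60, "own-account": 75}

total = sum(purchases.values())
for kind, amount in purchases.items():
    print(f"{kind:>12}: ${amount}B ({amount / total:.0%} of total)")
print(f"{'total':>12}: ${total}B")
```

Prepackaged software comes to about 27 percent of the roughly $185 billion total, consistent with the 25 to 30 percent share of the software market cited earlier. Because the inputs are rounded, the shares are approximate.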

Dr. Wasshausen added that the BEA uses the “commodity flow” technique to measure prepackaged and custom software. Beginning with total receipts, the BEA adds imports and subtracts exports, which leaves the total available domestic supply. From that figure, the BEA subtracts household and government purchases to come up with an estimate for aggregate business investment in software.27 By contrast, BEA calculates own-account software as the sum of production costs, including compensation for programmers and systems analysts and such intermediate inputs as overhead, electricity, rent, and office space.28
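The two estimation approaches can be written as simple accounting identities. The sketch below follows the steps described in the text; all figures are hypothetical, the function names are illustrative, and actual BEA estimates involve further adjustments (for example, separating out purchases for intermediate consumption).

```python
def commodity_flow_business_investment(receipts: float, imports: float, exports: float,
                                       household: float, government: float) -> float:
    """Business software investment via the commodity-flow steps described in the text."""
    domestic_supply = receipts + imports - exports  # total available domestic supply
    return domestic_supply - household - government


def own_account_investment(compensation: float, intermediate_inputs: dict[str, float]) -> float:
    """Own-account software valued at the sum of its production costs."""
    return compensation + sum(intermediate_inputs.values())


# Hypothetical figures in billions of dollars, for illustration only.
print(commodity_flow_business_investment(
    receipts=100, imports=10, exports=15, household=8, government=12))  # 75
print(own_account_investment(
    compensation=50,
    intermediate_inputs={"overhead": 10, "electricity": 1, "rent and office space": 4}))  # 65
```

The contrast matters for measurement: the commodity-flow estimate starts from what sellers report receiving, while the own-account estimate has no market transaction to observe and must be built up from costs.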

The BEA is also striving to improve the quality of its estimates, noted Dr. Wasshausen. While the BEA currently bases its estimates for prepackaged and custom software on trended earnings data from corporate reports to the SEC, Dr. Wasshausen hoped that the BEA would soon benefit from Census Bureau data that capture receipts from both prepackaged and custom software companies through quarterly surveys. Among recent BEA improvements, Dr. Wasshausen cited an expansion of the definitions of prepackaged and custom software imports and exports, and better estimates of how much of the total prepackaged and custom software purchased in the United States was for intermediate consumption. The BEA, he said, was also looking forward to an improved Capital Expenditure Survey by the Census Bureau.

Dirk Pilat of the OECD noted that methods for estimating software investment have been inconsistent across the countries of the OECD. One problem contributing to the variation in measures of software investment is that the computer services industry represents a heterogeneous range of activities, including not only software production, but also such things as consulting services. National accountants have had differing methodological approaches (for example, on criteria determining what should be capitalized) leading to differences between survey data on software investment and official measures of software investments as they show up in national accounts.

Attempting to mend this disarray, Dr. Pilat noted that the OECD Eurostat Task Force has published its recommendations on the use of the commodity flow model and on how to treat own-account software in different countries.29 He noted that steps were underway in OECD countries to harmonize statistical practices and that the OECD would monitor the implementation of the Task Force recommendations. This effort would then make international comparisons possible, resulting in an improvement in our ability to ascertain what was moving where—the “missing link” in addressing the issue of offshore software production.

Despite the comprehensive improvements in the measurement of software undertaken since 1999, Dr. Wasshausen noted that accurate software measurement continued to pose severe challenges for national accountants simply because software is such a rapidly changing field. He cited in this regard the rise of demand computing, open-source code development, and overseas outsourcing, which create new concepts, categories, and measurement challenges.30 Characterizing attempts made so far to measure the New Economy as “piecemeal” (“we are trying to get the best price index for software, the best price index for hardware, the best price index for LAN equipment routers, switches, and hubs”), he suggested that a single comprehensive measure might better capture the value of hardware, software, and communications equipment in the national accounts. Indeed, information technology may be thought of as a “package,” combining hardware, software, and business-service applications.

Box B: Software is “the medium through which information technology expresses itself,” says William Raduchel. Most economic models miscast software as a machine, a perception dating to some 40 years ago, when software was a minor portion of the total cost of a computer system. The economist’s challenge, according to Dr. Raduchel, is that software is not a factor of production like capital and labor but actually embodies the production function, for which no good measurement system exists.
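Dr. Wasshausen’s suggestion of a single comprehensive measure for the IT “package” raises the question of how separate component price indexes would be combined. One standard technique, named here as an illustration rather than as the approach he proposed, is the Törnqvist index, which weights each component’s log price change by its average expenditure share across the two periods. The component prices and shares below are hypothetical.

```python
from math import exp, log


def tornqvist(p0, p1, s0, s1):
    """Tornqvist price index between periods 0 and 1.

    p0, p1: price (or component price index) by component in each period.
    s0, s1: expenditure share by component in each period (each summing to 1).
    Returns the aggregate price relative P1/P0.
    """
    log_change = sum(0.5 * (s0[k] + s1[k]) * log(p1[k] / p0[k]) for k in p0)
    return exp(log_change)


# Hypothetical component prices and spending shares for an IT "package".
p0 = {"hardware": 1.00, "software": 1.00, "communications": 1.00}
p1 = {"hardware": 0.85, "software": 0.90, "communications": 0.95}
s0 = {"hardware": 0.40, "software": 0.40, "communications": 0.20}
s1 = {"hardware": 0.30, "software": 0.50, "communications": 0.20}

print(f"aggregate IT price change: {tornqvist(p0, p1, s0, s1) - 1:.1%}")
```

Because the average weights favor software in this made-up example, software’s price change counts for more than hardware’s; the combined package price falls by about 11 percent.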

Tracking Software Price Changes

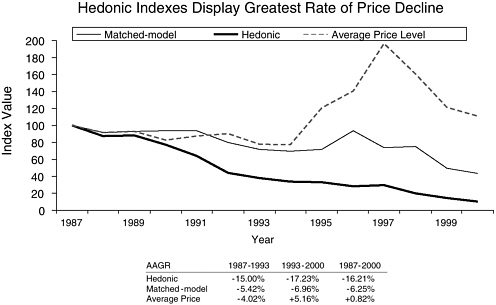

Another challenge in the economics of software is tracking price changes. Incorporating computer science and computer engineering into the economics of software, Alan White and Ernst Berndt presented their work on estimating price changes for prepackaged software, based on their assessment of Microsoft Corporation data.31 Dr. White noted several important challenges facing those seeking to construct measures of price and price change. One challenge lies in ascertaining which price to measure, since software products may be sold as full

FIGURE 5 Quality-adjusted prices for operating systems have fallen, 1987-2000.

SOURCE: Jaison R. Abel, Ernst R. Berndt, Cory W. Monroe, and Alan G. White, “Hedonic Price Indexes for Operating Systems and Productivity Suite PC Software,” draft working paper, 2004.

versions or as upgrades, stand-alones, or suites. An investigator has also to determine what the unit of output is, how many licenses there are, and when price is actually being measured. Another key issue concerns how the quality of software has changed over time and how that should be incorporated into price measures.
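A standard way to build quality into a price index is a time-dummy hedonic regression. The specification below is a generic textbook form offered only as an illustration. It is not necessarily the specification used by White and Berndt. The characteristics $z_{ik}$ of product $i$ are assumed for illustration, for example indicators for a graphical interface, plug-and-play support, or connectivity between suite components. $\mathbf{1}[t = s]$ equals 1 when the observation falls in period $s$ and 0 otherwise, and period 0 is the base.

```latex
\ln p_{it} = \alpha + \sum_{k=1}^{K} \beta_k z_{ik} + \sum_{s=1}^{T} \delta_s \, \mathbf{1}[t = s] + \varepsilon_{it}

I_t = \exp(\hat{\delta}_t), \qquad I_0 = 1, \qquad
\bar{g} = \left( \frac{I_T}{I_0} \right)^{1/T} - 1 = \exp\!\left( \frac{\hat{\delta}_T}{T} \right) - 1
```

The $\beta_k z_{ik}$ terms hold measured characteristics constant. As a result, $\hat{\delta}_t$ estimates the change in log price from the base period to period $t$ for a product of fixed quality. $\bar{g}$ is the implied average rate of change per period, which is per year if periods are years.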

Surveying the types of quality change that might be taken into account, Dr. Berndt gave the examples of an improved graphical interface and "plug-n-play," as well as increased connectivity between the components of a software suite. In their study, Drs. White and Berndt compared the average price level with quality-adjusted price levels. The average price level was computed as a simple average of the price per operating system. The quality-adjusted levels were obtained using hedonic and matched-model econometric techniques. The average price, which does not correct for quality changes, grew by about 1 percent a year. By contrast, the matched-model index showed a price decline of around 6 percent a year, and the hedonic calculation showed a much larger decline of around 16 percent a year.
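The gap between the simple-average and matched-model results can be reproduced on a toy example. The sketch below uses made-up prices, not the Microsoft data analyzed by White and Berndt, and the function names are hypothetical. It assumes a chained Jevons (geometric-mean) form for the matched-model index. That is one standard choice and not necessarily the authors' exact formula. In each period the existing products get cheaper while a new, pricier product enters. The simple average therefore rises even though every matched price falls.

```python
import math
from typing import Dict, List

# Prices for one period: product name -> list price (hypothetical dollars).
Prices = Dict[str, float]


def simple_average_index(periods: List[Prices]) -> List[float]:
    """Unweighted mean price of whatever is on sale each period, relative to period 0.

    No quality adjustment: a new, pricier product raises the mean even if
    every product already on the market is getting cheaper.
    """
    means = [sum(p.values()) / len(p) for p in periods]
    return [m / means[0] for m in means]


def matched_model_index(periods: List[Prices]) -> List[float]:
    """Chained matched-model index in Jevons (geometric-mean) form.

    For each pair of adjacent periods, only products sold in both are compared.
    The link is the geometric mean of their price relatives, and the links are
    multiplied together to form the chained index.
    """
    index = [1.0]
    for prev, curr in zip(periods, periods[1:]):
        matched = prev.keys() & curr.keys()
        if not matched:
            raise ValueError("no products in common between adjacent periods")
        mean_log_relative = sum(math.log(curr[k] / prev[k]) for k in matched) / len(matched)
        index.append(index[-1] * math.exp(mean_log_relative))
    return index


def average_annual_rate(index: List[float]) -> float:
    """Compound average rate of change per period (per year if periods are years)."""
    steps = len(index) - 1
    return (index[-1] / index[0]) ** (1.0 / steps) - 1.0


# Hypothetical data: existing products get cheaper each period, a new and
# pricier product enters, and product "A" exits after period 1.
periods: List[Prices] = [
    {"A": 100.0, "B": 150.0},
    {"A": 90.0, "B": 135.0, "C": 200.0},
    {"B": 120.0, "C": 180.0, "D": 250.0},
]

avg = simple_average_index(periods)
mm = matched_model_index(periods)
print([round(x, 3) for x in avg], f"{average_annual_rate(avg):+.1%}")  # [1.0, 1.133, 1.467] +21.1%
print([round(x, 3) for x in mm], f"{average_annual_rate(mm):+.1%}")    # [1.0, 0.9, 0.805] -10.3%
```

The matched-model index drops unmatched products, so it ignores the entry of pricier, higher-quality versions. The cost of this design is that any quality gain embodied in those new versions is never priced at all. This is one reason hedonic methods can show larger declines.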

These quality-adjusted price declines for software operating systems, shown in Figure 5, support the general thesis of the New Economy. On this view, improved and cheaper information technologies contributed to greater information technology adoption, leading to the productivity improvements characteristic of the New Economy.32

THE CHALLENGES OF THE SOFTWARE LABOR MARKET

A Perspective from Google

Wayne Rosing of Google said that about 40 percent of the company's thousand-plus employees were software engineers, which contributed to a company culture of "designing things." He noted that Google is "working on some of the hardest problems in computer science…and that someday, anyone will be able to ask a question of Google and we'll give a definitive answer with a great deal of background to back up that answer." To meet this goal, Dr. Rosing noted that Google needed to pull together "the best minds on the planet and get them working on these problems."

Google, he noted, is highly selective. It hired around 300 new workers in 2003 from an initial pool of 35,000 resumes sent in from all over the world. He attributed this strong response to Google's reputation as a good place to work. Google, in turn, looked for applicants with high "raw intelligence" and strong skills in computer algorithms and engineering. It also sought the high degree of self-motivation and self-management needed to fit in with Google's corporate culture.

Google's outstanding problem, Dr. Rosing lamented, was that "there aren't enough good people" available. Too few qualified computer science graduates were coming out of American schools, he said. The United States remained one of the world's top areas for computer-science education and produced very good graduates. Even so, not enough people were graduating at the master's or doctoral level to satisfy the needs of the U.S. economy, especially those of innovative firms such as Google. At the same time, Google's continuing leadership required capable employees from around the world. These employees could draw on advances in technology and provide the language-specific skills needed to serve various national markets. As a result, Google hires on a global basis.

A contributing factor to Google's need to hire engineers outside the country, he noted, was the impact of U.S. visa restrictions. He pointed out that the H1-B quota for 2004 was capped at 65,000, down from approximately 225,000 in previous years. As a result, Google was unable to hire people who had been educated in the United States but could not stay on and work for lack of a visa. Such policies, Dr. Rosing said, limited the growth of companies like Google within the nation's borders, which did not seem to make policy sense.

The Offshore Outsourcing Phenomenon

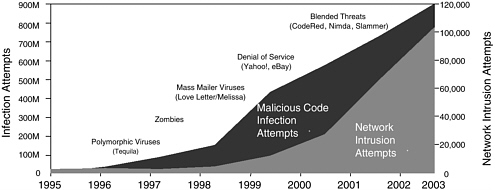

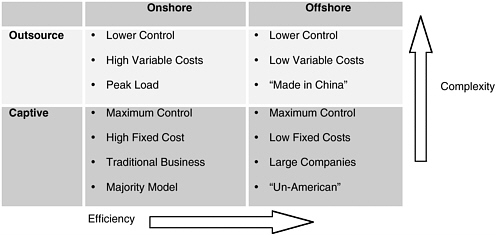

Complexity and efficiency are the drivers of offshore outsourcing, according to Jack Harding of eSilicon, a relatively new firm that produces custom-made microchips. Mr. Harding noted that as manufacturing technology grows more complex, a firm faces a choice. It must either stay ahead of the efficiency curve through large recapitalization investments or "step aside and let somebody else do that part of the work." This decision to move from captive production to outsourced production, he said, can then lead to offshore outsourcing, or "offshoring," when a company locates a cheaper supplier of the same or better quality in another country.

FIGURE 6 The Offshore Outsourcing Matrix.

Displaying an outsourcing-offshoring matrix (Figure 6), Mr. Harding noted that much of the current "political pushback" centers on the "Captive-Offshoring" quadrant. In this quadrant, American firms like Google or Oracle open production facilities overseas, and the pushback concerns whether it is "un-American" to take jobs abroad.33 Activity that could be placed in the "Outsource-Offshore" box, meanwhile, was marked by a trade-off. Diminished corporate control had to be weighed against very low variable costs combined with adequate technical expertise.34

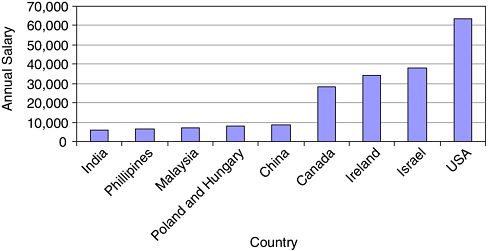

Saving money by moving production offshore is a compelling business motive, and it has rapidly become "best practice" for new companies. There might be exceptions to the rule. Even so, Mr. Harding noted that a software company seeking venture money in Silicon Valley without a plan to base a development team in India would very likely be disqualified. It would not be seen as competitive if it intended to hire workers at $125,000 a year in Silicon Valley when comparable workers were available for $25,000 a year in Bangalore. (See Figure 7, cited by William Bonvillian, for a comparison of annual salaries for software programmers.) Heeding this logic, almost every software firm has moved or is in the process of moving its development work to locations like India, observed Mr. Harding. The strength of this business logic, he said, made it imperative that policy-makers in the United States understand that offshoring is irreversible. They must also learn how to deal with it constructively.

FIGURE 7 Average base annual salary for a programmer in various countries.

SOURCE: Computerworld, April 28, 2003.

How big is the offshoring phenomenon? Despite much discussion, some of it heated, its scope is poorly documented. As Ronil Hira of the Rochester Institute of Technology pointed out, the lack of data meant that no one could say with precision how much work had actually moved offshore. This is clearly a major problem from a policy perspective.35 He noted, however, that computer hardware engineers and electrical and electronics engineers could clearly feel the effects of these shifts. Their ranks had faced record levels of unemployment in 2003.

SUSTAINING THE NEW ECONOMY: THE IMPACT OF OFFSHORING

What is the impact of the offshoring phenomenon on the United States, and what policy conclusions can we draw from this assessment? Conference participants offered differing, often impassioned views on this question. Presenters did not agree with one another even on seemingly simple issues. They disagreed on whether the H1-B quota is too high or too low, and on whether the level of H1-B visas is causing U.S. companies to go abroad for talent. They also disagreed on whether there is a shortage of talent within U.S. borders, and on whether there is a short-term oversupply of programmers in the present labor market.

Conference participants, including Ronil Hira and William Bonvillian, highlighted two schools of thought on the impact of offshore outsourcing—both of which share the disadvantage of inadequate data support. Whereas some who take a macroeconomic perspective believe that offshoring will yield lower product and service costs and create new markets abroad fueled by improved local living standards, others, including some leading industrialists who understand the micro implications, have taken the unusual step of arguing that offshoring can erode the United States’ technological competitive advantage and have urged constructive policy countermeasures.

Among those with a more macro outlook, noted Dr. Hira, is Catherine Mann of the Institute for International Economics, who has argued that "just as for IT hardware, globally integrated production of IT software and services will reduce these prices and make tailoring of business-specific packages affordable, which will promote further diffusion of IT use and transformation throughout the U.S. economy."36 Cheaper information technologies will lead to wider diffusion of information technologies, she notes, sustaining productivity enhancement and economic growth.37 Dr. Mann acknowledges that some jobs will go abroad as production of software and services moves offshore. However, she believes that broader diffusion of information technologies throughout the economy will lead to an even greater demand for workers with information technology skills.38

Box C: "Outsourcing is just a new way of doing international trade. More things are tradable than were in the past and that's a good thing…. I think that outsourcing is a growing phenomenon, but it's something that we should realize is probably a plus for the economy in the long run." N. Gregory Mankiw[a]

"When you look at the software industry, the market share trend of the U.S.-based companies is heading down and the market share of the leading foreign companies is heading up. This x-curve mirrors the development and evolution of so many industries that it would be a miracle if it didn't happen in the same way in the IT service industry. That miracle may not be there." Andy Grove

Dr. Hira observed that Dr. Mann had based her optimism in part on the unrevised Bureau of Labor Statistics (BLS) occupation projection data. He called for reinterpreting her study in light of more recent data. He also disagreed with Dr. Mann's contention that lower IT services costs were the only explanation for the rising demand for IT products or for the high demand for IT labor witnessed in the 1990s. He cited several technological paradigm shifts as contributing factors. These included the growth of the Internet, object-oriented programming, and the move from mainframe to client-server architecture.

Dr. Hira also cited a recent study by McKinsey and Company that finds, with similar optimism, that offshoring can be a "win-win" proposition. The two sides of this proposition are the United States and countries like India that are major loci of offshore outsourcing for software and services production.39 Dr. Hira noted, however, that the McKinsey study relied on optimistic assumptions that have not held up against recent job market realities. McKinsey found that India gains a net benefit of at least 33 cents from every dollar the United States sends offshore. America, for its part, achieves a net benefit of at least $1.13 for every dollar spent. The model apparently assumes, however, that India buys the related products from the United States.

These more sanguine economic scenarios must be balanced against the lessons of modern growth theorists, warned William Bonvillian in his conference presentation. He alluded to Clayton Christensen's observation that successful companies tend to swim upstream, pursuing higher-end, higher-margin customers with better technology and better products. Mr. Bonvillian noted that nations can follow a similar path up the value chain.40 Low-end entry and capability, made possible by outsourcing these functions abroad, he noted, can fuel the desire and capacity of other nations to move into higher-end markets.

Box D: Information technology and software production are not commodities that the United States can afford to cede to overseas suppliers. Rather, as William Raduchel noted in his workshop presentation, they are part of the economy's production function (see Box B). This characteristic means that a loss of U.S. leadership in information technology and software will damage, in an ongoing way, the nation's future ability to compete in diverse industries, not least the information technology industry. The nation might fail to develop adequate policies to sustain its leadership in information technology. The collateral consequences of such a failure are likely to extend to a wide variety of sectors, from financial services and health care to telecom and automobiles. These consequences would have critical implications for our nation's security and the well-being of Americans.

Mr. Bonvillian acknowledged that a lack of data makes it impossible to track with any precision the activity of the many companies engaged in offshore outsourcing. Even so, he noted that a major shift was underway. The types of jobs subject to offshoring are increasingly moving away from low-end services. These include call centers, help desks, data entry, accounting, telemarketing, and the processing of insurance claims, credit cards, and home loans. Offshoring is moving toward higher-technology services where the United States currently enjoys a comparative advantage. These include software and microchip design, business consulting, engineering, architecture, statistical analysis, radiology, and health care.

Another concern associated with the current trend in offshore outsourcing is the future of innovation and manufacturing in the United States. Citing Michael Porter and reflecting on Intel Chairman Andy Grove's concerns, Mr. Bonvillian noted that business leaders look for locations that gather industry-specific resources together in one "cluster."41 Advanced technology involves a tremendous skill set, argued Mr. Bonvillian. Losing parts of that manufacturing to a foreign country will therefore help develop technology clusters abroad while hampering the ability of such clusters to thrive in the United States. These effects are already observable in semiconductor manufacturing, he added, where research and development is moving abroad to be close to the locus of manufacturing.42 This trend in hardware, now followed by software, will erode the United States' comparative advantage in high-technology innovation and manufacture, he concluded.

The impact of these migrations is likely to be amplified. Yielding market leadership in software capability can cost the United States its software advantage. Foreign nations could then leverage their relative strength in software into leadership in sectors such as financial services, health care, and telecom. This shift could have adverse impacts on national security and economic growth.

Citing John Zysman, Mr. Bonvillian pointed out that "manufacturing matters," even in the Information Age. According to Dr. Zysman, advanced mechanisms for production and the accompanying jobs are a strategic asset. Their location determines whether a country is an attractive place to innovate, invest, and manufacture.43 For the United States, noted Mr. Bonvillian, offshoring carries several economic and strategic risks. These include a loss of in-house expertise and future talent, dependence on other countries for key technologies, and increased vulnerability to political and financial instabilities abroad.

With data scarce and concern "enormous" at the time of this conference, Mr. Bonvillian reminded the group that political concerns can easily outstrip economic analysis. He added that the many bills introduced in Congress seemed to reflect a move toward a protectionist outlook.44 Once the initial step of collecting data had been taken, he noted, lawmakers would be obliged to address widespread public concerns on this issue. Near-term responses, he noted, include programs to retrain workers and to provide job-loss insurance. They also include making additional venture financing available for innovative startups and pursuing a more aggressive trade policy. Longer-term responses, he added, must focus on improving the nation's innovative capacity. This means investing in science and engineering education and improving the broadband infrastructure.

Box E: The symposium generated a new understanding of the uniqueness and complexity of software and of the ecosystem that builds, maintains, and manages it. Drawing on this understanding, Dr. Raduchel asked each member of the final Participants' Roundtable to identify key policy issues that need to be pursued. Drawing on the experience of the semiconductor industry, Dr. Flamm noted that it is best to look ahead to the future of the industry rather than back. He urged that the nation "invest in the things that our new competitors invest in," especially education. Dr. Rosing likewise pointed out the importance of lifelong learning. He observed that the failure of many individuals to stay current was a major problem facing the United States labor force. What the country needed, he said, was a system that created extraordinary incentives for people to take charge of their own careers and their own marketability. Mr. Socas noted that the debate over software offshoring was not the same as a debate over the merits of free trade. The factors that give one country a relative competitive advantage over another, he explained, are no longer tied to a physical locus. Models of international trade and systems of national accounting are based on the idea of the nation-state. Calling it the central policy question of our time, he wondered whether these models remain valid in a world where companies increasingly take a global perspective. The current policy issue, he concluded, concerns giving American workers the skills that allow them to continue to command high wages and opportunities. Dr. Hira also observed that the offshoring issue was not an ideological debate between free trade and protectionism. He said that "we need to think about how to go about making software a viable profession and career for people in America."

What is required, in the final analysis, is a constructive policy approach rather than name-calling, noted Dr. Hira. He pointed out that it was important to think through and debate all possible options concerning offshoring. Some options should not be tarred with a "protectionist" or other unacceptable label, "squelching them before they come up for discussion." Progress on better data is needed if such constructive policy approaches are to be pursued.

PROGRESS ON BETTER DATA

Drawing the conference to a close, Dr. Jorgenson remarked that economists had discovered the subject of measuring and sustaining the New Economy only in 1999. Even so, much progress had already been made toward developing the knowledge and data needed to inform policy making. This conference, he noted, had advanced our understanding of the nature of software and the role it plays in the economy. It had also highlighted pathbreaking work by economists like Dr. Varian on the economics of open-source software. Drs. Berndt and White had shown how to measure prepackaged software prices while taking quality changes into account. Presentations by Mr. Beams and Ms. Luisi had also revealed that experts had thought through the measurement issues concerning software installation, business reorganization, and process engineering. They had also reached agreement on new accounting rules.

"Wait a minute! We discovered this problem in 1999, and only five years later, we're getting the data." Dale Jorgenson

As Dr. Jorgenson further noted, the Bureau of Economic Analysis had led the way in developing new methodologies. It would soon be receiving new survey data from the Census Bureau on how much software was being produced in the United States, how much was being imported, and how much the country was exporting. As national accountants around the world adopt these standards, international comparisons will become possible, he added. We will then be able to ascertain what is moving where, providing the missing link in the offshore outsourcing puzzle.