7

Realizing the Opportunities

Based upon the science reviewed in the previous chapters, the committee has identified special opportunities at the intersection of astronomy and physics in the form of eleven key questions that are of deep interest and are ripe for answering.

Some of the critical work needed to address these 11 questions is part of ongoing programs in astronomy and in nuclear, particle, and gravitational physics. Other needed work is spelled out in the most recent astronomy decadal survey¹ or has been recommended by the DOE/NSF High Energy Physics Advisory Panel or the DOE/NSF Nuclear Science Advisory Committee.

The committee’s recommendations, which are presented at the end of this chapter, are meant to complement and supplement the programs in astronomy and physics already in place or recommended, to ensure that the great opportunities before us are realized. They are in no way intended to override the advice of the groups mentioned above.

In the section entitled “The Eleven Questions,” the committee presents the 11 questions and summarizes the type of work needed to answer each of them. The detailed strategy for realizing these scientific opportunities, which the committee was charged to develop, is laid out in seven recommendations in the section “Recommendations.” The remaining sections justify the recommendations and tie them to the science questions.

The committee’s seven recommendations do not correspond simply to the questions; the interconnectedness of the science precluded such a mapping. Some of the recommended projects address more than one science question, while some of the questions have no clear connection to the recommendations, although programs already in place or recommended by other NRC committees or advisory groups will address them. The committee calls for three new initiatives: an experiment to map the polarization of the cosmic microwave background, a wide-field space telescope, and a deep underground laboratory. It also adds its support to several initiatives that were previously proposed or already set in place, and it addresses structural issues.

THE ELEVEN QUESTIONS

What Is Dark Matter?

Dark matter dominates the matter in the universe, but questions remain: How much dark matter is there? Where is it? What is it? Of these, the last is the most fundamental. The questions concerning dark matter can be answered by a combination of astronomical observations and physics experiments. On small astronomical scales, the quantity and location of dark matter can be studied through its strong gravitational lensing of light from distant bright objects and through the distribution and motions of galaxies and hot gas under its gravitational influence. These effects can be studied using ground-based and space-based optical and infrared telescopes and space-based x-ray telescopes. On larger scales, optical and infrared wide-field survey telescopes can trace the matter distribution via weak gravitational lensing. (Strong gravitational lensing produces multiple images of the lensed objects, while weak gravitational lensing simply distorts the image of the lensed object; see Figure 5.6.) The distribution of dark matter on large scales can also be measured by studying motions of galaxies relative to the cosmic expansion. While these observations will measure the quantity and location of dark matter, the ultimate determination of its nature will almost certainly depend on the direct detection of dark matter particles. Ongoing experiments to detect dark matter particles in our Milky Way, such as the Cryogenic Dark Matter Search II (CDMS II) and the US Axion Experiment, future dark matter experiments in underground laboratories, and accelerator searches for supersymmetric particles at the Fermilab Tevatron or the CERN LHC are all critical. Elements of the program fall within the purview of each of the three funding agencies, DOE, NASA, and NSF; coordination will be needed to ensure the most effective overall program.
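To make the strong-lensing scale concrete, the sketch below computes the Einstein radius of an idealized point-mass lens, roughly the angular scale on which multiple images form. It assumes a point mass and hypothetical distances (1 Gpc to the lens, 2 Gpc to the source, 1 Gpc between them) chosen only for illustration; real clusters have extended mass profiles, so this is an order-of-magnitude sketch, not a model of any particular observation.

```python
import math

# Physical constants in SI units
G = 6.674e-11          # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8            # speed of light, m/s
M_SUN = 1.989e30       # solar mass, kg
GPC = 3.0857e25        # one gigaparsec, m
ARCSEC_PER_RAD = 180.0 / math.pi * 3600.0


def einstein_radius_arcsec(mass_kg, d_lens, d_source, d_lens_source):
    """Einstein radius of a point-mass lens, in arcseconds.

    theta_E = sqrt( (4 G M / c^2) * D_ls / (D_l * D_s) ),
    where the D's are angular-diameter distances in meters.
    """
    theta_rad = math.sqrt(4.0 * G * mass_kg / C**2 * d_lens_source / (d_lens * d_source))
    return theta_rad * ARCSEC_PER_RAD


# Hypothetical cluster-scale lens of 1e15 solar masses (illustrative distances only).
theta = einstein_radius_arcsec(1e15 * M_SUN, 1.0 * GPC, 2.0 * GPC, 1.0 * GPC)
print(f"Einstein radius ~ {theta:.0f} arcsec")
```

Sources lying well outside this angular radius are only weakly distorted, which is the regime that wide-field weak-lensing surveys exploit statistically.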

What Is the Nature of the Dark Energy?

There is strong evidence from the study of high-redshift supernovae that the expansion of the universe is accelerating. Fluctuations in the temperature of the CMB indicate that the universe is flat, but the amount of matter is insufficient (by about a factor of 3) to account for this flatness. All this points to the presence of a significant dark energy component, perhaps in the form of a cosmological constant, both to make up the deficit and, through its repulsive gravitational effects, to cause a universal acceleration. This mysterious energy form controls the destiny of the universe and could shed light on the quantum nature of gravity. Because of its diffuse nature, the best methods to probe its properties rely upon its effect on the expansion rate of the universe and the growth of structure in the universe. The use of high-redshift (z ~ 0.5 to 1.8) supernovae as cosmic mileposts led to the discovery of the cosmic speed-up, and supernovae hold great promise for shedding light on the nature of the dark energy. Realizing that promise will require a new class of wide-field telescopes to discover and follow up thousands of supernovae, as well as a better understanding of type Ia supernovae to establish that they really are standard candles. In addition, clusters of galaxies can be detected out to redshifts as large as 2 or 3 through x-ray surveys, through large-area radio and millimeter-wave surveys using the Sunyaev-Zel’dovich effect, and through gravitational lensing. Future x-ray missions will be able to determine the redshifts and masses of these clusters. High-redshift supernovae, counts of galaxy clusters, weak gravitational lensing, and the microwave background all provide complementary information about the existence and properties of dark energy. NASA and NSF already have programs and special expertise in parts of this science through their traditional roles in space- and ground-based astronomy, while DOE has made contributions in areas such as CCD detector development. Again, interagency cooperation and coordination will be needed to define and manage this research optimally.

How Did the Universe Begin?

The inflationary paradigm, that the very early universe underwent a very large and rapid expansion, is now supported by observations of tiny fluctuations in the intensity of the CMB. The exact cause of inflation is still unknown. Inflation leaves a telltale signature of gravitational waves, which can be used to test the theory and distinguish between different models of inflation. Direct detection of the gravitational radiation from inflation might be possible in the future with very-long-baseline, space-based laser interferometer gravitational-wave detectors. A promising shorter-term approach is to search for the signature of these gravitational waves in the polarized radiation from the CMB. If the relevant polarization signals are strong enough, they may be detected by the current generation of balloon-borne and satellite experiments, such as MAP, which is now taking data, and the European Planck satellite, planned for launch late in this decade. However, it is likely that a more sensitive satellite mission devoted to polarization measurements will be required. Support for detector development is critical to realizing such a mission. NSF, NASA, and DOE have already played important roles in CMB science, and their cooperation in the future will be essential.

Did Einstein Have the Last Word on Gravity?

Although general relativity has been tested over a range of length scales and physical conditions, it has not been tested in the extreme conditions near black holes. Its predicted gravitational waves have been indirectly observed, but not directly detected and studied in detail. Gravity has not been unified with the other forces, nor has Einstein’s theory been generalized to include quantum effects. A host of experiments are now probing possible effects arising from the unification of general relativity with other forces, from laboratory-scale precision experiments to test the principle of equivalence and the force law of gravity to the search for the production of black holes at accelerators. Space experiments envisioned in NASA’s Beyond Einstein plan will further test general relativity. Constellation-X, a high-resolution x-ray spectroscopic mission, will be able to probe the regions near the event horizons of black holes by measuring the red- and blueshifts of spectral lines emitted by gas accreting onto the black holes. LISA, a space-based laser interferometer gravitational-wave observatory, will be able to probe the space-time around black holes by detecting the gravitational radiation from merging massive black holes. DOE, NASA, and NSF all have roles to play in establishing a better understanding of gravity.
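As a rough illustration of why x-ray line shifts probe the region near the event horizon, the sketch below computes the gravitational redshift of light from a static emitter outside a nonrotating (Schwarzschild) black hole. It ignores the Doppler shifts from orbital motion that produce the blueshifted side of real line profiles, and the 6.4 keV iron K-alpha rest energy is an assumed example line, not a value taken from this report.

```python
import math


def gravitational_redshift_factor(r_over_rs):
    """Return 1 + z for light from a static emitter at radius r outside a
    Schwarzschild black hole, with r given in units of r_s = 2GM/c^2."""
    if r_over_rs <= 1.0:
        raise ValueError("emitter must lie outside the event horizon (r > r_s)")
    return 1.0 / math.sqrt(1.0 - 1.0 / r_over_rs)


REST_ENERGY_KEV = 6.4  # assumed rest-frame line energy (iron K-alpha), keV

for r in (100.0, 10.0, 3.0, 1.5):
    one_plus_z = gravitational_redshift_factor(r)
    print(f"r = {r:5.1f} r_s: 1+z = {one_plus_z:.3f}, "
          f"observed line at {REST_ENERGY_KEV / one_plus_z:.2f} keV")
```

Far from the hole the shift is negligible, but within a few Schwarzschild radii it reaches tens of percent, which is what makes high-resolution x-ray spectroscopy a probe of strong-field gravity.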

What Are the Masses of the Neutrinos and How Have They Shaped the Evolution of the Universe?

The discovery that neutrinos have mass and can oscillate among their different types has implications for both the universe and the laws that govern it. Further progress in understanding the masses and oscillations of neutrinos will require an ongoing program of large-scale detectors to study neutrinos from atmospheric and solar sources, striving eventually for sensitivity to the low-energy neutrinos from the proton-proton sequence of nuclear reactions in the Sun. Experiments that send beams of neutrinos from accelerators to remote detectors (e.g., MINOS) will also provide critical information on neutrino masses and mixing. Detectors will need to be stable and to run for extended periods if they are to provide a window for the observation and timing of neutrinos from any nearby supernova event. Finally, the absolute scale of neutrino masses can be probed by end-point studies of beta decay and high-sensitivity searches for neutrinoless double beta decay. If neutrino masses are large enough, they may play a small but detectable role in the development of large-scale structure in the universe. Elements of this program will require a deep underground laboratory. Such a laboratory would host experiments at the intersection of particle and nuclear physics, and it is likely that scientists supported by both DOE and NSF will be involved in its programs.
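Accelerator experiments such as MINOS infer neutrino masses and mixing from how the probability of a flavor change varies with distance and energy. The sketch below uses the textbook two-flavor, vacuum approximation (matter effects and three-flavor mixing are ignored); the mixing, mass-squared difference, and energies are assumed illustrative values, and only the roughly 735 km Fermilab-to-Soudan baseline corresponds to an actual experiment.

```python
import math


def oscillation_probability(sin2_2theta, dm2_ev2, baseline_km, energy_gev):
    """Two-flavor vacuum oscillation probability P(nu_a -> nu_b).

    P = sin^2(2 theta) * sin^2(1.267 * dm^2 [eV^2] * L [km] / E [GeV])
    """
    phase = 1.267 * dm2_ev2 * baseline_km / energy_gev
    return sin2_2theta * math.sin(phase) ** 2


# Illustrative, assumed parameters: maximal mixing, atmospheric-scale dm^2,
# and a MINOS-like 735 km baseline.
SIN2_2THETA = 1.0
DM2 = 2.5e-3     # eV^2 (assumed)
L_KM = 735.0

for e_gev in (1.0, 1.5, 3.0, 10.0):
    p = oscillation_probability(SIN2_2THETA, DM2, L_KM, e_gev)
    print(f"E = {e_gev:4.1f} GeV: P(flavor change) = {p:.2f}")
```

The signal is largest near the energy where the phase reaches π/2, which is why the baseline and beam energy of such experiments are chosen together.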

How Do Cosmic Accelerators Work and What Are They Accelerating?

Cosmic rays and photons with energies far in excess of anything we can produce in laboratories have been detected. We do not yet know the sources of these particles and thus cannot yet explain how they are produced. Neutrinos may also be produced in association with them. Identifying the sources of ultrahigh-energy cosmic rays requires several kinds of large-scale experiments to collect sufficiently large data samples and determine the particle directions and energies precisely. Dedicated neutrino telescopes of cubic kilometer size in deep water or ice can be used to search for cosmic sources of high-energy neutrinos. Further study of the sources of high-energy gamma-ray bursts will also be relevant. DOE, NASA, and NSF are all involved in studying the highest-energy cosmic particles.

To understand the acceleration mechanisms of these particles, a better understanding of relativistic plasmas is needed. Laboratory experiments that use high-energy-density pulses to probe relativistic plasma effects can provide important tests of our ability to model the phenomena in astrophysical environments that are the likely sources of intense high-energy particles and radiation. Laboratory work thus will help to guide the development of a theory of cosmic accelerators, as well as to refine our understanding of other astrophysical phenomena that involve relativistic plasmas. This work will require significant interagency and interdisciplinary coordination. The facilities that can produce intense high-energy pulses in plasmas are laser or accelerator facilities funded by DOE. The expertise needed to bring these resources to bear on astrophysical phenomena crosses both disciplinary and agency boundaries.

Are Protons Unstable?

The observed preponderance of matter over antimatter in the universe may be tied to interactions that change baryon number and that violate matter-antimatter (CP) symmetry. Further, baryon number violation is a signature of theories that unify the forces and particles of nature. Two possible directions to search for baryon-number-changing interactions are direct searches for proton decay and searches for evidence of neutron-antineutron oscillations. Searching for proton decay with a sensitivity an order of magnitude beyond current limits will require a large detector in a deep underground location. It will also be desirable to achieve improved sensitivity to decay modes involving a kaon and a neutrino, as well as to modes involving a pion and a positron. These searches are complemented by the program in CP violation physics involving kaon and B-meson decays, which is a central part of the ongoing high-energy physics agenda. In the future, it may be feasible to measure CP violation in neutrino interactions in an underground lab via long-baseline experiments with intense neutrino beams from accelerators. As in the case of the related neutrino experiments mentioned above, this work will require coordinated planning among all agencies supporting any underground laboratory.
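Why proton-decay sensitivity demands very large detectors can be seen from simple counting: the lifetime limit grows with the number of protons watched and the time they are watched. The sketch below assumes a water detector, perfect background rejection, and the 90 percent confidence Poisson limit of 2.3 events for zero observed candidates; the fiducial masses and the detection efficiency are hypothetical.

```python
AVOGADRO = 6.022e23
PROTONS_PER_WATER_MOLECULE = 10   # 2 from hydrogen + 8 in the oxygen nucleus
WATER_MOLAR_MASS_G = 18.015


def protons_in_water(kilotons):
    """Number of protons (free and bound) in a given mass of water."""
    grams = kilotons * 1e9
    return grams / WATER_MOLAR_MASS_G * AVOGADRO * PROTONS_PER_WATER_MOLECULE


def lifetime_limit_years(kilotons, years, efficiency, n_signal_upper=2.3):
    """Partial-lifetime lower limit tau/B > N_p * T * eff / n_upper.

    n_upper = 2.3 is the 90% CL Poisson upper limit for zero observed
    events and no background (an idealization).
    """
    return protons_in_water(kilotons) * years * efficiency / n_signal_upper


# Hypothetical fiducial masses, 10 years of running, 50% efficiency.
for kt in (20.0, 200.0):
    print(f"{kt:5.0f} kt: tau/B > {lifetime_limit_years(kt, 10.0, 0.5):.1e} years")
```

In this background-free idealization a tenfold gain in sensitivity requires a tenfold larger exposure; once backgrounds appear, the limit improves more slowly, roughly as the square root of exposure, which is one reason for going deep underground.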

What Are the New States of Matter at Exceedingly High Density and Temperature?

Computer simulations of quantum chromodynamics (QCD) have provided evidence that at high temperature and density, matter undergoes a transition to a state known as the quark-gluon plasma. The existence and properties of this new phase of matter have important cosmological implications. Quark-gluon plasmas may also play a role in the interiors of neutron stars. Some, but not all, aspects of the transition from ordinary matter to a quark-gluon plasma can be probed with accelerators (see Figure 6.4). Experiments at the Relativistic Heavy-Ion Collider (RHIC) at Brookhaven National Laboratory may probe the transition to a quark-gluon plasma in the fireball formed when two massive nuclei collide at high energy. If this phase existed in the early universe, it may have left its signature in a gravitational-wave signal. The LISA space gravitational-wave interferometer will begin a search for this signal, but a follow-on experiment with higher sensitivity may be needed in order to observe it. Transitions to other new phases of matter may have occurred in the early universe and left detectable gravitational-wave signatures (possibilities include transitions to states where the forces of nature are unified). X-ray observations of neutron stars can shed light on how matter behaves at nuclear and higher densities, providing insights about the physics of nuclear matter and possibly even of new states of matter.

Are There Additional Space-Time Dimensions?

Theories containing more than four dimensions (with at least some of the additional dimensions having macroscopic scale) have been suggested as the explanation for why the observed gravitational force is so small compared with the other fundamental forces. Such theories have two types of possible experimental signature. Small-scale precision experiments can search for deviations from standard predictions for the strength of gravity on the submillimeter scale. High-energy accelerator searches can look for events with missing energy, which would signal the production of gravitons and thus the excitation of a compact additional dimension. Accelerator searches for new particles and/or missing energy are typically not done with a dedicated experiment but through additional analyses of data collected in high-energy collision experiments. It is important for the agencies to recognize the value of these analyses, even if they do not find the desired effect but instead set new limits. This science falls within the realms of both NSF and DOE.
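Short-range tests of gravity are commonly reported as limits on a Yukawa-type addition to the Newtonian potential, with a strength α relative to gravity and a range λ. Compact extra dimensions of size comparable to λ would be expected to produce a deviation of roughly this form at separations below λ. The sketch below assumes that parameterization; the values of α and λ are hypothetical, chosen only to show how quickly the deviation disappears beyond the range λ.

```python
import math

G = 6.674e-11  # gravitational constant, m^3 kg^-1 s^-2


def yukawa_potential(m1, m2, r, alpha, lam):
    """Newtonian potential energy with a Yukawa-type correction:
    V(r) = -G m1 m2 / r * (1 + alpha * exp(-r / lam)).
    alpha sets the strength of the new interaction relative to gravity,
    lam its range (both hypothetical parameters to be constrained)."""
    return -G * m1 * m2 / r * (1.0 + alpha * math.exp(-r / lam))


def fractional_deviation(r, alpha, lam):
    """Fractional departure of the potential from the Newtonian 1/r form at separation r."""
    return alpha * math.exp(-r / lam)


# Hypothetical new force as strong as gravity (alpha = 1) with a 100-micron range.
ALPHA, LAM = 1.0, 100e-6
for r in (10e-6, 100e-6, 1e-3, 1e-2):
    print(f"r = {r * 1e3:6.3f} mm: deviation = {fractional_deviation(r, ALPHA, LAM):.2e}")
```

Because the deviation falls off exponentially beyond λ, experiments must probe separations at or below the range of interest, which is why the searches push to submillimeter distances.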

How Were the Elements from Iron to Uranium Made?

While we have a relatively complete understanding of the origin of elements lighter than iron, important details in the production of elements from iron to uranium remain a puzzle. A sequence of rapid neutron captures by nuclei, known as the r-process, is clearly involved, as may be seen from the observed abundances of the various elements. Supernova explosions, neutron-star mergers, or gamma-ray bursters are possible locales for this process, but our incomplete understanding of these events leaves the question open. Progress requires work on a number of fronts. More realistic simulations of supernova explosions and neutron star mergers are essential; they will require access to large-scale computing facilities. In addition, better measurements are needed for both the inputs and the outputs of these calculations.

The masses and other properties of neutrinos are crucial parts of the input. The masses and lifetimes of many nuclei that cannot be reached with existing technology are also important input parameters, yet a complete theoretical description of such nuclei remains out of reach. Almost all the relevant r-process nuclei could be accessible for study in a suitably designed two-stage acceleration facility (such as the proposed Rare Isotope Accelerator, RIA) that produces isotopes and reaccelerates them. Such a facility has been recommended by NSAC as a high-priority project for nuclear physics.

For the outputs, sensitive high-energy x-ray and gamma-ray space experiments will allow us to observe the decays of newly formed elements soon after supernova explosions and other major astrophysical events. Comparison of these observations with the outputs of simulations can constrain the theoretical models for the explosions. Better measurements of abundances of certain heavy elements in cosmic rays may also provide useful constraints.

The program suggested above spans nuclear physics, astrophysics, and particle physics and will require coordination among all three agencies.

Is a New Theory of Matter and Light Needed at the Highest Energies?

While few scientists expect that quantum electrodynamics (QED) will fail in any astrophysical environment, checking the consistency of observations with its predictions does provide a way to test the self-consistency of astrophysical models and mechanisms. The predictions of QED have been tested with great precision in regimes accessible to laboratory study, such as in static magnetic fields as large as roughly 10⁵ gauss. However, magnetic fields as large as 10¹² gauss are commonly found on the surfaces of neutron stars (pulsars), and a subset of neutron stars, called magnetars, have magnetic field strengths in the range 10¹⁴ to 10¹⁵ gauss, well above the QED critical field, where quantum effects produce polarized radiation. Because magnetars rotate rather slowly, it may be possible to observe this polarization and map out the neutron star magnetic field. Such observations will require x-ray instruments capable of measuring polarization.
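The QED critical field mentioned here is the field at which the electron cyclotron energy ħeB/m_e equals the electron rest energy m_e c², giving B_c = m_e² c² / (eħ). The short sketch below evaluates it from CODATA constants and compares it with the field strengths quoted in the text.

```python
# CODATA 2018 values in SI units
M_E = 9.1093837015e-31      # electron mass, kg
C = 2.99792458e8            # speed of light, m/s
E_CHARGE = 1.602176634e-19  # elementary charge, C
HBAR = 1.054571817e-34      # reduced Planck constant, J s
GAUSS_PER_TESLA = 1e4

# Field at which the electron cyclotron energy hbar*e*B/m_e equals m_e c^2
b_crit_gauss = M_E**2 * C**2 / (E_CHARGE * HBAR) * GAUSS_PER_TESLA

# Field strengths quoted in the text, in gauss
fields = {"laboratory (static)": 1e5, "typical pulsar": 1e12,
          "magnetar (low)": 1e14, "magnetar (high)": 1e15}

print(f"B_crit = {b_crit_gauss:.3e} G")
for label, b in fields.items():
    print(f"{label:20s}: B / B_crit = {b / b_crit_gauss:.1e}")
```

Laboratory fields sit some eight orders of magnitude below B_c, while magnetar fields exceed it, so magnetars offer the only accessible test of QED in this regime.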

As can be seen from these brief summaries, important parts of the answers to the 11 questions lie squarely in the central plans of the core disciplines of high-energy physics, nuclear physics, plasma physics, or astrophysics, and much exciting science relevant to our questions is already being pursued. The fact that the recommendations made in this report do not speak directly to existing programs should not be construed as lack of support for those programs. Rather, the committee has been charged to focus attention on projects or programs that, because they lie between the traditional disciplines, may have fallen through the cracks. When viewed from the broader perspective, this science takes on a greater urgency and must be made a priority.

It is also notable that many of the efforts described above have features that fall within the purview of more than one agency, or involve competing approaches that, in the present system, would be reviewed by different agencies. To ensure that the approach to these problems follows the most effective path, interagency cooperation is needed, not just at the stage of funding decisions but also at the level of project oversight when a project is funded through more than one agency.

UNDERSTANDING THE BIRTH OF THE UNIVERSE

The CMB is a relic from a very early time in the history of the universe. The spectrum and anisotropy of the CMB have already given us valuable information about the birth of the universe and provide some evidence that the universe went through an inflationary epoch. Future measurements of the polarization of the anisotropy of the CMB are the most promising way to definitively test inflation and to learn directly about the inflationary epoch.

The photons of the CMB come to us from a time when their creation and destruction effectively stopped because the universe had expanded to relatively low densities. The spectrum of the CMB differs from that of a blackbody by less than 1 part in 10⁴, showing that the energy of CMB photons has not been perturbed since about 2 months after the big bang. For the next 400,000 years, photon-electron scattering scrambled only the directions of the photons. When the universe cooled to about 3000 K, electrons and baryons combined to form neutral atoms. After this “recombination” or last-scattering epoch, the CMB photons traveled freely across the universe, allowing us to compare those coming from parts of the universe that are very distant from us with those coming from parts nearby. In this way the anisotropy of the CMB can reveal the distribution of matter in the universe as it was a half million years after the big bang, before the creation of stars and galaxies.
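The temperatures in this paragraph fix the redshift of the last-scattering surface, because blackbody radiation cools in proportion to the expansion, T ∝ (1 + z). The short sketch below uses only the 3000 K and 2.725 K values from the text plus the standard Wien displacement constant to show that the CMB has been stretched by a factor of about 1100, carrying its peak from roughly a micron (near infrared) to about a millimeter.

```python
T_TODAY_K = 2.725            # present CMB temperature (value used in the text)
T_RECOMBINATION_K = 3000.0   # approximate recombination temperature (from the text)
WIEN_B_M_K = 2.897771955e-3  # Wien displacement constant, m K

# Blackbody radiation cools as T = T_today * (1 + z), so the stretch factor
# is simply the ratio of the two temperatures.
one_plus_z = T_RECOMBINATION_K / T_TODAY_K
z_recombination = one_plus_z - 1.0

# Peak wavelength of the blackbody spectrum then and now (Wien's law).
peak_recomb_um = WIEN_B_M_K / T_RECOMBINATION_K * 1e6
peak_today_mm = WIEN_B_M_K / T_TODAY_K * 1e3

print(f"last-scattering redshift z ~ {z_recombination:.0f}")
print(f"spectral peak: {peak_recomb_um:.2f} micron then, {peak_today_mm:.2f} mm now")
```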

Efforts to detect the anisotropy of the CMB started immediately after its discovery. Initially, only the anisotropy due to the motion of the solar system at 370 km/sec relative to the average velocity of the observable universe was found. Finally, in 1992, the Differential Microwave Radiometer (DMR) instrument on the Cosmic Background Explorer (COBE) satellite detected the intrinsic anisotropy of the CMB at a level of 10 parts per million.
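The two signals in this paragraph differ greatly in size, which is why the motional dipole was found long before the intrinsic anisotropy. The sketch below uses the first-order Doppler result ΔT ≈ T v/c with the 370 km/sec velocity from the text, and takes 10 parts per million of 2.725 K for the intrinsic signal.

```python
T_CMB_K = 2.725
C_KM_S = 299792.458
V_SUN_KM_S = 370.0  # solar-system velocity relative to the CMB (from the text)

# To first order in v/c, motion produces a dipole of amplitude dT = T * v / c.
dipole_k = T_CMB_K * V_SUN_KM_S / C_KM_S

# Intrinsic anisotropy of about 10 parts per million, as detected by the DMR.
intrinsic_k = T_CMB_K * 10e-6

print(f"dipole ~ {dipole_k * 1e3:.2f} mK, intrinsic ~ {intrinsic_k * 1e6:.0f} microK, "
      f"ratio ~ {dipole_k / intrinsic_k:.0f}")
```

The dipole is about a hundred times larger than the intrinsic fluctuations, so it had to be measured and removed before the primordial signal could emerge.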

The DMR detected these temperature differences of 30 millionths of a degree across the sky by integrating for a full year. The DMR beam size (about 7 degrees), when projected to the edge of the observable universe, spans a region a few times larger than the distance light could have traveled in the half million years between the big bang and the last scattering. As a result, the anisotropy seen by the DMR is truly primordial, unaffected by the interaction between the CMB and matter. But interactions between the CMB and matter are a critical part of the later formation of clusters and superclusters of galaxies, and these interactions can be studied by looking at the CMB with smaller beam sizes. These interactions produce a series of “acoustic” peaks in the plot of temperature difference vs. angular scale, with scales of a degree and smaller (see Figure 5.3). They are called acoustic because they record the effect of the interaction of the radiation with matter variations analogous to sound waves.

When looking at small angular scales, foreground interference from the atmosphere is less of a problem, and experiments on the ground and on stratospheric balloons have observed evidence for a series of acoustic peaks. These experiments have shown by the position of the first peak that the geometry of our universe is consistent with being uncurved, and by the height of the second peak have made an independent measurement of the amount of ordinary matter in the universe. The existence of acoustic peaks and the flatness of the geometry of the universe are consistent with the predictions of inflation and have given the theory its first significant tests. The determination of the amount of ordinary matter, about 4 percent of the critical density, agrees with the determination based on the amount of deuterium produced during the first few minutes and strengthens the case for a new form of dark matter dominating the mass in the universe.

For the future, the Microwave Anisotropy Probe (MAP), launched on June 30, 2001, will measure the entire sky with a 0.2-degree beam and a sensitivity 45 times better than that of the DMR. The European Space Agency’s Planck satellite, to be launched in 2007, will have a 0.08-degree beam and a sensitivity 20 times better than MAP. Since anisotropy signals on even smaller angular scales are suppressed by the finite thickness of the surface of last scattering, MAP and Planck will essentially complete the study of the temperature differences resulting from these primordial density fluctuations. We expect to learn much about the earliest moments of the universe from these two very important missions.
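A common rule of thumb relates an angular scale θ on the sky to the multipole number ℓ ≈ 180°/θ used in anisotropy power spectra. The sketch below applies that approximation to the beam sizes quoted in the text and multiplies the quoted sensitivity factors (45 times the DMR for MAP, a further 20 times for Planck); it is bookkeeping only, not a model of either instrument.

```python
BEAMS_DEG = {"COBE DMR": 7.0, "MAP": 0.2, "Planck": 0.08}
# Sensitivity relative to the DMR, from the factors quoted in the text.
SENSITIVITY_VS_DMR = {"COBE DMR": 1.0, "MAP": 45.0, "Planck": 45.0 * 20.0}


def approx_multipole(theta_deg):
    """Rough multipole probed by a beam of angular size theta: l ~ 180 deg / theta."""
    return 180.0 / theta_deg


for name, beam in BEAMS_DEG.items():
    print(f"{name:9s}: beam {beam:5.2f} deg -> l ~ {approx_multipole(beam):6.0f}, "
          f"sensitivity x{SENSITIVITY_VS_DMR[name]:.0f} vs DMR")
```

On this rough scale the degree-scale acoustic peaks correspond to multipoles of a few hundred, well within MAP's reach, while the 7-degree DMR beam is limited to ℓ of about 25.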

There remains one critical feature of the microwave sky to be explored: its polarization. Polarization promises to reveal unique features of the early universe, but it will be difficult to measure. First, its anisotropies are expected to be an order of magnitude smaller than those for the temperature field, so more sensitive detectors and longer integration times are required. Second, polarized galactic foregrounds are likely to be more troublesome than they are for measuring the temperature field.

At every point on the sky, the temperature of the radiation can be represented as a single number, while polarization is represented by a line segment (see Figure 4.6). For example, a given point may have a temperature of 2.725 K that differs from the average by 30 millionths of a degree. But the signal measured by a polarized detector aligned toward the north galactic pole might exceed that measured by a detector aligned in the east-west direction by just two millionths of a degree. The polarization line segment in this example would point north-south, and its length would be related to the latter temperature difference. The science comes from a study of the pattern of these line segments on the sky and how they correlate with the temperature pattern. Revealing this polarization field requires more sensitive detectors that can also measure polarization.
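The line-segment description in this paragraph is usually written in terms of the Stokes parameters Q and U: Q is the north-south minus east-west difference described above, and U is the same difference for a pair of detectors rotated by 45 degrees. The sketch below assumes that simple convention (sign and angle conventions differ between experiments), and its hypothetical detector readings, in microkelvin, mirror the example in the text.

```python
import math


def polarization_from_detectors(t_ns, t_ew, t_ne, t_nw):
    """Build a polarization 'line segment' from four polarized-detector readings.

    Q = T(north-south) - T(east-west); U = the same difference for detectors
    rotated by 45 degrees. Returns (length, angle_deg), with the angle measured
    from north toward east and lying between -90 and 90 degrees.
    """
    q = t_ns - t_ew
    u = t_ne - t_nw
    length = math.hypot(q, u)
    angle_deg = 0.5 * math.degrees(math.atan2(u, q))
    return length, angle_deg


# Example from the text: the north-south detector reads 2 microK above the
# east-west one; the two diagonal detectors read the same (hypothetical values).
length, angle = polarization_from_detectors(2.0, 0.0, 1.0, 1.0)
print(f"segment length {length:.1f} microK, pointing {angle:.0f} deg from north")
```

The factor of one half in the angle reflects the fact that a line segment, unlike an arrow, looks the same after a 180-degree rotation.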

According to our understanding of the oscillations in the plasma of photons, electrons, and baryons that were under way before recombination, the inhomogeneities that developed led naturally to a predictable level of polarization of the CMB photons. This polarization anisotropy is expected to be most prominent at even finer angular scales than the temperature anisotropy, requiring instruments with beams smaller than 0.1 degree.

In the fall of 2002, the first detection of the polarization of the anisotropy of the CMB by the DASI experiment was announced (see Figure 4.6); the amplitude and variation with angular scale were as expected. Nearly two dozen efforts are under way to further characterize the polarization. While most are modifications of existing temperature anisotropy experiments, some are dedicated to detecting polarization. These ongoing efforts are also important in that they will allow accurate study of the foregrounds that are expected to contaminate the measurements.

It is highly likely that experiments already in progress will systematically characterize the CMB polarization and the associated foregrounds. This will be an important confirmation of our understanding of the initial fluctuations that led to anisotropies and structure formation. However, these experiments (including MAP and Planck, which have polarization sensitivity) will not be able to fully characterize the polarization of the CMB anisotropy because their sensitivity is not adequate. Measuring CMB polarization in essence triples the information we can obtain about the earliest moments and exploits the full information available from this most important relic of the early universe.

The most important long-range goal of polarization studies is to detect the consequences of gravitational waves produced during the inflationary epoch. The existence of these gravitational waves is the third key prediction of inflation (after flatness and the existence of acoustic peaks). Moreover, the strength of the gravitational wave signal is a direct indicator of when inflation took place, which would help to unravel the mystery of what caused inflation.

The gravitational waves arising from inflation correspond to a distortion in the fabric of space-time and imprint a distinctive pattern on the polarization of the CMB that cannot be mimicked by that from density fluctuations. This so-called “B-mode” component of the polarization will have amplitudes one or more orders of magnitude smaller than the polarization produced by normal scattering between the photons and matter. This gravitational-wave signal occurs on relatively large angular scales, greater than about 2 degrees, which is the scale of the observable universe at recombination. Very large sky coverage, high sensitivity, and excellent control of systematic errors are necessary to measure this submicrokelvin signal.

Based on what is known about polarization foregrounds and the existence of a false B-mode signal produced by gravitational lensing, a fully optimized experiment might well be able to detect gravitational waves from inflation, even if their effect on the CMB is three orders of magnitude smaller than that of density perturbations. Achieving this sensitivity would allow one to probe inflation models whose energy scale is 3 × 10¹⁵ GeV or larger, close to the energy where the forces are expected to be unified.
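The link between the size of the B-mode signal and the energy scale of inflation can be sketched with the standard slow-roll relation V = (3π²/2) A_s r M_pl⁴, where r is the tensor-to-scalar ratio and M_pl the reduced Planck mass. The amplitude A_s ≈ 2.1 × 10⁻⁹ used here is an assumed modern estimate, not a number from this report, and the sketch is not meant to reproduce the 3 × 10¹⁵ GeV figure exactly; its point is the weak dependence V^(1/4) ∝ r^(1/4).

```python
import math

REDUCED_PLANCK_MASS_GEV = 2.435e18
A_S = 2.1e-9  # assumed amplitude of primordial density (scalar) perturbations


def inflation_energy_scale_gev(r, a_s=A_S):
    """Energy scale V^(1/4) of slow-roll inflation for tensor-to-scalar ratio r,
    using V = (3 pi^2 / 2) * A_s * r * M_pl^4 with the reduced Planck mass."""
    return (1.5 * math.pi**2 * a_s * r) ** 0.25 * REDUCED_PLANCK_MASS_GEV


for r in (1e-1, 1e-2, 1e-3, 1e-4):
    print(f"r = {r:.0e}: V^(1/4) ~ {inflation_energy_scale_gev(r):.1e} GeV")
```

Because of the one-quarter power, lowering the detectable r by a factor of 1000 reduces the reachable energy scale by only a factor of about 5.6, which is why even a very faint B-mode signal still probes energies near the unification scale.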

While there is no question about the great scientific importance of detecting the B-mode signature of inflation, it must be pointed out that the challenges to doing so are just as great. Still unknown foregrounds or contaminants could preclude achieving the proposed sensitivity, and even if the proposed sensitivity is achieved, the signal, while present, could go undetected because it is too small.

To achieve this extremely ambitious goal, significant detector R&D is needed over 3 or 4 years. The most promising detectors appear to be large-format bolometer arrays, the challenge being to read out signals from compact arrays of several thousand detectors. It is important that this R&D effort be supported and that parallel efforts be encouraged. Ground-based and balloon-borne observations will provide experience with different detection schemes (particularly on how to guard against false polarization signals) and will provide more information about galactic foregrounds. A coordinated program of laboratory research, ground-based and balloon-borne observations, and finally a space mission dedicated to CMB polarization will be required to get the very best sensitivity to this important signature (and probe) of inflation. Planning for a space mission should begin now, but the final detector design must depend on experience gained through the R&D effort.

The committee notes that it was a broad and coordinated approach that made possible the current successes in learning about the early universe from the anisotropy of the CMB. DOE, NASA, and NSF have all played roles in the anisotropy success story, and all three have roles to play in the quest to detect the polarization signal of inflation.

UNDERSTANDING THE DESTINY OF THE UNIVERSE

Of the 11 questions that the committee has posed, the nature of dark energy is probably the most vexing. It has been called the deepest mystery in physics, and its resolution is likely to greatly advance our understanding of matter, space, and time.

The simplest and most direct observational argument for the presence of dark energy comes from type Ia supernovae at high redshift. In our immediate neighborhood, with proper corrections applied, the intrinsic luminosities of such supernovae are seen to be remarkably uniform, making them useful as “standard candles” for cosmological measurements. Using type Ia supernovae as cosmic mileposts to probe the expansion history of the universe leads to the remarkable conclusion that the expansion is speeding up instead of slowing down, as would be expected from the gravitational pull of its material content. This implies that the energy content of the universe is dominated by a mysterious dark energy whose gravity is repulsive.
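The supernova argument rests on comparing the observed brightness of a standard candle with the brightness expected at its redshift. The sketch below assumes a spatially flat universe containing only matter and a cosmological constant, with an assumed Hubble constant (which cancels in the comparison), and computes the distance modulus μ = 5 log₁₀(d_L / 10 pc) at z = 0.5 for a matter-only universe and for one with one third matter and two thirds dark energy, the proportions discussed in this section.

```python
import math

C_KM_S = 299792.458
H0 = 70.0  # assumed Hubble constant, km/s/Mpc (cancels in the comparison)


def luminosity_distance_mpc(z, omega_m, omega_de, steps=1000):
    """Luminosity distance in a spatially flat universe with matter and a
    cosmological constant: d_L = (1+z) c/H0 * integral_0^z dz' / E(z'),
    E(z) = sqrt(omega_m (1+z)^3 + omega_de). Uses Simpson's rule."""
    if steps % 2:
        steps += 1  # Simpson's rule needs an even number of intervals
    h = z / steps

    def inv_e(zp):
        return 1.0 / math.sqrt(omega_m * (1.0 + zp) ** 3 + omega_de)

    total = inv_e(0.0) + inv_e(z)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * inv_e(i * h)
    comoving = C_KM_S / H0 * total * h / 3.0
    return (1.0 + z) * comoving


def distance_modulus(d_l_mpc):
    """mu = m - M = 5 log10(d_L / 10 pc), with d_L given in Mpc."""
    return 5.0 * math.log10(d_l_mpc * 1e6 / 10.0)


z = 0.5
mu_matter = distance_modulus(luminosity_distance_mpc(z, 1.0, 0.0))
mu_dark_energy = distance_modulus(luminosity_distance_mpc(z, 1 / 3, 2 / 3))
print(f"At z = {z}: a standard candle is {mu_dark_energy - mu_matter:.2f} mag "
      f"fainter with dark energy than in a matter-only universe")
```

A difference of a few tenths of a magnitude is the scale of dimming that the supernova searches measured, which is why control of dust and evolution effects at that level matters so much.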

A spatially flat universe (like ours) containing matter only would continue to expand and slow indefinitely. The existence of dark energy changes all that. Depending upon the nature of the dark energy, the universe could continue its speed-up, begin slowing, or even recollapse. If this cosmic speed-up continues, the sky will become essentially devoid of visible galaxies in only 150 billion years. Until we understand dark energy, we cannot understand the destiny of the universe.

We have few clues about the physics of the dark energy. It could be as “simple” as the energy associated with nature’s quantum vacuum. Or, it is possible that our current description of a universe with dark matter and dark energy may just be a clumsy construction of epicycles that we are patching together to save what could be an obsolete theoretical framework. Also puzzling is the fact that we seem to be living at a special time in cosmic history, when the dark energy appears only recently to have begun to dominate over dark and other forms of matter.

The supernova data become more compelling with each new observation. We have no evidence so far, for example, that “gray dust” is obscuring supernovae at high redshift or that the supernovae are evolving, two effects that could undermine their reliability as standard candles. Recent observations of very high redshift objects such as SN 1997ff support this conclusion. Moreover, the supernova observations are fully compatible with other cosmological observations. We know from the CMB that the universe is spatially flat and therefore that its total matter and energy density must sum to the critical density. On the other hand, all our measurements of the amount of normal and dark matter indicate that matter accounts for only one-third of the critical energy density. The dark energy neatly accounts for the remaining two-thirds.

We have a significant chance over the next two decades to discern the properties of the dark energy. Because this mysterious new substance is so diffuse, the cosmos is the primary site where it can be studied. The gravitational effects of dark energy are determined by the ratio of its pressure to its energy density. The more negative its pressure, the more repulsive the gravity of the dark energy. The dark energy influences the expansion rate of the universe, in turn governing the rate at which structure grows and the correlation between redshift and distance.
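The statement that more negative pressure means more repulsive gravity follows from the Friedmann acceleration equation, ä/a = -(4πG/3)(ρ + 3p). Writing p = wρ for each component gives today's deceleration parameter for a flat universe, q₀ = ½Ω_m + ½Ω_DE(1 + 3w), where q₀ < 0 means speed-up. The sketch below assumes the round-number densities quoted above (matter one-third, dark energy two-thirds) and a constant w; the function name is illustrative only.

```python
def deceleration_parameter(omega_m: float, omega_de: float, w: float) -> float:
    """Present-day deceleration parameter q0 for matter (w = 0) plus dark energy.

    Follows from a''/a = -(4*pi*G/3)(rho + 3p) with p = w * rho for each
    component. A negative q0 means the expansion is speeding up.
    """
    return 0.5 * omega_m + 0.5 * omega_de * (1.0 + 3.0 * w)


if __name__ == "__main__":
    # Round numbers from the text: flat universe, matter ~1/3, dark energy ~2/3.
    for w in (-1.0, -2.0 / 3.0, -1.0 / 3.0, 0.0):
        q0 = deceleration_parameter(1 / 3, 2 / 3, w)
        trend = "accelerating" if q0 < 0 else "decelerating"
        print(f"w = {w:+.2f}: q0 = {q0:+.3f} ({trend})")
```

Under these assumptions, w = -1 (a vacuum energy) gives q₀ = -0.5, and the expansion accelerates only if w lies below -1/2; matter alone (Ω_m = 1) gives q₀ = +0.5, the steady slowing expected without dark energy.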

The means of probing the cosmological effects of dark energy include the measurement of the apparent luminosity of Type Ia supernovae as a function of redshift, the study of the number density of galaxies and clusters of galaxies as a function of redshift, and the use of weak gravitational lensing to study the growth of structure in the universe. Given the fundamental nature of this endeavor, it is essential to approach it with a variety of methods requiring not only the full array of existing instruments but also a new class of telescopes on the ground and in space. Many of these measurements can also provide important information on the amount and distribution of dark matter.
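The supernova method compares apparent brightness with redshift through the luminosity distance. A minimal sketch, assuming a spatially flat universe with a constant dark-energy equation of state w and an assumed Hubble constant of 70 km/s/Mpc, integrates d_L = (1 + z)(c/H₀)∫dz′/E(z′) numerically and compares a dark-energy model with a matter-only one. The helper names and default parameters are hypothetical choices for illustration, not values from the text.

```python
import math

C_KM_S = 299_792.458  # speed of light, km/s


def luminosity_distance_mpc(z, h0=70.0, omega_m=1 / 3, w=-1.0, steps=2000):
    """Luminosity distance (Mpc) in a spatially flat universe of matter plus dark energy.

    Applies the trapezoidal rule to 1/E(z'), where
    E(z) = sqrt(omega_m (1+z)^3 + (1 - omega_m) (1+z)^(3(1+w))).
    h0 is the Hubble constant in km/s/Mpc (70 is an assumed value).
    """
    omega_de = 1.0 - omega_m

    def inv_e(zp):
        return 1.0 / math.sqrt(omega_m * (1 + zp) ** 3 + omega_de * (1 + zp) ** (3 * (1 + w)))

    dz = z / steps
    interior = sum(inv_e(i * dz) for i in range(1, steps))
    integral = dz * (0.5 * inv_e(0.0) + interior + 0.5 * inv_e(z))
    return (1 + z) * (C_KM_S / h0) * integral


def distance_modulus(d_l_mpc):
    """Distance modulus m - M = 5 log10(d_L / 10 pc)."""
    return 5.0 * math.log10(d_l_mpc * 1e6 / 10.0)


if __name__ == "__main__":
    z = 0.5
    with_de = distance_modulus(luminosity_distance_mpc(z))
    matter_only = distance_modulus(luminosity_distance_mpc(z, omega_m=1.0))
    print(f"z = {z}: extra dimming with dark energy = {with_de - matter_only:.2f} mag")
```

At z = 0.5 the dark-energy model places a standard candle a few tenths of a magnitude fainter than the matter-only model predicts, the same sense as the effect seen in the high-redshift supernova data. The assumed H₀ cancels in this magnitude difference.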

In the near term, the search for high-redshift Type Ia supernovae will rely on wide-field cameras on 4-meter-class telescopes. Follow-up observations of the supernova light curves will use the Hubble Space Telescope (when possible), while spectroscopic measurements that test for possible evolutionary effects will be obtained with 8- to 10-meter telescopes. This program can be expected to yield further evidence for cosmic speed-up and some information about the equation of state of the dark energy.

The study of the growth of structure and the measurement of the number density of galaxies and clusters will have to combine several methods. One of these is a program of galaxy surveys in the visible and near infrared at low redshift (such as the Sloan Digital Sky Survey and 2MASS) and at high redshift (such as the DEEP Survey). Characterization of the hot plasmas present in clusters by x-ray satellites such as Chandra and XMM-Newton will also be important. Mapping of the distortions of the cosmic microwave background caused by hot cluster gas (Sunyaev-Zel’dovich effect) will be used to find and count clusters as well as to understand their evolution. New instruments, especially Constellation-X, which is planned for the end of the decade, should lead to further progress in the study of galaxy clusters.

Pilot studies of the gravitational distortion of the images of distant galaxies by intervening mass concentrations (weak gravitational lensing) show the power of this method for measuring the evolution of structure and for identifying and determining the masses of galaxy clusters. This method will soon be more fully exploited as new large CCD cameras become fully operational on large telescopes.

All these observations depend upon the development of advanced photon sensors in the optical, millimeter, infrared, and x-ray portions of the spectrum and upon the training of instrumentalists who can integrate these sensors into new instruments. Moreover, the whole enterprise must be linked to a vigorous theory and computational program that explores not only the fundamental nature and origin of dark energy but also the phenomenology of the complex astronomical objects that we use as probes (e.g., supernovae, galaxies, and clusters).

In spite of its importance, the program discussed above will only be able to chip away at the problem of dark energy. To understand its properties fully we will need a new class of optical and near-infrared telescopes with very large fields of view (greater than one square degree) and gigapixel cameras. They will be needed to measure much larger numbers of supernovae with control of systematics and to map gravitational lensing over large scales. There is a need for one such telescope on the ground—such as the Large Synoptic Survey Telescope (LSST), recommended by the Astronomy and Astrophysics Survey Committee2—and one in space—such as the proposed SuperNova Acceleration Probe (SNAP).

A 6- to 8-meter wide-field telescope on the ground will be able to map weak gravitational lensing over more than 10,000 square degrees with individual exposures covering on the order of 7 square degrees. From these observations, tens of thousands of galaxy clusters can be discovered and their masses determined, and the development of structure can be probed. A wide-field, ground-based telescope will also be very effective in monitoring the sky for variable events, from near-Earth objects to transient astrophysical phenomena such as gamma-ray bursts and supernovae. Such a telescope will discover large numbers of moderate redshift supernovae and measure their light curves. The ability of LSST to explore the astronomical “time domain” was the main reason the survey committee recommended it as a high-priority project.

A wide-field space telescope would probably be much smaller in aperture (2-meter diameter for SNAP) and field of view (around 1 square degree) because of weight and cost considerations, but it would benefit significantly from being above Earth’s atmosphere. A wide-field space telescope could discover thousands of distant Type Ia supernovae and follow their light curves in the optical and near-infrared. Operating above the atmosphere ensures uniform sampling of light curves without regard to weather and helps to minimize systematic errors or corrections. Spectroscopic follow-up of each supernova by a wide-field space telescope (if it has such a capability) or by other telescopes is essential to study potential systematics, including evolution. A wide-field space telescope could, over a few years, put together a high-quality, very uniform sample of several thousand supernovae. Using this sample, the equation of state of dark energy could be determined to about 5 percent and tested for variation with time.

A wide-field space telescope is also well suited for deep, weak-gravitational lensing studies of the evolution of structure over small areas of sky to probe dark energy. The absence of atmospheric distortion allows fainter, more distant, smaller galaxies to be used for this purpose. Finally, a wide-field space telescope will have other astronomical capabilities, e.g., providing targets for the Next Generation Space Telescope (NGST) and searching for transient astronomical phenomena.

Why two wide-field telescopes? The range of redshifts where the dark energy can be studied in detail is from z ~ 0.2 to z ~ 2 (at higher redshifts, the dark energy becomes too small a fraction of the total energy density to study effectively, and at small redshifts the observables are insensitive to the composition of the universe). Our almost complete ignorance of the nature of dark energy and its importance argues for probing it as completely as possible. To do this, two complementary capabilities are needed: (1) the ability to observe to redshifts as large as 2 with high resolution and in the rest frame visible band and (2) the ability to cover large portions of the sky to redshifts as large as unity. The combination of ground- and space-based wide-field telescopes will do just that. Space offers high resolution and access to the near-infrared (which for objects at redshifts as high as 2 corresponds to the rest frame visible band). The ground enables the large aperture (8-meter class) needed to quickly cover large portions of the sky.
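The upper end of that redshift range can be illustrated by following how the dark-energy share of the total density changes with z. Matter density grows as (1 + z)³ into the past, while dark energy with constant w grows as (1 + z)^(3(1+w)), which is constant for w = -1. The sketch assumes a flat universe with the round-number present-day values of one-third matter and two-thirds dark energy; the function name is hypothetical.

```python
def dark_energy_fraction(z: float, omega_m: float = 1 / 3, w: float = -1.0) -> float:
    """Fraction of the total energy density in dark energy at redshift z (flat universe).

    Matter scales as (1+z)^3; dark energy with constant w scales as (1+z)^(3(1+w)).
    """
    omega_de = 1.0 - omega_m
    rho_m = omega_m * (1 + z) ** 3
    rho_de = omega_de * (1 + z) ** (3 * (1 + w))
    return rho_de / (rho_m + rho_de)


if __name__ == "__main__":
    for z in (0.0, 0.2, 0.5, 1.0, 2.0, 3.0):
        print(f"z = {z:3.1f}: dark-energy fraction = {dark_energy_fraction(z):.2f}")
```

With these assumptions the dark-energy fraction falls from about two-thirds today to 20 percent at z = 1 and under 10 percent at z = 2, in line with the statement that it becomes too small a fraction to study effectively at higher redshift.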

A wide-field space telescope can discover and follow up thousands of type Ia supernovae, the simplest and most direct probes of the expansion, to study the effect of dark energy on the expansion out to redshifts z ~ 2. This is because the light from these most distant objects is shifted to near-infrared wavelengths, which must be studied from space. Space observations also minimize the systematic errors by providing the high resolution needed to separate the supernova light from that of the host galaxy and by guaranteeing that the entire light curve is observed, since atmospheric weather is not a problem. While a space-based wide-field telescope is first a high-precision, distant supernova detector, it can also extend the weak-gravitational lensing technique to the highest redshifts because it has sufficient resolution to measure the shapes of the most distant galaxies.
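The shift into the near-infrared follows from λ_obs = (1 + z) λ_rest. The sketch below takes 400 to 700 nm as an assumed, approximate rest-frame visible band and shows where it lands for a few redshifts.

```python
def observed_wavelength_nm(rest_nm: float, z: float) -> float:
    """Cosmological redshift stretches every wavelength by the factor (1 + z)."""
    return rest_nm * (1.0 + z)


# Assumed approximate edges of the rest-frame visible band, in nanometers.
VISIBLE_BAND_NM = (400.0, 700.0)

if __name__ == "__main__":
    for z in (0.5, 1.0, 1.5, 2.0):
        blue = observed_wavelength_nm(VISIBLE_BAND_NM[0], z)
        red = observed_wavelength_nm(VISIBLE_BAND_NM[1], z)
        print(f"z = {z}: rest-frame visible light observed at {blue:.0f}-{red:.0f} nm")
```

At z = 2 the rest-frame visible band arrives at about 1,200 to 2,100 nm, well into the near-infrared.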

A ground-based wide-field telescope has its greatest power in studying the dark energy at redshifts of less than about 1. It can discover tens of thousands of supernovae out to z ~ 0.8 and follow them up (though not with the same control of systematics that is possible in space). It can carry out weak-gravitational-lensing surveys over thousands of square degrees to moderate depth. Such surveys can probe the dark energy by measuring its effect on the development of large-scale structure and by measuring the evolution of the abundance of clusters (the latter work extends and complements x-ray and Sunyaev-Zel’dovich effect measurements that will be made).

EXPLORING THE UNIFICATION OF THE FORCES FROM UNDERGROUND

Between the 1896 discovery of radioactivity and the development of particle accelerators, the cosmic rays constantly bombarding Earth were essential tools for scientific progress in particle physics. Among the successes were the discoveries of the anti-electron (positron), the pi meson (pion), and the mu meson (muon). However, when it comes to addressing three of the questions—What is dark matter? What are the masses of the neutrinos? Is the proton stable?—the cosmic rays that were once the signal have now become the source of a limiting background.

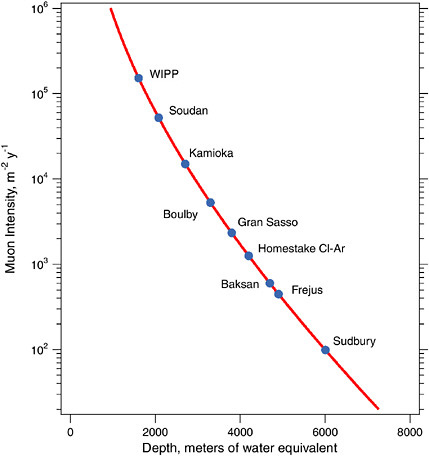

With the known exception of neutrinos, which penetrate everything, most cosmic rays are readily absorbed in the atmosphere and in Earth’s surface. However, muons are absorbed only slowly as they pass through matter. The most penetrating muons, which can produce other radioactive particles, can be removed only by locating the experiment under a substantial overburden (see Figure 7.1). Scientists have sought ever deeper underground environments, well shielded from cosmic-ray muons, to carry out the forefront experiments that address our questions.

The earliest ideas for a water detector capable of detecting the decay of protons and the interactions of neutrinos from the Sun and other cosmic sources trace to the late 1970s in the United States. Though some pioneering underground experiments were done here in the early 1980s, Japan created a major research program in this new area at the Kamioka mine. In 1998, data from Super-Kamiokande, at a depth of about 3,000 meters of water equivalent (mwe), provided convincing evidence that muon neutrinos mix, or oscillate, with electron or tau neutrinos. This can occur only if neutrinos have mass.

FIGURE 7.1 The number of penetrating cosmic-ray muons vs. overburden of earth (measured in mwe, meters of water equivalent; 1 mwe is approximately 1 foot of material). Existing underground labs are WIPP, Homestake, and Soudan in the United States; Kamioka in Japan; Boulby, Gran Sasso, Baksan, and Frejus in Europe; and Sudbury in Canada. There is a deeper option in the Homestake mine at about the same depth as Sudbury. Figure courtesy of R.G.H. Robertson.

About 30 years ago, U.S. investigators found the first indications of oscillations in electron-family solar neutrinos at the Homestake mine (located near the level of the existing chlorine-argon experiment). Since then, other experiments in Europe and Asia have seen similar manifestations of electron-neutrino oscillations. The Sudbury Neutrino Observatory (SNO) in Canada unambiguously demonstrated the oscillation of solar electron neutrinos, but required a depth of 6,000 mwe to achieve sufficiently low cosmic-ray backgrounds. The establishment of appropriate infrastructure to assemble and operate SNO at this depth accounted for a substantial part of the cost and the construction time.

The Laboratori Nazionali del Gran Sasso, in Italy, and a facility in the Baksan valley in Russia are two general-purpose national underground laboratory facilities (see Figure 7.1). With substantial infrastructure and good access, Gran Sasso (3,400 mwe) represents the kind of facility required to make progress in this field. However, Gran Sasso and Baksan have limited remaining experimental space and insufficient depth for some experiments at the cutting edge today.

There is now good evidence that neutrinos have mass, a phenomenon that points to a grander framework for the particles and forces, since neutrino mass cannot be accommodated within the Standard Model. However, the quantitative parameters associated with neutrino mass and mixing have not yet been accurately measured. Experiments studying oscillations of neutrinos aim to establish clearly the extent of neutrino family mixing, the relationships among the masses for the physical neutrino states, and particle-antiparticle asymmetries. This is a compelling scientific objective that bears not only on the unification of the forces but also on the formation of large-scale structure in the universe and on the production of the chemical elements in supernova explosions.

The richness of the information requires many approaches. Some data will be obtained using neutrinos made on Earth at reactors and at accelerators. However, to measure neutrino oscillations, the target detectors need to be located at a substantial distance from the sources. Other experiments can utilize neutrinos created in the Sun and the atmosphere. To exploit these natural sources of neutrinos, low ambient backgrounds and the ability to detect very low-energy solar neutrinos are essential. Appropriate underground facilities are required for either approach; one facility could conceivably serve both. Many techniques are being studied to carry out these experiments, and most require substantial depth, in some cases at least 4,000 mwe.
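Why detectors must sit far from reactor or accelerator sources can be seen from the standard two-flavor vacuum oscillation formula, P = sin²(2θ) sin²(1.267 Δm² L/E), with Δm² in eV², L in km, and E in GeV. The sketch below is simplified (two flavors, no matter effects) and uses Δm² = 2.5 × 10⁻³ eV² with maximal mixing as assumed, illustrative atmospheric-scale values; these numbers are not from the text.

```python
import math


def oscillation_probability(length_km: float, energy_gev: float,
                            delta_m2_ev2: float, sin2_2theta: float) -> float:
    """Two-flavor vacuum oscillation probability.

    P = sin^2(2 theta) * sin^2(1.267 * dm^2[eV^2] * L[km] / E[GeV]).
    With no mass splitting (dm^2 = 0) the probability is zero at every distance.
    """
    phase = 1.267 * delta_m2_ev2 * length_km / energy_gev
    return sin2_2theta * math.sin(phase) ** 2


def first_maximum_km(energy_gev: float, delta_m2_ev2: float) -> float:
    """Baseline at which the oscillation phase first reaches pi/2."""
    return (math.pi / 2) * energy_gev / (1.267 * delta_m2_ev2)


if __name__ == "__main__":
    dm2, mixing = 2.5e-3, 1.0  # assumed, illustrative atmospheric-scale values
    print(f"first maximum for 1 GeV neutrinos: {first_maximum_km(1.0, dm2):.0f} km")
    for baseline in (1, 100, 500, 1000):
        p = oscillation_probability(baseline, 1.0, dm2, mixing)
        print(f"L = {baseline:5d} km: P = {p:.3f}")
```

For 1 GeV neutrinos and these parameters the first oscillation maximum falls near 500 km, so a detector a kilometer from the source would see almost no effect. Setting Δm² = 0 makes the probability vanish everywhere, which is why observing oscillations implies mass differences between neutrinos, and hence that not all of them are massless.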

Careful study of the end point of the beta decay of tritium has set an upper limit on the neutrino mass of a few electron-volts; experiments in progress might be able to probe as low as a few tenths of an electron-volt. The search for neutrinoless double beta decay can reveal whether neutrinos are their own antiparticles and can yield information about neutrino masses. Such experiments now probe neutrino masses as small as a few tenths of an electron-volt; proposed experiments might be able to probe neutrino masses as small as 0.01 eV. Reaching this level is important because it includes the smallest mass consistent with the atmospheric neutrino experiments. Like oscillation experiments, double beta decay experiments require extraordinarily low backgrounds and hence great depths. Massive neutrinos may even play a role in the origin of the matter-antimatter asymmetry critical to the existence of ordinary matter in the universe.
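The significance of a 0.01 eV reach can be checked with one line of arithmetic: even if the lightest neutrino were massless, the state responsible for atmospheric oscillations would still have a mass of at least √Δm². The sketch assumes Δm² ≈ 2.5 × 10⁻³ eV² (an illustrative value, not from the text) and representative sensitivities for the three experimental stages described above; it ignores mixing-matrix factors and possible cancellations that affect the effective mass measured in double beta decay.

```python
import math

# Assumed representative atmospheric mass-squared splitting, in eV^2.
DELTA_M2_ATM_EV2 = 2.5e-3

# Assumed representative sensitivities (eV) for the stages described in the text.
SENSITIVITIES_EV = {
    "tritium beta decay end point": 2.0,
    "current double beta decay searches": 0.3,
    "proposed double beta decay searches": 0.01,
}


def atmospheric_mass_floor_ev(delta_m2_ev2: float = DELTA_M2_ATM_EV2) -> float:
    """Smallest mass of the heavier state if its partner is massless: sqrt(dm^2)."""
    return math.sqrt(delta_m2_ev2)


if __name__ == "__main__":
    floor = atmospheric_mass_floor_ev()
    print(f"atmospheric mass floor ~ {floor:.3f} eV")
    for name, reach in SENSITIVITIES_EV.items():
        verdict = "reaches" if reach <= floor else "does not reach"
        print(f"{name} ({reach} eV): {verdict} this scale")
```

Only the proposed 0.01 eV sensitivity falls below the roughly 0.05 eV floor, which is why reaching that level matters.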

Massive neutrinos contribute about as much to the universe’s matter budget as do stars, but they are unlikely to constitute the bulk of the dark matter. The lightest supersymmetric particle (the neutralino) and the axion are more plausible candidates. Identifying the dark matter particle is a high priority. Neutralinos could be produced and discovered with accelerators, but their production may be beyond the capabilities of existing and planned accelerators. It is therefore essential to seek evidence for neutralinos in direct searches. Experiments in the United States are operating and actively seeking to detect the neutralinos or axions that may make up the dark matter in our own galaxy. No compelling signal has been found yet. More sensitive (second-generation) experiments, currently being assembled, will soon significantly increase our reach.

New techniques for neutralino searches under development show promise. These include high-purity germanium detectors, very massive liquid xenon detectors, and scaling up of the current cryogenic detector techniques. To extend sensitivity, potential techniques include very large low-pressure drift chambers, phonon asymmetry in isotopically pure crystals, or detection of the mechanical recoils. All these new possibilities are exciting but extremely challenging, and they will require sustained development.

Future neutralino searches will likely require greater depth. The irreducible background in such experiments is that of high-energy neutrons (produced by penetrating muons), which cause nuclear recoils in the detector that appear identical to neutralino scattering. Such a neutron background is already close to being a limiting factor in second-generation experiments at depths of 2,000 mwe. Neutralino search experiments will also benefit from common infrastructure at such a laboratory. Specific examples include a monitoring facility for the radioactive background, materials stored underground long enough for cosmic-ray-induced activation to decay, underground material purification and detector fabrication facilities, and shielded clean rooms with radon scrubbing for assembly of radioactivity-sensitive detector elements.

As far as we know, the proton is completely stable. However, there are reasons to believe that the proton is merely long lived: Nonconservation of baryon number is needed to explain the existence of matter in the universe, and extensions of the Standard Model that unify the forces and particles predict that baryon number is not conserved and also account for the recently discovered neutrino oscillations. The observation of proton decay would be evidence for a grander theory of the elementary particles and would help to explain the very existence of matter as well as its ultimate demise. The most sensitive experiment, Super-Kamiokande, has placed the best limit on the decay to a pion and a positron, 1.6 × 10³³ years. Proton decay to a neutrino and a kaon, a mode preferred by supersymmetric theories, is more poorly constrained because of higher backgrounds and lower efficiencies.
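The need for very large detectors follows from simple counting: the expected number of decays per year is roughly the number of protons divided by the lifetime. The sketch assumes a water detector with 10 protons per H₂O molecule and a fiducial mass of 22.5 kilotons, an assumed figure of roughly Super-Kamiokande's size; detection efficiency and backgrounds, which reduce the observable rate, are ignored.

```python
AVOGADRO_PER_MOL = 6.02214076e23
WATER_MOLAR_MASS_G_PER_MOL = 18.015
PROTONS_PER_WATER_MOLECULE = 10  # 1 in each hydrogen atom, 8 in the oxygen nucleus


def protons_in_water(mass_kilotons: float) -> float:
    """Number of protons (free and bound in oxygen) in a given mass of water."""
    grams = mass_kilotons * 1e9  # 1 kiloton = 1e6 kg = 1e9 g
    molecules = grams / WATER_MOLAR_MASS_G_PER_MOL * AVOGADRO_PER_MOL
    return molecules * PROTONS_PER_WATER_MOLECULE


def expected_decays_per_year(mass_kilotons: float, lifetime_years: float) -> float:
    """Decay rate N / tau, valid when the lifetime vastly exceeds the exposure time."""
    return protons_in_water(mass_kilotons) / lifetime_years


if __name__ == "__main__":
    # Assumed fiducial mass (kt); the lifetime is the quoted pion-positron limit.
    rate = expected_decays_per_year(22.5, 1.6e33)
    print(f"~{rate:.1f} decays per year at the current limit, before efficiency")
```

Even with perfect efficiency, a detector of this size would expect only a few decays per year at the current limit, so extending the accessible lifetime by a factor of about 10 calls for a correspondingly larger mass, as the proposal described next envisions.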

A proposal exists to extend the range of accessible proton lifetimes by a factor of about 10 by using the Super-Kamiokande technique and a larger mass detector. Techniques are also being studied that would provide improved efficiency and smaller backgrounds for the kaon decay mode. Though proton decay experiments typically require only modest depth, the envisaged large detector masses dictate substantial infrastructure, including some of the capabilities described above. Because a proton decay experiment does not necessarily need the greatest depth, the optimal site for such an experiment might be somewhere other than a deep underground laboratory.

An underground facility would provide capabilities to do more than address the scientific issues discussed above. For example, neutrinos created in supernovae could be observed directly. To date, neutrinos from a single supernova in 1987 have been seen. Observation of neutrinos from other supernovae could shed light on the nature of neutrinos, on the origin of the elements beyond the iron group, and on the characteristics of the supernova mechanism.

In many cases, with present knowledge and available technologies, our questions on dark matter, neutrino mass, and proton stability are ripe for major experimental breakthroughs. Such experiments must be in locations well isolated from cosmic rays. To be at the cutting edge, they will be large, expensive, and technically challenging, requiring substantial infrastructure.

Though many experiments require depths of only 4,000 mwe, accommodating future experiments over the next two decades may well require depths up to 6,000 mwe.
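Depths quoted in meters of water equivalent can be turned into physical depth by equating column densities: since water has a density of 1 g/cm³, a rock overburden of density ρ gives a depth of roughly mwe/ρ. The sketch assumes a uniform rock density of 2.7 g/cm³ and a flat overburden; real sites differ in both respects, and the function name is hypothetical.

```python
FEET_PER_METER = 3.28084


def rock_depth_m(mwe: float, rock_density_g_cm3: float = 2.7) -> float:
    """Vertical rock depth giving the same column density as `mwe` meters of water.

    Assumes a uniform rock density (default 2.7 g/cm^3, an assumed typical value)
    and a flat surface above the site.
    """
    return mwe / rock_density_g_cm3


if __name__ == "__main__":
    for mwe in (2000, 4000, 6000):
        depth = rock_depth_m(mwe)
        print(f"{mwe:>5} mwe ~ {depth:,.0f} m ({depth * FEET_PER_METER:,.0f} ft) of rock")
```

With this assumed density, 1 mwe corresponds to about 0.37 m (roughly 1.2 ft) of rock, in line with the rule of thumb in the Figure 7.1 caption, and 4,000 to 6,000 mwe corresponds to roughly 1.5 to 2.2 km of rock.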

To address the questions of neutrino mass, proton stability, and dark matter, there is a compelling need for a facility that will provide both shielding and infrastructure. An underground facility in North America is required if the United States is to play a significant leadership role in the next generation of underground experiments. Several proposals exist to provide a site in North America, including deeper levels at the Homestake mine, a site at San Jacinto, and expansion of the scientific area in the Sudbury mine. With appropriate commitment to infrastructure and experiments, any of these sites could provide the depths required for important future experiments.

EXPLORING THE BASIC LAWS OF PHYSICS FROM SPACE

Employing observations of astronomical objects for testing the laws of physics is not new: The orbit of Mercury around the Sun and the orbits of neutron stars in binary systems have been used to test theories of gravity. Astrophysicists are now recognizing that the strong gravitational fields and extreme densities and temperatures found in objects like black holes, neutron stars, and gamma-ray bursts allow us to test established laws of physics in new and unfamiliar regimes.

A key scientific component of NASA’s Beyond Einstein initiative is the use of space-based observatories to probe physics in extreme regimes not accessible on Earth. Several missions and development programs directly address a number of the 11 questions considered here. However, the Beyond Einstein initiative is broad and includes elements not directly relevant to the science the committee discusses in this report.

The Beyond Einstein missions relevant for this study include one already under construction (GLAST), two programs (Constellation-X and LISA) undergoing active technology development and detailed mission studies, and a number of advanced concepts and technology programs. Constellation-X and LISA were accorded high priority in the NRC’s Astronomy and Astrophysics in the New Millennium on the basis of their ability to answer key questions in astronomy. Here, we address their unique capabilities to study gravity, matter, and light in new regimes.

Constellation-X is a high-throughput x-ray observatory emphasizing high spectral resolution and broad band-pass. It utilizes lightweight, large-area x-ray optics and microcalorimeters as well as grating spectrometers to cover an energy range from 0.25 to 10 keV. A coaligned hard x-ray telescope extends spectral coverage to 60 keV. It will improve sensitivity by a factor of 25–100 relative to current high-spectral-resolution x-ray instruments.

Constellation-X will measure the line shapes and time variations of spectral lines, particularly iron K-fluorescence, produced when x rays illuminate dense material accreting onto massive black holes, thus probing the space-time geometry outside the hole to within a few gravitational radii. Current measurements of the broadening of x-ray lines emitted near black holes show variable Doppler shifts and gravitational redshifts, providing evidence that at least a few are spinning. Much more sensitive observations of line shapes with Constellation-X will measure the actual black-hole spin rates. Constellation-X will also measure continuum flares and subsequent changes in line emission, providing data on the effect of gravity on time near a black hole and thereby testing the validity of general relativity in the strong gravity limit.

Additional tests of general relativity can be made by observing quasiperiodic oscillations (QPOs) of the x-ray flux emitted by matter falling onto neutron stars or black holes in galactic binaries. The modulations producing the QPOs almost certainly originate in regions of strong gravity. With its large collecting area, Constellation-X will provide essential new x-ray data, as could future missions specifically designed for high-time-resolution studies of bright sources.

In addition to having strong gravitational fields, collapsed stellar remnants produce densities even higher than in an atomic nucleus. Nuclei are held together by forces involving their constituent quarks and mediated by gluons. What happens at higher densities and temperatures is unknown. Accelerators such as RHIC may be able to generate momentary quark-gluon plasmas with a density 10 times that of nuclei to probe the state(s) of matter at extremely high energy density. Neutron stars (created in supernova explosions) have core densities that are almost certainly much higher than those of nuclei. The core temperature, however, is probably on the order of 10⁹ K, or ~1,000 times cooler than that expected for a quark-gluon plasma produced at an accelerator. Thus, neutron stars contain states of nuclear material that cannot be re-created on Earth.
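Comparing the two regimes is easier in energy units, using kT with Boltzmann's constant. The sketch converts the quoted 10⁹ K core temperature to keV and, for comparison, converts an assumed quark-gluon transition temperature of about 170 MeV (a representative value not given in the text) to kelvin.

```python
BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant, eV/K


def thermal_energy_ev(temperature_k: float) -> float:
    """Characteristic thermal energy kT, in eV."""
    return BOLTZMANN_EV_PER_K * temperature_k


def temperature_from_energy_k(energy_ev: float) -> float:
    """Temperature (K) whose kT equals the given energy."""
    return energy_ev / BOLTZMANN_EV_PER_K


if __name__ == "__main__":
    t_core = 1e9  # neutron star core temperature quoted in the text
    t_qgp = temperature_from_energy_k(170e6)  # assumed ~170 MeV transition scale
    print(f"neutron star core: kT ~ {thermal_energy_ev(t_core) / 1e3:.0f} keV")
    print(f"quark-gluon plasma: T ~ {t_qgp:.1e} K, about {t_qgp / t_core:,.0f} times hotter")
```

Under this assumption the ratio comes out at about two thousand, the same order of magnitude as the ~1,000 quoted above; the exact figure depends on the transition temperature assumed.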

Various models for the behavior of matter under such conditions make specific predictions for the relationship between neutron star mass and radius, as well as for the cooling rates of newly formed neutron stars. Some masses have been determined by measuring orbits of neutron stars in binary systems. Neutron star radii are sometimes inferred by spectral measurements of hot atmospheres formed after thermonuclear explosions (seen as x-ray bursts) on surfaces of accreting neutron stars; however, the inferred values can be systematically wrong if the x rays come from a hot spot on the surface or an extended atmosphere. X-ray continuum measurements have been used to estimate temperatures and radii for a few systems. Observations with Constellation-X can search for spectral lines (and absorption features) in bursting sources, in binary systems, and in isolated neutron star atmospheres. Offsets in the central wavelengths of the spectral features determine the gravitational redshift. Widths and strengths of lines measure rotation speed or pressure broadening near the neutron star surface. Such data can determine neutron star mass and radius and constrain the compressibility of “cold” nuclear matter, thereby providing keys to understanding its underlying composition and particle interactions or equation of state.
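How a line offset yields the gravitational redshift, and what it constrains, can be sketched with the Schwarzschild result for a non-rotating star, 1 + z = (1 - 2GM/Rc²)^(-1/2). The mass of 1.4 solar masses and the trial radii are assumed, illustrative values; rotation and atmospheric effects are ignored.

```python
import math

G = 6.674e-11  # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8  # speed of light, m/s
M_SUN = 1.989e30  # solar mass, kg


def surface_redshift(mass_solar: float, radius_km: float) -> float:
    """Gravitational redshift z of light leaving the surface of a non-rotating star.

    1 + z = (1 - 2GM / (R c^2))^(-1/2), from the Schwarzschild metric.
    """
    compactness = 2 * G * mass_solar * M_SUN / (radius_km * 1e3 * C**2)
    if compactness >= 1:
        raise ValueError("radius lies inside the Schwarzschild radius")
    return 1 / math.sqrt(1 - compactness) - 1


if __name__ == "__main__":
    for radius in (10.0, 12.0, 14.0):
        print(f"M = 1.4 Msun, R = {radius:.0f} km: z = {surface_redshift(1.4, radius):.3f}")
```

Because z depends only on M/R, a measured line offset fixes that ratio; an independent constraint, such as the surface gravity implied by pressure broadening, is needed to separate mass and radius.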

Another technique is to use x-ray observations of neutron stars of known ages to determine the cooling rates, which in turn depend on structure and processes in the stellar interior. There are only a handful of supernova remnants in our galaxy with a historically observed and recorded explosion. The Chandra X-ray Observatory is using its superb angular resolution to isolate young neutron stars amid the debris in several of these systems. The highly sensitive Constellation-X spectrometers will then be able to observe stars located by Chandra and determine the surface temperature and shape of the energy spectrum. These data can be compared with cooling models whose detailed predictions are sensitive to the composition and equation of state of the interior.

LISA is a gravitational-wave detector consisting of a triangular formation of three spacecraft separated by 5 million km. Laser beams transmitted between the spacecraft can measure minuscule changes in path length between reflecting “proof masses” located in each spacecraft. Such changes in path length can be caused by incident gravitational radiation. LISA is sensitive to low-frequency gravitational waves not detectable with the ground-based LIGO interferometer.
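The scale of these changes follows from the definition of strain, ΔL ≈ hL. The sketch takes the 5-million-km arm length from the text and an assumed, illustrative strain amplitude of 10⁻²¹; it is an order-of-magnitude estimate that ignores antenna orientation factors.

```python
C_M_S = 2.998e8  # speed of light, m/s
ARM_LENGTH_M = 5e9  # 5 million km, from the text


def path_change_m(strain: float, arm_length_m: float = ARM_LENGTH_M) -> float:
    """Order-of-magnitude arm-length change, delta_L ~ h * L, for strain amplitude h."""
    return strain * arm_length_m


if __name__ == "__main__":
    h = 1e-21  # assumed, illustrative strain amplitude
    print(f"one-way light travel time per arm: {ARM_LENGTH_M / C_M_S:.1f} s")
    print(f"delta_L ~ {path_change_m(h):.0e} m for h = {h:.0e}")
```

The result, about 5 picometers over 5 million km, shows why a precise laser readout is needed; the one-way light travel time along each arm is about 17 s.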

LISA is designed to detect gravitational waves released by the coalescence of two supermassive black holes out to very high redshifts. In addition, it can measure the evolution of the gravitational-wave signal emitted as neutron stars or white dwarfs spiral into the supermassive black holes in the nuclei of galaxies. The details of the waveforms are sensitive to general relativistic effects, so they can be used to probe gravity in the strong-field limit. Specifically, as a compact star is drawn toward a spinning supermassive black hole, LISA can measure the spin-induced precession of its orbital plane, providing a detailed mapping of space-time. Theoretical and numerical calculations of the predicted orbits and waveforms using general relativity will be critical to any interpretation of data from these observations.
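Why supermassive black holes fall in LISA's band while stellar-mass binaries do not can be estimated from the gravitational-wave frequency near the innermost stable circular orbit (ISCO) of a non-spinning mass M, f ≈ c³/(6^(3/2) πGM), which is twice the orbital frequency at r = 6GM/c². This test-particle estimate is only rough for comparable-mass binaries, and the masses used are illustrative assumptions.

```python
import math

G = 6.674e-11  # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8  # speed of light, m/s
M_SUN = 1.989e30  # solar mass, kg


def gw_frequency_at_isco_hz(total_mass_solar: float) -> float:
    """Gravitational-wave frequency (twice the orbital frequency) at the innermost
    stable circular orbit, r = 6GM/c^2, of a non-spinning mass M:
    f = c^3 / (6^(3/2) * pi * G * M)."""
    mass_kg = total_mass_solar * M_SUN
    return C**3 / (6**1.5 * math.pi * G * mass_kg)


if __name__ == "__main__":
    for label, mass in (("two neutron stars", 2.8),
                        ("black hole pair", 1e5),
                        ("supermassive black hole pair", 1e6)):
        print(f"{label} (M = {mass:g} Msun): f ~ {gw_frequency_at_isco_hz(mass):.2e} Hz")
```

The estimate scales as 1/M: about 4 mHz for 10⁶ solar masses, within the roughly 10⁻⁴ to 10⁻¹ Hz band usually quoted for LISA, versus well over a kilohertz for a pair of neutron stars, the regime of ground-based detectors.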

Studies of strong gravity and matter in the extremes of temperature and density are identified goals of Constellation-X and LISA. Beyond these two missions there are many other fascinating opportunities. Many of these will require significant technology development to realize their objectives. For example, a space-based x-ray interferometer could directly image gas orbiting a black hole, tracing the gravitational field down to the event horizon. A sensitive x-ray polarimeter could probe QED in magnetic fields exceeding 10¹⁴ gauss, well above the QED critical field, where quantum effects become important. Under such conditions QED predicts a strong dependence of polarization on x-ray wavelength, so the observations would provide a sensitive test of the predictions of QED. Sensitive hard x-ray and gamma-ray spectrometers could trace radioactive elements produced in supernova explosions, providing important constraints on theoretical models of the explosion and on the sites of nucleosynthesis. NASA is studying these advanced observatories to carry its program of probing the basic laws of physics into the future.
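The QED critical field is the field at which an electron's cyclotron energy ħeB/mₑ equals its rest energy mₑc², giving B_c = mₑ²c²/(eħ) in SI units. The sketch evaluates it with CODATA constants and compares it with the 10¹⁴ gauss quoted above; the function name is hypothetical.

```python
# CODATA 2018 values, SI units.
ELECTRON_MASS_KG = 9.1093837015e-31
SPEED_OF_LIGHT_M_S = 299_792_458.0
ELEMENTARY_CHARGE_C = 1.602176634e-19
HBAR_J_S = 1.054571817e-34
GAUSS_PER_TESLA = 1e4


def qed_critical_field_gauss() -> float:
    """Field where hbar * (e B / m_e) equals m_e c^2, i.e. B_c = m_e^2 c^2 / (e hbar)."""
    b_tesla = ELECTRON_MASS_KG**2 * SPEED_OF_LIGHT_M_S**2 / (ELEMENTARY_CHARGE_C * HBAR_J_S)
    return b_tesla * GAUSS_PER_TESLA


if __name__ == "__main__":
    b_c = qed_critical_field_gauss()
    print(f"B_c ~ {b_c:.2e} G; 1e14 G is ~{1e14 / b_c:.1f} B_c")
```

The critical field comes out near 4.4 × 10¹³ gauss, so the fields above 10¹⁴ gauss mentioned in the text are a few times stronger.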

Among these future missions, an exciting prospect particularly relevant to this report is an advanced, space-based gravitational-wave detector designed to detect relic gravitational waves from the early universe. Inflation and phase transitions arising from symmetry breaking associated with electroweak, supersymmetry, or QCD phenomena leave their signatures in gravitational waves. Detection of these gravitational-wave signals could directly reveal how the forces, once unified, split off into the four separate forces we know today. LISA will probe for these signals down to the level where the background from galactic white-dwarf binaries dominates. A mission with multiple pairs of interferometers, designed to reject the galactic background and having a hundred- or thousandfold higher sensitivity than LISA, will be required to reach the levels predicted by theory.

To achieve this very challenging goal, substantial technology development will be needed, aimed at optical readout for the gravitational reference sensors, high-power space-qualified lasers, laser stabilization systems, inexpensive, lightweight 3-meter-class optics, and improved micronewton thrusters. Equally important is the development of strategies and techniques for dealing with the astrophysical gravitational-wave signals that could hide the signal from the early universe. It is likely that support from more than one agency will be needed for both the advanced technical developments and the required computational and theoretical analyses.

UNDERSTANDING NATURE’S HIGHEST-ENERGY PARTICLES

The observation of particles in the universe with unexpectedly high energy raises several basic questions. What are the particles? Where do they come from? How did they achieve such high energies—many orders of magnitude higher than the output of the most powerful laboratory accelerators? Acceleration of particles to high energy is a characteristic feature of many energetic astrophysical sources, from solar flares and interplanetary shocks to galactic supernova explosions and distant active galaxies powered by accretion onto massive black holes. The signature of cosmic accelerators is that the accelerated electrons, protons, and heavier ions have a distribution of energies that extends far beyond the thermal distribution of particles in the source. It is the high energy that makes this population of naturally occurring particles of great interest, together with the fact that their energy density appears to be comparable to that of thermal gas and magnetic fields in their sources.

Primary cosmic-ray electrons, protons, and nuclei can produce secondary photons and neutrinos in or near the sources, which propagate over large distances in the universe undeflected by the magnetic fields that obscure the origins of their charged progenitors. Photons are produced by electrons, protons, and nuclei, while neutrinos are produced only by protons and nuclei. The proportions of the various types of particles, secondary and primary, thus reflect the nature of their sources. A unified approach to the problem therefore requires observations of the gamma rays and neutrinos as well as the cosmic rays.

Programs for more extensive measurements of high-energy gamma rays and the search for high-energy neutrinos are moving forward. They include STACEE, GLAST, and VERITAS for observation of gamma rays and IceCube to open the neutrino astronomy window. GLAST will be a space mission covering the gamma-ray energy range from 30 MeV to 300 GeV. VERITAS, a ground-based gamma-ray telescope, covers a complementary range from 100 GeV to 10 TeV. AMANDA is an operating neutrino telescope array deep in the south polar ice with an instrumented volume of about 2 percent of a cubic kilometer. IceCube is the planned cubic-kilometer follow-on to AMANDA. STACEE is currently in operation; GLAST, VERITAS, and IceCube are scheduled for operation and data taking in the second half of this decade.

One promising source of high-energy particles of all types is the distant gamma-ray burst. NASA’s HETE-2 satellite is currently monitoring the x-ray sky for gamma-ray bursts and detects about one burst every 2 weeks; the SWIFT satellite, currently scheduled for launch in 2004, should detect about one burst per day. EXIST is a wide-field-of-view instrument under study for launch near the end of the decade. All of these satellites will be able to provide the transient gamma-ray-burst information needed to search for neutrino coincidences.

The primary cosmic-ray spectrum as we observe it at Earth extends from mildly relativistic energies, where the kinetic energy is comparable to the rest mass of the particles, up to at least 10²⁰ eV, a range of 11 orders of magnitude. Higher-energy particles are rarer: their rate falls by about a factor of 50 for each factor of 10 increase in energy. The abundant low-energy cosmic rays are accessible to relatively small, highly instrumented detectors that can be carried above the atmosphere to make precise measurements of the energy, charge, and mass of the particles. At higher energy, bigger (and therefore coarser) detectors are needed, and they must be exposed for long periods of time to collect a significant number of particles. At the highest energies, the intensity is on the order of one particle per square kilometer per century, so an exposure (collecting area multiplied by observing time) of several thousand square-kilometer-years is needed.
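The numbers in this paragraph can be made concrete. Reading the factor of 50 per decade as a drop in the integral rate, it corresponds to a spectrum N(>E) ∝ E^(−γ) with γ = log₁₀ 50 ≈ 1.7, and an intensity of one particle per square kilometer per century is 0.01 particles per km² per year. The Python sketch below uses only these round numbers from the text; the helper names are hypothetical.

```python
import math

# Round numbers taken from the text; treat them as order-of-magnitude inputs.
FALLOFF_PER_DECADE = 50.0               # drop in integral rate per factor of 10 in energy
FLUX_AT_1E20_PER_KM2_YR = 1.0 / 100.0   # ~1 particle per km^2 per century


def integral_index(falloff_per_decade: float = FALLOFF_PER_DECADE) -> float:
    """Spectral index gamma of N(>E) ~ E**(-gamma) implied by the falloff."""
    return math.log10(falloff_per_decade)


def rate_ratio(e_low_ev: float, e_high_ev: float) -> float:
    """Integral rate at e_high_ev relative to the rate at e_low_ev."""
    return (e_high_ev / e_low_ev) ** (-integral_index())


def expected_events(exposure_km2_yr: float,
                    flux_per_km2_yr: float = FLUX_AT_1E20_PER_KM2_YR) -> float:
    """Mean number of events for a given exposure (area x time)."""
    return flux_per_km2_yr * exposure_km2_yr


if __name__ == "__main__":
    print(f"integral index ~ {integral_index():.2f}")
    print(f"rate(1e20)/rate(1e19) ~ {rate_ratio(1e19, 1e20):.3f}")
    for exposure in (100.0, 1000.0, 5000.0):
        print(f"{exposure:6.0f} km^2 yr -> {expected_events(exposure):.0f} events")
```

An exposure of 100 km²·yr yields only about one event near 10²⁰ eV on average, while a few tens of events require thousands of km²·yr, consistent with the scale of the 3,000-square-kilometer array discussed below.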

In 1962 the MIT Volcano Ranch air shower array, covering 10 square kilometers, recorded an event with an energy estimated at 10²⁰ eV. Even now, this remains one of the dozen or so highest-energy particles ever detected. Immediately after the discovery of the CMB in 1965, the potential importance of this event and its energy assignment was realized. If the sources of the highest-energy cosmic rays are distributed uniformly throughout the universe, perhaps associated with quasars or other extremely powerful sources, then there should be a cutoff in the spectrum. This follows from the fact that a 10²⁰ eV proton has enough energy to produce a pion when it interacts with a photon of the universal 2.7 K thermal background radiation. Further, such interactions occur often enough that protons from distant extragalactic sources would lose a significant fraction of their energy in this way before reaching Earth. Thus sources of 10²⁰ eV particles would have to be relatively nearby, comparable in distance to the nearest active galaxies and quasars, for example. Since such nearby sources have not yet been identified, the problem remains unsolved, and it becomes crucial to obtain more data with improved measurement of energy.
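The kinematics behind this cutoff can be checked with a short calculation. For a head-on collision between an ultrarelativistic proton of energy E and a photon of energy ε, the invariant mass squared is s ≈ m_p² + 4Eε (natural units), and the reaction p + γ → p + π⁰ becomes possible once s ≥ (m_p + m_π)². Solving gives E_th = m_π(2m_p + m_π)/(4ε). The Python sketch below evaluates this for a photon of the mean CMB energy (about 2.70 kT); it is an illustration with hypothetical function names and ignores the spread in photon energies and collision angles.

```python
# Rest-mass energies and Boltzmann constant in eV units.
PROTON_MASS_EV = 938.272e6
NEUTRAL_PION_MASS_EV = 134.977e6
BOLTZMANN_EV_PER_K = 8.617333e-5
CMB_TEMPERATURE_K = 2.725
MEAN_PHOTON_ENERGY_PER_KT = 2.701  # mean blackbody photon energy in units of kT


def photopion_threshold_ev(photon_energy_ev: float) -> float:
    """Proton energy for p + gamma -> p + pi0 in a head-on collision.

    From s = m_p^2 + 4*E*eps >= (m_p + m_pi)^2 (natural units, E >> m_p):
    E_th = m_pi * (2*m_p + m_pi) / (4*eps).
    """
    m_p, m_pi = PROTON_MASS_EV, NEUTRAL_PION_MASS_EV
    return m_pi * (2.0 * m_p + m_pi) / (4.0 * photon_energy_ev)


def mean_cmb_photon_energy_ev(temperature_k: float = CMB_TEMPERATURE_K) -> float:
    """Mean photon energy of a blackbody at the given temperature."""
    return MEAN_PHOTON_ENERGY_PER_KT * BOLTZMANN_EV_PER_K * temperature_k


if __name__ == "__main__":
    eps = mean_cmb_photon_energy_ev()
    print(f"mean CMB photon energy ~ {eps:.2e} eV")
    print(f"head-on threshold ~ {photopion_threshold_ev(eps):.1e} eV")
```

The threshold comes out at about 1 × 10²⁰ eV, matching the energy scale in the text. Because the background spectrum has a high-energy tail and not all collisions are head-on, the suppression in the observed spectrum is expected to set in somewhat below this value.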

The mystery of ultrahigh-energy cosmic rays has generated much speculation about their origin. Possible sites for cosmic accelerators include highly magnetized neutron stars, million-solar-mass black holes accreting matter at the centers of active galaxies, and jets from gamma-ray burst sources, possibly involving stellar-mass black holes. A problem for all of these hypothetical mechanisms is that calculations find it difficult to reach energies as high as 10²⁰ eV per particle. Another possibility that has received serious attention is that the observed particles may instead be decay products of massive relics from the early universe (topological defects or very massive, unstable particles), so that no acceleration is needed. Even more exotic is the suggestion that Lorentz invariance is violated at very high energy in such a way that energy loss on the microwave background becomes ineffective, but this still leaves open the question of how such high energy was achieved in the first place.
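One standard way to see why acceleration to 10²⁰ eV is hard, not spelled out in the text and used here only as an illustration, is the Hillas confinement estimate: a particle of charge Ze accelerated in a region of size R with magnetic field B can gain at most about E_max ≈ Z e β c B R, where βc is the characteristic speed of the shock or scattering centers. The Python sketch below, with hypothetical function names, evaluates this bound and the field a region of given size would need to reach 10²⁰ eV.

```python
SPEED_OF_LIGHT_M_S = 2.99792458e8
TESLA_PER_GAUSS = 1.0e-4
METRES_PER_KPC = 3.0857e19


def hillas_max_energy_ev(z: int, beta: float, b_gauss: float, size_m: float) -> float:
    """Hillas bound E_max ~ Z * e * beta * c * B * R, expressed in eV.

    With B in tesla and R in metres, Z*beta*c*B*R is already the energy in eV,
    because dividing E = Z*e*beta*c*B*R (joules) by e gives electron-volts.
    """
    return z * beta * SPEED_OF_LIGHT_M_S * (b_gauss * TESLA_PER_GAUSS) * size_m


def field_needed_gauss(target_ev: float, z: int, beta: float, size_m: float) -> float:
    """Magnetic field (gauss) required for the Hillas bound to reach target_ev."""
    return target_ev / (z * beta * SPEED_OF_LIGHT_M_S * size_m) / TESLA_PER_GAUSS


if __name__ == "__main__":
    # Illustrative values only: protons, beta = 1, 1 microgauss over 1 kpc.
    print(f"E_max ~ {hillas_max_energy_ev(1, 1.0, 1e-6, METRES_PER_KPC):.1e} eV")
    # Field a 1 kpc region would need for protons to reach 1e20 eV with beta = 1.
    print(f"B needed ~ {field_needed_gauss(1e20, 1, 1.0, METRES_PER_KPC):.1e} G")
```

A microgauss field over a kiloparsec gives only about 10¹⁸ eV for protons even with β = 1; reaching 10²⁰ eV requires roughly a hundredfold larger product of field and size, before any energy losses are taken into account.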

The problem of the origin of the highest-energy cosmic rays has been the focus of a series of increasingly large air shower experiments of two types. One approach follows the original MIT design: a ground array of widely separated detectors that take a sparse sample of the particles in the shower front, typically far from its core. The detectors are simple and can be operated continuously, but such an array samples the shower at just one depth and only at distances far from the shower core, where less than 1 percent of the remaining cascade is found. Assigning an energy to each event therefore requires detailed and complicated calculations and is subject to fluctuations. The largest ground array at present is the 100-km² Akeno Giant Air Shower Array (AGASA) in Japan.

The second, complementary method uses the atmosphere as a calorimeter to find the energy of each event. Compact arrays of optical detectors look out to large distances and record the trail of fluorescence light generated by ionization of the atmosphere along the shower core. This has the advantage that most of the energy deposition is observed. A disadvantage is that the method can operate only on clear, dark nights. In addition, inferring the absolute energy from the received signal depends crucially on knowing the clarity of the atmosphere at the time of each event. The High Resolution Fly’s Eye Experiment (Hi-Res) in Utah uses this approach.

The most extensive data sets at present are those of AGASA and monocular Hi-Res, but a definitive picture of the ultrahigh-energy spectrum has yet to emerge. The problem is a combination of limited statistics and uncertainties in the energy assignment. The Hi-Res experiment can now operate in stereo, or binocular, mode. In this mode it should gradually accumulate more data with better determination of direction and energy, because viewing each shower with two “eyes” allows a more accurate geometrical reconstruction of the shower axis.

A new experiment that combines both techniques, called the Auger Project, is under construction in Argentina. It is a 3,000-square-kilometer ground array that will include three fluorescence light detectors, so it will be able to cross-calibrate the two methods of measuring energy. Because of its size it will also accumulate significantly more events in the 10²⁰ eV energy region than previous experiments. For these reasons, completion of this detector is crucial. The partner countries of the international Auger Project must pool their resources to finish construction and bring the full array into operation by mid-decade. Eventually, pending the outcome of this investigation, it may be desirable to push to still higher energies with other detectors. A large ground-based detector complex in the northern hemisphere is being discussed, as is the possibility of observing a very large volume of atmosphere by looking down from space.

EXPLORING EXTREME PHYSICS IN THE LABORATORY

As discussed above, one scientific quest that lies solidly at the intersection of physics and astronomy is to extend the domain of physical conditions under which the fundamental laws have been tested and applied. In some cases we are providing checks on theories where we expect to find no surprises (although the history of physics warns us not to be too confident). In other instances, for example, in the study of high-density matter, heavy-element production, and ultrahigh-energy particle acceleration, we really do not understand how matter behaves and need experimental input to guide our physics. Having described how astronomical studies are greatly expanding our scientific horizons, the committee now turns to the other side of the coin: how a complementary program of laboratory investigation in high-energy-density physics can push the application of physical principles into completely new territory.

The facilities that are needed for this research—lasers, magnetic confinement devices, and accelerators—have largely been (or are being) constructed for research in particle and nuclear physics, plasma fusion, and defense science. The challenge is to foster the growth of a new community of scientists who will creatively adapt these facilities for high-energy-density science. The NRC is engaged in a separate study on this field.3

Existing and planned laser facilities, notably the National Ignition Facility, can create transient pressures as high as a trillion atmospheres. They can be used to create “mini fireballs” that expand into the surrounding plasma and may generate strong magnetic fields and form relativistic shock fronts. The temperature may be so high in this plasma that the protons, which carry the positive charge in conventional plasmas, can be replaced by positrons, just as is thought to occur in quasars, pulsars, and gamma-ray bursts. Another important use of lasers is to measure the wavelengths and strengths of the ultraviolet and x-ray atomic emission lines that have already been observed in astronomical sources by orbiting telescopes.

Magnetic confinement of hydrogen at temperatures approaching a hundred million degrees is one technique for causing nuclear fusion reactions in order to generate power. In addition, several experimental facilities have been constructed in which it is possible to squeeze plasma to tiny volumes and large pressures and to study how it behaves. In particular, it should be possible to study turbulence and magnetic reconnection, the process in which magnetic field lines break and exchange partners. Both are of central importance for understanding cosmic plasmas.

An example of a particle accelerator with appropriate capabilities is the Stanford Linear Accelerator Center, which creates intense beams of electrons with energies up to 50 GeV and micron-size cross sections. These beams can be used to create extremely energetic plasmas by focusing them onto solid targets. In addition, by “wiggling” electron beams using strong magnets, it is possible to create intense bursts of radiation across the spectrum, similar to the emission thought to be produced in astrophysical environments. Meanwhile, the RHIC facility at Brookhaven National Laboratory in New York is starting to delve into the world of extreme physics by colliding heavy ions with each other at relativistic speeds; the debris from these collisions is analyzed in detectors like those used in particle physics.

Although it is possible to create some of the conditions of pressure and temperature found in cosmic sources, it will never be possible to reproduce these environments completely. What we can do, and what is far more valuable, is to use high-energy-density experiments to determine general rules for the behavior of matter. We want to better understand the rules that govern the rearrangement of magnetic structures; how fast electrons are heated and conduct energy in the presence of collective plasma wave modes; how the pressure changes with density, which is relevant to interpreting observations of planets, stars, and supernovae; and how these processes dictate the behavior of shocks, magnetic reconnection, and turbulence. The challenge is to understand all of this physics in a generalizable, device-independent fashion so that our understanding can be extrapolated and applied to astrophysical problems. It is quite likely that this will be achieved by deriving universal scaling laws like those that are widely used in fluid dynamical investigations.