4

Technology Transition and Program Management: Bridging the Gap Between Research and Impact

Researchers, subject-matter experts, research program managers, and operational line managers participating in the workshops convened by the committee all pointed to the challenge of achieving actual impact on mission performance from research programs. Even under ideal conditions—when the research results are peer-recognized to be of high quality, when the user agency has a solid understanding of operational requirements, and when there is strong institutional incentive on both sides to accomplish the transition—success in bridging the gap between the creation of promising research results and the realization of effective impact may nonetheless be elusive. This chapter examines how systematic approaches can help research managers and user organizations collaborate to bridge the gap between conception and use.

The gap-bridging metaphor should not be taken to imply that the path from concept to product is linear or predictable. Indeed, many major IT innovations have followed paths with duration, complexity, and diversity of players rarely foreseen by the original innovators. This phenomenon was well illustrated in the 1995 CSTB report on the High Performance Computing and Communications Initiative,1 which offered a

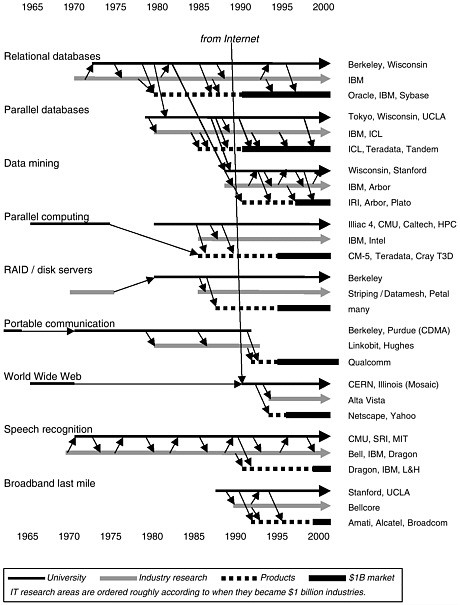

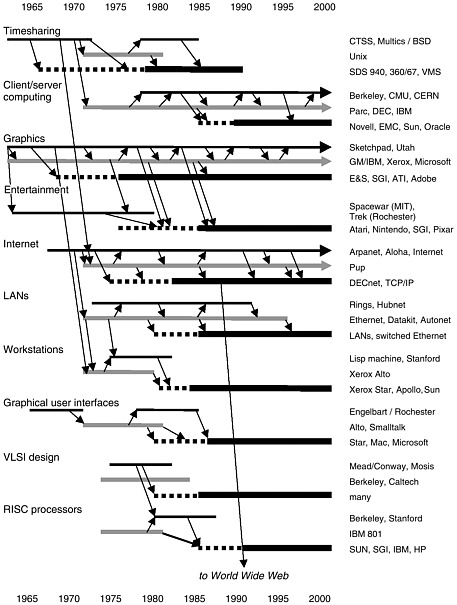

number of examples of well-established multibillion-dollar IT businesses emerging only after many years of research and development, with shifting roles of government-sponsored research, industry research, and industry development. Business areas considered in that report, nearly all of which continue to remain pivotal in the IT economy, include graphics and windows, redundant arrays of inexpensive disks (RAID), very-large-scale integrated circuit (VLSI) design, and reduced instruction set computing (RISC) processors (for an updated display of the links between research and major IT industries, see Figure 4.1). In each case, there was sustained government research participation, including both formative exploratory research and more focused attention to particular technical challenges. (Of course, not all research has this sort of outcome—some successful research has far-reaching socioeconomic or mission impact but nonetheless does not lead to billion-dollar industries furnishing products or services.)

From workshop discussions and other interactions of committee members with research-program managers, it is abundantly clear that government agencies that sponsor major IT research programs—such as NSF, DARPA and military service laboratories, NASA, DOE, and the National Institutes of Health (NIH)—have all evolved diverse cultures of program-management practice. Programs are managed according to management models and practices that are embedded in organizational culture and that may be highly evolved. For example, the DOD has a rich structure of technology-transition activities involving diverse contractors that enable it to hasten the maturing of critical defense technologies along the path from laboratory to operational deployment. This structure can be understood, for example, as a systematic addressing of the many risk issues (see the section “Dimensions of Risk,” below) that arise along this path. There are significant differences in management style and approach among the various mission agencies and programs. (Box 4.1 describes two agency programs in more detail.)

In traditional basic research programs, research teams often operate independently of any particular end user. The NSF’s current Digital Government program, however, represents an important first step in having researchers collaborate directly with potential end users. This kind of direct engagement enables researchers to understand requirements better, validate concepts earlier, and accelerate transition into practice. It also enables potential users to anticipate—and influence—emerging technologies. But regardless of whether an end user is present or not, transition issues must be explicitly addressed, and obtaining an impact in practice requires careful and flexible management by many parties. The teaming structure of the NSF Digital Government program can accelerate this process, but it does not replace it. The right combination of careful management and favorable and even fortuitous circumstances may be needed in the operating environment of the recipient organization and in the markets for particular underlying technologies. Complicating this, the time scales can be very long—a decade or more in many cases—possibly well beyond the life span of individual research projects.

FIGURE 4.1 Examples of government-sponsored IT research and development in the creation of commercial products and industries. SOURCE: 2002 update by the Computer Science and Telecommunications Board of a figure originally published in Computer Science and Telecommunications Board, National Research

|

Box 4.1

Defense Advanced Research Projects Agency

For many years there has been tacit acceptance within the R&D community of DARPA’s primary national role in certain IT areas critical to DOD in the long term. These include packet-switched networking, distributed computing, machine intelligence, software-reliability technology, and computer security. In each of these areas, program managers crafted engagements linking diverse participants in the research community, industry, and DOD according to principles that had been evolved through practice over several decades. This national leadership role enabled DOD to command the attention of the computer science research community, and indeed many computer scientists in universities have come to describe their research in military terms such as survivability, decision support, and situation awareness.

Since the early 1990s, the strategic environment has been changing rapidly, prompted by the emergence of the private sector Internet, increasingly widespread computer-security challenges, greater military emphasis on asymmetric and coalition warfighting, broadening of the base of strong IT research universities, and a shift in the locus of innovation for many information technologies. Research and acquisition managers have attempted to adapt their strategies in response to these changes (with varying degrees of success).

National Science Foundation

At NSF, the principal criteria for research support are scientific quality and long-term socioeconomic impact. Perhaps unique to NSF is the emphasis on development of scientific foundations and a long time horizon, though other agencies, such as the DOD’s Office of Naval Research and the Army Research Office, also accept a long time horizon when they consider a potential mission impact.

In recent years, NSF has increased its emphasis on broad socioeconomic impact yet further, coinciding with the acknowledgment in the policy and legislative community of the emerging pivotal role of information technology in the national infrastructure and in society broadly. This is also a reflection of the increased attention to measuring the effectiveness of government programs. At the same time that it seeks to better identify the socioeconomic impacts of the research it sponsors, NSF continues to recognize that breakthroughs in “curiosity-driven” basic research in fundamental areas can have unexpected impacts in practice, and it maintains a broad portfolio of basic research in information technology subjects. |

The NSF’s Digital Government program illustrates that there is a natural alliance between researchers and end users. Both groups seek to identify new ways to address fundamental challenges in capability by stimulating new concepts and accelerating their definition and development. Researchers gain access to challenging problems and a real-world context in which to explore them. End users who participate in this process gain insights into technology opportunities and thus are better able to influence their future and accelerate the introduction of new ideas into their production systems. Despite this alliance, however, the transition from new concepts and prototypes to production systems and market acceptance can be lengthy and difficult. Below are examples of questions that might be raised by a potential technology adopter in considering a promising new information technology. These questions, which apply regardless of whether the technology has been developed within the government, at a university, or by a commercial contractor, illustrate how the challenges of transition go beyond identifying functional requirements and solving technical problems:

- Can the technology be effectively integrated into existing operations and procedures? Can it be packaged to interconnect with related systems? Does it comply with the interface standards that are adopted within the organization or by the integrators responsible for other major systems used by the organization?

- Will it be a cost-effective way of meeting requirements—in terms of both initial acquisition and total life-cycle costs?

- Are there fundamental difficulties in adjusting the human interfaces in a concept-demonstration system to conform better with organizational culture and practices?

- Can the new technology scale up appropriately to expected and potential operational levels? What are the dimensions of scaling, and how can testing and evaluation be conducted to understand the limitations of capacity and performance of the concept?

- What re-engineering is needed with respect to the prototype concept-demonstration system? Are certain capacity limits expediently “hard-wired” into the prototype, for example, in order to simplify implementation?

- What are the dimensions of dependability and robustness that must be considered in adopting the prototype system? Will it respond appropriately, for example, to operator errors or misconfiguration?

- Can the prototype system handle the full range of categories of inputs, or does it handle only a representative set? Are there hidden scaling challenges?

- Has the prototype been designed to support possible trajectories of evolution in the requirements it is addressing, in the systems environment in which it is situated, in the underlying technologies, and in the culture and mission of the organization in which it is used?

- Who will be available to respond to questions and provide technical support when problems arise or when adaptation is needed? What assurances can be provided to enable an organizational commitment to this new technology?

From the standpoint of the researchers exploring a new algorithm or systems concept, these questions may appear to be significantly less interesting than the more fundamental issues related to overall feasibility of the technical approach. This amounts to a division of both expertise and responsibility: researchers and their sponsors may focus their initial effort on the overall “concept” or the new algorithmic (or other) idea. But part of the price of success is that once these core conceptual issues are addressed, a diversity of other kinds of expertise must be applied to address adoption-related challenges such as those enumerated above.

A successful transition process must address a wide range of concerns beyond the “merely” technical—including economic and market issues, intellectual property and nonappropriability, standards, the “tipping” of the market in favor of a particular product or standard, organizational issues, and engineering and usability issues. At the committee’s workshops, in the extensive discussions about the development, evaluation, and transition of new technologies to practice, participants particularly noted the risks and challenges of scaling and integrating new capabilities. But perhaps the most important lesson learned from those discussions is that transition cannot occur without broad knowledge of the technological and operational environments, including the extent to which those environments are affected by changing market conditions, standards, and other external forces.

STRATEGIES AND MODELS FOR PROGRAM MANAGEMENT

How can researchers, research managers, acquisition managers, and users develop successful strategies in this complex and changing environment? The sections below present an approach that is based on three principal elements:

- Consideration and analysis of past programs, including both positive and negative experiences;

- Use of program-management models, which facilitate comparative consideration of alternative approaches; and

- Identification of proven program-management strategies by which management decisions can maximize the opportunity for impact with acceptable risk and time horizons.

This chapter discusses two models2 in conventional use in program management. Also enumerated are several examples of strategies employed by program managers in implementing programs to achieve particular kinds of goals. (The emphasis here is on long-term programs seeking a focused impact; approaches to managing purely exploratory programs are not within the scope of this document.)

Models play an informal but essential role in developing and managing successful long-term research programs. Little real data on research-program management exist, which means that managers generally plan and decide largely on the basis of personal experience and organizational culture. The models identified here are intended to organize and express concepts that are generally already present in the culture of program management in various agencies and organizations. The following two models are widely used, often in a tacit way, by research-program managers in articulating, developing, and managing their programs.

- The supply chain, or technology food chain, is an identification of stakeholders—and the relationships between them—involved in transforming a new technical idea into operational reality (see Box 4.2). Because this model is useful in understanding the respective roles and interests of stakeholders in the process of developing and delivering capability, it can help guide a program manager in identifying potential partners in an innovation process.

- The dimensions of risk are categories of engineering or market strategy issues that a program manager must address as a new technology or concept moves through the supply chain from an initial innovative idea to deployment in systems. Engineering issues include, for example, the scalability of a component or system, usability and adoption issues, and robustness of internal interfaces. Market strategy issues include, for example, whether to focus on adaptation of existing components to provide some new capability or to stimulate a new component market that packages the capability. The model for budget management used in the DOD for research, development, test, and evaluation addresses the dimensions of risk explicitly by categorizing funds used for programs (e.g., 6.1, 6.2, 6.3a, and 6.5) according to the character of the risk being addressed.

|

Box 4.2 It is well understood that innovation does not follow a neat, linear progression from research laboratory to delivered product; rather, innovation involves complex flows of ideas and people among the various organizational participants. Nonetheless, it is often useful to view IT research, development, and application as constituting an innovation pipeline that includes the following:

This is a normative model for incorporating new technologies and practices into government systems and for improving services. As is discussed in this chapter, the interests and incentives of the actors in this process are not in reality always well aligned, which leads to a set of challenges for mission agencies and research-program managers. |

The following sections use these two models to elucidate a number of program-management strategies and tactics.

LEVERAGE IN THE SUPPLY CHAIN MODEL

Government IT systems are acquired in several different ways. If off-the-shelf components (see Box 4.3) such as operating systems, network components, and office-productivity tools can be used, they are obtained from IT vendors. If custom engineering is necessary (to fill in where off-the-shelf components are lacking), contracts are typically let with large commercial systems integrators. Integrators themselves often have incentives to incorporate off-the-shelf components into systems, thereby limiting the extent of new engineering. Larger mission agencies such as DOD, DOE, NASA, and NIH do some of this custom work in their own, internal engineering organizations and affiliated laboratories. In some cases, the custom engineering can be replaced by using vertically tailored products from domain specialists (for example, geographic information systems). Finally, in many government agencies, “seat management” approaches are used in order to provide federal workers with a base level of common, largely off-the-shelf services (desktop computer, office automation and other basic software, network connectivity, and support).3 These provide both a core set of “productivity” tools and a common platform for custom and vertical applications.

In any case, the collaboration to develop new agency systems involves more than the immediate integrators and suppliers, because these organizations themselves obtain components and technologies from external sources. Each of the acquisition paths noted above involves a wide range of players—including hardware and software component vendors, systems integrators, niche technology providers, vertical-solution developers, government research-program managers, government acquisition-program offices, acquisition regulators, standards organizations, and other nongovernment customers—that have diverse capabilities and interests. Most integrators maintain significant relationships with vendors in order to assure a future supply of critical technology components that can address likely needs. A mission control system developed for NASA Space Science, for example, may include off-the-shelf commercial hardware in the form of workstations, servers, and network appliances, and commercial software components such as operating systems, servers, database systems, file systems, graphical toolkits, third-party domain-specific (or “vertical”) tools, and networking components. An integrator will develop custom software components and “glue code” to provide the aggregate functionality and enable a flexible integration of components.

|

Box 4.3 Very few systems now being developed are entirely custom-made. As their dominance of the IT arena diminished, federal agencies recognized that they could no longer afford to have vendors design and build systems “from scratch.” This led to the notion of purchasing systems fabricated from commercial hardware and software—so-called commercial off-the-shelf (COTS) technology. The goal with COTS is to employ standard, widely used hardware and software products wherever possible so as to decrease costs and increase the likelihood that systems will be interoperable with other systems. The COTS strategy must grapple with a basic tension: even as government seeks through COTS to obtain a greater degree of flexibility that enables future vendor choice, vendors have an incentive to maximize the proprietary content of a system in order to increase the likelihood of future sales. Agencies thus face the dual issue of selecting an appropriate framework/architecture and managing the consequences of that commitment. In other words, once an organization has made decisions about overall system design, this constrains future decisions and limits which software products and vendor product lines will be compatible. Recent legislation and regulation have created strong incentives for acquisition program managers to aggressively incorporate off-the-shelf (OTS) components as well as to support iterative acquisition models, prototyping, product lines, and other processes. Such incentives are appropriate in an environment of rapid technological change, shifting requirements, uncertainties in the life cycle, and demanding interoperation requirements. Use of OTS technologies can improve affordability, reduce training requirements, improve dependability and interoperability, and enable the acquiring organization to “ride the curves” of performance growth (e.g., Moore’s law) over the life span of the system. 
OTS components can be from commercial sources (COTS), government sources (GOTS), or open sources (no acronym yet). On the other hand, the acquisition organization may find that it must sacrifice some control in the engineering process in order to accommodate vendor products. In particular, adoption of OTS components may force slight shifts in functionality that must be accommodated in requirements engineering and validation. In addition, there can be significant risk in verification and validation, since the acquiring organization may not have full visibility into the design or even functionality of the component. The acquiring organization also does not control the trajectory of growth for OTS components, and may indeed have little opportunity to influence vendors to accommodate special needs, given the breadth of their customer communities. Achieving a suitable balance in the adoption of OTS components can be a significant challenge, especially because the benefits of adopting OTS components may be harder to measure (though they may be greater) than the benefits of increased control. |

The integrator will also employ tools and practices in the engineering process that, while not part of the final product, are nonetheless critical to the success of the effort. In short, the development of a major system involves a large number of separate but interdependent organizations— this is the information technology supply chain.

The research manager needs to have a nuanced understanding of the respective roles, capabilities, and interests of participants in the supply chain in order to maximize impact of a mission-oriented research program. The innovation enterprises and R&D strategies in the major mission agencies such as DOD, DOE, NASA, and NIH have therefore been structured in recognition of the scale, diversity, and depth (beyond first-tier integrators and vendors) of this network of supply-chain participants in providing advanced information technologies. For example, the history and, indeed, continued existence of DARPA illustrates the recognition in DOD years ago that achieving a desired stimulus over the long term requires a broad range of relationships across this supply chain of components and technologies. DARPA has developed a number of mechanisms (and made sustained investments) to engage with entities throughout the supply chain in order to ensure that long-term technology requirements can be feasibly addressed. These mechanisms range from sponsoring the development of underlying technologies and engineering processes to the development of measurement and evaluation methodologies that permit assessment of technology candidates.

In this regard, a strong parallel exists with the actions of major IT users in the private sector who work closely with suppliers in order to ensure that future needs will be addressed. Prudent developers of transition strategies for government research programs thus generally attempt to learn from industry practices and incentives, while keeping in mind that there are significant differences between government and commercial acquisition—for example, with respect to mission, public purpose, planning horizon, organizational structure, acquisition practices, the nature and extent of acquisition oversight, and regulation.

Mission agencies have an additional interest beyond their immediate mission requirements: taking farsighted steps to realize future mission benefits and adopting broad-based approaches to achieve broad societal impact. (A familiar example is DOD’s thoughtful investment in the development and deployment of packet-switched networking technologies.4)

It could be argued that these long-term and broad impacts can be a natural consequence of a combination of factors unique to government research management:

- The technologies were developed without a specific requirement to maintain proprietary constraint on the core enabling technical concepts, which permitted broad scientific engagement with the ideas; and

- The technology approach was designed to be vendor-neutral and to address issues of heterogeneity and interoperation from the outset, enabling broad participation from the vendor community.

Although not unique to government, there were two important additional factors:

- The research plan addressed scaling issues from the outset, in order to address challenges of long system lifetimes and broad spans of deployment (potentially scaling up to millions of users within DOD alone); and

- Managers sustained a focus on long-term vision and impact, spinning off nearer-term capabilities but without compromise to that overall vision.

WILL INDUSTRY DO IT?

An assumption in government that “industry will do it” may, for broad areas of IT challenge, be incorrect, despite the rapid pace of industrial IT development.5 In industry, the return-on-investment calculations generally preclude innovations that are fundamentally long-term in character or that have broad and nonspecific impact. In the language of economics, many of these innovations are nonappropriable—the impact of the research results diffuses broadly into the technical community, and cannot successfully be confined to a single sponsoring organization. That is, the full value of the innovative ideas cannot be retained by any individual party—they create value for multiple parties. And because none of the parties can retain full value, their willingness to pay for the innovation can diminish to zero. This is characteristic of what economists more generally call public goods. Information technology innovations of this character include, for example, development of engineering practices that may lead to across-the-board improvements in reliability or foundational steps in methodology for the development of heterogeneous distributed systems.

| 5 | See, for example, the President’s Information Technology Advisory Committee (PITAC) report (PITAC, 1999, Information Technology Research: Investing in Our Future (PITAC Report to the President), February 24, available online at <http://www.itrd.gov/ac/report/>), which observed that “the IT industry expends the bulk of its resources, both financial and human, on rapidly bringing products to market.” |

A good deal of IT-related innovation yields intellectual property that can be packaged and protected; in other words, it is appropriable. But for a wide range of innovations, individual companies cannot make the case for investment because the value obtained may spread widely—including to competing companies. Scientific findings such as mathematical theorems or other laws of nature are not generally considered protectable intellectual property, and hence industry investment to develop them will not likely be justifiable purely from a competitive or return-on-investment standpoint. Some results of this kind can be retained as trade secrets, though secrecy may not be sustainable once they are employed in products and services. Examples include advances in the basic concepts of algorithm analysis, programming-language foundations, and performance estimation and measurement techniques.

Further compounding the challenge for the program manager, the technology sources for system integrators and consultant organizations are generally vendors, and less often, original innovators. Competitive advantage for a system integrator or other vendor often derives from predictability, risk management, and process, rather than from aggressive enhancement of capability. Indeed, acquisition program managers whose principal incentive is to achieve predictability of outcome rather than enhancement to overall mission capability may make overly cautious choices that do not lead to optimal long-term outcomes.

A system integrator’s interest in research-generated innovation may thus be limited, as it represents a potentially disruptive influence on that company’s way of doing business and could even undermine its competitive advantages.6 However, there are numerous collaborative relationships between basic researchers in universities and their counterparts in vendor organizations, for such reasons as providing the vendor with previews of new technology developments or better access to educational programs and professional talent.

Universities and other research laboratories actually have a natural shared interest with the mission organizations at the “top of the food chain”—it is in these organizations that unmet needs (and thus interesting research problems) are first identified and, often, best understood.

In particular, there is a natural synergy between the government mission to create public goods and the role of university (and federal laboratory) researchers in developing and disseminating ideas and having them evaluated and appreciated by colleagues. This synergy is reflected in the pattern of federal agencies engaging university researchers as agents of innovation. While there are natural processes in the supply chain that enable university innovations to flow into government systems, program managers can accelerate and enhance these processes by working at appropriate points of leverage. For example, government can support researchers in creating “prenormative” definitions for interfaces that can lead to standards for the delivery of new kinds of information services as a way to stimulate innovation.

The Department of Defense, for example, develops its most technologically aggressive concepts by engaging university and laboratory research teams. If the concepts have promise and the risks of further development and scale-up are acceptable, then the DOD will stimulate collaboration between those research teams and vendor or integrator organizations. The DOD invests in this participation in order to “buy down the risk” of commercial organizations committing resources, assimilating innovations, and developing underlying technological infrastructure in advance of concrete evidence of a market.

When university researchers do develop appropriable technologies, they can follow several possible routes to achieving impact, many of which involve moving into the marketplace. One of the most common pathways (even in difficult economic times) is the creation of start-up companies that provide vehicles for packaging and proving a technology and also for evaluating its underlying value in the marketplace. Successful start-up companies may be acquired by vendors—or, less often, grow into vendors themselves. The vendor thus acquires the packaged intellectual property, as well as the experience, validation, and contacts with early adopter customers. Alternatively, the intellectual property may be packaged and marketed directly to vendors by a university. Regardless, there are good reasons why a government (or private sector) research sponsor may promote this kind of commercialization. It can accelerate the transition of the technology into later stages of the supply chain and thus into practice, at lower cost, while reaping the knowledge and skills of leading-edge researchers.

Another potential point of leverage for a government agency is to establish an organization along the lines of an incubator/venture-capital investor that can share financial risks in identifying, developing, and demonstrating new technologies. (This idea motivated the Central Intelligence Agency [CIA] In-Q-Tel, which seeks to invest in technology areas where both a CIA need and commercial interest exist.)

DIMENSIONS OF RISK

Risk (or, more accurately, risk exposure) may be roughly defined as the product of the probability of an unsuccessful outcome and the extent of loss suffered when that outcome is experienced. Thus, risk is high either when unsuccessful outcomes are very likely and carry an appreciable loss, or when unsuccessful outcomes are less likely but carry a high consequence of loss.
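
The product form of this definition can be made concrete with a small sketch. The function name `risk_exposure` and all of the numbers below are hypothetical, chosen only to show that two quite different situations can carry the same exposure; in practice, neither factor can usually be estimated this precisely.

```python
def risk_exposure(p_failure: float, loss: float) -> float:
    """Risk exposure: probability of an unsuccessful outcome times the loss incurred."""
    if not 0.0 <= p_failure <= 1.0:
        raise ValueError("p_failure must be a probability in [0, 1]")
    return p_failure * loss


# Hypothetical illustrations (loss in arbitrary units):
likely_small = risk_exposure(0.8, 10.0)       # failure is likely, loss is modest
unlikely_large = risk_exposure(0.05, 160.0)   # failure is unlikely, loss is severe
```

Under these assumed figures the two cases yield roughly the same exposure, which is why a single "risk" label can describe programs with very different profiles.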

These meanings are distorted slightly in the vernacular of program management. A research program is often referred to as a “high-risk, high-payoff” activity when it is attempting to achieve a major breakthrough, but with uncertain likelihood of success. Long-term basic research programs are generally intended to be of that type, in this positive sense: for researchers, a “high-risk, high-payoff” program has a higher likelihood of failure but a larger impact if successful, while a more conservative “low-risk” program—focused, perhaps, on achieving incremental gains in an established technology area by following routine scientific approaches—carries a higher likelihood of lesser impact. This notion of risk is almost always approached in a qualitative manner, as it is rarely the case that probabilities of failure and consequences of success can be usefully quantified.

How can a program manager think systematically about questions related to risk when very few of the variables of interest can be quantified and when programs exhibit diverse kinds of risk? In this section, several dimensions of risk are identified, along with some examples of strategies that can be used to mitigate the risks and thereby move innovations closer to adoption. This framework enables program managers to put names to risks and strategies and to identify the risk focus at a particular stage of program execution; addressing too many risk issues at once in a program can compound overall program risk to unacceptable levels. On the other hand, sequencing risks necessarily entails some “willing suspension of disbelief” with respect to typical risk issues later on, such as scaling and integration.

An example of potentially high-risk investment by government is the development of new concepts for system protocols and architectural interfaces. The risk derives primarily from the combined difficulty of achieving the technical goals (for example, aggressive improvements in security and scaling for the Internet Protocol) and of achieving acceptance among stakeholders and impact on the mission applications. Box 4.4 illustrates how risk emphasis changes as a technology evolves.

The sections below consider several kinds of risk issues that are relevant to research-program management. They illustrate the diversity of kinds of risk issues and the need, when defining research and transition programs, to establish the particular risks to be addressed in program execution. For each risk issue, appropriate management strategies for addressing it are identified.

|

Box 4.4 An example of how risk emphasis can shift over the course of a maturing process is the evolution of the World Wide Web from its original CERN (European Organization for Nuclear Research) design. From an orthodox IT engineering standpoint, many researchers have long considered the original concept and implementation of the Web to be flawed in a number of technical respects. For example, unlike other institutional information-management systems (such as digital libraries), assets are mutable and identified by location rather than by unique identifiers (i.e., links can break). Also, HTML Web pages lack sufficient structure to enable effective structural indexing. And issues of reliability, replication, security, and naming, for example, were not fully addressed at the outset. On the other hand, if the designers at CERN had been forced to address all these issues early on, very likely we would have no Web at all. Instead, the initial imperfect Web showed itself to embody a robust and compelling concept that was successfully bootstrapped into widespread use, even though substantial evolution and development of both the concept and its realization still lay ahead. This kind of history is an example of iterative development with sequenced consideration of key risk issues. A detailed historical analysis would reveal which participants in the innovation supply chain addressed which aspects and when. From the standpoint of program management, all details of the trajectory of an emerging technology from concept to impact cannot be predicted or determined in advance; the environment is just too complex and unpredictable. However, a deliberate but adaptive strategy for identification and sequencing of risk issues can accelerate the process significantly.
In the case of the Web, examples of such activities include development of the Mosaic browser at the University of Illinois’s National Center for Supercomputing Applications and the decision by CERN to make the Web technology freely available.1 This bootstrapping is a significant program-management issue. The early acceptance of the imperfect Web meant that many stakeholders were able to participate in its evolution. This raised the overall cost of the evolution process, but it also distributed that cost and exploited early market acceptance. Conversely, overcommitment to particular requirements early in a process may appear to offer “lower risk” than an iterative approach, but in fact it may raise risk because designers are considering too many issues simultaneously and so do not have appropriate intermediate evaluation points. Indeed, in the case of the Web, the locus of leadership and management shifted considerably over time, ultimately diffusing into a community process. An additional lesson is that the imperfect Web evolved to do things that a perfect-from-the-outset Web might never have addressed. The imperfect Web did not support transactions (it had insufficient network privacy to carry credit cards or personal data) but was evolved to support this capability. |

Evaluation Risk

As noted above, evaluation of research progress and ultimate potential for impact can be problematic in early-stage or exploratory-research programs. Indeed, some organizations may avoid long-term and exploratory activity altogether because they cannot adequately justify the investment—they cannot provide externally verifiable measures of the potential for ultimate impact downstream. This is a perennial problem, as there are a number of good reasons why a quantitative basis for research-program management continues to be elusive. Most obviously, many of the variables that matter are difficult to measure, and the lag from program conceptualization to impact in deployed systems can be very long, sometimes exceeding a decade.7 Even when considerable mission impact is achieved, traceability from initial development of concept to mission capability may be elusive.

In a number of critical technical areas, in which the measurement challenges are particularly acute, progress may be thwarted as a consequence. These areas include software reliability, computer security, the effectiveness of autonomous systems, and the usability of human interfaces. In large-scale engineering projects, there can be considerable evaluation risk with respect to life-cycle issues such as interoperation, evolvability, and reuse. In digital-government programs in particular, evaluation risk is present in the definition and development of infrastructure for major information and transaction portals, in the use of advanced collaboration technologies to facilitate intragovernmental processes, and in the development of software technologies to support and evolve highly dependable systems.

More generally, what kinds of approaches can be adopted to enable progress despite evaluation risk? Strategies include these:

- Building measurement into research. Delaying research until measures are developed may be an inappropriate management response, because the same understanding that yields progress often also yields measures. Indeed, one management strategy often used is to challenge researchers to identify appropriate measures of their own progress as part of the research effort, and to apply those measures on an ongoing basis to their own and other work. This can include, of course, retrospective analysis of similar projects in order to identify correlates with various aspects of success.

- Benchmarking. In the absence of direct measures, especially in problem spaces of unknown dimensionality, benchmark data sets are useful surrogates. This approach has been successfully used in areas such as high-performance computing, speech recognition, image understanding, and text management. Well-designed benchmark data sets can help reveal the particular elements of capability within a set of “competing” technologies. Different approaches to a problem—speech recognition, for example—may excel in different respects, such as the handling of ambient noise, use of free microphones, speaker independence, extent of system training, and limits on vocabulary and grammatical complexity. This identification assists potential downstream adopters because it enables them to identify relevant evaluation criteria better.

- Testbed. Many research programs focused on achieving realism or scale-up along various dimensions create testbed facilities for conducting larger-scale experiments. “Scale,” here, can refer, for example, to the size of a database, the number and skills of users, the extent of distribution or interconnectivity, robustness, performance, and other factors. Further, by creating a facility shared by multiple research efforts, costs can also be shared and experimental results more easily compared and evaluated. In addition, potential users can participate in the testbed definition and evaluation in order to ensure realism. This approach is widely used in major programs (e.g., DARPA Gigabit Networking and DOD Joint Warrior Interoperability Demonstration programs). The principal risk associated with the approach is defining the testbed project in a way that achieves realism while enabling research issues to be addressed effectively.

- Gentle-slope adoptability. One risk-mitigation approach is to design programs that offer a “gentle slope” with regard to return on investment—that is, increments of effort in adopting a technology yield increments of impact. This approach (named by Michael Dertouzos) can enable researchers working with early adopters both to make midcourse corrections and to collaborate with the adopters in defining evaluation criteria.8 The resulting “early validation” can help reduce evaluation risk even when objective measures are unavailable.

Solution-Concept Risk

Another risk issue faced by managers of a problem-directed research program is the extent to which the program should commit to particular solution approaches. Such commitments could include choices for system architecture (e.g., business-rule framework in a three-level architecture), measurement frameworks, technical approaches (e.g., neural networks versus other decision frameworks), or infrastructural components (e.g., operating-system choice). Solution-concept risk is an issue, for example, in areas such as improvement of information security, text-search capability, capability to integrate databases, or support for distributed teamwork in some operational domain. Underconstraint of solution approach can lead to solutions that are incompatible with the target environment. Overconstraint, on the other hand, can exclude development of innovative out-of-the-box approaches—such as RAID storage architecture to complement development of larger and denser disks—that may offer superior capability. When early projects were initiated in parallel computing, for example, a diversity of architectural approaches was considered, yielding a range of options that allow different classes of problems to be tackled today.

One example of solution-concept risk in the domain of digital government is the development of technologies to model and remediate unwanted linking of databases (e.g., to prevent revealing identities of medical-research subjects). Solution approaches could include, for example, development of large-scale meta-models of released information, data-dithering techniques, and creation of surrogates for zip codes as geographic identifiers. Premature commitment to any one of these could increase risk. Computer-security research faces a similar challenge—it can be easy for a program to overcommit to particular threat models or security architectures and thereby be deflected from critical technical areas and a diverse portfolio.

Strategies for addressing solution-concept risk include these:

- Mixed strategy. This approach for exploratory programs is to adopt a diverse portfolio of technical approaches. While it is an obvious response, how to manage it for success is not so obvious, primarily because of evaluation risk. That is, a more constrained strategy may reduce within-program evaluation risk but increase the overall evaluation risk with respect to the intended impact.

- Iceberg model. Diversity enables individual efforts to accept greater risk while not substantially increasing overall program risk. If there is an expectation that not all research efforts will yield impact—if local failures can be tolerated—then greater risks can be taken, leading potentially to greater levels of innovation. A supporting organizational culture is prerequisite to success in adopting this model. DARPA and NSF, for example, have followed this model in certain critical technical areas, with considerable success. These agencies understand that too-aggressive management at this stage could inhibit the exploratory nature of this activity and prematurely eliminate promising approaches. This approach may be adopted by researchers as well as funding agencies: a principal investigator may consider many possible approaches to a problem before selecting a subset for more focused effort. This approach can be applied iteratively, following a “progressive deepening” strategy.

- Avoid overmanagement. Project managers may be tempted to constrain solution concepts prematurely in order to obtain a more linear program execution model with predictable milestones. This optimization in favor of near-term predictability may actually increase overall solution concept risk because it compresses or eliminates the exploratory phases critical to the invention or identification of new solution concepts. Unless a solution concept is clearly understood in advance, program managers need to balance visibility and predictability (and hence measurability) with the fostering of exploratory and creative activity.
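
The portfolio reasoning behind the mixed strategy and the iceberg model can be illustrated with a rough calculation. The sketch below assumes, purely for illustration, that efforts succeed or fail independently and that their success probabilities are known; the function name and the 20 percent figure are hypothetical, and real efforts are seldom independent or quantifiable in this way.

```python
from math import prod


def p_at_least_one_success(success_probs):
    """Probability that at least one effort succeeds, assuming independent efforts."""
    return 1.0 - prod(1.0 - p for p in success_probs)


# Hypothetical portfolio: five high-risk efforts, each with a 20% chance of success.
portfolio = [0.2] * 5
single_effort = p_at_least_one_success([0.2])
overall = p_at_least_one_success(portfolio)
```

Under these assumptions, each individual effort remains likely to fail, yet the portfolio as a whole is more likely than not to produce at least one success, which is the sense in which local failures can be tolerated.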

Problem-Concept Risk

Often the perceived gaps in capability are not the actual gaps. An organization may identify as a technology challenge what is in fact a challenge in process, organizational structure, or technological-legacy management. That is, risk is associated with the correct identification of requirements to be addressed (validation). For example, when designing a system to facilitate information sharing in an organization, the real challenge may in fact derive from organizational culture rather than from technological impediment. Or, an effective solution may need to address both organizational-culture factors and technological factors.

Sometimes the difficulty is not in requirements elicitation but rather in fluidity of requirements. Consider, for example, the design of command-and-control systems for use in crisis management or military operations. There are important interactions between the capabilities of available communication and collaboration technologies and the processes and physical organization of command posts. If aggressive innovation is sought in command-and-control capability, constraining the technical focus to address communications capabilities within existing organizational and physical structures may fail to deliver sufficiently innovative results and may indeed reinforce older, less-effective practices. On the other hand, an overly broad focus may not yield meaningful results. Identifying the right scope of research focus is thus pivotal in effective program design.

Examples of areas of potential problem-concept risk in digital-government programs include new command-and-control capabilities for crisis management, statistical-analysis support for nonexpert users of government statistical data, and database interoperation support for rapidly linking geographical databases to support crisis response.

Strategies for addressing problem-concept risk include these:

- Wizard of Oz. Even when a research challenge is appropriately scoped, there can be significant difficulties in designing a program that enables rapid and graceful coevolution of technology and its context of use. In particular, naïve iterative approaches can yield, at best, long validation cycles and, at worst, divergence from goals. The so-called Wizard of Oz approach involves the creation of system mock-ups that do not necessarily embody real capability but that can nonetheless enable potential adopters to experiment with concepts of operation. The early validation received from experiences with the mock-ups can reduce problem-concept risk and focus technology development efforts. The mock-ups also help researchers communicate with potential adopters by providing a concrete instantiation of concepts; where appropriate, they may also serve as surrogates for formal specification of requirements or technological capabilities. The Wizard of Oz tactic thus also addresses risks related to evaluation, integration, and usability.

- Double Helix. As potential users gain experience with new capabilities, the problem concept may shift in response to that experience. For example, the use of collaborative-filtering technologies has had significant effects on concepts of operation for electronic-commerce sites. In the Double Helix approach, a coevolution of operational concepts and technology concepts occurs. Close collaboration of technical researchers and subject-matter experts may, after several iterations, yield simultaneous innovation in both operational concept and underlying technology. Also, validation can be enhanced through ongoing empirical assessment of such coevolution. This approach has been applied with some success in the DARPA Command Post of the Future (CPOF) program, particularly in identifying and evaluating new approaches to visualization and collaboration in midechelon military command posts.

Integration and Adoption Risk

Can the technologies developed within a program be effectively integrated into an intended systems or organizational context? Answering this question requires consideration of issues ranging from interoperation to usability. For example, wearable computer systems used in systems-maintenance applications need to be integrated with evolving practices and systems for documentation management and maintenance reporting. This could be called “business process adaptation risk.” While certain adoption risks must be addressed early in a research effort, addressing too many of them too early can multiply risk and impede accomplishment.

Integration risk is a significant factor for many (and perhaps most) digital-government research projects. Many new capabilities must be integrated with evolving commercial components as well as with custom-integrated government IT solutions. In other words, both the target environment and the base technological infrastructure are rapidly moving targets.

Many of the strategies mentioned above are used to address adoption risks. In addition, the following specific strategies apply:

- Pipeline. The pipeline model, often used in long-lived research programs focused on strategic challenges, embraces a portfolio of research efforts that span a range of levels of technological maturity. Achieving that broad span may entail working with multiple stages of the innovation supply chain—basic researchers, exploratory-development teams, companies developing new products, and companies integrating systems, among others. This approach was used with success in the DARPA Strategic Computing program in the 1980s. The program followed a “pyramid” model that encompassed simultaneous work on generic enabling technologies, multipurpose applications infrastructure, and aggressive experimental applications.

- Producer-consumer connection. One of the features of the multiagency High Performance Computing and Communications program (HPCC) of the 1990s was the direct connection it established between computational scientists and engineers and the developers of advanced high-performance computing and communications systems. The connection enabled system developers to get early validation and feedback from users (see the subsection “Evaluation Risk,” above), and it enabled the users to gain experience and assess scalability issues for emerging platforms. The program accomplished this by seeding the market—“buying down risk” on both sides, by assisting users in acquiring aggressive systems and assisting developers in maturing concepts so that they could sustain external evaluation. This is analogous to, but not the same as, the alignment of end users and innovators in mission programs, including NSF’s Digital Government program. The distinction is that the producer-consumer model is focused on building links in the supply chain, while the NSF Digital Government program emphasizes directly connecting the producer and consumer. Producer-consumer connection issues also arise when organizations seek to rapidly acquire technology to support ad hoc needs, as occurs in crisis response (see Box 4.5).

|

Box 4.5 How can the introduction and use of new technologies in the immediate response to a crisis be managed so as to maximize their availability and better use the talents of emergency staff? One idea that emerged from the committee’s consideration of crisis management is to attach a clearinghouse function to emergency operations centers that are generally established to respond to crisis situations. A clearinghouse would help systematize the introduction of technology into crisis response activities (an effort that is frequently haphazard today) and help promote effective use of information technology by technical specialists and emergency managers during response to and recovery from disasters. The clearinghouse would provide a single point of contact for easy exchange of information among technology companies, researchers, emergency managers, and practitioners and provide a check-in and check-out point for all technology vendors and researchers at the incident scene. It would also help direct vendors, researchers, and observers away from overburdened local government officials and toward the specific areas of need. The clearinghouse would allow for a more coherent and methodical investigation of all disaster impacts, the gathering of “perishable” data, and the tracking of all field investigations. It would help ensure that all areas had been thoroughly explored for resource needs. Further, it would facilitate documentation of findings and observations and provide all investigators with updated information on damage, through daily briefings and reports. The clearinghouse should be as close to the affected area as possible, while still providing the necessary space and support services. It should be located so that it affords access to the emergency operations center, although physical proximity may not be as important as electronic connectivity. |

- Common architecture. Interoperation risk can be reduced considerably by adopting common architectural frameworks and interface specifications. When the commonalities are well chosen, system- or component-specific interoperation challenges are replaced by framework compliance. Widely adopted frameworks—for example, mainstream commercial application programming interfaces (APIs)—can provide significant interoperation benefit in mission systems. Conversely, poor choices in frameworks can result in exclusion of mainstream components, high costs of integration and, when framework technical characteristics have not been fully evaluated, high risks both at the component and system levels. Because of network externalities, frameworks must be evaluated on the basis of technical criteria, market acceptance, and potential trajectory.9 The point is that the system architecture can itself be a source of risk, which is appropriate when architectural exploration is a program focus.

- Framework building. Introducing a new protocol or architectural framework shifts emphasis in a research program from particular realizations of capability to the service interface through which it is delivered. This approach was used in the early days of the Arpanet in order to ensure that issues of scaling and heterogeneity were addressed from the outset. It is a risky approach, however, because protocols, interfaces, and other commonalities only have impact if they are widely adopted. In order to drive down the risks of adoption, specific tactics are used. For example, both the Internet Engineering Task Force and the World Wide Web Consortium generally require reference implementations to exist before a commonality can be considered for potential adoption. Introduction of service interfaces into existing prototype systems can be a successful strategy to reduce the costs and risks of entry for new participants. This was, for example, the goal of the Defense Modeling and Simulation Office (DMSO) when it introduced the High Level Architecture (HLA) protocol in the early days of the Defense Simulation program. The risks of this effort were considered acceptable because existing prototypes provided evidence of value and capability, enabling a more direct focus on interoperation and scaling up. In the early days of the International Organization for Standardization’s Open Systems Interconnection (OSI) network architecture effort, by contrast, uptake was slowed by the absence of compelling evidence of feasibility and value.

- Scaffolding. Interoperation risk at the system level can be addressed through the creation of scaffolded components. In scaffolding, a common technique in component-oriented system design, missing components are “stubbed out” with relatively trivial placeholder components that have limited functionality. This enables partial early validation of compliance with internal protocols and interfaces (APIs).
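
As an illustration of the scaffolding technique, the following minimal sketch stubs out a missing component behind a shared interface. All names here (`RecordStore`, `StubRecordStore`, `summarize`) are hypothetical and stand in for whatever internal interfaces a real system would define.

```python
from abc import ABC, abstractmethod


class RecordStore(ABC):
    """Hypothetical internal interface that the real component will eventually implement."""

    @abstractmethod
    def lookup(self, record_id: str) -> dict:
        ...


class StubRecordStore(RecordStore):
    """Scaffold: a trivial placeholder with limited functionality."""

    def lookup(self, record_id: str) -> dict:
        # Return a fixed, well-formed record so that callers can be exercised.
        return {"id": record_id, "status": "unknown"}


def summarize(store: RecordStore, record_id: str) -> str:
    """A component under development that depends only on the interface."""
    record = store.lookup(record_id)
    return f"{record['id']}: {record['status']}"


# The caller can be validated against the interface before the real store exists.
summary = summarize(StubRecordStore(), "A-17")
```

Because `summarize` depends only on the interface, the stub can later be replaced by the real component without changing the caller, which is what permits the partial early validation described above.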

Moore’s Law Risk

Several years of effort may be required to develop prototypes and evaluation systems that embody a new IT concept. Few IT implementations are insensitive to the performance of underlying technologies. Improvements in processor speed, network capacity, storage capacity, manufacturability and economies of scale, and infrastructural reliability can enable significant new approaches, for example, to capability, architecture, and usability. Researchers can anticipate the improvements likely to occur over the lifetime of a research program by extrapolating infrastructural capability according to Moore’s law and its analogs. If the matured concept is deployed 3 years after program initiation, for instance, the conventionally deployed platforms at that future time could be more than four times as powerful as those generally used when the research program was initiated.
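
The extrapolation can be sketched as a simple exponential projection. The 18-month doubling period used as the default below is an assumption (one commonly quoted form of Moore’s law), not a figure given in the text, and the function name is hypothetical.

```python
def projected_capability_factor(years: float, doubling_period_years: float = 1.5) -> float:
    """Growth factor after `years`, assuming capability doubles every `doubling_period_years`."""
    if doubling_period_years <= 0:
        raise ValueError("doubling_period_years must be positive")
    return 2.0 ** (years / doubling_period_years)


# Three years at an assumed 18-month doubling period gives two doublings.
factor_3_years = projected_capability_factor(3.0)
```

With the assumed 18-month period, three years corresponds to two doublings, or a factor of four; a shorter doubling period would give the "more than four times" mentioned above.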

To enable researchers to anticipate these changes, research-program managers sometimes employ a tactic of “living in the future,” in which unusually high-performance platforms are deployed to researchers in order to enable them to gain experience with the targeted future platforms. This is sometimes also called “leading the receiver,” because of the analogy to a football quarterback throwing the ball to the expected location of the wide receiver, even though the receiver may be far from that location when the throw is initiated. The tactic has been applied successfully in programs for computational science, information visualization, and applications for high-performance networking. In digital government, this approach could be used, for example, to experiment with the use by census takers of low-cost wireless handheld devices (now emerging in the marketplace), with speech interfaces for use in crisis management, or with very-high-performance network links available in rapidly deployed field settings for crisis management.

Reliability and Usability Risks

Many IT applications require high levels of assurance of system availability, of data confidentiality and integrity, of design compliance to laws or regulations, or of correctness of design and implementation with respect to safety or functional properties. This is particularly true for mission-specific government applications, and across a wide spectrum of government sectors ranging from health care, social security, and statistical data to aerospace, defense, and energy. The experience of highly reliable systems development is that users must sacrifice capability, flexibility, interoperation, and performance in order to achieve high levels of reliability—which includes fault tolerance, security, robustness in the face of component failure, and many other attributes. With improvements in reliability practices, the extent of this sacrifice can be reduced. Conversely, requirements for reliability can force program managers to scale back ambitions for capability, performance, and even usability.

Usability poses similar challenges. All Internet users have had the frustrating experience of attempting to extract information or conduct transactions through poorly designed Web sites. In applications in which government information and transactions are made available to citizens, the need to provide broad access to a widely diverse population creates enormous challenges in achieving usable designs. Usability challenges are also faced in systems designed for internal use—for example, crisis management applications intended for use by professionals who may be in a state of stress when interacting with the system.

When designing a research program that is addressing a particular capability challenge, an important early decision is when and how to take on usability issues. There is growing evidence, for example, that the overall architecture of a system can influence the ability to create usable human interfaces.10 In addition, poor usability may deter potential adopters even when the underlying technology has considerable merit.

Early attention to these issues may not be enough, however. Certain product attributes, especially those related to reliability and usability, are multifaceted issues that must be considered at every stage of the engineering process. Reliability in particular must be addressed during system conceptualization, design, development, evaluation, integration, and evolution.

Planning Risks

This section on “Dimensions of Risk” has illustrated and partially enumerated the dimensions of risk that might be addressed in a mission-focused research program and some of the strategies and tactics conventionally used to address them. Of course, these and other kinds of risks may be present to different degrees, depending on the particular research challenges being addressed. Regardless, it is clear that successful program designs incorporate, if only tacitly, an identification of the critical risk elements, indicating which ones are the primary drivers and which others can be safely deferred for later consideration. A full identification of risks does not imply that they should all be addressed in the scope of a program—rather, it can help a manager understand, and later communicate, how transition steps might be taken by others once results start to emerge from the program.

In addition to the identification of risks, the sequencing of the risks to be considered can itself be a risk issue: an overambitious program design that takes on too many risk dimensions at once effectively multiplies overall program risk and lessens the chance of overall success. This challenge of sequencing the risks may best be addressed by drawing on experience in research, engineering, evaluation, and operational settings, where the full range of risk issues can be best identified. Questions include how the risk dimensions interact, and which risk issues can be addressed in parallel and which must be addressed sequentially.
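The compounding effect of taking on several risk dimensions at once can be illustrated with simple arithmetic. The sketch below is only an illustration under stated assumptions: it treats each risk dimension as an independent hurdle with its own chance of being resolved, and the 0.8 figure and the function name `overall_success` are hypothetical, not values or terms from the committee's analysis. Real risk dimensions interact, so independence is at best a rough approximation.

```python
from math import prod


def overall_success(step_probabilities):
    """Chance that every risk dimension is resolved.

    Assumes the dimensions are independent, so the individual
    chances of success simply multiply.
    """
    return prod(step_probabilities)


# Hypothetical chance of resolving any single risk dimension.
P_EACH = 0.8

# Compare programs that take on 1, 3, or 5 risk dimensions at once.
for n_dimensions in (1, 3, 5):
    chance = overall_success([P_EACH] * n_dimensions)
    print(f"{n_dimensions} dimension(s) at once: {chance:.0%} chance of overall success")
```

Under these assumptions, a program attempting five dimensions at once succeeds only about a third of the time even though each dimension on its own looks favorable, which is one way to see why deferring some risks to later stages can raise the odds for the program as a whole.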

SUMMARY

While there can be no recipes for success in program management, this chapter suggests some models and principles that may be usefully applied by a program manager in seeking innovation in government applications. Most importantly, a program manager may benefit from understanding the challenge of achieving a particular innovation in the framework of the two principal models identified in this chapter—the supply chain model and the risk-identification model. This understanding may be developed and applied in a process that includes, for example, steps such as the following:

- Identify the desired innovation and the stakeholders in successfully achieving it.

- Identify the horizon (time available to achieve the operational impact) and the overall risk tolerance for the effort.

- Map out the full set of participants in the supply chain that links operational end users with innovators. This includes, for example, understanding the readiness of the potential participants and the natural opportunities for collaboration.

- Identify the principal risk issues at each stage and consider appropriate strategies for addressing those risks.

- Identify critical points of leverage in the supply chain. Understand the timing considerations and other factors regarding the opportunities so identified.

- Take action according to the understanding gained in the previous steps in order to stimulate appropriate participants in the supply chain to achieve the innovation goals. Example actions: Invest to stimulate exploration or buy down risk, stimulate collaboration, and facilitate new standards.