3

Factors in Emergence

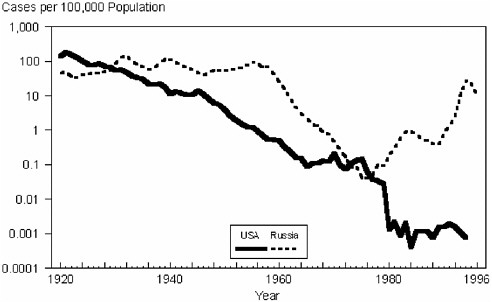

Six factors in the emergence of infectious diseases were elucidated in a 1992 Institute of Medicine (IOM) report, Emerging Infections: Microbial Threats to Health in the United States. A decade later, our understanding of the factors in emergence has been substantially influenced by a broader acceptance of the global nature of microbial threats. As a result, this report expands the original list, identifying thirteen factors in emergence (see Box 3-1). These thirteen factors are reviewed in turn in this chapter. The chapter ends with a case example—influenza—illustrating the interaction among the factors in the emergence of an infectious disease.

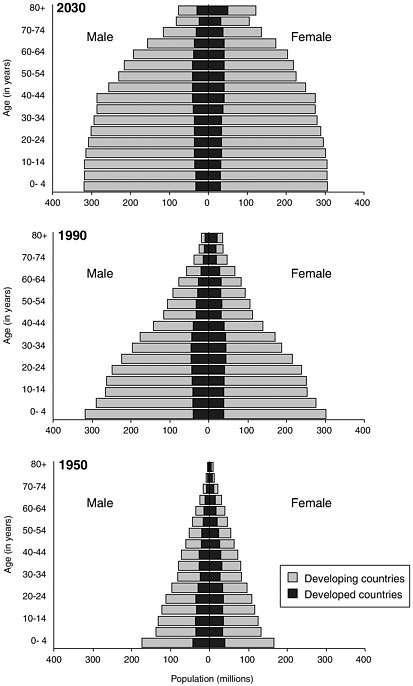

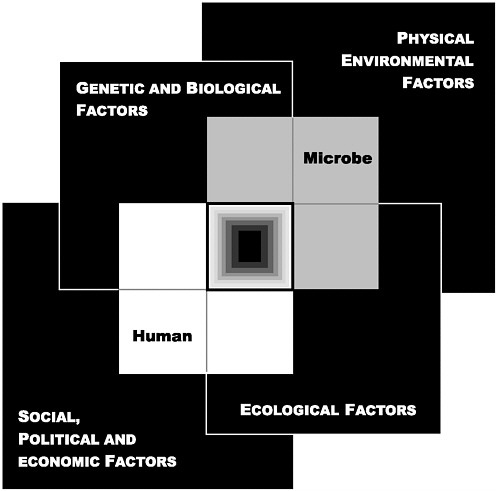

Future scientific discoveries and an increased understanding of the complexity of the emergence of infectious diseases will no doubt add to the list of factors identified in this report. In this light, the committee developed a model for conceptualizing how the factors in emergence converge to impact on the human–microbe interaction and result in infectious disease (see Figure 3-1). This model organizes the various factors into four broad domains: (1) genetic and biological factors; (2) physical environmental factors; (3) ecological factors; and (4) social, political, and economic factors. As we examine the individual factors, envisioning each as belonging to one or more of these four domains may simplify the understanding of the complex dynamics of emergence.

MICROBIAL ADAPTATION AND CHANGE

Microbes live on us and within us and inhabit virtually every available ecological niche of the external environment, and they will expand into new

|

BOX 3-1 Factors in Emergence 1992

2003

|

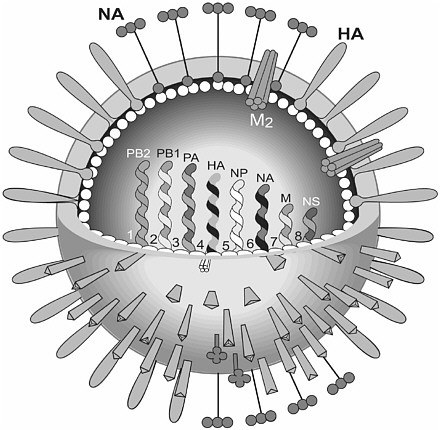

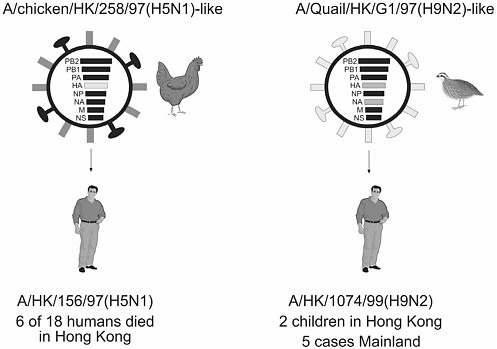

niches that occur as we continue to alter the environment and extend our contact with the microbial world. Most of the microbes that live on or inside humans or exist in the environment do not cause disease in humans (see Box 3-2). These microbes may appear to be unimportant. However, they are often crucial to the human ecosystem. Moreover, microbes that have heretofore not affected humans directly may still represent a potent threat. Microbes that are pathogenic to the animals and plants on which we depend for survival, for example, are an indirect threat to human health. Other microbes live in apparent harmony with animals but can be pathogenic for humans, as evidenced by the number of emerging zoonotic diseases that are transmitted to humans from animals. Microbes are also adept at adaptation and change under selective pressures for survival and replication, including the use of antimicrobials by humans. Microbial adaptation and change continually challenge our responses to disease control and prevention. For example, the influenza virus is renowned for its ability to continually evolve so that new strains emerge each year, giving rise to

FIGURE 3-1 The Convergence Model. At the center of the model is a box representing the convergence of factors leading to the emergence of an infectious disease. The interior of the box is a gradient flowing from white to black; the white outer edges represent what is known about the factors in emergence, and the black center represents the unknown (similar to the theoretical construct of the “black box” with its unknown constituents and means of operation). Interlocking with the center box are the two focal players in a microbial threat to health—the human and the microbe. The microbe–host interaction is influenced by the interlocking domains of the determinants of the emergence of infection: genetic and biological factors; physical environmental factors; ecological factors; and social, political, and economic factors.

annual epidemics and necessitating the ongoing development of new influenza vaccine strains.

When the “germ theory” of disease was born in the late nineteenth century, Robert Koch and his contemporaries were convinced that diseases were caused by invariant, monomorphic microbial species. Early microbiologists dismissed the variants seen in petri dishes as mere contaminants, foreign entities that had floated into the culture medium from the atmosphere. Now, of course, the inherently variable nature of these early microbial species is well known. Microbes have enormous evolutionary potential and are continually undergoing genetic changes that allow them to bypass the human immune system, infect human cells, and spread disease. They may also traverse an alternative pathway that is a symbiotic accommodation to their hosts (see Box 3-2).

Numerous microbes have developed mechanisms to exchange or incorporate new genetic material into their genomes; even unrelated species can exchange virtually any stretch of DNA or RNA. Genomic sequencing of pathogens made possible by technological advances shows that horizontal movement, or lateral transfer, of DNA is common and may be responsible for the emergence of many new microbial species. Lateral transfer can involve the exchange of virulence genes (genes that confer pathogenicity) and/or other genes required for adapting to a particular host or environment. Indeed, the exchange of virulence genes is so pervasive among bacterial pathogens that species-specific chromosome regions containing virulence genes have inspired their own name—“pathogenicity islands” (Ochman and Moran, 2001; Groisman and Ochman, 1996; Hacker et al., 1997; Hacker and Kaper, 2000). Some pathogenicity islands encompass very large genetic regions, as many as 100 kilobases long. The transfer of just a single pathogenicity island in E. coli is sufficient to convert a benign strain into a pathogenic one (McDaniel and Kaper, 1997).

Pathogens have devised other means of adapting rapidly to new circumstances in their environment. RNA viruses, and retroviruses in particular, can mutate at very high rates, allowing them to adapt rapidly to changes in their external environment, including the presence of therapeutic drugs. Because microbes reproduce so quickly (as often as every 10 minutes), even very rare mutations build up rapidly in viral and bacterial populations. Many pathogenic bacteria have short runs of identical bases (“repeats”) in their DNA; very minor changes in these repeats occur commonly and result in changes in gene expression. Moreover, many bacteria and viruses can sense changes in the external environment, and depending on what they sense, their genes can enable virtually instant changes in the regulation of certain sets of other genes, thus allowing the microbe to adapt to the new environment.
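The arithmetic behind the generation-time argument can be made concrete. The following is a minimal sketch in Python, assuming idealized, unrestricted doubling every 10 minutes and a hypothetical probability of one in a billion that a given division produces a particular mutation. Neither that probability nor the function names come from this report, and real populations are limited by nutrients and host defenses.

```python
# Illustrative arithmetic only: idealized doubling with no nutrient limits or cell death.
# MUTATION_PROB is a hypothetical per-division probability, not a value from the report.

GENERATION_MINUTES = 10   # fastest doubling time cited in the text
MUTATION_PROB = 1e-9      # assumed chance that one division yields a given mutation


def generations_elapsed(hours: float) -> int:
    """Number of complete doublings in the given time."""
    return int(hours * 60 // GENERATION_MINUTES)


def population_size(hours: float, start: int = 1) -> int:
    """Cells present after unrestricted doubling from `start` cells."""
    return start * 2 ** generations_elapsed(hours)


def expected_new_mutants(hours: float, start: int = 1) -> float:
    """Expected number of divisions that produce the mutation.

    Growing from `start` cells to N cells by doubling takes N - start divisions.
    """
    divisions = population_size(hours, start) - start
    return divisions * MUTATION_PROB


if __name__ == "__main__":
    for hours in (1, 3, 5, 6):
        print(hours, generations_elapsed(hours),
              population_size(hours), expected_new_mutants(hours))
```

Under these assumptions a single cell gives rise to roughly a billion descendants within five hours (30 doublings), at which point about one carrier of a one-in-a-billion mutation is expected.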

The more we learn about microbial genetics, structure, and function, the more we marvel at the sophistication of the survival strategies of microbes. Their mechanisms of survival are many and varied, and specific pathogens are generally tailored to flourish in particular niches. Many viruses and bacteria use our own cellular receptors to attach to and enter human cells; others utilize various human proteins for their own essential needs. Microbes use several means to defend themselves from being disabled or destroyed by the human immune system, including the rapid evolution of new antigenic variants, the masking of crucial surface antigens, inhibition of the immune system, and escape from the immune system by “hiding” inside human cells. Some microbes coat their surfaces with mimics of human tissue to prevent recognition by their human host as “nonself.” As a result, the human immune response is not activated, and the microbe is ignored and left to survive and reproduce at will. Some microbes have evolved mechanisms to downregulate the human innate immune system, which would otherwise serve as the human body’s first line of defense. Others stimulate an immune response that is injurious to the human host; for example, a sustained anti-self response may be triggered by viral or bacterial antigens that are molecular mimics of human antigens, leading to chronic inflammation. Other strategies for survival include the ability to cause latent infections that can reactivate years later at a time when the host’s immune responses are blunted. Clearly, pathogens are extraordinarily adept (and successful) in carrying out their game of survival of the fittest.

The development of preventive vaccines and antimicrobial therapies is among the greatest achievements of modern medicine. Unfortunately, the tremendous evolutionary potential of microbes makes them adept at developing resistance to even the most potent therapies and complicates attempts to create effective vaccines. In some cases, the antimicrobial drug target on the microbe mutates in such a way that binding of the antiviral or antibiotic no longer inhibits the virus or bacterium. Rapid mutation hampers vaccines as well: one of the major obstacles to the development of an effective vaccine against HIV is the very rapid antigenic change that the viral surface proteins undergo regularly. In fact, the mutation rate of HIV is so high that almost every retroviral particle is genetically different from every other particle by at least one nucleotide substitution. In other cases, bacteria have evolved enzymes that modify or destroy the antibiotic before it can reach its target inside the bacterium, or they “pump it back out” before it can do any damage to the microbe. Many genes for resistance can be transferred readily among different bacterial species; resistance can easily spread through multiple populations of species that occupy the same host environment.

Acquisition of genes for resistance is advantageous for the microbe only when it is under attack by therapeutics. The adapted microbe may be slightly less fit in the absence of antimicrobial therapy, and thus the organism may slowly revert to a sensitive state when therapy is withdrawn. Thus, the frequency of antimicrobial use is key; less use results in less resistance, while more use leads to more resistance. Unfortunately, antibiotics are frequently used when they are not truly needed (see the discussion of inappropriate use of antimicrobials in Chapter 4).

|

BOX 3-2 The Microbiome

Medical science is imbued with the Manichaean view of the microbe–human host relationship: “we good; they evil.” Indeed, the ascription of microbes to pathology has pervaded the teaching of biomedical science for over a century and consequently has left us with certain blind spots in our biological perspective of the pathogen–human host relationship.

Obviously, microbes do have a knack for making us ill, killing us, and even recycling our remains to the geosphere. Nevertheless, in the long run, microbes have a shared interest in our survival. After all, if a pathogen does too much damage to its host, it will kill off not only the host but itself as well. “Domesticating” the host is a much better long-term strategy, and thus natural selection tends to favor less virulent pathogens that do not cause quite so much harm. Most successful parasites likely travel a middle path with regard to the amount of damage they do to their host; they need to be aggressive enough to enter the body surfaces and toxic enough to counter their host’s defenses, but once established they also do themselves (and their hosts) well by moderating their virulence.

A better understanding of the host–pathogen relationship might be achieved by thinking of the host as a superorganism, or “microbiome,” with the host’s genome and those of all of the host’s indigenous microbes yoked into a chimera of sorts (Lederberg, 2000; Hooper and Gordon, 2001). The microbiome refers to the small biotic community that defines each of us as individuals, as well as the collective set of genomes that inhabit our skin, gut lumen, mucosal surfaces, and other body spaces. For the most part, the microbiome is a poorly catalogued ensemble, of which the majority of entries have yet to be cultivated and characterized, let alone understood with regard to their pathogenicity. Indeed, from a microbiome perspective, the mitochondria, which provide the oxidative metabolism machinery for every eukaryotic cell, from yeast to protozoa to multicellular organisms, can be regarded as the most successful of all human microbes. Mitochondria derive from an ancient lineage within the proteobacteria (Gray et al., 1999) and illustrate just how far the genomic collaboration between a host and a member of its indigenous microbial community can evolve.

Until recently, infectious disease research has given sparse attention to how microbes have evolved adaptations for sustaining themselves as chronic inhabitants or “domesticators” of their human hosts. The evolutionary rate of large, complex multicellulars such as ourselves is, for the most part, simply too slow to evolve their own resistance and keep pace with the rapid evolution of microbes. A year in microbial history matches all of primate, perhaps mammalian, evolution. Not only do microbes evolve much more quickly than humans, but their enormous evolutionary potential is further enhanced by their sheer numbers as well as their many ingenious mechanisms of gene exchange (e.g., conjugation and plasmid interchange).

Microbes can go beyond inhabiting our body space to completely set up genetic shop. Retroviruses, for example, are unable to replicate until they have become integrated into the host DNA; thereafter, their replication involves simply the fairly standard transcription of host chromosomal DNA into RNA copies. Indeed, it appears that some of the so-called HERVs (human endogenous retroviruses), with which the human genome is so heavily populated, have evolved so far as to participate in the physiology of our placenta and in our gustatory behavior. We have no idea what pathways HERVs have used to reach their target, nor can we predict the long-term consequences of their further evolution. But experience has shown we have every reason to expect that our most notorious retrovirus, HIV, will find a way to lodge itself in the germ line as well.

The human genome encodes some 223 proteins with significant homology to bacterial proteins, suggesting that they were acquired from bacterial sources via horizontal transfer (Lander et al., 2001). These apparent insertions from microbial sources serve as further evidence of a historic host–microbe collaboration among the various components of the microbiome.

Our focus on “conquering” infectious disease may deflect from more ambitious, yet perhaps more pragmatic, aims; little consideration has been given to the notion that perhaps we could learn to live with a pathogen instead of being so insistent on getting rid of it. Natural history abounds with infections that have, over the course of evolutionary history, achieved a mutually tolerable state of equilibrium with their host. Genetic variation of the influenza A virus, for example, has remained stable in its wild aquatic bird reservoir, and infected avians often show no sign of disease.

Although the recognition of AIDS in 1981 has inspired the most intense biomedical research program in history, the incidence of disease is only increasing. Would this trend reverse if, instead of focusing exclusively on ways to conquer HIV, we were to give equal weight to developing therapeutic measures that nurtured the immune system that HIV erodes?

Indeed, consider that many of the microbes that reside in our gut (such as Lactobacillus spp.) actually serve a protective, not a pathogenic, role. In fact, their protective advantage is currently being exploited in so-called “probiotic” therapy, the administration of live, benign microbes that benefit the host and aid in the treatment of disease (Hooper and Gordon, 2001; IOM, 2002b). Although scientists have known about the health benefits of lactic acid bacteria in particular for more than a century, the broader concept of probiotic therapy is a recent one (IOM, 2002b; Fuller, 1989). In addition to Lactobacillus, other probiotic preparations have contained Bifidobacterium, Streptococcus spp., and E. coli. Thus far, probiotic therapy has proven most beneficial in treating active ulcerative colitis, as well as complications following surgical intervention for that condition (Gionchetti et al., 2000; Rembacken et al., 1999).

Probiotic lactobacillus may even prove useful in strengthening immune responses in persons infected with HIV. Normal bacterial flora are altered in HIV infection, as evidenced by the frequency of bacteremia associated with altered gastrointestinal function, diarrhea, and malabsorption; and failure-to-thrive, which is linked to altered gastrointestinal function, is relatively common in congenital HIV infection. Recent studies have shown that L. plantarum 299v, a specially developed probiotic lactobacillus, has a generally beneficial effect on the immune response in HIV-infected children (Cunningham-Rundles and Nesin, 2000).

The concept of probiotic therapeutics extends even beyond simply introducing a living microbe. Recent studies have demonstrated that genetically engineered gut commensal bacteria can be used as drug delivery platforms to treat infectious disease (Steidler et al., 2000; Beninati et al., 2000; Shaw et al., 2000). Other possible uses of probiotic therapy include using microbial products that target specific disease processes, such as weakened epithelial barriers or reduced activity of the mucosal immune system (Hooper and Gordon, 2001); using microbes that bear relevant cross-reacting epitopes instead of vaccines; and using them as optional food additives (Lederberg, 2000).

The rewards of a microbiomal perspective on infectious disease could be great. Not only would we achieve new insights with regard to how we and the microbes around and within us adapt to each other, and thus how pathogens emerge, but we would likely develop new approaches to preventing and treating infectious diseases.

|

HUMAN SUSCEPTIBILITY TO INFECTION

Many properties of the human body, from its genetic makeup to its innate biological defenses, affect whether a microbe will cause disease. The body has evolved an abundance of physical, cellular, and molecular barriers that protect it from microbial infection, beginning with the skin. Even minor breaks in the skin increase susceptibility to infection. The normal bacterial flora of the gut and inner mucosal surfaces serve a protective role; not only do they occupy receptors to which pathogenic bacteria would otherwise attach themselves, but they produce antimicrobial substances that inhibit the growth of their pathogenic competitors. When these normal bacterial flora are reduced, as happens when a broad-spectrum antibiotic is used to treat an infection or when acidity in the stomach is reduced through various medications, the body is more susceptible to pathogens. Another protective defense mechanism is seen with the iron-binding protein lactoferrin, which is plentiful in breast milk and on mucosal surfaces. Lactoferrin serves a protective role by sequestering iron, thereby making the mineral unavailable to invading pathogens that need it to reproduce. Susceptibility to infection can result when these normal defense mechanisms are altered or when host immunity is otherwise compromised as a result of impaired immune function; genetic polymorphisms; and other factors, such as aging and poor nutrition.

Impaired Host Immunity

The innate or nonspecific immune response is the body’s initial inflammatory reaction to any kind of injury or microbial invasion. Innate immune defenses are believed to have first evolved in insects and other lower organisms that lack the ability to produce antibodies and thus depend entirely on this primitive but effective system for their protection against infection. In humans, more than a dozen different so-called TLRs (Toll-like receptors)1 have been found on cells that make up the mucosa and skin (including macrophages and dendritic cells), which is where pathogens first encounter their human host. When foreign molecules, such as bacterial DNA or flagella, bind to TLRs, they trigger a complex set of responses that leads to the production of inflammatory cytokines and local antimicrobial peptides. When the inflammation is inadequate to deal with an injury or microbial invader, the so-called adaptive or acquired specific immune response kicks in. This mechanism encompasses both cell-mediated and humoral responses. The former involves the production of antigen-specific T cells, which, depending on their surface protein makeup, serve a variety of functions, such as influencing the activities of other immune cells; the latter involves the production of antigen-specific B cells, which produce humoral antibodies.

New knowledge about the innate and specific immune responses is being used to develop potential therapies for infectious disease control. For example, the key to a good innate immune system defense is a balanced, regulated production of inflammatory cytokines. Otherwise, microbial infection can provoke such a massive release of inflammatory cytokines as to seriously damage and even kill their host. Researchers are exploring ways to interrupt the TLR pathways in order to either downregulate overly active inflammatory responses or upregulate weak responses. These could be useful strategies in the treatment of infectious diseases for which no otherwise effective specific therapies exist.

Genetic Polymorphisms

J.B.S. Haldane (1949) was among the first to suggest that pathogens serve as potent natural selective forces that have helped shape the evolution of human defenses against infection (Hill, 1998; Weatherall, 1996a; Lederberg, 1999). In particular, Haldane predicted that people who live in historically malaria-laden areas may have evolved genetic polymorphisms—in particular, heterozygous hemoglobinopathies—that increase their ability to survive infection with malaria.

Hemoglobinopathies are a group of diseases caused by or associated with the presence of abnormal hemoglobin in the blood; they are among the most common single-gene disorders in humans. The hemoglobin gene has several allelic variants, including hemoglobin S, which, if homozygous, causes sickle cell disease. Hemoglobin S heterozygotes have the sickle cell trait and are virtually asymptomatic; however, they exhibit 80 to 95 percent protection against P. falciparum infection (Weatherall, 1996b). Hemoglobin S homozygosity exacts a cost in adverse health effects (as many persons of African descent have sickle cell disease), but clearly the protective power of this particular allelic variant is still under major selective pressure. Indeed, in areas free of malaria, sickle cell trait and sickle cell disease are very rare, or generally found only in lineages that have migrated from malaria-laden areas. Another structural hemoglobin variant, hemoglobin E, occurs at high frequencies throughout the Indian subcontinent, Burma, and Southeast Asia; in some areas, carriers make up as much as 50 percent of the population. Like hemoglobin S, hemoglobin E protects against P. falciparum (Flint et al., 1993; Weatherall, 1996b). More common than the structural variants hemoglobin S and E are a group of anemias known as thalassemias, which result from a defective production rate of either the alpha or the beta chain of the hemoglobin polypeptide. Again, heterozygotes are usually asymptomatic, and protection against malaria appears to have been the major selective force responsible for the more than 120 different beta thalassemia mutations, as well as the many different alpha thalassemia mutations (Weatherall, 1996b). The biological mechanism underlying the protective power of the heterozygous hemoglobinopathies is still unclear.
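The persistence of a harmful allele under heterozygote advantage can be illustrated with the standard single-locus selection model from population genetics. The sketch below is not taken from this report: the function names are illustrative, and the selection coefficients `s` (fitness cost of malaria to normal homozygotes) and `t` (fitness cost of disease to variant homozygotes) are hypothetical values chosen only to show the qualitative behavior.

```python
# Standard single-locus model of heterozygote advantage (overdominance).
# The fitness values used below are hypothetical, not estimates from the report.


def next_frequency(q: float, s: float, t: float) -> float:
    """Apply one generation of selection to the variant allele frequency q.

    Relative fitnesses: normal homozygote 1 - s, heterozygote 1,
    variant homozygote 1 - t.
    """
    p = 1.0 - q
    mean_fitness = p * p * (1 - s) + 2 * p * q + q * q * (1 - t)
    return (p * q + q * q * (1 - t)) / mean_fitness


def equilibrium(s: float, t: float) -> float:
    """Stable variant allele frequency predicted by the model: s / (s + t)."""
    return s / (s + t)


def simulate(q0: float, s: float, t: float, generations: int) -> float:
    """Iterate selection for a number of generations, starting from q0."""
    q = q0
    for _ in range(generations):
        q = next_frequency(q, s, t)
    return q


if __name__ == "__main__":
    s, t = 0.1, 0.8  # assumed selection coefficients
    print("predicted equilibrium:", equilibrium(s, t))
    print("with malaria, after 500 generations:", simulate(0.01, s, t, 500))
    print("without malaria (s = 0):", simulate(0.1, 0.0, t, 500))
```

With these assumed values the variant allele settles at a frequency of about 0.11 while malaria is present. Setting `s` to zero removes the heterozygote's advantage, and the allele declines toward rarity, which is the pattern the text describes for malaria-free areas.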

The presence of malaria in a population does more than modify hemoglobin. Several other malaria-related balanced polymorphisms, many of which involve the red blood cell structure and metabolism, have likewise evolved in response to the tremendous selective force exerted by the disease. Glucose-6-phosphate dehydrogenase deficiency (an X-linked chromosomal disorder), for example, serves a protective role in heterozygous female carriers and hemizygous males (Ruwende et al., 1995). Heterozygous carriers of a mutation in band 3 of the red blood cell membrane, which in its homozygous state causes the potentially lethal Melanesian ovalocytosis, may also have a protective advantage. Finally, different blood group antigens may have evolved in response to past exposure to malaria (Miller, 1994). Racial differences in the distribution of certain red blood cell receptors for malaria parasites have been observed, possibly as a result of evolutionary genetic selection (IOM, 1991; Barragan et al., 2000; Hamblin et al., 2002). The Duffy antigen (a name taken from the hemophilia patient in whom it was first identified) is a parasite receptor on red blood cells that is recognized by certain forms of malaria, including P. vivax and P. knowlesi. Many persons of African descent lack the Duffy gene and therefore cannot be infected by either of these malaria parasites.

Other balanced polymorphisms have apparently evolved in response to malaria. For example, the tumor necrosis factor alpha gene and the HLA-DR class II genes both have polymorphic systems that have been linked to malaria (Hill et al., 1991). The remarkable human genetic diversity that has evolved in response to malaria, and that scientists have only just begun to uncover, suggests that other less common or less studied infections have probably generated extraordinary diversity as well. Fortunately, knowledge gleaned from the Human Genome Project and its technological offshoots is leading to a dramatic explosion in new understandings of polymorphisms in a variety of genes that alter the response to infection. One of the most recently reported links between infection and natural selection is a deletion in the host-cell chemokine receptor CCR5, which reduces the risk of acquiring HIV infection after exposure (Sullivan et al., 2001). As another example, certain major histocompatibility complex class I molecules have been shown to reduce the risk of dying from HIV infection (Kaslow et al., 1996; Gao et al., 2001). Likewise, several different mutations or polymorphic systems influence the susceptibility to or likelihood of death from meningococcal infection (Read et al., 2000; Nadel et al., 1996; Westendorp et al., 1997). Numerous other examples exist of genetic associations with diseases, including cancers and chronic diseases, and the list is growing rapidly (Hill, 2001; Topcu et al., 2002; Chen et al., 2002a; Calhoun et al., 2002; Helminen et al., 2001; Pain et al., 2001).

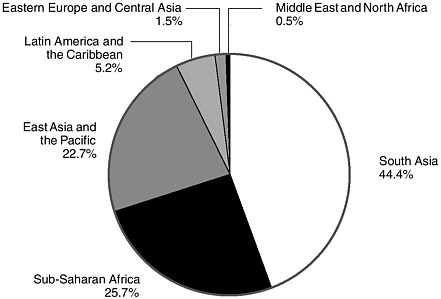

Malnutrition

Host susceptibility to infection is aggravated by malnutrition. A strong and consistent relationship has been found between childhood malnutrition and increased risk of death from diarrhea, acute respiratory infection, and possibly malaria (Rice et al., 2000). Conversely, infectious processes, especially those associated with diarrhea, drive malnutrition in young children (Mata, 1992; Mata et al., 1977), so that diarrheal illness is both a cause and an effect of malnutrition (Guerrant et al., 1992; Wierzba et al., 2001; Lima et al., 1992). Clinically, malnutrition is characterized by inadequate intake of protein, energy, and micronutrients and by frequent infections or disease (WHO, 2002d). Malnutrition has been associated with 50 percent of all deaths among children worldwide (Rice et al., 2000). In 2000, an estimated 150 million of the world’s children under age 5 were malnourished on the basis of low weight for age (WHO, 2002d). More than two-thirds (70 percent) of these children were in Asia, especially southern Asia. The number of malnourished children living in Africa—26 percent of the world’s malnourished children—has risen as a result of population growth in the region, as well as natural disasters, wars, civil disturbances, and population displacement (WHO, 2000b).

Malnutrition diminishes host resistance to infection through a number of mechanisms. Virtually all bodily processes and physical barriers that keep infectious agents from invading the host are affected. These include the skin, mucous membranes, gastric acidity, absorptive capacity, intestinal flora, cell-mediated immunity, phagocyte function, and cytokine production (Chandra, 1997; Levander, 1997). Although multiple-nutrient deficiencies are much more common than single-nutrient deficiencies, lack of even one vitamin or mineral (e.g., zinc; selenium; iron; copper; vitamins A, C, E, B-6, and folic acid) can impair the immune response. For example, vitamin A deficiency significantly increases the risk of severe illness and death from common childhood infections, such as diarrheal disease and measles, by diminishing the host’s resistance to infection. For children deficient in vitamin A, the periodic supplying of high-dose vitamin A has reduced mortality by 23 percent overall and by up to 50 percent for those who suffer from acute measles (WHO, 2002d). The relative risk of measles mortality in children younger than 2 years of age has been shown to be significantly reduced when the children’s diets are supplemented with vitamin A for only 2 days (Barclay et al., 1987; West, 2000). Consequently, WHO recommends treating children who have measles, prolonged diarrhea, wasting malnutrition, or other acute infections with vitamin A (IOM, 2002c; WHO, 1997). Furthermore, studies have suggested an association between maternal vitamin A deficiency and an increased risk of vertical HIV transmission from mother to child (Semba et al., 1994; Greenberg et al., 1997). It is not yet clear, however, what role vitamin A supplementation has in the management of HIV infection.

CLIMATE AND WEATHER

Many elements of the physical environment influence the host directly; determine the survival of agents that exist outside the host; and mediate the transmission of agents between hosts, including the movement from animal to human hosts. Viewed in this light, the physical environment takes on considerable importance in determining the epidemiology of infectious diseases (Wilson, 2001). The interactions among vectors, animal reservoirs, microbes, and humans present many opportunities for changes in the physical environment to influence transmission dynamics. Many of the factors that affect the abundance, survival, activity, or feeding behavior of vectors also impact on the reproduction, survival, and abundance of animal reservoirs. For example, elevated rainfall often creates new breeding habitats for mosquitoes, leading to an increase in mosquito population density. Increased levels of precipitation can also lead to decreased marsh salinity, which in turn may increase the survival rates of certain toxic aquatic bacteria. Likewise, these same factors can affect human behavior or exposure to infection by impacting outdoor activities, housing, the quality and quantity of food, and agricultural or other uses of the environment.

Among the numerous elements of the physical environment that influence the emergence of infectious diseases, climate and weather2 have received a great deal of attention in recent years. Many infectious diseases either are strongly influenced by short-term weather conditions or display a seasonality suggesting that they are influenced by longer-term climatic changes (Patz et al., 2000). Climate can directly impact disease transmission through its effects on the replication and movement (and perhaps evolution) of disease microbes and vectors; climate can also operate indirectly through its impacts on ecology or human behavior (NRC, 2001). To be transported over relatively large distances from one host to another, many microbes must be borne passively through moving air or water. Some pathogenic microbes, such as those causing coccidioidomycosis (see Box 3-3), are picked up from the soil and carried by dry, dusty winds (Schneider et al., 1997); some opportunistic human pathogens can apparently survive transoceanic transport in dust clouds (Griffin et al., 2001); and others, such as those causing cryptosporidiosis, may be washed by heavy rains into reservoirs of drinking water (Alterholt et al., 1998) (see Box 3-4). The 1993 hantavirus outbreak in the southwestern United States, due to an El Niño event, is an example of how climatic factors have contributed to the emergence of infectious disease (see the later discussion of hantavirus). Likewise, higher water temperatures in the Pacific Northwest resulting from a 1997 El Niño event provided unusual conditions favorable to the growth of Vibrio parahaemolyticus, which led to a shellfish-associated outbreak of disease (CDC, 1998a).

The fact that local and regional climatic factors clearly influence disease emergence has led scientists to suggest that projected global climate changes will have an impact on infectious disease emergence. Climatologists project upward trends in global temperatures and estimate that by 2100, temperatures will have increased by 1.4–5.8°C (Intergovernmental Panel on Climate Change, 2001a). Climate change has already been detected, and impacts from such change are sure to follow. Arthropod-borne diseases, such as malaria, yellow fever, and dengue, are expected to be affected more readily than other types of diseases by climate change since arthropod transmission patterns are highly sensitive to changes in ambient temperature. Waterborne diseases, such as cryptosporidiosis, may also be affected (Patz et al., 2001). However, to fully assess the effects of climate change, both confounding factors (e.g., drug resistance, crop yields, population migration) and the adaptive capacity of a population must be considered. Many argue that at present, other factors—including human population density and the capacity of the public health system to prevent and control infectious disease outbreaks—affect disease risk more than does global climatic change. Indirectly, if global climatic change were to result in reduced food availability, thereby producing undernourished human populations more vulnerable to disease, its impact on infectious disease could be dramatic (Intergovernmental Panel on Climate Change, 2001b). Likewise, if social disruption, economic decline, and displaced populations were to emerge as a result of reduced food availability due to global climate change,

|

BOX 3-3 Fungal Threats In 1994, a major earthquake shook Ventura County, California, generating massive landslides in the Santa Susana Mountains just north of Simi Valley. These landslides resulted in the formation of large dust clouds that were dispersed into nearby valleys by northeast winds. Within the dust clouds were tiny arthrospores of the fungus Coccidioides immitis. As the dust clouds settled over Ventura County, residents of the area inhaled the contaminated air; the result was 203 cases of coccidioidomycosis (also known as Valley fever and San Joaquin Valley fever), including three fatalities associated with this event (Schneider et al., 1997). The dimorphic fungus Coccidioides immitis, which causes coccidioidomycosis, grows in topsoil layers in the southwestern United States, Mexico, and parts of Central and South America. It is transmitted through the air following the disturbance of contaminated soil, as in the case of dust storms, earthquakes, and excavations. It is not transmitted from person to person. Although 60 percent of infected individuals are asymptomatic, the remainder can develop a range of infirmities, from influenza-like illness, to pneumonia, to severe pulmonary and extrapulmonary disease in immunocompromised individuals (CDC, 1994a). Simple environmental measures, such as planting grass and paving roads, can lower the risk of airborne dispersion of C. immitis. As of 2002, however, no practical method for eliminating the organism had been developed. National surveillance for coccidioidomycosis began through the National Electronic Telecommunications System for Surveillance (NETSS) in 1995; the disease is reportable in California, New Mexico, and Arizona. In addition to dissemination by dust clouds, fungal infections can be a health threat for travelers to regions of the world where these infections are endemic (Panackal, 2002). Travelers have developed fungal infections as a result of a wide range of recreational and work activities. 
For example, an outbreak of coccidioidomycosis occurred in a church group from Washington State upon returning from Tecate, Mexico, where these members of the congregation had assisted with construction projects at an orphanage. Following the outbreak, Coccidioides immitis was isolated from soil samples taken from Tecate (Cairns et al., 2000). College students visiting Acapulco, Mexico, were diagnosed with histoplasmosis, an infection caused by the soil-inhabiting fungus Histoplasma capsulatum, after staying at a beach resort hotel (CDC, 2001d). Similarly, Italian spelunkers returning from Mato Grosso, Peru, displayed signs and symptoms consistent with histoplasmosis (Nasta et al., 1997). Other potential mycotic disease threats include blastomycosis, which is endemic to parts of the south-central, southeastern, and midwestern United States, as well as Central and South America and parts of Africa; cryptococcosis, the fungal agent of which has been isolated from soil worldwide, usually in association with bird droppings; aspergillosis; candidiasis; and sporotrichosis (CDC, 2001e). |

|

BOX 3-4 An Outbreak of Cryptosporidiosis Cryptosporidiosis, a waterborne intestinal infection caused by Cryptosporidium spp., produces potentially life-threatening disease in those who are immunocompromised and mild to chronic diarrhea in others (Fayer and Ungar, 1986). In 1993, an estimated 403,000 cryptosporidiosis infections occurred among residents of and visitors to Milwaukee, Wisconsin (MacKenzie et al., 1994). Cryptosporidium oocysts in untreated water from Lake Michigan had apparently been inadequately removed by the coagulation and filtration process in a portion of the Milwaukee water treatment plant. The source of the oocysts leading to the outbreak remains speculative. Possible sources include cattle along two rivers that flow into the Milwaukee harbor, slaughterhouses, and human sewage. Various vertebrates (e.g., cows and wild deer) are naturally infected by Cryptosporidium spp. (Navin and Juranek, 1984; Simpson, 1992; Tzipori et al., 1981). Perhaps considerable rainfall, combined with a high concentration of animal runoff near the municipal water supply, triggered this transmission event. Genotypic and experimental infection data may suggest a human rather than bovine source (Peng et al., 1997). In the 2 years following this contamination of the water supply, it was estimated that 54 deaths (85 percent among people with AIDS) may have resulted from the 1993 outbreak (Hoxie et al., 1997). In addition to contaminated drinking water, outbreaks of cryptosporidiosis in the United States and abroad have been linked to chlorinated and unchlorinated recreational water facilities, such as public swimming pools, water parks, lakes, and rivers (Carpenter et al., 1999). |

the emergence and spread of infectious disease would likely be substantially impacted. A recent National Research Council (NRC) report, Under the Weather: Climate, Ecosystems, and Infectious Disease, addresses the impact of climate and weather change in further detail (NRC, 2001).

CHANGING ECOSYSTEMS

The abundance and distribution of plants and animals can, conversely, impact on components of the physical environment. Forest growth, for example, usually reduces evapotranspiration; cropping often increases local relative humidity; and the development of large urban areas generally leads to an accumulation of atmospheric particulates and warmer air temperatures. Even very minor ecological changes, such as implementing a new farming technique, can confront pathogens with new environments and significantly alter the transmission patterns of infectious diseases. Of course, the pathogens must have sufficient genetic variation to adapt to such ecological changes and new environments. But most pathogenic evolutionary changes that result in a potentially new disease still require an ecological cofactor for the disease to actually take root (Stephens et al., 1998). In other words, regardless of its genetic prowess, the pathogen still must be able to reach its animal (or human) host or vector.

Given today’s rapid pace of economic development and enormous scale of ecological changes, understanding how environmental factors are impacting on the emergence of infectious diseases has assumed an added urgency. To the pressing issues of environmental conservation, natural resource utilization, population growth, and economic development can be added the need to understand the interplay of these processes with the emergence of infectious diseases. Such environmental and ecological factors are playing an increasingly important role in disease emergence. In general, changes in the environment tend to have the greatest influence on the transmission of microbial agents that are waterborne, airborne, foodborne, or vector-borne, or that have an animal reservoir.

Vector Ecology

Pathogens transmitted by mosquitoes and their arthropod allies sicken millions of people each year, cause inestimable morbidity in humans and animals around the globe, and remain major barriers to social and economic development in much of the tropical world. Of the ten diseases targeted by WHO for special control programs, seven have arthropod vectors (WHO 2003a). Many of these diseases—for example, dengue, yellow fever, and malaria—which were controlled to a substantial degree, are now resurgent in many formerly endemic areas. Malaria continues to afflict much of the tropical world and causes an estimated 1.5 million to 2 million deaths per year. More than 2.5 billion people are at risk for dengue virus infection; 100 million cases of dengue are estimated to occur annually, and the incidence of dengue hemorrhagic fever is increasing rapidly throughout the tropics. Yellow fever virus has recently caused major epidemics in Africa and South America (Gubler, 2001; Monath, 2001), and sylvatic reservoirs in these areas provide an ongoing threat for its reintroduction into Aedes aegypti–infested metropolitan areas throughout the world. Ae. aegypti is also the principal vector of the dengue viruses. Vector-borne diseases continue to emerge in new areas and/or to resurge throughout the world, even in areas where they were previously controlled. Many newly emerged pathogens and diseases, including Sin Nombre and Andes viruses (and a plethora of other newly discovered hantaviruses), Guanarito, Lyme disease, and ehrlichiosis all have rodent hosts and/or arthropod vectors (Mills et al., 1999; Gubler, 1998; Gratz, 1999). Others, such as the Seoul, dengue, Japanese encephalitis, West Nile, and Rift Valley fever (RVF) viruses (see Box 3-5), have demonstrated their ability to emerge in new or

|

BOX 3-5 Rift Valley Fever Rift Valley fever (RVF) provides an excellent example of how ecological conditions determine pathogen transmission. In Saudi Arabia, 453 individuals with suspected hemorrhagic fever required hospitalization from August to October 2000 (WHO, 2000c). The case-fatality rate was 19 percent, with a median age of 47 years and an age range of 1 to 95 years. In Yemen, 1,087 similarly suspected case-patients were identified from August to November 2000; 121 of them died (CDC, 2000c). The mean age of suspected cases was 32.2 years, with an age range of 1 month to 95 years. Symptoms included low-grade fever, abdominal pain, vomiting, diarrhea, and jaundice with liver and kidney dysfunction, often progressing to death. Three out of 4 case-patients reported being exposed to sick animals, handling an abortus, or slaughtering animals in the week before the onset of illness. Using diagnostics, including antigen and antibody detection, polymerase chain reaction, virus isolation, and immunohistochemistry, the CDC confirmed the diagnosis of RVF. Satellite images and aerial surveys revealed numerous areas throughout the coastal plain and adjacent mountains that would be conducive to transmission of the RVF virus. Entomologic studies revealed large numbers of two species of mosquitoes—Culex tritaeniorrhynchus and Aedes caspius—in the flood irrigation farming areas where most of the human cases were reported. The mechanism of virus trafficking is not clear; however, it is thought that animal relocation from Africa may have resulted in introduction of the virus into Saudi Arabia. It is now believed that the RVF virus may be able to establish itself almost anywhere in the world, given the availability of potential permissive vectors and animal reservoirs. RVF virus was first recognized and isolated as the agent of a zoonotic disease in Kenya in 1930. The disease is now widespread throughout much of the African continent. 
RVF virus is transmitted mainly by floodwater Aedes spp., which feed primarily on animals. Mosquitoes may be infected transovarially, but domestic ungulates (cattle, sheep, goats, etc.) amplify transmission and become sufficiently viremic to infect other “bridge” mosquitoes (e.g., Culex spp.), which can then infect humans (Wilson, 1994). Virus transmission is linked to periodic heavy rainfalls that fill shallow depressions called dambos, where mosquitoes, such as Ae. macintoshi, lay their eggs. This vector has been associated with vertical transmission of the virus to progeny, thereby providing a mechanism for the virus to survive adverse climatic conditions. When the dambos are flooded, infected mosquitoes can emerge to initiate the transmission cycle. The local abundance of ungulates, their movement while searching for forage, and their proximity to humans are important in the epidemiology of the disease. Thus, complex ecological factors impact on where, when, and with what intensity RVF emerges (Linthicum et al., 1999). Outbreaks outside of sub-Saharan Africa occurred in Egypt in 1977–1978 and again in 1993; the identification of RVF in Saudi Arabia and Yemen in 2000 (Ahmad, 2000) was the first confirmation of its occurrence beyond the African continent. |

previously endemic regions, thereby causing significant morbidity and mortality.
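As a rough check on the figures reported in Box 3-5, the short Python sketch below computes the crude case-fatality rate implied by the Yemen counts and the approximate number of deaths implied by the rate reported for Saudi Arabia. The function names are illustrative only, and the results are back-of-the-envelope values derived from suspected cases, not independently reported statistics.

```python
def case_fatality_rate(deaths: int, cases: int) -> float:
    """Return the crude case-fatality rate as a percentage of identified cases."""
    if cases <= 0:
        raise ValueError("cases must be positive")
    return 100.0 * deaths / cases


def implied_deaths(cases: int, cfr_percent: float) -> int:
    """Return the number of deaths implied by a case count and a percentage rate."""
    return round(cases * cfr_percent / 100.0)


# Counts taken from Box 3-5 (suspected cases, August to November 2000).
yemen_cfr = case_fatality_rate(deaths=121, cases=1087)
saudi_deaths = implied_deaths(cases=453, cfr_percent=19.0)

print(f"Yemen crude case-fatality rate: {yemen_cfr:.1f} percent")
print(f"Saudi Arabia implied deaths: about {saudi_deaths}")
```

Because both denominators are suspected rather than confirmed cases, these values are best read as approximate indicators of severity.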

Arthropod-borne parasitic diseases, such as malaria, filariasis, onchocerciasis, trypanosomiasis, and leishmaniasis, remain major human threats. An estimated 120 million people suffer from lymphatic filariasis, and approximately 18 million people are afflicted with onchocerciasis, of whom about 340,000 are blind and an equal number visually impaired. More than 10 million people are afflicted with leishmaniasis, and 50,000 to 100,000 individuals die of visceral leishmaniasis each year in India alone. In Latin America, an estimated 20 million people have Chagas disease. In Africa, approximately 45 million people are at risk for African trypanosomiasis, which has virtually precluded domestic livestock production in a geographic region of Africa larger than the United States and now is tragically resurgent in humans in areas in East Africa.

Ecological and environmental conditions are key determinants of the transmission and persistence of vector-borne pathogens. Ecological conditions can increase the risk of infection by altering human exposure to vectors or changing their distribution, abundance, longevity, activity, and habitat associations, and thereby increasing or decreasing the overall potential for the vector population to transmit the pathogen to humans. Mosquito abundance and transmission of pathogens are typically associated with rainy seasons, since juvenile mosquitoes develop in aquatic habitats. Dengue transmission, for example, typically occurs during the rainy season, although the prior month’s temperature has been shown to affect transmission during the rainy season (Focks et al., 1995). Populations of floodwater- and container-breeding mosquitoes (e.g., Ae. aegypti) are dramatically affected by environmental conditions; their abundance is directly linked to rainfall (or snowmelt for temperate-zone mosquitoes), which induces the eggs to hatch. In contrast, transmission of Saint Louis encephalitis virus may be greatest in relatively dry periods after the rainy season (Shaman et al., 2002). The preferred breeding site of the principal vector, Culex quinquefasciatus, is stagnating pools of water with concentrated nutrient materials, and thus mosquito abundance increases during drier conditions, which favor the formation of such breeding sites. The emergence and reemergence of vector-borne pathogens are linked to changes in temperature (which determines how long it takes the parasite to develop), wind speed, and relative humidity (all of which affect vector feeding frequency); the amount and diversity of vegetation; and the presence of alternative hosts (which can alter the rate of blood feeding on humans). In particular, as previously discussed, global warming could theoretically result in dramatic alterations in the incidence and distribution of vector-borne diseases.
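The dependence of parasite development time on temperature noted above is often approximated with a degree-day model, in which development completes once a fixed amount of heat has accumulated above a threshold temperature. The sketch below is illustrative only: the model form, the function name, and the default parameters (111 degree-days above 16 °C, values commonly cited for Plasmodium falciparum in mosquitoes) are assumptions brought in for the example and do not come from this report.

```python
import math


def development_days(mean_temp_c: float,
                     degree_days: float = 111.0,
                     threshold_c: float = 16.0) -> float:
    """Estimate days for a parasite to complete development in the vector.

    Uses a simple degree-day model: days = degree_days / (T - threshold).
    The default parameters are assumed values for illustration, not figures
    from the report. At or below the threshold, development is treated as
    never completing.
    """
    if mean_temp_c <= threshold_c:
        return math.inf
    return degree_days / (mean_temp_c - threshold_c)


for temp in (18.0, 22.0, 26.0, 30.0):
    print(f"{temp:.0f} C: {development_days(temp):.1f} days")
```

Under these assumed parameters, a rise in mean temperature from 22 °C to 26 °C shortens the estimated development time from about 18.5 days to about 11 days, which suggests how a modest temperature change could alter transmission potential.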

The movement of goods and people can also support the movement of vectors, allowing them to become established in new areas (see the later discussion of international travel and commerce). This is certainly not a recent development. Probably the most notable public health example of such events is the dissemination of Ae. aegypti throughout the world (Tabachnick et al., 1985). After domestication and adaptation to humans and human environments, Ae. aegypti apparently spread to coastal areas of Africa, and was then transported throughout the world in sailing ships. Presumably Ae. aegypti, as well as yellow fever virus, was introduced into the New World on slave ships. Ae. albopictus, the Asian tiger mosquito, likely entered the United States via shipping (Moore, 1999). Aedes spp. eggs can easily be transported in such objects as waterlogged tires to new areas and hatch upon exposure to water. The large shipping containers used to transport so many products provide excellent environments for the transport of mosquito eggs. This presumably occurred recently as well with Ae. japonicus (Fonseca et al., 2001). Adult mosquitoes can be spread much more quickly throughout the world in the cabins or other areas of airplanes (Lounibos, 2002). Traveling mosquitoes can also rapidly introduce new genes, leading to the emergence of epidemiologically important vector phenotypes in new areas. Jet transport has been postulated as a mechanism for the rapid dissemination of an esterase mutation conferring pesticide resistance to organophosphates on Culex pipiens populations throughout the world (Raymond et al., 1998).

Traveling viremic humans can easily disseminate dengue virus to new areas via jet travel, and with the establishment of the mosquito Ae. aegypti in tropical and subtropical areas, can readily introduce new and perhaps virulent virus genotypes into susceptible populations. These same areas are also at risk for introduction of yellow fever virus, which is likewise transmitted by Ae. aegypti. Between 1970 and 2000, seven cases of yellow fever in unvaccinated travelers from the United States and Europe were reported (Monath and Cetron, 2002). It would appear to be inevitable for yellow fever to be introduced into new areas, such as Asia, with potentially catastrophic results.

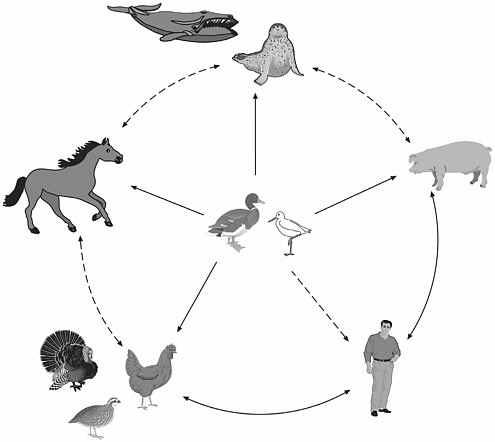

Reservoir Abundance and Distribution

It has been estimated that 75 percent of all emerging infections are zoonotic, i.e., they can be transmitted from animals to humans (Taylor et al., 2001a). In some cases, the mechanism of transmission of a pathogen from animals to humans has been identified, but the transmission of the same pathogen between various animal reservoirs remains a mystery (see Box 3-6). Pathogens transmitted to humans directly from rodents (e.g., rodent-borne viral diseases) or maintained in nature by rodents and transmitted to humans by arthropods (e.g., Lyme disease, ehrlichiosis, plague)

|

Box 3-6 Nipah Virus Between September 1998 and June 1999, an outbreak of a Japanese encephalitis-like illness occurred among people from several pig-farming villages in Malaysia; 265 cases of febrile encephalitis were reported to the Malaysian Ministry of Health, including over 100 deaths (Chua et al., 2000; Goh et al., 2000; WHO, 2001e). The majority of illnesses were characterized by 3–14 days of fever and headache, followed by drowsiness and disorientation that progressed to coma within 24–48 hours. In other cases, however, the infection was mild or inapparent. Most of the affected individuals were adult men who had histories of close contact with swine. During March of the same year, nine similar cases of encephalitis and two cases of respiratory illness that resembled some of what had been seen in the Malaysia outbreak were reported in Singapore; all eleven had handled swine imported from Malaysia. Concurrent with the human cases, many pigs in the regions were also becoming ill and dying. Tissue culture isolation from human and swine central nervous system specimens resulted in the identification of a previously unknown infectious agent, later named Nipah virus after the village of Sungei Nipah where it is believed the outbreak originated through infected bats that frequent the fruit trees near the pig farms. These fruit bats are distributed across northern, eastern, and southeastern areas of Australia, Indonesia, Malaysia, the Philippines, and some of the Pacific Islands. Infected bats appear to be asymptomatic reservoirs; it is unknown how the virus is transmitted from the bats to pigs. More than 900,000 pigs were culled in response to the outbreak (Uppal, 2000). Transmission of the Nipah virus to humans is primarily through direct contact with infected pigs or contaminated swine tissue; no evidence has been found for any person-to-person transmission. Although pigs are the only source of human infection identified thus far, they may not be the only one. 
For example, dogs infected with the Nipah virus have also shown a distemper-like illness, although no epidemiological link has been found between their infection and human disease; likewise, horses have shown serological evidence of infection, which again does not appear to be linked to human disease. The apparent ability of Nipah virus to infect a wide range of hosts and the fact that it causes a fatal and untreatable disease in humans have made this emerging infection an important public health concern. |

make up a significant proportion of emerging and resurging diseases (Mills and Childs, 1998). Epidemics of plague, tularemia, relapsing fever, and typhus have all occurred in recent years. Exacerbating the situation is the potential for many of these agents to be weaponized and used intentionally for harm. Rodent-borne viral diseases have been unusually refractory to control or eradication programs and continue to emerge as significant pathogens of human and animal populations. Many newly emerged viruses (e.g., Sin Nombre virus and other hantaviruses, and Guanarito and other arenaviruses) have rodents as primary hosts. The high mortality rates associated with these rodent-borne hemorrhagic fevers and hantavirus pulmonary syndrome generate great concern in the medical, scientific, and public health communities and among the general public. Rodent-borne diseases will undoubtedly continue to emerge and increase in medical significance in many areas of the world.

Ecological and environmental conditions determine the epidemic potential of pathogens transmitted by animal reservoirs. Transmission of arenaviruses, such as Junin virus (found in the corn mouse, Calomys musculinus) in Argentina, Machupo virus (in Calomys callosus) in Bolivia, and Lassa virus (from multimammate rats [Mastomys spp.]) in Africa, has strong environmental determinants. Transmission of each of these pathogens occurs via contact with rodent urine, feces, or tissues; the stability of the pathogens is influenced by humidity and sunlight. A strong link exists between the density of rodent reservoirs and arenaviral diseases in humans. For example, a longitudinal study of C. musculinus populations in an area in which Argentine hemorrhagic fever was endemic demonstrated a dramatic increase in the density of rodents immediately preceding an outbreak of human disease (Mills et al., 1992). During an outbreak of Bolivian hemorrhagic fever in San Joaquin, nearly 3,000 rodents (C. callosus) (about 10 per household) were removed during a 3-week period, apparently contributing to the rapid decline in new cases (Mercado, 1975). Changes in resources and predators that affect the abundance of rodents, combined with patterns of agricultural production and land use, appear to be the major determinants of risk for many arenaviral diseases.

The emergence of Sin Nombre virus and other hantaviral agents provides a textbook example of the effect of ecological forces on rodent distribution and abundance (Nichol et al., 1993). In 1993, an outbreak of acute respiratory distress disease occurred in the southwestern United States, with the initial cases occurring predominantly among Native Americans. The case fatality rate was approximately 60 percent, causing widespread anxiety and enormous media interest in this newly emerged disease. Sin Nombre virus (genus Hantavirus, family Bunyaviridae) was identified as the etiologic agent of this disease, designated hantavirus pulmonary syndrome (Nichol et al., 1993; Elliott et al., 1994). This finding was unexpected; the only other hantaviruses known in the United States at that time were Prospect Hill virus, which was not known to cause human illness, and Seoul virus, which had been associated with mild renal illnesses in humans in the eastern portion of the country (Glass et al., 1994). Thus, no a priori reason existed to associate the acute respiratory disease outbreak in 1993 with hantavirus infection.

Hantaviruses are found worldwide and are major causes of morbidity and mortality in Asia and Europe (Schmaljohn and Hjelle, 1997). In Eurasia, Hantaan virus infections have been associated with illnesses causing significant mortality following acute, systemic disorders characterized by fever, hemorrhagic manifestations, and renal failure. These illnesses have usually been clinically diagnosed as hemorrhagic fever with renal syndrome (HFRS) or Korean hemorrhagic fever. Several other viruses, including Dobrava-Belgrade virus, Puumala virus, and Seoul virus, cause similar diseases. Renal involvement, rather than respiratory symptoms, is the hallmark of these diseases.

Hantaviruses are transmitted directly between rodents and to humans via excreta (dried saliva, urine, feces). Human-to-human transmission of Sin Nombre virus has not been reported, although there is evidence from Argentina for direct human-to-human transmission of Andes virus, which is very closely related to Sin Nombre virus (Padula et al., 1998). The rodent reservoir of Sin Nombre virus has been identified as the deer mouse, Peromyscus maniculatus, one of the most commonly occurring and widely distributed mammals in North America (Childs et al., 1994). Hence, Sin Nombre virus shares a similar widespread distribution, but prevalence rates of the virus in this rodent reservoir can differ temporally and spatially (Mills et al., 1999).

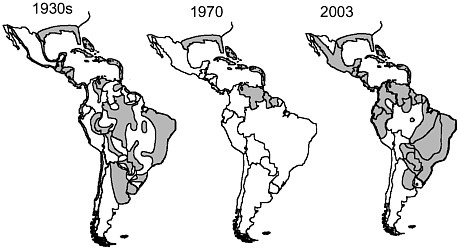

Identification of a hantavirus as the etiologic agent of the hantavirus pulmonary syndrome epidemic prompted increased surveillance for these agents in the Western Hemisphere that has revealed an array of heretofore unrecognized hantaviruses. Cases of hantavirus pulmonary syndrome have now been documented in 31 states, Canada, and Central and South America, and serologic evidence has demonstrated the presence of hantaviral infections in Mexican rodents. More cases of the syndrome are now recognized to occur in South than in North America (Calisher et al., 2002). Of the 39 hantaviruses now recognized (CDC, 2002v), 25 have been identified since 1994, 11 occur exclusively in the United States, and many new ones have been identified in Central and South America (see Figure 3-2). Each hantavirus is associated with a primary rodent reservoir host, suggesting that more hantaviruses will be discovered as new rodent hosts are assayed for these pathogens (Monroe et al., 1999).

FIGURE 3-2 New World hantaviruses (bold) and their rodent reservoirs (italics).

SOURCE: CDC, 2002x.

Data from the National Science Foundation’s Long-Term Ecological Research Site at Sevilleta in central New Mexico reveal that P. maniculatus densities increased dramatically beginning in the early 1990s and were highest in 1993 (Yates et al., 2002a). These conditions may have resulted from an El Nino Southern Oscillation event in previous years, which had caused ample rainfall, warm winters, dramatically increased plant productivity, and abundant forage for P. maniculatus. In an area of southwestern Colorado near hantavirus pulmonary syndrome cases, P. maniculatus abundance was estimated to be as high as 50 per hectare, and Sin Nombre virus antibody prevalence rates exceeded 50 percent (Childs et al., 1994). These areas have subsequently been monitored continuously in long-term longitudinal studies of hantaviruses in rodent reservoirs in the southwestern United States as part of a landmark effort by the CDC to understand the environmental and epidemiologic determinants of hantavirus emergence and to develop predictive models for risk assessment (Boone et al., 1998; Glass et al., 2000; Hjelle and Glass, 2000). Rodent population densities and seroprevalence rates have not been as high in Sin Nombre virus–endemic areas since the 1993 outbreak. However, an additional El Nino Southern Oscillation event in 1997 resulted in an increased number of human hantavirus pulmonary syndrome cases and a growth in rodent populations; a more refined model for Sin Nombre virus emergence was subsequently developed (Yates et al., 2002a).

ECONOMIC DEVELOPMENT AND LAND USE

The physical environment is constantly being modified by human activities. Most economic development activities, including the consumption of natural resources, deforestation, and dam building, have some intended or unintended impact on the environment, or both. In the present context, it is important to note that a growing number of emerging infectious diseases arise from increased human contact with animal reservoirs as a result of changing land use patterns. A recent example of this phenomenon is Venezuelan hemorrhagic fever, a new disease that was identified in 1989 and emerged following the transformation of forest to agricultural land, which provided a highly favorable environment for the probable reservoir host, the cane mouse Zygodontomys brevicauda (IOM, 2002d). Other examples include increases in malaria following the clearing of land for rubber plantations in Malaysia; increases in schistosomiasis, malaria, and other infectious diseases following the Volta River project in Africa; increases in vector-borne diseases after the construction of new transportation routes in Brazil; and the emergence of Lyme disease in the United States after the reforestation of abandoned farmlands in the northeast (Mayer, 2000). Even the emergence of HIV is believed to have been due to increased contact with nonhuman primates infected with the related simian immunodeficiency viruses (SIVs); exposure to infected blood during the hunting and field dressing of animals and the preparation of primate meat for consumption may have led to human infection. Indeed, compelling evidence indicates that SIV counterparts of HIV, specifically SIVcpz from chimpanzees and SIVsm from sooty mangabeys, have been introduced into the human population on multiple occasions, generating HIV types 1 and 2 (HIV-1 and HIV-2), respectively (IOM, 2002b).

Reforestation and Lyme Disease

Lyme disease is a classic example of a microbial threat influenced by multiple environmental determinants. The principal vector in North America is the deer tick, Ixodes scapularis, which must take blood meals as larva, nymph, and adult to survive and reproduce. Wild rodents, especially Peromyscus spp. in the northeastern and midwestern United States and Neotoma spp. in the western United States, serve as reservoirs for the bacterial agent of Lyme disease, Borrelia burgdorferi, but white-tailed deer are the definitive hosts for the adult ticks. The emergence of the disease has been linked in part to the reforestation of former farmland, which led to a dramatic increase in the distribution and abundance of white-tailed deer populations (Barbour and Fish, 1993). People become infected when they encounter the tick vector, usually during outdoor recreation or near residences in wooded areas. Prior to the reforestation of land formerly cleared for farming, Lyme disease was unrecognized. Lyme disease has increased in incidence and geographic distribution in the past 10 years in the continental United States, and as people continue to build homes and expand their neighborhoods even farther into reforested areas, the number of cases continues to rise. The preservation of vertebrate biodiversity and community composition may help reduce the incidence of Lyme disease (LoGiudice et al., 2003).

Dam Building and Schistosomiasis

Environmental changes resulting in the creation of standing water, such as dam building or the diversion of water by canalization and irrigation, have been implicated in the reemergence of infectious diseases transmitted by mosquitoes and other arthropod vectors. For example, the incidence of Japanese encephalitis, which accounts for approximately 7,000 deaths annually in Asia, is closely associated with the increase in mosquitoes that reproduce as a consequence of flooding fields for rice growing (Morse, 1995). Outbreaks of RVF in some parts of Africa have been associated with increased mosquito density as a result of dam building, as well as periods of heavy rainfall (Monath, 1993).

Environmental changes involving dam-building and irrigation projects appear to be allowing the spread of schistosomiasis to new areas. More than 200 million people worldwide are infected with this parasitic worm disease, and up to three times as many are at risk; it causes chronic urinary tract disease and often results in cirrhosis of the liver and bladder cancer (WHO, 1999a). The development of dams in the Senegal River basin, for example, is among the major factors leading to a significantly increased prevalence of schistosomiasis over a period of only 3 years (Gryseels, 1994). Similarly, the Aswan High Dam has been implicated in increased rates of schistosomiasis in Egypt (El Alamy and Cline, 1977; Abdel-Wahab, 1982). The potential for the Three Gorges Project in China to create conditions conducive to schistosomiasis transmission concerns many scientists.

Schistosomiasis is caused by trematode worms or blood flukes of the genus Schistosoma. Transmission is primarily in tropical or subtropical regions and involves certain snail species, primarily of the genera Biomphalaria, Bulinus, and Oncomelania, that serve as hosts for the development of one stage of the parasite’s life cycle. Humans become infected as they work or bathe in water infested with Schistosoma larvae released by snails. These larvae penetrate the skin and travel to internal organs, where they mature to adult worms that mate and reproduce. Human disease is a consequence of reaction to the eggs deposited in tissues by adult worms that live for years inside chronically infected people. The eggs are released when people urinate or defecate, and if they do so into snail-infested waters, more snails become infected, hence perpetuating the cycle. Thus, both people and snails are important to Schistosoma biology, and environmental changes that affect either can increase the risk of infection. The rise in water levels and change in flow rates that result from dam building may increase the contact between snails and parasites, as well as create fertile soil and sand beds that promote the development of snails.

HUMAN DEMOGRAPHICS AND BEHAVIOR

The opportunity for transfer of a microbe from one human to another has grown with the explosion of the world’s population. People are also rapidly moving to urban settings by choice or circumstance, leading to close contacts conducive to the spread of infection. Increases in life expectancy have also increased the proportion of elderly among the population, who are at greater risk of infection by virtue of the natural decrease in immune function with age. At the opposite end of the age spectrum, the epidemiology of childhood infections has been altered by the numbers of children in day care centers, often the result of maternal employment (see Box 3-7). Yet another factor in the spread of infection is the growing number of immunocompromised individuals due to the use of steroids and other immunosuppressives, cancer chemotherapy, and HIV infection. Finally, these groups, and potentially all humans, place themselves and others at risk of infection through various behaviors.

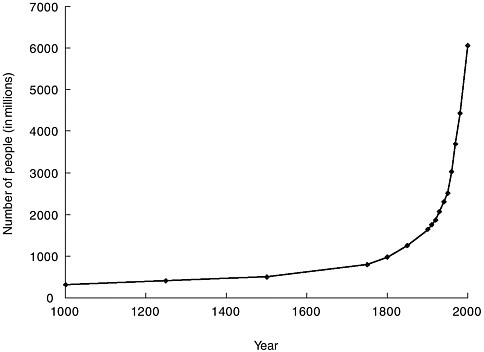

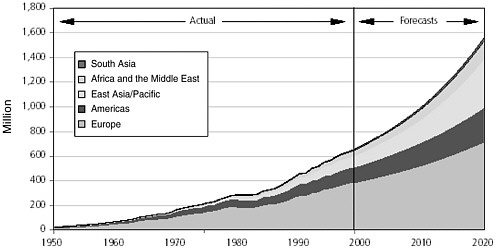

Population Growth

The explosive growth of the world’s population is illustrated in Figure 3-3. At the beginning of the twentieth century, the world’s population was approximately 1.5 billion. By 1960 it had doubled, and by late 1999 it had quadrupled to 6 billion (United Nations Population Fund, 1999). The world population is growing at a rate of 1.2 percent, or 77 million people, per year (United Nations Population Division, 2001); six countries (India, China, Pakistan, Nigeria, Bangladesh, and Indonesia) account for half of this annual growth. International migration is projected to remain high during the twenty-first century. The more developed regions are expected to continue being net receivers of international migrants, with an average gain of about 2 million persons per year over the next 50 years (United Nations Population Division, 2001).
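The growth figures above can be put on a common footing with a little compound-growth arithmetic. The following is a minimal sketch, not part of the original report: it assumes a constant compound annual rate, takes "the beginning of the twentieth century" to mean 1900, and uses illustrative function names (`doubling_time_years`, `implied_annual_rate`) chosen here for convenience.

```python
import math


def doubling_time_years(annual_rate: float) -> float:
    """Years needed to double at a constant compound annual growth rate."""
    return math.log(2) / math.log(1 + annual_rate)


def implied_annual_rate(start: float, end: float, years: float) -> float:
    """Constant compound annual rate that takes `start` to `end` in `years`."""
    return (end / start) ** (1 / years) - 1


# Figures quoted in the text.
current_rate = 0.012   # 1.2 percent per year (United Nations Population Division, 2001)
pop_1900 = 1.5e9       # assumption: "beginning of the twentieth century" read as 1900
pop_1999 = 6.0e9       # late 1999

# How long a doubling would take if the 1.2 percent rate stayed constant (an assumption).
doubling = doubling_time_years(current_rate)

# Average compound rate implied by the quadrupling from 1.5 to 6 billion.
century_rate = implied_annual_rate(pop_1900, pop_1999, years=99)

print(f"Doubling time at 1.2%/yr: {doubling:.0f} years")
print(f"Average rate 1900-1999:   {century_rate:.2%} per year")
```

Under these assumptions, a steady 1.2 percent rate corresponds to a doubling time of roughly 58 years, while the quadrupling over the twentieth century implies an average rate of about 1.4 percent per year. Actual rates varied considerably over the period, so both numbers are only rough summaries.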

Like the rest of the world, the United States has seen a steady increase in its population; by 2000, the U.S. population had reached 281,421,906 (U.S. Census Bureau, 2002a). During the 1990s, every state gained in population for the first time in the twentieth century (U.S. Census Bureau, 2001). It is estimated that 852,000 more people came into the United States than left between July 1998 and July 1999. The nation’s foreign-born population grew from 10 million in 1970, to 14 million in 1980, and 20 million in 1990. By March 2000, the estimated foreign-born population in the United States was 28 million. The largest-growing share of foreign-born U.S. residents between 1970 and 2000 came from Latin America.

|

BOX 3-7 The Changing Demographics of Child Care in the United States

Although child day care establishments have existed in the United States since the first known facility opened in Boston in 1828, changes in maternal employment during the past three decades have dramatically increased the percentage of children enrolled in out-of-home child care. This increase has in turn significantly altered the epidemiology of childhood infectious diseases. Among women with children under age 5, the proportion working outside the home increased from 30 percent in 1970 to 75 percent in 2000. Estimates of the number of children attending out-of-home day care in the United States range from more than 5.3 million (Osterholm et al., 1992) to more than 11 million (Klein, 1986). About 65 percent of 4-year-old children attended organized day care or nursery schools in 1995 (Ball et al., 2002). Several studies conducted during the last 15 years have shown that exposure to other children through nonparental child care arrangements increases the likelihood of contracting an infectious disease, including respiratory illness, ear infection, diarrhea, and skin disease. Of particular interest are epidemics of diarrhea in day care centers, caused by organisms believed to be rare 20 years ago, including the intestinal parasites Cryptosporidium (CDC, 1984) and Giardia lamblia (Sealy and Schuman, 1983). The risk of infection does not end with the child. Day care providers, family members, and even the community at large are all potentially at risk for infectious diseases that occur in day care centers. In one study, for example, the rates of gastrointestinal infection brought into the home by a child in day care were 26 percent for Shigella infection, 15 percent for rotavirus infection, and 17 percent for G. lamblia infection (Pickering et al., 1981).
More recently, 34 percent of reported cases of a 1997 community-wide hepatitis A epidemic were associated with contact with child care centers; people who had direct contact with child care were 6 times more likely to become infected than people who did not have such contact (Venczel et al., 2001). Many more studies are needed to better evaluate which particular infectious diseases pose an increased risk among children who attend child care centers and how these risks can be decreased. Compulsory hand washing after handling infants, blowing noses, changing diapers, or using toilet facilities is believed to be one of the most important preventive measures (Klein, 1986). Other measures include ensuring that facilities have adequate light and ventilation, space for play and rest, and an appropriate number of toilet and wash areas; that toilet facilities are separated from areas where foods are prepared and eaten; that sinks, soap dispensers, and paper towels are plentiful and appropriately placed; that surfaces, toys, and materials are regularly cleaned; and that staff members are well trained with regard to hygiene. Indeed, it has been recommended that national regulations and standards be developed and enforced to ensure infection control in out-of-home child care centers and homes. |

FIGURE 3-3 The human population explosion. The growth of the human population since 1000 is called the population explosion.

SOURCE: United Nations Population Division, 1999.

Aging

The global population is aging at an unprecedented rate (see Figure 3-4). Lower fertility rates, reduced death rates, and improved health have led to growing proportions of elderly people worldwide. As previously noted, aging increases susceptibility to infection even in the absence of other underlying health conditions. This is likely due to a number of factors, including senescence of gut-associated lymphoid tissue (Morris and Potter, 1997), a reduction in gastric acid secretion (low stomach pH serves as protection from enteric pathogens) (Feldman et al., 1996; Haruma et al., 2000), and diminished cell-mediated immunity and impaired host defenses (Strausbaugh, 2001). The efficacy of immunizations also decreases with advancing age (Bernstein et al., 1999). Some elderly are more vulnerable to infectious disease because of a breakdown in host defenses due to chronic disease, use of medications, and malnutrition.

Virtually all nations are experiencing growth in their elderly populations in absolute numbers (Kinsella and Velkoff, 2001). Developing countries have seen the most rapid increase, accounting for 77 percent of the world’s net gain of elderly individuals from July 1999 to July 2000 (615,000 people monthly). Despite this increase, Europe remains the region with the highest proportion of population aged 65 and over (15.5 percent in 2000), while sub-Saharan Africa has the lowest proportion (2.9 percent).

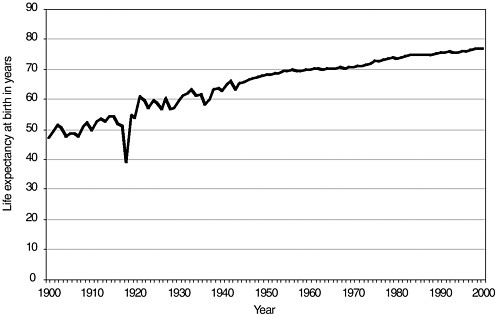

Life expectancy has increased enormously in the United States since the beginning of the twentieth century (see Figure 3-5). In developed countries, the average national gain in life expectancy at birth was 66 percent for males and 71 percent for females between 1900 and 1990 (Kinsella and Velkoff, 2001). Increases were more rapid in the first half than in the second half of the century because of the expansion of public health services and infectious disease control programs that greatly reduced death rates, particularly among infants and children in developed countries. Estimates of life expectancy in developing countries in the early part of the 1900s are generally unreliable. Since World War II, changes in life expectancy in developing regions have been fairly uniform. Some exceptions include Latin America and, more recently, Africa as a result of the HIV/AIDS epidemic. In 2000, life expectancy in developing countries ranged from 38 to 80 years, as compared with 66 to 81 years in developed nations.

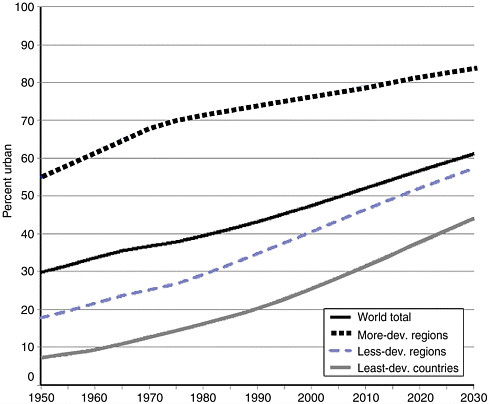

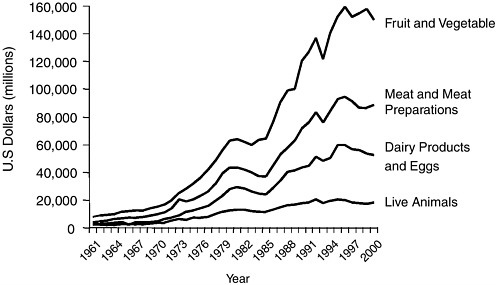

Urbanization

The mass relocation of rural populations to urban areas is one of the defining demographic trends of the latter half of the twentieth century. The world’s cities are currently growing at four times the rate of their rural counterparts, and at least 40 percent of their expansion is the result of migration rather than natural increase. Each day about 160,000 people move from the countryside to metropolitan areas, and almost 50 percent of the world’s population lives “in town” for significant periods (United Nations Population Fund, 2001; United Nations Population Division, 2002). The movement of people to cities has accelerated in the past 50 years (see Figure 3-6). The world’s urban population was 2.9 billion in 2000 and is expected to climb to 5 billion by 2030. Urbanization is greater in the more developed regions of the world, where 75 percent of the population lived in urban settings in 2000. Although the percentage of urban dwellers in less-developed regions had increased to 40 percent in 2000 from 18 percent in 1950, the level and pace of urbanization differed markedly among the major constituent areas. Latin America and the Caribbean as a whole became highly urbanized, with 75 percent of their populations living in urban settlements in 2000. Conversely, only 37 percent of the populations of Africa and Asia lived in urban areas in 2000; however, this proportion is expected to rise to more than 50 percent for both continents by 2030. With 26.5 million inhabitants, Tokyo was the most populated urban agglomeration in the world in 2001 (see Table 3-1).
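The urbanization figures in this paragraph can be restated as annual quantities with simple arithmetic. The sketch below is illustrative only and not part of the original report: it assumes a constant compound growth rate between 2000 and 2030 and a 365-day year, and the helper name `implied_annual_rate` is hypothetical.

```python
def implied_annual_rate(start: float, end: float, years: float) -> float:
    """Constant compound annual rate that takes `start` to `end` in `years`."""
    return (end / start) ** (1 / years) - 1


# Figures quoted in the text.
urban_2000 = 2.9e9              # world urban population in 2000
urban_2030 = 5.0e9              # projected world urban population in 2030
daily_rural_to_urban = 160_000  # people moving to metropolitan areas each day

# Average compound growth rate implied by the 2000-2030 projection
# (assumption: growth is spread evenly over the 30 years).
urban_rate = implied_annual_rate(urban_2000, urban_2030, years=30)

# Annualized rural-to-urban movement (assumption: 365 days per year).
movers_per_year = daily_rural_to_urban * 365

print(f"Implied urban growth rate: {urban_rate:.2%} per year")
print(f"Rural-to-urban movers:     {movers_per_year / 1e6:.1f} million per year")
```

Read this way, the projection corresponds to urban growth of roughly 1.8 percent per year, and the daily movement figure amounts to about 58 million people per year. Both are back-of-the-envelope restatements of the cited numbers, not independent estimates.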

FIGURE 3-5 Estimated life expectancy at birth in years: 1900–2000, United States. Death-registration states, 1900–1928 and United States, 1929–2000.

SOURCE: National Center for Health Statistics, 2002.