6

A Phased Approach to Monitoring Microbial Water Quality

INTRODUCTION

Monitoring microbial water quality has been conducted for more than a century by measuring indicator bacteria that occupy human intestinal systems, primarily fecal coliforms, Escherichia coli, and some enterococci. The historical origins and premises for the indicators measured are discussed at length in Chapters 1, 2, and 4.

Technological advances described in Chapter 5 provide new opportunities for revising these monitoring procedures. Our increased understanding of microbiology at the molecular level allows existing indicators to be measured using faster and cheaper methods. These advances also provide cost-effective opportunities for measuring new indicators or combinations of indicators, and in some cases, pathogens themselves. There is a strong consensus in the committee that with sufficient support for the necessary research, current indicator systems and their applications will undergo a comprehensive evolution during the coming decade. This evolution will substantially enhance our ability to rapidly and correctly identify when water used for recreational or drinking purposes is contaminated with microorganisms that are pathogenic to humans.

The increasing number and diversity of analytical tools imply that it is timely to reevaluate the appropriateness of currently used indicators. This chapter provides the committee’s conclusions and recommendations regarding preferred indicators, in both the short- and the long-term, for a variety of applications. It also provides a monitoring framework within which to make those choices and discusses potential impediments and drivers to implementing the framework. The chapter closes with a summary of its conclusions and recommendations.

PHASED MONITORING APPROACH

Indicators for waterborne pathogens are used to achieve a variety of goals, fulfill various regulations, and meet differing applications under the Safe Drinking Water Act (SDWA) and Clean Water Act (CWA; see Chapters 1 and 4). Often they are used to provide an early warning of potential microbial contamination, an application for which a rapid, simple, broadly applicable technique is appropriate. They are used for health risk confirmation where resulting actions can be costly and time consuming. They are also used to identify the source of a microbial contamination problem which can have terrestrial origins. In both of these latter applications, the time frame and investment in indicators, indicator approaches, and methods must be greater.

Indicator applications also vary according to the media in which they are used. For example, warning systems for groundwater typically focus on the presence or absence of bacterial indicators of fecal contamination because high-quality groundwater does not normally contain fecal bacteria and is often used without disinfection. In contrast, quantitative tests for indicator bacteria are used in monitoring surface drinking water intakes because these waters often show some evidence of fecal contamination and are usually treated with filtration and disinfection. Interpretation of indicator data in recreational water applications is different again because the exposure can be more irregular and involves a more limited population at risk. Furthermore, all of the indicator applications discussed in this report are inextricably linked and to some extent must account for surrounding terrestrial ecosystems (e.g., through fecal loading from agricultural, wild, and domestic animals living in a flood plain) that can affect the microbiological quality of the water being assessed.

A single microbial water quality indicator or small set of indicators cannot meet this diversity of needs and applications. The complexity of issues surrounding microbial water quality assessment requires the use of a “tool box” in which the indicator(s) and method(s) are matched to the requirements of a particular application. Like health investigations, water quality studies may have to proceed through a series of phases with a different suite of tools needed for each phase.

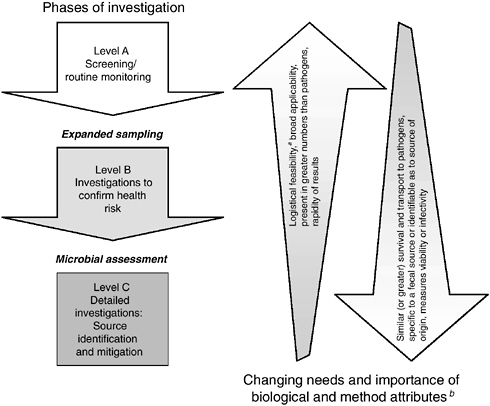

The committee recommends use of a phased, three-level monitoring framework, as illustrated in Figure 6-1, for selecting indicators. The first phase of this framework is screening or routine monitoring (Level A). The objective of this phase is early warning of a health risk or of a change from background condition that could lead to a health risk. This is the most frequent type of monitoring and is routinely conducted throughout the country.

FIGURE 6-1 Recommended three-level phased monitoring framework for selection and use of indicators and indicator approaches for waterborne pathogens.

a Includes training and personnel requirements, utility in field, cost, and volume requirements (see Box 4-3).

b Not all biological and method attributes discussed in Chapters 4 and 5 are included in this figure nor are they listed in order of importance.

In general, the most important indicator attributes at this level are speed, low cost (logistical feasibility), broad applicability, and sensitivity. Speed is important because managers have to react quickly to a system change, such as a sewage leak into drinking or recreational waters. Cost is important because the spatial or temporal frequency of monitoring is inversely related to per-sample cost and comprehensive sampling for screening purposes is typically desirable. Methods that have broad applicability to a number of geographic locations, various types of watersheds, and different water matrices are preferred. Sensitivity (i.e., the indicator occurs at more readily measured concentrations than the broad class of pathogens for which it serves as an indicator) is important because managers should not miss potential microbial water quality problems. Method precision and a definitive (quantitative) relation to health risk are less important during this phase. Although these are obviously desirable traits, management decisions should rarely be made at the screening level, unless the indicator concentrations are extreme or supported by ancillary information.
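The inverse relation between per-sample cost and monitoring frequency can be illustrated with a short calculation. The sketch below assumes a fixed monitoring budget; the function name, the budget, and the per-sample costs are hypothetical values chosen only for illustration and do not come from this report.

```python
def samples_affordable(budget: float, cost_per_sample: float) -> int:
    """Number of whole samples that a fixed budget can pay for."""
    if cost_per_sample <= 0:
        raise ValueError("cost_per_sample must be positive")
    return int(budget // cost_per_sample)


# Hypothetical figures: halving the per-sample cost doubles the number
# of samples (in space or time) that the same budget supports.
for cost in (100.0, 50.0, 25.0):
    print(cost, samples_affordable(10_000.0, cost))
```

Under these assumed numbers, a method that costs one-quarter as much per sample allows four times as many sampling sites or sampling dates.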

Once screening has identified a potential problem, the second phase involves more detailed studies to confirm a public health risk (Level B). The aim of such investigations is to assess the need for further management actions (e.g., beach closures, boil-water orders) and/or expanded specific data-gathering efforts. There are several ways in which confirmation can be accomplished. A typical approach involves expanded sampling with screening indicators to determine whether the response is repeatable over space and time; Chapter 4 discusses granularity in indicator response and the need for additional sampling to determine whether the signal persists. In some cases, a more reliable processing method is used to confirm that the result is not an artifact.

Ancillary information may be used to help confirm health risk. For instance, visual (“sanitary”) surveys for upstream sources of leaking sewage are often conducted to identify point and nonpoint sources of contamination. Changes in water color, odor, or similar parameters may also serve as clues for increased health risk.

The confirmation phase often involves measurement of new indicators, including direct measurement of pathogens. Such studies are not initiated on a routine basis, but would typically be undertaken when screening indicators persist at high levels without a clearly identifiable contamination source. Many of the new and emerging methods described in Chapter 5 and Appendix C—such as quantitative polymerase chain reaction (PCR), microarray technology, and viral cell culture concentrates to screen for a multitude of targets of health concern—will be useful at this stage.

Since confirmation studies focus on assessing health risk, the most important indicator biological attributes during this phase are correlation with contamination sources and transport or survival behavior similar to pathogens. Desirable method attributes include quantifiability and effectiveness at measuring viability or infectiousness, while logistical considerations, rapid turnaround, and broad applicability become less important.

The third phase (Level C) involves studies to determine sources of microbial contamination so that the health risk can be abated through a variety of engineering and policy solutions. However, this chapter and the report as a whole focus on identifying the sources of microbial contamination rather than their mitigation. In some cases, source identification is accomplished through expanded spatial sampling to look for gradients. Where recreational waters are concerned, indicator strategies based on molecular signatures are becoming more commonly used in place of screening indicators.

For these detailed investigations, the essential indicator attributes include specificity for a fecal source and quantifiability, while cost considerations and rapidity of results diminish further in importance. Level C studies often overlap in goal with Level B health risk confirmation studies, since identifying the source of contamination facilitates identification of the health risk. Depending on the need to differentiate between source of contamination and public health risk, the ability to measure the infectiousness of the detected indicators or pathogens may also be important.
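The progression through the three levels can be summarized as a small decision rule. The following is a minimal sketch, not a prescribed procedure: the names `Level` and `next_level` are hypothetical, and the transition rules (return to routine screening when a risk is not confirmed, remain at Level C while a source study is under way) are simplifying assumptions made for illustration.

```python
from enum import Enum


class Level(Enum):
    A = "screening / routine monitoring"
    B = "investigation to confirm health risk"
    C = "source investigation"


def next_level(current: Level,
               screening_flagged: bool = False,
               risk_confirmed: bool = False) -> Level:
    """Return the monitoring level suggested by the latest findings.

    Assumed rules (illustrative only):
      A -> B when screening flags a potential problem, else stay at A
      B -> C when the health risk is confirmed, else return to A
      C -> C while the source investigation continues
    """
    if current is Level.A:
        return Level.B if screening_flagged else Level.A
    if current is Level.B:
        return Level.C if risk_confirmed else Level.A
    return Level.C


print(next_level(Level.A, screening_flagged=True).value)
```

In practice the levels overlap, as noted above for Level B and Level C studies, so a strict one-way state machine is only a rough approximation.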

The standardization and regulation of monitoring methodology generally decrease as indicator use progresses through the three phases. Methods used in the screening phase are typically “standard” and can be accomplished by almost all county health department laboratories. This also holds true for many Level B studies. However, the techniques used during the latter phases may be more specialized and require the expertise of a research laboratory. This is especially true for Level C studies. The responsibility for study costs may also shift through the three phases, with parties potentially responsible for contamination sources typically more involved with the latter two levels of studies.

The committee recommends that the U.S. Environmental Protection Agency’s (EPA’s) presently available guidance documents and indicator recommendations (focused predominantly on Level A) be expanded to address the latter two phases of selection and use of indicators and indicator approaches for waterborne pathogens. There is an increasing need for national leadership and guidance for the investigation phases that follow screening. Moreover, EPA active support for indicator and method development in the latter two phases is required to fully realize the potential advantages of developing technology. EPA should invest in a long-term research and development program to build a tool box of indicators that will serve as a resource for all three phases of investigation.

The remainder of this chapter describes application of this framework to three typical monitoring situations: marine beaches, surface water sources of drinking water, and groundwater sources of drinking water. The following sections also provide recommendations regarding the most appropriate indicators at present, in the near-term future (including the proposed Ground Water Rule and Interim Enhanced Surface Water Treatment Rule provisions), and in the long-term future at each level of investigation. Lastly, it is important to note that the phased monitoring framework for indicator selection and use described in this chapter is focused on supporting risk management, not on risk management actions themselves, which are largely beyond the scope of this report. Appropriate risk management decisions depend not only on the results of microbial water quality monitoring but also on the application the monitoring is designed to address, such as public health warning systems for recreational beaches (see more below). Under any circumstances, time is of the essence in all three levels of microbial water quality monitoring in order to ensure the public is not exposed to water that is known to be contaminated.

APPLICATION TO MARINE BEACHES

Level A—Screening/Routine Monitoring

The Beaches Environmental Assessment and Coastal Health (BEACH) Act of 2000 requires that EPA develop a consistent national monitoring program for marine and Great Lakes bathing beaches. EPA is creating such a program and recommends enterococci as the preferred indicator for marine systems; it is investigating this group of bacteria for use in freshwater lakes (EPA, 2002; see also EPA, 1999a and Chapter 2). Most states have not yet adopted this recommendation and are still relying on total and fecal coliform bacteria as indicators (see Tables 1-4 and 4-1). EPA has encouraged states to adopt the enterococci standard by requiring its use if their programs are supported by BEACH Act grants.

The committee agrees with EPA’s recommendation that enterococci are the best bacterial indicator presently available for screening at marine beaches. While the epidemiological evidence has several deficiencies (see Chapters 2 and 4), enterococci have the best correlation to health risk (EPA, 1986; Prüss, 1998; Wade et al., 2003). They are also the most protective of the standard available indicators. When enterococci have been measured concurrently with fecal coliforms, total coliforms, and E. coli, enterococci were the sole indicator failing water quality standards at most sites that failed, and they exceeded standards at the vast majority of sites where standards failures occurred for other indicators (Noble et al., 2003). They are also relatively inexpensive to measure.

Although enterococci are the best choice for screening at marine beaches, EPA may also want to reexamine whether there is added value in using the total:fecal coliform ratio. Haile et al. (1999) found that enterococci had the best epidemiological relationship with gastrointestinal illness, but the total:fecal ratio had a better relationship with several other illnesses, such as ear infections. The rationale for the ratio is that when fecal coliforms are a high percentage of total coliforms, the presence of a human contamination source is more likely. Haile et al. (1999) is the only study to find a strong relationship between the ratio and illness, although it is also the only one to have looked for it. The EPA is currently evaluating Bacteroides in epidemiological studies (Richard Haugland, EPA, personal communication, 2003), so more information regarding the application of this indicator to assessment of health is forthcoming.
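The rationale for the total:fecal coliform ratio can be made concrete with a short sketch. The function names and the cutoff used in the example are hypothetical; this report does not specify a ratio threshold, so `max_ratio` is left as a required parameter and the value 5.0 below is a placeholder only.

```python
def total_to_fecal_ratio(total_coliforms: float, fecal_coliforms: float) -> float:
    """Ratio of total to fecal coliform concentrations (same units)."""
    if fecal_coliforms <= 0:
        raise ValueError("fecal_coliforms must be positive")
    return total_coliforms / fecal_coliforms


def ratio_suggests_human_source(total_coliforms: float,
                                fecal_coliforms: float,
                                max_ratio: float) -> bool:
    """True when fecal coliforms are a large share of total coliforms.

    A low total:fecal ratio means a high fecal percentage. The cutoff
    `max_ratio` is an assumption supplied by the caller.
    """
    return total_to_fecal_ratio(total_coliforms, fecal_coliforms) <= max_ratio


# Placeholder concentrations (per 100 mL) and a placeholder cutoff of 5.0.
print(ratio_suggests_human_source(1000.0, 250.0, max_ratio=5.0))  # ratio 4
print(ratio_suggests_human_source(1000.0, 50.0, max_ratio=5.0))   # ratio 20
```

A flag of this kind is at most supporting evidence, since the ratio can shift as the two groups die off at different rates in the environment.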

The future holds many possible improvements in beach water quality screening, the most likely of which are enhancements in the speed at which indicators are measured. As detailed in Chapter 4, the greatest shortcoming in present screening methods is the 18- to 96-hour culturing period necessary to obtain results, during which time swimmers are exposed to potentially harmful conditions. Effective screening requires a short quantification period so that water quality managers can react quickly with a public health warning, or conduct further investigations as to whether a public health warning is warranted. A series of new methods that reduce laboratory processing time are in late stages of development (see Chapter 5). Antibody-based methods have the greatest potential for shortening the time frame to as little as 20 minutes, but the sensitivity of this technology at present is inadequate to measure bacterial concentrations near water quality standards. Adoption of antibody techniques or other new methods that measure subcellular structures may also require establishment of new standards since the attributes they measure do not assess indicator viability.

Future improvements could also include replacement of the indicator species used, but this is not likely to occur in the near future. While direct pathogen measurement techniques will soon be available, they generally do not fill the need for a broad-spectrum measure. Microarrays that assess the presence of numerous pathogens simultaneously might meet this need, but it is unclear whether microarrays can be used to analyze a large enough water sample to appropriately assess exposure to human pathogens that occur at low density. Cost and operator complexity constraints at the screening level are also an issue. The more likely use of microarrays in the near-term will be at the subsequent phase of investigation, in which microarray technology is used after a short-term incubation, water filtration, PCR, or other concentration or amplification methods to better define the nature of the water quality problem.

Another factor that will limit replacement of present indicators with direct pathogen measurements is the lack of epidemiologic studies to quantify the relationship between concentrations of specific pathogens and health effects (see Chapters 2, 4, and 5 for further information). Previous studies on the relationships between indicators and human health risks have not included efforts to identify the etiological agents causing infection and illness. Such etiological studies are recommended and will be important in developing the pathogen-health effects relationships that will form the basis for water quality standards.

Level B—Investigations to Confirm Health Risk

Confirmation sampling is standard practice in marine beach monitoring. Screening alerts managers to the possibility of health risk from water contact, but additional data collection typically precedes issuance of a beach closure notice. States differ considerably in their approach to confirming health risk. Most states conduct temporal and spatial confirmation sampling prior to issuing a closure notice. This type of confirmation sampling is warranted because several studies have demonstrated considerable variability in indicator bacteria concentrations over small spatial and temporal scales (Boehm et al., 2002; Leecaster and Weisberg, 2001; Taggart, 2002). Many sources are intermittent, and reliance on a single sample may lead to incorrect decisions. While confirmation testing is always appropriate, the need to take immediate action must also be considered to protect public health in situations where other information suggests that a contamination source is, and is likely to continue, discharging to recreational waters.
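One simple way to summarize whether a screening signal persists across repeat samples is the fraction of samples that exceed the applicable standard. The sketch below is illustrative only: the function name is hypothetical, and both the concentrations and the standard of 100.0 are placeholder values rather than regulatory figures.

```python
from typing import Sequence


def exceedance_fraction(concentrations: Sequence[float], standard: float) -> float:
    """Fraction of repeat samples whose concentration exceeds the standard."""
    if not concentrations:
        raise ValueError("at least one sample is required")
    exceed = sum(1 for c in concentrations if c > standard)
    return exceed / len(concentrations)


# Placeholder data: one high initial result followed by three low repeats,
# the pattern expected from an intermittent source or a small patch.
repeat_samples = [180.0, 40.0, 35.0, 20.0]
print(exceedance_fraction(repeat_samples, standard=100.0))  # 0.25
```

A low fraction suggests an isolated or short-lived exceedance, whereas a high fraction across sites and dates supports a persistent problem.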

Many states also take other actions to confirm health risk, including sampling additional indicators, before issuing a beach closure notice. The committee concurs with this since presently measured indicators, such as enterococci, are not specific enough to human waste streams to automatically equate elevated concentrations to a health risk. Hawaii does this by measuring Clostridium perfringens, which has been suggested to be more specific to human contamination (Sorenson et al., 1989), although such specificity has not been confirmed more than a decade later. Some states have used the fecal coliform:fecal streptococcus ratio based on a higher prevalence of fecal coliforms in human waste than in animal waste (Feachem, 1975). Other states have used the ratio of fecal coliforms to total coliforms, based on the same concept (Haile et al., 1999). Although these source indicators provide some information and are easily measured by most laboratories, they can be unreliable because of differential survival in the environment (Scott et al., 2002).

The options for improving both spatial/temporal and other forms of health risk confirmation sampling will expand considerably in the near future. An important change will be the availability of rapid methods, allowing confirmation sampling to be conducted in a more cost-effective and timely manner. Methods that provide a response within a few hours will allow the size of the contaminated area to be determined immediately after detecting a standards failure, ensuring that closure decisions are of an appropriate size and are not made on an isolated patch of poor-quality water that dissipates before action is taken.

The future will also include an array of new indicators, including direct pathogen measurements, for confirmation of health risk. Direct pathogen measurements are not presently used for screening largely because no epidemiologic studies have included these measures, which prevents the determination of standards (although such studies are being conducted), and because current techniques are not sensitive enough to generate meaningful data in a screening mode. Pathogen measurements are, however, suitable at the present time for confirmation sampling because the presence of pathogens along with other data on screening indicators (especially the co-occurrence of high concentrations of indicator organisms) suggests a significant public health risk.

Level C—Source Investigations

Confirmation of health risk leads naturally to more detailed investigations that identify and allow mitigation of the microbial contamination source. The most frequently used approach for this has been visual surveys to search for a leaking pipe system. This is often combined with more spatially intensive use of screening indicators to identify gradients in concentration that allow focusing of visual surveys in the proper area.

Source investigation is likely to improve substantially in the near future because of technological developments in two areas. The first is more rapid methods for measuring screening indicators, which will improve the spatially intensive surveys. At present, these surveys must sample in multiple directions simultaneously, because of the long time necessary for laboratory processing, making them impractical when there are multiple tributaries in a given stream system. Development of rapid analysis methods will allow investigations to proceed unidirectionally toward the location of highest bacterial concentration because samples from the confluence of every tributary can be processed quickly.
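The unidirectional search that rapid methods would permit can be sketched as a walk up a stream network, following the branch with the highest indicator concentration at each confluence. The representation below is an assumption made for illustration: the network is a dictionary mapping each site to its upstream tributaries, `concentration` is any callable standing in for a rapid measurement, and the site names and readings are invented.

```python
from typing import Callable, Dict, List


def trace_upstream(network: Dict[str, List[str]],
                   concentration: Callable[[str], float],
                   start: str) -> List[str]:
    """Follow the highest-concentration tributary upstream from `start`.

    Assumes the network is a tree (no loops), so the walk always ends
    at a headwater site that has no listed tributaries.
    """
    path = [start]
    site = start
    while network.get(site):
        # Sample every tributary at this confluence and move to the worst one.
        site = max(network[site], key=concentration)
        path.append(site)
    return path


# Invented example network and readings.
network = {
    "outlet": ["north_fork", "south_fork"],
    "south_fork": ["creek_a", "creek_b"],
}
readings = {"north_fork": 30.0, "south_fork": 400.0,
            "creek_a": 50.0, "creek_b": 900.0}

print(trace_upstream(network, readings.__getitem__, "outlet"))
```

Each step requires results from only one confluence before moving on, which is feasible only when laboratory turnaround is short.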

The other improvement will result from the availability of phenotypic and genotypic source identification (tracking) methods that allow for source differentiation by animal species. These methods show great promise, but the field is still new and there is disagreement among practitioners as to which techniques hold the most promise (Griffith et al., 2003; Malakoff, 2002; Simpson et al., 2002). Most methods have been tested in a limited number of locations, often within a single watershed, and with a limited number of possible sources. There have been a few recent attempts at standardized comparative testing (see Box 6-1), but these are limited and have tested only a subset of required attributes. Thus, as stated in Chapter 4, the committee cannot recommend which of these techniques is most suitable at this time, but it does recommend continued support for development and testing of these very promising technologies.

BOX 6-1

Microbiological source tracking (MST) methods are potentially powerful tools that are increasingly being used to identify sources of fecal contamination in surface waters, but these methods have been subjected to limited comparative testing. The Southern California Coastal Water Research Project recently led an effort (Griffith et al., 2003) in which 22 researchers employing 12 different methods were provided identical sets of blind water samples containing one to three of five possible fecal sources (human, dog, cattle, seagull, or sewage). Researchers were also provided portions of the fecal material used to inoculate the blind water samples for their use as library material. No MST method tested predicted the source material in the blind samples perfectly, although all methods provided useful information. Host-specific PCR performed best at differentiating between human and nonhuman sources, but primers are not yet available for differentiating among nonhuman sources. Human virus and F+ coliphage methods reliably identified the presence of sewage but were not able to identify fecal contamination from individual humans. Library-based isolate methods were able to identify the dominant source in most samples but frequently had false positives, identifying the presence of fecal sources that were not in the samples. The U.S. Geological Survey is presently conducting a similar type of comparative study using different evaluation criteria (Donald Stoeckel, USGS, personal communication, 2003). Multiple comparative studies are warranted because all desirable attributes of source tracking indicators cannot be tested in a single study.

APPLICATION TO SURFACE DRINKING WATER SOURCES

Level A—Screening/Routine Monitoring

Screening for microorganisms in surface water is generally limited to regulatory or voluntary monitoring by water utilities. Routine monitoring for conventional indicators, including total coliforms and either fecal coliforms or Escherichia coli, is required under the Total Coliform Rule (EPA, 1989b), but this monitoring applies only to treated water. A requirement for monitoring untreated surface drinking water sources for total coliforms or fecal coliforms is included in the Surface Water Treatment Rule (EPA, 1989a) for systems that wish to avoid filtration. Routine monitoring of all untreated surface drinking water sources for coliforms and either fecal coliforms or E. coli is also included in the Interim Enhanced Surface Water Treatment Rule (IESWTR; EPA, 1998c), with monitoring to be conducted at least monthly. At present, there are no other national regulatory requirements for routine monitoring of different microbial indicators or pathogens in surface water sources of drinking water.

The monitoring program at the screening level for surface water supplies should focus not only on the water intake but also on the watershed level. Thus, the indicator systems and attributes will be focused on applications to a large spatial area. It is not clear that total coliform monitoring will be of value; however, water utilities should be encouraged to examine multiple indicators such as E. coli, enterococci, coliphage, Bacteroides, and perhaps Clostridium perfringens when sampling (sub)tropical waters. Point sources (e.g., sewage discharge points) can be evaluated more readily and may be a constant source of inputs to the watershed. Nonpoint sources will be more difficult to assess because they are likely to be intermittent sources of pollution, often related to meteorological events. Baseline concentrations and changes in levels should be assessed. The integration between Level A and B and/or C studies should be considered.

Turbidity is a reliable indicator of changes in water quality, particularly when those changes occur as a result of meteorological conditions. The U.S. Geological Survey (USGS) and others are currently using flow, rainfall, and turbidity to address potential risk to a system. The availability of technology for on-line and real-time monitoring of turbidity facilitates its inclusion in microbiological monitoring programs.

The committee concludes that better, more reliable drinking water protection can be provided if indicator systems are used to routinely monitor the microbiological quality of untreated surface drinking water supplies. The committee recommends that such routine monitoring be undertaken using the phased approach outlined in Figure 6-1, with greater investments in expanded monitoring and investigations being required as lower-level monitoring indicates the need. Because of an enhanced potential for contamination during wet weather, the committee further recommends that special Level B studies be conducted in each surface water source during at least a half-dozen major wet weather events so that exposure during these periods is well understood. Different intakes from the same waterbody should be considered different sources because contamination is sometimes very local in nature.

Level B—Investigations to Confirm Health Risk

Health risk or Level B assessment for surface drinking water sources has typically taken place via direct pathogen monitoring. Although it is not currently required by federal regulations, some large water utilities have established voluntary routine monitoring programs for selected pathogens, albeit with a relatively low monitoring frequency (typically once per month or once per quarter). Such monitoring is primarily for protozoa and enteric viruses and in many cases is a continuation of the Information Collection Rule (ICR; see Table 1-1) monitoring. The ICR is an example of a special, nationwide study (Level B) conducted as a federal requirement for 18 months from July 1997 to December 1998. An outcome of this special study was the implementation by some of these large water utilities of voluntary long-term monitoring for protozoa and, in more limited cases, for enteric viruses as well. Protozoan monitoring is directed at detection of Cryptosporidium and Giardia, utilizing the indirect fluorescent assay (IFA) with EPA recommended or approved methods, as described in the ICR or Method 1623, respectively. Monitoring for enteric viruses is conducted with less frequency and by fewer water utilities due, in part, to the complexities and cost of the assay. When voluntary enteric virus monitoring is conducted, the principal method used is the total culturable enteric virus assay in buffalo green monkey kidney (BGMK) cells following the ICR method or its predecessor in Standard Methods for the Examination of Water and Wastewater (APHA, 1998; see also Chapter 5).

Level B investigations have been conducted in surface water as part of multistate occurrence studies by independent research groups or in nationwide occurrence studies performed as part of the ICR, the ICR Supplementary Survey, and in the near future, the Long-Term 2 Enhanced Surface Water Treatment Rule (LT2ESWTR; see Table 1-1 and EPA, 2003, for further information). Two large multistate surveys found Cryptosporidium in 87 and 51 percent of source waters tested by LeChevallier et al. (1991) and Rose et al. (1991), respectively. The recently proposed LT2ESWTR will require large utilities to monitor for Cryptosporidium, E. coli, and turbidity for a period of 24 months (EPA, 2003). The results of the two-year monitoring will be used to determine the level of treatment for Cryptosporidium, referred to in the rule as “bin classification.” Viability or infectivity is of particular interest for Cryptosporidium to enable a determination of the potential health risk. In this regard, a recent study by Gennaccaro et al. (2003) demonstrated viable oocysts in reclaimed wastewater effluent using cell culture methods. In addition, genotyping via PCR methods may provide some indication of source. These methods have been widely published, and EPA should develop a consensus method to begin testing in laboratories nationwide as recommended in Chapter 5.

Testing water for other waterborne pathogens is conducted only via special monitoring studies with experimental or non-EPA-approved methods. Such special studies can target a specific pathogen or utilize a representative protozoan, virus group, and/or enteric bacteria for a broader and more complete source water quality assessment. Despite the limited number of special studies conducted to date, they can provide more direct links to the public health issue of concern for the source water under investigation. For certain scenarios, special studies conducted with experimental or research-oriented methods are the most relevant approach, particularly when survival, infectivity, and a high degree of specificity of detection are required. Thus, with special studies, data can be obtained for a specific pathogen (e.g., Cryptosporidium parvum), for a group of related pathogens (e.g., enteroviruses), or for a selected suite of pathogens with representative organisms from each of the major waterborne pathogen classes—protozoa, viruses, and bacteria (see also Chapter 3 and Appendix A). Such data can be used more directly to determine their occurrence, survival, and transport in raw surface water.

Analysis of samples for many of the viruses on the 1998 Drinking Water Contaminant Candidate List (CCL; see EPA, 1998a, and NRC, 1999 for further information) will require methods that utilize a combination of cell culture and PCR or PCR directly because viruses such as the noroviruses are not cultivatable. Since the monitoring is of source water, quantitative methods or Most Probable Number (MPN) cell culture methods should be employed, thus permitting an evaluation of the vulnerability of the system and the nature of the challenge to the system by pathogens on a routine or intermittent time frame.
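As an illustration of the quantitative output an MPN assay provides, the following is a minimal sketch of the standard single-dilution MPN estimate, which assumes that organisms are randomly (Poisson) distributed in the sample. The function name, the replicate counts, and the inoculum volume are hypothetical and are not taken from any EPA method.

```python
import math


def mpn_single_dilution(n_replicates, n_positive, volume_per_replicate_l):
    """Most Probable Number (organisms per litre) from one dilution level.

    Assumes organisms are Poisson distributed, so the chance that a
    replicate receives no organism is exp(-concentration * volume).
    Solving for the concentration that matches the observed fraction of
    negative replicates gives the maximum-likelihood estimate.
    """
    if n_replicates <= 0 or volume_per_replicate_l <= 0:
        raise ValueError("replicates and volume must be positive")
    if not 0 <= n_positive <= n_replicates:
        raise ValueError("n_positive must lie between 0 and n_replicates")
    if n_positive == 0:
        return 0.0
    if n_positive == n_replicates:
        # Every replicate is positive: the estimate has no upper bound.
        raise ValueError("all replicates positive; test a higher dilution")
    negative_fraction = (n_replicates - n_positive) / n_replicates
    return -math.log(negative_fraction) / volume_per_replicate_l


# Hypothetical example: 10 cell culture flasks, each inoculated with the
# concentrate from 1 L of source water, 5 of which show infection.
estimate = mpn_single_dilution(10, 5, 1.0)
print(f"MPN estimate: {estimate:.3f} infectious units per litre")
```

Multi-dilution assays require a numerical solution of the same likelihood; the single-dilution case is shown only because it has a closed form.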

There is often a disincentive for conducting special studies with direct detection of pathogens. Because of the nature of the health risk and the lack of standards, quality assurance/quality control procedures, or guidelines on acceptable levels, there is a reluctance to address contaminants that the drinking water industry feels it is already controlling (via disinfection) or for which little can be done to prevent contamination of the source water in the first place. Thus, data for most pathogens in untreated water are limited, but as more studies are undertaken, the data will have to be placed into a risk assessment framework (see also Chapter 2), which can be used to make management decisions regarding protection of the watershed or treatment changes in the future (e.g., addition of ozone or UV light). Another disincentive is the lack of guidelines on research methods that would best support the goals for surface water monitoring of microorganisms associated with health risks. The effect of this technology gap is clearly evident in the regulatory arena in both the Unregulated Contaminant Monitoring Rule (UCMR; EPA, 1999b,c) and the CCL (EPA, 1998a), where the listed microbial contaminants are essentially in “regulatory limbo” due to a perceived lack of methods for their detection in water.

To completely understand the potential impacts of the microorganisms listed on the CCL on public health, significant efforts must be made to monitor for their presence in a wide variety of untreated and treated water supplies across the United States. As discussed in Chapter 5, it is also the committee’s conclusion that a prerequisite for this monitoring is the development, testing, and discussion of standard methods for their analysis in a free and open environment.

The committee recommends that the EPA (1) prepare a review of published methods for each CCL microorganism (to include groups of related organisms); (2) publish these reviews on the Internet so researchers and practitioners can assess, use, comment, and improve on them; and (3) promote their use in special studies and monitoring efforts in microbial water quality.

Level C—Source Investigations

Monitoring for the presence of fecal contamination is no longer seen as sufficient to establish a priority for public health concerns or as an approach for mitigating or establishing best management practices for reduction of a microbial contaminant in surface water sources of drinking water. Determining the source(s) of microbial contamination is critical for defining the public health issues of concern and establishing priorities for mitigation. The health issues of concern, the risk level, and the dose and mode of exposure will be different if the source of contamination in surface water is from a pasture or from a confined/concentrated animal feeding operation (CAFO), from seagulls or other birds, or from wastewater treatment plant effluent. In current practice, assessments of source of contamination are made via sanitary surveys, reasonable inferences, and with less frequency, chemical tracers (although tracers are used more in groundwater; discussed later). Thus, source tracking studies may be necessary to identify the source or sources of contamination (see Chapter 4 and Tables 4-3 and 4-4 for currently used techniques).

It is clear in this instance that data and information from Level A and B programs could feed into a Level C investigation. The most commonly used microorganisms for source identification in surface water are bacteria, as previously mentioned, although bacteriophage, mammalian viruses, and protozoa may also be used (Scott et al., 2002; Simpson et al., 2002). Because the water industry has been more involved in direct pathogen testing for both Cryptosporidium and the enteric viruses (Level B testing), it follows that some percentage of samples could be archived for genotyping.

Mammalian viruses are particularly useful for source identification because they are largely host specific; thus, the source of contamination can be inferred. In some watersheds, however, the low frequency of isolation of viruses in drinking water sources imposes a requirement for more frequent, extensive sampling. For Cryptosporidium, genotyping may be useful. If Genotype 1 is detected, then human sewage may be the predominant source; however, because Genotype 2 can be detected from both humans and animals, discrimination of the source may be possible only by additional sequence analysis. It is worthwhile to develop a database of the national occurrence and distribution of genotypes in water because this will assist in investigations of waterborne outbreaks in the future.

A waterbody that is susceptible to fecal contamination will potentially have multiple human and animal sources of microbial contamination. The goal for source tracking studies should therefore also be to address relative loading. Quantitative information will be an important attribute of a method that could then feed into a total maximum daily load (TMDL; see also Table 1-2) assessment. Although each source of microbial contamination is unique, current source tracking techniques are often isolate-based and do not address the distribution and relative frequency of the various sources. A combination of technologies that provides sample concentration, population assessment, and quantitative measurement of key targets associated with sources should be employed.

Because of its ubiquitous nature as intestinal flora, as discussed in Chapter 4, Escherichia coli is the most commonly used organism in surface water source tracking investigations. For example, if E. coli is isolated frequently from a surface water source, the approach for assessing relevant health issues will depend on the exact source of the E. coli. If the intended use is for drinking water, then the weight or significance of isolating E. coli will be lower if the source is birds, gulls, or geese. That is, although bird feces may contain enteric bacteria that are human pathogens, these are easily controlled during conventional treatment. If the source is cattle or sheep, which may also carry Cryptosporidium oocysts in their feces, the level of health concern would be higher. The reason for this is that these animals carry Cryptosporidium Genotype 2, a zoonotic pathogen known to infect man (see Chapter 3), and because Cryptosporidium is more difficult to control with conventional water treatment practices. There would be an even greater health concern if the source is wastewater effluents because these may contain high concentrations of several human pathogens. Enteric viruses and protozoa are of greater concern in non-nitrified, chlorinated, secondary wastewater effluent due to their relatively higher resistance to disinfection compared to enteric bacteria. Thus, source tracking is a valuable method for supplementing sanitary survey inferences and perhaps identifying a new or unknown source of contamination.

While source tracking methods are evolving, at best the current technology can give some indication of the source as being either animal or human. However, more sophisticated molecular analyses will need to be undertaken for more specific distinction of contamination sources (e.g., septic systems versus sewage discharges; pig farms versus chicken farms). Given the amount of money that may have to be spent on infrastructure changes (including changes to ongoing agricultural practices and wastewater treatment), more investment should be made to improve the discriminating power of the source tracking methods available. As mentioned previously, source-specific genetic targets hold great promise but must be field tested, and more than one target will be needed to characterize sources in a surface water body. Microarray technology has the potential to screen multiple targets; thus, once these genetic sequences have been identified, it will be very useful in this application. Lastly, it must be kept in mind that all detection methods must be developed in tandem with water concentration and sample preparation methods.

APPLICATION TO GROUNDWATER SOURCES OF DRINKING WATER

When considering the use of indicators and indicator approaches for monitoring the quality of groundwater, important differences between groundwater and surface waters must be understood. The very nature of groundwater makes it a difficult environment to study. Therefore, much less information on the occurrence of indicators and pathogens is available for groundwater than for surface waters. The EPA has recently reviewed the studies that have examined the occurrence of pathogenic microorganisms, generally viruses, and indicators in groundwater systems (EPA, 2000a). It concluded that only one of the studies (Abbaszadegan et al., 1999) was somewhat representative because an attempt was made to collect samples from a variety of hydrogeologic settings. However, even in this study, all of the samples were obtained from community water systems, and water from wells was subject to disinfection prior to distribution for consumption, so it is questionable whether the results of this study are representative of the nation’s groundwater. Due to the lack of data, much more effort is needed to screen possible indicators and indicator approaches so that future approaches may suggest which suite of indicators is best for groundwater assessment.

Level A—Screening/Routine Monitoring

In May 2000, the EPA proposed a new regulation, the Ground Water Rule, the purpose of which is to reduce the public health risk associated with the consumption of groundwater that has been contaminated by microorganisms of fecal origin (EPA, 2000a; see also Table 1-1). The approach being taken in the proposed regulation conforms to the Level A (Screening/Routine Monitoring) component of the framework shown in Figure 6-1. One of the requirements of the proposed regulation is that systems that draw water from a hydrogeologically sensitive source and do not provide treatment to achieve 99.99 percent virus reduction must monitor their source water for indicator organisms. In the proposal language, utilities may monitor for Escherichia coli, enterococci, or coliphages, whichever one is specified by the state.

The decision to require monitoring for indicator organisms rather than directly for enteric pathogens was made on the basis of the impracticality of monitoring for the large number of pathogens that might be present in groundwater that has been contaminated by fecal material (EPA, 2000a). As discussed elsewhere in this report, the analytical methods required for a number of pathogens are expensive, time-consuming, and require a degree of technical expertise beyond that available to many groundwater systems. For some pathogens, analytical methods are not currently available. In addition, many pathogens present a health concern at an extremely low concentration; thus, a large volume of water (e.g., more than 1,000 L) would have to be sampled, which would increase monitoring costs significantly.

The proposed indicator organisms meet many of the desired attributes described in the recommended phased monitoring framework. The indicator organisms can be measured rapidly; they are present in greater numbers than the pathogens; and the analytical methods are amenable to use on large numbers of samples, require only generally available levels of technical expertise, and can be performed at a relatively low cost (i.e., they meet the logistical feasibility attribute). In addition, it is not necessary to have quantitative information (i.e., a method that provides presence or absence information would be sufficient for screening purposes). If, however, one of the bacterial indicators is chosen (E. coli or enterococci), there are concerns about their similarity to the pathogens, especially viruses, with respect to survival and transport in the subsurface (Gerba, 1984). The difference in size between bacteria (0.2-2 μm) and viruses (0.02-0.1 μm) can result in viruses being transported much greater distances than bacteria, especially in fine-textured soils with small pore sizes (see Chapter 3 for further information). The shorter survival time of bacteria relative to viruses may also be an issue, especially if the time of travel from the surface to the groundwater is long or if the environmental characteristics are harsh. The use of coliphages, or the joint use of coliphages and one of the bacterial indicators, as suggested by the Drinking Water Committee of the EPA’s Science Advisory Board (EPA, 2000b; see http://www.epa.gov/sab/index.html for further information about the Science Advisory Board), would avoid many of these potential problems. The committee recommends that coliphages be required, in conjunction with bacterial indicators, as indicators of the vulnerability of groundwater to fecal contamination.

Detection of a fecal indicator in groundwater has different implications than detection of indicators in other aqueous environments. Transport of microorganisms through subsurface media is a very complex process; thus, the presence of a microorganism at a specific location is subject to a high degree of spatial variability but is often more consistent over time. When detections occur, additional sampling should be conducted to obtain more information about the situation. Likewise, the absence of an indicator in a single subsequent sample does not invalidate the previous positive result. The EPA has determined that between 6 and 18 samples are necessary to determine with 99.9 percent probability that a fecal indicator will be detected in a groundwater that is highly contaminated during at least part of the year (EPA, 2000a).
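The relationship between the number of samples and the probability of at least one detection can be sketched with a simple independence model. This is an illustrative calculation only and is not necessarily how EPA derived its figures: it assumes that each sample independently detects the indicator with a fixed probability p, and the values of p used below are hypothetical.

```python
import math


def samples_needed(per_sample_detection_prob, target_prob=0.999):
    """Smallest n such that 1 - (1 - p)**n >= target_prob.

    Assumes every sample is an independent trial with the same
    detection probability p (a simplifying assumption).
    """
    p = per_sample_detection_prob
    if not 0.0 < p < 1.0:
        raise ValueError("per-sample probability must be between 0 and 1")
    if not 0.0 < target_prob < 1.0:
        raise ValueError("target probability must be between 0 and 1")
    return math.ceil(math.log(1.0 - target_prob) / math.log(1.0 - p))


# Hypothetical per-sample detection probabilities.
for p in (0.70, 0.50, 0.32):
    print(f"p = {p:.2f}: {samples_needed(p)} samples")
```

Under this model, a range of 6 to 18 samples corresponds to per-sample detection probabilities of roughly 0.7 down to about 0.3, which shows how quickly the sampling burden grows when contamination is intermittent.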

Depending on the use of such information, it may be sufficient to detect indicators in groundwater. For example, detection of fecal indicators demonstrates that groundwater is vulnerable to contamination by surface sources of fecal material. This may be sufficient to spur action, such as installation of on-site treatment in the case of a drinking water well.

Level B—Investigations to Confirm Health Risk

The detection of indicator organisms in source water during routine monitoring could trigger a second level of study, Level B investigations to confirm health risk. This level of investigation might be desirable if, for example, an assessment of the risk to public health was needed. In the absence of a quantified, documented relationship between the concentrations of indicator organisms and the concentrations of pathogens in water, it would be necessary to collect and analyze samples for specific pathogens. The specific pathogen(s) to study could be determined on the basis of a number of considerations. One such consideration would be the existence and types of possible contaminant sources. If there are no sources of human fecal material in the area, human viruses would likely not be of concern. Another consideration would be the incidence of specific infectious diseases in the source community, because this might indicate specific organisms that should be targeted in the investigation.

The choice of analytical methods in a Level B investigation will be a critical part of the process. For a confirmation of health risk, it is necessary to use a method that provides quantitative information on the pathogens so that an appropriate exposure assessment can be performed. To allow the best input to a risk assessment, the method should also provide information on the viability or infectivity of the pathogens. For viruses, this would require the use of a cell culture system; one limitation of cell culture systems is that information is not obtained on the identity of the virus detected. For bacteria, some cultural technique would be required; depending on the method used, the identity of the microorganisms can be determined. The need to use a culture-based method may limit the number of organisms that can be assessed. For example, no culture-based method has been developed for the detection of noroviruses (formerly called “Norwalk-like viruses”), although they are significant causes of water- and foodborne diseases (CDC, 2003). However, the availability of molecular methods, such as PCR and microarrays, makes it possible to identify specific organisms, including noroviruses, in samples. Microarray technology has already been used to screen a large number of viruses from cell culture samples, and this approach would be quite applicable to the screening of groundwaters (Wang et al., 2002). When molecular methods are combined with cultural methods, information on the presence of specific, infective organisms is obtained.

In the specific case of groundwater, it may be possible to use non-culture-based methods to assess the potential health risk. With respect to groundwater, the concern is whether there is a subsurface pathway through which pathogens in surface sources of contamination can travel and ultimately reach groundwater. Because the microorganisms of concern are not native to groundwater, evidence of their presence, whether in an infective form or not, is proof that the pathway, and thus the potential for groundwater contamination, exists. Therefore, it may be acceptable to use molecular methods such as PCR to analyze samples for the presence of pathogens. With the availability of quantitative PCR and information on the ratio between infective and noninfective particles, an estimate of the number of infective microorganisms can be made.
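The arithmetic behind such an estimate is a simple scaling, sketched below. The function name and the numerical values are hypothetical; in practice the infective fraction would have to come from paired culture and molecular measurements, and losses during sample concentration (not modeled here) would also need to be considered.

```python
def estimate_infective_per_litre(genome_copies_per_litre, infective_fraction):
    """Scale a quantitative PCR result by the fraction of particles
    assumed to be infective.

    infective_fraction is infective particles divided by total particles,
    a value between 0 and 1 that must be supplied from other data.
    """
    if genome_copies_per_litre < 0:
        raise ValueError("genome copies cannot be negative")
    if not 0.0 <= infective_fraction <= 1.0:
        raise ValueError("infective fraction must be between 0 and 1")
    return genome_copies_per_litre * infective_fraction


# Hypothetical example: 1,000 genome copies per litre by quantitative PCR,
# with 1 in 100 particles assumed to be infective.
print(estimate_infective_per_litre(1000.0, 0.01))
```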

Level C—Source Investigations

In some cases, it may be desirable to determine the source of the contamination so that it can be eliminated. This determination will often require a detailed investigation (Level C). Many enteric pathogens are present in both animal and human wastes. Further complicating matters is that many of the manifestations of infection are relatively nonspecific, such as gastroenteritis, so it is not possible to differentiate sources based on the prevalence of a particular type of illness in the community. In urban areas, there may be multiple point and nonpoint sources of human waste, including septic systems, leaking sewer lines, sites at which reclaimed sewage effluent is being used for irrigation or artificial groundwater recharge, and surface waters receiving treated sewage effluent.

In a situation such as this, it will be necessary to identify the exact source(s) of contamination; thus, traditional methods of microbial identification and enumeration will not be adequate. This can be accomplished by a tracer study, in which some unique substance (such as a colored or fluorescent dye or a microorganism that has been marked with a unique identifying feature) is added to a suspected source and the movement of that substance is followed to determine whether it does, indeed, end up in groundwater. This method can provide useful information; however, it suffers from a number of drawbacks. These include the difficulty of obtaining permission to add tracers to a source, identification of a tracer that has the same properties as the pathogens of interest, and devising an appropriate monitoring scheme. A well-conducted tracer study also requires the involvement of individuals who are highly trained in hydrogeology and/or soil physics; thus, the cost of conducting such a study is very high. In many cases, it may not be practical to conduct a tracer study, especially if extended travel times are involved or if an appropriate network of monitoring wells cannot be installed.

A more common approach for source identification is microbial source tracking (see Box 6-1). Coliphage typing should be further evaluated as a means to distinguish sewage from other sources, since it could be a natural bridge between Level A and Level C investigations. As discussed previously, because of its ubiquitous presence in the intestinal tract of animals, E. coli is the most commonly used organism for source tracking. However, detection of E. coli in groundwater is much rarer than in surface waters. Thus, all E. coli detected in groundwaters should be characterized using a variety of molecular techniques. In groundwater systems the potential for regrowth is high; hence, a marker for biofilm development would be very worthwhile since this may be indicative of other naturally occurring microorganisms (e.g., Legionella). Finally, the integration of Level B with Level C studies for direct virus testing would truly indicate a source of human waste as well as human health risk.

IMPEDIMENTS AND DRIVERS TO IMPLEMENTATION

The principal impediments to the development and implementation of a phased monitoring framework for the selection and use of indicators and indicator approaches are the requirements for technical development, the cost of more sophisticated monitoring, and institutional resistance to change. Investment by the government in research designed to develop and standardize new molecular techniques will be an important contributor to resolving the first two impediments: government investment is needed for technical development to come about in a timely fashion, and investment in methods development and related research will bring down the cost of these methods. Government-funded round-robins and government-sponsored surveys and workshops will also go a long way toward overcoming institutional resistance to change. Consequently, as recommended in Chapter 5, EPA should invest in the development of rapid-turnaround biomolecular methods to improve our ability to assess contamination of the nation’s water by pathogens.

It is also critical that investments be made in improving or replacing existing methods for collecting and processing samples. Currently, the emphasis is on the development of new detection methodologies that are rapid, sensitive, and specific. However, most of these methods are limited to analyzing very small (microliter-range) sample sizes. Because the presence of a single pathogen in several hundred liters of water may be sufficient to cause a significant public health risk, large samples must be evaluated (see Chapter 5 and Figure 5-5 for further information). The methods that have been developed to concentrate those samples to smaller volumes have generally focused on producing a sample for culture-based analysis. Thus, many substances that are inhibitory to molecular methods are present at highly concentrated levels, which interferes with the analysis. New methods for sampling large volumes must be developed, with the aim that sample analysis be conducted using these new molecular methods. Consequently, the committee also recommends that the EPA invest in research to develop concentration methods designed to vastly increase the size of samples on which biomolecular assay tools can be employed. The effort to develop these concentration and purification methods may cost more and will certainly take longer than development of the assays themselves, but the ultimate payoff in human health protection will be profound.
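The need for large sample volumes can be made concrete with a short calculation. The sketch below assumes that organisms are randomly (Poisson) distributed in the water, so the probability that a sample of volume V contains at least one organism at concentration c is 1 - exp(-cV). The concentration and the volumes are hypothetical, chosen only to contrast a microliter-range assay with a large-volume sample.

```python
import math


def prob_at_least_one(concentration_per_litre, sample_volume_l):
    """Probability that a sample contains at least one organism,
    assuming a Poisson distribution of organisms in the water."""
    if concentration_per_litre < 0 or sample_volume_l < 0:
        raise ValueError("concentration and volume must be non-negative")
    # -expm1(-x) equals 1 - exp(-x) but stays accurate for very small x.
    return -math.expm1(-concentration_per_litre * sample_volume_l)


# Hypothetical concentration: one organism per 500 L of water.
concentration = 1.0 / 500.0

for label, volume_l in (("10 microliters", 1e-5), ("1 L", 1.0), ("1,000 L", 1000.0)):
    p = prob_at_least_one(concentration, volume_l)
    print(f"{label:>15}: P(at least one organism) = {p:.3g}")
```

At this hypothetical concentration, a 10-microliter aliquot of unconcentrated water almost never contains the organism, whereas a 1,000 L sample contains at least one most of the time, which is why concentration methods have to precede the molecular assay.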

Evaluation of sources and health risk carries with it issues that may involve legal matters. Communities have the right to know what is in their drinking water supply, even if it is being treated adequately, because some consumers may have cause for concern (see EPA, 1998b). There are issues of who will pay for cleaning up contaminated water systems and how much treatment is needed, given the continued conflict between disinfection and disinfectant by-product formation. As methods are developed, concurrent investment must be made in maintaining health surveillance and addressing risk assessment methods. The level of acceptable risk and the communication of such social values have to be addressed. Better investigation of all waterborne outbreaks with new tools and indicator systems would be very useful for examining relative risks. It may be worthwhile to develop a Risk Advisory committee, perhaps through EPA’s Science Advisory Board. The new approaches and new methods will lead to more monitoring data and in some cases, as with microarrays, large databases could be developed very quickly. It is the interpretation of the data and use of the information that will provide the pathway forward.

SUMMARY: CONCLUSIONS AND RECOMMENDATIONS

Microbial indicators are measured to achieve a number of different goals in a variety of water media. No single microbial water quality indicator or even a small set of indicators can meet this diversity of needs. Rather, most appropriate indicators, indicator approaches, and methods depend on the specific application and needs of the situation. Indicator recommendations for selection and use should be developed in the context of a phased monitoring framework. These phases should include screening and routine monitoring (Level A); investigations to confirm health risk (Level B); and detailed investigations for source identification and mitigation (Level C). Under any circumstances, time is of the essence in all three levels of microbial water quality monitoring whenever there exists a possibility that the public may be at risk.

Microbial measurement technology is evolving rapidly and there is an opportunity to leverage these advances toward water quality needs. If sufficient investment is made in the coming decade, indicator systems will undergo a comprehensive evolution, and the correct and rapid identification of waters that are contaminated with pathogenic microorganisms will be substantially enhanced.

Historically, EPA has focused much of its investment on indicators and indicator systems that are used at the screening level (A), but there is an increasing need for national leadership and guidance for the phases of microbial investigation that follow screening. In the limited context of screening, EPA’s guidance has been of mixed value, and in this regard the committee concludes that (1) the selection of enterococci for screening at marine recreational beaches is appropriate, because enterococci have been shown to have the best relationship to health risk; (2) existing and proposed monitoring requirements for surface water sources of drinking water are irregular and are not supported by adequate research; and (3) proposed monitoring requirements for groundwater are not adequately protective for viral pathogens.

Based on these conclusions, the committee makes the following recommendations:

- EPA should invest in a long-term research and development program to build a flexible tool box of indicators and methods that will serve as a resource for all three phases of investigation identified in this report.

- That tool box should include the following:

  - the development of new indicators, particularly direct measures of pathogens that will enhance health risk confirmation and source identification;

  - the use of coliphages, as suggested by EPA’s Science Advisory Board, in conjunction with bacterial indicators as indicators of groundwater vulnerability to fecal contamination; and

  - the use of routine microbiological monitoring of surface water supplies of drinking water before as well as after treatment.

- A significant portion of that investment should be directed toward concentration methods because existing technology is inadequate to measure pathogens of concern at low concentrations.

- Consistent with previous related recommendations, EPA should invest in comprehensive epidemiologic studies to (1) assess the effectiveness and validity of newly developed indicators or indicator approaches for determining poor microbial water quality and (2) assess the effectiveness of the indicators or indicator approaches at preventing and reducing human disease.

- EPA should develop a more proactive and systematic process for addressing microorganisms on the CCL. The EPA should (1) prepare a review of published methods for each CCL microorganism and groups of related microorganisms; (2) publish those reviews on the Internet so researchers and practitioners can use them and comment on how to improve them; and (3) promote their use in special studies and monitoring efforts.

These conclusions and recommendations should not be taken as an excuse to either cling to or abandon current indicator systems until research develops new approaches. On the contrary, the committee recommends a phased approach to monitoring, both as a means to make existing indicator systems more effective and as a way to encourage the successive adoption of new, more promising indicator systems as they become available.

REFERENCES

Abbaszadegan, M., P.W. Stewart, M.W. LeChevallier, J.S. Rosen, and C.P. Gerba. 1999. Occurrence of Viruses in Ground Water in the United States. Denver, Colorado: American Water Works Association Research Foundation.

APHA (American Public Health Association). 1998. Standard Methods for the Examination of Water and Wastewater, 20th Edition. Washington, D.C.

Boehm, A.B., S.B. Grant, J.H. Kim, S.L. Mowbray, C.D. McGee, C.D. Clark, D.M. Foley, and D.E. Wellman. 2002. Decadal and shorter period variability and surf zone water quality at Huntington Beach, California. Environmental Science and Technology 36: 3885-3892.

CDC (Centers for Disease Control and Prevention). 2003. Norovirus: Technical Fact Sheet. [On-line]. Available: http://www.cdc.gov/ncidod/dvrd/revb/gastro/norovirus-factsheet.htm.

EPA (U.S. Environmental Protection Agency). 1986. Ambient Water Quality Criteria for Bacteria - 1986. Washington, D.C.: Office of Water. EPA 440-5-84-002.

EPA. 1989a. Drinking Water; National Primary Drinking Water Regulations; Filtration, Disinfection; Turbidity; Giardia lamblia, Viruses, Legionella, and Heterotrophic Bacteria; Final Rule. Federal Register 54: 27486-27541.

EPA. 1989b. Drinking Water; National Primary Drinking Water Regulations; Total Coliforms (Including Fecal Coliforms and E. coli); Final Rule. Federal Register 54: 27544-27568.

EPA. 1998a. Announcement of the Drinking Water Contaminant Candidate List. Notice. Federal Register 63(40): 10274-10287.

EPA. 1998b. Consumer Confidence Reports: Final Rule. Washington, D.C.: Office of Water. EPA 816-F-98-007.

EPA. 1998c. National Primary Drinking Water Regulations: Interim Enhanced Surface Water Treatment; Final Rule. Federal Register 63(241): 69477-69521.

EPA. 1999a. EPA Action Plan for Beaches and Recreational Waters. Washington, D.C.: Office of Research and Development and Office of Water. EPA-600-R-98-079.

EPA. 1999b. Revisions to the Unregulated Contaminant Monitoring Regulation for Public Water Systems: Proposed Rule. Federal Register 64(83): 23397-23458.

EPA. 1999c. Revisions to the Unregulated Contaminant Monitoring Regulation for Public Water Systems: Final Rule. Federal Register 64(180): 50556-50620.

EPA. 2000a. National Primary Drinking Water Regulations: Ground Water Rule; Proposed Rule. Federal Register 65(91): 30193-30274.

EPA. 2000b. Comments on the Environmental Protection Agency’s (EPA) Draft Proposal for the Ground Water Rule (GWR). June 30. Washington, D.C.: EPA Science Advisory Board Committee on Drinking Water.

EPA. 2002. Implementation Guidance for Ambient Water Quality Criteria for Bacteria (Draft). Washington, D.C.: Office of Water. EPA-823-B-02-003.

EPA. 2003. National Primary Drinking Water Regulations: Long Term 2 Enhanced Surface Water Treatment Rule; Proposed Rule. Federal Register 68(154): 47640-47795.

Feachem, R.G. 1975. An improved role for fecal coliform to fecal streptococci ratios in the differentiation between human and nonhuman pollution sources. Water Research 9: 689-690.

Gennaccaro, A.L., M.R. McLaughlin, W. Quintero-Betancourt, D.E. Huffman, and J.B. Rose. 2003. Infectious Cryptosporidium oocysts in final reclaimed effluents. Applied and Environmental Microbiology 69: 4983-4984.

Gerba, C.P. 1984. Applied and theoretical aspects of virus adsorption to surfaces. Advances in Applied Microbiology 30: 133-168.

Griffith, J.F., S.B. Weisberg, and C.D. McGee. 2003. Evaluation of microbial source tracking methods using mixed fecal sources in aqueous test samples. Journal of Water and Health 1: 141-151.

Haile, R.W., J.S. Witte, M. Gold, R. Cressey, C.D. McGee, R.C. Millikan, A. Glasser, N. Harawa, C. Ervin, P. Harmon, J. Harper, J. Dermand, J. Alamillo, K. Barrett, M. Nides, and G. Wang. 1999. The health effects of swimming in ocean water contaminated by storm drain runoff. Epidemiology 10(4): 355-363.

LeChevallier, M.W., W.D. Norton, and R.G. Lee. 1991. Occurrence of Giardia and Cryptosporidium spp. in surface water supplies. Applied and Environmental Microbiology 57(9): 2610-2616.

Leecaster, M.K., and S.B. Weisberg. 2001. Effect of sampling frequency on shoreline microbiology assessments. Marine Pollution Bulletin 42: 1150-1154.

Malakoff, D. 2002. Microbiologists on the trail of polluting bacteria. Science 295: 2352-2353.

Noble, R.T., D.F. Moore, M. Leecaster, C.D. McGee, and S.B. Weisberg. 2003. Comparison of total coliform, fecal coliform, and enterococcus bacterial indicator response for ocean recreational water quality testing. Water Research 37: 1637-1643.

NRC (National Research Council). 1999. Setting Priorities for Drinking Water Contaminants. Washington, D.C.: National Academy Press.

Prüss, A. 1998. Review of epidemiological studies on health effects from exposure to recreational water. International Journal of Epidemiology 27: 1-9.

Rose, J.B., C.P. Gerba, and W. Jakubowski. 1991. Survey of potable water supplies for Cryptosporidium and Giardia. Environmental Science and Technology 25: 1393-1400.

Scott, T.M., J.B. Rose, T.M. Jenkins, S.R. Farrah, and J. Lukasik. 2002. Microbial source tracking: Current methodology and future directions. Applied and Environmental Microbiology 68: 5796-5803.

Simpson, J.M., J.W. Santo-Domingo, and D.J. Reasoner. 2002. Microbial source tracking: State of the science. Environmental Science and Technology 36: 5279-5288.

Sorenson, D.L., S.G. Eberl, and R.A. Diksa. 1989. Clostridium perfringens as a point source indicator in non-point polluted streams. Water Research 23: 191-197.

Taggart, M. 2002. Factors affecting shoreline fecal bacteria densities around freshwater outlets at two marine beaches. Ph.D. dissertation. University of California, Los Angeles.

Wade, T.J., N. Pai, J.N.S. Eisenberg, and J.M. Colford, Jr. 2003. Do U.S. Environmental Protection Agency water quality guidelines for recreational waters prevent gastrointestinal illness? A systematic review and meta-analysis. Environmental Health Perspectives 111(8): 1102-1109.

Wang, D., L. Coscoy, M. Zylberberg, P.C. Avila, H.A. Boushey, D. Ganem, and J.L. DeRisi. 2002. Microarray-based detection and genotyping of viral pathogens. Proceedings of the National Academy of Sciences 99: 15687-15692.