5

Sensory Aids, Devices, and Prostheses

In disability determination for people with hearing loss, how should the Social Security Administration (SSA) account for the potential benefits that the individual might receive from a sensory aid? In determining vision-based disability, SSA mandates testing with “best correction,” but for claimants with hearing loss, currently most SSA guidance directs that they should be tested without correction. Hearing aids cannot be fitted like eyeglasses, whereby a quick refraction arrives at a prescription that provides the “best correction” almost immediately. The process of achieving the best hearing correction for an individual can be long and complicated. Prosthetic correction for hearing impairment is often not as successful as vision correction, leaving the individual with substantial residual limitations. Many individuals find that the positive benefits of the prosthesis do not outweigh the negative consequences (such as ear discomfort, feedback squeal, high cost, and stigma), and they may elect to continue without correction.

This chapter reviews the variety of devices that typically are used to ameliorate the effects of hearing loss. Wearable personal devices include hearing aids and cochlear implants, as well as auditory brainstem implants. In addition, there is a class of ancillary devices called assistive listening devices (ALDs) that may be used alone or in combination with hearing aids or implants. The chapter briefly describes the main types of devices and discusses some of the issues involved in their provision and use.

HEARING AIDS

Conventional wearable hearing aids are self-contained amplifying systems. They include a microphone to pick up sound energy, an amplifier to boost the level of the signal, and various filters and other devices that modify the sound to match it more precisely to the needs of the impaired listener. In addition, air conduction instruments include a miniature loudspeaker (called a receiver) to generate the amplified sound, which is routed into the ear canal. Bone conduction instruments deliver energy to the cochlea by vibrating the skull with a small transducer. Finally, all hearing aids require a power source (battery).

Early hearing aids were worn on the body or carried in a pocket, but these types are very seldom seen today. Current air conduction hearing aids are almost all worn at ear level, and there are several different styles.

Behind-the-ear (BTE) models fit snugly over the pinna of the external ear and deliver sound to the ear canal through a plastic tube and plug (earmold).

In-the-ear (ITE) models are manufactured with all the components inside the custom earmold. This type of hearing aid fits into the concha of the ear.

In-the-canal (ITC) models are smaller ITE versions that fit mostly into the outer end of the ear canal.

Completely-in-the-canal (CIC) models are the smallest devices and fit entirely inside the ear canal.

Bone conduction hearing aids are used only when the ear canal is not able to accommodate or tolerate the insertion of an air conduction device. Conventional bone conduction devices use a BTE-style case and incorporate a vibrator that is held against the skull using a spring headband.

Signal Processing in Hearing Aids

At the most basic level, a hearing aid simply increases the loudness of sounds presented to the impaired ear. Most hearing aids also modify sounds somewhat to attempt to compensate for specific features of the individual hearing loss. It is typical to shape the frequency response to provide more amplification for frequencies for which the hearing loss is greater: this is called selective amplification. In addition, to accommodate the reduced range of sound levels available to typical hearing-impaired listeners, many instruments provide more gain for soft environmental sounds than they do for louder sounds: this is called wide dynamic range compression (WDRC) processing. Another important feature in many modern instruments is the inclusion of a directional microphone that is able to partially suppress sounds from the back and sides of the wearer in some listening environments, on the assumption that sounds from these directions have less functional importance than sounds from in front.
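The WDRC idea of giving soft sounds more gain than loud ones can be sketched as a static input-output rule. This is a minimal illustration, assuming a single channel, a hypothetical 45 dB SPL kneepoint, 30 dB of gain for soft inputs, and a 2:1 compression ratio; real instruments add attack and release times, multiple channels, and an output limit, none of which are modeled here.

```python
def wdrc_gain_db(input_db_spl: float,
                 linear_gain_db: float = 30.0,
                 kneepoint_db_spl: float = 45.0,
                 ratio: float = 2.0) -> float:
    """Gain in dB applied by a hypothetical single-channel WDRC rule.

    Below the kneepoint the aid behaves linearly (constant gain). Above it,
    each 1 dB increase in input raises the output by only 1/ratio dB, so
    soft inputs receive more gain than loud ones.
    """
    if ratio < 1.0:
        raise ValueError("compression ratio must be at least 1")
    if input_db_spl <= kneepoint_db_spl:
        return linear_gain_db
    excess_db = input_db_spl - kneepoint_db_spl
    # Output grows by excess/ratio instead of excess, so gain shrinks by the rest.
    return linear_gain_db - excess_db * (1.0 - 1.0 / ratio)


for level in (40, 55, 65, 85):
    print(f"{level} dB SPL in -> {wdrc_gain_db(level):.1f} dB gain")
```

With these made-up settings, a 40 dB SPL input receives 30 dB of gain while an 85 dB SPL input receives only 10 dB, which is how compression fits a wide range of environmental sound levels into a listener's reduced range.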

Some instruments filter the signal into several bands (usually 2-4 but sometimes more) to shape the frequency response. Others use several independent channels to make compression processing more precise. Still others combine both approaches. Much of this processing can be accomplished using analog technology or digitally programmable analog (hybrid) devices. However, current trends are toward using all-digital processing in hearing aids. Digital processing is able to accomplish selective amplification, compression processing, and directional sensitivity in more complex and sophisticated ways and with greater flexibility than previously possible in analog and hybrid devices. In addition, digital processing opens the door for the development of new features, such as noise management, speech enhancement, and feedback reduction. Most of the potential advantages of digital processing have not been fully realized at this time.

Candidacy for Hearing Aids

Hearing aids should not be considered for management of hearing loss until all treatable otological problems have been addressed. Use of hearing aids and cochlear implants by children with hearing loss is considered in Chapter 7.

For adults with hearing loss, hearing aids can be useful when hearing thresholds in the better ear are poorer than about 25 dB HL. In practice, most adults do not seek amplification until their hearing loss is sufficient to cause more than occasional problems in daily life. This “low fence” differs across individuals and is influenced by auditory demands. However, if hearing loss in the better ear in the mid-frequency region is worse than about 40 dB HL, typical adults will experience considerable problems in everyday communication, especially when ambient noise is present. About 70 to 80 percent of adults with bilateral hearing loss prefer to wear two hearing aids. The purported advantages of two hearing aids over one include improved speech recognition in difficult listening environments, improved localization, reduction of the head shadow effect, and a better sense of auditory space. Nevertheless, substantial benefit can be obtained from a single hearing aid, even in the presence of bilateral hearing loss (see, e.g., Chung and Stephens, 1986; Kobler, Rosenhall, and Hansson, 2001).
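The two approximate better-ear thresholds mentioned above (about 25 dB HL and about 40 dB HL) can be expressed as a rough screen. The sketch below assumes that a pure-tone average (PTA) at 500, 1000, and 2000 Hz stands in for the mid-frequency region; the function names and band labels are hypothetical, and the cutoffs are the text's approximate values, not a clinical or SSA rule.

```python
def pure_tone_average(thresholds_db_hl: dict[int, float]) -> float:
    """Mean threshold (dB HL) at 500, 1000 and 2000 Hz, one common PTA definition."""
    return sum(thresholds_db_hl[f] for f in (500, 1000, 2000)) / 3.0


def rough_amplification_band(left: dict[int, float], right: dict[int, float]) -> str:
    """Place the better ear's PTA relative to the approximate 25 and 40 dB HL marks.

    Illustrative only: actual help-seeking also depends on auditory demands.
    """
    # The better ear is the one with the lower (less impaired) average threshold.
    better_ear_pta = min(pure_tone_average(left), pure_tone_average(right))
    if better_ear_pta <= 25.0:
        return "below about 25 dB HL"
    if better_ear_pta <= 40.0:
        return "aids can be useful"
    return "considerable problems likely"


left_ear = {500: 30, 1000: 35, 2000: 40}
right_ear = {500: 50, 1000: 55, 2000: 60}
print(rough_amplification_band(left_ear, right_ear))
```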

Adults with severe (greater than 70 dB HL) or profound hearing loss may derive limited benefit from hearing aids. Many are candidates for a cochlear implant (discussed later in this chapter).

Although current hearing aids have the potential to benefit many individuals with mild to severe hearing loss, it appears that only about 22 percent of potential hearing aid candidates choose to obtain amplification (Kochkin, 2001). Many efforts have been made to determine the reasons for the low take-up rate and methods for increasing it, but this has proved to be an intransigent problem. Issues such as high cost, low perceived benefit, stigma associated with hearing loss, and denial of hearing problems may contribute to the low acceptance rate of hearing aids. As a result of these complicating factors, many individuals do not have or use amplification despite having pure-tone thresholds that suggest that they are hearing aid candidates.

Selection and Adjustment Issues

The term “hearing aid fitting” is used to encompass the process of selecting, adjusting, and fine-tuning an appropriate hearing aid for a person with hearing loss. Although there is not a fully developed science of hearing aid selection and fitting, there are several generally recognized principles. An ideal fitting will:

- restore audibility for sounds that are soft but audible to people with normal hearing;
- present conversational speech at a comfortable loudness level for the listener with hearing loss;
- allow normally loud sounds to be perceived as loud;
- prevent any sounds from violating the loudness discomfort threshold; and
- preserve a natural, pleasant sound quality with minimal distortion.

Simultaneous achievement of all these goals often is not possible. In these cases, compromises are necessary and the best compromises are often reached using a trial-and-error method.

In many hearing aid fittings, the amount of amplification needed at each frequency is determined on the basis of a theoretical prescription. Several prescriptive methods have been developed and can be found in the audiology literature. The most thoroughly validated method is the one developed by Byrne and Dillon (1986), the National Acoustic Laboratories' revised (NAL-R) procedure. This procedure has become the de facto gold standard against which newer procedures, which may offer additional features, are compared. The NAL-R method provides a gain-by-frequency prescription for a single input level (conversational speech). If a WDRC hearing aid is used, additional decisions must be made about the parameters of the compression processing. These include the number of channels of compression, the input levels at which compression is initiated, how much compression to use, and how rapidly to modify the signal for best results. Some newer procedures, for example, NAL-NL1 (Byrne, Dillon, Ching, Katsch, and Keidser, 2001), DSL[i/o] (Cornelisse, Seewald, and Jamieson, 1995), and Cambridge (Moore, 2000), offer guidelines for some of these decisions. Even when these guidelines are used as a starting point, trial and error may be necessary to determine the best combination of settings for the individual listener.
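For linear fittings, the NAL-R prescription is usually stated as a simple formula for target insertion gain: IG(f) = X + 0.31 H(f) + k(f), where H(f) is the threshold in dB HL at frequency f, X = 0.05 (H500 + H1000 + H2000), and k(f) is a frequency-dependent correction. The sketch below uses the k(f) values as they are commonly tabulated from Byrne and Dillon (1986); those constants, and the choice to clip negative targets to 0 dB, are assumptions that should be checked against the original publication before any real use.

```python
# Frequency-specific corrections k (dB) for NAL-R, as commonly tabulated.
# Assumption: verify against Byrne and Dillon (1986) before clinical use.
NAL_R_K = {250: -17, 500: -8, 750: -3, 1000: 1, 1500: 1,
           2000: -1, 3000: -2, 4000: -2, 6000: -2}


def nal_r_insertion_gain(thresholds_db_hl: dict[int, float]) -> dict[int, float]:
    """Target real-ear insertion gain (dB) at each tabulated frequency supplied.

    IG(f) = X + 0.31 * H(f) + k(f), with X = 0.05 * (H500 + H1000 + H2000).
    Negative targets are clipped to 0 dB (a choice made for this sketch).
    """
    h = thresholds_db_hl
    x = 0.05 * (h[500] + h[1000] + h[2000])
    targets = {}
    for freq, k in NAL_R_K.items():
        if freq in h:
            targets[freq] = max(0.0, x + 0.31 * h[freq] + k)
    return targets


flat_50 = {250: 50, 500: 50, 1000: 50, 2000: 50, 4000: 50}
for freq, gain in nal_r_insertion_gain(flat_50).items():
    print(f"{freq} Hz: {gain:.1f} dB")
```

For a flat 50 dB HL loss this yields roughly 6 dB of target gain at 250 Hz and 24 dB at 1000 Hz, illustrating how the formula prescribes less low-frequency gain than the threshold alone might suggest.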

After a hearing aid has been selected and tentatively adjusted to the appropriate settings for the listener, it is important to determine how closely the amplification matches the theoretical goals (i.e., the prescription). This part of the fitting process is called “verification,” and it can be completed in several ways. One widely used method of verification, and indeed the only method currently possible with very young children, is accomplished using a probe-tube microphone in the ear canal. The probe tube is placed in the listener’s ear canal and sound measurements are made both with and without the hearing aid. The difference between the aided and unaided measurements is a measure of the effective amplification provided by the fitting overall. This quantity is compared with the prescription goals, and adjustments are made until the match is satisfactory, or as good as possible. With listeners who can respond appropriately, other methods of verification are often used instead of, or in addition to, ear canal probe tube microphone measures. These include aided thresholds, sound quality ratings, and aided speech recognition tests. After the hearing aid fitting and verification are complete, a period of accommodation and adjustment is necessary before the maximum benefit can be obtained from the hearing aids. During this period, which may last from a few weeks to a few months, follow-up appointments that allow input from the hearing care professional can be important in facilitating the best outcome. This input may take the form of problem-focused counseling, hearing aid adjustments, or group audiological rehabilitation classes, depending on the needs of the hearing aid wearer. This post-fitting component of hearing aid provision is often overlooked or minimally performed. For many clients, this probably contributes to a less-than-optimal long-term outcome.
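The probe-microphone verification step amounts to simple arithmetic: the measured insertion gain at each frequency is the aided ear-canal level minus the unaided level, and that figure is compared with the prescribed target. The sketch below assumes measurements and targets are given as dictionaries keyed by frequency in Hz, and uses a hypothetical ±5 dB tolerance to decide which frequencies still need adjustment.

```python
def verify_fit(unaided_db_spl: dict[int, float],
               aided_db_spl: dict[int, float],
               target_gain_db: dict[int, float],
               tolerance_db: float = 5.0) -> dict[int, float]:
    """Return {frequency: signed error in dB} for frequencies that miss the target.

    Measured insertion gain = aided ear-canal level - unaided ear-canal level.
    A positive error means more gain than prescribed; negative means less.
    """
    misses = {}
    for freq, target in target_gain_db.items():
        measured_gain = aided_db_spl[freq] - unaided_db_spl[freq]
        error = measured_gain - target
        if abs(error) > tolerance_db:
            misses[freq] = error
    return misses


unaided = {1000: 65, 2000: 68}   # hypothetical probe-tube readings, dB SPL
aided = {1000: 89, 2000: 78}
targets = {1000: 24, 2000: 22}   # e.g. from a prescriptive formula
print(verify_fit(unaided, aided, targets))  # {2000: -12}
```

Here 1000 Hz matches its target exactly, while 2000 Hz delivers 12 dB less gain than prescribed, so the clinician would adjust the high-frequency response and measure again.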

Problems Not Solved by Hearing Aids

Even when hearing aids are fitted competently, hearing aid wearers often continue to experience a variety of problems.

Even the most sophisticated hearing aids do not restore hearing to normal. Wearers often still cannot detect soft sounds. Even sounds that are audible may not be heard clearly or comfortably. These facts are often not realized by people without hearing loss, who may expect a level of performance from the hearing aid wearer that is beyond his or her ability, even with amplification. These shortcomings add to the frustrations of adjusting to wearing hearing aids.

For many hearing aid wearers who have sensorineural hearing loss, the major concern is an inability to understand speech when background noise is present. This is generally agreed to be a physiological problem resulting from hair cell loss, although recent data suggest that temporal processing and cognitive abilities also may affect speech understanding in noise. Hearing aids often do not help substantially with this problem. Thus, communication in noise remains a major difficulty for many hearing aid wearers.

The highest possible output of the hearing aid is typically adjusted with the goal of maximizing the range and variety of sounds available to the listener without causing loudness discomfort. Sometimes this effort is not fully successful, and certain environmental sounds are experienced at unpleasant loudness levels.

Another problem is that when sound that is produced by the hearing aid leaks back out of the ear canal and reaches the hearing aid’s microphone, an annoying (and embarrassing) whistling can be produced. This type of feedback loop can be created by a variety of combinations of gain, earmold fit, and external conditions (such as the proximity of a hat brim or a nearby wall).
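The feedback loop can be reasoned about in decibels. Sound leaking from the receiver back to the microphone is attenuated along the way; if the hearing aid's gain at some frequency equals or exceeds that attenuation, the loop gain reaches 0 dB and the system can begin to whistle. The sketch below is magnitude-only (a real loop also needs the returning sound to arrive in phase), and all gain and attenuation values and names are hypothetical.

```python
def feedback_risk_hz(aid_gain_db: dict[int, float],
                     leak_attenuation_db: dict[int, float],
                     margin_db: float = 0.0) -> list[int]:
    """Frequencies where the receiver-to-microphone loop may oscillate.

    Loop gain (dB) = hearing-aid gain - attenuation along the leakage path.
    Whistling requires loop gain >= 0 dB together with an in-phase return;
    this magnitude-only sketch ignores phase, so it flags possible trouble.
    margin_db > 0 also flags frequencies within that many dB of instability.
    """
    return sorted(f for f, gain in aid_gain_db.items()
                  if gain - leak_attenuation_db[f] >= -margin_db)


gain = {1000: 30, 3000: 40}
loose_fit = {1000: 45, 3000: 35}       # hypothetical leak attenuation, dB
print(feedback_risk_hz(gain, loose_fit))  # [3000]

near_hat_brim = {1000: 28, 3000: 30}   # reflections reduce the attenuation
print(feedback_risk_hz(gain, near_hat_brim))
```

The second call illustrates the hat-brim case from the text: the hearing aid settings are unchanged, but reduced leak attenuation pushes a second frequency into the at-risk range.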

Feedback can be especially problematic when a telephone is placed near a hearing aid, as in normal telephone use. The resulting squeal can prevent effective telephone communication while using the hearing aid. Some individuals with relatively mild hearing loss can communicate on the telephone without the hearing aid, but this is not possible for those with more severe hearing problems. An induction coil (T-coil) built into the hearing aid can solve this problem for many individuals, but these coils are sometimes not provided, or the hearing aid wearer may not know how to use the T-coil, or the telephone may not be hearing aid compatible (i.e., it does not produce a suitable magnetic field for the T-coil to use).

Finally, the hearing aid wearer’s own voice may sound unpleasantly loud when the device is worn, because the earmold or hearing aid plugs the ear canal (the occlusion effect). Sometimes this problem is severe enough to prompt the user to reject the device.

Hearing Aid Fitting Outcomes in Adults

There are two basic approaches to the measurement of hearing aid fitting outcomes: the first involves measures of the hearing aid’s technical merit when in situ. The second involves measures of the extent to which the amplification system as a whole is reported to have alleviated the daily life problems of the person with hearing loss and his or her family. Much early research concentrated on technical outcomes. It was assumed that subjective (real-life) outcomes would be accurately predicted from these objective data. It is now clear that this often is not the case. Real-life problems associated with hearing loss are complicated by such contextual issues as personality, lifestyle, environment, and family dynamics, and these play an important part in the ultimate success of a hearing aid fitting. Numerous studies have shown that measures of technical merit are not strongly predictive of real-life effectiveness of hearing aid fitting (e.g., Souza, Yueh, Sarubbi, and Loovis, 2000; Walden, Surr, Cord, Edwards, and Olsen, 2000). Thus, it is now recognized that real-life outcomes of a fitting must be assessed separately from the technical merit of the hearing aid. Many researchers feel that both types of data are essential for a full description of hearing aid fitting outcome.

The technical merit of a fitted hearing aid may be assessed acoustically using, for example, real-ear probe-microphone measures (e.g., Mueller, Hawkins, and Northern, 1992) or audibility measures, such as the speech intelligibility index (American National Standards Institute, 2002). An equally popular approach to exploring technical merit involves psychoacoustic data, especially speech recognition scores measured in quiet or in noise (e.g., Shanks, Wilson, Larson, and Williams, 2002).

The real-life effectiveness of a hearing aid is measured using subjective data provided by the person with hearing loss or by significant others. Numerous questionnaires have been developed and standardized specifically for the purpose of assessing hearing aid fitting outcomes, and many others have been conscripted to serve this application (see reviews in Bentler and Kramer, 2000; Noble, 1998, Tables 4.1 and 4.2).

Research on Outcomes

Although the literature is replete with data depicting technical and real-life hearing aid fitting outcomes for relatively small groups (N < 50) of participants, most of these are experimental studies and do not attempt to determine the typical outcomes of representative contemporary hearing aid fittings. There are not many studies that describe results from large groups of clinic patients originating in the United States who have been fitted with current-technology hearing aids.

Larson et al. (2000) described a carefully controlled and conducted crossover trial with 360 older adults with moderate bilateral sensorineural hearing loss. Each of three hearing aid circuits was used for three months by each subject. Outcome measures included speech recognition tests and self-report using a standardized questionnaire. When speech was presented at a conversational level, recognition of monosyllabic words in quiet improved by about 30 percentage points (from 58 to 88 percent) with amplification. When noise was present at a +7 dB signal-to-noise (S/N) ratio (on average), unaided sentence recognition scores were about 48 percent and aided scores averaged about 64 percent. Thus, appropriate amplification improved sentence recognition in noise by about 16 percentage points for the typical listener. Haskell et al. (2002) reported subjective outcomes from the same investigation of 360 adults. They noted that, without hearing aids, the subjects reported experiencing communication problems in about 40 percent of quiet situations and 65 percent of noisy situations. With hearing aids, subjects reported a 20 to 25 percent lower frequency of problems for speech communication in quiet and a 30 percent lower frequency for communication in noisy settings.

Humes, Wilson, Barlow, and Garner (2002) presented comparable data for 134 older adults with moderate hearing loss. In this study, sentence recognition was measured after one year of hearing aid use. Results indicated that understanding of sentences at a conversational level with a +8 dB S/N ratio increased by about 19 percentage points for the average hearing aid user; unaided scores averaged about 55 percent and aided scores were about 74 percent.
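The improvements reported by Larson et al. and Humes et al. are differences between aided and unaided percent-correct scores, that is, percentage points; expressed relative to the unaided baseline, the same changes look considerably larger. The short calculation below uses only the mean scores reported in this section to make the distinction explicit; the helper name is hypothetical.

```python
def improvement(unaided_pct: float, aided_pct: float) -> tuple[float, float]:
    """Return (absolute gain in percentage points, relative gain in percent)."""
    points = aided_pct - unaided_pct
    relative = 100.0 * points / unaided_pct
    return points, relative


# Mean percent-correct scores as reported in this section.
reported = {
    "Larson et al. (2000), words in quiet": (58, 88),
    "Larson et al. (2000), sentences at +7 dB S/N": (48, 64),
    "Humes et al. (2002), sentences at +8 dB S/N": (55, 74),
}

for label, (unaided, aided) in reported.items():
    points, relative = improvement(unaided, aided)
    print(f"{label}: +{points:.0f} points ({relative:.0f}% relative to unaided)")
```

For example, the 30-point gain for words in quiet corresponds to roughly a 52 percent relative improvement over the unaided score, so the two ways of stating "percent improvement" should not be confused.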

Like most reports of hearing aid outcomes, the studies by Larson et al. (2000) and Humes et al. (2002) focused on listeners with moderate hearing loss. It is likely that individuals who seek Social Security disability benefits would have more severe hearing losses than these groups. The literature yields relatively few data reflecting the performance of people with severe and profound hearing loss who have been fitted with appropriate hearing aids.

Flynn, Dowell, and Clark (1998) reported a study of 34 listeners with severe or profound hearing loss who wore carefully fitted hearing aids. To be eligible for this study, subjects were required to be proficient in the use of spoken English. The typical subject achieved a recognition score of 55 percent when listening to amplified monosyllabic words in quiet. Everyday sentences produced an average recognition score of 72 percent in quiet and 58 percent at a +10 dB S/N ratio. It should be emphasized that the variability across subjects was large (as is usual in studies of listeners with hearing loss): standard deviations were on the order of 25 to 30 percentage points.

Kuk, Potts, Valente, Lee, and Piccirillo (2003) reported a study of 20 individuals with severe or profound hearing losses who were carefully fitted with high-technology hearing aids. Proficiency in spoken English was not reported to be a selection criterion in this study. For speech presented at a conversational level in quiet, subjects were able to repeat about 15 percent of amplified sentences that contained no contextual cues and about 33 percent of sentences that were high in contextual cues. These subjects reported that when they use hearing aids, they experience difficulty in real life about 25 percent of the time in quiet and about 50 percent of the time in noise.

Although the mean pure-tone thresholds of the subjects studied by Kuk et al. (2003) were only slightly poorer than those studied by Flynn et al. (1998), the aided speech recognition performance observed by Kuk et al. was notably worse than that measured by Flynn et al. Such inconsistent outcomes are not unusual in investigations of individuals with hearing loss, especially in studies with small numbers of subjects. Many listener variables, such as the ability to perceive acoustic features of sounds and to utilize contextual cues, have an impact on speech recognition scores. In addition, acoustic variables such as the particular speech test used, the presentation level, and the characteristics and level of any background noise all strongly affect obtained recognition scores. Thus, some individuals with significant hearing loss are able to perform quite well in situations requiring speech communication, while others with similar pure-tone thresholds are almost completely unsuccessful.

COCHLEAR IMPLANTS

Profound neurosensory hearing loss is one of the most significant impediments to an individual’s ability to successfully communicate with other human beings using audition and spoken language. If the hearing loss occurs prior to the development of speech and language, additional lifelong reading and spoken language deficits confront those who are profoundly deaf. Individuals with moderate to severe hearing loss experience difficulty hearing sound and, more importantly, understanding speech clearly. In the presence of competing noise or other sounds, speech understanding is further impaired. In the past, the only rehabilitative assistance for people with severe to profound hearing loss was the amplification of sound by hearing aids and the use of sign language. However, hearing aids can only make sound louder without providing important speech cues to improve the discrimination or understanding of words. They also do not provide sufficient cues to assist hearing in background noise.

The development of the cochlear implant has radically altered the habilitation and rehabilitation of profoundly deaf children and adults. The past 25 years of basic science and clinical research have shown that cochlear implants are safe and effective in improving speech perception in both children and adults. The introduction of this technology into clinical practice over the past 10 years has generated renewed interest in the etiology and the methods of assessment of individuals with profound hearing loss. In this section we review the hardware and software components of a cochlear implant, present patient selection criteria for an implant, and discuss speech perception results in postlingually deafened adults with multichannel cochlear implants. The same cochlear implants are used for management of profound deafness in children deafened prelingually (before age 2) and postlingually. The indications and results for children are discussed in Chapter 7.

Cochlear Implant Devices

Cochlear implants attempt to replace the transducer function of damaged inner ear hair cells. Most causes of sensorineural deafness result in injury to the hair cells rather than to auditory nerve fibers. Prolonged deafness eventually affects the auditory nerve, as its neurons rely on neurotrophic factors (proteins produced by hair cells) for their survival. It has been shown that electrical stimulation provided by cochlear implants can prevent neural degeneration (Leake, Hradek, and Snyder, 1999). The devices are continually undergoing modification and upgrading; however, the basic components of the systems have not changed.

The early pioneers of cochlear implants include William House (1976), Blair Simmons (1965, 1966), and Robin Michelson (1971). These individuals were the first to describe the clinical benefits of electrically stimulating the inner ear. House and Michelson described the placement of single electrodes within the scala tympani of the inner ear, while Simmons introduced multiple electrodes directly into the auditory nerve. Their reports in the 1960s were strongly criticized by the scientific community.

The first commercially available implants were produced in 1972 as the House-3M single-channel implant. This device was an aid to speechreading and provided an awareness of environmental sounds to several thousand postlingually deafened adults and a few hundred children. Graeme Clark (Clark et al., 1977) and his research team in Australia focused on multichannel stimulation. A commercially available (Cochlear Corporation) multichannel implant with 22 separate channels began clinical trials in 1983. The University of California at San Francisco implant team also began clinical trials in 1985 with a multichannel implant. A variant of this device is now manufactured by Advanced Bionics (the Clarion cochlear implant). Major advances in microcircuitry and speech coding algorithms have been made over the past 20 years.

In 2003, three companies made cochlear implants commercially available for use in the United States: Cochlear Corporation, Advanced Bionics, and Med El. Each has a slightly different electrode array that is inserted into the inner ear and a different way of encoding speech information into an electrical signal. There is substantial variability in results with all devices and subject populations, and the results have improved as more is learned about the way the human brain processes speech signals. It is important to recognize that results with implants will likely continue to improve as device technology advances and indications for implantation expand. The results reported here are likely to be out of date within two to three years.

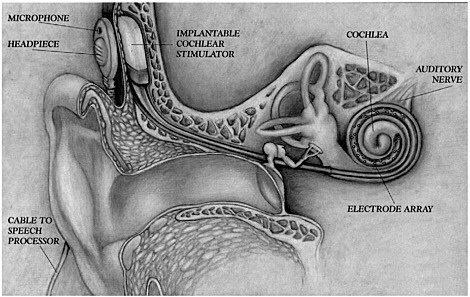

The basic components of a cochlear implant (shown in Figure 5-1) include:

- a microphone to pick up auditory information from the environment,
- a speech processor that converts acoustic sound energy into electrical signals,
- a headpiece with transmitter coil to send the information via radio frequency through the skin,
- an implanted receiver/stimulator that interprets the electrical signal sent by the speech processor, and
- an intracochlear electrode array that distributes the electrically processed speech information to the auditory nerve.

FIGURE 5-1 The components of a cochlear implant.

NOTE: In some models, the speech processor is incorporated with the microphone and batteries in a “behind-the-ear” unit.

SOURCE: Advanced Bionics Company, reprinted with permission.

Multichannel cochlear implants that deliver different temporal information to different parts of the cochlea (place or spectral information) have been the most successful in generating speech perception in postlingually deafened adults with profound hearing loss and in postlingually and prelingually deafened children (Cohen, Waltzman, and Fisher, 1993; Gantz, McCabe, and Tyler, 1988). Innovative methods of processing speech have been developed by many independent research programs and cochlear implant manufacturers.

Most cochlear implants today employ a band-pass filter system to separate the acoustic signal into discrete frequency bands that can be delivered to the appropriate frequency regions of the cochlea, providing spectral information about the speech signal. Temporal and intensity cues are delivered by varying the rate of stimulation and the amount of stimulating current. Multichannel implant systems use between 8 and 24 channels, depending on the implant manufacturer.
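The band-pass arrangement described above can be sketched as a function that divides the processed frequency range into one band per channel, ordered so that low-frequency bands go to apical electrodes and high-frequency bands to basal ones. This is a minimal illustration under stated assumptions: the 250 to 8000 Hz range, the logarithmic spacing, and the convention that electrode 0 is the most apical contact are all hypothetical choices, and each manufacturer uses its own frequency-allocation tables.

```python
def log_spaced_bands(n_channels: int, low_hz: float = 250.0,
                     high_hz: float = 8000.0) -> list[tuple[float, float]]:
    """Split [low_hz, high_hz] into n_channels logarithmically spaced bands.

    Bands are returned from lowest to highest frequency. Under the tonotopic
    mapping assumed here, band 0 drives the most apical electrode and the last
    band the most basal one.
    """
    if n_channels < 1:
        raise ValueError("need at least one channel")
    step = (high_hz / low_hz) ** (1.0 / n_channels)
    edges = [low_hz * step ** i for i in range(n_channels + 1)]
    return list(zip(edges[:-1], edges[1:]))


for electrode, (lo, hi) in enumerate(log_spaced_bands(8)):
    print(f"electrode {electrode} (apical to basal): {lo:.0f}-{hi:.0f} Hz")
```

Logarithmic spacing is used here only because it roughly follows how frequency is laid out along the cochlea; it is not a statement about any particular device.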

Current cochlear implant systems are designed to take advantage of the tonotopic organization of the cochlea. Thus, place coding is used to transfer spectral information in the speech signal as well as to encode durational and intensity cues (American Speech-Language-Hearing Association, 2004). The number of channels may not be as important as the speech processing algorithm. Electrical field interaction across electrodes can induce excessive current loads, causing increased loudness and distortion from overlapping signals. Most implant systems therefore use a nonsimultaneous stimulation paradigm, which prevents stimulation of more than one channel at a time and eliminates electrical field interaction across electrodes; however, newer speech coding algorithms employing simultaneous stimulation are now in development.

One type of speech processing that has been shown to improve speech perception scores is continuous interleaved sampling (CIS) (Wilson, Lawson, Finley, and Wolford, 1991). CIS speech processing employs a rapid pulse rate, with pulse durations on the order of 25 microseconds per phase, to deliver envelope cues that approximate an analog signal. CIS processing is available in the Advanced Bionics Clarion, the Nucleus CI24M, and the Med El implants.
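The defining features of CIS (band envelopes mapped onto each electrode's electrical dynamic range and delivered as pulses that never overlap in time) can be sketched for one stimulation cycle. The sketch assumes that envelopes have already been extracted by band-pass filtering and smoothing; the logarithmic compression constant, the threshold (T) and comfort (C) current levels, the channel order, and the function names are hypothetical and do not correspond to any manufacturer's implementation.

```python
import math


def cis_cycle(envelopes: list[float], t_levels: list[float],
              c_levels: list[float], phase_us: float = 25.0) -> list[dict]:
    """Schedule one stimulation cycle of a simplified CIS-style strategy.

    envelopes: per-channel envelope values scaled to 0..1, assumed to come from
        a band-pass filter bank followed by envelope detection (not shown).
    t_levels / c_levels: hypothetical per-channel threshold and comfort
        currents bounding each electrode's electrical dynamic range.
    Each channel receives one biphasic pulse; pulses are placed back to back,
    so no two electrodes are stimulated at the same moment.
    """
    pulse_us = 2 * phase_us  # a biphasic pulse has two phases
    cycle = []
    for ch, env in enumerate(envelopes):
        env = min(max(env, 0.0), 1.0)
        # Compress the acoustic envelope logarithmically into the T..C range.
        compressed = math.log1p(100.0 * env) / math.log1p(100.0)
        amplitude = t_levels[ch] + compressed * (c_levels[ch] - t_levels[ch])
        cycle.append({"channel": ch, "start_us": ch * pulse_us,
                      "end_us": (ch + 1) * pulse_us, "amplitude": amplitude})
    return cycle


def per_channel_rate_pps(n_channels: int, phase_us: float = 25.0) -> float:
    """Pulses per second on each channel when all channels take turns."""
    return 1e6 / (n_channels * 2 * phase_us)


frame = cis_cycle([0.0, 0.5, 1.0], t_levels=[100.0] * 3, c_levels=[200.0] * 3)
for pulse in frame:
    print(pulse)
print(per_channel_rate_pps(8))
```

Because channels take turns, the rate on each electrode falls as channels are added: with 8 channels and 25-microsecond phases, `per_channel_rate_pps(8)` gives 2500 pulses per second per channel. This trade-off between channel count and per-channel rate is one way to see why faster stimulation chip sets are of interest.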

The Nucleus implant has additional proprietary speech coding algorithms, Spectral Peak (SPEAK) and Advanced Combination Encoders (ACE). According to the manufacturer, the ACE strategy combines advantages of the SPEAK and CIS strategies (Cochlear Corporation, 2004). The Clarion implant can be programmed in a pulsatile, compressed analog, or combination pulsatile/analog format. It is capable of stimulating at a rate of 82,000 Hz, allowing presentation of more fine temporal information in its proprietary coding strategy, “Hi Res.” A new implant by the Cochlear Corporation (RP-8), now in feasibility trials by the Food and Drug Administration (FDA), also incorporates a faster stimulating chip set. Adding more fine temporal information may allow improved word understanding in background noise as well as appreciation of quality changes in voice and music. Changes in speech processing strategies will surely continue as the technology advances, and new implant systems are being designed to accommodate a broad array of speech processing software changes without requiring surgical reimplantation of hardware.

A recent addition to cochlear implant systems is the on-board telemetry capability to measure the electronic functioning of the implanted electrode package. Each implant manufacturer has developed its own proprietary system. For all devices, impedance measures of individual channels can be obtained as well as measures of the electronic signal being transmitted by each channel. Auditory whole nerve action potential measures developed by Brown and Abbas (Brown, Abbas, and Gantz, 1990) have been incorporated into the Nucleus (neural response telemetry, NRT) and Clarion (neural response imaging, NRI) implant systems, but not into the Med El systems at this time. The telemetry systems can measure the activity of the residual auditory nerve. The whole nerve action potential measures have a moderate correlation with speech perception skills with an implant. Presumably these measures are based on the integrity and number of residual auditory nerve fibers, so it may be possible to use this capability to identify different areas in an individual cochlea that have better surviving neural populations. It is expected that this information will be useful in developing unique speech coding algorithms for individual subjects, thereby improving their speech perception. Ongoing studies of residual auditory nerve function may be an important method of enhancing performance in individual patients.

Audiological Criteria for Implantation in Adults

The selection criteria for adults continue to evolve as cochlear implant hardware and software provide reliably improved speech understanding. Successful restoration of speech perception with cochlear implants in adults has been limited to postlingually deafened individuals (adults who learned spoken language and speech before losing their hearing). Prelingually deafened adults who use primarily spoken language obtain some important speech cues from the device, whereas prelingually deafened adults who use sign language have not obtained much benefit from it. Cochlear implantation is also limited to individuals with bilateral moderate to profound sensorineural hearing loss in the low frequencies and profound loss in the high frequencies. The present FDA guidelines for adult cochlear implant candidacy include the following or similar criteria, which may differ by implant manufacturer and model (Advanced Bionics, 2004; Cochlear Corporation, 2004; Food and Drug Administration, 2001): If the subject can detect speech at 70 dB sound pressure level (SPL), a series of speech perception tests is performed, bilaterally aided, in a sound field at 70 dB SPL. Candidates unable to detect speech in a sound field with appropriately fitted hearing aids are considered audiological candidates for an implant. Individuals who can understand some speech in the test condition are considered candidates if they score 50 percent correct or less on a recorded sentence test (many centers use the Hearing in Noise Test [HINT] sentences for cochlear implant evaluation) in the ear to be implanted and 60 percent or less in the best binaural aided condition. These indications are in transition as speech coding algorithms and devices continue to improve speech understanding. Continued research and careful documentation of these findings are necessary to expand the clinical selection criteria for electrical speech processing.
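The audiological portion of the adult criteria above is essentially a two-step decision rule. The sketch below encodes one simplified reading of it for illustration only; the class and function names are hypothetical, the 50 and 60 percent cutoffs are taken from the text as defaults, and manufacturer- and model-specific variations (and all medical criteria) are deliberately not modeled. It is not a clinical tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AidedSpeechResults:
    """Aided sound-field results at 70 dB SPL (field names are hypothetical)."""
    detects_speech: bool  # can the person detect speech with well-fitted hearing aids?
    implant_ear_pct: Optional[float] = None  # sentence score (% correct), ear to be implanted
    best_binaural_pct: Optional[float] = None  # sentence score (% correct), best binaural aided


def is_audiological_candidate(
    r: AidedSpeechResults,
    implant_ear_max: float = 50.0,
    binaural_max: float = 60.0,
) -> bool:
    """Simplified reading of the adult criteria; real thresholds vary by device and model."""
    if not r.detects_speech:
        # Unable to detect speech even when aided: audiological candidate.
        return True
    if r.implant_ear_pct is None or r.best_binaural_pct is None:
        raise ValueError("sentence scores are required when speech is detectable")
    # Both must hold: a low score in the implant ear AND in the best binaural aided condition.
    return r.implant_ear_pct <= implant_ear_max and r.best_binaural_pct <= binaural_max


print(is_audiological_candidate(AidedSpeechResults(True, 42.0, 55.0)))  # True
print(is_audiological_candidate(AidedSpeechResults(True, 42.0, 68.0)))  # False
```

Because the cutoffs are parameters rather than constants, the same rule can be rerun as the indications shift, which the text notes is happening as devices improve.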

Adults with prelingual, long-term deafness demonstrate a poor prognosis for developing speech understanding following cochlear implantation (Busby, Roberts, Tong, and Clark, 1991; Dawson et al., 1992; Zwolan, 2000). A number of these individuals can learn to recognize environmental or warning sounds and may demonstrate limited speech-reading enhancement with their cochlear implants. Other individuals have reported some improvement in their own speech production following implantation (Zwolan, Kileny, and Telian, 1996). Prelingually deafened adults with previous auditory or oral training or experience (those who communicate primarily with speech rather than sign language) have a better prognosis for accepting and using their devices. It is suspected that central neural processing of auditory information is not possible using current cochlear implant technology if the central auditory pathways have not been stimulated either through normal hearing mechanisms or electrical stimulation with a cochlear implant prior to puberty.

Medical Considerations for Implantation

There are very few medical conditions that limit the use of a cochlear implant in an adult or child. The ability to undergo general anesthesia must be assessed. Certain personal characteristics, such as the onset of profound deafness, educational environment, and history of chronic ear disease and surgery, should be ascertained. The medical history is important because it can identify potential problems that might result in a less-than-optimal outcome with a cochlear implant. This information should be considered when counseling the candidate and planning rehabilitation.

Rarely is a candidate eliminated from consideration for an implant based on medical history.

Almost all diseases and disorders that induce profound deafness primarily affect the cochlear hair cells, which transduce acoustical signals into electrical responses. As a result, people with almost any cause of profound deafness can be candidates for cochlear implantation. The exceptions are those who have had tumors removed from their hearing and balance nerves and those with severe trauma that has severed the auditory nerve. Studies have failed to identify any etiology that places a candidate at a disadvantage for cochlear implantation except meningitis causing severe labyrinthine ossification that obliterates the cochlea (Gantz, Woodworth, Abbas, Knutson, and Tyler, 1993).

Radiographic imaging of the cochlea is essential to determine the presence of a congenital inner ear deformity. Absence of the cochlea (Michel deformity) and a small internal auditory canal (similar in size to the fallopian canal) are contraindications for implantation on that side. Congenital malformations such as Mondini deformities are not contraindications for implantation, but they should alert the implant team that complications may be encountered during implantation, and the candidate must be carefully counseled about a possibly limited hearing outcome (Jackler, Luxford, and House, 1987; Miyamoto, Robbins, Myres, and Pope, 1986).

Recurrent acute or chronic ear disease must be controlled prior to placing a cochlear implant. If acute otitis media occurs following implantation, it should be treated with appropriate antibiotics.

In the recent past it has been recognized that there may be an increased risk of meningitis associated with cochlear implantation. Because of this, the Centers for Disease Control and Prevention (2003), the FDA (2003), and the American Academy of Pediatrics (2004) have recommended that all young children who receive a cochlear implant, as well as adults at high risk of invasive pneumococcal disease, be vaccinated against meningitis. It is recommended that vaccination take place prior to implantation in children and the elderly.

Finally, a history of a congenitally deafened ear should be noted. In a postlingually deafened adult, an ear that was congenitally deaf should not be implanted. Case reports indicate that such ears perform like the implanted ears of prelingually deafened adults, in that a sensation of sound may not be perceived.

Other Considerations

The characteristics of an individual cochlear implant user play a large role in the communication outcomes he or she achieves (Wilson, Lawson, Finley, and Wolford, 1993). Two important factors are age at implantation and duration of deafness (Battmer, Gupta, Allum-Mecklenburg, and Lenarz, 1995; Gantz et al., 1993; Geir, Barker, Fisher, and Opie, 1999; Shipp, Nedzelski, Chen, and Hanusaik, 1997). Individuals implanted at a younger age, with a correspondingly shorter period of auditory deprivation, are more likely to achieve good outcomes. In addition, cochlear implant outcome improves with increasing device use for many recipients (Rubinstein and Miller, 1999; Rubinstein, Parkinson, Tyler, and Gantz, 1999). Other factors that may influence adult outcomes include speechreading ability and degree of preimplant residual hearing (Cohen et al., 1993; Gantz et al., 1993; Rubinstein et al., 1999).

Cochlear Implant Results

Evaluation of cochlear implant performance has required the development of new test materials for both children and adults, because existing test materials were too difficult or had been used so frequently that subjects could learn them. As with routine auditory testing, speech perception material should be presented from standardized recordings on audiotape or videotape (see Chapters 3 and 4). A battery of tests of varying difficulty is needed, including tests of the temporal or intonation patterns of speech, sound-plus-vision tests to evaluate speech-reading enhancement, and closed-set and open-set sound-only sentence or single-word tests. The most difficult are open-set monosyllabic word tests, such as the CID W-22 word lists, NU-6, or CNC monosyllabic word tests (see Chapters 3 and 7 for test descriptions).

Postlingual Adult Performance

Comparative studies (Gantz et al., 1988a) and the prospective randomized Veterans Administration clinical trial (Cohen et al., 1993) have shown that multichannel implant strategies provide postlingually deafened adults more auditory information than single-channel implants. Open-set sentence and monosyllabic word test scores for subjects using multichannel implants have improved as advances in speech coding strategy have been incorporated in clinical trials. It should also be kept in mind that the selection criteria for implantation have been liberalized during the same period. Subjects receiving implants in 2003 had much more residual auditory function than the individuals implanted in the early 1980s, who had no response to audiometric testing.

Initial prospective randomized clinical trials using the four-channel Ineraid cochlear implant and the Nucleus CI-22 (feature extraction, F0F1F2 coding) implant demonstrated an average open-set sentence score of 30-38 percent correct after 9 months of device experience (Gantz et al., 1993). Monosyllabic NU-6 word score averages ranged between 9 and 12 percent at 9 months.

It is of interest that the Iowa trial (Gantz et al., 1988b) could not demonstrate any performance differences between the 22-channel Nucleus device using a feature extraction speech coding algorithm and the four-channel Ineraid device using compressed analog vocoder speech processing. Speech scores improved with the development of CIS-like speech coding, used in the Clarion implant, and the SPEAK band-pass filter strategy of the Nucleus 22. Average sentence scores for the Clarion CIS implant are in the range of 65 percent; NU-6 word scores are 30-38 percent (Tyler, Fryauf-Bertchy, Gantz, Kelsay, and Woodworth, 1997). Similar results have been obtained with the Nucleus CI-22 implant using SPEAK speech coding (Hollow et al., 1995). Most cochlear implants offer multiple speech coding strategies. A recent comparison of five speech coding strategies in the Clarion device (CIS, simultaneous analog stimulation [SAS], paired pulsatile sampler [PPS], quadruple pulsatile sampler [QPS], and hybrid [HYB]) showed no statistically significant difference between the CIS and SAS strategies on vowel and sentence recognition tasks (Loizou, Stickney, Mishra, and Assman, 2003). About one-third of the group benefited from the PPS and QPS strategies, and individuals showed preferences for each strategy. A similar study with the Nucleus implant compared the SPEAK, ACE, and CIS speech coding strategies (Skinner et al., 2002). Postlingually deafened adults again showed individual preferences for each strategy, but group means demonstrated similar performance for all three.

Clinicians are advised to fit each coding strategy for an individual so that the user obtains maximum benefit from the implant. A trial period of 4-6 weeks is recommended for each strategy, because the initially preferred strategy may not turn out to be the best one.
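The trial-period recommendation amounts to comparing strategies only after each has had a full period of use, rather than on first impressions. The following is a minimal sketch of that bookkeeping, assuming a hypothetical `StrategyTrial` record, a single end-of-trial sentence score as the comparison measure, and a 4-week minimum; the scores shown are invented for illustration, and in practice user preference and other measures would also be weighed.

```python
from dataclasses import dataclass


@dataclass
class StrategyTrial:
    strategy: str        # e.g., "SPEAK", "ACE", "CIS"
    weeks_of_use: float  # time spent listening with this strategy
    sentence_pct: float  # end-of-trial sentence score (% correct), hypothetical measure


def best_strategy(trials: list[StrategyTrial], min_weeks: float = 4.0) -> str:
    """Pick the highest-scoring strategy among those used for a full trial period."""
    completed = [t for t in trials if t.weeks_of_use >= min_weeks]
    if not completed:
        raise ValueError("no strategy has completed a full trial period")
    # max() returns the first of any tied entries, i.e., the earliest-listed strategy.
    return max(completed, key=lambda t: t.sentence_pct).strategy


trials = [
    StrategyTrial("SPEAK", 5, 62.0),
    StrategyTrial("ACE", 6, 71.5),
    StrategyTrial("CIS", 2, 80.0),  # excluded: early impression, trial not finished
]
print(best_strategy(trials))  # ACE
```

Filtering out incomplete trials mirrors the caution that an early preference may not hold once the listener has adapted.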

In all published trials of cochlear implant performance, a wide range of scores is seen among individual subjects. Ranges have widened as speech coding has improved. Average scores for monosyllabic word understanding for different devices are 40-50 percent correct in the sound-only condition. Average sentence understanding scores range between 60 and 70 percent. Duration of profound deafness has been shown to be the most significant patient variable that contributes to intersubject score differences (Gantz et al., 1993; Rubinstein et al., 1999).

Cognitive abilities, residual hearing, and speech-reading skills have also had a small influence on performance with an implant. In individuals implanted with more residual hearing, preoperative sentence recognition scores and duration of deafness accounted for 80 percent of the variance in single-word recognition scores (Rubinstein et al., 1999).

The etiology of deafness, except for meningitis with labyrinthine ossification, has not contributed to performance differences. Residual auditory nerve function is suspected to have some influence on performance, but this will remain speculative until tests of auditory nerve integrity are available for large populations. Over the past 15 years, speech perception scores have improved steadily. Much of the improvement is attributed to advancing technology and speech processing; however, as noted above, the subject population being implanted today has more residual hearing than the people implanted several years ago.

Cochlear implants have also been shown to improve the functional health status of older adults (Francis, Chee, Yeagle, Cheng, and Niparko, 2002). A team at the Johns Hopkins University evaluated the quality of life of cochlear implant users between ages 50 and 80. Increases in emotional utility scores correlated strongly with improvements in speech understanding scores, and 65 percent of this group could conduct interactive conversations on the telephone. These studies also demonstrated that cochlear implantation was cost-effective in this patient population, similar to findings for young adults and children. The cost-utility ratio of $9,480 per quality-adjusted life year (QALY) in this age group was well below the threshold of $20,000-25,000 per QALY at which procedures are considered acceptable value for the money. Costs for children ranged between $5,197 and $9,029 per QALY (Cheng et al., 2000).
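A cost-utility ratio divides the incremental cost of an intervention by the QALYs it gains, where QALYs gained are the improvement in health utility (on a 0-1 scale) multiplied by the years over which it lasts. The sketch below shows that arithmetic and the threshold comparison. It is a simplified illustration, not the method of the cited studies: the function names and the cost, utility, and duration inputs are hypothetical, and the discounting of future costs and benefits that formal analyses apply is omitted. Only the $9,480 and $9,029 ratios and the threshold range come from the text.

```python
def cost_per_qaly(incremental_cost: float, utility_gain: float, years: float) -> float:
    """Cost-utility ratio: incremental cost divided by QALYs gained.

    QALYs gained = gain in health utility (0-1 scale) x years the gain lasts.
    Discounting of future costs and benefits is deliberately omitted here.
    """
    qalys_gained = utility_gain * years
    if qalys_gained <= 0:
        raise ValueError("intervention must yield a positive QALY gain")
    return incremental_cost / qalys_gained


def below_threshold(ratio: float, threshold: float = 20_000.0) -> bool:
    """Compare against the lower end of the $20,000-25,000 per QALY range."""
    return ratio < threshold


# Hypothetical inputs: $60,000 total cost, utility gain of 0.25 sustained for 16 years.
ratio = cost_per_qaly(60_000, 0.25, 16)
print(ratio)                   # 15000.0
print(below_threshold(9_480))  # True (ratio reported for adults aged 50-80)
```

Using the lower end of the threshold range as the default makes the comparison conservative: a ratio that passes at $20,000 also passes at $25,000.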

Bilateral Implantation

The increasing clinical success of cochlear implants has prompted the exploration of bilateral implantation (Gantz et al., 2002; van Hoesel and Tyler, 2003). Preliminary results suggest that the greatest benefit from bilateral implantation is sound localization. The head shadow effect in a noisy environment was also a benefit to most individuals. A small percentage of the subjects improved speech recognition in quiet with two implants compared with one device. Much more research in larger groups of individuals must be undertaken to determine whether bilateral implantation should become standard clinical care.

Hybrid (Combined Acoustic and Electrical) Stimulation

The improved speech perception scores achieved by an increasing number of individuals implanted with multichannel cochlear implants, along with the implants’ record of safety, have enabled the gradual expansion of implant selection criteria to those with more residual hearing. Many individuals with severe hearing loss complain of poor word understanding that conventional hearing aids do not improve; amplifying the sound does not raise their word perception scores. Most adults with severe to profound hearing loss related to aging and noise exposure have substantial low-frequency hearing but cannot hear the high-frequency consonants that are essential for word understanding. Although the speech communication performance of postlingually deafened patients with cochlear implants is quite good, acoustic hearing (if the damage to the ear is not too severe) still offers considerable advantages for appreciating the aesthetic qualities of sound (such as music and voice quality) and for understanding speech in background noise (Fu, Shannon, and Wang, 1998). Many cochlear implant users complain that the sound is very mechanical and that background noise reduces their ability to perceive speech. The loss of the aesthetic quality of sound is most likely related to the inability to discriminate the pitch of sounds (Gfeller et al., 2002), which in turn is a consequence of the limited spectral resolution of current speech coding strategies (Fishman, Shannon, and Slattery, 1998). Hearing in background noise requires even finer spectral resolution (Fu et al., 1998).

Residual low-frequency hearing (125-750 Hz) has the potential to provide more low-frequency spectral resolution than the current generation of speech coding algorithms. However, placement of the standard intracochlear electrode usually results in loss of residual hearing. To circumvent this loss, a novel “short” 10 mm multichannel cochlear implant was developed for use in severely impaired individuals with residual low-frequency acoustic hearing (Gantz and Turner, 2003). Recipients of this device have preserved low-frequency acoustic hearing and have been able to combine acoustic and electrical speech processing to substantially improve speech perception in quiet and in multitalker noise (Gantz and Turner, 2003). These early experiences suggest that residual low-frequency hearing is important and should be preserved as individuals with more hearing are considered for cochlear implantation.

The cochlear implant has been shown to be safe and effective in providing significant improvements in speech perception skills for postlingually deaf adults. The results with cochlear implants are encouraging and have continued to improve as indications for implantation expand and software and hardware advances become clinically available. Newer implant designs are in development, and the combination of acoustic and electrical stimulation strategies may greatly expand the application of this technology to more individuals with hearing loss in the future.

AUDITORY BRAINSTEM IMPLANTS

Neurofibromatosis Type II (NF-2) is a disease in which patients develop multiple tumors of the central nervous system, including bilateral acoustic neuromas (vestibular schwannomas). Profound deafness is the usual outcome following removal of the acoustic neuromas in these individuals. Researchers have developed auditory brainstem implants that can deliver stimulation to the cochlear nucleus, bypassing the damaged nerve, in patients undergoing such surgery (House and Hitselberger, 2001).

The present auditory brainstem implant (ABI) is approved by the FDA for use in patients with NF-2. The device has a Silastic pad with 22 electrode contacts that are placed adjacent to the dorsal cochlear nucleus in the brainstem following tumor removal. Some patients who receive an ABI do not receive any auditory benefit from the device, and the patients must fully understand this, as well as the risks and potential side effects of surgery, prior to implantation. The most recent results with the ABI can be found in an article by Otto et al. reporting on the 55 subjects that were included in the FDA clinical trial with this device (Otto, Brackmann, Hitselberger, Shannon, and Kuchta, 2002).

OTHER ASSISTIVE LISTENING DEVICES

In addition to conventional hearing aids and cochlear implants, there is a category of technologies called ALDs. These devices are typically used part-time either instead of, or in addition to, hearing aids or implants. They are usually targeted toward improving communication functionality in specific limited situations, such as talking on the telephone, communicating over a distance, attending the theater, or detecting a doorbell ring. A recent review of ALD types and technologies is found in Compton (2000).

ALDs may be divided into two categories: acoustic and alerting. Acoustic ALDs facilitate the reception of acoustic signals by reducing the corruption of desired sounds that occurs in many everyday listening situations, for example, background noise (including speech babble), reverberation in auditoria, large distances between talker and listener, and limited visual cues resulting from poor lighting or a talker who cannot be seen.

Amplified telephones are probably the most widely used and effective acoustic ALDs (Kochkin, 2002). Another effective type of device employs a wireless system composed of a transmitter and receiver pair. The transmitter is driven by a microphone that is held by or near the talker. The signal is broadcast to the receiver using FM radio, induction loop, or infrared transmission. The receiver routes the signal to the hearing aid (or other amplifier), from which it is delivered to the listener. A related category of ALDs converts the acoustic speech signal into a visual representation. Examples of these are real-time captioning and speech recognition technology such as that used by CapTel. There are also other visually based technologies such as TTY (a keyboard and display device connected to the phone system), e-mail, and instant messaging.
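The benefit of the transmitter-receiver systems described above comes largely from placing the microphone close to the talker, where speech is much stronger relative to room noise. The sketch below illustrates this with the free-field inverse-square law (level falls by 20 log10 of the distance ratio, about 6 dB per doubling). The speech level (60 dB SPL at 1 m), noise level (55 dB SPL, assumed uniform across the room), and distances are assumed values, not data from this report. Real rooms are reverberant, so beyond a certain distance reflected sound dominates and the level stops falling this fast; the sketch therefore idealizes the listener-position numbers.

```python
import math


def level_at_distance(level_at_1m_db: float, distance_m: float) -> float:
    """Free-field inverse-square law: level falls 20*log10(d) dB relative to 1 m."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return level_at_1m_db - 20.0 * math.log10(distance_m)


def snr_db(speech_at_1m_db: float, noise_db: float, distance_m: float) -> float:
    """Speech-to-noise ratio at a microphone, assuming noise is uniform across the room."""
    return level_at_distance(speech_at_1m_db, distance_m) - noise_db


# Assumed values: 60 dB SPL speech at 1 m, 55 dB SPL background noise.
at_listener = snr_db(60.0, 55.0, 4.0)  # hearing aid microphone 4 m from the talker
at_remote = snr_db(60.0, 55.0, 0.15)   # remote microphone worn by the talker
print(round(at_listener, 1), round(at_remote, 1))  # -7.0 21.5
```

Under these assumptions the remote microphone turns a negative speech-to-noise ratio into a strongly positive one, which is why such systems help at a distance and in noise but also why the talker must hold or wear the microphone.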

Alerting ALDs are designed to improve detection of warning and alerting signals by substituting visual or vibratory signals for auditory signals. Examples of this category include vibrating alarm clocks, light-up telephone signalers, and doorbells and fire alarms that activate flashing lights.

ALDs are often considered appropriate in addition to a conventional hearing aid or in cases in which a hearing aid is not effective or desired. Despite enthusiastic support from some professionals (e.g., Loovis, Schall, and Teter, 1997) and considerable popularity in some other countries, ALDs are not used very much in the United States (with the exception of amplified telephones). It is not clear whether this reflects a culturally based reluctance to use more conspicuous devices or a lack of promotion by hearing care professionals.

Although both alerting and acoustic ALDs seem to be used quite effectively in some home situations, their penetration into work settings and other settings outside the home is reported to be limited (Bowe, 2002; Kochkin, 2002; Wheeler-Scruggs, 2002). The reasons for this are not clearly established. Reluctance of workers to request accommodations may be a contributing factor; lack of knowledge about available and appropriate devices is another.

In addition, there is evidence that persons with hearing loss are often reluctant to utilize ALDs that they know to be helpful because they are conspicuous and potentially stigmatizing. For example, with a personal FM system, the talker must be close to the microphone (typically holding it or wearing it) before the device will be helpful to the listener with the hearing loss. It is difficult to achieve this in an inconspicuous, flexible manner that does not inconvenience communication partners in the work setting.

This presumption has been supported in two studies that have compared hearing aids and ALDs in fairly large groups of persons with hearing loss. Jerger et al. (1996) noted that although many subjects preferred the sound quality of an ALD (a personal FM system), 97 percent of them still preferred to use a conventional hearing aid in daily life. Yueh et al. (2001) reported that use of conventional hearing aids produced substantial reported improvements in hearing-related quality of life, whereas the quality of life improvement reported to result from use of an ALD was very small.

RECOMMENDATIONS FOR RESEARCH

The committee recommends that SSA support research efforts that are directed toward increasing the knowledge base in the area of amplification effectiveness. Despite the long history of study of amplification, fitting strategies, and outcomes, there is a lack of empirically based information about the use and benefit of modern sensory aids and prostheses that are used by persons with severe/profound hearing loss. Such data potentially would be of interest to SSA in judging the likely employability of individuals with hearing loss.

Research Recommendation 5-1. There is very limited information about the efficacy and effectiveness¹ of modern high-technology wearable hearing aids in adults with severe or profound hearing loss. Reports in the literature tend to be on small samples that are rather loosely defined. Results are probably of limited generalizability to the population of adults with work potential. It would be valuable to have information from large groups of adults with severe and profound hearing loss about the effectiveness of conventional amplification. Data describing both laboratory measures of speech understanding and self-reports of real-world performance should be obtained. Basic linguistic abilities would be an important variable in such studies. Individuals with adult-acquired hearing loss should be investigated separately from those whose hearing losses originated in early life.

Research Recommendation 5-2. Research should be undertaken to determine: (1) performance for hearing aid wearers with severe/profound losses on the tests recommended in Chapter 4 of this report (monosyllabic words in quiet and in noise); and (2) the ability of the same hearing aid wearers to function in real work environments.

Research Recommendation 5-3. After work environments are meaningfully categorized using dimensions such as typical background noise level, noise modulation, and need for oral communication (as recommended elsewhere in this report), data describing: (1) performance on tests for SSA disability determination, and (2) performance with hearing aids in different work environments should be combined in attempts to develop reasonably accurate models to predict likely abilities of given individuals to function auditorily in given jobs.

Research Recommendation 5-4. Overall, it seems likely that ALDs have the potential to be quite efficacious in the workplace, but they are underutilized at this time. Because many of them are less costly to purchase, adjust, and maintain and less individually specialized than either hearing aids or cochlear implants, they could provide a cost-effective approach to facilitating integration of some persons with hearing loss into the workforce. The committee recommends that SSA support research directed toward determining the benefits and cost-effectiveness of ALDs in the workplace. It may be valuable to develop a program that facilitates the purchase, maintenance, and promotion of ALDs by employers who have, or could have, workers with hearing loss.