1

Introduction

This report is provided to the National Science Foundation (NSF) by the Panel on Research and Development Statistics at the National Science Foundation. The panel was convened in January 2003 by the Committee on National Statistics of the National Academies at the request of the National Science Foundation to conduct an in-depth and broad-based study of the Research and Development Statistics program of the NSF Science Resources Statistics (SRS) Division. A portion of the study was also mandated by Congress in the National Science Foundation Authorization Act of 2002.

The panel was asked to look at the definition of R&D, the needs and potential uses of R&D data by a variety of users, the goals of an integrated system of surveys and other data collection activities, and the quality of the data collected in the existing SRS surveys. Our overall review examines the portfolio of R&D expenditure surveys as a total system, finds gaps and weaknesses, and identifies areas of missing coverage.

The work of the panel is aligned with an associated workshop by the National Academies’ Board on Science, Technology, and Economic Policy, which examined the current and evolving structure of the U.S. R&D enterprise.

This final report of our study contains recommendations for identifying and defining R&D activities, the appropriate goals for an integrated R&D measurement system, and recommendations on methodology, design, resources, structure, and implementation priorities.

CONGRESSIONAL MANDATE

The National Science Foundation Authorization Act of 2002 mandated that the director of NSF, in consultation with the director of the Office of Management and Budget (OMB) and the heads of other federal agencies, enter into an agreement with the National Academies to conduct a comprehensive study to determine the source of discrepancies in federal reports on obligations and actual expenditures of federal research and development funding (U.S. Congress, 2003). The legislation directed that the study examine the relevance and accuracy of reporting classifications and definitions; examine whether the classifications and definitions are used consistently across federal agencies for data gathering; and examine whether and how federal agencies use NSF funding reports, as well as any other sources of similar data used by those agencies.

Because this committee study had been recently initiated when the legislation was passed, NSF requested, and the panel accepted, the task of studying the discrepancy.

STUDY APPROACH AND SCOPE

The panel prepared an interim report, which should be considered as the basis of this final report. Indeed, highlights of the interim report’s analysis and recommendations have been carried forward into this final report.

The interim report assessed the commitment of the Science Resources Statistics Division of NSF to quality of performance and professional standards and examined aspects of the statistical methodology and accuracy in the SRS portfolio of surveys. Both the interim report and this final report focus on the concept of quality for the NSF R&D expenditure statistics. While there is no commonly accepted definition of quality for surveys, despite over two decades of intense interest in aspects of quality in federal surveys, there is an evolving consensus that the quality of federal statistical data encompasses four components: accuracy, relevance, timeliness, and accessibility (Andersson et al., 1997).

The panel chose to focus the discussion in the interim report primarily on the dimension of accuracy. As defined by the OMB, accuracy includes the measurement and reporting of estimates of sampling error for sample survey programs as well as the measurement and reporting of nonsampling error, usually expressed in terms of coverage error, measurement error, nonresponse error, and processing error. The OMB working paper concludes “it is important to recognize that the accuracy of any estimate is affected by both sampling and nonsampling error” (U.S. Office of Management and Budget, 2001:1-2).

The panel adopted this perspective on the accuracy component of quality in developing its assessment of the quality of the NSF R&D surveys. We further adopted the approach to examining the sources of sampling and nonsampling error reflected in the work of Brooks and Bailar (1978). The quality profiling approach adopted by the panel was applied across the surveys, looking at common or cross-cutting issues, as well as to each of the five major current survey operations separately.

This final report also addresses the accuracy of the statistical data for each of the current and potential NSF R&D surveys but focuses more on the relevance, or usefulness, of the information; the timeliness, or the fitness for use of the data; and the accessibility, or utility of the statistical series, their microdata inputs, and their longitudinally matched data files. The definitions of these components of data quality are taken from the work of the Federal Committee on Statistical Methodology (U.S. Office of Management and Budget, 2001), and are used throughout this report.

In addition, this final report addresses the continuing issue of the discrepancy between the federal reports on obligations of funds for research and development and the expenditures of federal research and development funding reported by recipients of those funds. Our review of the reporting classifications and definitions used in the differing reports and a reconciliation of the data sources appear in Chapter 6.

CHANGING ENVIRONMENT FOR RESEARCH AND DEVELOPMENT

The relationship between public policy and federal statistical programs has long been symbiotic. Changing public policy changes the demand for data and the way in which data are received. Changing public policy also changes the role that data play in the formulation of public policy. In turn, statistical programs help identify the need for and assist in assessing the requirements and results of public policy. Data help illuminate the paths that public policy should take to solve public issues.

Over the past half century, the federal government’s statistical programs that produce data on research and development have exemplified this relationship. As interest in the federal role in science and engineering has mounted and focus on the contribution of research and development to innovation and national growth has intensified, the need for good data to measure the research and development enterprise has grown (National Research Council, 2000). As data have become more available and the sophistication of means of disseminating the results of the data collections has expanded, the public’s interest in issues of trend, level, structure, balance, equity, and appropriateness of the public and private investment in research and development has intensified. To understand the current status of research and development statistics at the National Science Foundation, it is important to understand the context in which the statistical programs have developed, and the role they play in contributing to the understanding of public policy issues today.

World War II is often identified as a watershed in U.S. science policy. Brooks (1968) describes federal policy toward science in the prewar period as largely instrumental in character—concerned with utilization of science and technology for closely defined purposes, such as agriculture, defense, and natural resource development. Although there were examples of federal investment in basic science, such as the National Bureau of Standards, which was doing critical basic research in a broad spectrum of areas, the sense of mutual dependence with the scientific community had not yet fully blossomed.

The tenor of the times, as U.S. science and engineering policy stood on the springboard that would catapult the enterprise to new levels, was best captured in Science—the Endless Frontier, a report by Vannevar Bush (1945), who had headed the wartime research and development programs. The influence of this study, which was instrumental in the establishment of the National Science Foundation 5 years later, cannot be overestimated (National Research Council, 1995). Importantly, the study illuminated both the times and the level of understanding about the issue of research and development.

The data on research and development were hardly up to the task at hand when Bush conceptualized the future organizational structure for federal support of research and development in 1945. For example, the information in his report about trends in expenditures in industry, academic institutions, and the government consisted of the “best estimates available,” and they were “taken from a variety of sources and arbitrary definitions have necessarily been applied” (Bush, 1945:20). In order to obtain factual information concerning research expenditures in colleges and universities for the report, questionnaires were sent to a list of colleges and universities accredited by the Association of American Universities. Some 188 replied, and 125 reported organized research programs (Bush, 1945). At the time of this report, federal expenditures for research and development had been compiled from budget sources in a fairly consistent manner for two decades, although there were recognized shortfalls in the data and omissions in that they did not include grants to educational institutions and schools (Bush, 1945).

The years since publication of the Bush study have been marked by several major thrusts that have had a remarkable influence on the course of research and development in the United States, and, in turn, on the requirements for measurement of the research and development enterprise. This was a period, characterized by the Cold War and international competition, in which the federal government funded an increasing share of research in the nation’s universities. This “second mega-era” for science and science funding was further characterized by two other forces—the virtual explosion of federal support for science and engineering, and the birth of the National Science Foundation—that had an especially pronounced effect on the demand for and eventual availability of information on research and development in the United States (U.S. Congress, House, 1998:9).

Explosion of Federal Support for R&D

The growth of federal support was sure yet uneven in scope and impact in the decades since the Bush study. In the immediate aftermath of the war, even before the birth of the National Science Foundation in 1950, the trend toward consolidation of federal research in a few agencies became apparent. The National Institutes of Health established prominence in health-related research, including university-based biomedical research and training; the Office of Naval Research took on a major role in supporting academic research in the physical sciences; and the Atomic Energy Commission took on most of the research in atomic weapons and nuclear power (National Research Council, 1995). The National Aeronautics and Space Administration and the Advanced Research Projects Agency in the Department of Defense were signs of further consolidation and growth following the launch of Sputnik by the Soviet Union in 1957, which “provoked national anxiety about a loss of U.S. technical superiority and led to immediate efforts to expand U.S. R&D, science and engineering, and technology deployment” (National Research Council, 1995:42).

Each of the decades that followed had a theme and an emphasis that further challenged the data systems that helped to illuminate the scope and trends in R&D. A renewed emphasis on health and biomedical research in the 1950s, an increased focus on environmental and energy issues in the 1970s, a new national commitment to international competitiveness and breakthroughs in information technology in the 1980s and 1990s, and then the robust growth of the health and medical research sector since the 1980s were examples of the global shifts in emphasis and resourcing that needed to be explored and explained.

During this same time period, the increasing recognition of the contribution of R&D to economic growth and productivity heightened national interest in R&D and, in particular, innovation. Over time, the importance of information on R&D expenditures was recognized as an indicator of the extent to which the generation and diffusion of knowledge has become an economic activity with the growth in the science and engineering enterprise (Rosenberg, 1994).

A fundamental importance of R&D in the process of economic growth is that it generates spillover benefits. As documented by Griliches (1980) and Mansfield (1980), the benefits of innovation made by one company can spread widely, or spill over, to many industries. For example, cost-reducing production techniques developed in one industry are likely to be copied or adapted for use by companies in many industries (U.S. Congress, Joint Economic Committee, 1999a). The existence of spillover benefits explains why studies find that investment returns from R&D to the economy as a whole are often greater than the returns to the investing businesses themselves.

In order to estimate spillover effects, two types of benefits from innovation must be identified: those flowing from “customer” spillovers and those flowing from “knowledge” spillovers. Customer spillovers occur when customers benefit from new products and better production techniques that businesses develop in order to earn higher rates of return. The value of the benefit is greater than the cost to the customer. On a global scale, these customer benefits have been embodied in imported capital and intermediate goods, contributing significantly to economic growth.

The rapid exchange of technical information facilitated by academic journals, conferences, the Internet, and licensing agreements has generated knowledge spillovers, which help firms increase productivity more broadly, even with patent protections for the innovating company.

Growing Importance of R&D Expenditure Measures

Data on R&D expenditures have documented these trends in the size and scope of science and engineering, as well as the contribution of R&D to growth. As federal government support for science and engineering grew, so did demand for information to support decision making and the burden on the instruments for measuring science and engineering activity.

The birth of the National Science Foundation in 1950 was quickly followed by an articulated demand for measuring the science and engineering enterprise in the United States. The first emphasis was on identifying the federal contributions in a systematic way, so NSF quickly assumed responsibility for maintaining the National Register of Scientific and Engineering Personnel and commissioned the first collection of data on federal R&D funding and R&D performance. The Survey of Federal Funds for Research and Development, which collects data on R&D obligations made by federal agencies, was instituted in 1953. In that same year, NSF set a pattern for future statistical operations by contracting out the first Survey of Industrial Research and Development to the Bureau of Labor Statistics. Administration of the survey was later transferred to the U.S. Census Bureau. In the same year, NSF conducted the first of six occasional surveys of R&D performance by nonprofit institutions. In 1954, NSF conducted the first small-scale surveys of R&D at major universities.

Looking back on the 1950s, it is apparent that a pent-up demand for information on science and engineering led to an era of statistical innovation, enabled by the fresh infusion of resources from the new NSF. It was a period of creativity and risk-taking as NSF expanded its portfolios in both the expenditures and human resources statistics programs. This era saw the birth of statistical data collections that still, to this day, define the base of knowledge about science and engineering expenditures. And it established precedent in the organizational structure and operational modes of NSF that continue to define NSF’s approach to survey operations.

Knowledge begets the demand for more knowledge. Over the next two decades, NSF expanded the data it collected on public support for science and engineering. A second predominant initiative was to deepen the data collected on federal R&D spending by expanding the detail on fields of science and engineering and on budget function, and by collecting federal obligations for research to universities and colleges by agency and detailed field.

In the mid-1960s Congress initiated a role that NSF has continued to play with regard to R&D statistics when it mandated the Survey of Federal Science and Engineering Support to Universities and Colleges. The purpose of this data collection was to enable Congress to better understand the role of the federal government in supporting academic research and development. Congress continued to mandate the collection of additional data on R&D performance and infrastructure in the 1980s and early 1990s: the National Survey of Academic Research Instruments and Instrumentation Needs in 1983; what is now the Survey of Science and Engineering Research Facilities at Colleges and Universities in 1986; and the Master List of Federally Funded Research and Development Centers in 1990. In many ways, congressional interest has shaped the landscape for research and development measurement. Congress has played an activist role in formulating NSF’s R&D expenditure survey portfolio by supporting the collection of specific data and by relying on the resulting data in its formulation of policy.

Simultaneously, NSF added several collections of human resources data. The Survey of Earned Doctorates was begun in 1957; the annual Survey of Graduate Students in Science and Engineering began in 1966; and the Postcensal Survey of Scientists and Engineers commenced in 1982. It was replaced by the umbrella Scientists and Engineers Statistical Data System (SESTAT) in 1993, which incorporated data from the National Survey of College Graduates, the Survey of Recent College Graduates, and the Survey of Doctorate Recipients. The quest for improvement of the surveys on the human resources side parallels this study. The Committee on National Statistics recently completed a study of options for improving the SESTAT database, recommending a best approach to carry the database through the next decade and encouraging NSF to pursue opportunities to improve understanding of the numbers and characteristics of scientists and engineers in the United States (National Research Council, 2003).

Several innovative collection efforts were mounted by NSF over the years. Some were absorbed into or merged with regular data collections; some simply fell by the wayside. A survey of Science and Engineering Activities at Universities and Colleges was appended to the Survey of Industrial Research and Development in 1964 and absorbed into a new annual Survey of Research and Development Expenditures at Universities and Colleges in 1972. In 1971, SRS started an Industrial Panel on Science and Technology with about 80 members to obtain quick, qualitative information on issues in industrial R&D (National Research Council, 2000). This panel was disbanded in 1991.

Nearly half a century of growing demand for understanding the impact of the science and engineering enterprise on the economy shaped today’s portfolio of research and development expenditure surveys. The growth has largely been responsive to specific congressional or agency needs, as each new data collection activity was initiated to address a narrow topic. In its 2000 report, the National Research Council’s Committee to Assess the Portfolio of the Division of Science Resources Studies of the NSF observed that this evolution yielded several rather unconnected data collections in which surveys failed to “serve as a piece of a cohesive R&D funding and performance data system” (National Research Council, 2000:25). As an example, the committee discussed the discrepancies in funding and performance estimates among its surveys, which are covered in some depth in Chapter 6 of this report. The committee concluded that “further work to improve comparability—even the integration—of these surveys would improve their analytic value” (National Research Council, 2000:25).

In retrospect it is apparent that R&D expenditure statistics progressed in tandem with the growing federal involvement in R&D. They soon became the accepted measures of the amounts of R&D spending and public and private investment in areas of science and engineering. These data were called on to serve other purposes as well. They became a proxy indicator of the direction of technological change. They portrayed the locus of emphasis among the public, private, nonprofit, and college and university sectors. Most importantly, they helped frame the national debate over the investment strategy for R&D.

There are other reasons for the growing importance of R&D expenditure measures in recent years. The science and engineering enterprise has changed substantially in the past two decades, and there is an evident association of trends in research and development spending with questions of national growth and prosperity. At the same time, the task of measuring R&D activity has become more diffuse and complicated. For example, the declining share of R&D expenditures contributed by the federal government has meant that federal agencies no longer account for the bulk of R&D spending. Other major changes often cited in the literature are the shift from a manufacturing to a service economy, the diffusion of innovation among smaller firms, the shift from emphasis on manufacturing and engineering R&D investment to medical sciences, the geographical clustering of domestic technological research and innovation—the so-called Silicon Valley effect—as well as the globalization of R&D activity and innovation. These are indeed challenging times for those in the National Science Foundation and elsewhere who are responsible for the provision of data to illuminate these changes as they occur.

Declining Federal Share in R&D Spending

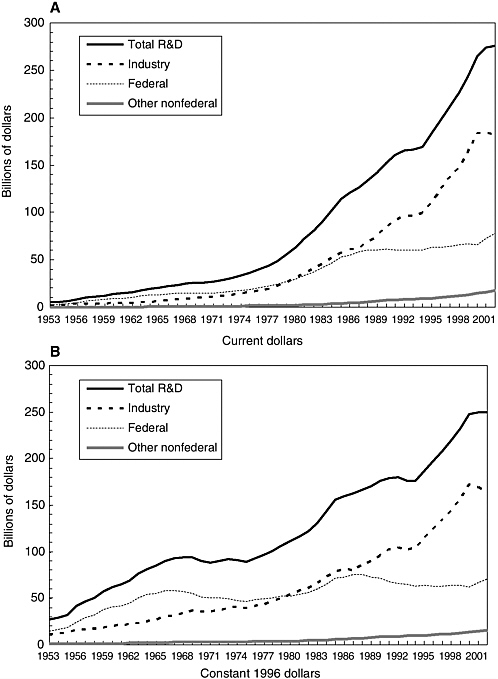

In 1979, the federal government, which had been the main investor in research and development since the expansion of funding in the post-World War II period, saw its proportion of spending on R&D fall below 50 percent. The federal government had accounted for about two-thirds of R&D spending as recently as 1968, but since then the share has fairly steadily declined while industry’s share has steadily increased. In 2002, the latest data available, the federal government’s total spending accounted for just 26 percent of total U.S. R&D expenditures (see Figure 1-1).

The strong growth of industry-funded R&D since the early 1980s may be attributable to a number of factors (U.S. Congress, Joint Economic Committee, 1999a). Among the factors that have been named are lower regulatory costs and higher competition in many U.S. industries, increasing global integration and greater global competition, and the fast-changing technological nature of today’s manufacturing processes and markets. The result of this shift to industry funding is a greater need for understanding such aspects of R&D expenditures as the relationship between R&D spending and the general state of the economy, the trends by sector and size of establishment, and the specific geographical impact of these trends.

The diminishing importance of the federal government in the R&D enterprise has several practical implications for data collection activities. Most obviously, in contrast to just three decades ago, it is no longer possible to simply collect data that are administratively available from the 32 federal agencies that conduct most of the government-funded research and development programs and be assured that the majority of U.S. R&D activity is being tallied.

The growth of influence of the private sector has compounded the difficulty of accounting for R&D expenditures. Industrial funding is more diffuse and less easily measured. Although R&D activity in industry is concentrated in a few hundred large companies, an inclusive tally of industrial R&D activity requires that surveys cover many sectors of activity and thousands of smaller establishments, some in their infancy.1 Until 1990, in fact, the Survey of Research and Development in Industry collected data from only a small number of large firms. To expand coverage of the universe of companies that conduct R&D in the private sector, the survey was redesigned in 1991. Within the increasingly important private sector, fast-moving changes in structure continue to challenge accurate and informative data collections.

Shift to a Service Economy

The structural shift from a goods-producing to a service-producing economy has been a powerful force in shaping U.S. institutions for several decades and has been amply documented (National Science Foundation, 2001). The service sector of the economy has eclipsed the goods-producing sector in proportion of gross domestic product (GDP), now accounting for over 77 percent of it (Jankowski, 2002). This growth of economic activity outside the classic areas of agriculture, mining, construction, and manufacturing is reflected in the growing contribution of the service sector to R&D activity. According to current definitions and data, nonmanufacturing business R&D now accounts for about 39 percent of R&D performance, up significantly from a 5 percent share in 1983. Although this trend has been most evident in the United States, there is evidence that it is widespread. The Organisation for Economic Co-operation and Development, using its definitions, has reported that the service sector accounted for 34 percent of industrial R&D in the United States in 2000; the figures were comparable for Canada (28 percent) and Australia (27 percent); while the proportions in Great Britain (17 percent), Germany (5 percent), and Japan (3 percent) were somewhat less.

This increasing presence of a dynamic service sector in the economy has had ramifications for many aspects of economic measurement, from necessitating design changes in the economic censuses, to refocusing GDP and employment measurement, to the development of the North American Industry Classification System (NAICS) in 1997 to replace the old Standard Industrial Classification (SIC) system. The measurement of R&D should be no different in this respect. For many years, the emphasis of R&D data collection and analysis had been devoted to measuring R&D in the manufacturing sector, which was seen as the source of most innovation and, incidentally, produced tangible outputs that were easier to measure than intangible services. This focus of attention resulted in a view of the service sector as lagging in technological innovation and consequently in productivity growth (Tether and Metcalfe, 2002). Thus, the relative difficulty of measuring R&D in the service sector has fostered a view that was at variance with the anecdotal observation that the service sector is a very large consumer of new technologies and human capital and innovative activity. The service sector not only exhibits considerable innovation in such activities as the Internet, web-based services, and new financial instruments, but also appears to be providing innovative services to client industries, such as systems integration services in manufacturing.

The growth of the service sector not only presents issues of classification and measurement, but also brings into question the applicability of the traditional definition of R&D. Jankowski points out that the service sector creates value and competes by buying products and assembling them into a system or network, efficiently running or operating the system, and providing services for customers who are often members of the general public. Thus, the R&D portfolios of service firms reflect these required core competencies and typically address: system design (network of physical products and information); system operation (equivalent to manufacturing processes in manufacturing industries); and service design and delivery, including interaction with individual customers. It is an open question whether many of these service activities would readily be classified as traditionally measured R&D in surveys.

The growth of the service sector confounds the identification of the share of innovative activity that is accounted for by traditionally defined R&D. Many of the innovative inputs of the service sector are not currently classified as R&D. These include market research, training in innovation, adoption and adaptation of new technology, start-up activities, organizational changes, and incremental impacts. There are, however, major definitional and measurement issues here. For example, Gallaher and Petrusa (2003) suggest that customization, an important product of the service sector, is a gray area for distinguishing between R&D and non-R&D innovation activities.

By nature, R&D in the service sector is more difficult to measure. In a presentation to the panel on the initial results of his research into service sector measurement issues, Michael Gallaher outlined several of the sources of difficulty. The research focused on four industries: telecommunications (NAICS 5133), financial services (NAICS 52 and 53), computer systems design and related services (NAICS 5415), and scientific research and development services (NAICS 5417). It identified issues of misclassification and mismeasurement that pertained to each of these industries (Table 1-1).

TABLE 1-1 Measurement Issues in Four Service Industries

| Industry | Misclassification Issues | Measurement Issues |
| --- | --- | --- |
| Telecommunications | Content developers | Larger companies with R&D division |
| Financial services | Patent holding companies | R&D closely linked to new product offerings |
| Computer systems design and related services | Computer hardware, computer software (shrink wrap) | R&D is frequently “on the job” integrated with the service |
| Scientific research and development services | R&D outsourcing, biotech startups | High percentage of activities are R&D |

SOURCE: Gallaher, 2003.

Greater recognition of differences between the growing service industries and the more traditional manufacturing sector may lead to the recognition that different concepts and definitions as well as techniques and methodologies are needed to measure R&D expenditures in the service sector. With further research of the type discussed above, NSF may come to recognize the need to consider collecting information from the service sector using a very different survey from the current collection instruments.

ISSUES IN THE FINANCIAL SERVICES SECTOR

Some of the most important assets in financial services have been new ideas. New ideas produced derivative products, securitizations, mutual funds, exchange traded funds, and thousands of new securities. The power of ideas has not only made financial service firms well-off, but also enabled savers to more efficiently invest, firms to raise capital, and homeowners to enjoy lower mortgage rates.

Patents are one traditional method of defining and measuring innovation. But since the early days of the 20th century, there has been considerable doubt in the United States as to whether methods of doing business fell under the definition of patentable subject matter, namely “any new and useful process, machine, manufacture, or composition of matter.” While the United States did not explicitly forbid so-called business method patents, as many nations in the French and German legal traditions did, there was still a presumption that they did not fall into these four categories and hence were not patentable. To make matters worse, financial service firms were required by the marketplace and by regulation to make public the broad outlines of publicly registered securities through prospectus disclosure.

As a result, financial innovators took different approaches to managing their key intellectual assets than did other industries. It was well understood that ideas alone were not likely to generate competitive advantages. Key innovations by one investment bank were rapidly copied by its competitors, with imitative products usually appearing soon after the innovator had an opportunity to bring to market a single issue of a new security. Firms boasted that they could use the information in required securities filings to “reverse engineer” virtually any discovery. Whole prospectuses were copied virtually verbatim. Firms used branding, in the form of service-marked products, to differentiate their offerings from one another. Defecting employees would frequently carry knowledge with them from one employer to the next, while vendors would transfer insights developed during projects with financial institutions to their competitors. While firms tried to protect insights (e.g., trading strategies) through the use of trade secrets, these protections were frequently modest at best. While most have described the latter 20th century as a time of great financial innovation, dissenting voices have countered that relatively little revolutionary innovation took place in financial markets, in part because there were few incentives for firms to invest massive amounts of money in R&D that could be easily appropriated by rivals.

There are also, it should be noted, considerable differences among rivals. Some firms pride themselves on creating new products and processes; others excel at scanning the markets and then finding creative ways to reverse engineer others’ products, rebrand them, and push them through their powerful distribution networks.

Unlike traditional manufacturers, for which most innovations grow out of dedicated R&D laboratories, more of the innovation in financial institutions comes from within operating units. With a centralized R&D function, it is fairly easy to keep track of the inventory of intellectual property and R&D. The challenge for data collection within financial service firms is, however, much larger: they often have no clear sense of the amount of new product and service development activity going on, or even the number of successful activities completed.

Today, these time-honored arrangements are under stress. Since the State Street decision legalizing financial patents in 1998, hundreds of financial patents have been issued and many more applications are pending (State Street Bank & Trust Co. v. Signature Financial Group, Inc., 927 F. Supp. 502, 38 USPQ2d 1530). These awards are proving to have material economic value. The case that ignited this new change in policy pitted State Street Bank against a smaller firm, Signature Financial. When Signature won its suit to stop State Street from infringing Signature’s patent on a way of creating mutual funds, State Street’s stock fell 2.3 percent or $277 million in one day. More recently, the trading firm Cantor Fitzgerald received $30 million from the Chicago Board of Trade and the Chicago Mercantile Exchange to settle charges of patent infringement. To date, small and large firms, and even academic institutions, have been rushing to patent their financial products and processes like homesteaders on a frontier of financial intellectual property. How this will affect the innovation process remains unclear, but it is likely to be important. This is an example of a sectoral picture that NSF needs to draw to fully explain trends in R&D and identify emerging measurement issues.

Role of Small Firms in R&D

Another significant change in the environment for R&D, closely associated with the growth of the service sector, has been the growth in the role of small firms in the generation of innovation and economic growth through R&D. Firms in the service sector that perform R&D tend to have fewer employees and smaller R&D budgets than those in the manufacturing sector. Some 43 percent of currently measured R&D in the service sector was performed by firms with fewer than 500 employees, compared with a contribution of about 8 percent by smaller firms in the manufacturing sector.

The important question for purposes of economic measurement hinges on the effect of firm size, or market concentration, on R&D investment and innovative performance. Is large size conducive to R&D investment, as hypothesized by Schumpeter (1947)? Although large firms account for the preponderance of R&D in the economy, a literature review by Acs and Audretsch (2003) found empirical evidence that small entrepreneurial firms play a key role in generating innovations in selected industries.

More importantly, the new recognition of the role of small businesses has been postulated to result from a class of studies that challenge traditional R&D measurement. The main instruments for measuring R&D activity are restricted to measuring inputs into the innovative process, such as R&D expenditures. Acs and Audretsch (2003) suggest that it was only when measures of innovative output were developed that the vital contribution of small firms became recognized. For example, research on patent activity by smaller firms suggests the presence of an important class of “serial innovators” whose contribution to technical progress is both effective and important within their industries. It is claimed that this contribution is not adequately measured through reporting of R&D expenditures (CHI Research, Inc., 2002). In this view, activity in small firms is better depicted through measures of innovation (see Chapter 4).

Increasing Role of Medical R&D

No trend in federal funding of research and development has been as pervasive as the shift in the spending for health-related R&D. Over the past two decades, health-related R&D rose from about one-quarter (27.5 percent in 1982) to nearly one-half (45.6 percent in 2001) of the federal R&D budget. In order to fully understand the scope and impact of this funding shift, it is important to understand the “why” (the reason for this shift) and the “where” (which fields, performers, and geographic locations have benefited).

Medical research and development has received the lion’s share of increases in federal support (National Science Board, 2002). The federal decision to grow the budget for medical R&D was related to successive campaigns to eradicate cancer, then AIDS, then other diseases over the past several decades. Biotechnological advances, the human genome project and its offshoots, and, most recently, investments in activities related to countering bioterrorism have also played a role (National Science Board, 2002).

Health-related R&D is skewed toward basic research, which accounted for over 55 percent of the budget authority for health in fiscal year (FY) 2001. Not surprisingly, the majority of federal health-related support is directed toward academia. The result of these shifts in funding is that, in FY 2001, most (61.9 percent) of federal support to academia was funneled through the U.S. Department of Health and Human Services. At a time when funding for most disciplines has been stagnant, in real terms, obligations for the life sciences have nearly doubled since the early 1990s. There is clear evidence that this infusion of funds has, in turn, attracted a larger proportion of graduate researchers.

This trend has a significant impact on measurement. In order to understand the effect of this shift, timely information is needed not only on federal spending by budget authority, activity, agency, performer, and field, but also on the associated science and engineering human resource investment.

It is difficult to account for the impact of the increasing investment in health-related R&D in the public sector because of a lack of a common sectoral definition. It is almost impossible to identify trends with precision in the business sector because there is no accepted definition of a “biomedical industry,” which would certainly contain bits and pieces of health services, pharmaceuticals, and other industries classified elsewhere by NAICS code. In 1999, companies officially categorized in the “health care services” industry accounted for only 0.4 percent of all industrial R&D. It is difficult to appropriately portray a business R&D counterpart to the public R&D investment, in part, because pharmaceuticals and medicine are classified as manufacturing in the chemical industries, and, in part, because the NAICS categories are not sufficiently detailed to identify pieces of the sector.

There are other examples of industrial sectors that are similarly hard to document; one is software development, which is conducted in various ways in a broad spectrum of industries. It has been suggested that these cross-sectoral portraits could be obtained by collection of more detail on line of business within the NAICS industries and collection of information on the fields of science from industry, as are the data for the federal government and academia. The issues attending line-of-business data collection are discussed in Chapter 3. There are several reasons that data on field of science in industry have not been collected. Suffice it to say that, until such data are available for the private as well as the public sector, understanding of the impact of emerging sectoral trends will always be incomplete.

Geographic Clustering

The emergence of the phenomena of Silicon Valley, the Route 128 corridor, and several other centers of high technology, often concentrated in the vicinity of major research-oriented academic institutions, has contributed to interest in the geographic aspects of R&D. The reasons for this interest are varied. Local economic development interests seek to attract and build these concentrations for their economic growth and job-producing potential. Policy makers at all levels seek to understand the workings of clusters so as to better direct public investment and land use policies. And businesses seek to exploit clustering for the several benefits these clusters afford: the ability to quickly capture spillovers from like industries; the availability of venture capital sources or “bands of angels”; proximity of incubator firms; and the prospect of parenting of new firms by larger, successful organizations (Thornton and Flynn, 2003:5).

The clusters are variously defined by region, state, and locality (Krugman, 2000). They are often defined by proximity to research universities (Acs et al., 2002). They may be unplanned or planned, like some science parks in the United States. The fact of clustering and the intense interest in the extent and determinants of clustering put a premium on understanding patterns of R&D expenditure by geographic area.

In an attempt to identify the geographic location of R&D activity, NSF has developed and published data geographically separated by state on R&D performance by industry, academia, and federal agencies, along with federally funded R&D activities of nonprofit institutions. These data show that R&D is substantially concentrated in a small number of states. NSF also prepares and publishes two analytical measures—state R&D level as a proportion of gross state product and the proportion of R&D by sector—in order to depict differences in “intensity” and source of R&D. Although the state-level data may be useful for comparison purposes, they contain measurement error. Even if reliable, they cannot be adequate to depict the extent and impact of geographic clustering, which occurs at the local level and sometimes crosses state lines.
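The two analytical measures described above reduce to simple ratios. The following is a minimal sketch in Python, not NSF's actual procedure; the state names, sector labels, and dollar figures are hypothetical values chosen only to illustrate the arithmetic.

```python
# Hypothetical figures in millions of dollars; these are NOT NSF data.
state_data = {
    "State A": {"gsp": 500_000,
                "rd": {"industry": 12_000, "academia": 2_000, "federal": 1_000}},
    "State B": {"gsp": 200_000,
                "rd": {"industry": 1_500, "academia": 1_000, "federal": 500}},
}


def rd_intensity(record):
    """Total R&D performed in the state divided by gross state product."""
    return sum(record["rd"].values()) / record["gsp"]


def sector_shares(record):
    """Each performing sector's share of the state's total R&D."""
    total = sum(record["rd"].values())
    return {sector: amount / total for sector, amount in record["rd"].items()}


for name, record in state_data.items():
    shares = {s: f"{v:.0%}" for s, v in sector_shares(record).items()}
    print(name, f"intensity={rd_intensity(record):.1%}", shares)
```

Because both measures are computed for the state as a whole, they cannot show whether the underlying activity is concentrated in a single metropolitan area or spread across several, which is the limitation noted above.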

Two recent studies on the geographic distribution of R&D activities illustrate why it is informative to disaggregate R&D by locality. In a study for the U.S. Economic Development Administration, Reamer et al. (2003) examined the impact of R&D activity in local areas. They found that almost all innovation takes place in metropolitan areas, and that large metropolitan areas have an advantage in the innovation process due to their greater specialization and diversity. The presence of a public R&D institution (university, nonprofit research institute, federal laboratory) did not necessarily lead to local corporate technology spillover effects in all areas. Rural and small metropolitan areas did not benefit as much from the presence of such research institutions as did larger metropolitan areas.

Likewise, a pioneering compilation of detailed federal R&D activities (laboratories, centers, universities, and companies performing federally funded R&D) by local area used the RAND Research and Development in the United States (RaDiUS) database and supplemental data sources to detail the geographic aspects of federal R&D investments (Fossum et al., 2000). The report portrayed an impact of federal R&D funding that was heavily concentrated in a few regions, in spite of being spread in some way into nearly every community in the nation. Despite these recent attempts to put a geographic face on R&D activity, the data by state and substate level are not considered sufficiently robust or reliable to support investigation of the extent and impact of geographic clustering.

New Organizational Structures for R&D

The very vibrancy of the R&D enterprise owes significantly to the vibrancy of business, social, and political arrangements that initiate, support, integrate, and sustain innovative activity. These arrangements are constantly evolving, much as living organisms adapt and change to survive and prosper. Organizations learn and evolve. The successful adaptors join, split, move, and change associations quickly and efficiently. While this is natural and necessary, it does make life quite difficult for those who attempt to measure various economic phenomena.

The impact of alternative and emerging organizational structures is potentially a more difficult issue for the measurement of R&D than for many other types of economic activity. The organizations that conduct intangible activities like research are sometimes easily located in corporations and other entities with accounting systems that permit independent reporting of those activities. An example of an organization that is generally identifiable and for which data exist independently is the research laboratory.

Quite often, however, the organizations that conduct development activities are deeply embedded in organizations that are not traditionally considered wellsprings of R&D activity, such as manufacturing operations and marketing. As a result, these development activities are not easily distinguished from production and other corporate processes. They are often overlooked because they are not easily identified in the accounting systems of most companies, which do not usually permit separate identification of R&D expenditures and benefits.

Other research activities cross traditional organizational lines and are similarly difficult to identify and describe. Examples of this growing group of R&D organizational entities are those formed by cooperative agreements, domestic and international collaborations, alliances, and strategic research partnerships. The entities formed by these arrangements permit the pooling of assets and the sharing of knowledge regardless of organizational lines and geographic boundaries.

In his recent work, Chesbrough terms these arrangements “open innovation” (Chesbrough, 2003). In contrast with the closed innovation stereotype of an embedded corporate research center that seeks to single-handedly develop, commercialize, and dominate an emerging technology, the open innovation companies combine their internal capabilities with an awareness of the innovation marketplace and a business model that embraces licensing, acquisitions, and collaboration to maximize the speed and impact of innovation. New forms of innovation may allow companies to reduce spending on internal R&D facilities while continuing active R&D programs.

When carried into other areas of the business, at their ultimate, these arrangements define a new kind of 21st century enterprise that separates its physical and legal boundaries and fragments the old legal entity into a labyrinth of licenses, contracts, and other trading agreements, often involving multiple jurisdictions (Eustace, 2003). These trends are further characterized by Romer as being part of a “soft revolution,” in which assets described as soft, immaterial, or intangible may be key economic goods (Romer, 2003). An analysis by the European Community suggests that this “hidden” productive economy, which is intimately related to R&D, requires new measurement tools. Its report found that these new arrangements would not be appropriately depicted in current measures.

Today’s R&D expenditure surveys produce measures based on firms that conduct R&D in traditional entities, such as labs, and are operated within fixed boundaries with resources that are physical and owned. To the extent that they fail to identify the new organizational structures, they give a flawed picture of innovative activity. Estimates of total innovative activity by Hollander and Mansfield suggest that focusing on “dedicated” R&D effort may be missing half of all innovative activity.

Globalization of R&D Activity

One of the most important and complex trends is the so-called globalization of R&D and innovation. There is much testimony to an apparent rise in cross-border corporate R&D, although past studies have largely been anecdotal or based on very small samples. In a paper presented to the National Research Council workshop on R&D data issues, Kuemmerle (2003) summarized the results of a study of the foreign direct investment decisions of 32 multinational firms. He found extensive globalization of activity among his sample of multinational corporations, with an average of nearly five international R&D sites per firm. These firms invested in R&D activities abroad either to appropriate relevant knowledge and skills through externalities, or to transfer knowledge and skills within the company from knowledge creation to manufacturing and marketing. His report concluded with a recommendation for more detailed data collection and the standardization of data collection on foreign direct investment in R&D across more countries.

There are several indirect indicators of the extent of globalization of R&D, drawn primarily from three major data sources: counts of international business alliances maintained in the Cooperative Agreements and Technology Indicators database compiled by the Maastricht Economic Research Institute on Innovation and Technology (CATI-MERIT); records of government-to-government cooperation maintained by RAND; and NSF data on U.S. expenditures financed by foreign sources, industrial R&D performed by foreign affiliates of U.S. companies, and industrial R&D performed by U.S. affiliates of foreign companies. These data sources depict a large amount of international activity but by no means an explosion in the internationalization of R&D. In fact, these indirect measures suggest a leveling off in new activity following a peak in the mid-1990s. The importance of the international sector suggests a need for more and better expenditure measures that cross international borders.

There is no doubt that the globalization of R&D will have a significant impact on the measurement of R&D. A fresh examination of the impact of globalization by the Conference Board, which is reviewing the validity of science and technology indicators for international comparisons and for conducting R&D surveys of multinational firms, has concluded that substantial organizational change in multinationals is affecting the way R&D is conducted (McGuckin, 2004). The study observes that research is becoming more closely aligned with corporate business plans, and that basic research is not very relevant to most firms since multinational businesses rarely undertake research that does not have some potential for application. Research is increasingly being conducted in closer collaboration with outsiders (universities and business alliances), and development makes extensive use of alliances, particularly customer and supplier partnerships. Some of the costs of R&D are being borne by suppliers and probably not identified as R&D on their books.

Some of the concern focuses on the global outsourcing and offshoring of various aspects of U.S. business operations, including R&D. It has been suggested that research and technology outsourcing is coming of age, and a great deal has been written about the subject of external sourcing of R&D (Howells, 1999). However, little is known about the extent of global outsourcing of R&D. There is an expanding body of knowledge, some of it coming from the Sloan Foundation Industry Centers program, that suggests that outsourcing of R&D is becoming an issue of some importance. This path of inquiry suggests the need for the systematic collection of information on this phenomenon.

CONCEPTS AND DEFINITIONS

Understanding of the changing environment for research and development is conditioned by the concepts and definitions that underlie the measurement of R&D. These concepts and definitions have been shaped, in turn, by the changing environment for research and development.

This symbiotic relationship between the phenomenon to be measured and the measures themselves constitutes a continuing challenge to NSF and the science and technology constituents the agency serves. On one hand, it is important that concepts reflect changing reality, and definitions are revised as conditions change and understanding advances. On the other, it is important that concepts and definitions are consistent with those used in other countries, particularly U.S. trading partners; that they are consistent over time so that intertemporal comparisons can be made; and that they represent a general professional and public understanding of the phenomena to be measured so that they have credibility. There is a tension between these competing goals.

It is important to start at the beginning—with the concept of R&D expenditures. According to the Frascati Manual—the basic international source of methodology for collecting and using R&D statistics—an organization may have expenditures either within the unit (intramural) or outside it (extramural) (Organisation for Economic Co-operation and Development, 2002a). Each of the major R&D entities—government, academia, and industry—has a mixture of intramural and extramural expenditures. A proper accounting of R&D expenditures requires an understanding of the flow of resources among units, organizations, and sectors.

In practice, these rather straightforward concepts are quite difficult to measure. For example, government generally makes extramural expenditures. Some that are made through direct procurement, grants, cooperative agreements, or other financial instruments are quite easy to measure and to be reported by the recipient. However, there is an entire class of expenditures in the form of free services, implicit rent, forgiven loans, bonuses, and tax credits for which the value is less directly measurable and much more difficult for the beneficiary to report.

Similarly, industry makes direct investment in R&D that is relatively easy to measure and indirect investment that is harder to quantify. Industrial R&D spending data are collected at the corporate level and classified by the principal business of the firm. Thus, the diversity of R&D performed by many multinational companies is obscured, and large amounts of R&D expenditures can be reclassified from year to year as a result of reclassifying the principal business of one or more large companies (e.g., reclassifying IBM from computer manufacturing to computer services).
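The reclassification effect described above can be illustrated with a small sketch in Python. The firms, industry labels, and spending figures are hypothetical, and the aggregation rule (all of a company's R&D is assigned to the industry of its principal business) is a simplification of the survey practice described in the text.

```python
from collections import defaultdict


def totals_by_industry(firms):
    """Sum company-level R&D into the industry of each firm's principal business."""
    totals = defaultdict(float)
    for firm in firms:
        totals[firm["industry"]] += firm["rd"]
    return dict(totals)


# Hypothetical firms and R&D spending (millions of dollars).
year1 = [
    {"name": "Firm A", "industry": "computer manufacturing", "rd": 5000.0},
    {"name": "Firm B", "industry": "computer manufacturing", "rd": 1000.0},
    {"name": "Firm C", "industry": "computer services", "rd": 800.0},
]

# Year 2: spending is unchanged, but Firm A's principal business is reclassified.
year2 = [
    dict(firm, industry="computer services") if firm["name"] == "Firm A" else dict(firm)
    for firm in year1
]

before = totals_by_industry(year1)
after = totals_by_industry(year2)
print("before:", before)
print("after: ", after)
```

In this sketch no firm changes its spending, yet the manufacturing total falls and the services total rises by the full amount of Firm A's R&D, while the economy-wide total is unchanged.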

Another major issue in the defining of R&D has to do with the general R&D typology—basic research, applied research, and development applied to all sectors—and the imprecision with which these components are reported. For example, there are incompatibilities in the very measure of basic research. The guidance that NSF provides on the questionnaires concerning the cutoff point between basic research and applied research is deliberately vague. Given the diversity and complexity of activities that might be subjectively (and objectively) defined as basic research, the agency has relied on the perspective of the survey respondents in reporting these data. It is admittedly a challenge for most respondents. Even when respondents do have a clear idea of the activities that they would classify as basic research, generally their accounting and budget records are not maintained with this classification coded.

In the survey of business enterprise R&D, basic research is defined to include the cost of research projects that represent original investigation for the advancement of scientific knowledge and that do not have specific immediate commercial objectives, although they may be in the fields of present or potential interest to the reporting company. Applied research is to include the cost of research projects that represent investigation in discovery of new scientific knowledge and that have specific commercial objectives with respect to either products or processes.

For academic R&D, the guidance is to rely on official budgetary and regulatory definitions for R&D: R&D for purposes of this survey is the same as “organized research” as defined in Section B.1.b. of OMB Circular A-21 (revised). It includes all R&D activities of an institution that are separately budgeted and accounted for. R&D includes both “sponsored research” activities (sponsored by federal and nonfederal agencies and organizations) and “university research” (separately budgeted under an internal application of institutional funds). Research is systematic study directed toward fuller knowledge or understanding of the subject studied. Research is classified as either basic or applied, according to the objectives of the investigator. Basic research is directed toward an increase of knowledge; it is research for which the primary aim of the investigator is a fuller knowledge or understanding of the subject under study rather than a specific application thereof. Thus, in reality, basic research is to be defined at the individual grant level by each principal researcher. When this is not possible, each department head or other relevant research coordinator should review the grants. Here is another method used by one institution to estimate the amounts of basic and applied research: all federally funded grants and R&D funded from other universities, foundations, and nonprofit organizations are considered to be basic research. R&D funds received through federal cooperative agreements and federal contracts and most state-funded R&D are, by definition, applied research.
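The one-institution estimating method just described amounts to a lookup on funding source and funding instrument. The sketch below, in Python, is one possible reading of that rule; the category labels, the sample awards, and the "unclassified" fallback are assumptions made for illustration, and the text's "most state-funded R&D" is simplified here to all state-funded R&D.

```python
def classify_award(source, instrument):
    """Apply the rule of thumb described above.

    Returns "basic", "applied", or "unclassified" (the last is an
    assumption for sources the rule does not mention).
    """
    if source == "federal":
        if instrument == "grant":
            return "basic"
        if instrument in {"cooperative agreement", "contract"}:
            return "applied"
    if source in {"other university", "foundation", "nonprofit"}:
        return "basic"
    if source == "state":
        # Simplification: the text says "most", not all, state-funded R&D.
        return "applied"
    return "unclassified"


# Hypothetical awards: (source, instrument, amount in thousands of dollars).
awards = [
    ("federal", "grant", 400),
    ("federal", "contract", 250),
    ("foundation", "grant", 100),
    ("state", "grant", 150),
    ("industry", "contract", 300),
]

totals = {"basic": 0, "applied": 0, "unclassified": 0}
for source, instrument, amount in awards:
    totals[classify_award(source, instrument)] += amount
print(totals)
```

A rule of this kind classifies by who pays and how, not by the investigator's objectives, so its results can differ from the grant-level review that the survey guidance calls for.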

Finally, for the survey of the government sector, NSF and the Office of Management and Budget use the same definitions. Basic research is defined as systematic study directed toward fuller knowledge or understanding of the fundamental aspects of phenomena and of observable facts without specific applications toward processes or products in mind. Applied research is defined as systematic study to gain knowledge or understanding necessary to determine the means by which a recognized and specific need may be met. Development is defined as systematic application of knowledge or understanding, directed toward the production of useful materials, devices, and systems or methods, including design, development, and improvement of prototypes and new processes to meet specific requirements (U.S. Office of Management and Budget, 2003a).

The fields-of-science taxonomies are another example of definitions that have been maintained over time despite clear evidence that the phenomena to be measured have changed. Basic and applied research sponsored by federal agencies and conducted at universities is classified by fields that reflect the traditional and, in the view of some critics, antiquated organization of university departments. The field classification of public-sector research and the industrial classification of private-sector R&D are not comparable, and classifications are not standard across NSF’s R&D and personnel surveys. Additional discussion of the fields-of-science issues is found in Chapter 6.