3

Ensuring Data Access and Utilization

Users of NOAA’s environmental satellite data fall into two distinct groups: those that receive data directly from NOAA and those that receive data indirectly as data-derived, value-added products from resellers. The group that receives data directly comprises a relatively small number of large institutions that individually consume huge amounts of data, such as the National Weather Service, the Department of Defense, universities, and other research institutions. The group that receives data indirectly includes a very large number of private citizens who individually consume a small amount of data from sources such as Accuweather and Weather.com. For this second group, the importance of environmental satellite data and the total volume consumed will grow dramatically as mechanisms for delivery of the data continue to improve.

MAKING IT EASIER TO USE ENVIRONMENTAL SATELLITE DATA

Data utilization by both direct and indirect users can be dramatically increased by making data easier to locate and use. The utilization of any data set is highly dependent on the following four “ease” factors: (1) the ease of discovering the data set, (2) the ease of understanding what the data set is, (3) the ease of acquiring the data set, and (4) the ease of translating the data set to a usable format. NOAA can best ensure utilization of its environmental satellite data by making certain that potential users can easily find, understand, obtain, and analyze the data.

The Internet has totally changed user communities’ expectations regarding what acceptable data availability is. Twenty years ago a researcher looking for a data set began by searching in a library through card catalogs and periodical indexes, not uncommonly spending weeks finding relevant citations, locating the appropriate publication and its authors, requesting a copy of their data, and then waiting for the data to arrive by mail. Twenty years ago, a month was a reasonable and acceptable time for the entire data discovery and acquisition process. Today the expectation for that time frame has been compressed to less than a day.

Today’s data users want to go to their favorite search engine and type in a few keywords describing the data they are looking for. They then expect to immediately see a listing of all relevant sources with direct links to Web pages that describe the data and are capable of delivering it directly to users’ desktops with a simple click of their mouse. Finally, they expect the data to be in a format that is directly usable by whatever software package they happen to have. These are not unreasonable expectations for a potential data user, given today’s technologies. In fact, NOAA could make this specific data discovery and delivery scenario integral to its goal for users’ access to and use of its environmental satellite data. The achievement of the goal of near-real-time data availability is dependent on NOAA’s fulfillment of the four “ease” factors mentioned above and discussed in more detail below.

Data cannot be used unless it has been found and understood. The first step in making data widely available is to specify a metadata standard that will be applied to all data sets produced by a data supplier. The purposes of a metadata standard are to provide a common terminology and set of definitions for documentation of the digital data being described. The standard should establish the names and the structure of data elements to be used for these purposes as well as their definitions and information about the values that are to be provided for the data elements. (Metadata for federal geospatial data are required by Executive Order 12906, “Coordinating Geographic Data Acquisition and Access: The National Spatial Data Infrastructure,” which was signed in 1994 by President Clinton.) These metadata can then be used as a mechanism for distributing key words to agency Web sites and public search engines, thus enabling users to quickly and easily find and understand the data.
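As a rough illustration of how a metadata standard supports discovery, the sketch below defines a hypothetical metadata record and a keyword search over a small catalog. The field names (`title`, `abstract`, `keywords`, `data_format`) and the two catalog entries are assumptions made for this example; they are not taken from any particular metadata standard or NOAA catalog.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class MetadataRecord:
    """Hypothetical metadata record; the field names are illustrative only."""
    title: str
    abstract: str
    keywords: List[str] = field(default_factory=list)
    data_format: str = "unknown"


def search(catalog: List[MetadataRecord], query: str) -> List[MetadataRecord]:
    """Return the records whose title or keywords contain every query term."""
    terms = query.lower().split()
    hits = []
    for record in catalog:
        haystack = " ".join([record.title, *record.keywords]).lower()
        if all(term in haystack for term in terms):
            hits.append(record)
    return hits


# Two made-up catalog entries, used only to exercise the search.
catalog = [
    MetadataRecord(
        title="Sea-surface temperature, daily global grid",
        abstract="Example entry for illustration.",
        keywords=["sea-surface temperature", "ocean", "satellite"],
        data_format="NetCDF",
    ),
    MetadataRecord(
        title="Cloud mask, hourly regional",
        abstract="Example entry for illustration.",
        keywords=["cloud", "mask", "satellite"],
        data_format="HDF",
    ),
]

for record in search(catalog, "temperature ocean"):
    print(record.title, "-", record.data_format)
```

Because every record uses the same element names, the same search works across all data sets in the catalog, which is the practical benefit of a common standard.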

A user who has located data he believes will be useful will want to acquire the data as quickly as possible. If the data set is of a reasonable size, then the user should be able to download the entire set to a workstation via a widely accepted transfer protocol such as FTP or HTTP. If the data set is too large for such a transfer, then the user should be able to either view a compressed or degraded version of the data, or alternatively, download a subset of the data at its full resolution. The user can then order a CD or DVD of the data and have it delivered within a day or two.

Once a user has downloaded a data set to her desktop, it is imperative that the data be in a format that can be used easily, ideally one of the most widely usable binary formats. When that is not possible, the format of the data should be fully documented so that the data can be easily imported into the user’s application. All data sets should be readily usable by non-programmers.

MEETING USERS’ REQUIREMENTS FOR ENVIRONMENTAL SATELLITE DATA

Direct Users

NPOESS and GOES-R will increase by several orders of magnitude the volume of NOAA data delivered to direct users. This increase must be accommodated by all the systems that support the operational and research environmental communities, and particularly by the components that support data production, storage, and distribution. For example, today the NOAA Satellite Active Archive/CLASS ingests approximately 0.2 terabytes per day. The launch of the first NPOESS satellite in 2009 will raise the daily archive rate to more than 5.3 terabytes per day. Subsequent NPOESS launches will raise the rate by approximately 1 terabyte per day per spacecraft until the predicted archive rate is in excess of 10 terabytes per day.1 In addition, each satellite in the GOES-R series (first launch planned for 2012) is expected to produce much more than 1 terabyte per day of data. Therefore, adaptable data storage and distribution strategies, which can be tailored to the specific needs of users, are needed to achieve a cost-effective set of capabilities that are part of the Integrated Earth Observation System.
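The archive figures quoted above can be turned into a simple projection. The sketch below treats "more than 5.3 terabytes per day" and "approximately 1 terabyte per day per spacecraft" as exact values, which is a simplifying assumption, and it ignores the GOES-R contribution entirely.

```python
CURRENT_RATE_TB = 0.2        # daily ingest today, from the text
FIRST_LAUNCH_RATE_TB = 5.3   # daily rate after the first NPOESS launch
PER_SPACECRAFT_TB = 1.0      # assumed exact increment per later spacecraft


def daily_rate(spacecraft: int) -> float:
    """Projected archive rate in TB/day with `spacecraft` NPOESS satellites."""
    if spacecraft < 1:
        return CURRENT_RATE_TB
    return FIRST_LAUNCH_RATE_TB + PER_SPACECRAFT_TB * (spacecraft - 1)


def spacecraft_to_exceed(target_tb: float) -> int:
    """Smallest number of spacecraft whose projected rate exceeds target_tb."""
    count = 1
    while daily_rate(count) <= target_tb:
        count += 1
    return count


for n in range(0, 7):
    print(f"{n} spacecraft: {daily_rate(n):.1f} TB/day")
print("Spacecraft needed to exceed 10 TB/day:", spacecraft_to_exceed(10.0))
```

Under these assumptions the projected rate first exceeds 10 terabytes per day with the sixth spacecraft.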

NOAAPORT, an important mechanism for broadcast distribution of operational weather data products, provides data at a rate of 1.5 Mbps. While incremental, or even step-function, increases in transmission bandwidth are possible via NOAAPORT-like direct broadcast system technologies, these systems do not scale well and offer limited additional capability unless the majority of the data products being broadcast are required by most of the user base. Although there will always be some high-volume users who can and want to receive all available data (e.g., NCEP), many other operational and scientific users would find such a data tsunami overwhelming and not useful. Then, the issue becomes how to get the right data to the right user at the right time.
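A back-of-the-envelope calculation shows why a 1.5 Mbps broadcast cannot simply carry everything. The sketch below assumes decimal units (1 TB = 10^12 bytes), a fully utilized link, and no protocol overhead; all three are simplifications chosen for this example.

```python
SECONDS_PER_DAY = 86_400


def daily_capacity_gb(link_mbps: float) -> float:
    """Gigabytes a link can carry in one day at full utilization."""
    bytes_per_second = link_mbps * 1e6 / 8
    return bytes_per_second * SECONDS_PER_DAY / 1e9


def days_to_transfer(volume_tb: float, link_mbps: float) -> float:
    """Days needed to move volume_tb terabytes over the link."""
    seconds = volume_tb * 1e12 * 8 / (link_mbps * 1e6)
    return seconds / SECONDS_PER_DAY


print(f"1.5 Mbps carries about {daily_capacity_gb(1.5):.1f} GB per day")
print(f"5.3 TB would take about {days_to_transfer(5.3, 1.5):.0f} days")
```

Under these assumptions the link carries roughly 16 GB per day, so a single day of the 5.3-terabyte archive volume cited earlier would take most of a year to broadcast in full.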

The concept of operations for data distribution should center on tailoring data products to systems’ and users’ processing, storage, distribution, communications resources, and information requirements. Among the candidate strategies for meeting the needs of the broad spectrum of operational and scientific users of NOAA’s data are the following:

- Direct broadcast: The current direct broadcast capabilities of POES, GOES, and NOAAPORT are critical elements of NOAA’s overarching strategy to serve society’s needs for weather and water information. NOAA POES, GOES, and NASA EOS satellites all currently provide direct-broadcast capability (for details, see the case study on direct broadcast in Appendix D). Although it is probable that Internet services for data distribution will satisfy a large fraction of the user community, it remains likely that because of the concerns about the availability and quality of commercially provided Internet service, as well as about the cost of maintaining private networks, direct broadcast will remain a valid option for many users, either as the primary data transport method or as a backup. The concerns of direct-broadcast users (e.g., data resolution, timeliness, bandwidth requirements, systems/technology/media, user upgrade cost, and transition approach) all have to be addressed.

- Subsets and subsampling: Subsets of data products can be tailored routinely and/or in response to a specific request (on demand). Routine subsetting is done in response to standing requirements (e.g., regional, or for a particular data characteristic) that can be defined a priori. On-demand subsetting occurs in response to current and forecast conditions. For example, routine subsetting can be used to cover areas of tropical storm formation that can be defined well in advance, whereas on-demand subsetting applies in the coverage of an existing storm’s path, which must be defined and updated as frequently as several times a day. On-demand subsetting to cover thunderstorm and tornado conditions has to be continuously refined on a time scale of hours, or even minutes. Routine subsetting can also be done in a temporal context: for example, a standing requirement for general surveillance might require delivery of information only several times a day, rather than hourly or every time an area is observed.

Subsampling is done in the context of either reduced spatial (e.g., 10-km resolution rather than a standard 1-km-resolution product) or, perhaps, reduced spectral (e.g., reduced number of channels) resolution.

Subsetting and subsampling can be combined to provide a continuum of data products ranging from broad-area, low- to moderate-resolution products to regional (or smaller) high-resolution products.

- Subscriptions: Data products supplied by subscriptions can range from all of the data all of the time, to some of the data some of the time. For example, operational weather modelers may want all available data, whereas regional users may require high- or moderate-resolution data, but only for limited geographic areas and/or times. To fulfill validated subscriptions, NOAA can develop appropriate data products (e.g., for characterizing the upper Midwest or tropical regions) based on the use of subsetting and subsampling capabilities. Similarly, event-driven subscriptions (e.g., triggered by lifted index or cloud cover) can be used to deliver data that meet standing or ad hoc needs.

- Search and order: It will be difficult, if not impossible, to completely define a set of subscriptions that fully cover the range of conditions for which a user might need data. For example, an Advanced Weather Interactive Processing System (AWIPS) user who is monitoring an area might decide that additional information not already covered by a static or dynamic subscription would be useful. An ad hoc query, from either the AWIPS terminal or a user client, would allow the user to “drill down” into the data for more resolution, more bandwidth, more recent data, and so on, or to obtain data from other areas, time periods, or sensors that are helpful in understanding the current situation for forecasting.

- Peer-to-peer access: As data volumes increase, the traditional “person in the loop” search and order will be increasingly supplemented by peer-to-peer2 system interfaces that automatically harvest NOAA data repositories for the data needed by users to generate their own domain-specific information products.

- Maintaining a capability for data assurance: Data assurance, the guaranteed delivery of scientifically valid data, is a key user requirement that system architecture, design, implementation, and operations must all support. Users must be able to tell, for example, if data has become corrupted during transmission, and so the data must have a defined, controlled format that ensures data integrity regardless of the delivery media. Similarly, data-compression techniques to reduce transmission and storage requirements must ensure the retention of scientific and operational validity and function from the perspective of the system and the user. Subsetting and subsampling create new products that must also meet NOAA’s quality standards.
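One common way to let a user detect corruption in transit, independent of the delivery medium, is to ship a cryptographic digest alongside the data. The sketch below uses SHA-256 from the Python standard library; the choice of algorithm and the `package` and `is_intact` helper names are assumptions made for illustration, not a description of any NOAA system.

```python
import hashlib


def package(payload: bytes) -> dict:
    """Bundle a payload with its SHA-256 digest before distribution."""
    return {"payload": payload, "sha256": hashlib.sha256(payload).hexdigest()}


def is_intact(message: dict) -> bool:
    """Recompute the digest on receipt and compare it with the shipped one."""
    return hashlib.sha256(message["payload"]).hexdigest() == message["sha256"]


sent = package(b"example radiance granule")
print("Received intact:", is_intact(sent))

# Simulate a single corrupted byte in transit.
damaged = dict(sent, payload=b"example radiance granulf")
print("Damaged copy intact:", is_intact(damaged))
```

A digest only detects corruption; recovering the data still requires retransmission or a separate error-correcting scheme.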

Despite the potential of each of these approaches to reduce communications requirements and enable the increased use of environmental satellite data throughout the operational and scientific community, these strategies pose additional requirements for data production, distribution, storage, and management.
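The subsetting and subsampling strategy described in the list above can be sketched on a small gridded product. The example below assumes the product is a plain two-dimensional grid with one value per cell and uses simple decimation (keeping every n-th cell) for subsampling; an operational system might instead average blocks of cells. The grid contents and index ranges are made up for illustration.

```python
def subset(grid, rows, cols):
    """Return the region bounded by (start, stop) index pairs, stop exclusive."""
    row_start, row_stop = rows
    col_start, col_stop = cols
    return [row[col_start:col_stop] for row in grid[row_start:row_stop]]


def subsample(grid, step):
    """Reduce spatial resolution by keeping every step-th row and column."""
    return [row[::step] for row in grid[::step]]


# A made-up 100 x 100 grid standing in for a 1-km-resolution product;
# each cell value encodes its own position as row * 100 + column.
grid = [[r * 100 + c for c in range(100)] for r in range(100)]

coarse = subsample(grid, 10)                 # broad area, 10-km spacing
region = subset(grid, (20, 30), (40, 60))    # regional, full resolution
regional_coarse = subsample(region, 5)       # regional, reduced resolution

print(len(coarse), "x", len(coarse[0]))
print(len(region), "x", len(region[0]))
print(len(regional_coarse), "x", len(regional_coarse[0]))
```

Combining the two operations in either order gives the continuum of products the text describes, from broad-area coarse grids to small full-resolution regions.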

Level-0 or level-1 data products have to be stored, and standard products produced must be based on operational and scientific needs. Dynamic requests create a need for ad hoc, on-demand processing to generate tailored products as described above. In addition, subscriptions based on data content (e.g., a parameter exceeding a predefined threshold value) are a source of additional requests for on-demand products and distribution. Simultaneous demands from many users or denial-of-service attacks could overload the data distribution system and degrade overall performance.

2 Generally, a peer-to-peer (or P2P) computer network is any network that does not have fixed clients and servers, but rather a number of peer nodes that function as both clients and servers for the other nodes on the network (in contrast with the client-server model). Any node on a P2P network is able to initiate or complete any supported transaction. Peer nodes may differ in local configuration, processing speed, network bandwidth, and storage quantity. Popular examples of P2P are file-sharing networks. See Wikipedia at http://en.wikipedia.org/wiki/Peer_to_peer.

A long-term archive is needed to protect and preserve the permanent record, thereby enabling improvements in science through reanalysis, reprocessing, and development of new, time-series products. Requirements for access tend to be driven by long-term analysis campaigns and data volume rather than by timeliness. The archive must contain, at a minimum, the level-0 or level-1 products, the production software, and the control/initialization parameters used in operations. Selected higher-level products may also be archived to support distribution of historic data sets, provide a record for long-term studies and studies of trends, and support reanalysis and reprocessing. Reprocessing can then be performed on the data to improve algorithm performance or validate the impact of system changes via comparison with the operational products. Distribution of the archived data, reprocessed data, or new products is performed using the subscription and search-and-order capabilities described above.

Short-term storage is needed to meet users’ immediate requirements and to provide a complete look at the recent and current environment, and so requirements for access are very time sensitive. Short-term storage also supports ad hoc subscriptions and search and order, and holds data between production and distribution for both data access and data distribution scenarios.

System management is required to resolve resource issues that arise when demand exceeds production, to provide for archiving/storage and distribution so as to ensure that needs are met in a prioritized manner, and to ensure that system security and integrity are maintained. System management can mitigate these challenges by allocation of additional resources (e.g., grid computing for processing), offloading service requests to back-up sites, or suspending service requests until higher-priority needs are met.

In summary, the higher-resolution data and improved temporal coverage offered by the NPOESS and GOES-R systems require innovative approaches to the production, archiving and storage, and distribution of the data so that NOAA’s goals for the utilization of environmental satellite data by a broad range of users for the benefit of society can be realized.3

Indirect Users

Internet and cellular radio communication technologies are already expanding the use of NOAA’s environmental satellite data by making data-derived products such as weather maps and forecasts available to the private citizen via Web browsers and cellular phones. Such use of data should increase dramatically over the next 10 years as use of the Internet and cellular communication grows. For example, real-time lightning strike alerts in a user’s local area could be easily accessible from the GOES-R (2012+ time frame) Geosynchronous Lightning Mapper, which will identify the time and location of each strike. With constrained budgets a fact of life, NOAA’s emphasis should include the assured availability of a prioritized, validated data product set, beginning with calibrated-at-aperture radiances, atmospherically corrected radiances, cloud and other masks, and basic products of key utility (e.g., measurements of sea-surface temperature) before focusing on the complete spectrum of possible products. A possible consequence of this approach could be a focus on supporting the value-adding efforts of researchers and resellers rather than the interests of individual consumers (for every possible product). It is unlikely that NOAA will have the expertise or the resources to deal with the growing diversity of requirements and interests of hundreds of thousands of individual data users. Instead, NOAA should concentrate on developing and standardizing data portals suitable for data resellers, universities, and other value-adding parties.

TOWARD ENHANCED DATA UTILIZATION—A SAMPLING OF CURRENT EFFORTS

The Geospatial One-Stop Initiative

President George W. Bush’s e-government strategy has identified several high-payoff, government-wide initiatives to integrate agency operations and information technology investments.4 The goal of these initiatives is to eliminate redundant systems and significantly improve the government’s quality of customer service for citizens and businesses. One of these initiatives is entitled “Geospatial One-Stop.”

Geospatial data identifies the geographic location and characteristics of natural or constructed features of and boundaries on Earth. Although a wealth of geospatial information exists, it is often difficult to locate, access, share, and integrate in a timely and efficient manner. Myriad government organizations collect geospatial data in different formats and standards to serve specific missions. This can result in inefficient spending on information assets, and impedes the ability of federal, state, and local government to perform critical intergovernmental functions, such as homeland security.

The Geospatial One-Stop initiative will promote coordination and alignment of geospatial data collection and maintenance among all levels of government. The initiative’s goals include:

- Developing portals for seamless access to geospatial information,

- Providing standards and models for geospatial data, and

- Creating an interactive index to geospatial data holdings at federal and non-federal levels.

Encouraging greater coordination among federal, state, and local agencies as regards existing and planned geospatial data collections, Geospatial One-Stop will accomplish these goals by accelerating the development of the National Spatial Data Infrastructure (NSDI), which will provide federal, state, and local governments, as well as private citizens, with “one-stop” access to geospatial data. Interoperability tools, which allow different parties to share data, will be used to migrate current geospatial data from all levels of government to the NSDI, following data standards developed and coordinated through the Federal Geographic Data Committee using the standards process of the American National Standards Institute. A comprehensive Web portal will then be developed and deployed to provide “one-stop” access to standardized geospatial data.

Much of the data that NOAA is responsible for managing is geospatial in nature and is therefore subject to the requirements of the Geospatial One-Stop initiative. For example, hyper-dimensional environmental satellite data is obtained from observing Earth’s land surface, oceans, and atmosphere in three spatial dimensions as a function of time, using multiple sensors and multiple orbit planes, each with specific solar-illumination and viewing-zenith angles, with multi- and hyper-spectral sensor capability. From these data are created multi-dimensional environmental data products with accompanying metadata. Typical utilization includes data development, processing and reprocessing, validation, and analysis. Many of these processes require integrating simultaneous and collocated “truth” data from ground-based and airborne in situ and remote assets, including data acquisition, management, imaging, and processing in arbitrary multiple dimensions, including spatial/relational methods in time and space.

The Experience with EOSDIS

The Earth Observing System Data and Information System (EOSDIS) manages data from NASA’s science research satellites and field measurement programs. The system has been operating since August 1994. EOSDIS is a distributed system with many interconnected nodes, each with specific responsibilities for the production, archiving, and distribution of data products. Eight of these nodes constitute the Distributed Active Archive Centers (DAACs) around the United States.

Currently, EOSDIS is managing and distributing data from:

- EOS missions (Landsat 7, QuikSCAT, Terra, Aqua, Aura, and ACRIMSAT);

- Pre-EOS missions (UARS, SeaWIFS, TOMS-EP, TOPEX/Poseidon, and TRMM); and

- All of the Earth Science Enterprise legacy data (e.g., Pathfinder data sets).

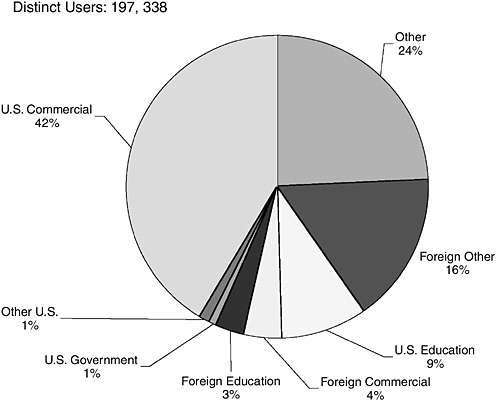

EOSDIS holds more than 1450 data sets. In fiscal year 2000, EOSDIS supported more than 104,000 unique users and filled 3.4 million product requests (over 8.1 million products were delivered). Repeat users averaged 60 percent. EOSDIS customers include researchers; federal, state, and local governments; application users; the commercial remote sensing community; teachers; museums; and the general public. Anyone can access EOSDIS data at any DAAC through the EOS Data Gateway.

EOSDIS is managing extraordinary rates and volumes of scientific data. The Terra spacecraft produces 194 gigabytes of data per day with a data downlink speed of 150 megabits per second. Averaged over an orbit, Terra collects data at 18.36 megabits per second. In addition to Terra, Landsat 7 is producing 150 gigabytes of data per day.

In August 1999, NASA’s entire Earth science data holdings were estimated at about 284 terabytes. Terra’s data when processed through higher levels totals 850 gigabytes per day. The Terra satellite alone will have doubled NASA’s Earth science holdings in less than 1 year. At 194 gigabytes per day Terra takes in almost as much data as the Hubble Space Telescope acquires in an entire year, as much data as the Upper Atmosphere Research Satellite obtains in 1 1/2 years, and as much data as the Tropical Rainfall Measuring Mission obtains in 200 days.
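The claim that Terra alone doubles the holdings in under a year follows directly from the figures quoted above. The short check below assumes decimal units (1 TB = 1,000 GB) and a constant processed-data rate, both simplifications made for this example.

```python
HOLDINGS_TB = 284.0          # NASA Earth science holdings, August 1999
PROCESSED_GB_PER_DAY = 850.0 # Terra data after higher-level processing
RAW_GB_PER_DAY = 194.0       # Terra raw data volume
SECONDS_PER_DAY = 86_400


def days_to_double(holdings_tb: float, rate_gb_per_day: float) -> float:
    """Days for a constant daily rate to add a volume equal to the holdings."""
    return holdings_tb * 1000 / rate_gb_per_day


def average_rate_mbps(gb_per_day: float) -> float:
    """Average data rate in megabits per second implied by a daily volume."""
    return gb_per_day * 1e9 * 8 / SECONDS_PER_DAY / 1e6


print(f"Days to double holdings: {days_to_double(HOLDINGS_TB, PROCESSED_GB_PER_DAY):.0f}")
print(f"Implied average raw rate: {average_rate_mbps(RAW_GB_PER_DAY):.1f} Mbps")
```

With these inputs the holdings double in roughly 334 days, and the implied average raw rate of about 18 Mbps is in line with the per-orbit figure quoted earlier; the small difference depends on how a gigabyte is defined.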

Figure 3.1 shows user demand for EOSDIS data products during fiscal year 2003.

FIGURE 3.1 User demand for EOSDIS data products during fiscal year 2003. SOURCE: Presented to the committee by H.K. Ramapriyan, assistant project manager, ESDIS Project, NASA.

Case Study of Temperature Measurements

One example of how NOAA has developed a means to take advantage of externally processed, value-added satellite data is found in global atmospheric temperature products generated from microwave emissions. In attempting to create global measurements of upper air temperatures with long-term stability, two University of Alabama, Huntsville scientists developed a relatively homogeneous time series of globally gridded, deep-layer temperature measurements in 1990. These products required highly specialized knowledge of the NOAA microwave instruments and of the spacecraft on which they flew. Years of subsequent research, funded through scientific grants and published in the peer-reviewed literature, have been necessary to discover minuscule, spurious effects that are of no consequence to the real-time weather forecasting mission, but that are significant for long-term climate monitoring. For example, adjustments were developed for errors introduced from orbital drift, calibration shifts, and unanticipated biases, all of which generally fall below 0.1 °C in magnitude. Since knowledge of changes in global temperature needs to be precise to a few hundredths of a degree per decade, such errors are important to detect and resolve.

Producing a data set with precision of this level on a continuing basis might best be called operational research. Unfortunately, there has not been a source of funding for this type of project because it requires constant human attentiveness and intervention rather than a set period of performance.

Because of the highly specialized expertise required for the construction of these data sets, few scientists undertake such endeavors, and such efforts are generally outside the tasks mandated for NOAA employees. NOAA has deemed a few of these data construction activities as vital for its climate monitoring function and so has initiated small contracts with groups such as the University of Alabama, Huntsville, which then provide monthly updates of these products, usually by the tenth day after each month’s end. As additional spurious effects are discovered and minimized, these data sets are updated, metadata files describing the issue are created, and if appropriate, the results are published in the peer-reviewed literature.

This case study presents a scenario in which NOAA’s mission to provide climate-quality data records for national and international assessments is met through the expertise of university scientists at relatively low cost.