Engineering and the System Environment

Paul C. Tang

Palo Alto Medical Foundation

I will address three questions: (1) how engineering can help determine characteristics of a desirable information infrastructure; (2) how engineering can help establish data standards; and (3) how engineering can help build an information infrastructure for health care.

Ethnography, the social science method of studying human cultures in the field, is a useful technique for understanding information needs in the health care environment. A derivative technique, video ethnography, includes the video recording of subjects in their natural state. With the consent of patients and physicians, we used observational ethnography and video ethnography to study the information-seeking habits of physicians.

Time and motion studies have shown that physicians spend up to 38 percent of their time foraging for data in the paper medical record and creating more data for the record (Mamlin and Baker, 1973). Formal studies of physicians’ information needs showed that in 81 percent of cases, physicians could not find information important to the patient visit (between one and 20 items, four on average) at the time decisions were being made (Tang et al., 1994). Physicians often spent additional time trying to track down the missing information, including asking patients what they might have heard. Even so, they often ended up making decisions without it, even though the paper-based medical record was available 95 percent of the time. In summary, although clinical decision making depends on the availability of patient data, domain information, and administrative information, these data are routinely unavailable when physicians make patient-care decisions.

In Crossing the Quality Chasm, the Institute of Medicine stated that “American health care is incapable of providing the public with the quality health care it expects and deserves.” Furthermore, “if we want safer, higher-quality care, we will need to have redesigned systems of care, including the use of information technology to support clinical and administrative processes” (IOM, 2001). There are many challenges to be overcome in transforming health care via information technology. Some of the environmental barriers to the adoption of information technology are: high capital acquisition costs for electronic medical records (EMR); an inadequate supply of fully functioning EMR systems; high training costs for EMR implementation; and uncertainty about who will pay and who will benefit.

Another challenge facing the health care system is the lack of an effective mechanism for knowledge diffusion. Even though medical knowledge is increasing very rapidly, the diffusion of medical knowledge into practice has been limited by the absence of decision support at the point of care. Compliance with the guidelines for influenza vaccinations is a good example. It is well known that administering the influenza vaccine to eligible adults can halve the death rate, halve the hospital admission rate, and halve the costs associated with outbreaks of influenza. Nevertheless, because of human oversight, physicians immunize only 50 to 60 percent of the eligible patients they see during flu season. Simple computer-based reminders at the time of a patient’s visit have been shown to increase adherence to this guideline by 78 percent compared to controls (Tang et al., 1999). Engineering techniques, such as EMR systems that remind physicians at the moment of opportunity, have proven effective.
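A point-of-care reminder of this kind amounts to a rule evaluated when a visit is opened. The sketch below is a minimal illustration, not the system studied by Tang et al. (1999). The eligibility criteria (age 65 or older, or a listed chronic condition), the October-through-March season window, and all names (`Patient`, `flu_reminder`) are hypothetical assumptions.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional

# Hypothetical eligibility criteria; real vaccination guidelines are more detailed.
HIGH_RISK_CONDITIONS = {"diabetes", "copd", "heart_failure"}
SEASON_MONTHS = {10, 11, 12, 1, 2, 3}  # assumed flu-season window


@dataclass
class Patient:
    name: str
    birth_date: date
    conditions: set = field(default_factory=set)
    last_flu_shot: Optional[date] = None


def age_on(birth: date, day: date) -> int:
    """Whole years of age on a given day."""
    return day.year - birth.year - ((day.month, day.day) < (birth.month, birth.day))


def season_start(day: date) -> date:
    """October 1 of the flu season that contains `day`."""
    year = day.year if day.month >= 10 else day.year - 1
    return date(year, 10, 1)


def flu_reminder(p: Patient, visit_day: date) -> Optional[str]:
    """Return a reminder if the patient appears due for vaccination, else None."""
    if visit_day.month not in SEASON_MONTHS:
        return None
    eligible = (age_on(p.birth_date, visit_day) >= 65
                or bool(p.conditions & HIGH_RISK_CONDITIONS))
    already_given = (p.last_flu_shot is not None
                     and p.last_flu_shot >= season_start(visit_day))
    if eligible and not already_given:
        return f"Reminder: {p.name} is eligible for influenza vaccine this season."
    return None
```

The key design point is timing: the rule fires while the patient is in the room, which is the "moment of opportunity" the text describes, rather than in a retrospective report.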

Another area of opportunity for engineering is in resolving cross-organizational issues that impede health care delivery. Health care is delivered in many settings, by multiple providers, and over a period of time. Yet, because of an absence of standards, neither the paper system nor computer-based systems allow for the seamless, reliable exchange of data across settings of care. The current health care delivery model is highly fragmented and poorly designed. Furthermore, current health care financing schemes create disincentives to the creation of any kind of system of care. Engineering could make a major contribution by applying systems design and analysis techniques to the health care delivery system.

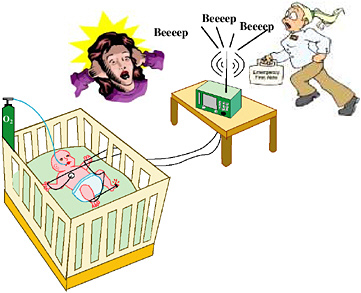

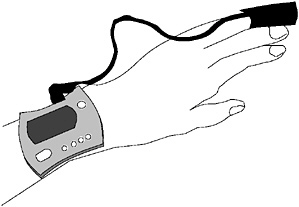

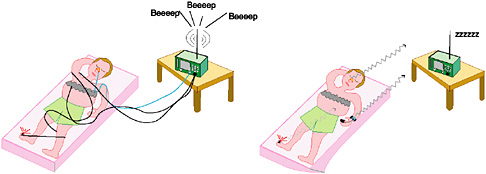

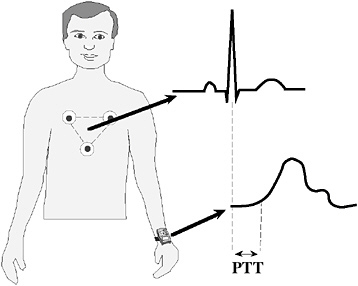

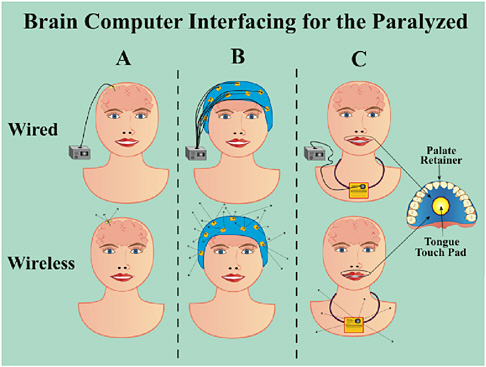

Another area of opportunity is at the interface between devices and information systems. As living beings, patients constantly emit signals, but there is no instrumentation to capture and filter those signals. At best, information is gathered at random intervals determined by the vagaries of matching schedules rather than by clinical events. As the care of patients is transferred from one clinic to another or to a specialist or to a hospital, the inefficiency of these “handoffs” further impedes the delivery of coordinated care. We have also failed to provide patients with tools to help themselves. We must do a better job.

Engineering can help provide methods for the continuous gathering of data at patients’ homes, the automatic filtering of data, and alerts to care providers when there are deviations from expected control points. EMRs with evidence-based decision support can improve the diffusion and implementation of best practices. Collaborative work technologies—among providers and between patients and providers—could be applied to patient care.
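The pipeline described here (continuous home readings, automatic filtering, and alerts on deviation from expected control points) can be sketched in a few lines. This is a minimal illustration under stated assumptions. The moving-average filter, the window of three readings, and the blood glucose control limits of 70 to 180 mg/dL are hypothetical choices, not values from the text.

```python
from collections import deque
from typing import Iterable, List, Tuple


def monitor(readings: Iterable[float], low: float = 70.0, high: float = 180.0,
            window: int = 3) -> List[Tuple[int, float]]:
    """Smooth readings with a moving average and report control-limit deviations.

    Returns (index, smoothed_value) for every reading at which the smoothed
    value lies outside [low, high]. Averaging damps isolated spikes, so a
    single outlying reading is less likely to trigger an alert than a
    sustained trend.
    """
    recent = deque(maxlen=window)  # holds only the last `window` readings
    alerts = []
    for i, value in enumerate(readings):
        recent.append(value)
        smoothed = sum(recent) / len(recent)
        if smoothed < low or smoothed > high:
            alerts.append((i, smoothed))
    return alerts
```

For example, `monitor([100, 110, 260, 105, 100])` produces no alerts because the single spike is averaged out, while a steady climb such as `[150, 200, 220, 230]` produces alerts at the third and fourth readings. The window length trades responsiveness against nuisance alerts.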

In short, twenty-first century clinicians have been practicing medicine with twentieth-century information tools. We need a National Health Information Infrastructure (NHII) to support the information-driven practice of contemporary medicine. This infrastructure would consist of standards for connectivity, system interoperability, data content and exchange, applications, and laws. The challenge is to design, develop, and implement these necessary systems in a resource-constrained environment. Financing NHII and reimbursing the costs of ongoing operation will be key to the widespread adoption of engineering and information technology that supports the delivery of care.

There are many engineering opportunities in building the NHII. First, we will need a technical infrastructure that includes standards to enable systems to interoperate technically and semantically. Second, the system must have an application infrastructure that supports mobile, secure, and robust functionality that can access patient information wherever it is stored. Third, there must be an interoperable method of storing structured, executable knowledge that can be used at the point of care by any qualified provider. Fourth, policies must be in place to protect sensitive, confidential patient information stored and transmitted by these systems. And finally, there must be a financing and incentive model that provides investment resources for the implementation and continuing operation of patient care systems.

Engineering opportunities abound to address the information-technology needs of health care. At the top of the list is the need for a systems perspective and a repertoire of methods for studying and designing a rational health care system that serves the diverse needs of current and future patient populations. Monitoring technologies that process and interpret high-volume data and extract the important information from them would be useful. A secure, wireless infrastructure would support patient and provider mobility. Interoperability, for both computers and people, would be important for collaboration among providers and patients. Knowledge diffusion tools would help physicians keep up with fast-paced advances in medical knowledge. Tools to support distributed authoring would help accelerate the development of essential technical standards. Methods of managing the constant queues in scheduling scarce medical resources would help distribute medical services to those who need them.
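As one illustration of queue management, requests for a scarce resource, such as an imaging slot, could be served from a priority queue that orders them first by clinical urgency and then by arrival. This is a hypothetical sketch. The urgency scale (lower number means more urgent) and the `ResourceQueue` class are assumptions, and real scheduling would weigh many more factors.

```python
import heapq
import itertools
from typing import List, Optional, Tuple


class ResourceQueue:
    """Priority queue for a scarce resource; lower urgency number = more urgent."""

    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, str]] = []
        self._arrival = itertools.count()  # tie-breaker preserves arrival order

    def request(self, patient_id: str, urgency: int) -> None:
        """Add a request; equal-urgency requests are served first come, first served."""
        heapq.heappush(self._heap, (urgency, next(self._arrival), patient_id))

    def next_patient(self) -> Optional[str]:
        """Remove and return the most urgent, earliest request, or None if empty."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]
```

The arrival counter matters: without it, two requests with equal urgency would be ordered by patient ID, which is arbitrary and unfair.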

In summary, delivering patient-centered, evidence-based, safe care is an expectation of twenty-first century health care. To deliver on that expectation, we need sophisticated, computer-based tools, and an NHII. That is the engineering challenge—and the engineering opportunity.

REFERENCES

IOM (Institute of Medicine). 2001. Crossing the Quality Chasm: A New Health System for the 21st Century. Washington, D.C.: National Academy Press.

Mamlin, J.J., and D.H. Baker. 1973. Combined time-motion and work sampling study in a general medicine clinic. Medical Care 11(5): 449–456.

Tang, P.C., D. Fafchamps, and E.H. Shortliffe. 1994. Traditional medical records as a source of clinical data in the outpatient setting. Pp. 575–579 in Proceedings of the 18th Symposium on Computer Applications in Medical Care. Philadelphia: Hanley & Belfus Inc. Medical Publishers.

Tang, P.C., M.P. LaRosa, C. Newcomb, and S.M. Gorden. 1999. Measuring the effects of reminders for outpatient influenza immunizations at the point of clinical opportunity. Journal of the American Medical Informatics Association 6(2): 115–121.

Challenges in Informatics

William W. Stead

Vanderbilt University Medical Center

The ultimate purpose of information technology is to help everyone make better decisions, no matter what their role in the system. Biomedical informatics is the structuring of information into simple systems that clarify complex relationships, reveal relationships between similar or related information from disparate sources, and link that information into the work flow.

Implementing a solution can be difficult, however, because current tools were built largely to administer and automate processes rather than to provide information that helps us function more effectively. The value of information decays exponentially over time. Without effective information technology, information moves very slowly through administrative processes or publication channels. The object of information technology is to move information quickly so it can be assimilated into decision-making processes at the right time.

Let me give you an example from Vanderbilt. A few years ago, we noted that physicians were ordering many more tests than could be justified. They did this for one simple reason—the tests were routine. We put an intervention in place to address this problem. Physicians now have a decision-support tool that shows them patient data and local and national guidelines at the time they are making ordering decisions. When a physician tries to order basic chemistries, for example, a screen pops up showing graphically the results of previous tests for that patient and highlighting which chemistries are stable. With that information, physicians may choose to order tests just for the chemistries that are changing or the ones they think need to be done. With this change, we reduced the number of basic chemistries ordered by 60 percent.
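The core logic of such a display, deciding which chemistries are stable, might look like the sketch below. The Vanderbilt rule is not described in the text, so the stability test used here (the last three results vary by no more than a per-analyte tolerance) and the tolerance values are hypothetical.

```python
from typing import Dict, List

# Hypothetical tolerances: the largest spread across recent results still called "stable".
TOLERANCE = {"sodium": 3.0, "potassium": 0.4, "creatinine": 0.2}


def stable_analytes(history: Dict[str, List[float]], n_recent: int = 3) -> List[str]:
    """Return analytes whose last `n_recent` results all lie within tolerance.

    Analytes with too few results, or with no tolerance defined, are never
    reported as stable, so the display does not nudge the physician away
    from ordering them.
    """
    stable = []
    for analyte, values in history.items():
        tol = TOLERANCE.get(analyte)
        recent = values[-n_recent:]
        if tol is None or len(recent) < n_recent:
            continue
        if max(recent) - min(recent) <= tol:
            stable.append(analyte)
    return stable
```

Erring toward "not stable" when data are missing keeps the tool advisory: it highlights candidates to skip but never hides a changing result.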

Two trends in information technology will be particularly important for the management and provision of knowledge in health care. First, with the convergence of media, computing, and communication, all information will be available in digital form. The information will be easily accessible and available at minimum cost, which means we will be able to provide the most appropriate information organized to meet an objective. Second, the continuing movement toward smaller, cheaper, faster technology will result in better user interfaces and embedded, context-sensitive sensors that can identify the time, location, and function of a technology. This will eliminate a large number of data entry problems.

When data displays in fighter cockpits became too big, too numerous, and too fast for pilots to react, virtual reality displays were developed to create patterns they could recognize. Thus, data were turned into useful signals, and overload was eliminated. The same kind of advancements will be made in health care.

One of the trends in informatics is the development of an architecture that separates content from tools. Historically, information systems have held hostage the information about how we practice. When we separate the content from the tools we use to automate processes, the information becomes scalable and much more useful. We will have to figure out how to protect information about patients and about how we practice. This will require a system that keeps the information separate and encapsulates it in digital rights technology, so that the patient can always pull the key. Caregivers, however, are not trained to protect privacy, so unless “We shall not violate privacy” becomes part of their Hippocratic oath, we will not be able to reap the benefits of new information technologies.

A second trend concerns process redesign. We are working on new approaches based on constant communication between the different parts of a distributed system process. This will lead to changes in the educational system based on a model of continuous education in which teaching is done with tools and techniques that students use as they move forward. Instead of credentials being based on completion of a curriculum, credentials would be based on competencies built up over time and on learning records and outcome records. This will lead to changes in traditional roles. The role of the physician would change from intermediary and prescriber to coach. Our current concept of patient compliance is backwards. If a physician prescribes something that doesn’t fit in with a patient’s lifestyle, the patient probably won’t follow it. Instead of working with the patient to develop a regimen the patient is likely to follow, we label the patient noncompliant.

One of the challenges facing informatics designers is the need for standard structures for representing biomedical knowledge, protocols, and patient data. We will also need people who can certify that products comply with various standards. We will need a payer system with incentives for installing the informatics infrastructure and for people to use it.

People will need new skills, such as data-driven practice improvement, to perform well in this new environment. Physicians at Vanderbilt were surprised when they were presented with data about how they actually practice. They suddenly realized the variability in how they practice. Another new skill will be learning at “teachable moments” instead of trying to learn “just in case.” If a physician reads two articles every night, he will be 800 years behind at the end of the first year. Physicians will have to learn new ways to learn. Physicians and other care providers will also, of course, continue to provide traditional care and comfort.

SUMMARY

Some of the challenges facing the developers of informatics for health care delivery are listed below:

- standard structures for representing biomedical knowledge, protocols, and patient data
- techniques for modeling diversity
- digital certification and rights technology
- decision-support tools that reduce the caregiver’s workload
- certification of products for compliance with standards
- incentives

Some of the new skills caregivers will require are listed below:

- data-driven practice improvement
- privacy, confidentiality, security
- learning at teachable moments
- distributed clinical trials
- licensing of intellectual property

Traditional skills that all caregivers will need are listed below:

- comforting patients
- observing patients
- reflecting patients’ values
- knowing what one needs to know
- recognizing patterns

A National Standard for Medication Use

David Classen

First Consulting Group

The unsafe use of medication is not the only safety problem in the health care system, but it is certainly one of the most significant. Most published studies about patient safety relate to the use of medication, and a lot of attention has been focused on improving the safety of medication use (Classen, 1998, 2000, 2003; Classen and Metzger, 2003; Classen et al., 1991, 1992a,b; Evans et al., 1994). Ensuring a safer medication system at an organizational level, as well as at the national level, is a major challenge that involves engineering, information technology, and the overall health care system. The creation of a national standard is very much a work in progress.

Engineers tend to think in terms of process, and this is also one way to approach the issue of medication management. From a process perspective, medication management is multidisciplinary and highly complex. Interestingly enough, in most organizations it is also a largely manual process. Even if the process of providing medications goes well, the system must be monitored prospectively to detect when things begin to go wrong. Surveillance will be one component of an improved system.

Most studies have shown that the use of medications is very risky for patients. We must incorporate what we know from the literature about risks, especially two kinds of risk. One is medication errors: errors that occur in the medication process but usually do not lead to harm to the patient. For instance, one common medication error is giving medication a few minutes late, which rarely causes harm to the patient. The other kind of risk is adverse drug events: events that actually do cause harm to patients. These two kinds of risk overlap but do not coincide (Classen et al., 1997).

A process model showing where and how errors occur reveals that at least a quarter of the events that harm patients occur during the administration phase of the process. Many of these errors involve IV fluids rather than pills. Interventions to improve the safety of the medication process should initially be focused on the events that harm patients, rather than on the more numerous events that do not. The process model also shows that interventions in the prescribing and transcribing phases of the process could affect almost 60 percent of events that adversely impact patients (Classen et al., 1997).

One change that could affect a substantial percentage of events that harm patients is computerized physician order entry (CPOE), which is being adopted all across the country. My company, First Consulting Group, is working on a national safety standard for CPOE (Kilbridge et al., 2001; Metzger and Turisco, 2001). Another group, the Leapfrog Group, a large employer group dedicated to improving health care, is aggressively pushing for the implementation of standards (Leapfrog Group, 2003). So far, Leapfrog has focused on three proven safety practices it believes could markedly improve the safety of health care (Classen, 2003). A fourth standard, which will touch on CPOE and will be the first ambulatory standard, is about to be issued. The new standard will relate to the electronic retrieval of laboratory results and the electronic prescribing of medications for outpatients. Leapfrog intends to introduce standards in certain regions of the country and engage business leaders to pressure health care organizations to adopt the standards. But these standards have also stimulated interest in a national standard for CPOE (Classen, 2003).

The Leapfrog CPOE standard has several components. First, it will require physicians to enter medication orders for inpatients via a computer system linked to error-prevention software. Second, it will require documented acknowledgement by the prescribing physician of any interception (warning or alert) prior to an override. Third, the hospital or health care organization will be required to demonstrate that the CPOE system picks up at least half of the most common serious errors. There has been a great deal of debate about the third component (Kilbridge et al., 2001).
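The second component, documented acknowledgement before an override, can be enforced in software by refusing any override that lacks a recorded prescriber and reason. The following is a minimal sketch. The `Alert` structure, its field names, and the in-memory audit log are hypothetical and are not taken from the Leapfrog specification.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class Alert:
    order_id: str
    message: str
    overridden: bool = False
    override_reason: Optional[str] = None
    acknowledged_by: Optional[str] = None
    acknowledged_at: Optional[datetime] = None


AUDIT_LOG: List[Alert] = []  # hypothetical store; a real system would persist this


def override_alert(alert: Alert, physician_id: str, reason: str) -> Alert:
    """Override an alert only with the prescriber's identity and a stated reason."""
    if not physician_id or not reason.strip():
        raise ValueError("Override requires the prescriber's ID and a documented reason")
    alert.overridden = True
    alert.override_reason = reason.strip()
    alert.acknowledged_by = physician_id
    alert.acknowledged_at = datetime.now()
    AUDIT_LOG.append(alert)  # retained so overrides can be reviewed later
    return alert
```

Recording who overrode which alert, and why, also yields the data needed to identify nuisance alerts that are routinely overridden.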

An organization cannot simply put in a CPOE system and say it meets the standards, because the literature shows that there is a great deal of variability in the safety impact of CPOE systems (Bates et al., 1995; Classen et al., 1997; Evans et al., 1998; Kilbridge et al., 2001; Leape et al., 1995). The debate has centered on whether a national standard should require that CPOE systems be tested for safety using simulations. The literature shows that CPOE systems can have a wide range of effects on safety. One study of a CPOE system at Brigham and Women’s Hospital in Boston showed there was a significant decrease in medication errors, but a much smaller decrease in actual harm to patients (Bates et al., 1998). A study at another hospital showed there was a much larger decrease in adverse drug events with CPOE (Evans et al., 1998). The differences between these two studies have raised a number of questions (Classen, 2003).

The Institute for Safe Medication Practices conducted another study, testing electronically ordered medications at the pharmacy level, the level at which most medications are ordered (ISMP, 1999). For the test, 10 unsafe orders were created, each posing a different problem. For instance, a drug toxic to the kidney was ordered for a patient with markedly impaired kidney function. The 10 unsafe orders were sent to 304 pharmacies around the country. Some of them caught a few of the errors, but only four of the 304 picked up all 10. And remember, these sites had systems designed to pick up errors.
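Tests of this kind reduce to scoring each site against a battery of deliberately unsafe orders. The sketch below computes the fraction of test orders a site flagged and compares it with a pass threshold. The default of 0.5 echoes the Leapfrog requirement, described earlier, that a system catch at least half of the most common serious errors. The order identifiers and site results are hypothetical.

```python
from typing import Dict, Set


def catch_rate(unsafe_orders: Set[str], flagged: Set[str]) -> float:
    """Fraction of the deliberately unsafe test orders that the system flagged."""
    if not unsafe_orders:
        raise ValueError("test battery is empty")
    return len(unsafe_orders & flagged) / len(unsafe_orders)


def score_sites(unsafe_orders: Set[str], results: Dict[str, Set[str]],
                threshold: float = 0.5) -> Dict[str, bool]:
    """Map each site to True if its catch rate meets the threshold."""
    return {site: catch_rate(unsafe_orders, flagged) >= threshold
            for site, flagged in results.items()}
```

For example, with a battery of 10 orders, a site that flags only two of them scores 0.2 and fails, while a site that flags all 10 passes. Flags on orders outside the battery are ignored by the intersection, so extra alerts do not inflate the score.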

A second study focused on the drug cisapride, which was withdrawn from the market after six or seven years because it was found to interact adversely with a number of commonly used drugs (Jones et al., 2001). The FDA issued three very strong warnings that prescribing cisapride with contraindicated drugs could be fatal. This study of how cisapride was being prescribed in a managed care system showed that the drug was often prescribed for patients taking contraindicated drugs. Half of the time, the contraindicated drugs were ordered by the same physician; 90 percent of the time, the prescriptions were filled by the same pharmacy. When the pharmacies were investigated, it was discovered that pharmacists had turned off aspects of the safety systems in the interest of saving time.

As these studies show, having a system in place does not equal safe operation. Both studies created a lot of angst about the value of a national standard in this area. Another test, a simulation of installed CPOE systems that evaluated whether they met safety standards, has led to some changes (Kilbridge et al., 2001). A variety of categories were developed, based on the points at which very common errors occurred (e.g., therapeutic duplication, ordering too high a dose, and ordering a drug to which the patient is allergic). Other areas that were tested included corollary orders (Overhage et al., 1997). For instance, if a patient is admitted with a seizure disorder, a physician may put the patient on a seizure drug but not specify the dosage. Cost was also tested. For instance, sometimes the same test was ordered twice within an hour or two. Another area tested was nuisance alerts (Kilbridge et al., 2001). If safety systems have too many warnings, physicians tend either to ignore all of them or to refuse to use the system. Deception analysis was also tested (i.e., orders intended to test safety) (Kilbridge et al., 2001).
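Several of the test categories listed here can be expressed as simple rule checks run when an order is entered: therapeutic duplication, excessive dose, documented allergy, and the same test ordered twice within a short window. This sketch is illustrative only. The drug classes, the dose limit, the two-hour window, and the data structures are hypothetical and carry no clinical authority.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List

# Hypothetical reference data; a real system would draw on a maintained drug knowledge base.
DRUG_CLASS = {"warfarin": "anticoagulant", "heparin": "anticoagulant",
              "penicillin": "penicillin", "amoxicillin": "penicillin"}
MAX_SINGLE_DOSE_MG = {"acetaminophen": 1000.0}
DUPLICATE_TEST_WINDOW = timedelta(hours=2)


@dataclass
class Order:
    item: str
    when: datetime
    dose_mg: float = 0.0
    is_test: bool = False


def check_order(new: Order, active: List[Order], allergies: List[str]) -> List[str]:
    """Return warnings for a proposed order; an empty list means no warnings."""
    warnings = []
    new_class = DRUG_CLASS.get(new.item)
    for old in active:
        # Therapeutic duplication: two active drugs from the same class.
        if not new.is_test and new_class is not None and DRUG_CLASS.get(old.item) == new_class:
            warnings.append(f"Therapeutic duplication: {new.item} with {old.item}")
        # Cost: the same test ordered again within the window.
        if (new.is_test and old.is_test and old.item == new.item
                and abs(new.when - old.when) <= DUPLICATE_TEST_WINDOW):
            warnings.append(f"Duplicate test: {new.item} already ordered")
    limit = MAX_SINGLE_DOSE_MG.get(new.item)
    if limit is not None and new.dose_mg > limit:
        warnings.append(f"Dose {new.dose_mg} mg exceeds limit of {limit} mg")
    # Allergy: match on the drug itself or on its class.
    if new.item in allergies or (new_class is not None and new_class in allergies):
        warnings.append(f"Allergy: {new.item} conflicts with a documented allergy")
    return warnings
```

The cisapride study is a reminder that checks like these help only while they remain switched on, and the nuisance-alert category shows why each rule's thresholds have to be tuned rather than set as strict as possible.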

The 12 organizations tested in the first round showed a very high degree of variability in impact on medication safety. This is still a work in progress, however. The test I just described was designed for inpatients; a test for outpatients is being designed (Classen, 2003).

So far, we have learned three major lessons from testing this national safety standard. First, the impact on safety depends on how a system is installed and used rather than on which system is used. Second, CPOE had the greatest impact on safety in organizations with the most clinical decision support. Third, organizations with highly disparate systems (clinical applications from several different vendors) did not score well.

REFERENCES

Bates, D.W., D.J. Cullen, N. Laird, L.A. Petersen, S.D. Small, D. Servi, G. Laffel, B.J. Sweitzer, B.F. Shea, R. Hallisey, et al. 1995. Incidence of adverse drug events and potential adverse drug events: implications for prevention: ADE prevention study group. Journal of the American Medical Association 274(1): 29–34.

Bates, D.W., L.L. Leape, D.J. Cullen, N. Laird, L.A. Petersen, J.M. Teich, E. Burdick, M. Hickey, S. Kleefield, B. Shea, M. Vander Vliet, and D.L. Seger. 1998. Effect of computerized physician order entry and a team intervention on prevention of serious medication errors. Journal of the American Medical Association 280(15): 1311–1316.

Classen, D.C. 1998. Clinical decision support systems to improve clinical practice and quality of care. Journal of the American Medical Association 280(15): 1360–1361.

Classen, D.C. 2000. Patient safety, thy name is quality. Trustee 53(9): 12–15.

Classen, D.C. 2003. Medication safety: moving from illusion to reality. Journal of the American Medical Association 289(9): 1154–1156.

Classen, D.C., and J. Metzger. 2003. Improving medication safety: the measurement conundrum and where to start. International Journal for Quality in Health Care. Accepted for publication, Fall 2003.

Classen, D.C., J.P. Burke, S.L. Pestotnik, R.S. Evans, and L.E. Stevens. 1991. Surveillance for quality assessment IV: surveillance using a hospital information system. Infection Control and Hospital Epidemiology 12(4): 239–244.

Classen, D.C., R.S. Evans, S.L. Pestotnik, S.D. Horn, R.L. Menlove, and J.P. Burke. 1992a. The timing of prophylactic administration of antibiotics and the risk of surgical-wound infection. New England Journal of Medicine 326(5): 281–286.

Classen, D.C., S.L. Pestotnik, R.S. Evans, and J.P. Burke. 1992b. Description of a computerized adverse drug event monitor using a hospital information system. Hospital Pharmacy 27(9): 774, 776–779, 783.

Classen, D.C., S.L. Pestotnik, R.S. Evans, J.F. Lloyd, and J.P. Burke. 1997. Adverse drug events in hospitalized patients: excess length of stay, extra costs, and attributable mortality. Journal of the American Medical Association 277(4): 301–306.

Evans, R.S., S.L. Pestotnik, D.C. Classen, S.D. Horn, S.B. Bass, and J.P. Burke. 1994. Preventing adverse drug events in hospitalized patients. Annals of Pharmacotherapy 28(4): 523–527.

Evans, R.S., S.L. Pestotnik, D.C. Classen, T.P. Clemmer, L.K. Weaver, J. Orme Jr., J.F. Lloyd, and J.P. Burke. 1998. A computer-assisted management program for antibiotics and other anti-infective agents. New England Journal of Medicine 338(4): 232–238.

ISMP (Institute for Safe Medication Practices). 1999. Over-reliance on pharmacy computer systems may place patients at great risk. ISMP Medication Safety Alert 4(3). Available online at: http://www.ismp.org/MSAarticles/Computer.html.

Jones, J.K., D. Fife, S. Curkendall, E. Goehring Jr., J.J. Guo, and M. Shannon. 2001. Coprescribing and codispensing of Cisapride and contraindicated drugs. Journal of the American Medical Association 286(13): 1607–1609.

Kilbridge, P., D.C. Classen, and E. Welebob. 2001. Overview of The Leapfrog Group test standard for computerized physician order entry. Report by First Consulting Group to The Leapfrog Group, November 2001. Available online at: http://www.fcg.com/research/serve-research.asp?rid=40.

Leapfrog Group. 2003. Factsheet: Computer Physician Order Entry. Available online at: http://www.leapfroggroup.org/FactSheets/CPOE_FactSheet.pdf.

Leape, L.L., D.W. Bates, D.J. Cullen, J. Cooper, H.J. Demonaco, T. Gallivan, R. Hallisey, J. Ives, N. Laird, G. Laffel, et al. 1995. Systems analysis of adverse drug events: ADE prevention study group. Journal of the American Medical Association 274(1): 35–43.

Metzger, J., and F. Turisco. 2001. Computerized physician order entry: a look at the vendor marketplace and getting started. Report by First Consulting Group to The Leapfrog Group, December 2001. Available online at: http://www.informatics-review.com/thoughts/cpoe-leap.html.

Overhage, J.M., W.M. Tierney, X.H. Zhou, and C.J. McDonald. 1997. A randomized trial of “corollary orders” to prevent errors of omission. Journal of the American Medical Informatics Association 4(5): 364–375.

Obstacles to the Implementation and Acceptance of Electronic Medical Record Systems

Paul D. Clayton

Intermountain Health Care and University of Utah

If an investigator could come up with a big yellow pill that would reduce the length of hospital stays by 10 percent for all patients across the board, then that investigator would be a serious candidate for the Nobel Prize. The issue in this paper is whether “information intervention” can accomplish the same goal as a big yellow pill.

The benefits of electronic medical records systems have been highlighted in several reports released by the Institute of Medicine (IOM, 1991, 1997, 2001):

- convenient, rapid access (by legitimate stakeholders) to organized, legible patient data
- links from displayed information to pertinent literature
- automated generation of alerts, reminders, and suggestions when standards of care are not being met
- analysis of population databases for clinical research, epidemiological assessments, quality measures, and outcomes
- lower costs
- better service to providers and patients

Although good examples of electronic medical records exist, the industry in general has not yet implemented systems that can routinely provide all of the desired functionality. In this paper, I describe the obstacles keeping us from enjoying the potential benefits of these systems. There are high-level obstacles, such as the absence of institutional commitment and the lack of capital, and low-level obstacles that have more to do with engineering, functionality, and technical issues.

The biggest obstacle is the lack of institutional commitment. Many hospitals in the United States are losing money and cannot afford all of the technologies and services they would like. Because it takes at least a decade to select and implement a comprehensive clinical information system, beleaguered executives are often reluctant to initiate a long-range strategic project that they might not be able to see through to fruition. Lack of commitment may also be attributable to concerns about demonstrable returns on investment in information technology, and there are some examples of wasteful failures (Littlejohns et al., 2003). However, the number of well documented examples of financial savings and improved quality is increasing (Pestotnik et al., 1996; Wang et al., 2003). Even if the cost and quality benefits of clinical information systems are appreciated and the institutional leadership is committed to the idea, the lack of capital remains an issue both for hospitals that are losing money and for private practices.

In fact, the benefits of investments in information systems often accrue to the payer rather than to the care provider. For example, if the blood glucose levels of a patient with diabetes can be monitored remotely, the patient may require fewer office or hospital visits to treat complications of the disease. Some payers now offer a premium for care provided with the assistance of competent information systems. The Leapfrog Group, for example, pays extra to hospitals that have computerized physician order entry. The problem is that there are many payers, and all of them want to lower costs for the patients they insure by having providers use information systems that support standards of care. If United Health Care promotes one standard for people with diabetes and Intermountain Health Care (IHC) supports a slightly different standard that requires additional data, how does a physician know which standard or information system to use? Another challenge is keeping standards of care up to date on a national basis (Shekelle et al., 2001).

A nontechnical obstacle is the lack of people who understand clinical practice, project management, and software technology and who have practical strategic vision, wisdom, and experience. We need people with both education in medical informatics and a sense of economic considerations. We currently have openings for such people that we cannot fill.

A partly technical, but mostly sociological, obstacle is maintaining a longitudinal medical record for patients being cared for by multiple parties. If the care is episodic, the benefits of an electronic medical record may not accrue. One provider may enter allergies and prescriptions into a system, but when the patient visits a second provider, that information may not be available. Patients often switch insurers (on average, once every four years); they may see a primary care physician and multiple specialists and be treated at different hospitals, nursing homes, and emergency rooms. In the 1980s and 1990s, the Hartford Foundation attempted to build community health information networks, but by 1995, with one or two exceptions, these models had disintegrated as grant support diminished, because of complex legal, organizational, funding, and control issues (Duncan, 1995). The problem was not only the reluctance of competitors to make it easy for patients to switch providers, but also the question of how to identify individual patients. Even in communities that have networks (e.g., state childhood vaccination registries), it is difficult to consolidate information when a patient goes from provider to provider: duplicate entries for the same patient each contain fragments of the record, and information from more than one patient is often merged into an inaccurate composite. Some have suggested that we use a national patient identifier akin to a Social Security number. Such an identifier was mandated in the original Health Insurance Portability and Accountability Act of 1996 (P.L. 104-191), but cost and privacy implications have impeded progress in that direction. Standards for providers and payers have been established, but individual patient identifiers have not. Then, in 1998, Congress rescinded the original requirement and forbade the U.S. Department of Health and Human Services from issuing ID numbers. Several approaches to this problem have been identified (Appavu, 1997). For obvious reasons, countries in which government is the single payer for health care have been more successful in addressing the problems of unique identifiers.
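Without a unique identifier, consolidating a patient's record comes down to deciding whether two registrations describe the same person. The Python sketch below shows one simple, deterministic way to make that decision. The field names, the normalization, and the three-way outcome are illustrative assumptions, not the logic used by IHC or by any registry, and production patient indexes generally use more fields and probabilistic weighting.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Registration:
    """Hypothetical demographic fields captured at registration."""
    last_name: str
    first_name: str
    birth_date: date
    sex: str


def normalize(name: str) -> str:
    """Uppercase and drop punctuation and spaces, so "O'Brien" matches "OBRIEN"."""
    return "".join(ch for ch in name.upper() if ch.isalnum())


def match_decision(a: Registration, b: Registration) -> str:
    """Classify two registrations as 'link', 'review', or 'distinct'."""
    same_last = normalize(a.last_name) == normalize(b.last_name)
    same_first = normalize(a.first_name) == normalize(b.first_name)
    same_dob = a.birth_date == b.birth_date
    if same_last and same_first and same_dob and a.sex == b.sex:
        # Every field agrees: treat the two registrations as one person.
        return "link"
    if same_last and same_dob:
        # Partial agreement: send to a person rather than auto-merging,
        # so two patients are not silently fused into one composite record.
        return "review"
    return "distinct"
```

Routing partial matches to manual review trades staff time for protection against the inaccurate composites described above.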

The problems discussed to this point have been addressed by IHC in Salt Lake City; however, few organizations in the country can emulate its approach. IHC is an integrated health delivery network with 21 hospitals, 400 physicians who practice in 90 ambulatory clinics, and a health insurance plan that has affiliations with another 2,500 physicians. IHC provides health insurance for a half-million people and brokers insurance for another half-million; we provide care for more than 50 percent of the population of the intermountain region. The IHC patient population and facilities are distributed over a 400-mile geographic area connected by high-speed networks. But even in this organization, in which investment in information systems (3.9 percent of gross revenues) is considered key to the delivery of high-quality, cost-effective care, we still have problems. In the remainder of this paper, I will discuss the technical problems facing IHC.

In the 1960s, work began on an electronic medical record system for hospitals. This system, known as HELP, generates automatic alerts, reminders, and suggestions based on logical criteria used to evaluate coded data recorded in the patient database. The suggestions have been well received by clinicians and have been shown to improve care (Pryor et al., 1983). In 1992, IHC realized the need for a longitudinal medical record that could provide for continuity of care, regardless of where the patient was located (e.g., hospital, clinic, or home).
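The kind of logical criterion HELP evaluates against coded data can be illustrated with a short Python sketch. The specific rule (low serum potassium in a patient receiving digoxin, a combination known to raise the risk of digoxin toxicity), the concept codes, and the threshold are assumptions chosen for illustration; they are not taken from HELP's knowledge base.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Observation:
    code: str     # hypothetical coded concept, e.g. "LAB:K" for serum potassium
    value: float


SERUM_POTASSIUM = "LAB:K"       # hypothetical concept code
DIGOXIN_ORDER = "RX:DIGOXIN"    # hypothetical concept code
LOW_POTASSIUM_MMOL_L = 3.5      # illustrative threshold, not clinical guidance


def digoxin_potassium_rule(observations, active_orders) -> Optional[str]:
    """Return an alert when a low potassium result coexists with a digoxin order.

    The rule can be evaluated automatically only because both facts are
    stored as coded data rather than as free text.
    """
    low_k = any(o.code == SERUM_POTASSIUM and o.value < LOW_POTASSIUM_MMOL_L
                for o in observations)
    if low_k and DIGOXIN_ORDER in active_orders:
        return "ALERT: low serum potassium in a patient receiving digoxin"
    return None
```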

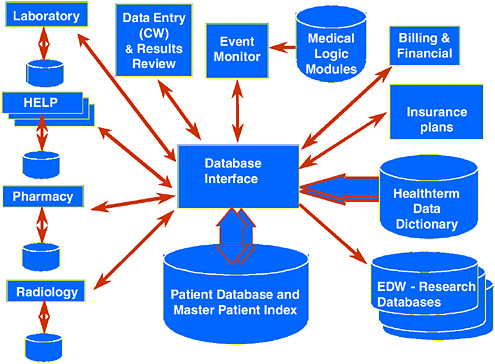

Figure 1 shows the basic architecture of our current approach. The system is based on: (1) a master patient index, in which local medical record numbers/identifiers for each person are mapped to a unique, persistent identifier; and (2) a longitudinal patient database (clinical data repository) that includes as much health-related information as we are able to capture for each of 1.45 million patients. This clinical repository is designed for optimum retrieval of data for a single patient.
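At its core, the master patient index is a mapping from local identifiers to one persistent enterprise identifier. The Python sketch below keeps that mapping in memory. The class and method names, the sequential ID scheme, and the facility names in the test are hypothetical, and deciding whether two local records belong to the same person is a separate matching problem not shown here.

```python
import itertools


class MasterPatientIndex:
    """Maps (facility, local record number) pairs to one persistent enterprise ID."""

    def __init__(self):
        self._by_local = {}                 # (facility, mrn) -> enterprise ID
        self._next_id = itertools.count(1)  # hypothetical sequential ID source

    def register(self, facility, mrn, enterprise_id=None):
        """Record a local identifier, optionally attaching it to an existing person."""
        key = (facility, mrn)
        if key in self._by_local:
            return self._by_local[key]      # already mapped: the ID never changes
        if enterprise_id is None:
            enterprise_id = next(self._next_id)  # previously unseen person
        self._by_local[key] = enterprise_id
        return enterprise_id

    def lookup(self, facility, mrn):
        return self._by_local.get((facility, mrn))

    def local_ids(self, enterprise_id):
        """All local identifiers known for one person, e.g. to assemble a record."""
        return sorted(k for k, v in self._by_local.items() if v == enterprise_id)
```

Because the enterprise ID is persistent, the clinical data repository can key every stored item on it, while each feeder system keeps using its own local number.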

A separate enterprise data warehouse (that includes cost information as well as clinical data) is used for population research, quality improvement, and cost analysis. About a dozen people are devoted full time to analyzing the data in this population-oriented warehouse.

Data are entered using a variety of applications and are transmitted to the clinical data repository. Necessary data captured in one application are shared via interfaces with other applications that may need particular data items. For example, when a patient encounter is created in one of our registration systems, the information is fed to the billing system, the laboratory system, the operating room scheduling system, and other relevant systems. The interfaces are complicated, because even simple things like the concept of gender are not standardized (radiology uses M and F, pharmacy uses one and zero, and laboratory uses zero and one). A dictionary contains coded identifiers for medical concepts and mappings from the canonical description of that concept to the analogous vocabulary used by other systems that have been developed by vendors independently of our nomenclature. This mapping allows us to build interfaces that communicate with independent systems using their native languages and still maintain a canonical representation of information for generating alerts and reports, regardless of the origin of the data. Eight people are devoted full time to management of the dictionary content and data models.
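The dictionary's role can be sketched in Python using the gender example from the text. The canonical concept IDs are hypothetical, and the per-system codes assume that the values quoted for each system are listed in (male, female) order; the text does not say which digit means which.

```python
# Canonical concept IDs are hypothetical. Native codes follow the text's gender
# example, ASSUMING each system's values are listed in (male, female) order.
CANONICAL_TO_NATIVE = {
    "radiology": {"SEX:MALE": "M", "SEX:FEMALE": "F"},
    "pharmacy": {"SEX:MALE": "1", "SEX:FEMALE": "0"},
    "laboratory": {"SEX:MALE": "0", "SEX:FEMALE": "1"},
}

# Reverse maps, built once, so inbound messages can be canonicalized.
NATIVE_TO_CANONICAL = {
    system: {native: concept for concept, native in codes.items()}
    for system, codes in CANONICAL_TO_NATIVE.items()
}


def to_native(system: str, concept: str) -> str:
    """Translate a canonical concept into the receiving system's code."""
    return CANONICAL_TO_NATIVE[system][concept]


def to_canonical(system: str, native: str) -> str:
    """Translate an inbound code from a given system into the canonical concept."""
    return NATIVE_TO_CANONICAL[system][native]


def translate(source: str, target: str, native: str) -> str:
    """Route a value between two systems via the canonical representation."""
    return to_native(target, to_canonical(source, native))
```

Routing everything through one canonical form means each new system needs one mapping table rather than a separate translation for every other system, and alerts and reports can be written once against the canonical codes.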

Implementing each interface costs us as much as $50,000 because there are no universally accepted vocabularies or data models in the health care industry. We currently employ 22 people to implement and maintain our 60 or so interfaces. Under current conditions, the interfaces are just as expensive for smaller organizations, which do not have our level of resources. In 1997, the interface issue was identified as a major obstacle by Clem McDonald (1997), one of the leaders trying to develop standards for vocabulary and messaging formats. A group known as Health Level 7 (HL7) is trying to promote standards, but vendors are resistant because of their investment in existing product platforms and because they do not want to make it easy for purchasers to switch vendors or to buy components from multiple vendors. Few institutions have the resources to drive market demand for interoperability (HL7, 2003).

FIGURE 1 An overview of the IHC information architecture. This architecture reflects the philosophy that data should be entered only once regardless of the source and that multiple independent components can be integrated. The entry of data or the passage of time evokes the event monitor, which evaluates medical logic to produce, when warranted, alerts, reminders, and suggestions.

Source: Clayton et al., 2003.

The IHC system also has a rules engine that is triggered by the arrival of new data or the passage of time. Logical criteria are used to evaluate patient data and generate patient-specific alerts, reminders, and suggestions, when appropriate. Because these alerts must be generated in real time, the design of the data dictionary and the clinical data repository has extremely challenging requirements (Bakken et al., 2000; Huff et al., 1998; Johnson, 1996). New data items must be stored without adding new tables. Response times for queries generated by users and by the rules engine must remain minimal at loads of up to 500 queries per second. The database should not be taken down for maintenance, but because the system is very complex, we have trouble keeping it up 24 hours a day, seven days a week; therefore, we have redundant hardware. Despite our best quality-assurance efforts, however, we still have problems, especially when we make software changes. We need better tools for managing complex systems.
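Two of these requirements, storing new kinds of data without new tables and triggering logic on both new data and elapsed time, can be illustrated with a small in-memory Python sketch. It uses a generic, entity-attribute-value style row, one common way to avoid schema changes. It is not IHC's repository schema, and the attribute codes and thresholds in the test are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Fact:
    # One generic row: a new kind of observation is just a new attribute code,
    # not a new table.
    patient_id: int
    attribute: str
    value: object
    recorded_at: datetime


class ClinicalStore:
    def __init__(self):
        self._facts = defaultdict(list)  # patient_id -> list of Fact
        self._on_new_data = []           # callbacks fired whenever a fact is stored

    def subscribe(self, callback):
        self._on_new_data.append(callback)

    def add(self, fact):
        self._facts[fact.patient_id].append(fact)
        for callback in self._on_new_data:  # data-driven trigger
            callback(self, fact)

    def latest(self, patient_id, attribute):
        matches = [f for f in self._facts[patient_id] if f.attribute == attribute]
        return max(matches, key=lambda f: f.recorded_at, default=None)


def overdue_check(store, patient_id, attribute, now, max_age):
    """Time-driven trigger: True when no value is newer than max_age."""
    last = store.latest(patient_id, attribute)
    return last is None or now - last.recorded_at > max_age
```

In a real system the time-driven check would be run by a scheduler, and the generic rows would live in a database indexed for the single-patient retrieval the text describes.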

All of our coded medications, problems, and laboratory values can be linked via an “info button” to five or six standard questions about a particular patient diagnosis/problem, medication, or laboratory test result (Cimino, 1996; Reichert et al., 2002). With three clicks, one can be reading a paragraph that provides a concise answer to the question at hand. The problem is that publishers publish books, not paragraphs. We need reference literature that is marked up so that it can be searched quickly.
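The info button works only if reference content is indexed at the paragraph level, keyed by a coded item and a question. The Python sketch below shows that indexing. The question lists, the concept code, and the paragraph text are hypothetical; Reichert et al. (2002) describe using LOINC codes for the actual linkage.

```python
# Hypothetical standard questions for each kind of coded item.
STANDARD_QUESTIONS = {
    "lab": ["What does this test measure?",
            "What can cause an abnormal value?",
            "What follow-up is usually recommended?"],
    "drug": ["What is the usual dose?",
             "What are the major interactions?",
             "What are the common adverse effects?"],
}

# Hypothetical marked-up reference content: one short paragraph per
# (concept code, question) pair, instead of a whole book.
PARAGRAPHS = {
    ("LAB:K", "What can cause an abnormal value?"):
        "Low serum potassium is commonly caused by diuretic therapy or "
        "gastrointestinal losses.",
}


def info_button(kind, code):
    """First click: the standard questions, flagged if an answer exists."""
    return [(q, (code, q) in PARAGRAPHS) for q in STANDARD_QUESTIONS[kind]]


def answer(code, question):
    """Last click: the single paragraph that answers the question, if any."""
    return PARAGRAPHS.get((code, question))
```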

The most vexing unsolved problem involves data capture. When we link instruments, such as infusion pumps, respirators, spirometers, labor and delivery monitors, or ICU monitors, to our system, the devices need to “know” the identity of the patient and must transmit data in a form that can be easily accommodated. As more and more monitoring takes place in the home, these challenges will become more difficult. We have documented that monitoring patients at home (e.g., blood glucose, blood pressure, and weight in heart failure patients) is cost-effective, especially for patients with certain chronic diseases. The effectiveness of therapy would be greatly improved if we had unobtrusive ways to ascertain whether people were routinely taking their medications.

Studies have shown that nurses spend one-third of their time at work on documentation. For every hour of direct patient care, they spend up to an hour documenting that care (PricewaterhouseCoopers, 2001). Estimates for physicians are not as high, but the time required is still significant. Individuals can enter data by typing text, dictating and having someone else transcribe the dictation, using bar codes or other scanning devices, filling in forms by clicking with a mouse, or having the computer recognize spoken commands and narrative dictation. We have 20 to 30 physicians who use voice recognition, but many users (about 225 of our 400 employed physicians) prefer to use “hot text” (macros created for certain types of patients and certain types of visits that enable clinicians to change only the items that are exceptional or remarkable). We have found that this approach is much faster than dictation or clicking and that physicians in the ambulatory setting change only 3 percent of the standard text. Overall, we need to reduce the amount of required documentation, reduce duplication in documentation, and derive billing information from data collected at the point of care (rather than asking people to fill out separate billing-oriented forms).
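A hot-text macro is essentially a template whose fields carry default findings, with the clinician editing only the exceptions. The Python sketch below uses the standard library's `string.Template`; the macro text, field names, and default findings are hypothetical, and the edited fraction it reports is counted per field rather than per character of text, so it is not the same measure as the 3 percent figure above.

```python
from string import Template

# Hypothetical "hot text" macro for a routine exam; each $field has a default.
EXAM_MACRO = Template("Lungs: $lungs. Heart: $heart. Abdomen: $abdomen.")
DEFAULTS = {
    "lungs": "clear to auscultation",
    "heart": "regular rate and rhythm",
    "abdomen": "soft and nontender",
}


def render_note(exceptions):
    """Expand the macro, replacing only the findings the clinician changed."""
    unknown = set(exceptions) - set(DEFAULTS)
    if unknown:
        raise KeyError(f"fields not in macro: {sorted(unknown)}")
    return EXAM_MACRO.substitute({**DEFAULTS, **exceptions})


def fraction_changed(exceptions):
    """Share of macro fields the clinician edited."""
    return len(exceptions) / len(DEFAULTS)
```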

Natural language processing is emerging as a valuable means of extracting coded, machine-processable data from narrative text (Hripcsak et al., 2002). Much of the documentation burden stems from regulatory requirements intended to verify that we have provided the care for which we bill. In essence, regulators have added a 25-percent overhead to catch the 1 or 2 percent of crooks who abuse the system. If we could use sampling techniques instead, the honest 98 to 99 percent would not be punished.
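A simple calculation suggests why sampling could be enough. This is an illustrative model resting on assumptions (records drawn independently at random, a fixed fraction of improper records), not a description of any regulatory method. If a fraction $p$ of records are improper and auditors review $n$ randomly drawn records, the probability that the sample contains at least one improper record, and the sample size needed to reach a target probability, are

$$P_{\text{detect}} = 1 - (1 - p)^{n}, \qquad n \ge \frac{\ln(1 - P_{\text{detect}})}{\ln(1 - p)}.$$

With $p = 0.01$ and a target of $P_{\text{detect}} = 0.95$, $n \ge \ln 0.05 / \ln 0.99 \approx 298.1$, so a random sample of about 300 records has roughly a 95 percent chance of containing at least one improper record, however many records are produced in total.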

Wireless mobile devices would be very convenient, but many problems would have to be overcome: battery life, screen size, the need for individualized devices (e.g., individual profiles for voice recognition), and the propensity to lose information.

Authentication is another challenge. Proximity cards, biometric markers, and other tokens present logistical challenges for rotating medical students, residents, interns, and per diem nurses. Once we have accurately identified the user, we believe that the confidentiality of patient information can be preserved, but no system, paper or electronic, is absolutely secure in the face of a truly determined investigator. At IHC, we have five criteria for allowing someone to see patient information: (1) an established patient/provider relationship (these relationships can often be established automatically via scheduling or registration systems); (2) other people with a relationship to the provider (e.g., covering partners, nurses who work with physicians, etc.) who assist in the care of that provider’s patients; (3) patient location (if the patient is in one of our facilities); (4) user location (clinicians in the same facility); and (5) user’s role (revealed information may be limited in scope). These criteria narrow the number of patients whose information can be seen by a particular user from more than a million to a few thousand. We also keep audit trails of who has looked at what data and terminate individuals who violate their agreement by looking at data when there is no legitimate need.
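The five criteria can be expressed as a small access check with an audit trail. The text does not say exactly how the criteria combine, so this Python sketch assumes that criteria 1 and 2 and the co-location of patient and user (criteria 3 and 4 together) are alternative grounds for access, while the user's role (criterion 5) limits which sections may be seen. The class names, role scopes, and section names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional

AUDIT_LOG = []  # (user, patient, section, allowed) for every access attempt

# Hypothetical role scopes (criterion 5): which record sections each role may see.
ROLE_SCOPE = {
    "physician": {"notes", "labs", "medications", "demographics"},
    "clerk": {"demographics"},
}


@dataclass
class User:
    user_id: str
    facility: str
    role: str
    assists: set = field(default_factory=set)  # providers this user works with


@dataclass
class Patient:
    patient_id: str
    providers: set                          # established provider relationships
    current_facility: Optional[str] = None  # set while the patient is in a facility


def may_view(user: User, patient: Patient, section: str) -> bool:
    has_relationship = user.user_id in patient.providers         # criterion 1
    assists_provider = bool(user.assists & patient.providers)    # criterion 2
    same_facility = (patient.current_facility is not None         # criteria 3 and 4
                     and patient.current_facility == user.facility)
    in_scope = section in ROLE_SCOPE.get(user.role, set())       # criterion 5
    allowed = (has_relationship or assists_provider or same_facility) and in_scope
    AUDIT_LOG.append((user.user_id, patient.patient_id, section, allowed))
    return allowed
```

Every attempt, allowed or not, is logged, which supports the after-the-fact review of who looked at what.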

In the emerging Web-based desktop paradigm, one should ideally be able to switch seamlessly from application to application (e.g., literature server, image browser, registration process), even if the applications have been developed by different vendors and run on different servers. The goal is to preserve user authentication, privileges, and patient context without requiring the user to re-authenticate or reselect the patient. The approach in medicine has been to establish a standard, CCOW (Clinical Context Object Workgroup) (HL7, 2003). The World Wide Web Consortium is addressing these same issues, and it would be nice to end up with a universal standard.
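The core idea of shared context can be sketched as a context manager that holds the current user and patient and notifies every participating application when either changes. This Python sketch is hypothetical and is not the CCOW interface; the published standard is considerably richer (for example, it defines how participating applications are consulted before a context change takes effect).

```python
class ContextManager:
    """Shared user and patient context that participating applications follow."""

    def __init__(self):
        self.user = None
        self.patient_id = None
        self._apps = []

    def join(self, app):
        self._apps.append(app)
        app.on_context_change(self.user, self.patient_id)  # sync on joining

    def set_context(self, user=None, patient_id=None):
        """Change user and/or patient once; every application follows."""
        if user is not None:
            self.user = user
        if patient_id is not None:
            self.patient_id = patient_id
        for app in self._apps:
            app.on_context_change(self.user, self.patient_id)


class Viewer:
    """Hypothetical application that simply displays the current context."""

    def __init__(self, name):
        self.name = name
        self.showing = None

    def on_context_change(self, user, patient_id):
        self.showing = (user, patient_id)
```

The user authenticates and selects a patient once, and an application joined later (for example, an image browser) opens on the same patient without reselection.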

The final obstacle is facilitating best practices based on evidence, rather than on recent experience or premonition. Our approach at IHC is to create a knowledge base of problem-specific standards of care and measurable expected outcomes. After a clinician enters a problem, we generate a patient-specific work list and suggested order sets for the patient. We find that it is easier for physicians to use these order sets (modified, if appropriate) than to write their own list of 15 or so items, which may not be complete or justified. We recognize that managing this knowledge base for every individual institution will be very expensive.
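A problem-specific order set can be modeled as a knowledge-base entry that the clinician starts from and then modifies. In this Python sketch the problem code and the orders are hypothetical placeholders, not clinical guidance and not IHC content.

```python
# Hypothetical knowledge base: problem code -> suggested orders.
ORDER_SETS = {
    "PROB:CHF_EXACERBATION": ["daily weight", "basic metabolic panel",
                              "chest radiograph", "loop diuretic per protocol"],
}


def suggested_orders(problem, omit=(), add=()):
    """Start from the standard order set and apply the clinician's changes."""
    omitted = set(omit)
    orders = [o for o in ORDER_SETS.get(problem, []) if o not in omitted]
    for extra in add:
        if extra not in orders:  # avoid duplicating an order already present
            orders.append(extra)
    return orders
```

Because the function builds a new list, editing one patient's orders never alters the shared knowledge base.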

I hope this brief summary of the challenges we face will stimulate those of you with applicable expertise and accomplishments in the engineering domain to help us find solutions. In the end, we hope to provide better, more cost-effective care.

REFERENCES

Appavu, S.I. 1997. Analysis of unique patient identifier options: final report. Available online at: http://ncvhs.hhs.gov/app0.htm.

Bakken, S., K.E. Campbell, J.J. Cimino, S.M. Huff, and W.E. Hammond. 2000. Toward vocabulary domain specifications for Health Level 7-coded data elements. Journal of the American Medical Informatics Association 7(4): 333–342.

Cimino, J.J. 1996. Linking patient information systems to bibliographic resources. Methods of Information in Medicine 35(2): 122–126.

Clayton, P.D., S.P. Narus, S.M. Huff, T.A. Pryor, P.J. Haug, T. Larkin, S. Matney, R.S. Evans, B.H. Rocha, W.A. Bowes, F.T. Holston, and M.L. Gundersen. 2003. Building a comprehensive clinical information system from components: the approach at Intermountain Health Care. Methods of Information in Medicine 42(1): 1–7.

Duncan, K.A. 1995. Evolving community health information networks. Frontiers of Health Service Management 12(1): 5–41.

HL7 (Health Level Seven, Inc.). CCOW. Available online at: http://www.hl7.org/special/Committees/ccow_sigvi.htm.

HL7. 2003. HL7 Standards. Available online at: http://www.hl7.org/.

Hripcsak, G., J.H. Austin, P.O. Alderson, and C. Friedman. 2002. Use of natural language processing to translate clinical information from a database of 889,921 chest radiographic reports. Radiology 224(1): 157–163.

Huff, S.M., R.A. Rocha, H.R. Solbrig, M.W. Barnes, S.P. Schrank, and M. Smith. 1998. Linking a medical vocabulary to a clinical data model using Abstract Syntax Notation 1. Methods of Information in Medicine 37(4-5): 440–452.

IOM (Institute of Medicine). 1991. The Computer-Based Patient Record: An Essential Technology for Health Care, R.S. Dick and E.B. Steen, eds. Washington, D.C.: National Academy Press.

IOM. 1997. The Computer-Based Patient Record: An Essential Technology for Health Care (Revised Edition), R.S. Dick, E.B. Steen, and D.E. Detmer, eds. Washington, D.C.: National Academy Press.

IOM. 2001. Crossing the Quality Chasm: A New Health System for the 21st Century. Washington, D.C.: National Academy Press.

Johnson, S.B. 1996. Generic data modeling for clinical repositories. Journal of the American Medical Informatics Association 3(5): 328–339.

Littlejohns, P., J.C. Wyatt, and L. Garvican. 2003. Evaluating computerized health information systems: hard lessons still to be learnt. British Medical Journal 326: 860–863.

McDonald, C.J. 1997. Barriers to electronic medical record systems and how to overcome them. Journal of the American Medical Informatics Association 4(3): 213–221.

Pestotnik, S.L., D.C. Classen, R.S. Evans, and J.P. Burke. 1996. Implementing antibiotic practice guidelines through computer-assisted decision support: clinical and financial outcomes. Annals of Internal Medicine 124(10): 884–890.

PricewaterhouseCoopers. 2001. Patients or Paperwork?: The Regulatory Burden Facing America’s Hospitals. Paper prepared for the American Hospital Association (AHA). Chicago, Illinois: AHA.

Pryor, T.A., R.M. Gardner, P.D. Clayton, and H.R. Warner. 1983. The HELP system. Journal of Medical Systems 7(2): 87–102.

Reichert, J.C., M. Glasgow, S.P. Narus, and P.D. Clayton. 2002. Using LOINC to link an EMR to the pertinent paragraph in a structured reference knowledge base. Pp. 652–656 in Proceedings of the American Medical Informatics Association Symposium 2002. Bethesda, Md.: American Medical Informatics Association.

Shekelle, P.G., E. Ortiz, S. Rhodes, S.C. Morton, M.P. Eccles, J.M. Grimshaw, and S.H. Woolf. 2001. Validity of the Agency for Healthcare Research and Quality clinical practice guidelines: how quickly do guidelines become outdated? Journal of the American Medical Association 286(12): 1461–1467.

Wang, S.J., B. Middleton, L.A. Prosser, C.G. Bardon, C.D. Spurr, P.J. Carchidi, A.F. Kittler, R.C. Goldszer, D.G. Fairchild, A.J. Sussman, G.J. Kuperman, and D.W. Bates. 2003. A cost-benefit analysis of electronic medical records in primary care. American Journal of Medicine 114(5): 397–403.

Automation of the Clinical Practice: Cost-Effective and Efficient Health Care

Prince K. Zachariah

Mayo Clinic Scottsdale

According to the American Society for Testing and Materials, the purpose of the health record is to present a unified, coordinated, and complete repository of genetic, environmental, and clinical health care data (ASTM, 1991). It has been estimated, however, that as much as 30 percent of the information an internist needs is not accessible during a patient’s visit because of missing clinical information and missing laboratory reports (Covell et al., 1985). At the very least, this lack of information is inconvenient; at worst, it may have a negative impact on patient care.

The health care record becomes highly fragmented over the life of an individual patient as care is sought from multiple practitioners in various subspecialties at numerous health care institutions. For each practitioner to have a complete record, information must be frequently duplicated and restated by multiple practitioners, with potential recording or transcription errors that, over time, reduce the reliability of the information. These inherent weaknesses in the system hinder the tracking of clinical problems and often result in duplicate testing; they also make it difficult to evaluate outcomes and reduce the cost of health care.

Although the complexity of health care has increased exponentially, the patient medical record has remained essentially the same. The paper record, historically consisting of pages of handwritten notes, is archived and stored in medical records departments or warehouses managed by each health care organization that provides care. Although this antiquated method has obvious limitations, health care professionals have been reluctant to change. Now, however, pressures to change are increasing from external and internal sources because of concerns about the quality of the record, its inaccessibility to patients and health care providers, declining reimbursements, increasing medicolegal and regulatory agency reporting requirements, and many others.

At the Mayo Clinic, patient records have a long history of being well-organized, thorough repositories of information. Originally designed in Rochester, Minnesota, around 1907 by Henry Plummer, M.D., the Mayo Clinic record was planned to be a comprehensive compendium of patient medical information spanning the life of the patient. Upon arrival at the clinic, each patient is registered and assigned a unique serial number. Dr. Plummer developed a central file consisting of an envelope (called a “dossier”) bearing that identification number, in which all of the patient’s records are placed for the first and subsequent visits. Upon completion of a visit, the record is cross-indexed according to disease, surgical technique, surgical result, and pathologic findings to simplify record archiving and later data gathering. Patient number one was registered on July 19, 1907 (Clapesattle, 1954). With 88 years of experience and more than 4.5 million patient records, this invaluable database supports our practice daily. The record is designed to include a variety of forms, filed in a set order, that can be easily identified by color. Today, the Mayo Clinic uses more than 350 different color-coded forms that span both outpatient and inpatient care (Mayo Magazine, 1989).

Historically, an elaborate manual system has been used to maintain this record. Large numbers of people are required to sort, organize, and transcribe data, as well as to file and retrieve records. Beyond organizing charts, clinic personnel manually transcribe all laboratory and x-ray results. “Green sheets” provide in one location in the record a summary of all laboratory and radiological test results arranged in chronological order. This design has allowed for a consolidation of results into a concise summary format, which reduces the bulk of the record and makes it easier to use. Patient care is both more efficient and, at the same time, simpler because of this summarization. Another benefit is that the Mayo Clinic record easily complements research activities by allowing quick access to needed information.

Over time, patient demands, as well as our own administrative and clinical work flow, have required us to increase our level of service in the midst of a changing health care system that has experienced significant reductions in reimbursements. In response to these influences, Mayo Clinic has an opportunity to improve the quality of the patient record through automation, at the same time increasing the efficiency of the physician’s use of time, decreasing the patient’s waiting time, and reducing expenses. We believe that the implementation of a clinical information system can improve not only the patient chart, but also our integrated practice by automating the processes of ordering, billing, scheduling, and result inquiry.

Historically, because of the limitations of computer-based patient records (CPRs), physicians have resisted using them. Concerns have included the organization of data, security, and most important, how data are entered and retrieved (Barnett, 1984). A CPR should provide patient information in a clear, intuitive format concurrently from any terminal. It should automate charge capture and improve the use of institutional resources. Finally, the process of entering data should require no more time than the manual method. In fact, it should require less time because data entered in a single, online location are automatically available in a variety of electronic formats.

According to C.J. McDonald, M.D., physicians want automated clinical records that provide access to appropriately organized patient information when they want it, in a format tailored to their needs. They also want pertinent data trends and patterns displayed, the ability to organize subsets of information, flexible reporting requirements, and order entry capability (IOM, 1991).

For Mayo Clinic to continue to provide high-quality health care with a high degree of patient satisfaction based on an integrated practice model, the shift to a CPR (the electronic medical record [EMR]) is not an option; it is a mandatory change dictated by declining revenues at a time of increased demand. A true CPR provides the automated management of a comprehensive, longitudinal health record (IOM, 1991). EMR is designed to meet this definition. Using a common format via a central system, EMR incorporates all of the elements necessary for a lifetime of care for each patient. These elements are: the medical record, laboratory reports, surgery and pathology reports, dictation and transcriptions, consultations, hospital records, radiology reports, and radiology images.

Not only are these basic components represented in an intuitive, easy-to-use graphical interface, they are accompanied by the tools necessary to customize the display to suit each user’s practice style and personal preferences. The user interface integrates automated support services for ordering, scheduling, and coordinating the activities of a business office. These features are incorporated into a system that can be expanded and adapted to accommodate varied specialty care needs.

With the EMR, most of the bottlenecks in our practice have been streamlined or eliminated. A physician’s ordering and scheduling requests are processed automatically and immediately by the computer alone. The patient’s schedule can be printed almost instantly on the floor, and the patient promptly sent on his or her way. The billing information is also processed immediately and, in an electronic billing arrangement, is ready to be sent to the payer. Mayo Clinic’s management will be able to access current clinical and financial data instantly as a basis for making practice decisions. And the record, the fixture that started all this, is legible, accessible, and reliable; in fact, it is more accurate. There is also less room for error because the radiology and laboratory reporting systems have direct data links to the EMR. Finally, the record is better organized through the use of standardized dictation templates, whose contents vary according to the level of service, which makes billing and the justification for billing straightforward.

We believe with EMR we will achieve our goal of increasing efficiency and reducing cost. The Mayo Clinic sites (Jacksonville, Rochester, and Scottsdale) are at different stages of EMR implementation because of differences in the size and complexity of the organizations. Through further automation, Mayo will meet its commitments to patients and enhance its academic mission.

The system security arrangement makes it possible to assign different levels of access to individuals, based on the information required by each care provider. System managers are granted the highest level of security access, followed by physicians, and so on, down the security ladder. Each security level restricts user access to specified documents, thereby allowing graduated access to the record but not necessarily to privileged clinical information. This approach to system security has alleviated physicians’ concerns about maintaining the confidentiality of patient information.
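Graduated access of this kind can be modeled as ordered security levels compared against a minimum level required for each document type. In this Python sketch only the ordering (system managers highest, then physicians, and so on down) comes from the text; the lower levels, the document types, and their required levels are hypothetical. Unknown document types are denied by default.

```python
from enum import IntEnum


class Level(IntEnum):
    # Higher value means broader access. The top two follow the text;
    # the lower levels are hypothetical.
    CLERICAL = 1
    NURSE = 2
    PHYSICIAN = 3
    SYSTEM_MANAGER = 4


# Hypothetical minimum level required to open each document type.
REQUIRED = {
    "appointment schedule": Level.CLERICAL,
    "laboratory report": Level.NURSE,
    "psychiatry note": Level.PHYSICIAN,
}


def can_open(level: Level, document_type: str) -> bool:
    """Allow access only when the user's level meets the document's minimum."""
    required = REQUIRED.get(document_type)
    return required is not None and level >= required
```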

Even though there is a strong desire to share EMR data with patients, differences among medical institutions and clinical practices and the lack of standardized data elements, common clinical vocabularies, and formats have hampered the portability of EMR data and the acquisition of data for research. However, efforts are under way to reach consensus on how diagnoses are recorded and to adopt open-standard clinical vocabularies, such as SNOMED, and formats, such as XML. To meet the challenges facing the health care system, significant automation of operational processes has become imperative, and we are attempting to meet these challenges without compromising the quality and efficiency of care.

In this discussion, I have outlined the history of the Mayo Clinic patient medical record, as well as the motivation for change at Mayo Clinic. We acknowledge that our past policies and procedures have been labor intensive, but a review of medical records practices at most other medical facilities will show that they too have tremendous operating costs for moving and managing patient medical records. From the outset, our goal has been to maintain, if not improve, patient satisfaction and the efficiency of patient care delivery. We believe that if we can achieve this goal, physician satisfaction will also be enhanced. Where EMR is available, almost instantaneous access to patient records, laboratory results, ordering, and billing services has become a practice asset to which physicians have eagerly responded.

Nevertheless, although physician acceptance has been high, there is a price. Using the EMR requires significant physician procedural rewiring. It requires significant, sometimes painful, changes in the methods and habits physicians have developed over years of clinical training and practice. Physicians have become comfortable and dependent upon the paper record, and adapting to its absence requires time and patience. In our experience, after four to eight weeks of training, physician workloads are reduced and practice volumes return to their normal levels.

The Institute of Medicine concluded that CPR should be the heart of the health care information system (IOM, 1991). It should form an individual’s longitudinal health record and provide a terminal-based system that supports text and graphics, requires minimal training time, and includes a private and secure form of data entry that takes no longer to create than the paper record. The Mayo Clinic EMR meets these goals and builds upon the history of the CPR by establishing an expandable, open architecture as a foundation for future progress.

REFERENCES

ASTM (American Society for Testing and Materials). 1991. Standard Guide for Description for Content and Structure of an Automated Primary Record of Care. Standard E1384-91. West Conshohocken, Pa.: ASTM.

Barnett, G.O. 1984. The application of computer-based medical-record systems in ambulatory practice. New England Journal of Medicine 310(25): 1643–1650.

Clapesattle, H.B. 1954. The Doctors Mayo. Minneapolis, Minn.: University of Minnesota Press.

Covell, D.G., G.C. Uman, and P.R. Manning. 1985. Information needs in office practice: are they being met? Annals of Internal Medicine 103(4): 596–599.

IOM (Institute of Medicine). 1991. The Computer-Based Patient Record. Washington, D.C.: National Academy Press.

Mayo Magazine. 1989. Old records never die: medical records at the Mayo Clinic. Mayo Magazine 4(1): 2.

The eICU® Solution: A Technology-Enabled Care Paradigm for ICU Performance

Michael J. Breslow

VISICU, Inc.

This presentation describes a broad-based effort to redesign a complex clinical environment, the intensive care unit (ICU). ICUs account for about 10 percent of inpatient beds nationwide, although in tertiary-care centers the percentage is higher. ICU patients have the highest acuity of all patients in the hospital; their mortality rate exceeds 10 percent, and their daily costs are four times higher than those of other inpatients. As a result, the ICU represents an ideal target for quality initiatives. ICU patients experience a high incidence of medical errors (1.7 per patient per day in one study), and because of their inherent instability, they are particularly vulnerable to harm from suboptimal care (Donchin et al., 1995). Improvements in care delivery can lead to substantial improvements in outcomes, both clinical and financial.

ICUs also provide major support for other areas of the hospital. Many key functional areas (e.g., emergency department, operating room) send patients to the ICU. If ICU patients are not well enough to leave the ICU, the unit becomes a bottleneck—a common problem in many urban centers—and the operation of other service areas is adversely affected. Thus, improving clinical outcomes in the ICU can improve the overall efficiency of the hospital.

Several trends in ICU care suggest a need for new systems. First, the number and acuity of ICU patients are increasing rapidly, driven primarily by the aging of the population; the number of patients requiring ICU care is estimated to double in the next 10 to 15 years. These increases in ICU volume and patient acuity are placing greater demands on care providers and are reducing both the operating effectiveness of ICUs and patient throughput. At the same time, there are major problems with the clinical workforce. The number of nurses choosing to work in ICUs is decreasing, and the average level of experience of the nursing force is lower than in years past.

In addition, physician coverage is inadequate to meet patient needs. ICUs with intensivists in constant attendance have been shown to have clinical outcomes superior to those of ICUs with other staffing models. The value of these specialists derives both from their expertise and from their constant monitoring of patients and adjustment of care plans in response to changes in clinical status. Intensivists also serve as the leaders of care teams, coordinating the activities of the many different physicians and ancillary staff who contribute to the care of ICU patients with complex conditions. Despite the clear advantages of this staffing model, fewer than 15 percent of U.S. hospitals have dedicated physicians in the ICU. There are many reasons hospitals do not have dedicated intensivist staffs, but the biggest problem is a severe shortage of these specialists. Fewer than 6,000 intensivists are currently in active practice, whereas staffing ICUs nationwide 24 hours a day, seven days a week, would require 30,000. Therefore, most ICUs depend on nurses to detect new problems, assess their severity, identify the appropriate physician, track him or her down, and communicate the nature of the problem, all just to obtain a treatment order.

Despite the shortage of intensivists, the Leapfrog Group, a health care purchasing organization created by Fortune 500 companies to improve the quality of health care, has called for dedicated intensivist staffing for all nonrural U.S. hospitals within the next two years. Leapfrog estimates that broad implementation of this staffing pattern would save 50,000 to 150,000 lives annually. The call for intensivist staffing is controversial: how can hospitals meet this performance standard if the resources are not there? Nevertheless, the corporate leaders of the Leapfrog Group want to change behaviors and expectations by sending a strong message that businesses do not trust the health care system to maintain the health of their workers and control costs. Don Berwick of the Institute for Healthcare Improvement, a longtime proponent of fundamental changes in health care, put it this way: “Every system is perfectly designed to get the results it achieves.”

There are many points of failure in our current system. The Institute of Medicine (IOM) created quite a stir with the publication in 2000 of To Err Is Human, a report that estimated that as many as 98,000 deaths each year in American hospitals resulted from medical errors. The IOM focused almost exclusively on errors of commission. In ICUs, however, errors of omission outnumber errors of commission by a large margin; when these errors are included, the number of unnecessary deaths is even higher. Crossing the Quality Chasm, the follow-up IOM report published in 2001, outlined the need for fundamental changes in the way health care is delivered. Its basic message was that outcomes will improve only when new systems of care are introduced.

In the remainder of this presentation, the eICU solution, a systematic reorganization of ICU care focused on improving patient safety and operating efficiency, is described. The reengineering of ICU care was initiated by two intensivists (the author and Brian Rosenfeld, the co-founder of VISICU, Inc.) who together ran the ICU of a large tertiary-care center for almost 20 years. The eICU solution has two main components. First, technology is used to leverage the expertise of intensivists. A telemedicine-type application bridges the manpower gap by creating networks of ICUs and linking them to centralized command centers (eICU facilities) that are continuously staffed by intensivists and support personnel. eICU care teams, led by intensivists, provide continuous monitoring and timely interventions when intensivists cannot be available on site. The second feature of the eICU solution is the use of technology tools to help both on-site and remote intensivists do their jobs better, more safely, and faster. Specifically, information technology systems are used to identify problems, guide decision making, and improve operating efficiency.

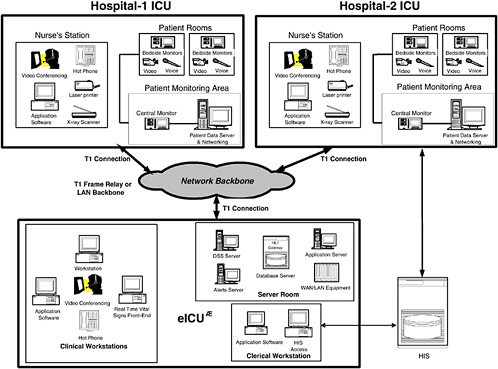

Figure 1 is a schematic drawing of an eICU network, which usually links multiple hospitals within an integrated delivery system (or any geographically proximate aggregation of hospitals) to an eICU facility. The participating hospitals generally care for different types of patients, and the availability and sophistication of on-site physicians and the organization of their ICUs vary. Tertiary-care centers usually have multiple ICUs with very high acuity patients and some dedicated intensivist presence during daytime hours. They frequently have step-down units with unstable patients but minimal physician presence. They also often care for similar patients in the emergency department, at least until they can be transferred to the ICU. Community and rural hospitals generally have fewer ICU beds, less acutely ill patients, and fewer intensivists. Rural hospitals, which often do not have sophisticated ICU resources, attempt to stabilize sick patients and transfer them to larger hospitals. All of these sites may be included in an eICU network, but their needs are different, and the role of the off-site team varies accordingly.

The physical network connecting an eICU to participating hospitals and ICUs must be secure and robust and must have adequate bandwidth to support real-time video. Some hospitals already have such networks, but most do not. In the absence of an existing network, dedicated T-1 lines can be used. Each patient room has a high-resolution camera and a two-way audio system so the eICU care team can see the patient and communicate directly with on-site personnel. In addition, “hot” phones provide ICU staff with immediate access to the intensivist-led staff in the eICU. Other equipment in the eICU includes real-time bedside monitor viewers, an electronic data system, note-writing and order-entry applications, an alerting system, and a computerized decision-support tool. High-resolution scanners are used for x-rays and other images, unless a digital x-ray system is already in place.
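As a rough illustration of what "adequate bandwidth" means for a T-1 line, the sketch below estimates how many simultaneous video streams one link can carry. The T-1 figures are standard (24 channels of 64 kbit/s, or 1,536 kbit/s of payload within a 1.544 Mbit/s line rate). The 384 kbit/s per video stream, the 128 kbit/s reserved for other traffic, and the function names are assumptions for illustration only, not eICU specifications.

```python
import math

# Standard T-1 carrier: 24 DS0 channels x 64 kbit/s = 1,536 kbit/s of payload
# (the 1.544 Mbit/s line rate includes 8 kbit/s of framing).
T1_PAYLOAD_KBPS = 24 * 64


def streams_per_link(link_kbps: int, stream_kbps: int, reserve_kbps: int = 0) -> int:
    """Whole video streams that fit after setting aside bandwidth for other traffic."""
    return max(0, (link_kbps - reserve_kbps) // stream_kbps)


def links_needed(concurrent_streams: int, per_link: int) -> int:
    """Number of links required to carry a given number of simultaneous streams."""
    return math.ceil(concurrent_streams / per_link)


# Assumed figures: 384 kbit/s per room video stream, with 128 kbit/s reserved
# for monitor data, orders, and record traffic on the same link.
per_link = streams_per_link(T1_PAYLOAD_KBPS, stream_kbps=384, reserve_kbps=128)
print(per_link)                   # 3
print(links_needed(8, per_link))  # 3
```

Changing the assumed stream rate or reserve shows quickly how many links a given number of simultaneously viewed rooms would require.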

Some have suggested that it would be helpful to provide remote patient access to physicians in their offices or at home. We see many advantages to a dedicated staffing center instead. When I (as a physician) am at home, in my office, or on the golf course, I am doing something else, and the staff person in the ICU (usually a nurse) has to detect a problem and decide whether or not to contact me. I then have to stop what I am doing to address that problem. Once the problem has been dealt with, I probably will return to my preferred activity, without providing follow-up. Acutely ill patients need continuous monitoring by people who have the expertise and the authority to initiate therapies and who have nothing to do but oversee the care of patients in the network.

Experience suggests that eICU personnel often detect patient problems before the on-site nurses. We have noticed that nurses in traditional ICUs often are reluctant to ask for help—usually because they don’t want to “bother” the physicians (a reaction that may be conditioned by prior inappropriate physician responses to such calls). In addition, ICU nurses today are less experienced than they were in the past, and they may not recognize problems early. Prompt detection is very important because appropriate interventions at an early stage often can restore stability and prevent complications.

The eICU program uses a suite of information technology tools to support the remote team and the on-site team. The core information system collects data from a variety of sources and reorganizes them to optimize data presentation and facilitate physician work flow. The goal is to organize data in a format that makes the information easily accessible so clinicians can see temporal and other associative relationships. As part of this application, we provide note-writing and order-writing applications that allow physicians to initiate therapies and document their actions. We also provide decision support designed to present information succinctly and to guide patient-care decisions in real time. Computer-based algorithms provide patient-specific assistance. These decision trees solicit key clinical information and, based on the data entered, provide clinicians with concrete recommendations suited to the situation. Another major focus has been on the creation of an early warning system that provides timely alerts designed to ensure that appropriate actions are initiated as soon as problems begin to develop.
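The idea of collecting data from several sources and reorganizing it so temporal relationships are visible can be sketched as a merge of time-sorted feeds followed by grouping. This is a minimal illustration, not VISICU's implementation; the `Observation` type, the parameter names, and the organ-system mapping are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime
from heapq import merge


@dataclass(frozen=True)
class Observation:
    time: datetime
    source: str   # feed the value came from, e.g. "monitor" or "lab"
    name: str     # parameter name, e.g. "heart_rate"
    value: float


# Hypothetical parameter-to-organ-system mapping; a real system would use a
# much larger, curated vocabulary.
ORGAN_SYSTEM = {
    "heart_rate": "cardiovascular",
    "mean_arterial_pressure": "cardiovascular",
    "spo2": "respiratory",
    "creatinine": "renal",
}


def build_timeline(*feeds):
    """Merge time-sorted feeds from different sources into one chronological
    stream, then group the observations by organ system for display."""
    grouped = defaultdict(list)
    for obs in merge(*feeds, key=lambda o: o.time):
        grouped[ORGAN_SYSTEM.get(obs.name, "other")].append(obs)
    return dict(grouped)


def change(observations, name):
    """First value, last value, and net change for one parameter (a crude trend)."""
    values = [o.value for o in observations if o.name == name]
    return values[0], values[-1], values[-1] - values[0]


monitor = [
    Observation(datetime(2002, 1, 1, 8), "monitor", "heart_rate", 88),
    Observation(datetime(2002, 1, 1, 10), "monitor", "mean_arterial_pressure", 61),
    Observation(datetime(2002, 1, 1, 12), "monitor", "heart_rate", 112),
]
lab = [
    Observation(datetime(2002, 1, 1, 9), "lab", "creatinine", 1.1),
    Observation(datetime(2002, 1, 1, 11), "lab", "creatinine", 1.9),
]
timeline = build_timeline(monitor, lab)
print(change(timeline["cardiovascular"], "heart_rate"))  # (88, 112, 24)
```

Because `heapq.merge` assumes each feed is already in time order, it interleaves the sources without re-sorting everything, which suits feeds that arrive chronologically.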

The goal is to move away from a system in which correct decisions depend solely on flawless behavior of busy clinicians.

Four key applications have been developed to achieve these goals. The first, called eCareManager, is a physician-focused ICU electronic medical record and tool set for executing routine tasks (e.g., monitoring, note and order writing, care planning, and communication). eCareManager was designed to support the key functions of an intensivist, on site or off site. Data display screens are organized by organ system to provide context, and data are formatted to show changes in key parameters over time. Data density is deliberately high so that important relationships stand out. Other screens show more detailed information (e.g., laboratory results and medications), with icons that flag the arrival of new information. Each patient's overall acuity is prominently displayed and is tied to specific care processes; for example, the eICU team reviews the most acutely ill patients comprehensively at least once every hour. Another screen contains all details of the care plan. ICUs have many different caregivers (e.g., intensivists, consultants, nurses, nutritionists, respiratory therapists, and pharmacists), all caring for the same patients. Each member of the care team often documents his or her activities carefully, but other members of the team do not take the time to process that information, so communication and coordination are less than optimal. For better integration, we created a single site where all team members document their input, with the goal of facilitating information transfer.

Our decision support tool, called The Source, was created with the assistance of more than 50 physicians around the country. The Source, which covers approximately 160 acute medical problems, provides succinct summaries of the literature, with an emphasis on diagnosis and therapy. Links to source material are provided for additional detail, but the primary goal is to provide real-time assistance with decision making.
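eCareManager ties displayed acuity to care processes, with the most acutely ill patients reviewed comprehensively at least once every hour. A minimal sketch of such a review worklist follows. Only the hourly interval comes from the text; the three-level acuity scale, the 4- and 8-hour intervals, and the `Patient` and `due_for_review` names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Acuity 1 = most acutely ill. Only the hourly interval for acuity 1 reflects the
# text; the other levels and intervals are illustrative placeholders.
REVIEW_INTERVAL = {
    1: timedelta(hours=1),
    2: timedelta(hours=4),
    3: timedelta(hours=8),
}


@dataclass
class Patient:
    bed: str
    acuity: int
    last_review: datetime


def due_for_review(patients, now):
    """Patients whose last comprehensive review is at least one interval old,
    ordered most acute first, then longest waiting first."""
    due = [p for p in patients if now - p.last_review >= REVIEW_INTERVAL[p.acuity]]
    return sorted(due, key=lambda p: (p.acuity, p.last_review))


now = datetime(2002, 1, 1, 12, 0)
patients = [
    Patient("ICU-1", 1, datetime(2002, 1, 1, 10, 45)),  # reviewed 75 min ago
    Patient("ICU-2", 2, datetime(2002, 1, 1, 10, 0)),   # reviewed 2 h ago
    Patient("ICU-3", 3, datetime(2002, 1, 1, 2, 0)),    # reviewed 10 h ago
    Patient("ICU-4", 1, datetime(2002, 1, 1, 11, 30)),  # reviewed 30 min ago
]
print([p.bed for p in due_for_review(patients, now)])  # ['ICU-1', 'ICU-3']
```

Sorting by acuity before waiting time keeps the sickest patients at the top of the list even when a less acute patient has waited longer.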

A second important feature is the presence of clinical algorithms that help physicians manage individual patients. These algorithms are generally based on published best practices or, where evidence is not definitive, on major consensus reports, such as recent publications by the American Thoracic Society and the American Society of Infectious Diseases on the empirical treatment of hospital-acquired pneumonia. We deconstructed these comprehensive reviews into a series of decision trees. Based on the physician's answers to key patient-specific questions, the user is directed to appropriate recommendations for prescribing antibiotics.
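A decision tree that solicits patient-specific answers and ends in a recommendation can be represented as nested question and recommendation nodes. The sketch below shows only the traversal mechanism. The questions and the "regimen" labels are hypothetical placeholders, not clinical guidance and not the content of The Source.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Union


@dataclass
class Recommendation:
    text: str


@dataclass
class Question:
    key: str                     # identifier for the patient-specific fact
    prompt: str                  # what the clinician is asked
    branches: Dict[str, "Node"]  # answer -> next node


Node = Union[Question, Recommendation]


def walk(node: Node, ask: Callable[[Question], str]) -> Recommendation:
    """Ask one question at a time and follow the matching branch until a
    recommendation is reached."""
    while isinstance(node, Question):
        answer = ask(node)
        if answer not in node.branches:
            raise ValueError(f"unexpected answer {answer!r} to {node.key!r}")
        node = node.branches[answer]
    return node


# Placeholder questions and regimens: illustrative only, not clinical guidance.
tree = Question(
    key="late_onset",
    prompt="Did the pneumonia develop late in the hospital stay?",
    branches={
        "no": Question(
            key="risk_factors",
            prompt="Are risk factors for resistant organisms present?",
            branches={"no": Recommendation("regimen A"), "yes": Recommendation("regimen B")},
        ),
        "yes": Recommendation("regimen C"),
    },
)

answers = {"late_onset": "no", "risk_factors": "yes"}
print(walk(tree, lambda q: answers[q.key]).text)  # regimen B
```

Passing `ask` as a function keeps the tree independent of the user interface: the same tree could be driven by a form, by `input(q.prompt)`, or, as here, by a dictionary of answers.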

The third major application, Smart Alerts, functions as an early warning system. Remember that all relevant clinical data (e.g., vital signs, laboratory results, and medications) are stored in a relational database. Whenever new data are entered, they are run against a complex set of rules to determine whether the ICU team (on site or remote) should be notified of an impending problem. These rules can identify values that are out of range or parameters that have changed by a predetermined amount over a fixed period of time. For example, one rule flags patients receiving heparin whose platelet count drops, alerting clinicians to the possibility of heparin-induced thrombocytopenia, an infrequent but life-threatening complication.
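The two rule types described for Smart Alerts (out-of-range values and changes over a fixed window) can be sketched as small functions evaluated whenever a reading arrives. This is a minimal illustration under stated assumptions: the rule names, the in-memory record standing in for the relational database, and every threshold (including the 50 percent platelet drop over 5 days) are hypothetical, not the product's actual rules.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Callable, List, Optional, Set


@dataclass
class Reading:
    time: datetime
    name: str
    value: float


@dataclass
class PatientRecord:
    active_meds: Set[str]
    readings: List[Reading] = field(default_factory=list)


# A rule inspects the record and a newly arrived reading; it returns an alert or None.
Rule = Callable[[PatientRecord, Reading], Optional[str]]


def range_rule(name: str, low: float, high: float) -> Rule:
    """Alert when a value falls outside [low, high]."""
    def check(record: PatientRecord, new: Reading) -> Optional[str]:
        if new.name == name and not low <= new.value <= high:
            return f"{name} out of range: {new.value}"
        return None
    return check


def drop_rule(name: str, fraction: float, window: timedelta,
              required_med: Optional[str] = None) -> Rule:
    """Alert when a value falls by more than `fraction` from its highest level
    in the preceding `window`, optionally only while `required_med` is active."""
    def check(record: PatientRecord, new: Reading) -> Optional[str]:
        if new.name != name or (required_med and required_med not in record.active_meds):
            return None
        prior = [r.value for r in record.readings
                 if r.name == name and new.time - window <= r.time < new.time]
        if prior and new.value < max(prior) * (1 - fraction):
            return f"{name} fell from {max(prior)} to {new.value}"
        return None
    return check


def on_new_data(record: PatientRecord, new: Reading, rules: List[Rule]) -> List[str]:
    """Run every rule against the incoming reading, then store the reading."""
    alerts = []
    for rule in rules:
        message = rule(record, new)
        if message:
            alerts.append(message)
    record.readings.append(new)
    return alerts


# Hypothetical thresholds chosen for illustration only.
rules = [
    range_rule("potassium", 3.0, 5.5),
    drop_rule("platelets", 0.5, timedelta(days=5), required_med="heparin"),
]
record = PatientRecord(active_meds={"heparin"})
on_new_data(record, Reading(datetime(2002, 1, 1, 8), "platelets", 240), rules)
print(on_new_data(record, Reading(datetime(2002, 1, 4, 8), "platelets", 100), rules))
# ['platelets fell from 240 to 100']
```

Building rules as small closures keeps each threshold in one place, so adding a rule means appending one line to `rules` rather than editing the evaluation loop.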

The fourth application, Smart Reports, also capitalizes on the robust information stored in the database. Smart Reports provides detailed information about outcomes, practice patterns, resource utilization, and clinical operations. For example, a report on deep-venous thrombosis prophylaxis identifies the population at risk, shows when (if at all) preventive treatment was begun during the ICU stay, and shows which agents were used. Because these reports can detail individual physicians' practice patterns, they become an effective tool for managing change.
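A report like the deep-venous thrombosis prophylaxis example can be sketched as a grouping over ICU stays: restrict to the at-risk population, then summarize per physician whether and when prophylaxis started and which agents were used. The field names, the physicians, the agent labels, and the data below are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class IcuStay:
    physician: str
    at_risk: bool                          # flagged as at risk for DVT
    hours_to_prophylaxis: Optional[float]  # None if prophylaxis never started
    agent: Optional[str]


def prophylaxis_report(stays):
    """Per physician: at-risk patients, how many received prophylaxis, median
    hours from ICU admission to the first dose, and the agents used."""
    groups = defaultdict(list)
    for stay in stays:
        if stay.at_risk:
            groups[stay.physician].append(stay)
    report = {}
    for physician, group in sorted(groups.items()):
        treated = [s for s in group if s.hours_to_prophylaxis is not None]
        hours = [s.hours_to_prophylaxis for s in treated]
        report[physician] = {
            "at_risk": len(group),
            "treated": len(treated),
            "median_hours_to_start": median(hours) if hours else None,
            "agents": sorted({s.agent for s in treated}),
        }
    return report


stays = [
    IcuStay("Dr. P", True, 6.0, "agent A"),
    IcuStay("Dr. P", True, None, None),
    IcuStay("Dr. Q", True, 30.0, "agent B"),
    IcuStay("Dr. Q", True, 10.0, "agent A"),
    IcuStay("Dr. Q", False, None, None),
]
report = prophylaxis_report(stays)
print(report["Dr. P"])
# {'at_risk': 2, 'treated': 1, 'median_hours_to_start': 6.0, 'agents': ['agent A']}
```

Reporting the median rather than the mean time to first dose keeps one very late start from dominating a physician's summary.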

The eICU solution is currently being used in five health care systems. The impact on outcomes has been studied formally at Sentara Healthcare, a six-hospital system in Virginia, where the program has been up and running for two years. This detailed study showed a 25 percent reduction in hospital mortality, a 17 percent reduction in ICU length of stay (LOS), and a 13 percent reduction in hospital LOS. The decrease in ICU LOS is attributable entirely to a reduction in the number and LOS of outliers (patients with unusually long ICU stays), which strongly suggests that early, appropriate interventions can prevent the complications that prolong ICU stays and create outliers.
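To see how a fall in mean ICU LOS can come entirely from outliers, consider the hypothetical stays below (invented for illustration, not Sentara data). The six shorter stays are identical in both groups, so the median does not move, while fewer and shorter outlier stays pull the mean down. The 10-day outlier cutoff is an arbitrary assumption.

```python
from statistics import mean, median


def summarize(los_days, outlier_cutoff):
    """Mean and median ICU length of stay, plus the number of outlier stays."""
    return {
        "mean": mean(los_days),
        "median": median(los_days),
        "outliers": sum(1 for d in los_days if d > outlier_cutoff),
    }


# Hypothetical stays in days: the same six shorter stays appear in both lists;
# only the two longest stays change.
before = [1.0, 2.0, 2.0, 3.0, 3.0, 4.0, 14.0, 20.0]
after = [1.0, 2.0, 2.0, 3.0, 3.0, 4.0, 5.0, 12.0]
print(summarize(before, outlier_cutoff=10))  # {'mean': 6.125, 'median': 3.0, 'outliers': 2}
print(summarize(after, outlier_cutoff=10))   # {'mean': 4.0, 'median': 3.0, 'outliers': 1}
```

Comparing the mean with the median in this way is one simple check on whether a change in average LOS reflects typical patients or only the tail of the distribution.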