1

Introduction

The first municipal water utility in the United States was established in Boston in 1652 to provide domestic water and fire protection (Hanke, 1972). The Boston system emulated ancient Roman water supply systems in that it was multipurpose in nature. Many water supplies in the United States were subsequently constructed in cities primarily for the suppression of fires, but most have since been adapted to serve commercial and residential properties as well. By 1860, there were 136 water systems in the United States, and most of these systems supplied water from springs low in turbidity and relatively free from pollution (Baker, 1948). However, by the end of the nineteenth century waterborne disease had become recognized as a serious problem in industrialized river valleys. This led to the more routine treatment of water prior to its distribution to consumers. Water treatment enabled a decline in the typhoid death rate in Pittsburgh, PA from 158 deaths per 100,000 in the 1880s to 5 per 100,000 in 1935 (Fujiwara et al., 1995). Similarly, both typhoid case and death rates for the City of Cincinnati declined more than tenfold during the period 1898 to 1928 due to the use of sand filtration, disinfection via chlorination, and the application of drinking water standards (Clark et al., 1984). Water treatment in the United States has without doubt proven to be a major contributor to ensuring the nation’s public health.

Since the late 1890s, concern over waterborne disease and uncontrolled water pollution has regularly translated into legislation at the federal level. The first water quality-related regulation was promulgated in 1912 under the Interstate Quarantine Act of 1893. At that time interstate railroads made a common cup available for train passengers to share drinking water while on board, a practice that was prohibited by the Act. Several sets of federal drinking water standards were issued prior to 1962, but they too applied only to interstate carriers (Grindler, 1967; Clark, 1978). By the 1960s, each of the states and trust territories had established its own drinking water regulations, although there were many inconsistencies among them. As a consequence, reported waterborne disease outbreaks declined from 45 per 100,000 people in 1938–40 to 15 per 100,000 people in 1966–70. Unfortunately, the annual number of waterborne disease outbreaks ceased to fall around 1951 and may have increased slightly after that time, leading, in part, to the passage of the Safe Drinking Water Act (SDWA) of 1974 (Clark, 1978).

Prior to the passage of the SDWA, most drinking water utilities concentrated on meeting drinking water standards at the treatment plant, even though it had long been recognized that water quality could deteriorate in the distribution system, the vast infrastructure downstream of the treatment plant that delivers water to consumers. After its passage, the SDWA was interpreted by the U.S. Environmental Protection Agency (EPA) as meaning that some federal water quality standards should be met at various points within the distribution system rather than at the water treatment plant discharge. This interpretation forced water utilities to include the entire distribution system when considering compliance with federal law. Consequently, water quality in the distribution system became a focus of regulatory action and a major interest to drinking water utilities.

EPA has promulgated many rules and regulations as a result of the SDWA that require drinking water utilities to meet specific guidelines and numeric standards for water quality, some of which are enforceable and collectively referred to as maximum contaminant levels (MCLs). As discussed in greater detail in Chapter 2, the major rules that specifically target water quality within the distribution system are the Lead and Copper Rule (LCR), the Surface Water Treatment Rule (SWTR), the Total Coliform Rule (TCR), and the Disinfectants/Disinfection By-Products Rule (D/DBPR). The LCR established monitoring requirements for lead and copper within tap water samples, given concern over their leaching from premise plumbing and fixtures. The SWTR establishes the minimum required detectable disinfectant residual, or in its absence the maximum allowed heterotrophic bacterial plate count, both measured within the distribution system. The TCR calls for the monitoring of distribution systems for total coliforms, fecal coliforms, and/or E. coli. Finally, the D/DBPR addresses the maximum disinfectant residual and concentration of disinfection byproducts (DBPs) like total trihalomethanes and haloacetic acids that are allowed in distribution systems.

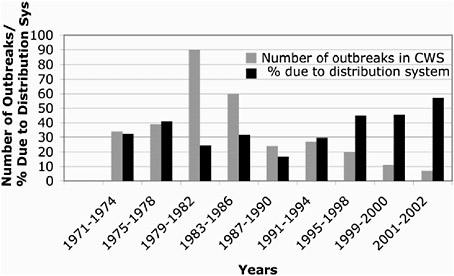

Despite the existence of these rules, for a variety of reasons most contaminants that have the potential to degrade distribution system water quality are not monitored for, putting into question the ability of these rules to ensure public health protection from distribution system contamination. Furthermore, some epidemiological and outbreak investigations conducted in the last five years suggest that a substantial proportion of waterborne disease outbreaks, both microbial and chemical, is attributable to problems within distribution systems (Craun and Calderon, 2001; Blackburn et al., 2004). As shown in Figure 1-1, the proportion of waterborne disease outbreaks associated with problems in the distribution system is increasing, although the total number of reported waterborne disease outbreaks and the number attributable to distribution systems have decreased since 1980. The decrease in the total number of waterborne disease outbreaks per year is probably attributable to improved water treatment practices and compliance with the SWTR, which reduced the risk from waterborne protozoa (Pierson et al., 2001; Blackburn et al., 2004).

There is, however, no evidence that the current regulatory program has resulted in a diminution in the proportion of outbreaks attributable to distribution system related factors. Therefore, in 2000 the Federal Advisory Committee for the Microbial/Disinfection By-products Rule recommended that EPA evaluate available data and research on aspects of distribution systems that may create risks to public health. Furthermore, in 2003 EPA committed to revising the TCR, both to consider updating the provisions about the frequency and location of monitoring, follow-up monitoring after total coliform-positive samples, and the basis of the MCL, and to address the broader issue of whether the TCR could be revised to encompass “distribution system integrity.” That is, EPA is exploring the possibility of revising the TCR to provide a comprehensive approach for addressing water quality in the distribution system environment. To aid in this process, EPA requested the input of the National Academies’ Water Science and Technology Board, which was asked to conduct a study of water quality issues associated with public water supply distribution systems and their potential risks to consumers.

FIGURE 1-1 Waterborne disease outbreaks in community water systems (CWS) associated with distribution system deficiencies. Note that the majority of the reported outbreaks have been in small community systems and that the absolute number of outbreaks has decreased since 1982. SOURCE: Data from Craun and Calderon (2001), Lee et al. (2002), and Blackburn et al. (2004).

INTRODUCTION TO WATER DISTRIBUTION SYSTEMS

Distribution system infrastructure is generally the major asset of a water utility. The American Water Works Association (AWWA, 1974) defines the water distribution system as “including all water utility components for the distribution of finished or potable water by means of gravity storage feed or pumps through distribution pumping networks to customers or other users, including distribution equalizing storage.” These systems must also be able to provide water for nonpotable uses, such as fire suppression and irrigation of landscaping.

They span almost 1 million miles in the United States (Grigg, 2005b) and include an estimated 154,000 finished water storage facilities (AWWA, 2003). As the U.S. population grows and communities expand, 13,200 miles (21,239 km) of new pipes are installed each year (Kirmeyer et al., 1994).

Because distribution systems represent the vast majority of physical infrastructure for water supplies, they constitute the primary management challenge from both an operational and public health standpoint. Furthermore, their repair and replacement represent an enormous financial liability; EPA estimates the 20-year water transmission and distribution needs of the country to be $183.6 billion, with storage facility infrastructure needs estimated at $24.8 billion (EPA, 2005a).

Infrastructure

Distribution system infrastructure is generally considered to consist of the pipes, pumps, valves, storage tanks, reservoirs, meters, fittings, and other hydraulic appurtenances that connect treatment plants or well supplies to consumers’ taps. The characteristics, general maintenance requirements, and desirable features of the basic infrastructure components in a drinking water distribution system are briefly discussed below.

Pipes

The systems of pipes that transport water from the source (such as a treatment plant) to the customer are often categorized from largest to smallest as transmission or trunk mains, distribution mains, service lines, and premise plumbing. Transmission or trunk mains usually convey large amounts of water over long distances such as from a treatment facility to a storage tank within the distribution system. Distribution mains are typically smaller in diameter than the transmission mains and generally follow the city streets. Service lines carry water from the distribution main to the building or property being served. Service lines can be of any size depending on how much water is required to serve a particular customer and are sized so that the utility’s design pressure is maintained at the customer’s property for the desired flows. Premise plumbing refers to the piping within a building or home that distributes water to the point of use. In premise plumbing the pipe diameters are usually comparatively small, leading to a greater surface-to-volume ratio than in other distribution system pipes.
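The surface-to-volume point can be made concrete with a short calculation. The sketch below is only illustrative: it assumes a full, circular pipe, for which the wetted wall area per unit volume reduces to 4/D, and the function name and example diameters are chosen for the example rather than taken from any standard.

```python
INCH_TO_M = 0.0254  # exact definition of the inch in metres


def surface_to_volume_ratio(diameter_in: float) -> float:
    """Return wall area per unit water volume (1/m) for a full circular pipe.

    For diameter D and length L, wall area is pi*D*L and volume is
    pi*D**2*L/4, so the ratio is 4/D and does not depend on length.
    """
    if diameter_in <= 0:
        raise ValueError("diameter must be positive")
    return 4.0 / (diameter_in * INCH_TO_M)


if __name__ == "__main__":
    # Illustrative diameters in inches (assumed values for the example)
    examples = [
        ("transmission main", 24.0),
        ("distribution main", 8.0),
        ("premise plumbing", 0.75),
    ]
    for label, diameter in examples:
        ratio = surface_to_volume_ratio(diameter)
        print(f"{label:>18}: {diameter:5.2f} in -> {ratio:7.1f} m^-1")
```

Because the ratio scales as 1/D, a pipe with one twenty-fourth the diameter exposes each unit of water to 24 times as much pipe wall, which is why wall reactions matter more in small-diameter premise plumbing.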

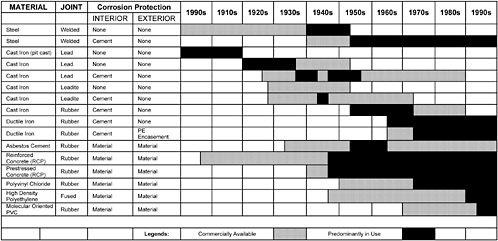

The three requirements for a pipe include its ability to deliver the quantity of water required, to resist all external and internal forces acting upon it, and to be durable and have a long life (Clark and Tippen, 1990). The materials commonly used to accomplish these goals today are ductile iron, pre-stressed concrete, polyvinyl chloride (PVC), reinforced plastic, and steel. In the past, unlined cast iron and asbestos cement pipes were frequently installed in distribution systems, and thus are important components of existing systems (see Figure 1-2). Transmission mains are frequently 24 inches (61 cm) in diameter or greater, dual-purpose mains (which are used for both transmission and distribution) are normally 16–20 inches (40.6–50.8 cm) in diameter, and distribution mains are usually 4–12 inches (10.2–30.5 cm) in diameter. Service lines and premise plumbing may be of virtually any material and are usually 1 inch (2.54 cm) in diameter or smaller (Panguluri et al., 2005).

It should be noted that this report considers service lines and premise plumbing to be part of the distribution system, and it considers the effects of service lines and premise plumbing on drinking water quality. If premise plumbing is included, the figure for total distribution system length would increase from almost 1 million miles (Grigg, 2005b) to greater than 6 million miles (Edwards et al., 2003). Premise plumbing and service lines have longer residence times, more stagnation, lower flow conditions, and elevated temperatures compared to the main distribution system (Berger et al., 2000). Inclusion of premise plumbing and service lines in the definition of a public water supply distribution system is not common because of their variable ownership, which ultimately affects who takes responsibility for their maintenance. Most drinking water utilities and regulatory bodies only take responsibility for the water delivered to the curb stop, which generally captures only a portion of the service line. The portion of the service line not under control of the utility and all of the premise plumbing are entirely the building owner’s responsibility.

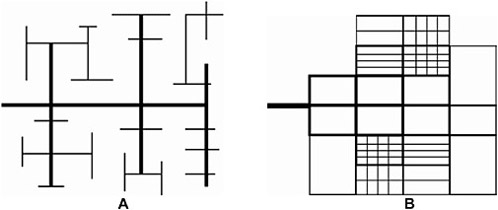

Pipe-Network Configurations

The two basic configurations for most water distribution systems are the branch and grid/loop (see Figure 1-3). A branch system is similar to that of a tree branch, in which smaller pipes branch off larger pipes throughout the service area, such that the water can take only one pathway from the source to the consumer. This type of system is most frequently used in rural areas. A grid/looped system, which consists of connected pipe loops throughout the area to be served, is the most widely used configuration in large municipal areas. In this type of system there are several pathways that the water can follow from the source to the consumer. Looped systems provide a high degree of reliability should a line break occur because the break can be isolated with little impact on consumers outside the immediate area (Clark and Tippen, 1990; Clark et al., 2004). Also, by keeping water moving, looping reduces some of the problems associated with water stagnation, such as adverse reactions with the pipe walls, and it increases fire-fighting capability. However, looped systems can still contain dead ends, especially in suburban areas with features such as cul-de-sacs, and these dead ends have associated water quality problems. Most systems are a combination of both looped and branched portions.
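The difference between the two configurations can be illustrated by treating the network as a graph and counting the routes from the source to a consumer. The following is a minimal sketch under stated assumptions: the junction names and the two tiny example networks are hypothetical, and real systems would be analyzed with a hydraulic model rather than a path count.

```python
from collections import defaultdict


def build_graph(edges):
    """Build an undirected adjacency map from (junction, junction) pipe segments."""
    graph = defaultdict(set)
    for a, b in edges:
        graph[a].add(b)
        graph[b].add(a)
    return graph


def count_simple_paths(graph, source, target, visited=frozenset()):
    """Count routes from source to target that never revisit a junction."""
    if source == target:
        return 1
    visited = visited | {source}
    return sum(
        count_simple_paths(graph, nxt, target, visited)
        for nxt in graph[source]
        if nxt not in visited
    )


# Hypothetical networks: "S" is the source, "D" is the consumer of interest.
BRANCHED = [("S", "A"), ("A", "B"), ("A", "C"), ("C", "D")]
LOOPED = [("S", "A"), ("A", "B"), ("A", "C"), ("B", "D"), ("C", "D")]

if __name__ == "__main__":
    for name, edges in [("branched", BRANCHED), ("looped", LOOPED)]:
        paths = count_simple_paths(build_graph(edges), "S", "D")
        print(f"{name}: {paths} path(s) from S to D")

    # Simulate a break on the pipe feeding D from C in each network.
    for name, edges in [("branched", BRANCHED), ("looped", LOOPED)]:
        remaining = [e for e in edges if e != ("C", "D")]
        paths = count_simple_paths(build_graph(remaining), "S", "D")
        print(f"{name} after break on C-D: {paths} path(s)")
```

In the branched example the single route disappears when the C-D segment is taken out of service, whereas the looped example retains a route through B, which is the reliability benefit described above.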

Design of water networks is very much dependent on the specific topography and the street layout in a given community. A typical design might consist of transmission mains spaced from 1.5 to 2 miles (2,400 to 3,200 m) apart with dual-service mains spaced 3,000 to 4,000 feet (900 to 1,200 m) apart. Service mains should be located in every street.

FIGURE 1-3 Two Basic Configurations for Water Distribution Systems. (A) Branched configuration. (B) Looped configuration.

Storage Tanks and Reservoirs

Storage tanks and reservoirs are used to provide storage capacity to meet fluctuations in demand (or shave off peaks), to provide reserve supply for fire-fighting use and emergency needs, to stabilize pressures in the distribution system, to increase operating convenience and provide flexibility in pumping, to provide water during source or pump failures, and to blend different water sources. The recommended location of a storage tank is just beyond the center of demand in the service area (AWWA, 1998). Elevated tanks are used most frequently, but other types of tanks and reservoirs include in-ground tanks and open or closed reservoirs. Common tank materials include concrete and steel.

An issue that has drawn a great deal of interest is the problem of low water turnover in these facilities resulting in long detention times. Much of the water volume in storage tanks is dedicated to fire protection, and unless utilities properly manage their tanks to control water quality, there can be problems attributable to both water aging and inadequate water mixing. Excessive water age can be conducive to depletion of the disinfectant residual, leading to biofilm growth, other biological changes in the water including nitrification, and the emergence of taste and odor problems. Improper mixing can lead to stratification and large stagnant (dead) zones within the bulk water volume that have depleted disinfectant residual. As discussed later in this report, neither historical designs nor operational procedures have adequately maintained high water quality in storage tanks (Clark et al., 1996).

Security is an important issue with both storage tanks and pumps because of their potential use as a point of entry for deliberate contamination of distribution systems.

Pumps

Pumps are used to impart energy to the water in order to boost it to higher elevations or to increase pressure. Pumps are typically made from steel or cast iron. Most pumps used in distribution systems are centrifugal in nature, in that water from an intake pipe enters the pump through the action of a “spinning impeller” where it is discharged outward between vanes and into the discharge piping. The cost of power for pumping constitutes one of the major operating costs for a water supply.

Valves

The two types of valves generally utilized in a water distribution system are isolation valves (or stop or shutoff valves) and control valves. Isolation valves (typically either gate valves or butterfly valves) are used to isolate sections for maintenance and repair and are located so that the areas isolated will cause a minimum of inconvenience to other service areas. Maintenance of the valves is one of the major activities carried out by a utility. Many utilities have a regular valve-turning program in which a percentage of the valves are opened and closed on a regular basis. It is desirable to turn each valve in the system at least once per year. The implementation of such a program ensures that water can be shut off or diverted when needed, especially during an emergency, and that valves have not been inadvertently closed.

Control valves are used to control the flow or pressure in a distribution system. They are normally sized based on the desired maximum and minimum flow rates, the upstream and downstream pressure differentials, and the flow velocities. Typical types of control valves include pressure-reducing, pressure-sustaining, and pressure-relief valves; flow-control valves; throttling valves; float valves; and check valves.

Most valves are either steel or cast iron, although those found in premise plumbing to allow for easy shut-off in the event of repairs are usually brass. They exist throughout the distribution system and are more widely spaced in the transmission mains compared to the smaller-diameter pipes.

Other appurtenances in a water system include blow-off and air-release/vacuum valves, which are used to flush water mains and release entrained air. On transmission mains, blow-off valves are typically located at every low point, and an air-release/vacuum valve at every high point on the main. Blow-off valves are sometimes located near dead ends where water can stagnate or where rust and other debris can accumulate. Care must be taken at these locations to prevent unprotected connections to sanitary or storm sewers.

Hydrants

Hydrants are primarily part of the fire-fighting aspect of a water system. Proper design, spacing, and maintenance are needed to ensure an adequate flow to satisfy fire-fighting requirements. Fire hydrants are typically exercised and tested annually by water utility or fire department personnel. Fire flow tests are conducted periodically to satisfy the requirements of the Insurance Services Office or as part of a water distribution system calibration program (ISO, 1980). Fire hydrants are installed in areas that are easily accessible by fire fighters and are not obstacles to pedestrians and vehicles. In addition to being used for fire fighting, hydrants are also used for routine flushing programs, emergency flushing, preventive flushing, testing and corrective action, and for street cleaning and construction projects (AWWA, 1986).

Infrastructure Design and Operation

The function of a water distribution system is to deliver water to all customers of the system in sufficient quantity for potable drinking water and fire protection purposes, at the appropriate pressure, with minimal loss, of safe and acceptable quality, and as economically as possible. To convey water, pumps must provide working pressures, pipes must carry sufficient water, storage facilities must hold the water, and valves must open and close properly. Indeed, the carrying capacity of a water distribution system is defined as its ability to supply adequate water quantity and maintain adequate pressure (Male and Walski, 1991). Adequate pressure is defined in terms of the minimum and maximum design pressure supplied to customers under specific demand conditions. The maximum pressure is normally in the range of 80 to 100 psi; for example, the Uniform Plumbing Code requires that water pressure not exceed 80 psi (552 kPa) at service connections, unless the service is provided with a pressure-reducing valve. The minimum pressure during peak hours is typically in the range of 40 to 50 psi (276–345 kPa), while the recommended minimum pressure during fire flow is 20 psi (138 kPa).
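The pressure ranges quoted above lend themselves to a simple check. The sketch below is an illustration only: the function names and the choice to use the low end of each quoted range as the threshold are assumptions made for the example, not a design standard.

```python
PSI_TO_KPA = 6.894757  # standard conversion factor, kPa per psi

# Thresholds drawn from the ranges quoted in the text (assumed cut-offs).
MAX_SERVICE_PSI = 80.0    # upper limit without a pressure-reducing valve
MIN_PRESSURE_PSI = {
    "peak_hour": 40.0,    # low end of the typical 40 to 50 psi minimum
    "fire_flow": 20.0,    # recommended minimum during fire flow
}


def psi_to_kpa(psi: float) -> float:
    """Convert pressure from pounds per square inch to kilopascals."""
    return psi * PSI_TO_KPA


def classify_pressure(psi: float, condition: str = "peak_hour") -> str:
    """Return 'too_low', 'adequate', or 'too_high' for a service pressure."""
    if condition not in MIN_PRESSURE_PSI:
        raise ValueError(f"unknown demand condition: {condition!r}")
    if psi > MAX_SERVICE_PSI:
        return "too_high"
    if psi < MIN_PRESSURE_PSI[condition]:
        return "too_low"
    return "adequate"


if __name__ == "__main__":
    for psi, condition in [(60, "peak_hour"), (25, "peak_hour"), (25, "fire_flow"), (95, "peak_hour")]:
        status = classify_pressure(psi, condition)
        print(f"{psi:>3} psi ({psi_to_kpa(psi):5.0f} kPa) during {condition}: {status}")
```

The same 25 psi reading is classed differently depending on the demand condition, which reflects the text's point that adequacy is defined relative to specific demand conditions.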

Residential Drinking Water Provision

Of the 34 billion gallons of water produced daily by public water systems in the United States, approximately 63 percent is used by residential customers for indoor and outdoor purposes. Mayer et al. (1999) evaluated 1,188 homes from 14 cities across six regions of North America and found that 42 percent of annual residential water use was for indoor purposes and 58 percent for outdoor purposes. Outdoor water use varies quite significantly from region to region and includes irrigation. Of the indoor water use, less than 20 percent is for consumption or related activities, as shown below:

| Category of indoor use | Share of indoor use | Components |
|---|---|---|
| Human Consumption or Related Use | 17.1 % | Faucet use – 15.7 %; Dishwasher – 1.4 % |
| Human Contact Only | 18.5 % | Shower – 16.8 %; Bath – 1.7 % |
| Non-Human Ingestion or Contact Uses | 64.3 % | Toilet – 26.7 %; Clothes Washer – 21.7 %; Leaks – 13.7 %; Other – 2.2 % |

Most of the water supplied to residences is used primarily for laundering, showering, lawn watering, flushing toilets, or washing cars, and not for consumption. Nonetheless, except in a few rare circumstances, distribution systems are assumed to be designed and operated to provide water of a quality acceptable for human consumption. Normal household use is generally in the range of 200 gallons per day (757 L per day) with a typical flow rate of 2 to 20 gallons per minute (gpm) [7.57–75.7 L per minute (Lpm)]; fire flow can be orders of magnitude greater than these levels, as discussed below.
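The indoor end-use percentages listed above can be tallied to confirm the category totals and to estimate how small the consumption-related share is relative to all residential use. This is a rough sketch: the dictionary keys are labels invented for the example, and multiplying the indoor share (42 percent) by the consumption-related share assumes both figures describe the same set of homes.

```python
# Indoor end-use shares (percent of indoor use) as listed in the text.
INDOOR_END_USES = {
    "consumption_or_related": {"faucet": 15.7, "dishwasher": 1.4},
    "contact_only": {"shower": 16.8, "bath": 1.7},
    "non_ingestion_non_contact": {
        "toilet": 26.7,
        "clothes_washer": 21.7,
        "leaks": 13.7,
        "other": 2.2,
    },
}

INDOOR_SHARE_OF_RESIDENTIAL = 42.0  # percent of annual residential use


def category_totals(end_uses):
    """Sum the component percentages within each category."""
    return {
        category: round(sum(components.values()), 1)
        for category, components in end_uses.items()
    }


def share_of_total_residential(indoor_percent, indoor_share=INDOOR_SHARE_OF_RESIDENTIAL):
    """Express a percentage of indoor use as a percentage of all residential use."""
    return indoor_percent * indoor_share / 100.0


if __name__ == "__main__":
    totals = category_totals(INDOOR_END_USES)
    for category, percent in totals.items():
        print(f"{category}: {percent} % of indoor use")
    print(f"sum of categories: {round(sum(totals.values()), 1)} %")
    consumption = share_of_total_residential(totals["consumption_or_related"])
    print(f"consumption-related share of all residential use: {consumption:.1f} %")
```

Under these assumptions, only about 7 percent of residential water is used for consumption or related purposes, even though the whole supply is treated and distributed to potable quality.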

Fire Flow Provision

Besides providing drinking water, a major function of most distribution systems is to provide adequate standby fire flow, the standards for which are governed by the National Fire Protection Association (NFPA, 1986). Fire-flow requirements for a single family house vary from 750 to 1,500 gpm (2,839–5,678 Lpm); for multi-family structures the values range from 2,000 to 5,000 gpm (7,570–18,927 Lpm); for commercial structures the values range from 2,000 to 10,000 gpm (7,570–37,854 Lpm), and for industrial structures the values range from 3,000 to over 10,000 gpm (11,356–37,854 Lpm) (AWWA, 1998). The duration for which these fire flows must be sustained normally ranges from three to eight hours.
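To give a sense of scale, the fire-flow rates and durations quoted above can be converted into volumes and compared with ordinary household demand. The sketch below is illustrative only: the function names are invented for the example, and the 200 gallons per day household figure is the typical value cited earlier in this chapter rather than a design parameter.

```python
GAL_TO_L = 3.785411784          # litres per U.S. gallon
HOUSEHOLD_USE_GAL_PER_DAY = 200.0  # typical household use cited in the text


def gpm_to_lpm(rate_gpm: float) -> float:
    """Convert a flow rate from gallons per minute to litres per minute."""
    return rate_gpm * GAL_TO_L


def fire_flow_volume_gal(rate_gpm: float, duration_h: float) -> float:
    """Volume (gallons) needed to sustain a fire flow for a given duration."""
    if rate_gpm < 0 or duration_h < 0:
        raise ValueError("rate and duration must be non-negative")
    return rate_gpm * duration_h * 60.0


def equivalent_household_days(volume_gal: float) -> float:
    """Express a volume as days of typical use by one household."""
    return volume_gal / HOUSEHOLD_USE_GAL_PER_DAY


if __name__ == "__main__":
    # Low and high ends of the single-family range and duration range in the text
    for rate_gpm, duration_h in [(750, 3), (1500, 8)]:
        volume = fire_flow_volume_gal(rate_gpm, duration_h)
        days = equivalent_household_days(volume)
        print(
            f"{rate_gpm} gpm ({gpm_to_lpm(rate_gpm):,.0f} Lpm) for {duration_h} h "
            f"= {volume:,.0f} gal, about {days:,.0f} household-days of use"
        )
```

Even at the low end, a 750 gpm flow sustained for three hours requires 135,000 gallons, equivalent to 675 days of typical use by one household, which helps explain why standby fire capacity dominates storage and main sizing.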

In order to satisfy this need for adequate standby capacity and pressure, most distribution systems use standpipes, elevated tanks, and large storage reservoirs. Furthermore, the sizing of water mains is partly based on fire protection requirements set by the Insurance Services Office (AWWA, 1986; Von Huben, 1999). (The minimum flow that the water system can sustain for a specific period of time governs its fire protection rating, which then is used to set the fire insurance rates for the communities that are served by the system.) As a consequence, fire flow governs much of the design of a distribution system, especially for smaller systems. A study conducted by the American Water Works Association Research Foundation confirmed the impact of fire-flow capacity on the operation of, and the water quality in, drinking water networks (Snyder et al., 2002). It found that although the amount of water used for fire fighting is generally a small percentage of the annual water consumed, the required rates of water delivery for fire fighting have a significant and quantifiable impact on the size of water mains, tank storage volumes, water age, and operating and maintenance costs. In general, nearly 75 percent of the capacity of a typical drinking water distribution system is devoted to fire fighting (Walski et al., 2001).

The effect of designing and operating a system to maintain adequate fire flow and redundant capacity is that there are long transit times between the treatment plant and the consumer, which may be detrimental to meeting drinking water MCLs (Clark and Grayman, 1998; Brandt et al., 2004). Snyder et al. (2002) recommended that water systems evaluate existing storage tanks to determine if modification or elimination of the tanks was feasible. Water efficient fire suppression technologies exist that use less water than conventional standards. In particular, the universal application of automatic sprinkler systems provides the most proven method for reducing loss of life and property due to fire, while at the same time providing faster response to the fire and requiring significantly less water than conventional fire-fighting techniques. Snyder et al. (2002) also recommended that the universal application of automatic fire sprinklers be adopted by local jurisdictions for homes as well as in other buildings.

There is a growing recognition that embedded designs in most urban areas have resulted in distribution systems that have long water residence times due to the large amounts of storage required for fire fighting capacity. More than ten years ago, Clark and Grayman (1992) expressed concern that long residence times resulting from excess capacity for fire fighting and other municipal uses would also provide optimum conditions for the formation of DBPs and the regrowth of microorganisms. They hypothesized that eventually the drinking water industry would be in conflict over protecting public health and protecting public safety.

Non-conventional water distribution system designs that might address some of these issues are discussed below including decentralized treatment, dual distribution systems, and an approach that utilizes enhanced treatment to solve distribution system water quality problems. These alternative concepts were not part of the committee’s statement of task, such that addressing them extensively is beyond the scope of the report. However, their potential future role in abating the problems discussed above warrants mention here and further consideration by EPA and water utilities.

Decentralized Treatment

Distributed or decentralized treatment systems refer to those in which a centralized treatment plant is augmented with additional treatment units that are located at various key points throughout the distribution system. Usually, the distributed units provide advanced treatment to meet stringent water quality requirements at consumer endpoints that would otherwise be in violation. Distributed units would be located either at the point-of-entry of households, for example, or at a more upstream location from which different water uses could be served. This might be at the neighborhood or district level, depending on technological and financial requirements.

How the decentralized treatment concept might be implemented in water systems worldwide is still at a theoretical stage (e.g., Norton, 2006; Weber, 2002, 2004). Weber’s approach involves having distributed networks (Distributed Optimal Technologies Networks or DOT-Nets) in which water supply is optimized by separately treating several components of water and wastewater streams using decentralized treatment units. The approach largely views water supply, treatment, and waste disposal as different aspects of the same integrated system. Box 1-1 describes the concepts in detail.

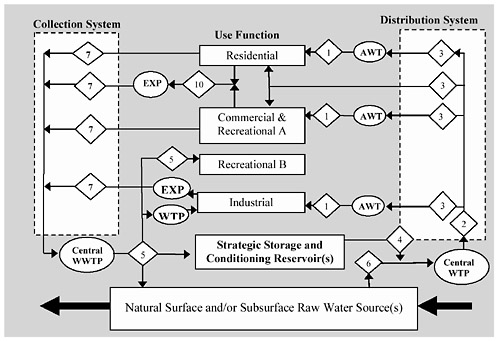

BOX 1-1 Distributed Optimal Technologies Networks

DOT-Net is a decentralized treatment concept in which water supplies are segregated based on uses (or use functions) and levels of quality, to which a qualitative ranking on a scale of 1 to 10 is assigned, with 1 being the best quality and 10 the worst. The use functions include potable water, black water, gray water, various industrial discharges, etc. For example, water extracted from a local surface water source might be given a rank of 6. Following centralized treatment, the water would have a rank of 2. There is then an assumption that this supply will be degraded in distribution systems to a level that is generally not acceptable as potable water (say 3). To address this, advanced treatment technologies such as membranes and super-critical water treatment would be located as satellite systems close to the point of use, producing a water of ranking 1.

This concept hinges upon segregating water into the various use functions and developing and deploying the technology needed to bring about the desired water quality for each function. For example, water for drinking, showering, and cooking would require the highest level of quality and should be treated appropriately using satellite systems and advanced technologies. Advanced technologies exist for the treatment, analysis, and control of personal water including sophisticated electromechanical systems for rapid monitoring and feedback. The existing distribution system would still be used, but would be supplemented with treatment units to treat a portion of the water supply. For example, satellite treatment units may be located in large buildings with a high population density or distributed over neighborhoods. The concept extends to the waste streams generated by each type of water use, as shown in Figure 1-4.

Thus, advanced water treatment would be used not only prior to water delivery, but also upon water disposal but before it is discharged into a centralized collection system. For example, if a certain commercial enterprise produced a highly degraded waste stream (with a ranking of 10), a satellite unit could be used to raise the quality to that of the other common waste streams (say 7). Such advanced and other wastewater treatment would be implemented in a manner to eventually resupply the source waters or to

The principal trade-off associated with utilizing such systems is the alternative cost associated with upgrading large centralized treatment facilities and distribution networks. On a per person basis, it is less expensive to build one large treatment system than to build several small ones. In addition to these costs, multiple or new pipe networks are a necessary part of the design framework for these satellite systems. That is, new piping would be needed from the advanced water treatment system into the household (or industry), although it would travel a short distance and would be a small percentage of the total plumbing for the building. It is possible that investing in larger satellite systems with separate piping might offer a cost advantage compared to small satellite systems, based on economies of scale (Norton, 2006). Clearly, there would have to be a policy to avoid social injustice such that decentralized treatment when implemented is affordable to the average user.

A second important consideration is the need to monitor the satellite systems, and whose responsibility that monitoring would be. Maintenance activities, such as repair and replacement of a new piping system associated with satellite treatment, would also have to be well planned in order to prevent contamination of the distribution system downstream of the treatment unit. Incorporation of remote control technologies and other monitoring adaptations could reduce the need for human intervention while ensuring that the units operate satisfactorily.

The decentralized treatment concept was tested on a limited basis in the field (from October 1995 to September 1996) by Lyonnaise des Eaux-CIRSEE in the municipality of Dampierre, France. A one-year study was carried out using an ultrafiltration/nanofiltration system to treat water for 121 homes through 13,123 ft (4,000 m) of pipe at an average flow of 22.0 gpm (5 m3/h) and a peak flow of 44.0 gpm (10 m3/h). The ultrafiltration/nanofiltration system was fully automatic and monitored by remote control. Results from the study were very satisfactory from a quality perspective, and the cost calculations showed that the system was cost competitive with centralized treatment if production volumes were greater than 5,284,020 gal/year (20,000 m3/year) (Levi et al., 1997).

A more prospective example is provided by the Las Vegas Valley Water District (LVVWD) and the Southern Nevada Water Authority (SNWA), which serve one of the most rapidly growing areas in the United States (see Box 1-2). Because of concerns over proposed MCLs for DBPs and the compliance framework being established by the Stage 2 D/DBPR, Las Vegas is investigating the application of decentralized or satellite water treatment systems within its distribution network. Currently only about 10 percent of the network is having trouble with compliance, but it is anticipated that, as the system expands, more and more of the network will be out of compliance.

Enhanced Treatment

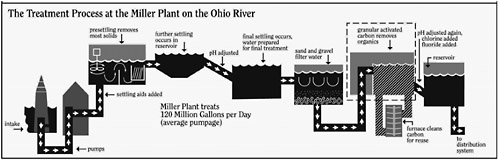

A third approach to slowing water quality deterioration involves centralized treatment options that can improve the quality of water to such a degree that formation of DBPs and loss of disinfectant residual are minimized. This approach is practiced by the Greater Cincinnati Water Works, which serves a large metropolitan area consisting of urban and suburban areas with potable water and fire flow protection. The distribution system is served by two treatment plants, the largest being the Miller Plant, which has a design capacity of 220 mgd (833,000 m3/day) with an average water production of 133 mgd (428,000 m3/day). The Miller Plant (Figure 1-5) has 12 granular activated carbon contactors, each containing 21,000 ft3 (600 m3) of GAC. During normal plant operation, between seven and 11 of these contactors are used (in parallel) to process water. Once GAC becomes spent, it is reactivated using an on-site reactivation system. GAC treatment reduces total organic carbon (TOC) levels from an annual average of 1.5 mg/L prior to GAC treatment to a combined five-year average of 0.6 mg/L after GAC treatment. The plant is one of the world’s largest municipal GAC potable water treatment systems (Moore et al., 2003).

| BOX 1-2 Application of Decentralized Treatment within the Las Vegas Valley Water District |
| --- |
| Between 1989 and 2004, Las Vegas grew faster than any other metropolitan area in the U.S. As a result, during this period LVVWD more than doubled its service area population. In 1989, the service area population was 558,000 but by 2004 it had grown to 1,209,000 (Jacobsen and Kamojjala, 2005). The LVVWD receives its water on a wholesale basis from the Southern Nevada Water Authority (SNWA), which operates two water treatment plants with a combined design capacity of 900 mgd (3.41 mil m3 per day). The source of water is Lake Mead. The treatment train at both plants is nearly identical, consisting of ozone and direct filtration. Chlorine is utilized as the final disinfectant. Coagulation dosages are limited and TOC removals through the biologically active filters range from 10 to 30 percent. |
| The distribution system consists of 3,300 miles (5,280 km) of pipe and 29 water storage reservoirs. The system experiences long residence times (in some cases greater than a week), resulting in an increase in water temperature as it moves through the system. Consequently it is difficult to maintain chlorine residuals in some parts of the system, necessitating the addition of chlorine at many locations. Currently the system is in compliance with all Safe Drinking Water Act regulations. However, based on distribution system hydraulic modeling estimates of detention time and known formation rates for trihalomethanes and haloacetic acids, it is expected that some areas in the LVVWD will not comply with the DBP MCLs and compliance framework being established by the Stage 2 D/DBPR. |
| In order to meet Stage 2 regulations, the LVVWD/SNWA evaluated several alternatives to change its treatment and/or its residual disinfectant. Advanced oxidation, granular activated carbon (GAC) adsorption, enhanced coagulation (including addition of clarification), and nanofiltration were considered possible changes to treatment that could be helpful. In addition, operational and residual disinfectant changes were considered, such as conversion from free chlorine to chloramine and a reduction in distribution system detention time. A more unconventional option considered by LVVWD/SNWA was targeted or “hot-spot” treatment using several smaller-scale treatment systems that would reduce the concentration of DBPs in those areas of the distribution system that might exceed the MCLs established by the Stage 2 D/DBPR. |
| Ultimately, the LVVWD/SNWA chose to use the “hot-spot” treatment approach for the following reasons. It would provide a cost-effective approach by only treating water where needed at specific locations, instead of treating water for the entire system. It would reduce residuals production from treatment as compared to intensive organics removal. And it would provide for the continuous use of chlorine and avoid potential nitrification problems. The decentralized treatment options being considered are (1) DBP and natural organic material (NOM) removal by GAC adsorption, (2) DBP and NOM removal by biologically active carbon (BAC), and (3) control of DBP reformation after treatment by GAC and BAC. The American Water Works Association Research Foundation has funded a project that will test the concept of decentralized treatment and its application to the LVVWD (Jacobsen et al., 2005). |

Las Vegas and Cincinnati have chosen distinctly different approaches to meeting and solving the residence time and excess capacity problem. The Las Vegas system is currently conducting studies to explore the possibility of applying treatment at various locations. Decentralized treatment units would be installed at points in the system where DBPs might exceed the Stage 2 D/DBPR. Research is being conducted that would focus primarily on removing the precursor material in the water in order to keep the DBP formation potential below regulated limits. A key aspect of this strategy is to use distribution system models and GIS technology to monitor residence time and DBP formation potential in the system. Cincinnati, on the other hand, has chosen the more traditional but very effective approach of removing DBP precursor material prior to distribution and thereby minimizing the potential formation of DBPs throughout the system. Although the Greater Cincinnati Water Works has a very large distribution system composed of a wide variety of pipe materials, the utility routinely provides water well below the total trihalomethane level of 80 μg/L and the total haloacetic acid level of 60 μg/L at all locations in the system.

Dual Distribution Systems

Another option for design and operation of distribution systems is the creation of dual systems in which separate pipe networks are constructed for potable and nonpotable water. In these types of systems, reclaimed wastewater or water of sub-potable quality may be used for fire fighting and other special purposes such as irrigation of lawns, parks, roadway borders and medians; air conditioning and industrial cooling towers; stack gas scrubbing; industrial processing; toilet and urinal flushing; construction; cleansing and maintenance, including vehicle washing; scenic waters and fountains; and environmental and recreational purposes. The design of these systems differentiates dual systems from most community water supplies, in which one distribution system provides potable water to serve all purposes.

Most dual systems in use today were installed by adding reclaimed water lines alongside (but not connected to) potable water lines already in place. For example, in St. Petersburg, Florida, a reclaimed water distribution system was placed into operation in 1976, and fire protection is provided from both the potable and reclaimed water lines. San Francisco has a nonpotable system, constructed after the 1906 earthquake, that serves the downtown area to augment fire protection. Rouse Hill, Australia was the first community to plan a dual water system with the reclaimed water lines to serve all nonpotable uses, including fire protection, such that the potable water line can have much smaller pipe diameters. Both the potable and nonpotable systems have service reservoirs for meeting diurnal variations in demand, and if a shortage of water for fire protection occurs, potable water can be transferred to the nonpotable system.

In a recent exchange of letters in the Journal of the American Water Works Association, Dr. Dan Okun (Okun, 2005) and Dr. Neil Grigg (Grigg, 2005a) addressed the merits of dual distribution systems for U.S. drinking water utilities, especially given that ingestion and human consumption are minor uses in most urban areas (see above). The argument is that because existing water distribution systems are designed primarily for fire protection, the majority of the distribution system uses pipes that are much larger than would be needed if the water were intended only for personal use. This leads to residence times of weeks in traditional systems versus potentially hours in a system composed of much smaller pipes. In the absence of smaller-sized distribution systems, utilities have had to implement flushing programs and use higher dosages of disinfectants to maintain water quality in distribution systems. This has the unfortunate side effect of increasing DBP formation as well as taste and odor problems, which contribute to the public’s perception that the water quality is poor. Furthermore, large pipes are generally cement-lined or unlined ductile iron pipe, typically with more than 300 joints per mile. These joints are frequently not watertight, leading to water losses as well as providing an opportunity for external contamination of finished water.
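The link between pipe diameter and residence time can be illustrated with a simple plug-flow estimate, in which residence time equals pipe volume divided by flow. The sketch below is illustrative only: the pipe length, diameters, and flow are hypothetical values chosen for the arithmetic, not figures from Okun (2005) or Grigg (2005a), and a real network would require a hydraulic model rather than a single-pipe calculation.

```python
import math


def residence_time_hours(diameter_m: float, length_m: float, flow_m3_per_h: float) -> float:
    """Plug-flow residence time: pipe volume divided by volumetric flow."""
    volume_m3 = math.pi * (diameter_m / 2.0) ** 2 * length_m
    return volume_m3 / flow_m3_per_h


# Hypothetical values for illustration only (not taken from the report).
LENGTH_M = 1000.0      # length of the pipe segment
FLOW_M3_PER_H = 1.0    # same potable demand carried by either pipe

large_pipe_h = residence_time_hours(0.20, LENGTH_M, FLOW_M3_PER_H)  # 200 mm main sized for fire flow
small_pipe_h = residence_time_hours(0.05, LENGTH_M, FLOW_M3_PER_H)  # 50 mm potable-only line

print(f"200 mm pipe: {large_pipe_h:.1f} h")
print(f" 50 mm pipe: {small_pipe_h:.1f} h")
print(f"ratio: {large_pipe_h / small_pipe_h:.0f}x")
```

Because pipe volume scales with the square of the diameter, reducing the diameter by a factor of four at the same demand cuts the plug-flow residence time by a factor of sixteen in this simplified case.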

From an engineering perspective it seems intuitively obvious that it is most efficient to satisfy all needs by installing one pipe and to minimize the number of pipe excavations. This philosophy worked well in the early days of water system development. However, it has resulted in water systems with long residence times (and their negative consequences) under normal water use patterns and a major investment in above-ground (pumps and storage tanks) and below-ground (transmission mains, distribution pipes, service connections, etc.) infrastructure. Therefore as suggested in Okun (2005) it may be time to look at alternatives for supplying the various water needs in urban areas such as dual distribution systems. The water reuse aspect of dual systems is particularly attractive in arid sections of the U.S. that otherwise require transportation of large quantities of water into these areas.

Although there are many examples of water reuse in the United States (EPA, 1992), not many of them involve the use of a dual distribution system. The City of St. Petersburg, which operates one of the largest urban reuse systems in the world, provides reclaimed water to more than 7,000 residential homes and businesses. In 1991, the city provided approximately 21 mgd (79,500 m3/day) of reclaimed water for irrigation needs of individual homes, condominiums, parks, school grounds, and golf courses; cooling tower make-up; and supplemental fire protection. In Irving, Texas, advanced secondary treated wastewater and raw water from the Elm Fork of the Trinity River are used to irrigate golf courses, medians, and greenbelt areas, and to maintain water levels at the Las Colinas Development. The reclaimed water originates from the 11.5 mgd (43,500 m3/day) Central Regional wastewater treatment plant. A third example is provided in Hilton Head, South Carolina, where about 5 mgd (18,900 m3/day) of wastewater is being used for wetlands applications and golf course irrigation. All of the wastewater treatment systems have been upgraded to tertiary systems, and an additional flow rate of the same size as the first is being planned. Perhaps the most famous water reuse operation using a dual distribution system is the Irvine Ranch Water District in Irvine, California (see Box 1-3). There is a recent trend in California toward the use of more dual distribution systems, particularly in new developments, as a result of statutory requirements to use reclaimed water in lieu of domestic water for non-potable uses (California Water Code Section 13550-13551) and because of the need to conserve water to meet increasing local and regional water demands.

The potential advantages of using dual distribution systems include the fact that much smaller volumes of water would need to be treated to high standards, which would result in cost savings at the treatment plant compared with treating all water supplied to those standards. Another advantage is that flow in the potable line would be expected to be relatively constant compared to a traditional system where large quantities of water would need to be transferred over short time periods (e.g., during fires). The associated flow and pressure changes in a pipe carrying the total water needs for a community are expected to be much greater than in the potable line of a dual distribution system. As discussed later in this document, there is evidence that pressure transients may result in intrusion of contaminated water. Furthermore, use of improved materials in the newer, smaller distribution system would minimize water degradation, loss, and intrusion.

However, the creation of dual distribution systems necessitates the retrofitting of an existing water supply system and reliance on existing pipes to provide non-potable supply obtained from wastewater or other sources. Large costs would be incurred when installing the new, small diameter pipe for potable water, disconnecting the existing system from homes and other users so that it could be used reliably for only nonpotable needs, and other retrofitting measures. These costs can be reduced if a new system is used only for reclaimed water distribution, as was done at Irvine Ranch, but this of course would not decrease the extent of quality degradation now experienced in existing systems. It is also critical to differentiate between full and partial adoption of dual distribution systems, the latter of which has occurred in several cities. For example, if a new nonpotable line is installed alongside an existing potable line, the nonpotable line can draw demand away from the potable line, thereby increasing its detention time and aggravating water quality deterioration in the potable line. Furthermore, if the potable system is still used for fire flow, which generally governs pipe sizing, many of the advantages of the dual system will not be realized.

Dual systems may be most advantageous in new communities where neither type of distribution system currently exists. New communities could better optimize their systems because both types of piping systems could be built simultaneously. The cost savings from the need to treat a much smaller portion of the total water to a higher quality could partially offset the costs of constructing two systems. Clearly, better understanding the technological potential and economic consequences of dual distribution systems is an important research goal.

| BOX 1-3 Irvine Ranch Water District |
| --- |
| The Irvine Ranch Water District is one of the first water districts in the United States to practice wastewater reuse. It serves 316,287 people over 133 square miles (344.5 km2), making it about a quarter the size of the Los Angeles Department of Water and Power. There are 85,500 domestic connections and 3,700 recycled connections. As of 2003, there are 1,075 miles (1,730 km) of pipe for the potable system. As part of the potable system, there are 28 above-ground and below-ground storage tanks that range in volume from 0.75 million gallons (0.0028 million m3) to 16 million gallons (0.061 million m3) and have a total storage capacity of 131.75 million gallons (0.50 million m3). Much of the District’s infrastructure is below grade due to aesthetic considerations. |
| The most unusual aspect of the District system is the recycled (reclaimed) water network. There are 350 miles (563.2 km) of reclaimed water lines compared to 1,075 miles (1729.7 km) of potable network lines. Domestic water tanks sit side by side with reclaimed water tanks. The recycled water is used only for toilet flushing in a few high-rise buildings, for cooling towers, for landscape irrigation especially at golf courses and condominium complexes, for food crops, and by one carpet manufacturer. Recycled water for toilet flushing is not used in residences, only in businesses. Recycled water itself is tertiary treated wastewater. It meets all of the water quality standards for drinking water, but it is high in salt. Interestingly, in the summer the recycled water has a much lower retention time in the distribution system than the potable water because of greater demand for the recycled water for landscaping. However, when the demand for recycled water is less than the input from WWTPs, the recycled water is put in long-term storage. Indeed, one of the reasons dual systems were installed in high rises and other buildings was to make demand for recycled water more level throughout the year. There are no hydrants on the recycled system, so the reclaimed water is not used for fighting fires, and the pipe sizes in the recycled system are generally smaller than in the potable system. |
| Chloramine provides residual disinfection in the potable system but chlorine is used in the recycled system (as mandated by California regulations). The SCADA system, which consists of 6,000 sampling points, provides minute-by-minute monitoring of chlorine residuals in the recycled system. The potable system is required to meet all SDWA regulatory requirements such as the TCR, SWTR, D/DBPR, LCR, and source water monitoring on the imported water sources and the well water. Special purpose monitoring includes a nitrification action plan that requires tank sampling. For the recycled system, however, there are no specific monitoring objectives required by regulations because the NPDES permit has been met at the end of the WWTP. Internal requirements include bi-monthly sampling of conductivity, turbidity, color, pH, chlorine residual, total coliform, and fecal coliform and total suspended solids (at special locations). The water uses for the recycled water are very specific, and it is the goal of the utility to make sure the water is of an acceptable quality for those uses. Water costs are 64 cents per thousand gallons for domestic (potable) water and 59 cents per thousand gallons for recycled water. |
| As might be expected, Irvine Ranch has a very extensive cross-connection control (CCC) program. There are approximately 13,000 CCC devices in place throughout the system. The District conducts an annual cross-connection shut-down test for the recycled irrigation water, and only one cross connection has been found in the last 10 years. For backflow prevention, a reduced pressure principle assembly at the meter is used, as required by the state of California. Additional devices are installed if found to be needed. SOURCE: Johannessen et al. (2005). |

***

Non-traditional options for drinking water provision present many unanswered questions but few case studies from which to gather information. The primary concerns include determining their economic feasibility and the existence of unknown costs, developing a plan for transition and implementation (which are expected to be very significant undertakings in existing communities), and maintenance of quality assurance and quality control in systems that would be potentially much more complicated than the current system. Furthermore, it is not clear how alternative distribution system designs will affect water security, an important consideration since September 11, 2001. The potential for cross connections or misuse of water supplies of lesser quality is greatly increased in dual distribution systems and decentralized treatment. Larger-scale questions involve potential social inequities and the extent to which nontraditional approaches will transfer costs to the consumer. These issues will have to be considered carefully in communities that decide to adopt these new designs for water provision.

The previous discussion raises a number of research issues, some of which are already noted. With regard to the influence of fire fighting requirements, distribution systems are frequently designed to supply water to meet maximum day demand and fire flow requirements simultaneously. This affects minimum pipe diameters, minimum system pressures (under maximum day plus fire flow demand), fire hydrant spacing, valve placement, and water storage. Generally, agencies that set fire flow requirements are not concerned about water quality while drinking water utilities must be concerned about both quality and fire flow capacity. It will be important to better evaluate the effectiveness of alternative fire suppression technologies including automatic sprinkler systems in a wide range of building types, including residences. Such systems have rarely been evaluated for their positive and negative features with respect to water quality. Furthermore, if fire suppression technologies were improved, it might be possible to rely on smaller sized pipes in distribution systems, as is being tested in Europe (Snyder et al., 2002), rather than moving to dual distribution systems.

If alternatives such as satellite systems and dual systems are not used, continued efforts will be required to upgrade existing distribution systems and to treat water to acceptable levels of quality, so that quality does not deteriorate during distribution. The balance of this report is focused on traditional distribution system design, in which water originates from a centralized treatment plant or well and is then distributed through one pipe network to consumers. Nonetheless, many of the report recommendations are relevant even if an alternative distribution system design is used.

Water System Diversity

Water utilities in the United States vary greatly in size, ownership, and type of operation. The SDWA defines public water systems as consisting of community water supply systems; transient, non-community water supply systems; and non-transient, non-community water supply systems. A community water supply system serves year-round residents and ranges in size from those that serve as few as 25 people to those that serve several million. A transient, non-community water supply system serves areas such as campgrounds or gas stations where people do not remain for long periods of time. A non-transient, non-community water supply system serves primarily non-residential customers but must serve at least 25 of the same people for at least six months of the year (such as schools, hospitals, and factories that have their own water supply). There are 159,796 water systems in the United States that meet the federal definition of a public water system (EPA, 2005b). Thirty-three percent (52,838) of these systems are categorized as community water supply systems, 55 percent are categorized as transient, non-community water supplies, and 12 percent (19,375) are non-transient, non-community water systems (EPA, 2005b). Overall, public water systems serve 297 million residential and commercial customers. Although the vast majority (98 percent) of systems serve fewer than 10,000 people, almost three quarters of all Americans get their water from community water supplies serving more than 10,000 people (EPA, 2005b). Not all water supplies deliver water directly to consumers; some instead deliver water to other supplies. Community water supply systems are defined as “consecutive systems” if they receive their water from another community water supply through one or more interconnections (Fujiwara et al., 1995).
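The breakdown of public water systems by category can be checked with a few lines of arithmetic. The sketch below uses only the counts quoted above from EPA (2005b); the transient, non-community count is not stated in the text and is derived here by subtraction, on the assumption that the three SDWA categories are exhaustive.

```python
# Counts quoted in the text (EPA, 2005b).
TOTAL_SYSTEMS = 159_796
COMMUNITY = 52_838
NON_TRANSIENT_NON_COMMUNITY = 19_375

# Assumption: the three categories cover all public water systems, so the
# transient, non-community count is whatever remains after subtraction.
transient_non_community = TOTAL_SYSTEMS - COMMUNITY - NON_TRANSIENT_NON_COMMUNITY


def share_percent(count: int, total: int = TOTAL_SYSTEMS) -> float:
    """Return count as a percentage of total."""
    return 100.0 * count / total


shares = {
    "community": share_percent(COMMUNITY),
    "transient, non-community": share_percent(transient_non_community),
    "non-transient, non-community": share_percent(NON_TRANSIENT_NON_COMMUNITY),
}

for name, pct in shares.items():
    print(f"{name}: {pct:.1f} percent")
```

Under that assumption, the derived shares round to the 33, 55, and 12 percent reported in the text.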

Some utilities rely primarily on surface water supplies while others rely primarily on groundwater. Surface water is the primary source of 22 percent of the community water supply systems, while groundwater is used by 78 percent of community water supply systems. Of the non-community water supply systems (both transient and non-transient), 97 percent are served by groundwater. Many systems serve communities using multiple sources of supply such as a combination of groundwater and/or surface water sources. This is important because in a grid/looped system, the mixing of water from different sources can have a detrimental influence on water quality, including taste and odor, in the distribution system (Clark et al., 1988, 1991a,b).

Some utilities, like the one operating in New York City, own large areas of the watersheds from which their water source is derived, while other utilities depend on water pumped directly from major rivers like the Mississippi River or the Ohio River, and therefore own little if any watershed land. The SDWA was amended in 1986 and again in 1996 to emphasize source water protection in order to prevent microbial contaminants from entering drinking water supplies (Borst et al., 2001). Owning or controlling its watershed provides an opportunity for a drinking water utility to exercise increased control of its source water quality (Peckenham et al., 2005).

The water supply industry in the United States has a long history of local government control over operation and financial management, with varying degrees of oversight and regulation by state and federal government. Water supply systems serving cities and towns are generally administered by departments of municipalities or counties (public systems) or by investor owned companies (private systems). Public systems are predominately owned by local municipal governments, and they serve approximately 78 percent of the total population that uses community water supplies. Approximately 82 percent of urban water systems (those serving more than 50,000 persons) are publicly owned. There are about 33,000 privately owned water systems that serve the remaining 22 percent of people served by community water systems. Private systems are usually investor-owned in the larger population size categories but can include many small systems as part of one large organization. In the small- and medium-sized categories, the privately owned systems tend to be owned by homeowners associations or developers. Finally, there are several classifications of state chartered public corporations, quasi-governmental units, and municipally owned systems that operate differently than traditional public and private systems. These systems include special districts, independent non-political boards, and state chartered corporations.

Infrastructure Viability over the Long Term

The extent of water distribution pipes in the United States is estimated to be a total length of 980,000 miles (1.6 × 10⁶ km), which is being replaced at an estimated rate of once every 200 years (Grigg, 2005b). Rates of repair and rehabilitation have not been estimated. There is a large range in the type and age of the pipes that make up water distribution systems. The oldest cast iron pipes from the late 19th century are typically described as having an expected average useful lifespan of about 120 years because of the pipe wall thickness (AWWA, 2001; AWWSC, 2002). In the 1920s the manufacture of iron pipes changed to improve pipe strength, but the changes also produced a thinner wall. These pipes have an expected average life of about 100 years. Pipe manufacturing continued to evolve in the 1950s and 1960s with the introduction of ductile iron pipe that is stronger than cast iron and more resistant to corrosion. Polyvinyl chloride (PVC) pipes were introduced in the 1970s and high-density polyethylene in the 1990s. Both of these are very resistant to corrosion but they do not have the strength of ductile iron. Post-World War II pipes tend to have an expected average life of 75 years (AWWA, 2001; AWWSC, 2002).

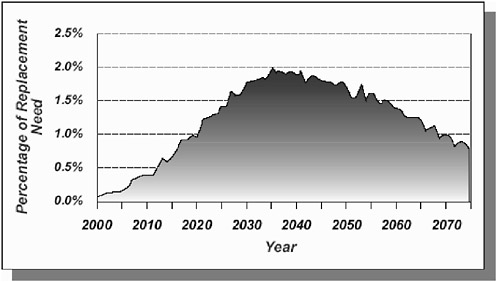

In the 20th century, most of the water systems and distribution pipes were relatively new and well within their expected lifespan. However, as is obvious from the above paragraph and recent reports (AWWA, 2001; AWWSC, 2002), these different types of pipes, installed during different time periods, will all be reaching the end of their expected life spans in the next 30 years. Indeed, an estimated 26 percent of the distribution pipe in the country is unlined and in poor condition. For example, an analysis of main breaks at one large Midwestern water utility that kept careful records of distribution system management documented a sharp increase in the annual number of main breaks from 1970 (approximately 250 breaks per year) to 1989 (approximately 2,200 breaks per year) (AWWSC, 2002). Thus, the water industry is entering an era where it must make substantial investments in pipe repair and replacement. As shown in Figure 1-6, an EPA report on water infrastructure needs (EPA, 2002c) predicted that transmission and distribution replacement rates will rise to 2.0 percent per year by 2040 in order to adequately maintain the water infrastructure, which is about four times the current replacement rate according to Grigg (2005b).
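The "four times" comparison follows from the figures cited in this section: replacing the network once every 200 years corresponds to 0.5 percent per year, against a projected 2.0 percent per year. The sketch below works through that arithmetic. It assumes, for illustration only, that the total pipe length stays at the 980,000 miles cited from Grigg (2005b); the mileage results are derived values, not figures reported by EPA (2002c).

```python
# Figures cited in this section.
TOTAL_PIPE_MILES = 980_000        # Grigg (2005b)
REPLACEMENT_CYCLE_YEARS = 200     # "once every 200 years" (Grigg, 2005b)
PROJECTED_RATE_2040 = 0.02        # 2.0 percent per year (EPA, 2002c)

# A full replacement every N years is an annual rate of 1/N.
current_rate = 1.0 / REPLACEMENT_CYCLE_YEARS

# Assumption for illustration: network length held constant at today's total.
current_miles_per_year = TOTAL_PIPE_MILES * current_rate
projected_miles_per_year = TOTAL_PIPE_MILES * PROJECTED_RATE_2040

rate_ratio = PROJECTED_RATE_2040 / current_rate

print(f"current rate:   {current_rate:.1%} per year (~{current_miles_per_year:,.0f} miles/year)")
print(f"projected rate: {PROJECTED_RATE_2040:.1%} per year (~{projected_miles_per_year:,.0f} miles/year)")
print(f"ratio:          {rate_ratio:.0f}x")
```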

These data on the aging of the nation’s infrastructure suggest that utilities will have to engage in regular and proactive infrastructure assessment and replacement in order to avoid a future characterized by more frequent failures, which might overwhelm the water industry’s capability to react effectively (Beecher, 2002). Although the public health significance of increasingly frequent pipe failures is unknown given the variability in utility response to such events, it is reasonable to assume that the likelihood of external distribution system contamination events will increase in parallel with infrastructure failure rates.

FIGURE 1-6 Projected annual replacement needs for transmission lines and distribution mains, 2000–2075. SOURCE: EPA (2002c).

DISTRIBUTION SYSTEM INTEGRITY

Many factors affect both the quantity and quality of water in distribution systems. As discussed in detail in Appendix A, events both internal and external to the distribution system can degrade water quality, leading to violation of water quality standards and possible public health risks. Corrosion and leaching of pipe materials, growth of biofilms and nitrifying microorganisms, and the formation of DBPs are events internal to the distribution system that are potentially detrimental. Furthermore, most are exacerbated by increased water age within the distribution system. External contamination can enter the distribution system through infrastructure breaks, leaks, and cross connections as a result of faulty construction, backflow, and pressure transients. Repair and replacement activities as well as permeable pipe materials also present routes for exposing the distribution system to external contamination. All of these events act to compromise the integrity of the distribution system.

For the purposes of this report, distribution system integrity is defined as having three basic components: (1) physical integrity, which refers to the maintenance of a physical barrier between the distribution system interior and the external environment, (2) hydraulic integrity, which refers to the maintenance of a desirable water flow, water pressure, and water age, taking both potable drinking water and fire flow provision into account, and (3) water quality integrity, which refers to the maintenance of finished water quality via prevention of internally derived contamination. This division is important because the three types of integrity have different causes of their loss, different consequences once they are lost, different methods for detecting and preventing a loss, and different remedies for regaining integrity. Factors important in maintaining the physical integrity of a distribution system include the maintenance of the distribution system components, such as the protection of pipes and joints against internal and external corrosion and the presence of devices to prevent cross-connections and backflow. Hydraulic integrity depends on, for example, proper system operation to minimize residence time and on preventing the encrustation and tuberculation of corrosion products and biofilms on the pipe walls that increase hydraulic roughness and decrease effective diameter. Maintaining water quality integrity in the face of internal contamination can involve control of nitrifying organisms and biofilms via changes in disinfection practices.

In addition to the distinctions mentioned above, there are also commonalities among the three types of integrity. All three are subject to system specificity, in that they are dependent on such site-specific factors as local water quality, types of materials present, area served, and population density. Furthermore, certain events involve the loss of more than one type of integrity; for example, backflow due to backsiphonage involves the loss of both hydraulic and physical integrity. Materials quality is important for both physical and water quality integrity. In order for a law or regulation to adequately address distribution system integrity (one of the options being considered during revision of the TCR), it must encompass physical, hydraulic, and water quality integrity.

IMPETUS FOR THE STUDY AND REPORT ROADMAP

Water supply systems have historically been designed for efficiency in water delivery to points of use, hydraulic reliability, and fire protection, while most regulatory mandates have been focused on enforcing water quality standards at the treatment plant. Ideally, there should be no change in the quality of treated water from the time it leaves the treatment plant until the time it is consumed, but in reality substantial changes may occur as a result of complex physical, chemical, and biological reactions. Distribution systems are the final barrier to the degradation of treated water quality, and maintaining the integrity of these systems is vital to ensuring that the water is safe for consumption.

The sections above have discussed the aging of the nation’s water infrastructure and the continuing contribution of distribution systems to public health risks from drinking water. For the last five years, EPA has engaged experts and stakeholders in a series of meetings on the topic of distribution systems, with the goal of defining the extent of the problem and considering how it can be addressed during revisions to the TCR. As part of this effort, EPA led the creation of nine white papers that summarized the state of the art of research and knowledge in the area of drinking water distribution systems:

- Cross-Connections and Backflow (EPA, 2002a)
- Intrusion of Contaminants from Pressure Transients (LeChevallier et al., 2002)
- Nitrification (AWWA and EES, Inc., 2002e)
- Permeation and Leaching (AWWA and EES, Inc., 2002a)
- Microbial Growth and Biofilms (EPA, 2002b)
- New or Repaired Water Mains (AWWA and EES, Inc., 2002e)
- Finished Water Storage (AWWA and EES, Inc., 2002c)
- Water Age (AWWA and EES, Inc., 2002b)
- Deteriorating Buried Infrastructure (AWWSC, 2002)

Additional activities are ongoing, including consideration of a revision of the TCR to provide a more comprehensive approach for addressing the integrity of the distribution system. To assist in this process, EPA requested that the National Academies’ Water Science and Technology Board conduct a study of water quality issues associated with public water supply distribution systems and their potential risks to consumers. An expert committee was formed in October 2004 with the following statement of task:

1. Identify trends relevant to the deterioration of drinking water in water supply distribution systems, as background and based on available information.

2. Identify and prioritize issues of greatest concern for distribution systems based on review of published material.

3. Focusing on the highest priority issues as revealed by task #2, (a) evaluate different approaches for characterization of public health risks posed by water quality deteriorating events or conditions that may occur in public water supply distribution systems; and (b) identify and evaluate the effectiveness of relevant existing codes and regulations and identify general actions, strategies, performance measures, and policies that could be considered by water utilities and other stakeholders to reduce the risks posed by water-quality deteriorating events or conditions. Case studies, either at state or utility level, where distribution system control programs (e.g., Hazard Analysis and Critical Control Point System, cross connection control, etc.) have been successfully designed and implemented will be identified and recommendations will be presented in their context.

4. Identify advances in detection, monitoring and modeling, analytical methods, information needs and technologies, research and development opportunities, and communication strategies that will enable the water supply industry and other stakeholders to further reduce risks associated with public water supply distribution systems.

The NRC committee addressed tasks one and two in its first report (NRC, 2005), which is included as Appendix A to this report. The following trends were identified as relevant to the deterioration of water quality in distribution systems:

- The aging distribution system infrastructure, including increasing numbers of main breaks and pipe replacement.
- Decreasing numbers of waterborne outbreaks reported per year since 1982, but an increasing percentage attributable to distribution system issues.
- Increasing host susceptibility to infection and disease in the U.S. population.
- Increasing use of bottled water and point-of-use treatment devices.

It was recommended in NRC (2005) that EPA consider these trends as it revises the TCR to encompass distribution system integrity. The committee was made aware of another important trend subsequent to the release of NRC (2005): population shifts and how they have affected water demand. Older industrial cities in the Northeast and Midwest United States no longer have industries that use high volumes of water, and they have also experienced major population shifts from the inner city to the suburbs. As a consequence, the utilities have an overcapacity to produce water, mainly in the form of oversized mains, at central locations, while needing to provide water to suburbs at greater distances from the treatment plant. Both factors can contribute to problems associated with high water residence times in the distribution system.
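The link between oversized mains, reduced demand, and residence time can be shown with a simple plug-flow estimate, in which residence time is the pipe volume divided by the flow through it. The sketch below rests on that simplifying assumption (no mixing, storage, or branching), and the main dimensions and flows are hypothetical values, not data from this report.

```python
import math


def residence_time_hours(diameter_m, length_m, flow_m3_per_h):
    """Plug-flow residence time (hours) = pipe volume / volumetric flow."""
    volume_m3 = math.pi * diameter_m ** 2 / 4.0 * length_m
    return volume_m3 / flow_m3_per_h


# Hypothetical 600 mm main, 5 km long, sized for past industrial demand.
design = residence_time_hours(0.6, 5000.0, 400.0)

# Same main after demand falls to one quarter of the design flow (assumed).
current = residence_time_hours(0.6, 5000.0, 100.0)

print(f"design: {design:.1f} h, current: {current:.1f} h")
```

Because the pipe volume is fixed, residence time scales inversely with flow: in this simplified picture, a fourfold drop in demand gives a fourfold increase in the time water spends in the main. Longer transmission distances to the suburbs add further volume, and therefore further time.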

As part of its second task, the NRC committee prioritized the issues that are the subject of the nine EPA white papers, and it identified several significant issues that were overlooked in previous reports. The highest priority issues were those that have a recognized health risk based on clear epidemiological and surveillance data. These include cross connections and backflow; contamination during installation, rehabilitation, and repair of water mains and appurtenances; improperly maintained and operated storage facilities; and control of water quality in premise plumbing.

This report focuses on the committee’s third and fourth tasks and makes recommendations to EPA regarding new directions and priorities to consider. All of the issues discussed in NRC (2005) are presented here, but considerably more information is presented on the higher priority issues when recommending detection, mitigation, and remediation strategies for distribution systems. The report is intended to inform decision makers within EPA, public water utilities, other government agencies and the private sector about potential options for managing distribution systems.

It should be pointed out that this report is premised on the assumption that water entering the distribution system has undergone adequate treatment. [As recognized in the SDWA, adequate treatment is a function of the quality of source water. For example, some lower quality source waters may require filtration to achieve a product entering the distribution system that is of the same quality (and hence poses the same risk) as a cleaner source water that was treated only with disinfection.] There is not, therefore, an in-depth discussion of drinking water treatment in the report except where it is pertinent to mitigating the risks of degraded water quality in the distribution system. For example, if the lack of disinfectant residual in the distribution system is identified as a risk, the options for mitigating that risk must first consider whether the root cause is inadequate treatment (e.g., insufficient reduction in disinfectant demand), or causes attributable to the distribution system (e.g., excessive water age in storage facilities). It should also be noted that deliberate acts of distribution system contamination are not considered, at the request of the study sponsor.

Chapter 2 reviews the legal and regulatory environment in which distribution systems are designed, operated, and monitored, including federal, state, and local regulations. The limitations and possibilities associated with non-regulatory approaches are also mentioned. Chapter 3 presents the three primary approaches for assessing the public health risk of contaminated distribution systems, focusing on short-term acute risks from microbial pathogens.

Chapters 4, 5, and 6 consider the physical, hydraulic, and water quality integrity of distribution systems, respectively. For each type of integrity, the chapters consider what causes its loss, the consequences if it is lost, and how to detect a loss and how to maintain and recover that integrity. In most cases, the events that compromise distribution system integrity are discussed only once, in the earliest chapter to which they are relevant. Many of the common themes from these chapters are brought together in Chapter 7, which presents a holistic framework for distribution system management, highlighting those activities felt to be of greatest importance to reducing public health risks. Areas where emerging science and technology can play a role are discussed, including real-time, on-line monitoring and modeling. The report concludes in Chapter 8 by considering the importance of premise plumbing to overall water quality at the tap, the need for additional monitoring of premise plumbing, and the need for greater involvement by regulatory agencies in exercising authority over premise plumbing. Premise plumbing is an issue not generally considered to be the responsibility of drinking water utilities, but there is growing interest, from a public health protection standpoint, in the role of premise plumbing in contributing to water quality degradation.

REFERENCES

American Water Works Association (AWWA). 1974. Water distribution research and applied development needs. J. Amer. Water Works Assoc. 6:385–390.

AWWA. 1986. Introduction to Water Distribution Principles and Practices of Water Supply Operations. Denver, CO: AWWA.

AWWA. 1998. AWWA Manual M31: Distribution system requirements for fire protection. Denver, CO: AWWA.

AWWA. 2001. Reinvesting in Drinking Water Structure: Dawn of the Replacement Era. Denver, CO: AWWA.

AWWA. 2003. Water Stats 2002 Distribution Survey CD-ROM. Denver, CO: AWWA.

AWWA and EES, Inc. 2002a. Permeation and leaching. Available on-line at http://www.epa.gov/safewater/tcr/pdf/permleach.pdf. Accessed May 4, 2006.

AWWA and EES, Inc. 2002b. Effects of water age on distribution system water quality. Available on-line at http://www.epa.gov/safewater/tcr/pdf/waterage.pdf. Accessed May 4, 2006.

AWWA and EES, Inc. 2002c. Finished water storage facilities. Available on-line at http://www.epa.gov/safewater/tcr/pdf/storage.pdf. Accessed May 4, 2006.

AWWA and EES, Inc. 2002e. New or repaired water mains. Available on-line at http://www.epa.gov/safewater/tcr/pdf/maincontam.pdf. Accessed May 4, 2006.

American Water Works Service Co., Inc. (AWWSC). 2002. Deteriorating buried infrastructure management challenges and strategies. Available on-line at http://www.epa.gov/safewater/tcr/pdf/infrastructure.pdf. Accessed May 4, 2006.

Baker, M. H. 1948. The quest for pure water. The American Water Works Association. Lancaster, PA: Lancaster Press.

Beecher, J. A. 2002. The infrastructure gap: myth, reality, and strategies. In: Assessing the Future: Water Utility Infrastructure Management. D. M. Hughes (ed.). Denver, CO: AWWA.

Berger, P. S., R. M. Clark, and D. J. Reasoner. 2000. Water, Drinking. In: Encyclopedia of Microbiology 4:898–912.

Blackburn, B. G., G. F. Craun, J. S. Yoder, V. Hill, R. L. Calderon, N. Chen, S. H. Lee, D. A. Levy, and M. J. Beach. 2004. Surveillance for waterborne-disease outbreaks associated with drinking water—United States, 2001–2002. MMWR 53(SS-8):23–45.

Borst, M., M. Krudner, L. O’Shea, J. M. Perdek, D. Reasoner, and M. D. Royer. 2001. Source water protection: its role in controlling disinfection by-products (DBPs) and microbial contaminants. In: Controlling Disinfection By-Products and Microbial Contaminants in Drinking Water. R. M. Clark and B. K. Boutin (eds.). EPA/600/R-01/110. Washington, DC: EPA Office of Research and Development.

Brandt, M., J. Clement, J. Powell, R. Casey, D. Holt, N. Harris, and C. T. Ta. 2004. Managing Distribution Retention Time to Improve Water Quality-Phase I. Denver, CO: AwwaRF.

Clark, R. M. 1978. The Safe Drinking Water Act: implications for planning. Pp. 117–137 In: Municipal Water Systems—The Challenge for Urban Resources Management. D. Holtz and S. Sebastian (eds.). Bloomington, IN: Indiana University Press.

Clark, R. M., and D. L. Tippen. 1990. Water Supply. Pp. 5.173–5.220 In: Standard Handbook of Environmental Engineering. R. A. Corbitt (ed.). New York: McGraw-Hill Publishing Company.

Clark, R. M., and W. M. Grayman. 1992. Distribution system quality: a trade-off between public health and public safety. J. Amer. Water Works Assoc. 84(7):18.

Clark, R. M., and W. M. Grayman. 1998. Modeling water quality in drinking water distribution systems. Denver, CO: AWWA.

Clark, R. M., E. E. Geldreich, K. R. Fox, E. W. Rice, C. H. Johnson, J. A. Goodrich, J. A. Barnick, and F. Abdesaken. 1996. Tracking a Salmonella Serovar Typhimurium Outbreak in Gideon, Missouri: role of contaminant propagation modeling. Journal of Water Supply Research and Technology–Aqua 45(4):171–183.

Clark, R. M., J. A. Goodrich, and J. C. Ireland. 1984. Cost and benefit of drinking water treatment. Journal of Environmental Systems 14(1):1–30.