3

Rationale for and Overview of the L.E.A.D. Framework

KEY MESSAGES

Ideally there would be a firm and comprehensive evidence base to inform the myriad decision makers whose actions, intentionally or unintentionally, influence the social, policy, and environmental determinants of excess weight gain and the resulting overweight/obesity. In reality, however, the concept of an evidence base as applied to decision making about treatments for established obesity or other clinical problems does not apply to many of the decisions that must be made to prevent obesity. The current research literature lacks the power to set a clear direction for obesity prevention interventions across a range of target populations. Thus, although the concept of evidence-based obesity prevention is likely to resonate with many health professionals, policy makers, and stakeholders, it is challenging to identify data that can address the different types of questions decision makers might need to answer. A range of evidence-based intervention approaches has been developed for such public health issues as tobacco control and immunization. However, there is often a mismatch between the types of evidence needed to answer public health questions and the types of evidence being generated and published.

With respect to obesity prevention, research into the causal factors driving dietary or energy intake and physical activity levels spans a broad range of designs and evidence, including randomized controlled (experimental) trials and quasi-experimental studies at the individual, organizational, and community levels. Although a similarly broad array of research approaches is potentially applicable to environmental and policy strategies to reduce obesity risk through improved diet and reduced caloric intake and/or increased physical activity, the basic methodologies require different conceptualizations, implementation, and emphases for obesity prevention research, particularly to inform interventions designed to change policies or environmental contexts for eating and physical activity. Although the literature reports some positive findings with respect to strategies that work for obesity prevention, there are many gaps in the evidence. This chapter describes those gaps and reviews concepts from evidence-based public health and public policy that can provide the fundamentals for using evidence to address complex population health problems such as obesity prevention. The focus is on the evidence needs most likely to impede progress in obesity prevention and those most important to address in developing an evidence framework to inform decisions about obesity prevention interventions.

STATUS OF THE EVIDENCE BASE

The contrast between the high prevalence of obesity, and hence the importance of addressing it, and the paucity of the knowledge base with which to inform prevention efforts is striking. This evidence gap creates tension between the sense of urgency to take action and the lack of specificity about what actions to undertake. The tension is especially acute when decision makers cannot wait for a body of literature to be created and critically reviewed.

The evidence for obesity prevention is ideally accumulated from a variety of sources to provide insight into a particular topic, often combining quantitative and qualitative data. To this end, systematic reviews of the intervention literature apply strategies designed to limit bias in the assembly, critical appraisal, and synthesis of all relevant studies on a specific topic (Cook et al., 1995). As noted in Chapter 1, the challenges in evaluating and assembling evidence related to obesity prevention have been highlighted in previous Institute of Medicine (IOM) reports (IOM, 2005, 2007).

To obtain an overview of the status of the existing relevant evidence base, the committee examined studies published over the past 13 years to identify reviews focused on obesity prevention. This examination was not meant to be exhaustive, but to illustrate the scope of existing appraisals and the criteria used to determine what studies did or did not qualify for inclusion. Nearly 50 such reviews, hereafter referred to as “appraisals,” were identified (see Appendix C). Approximately half of these appraisals consider literature published as early as 1980, and several include literature from as early as 1966 and/or the inception of the search databases used. The appraisals consist of meta-analyses, systematic reviews, integrative reviews, a review of reviews, evidence syntheses, best practice summaries, and task force recommendations. Nearly one-third of the appraisals were published in journals specific to obesity; the other two-thirds appeared in journals focused on medicine, preventive medicine, public health, health promotion, family and community health, health education, nutrition, nursing, epidemiology, endocrinology and metabolism, and psychology. Almost three-quarters of the appraisals had been published since 2005, indicating the increased interest in the topic. This examination of the literature revealed the challenges involved in applying traditional evidence hierarchies to population-based prevention efforts, as described below.

Quantity of Available Evidence

In the appraisals examined, the number of studies found eligible for inclusion ranges from 3 to 158, a small percentage of the number of studies initially identified for potential further analysis, which ranged from 12 to 13,158. However, while all of the appraisals report the number of studies found eligible for inclusion, some do not report the number of studies initially identified. The range of studies included reveals a clear discrepancy between what authors of specific studies might think of as obesity prevention research and what authors of reviews consider to be part of the evidence base. There is considerable overlap in studies included in these appraisals; however, conclusions vary as to what is effective. Furthermore, most studies included in the appraisals lack detail about intervention processes and process evaluation. Several of the appraisals explicitly address what types of evidence should be considered relevant, whether restricting evidence to randomized controlled trials (RCTs) with rigorous inclusion and exclusion criteria sacrifices comprehensiveness, and whether literature searches should extend beyond well-known databases to include monographs or reports not published in peer-reviewed journals.

Lack of Conceptual Frameworks

The lack of a conceptual framework specifically related to evidence selection has resulted in the majority of appraisals failing to draw conclusions about the most effective components of interventions. Many of the appraisals have a narrow focus (e.g., schools, local environments) and fail to recognize the larger systems context in which the interventions were implemented. This lack of focus on contextual considerations (such as feasibility [including legal and political aspects], sustainability, effects on equity, potential side effects, acceptability to stakeholders, and cost-effectiveness) and other dimensions of generalizability (such as the intervention’s reach and the representativeness of participants, implementation and adaptation of the intervention, and its maintenance and institutionalization) limits the usefulness of the appraisals for informing decision making in diverse settings. Furthermore, the challenges that arise when interventions are undertaken in the real world can render the conclusions drawn negative or obsolete. In fact, many appraisals exclude comprehensive multilevel, multisector interventions (the most promising type of interventions for addressing obesity, as discussed in Chapter 2) because the specific effects on individuals cannot be disaggregated and the results are considered “messy.” When such interventions target multiple behaviors simultaneously, it is often difficult to disentangle their impacts. Although such interventions yield some positive results, the many gaps in the evidence suggest that obesity prevention lacks the vast array of effective interventions available for many other public health issues (e.g., tobacco control, immunization). While efforts are under way to fill these gaps, the gravity of the obesity epidemic calls for immediate action, suggesting that, as noted in an earlier IOM report, decision makers should rely on the best available evidence instead of waiting for the best possible evidence (IOM, 2005).

Choice of Outcomes

The choice of outcomes on which to focus affects conclusions about the effectiveness of interventions across this set of appraisals. The outcome measures for selecting which individual studies to include in the appraisals were similar, and in most cases were cause for excluding a large percentage of studies initially identified for consideration. As stated in Chapter 2, the ultimate objectives of obesity prevention efforts are lowering the mean BMI level and decreasing the rate at which people enter the upper end of the BMI distribution. Accordingly, most individual-level studies focus on distal measures of weight status (e.g., percent overweight or obese, BMI or BMI z-score, body fat percentage), risk factors, or disease presence rather than the more proximal psychosocial and behavioral outcomes and the pathways that can alter the conditions in which eating, physical activity, and weight control occur (see Figure 2-2 in Chapter 2). Moreover, the period of follow-up in many of the studies included in the appraisals may not have been long enough to detect significant, sustainable changes in weight status. Indeed, the comprehensive changes called for to combat the obesity epidemic (changes in the physical environment, social norms, cultural practices, and policy) often require years to accomplish and are not always tracked or funded at adequate levels for sustained periods. In the appraisals that specify duration of study or length of follow-up as an inclusion criterion, the vast majority required a period of 6–12 months. Thus, many of the appraisals examined report null or negative findings, which limits the ability to justify scaling up of promising interventions. Yet while the ultimate biological outcome (changes in weight status) may not have been achieved, intermediate outcomes (structural, institutional, systemic, social, and environmental changes in society or the community, as shown in Figure 2-2) that influence the cognitive, social, and behavioral outcomes among individuals may have been achieved. These intermediate outcomes have the potential to lead to biological outcomes if the interventions are sustained.

Specifically for evidence-based obesity prevention efforts, a body of intervention research on policy and environmental approaches is largely absent from the literature. Some recent studies examine policy- and environmental-level strategies with the potential to reduce obesity. These studies consider how changes in the food and physical activity environments influence eating and activity behaviors. The criteria applied to rate these efforts include reach, mutability, transferability, effect size, and sustainability of health impact, which may be useful in informing scalability.

FUNDAMENTAL EVIDENCE CONCEPTS

The Perspective from Evidence-Based Medicine

The movement toward evidence-based practice in various public service arenas, including obesity prevention, has been significantly influenced by earlier trends in medicine and published guidelines for evidence standards in evidence-based medicine. For example, the Cochrane Collaboration, an international, independent nonprofit organization dedicated to making current, accurate information about the effects of health care available, assembles, maintains, and disseminates systematically collected and reviewed information on health care interventions (The Cochrane Collaboration, 2009). Its major product is the Cochrane Database of Systematic Reviews (published along with several other databases in the Cochrane Library), which focuses solely on synthesizing research-based evidence on the effectiveness of various treatments in specific circumstances. The Cochrane Library is intended to serve as an electronic resource and reference guide for physicians who make individual patient care decisions. It should be noted that the Cochrane Collaboration has broadened its original scope and now includes the Cochrane Public Health Review Group, which produces and publishes Cochrane reviews of the effects of population-level public health interventions.

The criteria these databases use to select studies from the medical literature for synthesis focus heavily on methodological standards. The level of certainty (internal validity) is a paramount criterion in screening for high-quality evidence. RCTs, which randomly assign subjects to conditions, often in a double-blinded design, are viewed as one of the best means of maximizing the level of certainty of the causal relationship being tested, affording the tightest possible controls for testing the effectiveness of a treatment and providing the strongest basis for drawing causal inferences about interventions (West et al., 2000).

The evidence selection criteria of evidence-based medicine have led to a widely disseminated evidence hierarchy that has been adopted by evidence-based movements in nursing, psychology, social work, education, and public health. The hierarchy characterizes the quality of evidence for decision making at three levels. Systematic reviews of RCTs and individual RCT studies of particular therapies are at the top of this hierarchy, serving as the “gold standard”; next are observational studies and systematic reviews of such studies; and at the lowest level are physiologic studies and unsystematic clinical observations (Guyatt and Rennie, 2002). Other research designs and evidence sources, such as surveys, quasi-experimental designs, and qualitative research methods, are sometimes excluded from the evidence hierarchy of evidence-based medicine.
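To make the three-level hierarchy concrete, it can be encoded as a simple lookup table and used to screen a pool of studies. This is a minimal, hypothetical sketch: the design labels, the numeric levels (1 = strongest), and the choice to treat surveys, quasi-experimental designs, and qualitative or case-study research as unranked (returning `None`) are assumptions made for illustration, since the text says only that such designs are sometimes excluded. The sketch shows how screening on the hierarchy alone drops designs that may be the main sources of evidence for policy and environmental strategies.

```python
from typing import Optional

# Hypothetical encoding of the three-level evidence hierarchy described by
# Guyatt and Rennie (2002); level 1 is the strongest evidence.
# Labels are illustrative strings, not a standard vocabulary.
EBM_HIERARCHY: dict[str, int] = {
    "systematic review of RCTs": 1,
    "randomized controlled trial": 1,
    "systematic review of observational studies": 2,
    "observational study": 2,
    "physiologic study": 3,
    "unsystematic clinical observation": 3,
}


def ebm_level(design: str) -> Optional[int]:
    """Return the hierarchy level for a study design, or None if the
    hierarchy does not rank it (assumed here for surveys,
    quasi-experimental designs, qualitative research, case studies)."""
    return EBM_HIERARCHY.get(design)


def rank_studies(designs: list[str]) -> list[str]:
    """Drop designs the hierarchy does not rank, then order the rest
    from strongest (level 1) to weakest (level 3); ties keep input order."""
    ranked = [d for d in designs if ebm_level(d) is not None]
    return sorted(ranked, key=lambda d: EBM_HIERARCHY[d])


if __name__ == "__main__":
    pool = [
        "case study",
        "observational study",
        "quasi-experimental design",
        "randomized controlled trial",
    ]
    print(rank_studies(pool))
    # ['randomized controlled trial', 'observational study']
```

In this example, half of the pool (the case study and the quasi-experimental design) never reaches synthesis, which mirrors the concern that narrow inclusion criteria shrink the pool of usable evidence.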

Leading authors in evidence-based medicine have acknowledged that the evidence hierarchy applies better to medical therapies than to clinical decisions that lie outside the therapy domain (Guyatt and Rennie, 2002). For example, questions of diagnosis, prognosis, or the harmful side effects of a therapy (where an “intervention” cannot be randomly assigned or manipulated) call for alternative study designs and evidence selection criteria.

Evidence-Based Decision Making in Public Health

Many of the approaches discussed in this report fall within the broad category of evidence-based public health (EBPH), which has been defined as “the process of integrating science-based interventions with community preferences to improve the health of populations” (Kohatsu et al., 2004, p. 419). Formal discourse on the nature and scope of EBPH originated in the 1990s. In 1997, Jenicek first defined the concept (Jenicek, 1997). In 1999, scholars and practitioners in Australia (Glasziou and Longbottom, 1999) and the United States (Brownson et al., 1999) elaborated further on EBPH. Glasziou and Longbottom (1999) posed a series of questions to enhance its uptake (e.g., “Does this intervention help alleviate this problem?”) and identified 14 sources of high-quality evidence. Brownson and colleagues (1999, 2003) described a six-stage process by which practitioners can take a more evidence-based approach to decision making. In 2004, Rychetnik and colleagues developed a glossary summarizing many key aspects of EBPH (Rychetnik et al., 2004).

Following principles of EBPH when addressing obesity prevention has numerous direct and indirect benefits, including access to more and higher-quality information on what works, a greater likelihood of implementing successful programs and policies, and more efficient use of public and private resources (Brownson et al., 2003, 2009a; Cavill et al., 2006; Hausman, 2002; Kohatsu and Melton, 2000). As these principles have been formalized, two parallel developments have occurred: recognition that science needs to play a key role in the development of public health programs and policies, and the instant availability of greater amounts of information (Anderson et al., 2005). The principles of evidence-based decision making apply not only to medicine and public health but also to numerous other disciplines and approaches, including social work and public policy (the latter being referred to as evidence-based public policy [EBPP]) (Brownson et al., 2009b; Choi, 2005; Dobrow et al., 2004; Kerner, 2008; Satterfield et al., 2009).

Both EBPH and EBPP appear to be informed by a consensus that a combination of scientific evidence and values, resources, and context should enter into decision making (Brownson et al., 2003; Muir Gray, 2009; Rychetnik et al., 2004; Satterfield et al., 2009). In EBPP, three key domains have been described: (1) process, which involves an attempt to understand approaches to enhancing the likelihood of policy adoption (e.g., a strong coalition would enhance the likelihood of advancing an agenda for obesity prevention); (2) content, which involves identifying specific policy elements that are likely to be effective (e.g., obesity policy should be grounded in the latest science); and (3) outcomes, which involves documenting the potential impact of a policy (e.g., a system should be in place to track the effects of new obesity prevention policies) (Brownson et al., 2009b). While the use of research-derived evidence may be a key feature of most policy models, scientific evidence will not necessarily carry as much weight as other types of evidence in real-world policy-making settings. Policy makers operate according to a different hierarchy of evidence than scientists (Choi et al., 2005), leaving the two groups to work in “parallel universes” (Brownson et al., 2006). In interviews with decision makers (including state legislators and public health agency officials), many reported that they were not trained to distinguish between good and bad data and were therefore prone to the influence of misused “facts” often presented by interest groups (Jewell and Bero, 2008).

Types of Questions That Need to Be Answered

Several types of questions have been identified in the field of EBPH that are important to consider in relation to different aspects of obesity prevention (Brownson et al., 2009b). Type 1 questions address the causes of obesity-related diseases and the magnitude, severity, and preventability of obesity-related risk factors. They suggest that “something should be done” about the obesity epidemic. These questions (whether, where, when) might include such issues as the burden of disease, etiologic relationships, time trends, and high-risk populations. Type 2 questions address the relative impact of specific interventions that do or do not improve health, adding “specifically, this should be done.” For example, Type 2 questions (what works, in what settings, with what outcomes, and at what costs) might include such issues as where interventions are effective, what outcomes are observed, and what an intervention costs. Type 3 questions address how and in what context interventions are implemented and how they are received, thus informing “how something should be done.” These questions might address how interventions may need to be adapted, what training is needed for staff, or how to sustain effects over time.

Two terms relevant to evidence quality and standards in evidence-based medicine or public health are key to the discussion here and throughout this report: internal validity (the level of certainty of the causal relationship between an intervention and the observed outcomes), hereafter referred to as “level of certainty,” and external validity (the extent to which research results can be generalized to other populations, including individuals, settings, contexts, and time frames, which speaks to issues of the transferability and application of findings to real-world settings), hereafter referred to as “generalizability.” (See Appendix A for formal definitions.)

In relation to Type 2 and perhaps Type 3 questions, studies to date have tended to emphasize the level of certainty of the causal relationship between an intervention and the observed outcome (e.g., well-controlled efficacy trials) while giving limited attention to the intervention’s generalizability (e.g., the translation of science to the various circumstances of practice) (Glasgow, 2008; Glasgow et al., 2006; Green and Glasgow, 2006; Klesges et al., 2008). For example, Klesges and colleagues (2008) reviewed 19 childhood obesity studies to assess the extent to which dimensions of external validity were reported. Their work reveals that some key contextual variables (e.g., cost, program sustainability) are missing entirely in the peer-reviewed literature on obesity prevention. Because generalizability is deemphasized or compromised in study selection and evidence screening procedures based on the hierarchical framework of evidence-based medicine, concern has been raised that applying that hierarchy’s narrow inclusion criteria results in a limited pool of sources for subsequent evidence synthesis (Dixon-Woods et al., 2001). Further, it is increasingly acknowledged that the traditional hierarchy works less well in public health and health care arenas involving large-scale, complex social interventions than in the arena of medical therapies. Evidence standards for community-based public health problems call for taking a broader perspective to locate useful forms of evidence. The types of evidence that are available for assessing both level of certainty and generalizability were a key consideration when the committee developed the framework proposed in this report.

Approaches to Evaluating Evidence

While the highest-quality source of evidence is generally deemed to be the RCT, much of the evidence available for environmental and policy strategies to reduce obesity risk is not derived from such trials. For example, a randomized design is seldom useful in policy research because the scientist cannot randomly assign exposure (the policy), and understanding of the policy process is often gained from qualitative methods (e.g., case studies). In addition, reductionist approaches for inferring causality (common in medicine) tend to reduce a problem to a single cause (Davidovitch and Filc, 2006), whereas obesity is likely to be influenced by a complex web of causation (Hill and Peters, 1998; Kumanyika and Brownson, 2007; Kumanyika et al., 2002).

Attention to Contextual Issues That Influence Interventions

While numerous authors have written about the role of context in informing evidence-based practice and policy, there is little consensus on its definition. When moving from clinical interventions to population-level and policy interventions, context becomes more uncertain, variable, and complex (Dobrow et al., 2004). One useful definition of context highlights information needed to adapt and implement an evidence-based intervention in a particular setting or population (Rychetnik et al., 2004).

The context for questions on how to implement an obesity-related intervention involves five overlapping domains. The first is the individual characteristics of the target population, such as level of education. Second, interpersonal variables provide important context; for example, a person who comes from a family that exercises together may have a lower risk of developing obesity. Third, organizational variables should be considered, such as how an agency’s capacity (e.g., a trained workforce, agency leadership) may influence its success in carrying out an evidence-based obesity prevention program (Dreisinger et al., 2008; Hausman, 2002). Fourth, social norms and culture are known to shape many health behaviors. Finally, larger political and economic forces affect context; for example, a high rate of obesity may influence a state’s political will to address the issue in a meaningful and systematic way.

Particularly for high-risk and understudied populations, there is a pressing need for evidence on obesity prevention in relation to contextual variables and ways of adapting programs and policies across settings and population subgroups. Contextual issues are being addressed more fully in the new “realist review” approach—a systematic review process that involves examining not only whether an intervention works but also how it works in real-world settings (Pawson et al., 2005). This enhanced understanding of context is a key theme of a systems approach (see Chapter 4). Well-reasoned recommendations have emerged from many quarters to replace the hierarchy of evidence-based medicine with a systems approach to garnering, analyzing, and appraising evidence when addressing complex public health problems such as obesity prevention (Briss et al., 2000; Brownson et al., 2003; Green and Glasgow, 2006; Harris, 2000; McKinlay, 1992; Petticrew and Roberts, 2003; Rychetnik et al., 2004; Swinburn et al., 2005). There are several reasons for this shift.

First, unlike medical therapies focused on individual patient care, obesity prevention interventions are typically mounted on a relatively large scale and focus on health issues affecting communities at large (at the regional, national, or even global level). Causal pathways to population outcomes do not involve the straightforward, patient-level treatment-to-outcome linkages typically tested in trials of medical therapies.

Second, obesity prevention interventions frequently comprise multiple components operating at different levels of society. In a typical example, a legislative action at the highest level (e.g., a state action on mandatory nutrition labeling) may be combined with health campaigns and education programs administered at the community level (e.g., a county or district program delivered via local agencies such as schools, clinics, and hospitals), which are then expected to affect factors at the household/individual level (e.g., lifestyle choices and eating behaviors). Different intervention components at different levels are mediated by social and human factors operating in their natural settings. All such factors call for appropriate observation, measurement, and modeling, which traditional experimental and quasi-experimental designs tend to constrain. Complex interventions are often forced into simplistic experimental designs and accompanying analytic models that treat the intervention as a single manipulated factor (similar to a medical therapy).

Third, while the level of certainty (or internal validity) of research designs continues to be salient, external validity increases greatly in importance when one is identifying and appraising research evidence on public health issues such as obesity. Effective public health interventions need to have generalizability, transferability, and sustainability beyond small-scale research studies (Green and Glasgow, 2006).

Finally, not all questions that arise in taking social action are best answered by cause–effect designs or even research-based evidence. While the issue of whether a particular treatment works to reduce obesity may be answered with experimental designs, questions such as what causative and protective factors could potentially be targeted by interventions call for different approaches and research methods. Correspondingly, different criteria must be applied to build the needed evidence base. In short, the type of evidence must be matched to the policy or practice objective at hand. Potential alternative research designs to the RCT are described in Appendix E.

Transparency in Decision Making

Transparency implies openness in decision making, effective communication, and accountability. Making public health decisions as transparent as possible is likely to foster public trust, better risk communication, and financial accountability (Honore et al., 2007; Laforest and Orsini, 2005; McGloin et al., 2009). Few frameworks for evidence-based decision making have made transparency a central theme; during its deliberations, the committee decided to make transparency a goal of its framework. Transparency in summarizing and communicating the evidence used in making a decision is discussed in Chapter 7.

Approaches to Measurement

Measurement of progress in preventing obesity can take several different forms. The recommended indicator of obesity for adults and children is BMI, calculated as weight in kilograms divided by the square of height in meters (kg/m²). Beyond measurement at the individual level, consideration of how to measure the effects of environmental and policy changes is a relatively new aspect of obesity research, and appropriate measures and evaluation designs are still being developed. Chapter 8 and Appendix E provide more detailed discussion of methods for measuring effects and generating evidence that are applicable to decision making on obesity prevention and other public health issues.
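The BMI definition is simple arithmetic. A minimal Python sketch, assuming weight is supplied in kilograms and height in meters (the function name `bmi` is chosen for illustration and is not taken from any particular library), is:

```python
def bmi(weight_kg: float, height_m: float) -> float:
    """Body mass index: weight (kg) divided by the square of height (m), in kg/m^2."""
    if weight_kg <= 0 or height_m <= 0:
        raise ValueError("weight and height must be positive")
    return weight_kg / height_m ** 2


if __name__ == "__main__":
    # 70 kg and 1.75 m: 70 / 1.75**2 = 70 / 3.0625 = 22.857...
    print(round(bmi(70, 1.75), 1))  # 22.9
```

This computes only the raw index. For children, weight status is usually judged by comparing BMI against age- and sex-specific reference data (for example, as a BMI z-score), which this sketch does not attempt.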

For decisions on environmental and policy interventions, both quantitative data (e.g., epidemiologic) and qualitative information (e.g., analysis of the content of policy, narrative accounts of the policy process) are often important. Quantitative evidence (i.e., data in numerical quantities) can take many forms, ranging from scientific information in peer-reviewed journals, to data from public health surveillance systems, to evaluations of individual programs or policies. Qualitative evidence involves non-numerical observations, collected by such methods as participant observation, group interviews, or focus groups. Presented in narrative form, qualitative evidence can be a powerful means of influencing policy deliberations, priority setting, and the proposal of environmental and policy solutions, because it tells persuasive stories with an emotional hook and intuitive appeal.

In designing obesity prevention interventions aligned with population needs and profiles, data on stakeholder opinions, preferences, and acceptability could serve as a useful and legitimate part of the evidence base. Swinburn and colleagues (2005), on behalf of the Prevention Group of the International Obesity Task Force (IOTF), proposed an evidence framework for obesity prevention that incorporates a broad policy perspective to guide users in locating a pool of evidence relevant to the problem. This framework acknowledges that valid and useful evidence cannot be restricted to impact evidence from traditional RCTs. Policy making, from the IOTF perspective, should be guided by a goal to reduce uncertainty given the best information available on the issue. Applying this criterion results in the inclusion of case histories, simulation studies, expert (informed) opinion, economic analyses, formative program evaluations, monitoring and surveillance data, and logic models, as well as research-based evidence from both experimental and observational studies, as relevant data sources.

Evaluation of specific environmental and policy interventions is complicated by the fact that additive or synergistic effects of multiple interventions across different levels and sectors may be necessary to achieve an impact on behaviors related to energy balance and to have a measurable impact on weight status. This need can be addressed in part by multilevel interventions or combinations of studies, but to date such studies are few in number. While the development of metrics for environmental and policy interventions is still in an early (first-generation) stage, several sources provide useful discussion of methods for data collection, promising indicators, and future directions for the field (Brownson et al., 2009a; Cheadle et al., 2000; Khan et al., 2009; Lytle, 2009; McKinnon et al., 2009).

APPLICATION OF AN EXPANDED EVIDENCE FRAMEWORK

To apply the principles of EBPH or EBPP to obesity prevention, an expanded approach to locating, evaluating, and assembling evidence is needed. Neither the more complex nature of the evidence nor the attendant controversies can be a reason for inaction or for taking actions purely on the basis of guesswork. In addition, evidence on the effectiveness or impact of ongoing actions is needed. Accordingly, this report provides a framework for identifying and using scientific and other relevant knowledge to address complex public health problems, and advocates for its proactive use in addressing the obesity epidemic to increase the soundness of decisions being made on a daily basis by a variety of public and private actors.

Key considerations in the proposed framework include the following:

- The framework should include an understanding and characterization of the types of decisions, decision-making processes, and contexts that are applicable. These decisions often take place within complex systems.

- Building on this premise requires an understanding and characterization of the types of questions being asked, the context involved, and the evidence that can inform the decisions being made, along with algorithms to guide the use of this evidence in decision making.

- Giving decision making priority as the basis for the framework does not diminish the importance of science as a basis for action. Rather, the committee's approach emphasizes the role of science in helping to address the challenges associated with the untenable societal burden of obesity. The committee's approach also broadens the scientific platform so that the focus goes beyond obesity as a biomedical problem to encompass multiple disciplines, recognizing that obesity can be prevented only by interventions spanning the social, economic, cultural, and policy realms. This broader platform opens doors to a wealth of additional approaches for addressing evidence needs in the obesity prevention arena.

THE L.E.A.D. FRAMEWORK

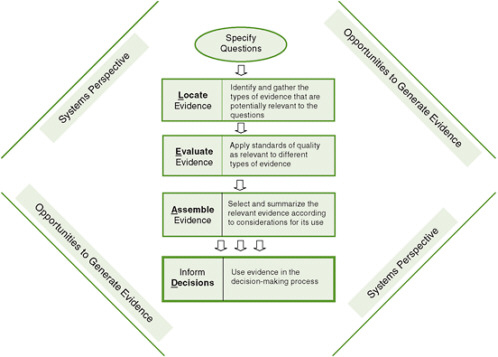

The framework developed by the committee for obesity prevention decision making, and by extension for decision making about other complex prevention scenarios, reflects the above considerations (see Figure 3-1). It incorporates concepts and approaches that are standard procedure in the development of practice guidelines: formulate the question, use an analytic framework to specify key questions to be addressed, do a systematic literature search, evaluate the evidence, describe the body of evidence, and determine its implications. The committee adapted this general approach for use in policy and programmatic decision making about complex public health problems such as obesity prevention.

The Locate Evidence, Evaluate Evidence, Assemble Evidence, Inform Decisions (L.E.A.D.) framework, which highlights the four steps that are at the core of the evidence review process, is intended to convey the entire picture of obesity prevention decision making. It was designed to identify the nature of the evidence that is needed; to clarify what changes in current approaches to generating and evaluating evidence will help meet decision makers’ evidence needs; and to facilitate a systematic approach that enables identification, implementation, and evaluation of promising, reasonable actions. The framework is intended as a guide to using evidence to inform decision

FIGURE 3-1 The Locate Evidence, Evaluate Evidence, Assemble Evidence, Inform Decisions (L.E.A.D.) framework for obesity prevention decision making.

making. Evidence—regardless of how complete or relevant—is only one component of making a decision; decision makers usually must take one or more other factors into account when making final decisions.

For a conceptual understanding of the framework, it is useful to begin with the systems perspective that surrounds the steps in the framework, to emphasize the importance of taking such a perspective throughout the process. From there, the steps are specifying questions that will guide the search for evidence; locating, evaluating, and assembling the evidence; and making a decision based on this evidence. Finally, opportunities to generate evidence, like a systems perspective, are pertinent throughout the process. Although the framework leads decision makers and investigators through a series of steps, a user could begin at any point in the framework and return to earlier steps. For example, a user could move from the assemble step back to further elaboration of the questions and broaden the search for evidence accordingly. Likewise, a researcher seeking ideas for solution-oriented research could first identify user questions or examine a report generated during the decide step, then work backward to identify research needs encountered in locating or evaluating evidence.

Section II of this report provides detail on each step of the L.E.A.D. framework and elaborates on the other concepts the framework emphasizes: the importance of taking a systems perspective and opportunities to generate evidence. Applying this framework as outlined can improve decision making and, ultimately, public health practice.

REFERENCES

Anderson, L. M., R. C. Brownson, M. T. Fullilove, S. M. Teutsch, L. F. Novick, J. Fielding, and G. H. Land. 2005. Evidence-based public health policy and practice: Promises and limits. American Journal of Preventive Medicine 28(5, Supplement):226-230.

Briss, P. A., S. Zaza, M. Pappaioanou, J. Fielding, L. Wright-De Aguero, B. I. Truman, D. P. Hopkins, P. D. Mullen, R. S. Thompson, S. H. Woolf, V. G. Carande-Kulis, L. Anderson, A. R. Hinman, D. V. McQueen, S. M. Teutsch, and J. R. Harris. 2000. Developing an evidence-based Guide to Community Preventive Services—methods. American Journal of Preventive Medicine 18(1, Supplement 1):35-43.

Brownson, R. C., J. G. Gurney, and G. H. Land. 1999. Evidence-based decision making in public health. Journal of Public Health Management and Practice 5(5):86-97.

Brownson, R. C., E. A. Baker, T. L. Leet, and K. N. Gillespie. 2003. Evidence-based public health. New York: Oxford University Press.

Brownson, R. C., C. Royer, R. Ewing, and T. D. McBride. 2006. Researchers and policymakers: Travelers in parallel universes. American Journal of Preventive Medicine 30(2):164-172.

Brownson, R. C., J. E. Fielding, and C. M. Maylahn. 2009a. Evidence-based public health: A fundamental concept for public health practice. Annual Review of Public Health 30(15):1-27.

Brownson, R. C., J. F. Chriqui, and K. A. Stamatakis. 2009b. Understanding evidence-based public health policy. American Journal of Public Health 99(9):1576-1583.

Cavill, N., C. Foster, P. Oja, and B. W. Martin. 2006. An evidence-based approach to physical activity promotion and policy development in Europe: Contrasting case studies. Promotion & Education 13(2):104-111.

Cheadle, A., T. D. Sterling, T. L. Schmid, and S. B. Fawcett. 2000. Promising community-level indicators for evaluating cardiovascular health-promotion programs. Health Education Research 15(1):109-116.

Choi, B. C. 2005. Twelve essentials of science-based policy. Preventing Chronic Disease 2(4):1-11.

Choi, B. C. K., T. Pang, V. Lin, P. Puska, G. Sherman, M. Goddard, M. J. Ackland, P. Sainsbury, S. Stachenko, H. Morrison, and C. Clottey. 2005. Can scientists and policy makers work together? Journal of Epidemiology and Community Health 59(8):632-637.

The Cochrane Collaboration. 2009. The Cochrane Collaboration. http://www.cochrane.org/ (accessed December 15, 2009).

Cook, D. J., D. L. Sackett, and W. O. Spitzer. 1995. Methodologic guidelines for systematic reviews of randomized control trials in health care from the Potsdam consultation on meta-analysis. Journal of Clinical Epidemiology 48(1):167-171.

Davidovitch, N., and D. Filc. 2006. Reconstructing data: Evidence-based medicine and evidence-based public health in context. Dynamis 26:287-306.

Dixon-Woods, M., R. Fitzpatrick, and K. Roberts. 2001. Including qualitative research in systematic reviews: Opportunities and problems. Journal of Evaluation in Clinical Practice 7(2):125-133.

Dobrow, M. J., V. Goel, and R. E. G. Upshur. 2004. Evidence-based health policy: Context and utilisation. Social Science and Medicine 58(1):207-217.

Dreisinger, M., T. L. Leet, E. A. Baker, K. N. Gillespie, B. Haas, and R. C. Brownson. 2008. Improving the public health workforce: Evaluation of a training course to enhance evidence-based decision making. Journal of Public Health Management and Practice 14(2):138-143.

Glasgow, R. E. 2008. What types of evidence are most needed to advance behavioral medicine? Annals of Behavioral Medicine 35(1):19-25.

Glasgow, R., L. Green, L. Klesges, D. Abrams, E. Fisher, M. Goldstein, L. Hayman, J. Ockene, and C. Orleans. 2006. External validity: We need to do more. Annals of Behavioral Medicine 31(2):105-108.

Glasziou, P., and H. Longbottom. 1999. Evidence-based public health practice. Australian and New Zealand Journal of Public Health 23(4):436-440.

Green, L. W., and R. E. Glasgow. 2006. Evaluating the relevance, generalization, and applicability of research: Issues in external validation and translation methodology. Evaluation & the Health Professions 29(1):126-153.

Guyatt, G., and D. Rennie. 2002. Users’ guides to the medical literature: Essentials of evidence-based clinical practice. Chicago, IL: American Medical Association Press.

Harris, M. R. 2000. Searching for evidence in perioperative nursing. Seminars in Perioperative Nursing 9(3):105-114.

Hausman, A. J. 2002. Implications of evidence-based practice for community health. American Journal of Community Psychology 30(3):453-467.

Hill, J. O., and J. C. Peters. 1998. Environmental contributions to the obesity epidemic. Science 280(5368):1371-1374.

Honore, P. A., R. L. Clarke, D. M. Mead, and S. M. Menditto. 2007. Creating financial transparency in public health: Examining best practices of system partners. Journal of Public Health Management and Practice 13(2):121-129.

IOM (Institute of Medicine). 2005. Preventing childhood obesity: Health in the balance. Edited by J. Koplan, C. T. Liverman, and V. I. Kraak. Washington, DC: The National Academies Press.

IOM. 2007. Progress in preventing childhood obesity: How do we measure up? Edited by J. Koplan, C. T. Liverman, V. I. Kraak, and S. L. Wisham. Washington, DC: The National Academies Press.

Jenicek, M. 1997. Epidemiology, evidenced-based medicine, and evidence-based public health. Journal of Epidemiology 7(4):187-197.

Jewell, C. J., and L. A. Bero. 2008. Developing good taste in evidence: Facilitators of and hindrances to evidence-informed health policymaking in state government. Milbank Quarterly 86(2):177-208.

Kerner, J. F. 2008. Integrating research, practice, and policy: What we see depends on where we stand. Journal of Public Health Management and Practice 14(2):193-198.

Khan, L. K., K. Sobush, D. Keener, K. Goodman, A. Lowry, J. Kakietek, and S. Zaro. 2009. Recommended community strategies and measurements to prevent obesity in the United States. Morbidity and Mortality Weekly Report 58(RR-7):1-26.

Klesges, L. M., D. A. Dzewaltowski, and R. E. Glasgow. 2008. Review of external validity reporting in childhood obesity prevention research. American Journal of Preventive Medicine 34(3):216-223.

Kohatsu, N. D., and R. J. Melton. 2000. A health department perspective on the Guide to Community Preventive Services. American Journal of Preventive Medicine 18(1, Supplement):3-4.

Kohatsu, N. D., J. G. Robinson, and J. C. Torner. 2004. Evidence-based public health: An evolving concept. American Journal of Preventive Medicine 27(5):417-421.

Kumanyika, S., and R. C. Brownson. 2007. Handbook of obesity prevention: A resource for health professionals. New York: Springer.

Kumanyika, S., R. W. Jeffery, A. Morabia, C. Ritenbaugh, and V. J. Antipatis. 2002. Obesity prevention: The case for action. International Journal of Obesity 26(3):425-436.

Laforest, R., and M. Orsini. 2005. Evidence-based engagement in the voluntary sector: Lessons from Canada. Social Policy and Administration 39(5):481-497.

Lytle, L. A. 2009. Measuring the food environment: State of the science. American Journal of Preventive Medicine 36(4, Supplement):134-144.

McGloin, A., L. Delaney, E. Hudson, and P. Wall. 2009. Symposium on "The challenge of translating nutrition research into public health nutrition." Session 5: Nutrition communication: The challenge of effective food risk communication. Proceedings of the Nutrition Society 68(2):135-141.

McKinlay, J. B. 1992. Health promotion through healthy public policy: The contribution of complementary research methods. Canadian Journal of Public Health 83(Supplement 1):S11-S19.

McKinnon, R. A., J. Reedy, M. A. Morrissette, L. A. Lytle, and A. L. Yaroch. 2009. Measures of the food environment: A compilation of the literature, 1990-2007. American Journal of Preventive Medicine 36(4, Supplement):124-133.

Muir Gray, J. A. 2009. Evidence-based healthcare and public health: How to make decisions about health services and public health. 3rd ed. New York: Churchill Livingstone (Elsevier).

Pawson, R., T. Greenhalgh, G. Harvey, and K. Walshe. 2005. Realist review—a new method of systematic review designed for complex policy interventions. Journal of Health Services Research and Policy 10(Supplement 1):21-34.

Petticrew, M., and H. Roberts. 2003. Evidence, hierarchies, and typologies: Horses for courses. Journal of Epidemiology and Community Health 57(7):527-529.

Rychetnik, L., P. Hawe, E. Waters, A. Barratt, and M. Frommer. 2004. A glossary for evidence based public health. Journal of Epidemiology and Community Health 58(7):538-545.

Satterfield, J. M., B. Spring, R. C. Brownson, E. J. Mullen, R. P. Newhouse, B. B. Walker, and E. P. Whitlock. 2009. Toward a transdisciplinary model of evidence-based practice. Milbank Quarterly 87(2):368-390.

Swinburn, B., T. Gill, and S. Kumanyika. 2005. Obesity prevention: A proposed framework for translating evidence into action. Obesity Reviews 6(1):23-33.

West, S., J. Biesanz, and S. Pitts. 2000. Causal inference and generalization in field settings: Experimental and quasi-experimental designs. In Handbook of research methods in social and personality psychology, edited by H. Reis and C. Judd. Cambridge: Cambridge University Press. Pp. 40-84.