4

Experiences in Other Countries

Two presentations on the second morning of the workshop offered an international perspective on field evaluation of behavioral science methods for use in intelligence and counterintelligence.

A UNITED KINGDOM PERSPECTIVE

The session’s first presentation featured George Brander of the UK Ministry of Defence, who described his work with a human factors team that uses behavioral science methods to provide support to information operations. The work, he said, requires the full spectrum of human and behavioral sciences, from psychology and sociology to anthropology and even market research, and its complexity, messiness, and incomplete data make traditional evaluation and validation techniques problematic. However, he added, it is still possible to advance the state of the field through maintaining best practices.

Some 15 years ago, Brander said, he was doing traditional human factors engineering, trying to understand and develop techniques to improve the performance of UK military personnel. But about 12 years ago he found the emphasis shifting, and now his focus is on their adversaries and potential adversaries within theatres of operation. The idea was that if researchers understood how to improve their own side’s performance and effectiveness, it should be possible to turn some of those techniques around and to try to reduce the performance and effectiveness of adversaries or shape the perceptions of other influential figures.

As context, Brander quoted British General Sir Michael Jackson, who said, “Fighting battles is not about territory; it is about people, attitudes, and perceptions. The battleground is there.”

In their work, Brander said, there are a variety of possible foci. The first and most obvious is key individuals, such as political leaders, military leaders, business leaders, or opinion leaders. Beyond the individual level, the focus may be on teams or groups of people or larger social groups. In analyzing people at these various levels of aggregation, one can focus on such things as attitudes and opinions, cultural contexts, or the information environment in which the people function. Each of these foci requires expertise of a different sort—psychology for the study of individuals, social psychology for the study of groups, anthropology for the study of cultural contexts, market research for the study of attitudes and opinions, and so on.

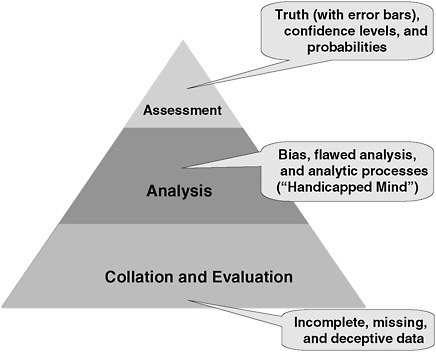

Brander, who is a psychologist, initially worked with other psychologists. “Then we realized that wasn’t enough,” he said, “so we started to recruit anthropologists to work with us to better understand the cultural context. And then because of the importance of the information environment, we incorporated skills from media and marketing and journalism.” As a way of encapsulating what his group does and where the various difficulties arise, Brander displayed a pyramid (see Figure 4-1) adapted from that originally used by Sherman Kent, often described as the father of intelligence analysis. At the bottom of the pyramid is data. The difficulties facing analysts at this level generally arise from data that are incomplete, missing, or deceptive. The middle of the pyramid represents analysis, which can be weakened by bias or flawed analytical processes. At the top of the pyramid is the answer, or the assessment together with associated “likelihood” and “confidence” levels. There are a variety of ways to evaluate and to strengthen each of the parts of the pyramid, Brander said. In the case of data, for instance, there has actually been very little work done on the validity of data, he said, and thus there is generally the possibility that a collection of data is biased in some way. On the other hand, there has been some interesting work on how to improve data, and Brander offered an example from the field of social network analysis.

The network analysis involved groups of people believed to be adversaries, he said, although he would not be more specific. The groups were being viewed as a military organization within which there were various commanders who exercised military command and control. Brander’s hypothesis was that the data might also include some people who were not military commanders as such but rather who helped broker or facilitate between different organizations.

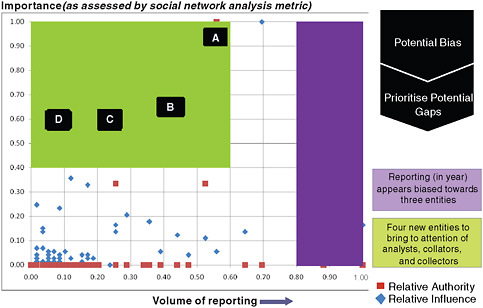

To illustrate their analysis of the data, Brander exhibited a figure that summarized a year’s worth of data they had investigated (see Figure 4-2).

FIGURE 4-1 Analysis pyramid (adapted from Sherman Kent’s pyramid).

SOURCE: Brander (2009). Reprinted with permission.

Each of the squares or diamonds on the figure represents an individual in the network, and the position of each symbol signifies both the amount of data collected on the person (on the horizontal axis) and the importance of the person as assessed by a social network analysis metric (on the vertical axis).

There were three entities in particular for which there was a great deal of data collected; the reporting for that year seemed biased toward those three entities.

In addition to noting the amount of data collected for each of the entities, Brander’s group had also used social network analysis to provide a centrality measure that reflected the importance of a given person in facilitating across different networks. That was one of the particular characteristics that Brander’s group was interested in. And when they performed that analysis, they discovered four individuals for whom there was relatively little data collection but who appeared to be important facilitators according to the analysis. Thus, Brander said, the analysis enabled them to overcome some of the biases in the data collection and identify people who were of interest but who would not have stood out merely in terms of the amount of data collected. Furthermore, he con-

FIGURE 4-2 Identifying bias and gaps in data.

SOURCE: Brander (2009). Reprinted with permission.

cluded, the analysis did indeed identify an individual who would later become a significant player.
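The kind of comparison Brander described, setting the amount of reporting on each person against a brokerage-style centrality score, can be illustrated with a small sketch. Everything in it is an assumption made for illustration: the presentation did not name the metric, so betweenness centrality (computed here with Brandes' algorithm) stands in for it, and the toy network, report counts, thresholds, and function names are all hypothetical.

```python
from collections import deque


def betweenness(graph):
    """Betweenness centrality for an unweighted, undirected graph.

    `graph` maps each node to the set of its neighbours. This is
    Brandes' algorithm; the choice of metric is an assumption, since
    the source does not say which centrality measure was used.
    """
    score = {v: 0.0 for v in graph}
    for s in graph:
        stack = []
        preds = {v: [] for v in graph}   # predecessors on shortest paths
        sigma = {v: 0 for v in graph}    # number of shortest paths from s
        dist = {v: -1 for v in graph}
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:                     # breadth-first search from s
            v = queue.popleft()
            stack.append(v)
            for w in graph[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {v: 0.0 for v in graph}
        while stack:                     # accumulate path dependencies
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                score[w] += delta[w]
    # Each unordered pair was counted from both endpoints.
    return {v: c / 2 for v, c in score.items()}


def under_collected_brokers(graph, report_counts, max_reports, min_centrality):
    """Nodes with little reporting but a high brokerage score."""
    central = betweenness(graph)
    return sorted(
        v for v in graph
        if report_counts.get(v, 0) <= max_reports
        and central[v] >= min_centrality
    )


# Hypothetical network: two tight clusters joined only through "x".
graph = {
    "a1": {"a2", "a3", "x"}, "a2": {"a1", "a3"}, "a3": {"a1", "a2"},
    "x": {"a1", "b1"},
    "b1": {"b2", "b3", "x"}, "b2": {"b1", "b3"}, "b3": {"b1", "b2"},
}
# Hypothetical report counts: collection is concentrated on a1, a2, and b1.
reports = {"a1": 40, "a2": 30, "a3": 1, "x": 2, "b1": 35, "b2": 3, "b3": 1}

flagged = under_collected_brokers(graph, reports, max_reports=5, min_centrality=5)
print(flagged)
```

In this toy case the node that connects the two clusters is flagged even though it has almost no reporting, which mirrors the pattern Brander described: heavily reported individuals are not necessarily the ones that matter most for brokerage. The thresholds are arbitrary and would need to be chosen for the data at hand.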

The case study also exemplifies the interplay between data and theory, Brander noted. Generally speaking, one collects data for a particular reason, and that reason should be taken into account during the data collection. In this case, the group was interested in individuals who were playing a role as brokers between different organizations, and social network analysis allowed them to identify such individuals who would not otherwise have been noticed. In turn, the group could point these individuals out to the data collectors and ask them to collect more data on them in order to overcome the potential bias in the initial data.

In general, Brander said, there are a variety of problems with the data available. First, the available data vary in their accuracy and completeness. Some data are incomplete, others are biased, still others have errors or may be based on some sort of deception. The data also vary according to the individuals about whom they are being collected, since people themselves vary in many different ways—age, educational background, cultural norms, motivation, access to resources, life experiences, health, and so forth. “We are trying to better understand people, and the data we get vary every time we look at a different individual,” Brander said.

In order to improve the data, Brander and his group have worked with the data collectors in a variety of ways, such as urging them not to throw any data away. Data collectors, for instance, sometimes do not report the mood or emotion of the individual concerned even when they have observed it, so Brander’s team has let them know that such information can indeed be helpful and has asked them to report it when possible.

They also have explored alternative methods of data collection, such as body movement analysis. A video of someone, for instance, can provide “a source of triangulation” that might help confirm a hypothesis arrived at through different methods.

They also work with third parties who might have observed a key individual and could report how that person responded to different things in meetings, how that person treated other people, and similar information, all of which can be used to inform the group’s assessments.

Because they are operating in the context of highly variable data, individual variability, and differing cultural norms, the group has borrowed from a variety of theories and approaches to inform their analyses of individuals. These include theories of motivational style, leadership style, personality traits, and life stages. “We put in other theories because all these factors might be important to the individual we are interested in.”

They also employ a variety of tools and methods: questionnaires, frameworks used to help other people make observations, content analysis of speeches, and many others. Assessing these tools and methods also demands a variety of approaches. In some cases, such as self-report questionnaires, they have a great deal of control over the method and it is relatively easy to validate. In the case of remote assessments, which make up the bulk of what they do, validation is much more difficult. They try to compare results from a variety of different methods (observed behavior, third-party assessments, case history analysis, and so forth) and see if they match up. They also use peer review to get a fresh assessment from the outside.

In the case of analysis and assessment of data, process issues are key, Brander said. There is common training for the analysts, who are generally psychologists or anthropologists who have come from the research community. The analysts are given continuous training. “We use challenge functions and discussion peer review, formal review, logbooks, and table review,” Brander said. “We try and track how our methods evolve over time and problems people have had with them so we can course-correct as we go along and evolve our methods.”

They also share approaches across the UK government behavioral science community as well as with their colleagues in allied countries, including the United States and various NATO countries. They have commissioned various research studies, including some looking at alternative approaches to validation, with the goal of improving the analysis and assessment of data by improving the process despite the limited opportunities for evaluation.

At the top of the pyramid is assessment, the outcome of the data collection and analysis. The biggest problem is determining what the customers actually want and need.

In trying to deal effectively with the customers, they keep five factors in mind: awareness, plausibility, credibility, trustworthiness, and insight. Awareness is a matter of whether customers know they can ask for a particular thing. Do they know, for example, that they could come and talk to an anthropologist about how tribal dynamics work in a particular culture? Plausibility refers to whether the answers make sense to the customer. Credibility refers to whether other people agree with the answers. Trustworthiness depends on the background and credentials of the analysts. And insight refers to the implications for a decision maker, that is, whether the answer passes the “so what?” test.

Over time, Brander’s group has found a variety of approaches that increase the chances of dealing successfully with customers. “We try and avoid psychological jargon because that creates all kinds of problems. We manage expectations. We say we are not predicting—we are forecasting…. We seek feedback on accuracy and utility; although we don’t often get it. People say that was great but not much more.” And they themselves try to assess the accuracy of their predictions, but it is usually not an easy task. “In terms of how a political situation may evolve or how social change may occur in Afghanistan, for example, the measures are not very good.”

In summary, Brander said, much of what they do is qualitative rather than quantitative. More generally, human factors as applied to analysis and assessment is inevitably largely qualitative, so the question is whether the same quantitative approaches to validation apply. “We use multidisciplinary, multimethodologies that seek to provide insight. We try and create a sufficient degree of rigor and we try to involve best practice. We can’t actually validate our tools and techniques in the traditional sense.” Instead, the validation, such as it is, is done through study approaches and organizational learning aimed at helping them evolve over time toward best practice.

He quoted the English industrialist William Hesketh Lever, who once said, “Half the money I spend on advertising is wasted, and the trouble is I don’t know which half.” That well summarizes, Brander said, the problems he and his colleagues have with evaluating outcomes. “Some of it works. Which part we are not entirely sure.”

CANADIAN DEFENSE VALIDATION EFFORTS

In the session’s second presentation, David Mandel discussed his experience with Defence Research and Development Canada (DRDC), where he is a senior defense scientist and group leader of the Thinking, Risk, and Intelligence Group (TRIG) in the Adversarial Intent Section, the DRDC’s human effectiveness center. Mandel, who is also an adjunct professor of psychology at the University of Toronto, studies various aspects of human judgment and decision making, particularly expert judgment in the area of intelligence analysis.

Mandel was put in charge of TRIG in January 2008 to carry out research related to various topics in the intelligence field. At the time of the workshop, he had hired three other behavioral scientists to work with him and was hoping to hire another soon. TRIG is currently working on three projects: one on radicalization and the economic crisis; one on developing models of state instability and conflict, which builds on the work of the Political Instability Task Force; and one on understanding and augmenting human capabilities for intelligence analysis.

Early on, he said, he found that many of the people in the intelligence community with whom he was working did not understand what he did. When he first began speaking with members of Chief of Defence Intelligence, the military intelligence organization that now funds two current TRIG projects, he found that he needed to establish “role clarity” concerning the functions that a behavioral science team might carry out. Some intelligence personnel thought his team might be providing behavioral analysis that would augment the agency’s analytical capabilities. “I had to be clear that we were not analysts and we were not providing analytic products,” Mandel said, “and I explained what we want to do really is to analyze the analytic process and to make recommendations for how to improve that.”

It was a great opportunity for him, Mandel said, because as a behavioral decision researcher he found it very valuable to see what analysts actually do in performing their jobs. He did find, however, that he had to be willing to switch gears from the theory-driven approach that is typical in academia to a mindset in which he paid attention to the issues that were important to those in the intelligence community and worked on those problems.

One of the challenges he has faced in working on those problems is the choice of subjects. The intelligence community tends to be skeptical of research that is done with university students, he said, and, indeed, any research that is not conducted on intelligence personnel may simply be disregarded. Yet it is difficult to free up analysts’ time enough to be able to conduct behavioral studies with them as subjects.

He has come up with two solutions to this catch-22. First, he does research on real judgments that have already been made. Instead of taking time away from analysts, he works with archival data on intelligence estimates. An advantage of this approach is that it has 100 percent external validity; that is, the results of this research clearly apply to intelligence analysts. However, the internal validity is usually lower than in experiments that are completed under the experimenter’s control. Thus the research may not allow firm conclusions to be drawn about cause-effect relationships.

Mandel’s second approach has been to work with trainees at the Canadian School for Military Intelligence. It does use some of the trainees’ time, but the trainers are generally happy to work with the group because they are interested in the issues the group is examining. Indeed, some of the issues and training protocols that the group has studied have led to changes in the school’s curriculum. In one study, for instance, Mandel and other TRIG scientists taught the trainees an analytical method based on Bayesian reasoning. The group observed a significant increase in the accuracy and logical coherence of the trainees’ judgments after just a brief training.
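The report does not describe the Bayesian method that was taught, so the following is only a generic sketch of the kind of reasoning involved: updating the probability of a hypothesis in light of new evidence with Bayes' rule, and checking that the resulting judgments are coherent (the probabilities of a hypothesis and its negation sum to 1). The function name and all numbers are hypothetical.

```python
def posterior(prior, p_evidence_if_true, p_evidence_if_false):
    """Bayes' rule for a single hypothesis H and one piece of evidence E.

    Returns P(H | E) given P(H), P(E | H), and P(E | not H).
    """
    for p in (prior, p_evidence_if_true, p_evidence_if_false):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie between 0 and 1")
    joint_true = prior * p_evidence_if_true
    total_evidence = joint_true + (1.0 - prior) * p_evidence_if_false
    if total_evidence == 0.0:
        raise ValueError("evidence has zero probability under both hypotheses")
    return joint_true / total_evidence


# Hypothetical numbers: an analyst initially gives a hypothesis a 20 percent
# chance, and judges the new report to be 70 percent likely if the hypothesis
# is true but only 10 percent likely if it is false.
p_h = posterior(prior=0.2, p_evidence_if_true=0.7, p_evidence_if_false=0.1)

# Coherence check: the same update applied to the negation of the hypothesis
# should yield the complementary probability.
p_not_h = posterior(prior=0.8, p_evidence_if_true=0.1, p_evidence_if_false=0.7)

print(round(p_h, 3), round(p_not_h, 3))
```

With these illustrative inputs the estimate rises from 20 percent to roughly 64 percent, a larger shift than intuition often suggests, which is one reason explicit training in this kind of calculation may help.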

To conclude, Mandel offered two key lessons for building a partnership between behavioral scientists and the intelligence community. First, never underestimate the importance of being poised to capitalize on opportunities. One must be ready to take action when conditions are amenable to transformative change. He illustrated this by discussing the creation of TRIG.

That creation did not come about through a top-down initiative, with senior management deciding that this particular research capability needed to be developed. Instead, it came about because of an opportunity. The chief executive of DRDC had broadened its mandate from research and development in support of defense activities to research and development in support of defense and security activities. At the same time, the center was going through an organizational realignment, which allowed Mandel the chance to describe some of his ideas to people higher up in the organization. Upper-level buy-in is important, he said, although it is also important for people at the lower levels to scan for opportunities and take advantage of them when they present themselves.

The second key lesson, he said, is never to underestimate the importance of face-to-face interactions. In the first year that he was setting up his group, he took a break from bench research and spent a lot of time in Ottawa, the Canadian capital, meeting with as many people from the intelligence community as he could (directors, analysts, trainers, administrators, and so on) in an effort to understand their interests and concerns. And once he had hired the members of his team, he encouraged them to meet people from the intelligence community in order to develop familiarity with them and what they did. This was particularly valuable, Mandel said, because it led the team members to become personally interested in what the analysts did and were trying to do. “We weren’t just reading about a set of problems in a published document,” Mandel said. “We were meeting with people who were telling us, ‘These are the issues we have, and, What can you do about them?’” That allowed the team members to gain a better understanding of what the real applied issues were from the perspective of the analysts and made it easier for them to assess what it was that they could offer as scientists. “That face-to-face interaction, I think, is critical.”

DISCUSSION

A significant part of the discussion following the presentations centered on the qualitative focus that Brander had described as being dominant in the United Kingdom, contrasting it with the more quantitative and device-oriented approach in the United States. Robert Fein, the moderator, said that he had had the chance over the past couple of years to get to know Brander and some of his colleagues and had been impressed with how they pushed and developed qualitative methodologies. He asked Brander why the British had gone so far in the qualitative direction and how they avoided being preoccupied with the hard technology approaches to the social and behavioral sciences that are more common in the United States.

It was partly money, Brander said—qualitative approaches tend to be less expensive than quantitative ones. But it was also because the analysts wanted to get their hands dirty and feel the data for themselves. Eventually, he predicted, they will move to more technical solutions. “For example, we are looking at ways of enhancing content analysis by some machine activity, but we wanted to know what it was like to do it ourselves before we invested heavily in the machinery.”

On a related note, Brander said that the behavioral scientists and the analysts in his department tend to view technology in very different ways. He mentioned in particular a data-mining laboratory with a variety of tools to extract meaning from large amounts of data. The analysts, he said, are generally not interested unless the technology can give them more data or answers. Their attitude is, “I am just going to read everything because somebody is going to ask me a question.” By contrast, the people with science backgrounds are more interested in how the technology might help them better understand their problems or how they might apply it to do better content analysis. In short, different people in the intelligence community see the advantages of technology in different ways.

Philip Rubin commented that it is important to think carefully about technologies before they are developed. People often get caught up in the details of how the technologies work, but the more important questions are what is being measured, how well it is measured, what the limitations of the data are, and what the data can be used for.

Neil Thomason asked Brander what sorts of indicators exist for various social and behavioral features. Brander responded by discussing briefly what sorts of indicators one would use to measure social change. It is not obvious, and it depends in large part on the underlying theory used to understand and interpret social change. Should one use Max Weber? How about Foucault and the other postmodern French philosophers? In practice one place to start would be to look at attitudes, which can be measured with questionnaires. Behavioral changes would probably come more slowly than changes in attitudes. Such changes in a place like Afghanistan might take several generations to appear, he said. What are we going to measure now?