3

Building Blocks of Intraseasonal to Interannual Forecasting

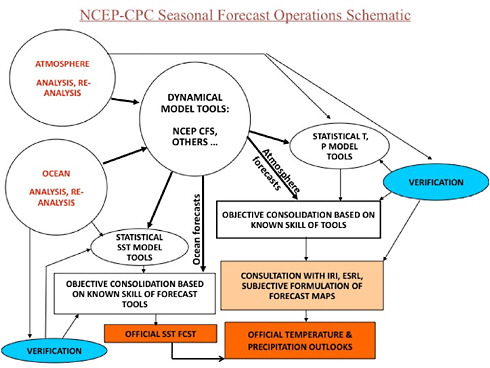

An ISI forecast is made utilizing observations of the climate system, statistical and/or dynamical models, data assimilation schemes, and in some cases the subjective intervention of the forecaster (see Box 3.1). Improvements in each of these components, or in how one component relates to another (e.g., data assimilation schemes expanded to include new sets of observations; observations made as part of a process study to validate or improve parameters in a dynamical model), can lead to increases in forecast quality. This portion of the report discusses these components of ISI forecasting systems, with an emphasis on assessing quality among forecast systems following a change in forecast inputs. Past advances that have contributed to improvements in forecast quality are noted, and the section ends by presenting areas in which further improvement could be realized.

HISTORICAL PERSPECTIVE FOR INTRASEASONAL TO INTERANNUAL FORECASTING

Scientific weather prediction originated in the 1930s, with the objective of extending forecasts as far into the future as possible. Studies at MIT under Carl Gustaf Rossby consequently included longer time scales than just the daily prediction issue. Jerome Namias became a protégé of Rossby, and took on the task of extending to longer scales as director of the “Extended Forecast Section” of the Weather Bureau/National Weather Service. The approaches developed emphasized upper level pressure patterns that could persist or move according to the Rossby barotropic model, and could provide “teleconnections” from one region to another. These patterns were then used to infer surface temperature and precipitation patterns. The latter were initially done by subjective methods, but soon statistical approaches were adopted through the work of Klein. For more than a few days in advance, prediction of daily weather would necessarily have low skill and so monthly or longer forecasts were obtained as averages. Work by Lorenz in the 1960s explained the lack of atmospheric predictability after more than about 10 days in terms of the chaotic nature of the underlying dynamics (see Chapter 2). At about the same time, Namias was emphasizing the need to consider underlying anomalous boundary conditions as provided by SSTs, soil moisture, and snow cover. The importance of changing tropical SSTs through ENSO was first identified by Bjerknes in the late 1960s. A first 90-day seasonal outlook was released by NOAA in 1974.

|

BOX 3.1 TERMINOLOGY FOR FORECAST SYSTEMS Observation—measurement of a climate variable (e.g., temperature, wind speed). Observations are made in situ or remotely. Many remote observations are made from satellite-based instruments. Statistical model—a model that has been mathematically fitted to observations of the climate system using random variables and their probability density functions. Dynamical or Numerical model—a model that is based, primarily, on physical equations of motion, energy conservation, and equation(s) of state. Such models start from some initial state and evolve in time by updating the system according to physical equations. Data assimilation—the process of combining predictions of the system with observations to obtain a best estimate of the state of the system. This state, known as an “analysis”, is used as initial conditions in the next numerical prediction of the system. Operational forecasting—the process of issuing forecasts in real time, prior to the target period, on a fixed, regular schedule by a national meteorological and/or hydrological service. Initial conditions/Initialization—Initial conditions are estimations of the state (usually based on observational estimates and/or data assimilation systems) that are used to start or initialize a forecast system. Initialization can include additional modification of the initial conditions to best suit the particular forecast system. |

Progress since the 1960s can be discussed in terms of advances in forecasting approaches (including their evaluation) and improved understanding and treatment of underlying mechanisms. One major direction of advancement in forecasting has been that of dynamical modeling (see “Dynamical Models”section in this chapter). Generally the dynamical models continued to improve according to advancements in computational resources and a growing knowledge of the key processes to be modeled. However, official forecasts in the United States depended on subjective interpretation of these objective products. In addition, various statistical (empirical) modeling approaches were developed and improved to remain as capable as the dynamical approaches in their validation. Other countries have been developing similar capabilities for seasonal prediction since the 1980s, largely depending on numerical modeling.

Recognition of the role of tropical SST anomalies, especially those associated with ENSO, in driving remote climate anomalies has led to much work in predicting tropical SST. Some of the key advancements in estimating these SSTs developed during the TOGA international study in conjunction with the deployment of the Tropical Atmosphere Ocean (TAO) array in the 1980s and 1990s (NRC, 1996; see “Ocean Observations” section in this chapter and “ENSO” section in Chapter 4).

Further expansion of the efforts in ISI forecasting have been undertaken by CLIVAR (Climate Variability and Predictability), a research program administered by the World Climate

Research Programme (WCRP). CLIVAR supports a variety of research programs9 around the world focused on cross-cutting technical and scientific challenges related to climate variability and prediction on a wide range of time scales. CLIVAR also helps to coordinate regional, process-oriented studies (WCRP, 2010).

What follows is a description of the “building blocks” of an ISI forecasting system: observations, statistical and numerical models, data assimilation schemes. The quality and use of forecasts are also discussed. It is a broad overview, offering some historical context, an evaluation of strengths and weaknesses, and potential avenues for improvement. At the conclusion of Chapter 3, the key potential improvements are summarized; the Recommendations (Chapter 6) have been made with these improvements in mind.

OBSERVATIONS

Observations are an essential starting point for climate prediction. In contrast to weather prediction, which focuses primarily on atmospheric observations, ISI prediction requires information about the atmosphere, ocean, land surface, and cryosphere. Also in contrast to weather prediction, the observational basis for ISI prediction is both less mature and less certain to persist. Indeed, both continuing evolution and the need to sustain observations for ISI prediction are seen as issues at present and into the future. International cooperation and the governance of the World Meteorological Organization do much to ensure continuity of weather observations. Similar international cooperation is being developed for climate observations, but formal international commitments to these observations are not the general case. The following sections describe some of the platforms available for making these observations, and the increase in the number of observations over time.

Observations of quantities that end-users track, such as sea surface temperature and precipitation, and of quantities that record the coupling between elements of the climate system, such as soil moisture and air-sea fluxes, are particularly useful to assess both the realism of models and identify longer-term variability and trends that provide the context for ISI variability. However, current observational systems do not meet all ISI prediction needs, or are not always used to maximum benefit by ISI prediction systems. Some observations for the Earth system needed for initialization are not being taken, or are not available at a spatial or temporal resolution to make them useful. Some observations have not been available for a sufficiently long period of time to permit experimentation, validation, verification, and inclusion within statistical or dynamical models. In yet other cases, the observations are available, but they are not being included in data assimilation schemes. Additionally, regionally enhanced observations or studies that target learning more about the processes that govern ISI dynamics, including developing improved parameterizations of processes that are sub-grid scale in dynamical models, are needed.

New observations, both in situ and remotely sensed, may be available through research programs. Part of the challenge is to integrate these new observations, assess their utility and impact, and then, if the observations contribute to ISI prediction, develop the advocacy required to sustain them. Integration of observational efforts, as in CLIVAR climate process teams or by

bringing together observationalists with operational centers to engage in observing system simulation studies and assessments of the improvement stemming from observations have merit. Heterogeneous networks of observations, at times obtained by different organizations, may need better integration into accessible data bases and particular attention from partnered observationalists and modelers. For all observations, appropriate attention to metadata and data quality, including realistic estimates of uncertainty, are essential to ensuring their use and utility.

Atmosphere

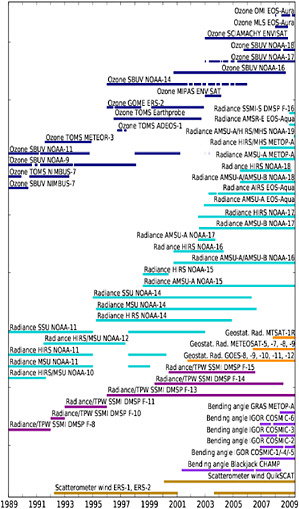

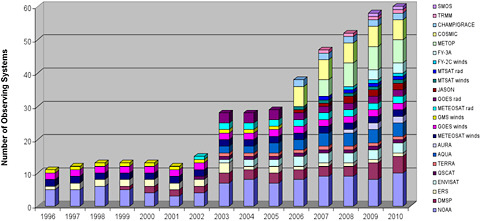

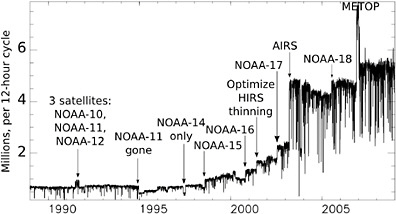

Over the years the conventional meteorological observing system evolved from about 1,000 daily surface pressure observations in 1900 to about 100,000 at the present. Likewise, upper air observations (rawinsondes, pilot balloons, etc.) grew from less than 50 soundings in the 1920s to about 1,000 in the 1950s (of which most were pilot balloons). Today, there are about 1,000 rawinsondes used regularly (Dick Dee, personal communication). Satellite observations, introduced into operations in 1979, ushered in a totally new era of numerical weather prediction, although it was only in the 1990’s that the science of data assimilation (see “Data Assimilation” section in this chapter) progressed enough to demonstrate that there was a clear positive impact from satellite data when added to rawinsondes in the Northern Hemisphere. Figure 3.1 illustrates the huge increase of different types of available satellite observations in the last two decades assimilated for ECMWF operational forecasts. These satellite products have not only grown in number, but also in diversity. They can provide information about atmospheric composition and hydrometeors, as well as vertical profiles of thermodynamic properties.

The assimilation of each of these observing systems poses a new challenge, and the full impact of each may not become clear for years because of the partial duplication of information among the different systems. It is often difficult to attribute an increase in prediction quality to the incorporation of a new set of observations in an ISI forecasting system. Some examples of improvements arising from the assimilation of specific observations, such as AMSU radiances, are discussed in the “Data Assimilation” section of this chapter.

The incorporation of targeted observations that focus on atmospheric processes that are sources of ISI predictability could also contribute to ISI forecast quality. In some cases, these observations exist for research purposes but are not being exploited by ISI forecast systems. In other cases, these observations do not exist. For example, high resolution observations of the vertical structure of the tropical atmosphere could improve the understanding of the MJO, the ability to validate current dynamical models, and perhaps the parameterization of these models. This is part of the mission of the Dynamics of the MJO experiment (DYNAMO; http://www.eol.ucar.edu/projects/dynamo/documents/WP_latest.pdf).

Oceans

As mentioned in Chapter 2, the oceans are a major source of predictability at intraseasonal to interannual timescales. The ocean provides a boundary for the atmosphere where heat, freshwater, momentum, and chemical constituents are exchanged. Large heat losses and evaporation at the sea surface cause convection and make surface water sink into the interior,

FIGURE 3.1: Number of satellite observing systems available since 1989 and assimilated into the ECMWF system. Each color represents a different source/platform. SOURCE: Courtesy of Jean-Noel Thepaut, ECMWF.

while surface heating and the addition of freshwater make surface water buoyant and resistant to mixing with deeper water.

Variability in the air-sea fluxes, in oceanic currents and their transports, and in large-scale propagating oceanic Rossby or Kelvin waves all contribute to the dynamics of the upper ocean and the sea surface temperature. In turn, the states of the ocean surface and sub-surface can force the atmosphere on intraseasonal to interannual timescales, as is clearly evident in the ENSO and MJO phenomena. Therefore, the initialization of sea surface and sub-surface ocean state is required for near-term climate prediction. Unfortunately, the comprehensive observation of the global oceans started much later than in the atmosphere and even today there are challenges that prevent collection of routine observations over large parts of the ocean.

The significant climatic impacts of ENSO, especially after the 1982–1983 event, demonstrated that a sustained, systematic, and comprehensive set of observations over the equatorial Pacific basin was needed. The TAO/Triangle Trans-Ocean Buoy Network (TRITON) array was developed during the 1985–1994 Tropical Ocean Global Atmosphere (TOGA) program (Hayes et al., 1991, McPhaden et al., 1998). The array spans one-third of the circumference of the globe at the equator and consists of 67 surface moorings plus five subsurface moorings. It was fully in place to capture the evolution of the 1997–1998 El Niño. In 2000, the original set called TAO was renamed TAO/TRITON with the introduction of the TRITON moorings at 12 locations in the western Pacific (McPhaden et al., 2001). TAO/TRITON has been the dominant source of upper ocean temperature and in situ surface wind data near the equator in the Pacific over the past 25 years and has provided the observational underpinning for theoretical explanations of ENSO such as the recharged oscillator

(e.g. Jin, 1997). It provides a key constraint on initial conditions for seasonal forecasting at many centers around the world.

After the success of the TAO/TRITON array, further moored buoy observing systems have been developed over the Atlantic (PIRATA) and Indian (RAMA) oceans under the Global Tropical Moored Buoy Array (GTMBA) program. The moorings allow simultaneous observations of surface meteorology, the air-sea exchanges of heat, freshwater, and momentum, and the vertical structure of temperature, salinity, horizontal velocity, and other variables in the water column. Thus, they provide the means to monitor both the air-sea exchanges and the storage capacity of the upper ocean. The PIRATA array was designed for the purpose of improving the understanding of ocean-atmosphere interactions that affect the regional patterns of climate variability in the tropical Atlantic basin (Servain et al., 1998). The array, launched in 1997 and still being extended, currently has 17 permanent sites. The RAMA array was initiated in 2004 with the aim of improving our understanding of the east Africa, Asian, and Australian monsoon systems (McPhaden et al., 2009). It currently consists of 46 moorings spanning the width of the Indian Ocean between 15ºN and 26ºS. It is expected to be fully completed in 2012.

The maintenance of the GTMBA is absolutely essential for supporting climate forecasting. However, there are many difficulties in maintaining these arrays, not the least of which is identifying institutional arrangements that can sustain the cost of these observing systems (McPhaden et al., 2010). Away from the equator, the permanent in situ moored arrays are sparser and address sample the characteristic extra-tropical regions of the ocean-atmosphere system under the international OceanSITES program. Few such sites exist in high latitude locations, but efforts are underway in the United States (under the National Science Foundation Ocean Observatories Initiative) and in other countries to add sustained high latitude ocean observing capability.

In parallel to the development of the moored buoy arrays, the observation of SST has improved markedly over the last 20 years. SST is a fundamental variable for understanding the complex interactions between atmosphere and ocean. Since 1981, operational streams of satellite SST measurements have been put together with in situ measurements to form the modern SST observing systems (Donlon et al., 2009). Since 1999 more than 30 satellite missions capable of measuring SST in a variety of orbits (polar, low inclination, and geostationary) have been launched with infrared or passive microwave retrieval capabilities. New approaches to integrate remote sensing observations with in situ SST observations that help reduce bias errors are being taken (Zhang et al., 2009).

Despite the evident progress, an important issue remains: satellite observations of SST only started in the 1980s and satellites have a relatively short life span. Therefore, further work is necessary to ensure the “climate quality” of the data over long periods. This would facilitate the generation of SST re-analysis products for operational seasonal forecasting (Donlon et al., 2009).

Even with the evident progress made with the tropical moored buoy arrays and the improvement of the satellite measurements of SST, as recently as the late 1990s there were still vast gaps in observations of the subsurface ocean. Such observations are needed for seasonal to interannual prediction. The ability of the ocean to provide heat to the atmosphere, the extent to which the upper ocean can be perturbed by the surface forcing, and the dynamics of the ocean that lead to changes in the distribution of heat and freshwater all depend on the vertical and horizontal structure of the ocean and its currents.

Surface height observations by satellite altimeters have added information about the density field in the ocean and thus, for example, the redistribution of water properties and mass

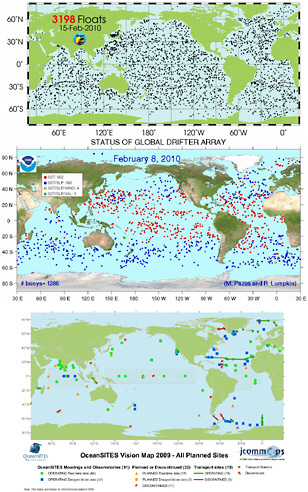

along the equator associated with ENSO. Efforts to quantify the state of the ocean were further improved by the international implementation of the Argo profiling float program (http://www.argo.ucsd.edu/). Until then, most sub-surface ocean measurements were taken by expendable bathythermograph (XBT) probes measuring temperature versus depth and by shipboard profiling of salinity and temperature versus depth from research vessels, which are both limited in their global spatial coverage and depth. The Argo program was initiated in 1999 with the aim of deploying a large array of profiling floats measuring temperature and salinity to 1,500 to 2,000 meters deep and reporting in real time every 10 days. To achieve a 3º x 3º global spacing, 3,300 floats were required between 60ºS and 60ºN. As of February 2009, there are 3,325 active floats in the Argo array. After excluding floats from which data was not passing the quality control and those in high latitudes (beyond 60º latitude) or in heavily sampled marginal seas, the number of floats is only 2,600. Argo data is distributed via the internet without restriction and about 90% of the profiles are available within 24 hours of acquisition. Quality control continues after receipt of the data, particularly for the salinity observations. To improve the quality of data from Argo floats, ship-based hydrographic surveys obtaining salinity and temperature profiles are needed, and the process may require several years before the Argo data experts are confident that the best data quality has been achieved (Freeland et al., 2009). However, the real time Argo data is a critical contribution. With it, the depth of the surface mixed layer can be mapped globally, thus determining the magnitude (depth and temperature) of the oceanic thermal reservoir in immediate contact with the atmosphere.

Internationally, there is a coordinated effort under the Joint Commission on Oceanography and Marine Meteorology (JCOMM) of the World Meteorological Organization (WMO) and the International Oceanographic Commission (IOC) to coordinate sustained global ocean in situ observations, including Argo floats, surface SST drifters, Volunteer Observing Ship-based measurements, tropical moored arrays, and the extra-tropical moored buoys. Remote observations of surface vector winds combined with drifting buoy data can be used to identify the wind-driven flow of the upper ocean, thus complementing the ability of Argo floats and altimetry to observe the density-driven flow. Future satellite observations of interest include those of surface salinity.

The in situ ocean observing community will benefit from an ongoing dialog with those interested in improving prediction on intraseasonal and interannual timescales. Programs such as the World Climate Research Programme’s (WCRP) CLIVAR work to coordinate sustained observations in the ocean with focused process studies that improve understanding of climate phenomena and processes. Distributed and sustained ocean and air-sea heat flux observations with global and full depth coverage are being used to identify biases and errors in coupled and ocean models. These include the surface buoys and associated moorings of OceanSITES and the repeat hydrographic survey lines done in each basin every 5–10 years. The moorings provide high temporal resolution sampling from the air-sea interface to the seafloor, while the surveys map ocean properties along basin-wide sections. Both programs provide data sets that quantify the structure and variability of the ocean that are often found in model fields. In contrast, denser sampling arrays are deployed for a limited duration as part of process studies. These studies are

FIGURE 3.2 Examples of the spatial distribution of various ocean observations mentioned in the text. Top panel: Argo floats, which can provide surface and sub-surface information. SOURCE: Argo website (http://www.argo.ucsd.edu/) Middle panel: Drifters, which can provide SST, SLP, wind, and salinity information (see colors in legend). SOURCE: NOAA (http://www.aoml.noaa.gov/phod/dac/gdp.html). Bottom panel: OceanSITES, intended for long-term observations for depths up to 5000m in a stationary location. SOURCE: (OceanSITES http://www.jcommops.org/FTPRoot/OceanSITES/maps/200908_VISION.pdf)

designed to improve our understanding of physical processes and to aid in the parameterization of the processes not fully resolved by models. CLIVAR also works to build connectivity among the observing community, researchers investigating ocean processes and dynamics, and climate modelers. Process studies by CLIVAR and others add to understanding of ocean dynamics, develop improved parameterizations of processes not resolved in ocean models, and guide longer term investments in ocean observing.

Land

The land variables of potential relevance for seasonal prediction—the variables for which accurate initialization may prove fruitful—are soil moisture, snow, vegetation structure, water table depth, and land heat content. These variables help determine fluxes of heat and moisture between the land and the atmosphere on large scales and thus may contribute to ISI forecasts. In addition, some of these variables are associated with local hydrology and hydrological prediction (e.g., observations of snow in a mountain watershed in the winter can provide information on spring water supply). This evolution in the use of land and hydrological observations mirrors the emerging interest in new types of ocean observations, noted in the previous section.

Despite their importance to the surface energy and moisture balances and fluxes, our ability to measure such land variables on a global scale is extremely limited. Thus, alternative approaches for their global estimation have been, or still have to be, developed.

Soil Moisture

Of the listed land variables, soil moisture (perhaps along with snow) is probably the most important for subseasonal to seasonal prediction. For the prediction problem, however, direct measurements of soil moisture are limited in three important ways. First, each in situ soil moisture measurement is a highly localized measurement and is not representative of the mean soil moisture across the spatial scale considered by a model used for seasonal forecasting. Second, even if a local measurement was representative of a model’s spatial grid scale, the global coverage of existing measurement sites would constitute only a small fraction of the Earth’s land area, with most sites limited to parts of Asia and small regions in North America. Finally, even if the spatial coverage were suddenly made complete, the temporal coverage would still be lacking; long historical time series (decadal or longer) may be needed to interpret a measurement properly before using it in a model.

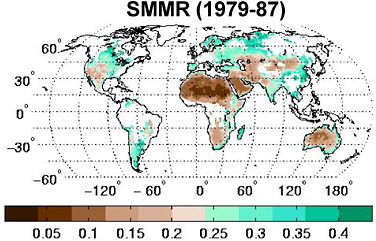

Satellite retrievals offer the promise of global soil moisture data at non-local scales. Data from the Scanning Multichannel Microwave Radiometer (SMMR) and Advanced Microwave Scanning Radiometer—Earth Observing System (AMSR-E) instruments, for example, have been processed into global soil moisture fields (Owe et al., 2001; Njoku et al., 2003). Figure 3.3 shows an example of the mean soil moisture as observed by the SMMR instrument. Such instruments, however, can only capture soil moisture information in the top few millimeters of soil, whereas the soil moisture of relevance for seasonal prediction extends much deeper, through the root zone (perhaps a meter). The usefulness of satellite soil moisture retrievals or their associated raw radiances will likely increase in the future as L-Band measurements come online

FIGURE 3.3 Mean soil moisture (m3/m3) in upper several millimeters of soil, as estimated via satellite with the SMMR instrument using the Owe et al. (2001) algorithm. SOURCE: Adapted from Reichle et al. (2007).

and data assimilation methods are further developed (see section on “Data Assimilation” in this chapter and the soil moisture case study in Chapter 4).

Currently, global soil moisture information for model initialization has to be derived indirectly from other sources. A common approach is to utilize the soil moisture produced by the atmospheric analysis already being used to generate the atmospheric initial conditions. This approach has the advantage of convenience, and the soil moisture conditions that are produced reflect reasonable histories of atmospheric forcing, as generated during the analysis integrations—if the analysis says that May is a relatively rainy month, then the June 1 soil moisture conditions produced will be correspondingly wet.

The main meteorological driver of soil moisture, however, is precipitation, and analysis-based precipitation estimates are far from perfect. Thus, a more careful approach to using model integrations to generate soil moisture initial conditions has been developed in recent years. This approach is commonly referred to as LDAS, for “Land Data Assimilation System”, although the term is something of a misnomer; true land data assimilation in the context of the land initialization problem is discussed further in the “Data Assimilation” section below. LDAS systems are currently in use for some experimental real-time seasonal forecasts and are planned for imminent use in some official, operational seasonal forecasts.

An operational LDAS system produces real-time estimates of soil moisture by forcing a global array of land model elements offline (i.e., disconnected from the host atmospheric model) with real-time observations of meteorological forcing. (Here, real-time may mean several days to a week prior to the start of the forecast, to allow time for processing.) Real-time atmospheric data assimilation systems are the only reasonable global-scale sources for such forcings as wind speed, air temperature, and humidity. However, the evolution of the soil moisture state depends even more on precipitation and net radiation, whose reanalysis estimates are not reliable.

Consequently, LDAS systems use alternative sources such as merged satellite-gauge precipitation products (e.g., CMAP, or the Climate Prediction Center Merged Analysis of Precipitation) and satellite-based radiation products (e.g., AFWA AGRMET, or Air Force Weather Agency Agricultural Meteorology Modeling System). The LDAS system may still need atmospheric analysis data for the sub-diurnal time sequencing of the forcing, but the alternative data sources prove invaluable for “correcting” these precipitation and radiation time series so that their temporal-averages are realistic.

Such LDAS systems also require global distributions of surface parameters (vegetation type, soil type, etc.), currently available in various forms (e.g., Rodell et al., 2004). Consistency between the parameter set used for the LDAS system and that used for the full forecast system is an important consideration.

Snow

Real-time direct measurements of snow on the global scale do not exist, though some measurements are available at specific sites, for example, in the western United States (Snowpack Telemetry, SNOTEL) and through coded synoptic measurements made at weather stations (SYNOP). For global data coverage, satellite measurements are promising—certain instruments (e.g., MODIS) can estimate snow cover accurately at high resolution on a global scale. Satellite snow retrievals, however, also show significant limitations. For the seasonal forecasting problem, snow cover is not as important as snow water equivalent (SWE), which is the amount of water that would be produced if the snowpack were completely melted. Satellite estimates of SWE are made difficult by the sensitivity of the retrieved radiances to the morphology (crystalline structure) of the snow, which is almost impossible to estimate a priori—a given snowpack may have numerous vertical layers with different crystalline structures, reflecting the evolution of the snowpack with time through compaction and melt/refreeze processes. Compounding the difficulty of estimating SWE from space are spatial heterogeneities in snowpack associated with topography and vegetation.

The LDAS approach described above can provide SWE in addition to soil moisture states, assuming the land model used employs an adequate treatment of snow physics. In the future, the merging of LDAS products with the available in situ snow depth information and satellite-based snow data in the context of true data assimilation (see “Data Assimilation” section) will likely provide the best global snow initialization for operational forecasts.

Vegetation Structure

Current operational seasonal forecast models treat vegetation as a boundary condition, with prescribed time-invariant vegetation distributions and (often) prescribed seasonal cycles of vegetation phenology, e.g., leaf area index (LAI), greenness fraction, and root distributions. Early forecast systems relied on surface surveys of these quantities, and modern ones generally rely on satellite-based estimates.

Reliable dynamic vegetation modules would, for the seasonal prediction problem, allow the initialization and subsequent evolution of phenological prognostic variables such as LAI and rooting structure. A drought stressed region, for example, might be initialized with less leafy

trees, with subsequent impacts on surface evapotranspiration, and the leaf deficit would only recover if the forecast brought the climate into a wetter regime. However, the use of dynamic vegetation models in seasonal forecasts is not on the immediate horizon for forecast centers, in light of other priorities and the need to develop these models further.

Water Table Depth

The use of water table depth information in historical and current operational systems is prevented by two things. First, outside of a handful of well-instrumented sites, such information does not exist (though GRACE satellite measurements of gravity anomalies can provide useful information at large scales); the global initialization of water table depth given current measurement programs is currently untenable. Second, even if such observations were available, land surface models used in current seasonal forecasting systems do not model variations in moisture deeper than a few meters below the surface, so that the observations, if they did exist, could not be used. The lack of deep water table variables in the models also prevents the estimation of water table depth through the LDAS approach. Given the long time scales associated with the water table, improvements in its measurement and modeling do have the potential to contribute to ISI prediction.

Soil Heat Content

Real-time in situ measurements of subsurface heat content are spotty at best and far from adequate for the initialization of a global-scale forecast system. Satellite data have limited penetration depth; they can only provide estimates of surface skin temperature. Global initialization of subsurface heat content can thus be accomplished in only two ways: (1) through an LDAS system, as described above, and (2) through a land data assimilation approach that combines the LDAS system information with observations of variables such as soil moisture, snow, and skin temperature. For maximum effectiveness, the land models utilized in these systems need to include temperature state variables representing at least the depth of the annual temperature cycle (i.e., a few meters).

Polar Ice

Polar regions are important components of the climate system. The most important parameters are those that influence the exchange of heat, mass, and momentum with the atmosphere and global oceans. NASA, NOAA, and DOE have polar-orbiting satellites that are collecting relevant data in the Arctic region. The National Snow and Ice Data Center (http://nsidc.org/) is supported by NOAA, NSF, and NASA to manage and distribute cryosphere data. The National Ice Center (http://www.natice.noaa.gov/) is funded by the Navy, NOAA, and the Coast Guard to provide snow, ice (ice extent, ice edge location), and iceberg products in the Arctic and in the Antarctic.

The NRC Report Towards an Integrated Arctic Observing System (NRC, 2006) advocated observation of “Key Variables” using in situ and remote sensing methods. These

include albedo; elevation/bathymetry; ice thickness, extent, and concentration; precipitation; radiation; salinity; snow depth/water equivalent; soil moisture; temperature; velocity; humidity; freshwater flux; lake level; sea level; aerosol concentration; and land cover. Observing methods and recommendations are reviewed and presented in the Cryosphere Theme Report of the Integrated Global Observing System (IGOS 2007). Their cryospheric plan includes satellite-based, ground-based, and aircraft-based observations together with data management and modeling, assimilation, and reanalysis systems.

In terms of monitoring climate variability and change and weather and climate prediction, these reports identify the priority cryosphere observations as: long-term consistent records of cryosphere variables, high spatial and temporal resolution fields of snowfall, snow water equivalent, snow depth, albedo and temperature, and mapping of permafrost and frozen soil, lake and sea ice characteristics. Remote sensing methods can be used to address sea ice extent (recorded since the late 1970s) and ice thickness (recorded more recently, with IceSat) in order to investigate ice mass balance and the movement. Aerial sea ice reconnaissance needs to continue. Relevant in situ methods include the use of autonomous underwater vehicles (AUVs), moorings, and automated weather stations.

More specific recommendations are provided by Dickson et al. (2009), who assert that climate models do not represent Arctic processes well, limiting our ability to understand change in the Arctic seas and the impact of that change on climate. They also advocate that observations are needed for the Norwegian Atlantic current transport of heat, salt, and mass into the Arctic Ocean and of the amounts that enter by the Fram Strait and the Barents Sea; the change in sea ice in response to inflows into the Arctic of warmer water; the change and variability in temperature and salinity profiles beneath the ice, as by Ice-Tethered Profilers (ITPs); and, in general, all quantities relevant to the estimation of ocean-atmosphere heat exchange in the Arctic. For improving sea ice prediction, Dickson et al. (2009) recommends improved sea ice thickness measurements, especially in the spring. Such improved measurements could be obtained from below and above the ice as well as on the ice, using (for example) laser and radar altimetry, tiltmeter buoys on the ice surface, and floats or moorings below the ice with upward looking sonars.

STATISTICAL MODELS

Statistical and dynamical predictions are complementary. Advances in statistical prediction are often associated with enhanced understanding, which may lead to improved dynamic prediction, and vice versa. In addition, both techniques can ultimately be combined to provide better guidance for decision support.

Linear Models

What follows is a description of techniques used in statistical prediction models. Many of these techniques are similar to validation schemes for numerical models, for which the strengths and weaknesses are shown in Table 2.1. Also, a more in-depth description is provided in Appendix A.

Correlation and Regression

There is a long history of using correlation patterns to identify teleconnections, beginning with the landmark Southern Oscillation studies of Walker in the early 20th century (Katz, 2002) and increasing exponentially since (e.g., Blackmon et al., 1984; Wallace and Gutzler, 1981). The idea of teleconnections in meteorology is tied closely to that of correlations. Base points in locations, such as the center of the Nino3.4 box (Hanley et al., 2003), have served as the origin for teleconnection analysis throughout the globe (Ding and Wang, 2005) and have formed the basis for forecast quality (Johansson et al., 1998). The relationships between two locations can be calculated by measuring the mean squared error between the base point and the remote location. Large correlations correspond to a large degree of covariability and a correspondingly small mean squared error. Linear regression is an extension of correlation where directionality is assumed in diagnosing relationships between a predictor variable and a response variable or predictand.

Most often, the variance of the response variable is partitioned into components that are explained or unexplained by the predictor. The coefficient of determination (known as R2) gives the amount of variance explained by the predictor and is often used to assess the goodness of fit for a given model, though it has been criticized as a forecast performance index for verification as it ignores bias (Murphy, 1995). If the assumptions regarding the distribution of the data are met, significance of the model parameters can be assessed through t-tests. In cases when the assumptions are not met, bootstrapping of the (x,y) pairs or the model residuals has been shown to be effective (Efron and Tibshirani, 1993).

Often the problems addressed by regression require multiple predictors to give meaningful answers. The statistical model, multiple regression, is a generalization of simple regression. Rather than pairs of data measured simultaneously, n-tuples of data are used where all of the predictors, x1, x2, … ,xm and the predictand, y, form the training data that are observed over n cases.

Historically, the most common application of regression methodology has been for relating numerical model output to some predictand at a future time using linear regression. The method is called “model output statistics” (MOS) by Glahn and Lowry (1972). This is related to another regression technique, known as the perfect prog (PP) method (Klein et al., 1959) where both predictors and response variables are observed quantities in the training dataset. These methods are popular, as they utilize information at relatively larger scales to represent sub grid scale processes. MOS has the advantage over PP of correcting for forecast model biases in the mean and variance. The disadvantages of MOS include rebuilding the equations with changes in models and assimilation systems. Brunet et al. (1988) offer detailed comments on the relative advantages of each method, claiming PP was superior for shorter-range forecasts and MOS for longer time leads.

When cross-correlations are used to establish relationships between two non-adjacent locations, the maps of correlations are termed teleconnections. The earliest instance of using such a methodology was to establish the correlation structure of the Southern Oscillation (Walker, 1923). Maps of teleconnectivity at widely separated locations at a given geopotential height have been constructed to establish the centers of action of various modes in the mid-troposphere (Wallace and Gutzler, 1981). A catalogue of such teleconnections, based on

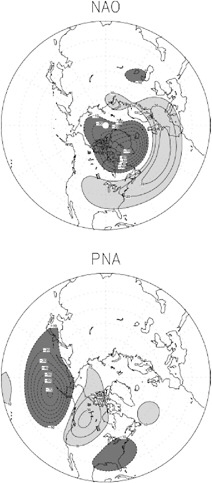

FIGURE 3.4 Example of two teleconnection patterns (North Atlantic Oscillation (NAO); Pacifc-North American pattern (PNA)), shown as anomalies in the 500-hPa geopotential height field. Dark shading indicates negative anomalies, and light shading indicates positive anomalies.The patterns emerge from a rotated Empirical Orthogonal Function analysis of monthly mean 500-hPa geopotential heights. Contour interval is 10 m. SOURCE: Johansson (2007).

principal component loadings (used to summarize the linked regions) was created by Barnston and Livezey (1987).

Empirical Orthogonal Functions (EOF)/Principal Component Analysis (PCA)

The use of eigentechniques was pioneered by Pearson (1902) and formalized by Hotelling (1933). The key ideas behind eigentechniques are to take a high dimensional problem that has structure (often defined as a high degree of correlation) and establish a lower dimensional problem where a new set of variables (e.g., eigenvectors) can form a basis set to reconstruct a large amount of the variation in the original data set. In terms of information theory, the goal is to capture as much signal as possible and omit as much noise as possible. While that is not always realized, the low dimensional representation of a problem often leads to useful results. Assuming that the correlation or covariance matrix is positive semidefinite in the real domain, the eigenvalues of that matrix can be ordered in descending value to establish the relative importance of the associated eigenvectors. Sometimes the leading eigenvector is related to some important aspect of the system. However, modes beyond the first are rarely related to specific physical phenomena owing to the orthogonality imposed on the EOFs/PCs. One possibility is to transform the leading PCs to an alternate basis set. This process is known as PC rotation (Horel, 1981; Richman, 1986; Barnston and Livezey, 1987) and has been shown to offer increased stability and isolation of patterns that match more closely to their parent correlation (or covariance) matrix. For example, in Figure 3.4, the rotated EOFs that are derived from monthly 500-hPa geopotential height data define two teleconnection patterns, the North Atlantic Oscillation (NAO) and the Pacific North American (PNA) pattern. These patterns explain a relatively large portion of the variance in the 500-hPa geopotential height data and can be related to the large-scale dynamics of the atmosphere as well as incidences of extreme weather in certain locations. In cases where the data lie in a complex domain, eigenvectors can be extracted in “complex EOFs.” Such EOFs can give information on travelling waves, under certain circumstances, as can alternative EOF techniques that incorporate times lags to calculate the correlation matrix (Branstator, 1987). As was the situation for correlation analysis, EOF/PCA are data compression methods. They do not relate predictors to response variables.

Canonical Correlation Analysis(CCA)/Singular Value Decomposition (SVD)/Redundancy Analysis (RA)

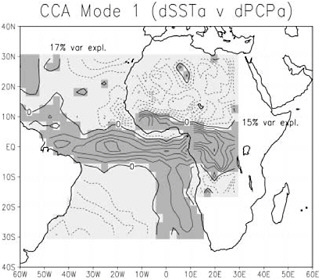

A multivariate extension of linear regression is canonical correlation analysis (CCA). It can be thought of as multiple regression where there is more than one predictor (x1, x2,…, xm) and multiple response variables (y1, y2,…,yp). Consequently, CCA is useful for prediction of multiple modes of variability associated with climate forcing (Barnston and Ropelewski, 1992). The goal of CCA is to isolate important coupled modes between two geophysical fields. Singular value decomposition (SVD) is analogous to CCA when applied to an augmented covariance matrix. Despite the similarity, Cherry (1996) argues that the techniques have different goals. Both techniques can lead to spurious patterns (Newman and Sardeshmukh, 1995), particularly when the observations are not independent and the cross-correlations/cross-covariances are weak relative to the correlations within the x’s and y’s. In such cases, pre-

FIGURE 3.5: Patterns relating errors in SST to errors in precipitation. Dark gray areas in the ocean indicate areas of “warm” errors; lighter shading indicates “cold” errors. Over land, dark gray indicates “wet” errors; lighter shading indicates “dry” errors that accompany the pattern of errors in SST. Contours are in normalized units, with a 0.1 contour interval. SOURCE: Goddard and Mason (2002).

filtering the predictors and response variables with EOF or PCA may have benefits (Livezey and Smith, 1999). Given the oversampling in time and space of most climate applications, PCA is often used as the initial step to establish a low dimensional set of uncorrelated basis vectors subject to CCA (Barnett and Preisendorfer, 1987). Despite the potential pitfalls, CCA has been shown to exhibit considerable skill for long-range climate forecasting (Barnston and He, 1996) and is one of the favored techniques for relating teleconnections to climate anomalies. Figure 3.5 shows an example of how CCA has been used to relate errors in SST in the tropical Atlantic Ocean to errors in model-produced estimates of precipitation in parts of Africa. Recently, redundancy analysis (RA), a more formal modeling approach based on regression and CCA, has been applied successfully to find coupled climate patterns useful in statistical downscaling (Tippett et al., 2008).

Constructed Analogues

Natural analogues are unlikely to occur in high degree-of-freedom processes (see Table 2.1 regarding historical use of analogues in prediction). In reaction to this, van den Dool (1994) created the idea of constructing an analogue having greater similarity than the best natural analogue. The construction is a linear combination of past observed anomaly patterns in the

predictor fields such that the combination is as close as desired to the initial state. Often, the predictor (the analogue selection criterion) is based on a reconstruction from the leading eigenmodes of the data field at a number of periods prior to forecast time. The constructed analogue approach has been used successfully to forecast at lead times of up to a year (van den Dool et al., 2003) and usually outperforms natural analogues forecasting one meteorological variable from another contemporaneously. A constructed analogue yields a single linear operator derived from data by which the system can be propagated forward in time.

Nonlinear Models

Most of the linear tools have nonlinear counterparts. Careful analysis of the data will reveal the degree of linearity. Additionally, comparison of the skill for linear versus nonlinear counterparts will reveal the degree of additional information to be gained by nonlinear methods. Specific recommendations on techniques to apply are given in Haupt et al. (2009).

Logistic Regression

Logistic regression is a nonlinear extension of linear regression for predicting dichotomous events as the response variable. The function that maps the predictor to the response variable is called the logistic response function, which is a monotonic function ranging from zero to one10. This involves minimizing the loss function using a nonlinear procedure. Logistic regression has been applied successfully to problems such as precipitation forecasting (Applequist et al., 2002), medium range ensemble forecasts (Hamill et al., 2004), and blocking beyond two weeks (Watson and Colucci, 2002).

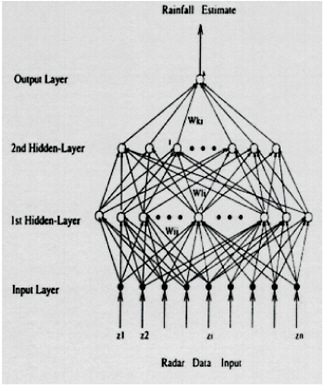

Artificial Neural Networks (ANN)

Artificial Neural networks (ANNs) have been applied successfully to numerous prediction problems, including ENSO (Tangang et al., 1998) and precipitation forecasts from teleconnection patterns (Silverman and Dracup, 2000). An ANN is composed of an input layer of neurons, one or more hidden layers, and an output layer. Each layer comprises multiple units connected completely with the next layer, with an independent weight attached to each connection. The number of nodes in the hidden layer(s) is dependent on the process being modeled and is determined by trial and error. Such models require considerable investigator supervision to train as nonlinear techniques are prone to overfitting noise (finding solutions at local minima). During the training process the error between the desired output and the calculated output is propagated back through the network. The goal is to find the network architecture that generalizes best.

Support Vector Machines (SVM)

Support vector machines (SVM) are a form of supervised learning techniques that use kernels to arrive at solutions at the global minimum or generalization error. For data existing in high dimensional space, SVM will separate the data into several subsets, attempting to achieve an optimal linear separation. This can be useful for noisy data sets.

SVM have been applied to cloud classification problems (Lee et al, 2004), wind prediction (Mercer at al., 2008) and severe weather outbreaks (Mercer et al., 2009). Comparison of SVM to standard logistic regression in Mercer et al. (2009) suggests that SVM is equal or superior to the more traditional techniques in minimizing misclassification of forecasts. On the ISI time scale, Lima et al. (2009) have shown that kernelized methods lead to additional skill in ENSO forecasts over traditional PCA techniques

FIGURE 3.6 Schematic of an Artificial Neural Network (ANN) for forecasting rainfall from radar data. The network is composed of “nodes” (circles) that are linked by “weights” (arrows). The number of hidden layers and number of nodes in each layer can be specified by the user, or determined through experimentation. Weights are determined by training the network on a subset of the data. SOURCE: Figure 2 from Trafalis et al. (2005).

Composites

Determination of climate system processes often involves univariate or bivariate displays of the slowly varying forcing (e.g., ENSO, MJO). Investigating how such signal propagates through the climate system can be accomplished through correlation or regression. These approaches are unconditional as all the data are used to establish the pertinent relationships. Another possibility is to condition the relationships on subsets of time when the climate system is in a given state. Such states are termed composites. A key aspect of creating these states is to measure the intra-state variability to ensure that all cases assigned to a given state have commonality. The quality of a composite is often tested by calculating the means of each group to insure adequate separation. Relating climate linkages to such composites is commonly performed to relate forcing to effects in climate studies (e.g., Ferranti et al. 1990; Hendon and Salby 1994; Myers and Waliser, 2003; Tian et al. 2007 for the MJO). Most often, correlations are used to establish the linkages, although comparisons can be based on linear or nonlinear statistics.

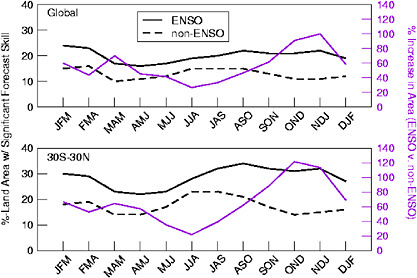

Figure 3.7 provides an example of how composites can distinguish, better than linear methods, anomalous precipitation patterns in the continental United States associated with the SST anomalies in the tropical Pacific Ocean. The figure shows that the patterns of precipitation associated with warmer-than-average SST are not necessarily “mirror images” of the patterns of precipitation associated with colder-than-average SST. For example, for the warmer-than-average SST composite, areas of the Midwest exhibit significantly drier-than-average conditions during March, while the Great Plains experience wetter-than-average conditions (third row, left column). By comparison, the composite representing precipitation associated with colder-than-average SST shows near-average conditions for much of the Midwest, with significantly drier-than-average conditions across the Great Plains (third row, right column).

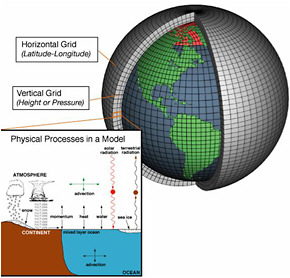

DYNAMICAL MODELS

With the advent of computers, it became feasible to solve the fluid dynamical equations representing the atmosphere and the ocean using a three-dimensional gridded representation. Physical processes that could not be resolved by this representation, such as turbulence, were parameterized using additional equations. The computer software that solves this set of equations is referred to as a dynamical or numerical model. The earliest dynamical models were developed for the atmosphere for the purposes of weather forecasting, and dynamical (or physical) models for other components of the climate system (land, ocean, etc.) followed thereafter.

Evolution of Dynamical ISI Prediction

Some of the earliest attempts at making ISI predictions with dynamical models were performed to essentially extend the range of weather forecasts. Miyakoda et al. (1969) described two-week predictions made with a hemispheric general circulation model (GCM). Miyakoda et al. (1983) used an atmospheric general circulation model (AGCM) with a horizontal resolution of 3–4 degrees and 9 levels in the vertical, which was the state of the art for weather forecasting at the time. Their study used a 30-day prediction to forecast a blocking event that occurred

FIGURE 3.7. Composites of monthly-mean precipitation anomalies associated with months experiencing warm SST (left column) or cold SST (right column) in the tropical Pacific for January through April. These maps illustrate that opposite signed SST anomalies do not necessarily produce opposite signed precipitation anomalies (e.g., the areas experiencing wetter-than-average conditions when SST is warm are not necessarily drier-than-average when SST is cold, and vice versa). Contour interval is 10 mm of precipitation; solid contours are for wet anomalies; dashed contours are for dry anomalies. Shading indicates areas of statistical significance. SOURCE: Adapted from Livezey et al. (1997).

during January 1977. The success of the prediction was attributed to improved spatial resolution and better representation of subgrid-scale processes.

Extended range numerical predictions of this sort were referred to as dynamical extended range forecasting (DERF) to distinguish them from short and medium range weather forecasts. The numerical models used for extended range forecasts were the same AGCMs that were then being used for weather forecasting. These AGCMs solved the basic three-dimensional fluid

dynamical equations numerically, using either finite-differencing or spectral decomposition. The AGCMs also incorporated physical parameterizations of shortwave and longwave radiation, moist convection, boundary layer processes, and subgrid-scale turbulent mixing. The complexity and resolution of these AGCMs increased throughout the 1990s in concert with computing power and understanding.

Although the DERF activities had some limited successes, they were barely scratching the surface of short-term climate prediction, in part because some processes were still poorly represented (e.g., MJO, see Chen and Alpert, 1990; Lau and Chang, 1992; Jones et al., 2000; Hendon et al., 2000) and there was no coupling to the ocean. One of the premises of the DERF approach was that there was enough information in the atmospheric initial conditions to make useful extended range predictions. In the terminology of Lorenz (1975), this would be predictability derived from knowledge of the initial condition. Because of the rapid decay of quality with lead time, one would not expect useful predictions on seasonal or longer time scales to arise solely from atmospheric initial conditions. To obtain forecast quality on longer timescales, one has to consider predictability arising from the knowledge of the evolution of boundary conditions or external forcing11 (Lorenz, 1975; Charney and Shukla, 1981).

One of the most important boundary conditions for an atmospheric model is sea surface temperature (SST). Variations in SST can heat or cool the atmosphere, influence the rainfall patterns, and thus change the atmospheric circulation. This is especially obvious in the tropical Pacific, where strong SST anomalies associated with the El Niño -Southern Oscillation (ENSO) phenomenon significantly alter atmospheric convection patterns. Although more subtle, and secondary to the initial conditions of the diabatic heating and circulation structure, SST as a boundary condition for properly initiating the MJO is also expected to be important (e.g., Krishnamurti et al., 1988; Zheng et al., 2004; Fu et al., 2006). The evolution in diabatic heating associated with ENSO and MJO events affects not only the local atmospheric circulation over the tropics, but also affects atmospheric circulation in extratropical regions such as North America through teleconnections (Wallace and Gutzler, 1981; Hoskins and Karoly, 1981; Weickmann et al., 1985; Ferranti et al. 1990).

The link between tropical Pacific SST and atmospheric anomalies elsewhere makes prediction of ENSO valuable for climate predictions in many remote regions. It is a continuing challenge to characterize this link (i.e., how a particular SST anomaly or evolution of anomalies may affect a given, remote location), especially given the complex interactions among local and remote processes that can contribute to predictability in a particular location. Better characterization of the link between ENSO (and other processes that affect boundary conditions for large-scale circulation) and the climate of remote locations is an important component for translating ISI forecasts into quantities useful for decision-makers (see “Use of Forecasts” section in this chapter).

In order to exploit atmospheric predictability associated with ENSO, one has to predict the SST in the tropical Pacific. The quasi-periodic nature of ENSO, with enhanced spectral power in the 4–7 year band, suggested that useful predictions might be possible months or seasons in advance. The next major step in short-term climate prediction came about when Cane et al. (1986) used a simple model of ENSO, a one-layer ocean representing the thermocline and a simple Gill-type model for the atmosphere, to make numerical predictions of ENSO events.

Successful predictions with the Cane-Zebiak model shifted the focus of short-term climate prediction to ENSO forecasting. ENSO is associated with much of the forecast quality at global scales in current forecast systems on seasonal to interannual timescales, although some other phenomena may dominate in specific regions. The type of model used by Cane and Zebiak is referred to as an Intermediate Coupled Model (ICM), because the atmospheric and the oceanic model are highly simplified. Following the success of the ICM approach, more sophisticated techniques were developed for ENSO prediction. One was the Hybrid Coupled Model (HCM) approach, where the atmospheric model remained simple but the one-layer ocean model was replaced by a comprehensive ocean general circulation model (OGCM). Neither the ICM nor the HCM approaches produced useful predictions of atmospheric quantities over continents. Therefore, a two-tier approach was used to produce climate forecasts over land. The SSTs predicted by the ICM/HCM (Tier 1) were used as the boundary condition for AGCM predictions (Tier 2).

Another approach to ENSO prediction was the use of a comprehensive coupled GCM (CGCM), where an AGCM is coupled to an ocean GCM, with the two models exchanging fluxes of momentum, heat, and freshwater. CGCMs were originally developed for studying long term (centennial) climate change associated with increasing greenhouse gas concentrations. CGCMs used for climate change used coarse spatial resolution to facilitate multi-century integrations. The shorter integrations required for ENSO prediction allowed finer spatial resolution, especially in the ocean, which could better resolve the processes important for ENSO. Finer resolution in the atmosphere improved forecast quality over the continents without requiring a two-tier approach. The quality of ENSO predictions in a CGCM arises almost exclusively from initial conditions in the upper ocean.

The major modeling/forecasting centers began to use CGCMs for ENSO prediction in the 1990s (Ji and Kousky, 1996; Rosati et al., 1997; Stockdale et al., 1998; Schneider et al., 1999) although the two-tier approach continued to be used operationally to predict the associated terrestrial climate. Atmospheric model resolution was initially about 2–4 degrees in the horizontal and the ocean model resolution was 1–2 degrees, often with substantially finer meridional resolution near the equator. Initial conditions were derived from an ocean data assimilation system.

Early attempts to use CGCMs for ENSO prediction fared poorly when compared to the ICM/HCM approaches or statistical techniques. CGCM predictions for ENSO suffered from “climate drift,” where the model prediction evolved from the “realistic” initial condition to its own equilibrium climate state. This led to a rapid loss in quality for ENSO predictions. Statistical corrections applied a posteriori (Model Output Statistics, see “Correlation and Regression” section in this chapter) had only limited efficacy in arresting this loss of quality. Anomaly coupling strategies, where the atmospheric and oceanic models exchange only anomalous fluxes, were also used (Kirtman et al., 1997), but did not address the underlying deficiencies of the component models.

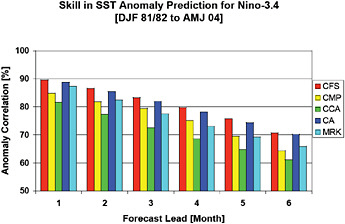

Over the last decade, the ENSO forecast quality associated with CGCMs has improved significantly. Reductions in the model bias and improved ocean initial conditions have now enabled CGCMs to be competitive with statistical models. An important development has been the use of multi-model ensembles (MME), where predictions from a number of different CGCMs are combined to produce the final forecasts (Krishnamurti et al., 2000; Rajagopalan et al., 2002; Robertson et al., 2004; Hagedorn et al., 2005). The Development of a European Multi-model Ensemble System for Seasonal to Interannual Prediction (DEMETER) project included seasonal

predictions from seven different CGCMs, with atmospheric horizontal resolutions ranging from T42–T63 and oceanic horizontal resolution in the 1–2 degree range (Palmer et al., 2004). The MME forecast quality of the DEMETER ensemble (and other ensembles) beats the quality of any single CGCM that is part of the ensemble (Palmer et al., 2004; Jin et al., 2008). The MME anomaly correlation skill of Nino3.4 at 6 month lead time is 0.86 in the ensemble considered by Jin et al. (2008), with individual models showing lower correlations (some as low as 0.6).

In terms of intraseasonal and MJO prediction, evaluating and incorporating the role of ocean coupling has evolved somewhat independently. Given the shorter time scale relative to ENSO, the interaction with SST has been found to be mostly limited to the ocean mixed-layer (e.g, Lau and Sui, 1997; Zhang, 1996; Hendon and Glick, 1997). A number of model studies have indicated improvement in MJO simulation and prediction by incorporating SST coupling of various levels of sophistication (e.g., Waliser et al., 1999b; Fu et al., 2003; Zheng et al., 2004; Woolnough et al., 2007; Pegion and Kirtman, 2008).

Current Dynamical ISI Forecast Systems

Currently, CGCMs serve as the primary tool for dynamical ISI prediction. Improvements in atmospheric model resolution mean that it is no longer necessary to use a two-tiered approach for ISI prediction. In operational forecasting centers, CGCMs are used in conjunction with sophisticated data assimilation systems and statistical post-processing to produce the final forecasts. Typically, the atmospheric component of a CGCM is a coarse-resolution version of the AGCM used for short-term weather forecasts. A CGCM also includes a land component (as part of the AGCM), an ocean component, and optionally a sea ice component (Figure 3.8). CGCMs also include a comprehensive suite of physical parameterizations to represent processes such as convection, clouds, and turbulent mixing that are not resolved by the component models. In this section, we provide a brief overview of the state-of-the-art in model resolution for CGCMs used for ISI prediction at two of the major operational forecasting centers, NCEP and ECMWF.

The atmospheric component of the NCEP Climate Forecast System (CFS) (Saha et al., 2006), which became operational in August 2004, currently has a horizontal resolution of 200 km (T62) with 64 levels in the vertical. It is scheduled to have a six-fold increase in horizontal resolution in 2010. The oceanic component of the CFS is derived from the GFDL Modular Ocean Model version 3 (MOM3), which is a finite difference version of the ocean primitive equations with Boussinesq and hydrostatic approximations. The ocean domain is quasi-global extending from 74ºS to 64ºN, with a longitudinal resolution of 1º and a latitudinal resolution that varies smoothly from 1/3º near the equator to 1º poleward of 30º. The model has 50 vertical levels, with spacing between levels (resolution) ranging from 10 m near the surface to over 500 m in the bottom level. The atmospheric and oceanic components exchange fluxes of momentum, heat, and freshwater daily, with no flux correction. Soil hydrology is parameterized using a simple two-layer model. Sea ice extent is prescribed from observed climatology.

At ECMWF, the current generation of the Seasonal Forecasting System (v3) has an atmospheric model with a horizontal resolution of 120 km (T159), with 62 levels in the vertical (http://www.ecmwf.int/products/changes/system3/). In contrast, the current operational deterministic weather prediction AGCM used by ECMWF has a resolution of 16 km and 91 vertical levels. The ocean model has a longitudinal resolution of 1.4º and a latitudinal resolution that varies smoothly from 0.3º near the equator to 1.4º poleward of 30º. There are 29 levels in

FIGURE 3.8 Schematic of a coupled general circulation model illustrating the horizontal/vertical grid, the different components (atmosphere, land, ocean), important physical processes, and air-sea flux exchange. SOURCE: NOAA.

the vertical. A tiled land surface scheme (HTESSEL) is used to parameterize surface fluxes over land. Sea ice is handled though a combination of persistence and relaxation to climatology.

Systematic errors are found in the mean state, the annual cycle, and ISI variance of climate simulations in the current generation of CGCMs (Gleckler et al., 2008). Model errors in the tropical Pacific, such as a cold SST bias or a ‘double’ Inter-tropical Convergence Zone, are particularly troublesome because they impact phenomena such as ENSO and the MJO that are important for ISI prediction. Indeed, the models often exhibit significant errors in the simulation of spatial structure, frequency, and amplitude of ENSO and the MJO. These errors lead to the degradation of ISI prediction quality in CGCMs. Although some of the systematic errors can be attributed to poor horizontal resolution of the CGCMs, other errors are attributable to deficiencies in the subgrid-scale parameterizations of unresolved atmospheric processes such as moist convection, boundary layers and clouds, as well as poorly resolved oceanic processes such as upwelling in the coastal regions. Improvements in both model resolution and subgrid-scale parameterizations are needed to address these problems.

Multi-Model Ensembles

As mentioned above, one source of error in dynamical seasonal prediction comes from the uncertainties arising from the physical parameterization schemes. Such uncertainties and

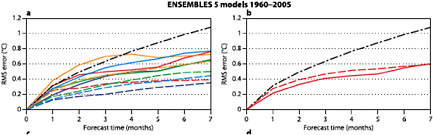

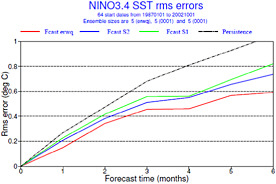

errors may be, to some extent, uncorrelated among models. A multi-model ensemble (MME) strategy may be the best current approach for adequately resolving this aspect of forecast uncertainty (Palmer et al., 2004; Hagedorn et al., 2005; Doblas-Reyes et al., 2005; Wang et al., 2008; Kirtman and Min, 2009; Jin et al., 2009)12. Figure 3.9 demonstrates how a multi-model ensemble can outperform the individual models that are used to form the ensemble. The MME strategy is a practical and relatively simple approach for quantifying forecast uncertainty. In fact, as argued in Palmer et al. (2004), Kirtman and Min (2009) and a number of studies using the DEMETER seasonal prediction archive and the APCC/CliPAS seasonal prediction archive, the multi-model approach appears to outperform any individual model using a standard single model approach (e.g., Jin et al., 2009; Wang et al., 2009). Although the “standard” MME approach applying equal weights to each model is relatively straightforward to implement, it has some shortcomings. For example, the choice of which models to include in the MME strategy is in practice ad-hoc and is limited by the “available” models. It is unknown whether the available models are in any sense optimal. Indeed, it is an open question as to whether more sophisticated single model methods such as perturbed parameters or stochastic physics will out perform MME strategies.

Developing alternative methodologies for combining the models can be challenging since the hindcast records for CGCMs used to assign weightings to the models are limited. Using predictions or simulations from AGCMs allows for longer records. One example is the super ensemble technique proposed by Krishnamurti et al. (1999), where the individual model weights depend upon the statistical fit between the model’s hindcasts with observations during a training period. If a model has consistently poor predictions for a variable at a specific location during the training period, the weight could be zero or negative. Another approach is the Bayesian combination approach developed by Rajagopalan et al. (2002) and refined by Robertson et al. (2004), in which the prior probabilities are equal to the climatological odds, and models are optimally weighted based on probabilistic likelihood based on past performance. An outstanding question for MME research involves explaining why some MME statistics, such as the ensemble mean, consistently outperform the individual models. Similarly, it would be valuable to improve our understanding of what the ensemble mean and ensemble spread represent and how differences among these statistics can be best evaluated following MME experiments.

DATA ASSIMILATION

For the purposes of climate system prediction, data assimilation (DA) is the process of creating initial conditions for dynamical models. Since ISI predictions are based on coupled ocean-land-atmosphere models, it seems apparent that data assimilation eventually needs to be carried out in a coupled mode. At the present, however, data assimilation is being done separately for different model components, with exceptions such as the partial coupling carried out in the recent NCEP reanalysis (Saha et al., 2010). In the following sections, the current approaches (non-coupled) for carrying out assimilation on atmospheric, ocean, and land observations are discussed.

FIGURE 3.9 Comparison of RMSE of individual models to the multi-model mean for for Nino3 SST (solid) and ensemble standard deviation (spread) around the ensemble mean (dashed). The curves on the left represent individual models; the red curve on the right represents the multimodel mean The multi-model mean has a lower RMSE at nearly all forecast lead times, and a larger spread among its members, indicating that it outperforms any of the individual models. The black dash-dotted curve indicates the performance of a persistence forecast. SOURCE: Weisheimer et al. (2009).

Atmospheric Data Assimilation

Early efforts at data assimilation for short-term weather prediction used a priori assumptions about the statistical relationship between observed quantities and values at model gridpoints. The most sophisticated of these early methods was referred to as optimal interpolation (OI). Modern data assimilation for short-term numerical weather prediction objectively combines observations, model predictions started at earlier times, and a priori statistical information about the observations and the model to create initial conditions for updated model predictions.

The central theme of the evolution of atmospheric DA has been to use more information from the prediction model as both the models and the DA algorithms themselves have improved (Kalnay, 2003). In the earliest systems, the only information used from the model was the relative locations of model gridpoints (Daley, 1993). Later, short-term model predictions were used as a “first-guess” field that was then adjusted to be consistent with available observations (Lorenc, 1986).

A major advance was the development of variational data assimilation methods in which a cost function measuring the fidelity of the model’s estimation of the observed values is minimized using tools from variational calculus (LeDimet and Talagrand, 1986). Three-dimensional variational (3D-Var) techniques were implemented first (Parrish and Derber, 1992), with the most recent state from a model prediction being modified to better fit the observations. Variational techniques require a priori specification of a background error covariance, an estimate of the statistical relationship between different model state variables (Courtier et al., 1998). Although in principle OI and 3D-Var are nearly equivalent, (Lorenc, 1986), the ability of 3D-Var to find a global solution using all observations simultaneously resulted in less noisy and more balanced initial conditions for the predictions. More recently, four-dimensional variational (4D-Var) techniques have become the state of the art for operational numerical weather prediction. These techniques adjust the initial state of the model at an earlier time so that the

FIGURE 3.11 Number of satellite observations assimilated into the ECMWF ERA-Interim reanalysis (data assimilation system), with arrows indicating the introduction of new systems. SOURCE: ECMWF.

model evolves to fit a time sequence of available observations (Rabier et al., 1999). Predictions are then made by extending this model trajectory into the future.

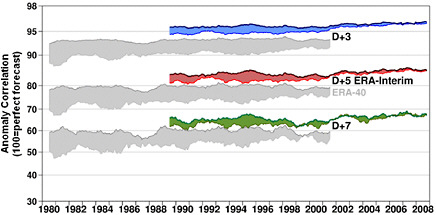

Assimilation of remotely sensed atmospheric observations has played a large role in the increase in prediction quality over the last two decades (see “Atmospheric Observations” section, Figures 3.10, and 3.11). Figure 3.10 shows the types of remotely sensed satellite observations since 1989, and Figure 3.11 shows the number of satellite observations assimilated in the ECMWF Interim Reanalysis (ERA-Interim): about 1.5 million/day in 1989, jumping to 10 million/day in 2002 with the introduction of AIRS high resolution infrared sounder, and with another large increase from the high resolution infrared interferometer IASI (METOP). Figure 3.12 compares two recent reanalyses performed at ECMWF, the ERA 40, carried out with 3D-Var, and ERA-Interim, an experimental 4D-Var reanalysis. The obvious difference in performance between the two systems (which use the same observations but differ in their data assimilation systems) during the overlapping years quantifies the importance of the methods used for data assimilation, quality control, and advances in the model. It is remarkable that in the “reforecasts” from the ERA-Interim, it is possible to detect the improvement due to the introduction of AIRS in 2003, with a perceptible increase in anomaly correlation values in the five- and seven-day predictions.

The most recently developed methods for atmospheric data assimilation are ensemble Kalman filter (EnKF) techniques that use a set of short-term model predictions to sample the probability distribution of the atmospheric state. The ensemble provides information about both the mean state of the model and the covariance between different model variables. The ensemble members are adjusted using observations to produce initial conditions for a set of predictions. EnKF techniques are now in operational use for ensemble weather prediction (Houtekamer and Mitchell, 2005). Understanding the relative capabilities and advantages of 4D-Var and ensemble methods is an area of active research (e.g., Kalnay et al., 2007; Buehner et al., 2009a and b). There is a developing consensus that a “hybrid” approach combining a variational system (3D-Var or 4D-Var) with EnKF may be optimal.

In concert with using increasing amounts of information from the numerical model, increasingly sophisticated DA techniques facilitated the use of a more diverse set of observations. The earliest techniques were limited to assimilating observations of quantities that were one of the model state variables. Variational methods facilitated the assimilation of any observation that could be functionally related to the model state variables. However, a priori estimates of the relationship between errors in estimates of model state variables and the observed quantities were required. Ensemble methods automatically provide estimates of these relationships making it mechanistically trivial to assimilate arbitrary observation types. The types and numbers of observations assimilated for NWP has soared as DA techniques have improved in concert with the development of remote sensing systems that produce ever increasing numbers of observations.