3

Established Weather Research and Transitional Needs

There are multiple research and research-to-operations (R2O) issues that have been recognized for some time as important and achievable but that have yet to be completed or implemented in practice. The committee refers to these as established needs for weather research and the transition of research results into operations, in contrast to the emerging needs discussed in Chapter 4. Four established priority needs are identified. All are in various stages of development, but none has yet been resolved despite having been identified as pressing in numerous previous studies (see Table 1.1). They are global nonhydrostatic coupled modeling, quantitative precipitation forecasting, hydrologic prediction, and mesoscale observations. The reader may question why hurricane intensity forecasting is not included here as an established need. The answer is that virtually all research and transitional needs have both established and emerging aspects; the hurricane intensity challenge is therefore addressed both in the following section on predictability and coupled modeling and in the following chapter on emerging needs.

UNDERSTANDING PREDICTABILITY AND GLOBAL NONHYDROSTATIC COUPLED MODELING

The United States continues to maintain world leadership in weather and climate research as indicated, for example, by the worldwide use of weather1 and climate2 research models developed in the United States and the leadership positions held by U.S. scientists in international programs. The nation has also made substantial investments in the development of global satellite, in situ, and remote sensing observing systems. In spite of

1 Weather Research and Forecasting (WRF) model; see http://www.wrf-model.org.

2 Community Climate System Model (CCSM); see http://www.ccsm.ucar.edu.

these accomplishments, the United States is not the world leader in global numerical weather prediction (NWP). Figure 3.1 indicates that the United States has made steady progress in global weather forecasting performance, but so have other countries.
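The skill metric plotted in Figure 3.1, the anomaly correlation of 500-hPa height forecasts, can be illustrated with a minimal sketch. The function name, the toy height values, and the choice of the uncentered form without latitude weighting are assumptions made for illustration; operational verification typically applies area weighting and a center-specific climatology, neither of which is reproduced here.

```python
import math


def anomaly_correlation(forecast, analysis, climatology):
    """Uncentered anomaly correlation between a forecast and its verifying analysis.

    All three arguments are equal-length sequences of values at the same grid
    points (for example, 500-hPa heights in meters).
    """
    # Anomalies are departures from climatology at each grid point.
    f_anom = [f - c for f, c in zip(forecast, climatology)]
    a_anom = [a - c for a, c in zip(analysis, climatology)]
    numerator = sum(f * a for f, a in zip(f_anom, a_anom))
    denominator = math.sqrt(sum(f * f for f in f_anom) * sum(a * a for a in a_anom))
    return numerator / denominator


# Hypothetical toy values, not real data.
climatology = [5500.0, 5550.0, 5600.0, 5650.0]
analysis = [5480.0, 5570.0, 5590.0, 5700.0]
forecast = [5490.0, 5560.0, 5610.0, 5680.0]
print(round(anomaly_correlation(forecast, analysis, climatology), 3))
```

A value of 1 means the forecast anomaly pattern matches the analysis exactly; values fall toward 0 as the forecast loses its resemblance to what was observed.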

Within the United States, however, the performance of the NOAA/NWS National Centers for Environmental Prediction (NCEP) Global Forecast System (GFS) is superior to the Navy Operational Global Atmospheric Prediction System (NOGAPS) operated by the Fleet Numerical Meteorology and Oceanography Center (FNMOC). Notably, the gap in model performance between NCEP and the European Centre for Medium-Range Weather Forecasts (ECMWF) has not narrowed in the past 15 years. The primary reason is the slow and sometimes ineffective transfer of achievements in the external research community to operational centers in the United States (R2O). Another reason is the lack of investment and progress in assimilating observations in advanced weather prediction models, which is also related to the slow R2O process in data assimilation. In addition, NCEP’s high-performance computing (HPC) capacity, despite recent upgrades, lags behind the capacities of many other major prediction centers around the world.3 The complexities associated with using a hydrostatic global model (GFS) and a variety of regional (nonhydrostatic and hydrostatic) models4 make it very challenging to maintain and improve these prediction models and associated data assimilation schemes, particularly at the underresourced NCEP. As a consequence, the United States is not fully realizing the potential benefits of its substantial investments in observing systems.

Progress and Remaining Needs

As the horizontal grid spacing of models continues to decrease, especially to less than 10 km, hydrostatic models are no longer appropriate, and it is essential that global nonhydrostatic NWP models (Box 3.1) be coupled with ocean and land models. In fact, Japan’s global Non–hydrostatic Icosahedral Atmospheric Model (NICAM), which runs on the Earth Simulator5 computer, has reached horizontal grid spacing of 3.5 km (Satoh et al., 2008), which results in a spatial resolution 10 times greater (and an areal

3 These and other findings have been discussed in the recently completed external review of NCEP, the “2009 Community Review of National Centers for Environmental Prediction,” that was managed by the University Corporation for Atmospheric Research (UCAR). The executive summary of the NCEP review is included as Appendix B of this report.

4 The NCEP website describes the models operated by NCEP; see http://www.emc.ncep.noaa.gov/.

|

5 |

![FIGURE 3.1](/openbook/12888/xhtml/images/p2001c0f6g51001.jpg)

FIGURE 3.1 The United States and other countries have made steady progress in global weather forecasting performance. Time series of seasonal mean anomaly correlations of 5-day forecasts of 500-hPa heights for different forecast models (Global Forecast System [GFS], ECMWF (EC in legend), UK Meteorological Office [UKMO], Fleet Numerical Meteorology and Oceanography Center [FNMOC], the frozen Coordinated Data Analysis System [CDAS], and Canadian Meteorological Centre [CMC] model) from 1985 to 2008. Seasons are 3-month non-overlapping averages, Mar–Apr–May, etc. for the Northern Hemisphere. The green shaded bars at the bottom are differences between ECMWF and GFS performance. SOURCE: NCEP. Available at http://www.emc.ncep.noaa.gov/gmb/STATS/html/seasons.html.

resolution 100 times greater) than the 35-km grid spacing of the hydrostatic GFS model at NCEP’s Environmental Modeling Center (EMC).6 ECMWF has also upgraded its operational forecasts to 16-km grid spacing since January 2010.
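The resolution comparison above follows from simple arithmetic, sketched here. The function and variable names are illustrative assumptions; the two grid spacings are the ones quoted in the text.

```python
def resolution_ratios(coarse_km, fine_km):
    """Return (linear, areal) resolution ratios between two grid spacings."""
    linear = coarse_km / fine_km   # ratio of grid spacings in one horizontal direction
    areal = linear ** 2            # ratio of grid-cell areas (two horizontal directions)
    return linear, areal


# 35-km GFS grid spacing versus 3.5-km NICAM grid spacing, as quoted in the text.
linear, areal = resolution_ratios(coarse_km=35.0, fine_km=3.5)
print(linear, areal)
```

The areal ratio is also a rough lower bound on the growth in the number of grid columns, which is one reason computing capacity becomes a limiting factor at these grid spacings.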

Now is an optimal time to invest in this area because of many achievements that have been made in the past decade, such as progress in global

BOX 3.1 Modeling Terminology

Convective parameterizations: When an atmospheric model’s grid spacing is too coarse relative to the scales of convective clouds, convective processes cannot be resolved by the model and hence are represented in terms of the grid-scale model variables. This is a necessary simplification that cannot be avoided unless the model grid spacing is small enough to explicitly resolve these convective clouds.

High-resolution nonhydrostatic atmospheric models: Nonhydrostatic atmospheric models are models in which the hydrostatic approximation (that the vertical pressure gradient and buoyancy force are in equilibrium) is not made, so that the vertical velocity equation (arising from applying Newton’s second law to atmospheric motion) is solved. This allows nonhydrostatic models to be used successfully for horizontal scales of the order of 100 m. “High resolution” has different meanings for global, regional, and local models and its meaning also changes with time (or with the increase of computing power over time). At present, “high resolution” usually refers to a few kilometers in horizontal grid spacing in global models and around 1 km for regional models.

Icosahedral grid: This is a geodesic grid formed by arcs of great circles on the spherical Earth. It consists of 20 equilateral triangular faces expanded onto a sphere and further subdivided into smaller triangles. It provides near-uniform coverage over the globe while allowing recursive refinement of grid spacing.

Incompatible lateral boundary conditions: Lateral boundary conditions refer to the conditions at the horizontal boundaries of regional atmospheric–ocean–land models that are necessary for running these models and are provided from the output of global models or reanalyses. Incompatible conditions can arise from differences in the regional and global models in the model physics (e.g., cloud microphysics), dynamics (e.g., atmospheric waves), or configuration (e.g., topography), and can have a significant and negative impact on regional modeling.

Predictability and predictive skill: Predictability refers to the extent to which future states of a system may be predicted based on knowledge of current and past states of the system. Because knowledge of the system’s past and current state is generally imperfect (as are the models that utilize this knowledge to produce predictions), predictability is inherently limited. Even with arbitrarily accurate models and observations, there may still be limits to the predictability of a physical system due to chaos. In contrast, predictive skill refers to the statistical evaluation of the accuracy of predictions based on various formulations (or skill scores). Predictability provides the upper limit in the time for skillful predictions.

Quantitative Precipitation Estimation (QPE): QPE refers to the estimation of precipitation amounts or rates based on remote sensing data from radar, satellites, or lightning detection systems, and also estimates from in situ gauges that may or may not provide spatially representative data.

Quantitative Precipitation Forecasting (QPF): QPF refers to forecasts of precipitation that are quantitative (e.g., millimeters of rain, centimeters of snow) rather than qualitative (e.g., light rain, flurries), indicating the type and amount of precipitation that will fall at a given location during a particular time period.

Testbeds: A testbed is a platform for rigorous testing of scientific theories, numerical models or model components, and new technologies. Testbeds in weather forecasting allow for the testing of new ideas in a live environment similar to that in weather forecasting, and hence accelerate the transition from research to operations.

nonhydrostatic research modeling, progress in data assimilation (including the assimilation of WSR-88D radar reflectivity and radial velocity data over the continental United States), and increased high-performance computing capacity.

A recent achievement is the establishment of various testbeds (e.g., the multiagency, distributed Developmental Testbed Center; and the virtual National Oceanic and Atmospheric Administration [NOAA] Hydrometeorological Testbed [HMT]), which will be helpful for the R2O transition as venues to test new observing and forecast capabilities. The National Aeronautics and Space Administration (NASA)/NOAA/Department of Defense (DOD) Joint Center for Satellite Data Assimilation (JCSDA) has also been established with the goal of accelerating the use of global satellite data in operational forecasting. However, extramural funding to support operationally oriented research at these testbeds is very limited (Mass, 2006).

In addition to the requirement for better weather forecasting models and more efficient and effective data assimilation methods, there is a pressing need for basic research to better understand the inherent predictability of weather phenomena at different temporal and spatial scales, which is also relevant to social scientists whose research and questions often have elements of scale (see Chapter 2). There is also a major emerging weather research question concerning how weather may change in a changing climate. These and similar issues have been raised in various previous studies (e.g., PDT–1 [Emanuel et al., 1995]; PDT–2 [Dabberdt and Schlatter, 1996]; PDT–7 [Emanuel et al., 1997]; NRC, 1998b), but little progress has been achieved. Although the relationship between changes in climate and weather is important both scientifically and practically, it is outside the scope of the present study and this report.

It is now widely recognized that physical processes at the atmosphere–ocean–land interface play a significant role in weather forecasting, such as the impact of atmosphere–wave–ocean coupling on hurricane forecasting (Chen et al., 2007) and land–atmosphere coupling on near-surface air temperature, humidity, turbulent fluxes, convection initiation, and precipitation. The role of biological (e.g., vegetation greenness and leaf area index) and chemical (e.g., trace gases, aerosols) processes in weather and air pollution forecasting has also received increased attention.

Unified Modeling Frameworks and Coupled Modeling

Many global weather forecasting models, such as those at NCEP and ECMWF, are hydrostatic because their grid spacings are generally greater than 10 km or so. In contrast, many regional weather research and forecast models (e.g., WRF) are nonhydrostatic. Of particular note are high-resolution nonhydrostatic models (those with grid spacing around 2 km), which would remove the dependence of the model on convective parameterizations, a major barrier for progress in weather forecasting. Global nonhydrostatic NWP models would provide a unified framework for global and regional modeling, and consequently help form a more seamless transition between weather and climate predictions; the importance of a unified framework was recently advocated by the World Climate Research Program in its new strategic plan (WCRP, 2009). The UK Meteorological Office7 has adopted this approach with positive results (Figure 3.1). Such a unified nonhydrostatic modeling system with different configurations (e.g., as a global model with a uniform horizontal resolution, as a global model with two-way interactive finer meshes at specific regions, or as a regional model) has also been developed in the United States (Walko and Avissar, 2008). The nonhydrostatic WRF model has been widely used (there are thousands of registered domestic and international users from public agencies, academia, and the private sector) as a regional model for research and weather forecasting (e.g., NCEP); WRF can also be configured as a global model. However, with a latitude-longitude grid in the global WRF, a polar filter is still required, and a better alternative grid may be the icosahedral grid (see Box 3.1). This development of a unified framework would facilitate and increase the interaction among several communities that have traditionally been segregated: the weather and climate communities, and the regional and global modeling communities. A common model framework would also reduce costs through improved efficiencies and enhanced collaborations in the development of various model physical parameterizations.

High-resolution global nonhydrostatic models have the potential to improve regional modeling because many regional weather prediction and data assimilation problems are essentially global problems; better global models can also reduce the effects of incompatible lateral boundary conditions for the regional models through the use of consistent model physics and two-way nesting. The ability to run both global and regional models in two-way nested mode will also create many new research opportunities (e.g., to study changes in weather in a changing climate and the potential upscaling effects on global circulations).

A number of key capabilities remain to be developed for coupled nonhydrostatic models; they include sufficiently high spatial and temporal resolutions that enable convection and high-impact weather to be explicitly resolved (avoiding the limitations of cumulus parameterizations) in global models; assimilation of convective-scale observations using advanced methods that eliminate precipitation spin-up and improve initial conditions in general; improvements in cloud microphysics, physics of the planetary boundary layer (PBL), and interface physics related to the atmospheric coupling to ocean and land processes; and design and development of convective-scale ensemble prediction and post-processing systems for improved and readily interpretable probabilistic forecasts.

Various numerical methods (spectral, finite-difference, and finite-volume) have been used in global hydrostatic weather forecasting models. For global high-resolution nonhydrostatic models, further evaluation of these methods and development of new methods are still needed. New grid cell structures (rather than the traditional latitude-longitude grids) are needed, especially for the treatment at the poles (e.g., Walko and Avissar, 2008). A promising combination might be the finite-volume method with icosahedral grid cells (e.g., Heikes and Randall, 1995). In particular, a new global weather forecasting model with icosahedral horizontal grid, isentropic-sigma hybrid vertical coordinate, and finite-volume horizontal transport (called “FIM”) has been developed at the NOAA Earth System Research Laboratory (ESRL).8 The hydrostatic version of FIM is available, but the nonhydrostatic version remains to be developed.

Observations are still inadequate to optimally run and evaluate most high-resolution models and determine forecast skills at various scales. (A detailed discussion of the opportunities and needs for mesoscale observations is provided in the last section in this chapter.) Perhaps even more challenging is the development of suitable and effective verification and evaluation metrics and methods for determining probabilistic forecast skills at different scales.

High-resolution and ensemble forecasts require high performance computing (HPC) capability for model predictions but also for data assimilation, post-processing, and visualization of the unprecedented large volumes of data. HPC facilities are currently available at some Department of Energy (DOE) and NASA centers, as well as at NSF-sponsored centers, which are usually used for research and climate simulations. It would be beneficial to have an HPC center that is dedicated to the support of weather forecasting and research in the academic and related research community to facilitate R2O activities. HPC facilities are also suboptimal within NOAA for operational weather forecasting. A substantial increase in computing capacity

8 See http://fim.noaa.gov.

dedicated to the operational and research communities for the high-resolution weather modeling enterprise is required (see Appendix B). The recent NOAA partnership with the DOE Oak Ridge National Laboratory on HPC support (with a focus on climate research and prediction) will help alleviate the HPC demand at NCEP. Also crucial is development of improved software to increase the computational efficiency and scalability of the forecast models as well as post-processing and visualization, particularly at petascale (10^15) HPC (e.g., NRC, 2008c).

Data Assimilation and Observations

Data assimilation as part of the forecast system is also important for acquiring and maintaining observing systems that provide the optimal cost-benefit ratio to different user groups and their applications. Data denial experiments can selectively withhold data from one (or more) system(s) and assess the degradation in forecast skill. Data assimilation can be used to determine the optimal mix of current and future in situ and remotely sensed measurements, and also for adaptive or targeted observations (e.g., Langland, 2005). It is also beneficial to understand the impacts of observing systems on model performance and the resulting forecast accuracy (e.g., Gelaro and Zhu, 2009; Rabier et al., 2008). With aging satellites in space and insufficient satellites in the NASA and NOAA pipelines to replace or enhance them, observing system simulation experiments can also help support detailed cost-benefit analyses.
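The logic of a data denial experiment can be sketched with a toy scalar example. This is a minimal illustration under strong assumptions (a single state variable, unbiased Gaussian errors, independent observing systems, and analysis error variance used as a stand-in for forecast skill); the system names and error variances are hypothetical and are not taken from the studies cited above.

```python
def analysis_variance(background_var, obs_vars):
    """Error variance of the optimal analysis of one scalar state variable.

    For independent, unbiased error sources the precisions (inverse
    variances) of the background and of each observation add.
    """
    precision = 1.0 / background_var + sum(1.0 / r for r in obs_vars)
    return 1.0 / precision


def data_denial_impacts(background_var, obs_systems):
    """Increase in analysis error variance when each system is withheld in turn."""
    full = analysis_variance(background_var, obs_systems.values())
    impacts = {}
    for name in obs_systems:
        kept = [r for other, r in obs_systems.items() if other != name]
        impacts[name] = analysis_variance(background_var, kept) - full
    return impacts


# Hypothetical observation error variances (arbitrary units).
systems = {"radiosonde": 1.0, "satellite": 2.0}
for name, degradation in data_denial_impacts(4.0, systems).items():
    print(f"withholding {name}: analysis variance grows by {degradation:.3f}")
```

In this toy setting the more accurate system produces the larger degradation when withheld. Real experiments rerun the full assimilation and forecast cycle, so the impact also depends on coverage, error correlations, and forecast lead time.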

Besides the satellite and radiosonde data that are widely used in global operational NWP, the assimilation of data from radar and other sources is also crucial, particularly for regional forecasting. Preliminary work done at the University of Oklahoma (Xue et al., 2009) indicates that a high-resolution regional model initialized using global model output has relatively large initial errors in precipitation forecasting but these errors do not further increase with time in the first few hours. On the other hand, their results also indicate that regional precipitation forecasting with radar data assimilation has smaller initial errors but they increase rapidly with time in the first few hours, as expected from our understanding of atmospheric predictability.

For data assimilation in high-resolution, cloud-resolving, and coupled air–sea–land models, it is particularly important to address the inconsistency in model physics and observations. For instance, the cloud droplet size distribution assumed in models may not be the same as that assumed in satellite-retrieved cloud properties. Although significant progress has been made in data assimilation using the individual model component of the atmosphere, ocean, or land, progress is lacking with the fully coupled system. There is also a lack of coherent observations across the atmosphere–ocean–land interface for data assimilation in the fully coupled models.

It is important to critically compare different advanced data assimilation methods (e.g., the ensemble Kalman filter [EnKF] and 4-dimensional variational [4DVar] analysis) with those methods currently used by some operational models, such as 3-dimensional variational (3DVar) approaches. For instance, the Navy’s FNMOC recently initiated operational use of the Naval Research Laboratory’s 4DVar data assimilation system to replace the 3DVar in NOGAPS. Preliminary impact tests (Xu and Baker, 2009) indicate that equivalent 5-day forecast skill with the 4DVar system is extended about 9 hours in the Southern Hemisphere and 4 hours in the Northern Hemisphere, and tropical cyclone 5-day track forecast errors are reduced. Similarly, the experiments at the NOAA ESRL (MacDonald, 2009) indicate that the EnKF improves global weather forecasts compared with the 3DVar system. A community consensus is emerging that the future of data assimilation may belong to a hybrid EnKF-4DVar system (e.g., Zhang et al., 2009); the pace in testing and implementing such a hybrid system needs to be accelerated at NCEP.
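The core idea of the EnKF, using the spread of an ensemble to weight a new observation against the model background, can be sketched for a single scalar variable. This is a minimal illustration of one stochastic (perturbed-observation) analysis step, not the system used at any operational center; the function name and all numerical values are hypothetical.

```python
import random
import statistics


def enkf_update(ensemble, obs, obs_var, rng):
    """One stochastic EnKF analysis step for a directly observed scalar state."""
    # Background error variance is estimated from the ensemble spread.
    prior_var = statistics.variance(ensemble)
    # Kalman gain: the weight given to the observation relative to the background.
    gain = prior_var / (prior_var + obs_var)
    # Each member assimilates its own perturbed copy of the observation so that
    # the analysis ensemble keeps a statistically consistent spread.
    return [
        member + gain * (obs + rng.gauss(0.0, obs_var ** 0.5) - member)
        for member in ensemble
    ]


rng = random.Random(0)
prior = [rng.gauss(10.0, 2.0) for _ in range(2000)]   # hypothetical background ensemble
posterior = enkf_update(prior, obs=12.0, obs_var=1.0, rng=rng)
print(statistics.mean(prior), statistics.mean(posterior))
print(statistics.variance(prior), statistics.variance(posterior))
```

The analysis mean moves toward the observation and the ensemble variance shrinks. In 3DVar the background error statistics are prescribed rather than estimated from a flow-dependent ensemble, which is one motivation for the hybrid approaches mentioned above.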

Model advances—such as improved grid resolution, model physics, and data assimilation—also improve the performance of short-range, 0- to 12-hour forecasts. These forecasts are of high societal relevance for many applications, such as forecasting severe weather (warning the public and protecting lives and property), wind speed/direction changes (improving the use of wind-generated power), solar radiation (for solar power generation), visibility (for surface and aviation transport), and air pollution (for public health). Such forecasts also demand and stimulate the development of new observing technologies and measurements, such as the high-performance, low-cost, polarimetric X-band radar networks being developed within the Collaborative Adaptive Sensing of the Atmosphere (CASA) program (McLaughlin et al., 2007, 2009).

Observational data with high temporal and spatial resolution are crucial to the understanding of atmospheric processes, providing data for assimilation in models, and evaluating and improving those models. This requires the synergistic combination of data from diverse sources. Rawinsonde, radar, satellite, and aircraft data as well as data from other sources all play complementary roles in weather research and forecasting.

Rawinsonde coverage needs to be maintained and enhanced because of its value for weather forecasting and evaluation of satellite data. Geostationary satellites provide excellent temporal coverage, but new technologies are still required to add passive microwave sensors that can penetrate through clouds. Polar orbiting satellites provide global coverage with better spatial resolution, but active microwave, infrared, radio frequency, and optical sensors are still needed to provide data on the three-dimensional structure of the atmosphere. Soundings of refractive index (as a function of atmospheric temperature and the partial pressures of dry air and water vapor) from global positioning system (GPS) satellites have also proved very useful for weather forecasting. NEXRAD (WSR-88D) radars provide excellent precipitation detection above the PBL over relatively flat surfaces, but their spatial coverage, especially over the western United States, is far from complete, and some radars are not optimally sited for weather research and forecasting. An increase in the number of radar sites and technological upgrades (e.g., the planned dual-polarization capability) are very much needed. Data from the large number of surface networks need to be used effectively in data assimilation. Similarly, methods to make good use of the large numbers of automated meteorological reports from commercial aircraft need to be developed (Moninger et al., 2003). A more detailed discussion of mesoscale observations is provided in the last section of this chapter.

Finally, a national capacity needs to be developed for optimizing the transitioning of environmental observations from research to operations. Such a capacity is still lacking at present (NRC, 2009b). A rational five-step procedure for configuring an optimal observing system for weather prediction was proposed a decade ago by a prospectus development team, PDT–7 (Emanuel et al., 1997) and remains relevant today. Briefly summarized, the procedure involves identifying specific forecast problems; using contemporary modeling techniques; estimating the incremental forecast improvements; estimating the overall cost (to the nation, rather than to specific federal agencies); and using standard cost-benefit analyses to determine the optimal deployment.

Recommendation: Global nonhydrostatic, coupled atmosphere–ocean–land models should be developed to meet the increasing demands for improved weather forecasts with extended timescales from hours to weeks.

These modeling systems should have the capability for different configurations: as a global model with a uniform horizontal resolution; as a global model with two-way interactive finer grids over specific regions; and as a regional model with one-way coupling to various global models. Also required are improved atmospheric, oceanic, and land observations, as well as significantly increased computational resources to support the development and implementation of advanced data assimilation systems such as 4DVar, EnKF, and hybrid 4DVar–EnKF approaches.

Predictability

Intrinsic predictability of the atmosphere–ocean–land system is a fundamental research issue. Even though predictability has been studied for the past half century and was a major theme in the Stormscale Operational and Research Meteorology (STORM) documents in the 1980s (e.g., NCAR, 1984), not much is known today about the inherent limits to predictability of various weather phenomena at different spatial and temporal scales. Because of the error growth across all scales, from cumulus convection to mesoscale weather and large-scale circulations, a high-resolution (preferably cloud-resolvable) nonhydrostatic global model is crucial to address such error growth and better understand the predictability of weather systems. Although predictability is obviously important to operational forecasting, increased emphasis on basic science (such as the limits of predictability) would be beneficial to the greater weather community.

Another fundamental question of predictability is error growth across various scales. This issue is particularly important as higher-resolution nonhydrostatic global models are developed. For instance, how upscale error growth from convective scales affects the larger-scale circulation is poorly understood in both regional and global models. Some recent modeling experiments indicate that increasing model resolution may not improve forecasting skill for the first few days but may improve forecasts for days 3 through 5 (MacDonald, 2009).
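Error growth from a very small initial difference can be illustrated with the classic three-variable Lorenz system, a standard toy model of chaos that is used here purely as an assumption for illustration and is far simpler than any weather model discussed in this chapter. The sketch runs a "twin experiment": a control run and a run whose initial state differs by one part in 10^8.

```python
def lorenz_rhs(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Time derivatives of the Lorenz system with its standard parameters."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """Advance the state by one fourth-order Runge-Kutta step."""
    def shifted(base, slope, factor):
        return tuple(b + factor * s for b, s in zip(base, slope))

    k1 = lorenz_rhs(state)
    k2 = lorenz_rhs(shifted(state, k1, dt / 2))
    k3 = lorenz_rhs(shifted(state, k2, dt / 2))
    k4 = lorenz_rhs(shifted(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def twin_experiment(perturbation=1e-8, steps=4000, dt=0.01):
    """Return the distance between control and perturbed runs after each step."""
    control = (1.0, 1.0, 1.0)
    perturbed = (1.0 + perturbation, 1.0, 1.0)
    separations = []
    for _ in range(steps):
        control = rk4_step(control, dt)
        perturbed = rk4_step(perturbed, dt)
        separations.append(
            sum((a - b) ** 2 for a, b in zip(control, perturbed)) ** 0.5
        )
    return separations


separations = twin_experiment()
print(separations[0], max(separations))
```

The separation starts near the size of the initial perturbation and eventually becomes as large as the variability of the system itself, after which the perturbed run carries no useful information about the control. Intrinsic predictability limits in the atmosphere arise from the same kind of growth, acting across many interacting scales.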

Actual predictive skill is likely to depend on the specific phenomenon (e.g., mesoscale convective systems [MCSs] versus tornadoes). It is difficult to assess predictive skill, because the lack of skill can result from problems arising from data and data assimilation deficiencies, errors in numerical representation, intrinsic predictability limitations, and forecast verification methodology. Retrospective forecasts have been found to be helpful in better understanding forecast errors and improving global forecast skills (Hamill et al., 2006). To address both intrinsic predictability and predictive skill, global nonhydrostatic modeling can be helpful. If such models are used operationally and if they become user-friendly and available to the research community, researchers will be able to assist in diagnosing the sources of errors by rerunning modeling cases with large (and small) forecast errors and carefully analyzing the results. In short, it is difficult to address and understand intrinsic predictability in the current operational prediction environment.

To address prediction skill, there is the need to understand the causes of poor forecasts (or forecast outliers), identify sources of error (e.g., in model physics, observations, or methods), and identify solutions that may improve prediction skill. The research community can contribute significantly to an understanding of these issues by using operational models (O2R) in their research and transferring research results back to the operational centers (R2O). Further discussion of the importance of O2R is provided in Box 3.2.

Societal Benefits

Improvements in weather forecasting brought about by better models will help increase U.S. economic efficiency and productivity; improve management of air, land, rail, and ship transportation systems including NextGen and the Federal Highway Administration’s IntelliDriveSM Initiative;9 improve renewable energy siting and production; and enhance other weather-sensitive applications where better forecasts lead to increased safety, cost avoidance, and improved performance. Participation of social scientists and the socioeconomic community is required to address the societal impacts and quantify the benefits, improve the focus of weather research, and realize the importance of weather forecasting in general. Their participation is also crucial to address the value of new and improved weather information products from high-resolution nonhydrostatic regional and global models. These issues are discussed in more detail in Chapter 2 (Socioeconomic Research and Capacity) and Chapter 4 (Emerging Weather Research and Transitional Needs).

QUANTITATIVE PRECIPITATION ESTIMATION AND FORECASTING

The Challenge

QPFs (Box 3.1) are much less skillful than forecasts of meteorological state variables (pressure, temperature, and humidity) and winds (Fritsch and Carbone, 2004). Precipitation, while often forced by large-scale dynamical conditions, is also heavily influenced by regional mesoscale circulations, microscale processes within cloud systems, and localized forcings near

BOX 3.2 Importance of Operations to Research (O2R)

Although R2O is usually emphasized, O2R is also important. Routine forecasts and verifications at operational centers provide outstanding questions in weather research. If the research community can present ideas to directly address these questions, operational centers will have a strong motivation to accelerate the transition of these ideas to operation, because these ideas might facilitate solving operational weather forecasting problems. Furthermore, few, if any, individual groups have the capability to routinely run ensembles of weather forecasting models as large as those at operational centers.

To make O2R efficient, the operational centers and the research community need to work more closely. One way to achieve this would be for the NWS and its NCEP/EMC to make the operational models more user-friendly and available to the research community (beyond what NCEP/EMC has already done to make ensemble model outputs available to researchers). In particular, the operational models need to be well documented so that graduate students can easily run these models and understand the model physics and dynamics. The research community, in turn, could use those ensemble outputs to better analyze, understand, and improve the poor forecast cases, address research questions related to weather forecasting, and also use the operational models in their research.

The TIGGE project (THORPEX Interactive Grand Global Ensemble; Bougeault et al., 2010) illustrates good progress in this area. TIGGE has made available for the first time the ensemble forecasts from multiple operational centers in a consistent format. As useful as these datasets are, even the TIGGE archive does not contain the full arrays of model fields. It is necessary to place more emphasis on providing the full, high-resolution data stream of observations and forecasts to the entire community in a timely fashion, as originally suggested by Mass (2006).

Currently, NCEP cannot distribute all of the data it produces from its modeling system, significantly filtering the output in both three-dimensional space and time before distribution. This limits the ability of users to capitalize on the full value of these forecasts. This limitation will only grow worse as the resolution and duration of forecasts increase and as ensemble systems proliferate. As a way to alleviate this issue, modeling centers could consider becoming “open” centers, inviting value-adding applications access to the full and direct model output within the centers. Such an open approach would likely also catalyze more intellectual exchanges between members of the weather enterprises.

the planetary surface. A casual glance at typical precipitation patterns associated with individual events reveals substantial mesoscale variability in all seasons. This variability challenges both the forecast process and the adequacy of precipitation observations, which enable hydrologic prediction and precipitation forecast verification.

QPE Status and Progress

Operational QPE (Box 3.1) over U.S. territory is currently achieved through contributions from traditional gauge observations and the national network of Doppler radars (mainly WSR-88D). Space-based infrared and microwave data are employed over oceanic regions.

Gauge measurements are relatively accurate but lack representativeness in patchy convective precipitation, thereby leading to analysis errors, even for relatively dense mesoscale surface networks. Radar estimates are inclusive of nearly all rainfall where there is coverage; however, these are prone to well-known biases and uncertainty in application of the reflectivity–rainfall rate transformation function (e.g., Wilson and Brandes, 1979). The operational implementation of polarimetric WSR-88D capabilities promises a marked improvement in QPE over U.S. land areas and adjacent coastal waters. This will include precipitation phase, characteristic hydrometeor size range, and hydrometeor type discrimination, in addition to reduced uncertainty in cumulative amounts. These improvements will greatly benefit those hydrologic predictions that are based solely on observations. Remaining uncertainties are associated with network density, which could be addressed through a combination of mesoscale surface networks that might reasonably include gauges and inexpensive, gap-filling high-frequency radars (as exemplified by the NSF CASA project; McLaughlin et al., 2007, 2009).
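The sensitivity of radar rainfall estimates to the reflectivity–rainfall rate transformation can be illustrated with a minimal sketch. It assumes the conventional power-law form Z = aR^b (Z in mm^6 m^-3, R in mm per hour), which this chapter does not spell out. The two coefficient pairs are commonly cited values (Marshall–Palmer, and a convective relationship widely associated with the WSR-88D); they are included as assumptions for illustration only, and the function and constant names are hypothetical.

```python
import math

# Assumed power-law coefficients (a, b) for Z = a * R**b.
# These are commonly cited values, used here only for illustration.
MARSHALL_PALMER = (200.0, 1.6)
CONVECTIVE_DEFAULT = (300.0, 1.4)


def rain_rate_mm_per_hr(dbz, a, b):
    """Invert Z = a * R**b to get rain rate R (mm/h) from reflectivity in dBZ."""
    z_linear = 10.0 ** (dbz / 10.0)  # convert dBZ to linear units (mm^6 m^-3)
    return (z_linear / a) ** (1.0 / b)


if __name__ == "__main__":
    # The same measured reflectivity maps to different rain rates
    # depending on which relationship is applied.
    for dbz in (30.0, 40.0, 50.0):
        r_mp = rain_rate_mm_per_hr(dbz, *MARSHALL_PALMER)
        r_cv = rain_rate_mm_per_hr(dbz, *CONVECTIVE_DEFAULT)
        print(f"{dbz:.0f} dBZ: {r_mp:.1f} mm/h vs {r_cv:.1f} mm/h")
```

Because the appropriate coefficients vary with precipitation type and drop-size distribution, a fixed choice introduces the kind of bias and uncertainty described above.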

Merged infrared and microwave satellite products can also be helpful in mesoscale rainfall estimation (e.g., Joyce et al., 2004) where rain gauge and radar information is unavailable, though temporal resolution is a limiting factor in the utility of such products for short-range forecast applications. Convective rainfall can also be estimated from measurements of cloud-to-ground lightning activity, albeit with substantial uncertainty, as recently described by Pessi and Businger (2009) for the North Pacific Ocean.

QPF Progress and Seasonal Verification Performance

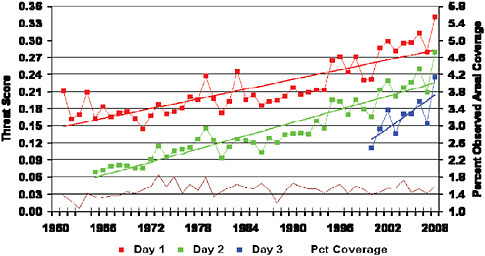

The skill in quantitative precipitation forecasts, while lagging progress in forecasting other variables, has increased steadily over the past 30 years as measured by equitable threat scores10 (e.g., Figure 3.2). Forecasts are more skillful at shorter range and lesser cumulative precipitation amounts, which

|

10 |

The Equitable Threat Score is a statistical skill score commonly used in the verification of quantitative precipitation forecasts. The score increases and forecasts are rewarded when both forecast and observed amounts greater than a given threshold are collocated (see http://www.meted.ucar.edu/satmet/goeschan/glossary.htm). |

FIGURE 3.2 The skill in quantitative precipitation forecasts, as measured by equitable threat scores, has increased steadily over the past 30 years. This figure shows skill in the equitable threat score from 1961 to 2009 in precipitation predictions by the NOAA NWS NCEP Hydrometeorological Prediction Center for 1.00 inch of precipitation at 24 (red), 48 (green) and 72 (blue) hours. Percent areal coverage refers to the fraction of total forecast area to the area that actually received 1.00 inch or more of precipitation in the forecast period. SOURCE: NOAA NWS NCEP Hydrometeorological Prediction Center. Available at http://www.hpc.ncep.noaa.gov/html/hpcverif.shtml.

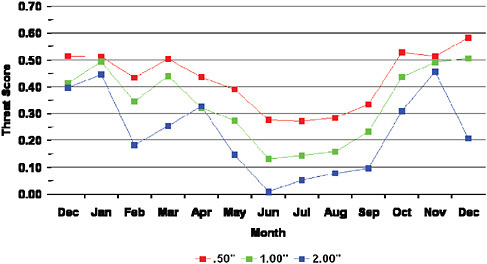

occur much more frequently than heavy or extreme events. Nevertheless, progress has been steady with rapidly increasing skill in Day 3 forecasts over the past 10 years as evidenced in Figure 3.2. The seasonal variation in predictive skill is marked by relatively high skill in winter and extremely low skill in summer (Figure 3.3). Although a number of factors come into play for this seasonal cycle, low skill in summer is related to weak forcing at larger scales of motion together with decreased stability and moist convection as the principal precipitation process.
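The equitable threat score used in Figures 3.2 and 3.3 can be made concrete with a short sketch. The report does not give the formula; the version below is the standard definition (also known as the Gilbert skill score), computed from a 2x2 contingency table at a single precipitation threshold. The function names and the sample values are hypothetical.

```python
def contingency_counts(forecast, observed, threshold):
    """Count hits, misses, false alarms, and correct negatives at one threshold."""
    hits = misses = false_alarms = correct_negatives = 0
    for f, o in zip(forecast, observed):
        f_yes, o_yes = f >= threshold, o >= threshold
        if f_yes and o_yes:
            hits += 1
        elif o_yes:
            misses += 1
        elif f_yes:
            false_alarms += 1
        else:
            correct_negatives += 1
    return hits, misses, false_alarms, correct_negatives


def equitable_threat_score(hits, misses, false_alarms, correct_negatives):
    """ETS = (hits - hits_random) / (hits + misses + false_alarms - hits_random)."""
    total = hits + misses + false_alarms + correct_negatives
    if total == 0:
        raise ValueError("contingency table is empty")
    # Number of hits expected by chance given forecast and observed frequencies.
    hits_random = (hits + misses) * (hits + false_alarms) / total
    denominator = hits + misses + false_alarms - hits_random
    if denominator == 0:
        return 0.0  # convention chosen for this sketch: no events and no forecasts
    return (hits - hits_random) / denominator


if __name__ == "__main__":
    # Hypothetical 24-hour amounts (inches) at four grid points.
    forecast = [0.2, 1.5, 1.1, 0.0]
    observed = [0.0, 1.2, 0.4, 1.3]
    counts = contingency_counts(forecast, observed, threshold=1.0)
    print(counts, equitable_threat_score(*counts))
```

A perfect forecast scores 1, and a forecast with no more hits than chance would produce scores 0. This is the scale on which summertime Day 1 values for 1 inch of precipitation fall between roughly 0.1 and 0.2.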

Progress in QPF for Cool-Season Orographic Precipitation

Considerable skill has been achieved in the dynamical prediction of cool season orographic precipitation accounting for much of the skill associated with the winter season (Figure 3.3). By traditional verification methods, such as the equitable threat score, the skill in prediction of cool season orographic precipitation greatly exceeds all other circumstances, including strongly forced precipitation from fronts and cyclones over relatively flat

FIGURE 3.3 There is seasonal variation in predictive skill, with relatively high skill in winter and low skill in summer. Monthly skill in the equitable threat score from December 2008 to December 2009 in precipitation predictions by the NOAA NWS NCEP Hydrometeorological Prediction Center for 0.5 (red), 1.0 (green), and 2.0 (blue) inches of precipitation at 24 hours. SOURCE: NOAA NWS NCEP Hydrometeorological Prediction Center. Available at http://www.hpc.ncep.noaa.gov/html/hpcverif.shtml.

land. For example, recent results (Ikeda et al., 2010) from research simulations of snowfall over the central and southern Rockies produce water content equivalents that are highly consistent with in situ SNOTEL observations on an event basis, and often within 2 to 5 percent of cumulative snowfall for seasonal integrations. This skill in orographic precipitation prediction is a consequence of accurate prediction of upstream synoptic scale winds, the prevalence of relatively stable flow regimes, and the mechanical lifting imposed by complex terrain. While the uncertainties and biases are non-negligible, the current status is one that is mainly in need of refinement in the representation of microphysical processes and improved treatment of gravity waves and turbulence.

Progress in QPF for Fronts and Cyclones

Forecast ability for precipitation associated with extratropical fronts and cyclones is increasingly skillful at synoptic scales as cyclone track predictions become more accurate and uncertainty in the intensity of developing storm systems is reduced. This broad category of precipitation events contributes to the skill associated with all non-summer seasons (Figure 3.3). Ensemble prediction techniques for cyclone track and positions of fronts are contributing to this increased skill, especially at medium and extended ranges. Within synoptic-scale regions of baroclinic instability, the amount and phase of precipitation often exhibits considerable variability at the mesoscale, which contributes to reduced forecast skill scores at moderate-to-high cumulative precipitation amounts (Figure 3.3). In such regions, intense snow and rain bands, embedded convection, or squall lines may prevail. This is especially problematic in winter storms where local differences in snowfall amounts and location of the rain/snow line have enormous societal impacts. The limitations to the skill in these predictions are often related to errors in initial and boundary conditions, and deficiencies in parameterizations, both microphysical and dynamical. The former are related to gaps in observations (as discussed under the Mesoscale Observational Needs section at the end of this chapter) together with shortcomings in the data assimilation schemes, both of which are viable candidates for improvement.

The predictions of precipitation from hurricanes and weaker tropical cyclones upon landfall suffer from similar deficiencies. A greater fraction of precipitation is of convective origin in rain bands, and there is a particularly strong dependence of the skill of precipitation forecasts on the precision of forecasts for the cyclone track and its speed of motion. Satellite and airborne adaptive observations at sea (e.g., flight-level wind and state parameter measurements, dropwindsonde soundings, and stepped-frequency microwave radiometer measurements of sea state) are playing an increasingly important role in improving dynamical forecasts of tropical cyclone precipitation following landfall.

Weakly Forced Warm Season: A Principal Focus for Research and R2O Activities

Although the aforementioned deficiencies associated with precipitation forecasts are both important and worthy of further research and forecast system refinements, the least skillful predictions occur under weakly forced conditions when local variability and local physical factors are more likely to exert influence on precipitation occurrence and amount. This is evident in Figure 3.3 where equitable threat scores typically hover between 0.1 and 0.2 for 1 inch of precipitation at the Day 1 range. Historically, much of this precipitation had been characterized as “air mass” convection, occurring pseudo-randomly near the time of maximum diurnal heating in a conditionally unstable atmosphere. Research has since revealed systematic

patterns and lower-boundary forcing that emerge in the absence of strong synoptic-scale forcing. An underlying theme is the capacity of convection to self-organize and to scale upward into larger mesoscale convective systems under a fairly wide range of summertime conditions. For example, the foci for triggering convection include shallow thermodynamic or kinematic boundaries. These can be remnants of antecedent convection; the result of natural land surface heterogeneity; or diurnal cycles of mesoscale transport and moisture convergence (e.g., Bonner, 1968; Tuttle and Davis, 2006; Wilson and Schreiber, 1986).

Somewhat larger scale diurnal forcing includes whole cordilleras, such as the Rockies and the Appalachians, and secondary mesoscale convective systems spawned by primary sea breeze convection. Such conditions are most common in mid-summer when convective precipitation is at its maximum and the skill of precipitation prediction is at a deep annual minimum, as exhibited in Figure 3.3 and discussed by Fritsch and Carbone (2004). A substantial fraction of these events is very highly organized in the form of MCSs and sequences thereof, which can remain active for 6 to 60 hours and travel 300 to 2,500 km (e.g., Carbone et al., 2002; Laing and Fritsch, 1997, 2000; Maddox, 1983). Such episodes of diurnally triggered rainfall propagate across North America in a wavelike manner on a daily basis; are most frequent between the Rockies and the Appalachians; and account for approximately 50 percent of summertime rainfall in that region (Carbone and Tuttle, 2008). Such systems are the product of a persistent seasonal circumstance—strong thermal forcing over elevated terrain combined with westerly shear in a conditionally unstable atmosphere. Related forcings include the Great Plains low-level jet and the (Rocky) Mountain–(Great) Plains solenoidal circulation. The heaviest precipitation often occurs in corridors (Tuttle and Davis, 2006), where a region of westerly shear (mainly poleward) intersects a region (mainly equatorward) of convective available potential energy associated with relatively shallow moisture convergence. Owing to the regularity of this diurnal cycle and the coherent regeneration of convective systems, predictability at ranges of 6 to 24 hours, although intrinsic to this convective regime, has not yet been fully captured by operational forecast models.

Improving the Skill of Precipitation Predictions

General circulation models that have been used for operational weather prediction have not represented convective precipitation systems explicitly. That is to say, convective clouds and storms are smaller than the computational grid scale and therefore can be represented only implicitly, by means of a parameterization (a switch of sorts) that redistributes heat, water substance, and momentum in the model as if convection had occurred in appropriate places and at the appropriate times.

Convective parameterizations have been developed for more than 30 years. Until recently, success had been limited, for the most part, to relatively simple cumulus clouds behaving in a manner similar to rising plumes of buoyant air. Such plumes deposit heat aloft and mix horizontal momentum downward, toward the surface. Whereas such representations of convective rainfall are useful in limited applications, organized deep convective systems are far more complicated and usually act in a dissimilar manner, thus sowing the seeds for faulty NWPs, both regionally in the short term and globally for long-range forecasts. Despite decades of effort, no traditional parameterization scheme has succeeded in systematically reproducing the organized convective rainfall events that are both common and of high impact in the United States and elsewhere globally (Fritsch and Carbone, 2004). A comprehensive review of convective parameterization, including “super-parameterization” techniques, is given by Randall et al. (2003) vis à vis long-term climate research and prediction requirements, for which parameterization will continue to be required in the foreseeable future.

Because of increased computational capacity, limited-area mesoscale models (e.g., Chen et al., 2007; Davis et al., 2003; Liu et al., 2006; Trier et al., 2008), when run at high resolutions with a grid spacing less than 5 km, routinely produce events similar to nature because the models can represent large convective systems without the aid of parameterizations, though specific predictions of individual storms may still have large spatial and temporal errors. At least one global numerical model, the NICAM developed and run on the Earth Simulator in Japan, has successfully demonstrated reproduction of realistic convective events, owing to a high-resolution global model with a grid spacing of 3.5 km (Satoh et al., 2008).

The limitations of cumulus parameterizations and the subsequent lack of predictive skill lead to the conclusion that short-range weather prediction applications need to represent deep moist convection explicitly in forecast models. This is mainly a matter of having sufficient computational capacity, which is commercially available today. Although models with explicit convection are necessary to produce a spectrum of events similar to the observed climatology, this step alone is insufficient for skillful predictions of convective rainfall. Predicting the probable location of such events is dependent upon improving initial and boundary conditions in the forecast models. With respect to the lower troposphere, atmospheric boundary

layer depth and high-resolution vertical profiles of wind and water vapor are necessary, together with surface analyses that are skillful in capturing mesoscale variability on a scale of approximately 10 km or less. In addition, it is necessary to characterize land surface conditions so as to properly model the corresponding fluxes of latent and sensible heat as well as the soil heat flux, the latter being highly dependent on the vertical profiles of soil moisture and soil temperature.

To enable very short- (≤12 hours) and short-range (≤48 hours) summertime predictions, it is therefore necessary to have robust analyses of mesoscale conditions in the lower troposphere and at the land surface. Summertime QPF depends heavily on the three-dimensional structure of water vapor, land surface conditions, and profiles of both soil moisture and temperature (Fritsch and Carbone, 2004).

In addition to employing explicit convection in forecast models and having representative mesoscale initial conditions, mesoscale ensemble prediction techniques could be further exploited with respect to skill in explicit predictions of moist convection and accompanying precipitation. Ensemble predictions hold promise to mitigate and quantify forecast uncertainty, especially with respect to the detailed time and location of specific events for which the intrinsic limits of predictability are uncertain. To realize this promise requires an improved understanding of the ensemble spread and optimum number of ensemble members that are needed, the representativeness of the resulting spread in predictions, and the benefits to be gained (or lost) from higher resolution and fewer ensemble members in a computationally limited environment. The current state of knowledge concerning such trade-offs (and other trade-offs related to data assimilation) is insufficient to provide objective guidance.
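As a minimal illustration of how an ensemble quantifies forecast uncertainty, the sketch below derives an exceedance probability and a spread from a set of member forecasts at one location. The member values, threshold, and function names are hypothetical, and treating every member as equally likely is an assumption of this sketch, not a description of any operational system.

```python
import statistics


def exceedance_probability(members, threshold):
    """Fraction of ensemble members forecasting at least `threshold`."""
    if not members:
        raise ValueError("ensemble has no members")
    return sum(1 for m in members if m >= threshold) / len(members)


def ensemble_spread(members):
    """Population standard deviation of the members about the ensemble mean."""
    return statistics.pstdev(members)


if __name__ == "__main__":
    # Hypothetical 24-hour precipitation forecasts (inches) from five members.
    members = [0.2, 1.4, 0.9, 2.1, 1.0]
    print("ensemble mean:", statistics.mean(members))
    print("spread:", ensemble_spread(members))
    print("P(>= 1.0 in):", exceedance_probability(members, 1.0))
```

With only a handful of members, the probability can take only a few discrete values. This is one simple way to see the trade-off between ensemble size and model resolution under a fixed computational budget.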

Ensemble prediction techniques and related hybrid probabilistic forecast approaches could be pursued as an integral part of the solution toward increased skill in and the utility of warm-season precipitation predictions. For example, at very short range (<6 hours), rule-based, nondynamical forecasting (often referred to as nowcasting11) has an important role to play. Extrapolation, neural networks, fuzzy logic, and similar methodologies have demonstrated skill, especially during the “spin-up” period for complex dynamical models. These nondynamical forecast products are part of the information available to advanced data assimilation systems, thus constituting a blend of nowcasting and dynamical prediction. Such information could be further researched and transitioned, as appropriate, to operations as an integral part of the dynamical forecast process.

Hydrologic Prediction Implications

Precipitation estimation and prediction are intimately related to hydrologic prediction, serving as part of the information necessary to define hydrologic initial and boundary conditions. The amount of rainfall over large areas, although helpful, is insufficient, because hydrologic prediction can be highly sensitive to small-scale variability in precipitation spatial distribution; the probability density function of precipitation rate; subsequent melt rate in the case of ice-phase precipitation; and surface losses such as evaporation of liquid water and sublimation of snow and ice. Several of these factors argue for precipitation verification methods that better inform the hydrologic user of estimated and forecast data than does the equitable threat score. Such methods are widely considered to be “object oriented,” including specific location, shape, rate, and translation information in addition to cumulative amount (Davis et al., 2006a, b). Both improved observations, such as with polarimetric radar, and catchment- and convection-resolving models are necessary to achieve this level of sophistication.

Recommendation: To improve the skill of quantitative precipitation forecasts, the forecast process of the National Weather Service should explicitly represent deep convection in all weather forecast models and employ increasingly sophisticated probabilistic prediction techniques.

Global and regional weather forecast models should represent organized deep convection explicitly to the maximum extent possible, even at resolutions somewhat coarser than 5 km, as may be necessary initially, in the case of global models. Explicit representation should markedly improve forecast skill associated with the largest and highest impact precipitation events. The introduction of explicit convection will likely require the refinement of microphysical parameterizations, boundary-layer and surface representations, and other model physics, which are, in themselves, formidable challenges.

Probabilistic prediction should be vigorously pursued through research, and the development and use of increasingly sophisticated ensemble techniques at all scales. New tools need to be developed for

verification of probabilistic, high-resolution ensemble model forecasts, eventually supplanting equitable threat scores.

HYDROLOGIC PREDICTION

Despite the fact that the skill of precipitation forecasts has significantly improved over the past decade (especially for cool-season events, as discussed in the preceding section), the skill in hydrologic forecasts has not kept pace. Of all the weather-related hazards in the United States, floods are one of the most devastating; they claim hundreds of lives and cause billions of dollars in damage each year (EASPE, Inc., 2002; NOAA, 2001). From 1970 to 2000, 3,829 deaths were attributed to floods, with an annual average of 128 deaths. Three 10-year cycles show a trend of reduced deaths: 1971 to 1980, 175 average deaths per year; 1981 to 1990, 112 deaths; and 1991 to 2000, 91 deaths (EASPE, Inc., 2002). The average annual flood damages for 1981 to 1990 were $3.2 billion, and for 1991 to 2000, $5.4 billion, which includes the 1993 record-setting floods of the Mississippi River ($18.4 billion in damages; USACE, 2000). Improving the accuracy and lead time of flood forecasts and ensuring timely mitigation plans would reduce weather-related losses and provide other societal benefits such as increased public health and safety.

The 2009 BASC Summer Study workshop participants emphasized the need for our nation to develop an adequate research and R2O focus and the necessary supporting infrastructure to translate improved precipitation forecasts to improved operational hydrologic forecasts that are more accurate and have increased spatial resolution. Efforts toward developing an advanced hydrologic prediction12 service (NRC, 2006b) have been ongoing for more than a decade and need to be sustained and intensified.

Hindrances to Progress

The challenges in QPF articulated in the previous section are far from being resolved. However, suppose for a moment that the science and technology had achieved the ability to make virtually perfect precipitation forecasts. A hydrologic modeling system would then be able to translate those precipitation forecasts into accurate, distributed runoff and streamflow by

invoking the physical mechanisms of runoff generation, snow accumulation, surface water–groundwater exchanges, river and floodplain routing, river hydraulic routing, agricultural and other consumptive uses, among others. But all of these elements of an advanced hydrologic prediction system are far from being well observed, far from being well understood, far from being appropriately represented in numerical prediction models, and far from being even rudimentarily verified. Therefore, a perfect precipitation forecast would not translate today into a hydrologic forecast that meets user needs. The challenges amplify because the precipitation forecasts are far from perfect. Thus, there is a compelling need for major changes in hydrologic research and infrastructure if we are to successfully translate the investment in improved weather forecasts into improved hydrologic forecasts at the local and regional scales, and meet the pressing societal and economic demands of flood protection and water availability.

To better understand the priorities for hydrologic research and R2O, it is helpful to first understand the impediments to progress in hydrologic forecasting:

- lack of comprehensive and representative observations across a range of space-time scales and across different hydrologic regimes that can be assimilated in hydrologic models to improve the physical representation of water cycle dynamics;
- lack of a coherent distributed hydrologic modeling framework to simultaneously advance hydrologic forecasting research and facilitate the transition of research results into operations;
- lack of systematic verification metrics to track and measure progress; and
- lack of a centralized and sustained mechanism to foster academic, private-sector and intergovernmental exchange and collaboration toward a targeted effort to deliver the next-generation hydrologic forecasting system with demonstrated improvement and robustness.

Hydrologic Prediction and a Changing Climate

Changes in precipitation extremes (storm amounts, frequency, and duration) are already posing unique challenges in water management at the local to regional scales. The assumption of stationarity, on which current hydrologic model calibration practices for hydrologic forecasting are based, is no longer valid (Milly et al., 2008). Revised hydrologic models that are physically based, are less reliant on calibration and tuning, and take full advantage of real-time observations from multiple sensors are a clear necessity for hydrologic prediction in the future. Unfortunately, the physics of water cycle dynamics are not fully understood and physically based predictive models are not yet available, either beyond single investigator research or in operational practice.

In addition to the need to make optimum use of precipitation forecasts in hydrologic forecasting on weather timescales, there also is a need to assess hydrologic impacts and conditions on climate timescales. Theory, simulations, and empirical evidence all indicate that warmer climates will materially change the distribution of precipitation at both local and global scales (e.g., Solomon et al., 2007; Trenberth et al., 2003; Wentz et al., 2007). Generally, drier areas are becoming drier and wet areas are becoming wetter, and the snow season has become shorter by up to 3 weeks in parts of the North American continent owing to an earlier onset of spring. In addition, increased water vapor capacity in warmer climates is expected to lead to more intense precipitation events even when the total annual precipitation remains the same or is slightly reduced (e.g., Karl et al., 2009). This will inevitably increase the risk of flooding and rainfall-induced hazards, such as landslides in steep mountainous terrains, putting increased pressure on the development of accurate, high-resolution, physically based hydrologic prediction models.

Need for Change

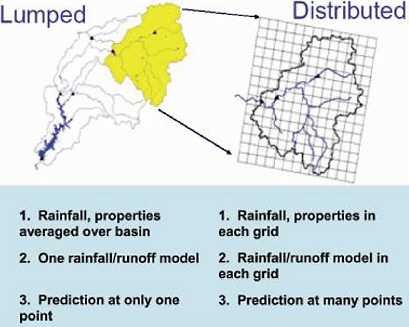

Today’s operational hydrologic forecasting is performed at the 13 river forecast centers (RFCs) of the NWS. This forecasting relies heavily on lumped or semidistributed hydrologic models (Box 3.3) that are calibrated against historical observations and that incorporate subjective information from the regional forecast offices. These forecasts are also restricted to river stages at specific locations, which are often too widely spaced for local decision making.

There are two important reasons it is imperative to change this process: (1) nonstationarity due to climate and land-use changes makes past observations of limited use in model calibration, and thus there is a need to move to physically based, minimally calibrated models based on assimilated real- or near-real-time observations from multiple sensors; and (2) there is a growing need to provide water cycle forecasts at a range of space and time scales, not only river stages for flood protection, but also for soil moisture (ecosystem functioning), streamflow (sediment transport and river habitat assessment), agricultural use practices and impacts, as well as water quality and renewable

energy (hydropower and hydrokinetic energy; see also the section on Renewable Energy Siting and Production in Chapter 4) applications.

A recent study by Welles et al. (2007) pointed out some troubling findings: (1) the RFC hydrologic forecasting skill has hardly improved (based on 15 watersheds studied) over the past 10 to 20 years; (2) the skill in above-flood-stage hydrologic forecasts is less than 3 days; and (3) the lack of an objective and systematic hydrologic forecast verification program has hindered progress and effective exchange between the scientific and operational communities.

Development of a Community Hydrologic Modeling System

In the meteorological and atmospheric sciences community, advances in NWP and climate modeling have been enabled to a large degree by the development of community-supported models.13 Such models (e.g., the WRF and the Community Climate System Model) have provided a framework to test alternative hypotheses and new parameterization schemes, to guide the collection of new observations, to prioritize science investments based on model performance, to support various societal and business applications, and to track the progress of research in a systematic way that allows moving ahead in a robust and traceable fashion.

In the hydrologic community, no such systematic effort exists for the purpose of bringing together the research and operations sectors toward the development of a hydrologic prediction system that can be advanced and extensively tested by the community, provide a frame of reference for tracking improvements, and be highly responsive to end-user needs. Efforts to date to develop a community hydrologic modeling framework include the Modular Modeling System (MMS) developed by the U.S. Geological Survey (USGS; Leavesley et al., 1996, 2005) and the ongoing effort of the NWS (NRC, 2006b) to develop the Community Hydrologic Prediction System (CHPS). These efforts were designed to advance the hydrologic forecasting capacity within the USGS and NWS; they were not designed to create the foundation of a true academic–government–private-sector partnership that could accelerate the development and implementation of an advanced distributed hydrologic modeling system to serve both as a research tool and as

an operational platform. Such an effort is currently lacking. It would require a community-led organization toward accelerated research and synthesis of physical-process understanding, targeted observational campaigns for model verification and improvement, quantification of uncertainty and probabilistic forecasting, theoretical advances in scaling and transferability, predictability studies of coupled models including sensitivity to initial conditions and model parameter uncertainty, and full exploration of high-performance computing. Establishing such a modeling framework would foster a more effective dialogue between the research and operational communities, including decision makers across diverse sectors, and would accelerate the R2O and O2R processes.

There is a critical, ongoing need to develop a robust hydrologic forecast capacity; this will require a strategic commitment and investment in research and R2O. Such a systematic hydrologic prediction framework effort would bring together (1) the collective resources of the hydrologic community in establishing a unified hydrologic observational, research, and modeling agenda; (2) the atmospheric and hydrologic communities in developing, testing, and improving a fully coupled atmospheric–hydrologic prediction system; and (3) the research and operations communities as a joint team that identifies research priorities to fill important gaps in the application of hydrologic products by the governmental and private sectors. In addition, a verified distributed model is certain to well serve communities other than water resources, such as the ecologic community that relies on water cycle forecasts at a range of scales for assessing ecosystem performance and species diversity at the local to regional scales under climate and land-use change.

Transitioning Research Developments into Operations

The NWS has the primary responsibility among the federal agencies to provide advanced alerts (flood warnings and forecasts) in the United States. The current operational hydrologic forecasting process at the NWS relies on a combination of observations (including precipitation, soil moisture, evapotranspiration, soil type, and land cover) and atmospheric forecasts that provide input to a land-surface hydrologic model. The rainfall-runoff model currently in use at most RFCs is the Sacramento Soil Moisture Accounting (SAC-SMA) model (Burnash et al., 1973). This model does not explicitly take into account distributed forcings (and thus does not take advantage of improvements in QPF) and other heterogeneities in a watershed, is mostly lumped as opposed to distributed (Box 3.3), and requires considerable local calibration using historical observations. Recent efforts have extended this model to a gridded distributed version, called the Distributed Hydrologic Model,14 which is used experimentally in some of the RFCs.

Because hydrologic services generate nearly $2 billion of benefits each year through timely flood and weather forecasting (EASPE, Inc., 2002), the NWS Office of Hydrologic Development (OHD) began implementing the Advanced Hydrologic Prediction Service (AHPS) program in 1997 to advance technology for hydrologic services (NRC, 2006b). AHPS is a congressionally funded program that had its first prototype implementation in 1997 for the Des Moines River Basin and is slated to be fully implemented nationwide in 2013. As part of the AHPS, OHD is taking critical steps to improve the hydrologic forecast process along two main directions: (1) exploration of the merits of distributed hydrologic models for operational use, and (2) development of a comprehensive verification system.

The NWS OHD has recently led the Distributed Model Intercomparison Project (DMIP). Twelve groups, including other federal agencies, academic institutions in the United States, and representatives from Canada, China, Denmark, and New Zealand, compared differing methods and approaches of distributed hydrologic models for analysis of the same predefined conditions. These results will guide NWS in developing the next generation of hydrologic models. Results of the DMIP, Phases I and II, are generally encouraging, but progress toward full implementation with skill beyond that of the lumped hydrologic models remains poor. As a result, the new strategic and implementation plan (OHD, 2009) of the NWS OHD spells out a way to move forward and proposes the development of the CHPS, which emphasizes a combination of conceptual and physical modeling approaches. It has the ability to merge distributed hydrologic prediction models with atmospheric models at weather and climate scales; to resolve not only streamflow but also interior fluxes needed for water quality, ecologic, and geohazard applications; and to make hydrologic predictions, including their uncertainty, at ungauged basins. The committee and the 2009 BASC Summer Study workshop participants support these efforts of the NWS and encourage implementation of additional ways of engaging the research community in this activity.

Two examples of complementary activities aimed at developing an advanced hydrologic forecasting capability are (1) the Consortium of Universities for the Advancement of Hydrologic Sciences, currently supported by NSF, which is working toward the development of the Community Hydrologic Modeling Platform and has initiated an ongoing community-wide dialogue (Famiglietti et al., 2008); and (2) the Integrated Water Resources Science and Service15 initiative currently supported by NOAA, USGS, and the U.S. Army Corps of Engineers. These two synergistic activities further illustrate the timeliness of taking a quantum leap forward now in order to improve the performance and skill of hydrologic forecasts in the next decade. In these efforts, close cooperation between academia and government agencies is important for transitioning the most recent research results to operations and for guiding new research directions based on societal relevance and scientific discovery.

The Need for Hydrometeorological Observations

As the complexity and resolution of hydrologic models increase, new and improved observations are needed for model initialization, improvement of model physics, data assimilation, and metrics for model verification. Several small-scale hydrologic observatories are currently in operation, for example, the Critical Zone Observatories (CZOs)16 supported by NSF, the Agricultural Research Service (ARS)17 observatories, and NOAA’s HMT.18

These observatories provide a variety of high-resolution in situ and remote sensing observations from the vegetation canopy, to soil, to groundwater in order to understand the interaction of physical, chemical, and biological processes in a watershed and accurately predict material fluxes (e.g., NRC, 2001). Coordinating efforts need to be instituted such that full advantage is taken of these observatories for further model improvements. Also, the development of new integrated observatories of the water cycle (from the atmosphere, to the rivers and lakes, to the deep subsurface zone, and to the built environment) is needed. For example, the Water and Environmental Research Systems (WATERS) Network initiative (NRC, 2009a) proposes a series of observatories to address the challenges of ecosystem sustainability and water availability under changes caused by human activities and climatic trends.19

|

15 |

|

|

16 |

Operating at the watershed scale, CZOs are natural laboratories for investigating the processes that occur at and near the Earth’s surface and are affected by freshwater. CZOs are heavily instrumented for a variety of hydrogeochemical measurements as well as sampled for soil, canopy, and bedrock materials. CZOs also involve teams of cross-disciplinary scientists who have expertise in fields including hydrology, geochemistry, geomorphology, pedology, ecology, and climatology. See http://criticalzone.org/. |

|

17 |

The ARS watershed network is a set of geographically distributed experimental watersheds that has been operational for more than 70 years and provides essential research capacity for conducting basic long-term hydrologic research. See http://www.tucson.ars.ag.gov/icrw/Proceedings/Weltz.pdf. |

|

18 |

The HMT is a concept aimed at accelerating the infusion of new technologies, models, and scientific results from the research community into daily forecasting operations of the NWS and its RFCs. See http://hmt.noaa.gov/. |

Unique opportunities to use remotely sensed observations from surface and space require exploration (e.g., Yilmaz et al., 2005). These include currently available data (e.g., see the following section on observations) and the new stream of data that will be delivered by NASA’s Global Precipitation Measurement mission as well as the satellite soil moisture measurements of the Soil Moisture Active Passive (SMAP) mission.20 The use of satellite observations for improved hydrologic prediction offers ample research challenges and opportunities, such as retrieval algorithm development over land, multichannel integration, bias adjustment, and increasing accuracy and resolution via product blending. Remotely sensed observations are the only observations for many parts of the world, and their optimal use in hydrologic forecasting needs to be fully explored. In the United States, the ongoing upgrade of the NWS NEXRAD radars to dual-polarimetric capability will significantly improve the ability to resolve hydrometeorologic variables important for the development of coupled atmospheric–hydrologic models. Also, as clearly articulated in NRC (2009b) and discussed in the following section, a ground-based national network of networks at the mesoscale, inclusive of radio and optically sensed PBL profiles of water vapor and winds, is expected to dramatically improve our predictive ability of the coupled atmospheric–hydrologic system.

Recommendation: Improving hydrologic forecast skill should be made a national priority. Building on lessons learned, a community-based coupled atmospheric–hydrologic modeling framework should be supported to accelerate fundamental understanding of water cycle dynamics; deliver accurate predictions of floods, droughts, and water availability at local and regional scales; and provide a much needed benchmark for measuring progress.

To successfully translate the investment in improved weather and climate forecasts into improved hydrologic forecasts at local and regional scales, and meet the pressing societal, economic, and environmental demands of water availability (floods, droughts, and adequate water supply for people, agriculture, and ecosystems), an accelerated hydrologic research and R2O strategy is needed. Fundamental research is required on the physical representation of water cycle dynamics from the atmosphere to the subsurface, probabilistic prediction and uncertainty estimation, assimilation of multisensor observations, and model verification over a range of scales. Integral to this research are integrated observatories (from the atmosphere to the land and to the subsurface) across multiple scales and hydrologic regimes.

|

19 |

The overarching science question of the WATERS Network is: “How can we protect ecosystems and better manage and predict water availability and quality for future generations, given changes to the water cycle caused by human activities and climate trends?” (WATERS, 2009). |

|

20 |

See: http://nasascience.nasa.gov/earth-science/decadal-surveys/Volz1_SMAP_11-20-07.pdf. |

Continuous support of individual models geared toward improving specific components of the hydrologic cycle is a necessary element of progress. However, the committee recommends that the community organize around benchmark hydrologic and coupled atmospheric–hydrologic modeling systems, a step that is necessary at this stage to further advance hydrologic predictions in both research and operational modes. Such benchmark models will provide for the synthesis of ideas and data, avoid duplication, identify major research gaps, provide metrics of success and track progress, and provide a platform on which communities can share knowledge (e.g., there is a need for ecologists to have access to hydrologic models, and a community-led framework would be useful in the same way that WRF has been useful to decision makers across widely diverse sectors). Also, such a community-based modeling framework will accelerate the development of a focused R2O and O2R strategy based on a sustained and effective academia–industry–government partnership.

MESOSCALE OBSERVATIONAL NEEDS

Improved observing capabilities at the mesoscale are an explicit aspect of every weather priority identified in this study, including socioeconomic priorities such as reduction in vulnerability for dense coastal populations, and improvements in forecasts at the scale of flash floods and routinely disruptive local weather. The 2009 BASC Summer Study workshop participants identified the underlying need for enhanced mesoscale observing networks throughout the oral presentations and in the working group discussions. This high-priority need was the focus of a recent BASC report, Observing Weather and Climate from the Ground Up: A Nationwide Network of Networks (NRC, 2009b), and many of its authors were participants in the 2009 BASC Summer Study workshop. Accordingly, this section draws heavily on the findings and recommendations contained in that report and supports each of its recommendations concerning observing-system technical requirements.

Observations at the mesoscale are an important part of the national and global observing systems. The global observing system captures large-scale circulation features and their related thermodynamic context. Components of the global observing system include various satellites and constellations thereof; radiosondes and regional electromagnetic profiling devices; and reference surface stations at key locations that maintain and extend the surface climate record. For example, geostationary satellites provide excellent temporal coverage, but new technologies are needed to penetrate clouds for thermodynamic information. Observations based on GPS constellation occultation techniques contribute important temperature and humidity information, principally at the synoptic scale. Observations at the mesoscale are increasingly important to establishing initial conditions for global models as resolution improves.

Mesoscale components include observations that either resolve mesoscale atmospheric structure or uniquely enable NWP at the mesoscale. The emphasis on mesoscale observations is motivated by the scale and phenomenology associated with disruptive weather; the necessity to understand it, detect it, and warn of the potential consequences; and the need for improved capacity to specifically predict or otherwise anticipate it at very short to short ranges (0 to 48 hours).

Challenge: Why Are Enhanced Mesoscale Observations Needed?

Perhaps the simplest answer to this question is that high-impact weather happens at the mesoscale and that the lowest 2 to 3 km of the atmosphere are at once underobserved and most important for processes such as convection, chemical transport, and the determination of winter precipitation type.

A more complete justification for mesoscale observations resides in the requirements to serve a wide variety of stakeholders inclusive of basic and applied researchers, intermediate users associated with weather-climate information providers, and a wide variety of end users at all levels of government and numerous commercial sectors. Some examples of these requirements include

-