11

Using Uninsured Data to Track State CHIP Programs

John McInerney

The Commonwealth Institute for Fiscal Analysis

With the creation of the Children’s Health Insurance Program (CHIP) as part of the Balanced Budget Act of 1997, focus and attention on providing access to health insurance coverage for low-income children significantly increased. CHIP gave states an attractive option to help reduce the population of low-income uninsured children, which was in excess of 20 percent in the years leading up to the program’s enactment. Combined with a generous federal contribution, the block grant program helped states provide comprehensive and affordable coverage to children with family income too high for Medicaid but too low to afford private insurance coverage.1

Throughout the program’s history, data on the uninsured rate of children has played an important role in funding and the evaluation of program success. Nationwide, as the number of low-income children enrolled in CHIP programs has increased, the uninsured rate for children has fallen according to census data and individual state surveys. CHIP and Medicaid became successful examples of using public insurance options effectively to make real progress in reducing the rates of uninsured. The recently enacted Patient Protection and Affordable Care Act (PPACA) builds on that success. When implemented over the next several years, the law will retain CHIP and Medicaid as a primary source of coverage for low-income children and families.

State policy makers, advocates, and child coverage experts have learned a great deal about the use of data in evaluating CHIP over time. Using Virginia as a primary example, this paper discusses some of these lessons in using uninsured and administrative data in running and evaluating CHIP at the state level. First, I discuss the role that data have played in CHIP policy making and program management and also how state analysts and advocates use, promote, and disseminate national survey data at the state level. I then describe how more accessible local uninsured and enrollment data would add useful information to aid in evaluating CHIP and the unmet needs of uninsured children.

THE VIRGINIA CHIP EXPERIENCE

Virginia, like most states, had high levels of uninsurance among children before CHIP was created in 1997. The percentage of employer-sponsored health insurance (ESI) for children was in decline, and there were few public insurance options for children whose families had too much income to qualify for Medicaid coverage. Nationally, over 22 percent of low-income children with family incomes below 200 percent of the federal poverty line (FPL) were without health insurance in 1997 (Georgetown University Center for Children and Families, 2006).

The Balanced Budget Act allocated $40 billion over 10 years in federal funding to allow states to create CHIP programs. Unlike Medicaid, CHIP was created as a block grant program, so states would receive an allotment based on a funding formula, not on the number of eligible applicants. States were given significant flexibility in program design and management, including in setting eligibility levels and managing enrollment. To further entice states, the federal match rate was set at 30 percent above the match rate for Medicaid. A state with a 50 percent federal match rate in Medicaid would receive 65 percent federal reimbursement for CHIP.2 States broadly embraced CHIP, and, by 2000, every state had a program up and running.
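The match-rate arithmetic can be illustrated with a minimal sketch. It assumes that the "30 percent" enhancement works as a 30 percent reduction in the state's share of costs, which reproduces the 50 percent to 65 percent example given here, and that the result is capped at the 85 percent ceiling mentioned later in this paper. The function name and the sample rates other than 50 percent are hypothetical and chosen only for illustration.

```python
def enhanced_match_rate(medicaid_match: float, cap: float = 85.0) -> float:
    """Return an illustrative CHIP federal match rate, in percent.

    Assumption (not stated in the text): the enhancement cuts the state's
    share by 30 percent, and the federal share is capped at `cap`.
    """
    state_share = 100.0 - medicaid_match          # what the state pays under Medicaid
    enhanced = medicaid_match + 0.30 * state_share  # federal share after the reduction
    return min(enhanced, cap)


# 50.0 is the example from the text; 60.0 and 83.0 are hypothetical rates.
for rate in (50.0, 60.0, 83.0):
    print(f"Medicaid match {rate:.0f}% -> CHIP match {enhanced_match_rate(rate):.1f}%")
```

Under this reading, a state with a higher Medicaid match rate gains fewer additional percentage points, and the cap binds only for states whose Medicaid match rate is already very high.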

However, the effort was not the same in all states. Despite the substantial uninsured low-income child population, Virginia did not fully embrace CHIP initially. The state program, the Children’s Medical Security Insurance Plan (CMSIP), was created as a Medicaid expansion program, covering children from the Medicaid eligibility endpoint3 up to 185 percent of FPL. Enrollment in the program was low, largely because of enrollment barriers, such as a 12-month waiting period for children who had other insurance coverage.

The lackluster results of CMSIP were seen as a failure by many in the state, and the program was reformed in August 2001. The name of the program was changed to Family Access to Medical Insurance Security (FAMIS), eligibility was increased to 200 percent of FPL, and the 12-month waiting period was shortened to 6 months. In addition, the program was changed from a Medicaid expansion program to a separate CHIP. Further reforms in 2002 and 2003 implemented a common application for FAMIS and Medicaid, created a 12-month continuous eligibility provision for FAMIS children, and streamlined Medicaid eligibility for children at 133 percent of FPL (with children aged 6-18 and family incomes between 100 and 133 percent of FPL covered as a Medicaid expansion population with CHIP funding).

Since the 2001-2003 reforms, FAMIS has remained relatively unchanged.4 Although the state was utilizing all of its federal funding, Virginia was able to use a significant carryover balance from the slow beginnings of CHIP coverage to avoid the kind of funding shortfall that many other states faced. The temporary extension of CHIP in 2007 and its reauthorization in February 2009 also aided the Commonwealth in avoiding an estimated $24 million shortfall beginning in July 2009. The Children’s Health Insurance Program Reauthorization Act (CHIPRA) will provide states with a total of $69 billion over 5 years in federal funding. The new CHIP block grant under CHIPRA provides Virginia with $175 million and $188 million in federal funding for the first 2 years of the 5-year reauthorization and is sufficient to provide coverage at the current enrollment and eligibility levels.

ADMINISTRATIVE DATA SHOW PROGRAM ENROLLMENT INCREASES BUT CURRENT POPULATION SURVEY DATA SHOW RISE IN UNINSURED

Virginia’s CHIP enrollment dramatically increased in the years following the creation of FAMIS. In 2001, fewer than 38,000 were enrolled

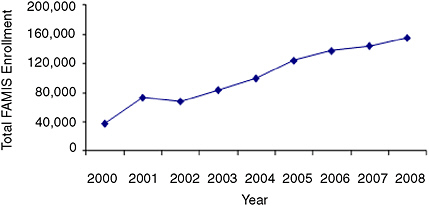

FIGURE 11-1 CHIP coverage for kids steadily increased in Virginia, 2000 to 2008.

SOURCE: Centers for Medicare & Medicaid Services (CMS).

in the program, far lower than most other comparable states. However, enrollment steadily increased each year, and, by 2006, approximately 125,000 were enrolled at some point during the year (see Figure 11-1). Virginia’s outreach was extensive, and the program proved popular with the public. The Commonwealth even renamed its Medicaid program for children FAMIS Plus to capitalize on the good public support.

Yet despite enrollment gains in FAMIS and Medicaid, data from the Current Population Survey (CPS) suggested that progress in covering uninsured children in Virginia was inconsistent. Between 2000 and 2004, CPS data showed a 5 percentage point decline in the low-income uninsured population, which was 23.5 percent in 2000 (this decline, however, was not statistically significant). This decline corresponded with the introduction of CHIP in Virginia and expansions in the program, and these trends are consistent with CHIP lowering the rate of uninsurance for Virginia’s children. However, between 2004 and 2006—a period during which FAMIS enrollment increased steadily—CPS data show that the percentage of low-income uninsured children in Virginia actually increased by nearly 4 percentage points (this increase, however, was not statistically significant) (Cook, Kenney, and Lawton, 2010).5

Data users are left to provide a plausible explanation for why the CPS shows that Virginia’s uninsured rate increased during this period of expansion in CHIP.6 It is likely that an erosion in ESI coverage for all incomes led to the increase in uninsured children. Traditionally, Virginia has had a high percentage of residents with ESI coverage in part because of its large federal workforce. But between 2004 and 2006, ESI coverage in Virginia declined sharply for all incomes (Cook, Kenney, and Lawton, 2010).

LESSONS FOR STATE POLICY MAKERS, ANALYSTS, AND ADVOCATES

Although, in Virginia, CPS data do not reflect the CHIP enrollment gains evident in administrative data between 2004 and 2006, in most states survey and administrative data have shown a negative relationship between CHIP enrollment gains and the uninsured rate for children as measured by survey data. These trends in the data helped encourage state policy makers to do more to increase coverage for children throughout the first decade of CHIP. The results could be seen just about every time the Census Bureau released new data on uninsured rates. While ESI coverage was beginning to erode in many states, and the uninsured rate of the adult nonelderly population was generally stagnant or increasing, state policy makers could point to the positive data on children as a bright spot.7

In examining the ways in which data have been used in evaluating and operating CHIP, several lessons learned can help policy makers and those working on children’s health issues as the program continues over the next several years.

Lesson 1: Uninsured Data for Children and Administrative CHIP and Medicaid Enrollment Data Are Important in Shaping and Directing Policy Options and Decisions

Uninsured Data Used in Original CHIP Funding Formula

Before CHIPRA was enacted in 2009, the CHIP funding formula was a combination of the health costs in the state, the total number of low-income children in the state, and the number of low-income uninsured children in the state.

Because CHIP is a block grant—not an entitlement program like Medicaid—states relied on adequate allotments to meet the demand for coverage. Few states wanted to close the doors to their program or deny eligible children, especially with the generous match, which can go as high as 85 percent.8 The fact that a part of the state funding allotments was based on CPS uninsured estimates increased the relative importance of the survey in policy making.

However, the importance of the CPS was problematic for many states. Many did not believe that the survey accurately reflected the number of low-income uninsured children in their states. For 42 states the CPS low-income children sample size was less than 100, which often led to funding fluctuations from year to year of up to 25 percent (Blewett and Davern, 2007). Some states received higher allotments than they needed and had large carryover balances and unspent block grant funds. Others were spending their entire block grant and using carryover money from the early years of the program to avoid a funding shortfall. In addition, states were in effect penalized as they covered more children, because doing so reduced the number of low-income uninsured children counted in the formula. By the end of 2006, at least 14 states faced shortfalls that cumulatively totaled more than $700 million (Park and Broaddus, 2006).
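To give a rough sense of why a sample of fewer than 100 children produces unstable estimates, the sketch below computes an approximate 95 percent margin of error for an estimated uninsured rate. It is only an illustration under simplifying assumptions: it treats the sample as a simple random sample (the CPS uses a complex design, so actual errors would generally be larger), and it borrows the roughly 22 percent national rate cited earlier as a stand-in for a state's true rate. The function name and the larger sample sizes are hypothetical.

```python
import math


def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Approximate 95% margin of error for a proportion p from a sample of size n.

    Assumes simple random sampling; this is a simplification of how
    survey standard errors are actually produced.
    """
    standard_error = math.sqrt(p * (1.0 - p) / n)
    return z * standard_error


rate = 0.22  # illustrative uninsured rate, taken from the national figure cited earlier
for n in (100, 400, 1600):  # 100 reflects the small-state case; the others are hypothetical
    moe = margin_of_error(rate, n)
    print(f"n = {n:5d}: {rate:.0%} plus or minus {moe * 100:.1f} percentage points")
```

Because the margin of error shrinks only with the square root of the sample size, quadrupling the sample merely halves the uncertainty, which is one reason small-state estimates can swing widely from year to year.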

Policy makers recognized these concerns, and CHIPRA created a new funding structure that removes uninsured data from the formula. Instead, states are funded on the basis of the higher of prior year spending or estimated current year spending. In 2011, funding will be rebased so that states will receive funds based on their spending history and need. Survey data can be a helpful indicator in program evaluation, but they were not always an efficient metric in determining CHIP funding allocations.

August 2007: Regulating Income Eligibility Using New CMS Data Requirement

Data have also been used in policy making as a way to control state flexibility in setting income eligibility standards. In August 2007, while Congress was considering reauthorization of CHIP, the Centers for Medicare & Medicaid Services (CMS) issued a directive to prevent states from covering children above 250 percent of FPL unless they met certain requirements. Among the provisions in what became known as the “August 17 directive” was a requirement that states prove that they are covering 95 percent of the eligible children below 200 percent of FPL before offering CHIP coverage to anyone with family income above 250 percent of FPL.

The directive was troubling to states for many reasons. The arguably arbitrary benchmarks, which also included a 12-month waiting period requirement, would have prevented most states from expanding and improving their programs. In addition, states that were already covering children above 250 percent of FPL would have been forced to scale back eligibility if they could not reach the requirement for coverage of uninsured children under 200 percent of FPL. A likely intent of the directive was to cap CHIP coverage at 250 percent of the FPL.

A key concern of state policy makers and other children’s health advocates and analysts was the lack of a reliable and uniform data source to measure whether a particular state had actually covered 95 percent of eligible children in CHIP and/or Medicaid.9

CMS did not provide states with a definitive data source or method to determine compliance. The CPS would have been the logical choice, but its small sample size and tendency to underreport Medicaid and CHIP coverage concerned the states. Other surveys, such as the Survey of Income and Program Participation and the National Health Interview Survey, did not have recent enough data. Finally, although CMS said that states could use state-based surveys, many were not produced consistently enough to be a viable option (National Academy for State Health Policy, 2008).

In the end, the directive was formally rescinded in February 2009, and no state was forced to reduce income eligibility levels that were already in place. However, CMS did use the directive to initially deny expansion efforts in New York, Ohio, and other states. Essentially, state CHIP coverage was frozen in place for at least a year through the imposition of an arbitrary set of rules without a viable method of compliance. In the future, as program requirements incorporate data measures, CMS or Congress should be sure to name (and provide) a data source and methodology to determine compliance.

Lesson 2: State Analysts and Advocates Are Key in Disseminating Information to Policy Makers, the Media, and the Public

State groups often lack the resources and capacity to collect and produce survey data, instead relying on national state-specific surveys by the Census Bureau and comprehensive analysis of census and other uninsured data by national experts. State analysts fill a valuable role in explaining the data and promoting state-specific information that can help inform the policy process.

Census Bureau Release of Uninsured Data

One of the most effective ways for children’s coverage experts to influence policy makers and the public is through the media. In the current information age, the media includes not only newspapers and television, but also blogs, Twitter, and even social networking sites like Facebook. To capture the attention of these media, old news about the uninsured is a tough sell. Media outlets respond to news that adds fresh information to the discussion (“Are things getting better or worse?” “What does the latest data tell us about where we stand?”).

To cultivate the media, among the most important times of the year for health and social policy analysts and advocates are the days on which the Census Bureau releases data from the CPS and the American Community Survey (ACS) on the number and percentage of uninsured people. These are among the few times of the year that the media are focused and fully engaged on how states and the country are faring in tackling the problem of uninsurance.

Throughout much of the past decade, the message in the CPS release was often clear and easy to articulate to the media, interested policy makers, and the public. The number and percentage of uninsured Americans tended to increase, and the percentage of children without insurance usually fell. CHIP and Medicaid were providing coverage to millions more children. The message was that, without these sources of public insurance, the uninsured rate would undoubtedly be higher.

The difference between a rising uninsured rate overall and a falling uninsured rate for children was a powerful incentive for many state leaders to keep moving ahead with improvements to CHIP through coverage eligibility expansions, enrollment simplifications, and other outreach strategies. Governors and legislators could highlight the success they were having in lowering the uninsured rate for children, diverting attention away from a rising overall uninsured rate, for which they did not have an easy solution at the state level.

Survey Estimates of State Data Fluctuate Year to Year

As mentioned earlier, a common complaint about the CPS state estimates is that the small sample size can sometimes produce inconsistent results or outliers. Most accept this reality, believing that year-to-year results will at least show similar trends, albeit with different levels of magnitude, to larger surveys. Analysts can still present the data in comparison to previous years and help evaluate progress.

However, sometimes trends in survey data can be difficult to explain to a nontechnical audience. As an example, in Virginia the CPS estimated that the state had 185,000 (10.1 percent) uninsured children in 2006 and 187,000 (10.2 percent) in 2007, showing relative stability. Enrollment in FAMIS had increased during these years, but not enough to offset a significant decline in ESI coverage for children.

When the data for 2008 were released in September 2009, most experts expected to see an increase, or at least no significant change, in children’s uninsured rates in Virginia. The economy had been in recession for a year, and unemployment had increased from 3.2 percent in December 2007 to 5.2 percent in December 2008.10 Virginia’s overall economy was performing better than many states, but there were still struggles. Yet despite the downturn, the CPS showed Virginia with only 129,000 uninsured children in 2008, a decline of over 30 percent from the previous year. Even using 2- and 3-year averages to help smooth out irregularities and the margin of error in the data, the percentage of uninsured children declined between 2007 and 2008.
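The smoothing described above can be sketched with the three CPS counts of uninsured Virginia children quoted in this section (185,000 in 2006, 187,000 in 2007, and 129,000 in 2008). This is a minimal illustration that takes unweighted averages of the published counts; published multi-year estimates may be constructed differently, and the function name is hypothetical.

```python
def moving_average(values, window):
    """Return unweighted averages over each run of `window` consecutive values."""
    return [
        sum(values[i:i + window]) / window
        for i in range(len(values) - window + 1)
    ]


# CPS estimates of uninsured children in Virginia, as quoted in the text.
uninsured_children = {2006: 185_000, 2007: 187_000, 2008: 129_000}
counts = [uninsured_children[year] for year in sorted(uninsured_children)]

two_year = moving_average(counts, 2)    # averages for 2006-2007 and 2007-2008
three_year = moving_average(counts, 3)  # single average for 2006-2008

print("2-year averages:", two_year)
print("3-year average: ", three_year)
```

Averaging dampens a single-year swing but does not remove it: the 2007-2008 average is still well below the 2006-2007 average, consistent with the observation that the decline persists even after smoothing.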

Although the uninsured rate for children declined nationally as well, the decline was largely caused by an increase in Medicaid and CHIP enrollment due to the economic downturn. Virginia’s decline could not be as easily explained. Public insurance coverage in Virginia increased, according to Census Bureau estimates, but only by less than 1 percentage point. In Virginia, the CPS data suggested that the biggest reason for the decline in the uninsured rate was the increase in ESI coverage for children, which rose from 61.1 percent in 2007 to 69.2 percent in 2008, even though a major recession was under way.

Although analysts could discern that the decline in uninsured children seen in the 2008 CPS data could be explained by an increase in children covered by ESI, these trends in the survey data did not appear to reflect the reality of the situation in the state. It made the job of explaining the results to policy makers and the media more difficult (especially in states that are not so committed to supporting CHIP and Medicaid). Many wondered how it could be that advocates were asking for FAMIS eligibility to be increased when it appeared that the state was achieving success in reducing the uninsured.

Since there can be such large variation in annual estimates, analysts and advocates are left with a difficult decision about how to publicize estimates. They could have a difficult time explaining a good result, such as Virginia’s dramatic decline in the number of uninsured children in 2008, as an outlier or an artifact of small sample sizes in a survey. It may be more appropriate to simply point out the unmet needs that still exist (over 100,000 Virginia children may still be eligible for FAMIS and Medicaid but not enrolled) (Holahan, Cook, and Dubay, 2007). If the result is indeed an outlier, then the 2009 data may produce a result more consistent with the overall economic conditions and trends in the state.

Lesson 3: More Data Are Needed

State Surveys Help Fill Gaps, But Are Not Done Regularly in Each State

Although the CPS and the ACS state data are not without flaws, they are the only options for many states in examining uninsured data for children. Most states have produced at least a few state surveys of the uninsured over the past decade, but only a few produce them on a yearly basis. The states that do state-based surveys more often tend to be ones that have a more robust public safety net and lower rates of uninsurance. Surveys, unless funded from federal grants, cost states money, and it is logical that the ones that are more dedicated to reducing the uninsured are more likely to make the investment.

Virginia produced two state surveys in the past decade, the most recent one in 2004.11 Without federal funding, it is unlikely that the Commonwealth will do another one in the near future. There is no groundswell of support among lawmakers, regardless of ideology, to expand efforts to reduce the uninsured population. In fact, in the most recent budget proposals, the General Assembly and the governor actively tried to decrease FAMIS eligibility from 200 to 185 percent of FPL to save money in balancing the budget. Although that cut will not happen because of PPACA maintenance-of-effort requirements,12 Virginia is likely to resist any coverage expansion beyond what is required by the law. In this climate, most policy makers simply do not believe that state surveys are necessary to produce something above and beyond what the Census Bureau produces.

For states that do not do comprehensive surveys, there are ways to get a better picture of state insurance trends. For example, every 2 years, the Virginia Health Care Foundation funds a report by the Urban Institute examining the CPS data and looking at uninsured rates using various demographics like age, gender, and income. The Urban Institute compares the results in Virginia with survey data dating back to 2000. Consumers of the report can see how much progress the state has made in covering children and other populations. For example, has the racial disparity in Virginia improved or deteriorated? Are there more or fewer uninsured now than 8 years ago? Four years ago? Although the data are not new, the Urban Institute study presents a more complete picture that can be used by those seeking to improve and expand CHIP and other children’s coverage options.

County Data Would Be Very Valuable

State-level data are not always sufficient to identify where the uninsured are located. Many states, especially larger ones with different regional economic conditions, have areas of both low and high uninsurance. Virginia provides an instructive example. Northern Virginia, near Washington, DC, is one of the most prosperous metropolitan areas in the country, with high incomes and low uninsured rates, in part because of the large federal workforce. Yet by contrast, Southwest Virginia in the Appalachian region contains some of the poorest communities in the United States. Providing one-size-fits-all state data is not representative of the entire makeup of a bigger state.

The Census Bureau is moving toward providing more local data. The Small Area Health Insurance Estimates (SAHIE) Program, with 2006 data most recently released, provides county-level estimates of the uninsured using CPS data. Subsequent years of SAHIE data (the 2007 data are scheduled for release later in 2010) will also be useful in identifying trends and areas of states that need extra attention.

In addition, the ACS will soon be a significant source of county and regional data for states once the survey has produced 3 years of data. By 2011, the ACS will release 3-year estimates of the uninsured by state, congressional district, metropolitan statistical areas (MSAs), public-use microdata areas (PUMAs), and county. Current 1-year estimates are already available for all these geographic breakdowns except the county level.

Getting access to county information would be beneficial to anyone analyzing CHIP or insurance coverage in general. MSAs and PUMAs provide valuable and important data, but there are limitations. Different municipalities could have vastly different economic conditions, even though they are in the same PUMA or MSA. For example, Virginia Beach is the largest and one of the wealthier cities in the state, and it is in the same MSA as the bordering city of Chesapeake, a much poorer city with a considerably higher uninsured rate and low-income population. Thus, the Norfolk–Virginia Beach–Newport News MSA results do not present an entirely accurate story for either the wealthier or poorer communities. PUMAs are somewhat smaller in size than MSAs, but they face the same problems in providing an accurate assessment when different economic conditions exist within the region.

Because of this problem, local service providers and foundations are not very interested in the MSA and PUMA data if they do not accurately reflect their service areas. They would like local data to help assess their efforts in reaching residents in need in their communities. State-level estimates of the uninsured or CHIP are not particularly relevant either, and regional data could be distorted depending on the breakdown of the communities in the survey.

Privacy and Administrative Data

A further difficulty also exists in analyzing CHIP and Medicaid enrollment at the local level. While some states release these data by county, Virginia will not publicly release the data for most counties. The state interprets the Health Insurance Portability and Accountability Act privacy rules to prohibit the release of enrollment data for jurisdictions with fewer than 20,000 enrollees. This means that data can be released only for the biggest counties or with counties grouped into regional data. With FAMIS, a much smaller program than Medicaid, county-level administrative CHIP data are also not released in Virginia.

This presents a challenge in analyzing the success of CHIP and Medicaid in particular regions of a state. Although state-level administrative data are usually easy to obtain, they do not tell the full story of where coverage gaps remain. Reasonable assumptions can be made by analyzing uninsured county data (when available) and Medicaid coverage information from the CPS and the ACS. But having access to the administrative data would be more useful for analysis.

FINAL REMARKS

From its inception, CHIP has been very successful at providing access to health insurance for low-income children and families. Uninsured data, both census state-level data and state-produced surveys, have provided valuable information for states to evaluate the program, but they have limits. As CHIP moves forward over the next several years, detailed and more localized data on the uninsured would assist states in locating and addressing the coverage gaps that still exist.

REFERENCES

Blewett, L.A., and Davern, M. (2007). Evaluating federal funding formulas: The State Children’s Health Insurance Program. Journal of Health Politics, Policy and Law, 32, 415-455.

Cook, A., Kenney, G., and Lawton, E. (2010). Profile of Virginia’s Uninsured and Trends in Health Insurance Coverage, 2000-2008. Washington, DC: Urban Institute.

Georgetown University Center for Children and Families. (2006). Too close to turn back: Covering America’s children. Analysis based on data from the National Health Interview Survey.

Holahan, J., Cook, A., and Dubay, L. (2007). Characteristics of the Uninsured: Who Is Eligible for Public Coverage and Who Needs Help Affording Coverage? Report from the Kaiser Commission on Medicaid and the Uninsured.

National Academy for State Health Policy. (2008). The CMS August 2007 directive: Implementation issues and implications for State SCHIP programs. State Health Policy Briefing, 2(5).

Park, E., and Broaddus, M. (2006). Fourteen States Face SCHIP Shortfalls This Year Totaling Over $700 Million. Report from the Center on Budget and Policy Priorities.