6

Modeling Strategies for Improving Estimates

At the workshop, the participants were reminded that, in a 2003 paper discussing the issue of variability in the Current Population Survey (CPS) and the American Community Survey (ACS), the State Health Access Data Assistance Center (SHADAC) suggested that the “most desirable path would be to create estimates via statistical modeling that combine the strengths of the CPS-ACS and the fully implemented ACS. This method would be similar to the one used by the Census Bureau to allocate Title I education funds” (State Health Access Data Assistance Center, 2003). Although this suggestion has not been implemented, it indeed reflects a growing recognition that models for the generation of estimates, using survey and administrative data as inputs, could well have positive attributes for the equitable distribution of program funds to subnational geographic areas. The Kenney and Lynch paper (Chapter 8, in this volume), discussed in Chapter 3, suggested that it is possible that model-based estimates that combine ACS and CPS Annual Social and Economic Supplement (ASEC) estimates with administrative records data could be an improvement over single-source survey data.

This chapter summarizes presentations on the two main Census Bureau models—the Small Area Income and Poverty Estimation project (SAIPE) and the Small Area Health Insurance Estimation project—that currently produce estimates for small areas. It also reports on another approach that is gaining traction in the statistical community: the combination of data from multiple surveys.

THE SMALL AREA INCOME AND POVERTY ESTIMATION MODEL

The SAIPE project is an ongoing Census Bureau program to estimate numbers of low-income school-age children by state, county, and ultimately school district, based on data from the CPS, tax information, the Food Stamp Program, and the latest decennial census. SAIPE has a long history. It has been the subject of extensive development at the Census Bureau and evaluation by a previous National Research Council panel. The SAIPE approach to county-level estimates was developed in response to legislation in 1994 (National Research Council, 2000, p. 3) calling for the Census Bureau to supply “updated estimates” of county-level child poverty for use in allocating Title I education funds to counties in 1997-1998 and 1998-1999, and thereafter to provide estimates at the school district level.

William Bell pointed out at the workshop that the SAIPE was designed to respond to the prototypical small-area estimation problem: although some surveys produce reliable estimates at the national level, subnational (e.g., state or county) estimates are desired, and the sample is not large enough to support them. The idea is to apply a statistical model to “borrow information” across areas and from other data sources to improve estimates. The key features in a successful model are the other data sources used, the form and underlying assumptions of the model, and diagnostics that can be used to check model assumptions.

The SAIPE model follows this form, using as data sources sample surveys, administrative records, and census data. Except for a complete enumeration from the census, all of these sources have errors of one of three types: (1) sampling error, that is, the difference between the estimate from the sample and what is obtained from a complete enumeration done in the same way; (2) nonsampling error, that is, the difference between what is obtained from a complete enumeration and a population characteristic of interest (“desired target”); and (3) target error, that is, the difference between what a data source is estimating (its target) and the desired target. As an example of target error, Bell pointed out that the model uses food stamp participants to measure poverty, but Food Stamp Program participant data are only an approximation of the desired target.

Bell summarized the strengths and weaknesses of the main data sources for SAIPE purposes. The CPS, the primary national survey measuring population and poverty each year, provides the SAIPE program with national county-level child poverty estimates, through sample-weighted estimates of the numbers and the proportion of poor children among children aged 5-17 related to the primary householder (poor related school-age children). Since 2005, these data have been obtained from the ACS. Both the CPS and the newer ACS produce direct poverty estimates that are up to date but that have large sampling errors for “small” areas. The administrative predictors are the county numbers of child tax exemptions for families in poverty and of all child exemptions reported on tax returns, along with county numbers of households participating in the Food Stamp Program. Although there is no sampling error in these administrative data sources, there is target error, in that the data are not collected specifically to measure poverty.

The SAIPE uses a basic univariate model developed by Bob Fay and Roger Herriot in 1979. In this model,

$$y_i = Y_i + e_i = x_i'\beta + u_i + e_i, \qquad i = 1, \ldots, m,$$

given:

$y_i$ = direct survey estimate of population quantity $Y_i$ for area $i$,

$x_i$ = vector of regression variables for area $i$,

$\beta$ = vector of regression parameters,

$u_i$ = area $i$ random effect (model error) ~ i.i.d. $N(0, \sigma_u^2)$ and independent of $e_i$, and

$e_i$ = survey errors ~ ind. $N(0, v_i)$ with $v_i$ assumed known.

In application, the SAIPE produces a state-level poverty rate for children aged 5-17. The direct estimates $y_i$ were originally from the CPS, but since 2005 they have been taken from the ACS. The regression variables in $x_i$ include a constant term and, for each state, a pseudo state child poverty rate from tax return information, a tax “nonfiler rate,” a Food Stamp Program participation rate, and a state poverty rate for children aged 5-17 estimated from the previous census, or residuals from regressing previous census estimates on other elements of $x_i$ for the census year.

The actual model is estimated as follows:

-

Given

and the υi, let

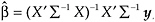

and the υi, let  , estimate β by WLS:

, estimate β by WLS:

where y = (y1,…, ym)′, and X is m × r with rows

.

. -

Given, υi, estimate

by the method of moments, ML, REML, or do so via the Bayesian approach.

by the method of moments, ML, REML, or do so via the Bayesian approach. -

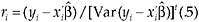

Use the standardized residuals:

for model diagnostics.

for model diagnostics.
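The estimation steps above can be illustrated with a minimal sketch, assuming a single regression variable plus an intercept so that the WLS fit has a closed form. The function names (`wls_line`, `weighted_rss`, `fit_fay_herriot`) are hypothetical. The moment equation solved here (weighted residual sum of squares equal to $m - r$) is one common method-of-moments choice, not necessarily the one used in SAIPE production. The standardized residuals use the simple approximation $\sqrt{\hat\sigma_u^2 + v_i}$ in the denominator, which ignores the error in estimating $\beta$.

```python
from math import sqrt


def wls_line(x, y, w):
    """Weighted least squares fit of y on an intercept and one regressor x."""
    sw = sum(w)
    xbar = sum(wi * xi for wi, xi in zip(w, x)) / sw
    ybar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxx = sum(wi * (xi - xbar) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - xbar) * (yi - ybar) for wi, xi, yi in zip(w, x, y))
    b1 = sxy / sxx
    b0 = ybar - b1 * xbar
    return b0, b1


def weighted_rss(x, y, v, sigma2_u):
    """Weighted residual sum of squares with weights 1 / (sigma2_u + v_i)."""
    w = [1.0 / (sigma2_u + vi) for vi in v]
    b0, b1 = wls_line(x, y, w)
    return sum(wi * (yi - b0 - b1 * xi) ** 2 for wi, xi, yi in zip(w, x, y))


def fit_fay_herriot(x, y, v, n_iter=100):
    """Estimate beta and sigma2_u; return standardized residuals as well.

    Assumption: sigma2_u solves weighted_rss(sigma2_u) = m - r by bisection
    (r = 2 here), truncated at zero when the direct estimates already fit
    within their sampling variances.
    """
    target = len(y) - 2
    if weighted_rss(x, y, v, 0.0) <= target:
        sigma2_u = 0.0
    else:
        lo, hi = 0.0, 1.0
        # The weighted RSS decreases in sigma2_u, so expand until bracketed.
        while weighted_rss(x, y, v, hi) > target:
            hi *= 2.0
        for _ in range(n_iter):
            mid = 0.5 * (lo + hi)
            if weighted_rss(x, y, v, mid) > target:
                lo = mid
            else:
                hi = mid
        sigma2_u = 0.5 * (lo + hi)
    w = [1.0 / (sigma2_u + vi) for vi in v]
    b0, b1 = wls_line(x, y, w)
    # Approximate standardization: ignores uncertainty in the fitted beta.
    z = [(yi - b0 - b1 * xi) / sqrt(sigma2_u + vi)
         for xi, yi, vi in zip(x, y, v)]
    return b0, b1, sigma2_u, z
```

Calling `fit_fay_herriot(x, y, v)` with lists of regressor values, direct estimates, and known sampling variances returns the two regression coefficients, the estimated model error variance, and one standardized residual per area.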

A technique known as best linear unbiased prediction is used to improve the variance. In this case:

$$\hat Y_i = h_i y_i + (1 - h_i)\, x_i'\hat\beta,$$

where $h_i = \sigma_u^2 / (\sigma_u^2 + v_i)$.

One can also compute $\mathrm{Var}(Y_i \mid y, \text{model}) = \mathrm{Var}(Y_i - \hat Y_i)$. The model assumes $E(y_i) = E(Y_i) = x_i'\beta$, and the WLS fitting then implies that $E(x_i'\hat\beta) = E(Y_i)$.

Bell pointed out that, under this assumption, the model fitting linearly adjusts the $x_{ij}$ so that $x_i'\hat\beta$ estimates $Y_i$. For example, if there is only one regression variable, $x_i$, plus an intercept, then

$$x_i'\hat\beta = \hat\beta_0 + \hat\beta_1 x_i,$$

which is a linear adjustment of $x_i$ to estimate $Y_i$.

Variance improvement from modeling and the best linear unbiased prediction is reflected by

$$\%\ \text{diff} = 100 \times \frac{\mathrm{Var}(Y_i - \hat Y_i) - v_i}{v_i}.$$

Negative values of % diff represent improvements.
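The shrinkage behind best linear unbiased prediction can be sketched in a few lines, under stated assumptions. The function name `blup` is hypothetical. The prediction error variance uses the simplified form $\sigma_u^2 v_i / (\sigma_u^2 + v_i)$, which treats $\beta$ and $\sigma_u^2$ as known and so omits the extra variance from estimating them. The percent difference is taken relative to the direct sampling variance $v_i$.

```python
def blup(y_i, xb_i, v_i, sigma2_u):
    """Combine a direct estimate with a regression prediction for one area.

    y_i      : direct survey estimate
    xb_i     : regression prediction x_i' beta_hat
    v_i      : sampling variance of y_i (assumed known)
    sigma2_u : model error variance

    Returns (prediction, prediction error variance, percent difference
    from the direct variance). Assumption: parameters treated as known.
    """
    h = sigma2_u / (sigma2_u + v_i)           # weight on the direct estimate
    y_hat = h * y_i + (1.0 - h) * xb_i        # shrink toward the regression
    pred_var = h * v_i                        # equals sigma2_u*v_i/(sigma2_u+v_i)
    pct_diff = 100.0 * (pred_var - v_i) / v_i
    return y_hat, pred_var, pct_diff
```

Under these simplifications the percent difference reduces to $-100(1 - h_i)$, so areas with large sampling variance relative to $\sigma_u^2$ (small $h_i$) gain the most, which is consistent with larger gains for smaller states.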

Bell observed that the work on the SAIPE model indicates that small-area models can reduce variances of direct survey estimates for areas with small samples (and provide estimates for areas with no sample). In his presentation, he used examples from California, Indiana, and Mississippi, which showed that the model improved variances but that the improvements were much more substantial for the two smaller states (50-60 percent) than for California (26 percent). He also showed that the model estimates generally ignore nonsampling error in the direct survey estimates that provide the dependent variable in the model, which implicitly defines the target to be what the survey is estimating. He said that model fitting effectively adjusts the independent variables so that model predictions estimate the target defined by the dependent variable and that success in modeling depends on the quality of the data sources and the appropriateness of the model.

Another virtue of modeling is that various diagnostics (plots and tables) can be used to check the model. Bell referred to a National Research Council report (2000) on SAIPE that suggested a number of diagnostics to be applied to the models. These diagnostics were based on standardized residuals from the model plotted against various geographic areas and population groups to reveal patterns. In one test, for example, the Western census region showed negative residuals over a number of years, suggesting the need to modify the model.
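A residual diagnostic of this kind can be sketched as follows. This is an illustration only: the function names and the region labels in the usage note are hypothetical, and the check shown (a group whose mean standardized residual has the same sign in every year) is one simple way to surface a pattern like the one described, not the specific procedure in the National Research Council report.

```python
from collections import defaultdict
from statistics import mean


def mean_residual_by_group(residuals, groups):
    """Average standardized residual within each group (e.g., census region)."""
    by_group = defaultdict(list)
    for r, g in zip(residuals, groups):
        by_group[g].append(r)
    return {g: mean(rs) for g, rs in by_group.items()}


def is_one_sided(means_by_year, group):
    """True if the group's mean residual has the same sign in every year."""
    vals = [year_means[group] for year_means in means_by_year]
    return all(v < 0 for v in vals) or all(v > 0 for v in vals)
```

For example, one would call `mean_residual_by_group` once per year on that year's standardized residuals and region labels, collect the resulting dictionaries in a list, and pass the list to `is_one_sided` with a region name such as `"West"`.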

The lessons learned in the development and testing of the SAIPE model were carried over into the development of a model for health insurance in small areas.

SMALL AREA HEALTH INSURANCE ESTIMATION MODEL

The Small Area Health Insurance Estimation (SAHIE) model is an outgrowth of the SAIPE model, although it goes beyond SAIPE in that it estimates the numbers in income groups and then, within income groups, the number of uninsured.

Small-Area Health Insurance Estimation Coverage

At the workshop, Mark Bauder presented a paper coauthored by Brett O’Hara on the SAHIE model. He reported that SAHIE began on an experimental basis in 2005 in order to meet needs of the Centers for Disease Control and Prevention to estimate the population of low-income uninsured women in particular age groups. The first live estimates were published in 2007, and the SAHIE program currently publishes state health insurance coverage estimates by age, sex, race, Hispanic origin (i.e., demographic characteristics), and income categories (0-200 percent and 0-250 percent of the poverty threshold and the total poverty universe). For counties, SAHIE produces estimates for fewer items—age, sex, and income categories (0-200 percent or 0-250 percent of the poverty threshold and the total poverty universe). By 2009, the SAHIE program had matured sufficiently to be considered a production model, and now it produces health insurance coverage estimates on an ongoing basis.

In their paper, O’Hara and Bauder (Chapter 13, in this volume) assess the ACS-based SAHIE model by comparing its state estimates with a CPS-based SAHIE model, and then modifying the model to obtain ACS model-based estimates for more income categories. They observe that gains from model-based state estimates increase as the number of domains increases (e.g., the income categories). These potential changes to the model, based on the strengths of the ACS, are intended to create more useful or refined estimates of uninsured populations for policy makers and other stakeholders.

They also reported on research comparing the ACS-based SAHIE model with the current CPS-based model. The research shows that an ACS-based SAHIE model generally has lower measures of uncertainty compared with a CPS-based SAHIE model. This is not surprising given that 1 year of ACS data is needed rather than a 3-year average of data from the CPS ASEC. The research also tested whether the ACS survey data could support modeling more income-to-poverty ratios. They found that, for most small domains (state/age/race/sex estimates for each income group), the ACS model-based estimates offer a large improvement over using survey-only estimates. With ACS direct estimates, many of the domains at the state level have coefficients of variation large enough that the estimates should be used with caution or not at all.

According to the authors, the SAHIE estimates of uninsured people produce promising results, particularly when the ACS estimates are used as input. The SAHIE estimates could be a valuable tool for policy makers to evaluate and administer means-tested programs, such as Medicaid or the Children’s Health Insurance Program, and could be used to target funds for outreach to specific uninsured and underserved demographic groups. The state estimates could be used by states to estimate the number of uninsured people by income group, to assess program performance, and to assist in managing the program, for example, to approximate the total cost of subsidizing premiums for the health insurance exchanges.

O’Hara and Bauder report that research on the SAHIE model is continuing. Planned areas of research include using ACS direct variance estimates (replicate-based estimates of the variances of ACS estimates can be produced); relaxing model assumptions by investigating alternatives to assumptions of independence and of constant variances; developing predictors for insurance coverage, such as those that now predict income status; testing the model to incorporate more income groups (e.g., 250-300 and 300-400 percent of poverty) and age groups (e.g., 19-25-year-olds); and producing multiyear ACS estimates. With these improvements, it becomes more likely that the results of models could be used to supplement surveys as the official estimates of health insurance coverage for children.

Using Small-Area Models to Understand Survey Differences

In the discussion period following these presentations, Eric Slud, a member of the steering committee, suggested a way in which small-area models could be used in confirming and understanding systematic differences between health-insured estimates from multiple health surveys relating to subpopulations determined by income and age group. His suggestion was that each of the survey estimates of the size of such insured subpopulations could be modeled as a response variable, at levels of aggregation of state and sometimes smaller units, using the other survey estimates (aggregated as necessary) of related quantities as predictors. Some of the survey estimates might turn out to be highly predictable and reproducible from the others, and some might not.

He thought this might be a cost-effective way to document which of the survey estimates are most reproducible and predictable from the others and to calibrate them. Whether this could lead to a combined estimator of still higher quality would be a further worthwhile research issue. He suggested that an exploratory statistical analysis would be much cheaper than survey modifications, giving rise to a hope that this would be an appropriate path of investigation for the Census Bureau.

COMBINING INFORMATION FROM MULTIPLE SURVEYS

Nathaniel Schenker summarized his work on combining information from multiple surveys as a contribution to this session that highlighted alternate ways of strengthening estimates of uncovered children. His paper was based, in large part, on work published in a review article by Schenker and Raghunathan (2007).

He pointed out that there are several reasons for combining information from multiple surveys. They include the possibility of taking advantage of different strengths from different surveys and using one survey to supply information that is lacking from another. There are also reasons associated with handling various types of nonsampling errors, such as coverage error (when the population being sampled is different for some reason from the actual target population), errors due to missing data (nonresponse being one cause of missing data), and measurement or response error (when the variables of interest are measured with error).

He reported on three projects that involve combining information: combining estimates from a survey of households (the National Health Interview Survey, NHIS) and a survey of nursing homes (the National Nursing Home Survey, NNHS) to extend coverage; using information from an examination-based health survey (the National Health and Nutrition Examination Survey, NHANES) that uses measurement error models to predict clinical outcomes from self-report answers and covariates in order to improve on analyses of self-reported data in a larger interview-based survey; and combining information from the Behavioral Risk Factor Surveillance System survey (BRFSS) and the NHIS using Bayesian methods in order to enhance small-area estimation.

The combination of data from the NHIS and the NNHS was for the years 1985, 1995, and 1997. The NHIS samples households and collects data with regard to chronic conditions. In the NNHS, nursing homes are sampled and the nursing home staff provide information on the diagnosis of selected residents from medical records. For those aged 85 and over, 21 percent were in nursing homes, so improving coverage of this population required a combination based on design-based prevalence estimates from the two surveys for various chronic conditions.
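The idea of extending coverage by combining design-based prevalence estimates from two complementary surveys can be sketched as a population-share weighted average. This is a minimal illustration, not necessarily the estimator used in the study: the function name is hypothetical, the population share is treated as known without error, and the two surveys are assumed to be independent so that their variances add.

```python
from math import sqrt


def combine_prevalence(p_household, se_household, p_nursing, se_nursing,
                       share_nursing):
    """Combine prevalence estimates for two non-overlapping subpopulations.

    p_household, se_household : estimate and standard error for the
                                household population (e.g., from a
                                household survey)
    p_nursing, se_nursing     : estimate and standard error for nursing
                                home residents
    share_nursing             : fraction of the target population living
                                in nursing homes (assumed known)
    """
    w_n = share_nursing
    w_h = 1.0 - w_n
    p = w_h * p_household + w_n * p_nursing
    # Independence assumption: variances of the weighted parts add.
    se = sqrt((w_h * se_household) ** 2 + (w_n * se_nursing) ** 2)
    return p, se
```

For the population aged 85 and over, the 21 percent figure cited above would be the value of `share_nursing`; the prevalence estimates and standard errors would come from the two surveys.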

Schenker’s second example used information from the NHANES to improve on analysis of self-reported data, when they might not accurately reflect prevalence of chronic conditions because of the way the questions are asked. The NHANES was used because there is an interview phase and a physical exam, which provides actual clinical measures. In this study, measurement error or imputation models were fitted, and the fitted models were used to impute clinical outcomes for people in the NHIS. This work is akin to developing valid estimates of prevalence for small populations based on interviews in order to improve surveillance without the need for clinical measurements.

The third study used the BRFSS, a large survey with extensive coverage that, as a telephone survey, does not cover households without phones and has high nonresponse rates. Schenker made the point that this was a form of missing data problem because the data for the part of the population that was not sampled are missing.

These three studies yielded several lessons with possible applicability to the issues related to improving measurement of children’s health insurance coverage. First, combining information across surveys can yield gains, particularly when the surveys have complementary strengths. Second, the methods developed can become obsolete quickly, particularly when collection techniques are changing rapidly, as is the case with telephone surveys. Third, attention must be paid to such issues as context and mode, as the phrasing of the questions has a significant impact. And fourth, sample designs count. For example, very different results would be obtained from an area-level design rather than a person-level design.

Some of the more practical lessons learned include the tremendous amount of work that goes into sharing data and estimates among multiple collection agencies, owing to confidentiality concerns and different policies and priorities among the agencies, as well as issues with such matters as selection of software.

DISCUSSION

In the discussion that followed these three presentations on modeling and combining data, the following points were made:

- It is important, as in the SAHIE program, to refit the models every year, since the administrative data could change and affect the model coefficients between periods. This was important in 1997, when the welfare reform legislation changed the Food Stamp Program and affected how the Food Stamp variable worked in the models. The variable was dropped but eventually was reinstated after states settled their administrative procedures.

- There is concern that models may not be an acceptable way of making allotments of CHIP resources, although it was pointed out that Title I education funds are allocated using the results of the SAIPE model and have been for some time.

- It is important when dealing with administrative data in the models to keep in mind the biases that exist among the states that affect the data series. Sometimes these biases are difficult to measure, as they may be related to subtle program management differences.

- One such difference is state policies regarding how long children are maintained on the rolls even when no longer actively enrolled, due to 12-month continuous enrollment policies.

- Combining data series, as discussed by Schenker, has other promising attributes. One participant pointed to the possible use of such analysis in assisting states in finding prospective program participants on the basis of the results of the combination of surveys, comprising a more powerful identification tool than is presently possible using only the BRFSS.