1

Introduction

For more than half a century, specification of the quantities of nutrients needed to meet human requirements—dietary reference values—has been carried out at the national level in the United States and Canada. Reference values known in the United States as Recommended Dietary Allowances (RDAs) and in Canada as Recommended Nutrient Intakes (RNIs) were used well into the 1990s (IOM, 2008). They were established primarily to set nutrition and health policy (IOM, 2008) and have found broad application in government programs ranging from standards for school meals to the basis for food fortification. They have also been used to counsel individuals about dietary intake. Over the years, both governments have funded on-going updates and reviews of these reference values.

In 1994, in response to important changes in the nutrition field as well as the recognition that for many nutrients the single-value RDA or RNI did not meet the expanding needs for nutrient reference values, the Institute of Medicine (IOM) in Washington, DC, began an initiative to develop a new, broader set of values known as the Dietary Reference Intakes or DRIs (IOM, 2008). The U.S. and Canadian governments have jointly supported this initiative, and the resulting DRIs are now used in both countries. As a result of the initiative, the DRIs as reference values now

- Include an estimate of an average (or median) requirement as well as an estimate of an intake level that meets, and in turn exceeds, the needs of most (97.5 percent) of the population;
- Include upper levels of intake to ensure no harm from nutrient intake;
- Incorporate chronic disease indicators when the data allow; and
- Highlight concepts of probability and risk for defining reference values.

With this new model as a backdrop, the IOM in 1997 issued the first set of DRIs. The nutrients included in the first of what became a series of DRI reports were: calcium, phosphorus, magnesium, vitamin D, and fluoride (IOM, 1997). Therefore, the 1997 DRIs for calcium and vitamin D—the nutrients that are the topic of this 2010 review—have been in existence for 13 years. In 2008, the U.S. and Canadian governments made the decision that there were now sufficient new data to warrant funding another study of the DRIs for vitamin D (Yetley et al., 2009). They included calcium in this study because of its close inter-relationship with vitamin D. A 14-member ad hoc expert committee was convened by the IOM in 2009 to take on this task; its work was to be completed by 2010. Committee members had general expertise in the areas of vitamin D and calcium or a closely related topic area, with specific expertise related to endocrinology, bone and skeletal health, immunology, oncology, dermatology, cardiovascular health, pregnancy and reproductive nutrition, pediatrics and infant nutrition, epidemiology, cellular metabolism, toxicology and risk assessment, nutrition monitoring, biostatistics, and minority health and health disparities. Three members of the committee had served on other DRI committees.

The current consideration of the DRIs for vitamin D and calcium takes place at a time when the interest in vitamin D is enormous. This vitamin—with its hormone-related activities—has received much media attention and has been the subject of countless publications and lay press reporting of its benefits for an array of health outcomes. Concerns about widespread vitamin D deficiency in North American populations are often expressed. This committee’s focus was, first, to review objectively the existing evidence concerning the benefits and health outcomes associated with vitamin D as well as calcium, using the well-established scientific principles for judging the quality and relevance of data from intervention as well as observational studies. The members of the committee next integrated the available data and, within the context of the risk assessment approach for establishing DRIs, carried out activities to specify DRIs for calcium and vitamin D. The reference values established in 1997 were noted by the committee, but they were not binding on the committee’s work.

THE TASK

The charge to the committee was to assess current relevant data and update, as appropriate, the DRIs for vitamin D and calcium. The review was to include consideration of chronic disease indicators (e.g., reduction in risk of cancer) and other (non-chronic disease) indicators and health outcomes. The definitions of these terms are discussed below. Consistent with the framework for DRI development, the indicators to assess adequacy and excess intake were to be selected based on the strength and quality of the evidence and their demonstrated public health significance, taking into consideration sources of uncertainty in the evidence. Further, the committee deliberations were to incorporate, as appropriate, systematic evidence-based reviews of the literature.

Specifically, in carrying out its work, the committee was to:

- Review evidence on indicators to assess adequacy and indicators to assess excess intake relevant to the general North American population, including groups whose needs for or sensitivity to the nutrient may be affected by particular conditions that are widespread in the population such as obesity or age-related chronic diseases. Special groups under medical care whose needs or sensitivities are affected by rare genetic disorders or diseases and their treatments were to be excluded;
- Consider systematic evidence-based reviews, including those made available by the sponsors as well as others, and carefully document the approach used by the committee to carry out any of its own literature reviews;
- Regarding selection of indicators upon which to base DRI values for adequate intake, give priority to selecting indicators relevant to the various age, gender, and life stage groups that will allow for the determination of an Estimated Average Requirement (EAR);
- Regarding selection of indicators upon which to base DRI values for upper levels of intake, give priority to examining whether a critical adverse effect can be selected that will allow for the determination of a so-called benchmark intake;
- Update DRI values, as appropriate, using a risk assessment approach that includes (1) identification of potential indicators to assess adequacy and excess intake, (2) selection of the indicators of adequacy and excess intake, (3) intake-response assessment, (4) dietary intake assessment, and (5) risk characterization; and
- Identify research gaps to address the uncertainties identified in the process of deriving the reference values and evaluating their public health implications.

THE DIETARY REFERENCE INTAKE FRAMEWORK

The framework for DRI development has been described by others (IOM, 2006, 2008; Taylor, 2008) and will be outlined here to set the context for this report. The original framework for DRIs was put in place in 1994 (IOM, 1994), and the reviews of nutrients were completed in 2004. During the 4-year period between 2004 and 2008, the framework was the subject of discussions concerning needed improvements as well as its successes (IOM, 2008). The present DRI effort described in this report for vitamin D and calcium is the first to be issued since the 2004 to 2008 evaluative discussions.

In developing and enhancing the DRI framework, two goals were identified. The first is that the framework should ensure and foster transparency of the decision-making process. The second goal is that the framework should anticipate the need to make decisions in the face of limited data and, in turn, offer options for making scientific judgments. Scientific judgment in the face of limited data is important, given the interest in protecting public health and the reality that “no decision is not an option”—that is, a science-based judgment is more useful than no recommendation at all. In other words, the framework must operate under conditions of uncertainty.

The framework that has evolved for DRI development is increasingly recognized as akin to that developed in other fields and referred to as risk assessment. Risk assessment is a component of risk analysis, a process for managing situations where public health interventions and monitoring come into play. It analyzes and controls the “risks” that may be experienced by a population of interest (Taylor, 2008). In the case of DRI development, the “risk” is nutrient intakes that are too low or too high. Although the terminology associated with the discipline of risk analysis may at times be unfamiliar to those in the nutrition field, the discipline’s structure and application are a good match for DRI development (Taylor, 2008).

Risk analysis, as considered generically for all fields of study, typically is described as including three components: risk assessment, risk management, and risk communication. These are often illustrated as overlapping circles. The component known as risk assessment has received attention as an organizing scheme for the DRI study committee review process, and is described separately in a section below. Overall, however, the basic assumptions underlying all of risk analysis are relevant to DRI development. At its most basic, risk analysis is predicated on the assumption that scientific deliberations should be organized in a manner that meets user/sponsor needs while maintaining the scientific integrity of the assessment (NRC, 1983). Further, the following general assumptions of risk analysis relate directly to the overall development of DRIs, particularly concerning scientific judgments when uncertainties and limited data exist (Taylor, 2008):

- Failure to provide a reference value (“no decision”) is often not a viable option from the perspective of protecting public health. It is better to offer those operating in the public health arena an informed decision based on the best available scientific expertise and judgment, even if not perfect or very precise, than to offer no information, which by default provides no guidance for evaluating or dealing with the current situation.
- Available datasets are often incomplete, and scientific uncertainties must be dealt with through use of scientific judgment and judicious, transparent documentation.
- Meeting the scientific needs of users/sponsors requires a framework for ensuring understanding of the needs and a useful presentation of the scientific assessments, as well as the independence of the scientific evaluations and protection of the scientific reviewers from undue stakeholder influence.

Finally, the DRI framework recognizes the considerable utility in organizing and rating the available data through the use of systematic reviews (Taylor, 2008; Russell et al., 2009), which are now a well-established process in many fields of medicine. However, unlike a systematic review of a medical intervention, a systematic review for the relationship between nutrient intake and a health outcome is much broader. In contrast with focused clinical interventions, most nutrients have direct and indirect effects on a wide range of health outcomes and could potentially reduce the risk of chronic diseases. In turn, the breadth of outcomes—and thus research that needs to be assessed—is greater than that for a medical intervention; as a result, considerable care is required in formulating and prioritizing the key questions to be addressed (Chung et al., 2010).

Definition of Dietary Reference Intakes

The DRIs comprise several reference values that relate to the concept of a distribution of requirements and a distribution of intakes. These different values are tools for assessing and planning diets and are most applicable for use with groups of people because the exact nutritional requirements of an individual cannot be known. The application of DRI nutrient reference values for these general purposes is wide and diverse. They range from use by federal government agencies in making national nutrition policy or developing federal nutrition and food assistance programs, to work at the local level in assessing diets of groups and individuals. Public health protection and promotion is the common interest. Further, DRIs address nutrients in foods overall. Because people structure diets primarily by selecting individual foods as opposed to selecting a set of nutrients, an important role of government and related advisory groups has been the task of translating quantitative nutrient reference values into food-based recommendations for the generally healthy U.S. and Canadian populations. That was not the task of this committee, whose focus has been the quantitative nutrient requirements and upper levels of intake.

Currently, the mainstays of DRI development are the EAR and the Tolerable Upper Intake Level, or UL (also referred to at times as Upper Levels of Intake). The RDA is derived from the EAR and reflects an estimate of an intake that meets the requirements of 97.5 percent of the population. It is not a target intended to be met by all individuals, and intakes below the RDA cannot be assumed to be inadequate because the RDA by definition exceeds the actual requirements of all but 2 to 3 percent of the population. The Adequate Intake (AI) was originally incorporated into the framework to address the inevitable uncertainties associated with specifying requirements for infants, given the challenges in obtaining sufficient information for this group, but its use has expanded to cases in which the available data for any life stage group are too limited to establish a requirement. The AI is the subject of some debate, given that it does not appear to readily “fit” into the probability assumptions for DRI use (Taylor, 2008). There are also other reference values, as described in other IOM documents (IOM, 2006), but as these are not relevant to this report, they are not described here.

Estimated Average Requirement

The EAR is the average daily nutrient intake level that is estimated to meet the nutrient needs of half of the healthy individuals in a life stage or gender group. Although the term “average” is used, the EAR is actually an estimated median requirement (IOM, 2006). Therefore, by definition, the EAR exceeds the needs of half of the population and is less than the needs of the other half (Taylor, 2008).

The 1994 to 2004 DRI process placed emphasis on the distribution of requirements for a population, rather than focusing on a single value constructed to “cover” the great majority of the population, as had been the case in earlier efforts (Taylor, 2008). This, along with the development of newer methodologies for assessing and planning adequate intakes for groups, made the EAR a central reference value, along with the UL. The 10 years of DRI development moved the process from a black-and-white cutoff in the form of an RDA to consideration of a probability model. Doing so made it clear that there is a distribution of requirements in the population (Taylor, 2008).

The EAR itself presents little controversy as an expressed reference value. Beyond the question of how to handle EAR estimation in the face of limited data, most of the issues that surround EAR development are related to the uncertainty surrounding the value and ensuring appropriate discussions about the variation in requirements. A challenge lies in obtaining adequate data to allow a reasonable approximation of the variability in requirements and hence the distribution of the requirement among individuals (Taylor, 2008).

Recommended Dietary Allowance

The RDA is calculated from the EAR. It is dependent upon estimating the variance around the EAR and reflects a point estimate defined generally as two standard deviations above the EAR (Taylor, 2008). Although some refer to this reference value as “the requirement plus a safety factor,” this is potentially misleading in that it underplays the importance of the variability around the median. The RDA is intended to reflect the EAR plus two standard deviations.

This RDA calculation starts with the assumption that the distribution of a nutrient requirement is generally normal. However, this is not the case for a number of nutrients. There is also the need to describe the variance around the EAR. Such data are usually limited; when the variance is not known, the coefficient of variation is assumed, commonly as 10 percent. There is concern expressed by some that RDAs cannot be considered to be scientifically derived because too often the variance around the EAR cannot be determined precisely from the available data, and is therefore unknown, and the assumptions made about the variance may be inappropriate (Taylor, 2008).

The estimation of the RDA results in a value that is above the intake required for about 97.5 percent of the population. The RDA thus exceeds the requirements of nearly all members of the life stage group. Current guidance (IOM, 2000a, 2003) stipulates that the RDA is useful for some applications with individuals, but it is not appropriate when working with groups of persons for the purposes of assessing and planning for nutrient intake (Taylor, 2008).
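The calculation described in this section can be illustrated with a minimal sketch. It assumes a normally distributed requirement and the commonly assumed coefficient of variation of 10 percent; the function name and the EAR value of 100 units per day are hypothetical and are not taken from any DRI table.

```python
from statistics import NormalDist


def rda_from_ear(ear: float, cv: float = 0.10) -> float:
    """Return EAR plus two standard deviations, with SD = cv * EAR."""
    if ear <= 0 or cv < 0:
        raise ValueError("ear must be positive and cv must be non-negative")
    sd = cv * ear
    return ear + 2 * sd


# Hypothetical EAR, in arbitrary units per day (illustration only).
ear = 100.0
rda = rda_from_ear(ear)  # with cv = 0.10 this equals 1.2 * EAR

# Share of a normally distributed requirement that lies at or below the RDA.
coverage = NormalDist(mu=ear, sigma=0.10 * ear).cdf(rda)
print(rda, round(coverage, 3))
```

Under these assumptions the RDA is 1.2 times the EAR, and the covered share is close to the "about 97.5 percent" cited in the text. When the requirement distribution is not normal, as is the case for a number of nutrients, this shortcut does not apply.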

Adequate Intake

The possibility of the AI—except for reference values for infants—was not considered when the DRI framework was first developed in 1994 (IOM, 2008). The AIs emerged as a result of the deliberations of the early study committees during the implementation of the initial DRI process. When the available data were judged lacking for the purposes of estimating an EAR, an AI was set. The value was seen as filling the gap that would have existed had no value been issued (Taylor, 2008).

The AI is defined as a value based on observed or experimentally determined estimates of nutrient intake by a group of people who are apparently healthy and assumed to be maintaining an adequate nutritional state. Examples of adequate nutritional states include normal growth, maintenance of normal levels of nutrients in plasma, and other aspects of nutritional well-being or general health. The AI is obviously derived differently from the EAR/RDA, and a distribution of requirements cannot be offered.

Tolerable Upper Intake Level

The UL is the highest average daily nutrient intake level likely to pose no risk of adverse health effects for nearly all people in a particular group. As intake increases above the UL, the potential risk of adverse effects may increase; that is, the UL is the level above which the risk for harm begins to increase. The need to set a UL grew out of two major trends: increased fortification of foods with nutrients and the use of dietary supplements by more people in larger doses (IOM, 2006).

The UL is not a recommended level of intake, but rather the highest intake level that can be tolerated without the possibility of causing adverse effects in most people. The value applies to chronic daily intake among free-living persons in the community (IOM, 2006). It has often been misused as a determination of levels to be allowed in controlled clinical trials. However, ULs are not defined to fit this purpose, and higher levels may be approved for controlled research purposes if there is a rationale for the levels to be used and if monitoring and other safety precautions are put in place. Rather, the UL is meant for public health protection. The biggest challenge in establishing ULs is the paucity of data indicating the effects of chronic intakes of high levels of nutrients. Experimental animal data as well as observational data are useful and relevant under these circumstances.

Applications of DRIs

The application of the DRIs in real world settings has been the subject of detailed IOM reports (IOM, 2000a, 2003). The EAR is the foundation of DRI development and is relevant to the planning and assessing of diets as they relate to population groups. The EAR is a reference value often important to the government sponsors of the report who may use requirement distributions to set national food policy, establish criteria for food programs, and make decisions about the adequacy of the food supply.

An individual’s nutrient requirement cannot be readily determined, and the use of DRIs for the purposes of assessing and planning diets of individuals is challenging. If an individual’s daily intake is typically below the EAR, there is likely a need for improved intake. If daily intake is typically between the EAR and the RDA, there is probably a need for improvement because the probability of adequacy, although more than 50 percent, is less than 97.5 percent. However, intakes below the RDA cannot be assumed to be inadequate because the RDA by definition exceeds the actual requirements of all but 2 to 3 percent of the population; many with intakes below the RDA may be meeting their individual requirements (IOM, 2006).
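The probability statements above can be made concrete with a simplified sketch. It assumes that requirements are normally distributed around the EAR with a coefficient of variation of 10 percent and that the individual's usual intake is known exactly. Both are simplifications, since day-to-day variation in intake is ignored. The function name and all numeric values are hypothetical.

```python
from statistics import NormalDist


def probability_of_adequacy(usual_intake: float, ear: float, cv: float = 0.10) -> float:
    """Probability that a usual intake meets or exceeds a person's requirement.

    Assumes requirements follow a normal distribution with mean EAR and
    standard deviation cv * EAR (an illustrative assumption).
    """
    requirement = NormalDist(mu=ear, sigma=cv * ear)
    return requirement.cdf(usual_intake)


# Hypothetical EAR of 100 units per day; the RDA would then be 120.
for intake in (80.0, 100.0, 110.0, 120.0):
    print(intake, round(probability_of_adequacy(intake, ear=100.0), 3))
```

In this sketch an intake equal to the EAR gives a probability of 0.5, and an intake equal to the EAR plus two standard deviations gives a probability near 0.98, which matches the qualitative description in the text.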

Life Stage Groups

The DRIs are expressed on the basis of reference values for a number of different life stage groups. These life stages have been stipulated generally on the basis of variations in the requirements of all the nutrients under review. A recent IOM report (IOM, 2006) described these general groupings as follows.

Infancy

Infancy covers the first 12 months of life and is divided into two 6-month intervals. In this report infancy is designated as 0 to 6 months (meaning from birth to 5.9 months or about the first 182 days of life) and as 6 to 12 months (meaning from 6.0 months to 11.9 months or approximately the second 182 days of life). Intake is relatively constant during the first 6 months after birth. That is, as infants grow, they ingest more food; however, on a body-weight basis their intake remains the same. During the second 6 months of life, growth rate slows. As a result, total daily nutrient needs on a body-weight basis may be less than those during the first 6 months of life (IOM, 2005). In general, special consideration was not given to possible variations in physiological need during the first month after birth or to the intake variations that result from differences in milk volume and nutrient concentration during early lactation (IOM, 2005). Specific recommended intakes to meet the needs of formula-fed infants are not set as part of the DRI process.

Children: Ages 1 Through 3 Years

In terms of height, toddlers experience a faster growth rate compared with older children, and this distinction provides the biological basis for establishing separate recommended intakes for 1- to 3-year-olds compared with 4- to 8-year-olds. However, data on which to base DRIs for toddlers are often sparse; in many cases, DRIs must be derived by extrapolating data taken from the studies of infants or adults.

Children: Ages 4 Through 8 Years

During early childhood, children ages 4 through 8 or 9 years (the latter depending on the onset of puberty in each gender) undergo major changes in growth rate and endocrine status. For many nutrients, a reasonable amount of data on nutrient intake has been available, and various criteria for adequacy serve as the basis for nutrient reference values for this group. For nutrients that lack data on the requirements of children in this age group, the nutrient reference values must be based on extrapolations from other life stage groups.

Children/Adolescence: Ages 9 Through 13 Years and 14 Through 18 Years

The adolescent years are divided into two categories. Several conclusions support the biological appropriateness of creating two adolescent age groups within the DRI framework (IOM, 2006):

- The mean age of onset of breast development for white girls in North America is 10 years; this is a physical marker for the beginning of increased estrogen secretion (in African American girls, onset is about a year earlier, for unknown reasons).
- The female growth spurt begins before the onset of breast development, thereby supporting the grouping of 9 through 13 years.
- The mean age of onset of testicular development in boys is 10.5 through 11 years.
- The male growth spurt begins 2 years after the start of testicular development, thereby supporting the grouping of 14 through 18 years.

Young Adulthood and Middle Age: Ages 19 Through 30 Years and 31 Through 50 Years

Adulthood was divided into two age groups, in part due to consumption of higher nutrient intakes during early adulthood compared with later in life. Mean energy expenditure decreases from ages 19 through 50 years, and nutrient needs related to energy metabolism may also decrease (IOM, 2006).

Older Adults: Ages 51 Through 70 Years and Over 70 Years

The age period of 51 through 70 years spans active work years for most adults. After age 70, people of the same age increasingly display different levels of physiological functioning and physical activity (IOM, 2000b). Age-related declines in nutrient absorption and kidney function also may occur.

Pregnancy and Lactation

Unique changes in physiology and nutrition needs occur during pregnancy and lactation. For the DRI framework, consideration is often given to the following factors:

- The needs of the fetus during pregnancy and the production of milk during lactation;
- Adaptations to increased nutrient demand, such as increased absorption and greater conservation of many nutrients; and
- Net loss of nutrients due to physiological mechanisms, regardless of intake.

Owing to the last two factors, for some nutrients there may not be a basis for setting reference values for pregnant or lactating women that differ from the values set for other women of comparable age.
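For readers who work with intake data, the age-based life stage groups described above can be expressed as a simple lookup. The sketch below is illustrative only: the function name and table layout are hypothetical, ages are given in completed or fractional years, and pregnancy and lactation are deliberately not handled because they are not defined by age alone.

```python
# Upper age bound (exclusive, in years) paired with the life stage label
# used in the text above.
LIFE_STAGE_GROUPS = [
    (0.5, "0 to 6 months"),
    (1.0, "6 to 12 months"),
    (4.0, "1 through 3 years"),
    (9.0, "4 through 8 years"),
    (14.0, "9 through 13 years"),
    (19.0, "14 through 18 years"),
    (31.0, "19 through 30 years"),
    (51.0, "31 through 50 years"),
    (71.0, "51 through 70 years"),
]


def life_stage_group(age_years: float) -> str:
    """Map an age in years to the corresponding age-based life stage group."""
    if age_years < 0:
        raise ValueError("age_years must be non-negative")
    for upper_bound, label in LIFE_STAGE_GROUPS:
        if age_years < upper_bound:
            return label
    return "over 70 years"


print(life_stage_group(0.25))  # an infant in the first 6 months
print(life_stage_group(12))    # falls in the 9 through 13 years group
print(life_stage_group(75))    # falls in the over 70 years group
```

Keeping the boundaries in one table makes the grouping easy to audit against the text and easy to adjust if a report defines the groups differently.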

Indicators for DRI Development

Indicators for DRIs are defined as the health outcomes that serve as the basis for estimating a nutrient requirement. Within the fields of biology and medicine, the term “indicators” has been defined differently, and in some cases the definition may not be the same as that used for DRI purposes. Indicators for DRIs can take various forms, and many different indicators have been used in the more than 15 years of DRI experience (Taylor, 2008). The term in other settings encompasses what are variously referred to as endpoints, surrogates, biomarkers, or risk factors. Additionally, the term clinical outcome, also referred to as health outcome, is used to refer to the ultimate measurable effect of interest for nutrients, which is itself an indicator. Other measures preceding the occurrence of a clinical outcome can be predictive of the clinical outcome itself, although this is not necessarily the case, and they must be validated before this can be assumed.

The term biomarker, like the term indicator, is defined differently within different fields of study. In the field of nutrition it is often referred to in the same way in which this report uses the term indicator. In order for them to equate, however, the biomarker must be causally related to the outcome indicator. Important terms in common parlance are biomarker of exposure and biomarker of effect. The former is a validated measure that can be relied upon to reflect intake or exposure in the case of nutrients. A biomarker of effect is an indicator and can be relied upon to be causally related to and predictive of the health outcome of interest.

The guiding principles for selecting indicators as they are used in DRI development are that they must be feasible, valid, reproducible, sensitive, and specific (WHO, 2006). As pointed out by others (WHO, 2006), they must, however, be used intelligently and appropriately. In addition to causal association, general characteristics of indicators for DRI development include the following:

- Changes in the indicator are plausibly related to changes in the risk of an adverse health outcome.
- Changes in the indicator are usually outside the homeostatic range.
- Changes in the indicator are generally associated with adverse sequelae.
- Measurement of the indicator can be accomplished accurately and is reproducible between laboratories.

DRI Risk Assessment

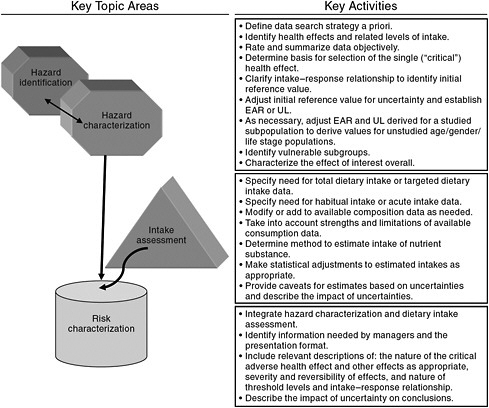

Beginning in the 1990s, the process of risk assessment formally entered into DRI development as the basis for the model for establishing ULs for nutrients (IOM, 1998). However, the risk assessment organizing scheme is as applicable to the activities focused on requirements for ensuring nutritional benefit (i.e., the EAR) as it is to establishing ULs. Risk assessment reflects a flexible, objective scientific scheme for making transparent and accountable decisions, whatever the indicator of interest. It is applied across a range of disciplines and has been generically described as shown in Figure 1-1.

The word “risk” causes some in the nutrition field difficulty, in that it does not seem appropriate to link the benefits of nutrient intake to the concept of “risk,” despite the ultimate purpose of reducing the risk for intakes too low to provide the health benefits (Taylor, 2008). Other risk assessment terminology may also seem inappropriate, such as the decision steps labeled as “hazard identification” and “hazard characterization,” as well as the final step of “risk characterization.” Nonetheless, the approach that has evolved for estimating EARs rests on a sequence of decisions that are similar to those specified within generic risk assessment (Taylor, 2008).

Given that the DRI development process couples the considerations for nutrient adequacy with those for excess intakes, there are advantages to applying the same organizing scheme for both ULs and EARs. For instance, incorporating the same general decision-making process to derive both adequate and excess intakes allows side-by-side comparisons of the process as it progresses. This could be of value in identifying unintended consequences or inconsistencies among the various DRI development activities. One example is the procedures used for extrapolation relative to EAR and UL values. Study committees would likely notice potential incompatibilities if the evaluations for both adequate and excess intakes were compared in a side-by-side risk assessment framework. Additionally, as the methodological challenges in the studies used to evaluate risks are likely to be associated with both inadequate and excess intakes, a consistent framework for analyzing both is logical (Taylor, 2008).

FIGURE 1-1 The four generic steps of risk assessment.

SOURCE: Modified from WHO (2006).

The steps associated with risk assessment, as applied in this report on vitamin D and calcium, are briefly described below.

Step 1: “Hazard Identification” or Indicator Review and Selection

The starting point for this report, as for all deliberations based on risk assessment, is the identification and review of the potential indicators to be used in developing the DRIs. Based on this review, the indicators to be used are selected. As described within the DRI framework, this step of indicator identification (or hazard identification) is outlined as follows.

-

Literature reviews and interpretation Subject-appropriate and well-done systematic evidence-based reviews as well as other relevant scientific reports and findings serve as a basis for deliberations and development of findings and recommendations for the nutrient under study. De novo literature reviews carried out as part of the study are well documented, including, but not limited to, information on search criteria, inclusion/exclusion criteria, study quality criteria, summary tables, and study relevance to the task at hand consistent with generally accepted methodology used in the systematic review process.

-

Identification of indicators to assess adequacy and excess intake Based on results from literature reviews and information gathering activities, the evidence is examined for potential indicators related to adequacy for requirements and the effects of excess intakes of the substance of interest. Chronic disease outcomes are taken into account. The approach includes a full consideration of all relevant indicators, identified for each age, gender, and life stage group for the nutrients under study as data allow.

-

Selection of indicators to assess adequacy and excess intake: Consistent with the general approach, indicators are selected based on the strength and quality of the evidence and their demonstrated public health significance, taking into consideration sources of uncertainty in the evidence. The selections reflect the state of the science and the public health ramifications of the indicators in that context. The strengths and weaknesses of the evidence for the identified indicators of adequacy and adverse effects are documented.

Step 2:

“Hazard Characterization” or Intake-Response Assessment and Specification of Reference Values

The intake–response (more commonly referred to as dose–response) relationships for the selected indicators of adequacy and excess are specified to the extent the available data allow. If the available information is insufficient, then appropriate statistical modeling techniques or other appropriate approaches that allow for the construction of intake–response curves from a variety of data sources are used. In some instances, most notably for the derivation of the UL relative to excess intake, a dose–response relationship cannot be described, and it is necessary to make use of specified levels or thresholds, specifically a no observed effect level or a lowest observed effect level. Further, the levels of intake determined for adequacy and excess are adjusted as required, appropriate, and feasible by uncertainty factors, variance in requirements, nutrient interactions, bioavailability and bioequivalence, and scaling or extrapolation.
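The adjustments described in Step 2 can be illustrated with a minimal Python sketch. It assumes two conventions that are not spelled out in this section: that an RDA is set two standard deviations above the EAR (covering about 97.5 percent of a normally distributed requirement), and that a UL is obtained by dividing a no observed or lowest observed effect level by an uncertainty factor. The function names, the default coefficient of variation of 10 percent, and all numeric inputs are hypothetical placeholders, not values from this report.

```python
def rda_from_ear(ear, cv=0.10):
    """Estimate an RDA as EAR + 2 SD, where SD = cv * EAR.

    Assumes requirements are roughly normally distributed, so that
    EAR + 2 SD covers about 97.5 percent of the group. The default
    cv is an assumption made for this sketch.
    """
    if ear <= 0 or cv < 0:
        raise ValueError("ear must be positive and cv non-negative")
    sd = cv * ear
    return ear + 2 * sd


def ul_from_effect_level(effect_level, uncertainty_factor):
    """Divide a no observed or lowest observed effect level by an uncertainty factor."""
    if effect_level <= 0:
        raise ValueError("effect level must be positive")
    if uncertainty_factor < 1:
        raise ValueError("uncertainty factor is assumed to be at least 1")
    return effect_level / uncertainty_factor


if __name__ == "__main__":
    # Hypothetical inputs chosen only to show the arithmetic.
    print(rda_from_ear(100.0))                # EAR of 100 units, CV of 10 percent
    print(ul_from_effect_level(1000.0, 2.0))  # effect level of 1000 units, factor of 2
```

The sketch covers only two of the adjustments listed above; nutrient interactions, bioavailability, and scaling or extrapolation would each require their own, data-dependent treatment.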

Step 3:

Intake Assessment

Consistent with risk assessment approaches, after the reference value is established, based on the information derived from scientific studies, an assessment of the current intake of (or exposure to) the nutrient of interest is carried out in preparation for the risk characterization step. That is, the known “exposure” to the substance (or the known intake in the case of nutrients) is examined in light of the reference value established. Where information is available, an assessment of biochemical and clinical measures of nutritional status for all age, gender, and life stage groups can be a useful adjunct.
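One simple way to picture the comparison described in Step 3 is to count how many intakes in a sample fall below a requirement-based reference value or above an upper level. The Python sketch below does only that. The function names, the intake list, and the two cutoffs are hypothetical, and an actual assessment would work from survey-based intake distributions rather than a short raw list.

```python
def share_below(intakes, cutoff):
    """Fraction of intakes strictly below the cutoff."""
    if not intakes:
        raise ValueError("intakes must not be empty")
    return sum(1 for x in intakes if x < cutoff) / len(intakes)


def share_above(intakes, cutoff):
    """Fraction of intakes strictly above the cutoff."""
    if not intakes:
        raise ValueError("intakes must not be empty")
    return sum(1 for x in intakes if x > cutoff) / len(intakes)


if __name__ == "__main__":
    # Hypothetical daily intakes (arbitrary units) and hypothetical cutoffs.
    sample = [400, 600, 800, 1000, 1200, 2600]
    adequacy_cutoff = 800   # stands in for a requirement-based reference value
    upper_cutoff = 2500     # stands in for an upper level
    print(share_below(sample, adequacy_cutoff))
    print(share_above(sample, upper_cutoff))
```

Keeping the two comparisons as separate functions mirrors the side-by-side treatment of adequacy and excess in the risk assessment framework.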

Step 4:

“Risk Characterization” or Discussion of Implications and Special Concerns

Risk characterization is a hallmark of the risk assessment approach. For DRI purposes, it includes an integrated discussion of the public health implications of the DRIs and how the reference values may need to be adjusted for special vulnerable groups within the normal population. As appropriate, discussions on the certainty/uncertainty associated with the reference values are included as well as ramifications of the committee’s work that the committee has identified as relevant to its risk assessment tasks.

THE APPROACH

The committee began its task in early 2009 and held a total of eight meetings through 2010. Committee members first reviewed the documents concerning the DRI framework (IOM, 2006, 2008; Taylor, 2008) so that members were well versed in the context of their work related to reference values. One of the committee’s first activities was to open a website where anyone could submit data or comments to the committee concerning vitamin D and calcium. Any information that was available to the public could be considered by the committee. During its first meeting, the committee made plans for a 1-day public workshop so that information could be presented and explained to the committee, and questions asked of stakeholders.

In order to set the stage for its review, the committee gathered current background information on the metabolism of calcium and vitamin D, including life stage differences in metabolism (Chapters 2 and 3). This information may be helpful to those less familiar with the biology and physiology of the two nutrients that are the subject of this report.

Consistent with the risk assessment approach, the committee then initiated the first step of risk assessment in Chapter 4—that is, the work to identify potential indicators. As described in Chapter 4, it reviewed the evidence related to those relationships that could potentially serve as the indicators for establishing DRIs. In order to ensure comprehensiveness, the committee included, as potential indicators, relationships that appeared marginal by standard scientific principles, as well as those suggested to be of interest by stakeholders.

An important set of analyses for the committee’s work was the evidence-based reviews on vitamin D (and vitamin D in combination with calcium) carried out by the Agency for Healthcare Research and Quality (AHRQ) (Cranney et al., 2007; Chung et al., 2009). These are referred to throughout the report as AHRQ-Ottawa and AHRQ-Tufts, respectively, at times without a specific reference citation. The methods and results chapters from AHRQ-Ottawa and AHRQ-Tufts are included in their entirety in the appendix section of this report. These large, comprehensive analyses were prepared by AHRQ at the request of the U.S. and Canadian governments and were conducted independently from this committee’s work. They provided valuable in-depth information on the quality of the available studies and the overall nature of the database for DRI development for vitamin D and to a lesser extent for calcium.

The AHRQ-Ottawa and AHRQ-Tufts analyses represent the current thinking on approaches to developing dietary reference values in which expansive and at times conflicting bodies of evidence must be arrayed and evaluated in as objective a manner as possible. The key to ensuring the relevance of such analyses to the DRIs, as well as their rigor and objectivity, is to integrate subject matter experts with methodologists at the planning stages of the systematic reviews. Although the importance of evidence synthesis in medicine was recognized in the 1970s, its widespread use has taken place more recently, especially with the concern that the judgments and opinions of experts could be inadvertently biased (Moher and Tricco, 2008). The questions identified for the analysis must reflect the physiological and biological issues, and the inclusion/exclusion criteria must be agreed upon and specified a priori. As described by Moher and Tricco (2008), the four main components of the relevant questions are (1) the population or problem; (2) the intervention, the independent variable, or exposure; (3) the comparators; and (4) the dependent variable or outcomes of interest. The movement to systematic reviews in the nutrition field has been the subject of recent discussion and has been called out as particularly relevant for nutrient reference value development (Russell et al., 2009). The utility of systematic reviews lies in their ability to analyze the available data objectively; their strength derives from including subject matter experts in both the planning and the review stages. The specific approach used for each of the AHRQ analyses is described in the methodologies section of each (Appendixes C and D) and includes the itemization of the questions asked for the analysis.
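The four question components listed by Moher and Tricco (2008) lend themselves to a small record type that makes a missing component easy to spot before a review begins. The following Python sketch is illustrative only: the class name, field names, and example values are hypothetical and are not taken from the AHRQ analyses.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class ReviewQuestion:
    """One systematic review question, split into its four components."""
    population: str               # (1) the population or problem
    exposure: str                 # (2) the intervention, independent variable, or exposure
    comparators: Tuple[str, ...]  # (3) the comparators
    outcomes: Tuple[str, ...]     # (4) the dependent variable or outcomes of interest

    def missing_components(self) -> List[str]:
        """Return the names of components that were left empty."""
        missing = []
        if not self.population.strip():
            missing.append("population")
        if not self.exposure.strip():
            missing.append("exposure")
        if not self.comparators:
            missing.append("comparators")
        if not self.outcomes:
            missing.append("outcomes")
        return missing


if __name__ == "__main__":
    # Hypothetical question; the wording is a placeholder.
    question = ReviewQuestion(
        population="adults",
        exposure="vitamin D supplementation",
        comparators=("placebo",),
        outcomes=(),
    )
    print(question.missing_components())
```

Making the record immutable reflects the requirement that questions and criteria be specified a priori rather than revised once the evidence is in hand.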

It is important to underscore that systematic reviews array much but not all of the data and can assist a DRI committee in identifying relevant indicators. But they do not and cannot establish nutrient reference values, nor do they replace the rigorous integration process and exercise of scientific judgment that characterizes DRI development. That process remains within the purview of the committee.

The committee actively identified other relevant studies not included in the AHRQ analyses or that were published after the close of the AHRQ analyses. These were included in the data consideration. Information from the committee’s open sessions as well as the work of committee consultants was also used. In this way, a totality of the body of evidence was established and carefully examined by the committee.

At the close of the literature review process, the committee selected the best indicators to serve as the basis of the DRI values (in Chapter 4). As shown in Chapter 5, the committee then moved to Step 2 in risk assessment, which was to consider the intake–response (or dose–response) relationships based on the available literature. The information identified in Chapter 4 underpins the conclusions reached in Chapter 5. As a result of these discussions, the committee specified reference values first for the purposes of adequacy (EARs, RDAs, and AIs; Chapter 5) and then for preventing excess intakes (ULs; Chapter 6). Step 3 in risk assessment followed, during which the committee performed an intake assessment using current national survey data from the United States and Canada (Chapter 7). For vitamin D, consideration was given to the measures of serum 25OHD concentrations available from national surveys.

In the final step, Step 4, the committee outlined the implications of its work and discussed population segments of interest (Chapter 8). Medical conditions that may relate to special calcium or vitamin D nutriture are specifically outside the scope of the work for this committee and are not addressed in this report. However, a few prevalent clinical groups (e.g., premature infants) are mentioned briefly in Chapter 8. Finally, consistent with its charge, the committee identified research needs for the further development of DRIs for calcium and vitamin D (Chapter 9).

Appendix A contains a glossary of terms, acronyms, and abbreviations. With the exception of the Summary and the tables that present the DRIs, this report expresses quantities of calcium as milligrams (mg) and quantities of vitamin D as International Units (IU). In some venues vitamin D is expressed as micrograms (μg), for which 1 μg is equivalent to 40 IU. Serum levels of 25-hydroxyvitamin D are expressed as nanomoles per liter (nmol/L), but are also often expressed elsewhere as nanograms per milliliter (ng/mL). Values expressed as nmol/L are divided by the conversion factor of 2.5 to obtain the equivalent measure in ng/mL. The Summary and the tables presenting the DRIs express vitamin D using μg as well as IU and express serum 25OHD levels using ng/mL as well as nmol/L.
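The unit conventions above reduce to two conversion factors, which the following Python sketch applies in both directions. The factors (40 IU per μg and 2.5 nmol/L per ng/mL) are the ones stated in the text; the function and constant names are hypothetical, and the example inputs are arbitrary.

```python
IU_PER_MCG = 40.0          # 1 microgram of vitamin D is equivalent to 40 IU
NMOL_L_PER_NG_ML = 2.5     # conversion factor for serum 25-hydroxyvitamin D


def vitamin_d_iu_to_mcg(iu):
    """Convert a vitamin D quantity from IU to micrograms."""
    return iu / IU_PER_MCG


def vitamin_d_mcg_to_iu(mcg):
    """Convert a vitamin D quantity from micrograms to IU."""
    return mcg * IU_PER_MCG


def serum_nmol_l_to_ng_ml(nmol_l):
    """Convert a serum 25-hydroxyvitamin D level from nmol/L to ng/mL."""
    return nmol_l / NMOL_L_PER_NG_ML


def serum_ng_ml_to_nmol_l(ng_ml):
    """Convert a serum 25-hydroxyvitamin D level from ng/mL to nmol/L."""
    return ng_ml * NMOL_L_PER_NG_ML


if __name__ == "__main__":
    # Arbitrary example inputs, not values from this report.
    print(vitamin_d_iu_to_mcg(400))     # 400 IU expressed in micrograms
    print(serum_nmol_l_to_ng_ml(50))    # 50 nmol/L expressed in ng/mL
```

Naming the factors as constants keeps each conversion and its inverse tied to the same value.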

In sum, Chapters 2 and 3 provide background information about the basic biology of calcium and vitamin D for the readers of this report, but they are not central to the risk assessment process that forms the foundation for this report. The risk assessment approach begins with Chapter 4, which presents a literature review and evaluation of potential indicators for the development of DRIs for adequacy; at the close of the chapter, the indicator to be used for that purpose is identified. Chapters 5 through 8 contain discussions related to the other steps of risk assessment as specified in the generic model, with Chapter 5 providing the reference values related to adequacy of calcium and vitamin D. Chapter 6 reviews the literature related to adverse events and specifies the ULs. Appendix B lists special issues of interest identified by the sponsors of this report and taken into account during committee deliberations.

Finally, it should be noted that this report is not intended to critique or reevaluate the specific conclusions arrived at in the 1997 DRI report related to calcium and vitamin D. Doing so would not be appropriate given the closed nature of those deliberations as well as the specific charge to this committee, which was to review the current state of the data and come to its own conclusions about DRI values. When necessary to clarify this committee’s conclusions, and as relevant to set these new reference values in context, mention is made of the 1997 report.

REFERENCES

Chung, M., E. M. Balk, S. Ip, J. Lee, T. Terasawa, G. Raman, T. Trikalinos, A. H. Lichtenstein and J. Lau. 2010. Systematic review to support the development of nutrient reference intake values: challenges and solutions. American Journal of Clinical Nutrition 92(2): 273-6.

Chung, M., E. M. Balk, M. Brendel, S. Ip, J. Lau, J. Lee, A. Lichtenstein, K. Patel, G. Raman, A. Tatsioni, T. Terasawa and T. A. Trikalinos. 2009. Vitamin D and Calcium: A Systematic Review of Health Outcomes. Evidence Report No. 183 (Prepared by the Tufts Evidence-based Practice Center under Contract No. HHSA 290-2007-10055-I.) AHRQ Publication No. 09-E015. Rockville, MD: Agency for Healthcare Research and Quality.

Cranney, A., T. Horsley, S. O’Donnell, H. A. Weiler, L. Puil, D. S. Ooi, S. A. Atkinson, L. M. Ward, D. Moher, D. A. Hanley, M. Fang, F. Yazdi, C. Garritty, M. Sampson, N. Barrowman, A. Tsertsvadze and V. Mamaladze. 2007. Effectiveness and Safety of Vitamin D in Relation to Bone Health. Evidence Report/Technology Assessment No. 158 (Prepared by the University of Ottawa Evidence-based Practice Center (UO-EPC) under Contract No. 290-02-0021). AHRQ Publication No. 07-E013. Rockville, MD: Agency for Healthcare Research and Quality.

IOM (Institute of Medicine). 1994. How Should the Recommended Dietary Allowances Be Revised? Washington, DC: National Academy Press.

IOM. 1997. Dietary Reference Intakes for Calcium, Phosphorus, Magnesium, Vitamin D, and Fluoride. Washington, DC: National Academy Press.

IOM. 1998. Dietary Reference Intakes: A Risk Assessment Model for Establishing Upper Intake Levels for Nutrients. Washington, DC: National Academy Press.

IOM. 2000a. Dietary Reference Intakes: Applications in Dietary Assessment. Washington, DC: National Academy Press.

IOM. 2000b. Dietary Reference Intakes for Vitamin C, Vitamin E, Selenium, and Carotenoids. Washington, DC: National Academy Press.

IOM. 2003. Dietary Reference Intakes: Applications in Dietary Planning. Washington, DC: The National Academies Press.

IOM. 2005. Dietary Reference Intakes for Water, Potassium, Sodium, Chloride, and Sulfate. Washington, DC: The National Academies Press.

IOM. 2006. Dietary Reference Intakes: The Essential Guide to Nutrient Requirements. Washington, DC: The National Academies Press.

IOM. 2008. The Development of DRIs 1994-2004: Lessons Learned and New Challenges: Workshop Summary. Washington, DC: The National Academies Press.

Moher, D. and A. C. Tricco. 2008. Issues related to the conduct of systematic reviews: a focus on the nutrition field. American Journal of Clinical Nutrition 88(5): 1191-9.

NRC (National Research Council). 1983. Risk Assessment in the Federal Government: Managing the Process. Washington, DC: National Academy Press.

Russell, R., M. Chung, E. M. Balk, S. Atkinson, E. L. Giovannucci, S. Ip, A. H. Lichtenstein, S. T. Mayne, G. Raman, A. C. Ross, T. A. Trikalinos, K. P. West, Jr. and J. Lau. 2009. Opportunities and challenges in conducting systematic reviews to support the development of nutrient reference values: vitamin A as an example. American Journal of Clinical Nutrition 89(3): 728-33.

Taylor, C. L. 2008. Framework for DRI Development: Components “Known” and Components “To Be Explored.” Washington, DC.

WHO (World Health Organization). 2006. A Model for Establishing Upper Levels of Intake for Nutrients and Related Substances: A Report of a Joint FAO/WHO Technical Workshop on Food Nutrient Risk Assessment. Geneva, Switzerland: World Health Organization.

Yetley, E. A., D. Brule, M. C. Cheney, C. D. Davis, K. A. Esslinger, P. W. Fischer, K. E. Friedl, L. S. Greene-Finestone, P. M. Guenther, D. M. Klurfeld, M. R. L’Abbe, K. Y. McMurry, P. E. Starke-Reed and P. R. Trumbo. 2009. Dietary reference intakes for vitamin D: justification for a review of the 1997 values. American Journal of Clinical Nutrition 89(3): 719-27.