3

Execution of the Modernization and Associated Restructuring

This chapter focuses on the implementation of the Modernization and Associated Restructuring (MAR) of the National Weather Service (NWS) from 1989 to 2000. The chapter provides an overview of the management and planning issues, technology upgrades, and the reorganization of field offices and the work force. The actual implementation is compared with the MAR execution objectives presented in the preceding chapter and summarized in specific findings about the major aspects of the MAR.

The MAR was “the most complex project ever carried out in the Department of Commerce” at the time (Hayes, 2011). Implementation occurred during a period of rapid technological change (including the emergence of the Internet) and involved a number of major systems deployed across a geographically diverse nation, several federal agencies, and the direct participation of three National Oceanic and Atmospheric Administration (NOAA) line offices (NWS, the National Environmental Satellite, Data, and Information Service [NESDIS], and the Office of Oceanic and Atmospheric Research [OAR]). Any undertaking of this scale requires rigorous management. A NOAA Deputy Assistant Administrator for Modernization was appointed to oversee the NEXRAD Joint System Program Office, the Office of Systems Development (which included the ASOS and AWIPS projects), the Office of Systems Operation, the Office of Hydrology, and the Transition Program Office. NWS established the Transition Program Office to coordinate activities among all the NWS offices involved in the MAR. Contracting, personnel management, external relations, and facilities construction were overseen by NOAA headquarters and the Department of Commerce (DOC; NRC, 1991).

Management Context and Constraints

To understand the MAR management, it is helpful to first identify key context and contemporary issues within which the MAR was implemented (NRC, 1980, 1991; NWS, 1989):

• Perception. The prevailing view was that NWS needed substantial improvement (Kraus, 2011; NRC, 1980); there were high expectations that the MAR would improve the agency.1

• Mission. The MAR did not seek to change the primary NWS role as the nation’s authoritative source of weather information. However, the MAR did change the manner in which NWS interacted with other weather information sectors.

• Operating Model. The NWS operating model of free weather-related services to the nation was not questioned and did not change during the MAR.2

__________________

1This was true both formally and informally; the MAR was expected to provide a substantially better cost-benefit ratio than “business as usual” with payback of investment in 1.6 years (NIST, 1992).

2It had been questioned during the 1980s, with substantial discussion regarding privatization of some or all elements of NWS (Booz Allen & Hamilton Inc., 1983). Many national weather services in other countries use operating models that differ from that of NWS.

• International Obligations. NWS needed to maintain its international obligations, most notably through the World Meteorological Organization (WMO), and none were altered by the MAR.

• Budget. NWS and NESDIS are parts of NOAA and the DOC and are thus subject to NOAA and DOC budget considerations as well as their own.3 Furthermore, NWS worked with the Federal Aviation Administration (FAA) on the Automated Surface Observing System (ASOS), and with FAA and the Department of Defense (DOD) on the Next Generation Weather Radar (NEXRAD).

• Downsizing Government. The NWS expected the MAR to increase the efficiency of its operations and downsize its organization with no degradation of weather services. The agency planned to reduce the number of field offices from 256 to about 120, and to reduce its staffing levels from a pre-MAR level of 5,100 to about 4,000 through restructuring and automation (GAO, 1995d; NWS, 1989).4 The long-term net savings in staffing costs were used as part of the justification for the MAR (NWS, 1989).

• Performance Guarantee. Congressional language (Public Laws 100-685 and 102-567) required certification that services would not be degraded. This was an important factor in deciding how the MAR would be executed, with several key processes tied directly to this issue.

• Congressional Politics. In addition to agency-level political issues, NWS was highly sensitive to state, district, and local politics because of the national distribution of field offices and the plan to close or move many of them. There was high potential for politically influenced involvement by Congress and the Administration, with resulting risks of delay and of disruption to the overall plan. This played out in numerous Congressionally requested reviews of individual office relocation plans (OAR, 2010) and even in specific legislative direction on the location of particular offices (U.S. Congress, 1992).

• Labor Relationships. NWS had strong union participation at the field office staff level (National Weather Service Employees Organization; NWSEO). The MAR did not include plans to change the role of NWSEO, but the proposed change in workforce structure meant NWSEO and its members were strongly affected. Prior to the MAR, NWS had generally maintained limited interaction with NWSEO (NRC, 1994a).

• Partnerships. NWS depended on many partnerships with government, academia, media, and private sector entities. At the time of the MAR, some of these partnerships were generally strong (e.g., with government, academia, research institutions, and technology firms), while others, such as those with the media and private sector meteorology firms, were informal to a fault or simply absent.

• Shared Responsibilities. The MAR elements of ASOS and NEXRAD required shared responsibility with FAA and DOD. This inevitably introduced challenges related to lines of authority and coordinated budgeting. Within NOAA, the shared responsibility with NESDIS for satellites was important, but mostly handled in a cooperative and constructive way.

• Public-Private Interaction. A growing private sector marketing weather products was increasingly performing functions of data acquisition, modeling, and delivery of customized products. At the time, there was considerable friction between NWS and the private sector regarding perceived conflict of roles (NRC, 2003a).

• Completeness. The MAR did not focus primarily on some elements of the enterprise, such as the River Forecast Centers (RFCs). Although development of these elements continued, they did not receive the same priority in planning, implementation, and oversight as the other elements of the MAR.

Budget and Schedule

Information sources available to the committee for assessing the budget and schedule performance of the MAR are surprisingly poor. The generally accepted authoritative sources are the GAO reports published throughout the MAR, which provide rather sparse supporting detail. The annual National Implementation Plans (NIPs) of the MAR documented budget requests; while these were not always identical to the funds expended, they provide some basis for interpreting the GAO numbers.

__________________

3One anecdotal comment was that “[i]t is sometimes easier to get funding for new programs than for sustaining existing ones” (Kraus, 2011).

4Staff was ultimately reduced from 5,200 to 4,700 while changing the mix from one third meteorologists and two thirds technicians to the opposite (NRC, 1994a; Sokich, 2011). There were proposals for more dramatic staff reductions early in the planning stages (Booz Allen & Hamilton Inc., 1983).

TABLE 3.1 Cost and schedule performance of the MAR as documented in GAO reports.

| MAR Element | Planned Cost ($M) | Final Cost ($M) | Planned Completion | Actual Completion |
|---|---|---|---|---|
| ASOS | 72*,a | 150**,b | 1990a | 1998c |
| NEXRAD | 340a | 800†,b | 1989a | 1996b |
| Satellite Upgrades†† | 640a,d | 2,000d | 1989a | 1994d |
| Advanced Computer Systems | 47.5e | 106b | 1994e | 1999b |
| AWIPS | 350a | 539b | 1995a | 2000b |
| Other Costs (facilities, staff, R&D) | ∼500 | ∼1,000‡ | n/a | n/a |
| TOTAL | 2,000a | 4,500f,g | 1994a,h | 2000 |

aGAO (1991a); bGAO (2000); cNadolski (2011); dGAO (1997c); eGAO (1994); fGAO (1997a); gGAO (1998a); hGAO (1995b). Detailed information about each of these information sources can be found in the Reference list at the end of the report.

The planned cost for ASOS is in 1986 constant dollars; for NEXRAD is in 1980 constant dollars; for satellite upgrades is in 1991 constant dollars; and for AWIPS is in 1985 constant dollars.

*This cost was for the 250 NWS locations initially planned.

**This cost was for the 314 NWS locations. The total cost for the 314 NWS locations and the 678 FAA and DOD locations was approximately $350 million (GAO, 2000).

†This cost was for the 125 NWS radars. The total cost of the 125 NWS, 12 FAA, and 29 USAF radars was approximately $1.2 billion (GAO, 2000).

††These budget figures are for the total GOES-Next system, including the government part of the effort, as well as the SS/L prime contract, the ITT subcontract, and various other subcontracts under SS/L (GAO, 1997c). The completion dates are for the launch of the first satellite in the series.

‡The actual amount of the Other Costs is hard to determine, but appears to be in the range of $900 to $1,200 million, as discussed in the text.

According to GAO, initial MAR planning anticipated completion within 5 years of the formal MAR start5 and within a budget of $2 billion (GAO, 1991a). From this and other GAO reports, it is possible to construct the overall view of cost and schedule performance shown in Table 3.1. Each element is described in more detail later in this chapter.

Executing on budget and schedule was among the biggest challenges of the MAR. From the start, cost overruns and schedule delays received considerable attention from GAO and Congress. Problems persisted throughout the MAR; GAO even designated it a high-risk Federal program in 1995 and 1997. The many GAO reports addressing these issues are discussed later in this chapter and listed in Appendix B.

Unlike the GAO, this committee had the luxury of reviewing cost and schedule issues in hindsight. Given this freedom, the committee identified a framework of four questions within which the review was accomplished:

1. Do the budget and schedule numbers reported in GAO reports and summarized in Table 3.1 accurately reflect the cost and schedule performance?

2. Were the cost and schedule issues encountered during the MAR out of the ordinary for projects of comparable magnitude?

3. What were the root causes of the cost and schedule issues?

4. What lessons can be learned for the future?

Question 1: Do the budget and schedule numbers reported in GAO reports and summarized in Table 3.1 accurately reflect the cost and schedule performance? While GAO cost and schedule numbers appear correct as cited, assessment of MAR cost and schedule performance is highly dependent on the GAO’s definitions of when program elements started and what they included. The committee believes that the chosen definitions lead to a distorted picture of MAR schedule and budget performance.

__________________

5While several GAO reports state that initial planning estimated that the MAR would be completed in 1994, the anticipated date of completion was in flux during the early stages of the MAR. The first National Implementation Plan, for example, estimated that the MAR would be completed in 1996 (NWS, 1990).

First, GAO chose to compare actual costs in real-year (inflated) dollars to planned costs in fixed-year dollars for all program elements. The NEXRAD system, for example, was proposed in 1980 at a cost not to exceed $340 million in 1980 dollars (JSPO, 1980). By the 1988 planned completion, inflation had contributed approximately a factor of 1.75 (the actual value depends on the details of year-by-year spending), suggesting the proposed cost should be adjusted to at least $600 million (even assuming that the original schedule had been maintained). Overall, inflation likely accounted for $800 million of the cited $2.5 billion cost overrun; GAO should have adjusted planned costs for this inflation to allow a proper comparison.
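The inflation argument above is simple arithmetic, and the short sketch below reproduces it. It uses a single escalation factor rather than a year-by-year price index; the 1.75 factor and the $800 million inflation share are the committee's estimates quoted in the text, and the function name `escalate` is a hypothetical helper introduced only for illustration.

```python
def escalate(constant_dollars_m: float, factor: float) -> float:
    """Convert a constant-year estimate ($M) to then-year dollars using one escalation factor.

    A single factor is a simplification; the real adjustment depends on the
    year-by-year spending profile.
    """
    return constant_dollars_m * factor


# NEXRAD: $340M in 1980 dollars, escalated by the ~1.75 factor cited in the text.
nexrad_then_year = escalate(340.0, 1.75)
print(f"NEXRAD adjusted estimate: ${nexrad_then_year:.0f}M")

# Program level: the committee attributes about $800M of the cited $2.5B overrun to inflation.
cited_overrun_m = 2500.0
inflation_share_m = 800.0
print(f"Overrun net of inflation: ${cited_overrun_m - inflation_share_m:.0f}M")
```

The adjusted NEXRAD figure comes out just under $600 million, and removing the inflation share leaves roughly $1.7 billion of the cited overrun.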

Second, GAO chose a cost and schedule baseline (project start) going back as far as a decade before formal MAR initiation (e.g., 1980 for NEXRAD); although these projects were executed by NOAA, they preceded MAR management. An alternate approach might have been to use the date of MAR initiation and compare final cost and schedule to those estimated at MAR initiation. The cited figure of $2 billion for planned cost was updated to $4.6 billion as early as 1991; an updated baseline might substantially change the assessment of actual performance. Indeed, by the end of the MAR the GAO calculated the completion cost of the four major systems (ASOS, NEXRAD, Next Generation Geostationary Environmental Satellite [GOES-Next], and AWIPS) at $3.5 billion (GAO, 2000), well under the $4.2 billion expected by GAO in 1991 (GAO, 1991a).6

Third, some costs appear to have been improperly accounted for by GAO, such as the inclusion of facilities costs in the NEXRAD cost prior to FY1992 (the original NEXRAD cost estimate explicitly excluded such costs). Facilities costs ran as high as $63 million per year in subsequent years; it is unclear how much from prior years was improperly included in the NEXRAD completion cost.

Fourth, it is not clear that all costs, such as the transient staff increase needed to execute the MAR, were properly included in the GAO reports. The difference between the sum of the program element costs shown in Table 3.1 and the cited MAR total cost appears to correspond to MAR-related cost elements not included by GAO but referenced in the NIP budgets. These internal R&D, construction, and temporary personnel costs were originally expected to be about $500 million. The actual cost is difficult to determine, but it appears to have been between $900 million and $1,200 million (NWS, 1990, 1991a, 1992b, 1993, 1994b, 1995, 1996c, 1997, 1998, 1999). If so, that would imply the total MAR cost was approximately $4.5 billion to $4.7 billion, comparable to the $4.5 billion cited by GAO. Other internal NWS costs essential to the MAR, such as the 1980s R&D work done on PROFS that was ultimately needed to implement AWIPS, are also not included. These would increase the cost further, though it can readily be argued that such R&D should fall under normal operating budgets rather than the MAR.
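The reconciliation described above can be checked directly against the Final Cost column of Table 3.1. The sketch below simply sums the five program elements and subtracts the result from the $4.5 billion total cited by GAO; the dictionary layout and variable names are illustrative, not taken from any GAO or NWS dataset.

```python
# Final costs in $M from Table 3.1 (GAO figures; NWS/NOAA portions only).
final_costs_m = {
    "ASOS": 150,
    "NEXRAD": 800,
    "Satellite Upgrades": 2000,
    "Advanced Computer Systems": 106,
    "AWIPS": 539,
}
gao_total_m = 4500  # total MAR cost cited by GAO

systems_sum_m = sum(final_costs_m.values())
implied_other_m = gao_total_m - systems_sum_m  # residual attributed to "Other Costs"

print(f"Sum of program elements: ${systems_sum_m:,}M")
print(f"Implied Other Costs:     ${implied_other_m:,}M")
```

The residual of roughly $0.9 billion sits at the low end of the committee's $900 million to $1,200 million estimate for Other Costs, which is why the two totals are described as comparable.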

In conclusion, the GAO cost numbers and schedules appear to be largely accurate based on a strict reading of GAO’s assumptions, but the ability to draw conclusions about MAR cost and schedule performance is limited by these assumptions. The strict GAO accounting implies a total MAR cost growth of 150 percent. The considerations described here suggest the actual value is considerably less under assumptions deemed more appropriate by the committee, but any particular number depends subjectively on the assumptions used.

Question 2: Were the cost and schedule issues encountered during the MAR out of the ordinary for projects of comparable magnitude? The answer to this question depends to some extent on the interpretation of Question 1 as to which cost and schedule issues should be attributed to the MAR. For comparison, recent studies of NASA programs of roughly comparable complexity show average cost growth ranging from 33 percent (Emmons et al., 2007) to 45 percent (CBO, 2004), while transportation infrastructure projects have had average cost overruns of about 28 percent (Flyvbjerg et al., 2002). These figures are adjusted for inflation, whereas the GAO numbers for the MAR are not. When the difference in inflation treatment is taken into account and external factors (such as the Challenger accident) are considered, MAR cost and schedule issues appear to be high but not substantially out of line with experience on similar projects. There is no question that issues with virtually all MAR elements persisted through MAR completion, as documented in GAO reports. But one could argue that, while these were all the responsibility of NOAA, many of the issues were inherited by the MAR and should not be attributed to it. As much as $1 billion had been spent prior to the formal MAR initiation, and many of the issues that subsequently plagued these program elements were already locked in by that time.

__________________

6GAO included the cost for the entire NEXRAD system in the 1991 estimate but only the NOAA portion in the 2000 summary.

Question 3: What were the root causes of the cost and schedule issues? GAO reported extensively on the problems with MAR elements in a contemporary context, but the root causes are not well described and remain difficult to identify from other sources. More than half of the total overrun occurred within the satellite upgrade program element alone. This overrun has been widely attributed to poor government oversight and technical problems encountered by the contractor (GAO, 1989, 1991b). While that attribution is correct, a deeper analysis reveals two major external contributing factors that are poorly represented in GAO summaries.

The first is inadequate initial costing of the launch component, a consequence of Shuttle launch pricing that did not reflect full costs, a practice used at the time to help justify the Shuttle program. Following the Challenger accident in 1986, GOES-Next switched to expendable launch vehicles and had to adjust launch costs to reflect market values.

The second is the cost-constrained government environment within which GOES-Next was conceived, which led to an ill-advised procurement plan that eliminated a critical development phase while at the same time calling for substantial technology advances.7 While each of the MAR elements had distinct issues, the common internal contributing factor appears to have been weakness of the procurement process. In all cases, it is difficult to separate the relative roles of an inadequate government contracting process and poor contractor performance within the procurements. Examples of both can be identified. What can be said is that these issues were largely set in place prior to MAR initiation. MAR management appears to have taken repeated steps to recover; the fact that the accepted MAR expenditure of $4.5 billion (GAO, 2000) is actually lower than the 1991 estimate of $4.6 billion (GAO, 1991a) is a testament to these efforts.

Question 4: What lessons can be learned for the future? Practical lessons unique to the MAR are difficult to identify beyond the many that apply to executing any large project. These lessons could fill their own report. Certainly, contemporary issues, such as the 1980s debate about limited government and the planned use of Shuttle launches priced below market cost, played a role. But no single issue stands out in the history as a clear MAR-specific lesson for the future. The following should thus be viewed as informed opinions of the committee rather than a definitive analysis of MAR performance.

The MAR clearly suffered from poor ‘project initiation’ when its roots in the early 1980s are considered. It was pulled together from previously initiated program elements with different management teams, varying procurement experience, and only partially aligned objectives. There was no integrating architecture until well into the MAR. At some level, the problems with each program element were independent of the others. But a common theme was an attempt to carry out complex development with procurement processes that were not up to the task (ASOS: GAO, 1995h; NEXRAD: GAO, 1995f; GOES-Next: GAO, 1991b; AWIPS: DOC, 1992). Weak procurement processes lead to poorly defined objectives, incomplete understanding of technical and programmatic risks, inadequate mitigation processes, overly rigid processes, and selection of contractors without sufficient experience or with design flaws in their proposals. Once these problems are set in place, program execution becomes a series of recovery actions. With the MAR, these issues had almost a decade to develop before coming under the MAR auspices. After MAR initiation, individual initiative seems to have been a critical element in completing the planned technological changes without further cost growth, although additional schedule delays occurred. The parallel development of a PROFS-based approach to replace the contracted AWIPS solution is one example, and an excellent case of flexibility built into the process to recover from unanticipated problems. Decisions during the MAR undoubtedly contributed to further cost and schedule issues, but the most important lesson appears to be the need to establish a procurement process with sufficient definition yet adequate flexibility to accommodate the challenges of complex system development.

__________________

7Specifically, the Phase B development phase was eliminated, something usually done only for systems that have little or no new technology development. The planned improvements included a switch from a spinning spacecraft to one that is three-axis stabilized and the corresponding switch from instruments that scan based on spacecraft motion to those that stare and perform scanning internally.

Organization and Staff

The MAR implemented significant changes in both organization and staffing. Prior to the MAR, the NWS culture was resistant to change. This was understandable given the experience with Automation of Field Operations and Services (AFOS), the only other significant technological upgrade that had been implemented NWS-wide. As the MAR plan was introduced, the staff generally accepted that change was inevitable. They were motivated to evolve the culture (Glackin, 2011), though they were anxious about the uncertainties of change. Planners anticipated these issues, but it is not clear that the human dimensions of the change were fully appreciated. Staffing levels underwent a temporary increase: 5,100 prior to the MAR, about 5,400 during the MAR, and evolving to 4,700 today (Friday, 2011; GAO, 1995d; Sokich, 2011).8 Such a temporary increase was to be expected during the changeover from pre-MAR to post-MAR operations (GAO, 1995d), while still meeting the Congressional mandate for no degradation of service (U.S. Congress, 1988). NWS promised employees and NWSEO that any staff reduction would occur by attrition only (Friday, 2011).9 The stated commitment to retain and formally retrain staff was essential to maintaining morale as well as to enlisting the cooperation of NWSEO, with the shared story being that staff would be better off as a result. Many NWS field office staff members recall that the change they encountered was hard at the time, but with years of hindsight they now see the change as worthwhile (committee member WFO site visits, see Appendix C for list of WFOs visited). Staff at RFCs were also affected by changes in office locations and staffing profiles, as well as new technologies and procedures for working with the WFOs. Other staff, such as those at the National Centers, were also affected through the consolidation of the centers.

It is appropriate to ask what ongoing cost savings were achieved by this staffing reduction. The staffing mix was about one-third meteorologists and two-thirds technicians prior to the MAR and the reverse afterward, with an overall reduction from 5,100 to 4,700 (GAO, 1995d; NRC, 1994a; Sokich, 2011). Meteorologists are grade GS-12 to GS-14 employees while technicians are GS-9 to GS-11. With typical GS pay rates, this implies an increase in overall staff cost of about 7 percent, though a more thorough analysis with actual personnel data could reach a slightly different conclusion. Had the originally planned reduction to a staff level of 4,038 (GAO, 1995d) been achieved, a savings of 8 percent would have been obtained instead. Whether this originally planned staffing reduction was a target or a commitment is unclear. The MAR Strategic Plan (NWS, 1989) stated ambiguously “…lower costs associated with more accurate and timely warning and forecast services are accomplished while concurrently increasing the benefits…” Furthermore, cost savings are measured against a baseline, and NWS argued in part that the deployment of new technology would otherwise have required additional staff. “If the new technological network were constrained by the current field office structure, required staffing levels and overall costs would increase unnecessarily” (NWS, 1989).
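Why a smaller staff can still cost more follows from the change in grade mix. The sketch below models total payroll with one assumed average-pay ratio between a meteorologist (GS-12 to GS-14) and a technician (GS-9 to GS-11). The ratio of 1.58 is a hypothetical value chosen for illustration, not a figure from the report or from a specific GS pay table; with it the sketch lands near the roughly 7 percent increase and 8 percent savings quoted above, while a ratio of 1.5 would shrink the increase to about 5 percent. The function name `staff_cost` is likewise illustrative.

```python
def staff_cost(headcount: float, met_fraction: float, pay_ratio: float) -> float:
    """Total payroll in units of one technician's average salary.

    met_fraction: share of staff who are meteorologists (the rest are technicians).
    pay_ratio: assumed average meteorologist salary / average technician salary.
    """
    meteorologists = headcount * met_fraction
    technicians = headcount * (1.0 - met_fraction)
    return meteorologists * pay_ratio + technicians


PAY_RATIO = 1.58  # hypothetical illustrative value, not from the report

pre_mar = staff_cost(5100, 1 / 3, PAY_RATIO)   # one-third meteorologists before the MAR
post_mar = staff_cost(4700, 2 / 3, PAY_RATIO)  # two-thirds meteorologists afterward
planned = staff_cost(4038, 2 / 3, PAY_RATIO)   # originally planned staffing level

print(f"Actual post-MAR change:  {100 * (post_mar / pre_mar - 1):+.1f}%")
print(f"Planned post-MAR change: {100 * (planned / pre_mar - 1):+.1f}%")
```

A single ratio ignores step increases, locality pay, and the actual distribution of grades, which is why a thorough analysis with personnel data could reach a somewhat different result.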

__________________

8It is noteworthy that this staffing level is small compared with weather agencies in some other industrialized countries, such as Japan and China, and certainly compared with Europe as a whole, where each country has its own meteorological service and several countries operate an equivalent of the NWS National Centers for Environmental Prediction (e.g., United Kingdom, France, Germany, a joint Scandinavian Center) in addition to the European Centre for Medium-Range Weather Forecasts. For example, Japan cites staffing of 5,555 during FY2008, and countries such as Germany, the United Kingdom, and France typically fall in the range of 2,000 to 4,000.

9Primarily retirement, though some staff left because they did not like the required relocation or personal changes (such as retraining from being a meteorological technician to being a professional meteorologist).

Processes

The MAR was executed using a wide variety of processes. These included the following:

• Planning and Documentation. Several NWS and National Research Council reports (e.g., NRC, 1980) preceded the MAR and set the stage for what was expected from it. Execution plans were documented in a strategic plan (NWS, 1989), a sequence of annual implementation plans (e.g., NWS, 1990) that tracked progress, and a well-defined set of site-specific and transition plans. External reviews (e.g., General Accounting Office [GAO], Modernization Transition Committee [MTC], NRC) also contributed.

• Plan Execution. Analysis of these reports shows that execution largely followed the original plan. Real-time issues forced some key changes. One good example is the transition of the majority of the development of the Advanced Weather Interactive Processing System (AWIPS) from a contracted provider to a NOAA entity. All of the major system procurements required frequent adjustments to respond to technical and programmatic issues.

• Organizational Dynamics. The placement of NWS within NOAA and DOC determined which processes were employed and how. Unlike a major technology-procuring agency such as DOD, DOC (with the possible exception of NESDIS) rarely undertakes an effort of the size and scope of the MAR, and therefore must create what is essentially a one-time process and assemble staff to undertake unique systems acquisitions. It follows that DOC has essentially no room for extended evaluation or internal budget and program adjustment; each decision becomes a budget decision.

• Process Flexibility and Individual Initiative. A critical contribution to MAR success was the individual initiative to deviate from process where it made good sense. Persistence and individual initiative from senior staff and the general workforce were in many cases critical to success when process alone could not overcome impediments.

• Oversight. Many oversight bodies examined and influenced the MAR process. This topic is addressed more completely later in this chapter.

• Communication. The original MAR plan encouraged active communication channels with Congress, the private sector, NWSEO, oversight entities, and other stakeholders. The continuing communication and outreach to partners through these channels was critical to MAR success.

• Validation. The AFOS program of data collection established a performance baseline against which performance improvements could be validated. By the final MAR annual report (NWS, 1999), statistics were available for improvements in tornado warning accuracy and lead time, flash flood warnings, hurricane landfall prediction, and other metrics. However, systematic, long-term validation of surface weather forecasts over the United States is not widely available to the public outside the NWS.

• Commissioning. The commissioning process evolved from an initial ad hoc effort to a regular and repeatable process as the MAR progressed. This process satisfied the Congressional language mandating no degradation of services.

Finding 3-1

During the Modernization and Associated Restructuring (MAR) period from 1989 to 2000, the major components of the MAR were well planned and completed largely in accordance with that plan. Established processes were extensive and generally followed. However, notable budget overruns and substantial schedule delays occurred for nearly all of the project elements. This was due in large part to the MAR aggregating four major technology programs that had been separately initiated during the 1980s. Many of the MAR’s cost and schedule issues were set in place by decisions that occurred during this pre-MAR period.

As described in Chapter 2, the MAR included the development, procurement, and deployment of technologies in five major areas: surface observations, the radar network, satellites, computing upgrades, and a forecaster interface to integrate the data and information made available by the other elements of the modernization. The systems procured as part of the MAR all involved major technology upgrades, which require long lead times, on the order of many years, and in the case of satellite systems, on the order of a decade. One of the strengths of the MAR was the development, prototyping, and demonstration of operating concepts through a number of risk reduction activities. The MAR planning included the Modernization and Associated Restructuring Demonstration (MARD), which was intended to showcase the new capabilities of the modernized NWS (NWS, 1989, 1990). The Program for Regional Observing and Forecasting Services (PROFS) created a laboratory that used prototypes of NEXRAD and AWIPS to develop operating concepts for the post-MAR weather offices. The risk reduction activities also included the Denver AWIPS Risk Reduction and Requirements Evaluation (DAR3E) and the Norman AWIPS Risk Reduction and Requirements Evaluation (NAR3E), which assisted in transitioning PROFS prototypes into operation.

Automated Surface Observing System

As part of the MAR, the NWS cooperated with the FAA and the DOD to change the paradigm for surface weather observing in the United States. The new observation strategy deployed automated sensors to perform much of the work previously done by human weather observers. The instrumentation suite was labeled the Automated Surface Observing System (ASOS). At the time of the MAR, staff at about 250 airports across the nation manually gathered surface airway observations (SAO). Staffing limitations prevented some SAO sites from operating 24 hours per day. The ASOS deployment plan increased the number of surface observation sites to about 1,000. In addition, ASOS made 24-hour operation possible and allowed more frequent observations than its SAO counterparts.

ASOS automatically collects surface weather data and electronically provides observations to weather observers, weather forecasters, airport personnel, pilots, air traffic control specialists, and other users. The system automatically collects, processes, and error checks data; and formats, displays, archives, and reports the weather elements included in the basic Aviation Routine Weather Report (METAR) and Aviation Selected Special Weather Report (SPECI). These data typically include temperature, pressure, wind, type and intensity of precipitation, runway visibility, sky condition, and ceiling height. To date, there are 1,009 ASOS stations deployed. These include 315 operated by NWS, 571 operated by the FAA, and 123 operated by the DOD (Nadolski, 2011). NWS electronics technicians (52 Full Time Equivalent [FTE]) conduct the operations and maintenance for NWS and FAA ASOS sites through an interagency memorandum of agreement (Nadolski, 2011).

The ASOS Preproduction Development contract ($34M) was awarded to competing industrial sources in April 1988. Program reviews were completed in October 1988 (Preliminary Design Review), March 1989 (Hardware Critical Design Review), and May 1989 (Software Design Review). The Request for Proposals for the Deployment Phase of the ASOS contract was released in June 1989. In 1990, a “limited production” run of 55 ASOS units was produced for the three participating agencies (NWS, 1990). These limited production units supported other modernization prototype activities, primarily in the central and southern plains. AAI, Inc. won the production contract in February 1991 and supplied the balance of all required ASOS systems (Nadolski, 2011).

When AAI, Inc. was awarded the contract for full production of ASOS in 1991, 55 “limited production” ASOS sites were already located in the southern and central plains. McNulty et al. (1990) studied the Kansas ASOS sites in an attempt to determine whether ASOS resulted in improved forecasts. Although the results were inconclusive, the study made clear that it would be left to the scientific community to determine which metrics should be used to evaluate the success of ASOS. Over the next decade, numerous publications redefined the metrics and gauged ASOS against them. Some examples follow.

In 1993, an NRC report found problems with the reliability of ASOS (NRC, 1993), and in November 1994, commissioning of ASOS sites was halted (GAO, 1995h). Also in 1994, then-NWS Director Joe Friday stated, “[o]perational use of ASOS has allowed the NWS to review ASOS performance in a real-world environment. This experience has confirmed that ASOS can provide timely and accurate observations for the aviation and meteorological communities” (Friday, 1994). On behalf of itself and its partner agencies, the NWS had bought 617 units as of December 1994, of which 491 had been accepted. Forty-seven of the 491 accepted units had been commissioned (GAO, 1995h). No human observers had yet ceased recording surface observations.

In 1995, a General Accounting Office (GAO) report, the most critical assessment of ASOS to date, stated that “ASOS’ overall reliability during 1994 winter testing, measured in terms of mean hours between critical system failures and errors, was only about one-half and one-third of specified levels, respectively” (GAO, 1995h). The report stated that reliability testing had not been performed before deployment, so these problems surfaced only after ASOS was deployed. The report also documented that six of the eight ASOS sensors did not meet contract specifications for accuracy or performance.

The 1995 GAO report led the NWS to propose limited tests comparing ASOS with manual observations for six months at 22 commissioned and four noncommissioned ASOS sites. This ASOS Aviation Demonstration was designed to assess the “operational representativeness and system performance” of ASOS in different weather regimes (NWS, 1996a). At the time, “operational representativeness” was defined as “the ability to provide accurate and timely weather observations in support of aviation operations,” and “system performance” was defined as “the ability of ASOS to generate and transmit complete observations through the communications network” (NWS, 1996a). The Demonstration took place in 1995, and the results were reported in an internal NWS document in February 1996 (NWS, 1996a). The Demonstration found that while there were some differences between automated and manual observations, “the operational representativeness and availability of the ASOS system was, in general, very good.” The Demonstration also revealed more short-duration failures than expected. Modifications to the sensor suite were developed to address this problem; although they were not deployed during the Demonstration, commissioning of ASOS sites resumed on the basis of the expected improvements (NWS, 1996a).

The main impetus behind the deployment of ASOS was achieving the cost and staff reduction goals of the MAR. This contributed significantly to gaining Congressional approval for the MAR. The deployment of ASOS enabled a reduction in the number of NWS field offices and reduced the staffing levels needed to make surface observations. The deployment of ASOS also shifted the NWS workforce toward one with fewer technicians and more professional meteorologists.

Next Generation Weather Radar

As noted in Chapter 2, the tri-agency NEXRAD program was well under way before the official beginning of the MAR. The NEXRAD program initially did not provide for adequate prototype demonstrations under operational conditions. An Initial Operational Test and Evaluation (Part 2), carried out by the USAF (1989) using the Unisys NEXRAD prototype, provided an independent test that highlighted a number of problems requiring attention (NRC, 1991). These ranged from reliability concerns and software algorithm and documentation issues to training programs. According to the GAO (1991a), since 1980 the schedule for completion of the NEXRAD system had slipped by seven years and the estimated cost had increased by a factor of more than four (though the latter comparison was based on current-year dollars at both ends). Factors other than inflation that contributed to the cost increase included an increase in the number and a change in the types of units to be procured; inclusion of costs, such as WFO construction, training, and logistics, that were not incorporated in the original estimates; and technical and contractual problems.

Efforts to deal with these problems continued through the spring of 1991, when the three agencies and the contractor reached a comprehensive settlement of contract claims and deficiencies. Meanwhile, in 1990 the option to start Full-Scale Production had been exercised and the first Limited Production Phase unit had been delivered. A further Operational Assessment took place with that unit in the spring of 1991. However, the reliability problems continued into the mid-1990s (GAO, 1995f).

The prototype and the first half-dozen fielded systems operated with circular polarization, mainly to facilitate the suppression of ground-clutter echoes (earlier operational weather radars operated with linear polarization). However, research on microwave propagation through rain had revealed a difference in the propagation velocity (and hence in the phase shift) of horizontally versus vertically polarized waves (e.g., Oguchi and Hosoya, 1974; Seliga and Bringi, 1976), a property of the medium that gradually degrades a circularly polarized signal as it propagates through rain. A circularly polarized research weather radar had been operating in Alberta for some 15 years (McCormick, 1968), and this behavior of circularly polarized waves was known (e.g., Humphries, 1974). This unacceptable degradation necessitated a redesign of the system and conversion of the already-fielded systems to linear polarization. The failure to account for the results of prior research in this case was a shortcoming of the JSPO operation.

The NEXRAD program was supported from the beginning in both engineering and scientific matters, first by an Interim Operational Test Facility (established about the time the NTR was issued) that assisted in the development of hardware, software, and operational concepts. This organization later became the Operational Support Facility (OSF; subsequently renamed the Radar Operations Center), which supported deployment, maintenance, operation, application, and upgrade of the WSR-88Ds. As NWS field sites began using the Limited Production Phase radars in late 1991, the OSF began operating a Hotline (eventually 24/7) to consult with field staff as questions and problems with the new system arose. The OSF supported deployment of the NEXRAD systems with a vigorous training program to help ensure effective operation and use of the new systems in the field. At the same time, maintenance training was conducted at the NWS Technical Training Center. The NEXRAD Technical Advisory Committee monitored the evolving program and provided engineering and scientific advice and recommendations. In 1995 the OSF began issuing a series of software builds that fixed identified problems and upgraded capabilities. Moreover, a NEXRAD Product Improvement Program was established to capitalize on continuing advances in the technology and science underlying the processing and use of radar data.

These measures were in line with three recommendations in the second report of the NRC’s National Weather Service Modernization Committee (NRC, 1992b):

Modernization must continue beyond the implementation of systems now being procured. Provision should be made to … take advantage of scientific developments as well as improved computational and information systems as they become available.

Steps should be taken to ensure the continued development and improvement of Next Generation Weather Radar processing algorithms as new developments and operational experience accumulate….

The National Weather Service and the National Oceanic and Atmospheric Administration should create technical advisory panels for each of the major systems that contribute to the technical modernization….

The first Full Scale Production NEXRAD was delivered in mid-1992, and the last of the initially planned NWS radars was installed in 1997. An NRC panel reviewed the nationwide coverage of the network in the mid-1990s and noted a few locations for which coverage was less satisfactory than that provided by the earlier systems (NRC, 1995b). Under the Congressional “no degradation of service” mandate, action was taken to provide better coverage to those locations. Three NEXRAD systems were added to the network in 1997-1998; another radar was installed in 2000, and yet another is to be added in 2012.

NEXRAD Information Dissemination Service

In the pre-MAR era, the NWS did not collect radar data at a central location and had limited capacity to redistribute data from remote radar sites. In addition, users (researchers, universities, commercial companies, broadcasters, etc.) interested in collecting, analyzing, studying, or redistributing radar data had to provide their own communication equipment and the appropriate transmission lines (Baer, 1991). During the development of NEXRAD, a more robust capability to disseminate WSR-88D data to users was made part of the design. Through a competitive procurement, the NWS outsourced this capability to four companies (Alden Electronics Inc., Kavouras Inc., Unisys, and WSI Corporation) under a contractual agreement called the NEXRAD Information Dissemination Service (NIDS). Through the NIDS agreement, a suite of selected WSR-88D base and derived radar reflectivity and velocity products (NIDS products) was made available to subscribers such as television stations, private weather forecasting companies, energy companies (gas and electric utilities), airlines, and other industries (Baer, 1991; Klazura and Imy, 1993; Morris et al., 2001; Pirone, 2011). The NIDS contract gave special subscriber status to universities and to federal, state, and local government agencies. NIDS providers were allowed to charge such special subscribers only for the cost of delivering the NIDS products, with restrictions on data redistribution. Alden, Kavouras, Unisys, and WSI each paid a one-time access fee of $780 per radar site and a recurring maintenance fee of $1,395 per site under a NIDS Access Agreement (Baer, 1991). The four NIDS providers were given exclusive rights to redistribute the radar data, allowing them to recover their costs of collecting the data from all sites and providing it on a display terminal for quality control purposes at NWS headquarters.

During the transition from the WSR-57/74 radars to the WSR-88D radars, NEXRAD data were incorporated into value-added radar products, including radar data mosaics, winter storm mosaics, and other innovative reflectivity-based products that have become commonplace and easily accessible through a multitude of media. This acquisition strategy for radar data via NIDS clearly allowed competitive market forces to provide benefits not only to the government but also to the weather industry and, ultimately, the public. Despite these benefits, such dedicated vendor arrangements were problematic from a user perspective. They have the unintended side effect of impeding hydrometeorological research and innovations in calibration and correction methodologies, because they can make data difficult or costly to obtain. Such arrangements are antithetical to the free flow of scientific data and information on which the scientific enterprise is founded, as well as to the operating model of the NWS. The NIDS contract expired on December 31, 2000, and, with the intervening advances in communication technologies, the NWS became the sole provider of NEXRAD data (NRC, 2003a).

Satellite Upgrades

The life cycle of a multi-satellite system procurement can be long relative to the upgrade or development of some of the other assets of NOAA. A full system procurement, including planning, design, build, integration, pre-launch test, launch, and on-orbit operational test activities, can easily extend over 10 years for a five-satellite system. Factors affecting the schedule include the launch requirement date for each satellite, the number of satellites and instruments involved, changes in product requirements, and the design complexity of spacecraft and instruments. The upgrade goals for the geostationary system stated in the MAR Strategic Plan (NWS, 1989), as well as the plans for the NEXRAD network, had been under development well before the MAR and might have been implemented in any case. However, it is likely that the MAR made the realization of the NEXRAD and satellite upgrade goals possible by gaining the necessary public support and financial support from Congress. The satellite system that was part of the MAR planning, referred to as GOES-Next, is addressed here.

The desired polar system upgrades foreseen in the MAR Strategic Plan included all-weather atmospheric data (obtained by implementing microwave imagers and sounders, for example). However, in May 1994 President Clinton signed a directive requiring DOD and DOC to integrate their separate polar satellite systems. The Defense Meteorological Satellite Program (DMSP) and the Polar-orbiting Operational Environmental Satellite (POES) program were to converge into a single national system, the joint National Polar-orbiting Operational Environmental Satellite System (NPOESS; GAO, 1995c). This system and the associated program effort reflected the complexity involved when a single system must meet the needs of multiple, diverse communities with differing requirements. NOAA did not manage NPOESS; instead, an Integrated Program Office had that responsibility. NPOESS was therefore not part of the MAR and is not addressed here.

The MAR included development and launch of the GOES-Next satellite system. NESDIS is the line office within NOAA responsible for satellite systems. Acting on behalf of NESDIS, the National Aeronautics and Space Administration (NASA) awarded a cost-plus-award-fee contract in 1985 to Space Systems/Loral, Inc. (SS/L, formerly the Ford Aerospace Corporation), with an instrument subcontract to ITT Corporation (GAO, 1991b). Five new satellites were to be developed and built, each with an imager and a sounder. GOES-Next system improvements ultimately resulted in the collection of substantially more weather data of higher quality. However, the program experienced several technical issues, and substantial cost and schedule overruns. The official estimate of the overall development cost increased over 200 percent, from $640M in 1986 to $2.0B in 1996 (GAO, 1997c). The costs include the government effort as well as the contractor effort. The launch of the first satellite was delayed from July 1989 to April 1994, leading to a potential gap in geostationary satellite coverage. Fortunately, NESDIS obtained use of a European METEOSAT, and avoided the threatened outage (NRC, 1997b). The second satellite (GOES-9) exhibited signs of imminent momentum wheel failure three years after launch and was taken out of operation (GAO, 2000). All five satellites were ultimately launched, becoming GOES-8 (launched April 1994), GOES-9 (May 1995), GOES-10 (April 1997), GOES-11 (May 2000), and GOES-12 (July 2001).
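The cost-growth and schedule figures above can be checked with simple arithmetic. The Python sketch below assumes the two GAO estimates are directly comparable (no inflation adjustment is applied) and counts the launch slip in whole calendar months, ignoring the day of the month; the helper function names are illustrative only.

```python
from datetime import date


def percent_growth(old: float, new: float) -> float:
    """Percentage increase from an old value to a new value."""
    return 100.0 * (new - old) / old


def months_between(start: date, end: date) -> int:
    """Whole calendar months from start to end (day of month ignored)."""
    return (end.year - start.year) * 12 + (end.month - start.month)


# Official GOES-Next development cost estimates, in millions of dollars
# (GAO, 1997c); no inflation adjustment is applied here.
estimate_1986 = 640.0
estimate_1996 = 2000.0

growth = percent_growth(estimate_1986, estimate_1996)
print(f"Cost growth: {growth:.1f}% ({estimate_1996 / estimate_1986:.3f}x)")

# First-launch slip: planned July 1989, actual April 1994.
slip = months_between(date(1989, 7, 1), date(1994, 4, 1))
print(f"Launch slip: {slip} months")
```

The result, an increase of about 212 percent (a factor of roughly 3.1), is consistent with the "over 200 percent" growth stated in the text, and the first launch slipped by 57 months, or nearly five years.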

The development, execution, and technical problems that occurred during the program effort can be summarized as follows:

• Lack of preliminary analyses and ensuing design complexity. The typical engineering analyses usually required for a technical program were not authorized by NESDIS or required by NASA prior to GOES-Next development work. They concluded there was sufficient proof-of-concept in “body-stabilized” spacecraft and instrument design heritage, and NOAA was facing

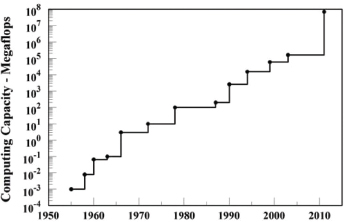

FIGURE 3.1 Growth trajectory of National Weather Service computational capacity since the beginning of the numerical weather prediction era in the mid-1950s. The y-axis units are floating point operations per second (‘flops’), which are a measure of computing power. SOURCE: Based on data from Kalnay et al. (1998) and VandenBerghe (2010).

budget and scheduling issues. Challenging stability/pointing requirements led to complex spacecraft and instrument designs. At the start of the program, NESDIS, NASA, and contractors did not fully recognize this challenge and complexity. Prior systems benefited from the Operational Satellite Improvement Program (OSIP), a NOAA-NASA agreement in effect from 1973 to 1981. With OSIP, NASA funded all development satellites that would later become NOAA operational systems. The elimination of OSIP by NASA resulted in NOAA having no engineering support to design, develop, and test new spacecraft and instrument technologies before incorporating them into the satellite systems (GAO, 1997b).

• Inadequate program management. ITT instrument work was subcontracted directly to SS/L. This led to inadequate NASA direction and a restriction of the necessary collaboration between NASA and ITT. Prior to this, NASA directly managed the instrument subcontracts.

• Poor contractor performance. Instrument problems resulted from lack of proper direction of the instrument subcontract by SS/L and poor staffing plans and quality of workmanship at ITT. Component problems caused a reduction in the expected operational life from five years to three years for the first two satellites (GAO, 1997c).

In spite of the technical, cost, and schedule issues associated with the program, it is important to stress the substantial improvements in the frequency, spatial resolution, and quality of the new GOES data. For the first time, the system provided simultaneous, independent imaging and sounding on a continuous basis. These improvements were large steps in technical development that provided data that enhanced the ability to study the atmosphere and improve forecasts. Additional detail is provided in the Satellites section of Chapter 4.

National Centers Advanced Computer Systems

Although National Centers¹⁰ computational facilities had undergone continuous upgrades before the MAR and have been upgraded frequently since (Figure 3.1), the period surrounding the MAR coincided with the emergence of numerical weather prediction (NWP) skill and the advent of computing systems that could produce forecasts in time for public dissemination and use. At that time, it was foreseen that numerical forecast products developed at the NWS National Centers (then the National Meteorological Center [NMC]) would increasingly provide an informational backbone from which standardized analyses and forecasts would flow (NWS, 1989). The timely and consistent flow of forecast information from central computing facilities to forecast offices required state-of-the-art computational systems as well as upgraded telecommunication and digital display and analysis systems. Specifically, the high-performance computing needed to support data ingest and data assimilation systems, as well as numerical prediction models, required a full order of magnitude more capacity than the NMC was using at the beginning of the MAR. Additionally, the shift in computational paradigm from shared-memory supercomputers to massively parallel systems occurred during the MAR. Thus, the MAR specifically identified the procurement of the next generation of high-performance computers as a key element of the modernization. The final cost of the computer upgrades was $106 million (GAO, 2000). This procurement, along with the related realignment of the NMC into the National Centers for Environmental Prediction (NCEP), appears to have been an important element of the MAR and has played a significant role in the continued scientific and technological evolution of NWS prediction capabilities. According to Uccellini (2011), the continued upgrade of supercomputing facilities was and has continued to be “instrumental for improved models to support forecasts made by NWS meteorologists and by commercial forecasters and the private sector industry … [and has] ultimately led to [NWS’s] on time delivery of products.”

__________________

¹⁰ The National Centers are the NWS offices responsible for providing worldwide forecast guidance products.

Advanced Weather Interactive Processing System

The Advanced Weather Interactive Processing System (AWIPS) was the cornerstone of the MAR. It was designed to receive, process, and integrate data from ASOS, NEXRAD, GOES, and other observing systems, as well as output and guidance from the National Centers and products originating at other international processing centers under the WMO World Weather Watch. AWIPS plays a critical role in the analysis of data and in the preparation and dissemination of weather-related products and services. It consists of a workstation-based system at WFOs and other NWS sites, and a satellite broadcast network (NOAAPORT) that connects to the AWIPS sites and supports data and product distribution. The WFOs use an IP network (OPSNET or NOAAnet) to communicate among themselves.

As discussed in Chapter 2, AWIPS was developed to address the problem of the obsolete Automation of Field Operations and Services (AFOS) system. In 1984 NWS formed an AWIPS Requirements Task Team (ARTT) composed of representatives of NWS administrative, development, and field offices, as well as what became the Forecast Systems Laboratory (FSL) in Boulder, Colorado. This task team worked closely with NWS meteorologists and hydrologists to obtain feedback on forecasting needs, and with competing contractors to obtain feedback on the cost and achievability of requirements. The work of the ARTT was used to refine and validate the AWIPS requirements, which formed the basis of the functional requirements included in the AWIPS Request for Proposals (RFP) and the Development Phase contract.

As part of the process to refine and validate requirements, NOAA engaged in extensive prototyping of system functions and interfaces, involving forecasters in the effort. Early prototyping, begun in 1984, included development of a pre-AWIPS unit based on research code developed by the Program for Regional Observing and Forecasting Services (PROFS) at the FSL. This was essentially a workstation environment in the FSL laboratory, and the PROFS/FSL process involved NWS forecasters in the development and test activities (GAO, 1993). FSL then placed the workstation in the Denver forecast office so that forecasters could experiment with it. The effort included personnel who worked at both FSL and the Denver forecast office, who conveyed workstation knowledge to forecasters and relayed forecaster comments on utility back to FSL. Ultimately the effort led to the Denver AWIPS Risk Reduction and Requirements Evaluation (DAR3E), which included a complete suite of hardware for workstations and servers. Once the system had been tested and stabilized in the Denver operational environment, a similar system was placed at the Norman, Oklahoma, forecast office for additional testing (NAR3E; NRC, 1992b).

The National Bureau of Standards (NBS) reviewed the AWIPS procurement plan for the 1990s and concluded that the approach to developing the AWIPS requirements was sound (NBS, 1988). Based on a quantitative assessment of the anticipated data volume, the AWIPS requirements were considered a reasonable set of assumptions using modern, proven technologies and techniques. In November 1988, after the four-year Requirements Phase, Definition Phase contracts were awarded to two competing contractor teams, Computer Sciences Corporation and Planning Research Corporation (PRC; U.S. Congress, 1996). During this phase the contractor teams worked with NWS to further define and validate requirements and to develop competing designs for AWIPS. In December 1992, after the award date had slipped by more than a year, PRC was selected as the Development Phase prime contractor to provide the AWIPS hardware, system software, and a portion of the hydrometeorological technique software (GAO, 1993). Various NOAA offices were to provide the remainder of the technique software for integration into AWIPS by PRC. PRC would also provide the AWIPS Communications Network (ACN). The total deployment was projected to take four years (NRC, 1992b). The DAR3E activities continued as a parallel risk reduction and demonstration effort as PRC began work on the AWIPS contract.

After early successes in demonstrating the feasibility of system functions, design problems and disagreements between NOAA and PRC in 1993 and 1994 stymied progress (GAO, 1997e). These problems raised concern that the deployment of AWIPS to the forecast offices would be substantially delayed, limiting the ability of the NWS to use the data from the new observing components of the MAR. Accordingly, an AWIPS Independent Review Team (IRT) was formed. In its Final Report of June 1994, the IRT concluded that,

[a]lthough real progress has been made, the AWIPS program is currently at a standstill due to a combination of factors: complex requirements, contractor performance problems, lack of an accepted system design, contract and communication problems, and distributed leadership (AWIPS IRT, 1994).

The IRT analyzed the allocation of management responsibility and concluded that the major problems were the distribution of authority and responsibility and the lack of an overall AWIPS system design. It concluded that elements of a successful AWIPS deployment would include

• NOAA assuming responsibility for system design, applications code development, and overall system performance;

• PRC retaining responsibility for the design and development of the AWIPS system components other than the applications code, integrating all the components, and working with NOAA to deploy AWIPS; and

• designing the development builds to evolve capability in smaller steps allowing more frequent integration and evaluation of the components, assuring early identification of problems, and easing the integration of AWIPS into the operations by testing the builds in increasingly realistic environments (AWIPS IRT, 1994).

Acting on the IRT recommendations, the NWS restructured the AWIPS program in 1994, transferring significantly more design and development responsibility to the government, in particular to the FSL. In August 1996 the NWS decided that FSL’s pre-AWIPS code would form the core of the AWIPS WFO and RFC environment. Because the FSL system focused solely on WFO needs, RFC requirements were to be addressed through government development by the Office of Hydrology (OH) and local RFC applications. PRC retained design and development responsibility for the National Control Facility. During the critical 1997 to 2002 development period, PRC and the government developers worked extremely closely on integration and test efforts. After development at PRC offices, development versions were released to government test facilities and field sites for testing before full-scale deployment. This close working relationship was a major contributor to the success of the final five-year push. During this period, all software releases occurred on schedule, with no slips.

One concern expressed at the time was that research laboratory (e.g., FSL) software lacked the quality assurance and configuration management processes needed for production-level software (GAO, 1997e), although this appears not to have been a major problem over the long term. Review reports also indicated that a very large, complex AWIPS requirements set may have contributed to the program’s problems. AWIPS comprised about 22,000 requirements, grouped into about 450 higher-level capabilities. The AWIPS System/Segment Specification related about 75 percent of the capabilities to five broad functional areas. The early prototyping efforts and this functional breakdown of requirements helped ensure that proposed AWIPS capabilities were anchored in user needs. However, they did not establish whether the requirements were derived from mission goals, and the requirements review process did not attempt to trace requirements back to mission improvements. These weaknesses in the requirements development and validation process may have contributed to the contractor’s failure to develop a viable AWIPS design (GAO, 1996c).

A 1997 assessment of fiscal requirements noted that the first three AWIPS systems deployed in laboratory or forecast office settings (Boulder, Denver, and Norman) had improved warning times and the accuracy of some forecasts (Kelly, 1997). The assessment also concluded that, like many large information technology programs, AWIPS had “experienced technical difficulties, cost growth and schedule delays,” which appear to have prompted considerable oversight by external agencies and eventually resulted in a Congressional mandate to complete development and deployment activities within a $550 million cap.

AWIPS field deployment was ultimately completed in 2000 with a final build cycle, within the $550 million mandated spending cap. Software additions and enhancements continued beyond the MAR period, into 2002 and through the present. NOAA officials recognized that designing AWIPS was not an easy task. They also concluded that it was probably unrealistic to expect a contractor to have the corporate knowledge (the understanding of operational weather forecasting and complex meteorological processes) necessary to design such a system successfully (GAO, 1997b). The successes and failures of the AWIPS development process provide important lessons about how to satisfy multiple user needs; develop, validate, and manage requirements; instill operational software development standards; and determine the most effective division of work between government and contractor for specific program goals.

The AWIPS program experienced delays and cost overruns, but in the end it was considered a major success. AWIPS has improved the ability of WFOs to efficiently ingest, manipulate, and analyze tremendous amounts of data, helping to improve the accuracy and timeliness of forecasts and warnings (Jackson, 2011).

Changes in the Technological Environment

As detailed plans for the MAR were formulated in the late 1980s, the telecommunications and computing environments were very different from those that prevailed at the end of the MAR. AT&T (the Bell System) was broken up in 1984, and competitors were beginning to appear, first in voice services and then, in the late 1980s, in data services. In the planned NEXRAD radar installations, the highest data rates were between the Radar Data Acquisition (RDA) unit at the radar site and the Radar Product Generator (RPG) in the WFO. The data rate needed to support this link was on the order of 1.5 Mb/s (megabits per second), a so-called “T1” link (Vogt, 2011).

In the late 1980s, commercial suppliers of T1 links charged thousands of dollars per month, including a distance-dependent charge (Wallace, 1988). The resulting costs were viewed as prohibitive and were among the major factors leading to the colocation of radars and WFOs, because a coaxial cable connection could be used over short distances without incurring any telecommunications charges.

During the 10-year rollout of the MAR, the costs for telecommunications and computing dropped precipitously. Moreover, the modern Internet burst on the scene during the 1990s. The first high-speed Internet backbone was NSFNET, which operated at T1, and then, in 1989, at T3 (45 Mb/s) speeds (Living Internet, 2011). The first web browser, Mosaic, appeared in 1993. Traffic on the Internet backbone grew at 15 to 20 percent per month during the mid-1990s, as thousands of networks made the technical changes enabling them to join the Internet. Data communications were revolutionized, both from a cost and a capability perspective. All this drastically changed the technical environment while the MAR was proceeding.
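A steady monthly growth rate compounds over a year as (1 + r)^12. The short Python sketch below applies that formula to the 15 to 20 percent monthly range quoted above. It assumes uniform compounding, which real backbone traffic did not follow exactly. The function name is hypothetical and chosen for illustration only.

```python
def annual_growth_factor(monthly_rate: float, months: int = 12) -> float:
    """Return the multiplicative growth over `months` at a constant monthly rate.

    Illustrative assumption: growth compounds at a steady rate every month.
    """
    return (1.0 + monthly_rate) ** months


if __name__ == "__main__":
    # Apply the 15 and 20 percent monthly figures cited in the text.
    for rate in (0.15, 0.20):
        print(f"{rate:.0%} per month -> {annual_growth_factor(rate):.2f}x per year")
```

Under this simplification, 15 percent monthly growth works out to roughly a 5.4-fold increase per year, and 20 percent to roughly 8.9-fold. These figures are arithmetic on the quoted range, not measured traffic data.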

In addition to communication costs, the costs and capabilities associated with AWIPS also changed while the program was under way. The FSL dealt with this change by creating a series of prototype AWIPS-style systems that were tested by selected forecast offices. The resulting feedback was used to improve, upgrade, and ‘harden’ the software (GAO, 1996c). Among its other advantages, this prototyping process incorporated the improvements in computing and telecommunications technologies that were occurring independently of the MAR.

In hindsight, telecommunications costs need not have been a major factor motivating the colocation of radars and WFOs. The time scale of major government technical initiatives is quite different from the time scale over which digital technologies change. Hence, federal programs with a large digital technology component should recognize that the costs and capabilities prevailing during the planning period are a poor basis for forecasting eventual costs and capabilities. This dilemma is not easily overcome, but it needs to be taken into account. An agency capability for rapid prototyping and user feedback during a major acquisition is one way of dealing with the reality of rapid technological change. Another is leasing computing capabilities rather than purchasing them, because a lease can provide for continual upgrading of agency capability.

Finding 3-2

The technological problems encountered included a lack of preliminary analysis and ensuing design problems, inadequate program management, and poor contractor performance. These problems were generally overcome, and the major technology system upgrades were successfully executed.

RESTRUCTURING OF FORECAST OFFICES AND STAFF

Restructuring of the NWS involved a substantial reduction in the number of field offices; relocation and/or realignment of the functions performed at many of those offices; and staff changes, including a reduction in total numbers along with an upgrading of overall professional levels.

Consolidation of Offices

The 52 Weather Service Forecast Offices (WSFOs) and 204 Weather Service Offices (WSOs) were replaced by 122 Weather Forecast Offices (WFOs). WFOs were sited to distribute offices evenly across the nation so that service could be provided equally, and their locations generally followed the distribution of NEXRAD radars. Each WFO was assigned responsibility for forecasts and warnings for a county warning area (CWA) covered by its NEXRAD.

The transition to the new organizational structure required closing more than half the existing offices, a politically sensitive issue. The “no degradation of service” requirement of Public Law 100-685 called for a certification of no degradation before any office could be closed, and an elaborate certification procedure was established to meet this requirement (U.S. Congress, 1988). The procedure included commissioning the newly installed technologies (ASOS, NEXRAD, and AWIPS) and demonstrating that forecasting and warning services could be provided to the CWA before the WSO or WSFO previously serving that area could be closed. The certification process was overseen by the Modernization Transition Committee (MTC), a Federal Advisory Committee.

Workforce

Field staffing changed from a mix of about one-third professional meteorologists and two-thirds meteorological technicians before the MAR to the reverse after the MAR (Sokich, 2011). Meteorological technicians, while required to become certified in several important meteorological tasks, are not required to have a professional atmospheric sciences degree. Before the MAR, they were mainly responsible for observations, including radar, surface aviation weather, and upper-air (radiosonde) observations. In the WSOs, they were also responsible for issuing severe weather warnings (e.g., tornado, severe thunderstorm, flash flood) based on radar observations. Other duties included answering phones and attending to NOAA Weather Radio. Meteorologists have professional atmospheric sciences degrees. Before the MAR, meteorologists were found mostly at WSFOs; at WSOs, the Meteorologist in Charge (MIC) was generally the only staff member with a meteorology degree. At WSFOs, journeyman and lead forecasters held degrees in atmospheric sciences and were responsible for severe weather warnings within their area of responsibility, in addition to statewide aviation, marine, and public forecasts, discussions, and summaries. The lead forecasters at WSFOs also served as shift supervisors at their office while overseeing the work of all WSOs under their jurisdiction.

With the revised makeup of WFO staff planned under the MAR, the question arose of how to bring meteorological technicians up to the required level of training. A program was established at San Jose State University to provide training equivalent to a B.S. degree in meteorology, and support was offered to any meteorological technician who wished to qualify for a meteorologist position. The program was free to the technicians who participated. Although few entered the program (Sokich, 2011), it did allow some to upgrade their skills and thus bring the benefit of their experience into the modernization era. NOAA also initiated a Cooperative Agreement with the University Corporation for Atmospheric Research (UCAR) to implement the Cooperative Program for Operational Meteorology, Education, and Training (COMET). COMET, which still exists, provided professional development courses for operational forecasters (NWS, 1991a). Most of the training was intended to be taken through “distance learning” facilities. This was initially a challenge, but the advent of the Internet created a truly flexible capability for distance learning. NEXRAD training was provided in Norman, Oklahoma (NWS, 1991a), and was viewed favorably by the workforce (NRC, 1994a). Training on the new technologies was also provided for electronics personnel. The initial plan was to contract out maintenance, at least for ASOS; however, it was determined that retraining existing electronics technicians would be more cost-effective (Sokich, 2011).

In addition to training, the change in field office distribution required relocation of many staff, which caused some dissatisfaction within the workforce (NRC, 1994a). However, the upgrading of staff was accomplished without forced termination of any of the in-place personnel. The reduction in total staffing level was achieved primarily through retirements.

The National Weather Service Employees Organization (NWSEO) played a crucial role in the process, becoming more engaged than ever before in defending and helping to define the future role of its members. One proposal (Booz Allen & Hamilton Inc., 1983) was to reduce staff to fewer than 3,000 employees, down from the pre-MAR figure of 5,200. However, the final number of employees after the MAR was far greater (4,700), due in part to the efforts of NWSEO and NWS management (Friday, 2011; Hirn, 2011). Communication about the MAR between NWS management and field-level staff was perceived as inadequate (NRC, 1994a). A 1994 NRC survey of employee attitudes about the MAR found that, while 66 percent of respondents felt they received enough information about the new technologies, 61 percent felt they received too little information about the implementation process and timing of the MAR (NRC, 1994a). This lack of communication with field office employees likely contributed to some of the initial resistance to the MAR. The survey also found that, among job categories, meteorological technicians were the least optimistic about the MAR (NRC, 1994a).

Before the MAR, each WSFO was led by a Meteorologist in Charge (MIC) and a Deputy MIC (DMIC). The DMIC had a diverse set of responsibilities, from personnel management and staff scheduling to handling media requests and educational outreach, and may well have been the most versatile member of the staff. The MAR’s groundbreaking division of the deputy’s duties between two new positions, the Science Operations Officer (SOO) and the Warning Coordination Meteorologist (WCM), allowed a more focused approach to two critically important tasks at the WFOs: the SOO became responsible for incorporating ongoing scientific advances into WFO operations, and the WCM for communicating with the external user community. The SOO was in essence the office’s lead scientist, typically holding a Ph.D. or M.S. and having a strong scientific background. This enhanced staffing improves forecast and warning performance by increasing situational awareness and recognition of evolving severe weather, the speed and accuracy of issued warnings, and the frequency and quality of “follow-up” severe weather communications that augment the initial warning messages.

Changes in Customer Linkages

Customer service advanced significantly with the creation of the WCM position at WFOs. Before the MAR, outreach from NWS to the user community was spotty at best. As noted, this was one of many functions of the DMIC at most WSFOs. WSO sites were staffed by technicians focused on data acquisition and the issuance of storm-based warnings. In the few U.S. cities with a WSFO, ad hoc staff efforts to reach out to the general population often superseded infrequent efforts from the MIC or DMIC (Santos, 2011).

Before the MAR, communication links were mostly one-directional; media requests, for example, were typically handled on a case-by-case basis. Professional meteorologists were located at WSFOs in fewer than a quarter of the country’s main media markets. The creation of the WCM position increased and strengthened the linkages between the NWS and media outlets. A strong partnership between the NWS and the media and emergency management community is crucial to the timely and accurate delivery of lifesaving messages.

The field of emergency management was undergoing its own modernization during the decade of the MAR. The end of the Cold War provided the final incentive to transition away from the civil defense posture of earlier decades. The 1990s saw a significant shift to preparing for all hazards that face communities. There was a greater emphasis on preparedness by individuals and communities and on mitigation against future disasters.

The services of the NWS continued to be of great value to emergency managers (EMs) during the MAR. While at some field offices special telephone hot lines or radio communication devices provided a direct link between the NWS and EMs, there was no uniformity of linkages or services to the EM community. In some offices, state and local EMs were treated as customers in the same manner, and with the same priority, as individual citizens. Those charged with first response to disasters received NWS warnings at the same time and in the same manner as the general public. The local authorities then issued their own instructions about evacuation, sheltering, and other emergency measures.

The improvement in NWS warning times for tornadoes, flash floods, and other fast-breaking events increased the time available for action by local governments and individuals, but the process remained linear, with information passing from the NWS to local governments and then to individuals and households.

Finding 3-3a

The restructuring of offices and upgrading of staff brought more evenly distributed and uniform weather services to the nation.

Finding 3-3b

During the early stages of the Modernization and Associated Restructuring, there was insufficient communication between National Weather Service management at the national level and the field office managers and their staff, as well as the employee union.

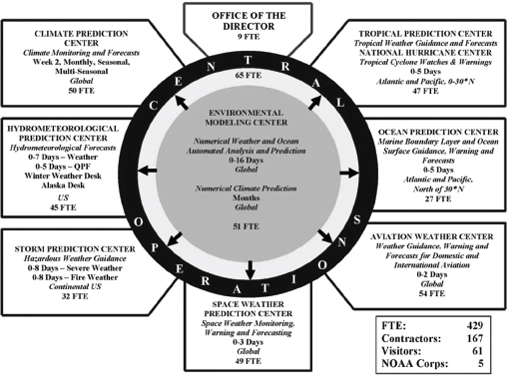

Concomitant with the goals of the MAR was the need to implement and sustain more science-based approaches to weather, climate, and hydrological prediction, and to rapidly assimilate advances in information technology. Doing so required restructuring the relationship between WFOs, RFCs, and the various National Centers. At the time of the MAR, the National Meteorological Center (NMC) had six components: the Automation, Development, and Meteorological Operations Divisions; the Climate Analysis Center; the National Hurricane Center; and the National Severe Storms Forecast Center (McPherson, 1994). The National Centers, as they exist today, support many core activities of the NWS through the collection, ingest, analysis, and archiving of weather, climate, oceanographic, space environment, and hydrology data; the development of data assimilation and numerical modeling systems; and the generation of many forecast products.