To understand the complex relationship of implementation and safety, this chapter presents key concepts of system safety and applies those concepts to the domains of health IT and patient safety.

Complex systems are in general more difficult to operate and use safely than simple systems—with more components and more interfaces, there are a larger number of ways for untoward events to happen. Safety of complex systems in a variety of industries, such as health care (IOM, 2001), aviation (Orasanu et al., 2002), oil (Baker, 2007), and military operations (Snook, 2002), has been the focus of substantial research, and a number of broad lessons have emerged from this research.

Safety is a characteristic of a system—it is the product of its constituent components and their interaction. That is, safety is an emergent property of systems, especially complex systems. Failure in complex systems usually arises from multiple factors, not just one. The reason is that the designers and operators of complex systems are generally cognizant of the possibilities for failure, and thus over time they develop a variety of safeguards against failure (e.g., backup systems, safety features in equipment, safety training and procedures for operators). Such safeguards are most often useful in guarding against single-point failures, but it is often difficult to anticipate combinations of small failures that may lead to large ones. Put differently, the complexity of a system can mask interactions that could lead to systemic failure.
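A rough way to see why combinations of small failures are hard to anticipate is to count them. The sketch below is an illustration only and is not drawn from the cited research: it assumes a hypothetical system in which every component can fail and any subset of components might fail together, and the function name `failure_combinations` is invented for this example.

```python
from math import comb


def failure_combinations(n_components: int) -> dict:
    """Count single-point failures versus multi-component failure combinations.

    Illustrative assumption: every component can fail, and any subset of
    components might fail together.
    """
    single = n_components
    pairs = comb(n_components, 2)
    # Subsets of two or more components: all 2^n subsets minus the empty
    # set and the n single-component subsets.
    multi = 2 ** n_components - 1 - n_components
    return {"single": single, "pairs": pairs, "multi": multi}


for n in (5, 10, 20):
    print(n, failure_combinations(n))
```

Safeguards designed one failure at a time address the first count, which grows linearly with the number of components, while the number of possible combinations grows far faster.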

Nevertheless, complex systems often operate for extended periods of time without displaying catastrophic system-level failures. This is the result of good design as well as adaptation and intervention by those who operate and use the system on a routine basis. Extended failure-free operation is partially predicated on the ability of a complex system to adapt to unanticipated combinations of small failures (i.e., failures at the component level) and to prevent larger failures from occurring (i.e., failure of the entire system to perform its intended function). This adaptive capability is a product both of adherence to sound design principles and of skilled human operators who can react to avert system-level failure. In many cases, adaptations require human operators to select a well-practiced routine from a set of known and available responses. In some cases, adaptations require human operators to create, on the fly, novel combinations of known responses or entirely new approaches to avert failure.

System safety is predicated on the affordances available to humans to monitor, evaluate, anticipate, and react to threats and on the capabilities of the individuals themselves. It should be noted that human operators (both individually and collectively as part of a team) serve in two roles: (1) as causes of and defenders against failure and (2) as producers of output (e.g., health care, power, transportation services). Before a system-wide failure, organizations that do not have a strong safety- and reliability-based culture tend to focus on the operators’ role as producers of output. However, when these same organizations investigate system-wide failures, they tend to focus on the operators’ role as defenders against failure. In practice, human operators must serve both roles simultaneously, and thus must find ways to appropriately balance these roles. For example, human operators may take actions to reduce exposure to the consequences of component-level failures, they may concentrate resources where they are most likely to be needed, and they may develop contingency plans for handling expected and unexpected failures.

When system-level failure does occur, it is almost always because the system does not have the capability to anticipate and adapt to unforeseen combinations of component failure, in addition to not having the ability to detect unforeseen adverse events early enough to mitigate their impact. By most measures, systems involving health IT are complex systems.

One fundamental reason for the complexity of systems involving health IT is that modern medicine is increasingly dependent on information: patient-specific information (e.g., symptoms, genomic information), general biomedical knowledge (e.g., diagnoses, treatments), and information related to an increasingly complex delivery system (e.g., rules, regulations, policies). The information of modern medicine is both large in volume and highly heterogeneous.

A second reason for the complexity of systems involving health IT is the large number of interacting actors who must work effectively with the information. For example, the provision of health care requires primary care physicians, nurses, physician and nurse specialists, physician extenders, health care payers, administrators, and allied health professionals, many of whom work in both inpatient and outpatient settings.

The IT needed to store, manage, analyze, and display large amounts of heterogeneous information for a wide variety and number of users is necessarily complex. Put differently, the complexity of health IT fundamentally reflects the complexity of medicine. Safety issues in health IT are largely driven by that complexity and the failure to proactively take appropriate systems-based action at all stages of the design, development, deployment, and operation of health IT.

THE NOTION OF A SOCIOTECHNICAL SYSTEM

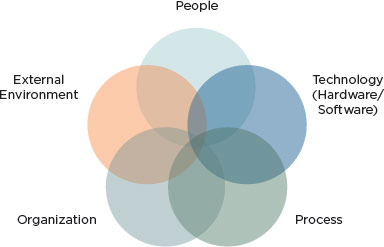

The sociotechnical perspective takes the approach that the system is more than just the technology delivered to the user. The overall system—the sociotechnical system—consists of many components whose interaction with each other produces or accounts for the system’s behavior (Fox, 1995). A sociotechnical view of health IT-assisted care might be depicted as in Figure 3-1.

For purposes of this report, the components of any sociotechnical system include the following:

• Technology includes the hardware and software of health IT, which are organized and developed under an architecture that specifies and delivers the functionality required from different parts of health IT, as well as how these different parts interact with each other. From the perspective of health professionals, technology can also include more clinically based information (e.g., order sets), although technologists regard order sets as the responsibility of clinical experts.

• People relates to individuals working within the entire sociotechnical system and includes their knowledge and skills regarding both clinical work and technology as well as their cognitive capabilities such as memory, inferential strategies, and knowledge. The “people” component also includes the implementation teams that configure and support the technology and those who train clinical users. People are affected by technology; for example, the use of health IT may affect clinician cognition by changing and shaping how clinicians obtain, organize, and reason with knowledge.1 The way knowledge of health care is organized makes a difference in how people solve problems. Clinicians’ interactions with the technology and with each other in a technology-mediated fashion (both the scope and nature of these interactions) are very likely to affect clinical outcomes.

FIGURE 3-1 Sociotechnical system underlying health IT-related adverse events.

SOURCE: Adapted from Harrington et al. (2010), Sittig and Singh (2010), and Walker et al. (2008).

• Process (sometimes referred to as “workflow”) refers to the normative set of actions and procedures clinicians are expected to perform during the course of delivering health care. Many of the procedures clinicians use to interact with the technology are prescribed, either formally in documentation (e.g., a user’s manual) or informally by the norms and practices of the work environment immediately surrounding the individual. Process also includes tasks such as patient scheduling.

• Organization refers to how the organization installs health IT, makes configuration choices, and specifies interfaces with health IT products. Organizations also choose the appropriate clinical content to use. These choices reflect the organizational goals such as maximizing usage of expensive clinical facilities (e.g., computed tomography scanners, radiation therapy machines) and minimizing costs. Of particular relevance to this report is the organization’s role in promoting the safety of patient care while maximizing effectiveness and efficiency. Organization also includes the rules and regulations set by individual institutions, such as hospital guidelines for treatment procedures that clinicians must follow, and the environment in which clinicians work. In many institutions, the environment of care is chaotic and unpredictable: many clinicians are often interrupted in the course of their day, subject to multiple distractions from patients and coworkers.

1 As one illustration, the introduction of a computer-based patient record into a diabetes clinic was associated with changes in the strategies used by physicians for information gathering and reasoning. Differences between paper records and computer records were also found regarding the content and organization of information, with paper records having a narrative structure while the computer-based records were organized into discrete items of information. The differences in knowledge organization had an effect on data-gathering strategies, where the nature of doctor–patient dialogue was influenced by the structure of the computer-based patient record system (Patel et al., 2000).

• External environment refers to the environment in which health care organizations operate. Essential aspects of the environment are the regulations that may originate with federal or state authorities or with private-sector entities such as accreditation organizations. For example, health care organizations are often required to publicly report errors made in the course of providing care at a variety of levels, including the private-sector, federal, and state levels.

A comprehensive analysis of the safety afforded by any given health care organization requires consideration of all of these domains taken as a whole and how they affect each other, that is, of the entire sociotechnical system.2 For example, an organization may develop formal policies regarding workflow. In the interests of saving time and increasing productivity, health care professionals may modify the prescribed workflow or approved practices in ways of which organizational leadership may be unaware. Workflow also can affect patient care, as in cases in which psychiatric patients in the emergency room are transferred from a psychiatric unit to a general medical unit. Units are often specialized to accommodate the needs of a particular group of patients; thus, the transfer of a psychiatric patient to the medical section may result in suboptimal or even inappropriate care for the patient’s medical condition (e.g., a medication associated with substance withdrawal not prescribed, or monitoring for withdrawal not performed) (Cohen et al., 2007).

A traditional perspective on technology draws a sharp distinction between technology and human users of the technology. The contrast between a traditional perspective of technology and the sociotechnical perspective has many implications for how to conceptualize safety in health IT-assisted care. Perhaps most important, in the traditional perspective, health IT-related adverse events are generally not recognized as systemic problems, that is, as problems whose causation or presence is influenced by all parts of the sociotechnical system (see Box 3-1).

2 A conceptual model of some of the unintended consequences of information technologies in health care can be found in Harrison et al. (2007).

BOX 3-1

Mismanaging Potassium Chloride (KCl) Levels

Part I: A Sociotechnical View

As a result of multiple medication errors, an elderly patient who was originally hypokalemic (suffering from low levels of KCl) became severely hyperkalemic (suffering from high levels of KCl). To treat the original hypokalemia, the diagnosing physician used the hospital’s computerized provider order entry (CPOE) system to prescribe KCl to the patient. Although she used the coded entry fields to write the order, she also tried to limit the dose by communicating to the nurse the volume of KCl that should be administered because the CPOE system was not designed to recognize instructions written in comment boxes. However, the nurse either misinterpreted the note or did not read it and the patient received more KCl than the diagnosing physician intended. A second physician, not having been informed that the patient was already prescribed KCl, used the CPOE system to prescribe even more KCl to the patient. As a result of these and other errors, the patient became hyperkalemic (Horsky et al., 2005).

Here, the patient’s hyperkalemia was not a result of any coding errors in the CPOE system. However, when the CPOE system was closely scrutinized, it was discovered that the interface’s poor design (see Box 3-2) and failure to display important lab reports and medication history (see Box 3-3) were also major contributors to the patient’s hyperkalemia. The patient’s hyperkalemia was not solely caused by human or computer error. Instead, it was the result of combined interactions of poor technology, procedures, and people. The boxes throughout this chapter examine how these interactions all contributed to the hyperkalemia suffered by the patient.

From a traditional perspective, software failures are primarily the result of errors in the code causing the software to behave in a manner inconsistent with its performance requirements. All other errors are considered “human error.” However, software-related safety problems can often arise as a misunderstanding of what the software should do to help clinicians accomplish their work. The representative of an electronic health record (EHR) vendor testified to the committee that a user error occurs when a human takes some action under a certain set of circumstances that is inconsistent with the action prescribed in the software’s documentation.3 In this view, the software works as designed and the user makes an error. However, such views can have negative consequences. In examining the traditional perspective, a National Research Council report (NRC, 2007) concluded that:

As is well known to software engineers (but not to the general public), by far the largest class of problems arises from errors made in the eliciting, recording, and analysis of requirements. A second large class of problems arises from poor human factors design. The two classes are related; bad user interfaces usually reflect an inadequate understanding of the user’s domain and the absence of a coherent and well-articulated conceptual model.

By blaming users for making a mistake and not considering poor human factors design, the organization accepts responsibility only for training the individual user to do better the next time similar circumstances arise. But the overall system and the interactions among system components that might have led to the problem remain unexamined. Better training of users is important but it does not by itself address issues arising because of the overall system’s operation. Other parts of the sociotechnical system (such as organization, technology, and processes) could also have increased the likelihood of an error occurring (see Box 3-2). Of particular importance to technology vendors is that adopting this unsophisticated oversimplification that user error is the principal actionable cause essentially exonerates any technology that may be involved. If the problem arises because the user failed to act in accordance with the technology’s documentation, then it is the clinician’s organization that must take responsibility for the problem, and vendors may feel that they need not take responsibility for making fixes for other users that may encounter a similar situation or for improving the technology to make serious errors less likely.

Applying the concept of the sociotechnical system of which technology is a part, safety is a property of the overall system that emerges from the interaction between its various components. By itself, software—such as an EHR—is neither safe nor unsafe; what counts from a safety perspective is how it behaves when in actual clinical use.

BOX 3-2

Mismanaging Potassium Chloride (KCl) Levels

Part II: Medication Errors Can Result from Poor Design

Although the physician in Box 3-1 typed instructions as free text, the physician had also attempted to use the computerized provider order entry (CPOE) system’s coded order entry fields. However, the user interface design made it difficult to correctly write the orders. The CPOE system allowed KCl to be prescribed either by the length of time KCl is administered through an intravenous (IV) drip or by dosage through an IV injection. The CPOE system’s interface headings, denoting the two types of orders, were subtle and hard to see; therefore, the physician may have been confused as to whether she should place her KCl IV drip order by volume or by time. Further complicating matters, a coded entry field for drip orders was labeled “Total Volume,” which the physician may have interpreted as the total volume of fluid the patient will receive. However, the “Total Volume” field is meant to indicate the size of the IV drip bags (Horsky et al., 2005).

Here, instead of the CPOE system assisting the clinician in calculating the correct dosages, the system’s poorly designed interface serves as a hindrance and increases the cognitive workload placed on clinicians. The CPOE system’s design made it easy for clinicians to make a mistake. Vendors may claim that the software worked as designed and that clinicians should be better trained to use the design. However, it may be much more effective to appropriately design and simplify the CPOE system than to train all the clinicians to use a needlessly complicated system that requires an increased cognitive workload and is prone to misinterpretations. A safer system would be designed to make it difficult for a clinician to make a mistake that could result in harm to the patient.

By viewing technology as part of a sociotechnical system, a number of realizations can be made. First, although individual components of a system can be highly reliable,4 the system as a whole can still yield unsafe outcomes. Second, while no component of any system is perfect or 100 percent reliable, even “unreliable” components can be assembled into a system that operates at an acceptable level of reliability at the systems level even in the face of individual system element failures. Third, the distinction between “human error” and “computer error” is misleading. Human errors should be seen as the result of human variability, which is an integral element in human learning and adaptation (Rasmussen, 1985). This approach considers the human-task or human-machine mismatches as a basis for analysis and classification of human errors, instead of solely tasks or machines (Rasmussen et al., 1987). These mismatches could also stem from inappropriate work conditions, lack of familiarity, or improper (human-machine) interface design.

3 Testimony on February 24, 2011, to the committee by a health IT vendor defining “user error.”

4 Reliability is used here as behavior that is consistent with the stated performance requirements of those components. Reliability does not speak to whether those stated requirements are correct.
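The second realization above, that individually “unreliable” components can be assembled into an acceptably reliable system, can be illustrated with textbook reliability arithmetic. The sketch below is not taken from the report. It assumes that component failures are statistically independent, which is a strong simplification given the chapter's point that failures in complex systems interact, and the numbers and function names are hypothetical. It also speaks only to reliability in the narrow sense of meeting stated requirements, not to whether the resulting system is safe.

```python
def series_reliability(component_reliabilities):
    """Every component must work: multiply the individual reliabilities.

    Assumes independent failures (an illustrative simplification).
    """
    result = 1.0
    for r in component_reliabilities:
        result *= r
    return result


def parallel_reliability(component_reliabilities):
    """Any one working component suffices: 1 minus the chance that all fail.

    Assumes independent failures (an illustrative simplification).
    """
    all_fail = 1.0
    for r in component_reliabilities:
        all_fail *= 1.0 - r
    return 1.0 - all_fail


# Fifty components, each 99 percent reliable, all required to work.
chain = series_reliability([0.99] * 50)

# Three redundant components, each only 90 percent reliable.
redundant = parallel_reliability([0.90] * 3)

print(f"series of 50 at 0.99:   {chain:.3f}")
print(f"3 redundant at 0.90:    {redundant:.3f}")
```

Under these assumptions the long series of highly reliable parts works only about 60 percent of the time, while three redundant parts of modest reliability work about 99.9 percent of the time. The arrangement of components, and not only their individual quality, shapes system-level behavior.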

From the sociotechnical perspective, safety issues commonly arise from how practitioners interact with the technology in question. “Human error” in using technology can be more properly regarded as an inconsistency between the user’s expectations about how the system will behave and the assumptions made by technology designers about how the system should behave. In some cases, the system is designed in a way that induces human behavior that in turn results in unsafe system behavior. Human behavior is a product of the environment in which it occurs; to reduce or to manage human error, the environment in which the human works must be changed (Leveson, 2009). This implies thoughtful design that can proactively mitigate the risk of harm when health IT is used.

Finally, when complex systems are involved, a superficial event-chain model of an unsafe event is inadequate for understanding such events but is often employed by unsophisticated organizations. This model is described in terms of event A (the unsafe event) being caused by event B (the “proximate cause”), which was caused by event C, and so on until the “root cause” is identified.

Many problematic events involving complex systems cannot be ascribed to a single causative factor. Although the model described above provides some information as to the cause, it fails to account both for the conditions that allowed the preceding events to occur and for the indirect, usually systemic factors that increase the likelihood of these conditions occurring. Furthermore, because the decision to terminate the chain at any given event is essentially arbitrary, the single root cause is frequently ascribed to human error, as though possible system-induced causes of human error need not be further investigated. Investigations that find human error to be the root cause, while common, are usually inadequate and result in corrective action that essentially directs people to be “more careful” rather than to examine the constellation of contributing factors that make a so-called human error more likely (see Box 3-3).

The primary lesson from this perspective on safety can be described as the following: “Task analysis focused on action sequences and occasional deviation in terms of human errors should be replaced by a model of behavior-shaping mechanisms in terms of work system constraints, boundaries of acceptable performance, and subjective criteria guiding adaptation to change” (Rasmussen, 1997).

BOX 3-3

Mismanaging Potassium Chloride (KCl) Levels

Part III: Medication Errors Caused by Multiple Factors

After receiving an excessive amount of KCl under the diagnosing physician’s order, the patient described in Box 3-1 was prescribed an additional dose of KCl by a second physician. Although told by the first physician to review the patient’s KCl levels, the second physician was unaware, for several reasons, that the patient was already being administered an excessive amount of KCl. These factors included the following:

1. The previous physician did not explicitly inform the second physician that KCl was already ordered.

2. The KCl IV drip did not appear in the computerized provider order entry (CPOE) system’s medication list because IV drips are not displayed in the CPOE’s medication list.

3. The CPOE only showed the patient’s lab results before the administration of the KCl drip ordered by the first physician; therefore, the second physician only saw the patient’s previously low levels of KCl (Horsky et al., 2005).

Although the second physician had checked the previous physician’s notes, medication history, and lab reports, there was no indication that the patient was already receiving KCl. These factors, including the poorly designed CPOE interface, may not be identified in a single event chain, yet each independently contributed to the patient’s excessive KCl levels. Looking for a single “root cause” responsible for the patient’s adverse condition would fail to address the other factors that may continue to put future patients at risk.

THE NEED FOR AN EXPLICIT EVIDENCE-BASED CASE FOR SAFETY IN SOFTWARE5

Safety has no useful meaning for software until a clear understanding is achieved regarding what the software should and should not do and under what circumstances these things do and do not happen. (In this context, safety refers to claimed properties of software that make it safe enough to use for its intended purpose.)

When safety is at issue, the burden of proof falls on the software developer to make a convincing case that the software is safe enough for use. The audience for the case differs depending on the situation at hand. For example, it may be the software vendor who must make the safety case to a prospective purchaser of its products, or to an entity that might provide a safety certification for a given product. Once the software has been developed, installed, and the relevant processes and procedures put into place for proper software use, it may be the health care organization that must make the safety case for the overall system (that is, the software as installed into a larger sociotechnical system) to an external oversight organization responsible for ensuring the safe operation of care providers.

5 This section is based in large part on Software for Dependable Systems (NRC, 2007).

Such a case cannot be made by relying primarily on adherence to particular software development processes, although such adherence may be part of a case for safety. Nor can the safety case be made by relying primarily on a thorough testing regimen. Rigorous development and testing processes are critical elements of software safety, but they are not sufficient to demonstrate it. Developing a comprehensive case for safety that can be independently assessed depends on the generation, availability, communicability, and clarity of evidence. Three elements are necessary to develop a case for safety:

• Explicit claims of safety. No software is safe in all respects and under all conditions. Thus, to claim software is safe, an explicit articulation is needed of the requirements and properties the software is expected to possess and exhibit in use and the assumptions about the environment in which the software operates and usage models upon which such a claim is contingent. Explicit claims of safety further depend on the inclusion of a hazard analysis. Hazard analyses ought to identify and assess potential hazards and the conditions that can lead to them so that they can be eliminated or controlled (Leveson, 1995).

• Evidence. A case for safety must argue that the required behavioral properties of the software are a consequence of the combination of the actual technology involved (that is, as implemented), users, the processes and procedures they use, and other aspects of the larger sociotechnical system within which the technology is embedded. All domains of the sociotechnical system must be taken into account in the development of a case for safety. Evidence acquired from testing the software will be part of this case, but “lab” testing alone is usually insufficient. The case for software safety typically combines evidence from testing with evidence from analysis. Other evidence also contributes to the safety case, including the qualifications of the personnel involved in the system’s development, the safety and quality track record of the organizations in building the system’s components, integration of the components into the overall system, and the process through which the software was developed. Furthermore, the safety case must present evidence that use of the technology in the actual work environment by real clinicians with real patients demonstrates functioning without a level of malfunction greater than that specified in the design requirements.

• Expertise. Expertise—in software development and in the relevant clinical domains, among other things—is necessary to build safe software. Furthermore, those with expertise in these different contexts must communicate effectively with each other and be involved at every step of the design, development, and component integration process.
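One way to make the three elements above concrete is to treat a safety case as a structured record that can be checked for obvious omissions. The sketch below is an assumption-laden illustration, not a format defined by NRC (2007) or by this report: the class names, the evidence kinds, the two expertise labels, and the example claim are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class SafetyClaim:
    statement: str
    assumptions: list = field(default_factory=list)  # environment and usage assumptions
    hazards: list = field(default_factory=list)      # output of a hazard analysis


@dataclass
class Evidence:
    kind: str          # hypothetical labels, e.g. "testing", "analysis", "field use"
    description: str


@dataclass
class SafetyCase:
    claims: list = field(default_factory=list)
    evidence: list = field(default_factory=list)
    expertise: set = field(default_factory=set)

    def gaps(self):
        """List omissions relative to the three elements (claims, evidence, expertise)."""
        found = []
        if not self.claims:
            found.append("no explicit safety claims")
        for claim in self.claims:
            if not claim.assumptions:
                found.append(f"claim lacks stated assumptions: {claim.statement}")
            if not claim.hazards:
                found.append(f"claim lacks a hazard analysis: {claim.statement}")
        kinds = {item.kind for item in self.evidence}
        if not kinds:
            found.append("no evidence")
        elif kinds == {"testing"}:
            found.append("evidence rests on testing alone")
        for domain in ("software development", "clinical"):
            if domain not in self.expertise:
                found.append(f"missing expertise: {domain}")
        return found


case = SafetyCase(
    claims=[SafetyClaim(
        "Duplicate KCl orders are flagged before signing",
        assumptions=["all active orders, including IV drips, are visible to the check"],
    )],
    evidence=[Evidence("testing", "vendor lab test suite")],
    expertise={"software development"},
)
for gap in case.gaps():
    print(gap)
```

A check of this kind can only flag missing pieces. Whether the claims are the right ones and whether the evidence is convincing still depends on independent assessment.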

When software is complex, it can be difficult to determine its safety properties. An analytical argument for safety is easier to make when global safety properties of the software can be inferred from an analysis of the safety properties of its components. Such inferences are more likely to be possible when different parts of the system are designed to operate independently of each other.

Achieving simplicity is not easy or cheap, but simpler software is much easier for independent assessors to evaluate, and the rewards of simplicity far outweigh its costs (NRC, 2007). Pitfalls to avoid include interactive complexity, in which components may interact in unanticipated ways, and tight coupling, in which a single fault cannot be isolated but instead causes other faults that cascade through the software. Avoiding these characteristics both reduces the likelihood of failure and simplifies the safety case to be made.

Most important to developing a plausible case for safety is the stance that developers take toward safety. A developer is better able to make a plausible safety case when it is willing to provide safety-related data from all phases in the components’ or software’s life cycle, to ensure the clarity and integrity of the data provided and the coherence of the safety case made, and to accept responsibility for safety failures. One report goes so far as to assert that “no software should be considered dependable if it is supplied with a disclaimer that withholds the manufacturer’s commitment to provide a warranty or other remedies for software that fails to meet its dependability claims” (NRC, 2007).

With respect to health IT, it is not often that health care organizations make an explicit case for the safety of health IT in situ, and not often that vendors make an explicit case for the safety of their health IT products.

THE (MIS)MATCH BETWEEN THE ASSUMPTIONS OF SOFTWARE DESIGNERS AND THE ACTUAL WORK ENVIRONMENT

Generally, health IT software is created by professionals in software development, not by clinicians as content experts. Content experts are usually provided with multiple opportunities to offer input into the performance requirements that the software must meet (e.g., users brief software developers on how they perform various tasks and what they need the software to do, and they have opportunities to provide feedback on prototypes before designs are finalized). Traditionally, technology development follows a process where users of the technology articulate their needs (or performance requirements) to developers. Developers create technology that performs in accordance with their understanding of user needs. Users then test the resulting technology and provide feedback to developers. Developers provide a new version that incorporates that feedback and, when users are satisfied with the technology, the developer assumes it is suitable for use in the user’s environment and delivers the technology. However, software developers and clinicians generally come from different backgrounds, making communication of ideas more difficult. As a result, these processes for gaining input rarely capture the full richness and complexity of the actual operational environment in which health professionals work, which varies enormously from setting to setting and practitioner to practitioner.6

Deviations Versus Adherence to Formal Procedures

Indeed, in most organizations, guidance provided by formal procedures is rarely followed exactly by health professionals. Although this lack of user predictability can dramatically increase the difficulty for the software developer to deliver the degree of functional robustness required, deviations between work-as-designed and work-in-practice (work-in-practice is sometimes regarded as a workaround) are not necessarily harmful or negative. Such deviations are necessary under circumstances not anticipated by rules governing work-as-designed. In some cases, deviations are necessary if work is to be performed at all (Kahol et al., 2011).

Deliberate deviations between work-as-designed and work-in-practice are smallest when significant changes are made to the work environment, and the introduction of new technology usually counts as a significant change. Deviations are smallest during this period of introduction because practitioners are unfamiliar with the new technology and are learning about its capabilities for the first time. But, as practitioners become more familiar with the new technology, the limitations imposed by the new technology become more apparent in the local work environment. Practitioners thus develop modified (possibly unsanctioned) practices for using the technology that account for the on-the-ground requirements of doing work; this process is sometimes known as “drift” (Snook, 2002) or workarounds. It reflects the phenomenon of local rationality, in which practitioners are all doing reasonable things given their limited perspective, yet the modified practices result in poor outcomes (Woods et al., 2010).

6 For example, Suchman (1987) argues that user actions depend on a variety of circumstances that are not explicitly related to the task at hand. In practice, people behave differently when others are present (and can be asked for advice about what actions to take) or when they are unusually pressed for time (in which case they may take possibly risky shortcuts).

Sometimes modified practices are needed to manage conflicting goals that arise in an operational environment (e.g., pressures for speedy resolution versus pressures for collecting more data) (Woods et al., 2010). Under some circumstances, adherence to prescribed procedures can indeed result in unsafe outcomes. Although modified practices may be required to make the overall system safer, the modified practices themselves can sometimes result in unsafe outcomes. Almost by definition, the situations for which the use of the modified practices is unsafe occur only rarely. Practitioners adopt the modified practices to cope more effectively with frequently occurring situations, but the modified practices have mostly not been developed with the rarely occurring situation in mind.

Herein lies a critical safety paradox. Practitioners following the prescribed procedures may be unable to complete all of their work, which may motivate them to use nonstandard or unapproved approaches. If a disaster occurs because they did not follow the prescribed procedures in a given instance, they may be blamed for not following procedures. As discussed previously, unsafe outcomes result not from human failures per se but rather from the way the various components of the larger sociotechnical system interact with each other.

Clumsy Automation

A particularly relevant illustration of mismatches between the assumptions of software designers and the actual work environment can be seen in the notion of clumsy automation (Woods et al., 2010). Clumsy automation “creates additional tasks, forces the user to adopt new cognitive strategies, [and] requires more knowledge and more communication at the very times when the practitioners are most in need of true assistance” (see Box 3-4). At such times, practitioners can least afford to spawn new tasks and meet new memory demands to fiddle with the technology, and such results “create opportunities for new kinds of human error and new paths to system breakdown that did not exist in simpler systems” (Woods et al., 2010).

Clumsy automation reflects poor coordination between human users and information technology. Even clumsy automation often offers benefits when user workload is low (which is why systems that offer clumsy automation are so often accepted initially), but the costs and burdens of such automation become most apparent during periods of high workload, high criticality, or high-tempo operations.

BOX 3-4

Mismanaging Potassium Chloride (KCl) Levels

Part IV: Poor Performance Due to Clumsy Automation

The computerized provider order entry (CPOE) interface described in Box 3-1 did not have a single screen that listed previous medication and drip orders, up-to-date laboratory results, and whether the patient was currently receiving a KCl drip (Horsky et al., 2005). Clinicians therefore may need to switch between different display windows to gather all the information needed to complete KCl calculations. This requires the practitioner to enter keying sequences that are quite arbitrary and to remember what was on previous screens when switching between them. The practitioner becomes the de facto integrating agent for all such data and hence bears the brunt of all the cognitive demands required for such integration (Woods et al., 2010). Furthermore, practitioners who work in a chaotic, interruption-driven environment must often turn their efforts to other tasks before they have completed the task on which they are currently working. In such an inadequately designed environment, it is easy for a practitioner to lose context, to get lost in a multitude of windows, and to regain context of the interrupted task only partially, resulting in a higher risk of patient harm.

The use of a computerized interface—usually a video display screen—to display data can provide examples of the phenomenon of clumsy automation. Poorly designed computerized interfaces tend to make interesting and noteworthy things invisible when they hide important data behind a number of windows on the screen (Woods et al., 2010). Thus, practitioners are forced to access data serially even when the data are highly related and are most usefully viewed in parallel.

SAFETY REPORTING AND IMPROVEMENT

The safety of a system may degrade over time if attention is not given to ensuring system safety. Over time, technology changes as fixes and upgrades are made to the applications and the infrastructure on which those applications run; these changes are often not systems-based and may be made without considering their impact on operational tasks. Experienced personnel depart and inexperienced personnel arrive. External regulations and institutional priorities both evolve, and thus operating procedures change.

When such changes are large, they are often accompanied by formal documentation that modifies existing work-as-designed procedures. But more often, changes to work-in-practice occur with little formal documentation.

As the system’s work-in-practice drifts farther away from work-as-designed, the likelihood of certain unsafe outcomes increases, as discussed above. For this reason, safety-conscious overseers of the system will audit the system from time to time so that they can identify budding safety problems and take action to forestall them.

But it is hard to know where to look for problems in a system that appears to be performing safely. Thus, all parties responsible for safety must make it easy for practitioners to report circumstances that result in actual harm and also to report close calls that could have resulted in harm if they had not been caught in time.

In addition, because the society in which the U.S. health care system is embedded (that is, society writ large) generally seeks to apportion responsibility and fault for actual harm, health professionals—who are in the best position to know what actually happened in any given accident—often have incentives to refrain from reporting fully or at all when unsafe conditions occur. Thus, information that is needed to improve the safety of health care—and of health IT-assisted care in particular—is likely to be systematically suppressed and underreported. Reporting mechanisms must therefore be structured to offer countervailing incentives for such reporting. Safety analysts often point to the “Just Culture” principles for dealing with incident reporting (Global Aviation Information Network Working Group E, 2004; Marx, 2001; Reason, 1997). Based on the notion that the safety afforded by an organization can benefit more by learning from mistakes than by punishing people who make them, a Just Culture organization encourages people to report errors and to suggest changes as part of their normal everyday duties. People can report without jeopardy, and mistakes or incidents are seen not as failure but as an opportunity to focus attention and to learn. Thus, information provided in good faith is not used against those who report it.

The Just Culture organization recognizes that most people are genuinely concerned for the safety of their work, and it takes advantage of the fact that when reporting of problems leads to visible improvements, employees need few other motivations or exhortations to report. In Leveson’s words, “empowering people to affect their work conditions and making the reporters of safety problems part of the change process promotes their willingness to shoulder their responsibilities and to share information about safety problems.… Blame is the enemy of safety… [and] when blame is a primary component of the safety culture, people stop reporting incidents” (Leveson, 2009).

The idea that safety is an emergent property of a sociotechnical system is easy to acknowledge in the abstract. But in fact, the implications of taking such a view challenge many widespread practices found in health IT vendors and health care-providing organizations. Vendors often focus on the role of technology when safety is compromised, and they pledge to fix any technology problems thus found without addressing the human-interaction component in the overall functioning of the technology as an inextricable component of health IT as a clinical tool. Because complex systems almost always fail in complex ways (a point noted in safety examinations in other fields7), health care organizations must focus on identifying the conditions and factors that contribute to safety compromises. They must pledge to address these conditions and factors in ways that reduce the likelihood of unsafe events rather than superficially focusing only on single root causes. If organizations fail to acknowledge that the technology-related problems they encounter are a product of larger systems-based issues, the countermeasures they implement will fall far short of reducing risk to the patient.

The fact that a sociotechnical system has multiple components that interact with each other in unpredictable ways means that an isolated examination of any one of these components will not yield many reliable insights into the behavior of the examined component as it operates in actual practice. This point has implications for technology developers in particular, who must develop products that can fit well into the operational practices and workflow (which are usually nonlinear) of many different health care organizations. The next chapter suggests various levers with which to improve safety.

Baker, J. A. 2007. The report of the BP U.S. Refineries Independent Safety Review Panel. http://www.bp.com/liveassets/bp_internet/globalbp/globalbp_uk_english/SP/STAGING/local_assets/assets/pdfs/Baker_panel_report.pdf (accessed September 28, 2011).

Cohen, T., B. Blatter, C. Almeida, and V. L. Patel. 2007. Reevaluating recovery: Perceived violations and preemptive interventions on emergency psychiatry rounds. Journal of the American Medical Informatics Association 14(3):312-319.

Fox, W. M. 1995. Sociotechnical system principles and guidelines: Past and present. Journal of Applied Behavioral Science 31(1):91-105.

Global Aviation Information Network Working Group E. 2004. A roadmap to a just culture: Enhancing the safety environment. http://204.108.6.79/products/documents/roadmap%20to%20a%20just%20culture.pdf (accessed August 9, 2011).

Harrison, M. I., R. Koppel, and S. Bar-Lev. 2007. Unintended consequences of information technologies in health care—an interactive sociotechnical analysis. Journal of the American Medical Informatics Association 14(5):542-549.

7 This point was noted in the report of the Board investigating the Shuttle Columbia disaster (http://spaceflightnow.com/columbia/report/006boardstatement.html) and in the investigation of the Deepwater oil rig explosion (http://www.deepwaterinvestigation.com/go/doc/3043/1193483/).

Horsky, J., G. J. Kuperman, and V. L. Patel. 2005. Comprehensive analysis of a medication dosing error related to CPOE. Journal of the American Medical Informatics Association 12(4):377-382.

IOM (Institute of Medicine). 2001. Crossing the quality chasm: A new health system for the 21st century. Washington, DC: National Academy Press.

Kahol, K., M. Vankipuram, V. L. Patel, and M. L. Smith. 2011. Deviations from protocol in a complex trauma environment: Errors or innovations? Journal of Biomedical Informatics 44(3):425-431.

Leveson, N. 1995. Safeware: System safety and computers. Reading, MA: Addison-Wesley.

Leveson, N. 2009. Engineering a safer world: Systems thinking applied to safety. http://sunnyday.mit.edu/safer-world/safer-world.pdf (accessed June 1, 2011).

Marx, D. 2001. Patient safety and the “just culture”: A primer for health care executives. New York, NY: Columbia University. Available at http://psnet.ahrq.gov/resource.aspx?resourceID=1582.

NRC (National Research Council). 2007. Software for dependable systems. Washington, DC: The National Academies Press.

Orasanu, J., L. H. Martin, and J. Davison. 2002. Cognitive and contextual factors in aviation accidents: Decision errors. In Linking expertise and naturalistic decision making, edited by E. Salas and G. Klein. Mahwah, NJ: Erlbaum Associates. Pp. 209-226.

Patel, V. L., A. W. Kushniruk, S. Yang, and J.-F. Yale. 2000. Impact of a computer-based patient record system on data collection, knowledge organization, and reasoning. Journal of the American Medical Informatics Association 7(6):569-585.

Rasmussen, J. 1985. Trends in human reliability analysis. Ergonomics 28(8):1185-1195.

Rasmussen, J. 1997. Risk management in a dynamic society: A modelling problem. Safety Science 27(2-3):183-213.

Rasmussen, J., K. Duncan, and J. Leplat. 1987. New technology and human error. New York: John Wiley & Sons.

Reason, J. 1997. Managing the risks of organizational accidents. Hants, England: Ashgate.

Snook, S. A. 2002. Friendly fire: The accidental shootdown of U.S. Blackhawks over northern Iraq. Princeton, NJ: Princeton University Press.

Suchman, L. 1987. Plans and situated actions: The problem of human machine communication. New York: Cambridge University Press.

Woods, D., S. Dekker, R. Cook, L. Johannesen, and N. Sarter. 2010. Behind human error, 2nd ed. Burlington, VT: Ashgate.