Identifying and Improving Students’ Conceptual Understanding in Science and Engineering

One way to conceptualize undergraduate education is as a process of moving students along the path from novice toward expert understanding within a given discipline. To achieve this goal, it is important to begin by identifying what students know, how their ideas align with normative scientific and engineering explanations and practices (i.e., expert knowledge), and how to change those ideas that are not aligned.

Undergraduate science and engineering learning, like all learning, occurs against the backdrop of prior knowledge that students bring to the learning experience. Chi (2008) presents three levels of prior knowledge. In some situations, students may have no prior knowledge of the topic at hand. For example, at the start of a semester, students in an introductory Earth science class know the general concept of time yet have no knowledge about the significance of the geologic time periods. In such situations, learning can be viewed as adding new knowledge. In other situations, students may have correct but incomplete knowledge. For example, students in an introductory chemistry class may remember that the periodic table of the elements is arranged such that the elements in a particular column all have similar chemical properties, but that might be the extent of their knowledge about the information to be found in the periodic table. In these cases, learning can be conceived of as filling in the gaps. Finally, students may have incorrect knowledge that conflicts with the material to be learned, such as when students in an introductory biology class believe that lizards are more closely related to frogs than to mammals (Morabito, Catley, and Novick, 2010). In this case, learning involves conceptual change.

Research indicates that students at all levels, from preschool through college, enter instruction with various commonsense but incorrect interpretations of scientific and engineering concepts and skills (e.g., Chinn and Brewer, 1993), such as the well-known misconception¹ that the change in seasons is caused by changes in Earth’s distance from the sun, rather than the tilt of Earth’s axis (Schneps and Sadler, 1987). Some of these ideas are more firmly rooted than others, and thus are more resistant to change (Vosniadou, 2008a).

This chapter focuses on what is known about college students’ conceptual understanding of science and engineering. To place discipline-based education research (DBER) in context, the chapter begins with a brief consideration of the broader knowledge base on students’ conceptual understanding, including different theoretical perspectives. The chapter then summarizes DBER on conceptual understanding and on instructional practices to promote conceptual change and concludes with a summary of the key findings and directions for future research.

In this and subsequent chapters, the committee uses expert-novice differences and understandings as a framework for conceptualizing DBER findings. However, we recognize that expertise lies on a continuum, and we were guided by a relevant maxim from cognitive science that it takes 10 years for someone to acquire expertise in a domain (e.g., Ericsson, Krampe, and Tesch-Römer, 1993). Students are not expected to become experts within a single class, or even across the four years of their undergraduate education. They are, however, expected to progress along the path of increasing expertise. Thus, our frame of reference for this discussion is focused on helping students move toward the more expert end of the continuum.

DIFFERENT PERSPECTIVES ON CONCEPTUAL UNDERSTANDING

Understanding what students know about science is the focus of considerable inquiry in cognitive science, educational psychology, and K-12 science education research (National Academy of Sciences, National Academy of Engineering, and Institute of Medicine, 2005; National Research Council, 1999, 2007). A key principle emerging from this research is that:

Humans are viewed as goal-directed agents who actively seek information. They come to formal education with a range of prior knowledge, skills, beliefs, and concepts that significantly influence what they notice about the environment and how they organize and interpret it. This, in turn, affects their abilities to remember, reason, solve problems, and acquire new knowledge. (National Research Council, 1999, p. 10)

__________________

¹ In this report, we use the term “misconceptions” to mean understandings or explanations that differ from what is known to be scientifically correct. We recognize that other research refers to these explanations as “alternate conceptions,” “prior understandings,” or “preconceptions,” and that the different terms can reflect different perspectives. When we use the term “misconceptions,” we are following the convention of most DBER on this topic.

Not all of students’ ideas align with accepted science and engineering explanations, even if they are sensible and rooted in experience (National Academy of Sciences, National Academy of Engineering, and Institute of Medicine, 2005). Some research has focused on categorizing incorrect knowledge. In this regard, Chi (2008) argues that incorrect knowledge can be assigned to one of three levels, and that the approach to changing incorrect knowledge depends on the level of that knowledge:

1. Incorrect beliefs at the level of a single idea. An example is the false belief that all blood vessels have valves (Chi, 2008). In situations such as these, refutation might help students to change their beliefs.

2. Flawed mental models representing an interrelated set of concepts. For example, many students have a mental model of the human circulatory system as a single loop, rather than the correct model of a double loop (Chi, 2008; Pelaez et al., 2005). In these types of cases, multiple incorrect beliefs need to be corrected, ideally leading to the transformation of students’ mental models. Although instruction is often successful in promoting such transformation, some students may instead assimilate correct concepts into their flawed mental model when those particular concepts do not directly contradict their model (also see Chinn and Brewer, 1993).

3. Assignment of core concepts to laterally or ontologically inappropriate categories. Examples of this type of incorrect knowledge include categorizing mushrooms as nonliving rather than living or believing that force is a substance-like entity that can be possessed, transferred, and dissipated, rather than a process. Such misconceptions have been found to be highly robust and resistant to change (Chi, 2005). In these cases, instruction needs to be focused at the categorical level, first teaching students the nature of the relevant categories so they can understand the concept in question as a member of that appropriate category.

Chi’s (2008) tripartite taxonomy represents an eclectic approach to thinking about students’ incorrect knowledge. Although she makes the forceful claim that most (perhaps all) robust misconceptions are due to lateral or ontological miscategorizations, other researchers are unconvinced that all instances of robust misconceptions can be classified as categorical mistakes. Indeed, a vibrant current area of research in cognitive science concerns the nature of students’ initial, incorrect understandings of scientific concepts and phenomena (see Vosniadou, 2008b, for a thorough review of this literature).

One perspective is the “theory view,” which suggests that students’ concepts in a particular domain are coherent, systematic, and interrelated, essentially having the status of a naïve “theory.” Although proponents of this view take different stances on the nature of such naïve theories, they share the view that students’ knowledge is coherent (Vosniadou, Vamvakoussi, and Skopeliti, 2008). A contrasting perspective, the “pieces” view, proposes that students’ naïve concepts are fragmented, piecemeal, and highly contextualized (diSessa, 2008). Although there may be some coherence across the numerous pieces of knowledge, this coherence does not rise to the level of even a naïve theory. Of course, these different perspectives on the nature of students’ intuitive scientific knowledge may both be true, but for different areas of science. diSessa, Gillespie, and Esterly (2004) suggest that the extent to which everyday experiences are connected to a particular set of scientific beliefs may be relevant, with the naïve theory view being more relevant when experiential knowledge is low and the pieces view being more plausible when it is high.

OVERVIEW OF DISCIPLINE-BASED RESEARCH ON CONCEPTUAL UNDERSTANDING

Similar to scholars in other fields, DBER scholars have devoted considerable effort to identifying, documenting, and analyzing students’ conceptual understanding (and misunderstandings). Indeed, investigations into the causes of students’ reasoning difficulties and inaccurate beliefs about the physical world have dominated the physics education research literature since the 1970s (see Bailey and Slater, 2005, and Docktor and Mestre, 2011, for reviews, and see McDermott and Redish, 1999, for a list of approximately 115 studies related to misconceptions in physics). Likewise, with approximately 120 chemistry papers published on this topic between 2000 and 2010, students’ conceptual understanding is one of the most active lines of inquiry in chemistry education research (see Barke, Hazari, and Yitbarek, 2009²). Considerably less research has been conducted on students’ conceptual understanding in engineering (16 studies published between 2000 and 2010 as identified by Svinicki, 2011), biology (17 studies published between 2001 and 2010 as identified by Dirks, 2011; see Tanner and Allen, 2005, for a review³), the geosciences (79 studies published between 1982 and 2010 as identified by Cheek, 2010), and astronomy (Bailey and Slater, 2005). Although most of these studies focus on courses taken by majors and nonmajors in the first two years of college, a limited body of research also exists on upper division and graduate courses.

__________________

² For a web-based bibliography of students’ conceptions, see http://www.ipn.uni-kiel.de/aktuell/stcse/stcse.html [accessed March 26, 2012].

Research Focus

For each field of DBER, initial research in this area often has involved cataloguing incorrect understandings and beliefs and identifying those that are more difficult to change than others. Across the disciplines, much of this research is predicated on the assumption that instructors need to know what their students already know, because prior knowledge can either interfere with or facilitate new learning (National Research Council, 1999). This research is sometimes coupled with instructional techniques that are designed to move students toward a more accurate understanding of the concepts at hand (see “Instructional Strategies to Promote Conceptual Change” in this chapter). When linked to the primary instructional goals of a discipline, these efforts represent an important first step in improving student learning. Most DBER on conceptual understanding in engineering, biology, the geosciences, and astronomy currently has this focus. As research on conceptual understanding within a discipline progresses, researchers seek linkages among the existing catalog of misconceptions. Identifying an underlying structure allows for the eventual development of more general instructional strategies that have the potential to address large classes of misconceptions rather than addressing them one at a time. Research on student understanding and knowledge construction in chemistry and physics has this focus. In physics, two examples of this research are facets and P-Prims (diSessa, 1988; Minstrell, 1989), both of which are based on the perspective that student knowledge consists of fragmented pieces. In chemistry, a different perspective (Ausubel, Novak, and Hanesian’s [1978] construct of meaningful learning) has proved useful for identifying fragments of information that students have memorized but not connected in a coherent, conceptual framework.

__________________

³ Several web-based compilations of biology misconceptions also exist. See, for example, http://departments.weber.edu/sciencecenter/biologypercent20misconceptions.htm and http://teachscience4all.wordpress.com/2011/04/08/aaas-science-assessment-beta-items-for-assessing-misconceptions/ [accessed February 28, 2012].

Methods

DBER scholars use a variety of assessment tools and research methods to measure students’ conceptual understanding. These tools and methods include concept inventories (CIs); in-depth interviews, concept maps, and concept sketches; surveys; and observations of students. In this section, we describe these methods, their strengths, and their limitations in terms of generating insights into students’ understanding of concepts that are central to a discipline. Because these methods are commonly used to study a variety of topics in DBER, including those discussed in Chapters 5, 6, and 7, we elaborate on them here.

Concept Inventories

CIs are used to assess students’ preconceptions, to measure changes in response to a particular treatment, and to compare learning gains across individual courses dealing with a particular area of the discipline. Most commonly used in introductory courses, CIs are generally in a multiple-choice format, with incorrect responses (distractors) based on common misunderstandings or erroneous beliefs that have been identified by the literature (D’Avanzo, 2008; Libarkin, 2008). (See Box 4-1 for a description of one approach to developing a CI.) One exception is engineering, where the process of developing engineering CIs is generating much of the knowledge about student misconceptions in engineering, instead of the other way around (Reed-Rhoads and Imbrie, 2008).

With a long history in formative assessments (Treagust, 1988) and earlier lines of research, CIs initially gained traction in introductory physics with the development and widespread use of the Force Concept Inventory (Hestenes et al., 1992). CIs have since become increasingly common in other disciplines. In 2008, one researcher estimated that 23 CIs were in use across various science domains, with several others under development (Libarkin, 2008). The scope and quality of these CIs vary, as does the extent to which they have been validated.

Concept inventories (e.g., Mulford and Robinson, 2002) are not as widely used in chemistry as the Force Concept Inventory is in physics. In chemistry, misconceptions mostly have been identified by diagnostic assessments such as those developed by Treagust (1988) and conceptual exams developed by the American Chemical Society Examinations Institute.⁴

A particular strength of CIs is that their development often includes an identification of the most important concepts and learning goals for a discipline or subdiscipline (Libarkin, 2008; Smith, Wood, and Knight, 2008; see Box 4-1). When they assess what experts in the field deem as central concepts, CIs provide a helpful structure for future research on conceptual understanding, and for the development of interventions to promote understanding that is aligned with normative scientific explanations. CIs are also useful because they can be used in large classes and across a range of students, allowing for greater generalizability of results; for longitudinal studies of the prevalence of certain misconceptions; and for disaggregating responses from multiple institutions along such dimensions as class size, type of institution, geographic setting, and demographic group (McConnell et al., 2006). However, as with all multiple-choice tests, CIs necessarily address a relatively coarse level of knowledge and provide no guarantee that a student who answers such a question understands the concept. Research has indeed shown that students may answer CI questions correctly even when they do not understand the concept (O’Brien, Lau, and Huganir, 1998). In addition, only erroneous ideas that are specifically targeted by the CI can be examined; CIs do not uncover new misunderstandings. Moreover, in the case of the Force Concept Inventory, scholars have debated about exactly what the CI is testing and the centrality of those concepts to the discipline (Hestenes and Halloun, 1995; Huffman and Heller, 1995).

__________________

⁴ See http://chemexams.chem.iastate.edu for available examinations, study materials, and other resources related to these examinations [accessed March 25, 2012].

BOX 4-1

Development of the Genetics Concept Assessment

The following description summarizes the process of developing the genetics concept assessment, which is a concept inventory designed to measure learning gains from pre-test to post-test. The developers of this discipline-specific concept inventory followed the development process for concept inventories in other disciplines (Smith, Wood, and Knight, 2008, p. 423):

1. Review literature on common misconceptions in genetics.

2. Interview faculty who teach genetics, and develop a set of learning goals that most instructors would consider vital to the understanding of genetics.

3. Develop and administer a pilot assessment based on known and perceived misconceptions.

4. Reword jargon, replace distracters with student supplied incorrect answers [to pilot questions], and rewrite questions answered correctly by 70 percent of students on the pre-test.

5. Validate and revise the Genetics Concept Assessment through 33 student interviews and input from 10 genetics faculty experts at several institutions.

6. Give current version of the Genetics Concept Assessment to a total of 607 students, both majors and nonmajors, in genetics courses at three different institutions.

7. Evaluate the Genetics Concept Assessment by measuring item difficulty, item discrimination, and reliability.
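Step 7 of Box 4-1 names three statistics used to evaluate a concept inventory: item difficulty, item discrimination, and reliability. As a rough illustration only, the sketch below computes common classical-test-theory versions of these quantities from a matrix of right/wrong answers. The specific formulas (proportion correct, upper-group minus lower-group discrimination, and KR-20 with population variance), the function names, and the toy data are assumptions made for this example; the chapter does not state which indices the developers of the Genetics Concept Assessment used.

```python
from statistics import pvariance


def item_difficulty(responses):
    """Proportion of students answering each item correctly.

    `responses` is a list of rows, one per student, of 0/1 scores.
    """
    n_students = len(responses)
    n_items = len(responses[0])
    return [sum(row[i] for row in responses) / n_students for i in range(n_items)]


def item_discrimination(responses):
    """Upper-third minus lower-third proportion correct for each item.

    Students are ranked by total score; the group size (one third) is an
    illustrative choice, not something taken from the source.
    """
    ranked = sorted(responses, key=sum)
    k = max(1, len(ranked) // 3)
    p_low = item_difficulty(ranked[:k])
    p_high = item_difficulty(ranked[-k:])
    return [high - low for high, low in zip(p_high, p_low)]


def kr20(responses):
    """Kuder-Richardson 20 reliability estimate for 0/1 items."""
    n_items = len(responses[0])
    p = item_difficulty(responses)
    total_variance = pvariance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("all students have the same total score")
    item_variance = sum(pi * (1 - pi) for pi in p)
    return (n_items / (n_items - 1)) * (1 - item_variance / total_variance)


# Invented data: 6 students (rows) by 4 items (columns).
toy_responses = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [1, 0, 1, 0],
    [0, 0, 0, 0],
]

print(item_difficulty(toy_responses))
print(item_discrimination(toy_responses))
print(round(kr20(toy_responses), 3))
```

In this toy data set the first item is answered correctly by most students and the last by few, which is the kind of pattern an item-difficulty check is meant to surface before a question is revised or dropped.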

Interviews, Concept Maps, and Concept Sketches

DBER scholars commonly use in-depth interviews to probe students’ conceptions. Such interviews are typically conducted with one student at a time, and the typical sample size for most interview studies is fewer than 20. As in the social sciences, these interviews range from structured interviews with a fixed set of questions exploring a student’s responses on a survey, CI, or other assessment of their understanding, to open-ended interviews that elicit students’ thoughts and motivations, uncover common misconceptions, explore students’ thinking processes, and examine their metacognition (see Chapter 6 for a discussion of metacognition or students’ thinking about their learning processes).

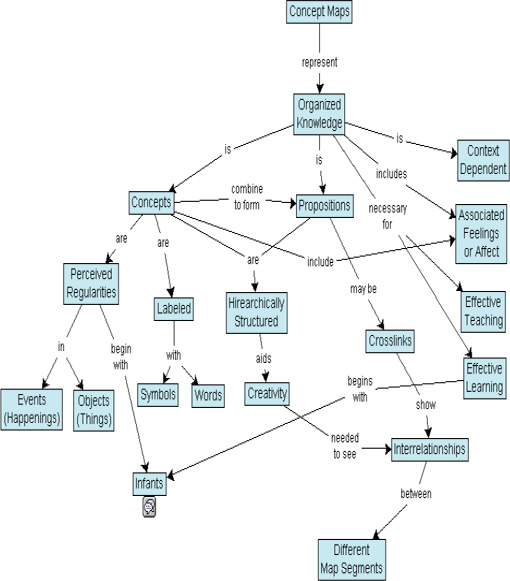

DBER scholars also sometimes use concept mapping to assess conceptual understanding. Developed by Novak in the 1970s (Novak and Gowin, 1984), concept maps are designed to provide a nonlinear, two-dimensional impression of how students relate (and interrelate) a list of concepts. Typically the concepts in question are linked by words or phrases to indicate how they are related (see Figure 4-1 for an example). Concept maps and concept sketches have been used to assess conceptual understanding in chemistry (Lopez et al., 2011); for various purposes in engineering (Besterfield-Sacre et al., 2004; Heywood, 2006); and in the geosciences to measure conceptual change that has occurred after instruction and to reveal students’ understanding of processes, concepts, interrelationships, and key features (Englebrecht et al., 2005; Johnson and Reynolds, 2005; Rebich and Gautier, 2005). Like all methodologies, concept maps have limitations. When used as assessment tools, they can be difficult to score and difficult to compare to a “correct” concept map because there is never just one correct concept map. Also, no inferences can be drawn from any ideas students omit from the map. And although the collective body of research using these tools generates insights into a wide variety of concepts, not all of these concepts are central to expert understanding of the discipline.

FIGURE 4-1 Example of a concept map.

SOURCE: English Wikipedia user Vicwood40 (2005). Available: http://en.wikipedia.org/wiki/File:Conceptmap.gif [February 21, 2012; printed as posted].
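Because a concept map links pairs of concepts with words or phrases, one simple way to store a map is as a set of (concept, linking phrase, concept) triples. The sketch below assumes that representation and lists which propositions a student map shares with a reference map. The function names and the example propositions are hypothetical, and the comparison is deliberately modest: as noted above, there is never just one correct concept map, and nothing can be inferred from ideas a student leaves out, so the result is a starting point for a reader's judgment, not a score.

```python
def normalize(proposition):
    """Lower-case and trim a (concept, link, concept) triple for matching."""
    source, link, target = proposition
    return (source.strip().lower(), link.strip().lower(), target.strip().lower())


def compare_maps(student_map, reference_map):
    """Group propositions by whether they appear in one map or both.

    Only exact matches after normalization count as shared; paraphrased
    links would need human review.
    """
    student = {normalize(p) for p in student_map}
    reference = {normalize(p) for p in reference_map}
    return {
        "shared": student & reference,
        "student_only": student - reference,
        "reference_only": reference - student,
    }


# Hypothetical maps, invented for illustration.
student_map = [
    ("Evaporation", "produces", "Water vapor"),
    ("Clouds", "are made of", "Air"),
]
reference_map = [
    ("evaporation", "produces", "water vapor"),
    ("condensation", "forms", "clouds"),
]

for group, propositions in compare_maps(student_map, reference_map).items():
    print(group, sorted(propositions))
```

Propositions that appear only in the student map are the ones worth a closer look, since they may reflect either a valid alternative organization or a misconception.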

Surveys

In-class surveys or surveys across multiple classes or institutions are another mechanism for understanding students’ ideas and conceptual understanding. These surveys typically contain forced-choice and/or open-ended questions about specific aspects of the course content or learning experiences. Surveys mix the relative ease of implementation and analysis of concept inventories with the more open-ended nature of interviews. However, self-reported data can be unreliable—even with carefully designed surveys—so results must be interpreted with caution (Marsden and Wright, 2010). Moreover, the degree to which instructors use surveys that they develop themselves limits the degree to which results can be generalized.

Given their relative strengths and limitations, the research methods described above should be used in concert to capture the full range of students’ understanding, then combined with instruction to promote more expert-like understanding. An example from biology education research illustrates the limitations of relying solely on a single mode of assessment. In a study of misconceptions about blood circulation held by undergraduate biology students who were prospective elementary teachers, Pelaez et al. (2005) integrated methods of identifying common misconceptions with learning activities designed to deepen understanding. These assessment methods included pretest drawings, peer reviewed essays, debates that required students to integrate knowledge, written exams, and oral final exams that consisted of probing interviews. The authors concluded that “Multiple data sources were necessary to expose many errors about circulatory structures and functions. Drawings combined with individual interviews provided the richest source of information about student thinking. Relying solely on the essay exam would not have uncovered the magnitude of the problem” (Pelaez et al., 2005, p. 178).

UNDERGRADUATE STUDENTS’ UNDERSTANDING OF SCIENCE AND ENGINEERING CONCEPTS

For every discipline in this study, DBER has revealed that undergraduate students have misunderstandings and incorrect beliefs related to a wide range of concepts (Bailey and Slater, 2005; Barke, Hazari, and Yitbarek, 2009; Cheek, 2010; Dirks, 2011; Docktor and Mestre, 2011; McDermott and Redish, 1999; Svinicki, 2011; Tanner and Allen, 2005). Across the disciplines, students have difficulties understanding interactions or phenomena that involve very large or very small spatial scales (e.g., Earth system processes, the particulate nature of matter, quantum mechanics) or that take very long periods of time (e.g., natural selection, Earth history). Considering that students often use their own experiences to generate scientific explanations, it stands to reason that they have difficulties with concepts for which they lack a frame of reference (National Academy of Sciences, National Academy of Engineering, and Institute of Medicine, 2005; National Research Council, 1999, 2007).

Although DBER on upper division and graduate courses is currently relatively limited, the available research suggests that many incorrect understandings and beliefs are highly resistant to change (Orgill and Sutherland, 2008; Rushton et al., 2008). For example, a body of research on thermodynamics shows that some incorrect beliefs students hold in high school, chiefly that chemical bonds release energy when they break, still remain after students complete several undergraduate courses in chemistry (Canpolat, Pinarbasi, and Sözbilir, 2006; Sözbilir, 2002, 2004; Sözbilir and Bennett, 2006). Organic chemistry students also have difficulties understanding hydrogen bonding—a topic that is fundamental to understanding the chemical properties of organic molecules, and that is first encountered in introductory chemistry (Henderleiter et al., 2001). In general, students are able to define hydrogen bonding, but they have trouble using hydrogen bonding to predict properties of molecules. Even more striking, some graduate students in chemistry doctoral programs still harbor confusion about phase changes—a topic first taught in elementary school (Bodner, 1991). A common misconception about phase changes is that bubbles in boiling water are made of air rather than of water vapor (Nakhleh, 1992).

As described in Chapter 1, a defining characteristic of DBER is deep disciplinary knowledge of the topic under consideration. For measuring students’ conceptual understanding, this knowledge is vital to identifying concepts that are central to a given discipline, and identifying the expert-like understandings that are the goals of instruction on those concepts. Some, but not all, DBER studies on conceptual understanding involve concepts that are central to the discipline. As discussed under “Concept Inventories,” several existing concept inventories have included an explicit identification of the concepts that are vital for students to gain a more expert-like understanding of the discipline. For example, engineering CIs are being developed in response to standards developed by the accrediting agency ABET (see Chapter 2); these CIs primarily address ABET criterion A (an ability to apply knowledge of mathematics, science, and engineering) (Reed-Rhoads and Imbrie, 2008).

Beyond CIs, some notable examples of research that is central to the discipline include the body of research on temporal and spatial scales in the geosciences (Catley and Novick, 2009; Cheek, 2010; Hidalgo, Fernando, and Otero, 2004; Teed and Slattery, 2011; Trend, 2000); seasons and moon phases in the Sun-Earth-Moon system in astronomy (Schneps and Sadler, 1987; Zeilik et al., 1997, 1999, 2000, 2002); and research on the three domains of Johnstone’s triangle in chemistry, discussed next.

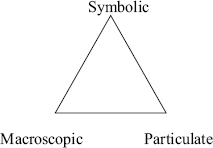

FIGURE 4-2 Johnstone’s triangle representing the three central domains of chemistry.

Johnstone’s triangle (1982) portrays three central components of chemistry knowledge: the macroscopic, particulate, and symbolic (letters, numbers, and other symbols used to succinctly communicate chemistry knowledge) domains (see Figure 4-2). Because chemists expect students to develop fluency with all three of these domains and understand their connections to one another (Johnstone, 1982, 1991), assessing students’ understanding of each domain is an important line of chemistry education research. That research has shown that students have trouble understanding all three domains, and that difficulties understanding the particulate nature of matter represent one of the most important barriers for students (see, for example, Gabel, Samuel, and Hunn, 1987; Yezierski and Birk, 2006).

Understanding the structure of matter at the particulate level is critical to understanding the behavior and interactions of molecules. During their general chemistry courses, students learn to construct Lewis structures, which show the arrangement of atoms, bonds, and electrons in a molecule. Faculty then use these structures to explain molecular shape and subsequently emphasize how shape and electron distribution influence physical properties such as polarity, solubility, and miscibility. However, as discussed in Chapter 5, several studies have shown that students have difficulty constructing Lewis structures, and their representations of molecules do not necessarily improve over time (Cooper et al., 2010; Nicoll, 2003).

INSTRUCTIONAL STRATEGIES TO PROMOTE CONCEPTUAL CHANGE

When students harbor known misunderstandings and incorrect ideas or beliefs about concepts that are fundamental to a discipline, moving toward more expert-like understanding can involve conceptual change, or helping to align their beliefs with accepted science and engineering ideas. Teaching for conceptual change requires that instructors understand and explicitly address these everyday conceptions and help students to refine or replace them (National Academy of Sciences, National Academy of Engineering, and Institute of Medicine, 2005). This process goes beyond identifying misconceptions, to being aware of their origins (e.g., common wisdom or “folk science,” the result of instruction, or cultural strictures such as religious faith), understanding their roots in deeper cognitive mechanisms, and considering their impact on student learning across disciplines.

Promoting conceptual change is challenging because it is a slow process and some ideas are more deeply rooted than others (Vosniadou, 2008a). In addition, incorrect ideas, beliefs, and understandings arise in many different ways, and their origins have implications for instruction. Some ideas arise because they align with personal experience. For example, the belief that denser objects fall more quickly than lighter objects in a vacuum is consistent with the observation that rocks fall more quickly than leaves (Docktor and Mestre, 2011). Some incorrect ideas are induced by instruction (Wandersee, Mintzes, and Novak, 1994), perhaps because students have no previous experience with the phenomenon in question—representing the atomic scale, for example (Taber, 2001)—because students overgeneralize from instructional analogies (Jee et al., 2010), or because there are inaccuracies in teaching materials (Hubisz, 2001). Indeed, although inaccuracies in K-12 teaching materials have been well-documented (King, 2010), similar studies have not been conducted for college-level teaching materials. Some scholars also claim that incorrect ideas about controversial topics such as the ozone hole, acid rain, and climate change have been deliberately fostered (Oreskes, Conway, and Shindell, 2008).

Effective conceptual change depends on students’ understanding that their beliefs are hypotheses or models rather than facts about the world, that other people may have other beliefs/hypotheses/models, and that these hypotheses or models need to be evaluated in light of relevant empirical evidence (National Academy of Sciences, National Academy of Engineering, and Institute of Medicine, 2005). Thus, considerations of students’ understanding of the nature of science and engineering and of the process of learning also are key to promoting conceptual change.

Clement (2008) discusses a range of types of conceptual change such as modifying an existing model by adding, removing, or changing elements; creating a new model (that has not grown out of an existing model); or replacing a concept with an ontologically different concept. He argues that all of these types of conceptual change are applicable at times, so a variety of different teaching strategies will be needed, possibly even within a single class.

Several approaches have been used to promote conceptual change in physics (see Docktor and Mestre, 2011, for a review). The University of Washington physics education research group identifies misconceptions and then engages in a cyclic process of designing an intervention (based on previous research, instructor experiences, or expert intuition), testing and evaluating the intervention, and then refining the intervention until evidence is obtained that the intervention works (McDermott and Shaffer, 1992).

One instructional strategy is to use “bridging analogies” that provide a series of links between a correct understanding that students already possess and the situation about which they harbor an erroneous understanding (Brown and Clement, 1989; Camp, Clement, and Brown, 1994; Clement, 1993; Clement, Brown, and Zietsman, 1989). Research in chemistry (Zimrot and Ashkenazi, 2007) and physics (Sokoloff and Thornton, 1997) also has shown that interactive lecture demonstrations can promote conceptual change. For example, Sokoloff and Thornton (1997) found that after students experienced an interactive lecture demonstration related to Newton’s Third Law, they retained appropriate understanding of the law months later. Effective problem-solving instruction more broadly also has been shown to promote conceptual change (Cummings et al., 1999; see Chapter 6). On the other hand, as an example of the difficulty of promoting lasting conceptual change, college students have been shown to perform well on exams on the laws of motion, but continue using their incorrect, experience-based ideas to act in the world (diSessa, 1982).

A limited amount of engineering education research has focused on assessing conceptual change (see Turns et al., 2005). One strength of this research lies in the cooperation between engineering education researchers and educational psychologists to incorporate effective measurement and assessment techniques in the study of engineering education outcomes. Although the committee has characterized the strength of findings from this research as limited because few studies exist and most were conducted on a small scale, the findings point to increased conceptual understanding, in general, over time among students in engineering programs. However, in addition to being limited, the evidence is mixed and does not indicate how long the conceptual change lasts. Some studies demonstrate positive changes in conceptual knowledge over time (e.g., Muryanto, 2006; Segalas, Ferer-Balas, and Mulder, 2008) while others show limited change over the life of the academic program (e.g., Case and Fraser, 1999; Montfort, Brown, and Pollock, 2009). One possible explanation for this discrepancy is the degree to which programs develop concepts over time. Engineering design, like all complex subjects, requires repeated exposure rather than a single intense immersion or two (e.g., freshman engineering, capstone design) (Cabrera, Colbeck, and Terenzini, 2001). Indeed, cognitive science research and science education research have shown that students are more likely to change their conceptions when they interact more with the content and the learning process (National Research Council, 1999, 2007; Stevens et al., 2008; Taraban et al., 2007; see Box 4-2 for an example).

BOX 4-2

Changing Students’ Conceptions About Heat Transfer

Students often believe that heat is a substance rather than energy transfer. This misconception presents challenges throughout the chemical engineering curriculum. Misconceptions of this kind are particularly robust because they reflect an ontological miscategorization. In one study, 23 introductory chemical engineering students engaged in three laboratory activities to address very specific heat transfer misconceptions. In the first activity, they worked with boiling liquid nitrogen in an experiment related to the misconception that temperature is a good indicator of total energy as opposed to average kinetic energy. The second laboratory activity compared heat transfer in chipped versus block ice to address the misconception that more heat is transferred if a reaction is faster. The third laboratory activity, which involved cooling heated blocks with ice, was designed to help students distinguish between rate and amount of heat transfer. The researchers compared students’ scores on the heat transfer concept inventory before and after the laboratory activities and reported statistically significant gains on many of the items that pertained to the activities. These results suggest that conceptual change can occur, at least in the short term.

SOURCE: As discussed in Prince, Vigeant, and Nottis (2009).

Geoscience education research also has identified some strategies to successfully align students’ beliefs with accepted scientific explanations; the committee characterized the strength of findings from this research as limited because few studies exist and they were typically conducted within individual courses over brief time frames. McNeal, Miller, and Herbert (2008) used inquiry-based learning and multiple representations to effect conceptual change regarding increased plant biomass caused by increased nutrients in coastal waters (coastal eutrophication). Other research has reported large increases in knowledge and a decrease in misconceptions immediately after a three-week mock summit on climate change that used “role-playing, argumentation, and discussion to heighten epistemological awareness and motivation and thereby facilitate conceptual change” (Rebich and Gautier, 2005, p. 355). As an example of the difficulty of promoting lasting conceptual change, other researchers examined students’ concept maps over a two-semester sequence of introductory geology lectures and found an increase in the number of geological concepts identified but “a disproportionately small increase in integration of those concepts into frameworks of understanding” (Englebrecht et al., 2005, p. 263).

SUMMARY OF KEY FINDINGS ON CONCEPTUAL UNDERSTANDING

• In all disciplines, students have incorrect ideas, beliefs, and explanations about fundamental concepts. These ideas pose challenges to learning science and engineering because they are often sensible, if incorrect, and many are highly resistant to change.

• Many robust misunderstandings and incorrect beliefs have been identified, but not all are equally important. The most useful research focuses on ideas, beliefs, and understandings that involve central concepts in the discipline and that are widely held.

• In general, students have difficulty understanding phenomena and interactions that are not directly observable, including those that involve very large or very small spatial and temporal scales.

• A variety of tools and approaches have been used to measure students’ conceptual understanding, ranging from highly focused interviews to broader measures such as concept inventories. Although each tool has its own strengths and limitations, it is vital for them to address the key concepts and practices of a discipline.

• A variety of teaching strategies is needed to help students refine or replace incorrect ideas and beliefs, possibly even in a single unit of instruction. Physics education research has identified several strategies for successfully promoting conceptual change, including interactive lecture demonstrations, interventions that target specific misconceptions, and “bridging analogies” that link students’ correct understandings and the situation about which they harbor a misconception.

DIRECTIONS FOR FUTURE RESEARCH ON CONCEPTUAL UNDERSTANDING AND CONCEPTUAL CHANGE

Across the disciplines considered in this report, a substantial body of literature exists about students’ conceptual understanding. Nonetheless, many gaps remain. All disciplines would benefit from well-validated, overarching schemas that describe the kinds of phenomena about which humans are prone to develop misunderstandings; Talanquer’s (2002, 2006) research on interpreting students’ ideas in chemistry may be a step in this direction. This research should focus on the key concepts and practices that are central to learning in a given discipline. A thorough understanding also is needed of whether and how the types and persistence of misconceptions differ across student groups, including groups defined by gender, race/ethnicity, academic ability, urban versus rural background, and major versus nonmajor status.

Perhaps even more importantly, with the exception of physics, very little research at the undergraduate level provides evidence of conceptual change over time as a result of instruction or other learning experiences. Many researchers provide suggestions for instruction, but fewer provide evidence about the efficacy of these suggestions (e.g., Tanner and Allen, 2005). Although physics education research has identified several pedagogical techniques that reliably move students toward scientifically normative conceptions, additional research is needed to understand whether these strategies work in other disciplines. Studies on these strategies in physics have been informed by cognitive science, and future DBER in other disciplines should be as well. For example, recent cognitive science research (Vosniadou, Vamvakoussi, and Skopeliti, 2008) has helped to replace Posner’s (1982) classical approach to conceptual change with more nuanced, and competing, perspectives. These newer perspectives emphasize the continuity of knowledge that can be expected over the course of learning and thus the possibility of identifying elements of novices’ prior knowledge that can contribute to the construction of more expert knowledge structures.

Research on effective methods for promoting conceptual change is particularly important because evidence exists for the persistence of incorrect ideas, beliefs, and explanations even after years of studying science and engineering. However, the length of many studies on this topic is often just one semester. Longitudinal studies of curriculum and pedagogy experiments designed to help students move toward normative scientific and engineering explanations are needed to more fully understand when and why incorrect ideas persist or reemerge. When applicable, these studies also should take into account the relationship between students’ personal belief systems and conceptual change. Research suggests that although students may be able to effectively apply knowledge that is inconsistent with their personal beliefs (such as answering questions about evolution correctly while not accepting evolution as valid) (Blackwell, Powell, and Dukes, 2003; Champagne, Gunstone, and Klopfer, 1985; Sinatra et al., 2003), their awareness of their beliefs and willingness to question those beliefs affect their receptivity to conceptual change (Pintrich, 1999).

Another important question for further DBER is how specific incorrect ideas originate, as a way of identifying effective means of moving students toward more normative understanding. Future DBER in this area might be informed by the growing body of research in cognitive science that focuses on the nature of students’ initial, incorrect conceptions of scientific concepts and phenomena (see Vosniadou, 2008b, for a thorough review of this literature). Progress on this set of questions would benefit from collaboration between DBER scholars and K-12 science education researchers, especially those working on learning progressions (Mohan, Chen, and Anderson, 2009; Plummer and Krajcik, 2010; Schwarz et al., 2009). Research on the conceptual understanding of pre- and in-service K-12 teachers (Dahl, Anderson, and Libarkin, 2005; Kusnick, 2002) also may be a fruitful area of common ground between the two research communities.

Finally, the committee identified some discipline-specific needs for future research. More information is needed in engineering, biology, and the geosciences to design assessments that can diagnose students’ difficulties and to design instruction to move them toward more accurate understanding. Chemistry education could benefit from additional measures that specifically target students’ conceptual understanding because only a few such measures exist—to date, chemistry education researchers have used a variety of other tools to uncover and document incorrect ideas and beliefs. In astronomy, the next generation of assessment instruments is emerging, including assessments for general astronomy (Slater, Slater, and Bailey, 2011) and for targeted topic areas such as stars and stellar evolution (Bailey et al., 2011), light and spectra (Bardar, Prather, and Slater, 2006), planetary science (Hornstein et al., 2011), and the influence of gravity (Lindell and Sommer, 2003). The goal of this next generation of instruments is to reveal the underlying cognitive processes undergraduates use when thinking about these topics in astronomy. Research also is needed on students’ cognition and spatial thinking skills to more fully explore undergraduates’ conceptual understanding in astronomy. In physics, research on the underlying cognitive processes learners use when engaging in physics and how these might change as learners progress from novice to more expert perspectives has already begun. Although this type of research is more difficult and time-consuming than cataloging misconceptions, and might not be immediately applicable to instruction, it has the potential to generate broader insights about student understanding that could be relevant to many disciplines.