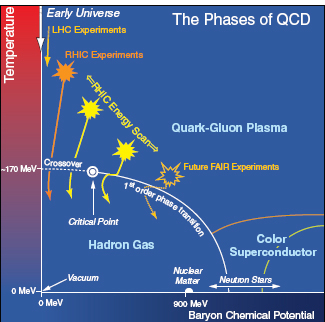

This chapter discusses in more detail the recent accomplishments and directions that are expected to be taken in nuclear physics in upcoming years. Where the discussion in Chapter 1 focused on four overarching questions being addressed by the field, this chapter is separated into more traditional subfields of nuclear physics—(1) nuclear structure, whose goal is to build a coherent framework for explaining all properties of nuclei and nuclear matter and how they interact; (2) nuclear astrophysics, which explores those events and objects in the universe shaped by nuclear reactions; (3) quark-gluon plasma, which examines the state of “melted” nuclei and with that knowledge seeks to shed light on the beginnings of the universe and the nature of those quarks and gluons that are the constituent particles of nuclei; (4) hadron structure, which explores the remarkable characteristics of the strong force and the various mechanisms by which the quarks and gluons interact and result in the properties of the protons and neutrons that make up nuclei; and (5) fundamental symmetries, those areas on the edge of nuclear physics where the understandings and tools of nuclear physicists are being used to unravel limitations of the Standard Model and to provide some of the understandings upon which a new, more comprehensive Standard Model will be built.

PERSPECTIVES ON THE STRUCTURE OF ATOMIC NUCLEI

The goal of nuclear structure research is to build a coherent framework that explains all the properties of nuclei, nuclear matter, and nuclear reactions. While extremely ambitious, this goal is no longer a dream. With the advent of new generations of exotic beam facilities, which will greatly expand the variety and intensity of rare isotopes available, new theoretical concepts, and the extreme-scale computing platforms that enable cutting-edge calculations of nuclear properties, nuclear structure physics is poised at the threshold of its most dramatic expansion of opportunities in decades.

The overarching questions guiding nuclear structure research have been expressed as two general and complementary perspectives: a microscopic view focusing on the motion of individual nucleons and their mutual interactions, and a mesoscopic one that focuses on a highly organized complex system exhibiting special symmetries, regularities, and collective behavior. Through those two perspectives, research in nuclear structure in the next decade will seek answers to a number of open questions:

- What are the limits of nuclear existence and how do nuclei at those limits live and die?

- What do regular patterns in the behavior of nuclei divulge about the nature of nuclear forces and the mechanism of nuclear binding?

- What is the nature of extended nucleonic matter?

- How can nuclear structure and reactions be described in a unified way?

New facilities and tools will help to explore the vast nuclear landscape and identify the missing ingredients in our understanding of the nucleus. A huge number of new nuclei are now available—proton rich, neutron rich, the heaviest elements, and the long chains of isotopes for many elements. Together, they comprise a vast pool from which key isotopes—designer nuclei—can be chosen because they isolate or amplify specific physics or are important for applications.

At the same time, research with intense beams of stable nuclei continues to produce innovative science, and, in the long term, discoveries at exotic beam facilities will raise new questions whose answers are accessible with stable nuclei.

Examples of the current program that offer a glimpse into future areas of inquiry are the investigation of new forms of nuclear matter such as neutron skins occurring on the surfaces of nuclei having large excesses of neutrons over protons, the ability to fabricate the superheavy elements that are predicted to exhibit unusual stability in spite of huge electrostatic repulsion, and structural studies in exotic isotopes whose properties defy current textbook paradigms.

Hand in hand with experimental developments, a qualitative change is taking place in theoretical nuclear structure physics. With the development of new concepts, the exploitation of symbiotic collaborations with scientists in diverse fields, and advances in computing technology and numerical algorithms, theorists are progressing toward understanding the nucleus in a comprehensive and unified way.

Revising the Paradigms of Nuclear Structure

Shell Structure: A Moving Target

The concept of nucleons moving in orbits within the nucleus under the influence of a common force gives rise to the ideas of shell structure and resulting magic numbers. Like an electron’s motion in an atom, nucleonic orbits bunch together in energy, forming shells, and nuclei having filled nucleonic shells (nuclear “noble gases”) are exceptionally well bound. The numbers of nucleons needed to fill each successive shell are called the magic numbers: The traditional ones are 2, 8, 20, 28, 50, 82, and 126 (some of these are exemplified in Figure 2.1). Thus a nucleus such as lead-208, with 82 protons and 126 neutrons, is doubly “magic.” The concept of magic numbers in turn introduces the idea of valence nucleons—those beyond a magic number. Thus, in considering the structure of nuclei like lead-210, one can, to some approximation, consider only the last two valence neutrons rather than all 210. When proposed in the late 1940s, this was a revolutionary concept: How could individual nucleons, which fill most of the nuclear volume, orbit so freely without generating an absolute chaos of collisions? Of course, the Pauli exclusion principle is now understood to play a key role here, and the resulting model of nucleonic orbits has become the template used for over half a century to view nuclear structure.
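The bookkeeping behind magic numbers and valence nucleons can be sketched in a few lines of Python. This is only an illustration of the counting described above; the function names are hypothetical, and counting valence nucleons as particles above the last closed shell (rather than as holes below the next one) is a simplifying assumption.

```python
# Traditional magic numbers quoted in the text.
MAGIC_NUMBERS = (2, 8, 20, 28, 50, 82, 126)


def valence_count(n: int) -> int:
    """Nucleons beyond the largest magic number that does not exceed n.

    Hypothetical helper: counts particles above the last closed shell only.
    """
    closed = max((m for m in MAGIC_NUMBERS if m <= n), default=0)
    return n - closed


def is_doubly_magic(protons: int, neutrons: int) -> bool:
    """True when both the proton and neutron numbers are magic."""
    return protons in MAGIC_NUMBERS and neutrons in MAGIC_NUMBERS


if __name__ == "__main__":
    # Lead-208: Z = 82, N = 126.
    print(is_doubly_magic(82, 126))
    # Lead-210: Z = 82, N = 128, so two valence neutrons and no valence protons.
    print(valence_count(82), valence_count(128))
```

For lead-210 the sketch reports zero valence protons and two valence neutrons, matching the approximation described in the text.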

FIGURE 2.1 Shell structure in atoms and nuclei. Left: Electron energy levels forming the atomic shell structure. In the noble gases, shells of valence electrons are completely filled. Right: Representative nuclear shell structure characteristic of stable or long-lived nuclei close to the valley of stability. In the “magic” nuclei with proton or neutron numbers 2, 8, 20, 28, 50, 82, and 126, which are analogous to noble gases, proton and/or neutron shells are completely filled. The shell structure in very neutron-rich nuclei is not known. New data on light nuclei with N >> Z tell us that significant modifications are expected. SOURCE: Adapted and reprinted with permission from K. Jones and W. Nazarewicz, 2010, The Physics Teacher 48 (381). Copyright 2010, American Association of Physics Teachers.

One experimental hallmark of nuclear structure is the behavior of the first excited state with angular momentum 2 and positive parity in even-even nuclei. This state, usually the lowest energy excitation in such nuclei, is a bellwether of structure. Its excitation energy takes on high values at magic numbers and low values as the number of valence nucleons increases and collective behavior emerges. The picture of nuclear shells leads to the beautiful regularities and simple repeated patterns, illustrated in Figure 1.2 and seen here in the energies of the 2+ states shown at the top of Figure 2.2. The concept of magic numbers was forged from data based on stable or near-stable nuclei. Recently, however, the traditional magic numbers underwent major revisions as previously unavailable species became accessible. The shell structure known from stable nuclei is no longer viewed as an immutable construct but instead is seen as an evolving moving target. Indeed the elucidation of changing shell structure is one of the triumphs of recent experiments in nuclear structure at exotic beam facilities worldwide. For example, experiments at Michigan State University (MSU) in the United States and at the Gesellschaft für Schwerionenforschung (GSI) have shown that in the very neutron-rich isotope oxygen-24, with 8 protons and twice as many neutrons, N = 16 is, in fact, a new magic number.

One of the most interesting regions exhibiting the fragility of magic numbers is nuclei with 12 to 20 protons and 18 to 30 neutrons. The experimental evidence is exemplified in the lower portion of Figure 2.2 by the energies of the first excited 2+ states in this region. The figure shows the disappearance of neutron number N = 20 as a magic number in magnesium while it persists for neighboring elements.

FIGURE 2.2 Measured energies of the lowest 2+ states in even-even nuclei. Top: Color-coded Z-N plot spanning the entire nuclear chart clearly shows the filaments of magic behavior at particular neutron and proton numbers (denoted by dashed lines) and the lowering of these states as nucleons are added and collective behavior emerges. The legend bar relates the colors to an energy scale in MeV. Bottom: Close-up view of the data for the neutron-rich magnesium (Mg), silicon (Si), sulfur (S), argon (Ar), and calcium (Ca) isotopes. The fingerprint of magic numbers is missing in the neutron-rich isotopes of Mg, Si, S, and Ar, in which the “standard” magic numbers at either N = 20 or 28 have dissipated. As of 2011, no data exist on Si, S, and Ar nuclei with N = 32. SOURCES: (Top) Courtesy of R. Burcu Cakirli, Max Planck Institute for Nuclear Physics, private communication, 2011; (bottom) Courtesy of Alexandra Gade, MSU.

Similarly, N = 28 loses its magic character for silicon, sulfur, and argon, while calcium, which is also magic in protons, retains its doubly magic character at N = 28.

There are at least three factors leading to such changes in shell structure: changes in how nucleons interact with each other as the proton-neutron asymmetry varies, the influence of scattering and decay states near the isotopic limits of nuclear existence (the “drip lines”), and the increasing role of many-body effects in weakly bound nuclei where correlations determine the mere existence of the nucleus. This new perspective on shell structure affects many facets of nuclear structure, from the existence of short-lived light nuclei, to the emergence of collectivity, to the stability of the superheavy elements.

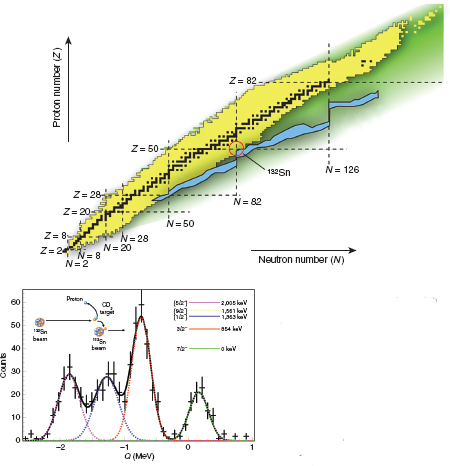

Recent studies of calcium, nickel, and tin isotopes using techniques such as Coulomb excitation and light-ion single-nucleon transfer reactions, both near traditional magic numbers and along extended isotopic chains, are beginning to answer questions about effective internucleon forces in the presence of large neutron excess, the relevance of the detailed shell-model template in the presence of weak binding, and the nature of nuclear collective motion. Excellent tests of the nuclear shell model were offered by recent studies of the tin (Sn) isotopes. The element tin has a magic number (50) of protons, and its short-lived isotopes tin-100 and tin-132, with 50 and 82 neutrons, respectively, are expected to be rare examples of new doubly magic heavy nuclei. Unique data in the tin-132 region (see Figure 2.3) show that tin-132 indeed behaves as a good doubly magic nucleus. Other experiments providing data around tin-100, in particular the first structural information on tin-101, have led to theoretical surprises. Further tests of shell structure and interactions in the heaviest elements will be discussed below.

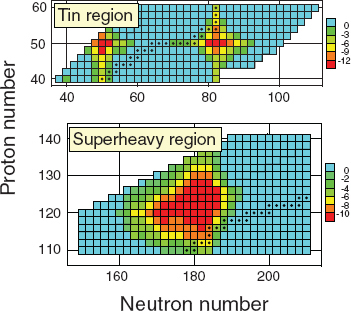

It is expected that the shell model will undergo sensitive tests in the region of superheavy nuclei, whose very existence hinges on a dynamical competition between short-range nuclear attraction and huge long-range Coulomb repulsion. Interestingly, a similar interplay takes place in low-density, neutron-rich matter found in crusts of neutron stars, where “Coulomb frustration” produces rich and complex collective structures, discussed later in this chapter in “Nuclear Astrophysics.” Figure 2.4 shows the calculated shell energy, that is, the quantum enhancement in nuclear binding due to the presence of nucleonic shells. The nuclei from the tin region are excellent examples of the shell-model paradigm: the magic nuclei with Z = 50, N = 50, and N = 82 have the largest shell energies, and the associated closed shells provide exceptional stability. In superheavy nuclei, the density of single-particle energy levels is fairly large, so small energy shifts can reshape the shell structure; the regions of enhanced shell stabilization, such as the one in the superheavy region near N = 184, are therefore generally expected to be fairly broad. That is, the notion of magic numbers and the energy gaps associated with them becomes fluid there.

FIGURE 2.3 Top: All known nuclides are shown as black (if stable) or yellow (unstable). Dashed lines indicate the traditional magic numbers of protons and neutrons. Two doubly-magic nuclei, tin-132 and nickel-78, are adjacent to the r-process region (blue) of as-yet-unseen nuclides that are thought to be involved in the creation of the heaviest elements in supernovae. By adding neutrons or protons to a stable nucleus, one enters the territory of radioactive nuclei, first long-lived, then short-lived, until finally the nuclear drip line is reached, where there is no longer enough binding force to prevent the last nucleons from dripping off the nuclei. The proton and neutron drip lines form the borders of nuclear existence. Bottom: Experimental spectrum for a transfer reaction in which an incident deuteron grazes a tin-132 target, depositing a neutron to make tin-133 with detection of the exiting proton (that is, d + tin-132 → p + tin-133). The solid line shows a fit to the four peaks shown in green, red, blue, and lavender in the level scheme (inset). The top left inset displays a cartoon of the reaction employed. The investigations revealed that low energy states in tin-133 have even purer single-particle character than their counterparts in lead-209, outside the doubly-magic nucleus lead-208, the previous benchmark. SOURCE: (Top) Reprinted by permission from Macmillan Publishers Ltd., B. Schwarzschild. August 2010. Physics Today 63:16, copyright 2010; (Bottom) Reprinted by permission from Macmillan Publishers Ltd., K.L. Jones, A.S. Adekola, D.W. Bardayan, et al. 2010. Nature 465: 454, copyright 2010. Portions of the figure caption are extracted from K.L. Jones, W. Nazarewicz, 2010. Designer nuclei – making atoms that barely exist, The Physics Teacher 48: 381.

FIGURE 2.4 Contribution to nuclear binding due to shell effects (in MeV) for nuclei from the tin region (top) and super-heavy elements (bottom) calculated in the nuclear density functional theory. The nuclei colored in darker red are those whose binding is most enhanced by quantum effects. The nuclei predicted to be stable to beta decay are marked by dots. SOURCE: Reprinted and adapted from M. Bender, W. Nazarewicz, and P.G. Reinhard, Shell stabilization of super- and hyperheavy nuclei without magic gaps, Physics Letters B 515: 42, Copyright 2001, with permission from Elsevier.

Another dimension in studies of shells in nuclei has been opened by precision studies, at the Thomas Jefferson National Accelerator Facility (JLAB) and at the Japanese National Laboratory for High Energy Physics (KEK), of hypernuclei, that is, nuclei that contain at least one hyperon, a strange baryon, in addition to nucleons. By adding a hyperon, nuclear physicists can explore inner regions of nuclei that are impossible to study with protons and neutrons, which must obey the constraints imposed by the Pauli principle. The experimental work goes hand in hand with advanced theoretical calculations of hyperon-nucleon and hyperon-hyperon interactions, with the ultimate goal being the comprehensive understanding of all baryon-baryon interactions.

Exploring and Understanding the Limits of Nuclear Existence

An important challenge is to delineate the proton and neutron drip lines (the limits of proton and neutron numbers at which nuclei are no longer bound by the strong force and nuclear existence ends) as far into the nuclear chart as possible (see Figure 2.3 [top]). For example, experiments at MSU have produced the heaviest magnesium and aluminum isotopes accessible to date and have shown that magnesium-40, aluminum-42, and possibly aluminum-43 exist. Nuclei near the drip lines are very weakly bound quantum systems, often with extremely large spatial sizes. In recent years, experiments at Argonne National Laboratory (ANL), TRIUMF, Grand Accélérateur National d’Ions Lourds (GANIL), GSI, the European Organization for Nuclear Research (CERN), and Rikagaku Kenkyūjo (RIKEN) using high-precision laser spectroscopy have determined the charge radii of halo nuclei helium-6, helium-8, beryllium-11, and lithium-11 with an accuracy of 1 percent through the determination of isotope shifts of atomic electronic levels. With the advanced-generation Facility for Rare Isotope Beams (FRIB) it should be possible to extend such studies and to delineate most of the drip line up to mass 100 using the high-power beams available and the highly efficient and selective FRIB fragment separators.

Drip line nuclei often exhibit exotic decay modes. An example is the extremely proton-rich nucleus iron-45 that decays by beta decay or by ejecting two protons from its ground state. Another example of exotic decay modes, proton-rich nuclei exhibiting “superallowed” beta decays, is discussed in “Fundamental Symmetries,” later in this chapter. Moving toward the drip lines, the coupling between different nuclear states, via a continuum of unbound states, becomes systematically more important, eventually playing a dominant role in determining structure. Such systems where both bound and unbound states exist and interact are called “open” quantum systems.

Many aspects of nuclei at the limits of the nuclear landscape are generic and are currently explored in other open systems: molecules in strong external fields, quantum dots and wires and other solid-state microdevices, crystals in laser fields, and microwave cavities. Radioactive nuclear beam experimentation will answer questions pertaining to all open quantum systems: What are their properties around the lowest energies, where the reactions become energetically allowed (reaction thresholds)? What is the origin of states in nuclei, which resemble groupings of nucleons into well-defined clusters, especially those of astrophysical importance? What should be the most important steps in developing the theory that will treat nuclear structure and reactions consistently?

The Heaviest Elements

What are the heaviest nuclei that can exist? Is there an island of very long-lived nuclei in the N-Z plane? What are the chemical properties of superheavy atoms? These questions present challenges to both experiment and theory. As discussed earlier, the repulsive electrostatic Coulomb force between protons grows so much

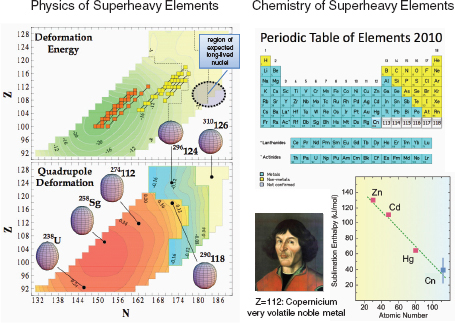

in those nuclei with large proton number that they would not be bound except for subtle quantum effects. Theory predicts that stability will increase with the addition of neutrons in these systems as one approaches N = 184 (see Figure 2.5), but there is no consensus about the precise location of the projected island of long-lived superheavy elements and their lifetimes (some are predicted to have lifetimes as long as 105-107 years.

By using actinide targets and rare stable beams, such as calcium-48, elements up to Z = 118 have been produced and observed. The discovery of a nucleus with Z = 117, with a target of berkelium-249, is a case in point as well as an excellent example of international cooperation in nuclear physics (Box 2.1). Not only did this work discover a new element, but new information on the half-lives of several nuclei in its decay path also provided experimental support for the existence of the long-predicted island of stability in superheavy nuclei. Further incremental progress approaching Z = 118 and beyond is possible, but it requires new actinide targets beyond berkelium and intense beams of rare stable isotopes such as titanium-50. However, there is a range of options for synthesizing heavy elements with exotic beams. By using neutron-rich radioactive targets and beams, a highly excited system can be formed, which would decay into the superheavy ground state via evaporation of the excess neutrons. An area of related importance is the further study of the spectroscopy of the heaviest nuclei possible using reaccelerated beams and large-acceptance spectrometers, looking at alpha-decay and gamma-ray spectroscopy up to at least Z = 106.

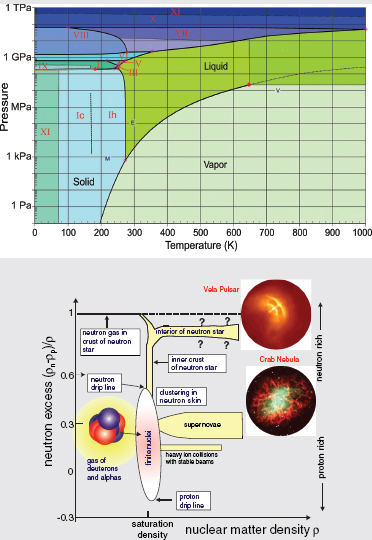

Neutron-Rich Matter in the Laboratory and the Cosmos

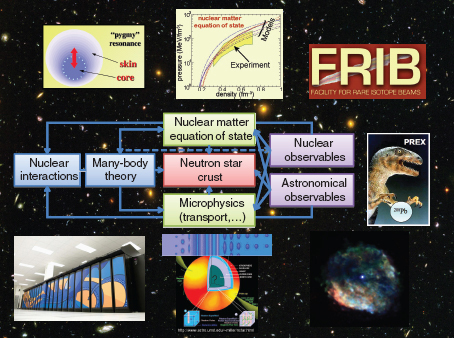

Neutron-rich matter is at the heart of many fascinating questions in nuclear physics and astrophysics: What are the phases and equations of state of nuclear and neutron matter? What are the properties of short-lived neutron-rich nuclei through which the chemical elements around us were created? What is the structure of neutron stars, and what determines their electromagnetic, neutrino, and gravitational-wave radiations? To explain the nature of neutron-rich matter across a range of densities, an interdisciplinary approach is essential in order to integrate laboratory experiments with astrophysical theory, nuclear theory, condensed matter theory, atomic physics, computational science, and electromagnetic and gravitational-wave astronomy. Figure 2.6 summarizes such linkages in this interdisciplinary endeavor.

FIGURE 2.5 Left: Calculated properties of even-even superheavy nuclei. The upper-left diagram shows the deformation energy (in MeV) defined as a difference between the ground state energy and the energy at the spherical shape. Several Z = 110-113 alpha decay chains found at GSI and RIKEN with fusion reactions using lead or bismuth targets are marked by pink squares and those obtained in hot fusion reactions at the Joint Institute for Nuclear Research (JINR) in Dubna are marked by yellow squares. The region of anticipated long-lived superheavy nuclei is schematically marked. Lower left: contour map of predicted ground-state quadrupole deformations and nuclear shapes for selected nuclei. Prolate shapes are red-orange; oblate shapes, blue-green; and spherical shapes, light yellow. The symbols ²⁷⁴112, ²⁹⁰118, ²⁹⁶124, and ³¹⁰126 refer to unnamed nuclei having the given number of nucleons (superscript) and protons (base). Right: Periodic table of elements as of 2010 including the element Z = 112 discovered at GSI and accorded the name copernicium (chemical symbol Cn) in honor of astronomer Nicolaus Copernicus. Its chemistry suggests it is a member of the metallic group 12 (containing zinc, cadmium, and mercury). SOURCES: (Left) Adapted by permission from Macmillan Publishers Ltd., S. Ćwiok, P.H. Heenen, and W. Nazarewicz. 2005. Nature 433: 705; (right) K.L. Jones and W. Nazarewicz, 2010, Designer nuclei – Making atoms that barely exist, The Physics Teacher 48: 381. Reprinted with permission from The Physics Teacher, Copyright 2010, American Association of Physics Teachers.

In heavy neutron-rich nuclei, the excess of neutrons predominantly collects at the nuclear surface creating a skin, a region of weakly bound neutron matter. The presence of a skin can lead to curious collective excitations, for example, “pygmy resonances,” characterized by the motion of the partially decoupled neutron skin against the remainder of the nucleus. Such modes could alter neutron capture cross sections important to r-process nucleosynthesis (discussed further in “Nuclear Astrophysics,” later in this chapter). One of the main science drivers of FRIB is to study a range of nuclei with neutron skins several times thicker than is currently possible. Studies of high-frequency nuclear oscillations (giant resonances) and intermediate-energy nuclear reactions will help pin down the equation of state of nuclear matter.

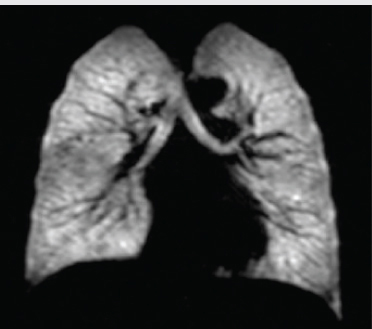

Another insight is being provided by electron scattering experiments. The Lead Radius Experiment (PREX) at JLAB uses a faint signal arising from parity violation by the weak interaction to measure the radius of the neutron distribution in lead-208. This measurement should have broad implications for nuclear structure, astrophysics, and low-energy tests of the Standard Model. Precise data from PREX would provide constraints on the neutron pressure in neutron stars at subnuclear densities. Important insights also come from experiments with cold Fermi atoms that can be tuned to probe strongly interacting fluids that are very similar to the low-density neutron matter found in the crusts of neutron stars (see Box 2.2).

Nature and Origin of Simple Patterns in Complex Nuclei

Rather than tackling the nuclear problem from the femtoscopic perspective of nucleon motions and interactions, one can focus on a complementary view of the atomic nucleus as a mesoscopic system characterized by shapes, oscillations, and rotations and described by symmetries applicable to the nucleus as a whole. In this way, properties and regularities, which might not be explicit in a description in terms of individual nucleons, are highlighted, providing insights that can inform microscopic understanding. Such a perspective focuses on identifying what nuclei do and what that tells us about their structure, while the femtoscopic approach is essential to understanding why they do it.

The mesoscopic approach is motivated by the recognition of, and search for, regularities and simple patterns in nuclei that signal the appearance of many-body symmetries and associated emergent collective behavior. Despite the fact that the number of protons and neutrons in heavy nuclei is rather small, the emergent collectivity they show is similar to other complex systems exhibiting self-organization, such as those studied by condensed matter and atomic physicists, quantum chemists, and materials scientists. While few if any nuclei will exhibit idealized symmetries exactly, such a conceptual framework provides important benchmarks. In this perspective, an important goal is to determine the experimental signatures that spotlight these patterns and the interactions responsible for them. Already, research with exotic nuclei is showing the breakdown of traditional patterns (see discussion of Figure 2.2) and new ways of seeing the emergence of collective phenomena in both light and heavy nuclei.

Box 2.1

U.S. and Russian Scientists Collaborate to Create a New Chemical Element, 117

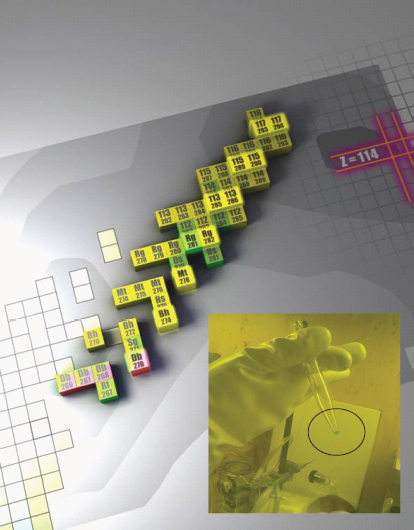

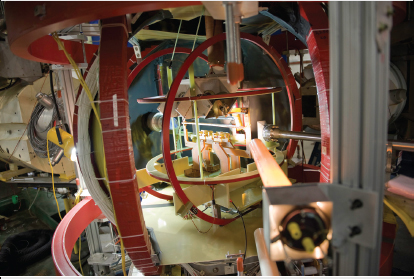

A team of U.S. and Russian physicists has created a new element with atomic number Z = 117, filling in a gap in chemistry’s periodic table. The new superheavy element, born in a Russian accelerator laboratory at JINR, in Dubna, required coordinated collaborative efforts between four institutions in the United States and two in Russia and more than 2 years to achieve, highlighting what international cooperation can accomplish. The identification of element 117 among the products of the berkelium-249 + calcium-48 reaction occurred in late 2009 and the results were published in April 2010.¹ Production of the berkelium-249 target material, with a short half-life of T½ = 320 days, required an intense neutron irradiation at the High Flux Isotope Reactor (HFIR) of the Oak Ridge National Laboratory (ORNL), chemical separation from other reactor-produced products including californium-252, again at ORNL, followed by target fabrication in Dimitrovgrad, Russia, and six months of accelerator bombardment with an intense calcium-48 beam at Dubna, Russia: a continual intercontinental race against radioactive decay. Analysis of the experimental data was performed independently at Dubna and Lawrence Livermore National Laboratory, providing nearly round-the-clock data analysis by virtue of the 11- to 12-hour time difference between Russia and California. Six atoms of element 117 (five of ²⁹³117 and one of ²⁹⁴117) were observed, and 11 new nuclides were discovered in the decay products of those two new Z = 117 isotopes (Figure 2.1.1). The measured half-lives of new superheavy nuclei were observed to increase with larger neutron number. This work represents an experimental verification of the existence of the predicted island of enhanced stability. Scientists and students at Vanderbilt University and the University of Nevada also contributed to this successful experiment.

¹ Y.T. Oganessian, F.S. Abdullin, P.D. Bailey, et al. 2010. Physical Review Letters 104: 142502.

FIGURE 2.1.1 Upper end of the chart of nuclides highlighting the 11 new nuclides produced as a result of synthesizing ²⁹³117 and ²⁹⁴117. The inset shows 22 mg of berkelium-249 in the bottom of a centrifuge cone after chemical separation (green solution). The californium-252 contamination was reduced by a factor of about 10⁸ during the purification process at the Radiochemical Engineering Development Center at ORNL. SOURCE: Images courtesy of W. Nazarewicz and K. Rykaczewski, Oak Ridge National Laboratory.
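The nucleon arithmetic behind the berkelium-249 + calcium-48 reaction in this box can be checked with a short sketch. The atomic numbers of berkelium (97) and calcium (20) are standard values not stated in the text, and the helper names are hypothetical. The sketch also assumes complete fusion followed by the emission of neutrons only, which is a simplification.

```python
from typing import NamedTuple


class Nuclide(NamedTuple):
    """A nucleus labeled by proton number z and mass number a."""
    z: int
    a: int


# Standard atomic numbers (assumed here, not given in the text).
BERKELIUM_249 = Nuclide(z=97, a=249)
CALCIUM_48 = Nuclide(z=20, a=48)


def fuse(target: Nuclide, beam: Nuclide) -> Nuclide:
    """Compound nucleus from complete fusion: protons and nucleons simply add."""
    return Nuclide(target.z + beam.z, target.a + beam.a)


def neutrons_evaporated(compound: Nuclide, residue_a: int) -> int:
    """Neutrons shed to reach mass number residue_a, assuming neutron emission only."""
    return compound.a - residue_a


compound = fuse(BERKELIUM_249, CALCIUM_48)
print(compound)  # Nuclide(z=117, a=297)
for mass_number in (293, 294):
    print(mass_number, neutrons_evaporated(compound, mass_number))
```

Under these assumptions the compound system has Z = 117 and A = 297, so the two observed isotopes correspond to the loss of four and three neutrons, respectively.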

FIGURE 2.6 Multidisciplinary quest for understanding neutron-rich matter on Earth and in the cosmos. The study of neutron skins and the PREX experiment are discussed in the text. The anticipated discovery of gravitational waves by the Laser Interferometer Gravitational Wave Observatory (LIGO) and the allied European detector Virgo will help in understanding large-scale motions of dense neutron-rich matter. Finally, advances in computing hardware and computational techniques will allow theorists to perform calculations of the neutron star crust. SOURCE: Courtesy of W. Nazarewicz, University of Tennessee at Knoxville; inspired by a diagram by Charles Horowitz, Indiana University.

Nuclear Masses and Radii

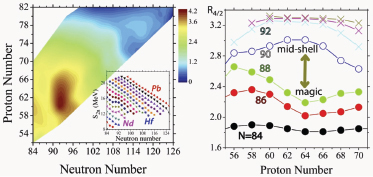

The binding of nucleons in the nucleus contains integral information on the interactions that each nucleon is subjected to in the nuclear environment. Differences in nuclear masses and nuclear radii give information on the binding of individual nucleons, on the onset of structural changes, and on specific interactions. Examples of recent measurements of charge radii in light halo nuclei were discussed above. With exotic beams and devices such as Penning and atomic traps, storage rings, and laser spectroscopy the masses and radii of long sequences of exotic isotopes are becoming available, extending our knowledge of how nuclear structure evolves with nucleon number. Figure 2.7 (left) shows the sensitivity of separation energies to nuclear structure. The inset displays the energy required to remove the last two neutrons from the nucleus. These energies have sharp drops after magic numbers but approximately linear behavior in between. Subtracting an average linear behavior therefore magnifies structural changes as seen in the color-coded contours in the two-dimensional plot in the proton-neutron plane.
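The energy required to remove the last two neutrons can be written compactly. In the sketch below, B(Z, N) denotes the (positive) binding energy of the nucleus with Z protons and N neutrons, and the first line is the standard definition of the two-neutron separation energy. The linear reference a + bN and the symbol δS₂ₙ are illustrative assumptions, since the text does not say how the average linear behavior was determined.

```latex
\begin{align}
  S_{2n}(Z, N) &= B(Z, N) - B(Z, N-2) \\
  \delta S_{2n}(Z, N) &= S_{2n}(Z, N) - \left( a + b\,N \right)
\end{align}
```

With the smooth trend removed in this way, a sharp drop in S₂ₙ just after a magic neutron number stands out as a step in δS₂ₙ rather than as a small kink on a steep slope.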

Phase-Transitional Behavior

Changes in nuclear properties as a function of nucleon number can signal quantum phase transitions between regions characterized by different symmetries. Although the behavior of such transitions is muted in finite nuclear systems, experimental studies have provided evidence for their existence and tested simple theoretical schemes for nuclei at the critical points. Theoretical studies that model nuclear shape variations in the limit of large valence nucleon number have shown how phase-transitional character in large systems evolves toward the muted remnant of this behavior seen in finite nuclei. They have also helped to identify empirical signatures of first- and second-order phase transitions that have been used to classify the phase transitions in, for example, the A ~ 150 and A ~ 134 mass regions.

The extra binding gained in the shape transition region near N = 90 is evident in the brown shaded area in Figure 2.7 (left). Representative spectroscopic data showing the ratio R4/2 of energies of the lowest 4+ and 2+ excitations are given for the same A ~ 150 region in Figure 2.7 (right). The phase transition is signaled by the concave-to-convex change of pattern between N = 88 and N = 90 associated with a breakdown of a subshell gap at Z = 64.
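The limiting values of R4/2 quoted for Figure 2.7 follow from two textbook idealizations, sketched here in Python under stated assumptions: a rigid rotor with level energies proportional to J(J+1), and a harmonic vibrator whose 2+ and 4+ states are taken as the one- and two-phonon excitations. The function names and unit energy scales are illustrative choices, not part of the original analysis.

```python
def rotor_energy(j, scale=1.0):
    """Ideal rigid-rotor level energy, E(J) = scale * J * (J + 1)."""
    return scale * j * (j + 1)


def vibrator_energy(n_phonons, phonon_energy=1.0):
    """Ideal harmonic-vibrator energy for a state with n_phonons quadrupole phonons."""
    return n_phonons * phonon_energy


# Rotor: E(4+)/E(2+) = (4 * 5) / (2 * 3) = 20/6, about 3.33 (nonspherical nuclei).
r42_rotor = rotor_energy(4) / rotor_energy(2)

# Vibrator: 2+ is the one-phonon state and 4+ a two-phonon state, so the ratio is 2.
r42_vibrator = vibrator_energy(2) / vibrator_energy(1)
```

Because the overall energy scale cancels in the ratio, R4/2 depends only on the pattern of the level spacing, which is why it serves as a compact signature of the spherical-to-deformed transition.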

Probing Nuclear Shapes by Rapid Rotation

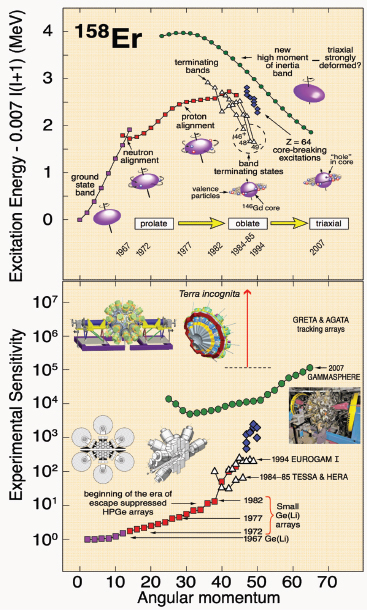

Gamma-ray spectroscopy is a basic tool for studying nuclear structure, shapes, and their changes—both from the energies and decay paths of excited nuclear states and by measuring nuclear level lifetimes from Doppler effects. Recently, a great diversity of phenomena has been discovered as increasingly sensitive instrumentation reveals unexpected behavior in our quest to observe higher excitation energies and angular momentum states in nuclei.

Figure 2.8 illustrates this progress for the rare earth nucleus erbium-158. The future of gamma-ray spectroscopy is brighter than ever with the development of the next generation of detector systems comprising a highly segmented shell of germanium detectors covering a complete sphere around a source using the new technology known as “gamma-ray tracking.” Such systems will have a sensitivity or resolving power about 100 times better than present-day systems. Since gamma-ray spectroscopy is one of the most powerful experimental approaches to unraveling

Box 2.2

Intersections of Dense Nuclear Physics with Cold Atoms and Neutron Stars

Nuclear systems—from atomic nuclei to the matter in neutron stars to the matter formed in ultrarelativistic heavy ion collisions—are complex many-particle systems that exhibit a great range of collective behavior such as superfluidity. This facet of nuclear systems, shared with matter studied by condensed matter physicists, atomic physicists, quantum chemists, and materials scientists, has opened up splendid opportunities for productive and valuable cross-fertilization among these fields. Of growing importance is the intersection of nuclear physics and ultracold atomic gases.

Atomic gas clouds allow physicists to control experimental conditions such as particle densities and interaction strengths, a control intrinsically unavailable to nuclear physicists. Such control has inspired nuclear physicists to develop more unified pictures of nuclear matter, beyond the constraints of laboratory nuclear systems, and to see commonalities with atomic systems. The experimental flexibility of cold atom systems makes them ideal to explore exotic phases and quantum dynamics in these strongly paired Fermi systems.

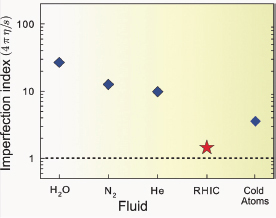

The quark-gluon plasmas in ultrarelativistic heavy ion collisions are the hottest materials one can produce in the laboratory, with temperatures of trillions of degrees. On the other hand, clouds of ultracold trapped atoms are the coldest systems in the universe, reaching temperatures as low as one billionth of a degree above absolute zero.1 Nonetheless, despite this difference in temperatures and energies, the two systems share significant physical connections, enabling cross-fertilization between high-energy nuclear physics and ultracold atomic physics. As discussed later in Chapter 2, “Exploring Quark-Gluon Plasma,” both systems, when strongly interacting, have the smallest viscosities (compared with their entropy, or degree of disorder) of any system in the universe. The transition observed in strongly interacting cold fermionic atom clouds from paired superfluid states, analogous to superconducting electrons in a metal, to BEC states of molecules consisting of two fermion atoms, captures certain aspects of the transition from a quark-gluon plasma to ordinary hadronic matter made of neutrons, protons, and mesons.

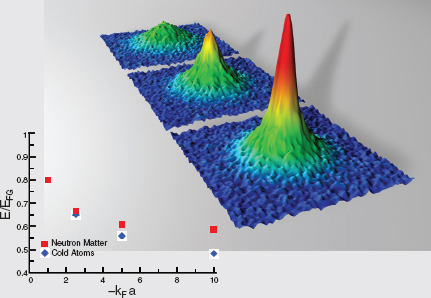

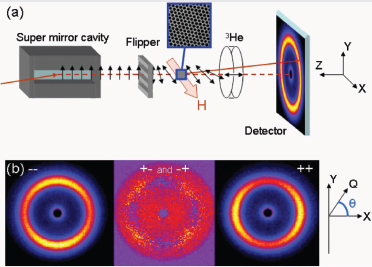

Superfluid pairing in low-density strongly interacting fermionic atomic systems is very similar to that pairing in low-density neutron matter in neutron stars. Figure 2.2.1 compares the predicted energy of a low-density cloud of cold superfluid neutrons with that of cold atomic fermions as the density increases, and shows how the two systems behave in common. Although the energy scales are vastly different, the attractive interactions between fermions in both systems produce extremely large superfluid pairing gaps, on the order of one-third to one-half the Fermi energy, and in this sense these systems are the highest temperature superfluids known. Experiments in cold atoms (illustrated in the inset of Figure 2.2.1) can measure the energies and superfluid pairing gaps of cold fermions from weak to strong coupling, and provide sensitive tests of theories used to compute the properties of matter in the exterior of neutron stars, large neutron-rich nuclei, and quark matter. These properties are key to understanding the limits of stability and pairing in neutron-rich nuclei and the cooling of neutron stars.

One can also study analogs of nuclear and quark-gluon plasma states with cold atoms. Simple examples include the binding of fermionic atoms in three distinct (hyperfine) states, as in lithium-6, analogous to quarks of three colors, into three-atom molecules, the analogs of nucleons; or the binding of bosonic atoms with fermionic atoms into molecules. One can also exploit similarities of the tensor interaction between nucleons to the magnetic interaction between atoms with large magnetic dipole moments, e.g., dysprosium, to make analogs of the pion condensed states proposed in dense neutron star matter. Strongly interacting ultracold atomic plasmas also present unusual opportunities to study the dynamics of strongly interacting quark-gluon plasmas. Further examples include the formation and interaction of vortices and possible exotic superfluid phases of matter. Future experiments with optical traps will allow one to study the properties of the inhomogeneous matter that exists in the crust of neutron stars. In addition, strongly interacting clouds of atoms with differing densities of up and down spins, as can be engineered in optical traps, share some common features with strongly interacting quark matter with differing densities of up, down, and strange quarks. In both contexts, superfluid pairing gaps that are modulated in space in a periodic pattern may develop, yielding a superfluid and crystalline phase of matter, hints of which may have been seen in very recent cold atom experiments.

FIGURE 2.2.1 Upper right: Images of a superfluid condensate of fermion pairs in the laboratory. The images from upper left to lower right correspond to increasing strength of the pairing obtained by varying the magnetic field. The density tracks the equation of state of strongly paired fermions from the Bose-Einstein condensate (BEC) toward the very strong interaction (or “unitary”) limit. Lower left: Comparison between the energies of cold atoms and neutron matter at very low densities. The energies are given relative to those of a noninteracting Fermi gas, EFG, and are plotted as a function of the product of Fermi momentum, kF, and scattering length a, representing the interaction strength. SOURCES: (Upper right) Copyright Markus Greiner, Harvard University, and Deborah Jin, NIST/JILA; (Lower left) A. Gezerlis and J. Carlson, Physical Review C 77: 032801, 2008, Figure 1. Copyright 2008 by the American Physical Society.

1 National Research Council, 2007, Controlling the Quantum World, Washington, D.C.: The National Academies Press.

FIGURE 2.7 Left: Experimental two-neutron separation energies S2n extracted from measured nuclear masses in the Z = 50-82 and N = 82-126 shells. Removing a smooth reference from the bare values shown in the inset highlights the collective contributions attributed to the valence nucleons. The onset of nonspherical nuclear shapes is clearly seen around N = 90, along with more subtle effects near N = 84 and N = 116. Right: Illustration of shape/phase transitional behavior around N = 90. The signature observable R4/2 (= E(4+)/E(2+)) varies from <2 for nuclei very near closed shells to ~2 for spherical vibrational nuclei, to ~3.33 for nonspherical nuclei. SOURCE: Figure courtesy of R. Burcu Cakirli, Max Planck Institute for Nuclear Physics, private communication, 2011. Based on data available through 2010.

FIGURE 2.8 Upper panel: The evolution of the structure and shape of erbium-158 as this nucleus’s rotation speed increases. The excitation energies of various states in erbium-158, with respect to a simple quantum rotor reference, are plotted as a function of angular momentum. A sequence of shape transitions, from weakly deformed prolate, to nearly spherical oblate, to well deformed triaxial is seen. The future challenge will be to reach the region of extremely high nuclear rotations at which erbium-158 cannot withstand the huge centrifugal force and fissions into fragments. The timeline indicates some of the significant milestones in this evolutionary path. Lower panel: This timeline, and the rapid development of more sophisticated instrumentation, are further echoed here, where the intensity of a particular gamma-ray transition (normalized to unity for transitions between low angular momentum states) between specific energy levels, as the nucleus de-excites, is plotted as a function of spin. This serves as a guide to the increasing sensitivity as new instruments have become available, starting from single detectors in the 1960s, to small arrays in the 1970s and 1980s, to the current array, called Gammasphere, and to the large gains expected for future generations of gamma-ray tracking arrays such as the Gamma-Ray Energy Tracking Array (GRETINA/GRETA), and the Advanced Gamma Ray Tracking Array (AGATA). SOURCE: Courtesy of Mark A. Riley, Florida State University.

the structure of nuclei, these new, highly sensitive arrays will greatly enhance, for example, the discovery potential of FRIB, which will produce key nuclei—crucial for understanding new structural phenomena of the types discussed on these pages—but often in very small amounts. These prospects are supported by the advances already obtained with existing current-generation instruments.

New Facets of Nucleonic Pairing

Nucleonic superfluidity plays a large role in nuclear structure. A generic feature of superfluidity is that elementary particles called fermions (such as protons or neutrons) combine to form specially constructed pairs (Cooper pairs) that are bosons and exhibit very different behavior and interactions than their constituent particles. In loosely bound nuclei, pairing may be the decisive factor for stability against particle decay. A striking example is the unbound nature of odd-neutron He nuclei while their even-neutron neighbors are bound. Nucleonic pairing is also important for the structure of neutron star crust. As the number of nucleons can be controlled experimentally, nuclei far from stability offer new opportunities to study pairing. For instance, it has been suggested that, in neutron-rich nuclei, neutron pairs (di-neutrons) are well localized in the skin region. In heavier nuclei with similar neutron and proton numbers, pairing carried by deuteron-like proton-neutron pairs with nonzero angular momentum is expected. Such yet-unobserved correlations are believed to profoundly impact nuclear binding in nuclei with approximately equal numbers of protons and neutrons (N ~ Z nuclei), to influence isospin symmetry and beta decay, and to modify the equation of state of dilute symmetric nuclear matter. Pairing can be probed with a variety of nuclear reactions that add or subtract pairs of nucleons. These reactions can be studied in inverse kinematics (experimental conditions in which the usual roles of target and projectile are interchanged) with a variety of exotic nuclear beams with intensities >10^3/s. Because of finite-size effects and different polarization effects in nuclei and nuclear matter, a theoretical challenge will be to relate experiments on nucleonic superfluidity in finite nuclei to pairing fields in neutron stars (see Box 2.2).

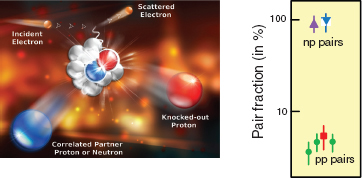

A new twist on the pairing story has been provided by studies at the Brookhaven National Laboratory (BNL) and JLAB. These studies precisely probed nuclear interactions on short distance scales, showing that energetic protons are about 20 times more likely to pair up with energetic neutrons than with other protons in the nucleus when nucleons overlap (see Figure 2.9). As discussed earlier, in studies of pair correlations at lower energies, such proton-neutron predominance has not been observed. This can be traced back to variations in the nuclear interaction when changing the relative distance between the two nucleons.

FIGURE 2.9 Left: A diagram of a short-range correlation reaction. Knocking out a proton by an energetic electron causes a high-momentum correlated partner nucleon to be emitted from the nucleus, leaving the rest of the system relatively unaffected. Right: Depiction of the experimental results from JLAB and BNL that demonstrate that the high-momentum nucleons in nuclei come primarily in proton-neutron pairs. Different symbols and colors mark results of different reactions used. Isolating the signatures of short-range behavior addresses the long-standing question of how close nucleons have to approach before the nucleons’ quarks reveal themselves and nucleonic degrees of freedom can no longer be used to describe the system. SOURCES: (Left) Courtesy of JLAB; (right) adapted from R. Subedi, R. Shneor, P. Monaghan, et al. 2008, Science 320: 1476. Reprinted with permission from AAAS.

Toward a Comprehensive Theory of Nuclei

An understanding of the properties of atomic nuclei is essential for a complete nuclear theory, for an explanation of element formation and properties of stars, and for present and future energy and defense and security applications. Nuclear theorists strive for a comprehensive, unified description of all nuclei, a portrait of the nuclear landscape that incorporates all nuclear properties and forces and can deliver maximum predictive power with well-quantified uncertainties. Such a framework would allow for more accurate predictions of the nuclear processes that cannot be measured in the laboratory, from the creation of new elements in exploding stars to the reactions occurring in cores of nuclear reactors. Developing such a theory requires theoretical and experimental investigations of rare isotopes, new theoretical concepts, and extreme-scale computing, all carried out in partnership with applied mathematicians and computer scientists (see Box 2.3).

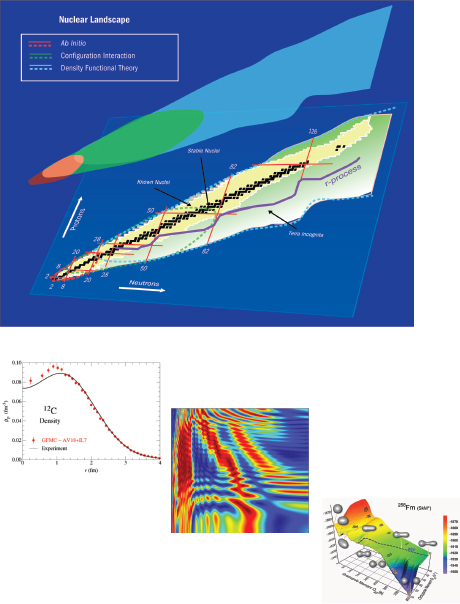

There is a well-delineated path toward such a description at the nucleonic level across the nuclear chart that merges three approaches: (1) ab initio, (2) configuration-interaction (CI), and (3) nuclear density functional theory (DFT). Ab initio methods use basic interactions among nucleons to fully solve the nuclear

Box 2.3

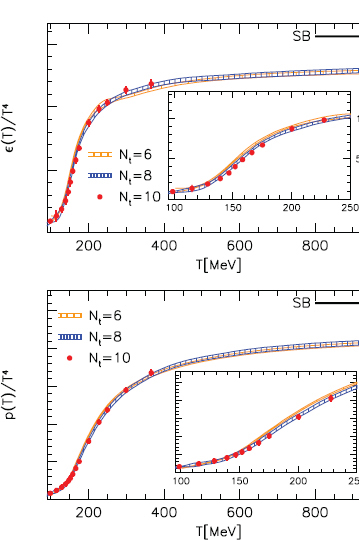

High-Performance Computing in Nuclear Physics

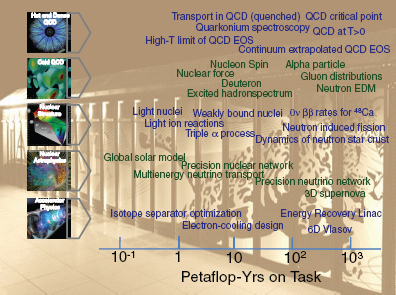

One of the trends in science today is the increasingly important role played by computational science. Yesterday’s terascale computers, capable of a trillion calculations per second, are being replaced by petascale computers, which are a thousand times faster, and scientists are even now working toward exascale computers, which will be a thousand times faster again (at a million trillion calculations per second). All of this computing power will provide an unprecedented opportunity for nuclear science (see Figure 2.3.1). Scientific computing, including modeling and simulation, has become crucial for research problems that are insoluble by traditional theoretical and experimental approaches, too hazardous to study in the laboratory, too time-consuming, or too expensive to solve.
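The scale factors quoted above can be checked with a few lines of Python. This is only a sketch of the arithmetic: the workload of 10^18 operations used in the usage note is a hypothetical figure chosen for illustration, and the helper names are not drawn from any nuclear physics code.

```python
# Sustained calculations per second for each scale named in the text.
SCALES = {
    "terascale": 10**12,  # a trillion calculations per second
    "petascale": 10**15,  # a thousand times faster
    "exascale": 10**18,   # a thousand times faster again
}


def speedup(faster, slower):
    """Ratio of sustained calculation rates between two scales."""
    return SCALES[faster] // SCALES[slower]


def runtime_seconds(total_operations, scale):
    """Idealized wall-clock time, assuming the machine sustains its nominal rate."""
    return total_operations / SCALES[scale]
```

For example, `runtime_seconds(10**18, "terascale")` gives 10^6 seconds (roughly 11.6 days), whereas the same hypothetical workload would take 1,000 seconds at the petascale and 1 second at the exascale, assuming perfect sustained performance.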

High-performance computing provides answers to questions that neither experiment nor analytic theory can address. As such, it becomes a third leg supporting the field of nuclear physics. Nuclear physicists perform comprehensive simulations of strongly interacting matter in the laboratory and in the cosmos. These calculations are based on the most accurate input, the most reliable theoretical approaches, the most advanced algorithms, and extensive computational resources. Until recently working with petascale resources was hard to imagine, and even at the present time such an ambitious endeavor is beyond what a single researcher or a traditional research group can carry out. To this end, collaborative software environments have been created under the DOE’s Scientific Discovery Through Advanced Computing (SciDAC) program, where distributed resources and expertise are combined to address complex questions and solve key problems.1 In each partnership, mathematicians and computer scientists are collaborating with nuclear physicists to remove barriers to progress in nuclear structure and reactions, QCD, stellar explosions, accelerator science, and computational infrastructure. Computational resources required for these calculations are currently obtained from a combination of dedicated hardware facilities at national laboratories and universities, and from national leadership-class supercomputing facilities.

Although significant advances have been achieved in computer hardware as well as in the algorithms used in today’s computations, the forefront computational challenges in nuclear physics require resources that can only be achieved in national supercomputing centers or by dedicated special-purpose machines. Collaborative frameworks such as SciDAC will need to continue in order to prepare for, and to fully utilize, computing resources beyond the petascale when they become available to nuclear physicists. As the nature of the computers will be quite different from that of today’s computers, the codes and algorithms will need to evolve accordingly. Given the scale of the computational facilities, it is clear that one should view these numerical efforts like experiments in their style of operation. Currently, the nuclear physics community can efficiently use sustained computing resources of between 1 and 10 petaflops; hence a staged evolution to the exascale seems appropriate.

In summary, the field of nuclear physics is poised to be transformed through the deployment of extreme-scale computing resources. Such resources will provide nuclear physics with unprecedented predictive capabilities that are needed for the systematic exploration of fundamental aspects of nature that are manifested in the structure and interactions of nuclei and hadronic matter. Future high-performance computing resources will generate enhancements to the nuclear physics program that cannot be imagined today.

FIGURE 2.3.1 Estimates of the computational resources required to make breakthrough predictions in key areas of nuclear physics: hot and dense QCD, structure of hadrons, nuclear structure and reactions, nuclear astrophysics, and accelerator physics. SOURCE: Adapted from a figure by Martin Savage, University of Washington.

1 More information about the SciDAC program can be found at http://www.scidac.gov/physics/physics.html; portions of the discussion in this section are adapted from http://www.scidac.gov/aboutSD.html.

many-body problem. Deriving internucleon interactions from quantum chromodynamics (QCD) is a fundamental problem that bridges hadron physics and nuclear structure. While excellent progress has been made in this domain (see the section “The Strong Force and the Internal Structure of Neutrons and Protons”), the lattice calculations have not yet been done with pions as light as those in nature. Meanwhile, QCD-inspired interactions derived within the framework of effective field theory and precise phenomenological forces carefully adjusted to scattering data are commonly used in nuclear structure and reaction calculations. Ab initio techniques have been extended to mass A = 14 and also can be applied to medium-mass doubly magic systems. Configuration-interaction methods adopt the notion of a nuclear potential, which the nucleons themselves both create and move in. This approach has promise up through the region of mid-mass nuclei and heavy near-magic systems. The nuclear DFT focuses on nucleon densities and currents instead of on the particles themselves and is applicable throughout the nuclear chart. The road map for this effort involves the extension of ab initio approaches all the way to medium-heavy nuclei, the development of configuration-interaction approaches in a variety of model spaces, and the quest for a nuclear density functional for all nuclei up to the heaviest elements (see Figure 2.10). Special, related challenges are the description of the role of the continuum in weakly bound nuclei and the development of microscopic reaction theory that is integrated with improved structure models.

The nuclear many-body problem is of broad intrinsic interest. The phenomena that arise (shell structure, superfluidity, collective motion, phase transitions) and their connections with many-body symmetries are also fundamental to fields such as atomic physics, condensed matter physics, and quantum chemistry. Although the interactions of nuclear physics differ from the electromagnetic interactions that dominate chemistry, materials, and biological molecules, the theoretical methods and many of the computational techniques are shared. Figure 2.10 gives selected examples of many-body calculations.1

FIGURE 2.10 Top: The superposed colored bands at the top indicate domains of major theoretical approaches to the nuclear problem. By investigating the intersections between these theoretical strategies, one aims at developing a unified description of the nucleus. Bottom: Three examples of theoretical calculations involving high-performance computing: proton densities of carbon-12 obtained in the ab initio quantum Monte Carlo method compared with experimental results (left); numerical simulations of neutrino flavor evolution in a hot supernova environment, where the oscillation probability of neutrinos is shown as a function of neutrino energy and the direction of emission from the surface of a collapsing star (middle); two-dimensional total energy of fermium-258 (in MeV) using nuclear DFT explaining the phenomenon of bimodal fission observed in this nucleus, where nuclear shapes are shown as three-dimensional images that correspond to calculated nucleon densities (right). SOURCES: (Top; bottom left) Universal Nuclear Energy Density Functional SciDAC (DOE’s Scientific Discovery Through Advanced Computing) Collaboration; (bottom middle; bottom right) Nuclear Physics Highlights, Department of Energy; available at http://science.energy.gov/~/media/np/pdf/docs/nph_basicversion_std_res.pdf. Last accessed on April 12, 2012.

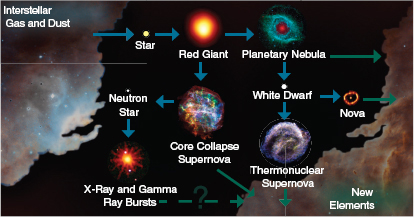

The aim of nuclear astrophysics is to understand those nuclear reactions that shape much of the nature of the visible universe. Nuclear fusion is the engine of stars; it produces the energy that stabilizes them against gravitational collapse and makes them shine. Spectacular stellar explosions such as novae, X-ray bursts, and type Ia supernovae are powered by nuclear reactions. While the main energy source of core collapse supernovae and long gamma-ray bursts is gravity, nuclear physics triggers the explosion. Neutron stars are giant nuclei in space, and short gamma-ray bursts are likely created when such gigantic nuclei collide. And last but not least, the planets of the solar system, their moons, asteroids, and life on Earth—all owe their existence to the heavy nuclei produced by nuclear reactions throughout the history of our galaxy and dispersed by stellar winds and explosions.

Among the open questions that will guide nuclear astrophysics in the coming decade are these:

- How did the elements come into existence?

- What makes stars explode as supernovae, novae, or X-ray bursts?

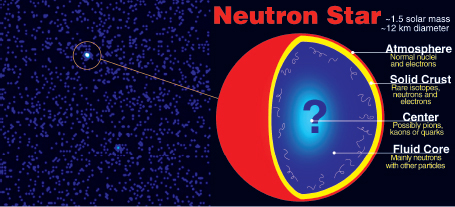

- What is the nature of neutron stars?

- What can neutrinos tell us about stars?

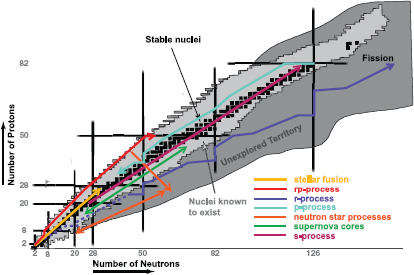

Answering these questions requires understanding intricate structural details of thousands of stable and unstable nuclei, and so draws on much of the work described in the preceding section on nuclear structure. This can be seen in Figure 2.11, which illustrates the principal nuclear processes that shape the visible universe. Each step of each process depends on the nature of that particular nucleus. As an example, a small change of just 10 percent in the energy of a single excited state of one particular nucleus, the famous Hoyle state in carbon-12, would make heavy elements, planets, and life as we know it disappear.

Unraveling the nuclear physics of the cosmos, therefore, requires a broad range of experimental and theoretical approaches. In the last decade, ever more sensitive laboratory measurements of low-energy nuclear reactions enabled precise solar models revealing a deficit of solar neutrinos detected on Earth. Knowledge of this

________________________________________________

1 Portions of this paragraph are adapted from Department of Energy, 2007, Computing Atomic Nuclei, SciDAC Review 6:42.

FIGURE 2.11 Schematic outline of the nuclear reactions sequences that generate energy and create new elements in stars and stellar explosions. Stable nuclei are marked as black squares, nuclei that have been observed in the laboratory as light gray squares. The horizontal and vertical lines mark the magic numbers for protons and neutrons, respectively. A very wide range of stable, neutron-deficient, and neutron-rich nuclei are created in nature. Many nuclear processes involve unstable nuclei, often beyond the current experimental limits. SOURCE: Adapted from a figure by Frank Timmes, Arizona State University.

deficit of solar neutrinos combined with the results of advanced neutrino detectors led scientists to the discovery that neutrinos have mass (as discussed in more detail late in this chapter under “Fundamental Symmetries”) and confirmed the accuracy of solar models. Laboratory precision measurements also revealed that the nuclear reactions that burn hydrogen in massive stars via the carbon-nitrogen-oxygen (CNO) cycle proceed much more slowly than had been anticipated, changing the predictions for the lifetimes of stars. A few key isotopes in the reaction sequence of the rapid neutron capture process (r-process) responsible for the origin of heavy elements in nature have now been produced by rare isotope facilities. Advanced experimental techniques also enabled measurements of the nuclear properties that characterize their role in the r-process, despite short lifetimes and small production quantities. The same sensitive techniques enabled precision mass and decay measurements of the majority of the extremely neutron-deficient rare

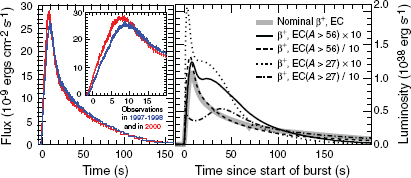

isotopes in the rapid proton capture process powering X-ray bursts. The results explain the existence of two classes of X-ray bursts, short and long bursts. In addition, a new rare class of X-ray bursts, so-called superbursts, were discovered and nuclear physics provided the likely explanation of a deep carbon explosion. New multidimensional core collapse supernova models included much more realistic weak interaction physics and nuclear matter properties owing to new results from laboratory experiments and nuclear theory calculations. Contrary to earlier work, some of these supernova models do now explode although many questions about the explosion mechanism remain. In these supernova explosion models, a new type of nuclear process producing heavy elements, the so called neutrino-p process, was found. The discovery of the most massive neutron star to date has eliminated many theoretical predictions about the nature of nuclear matter.

Future nuclear astrophysics efforts are emerging along two frontiers: (1) the study of unstable isotopes that exist in large quantities inside neutron stars and are copiously produced in stellar explosions but difficult to make in laboratories and (2) the determination of extremely slow nuclear reaction rates, which are important for the understanding of stars. Enabled by technical advances, dramatic progress is expected in the coming decade at both frontiers. The FRIB facility in the United States will, together with other rare isotope laboratories around the world, provide unprecedented access in the laboratory to the same unstable isotopes that play crucial roles in cosmic events. And a new generation of high-intensity stable beam accelerators to be located deep underground, as has been proposed for the United States, will enable the measurement of extremely slow stellar nuclear reactions without disturbance from cosmic radiation.2

A precision frontier also has emerged in the area of measuring neutron-induced reaction rates using neutron beams. Work is needed at this frontier not only on understanding the origin of those elements produced by neutron capture reactions, but also on applications of nuclear science that depend on neutron capture processes. These applications include the design of novel nuclear reactors and stockpile stewardship, as discussed in Chapter 3.

________________________________________________

2 Such a facility would also facilitate research in fundamental symmetries, as discussed later in this chapter under “Fundamental Symmetries,” as well as in NRC, 2012, An Assessment of the Science Proposed for the Deep Underground Science and Engineering Laboratory (DUSEL), Washington, D.C.: The National Academies Press.

Nuclear theory is of special importance for nuclear astrophysics for many reasons:

- The extreme densities and temperatures encountered inside stars alter the properties of nuclei compared to what is measured in terrestrial laboratories. Nuclear theory is needed to calculate the necessary corrections, such as thermal excitations and electron screening.

- In some astrophysical environments such as the r-process or the interiors of neutron stars, extremely rare isotopes exist that cannot be produced in sufficient quantities to fully characterize their properties even with the most powerful rare isotope facilities on the horizon. Experimental data on rare isotopes are needed to advance nuclear theory models, which can then be used to predict the remaining data still out of reach of experiments.

- Many astrophysical reaction rates cannot be measured directly because the rates are too small and the beams too weak. Indirect techniques, where a faster surrogate reaction is used to constrain the slow astrophysical reaction, require reliable reaction theory. In addition, nuclear theory is needed to calculate reaction rates where no experimental information exists.

- Dense nuclear matter can be produced in the laboratory for short times, but can only be observed indirectly from the resulting particle emission. A significant theory effort is necessary to interpret laboratory reaction measurements, and experimental constraints must be used to advance the reliability of the nuclear matter equation of state needed in many astrophysical scenarios.

Progress in nuclear astrophysics must also go hand in hand with progress in astrophysics and observational astronomy. Astronomical observations of the manifestations of nuclear processes in the cosmos provide the link between laboratory and nature. The last decade has seen extraordinary progress in astronomy, with high-precision observations of the composition of very old stars at the largest telescopes on Earth and in space and with surveys scanning hundreds of thousands of candidate stars to find the targets. A new generation of X-ray space telescopes has opened up a novel era in the understanding of phenomena related to neutron stars. Gamma-ray observatories detected the decays of rare isotopes in space, ejected by stellar explosions. Neutrino telescopes provided neutrino images of the sun and had earlier registered neutrinos from a nearby supernova. In the coming decade this progress is bound to continue. Many ongoing large-scale surveys to search for old stars will only pan out in the coming decade, and a new generation of larger ground-based telescopes will enable detailed spectroscopy on many of the resulting targets. Existing X-ray observatories will be complemented with new facilities that push observations toward harder X-rays and possibly gamma-rays and will provide new data on neutron stars and stellar explosions. New-generation gravitational wave detectors are expected to detect signals from supernovae and neutron stars for the first time. Neutrino observatories are ready, and with a little bit of luck they might observe a galactic supernova, an achievement that would revolutionize our understanding of such an event. And a new thrust in astronomy toward wide-field and high-repetition surveys is expected to shed new light on supernovae and to lead to the discovery of new, possibly nuclear-powered, transient astrophysical phenomena.

Astronomy, astrophysical modeling, and nuclear physics need to work together to achieve progress in nuclear astrophysics. Communication across field boundaries, coordination of interdisciplinary research, and exchange of data are essential for these fields to jointly address the open questions. The Joint Institute for Nuclear Astrophysics, funded by the Physics Frontiers Center Initiative of the National Science Foundation (NSF), has been critical in forming and maintaining a unique worldwide platform to foster such interdisciplinary collaboration between the different nuclear astrophysics communities.

Finally, it will be important to strengthen efforts to coordinate research across field boundaries, to form broad interdisciplinary research networks that integrate the wide range of required expertise, and to facilitate the exchange of data and information between astrophysics and nuclear physics, and between experiment, observations, and theory. Such interdisciplinary research networks are also needed to attract and educate the next generation of nuclear astrophysicists, who, with emerging new facilities in nuclear physics, astrophysics, and high-performance computing, are likely to make transformational advances in our understanding of the cosmos.

The complex composition of our world—some 288 stable or long-lived isotopes of 83 elements—is the result of an extended chemical evolution process that started with the big bang and was followed by billions of years of nuclear processing in numerous stars and stellar explosions (see Figure 2.12). The steady buildup of heavier elements in stars by the successive fusion of hydrogen, helium, carbon, oxygen, neon, and silicon marks the beginning of a new round in the ongoing cycle of nucleosynthesis. The freshly synthesized elements are ejected by stellar winds or supernova explosions and then mixed with interstellar gas and dust from which a new generation of stars is born to repeat the cycle.

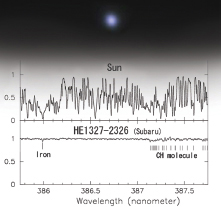

Nuclear physics provides the underlying blueprint for this chemical evolution by determining the composition of new elements generated in each astrophysical event. Observations of rare iron-poor, hence old, stars, reveal the composition of the early, chemically primitive galaxy and provide a “fossil record” of chemical evolution. By deciphering the structure of the nuclei involved and by advancing observations, we can trace our chemical history back, step by step, perhaps all the way to the very first supernovae that illuminated the universe. This “nuclear archeology” will advance our understanding of the early universe, of the formation of our galaxy, and also of the future of the universe.

FIGURE 2.12 The ongoing cycle of the creation of the elements in the cosmos. Stars form out of interstellar gas and dust and evolve only to eject freshly synthesized elements into space at the end of their lives. The ejected elements enrich the interstellar medium to begin the cycle anew in a continuous process of chemical enrichment and compositional evolution. SOURCES: (Background image) NASA, ESA, and the Hubble Heritage Team (AURA/STScI); (red giant) A. Dupree (Harvard-Smithsonian Center for Astrophysics), R. Gilliland (STScI), Hubble Space Telescope (HST), NASA; (x-ray) NASA, Swift, and S. Immler (NASA Goddard Space Flight Center); (planetary nebula) NASA, Jet Propulsion Laboratory (JPL)-California Institute of Technology (Caltech), Kate Su (Steward Observatory, University of Arizona) et al.; (thermonuclear supernova) NASA/Chandra X-ray Center (CXC)/North Carolina State University/S. Reynolds et al.; (nova) NASA, ESA, HST, F. Paresce, R. Jedrzejewski (STScI).

The Eve of Chemical Evolution: How Did the First Stars Burn?

How were the first heavy elements created by the potentially extremely massive stars formed after the big bang? The pattern of the elements ejected in their deaths might still be observable today in the most iron-poor stars of the galaxy, survivors of an early second generation of stars. Candidate stars with iron content a few hundred thousand times lower than that of the sun have been found (see Figure 2.13). Comparing the signatures of these elements with predictions from theoretical models of first stars requires a quantitative knowledge of the nuclear reaction sequences generating these elements. This opens up an observational window into the properties of first stars that is complementary to the planned, very difficult direct observations with future infrared telescopes. The reward might be not only a deeper understanding of the beginnings of chemical evolution in our galaxy but also clues about the nature of the early universe and the formation of structure in the cosmos.

FIGURE 2.13 The most iron-deficient star identified so far has about 400,000 times less iron than the sun. It is believed to be extremely old, having formed shortly after the big bang. The absorption spectrum, here compared to that of the sun, reveals the composition of the early universe at the time the star formed and might contain clues about the elements created by the very first supernovae. SOURCE: (top) copyright © Magnum Telescope; (bottom) copyright © Subaru Telescope, National Astronomical Observatory of Japan. All rights reserved.
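Astronomers commonly quote iron deficiency on a logarithmic scale, written [Fe/H], where 0 corresponds to the solar iron-to-hydrogen ratio. The short sketch below converts the depletion factor quoted in the caption into that scale. It assumes that "400,000 times less iron" refers to the iron-to-hydrogen number ratio relative to the sun; the function name is a hypothetical helper chosen for illustration.

```python
import math


def iron_to_hydrogen_index(depletion_factor: float) -> float:
    """Return [Fe/H] for a star whose Fe/H ratio is lower than solar by depletion_factor.

    Assumption: depletion_factor = (Fe/H)_sun / (Fe/H)_star, a ratio of number densities.
    """
    if depletion_factor <= 0:
        raise ValueError("depletion_factor must be positive")
    # [Fe/H] = log10((Fe/H)_star / (Fe/H)_sun) = -log10(depletion_factor)
    return -math.log10(depletion_factor)


# A star with 400,000 times less iron than the sun
print(round(iron_to_hydrogen_index(400_000), 2))  # -5.6
```

On this scale, each step of 1 corresponds to a factor of 10 in iron content, so a value near -5.6 places the star far below typical stars in the solar neighborhood.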

Stars: What Elements Are Formed from the Cauldrons of the Cosmos?

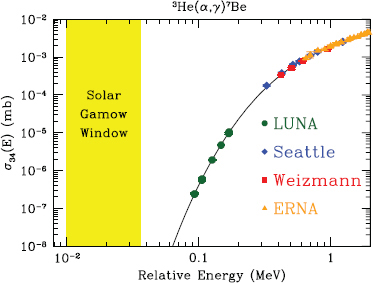

Stars are the nuclear furnaces that forge many of the chemical elements in nature. The composition of the material that stars eject into space depends sensitively on the rate at which the various nuclear fusion reactions occur in their interior. While the reaction sequences have been identified, many reaction rates are still not known accurately, limiting predictions of element formation and stellar evolution. A prominent example is the rate of capture of helium on carbon. With a few exceptions, which mark major milestones in nuclear astrophysics, a direct experimental determination of the low-energy stellar fusion rates has not yet been possible. Some of these pioneering measurements have been enabled by experiments in the low background environments of laboratories deep underground. Models of stars therefore employ uncertain theoretical nuclear reaction rates mostly derived by extrapolating experimental data obtained at higher energies or indirectly.

Addressing this problem will remain a formidable challenge in the coming decade. Advances in experimental techniques such as high-intensity stable beam accelerators in underground laboratories, intense rare isotope beams, and advanced detection and target systems will be needed (see Figure 2.14). On the theoretical side, ab initio calculations of nuclear reactions and models that account for cluster structures in nuclei are particularly promising guides for predicting reaction rates at the energies nuclei have in stars. Theory also needs to address the impact of electrons, which always accompany nuclei and modify reaction rates differently in a laboratory target and in stellar plasma.

FIGURE 2.14 The fusion probability of helium-3 (3He) and helium-4 (4He), an important reaction in stars affecting neutrino production in the sun, measured directly at various laboratories. The challenge is to measure the extremely small fusion rates at the low relative energies that the particles have inside stars. The reduced background in underground accelerator laboratories (LUNA data shown above in green) compared to aboveground laboratories (all other data) enables the measurement of fusion rates that are smaller by a factor of approximately 1,000. This reduces the error when extrapolating the fusion rate to the still lower stellar energies. SOURCE: Courtesy of Richard Cyburt, Michigan State University.
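To illustrate why fusion rates become so hard to measure at low energies, the sketch below uses the standard textbook parametrization of a charged-particle cross section, sigma(E) = S(E)/E * exp(-2*pi*eta), with 2*pi*eta = 31.29 * Z1 * Z2 * sqrt(mu/E) for the center-of-mass energy E in keV and the reduced mass mu in atomic mass units. This is a simplified illustration, not the analysis behind Figure 2.14: it assumes a constant S-factor, uses rounded nuclear masses, ignores electron screening, and the two energies compared are illustrative choices.

```python
import math


def gamow_exponent(z1: int, z2: int, mu_amu: float, e_kev: float) -> float:
    """Return 2*pi*eta, the exponent that suppresses tunneling through the Coulomb barrier."""
    return 31.29 * z1 * z2 * math.sqrt(mu_amu / e_kev)


def cross_section(s_factor: float, z1: int, z2: int, mu_amu: float, e_kev: float) -> float:
    """Cross section for a given S-factor (units of the result follow s_factor / keV)."""
    return s_factor / e_kev * math.exp(-gamow_exponent(z1, z2, mu_amu, e_kev))


# Reduced mass of 3He + 4He from rounded masses in amu (assumed values)
MU = 3.016 * 4.003 / (3.016 + 4.003)

# With a constant S-factor the ratio below does not depend on its value,
# so 1.0 is used as a placeholder.
ratio = cross_section(1.0, 2, 2, MU, 100.0) / cross_section(1.0, 2, 2, MU, 25.0)
print(f"Cross section at 100 keV relative to 25 keV: {ratio:.2e}")
```

Under these assumptions the cross section falls by more than a factor of a million when the energy is lowered from 100 keV to 25 keV, which is why low-background measurements and reliable extrapolation both matter.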

The Alchemist’s Dream: How Are Gold, Platinum, and Uranium Created in Nature?

A large gap in our understanding of the chemical evolution of our galaxy surrounds the origin of the elements heavier than iron, such as gold, platinum, or uranium, which comprise more than half of the elements in the periodic table. A slow neutron capture process (s-process) in red giant stars is thought to produce about half of these elements, ending with the production of lead and bismuth. The other half, including the heaviest elements found on Earth, such as uranium and thorium, require an astrophysical environment with an extraordinary density of neutrons. While such an environment has not been identified with certainty, theory predicts that under such conditions, captures of neutrons are very fast, enabling the synthesis of heavy elements beyond bismuth. During the brief duration of this rapid neutron capture process (r-process), exotic short-lived nuclei with extreme excesses of neutrons come into existence as part of the ensuing chain of nuclear reactions. Most of these exotic nuclei have never been made in the laboratory. This will change with the advent of next-generation rare isotope beam facilities like FRIB, which will allow experimental nuclear physicists to produce such nuclei and to determine their properties. The goal is to finally understand how and where nature produces precious metals like gold and platinum and heavy elements like thorium and uranium. Physics questions concerning the neutron-induced processes that constitute the r-process are closely related to neutron-driven applications such as nuclear reactors.

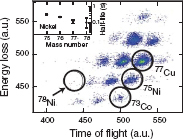

Although the ultimate goal, namely to identify the astrophysical site of the r-process, has not been reached yet, progress in nuclear physics and astrophysics has been made in the past decade toward unraveling the origin of the r-process elements. Existing radioactive beam facilities have provided experimental data on some of the key nuclei participating in the r-process. Important recent milestones include the half-life measurement of nickel-78 (see Figure 2.15), high-precision ion trap mass measurements of zinc-80, and constraints on the neutron capture rate on tin-132. These data provide guidance for theoretical models, which are used to predict the properties of the many nuclei out of current experimental reach. This has led to recognizing the importance of forbidden beta decay transitions and the direct mechanism in neutron captures and, accordingly, to a more realistic description of nuclear fission.

FIGURE 2.15 Very neutron-rich r-process nuclei observed in a rare isotope laboratory. Each dot represents an isotope that has arrived at the experiment, and the dot’s location on the map identifies mass number and element. The production and identification of the r-process waiting point nucleus nickel-78 was a challenge, though a sufficient number of isotopes were identified to determine a first measurement of its half-life. Because most r-process isotopes are out of reach of current rare isotope facilities, their study must await a new generation of accelerators such as FRIB. SOURCE: P.T. Hosmer, H. Schatz, A. Aprahamian, et al. 2005. Half-life of the doubly magic r-process nucleus 78Ni, Physical Review Letters 94: 112501 Figure 1. Copyright 2005, American Physical Society.

A variety of astrophysical models have been developed that might provide the conditions necessary for an r-process and eject sufficient amounts of matter into space to account for the observed element abundances. The most promising ones involve core collapse supernovae and the merging of two neutron stars. As a breakthrough, observations of the surface composition of iron-poor stars have opened an unprecedented window into the gradual enrichment of the early galaxy with r-process elements. These stars preserve the composition of the early, chemically less evolved galaxy at the time and location of their formation. The observations tell us that r-process events must have started very early in the evolution of the universe, and that they generate a very robust and characteristic pattern for the abundance of elements throughout the history of the galaxy.

Progress in nuclear physics is needed to connect advances in observations and theoretical astrophysics. In addition to new facilities, the data-driven advances expected in nuclear theory will allow predicting the properties of the nuclei that remain out of reach experimentally and quantifying the errors of such extrapolations. This will reduce the uncertainty in astrophysical models related to nuclear physics to the point where various astrophysical assumptions can be rigorously tested against observations, enabling a data-driven approach to solving the r-process puzzle. New approaches in astrophysics are also needed because none of the existing models achieves the conditions and event frequencies inferred from observations for the r-process. Future large-scale astronomical surveys, followed by high-resolution spectroscopy with the largest telescopes available, need to increase the sample of iron-poor stars formed in r-process-rich environments in the early galaxy to provide statistically relevant information on the frequency of r-process events and the nuclear abundance patterns they produce. Detections of the traces of nearby supernovae in Earth’s geological record might also provide clues on the r-process site, and future gamma-ray observatories might be able to detect or at least delimit the radioactive isotopes produced by a supernova r-process.

Dust Grains from Space: Can They Reveal the Secrets of Stellar Cores?

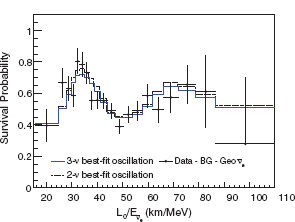

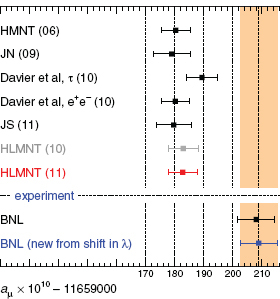

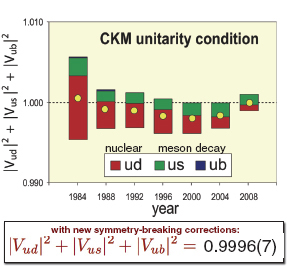

The slow neutron capture process is known to occur in red giant stars. But how does matter flow in the deep interiors of stars to generate the necessary free neutrons, and how have these processes changed over the history of chemical evolution? Progress has been achieved in the past decade by analyzing presolar