Using Emerging Science and Technologies to Address Persistent and Future Environmental Challenges

Chapter 2 discussed some of the broad drivers and challenges that are inherent to the mission of the US Environmental Protection Agency (EPA) today and in the future. Remarkable progress has been made in the last several decades in the development of new scientific approaches, tools, and technologies relevant to addressing those challenges. The purpose of this chapter is to highlight new and changing science and technologies that are or will be increasingly important for science-informed policy and regulation in EPA.

New tools and technologies can substantially improve the scientific basis of environmental policy and regulations, but it is important to remember that many of the tools and technologies need to build on and enhance the current foundation of environmental science and engineering in the United States. In addition, addressing the complex “wicked problems” facing EPA today and in the future requires not only new science and technology but a more deliberate approach to systems thinking, for example, by using frameworks that strive to integrate a broader array of interactions between humans and the environment. From the perspective of scientific advances relevant to the future of EPA, it will be increasingly important that all aspects of biologic sciences and environmental sciences and engineering—including human health risk assessment, microbial pathogenesis, ecosystem energy and matter transfers, and ecologic adaptation to climate change—be considered in an integrated systems-biology approach. That approach must also be integrated with considerations of environmental, social, behavioral, and economic impacts.

A SIMPLE PARADIGM FOR DATA-DRIVEN, SCIENCE-INFORMED DECISIONS IN THE ENVIRONMENTAL PROTECTION AGENCY

New scientific advances, including the development and application of new tools and technologies, are critical for the science mission of EPA. Effec-

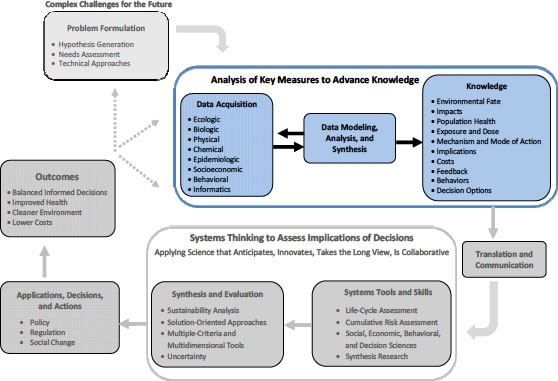

tive science-informed regulation and policy aimed at protecting human health and environmental quality relies on robust approaches to data acquisition and to knowledge generated from the data. For science to inform regulation and policy effectively, a strong problem-formulation step is needed. Once a problem is formulated, EPA scientists can evaluate what types of data are needed and then determine which available tools and technologies are appropriate for gathering the most robust data (see Figure 3-1). As described in detail in this chapter, management and interpretation of “big data” will be a continuing challenge for EPA inasmuch as new technologies are now capable of quickly generating huge amounts of data. Senior statisticians are needed in the agency to help analyze, model, and support the synthesis of that data. In many instances, large amounts of data are directly acquired as a component of hypothesis-driven research. However, many new technologies are also used for discovery-driven research— that is, generating large volumes of data that may not be a derivative of a clear, hypothesis-driven experiment, but nevertheless may yield important new hypotheses. In both instances, the data themselves do not become knowledge that can be applied as solutions to problems until they are analyzed and interpreted and then placed in the context of an appropriate problem or scientific theory. As depicted in Figure 3-1, there are iterations and feedback loops that must exist, particularly between data acquisition and data modeling, analysis, and synthesis.

The generation of knowledge, which can take many forms depending on the question being addressed and the nature of the data, ultimately serves as the basis of science-informed regulation and policy (see Figure 3-1). The committee recognizes that scientific data constitute one—albeit important—input into decision-making processes but alone will not resolve highly complex and uncertain environmental and health problems. Ultimately, environmental and health decisions and solutions will also be based on economic, societal, and other considerations apart from science. They need to take into account the variety and complexities of interactions between humans and the environment. But with better scientific understanding, regulations and other actions can be more effective and can have better and more cost-effective outcomes, such as improved human health and improved quality of ecosystems and the environment.

In accordance with the above discussion, it is imperative that EPA have the capacity and knowledge to take advantage of the latest science and technologies, which are always changing. The remainder of the chapter highlights a number of scientific and technologic advances that will be increasingly important for state-of-the-art, science-informed environmental regulation. It also includes several examples that show how emerging science, technologies, and tools are transforming the way in which EPA will use data to address important regulatory issues and decision-making and that demonstrate the need for a systems approach to addressing these complex problems. The chapter has been organized in parallel to the challenges identified in Chapter 2. The main topics that will be discussed are tools and technologies to address challenges related to 1) chemical exposures, human health, and the environment; 2) air pollution and climate change; 3) water quality and nutrient pollution; and 4) shifting spatial and temporal scales. The chapter ends with a section, “Using New Science to Drive Safer Technologies and Products,” which discusses ways in which EPA can prevent environmental problems before they arise.

FIGURE 3-1 The iterative process of science-informed environmental decision-making and policy. The process starts with effective problem-formulation, which drives both the experimental design and the selection of data to be acquired. Modeling, synthesis, and analysis of the data are necessary to generate new knowledge. Only through effective translation and communication of new knowledge can science truly inform policies that can generate actions to improve public health and the environment. An evaluation of outcomes is an essential component in determining whether science-informed actions have been beneficial, and it, in turn, adds to the knowledge base.

The examples in this chapter are not intended to be comprehensive; rather, they are provided to illustrate from different perspectives the many ways in which new advances in science, engineering, and technology could be embraced by the agency, its scientists, and regulators to ensure that the agency remains at the leading edge of science-informed regulatory policy to protect human health and the environment. Having assessed EPA’s current activities, the committee notes that EPA is well equipped to take advantage of most of the new scientific and technologic advances and that, in fact, its scientists and engineers are leaders in some fields.

New technologies will be important to EPA for identifying chemicals in the environment, understanding their transport and fate in the environment, assessing the extent of actual human exposures through biomonitoring, and identifying and predicting the potential toxic effects of chemicals. Current and emerging tools and technologies related to these topics are discussed in the sections below.

Identifying Chemicals in Environmental Media

Analytic chemistry continues to improve at breakneck speed, and analytic determinations for both metals and organic chemicals have improved exponentially. Chemicals can now be detected at ever lower concentrations. For some organic chemicals, such as chlorinated dioxins, standard EPA methods include the routine measurement of samples in parts per quadrillion (ppq) or picograms per liter (pg/L) (EPA 1997), which allows risk managers to characterize lifetime uptake of exposure to various carcinogens and daily uptake rates in chronic hazard quotient assessments of chemicals that were not previously detectable. Simply being able to measure concentrations of chemicals in environmental media or blood confronts EPA with new decisions on whether to set maximum contaminant levels in drinking water or allowable daily intakes in food or whether to allow states to do so independently if health effects are uncertain.
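To make the link between a measured concentration and a chronic hazard quotient concrete, the arithmetic can be sketched as follows. This is a minimal illustration, not an EPA procedure: it assumes the conventional screening form in which the hazard quotient is the chronic daily intake divided by a reference dose, and the concentration, drinking-water intake, body weight, and reference dose used here are hypothetical values chosen only to show the unit handling. For water, 1 pg/L is approximately 1 ppq by mass.

```python
PG_PER_MG = 1e9  # 1 mg = 1e9 pg


def chronic_daily_intake_mg_per_kg_day(conc_pg_per_l, intake_l_per_day, body_weight_kg):
    """Chronic daily intake from drinking water, in mg per kg body weight per day."""
    if body_weight_kg <= 0:
        raise ValueError("body_weight_kg must be positive")
    conc_mg_per_l = conc_pg_per_l / PG_PER_MG
    return conc_mg_per_l * intake_l_per_day / body_weight_kg


def hazard_quotient(intake_mg_per_kg_day, reference_dose_mg_per_kg_day):
    """Ratio of estimated intake to a reference dose (values below 1 are below the reference)."""
    if reference_dose_mg_per_kg_day <= 0:
        raise ValueError("reference dose must be positive")
    return intake_mg_per_kg_day / reference_dose_mg_per_kg_day


# Hypothetical inputs for illustration only.
intake = chronic_daily_intake_mg_per_kg_day(
    conc_pg_per_l=35.0,    # assumed measured concentration
    intake_l_per_day=2.0,  # assumed drinking-water intake
    body_weight_kg=70.0,   # assumed adult body weight
)
hq = hazard_quotient(intake, reference_dose_mg_per_kg_day=1e-8)  # hypothetical reference dose
print(f"intake = {intake:.2e} mg/kg/day, hazard quotient = {hq:.2f}")
```

The sketch shows why lower detection limits matter for risk managers: a concentration that could not previously be quantified now yields a nonzero intake estimate that has to be compared against some reference value.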

As the public learns about new methods of detection of chemicals in, for example, their blood, their children’s blood, and the environment (water, air, and soil), questions arise as to what such occurrences mean. Of course, the simple detection of chemicals in relevant receptors does not necessarily imply any human health or ecologic effects. To evaluate the health implications of chemical exposures throughout the range of exposure levels, sufficiently large epidemiologic studies that incorporate state-of-the-art analytic methods are needed (see the section “Applications of Biomarkers to Human Health Studies”). But, even when biologic effects are not evident (and in special cases of hormesis when there are potentially beneficial effects), the challenge for EPA is to provide meaningful and relevant information to potentially affected parties.

It is now possible, while testing for emerging contaminants of interest and their metabolites, to monitor the effluent of a publicly owned wastewater-treatment plant and determine trace quantities and metabolites of substances— such as pharmaceuticals (licit and illicit), personal-care products, and hormones (natural and synthetic)—that are being used and disposed of or excreted by people in each town (Zegura et al. 2009; Jean et al. 2012; Neng and Nogueira 2012). The mass emission factors per capita can be calculated for the chemicals without determining individual household use. However, without better knowledge of the environmental and human health risks of such low-dose exposures, the advanced detection capabilities do not necessarily help the agency to interpret the results or to protect human health and the environment more effectively. One example is mercury. On one hand, from a toxicologic standpoint, mercury is one of the most studied elements (Schober et al. 2003; Jones et al. 2010). On the other hand, it is still difficult to make a conclusive assessment of the health effects of mercury emitted into the environment (EPA 2011a). Finding cost-effective research opportunities for connecting data on environmental chemicals with environmental and health outcomes can contribute to an increase in knowledge and can inform policy.
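The per capita mass emission calculation mentioned above can be sketched in a few lines. The sketch assumes the simplest form of the calculation (concentration times plant flow, divided by the population served) and ignores in-sewer degradation, sorption, and metabolite back-calculation, all of which a real study would need to address. The function name and the input values are hypothetical.

```python
NG_PER_MG = 1e6  # 1 mg = 1e6 ng


def per_capita_load_mg_per_day(conc_ng_per_l, flow_l_per_day, population_served):
    """Mass of a substance passing through a treatment plant per person per day."""
    if population_served <= 0:
        raise ValueError("population_served must be positive")
    total_mg_per_day = conc_ng_per_l * flow_l_per_day / NG_PER_MG
    return total_mg_per_day / population_served


# Hypothetical plant: 200 ng/L measured, 40 million liters per day, 100,000 people served.
load = per_capita_load_mg_per_day(
    conc_ng_per_l=200.0,
    flow_l_per_day=4.0e7,
    population_served=100_000,
)
print(f"{load:.3f} mg per person per day")
```

Because the inputs are plant-level measurements, the result describes the community as a whole and requires no information about individual households.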

Fate and Transport of Chemicals in the Environment

EPA has long been recognized as a leader in developing computer models of the fate and transport of chemical contaminants in the environment, a key component in constructing models of human exposure and health outcomes, as well as in source attribution for ecologic and human endpoints. It develops and supports models for both scientific purposes and application in environmental management. Although many of its models are well established and now backed by years of application experience, EPA and the broader environmental-modeling community face challenges to improve spatial and temporal resolution, to account for stochastic environmental behaviors and for modeling uncertainties, to improve the characterization of transfers between environmental media (air, surface water, groundwater, and soil), and to account for feedback between contaminant concentrations and environmental behavior (for example, the effects that such short-lived radiative-forcing agents as ozone and aerosols have on climate change). Furthermore, sources, properties, and behaviors of some contaminants remain poorly understood, even after years of study. EPA also faces significant challenges and opportunities for integrating models with data from new monitoring systems through data assimilation and inverse modeling techniques. Specific examples of ways in which new approaches to environmental fate and transport modeling are enhancing the understanding of health and ecologic impacts of pollutants are provided in the section on “Tools and Technologies to Address Challenges of Air Pollution and Climate Change”.

Assessing the Extent of Human Exposures Through Biomonitoring

Historically, exposure research in EPA has focused on discrete exposures—in external or internal environments, concentrating on effects from sources or effects on biologic systems, and on human or ecologic exposures— one pollutant or stressor at a time. Tools and methods have evolved for undertaking those specific challenges, but targeted approaches have led to sparse exposure data (Egeghy et al. 2012).

The broader availability and ease of use of advanced technologies are resulting in a profusion of data and an overall democratization of the collection and availability of exposure data. The US Centers for Disease Control and Prevention (CDC) National Health and Nutrition Examination Survey (NHANES) alone has provided one of the most revealing snapshots of human exposures to environmental chemicals through the use of biomonitoring (CDC 2012). The collaboration between CDC and national and international organizations quickly expanded the breadth and depth of data available at the population and subpopulation level. That rapid progress was predicated on the availability of better analytic methods and a national commitment to generate baseline data.

Scientific and technologic advances in disparate fields—including computational chemistry, climate change science, health tracking, computational toxicology, and sensor technology—have provided unprecedented opportunities to address the needs of exposure research. Many of the tools are more accessible and easier to use than earlier ones and are slowly being deployed by researchers and stakeholders, such as state agencies and public-interest groups. For example, advances in personal environmental monitoring technologies have been enabled because people around the world routinely carry cellular telephones (Tsow et al. 2009). Those devices may be equipped with motion, audio, visual, and location sensors that can be controlled through wireless networks. Efforts are underway to use them to create expanding networks of sensors to collect personal exposure information.

As discussed in Chapter 2, biomonitoring for human exposure to chemicals in the environment has provided a new lens for understanding population exposures to toxicants. The analytic and technical methods used to measure human exposure to environmental toxicants will continue to improve, but without better information on whether the dose is of sufficient magnitude to cause an effect, simply identifying the presence of a toxic substance may raise more questions than it answers. Therefore, continuing advances are needed to measure and understand the burden of chemicals and their metabolites in the human body.

Recent advances in microchip capillary electrophoresis for separation and identification of nucleotides, proteins, and peptides and advances in spectrometrics, such as nuclear magnetic resonance imaging and mass spectrometry, have changed the nature of health effects monitoring. These technologic advances— especially in genomics, proteomics, metabolomics, bioinformatics, and related fields of the molecular sciences (referred to here collectively as panomics)— have transformed the understanding of biologic processes at the molecular level and should eventually allow detailed characterization of molecular pathways that underlie the biologic responses of humans and other organisms to environmental perturbations. Advances in “–omics” technologies provide EPA with a better understanding of mechanistic pathways and modes of action that can support the risk assessment process. Also, the integration of those technologies with population-based epidemiologic research can contribute to the discovery of major environmental determinants, dose-response relationships, mechanistic pathways, susceptible populations, and gene-environment interactions for health effects in human populations. Appendix C discusses some of the recent advances in -omics technologies and approaches, their implications for EPA, where EPA is at the leading edge of applying the technologies to address environmental problems, and where EPA could benefit from more extensive engagement.

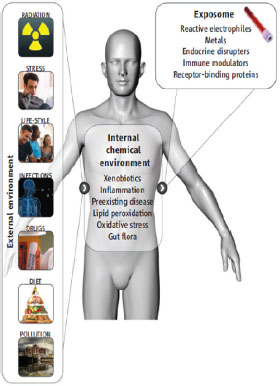

New high-throughput -omic and biomonitoring technologies are providing a greater number of potential biomarkers to assess multiple exposures simultaneously over the course of a lifetime. The biomarkers address exposures to a wide variety of stressors, including chemical, biologic, physical, and psychosocial stressors. The exposome is now being presented as a unifying concept that can capture the totality of environmental exposures (including lifestyle factors, such as diet, stress, drug use, and infection) from the prenatal period on by using a combination of biomarkers, genomic technologies, informatics, and environmental exposures (Figure 3-2) (Wild 2005; Rappaport and Smith 2010; Lioy and Rappaport 2011). The exposome, in concert with the human genome and the epigenome, holds promise for elucidating the etiology of chronic diseases and relevant contributions from the environment (Rappaport and Smith 2010). The concept of the exposome will be of particular value to EPA in assessing and comparing potential health and environmental consequences of individual chemical exposures against previously identified risks. It may also allow for more carefully designed and rational experiments to evaluate potential chemical interactions that contribute to the exposome of individuals or populations.

Exposure information is a key component of prediction, prevention, and reduction of environmental and human health risks. Exposure science at EPA has been limited by the availability of methods, technologies, and resources, but recent advancements provide an unprecedented opportunity to develop higher-throughput, more cost-effective, and more relevant exposure assessments. Research in this field is funded by other federal agencies and international programs, such as the National Institute of Environmental Health Sciences Exposure Biology Program; the National Science Foundation Environmental, Health, and Safety Risks of Nanomaterials Program; and the European Commission’s exposome initiative. Those organizations provide valuable partnership opportunities for EPA to build capacity through strategic collaborations. Moreover, an integral need for EPA in the future will be to develop processes and procedures for effective public communication of the potential public health and environmental risks associated with the increasing number of chemicals, both old and new, that will undoubtedly be identified in food, water, air, and biologic samples, including human tissues. Risk communication strategies should include the latest approaches in social, economic, and behavioral sciences, as discussed in Chapter 5.

FIGURE 3-2 Characterizing the exposome. The exposome represents the combined exposures from all sources that reach the internal chemical environment. Examples of toxicologically important exposome classes are shown. Biomarkers, such as those measured in blood and urine, can be used to characterize the exposome. Source: Adapted from Rappaport and Smith 2010.

Applications of Biomarkers to Human Health Studies

Epidemiologic research plays a central role in assessing, understanding, and controlling the human health effects of environmental exposures. In 2009, the National Research Council (NRC) report Science and Decisions: Advancing Risk Assessment recommended that EPA increase the role of epidemiology, surveillance, and biomonitoring to support cumulative risk assessment (NRC 2009). The most successful current epidemiologic studies leverage multiple resources and use highly collaborative and multidisciplinary approaches (Seminara et al. 2007; Baker and Nieuwenhuijsen 2008). In the United States, a number of high-quality prospective cohort studies funded mostly by the National Institutes of Health have followed millions of people and have collected biospecimen repositories (blood, urine, nails, and DNA) and sociodemographic, genetic, medical, and lifestyle information (Seminara et al. 2007; Willett et al. 2007; NHLBI 2011). Major prospective cohort studies have also been undertaken in other countries (Riboli et al. 2002; Ahsan et al. 2006; Elliott and Peakman 2008).

With some exceptions, current prospective cohort studies generally lack information on environmental exposures. EPA can contribute to closing this gap by, for instance, adding high-quality environmental measures to studies that already have good followup and outcome measures. Examples of collaborations in which EPA plays a critical role are the Agricultural Health Study (NIH 2012), the Multiethnic Study of Atherosclerosis and Air Pollution (MESA Air) (University of Washington 2011), and the National Children’s Study (NRC/IOM 2008). In the National Children’s Study, the linkage of monitoring data on toxicants in air, water, food, and ecosystems to individual participant data has already been explored in depth in Queens, New York, one of the Vanguard National Children’s Study sites (Lioy et al. 2009). Budgetary and implementation challenges for the National Children’s Study will require innovative strategies for recruitment, examination, and followup without compromising the quality of the science (Kaiser 2012).

Alternatively, EPA could add followup and outcome measures to studies that have good measures of exposure, although this is likely to be more time-consuming and expensive. At a minimum, EPA should ensure that environmental indicators, including country-wide air-monitoring and water-monitoring data, meet quality and accessibility criteria, for example, through a public data-access system. The indicators can then be merged with individual and community-level data in population-based studies by using geographic and temporal criteria. Biomonitoring and modeling approaches to predict exposure and dose and other advances in exposure science, including the exposome (Weis et al. 2005; Sheldon and Cohen Hubal 2009; Rappaport and Smith 2010; Lioy and Rappaport 2011), -omic technologies, and complex systems approaches (Diez Roux 2011), could be incorporated into the prospective studies. By building expertise and leadership in exposure assessment and by working in collaboration with other national and international efforts, EPA can play a principal role in the incorporation of environmental exposures into prospective cohort studies and thus contribute to the discovery of major environmental determinants, dose-response relationships, mechanistic pathways, and gene-environment interactions for chronic diseases in human studies.
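The merging of environmental indicators with participant data by geographic and temporal criteria can be illustrated with a small sketch. It assumes the simplest possible linkage, in which each participant is assigned the mean of all monitor readings that share the participant's region and year; real studies typically use finer spatial interpolation and exposure windows. The field names (`region`, `year`, `value`) and the records are hypothetical.

```python
from collections import defaultdict


def link_exposure(participants, monitor_readings):
    """Attach to each participant the mean monitor reading for the same region and year."""
    totals = defaultdict(lambda: [0.0, 0])  # (region, year) -> [sum of values, count]
    for reading in monitor_readings:
        key = (reading["region"], reading["year"])
        totals[key][0] += reading["value"]
        totals[key][1] += 1

    linked = []
    for person in participants:
        total, count = totals.get((person["region"], person["year"]), (0.0, 0))
        exposure = total / count if count else None  # None marks "no monitoring data"
        linked.append({**person, "exposure": exposure})
    return linked


# Hypothetical monitoring data and cohort records.
readings = [
    {"region": "A", "year": 2010, "value": 10.0},
    {"region": "A", "year": 2010, "value": 14.0},
    {"region": "A", "year": 2011, "value": 9.0},
]
cohort = [
    {"id": 1, "region": "A", "year": 2010},
    {"id": 2, "region": "B", "year": 2010},
]
for row in link_exposure(cohort, readings):
    print(row)
```

Returning `None` rather than zero for unmatched participants keeps missing monitoring coverage distinguishable from a true measurement of zero, which matters when the linked data are later analyzed.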

Environmental informatics plays an important role in the human population-based studies described above. Although environmental informatics received much of its momentum from central Europe in the early 1990s (Pillmann et al. 2006), EPA has recognized its importance and has played a role in shaping its direction. The agency helped to establish the Environmental Data Standards Council, which was subsumed in 2005 by the Exchange Network Leadership Council (Environmental Information Exchange Network 2011), an environmental-data exchange partnership representing states, tribes, territories, and EPA. The council’s mission includes supporting environmental information-sharing among its partners through automation, standardization, and real-time access. The scope of data exchange covers air, water, health, waste, and natural resources and spans multiple programs. Cross-program data include data from the Department of Homeland Security, the Toxics Release Inventory, pollution-prevention programs, the Substance Registry Services System, and data obtained with geospatial technologies. The council is an example of useful and productive national efforts to generate environmental informatics data. On the basis of technologic advances and new environmental challenges discussed throughout this report, it will be necessary for EPA to begin to make data standards flexible and adaptable so that it can use data that are less structured and less groomed.

Health informatics has a strong history in the United States. There are numerous national and state data registries on chronic and nonchronic diseases, such as the Surveillance, Epidemiology, and End Results cancer registry and the National Birth Defects registry. The Agency for Healthcare Research and Quality of the Department of Health and Human Services maintains a national hospital discharge database and, as previously mentioned, CDC’s National Center for Health Statistics conducts the NHANES annually to study health behaviors, dietary intake, environmental exposure, and disease status of the US population. EPA could also work with CDC’s National Center for Health Statistics and the National Center for Environmental Health to facilitate the merging of environmental-monitoring data (on air, water, and ecosystems) with national databases that have biomarker and health data, such as NHANES. Such merging, following the NHANES model of public access, could constitute a major advance in the understanding of environmental exposures and their health effects and in informing policy regulation and the prevention and control of environmental exposures. Collaborating with other epidemiologic research efforts, EPA will have the opportunity to identify the optimal population-based prospective cohort study protocol to answer environmental-health questions, to ensure that high-quality data on environmental exposures are incorporated into large epidemiologic studies, and to contribute to the analysis and interpretation of exposure and health-effect associations. In addition, there are proprietary databases owned by healthcare providers and insurers, including Medicare and Medicaid. These databases lay out the foundation of health informatics in the United States and have been successfully used in environmental health research.

Identifying and Predicting the Potential Toxic Effects of Chemicals

In 2007, NRC convened a panel of experts to create a vision and strategy for toxicity testing that would capitalize on the -omics concepts described in Appendix C and on other new tools and technologies for the 21st century (NRC 2007a). Conceptually, that vision is not very different from the now classic four-step approach to risk assessment (hazard identification, exposure assessment, dose-response assessment, and risk characterization) that was laid out in the NRC report Risk Assessment in the Federal Government: Managing the Process (commonly referred to as the Red Book) (NRC 1983) and that has been widely adopted by EPA as its chemical risk assessment paradigm (EPA 1984, 2000). However, the vision looks to new tools and technologies that would largely replace in vivo animal testing through extensive use of high-throughput in vitro technologies that use human-derived cells and tissues coupled with computational approaches that allow characterization of systems-based pathways that precede toxic responses. The computational approach to predictive toxicology has many advantages over the current time-consuming, expensive, and somewhat unreliable paradigm of relying on high-dose in vivo animal testing to predict human responses to low-dose exposures.

Although there is generally widespread agreement that the new panomics tools (that is, genomics, proteomics, metabolomics, bioinformatics, and related fields of the molecular sciences), coupled with sophisticated bioinformatics approaches to data management and analyses, will transform the understanding of how toxic chemicals produce their adverse effects, much remains to be learned about the applicability and relevance of in vitro toxicology results to actual human exposures at low doses. With the fundamental mechanistic knowledge, it should be easier to distinguish responses that are relevant to humans from responses that may be species-specific or to identify responses that occur at high doses but not low doses or vice versa. That knowledge would contribute to a reduction in the frequency of false-positive and false-negative results that sometimes plague high-dose in vivo animal testing.

A key issue in the use of such technologies is phenotypic anchoring,1 which is an important step in the validation of an assay. It is essential to validate treatment-related changes observed in an in vitro –omics experiment as causally associated with adverse outcomes seen in the individual. A single exposure to one dose of one chemical can result in a plethora of molecular responses and hundreds of thousands of data points that reflect the organism’s response to that exposure. Quantitative changes in gene expression (transcriptomics), protein content (proteomics), later enzymatic activity, and concentrations of metabolic substrates, products, cofactors, and other small molecules (metabolomics) can all be measured. The key question is which of those signals, if any, are quantitatively predictive of the ultimate adverse response of interest. Changes in the profiles are dynamic, tissue-specific, and dose-dependent, so the results may be drastically different depending on the tissue that was examined, the time when the sample was taken, and the dose or concentration that was used. Sophisticated bioinformatic analyses will be required to make biologic sense out of such massive amounts of data. Tremendous advances have been made in this field in the last 5 years, and it is now possible to coalesce such information into pathway analyses that may have utility in toxicity assessment. Indeed, EPA’s ToxCast program has begun to examine approaches discussed above to predictive in vitro toxicity assessment (Judson et al. 2011).

1 The concept of phenotypic anchoring arose from studies that examined the effects of chemical exposures on gene expression in tissues (transcriptomics). In that context, the term is defined as “the relation[ship between] specific alterations in gene expression profiles [and] specific adverse effects of environmental stresses defined by conventional parameters of toxicity such as clinical chemistry and histopathology” (Paules 2003).

Example of Using Emerging Science to Address Regulatory Issues and Support Decision-Making: ToxCast Program

In 2006, EPA began a new computational toxicology program aimed at developing new approaches to assess and predict toxicity in vitro (Judson et al. 2011). Agency scientists in the computational toxicology program have been substantial contributors to the development of new approaches to toxicity testing. They have collectively published over 130 peer-reviewed articles since the program's inception, including 38 publications from ToxCast (EPA 2012a). The use of an array of high-throughput in vitro tests, focused on different putative toxicity endpoints and pathways, to predict in vivo outcomes is attractive from both a cost-savings and time-savings perspective, but it entails many challenges, including the following:

• Chemical metabolism and disposition may differ between the in vitro and in vivo situations. A principal tenet of toxicology is that the concentration of a toxicant at a specific target site is a key determinant of toxicity. If a metabolite of a toxicant, not the parent molecule, is responsible for toxicity, the in vitro systems must be able to form that metabolite, and other metabolites that might modify the response (for example, alternate detoxification pathways), in a ratio similar to what occurs in vivo. If an in vitro system fails to form the toxicant or if it forms one that does not occur in vivo, the test system will generate a false-negative or false-positive response. The large amounts of data that can be generated from -omics experiments may be useful in identifying putative pathways of toxicity, but the relevance of the pathways to human exposures depends on a reasonably accurate simulation of the metabolic disposition of the substance that would occur in vivo.

• The time course of effects observed in vitro may be very different from what occurs in vivo. Many chemical treatments of cells result in immediate changes in gene expression, and the nature and magnitude of the changes are highly dynamic. Initial responses may be largely adaptive in nature and not necessarily reflective of an ultimate toxic effect. Adaptive responses can indicate the potential for future toxicity, but many intervening biologic processes may abrogate downstream responses, so the fact that a particular pathway is activated by a chemical in vitro does not necessarily mean that the same will occur in vivo. It will be important for high-throughput screening approaches to consider multiple time points for analysis.

• Dose-response assessment determined in vitro may be difficult to correlate with in vivo responses and administered doses. Relating dose rate (in milligrams per kilogram per day) in vivo at specific tissues to cell-culture concentrations tested in vitro is extremely difficult and requires detailed knowledge of the absorption, distribution, metabolism, and excretion of a xenobiotic after in vivo exposure. It also requires knowledge about binding to plasma and intracellular proteins, lipid partitioning, tissue-specific activation, and detoxification for interpretation of the relevance of an in vitro cell concentration to a target-tissue concentration after in vivo administration. Thus, physiologically based pharmacokinetic modeling, which will require some in vivo data, will continue to be an important part of hazard evaluation and risk assessment for chemicals that are identified as being potentially of concern on the basis of in vitro screening assays. Although in vitro toxicity assessment continues to improve and will certainly decrease the number of animals required for in vivo testing, it is unlikely that in vitro tests will fully replace the need for in vivo animal testing for understanding the pharmacokinetics and pharmacodynamics of toxic substances because of the complex interplay between tissues and organs that are ultimately critical determinants of a toxic response.

The importance of those concepts was recently illustrated in some modeling studies of EPA ToxCast data. In the first phase of the ToxCast program, EPA scientists used hundreds of in vitro assays to screen a library of agricultural and industrial chemicals to identify cellular pathways and processes that were modified by specific chemicals; they intended to use the data to set priorities among chemicals for further testing (Judson et al. 2010). However, the potency of a chemical in an in vitro assay may or may not reflect its biologic potency in vivo because of differences in bioavailability, clearance, and exposure (Blaauboer 2010). Scientists at the Hamner Institute, in collaboration with EPA scientists, recently developed pharmacokinetic and pharmacodynamic models that incorporate human dosimetry and exposure data with the ToxCast high-throughput in vitro screening data (Rotroff et al. 2010; Wetmore et al. 2012). Their results demonstrated that incorporation of dosimetry and exposure information is critically important for improving priority-setting for further testing and for evaluating the potential human health effects at relevant exposures.
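The role of dosimetry in priority-setting can be illustrated with a minimal sketch of "reverse dosimetry": an in vitro potency is converted to the daily oral dose expected to produce the same steady-state plasma concentration and is then compared with estimated exposure. The sketch assumes linear kinetics, and every name and number in it (`Chemical`, `css_uM_per_unit_dose`, the two example chemicals) is hypothetical; it is not the model used in the cited studies.

```python
from dataclasses import dataclass


@dataclass
class Chemical:
    name: str
    ac50_uM: float               # in vitro potency (hypothetical value)
    css_uM_per_unit_dose: float  # steady-state plasma conc. at 1 mg/kg/day (hypothetical)
    exposure_mg_kg_day: float    # estimated human exposure (hypothetical)


def oral_equivalent_dose(chem: Chemical) -> float:
    """Daily dose (mg/kg/day) predicted to yield a steady-state plasma
    concentration equal to the in vitro AC50, assuming linear kinetics."""
    return chem.ac50_uM / chem.css_uM_per_unit_dose


def margin(chem: Chemical) -> float:
    """Ratio of oral equivalent dose to estimated exposure.
    A smaller margin suggests a higher priority for further testing."""
    return oral_equivalent_dose(chem) / chem.exposure_mg_kg_day


def rank_by_margin(chems):
    """Order chemicals from smallest margin (highest priority) to largest."""
    return sorted(chems, key=margin)


# Chemical A is 100 times more potent in vitro, but it is cleared quickly
# and exposure is low; chemical B is less potent but persists and is more
# widely encountered.
a = Chemical("A", ac50_uM=0.1, css_uM_per_unit_dose=0.05, exposure_mg_kg_day=0.001)
b = Chemical("B", ac50_uM=10.0, css_uM_per_unit_dose=5.0, exposure_mg_kg_day=0.1)
ranking = [c.name for c in rank_by_margin([a, b])]
```

In this invented example both chemicals have the same oral equivalent dose, so the ranking is driven entirely by exposure, and the chemical that looks less potent in vitro receives the higher priority.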

EPA scientists have played a leading role in the new approaches, and it will be important for them to continue to lead the way in both computational and systems toxicology in the future. With further improvements, such as inclusion of human dosimetric and exposure data, high-throughput in vitro assays for screening of new chemical entities for potentially hazardous properties will probably become widely used for toxicity testing. Although the new technology-driven approaches to in vitro toxicity testing and high-throughput screening constitute an important advance in hazard evaluation of new chemicals, they are not yet ready to replace traditional approaches to hazard evaluation because of inherent limitations of extrapolation from in vitro to in vivo findings, as discussed above. But they will be very useful in setting priorities among new chemicals for more thorough toxicity testing. Additionally, the new technologies will greatly augment traditional approaches to in vivo toxicity evaluation by providing mechanistic insights and more detailed characterizations of biologic responses at doses well below those shown to produce toxicity. That will be especially important in evaluating endocrine-active chemicals and chemically induced alterations that may occur during early life.

McHale et al. (2010) have discussed the importance of new -omics technologies and of a systems-thinking approach to human health risk assessment of chemical exposures, or systems toxicology. EPA has already begun to examine such approaches to predictive in vitro toxicity assessment through the ToxCast program (EPA 2008a). It is evident that new approaches to data management and analysis will be critical for the success of computational approaches to predictive toxicology. The statistical and modeling challenges are immense in addressing the large volumes of data that will come from systems-toxicology experiments, which are an essential element of EPA’s computational-toxicology effort. It will be critical for the success of this and other efforts that involve large amounts of data for EPA to have access to the best available tools and technologies in informatics.

Example of Using Emerging Science to Address Regulatory Issues and Support Decision-Making: Predicting the Hazards of a New Material

Nanotechnology is an emerging technology that poses new challenges for EPA. Deemed the next industrial revolution, nanotechnology is predicted to advance technology in nearly every economic sector and be a major contributor to the nation’s economy. The rationale for that prediction is that nanoparticles, with dimensions of 1–100 nm, have properties that are useful in a wide variety of applications, including electronic, photovoltaic, structural, catalytic, diagnostic, and therapeutic.

A potential concern is that some of the properties of nanoparticles might pose risks to human health or the environment. The challenge for EPA is to use or develop the science and tools needed to assess and manage the widespread use of nanoscale materials that have unknown hazards. That includes assessing potential risks associated with an emerging technology and, if necessary, monitoring potential exposures and hazards. Using nanotechnology as an example, the committee identified several questions that can be used to better understand the risks associated with new science and tools. Many of these issues regarding the environmental, health, and safety aspects of nanotechnology are addressed in a 2012 NRC report, A Research Strategy for the Environmental, Health, and Safety Aspects of Nanotechnology (NRC 2012).

First, do nanoparticles present different properties and inherent risks from smaller molecules or larger particles? To answer this question, it will be important for EPA to adapt and develop new science and tools that strengthen the correlation between the structure and identity of a nanomaterial and the hazard posed by it. That means that new analytic tools or approaches that permit reliable and rapid assessment of engineered-nanomaterial structure and purity are needed. Rapid tests to screen for hazards and set priorities among materials for further testing are essential to keep pace with the development of new materials and to make efficient use of resources available to test materials. To model and predict the properties of the new materials, it will be necessary to develop precisely defined reference materials to ensure that inputs to predictive models and informatics efforts are robust and reliable. The measurement tools, rapid screening approaches, defined reference materials, and modeling and informatics approaches, advanced in an integrated fashion, can determine more rapidly what, if any, unique hazards are associated with this emerging technology.

Second, what are the likely routes and venues of exposure to engineered nanomaterials? Consumer-use patterns, production methods, and life-cycle effects of emerging technologies are unknown. To identify likely ways in which exposure can occur, it will be important for EPA to use physical science, engineering, and social science tools in a multidisciplinary approach that seeks to understand the life cycle of the materials, the supply chains that incorporate them, the projections for market growth, and consumer behaviors in using nanomaterial-containing products. By identifying the intersection between the most likely exposures and unique hazards, EPA can focus on further characterizing the potential risk and using science to inform policies needed to monitor and manage the risk.

Third, how can nanomaterials be detected, tracked, and monitored in complex biologic and environmental media? To complement the science to assess unique hazards and realistic exposures described earlier in this section, EPA will require tools to monitor the distribution of and potential exposures to nanomaterials. The characterization of pristine nanomaterials has been a challenge given the lack of specialized tools for detecting and measuring them. Once distributed, nanomaterials pose even greater challenges to detection, tracking, and monitoring than small molecules or micron-scale particles. This is because nanomaterials tend to have distributions of sizes and surface coatings, their high surface area leads to agglomeration or deposition, their surface chemistry has been shown to be dynamic, and their speciation can be complex. EPA and its collaborators and contractors will need to invent, develop, or refine tools to detect, track, and monitor nanomaterials. In some cases, the solution may be to integrate the use of existing tools. In others, new tools will be required. In addition to direct detection of the materials, strategies that exploit the use of biomarkers as described earlier in this chapter may prove essential for understanding exposures.

The three questions posed in this section may be similarly applied to any emerging material to identify concerns surrounding new hazards, exposure routes, and material tracking. The case of nanotechnology is an example of how EPA will need to approach many emerging tools, technologies, and challenges in general in the future. In order to have the capacity to address those tools, technologies, and challenges, it will need to have enough internal expertise to identify and collaborate with the expertise of all of its stakeholders in order to ask the right questions; determine what existing tools and strategies can be applied to answer those questions; determine the needs for new tools and strategies; develop, apply, and refine the new tools and strategies; and use the science to make recommendations based on hazards, exposures, and monitoring.

TOOLS AND TECHNOLOGIES TO ADDRESS CHALLENGES RELATED TO AIR POLLUTION AND CLIMATE CHANGE

As discussed in Chapter 2, EPA’s first goal in its 2011–2015 strategic plan is “taking action on climate change and improving air quality” (EPA 2010). Improved modeling capabilities are integral to attaining that goal inasmuch as models are needed to test the understanding of sources, environmental processes, fate, and effects of airborne contaminants and to investigate the effects of potential mitigation measures. Examples of the many areas in which new technologies will impact air quality and climate change are discussed in the following sections on air-pollution modeling; carbon-cycle modeling, greenhouse-gas emissions, and sinks; and air-quality monitoring.

Air-Pollution Modeling

EPA has a strong history of leadership in air-quality modeling. Its Community Multi-scale Air Quality (CMAQ) model is used both domestically and internationally as a premier platform for “one atmosphere” modeling of the chemistry and transport of ground-level ozone, particulate matter, reactive nitrogen, mercury, and dozens of other materials. In recent years, EPA researchers have worked with other government and university scientists to develop capabilities to run the CMAQ model in a real-time forecast mode (Eder et al. 2009); to couple the CMAQ model to an advanced meteorologic model, the Weather Research and Forecasting system (Appel et al. 2010); and to build advanced sensitivity analysis and inverse modeling capabilities (Napelenok et al. 2008; Tian et al. 2010).

In coming years, investments in modeling efforts will advance the understanding of sources and environmental processes that contribute to particulate-matter loadings and health and environmental effects. Modeling efforts will also improve the understanding of interactions between climate change and air quality with a special focus on relatively short-lived greenhouse agents, such as ozone, black carbon, and other constituents of particulate matter. Improved modeling capabilities will enable EPA to evaluate actions that have dual benefits for reducing radiative-forcing agents (such as ozone and aerosols) and improving air quality, and they will also enable EPA to understand better how tropospheric particulate matter may have masked some global warming in the past. The committee has identified several efforts that will likely be important for EPA in the future. They include working with other federal and university scientists to improve the use of global climate model predictions to inform air-quality management and other climate-adaptation decisions; working toward a better understanding of the global mass balance of mercury and other biologically active metals, including the role of natural sources and re-emission, chemical and biologic processing, and interregional transport; improving its understanding of physical and chemical processes; leading the integration of models and observations (including satellite and other remote sensing techniques²) to help to estimate emissions of greenhouse agents and conventional air pollutants, especially from dispersed or fugitive sources; and expanding its efforts to integrate socioeconomic and biophysical systems models for integrated assessment, including examination of air and climate effects of changing agriculture, energy, information, land-use, and transportation systems.

Carbon-Cycle Modeling, Greenhouse-Gas Emissions, and Sinks

EPA is engaged in a variety of science, engineering, regulatory, and policy development activities related to greenhouse-gas emissions, the global carbon cycle, and impacts of resulting changes on human health. The agency is responsible for the national-level inventory of greenhouse gases in the context of the Framework Convention on Climate Change. Under the Clean Air Act, the agency has authority to regulate greenhouse gases, including carbon dioxide, methane, nitrous oxide, and hydrofluorocarbons. Much attention is also focused on estimating ecosystem uptake and sequestration of carbon as a quantifiable (and monetizable) ecosystem service.

Fossil-fuel emissions can be estimated with relatively high precision, and the science of monitoring and modeling of their uptake by terrestrial and marine ecosystems is evolving rapidly. National-scale and continental-scale estimates of carbon fluxes are now produced through several approaches. In one approach, atmospheric-inversion models rely on regional measurements of atmospheric carbon dioxide coupled to surface ecosystem fluxes and atmospheric circulation


2 Remote sensing—the study of Earth processes and phenomena without direct physical contact—will be discussed several times throughout this chapter. It includes both passive sensors, which measure electromagnetic radiation that is emitted or reflected by the object or area being observed, and active sensors, such as synthetic-aperture radar or light detection and ranging systems, which emit energy and measure its return to infer properties of the scanned surfaces. Remote sensing complements expensive and slow data collection on the ground and provides local-to-global areal coverage of many key environmental processes.

(Gurney et al. 2002). In another approach, which is more direct, biomass inventories (for example, forest and cropland inventories) are used for estimating uptake by monitoring changes in biomass stocks. A third approach involves spatially explicit modeling of ecosystem processes on the basis of weather, soil, land use, and land cover (Schwalm et al. 2010). Each of those approaches has limitations and uncertainties, and derived estimates show only moderate agreement (Hayes et al. 2012). Hayes et al. (2012) demonstrate the value of the inventory approach, which relies on stock estimates obtained from EPA reports (for example, EPA 2011b), for subcontinental-scale estimates of carbon fluxes.
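The inventory approach reduces to simple stock-change arithmetic: the net flux over a period is the difference between two stock estimates divided by the elapsed time. The following minimal sketch assumes hypothetical stock values and a hypothetical sign convention in which positive values denote net uptake; it is not drawn from the cited reports.

```python
def annual_flux_from_stocks(stock_start, stock_end, years):
    """Mean net carbon flux per year from two inventory stock estimates.

    Units follow the inputs (for example, Tg C in gives Tg C per year out).
    Positive values denote net uptake (a sink); negative values, net release.
    """
    if years <= 0:
        raise ValueError("years must be positive")
    return (stock_end - stock_start) / years


def regional_flux(inventories, years):
    """Sum stock-change fluxes over land categories.

    inventories maps a category name to (stock_start, stock_end).
    """
    return sum(
        annual_flux_from_stocks(start, end, years)
        for start, end in inventories.values()
    )


# Hypothetical stocks (Tg C) from two inventories taken 5 years apart.
inventories = {
    "forest": (1000.0, 1010.0),   # gained 10 Tg C
    "cropland": (200.0, 198.0),   # lost 2 Tg C
}
net = regional_flux(inventories, years=5)  # about 1.6 Tg C per year of net uptake
```

Because the estimate is a small difference between two large stocks, modest errors in either inventory can dominate the result, which is one reason the approaches compared in the text agree only moderately.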

Integrated modeling of greenhouse-gas sources and sinks³ will continue to develop rapidly given continuing advances in remote sensing of ecosystem properties and understanding of the carbon cycle. To meet its regulatory mandate and to support policies that address climate change, EPA could benefit from increased science and engineering capacity in ecosystem ecology and Earth-system science.

Air-Quality Monitoring

Advances in atmospheric remote sensing have created a new paradigm for air-quality monitoring and prediction from regional to global scales (NRC 2007b). Research and applications have focused on fine particulate aerosols, tropospheric ozone, nitrogen dioxide, formaldehyde, sulfur dioxide, and carbon monoxide, but have also included other compounds, such as benzene, ethylbenzene, and 1,3-butadiene (NRC 2007b; Fishman et al. 2008; Hystad et al. 2011). Active sensors, such as satellite and aircraft-mounted light detection and ranging systems (LiDAR) (for example, the cloud-aerosol LiDAR with orthogonal polarization), can provide information on the vertical distribution of clouds and aerosols on the basis of the magnitude and spectral variation in backscatter of the vertical beam. However, most remote sensing of air quality has relied on passive sensors, for example, the measurements of pollution in the troposphere instrument, the moderate-resolution imaging spectroradiometer, and the multi-angle imaging spectroradiometer on the National Aeronautics and Space Administration’s (NASA) Terra platform, and the ozone-monitoring instrument and tropospheric emission spectrometer on NASA’s Aura platform (Martin 2008). Those sensors collect radiometric data on solar backscatter or thermal infrared emissions that are then used in retrieval algorithms that incorporate other geophysical information and radiative-transfer models. The reliability of results depends on the surface reflectivity or emissivity, clouds, the viewing geometry, and the retrieval wavelength (Martin 2008). Estimating ground-level concentrations, which are of greatest relevance to EPA, requires additional information on the vertical structure of the


3 The ocean is the largest sink, inasmuch as carbon dioxide is dissolved in seawater and is in equilibrium with the atmosphere (in freshwater bodies, it can change the water pH to some extent).

atmosphere, especially for ozone and carbon monoxide. Inverse modeling is required to infer pollutant source strength from observed concentration patterns.
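In its simplest linear form, inverse modeling treats observed concentrations y as the product of a sensitivity matrix K (the concentration at each receptor per unit emission from each source, typically derived from a transport model) and unknown source strengths x, and solves for x by least squares. The sketch below is illustrative only: the matrix, observations, and function names are hypothetical, and operational systems add prior estimates, error covariances, and regularization that are omitted here.

```python
def solve_linear(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        if abs(M[pivot][col]) < 1e-12:
            raise ValueError("system is singular or ill-conditioned")
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - tail) / M[i][i]
    return x


def estimate_sources(K, y):
    """Least-squares source strengths from the normal equations K^T K x = K^T y."""
    n_obs, n_src = len(K), len(K[0])
    KtK = [[sum(K[i][p] * K[i][q] for i in range(n_obs)) for q in range(n_src)]
           for p in range(n_src)]
    Kty = [sum(K[i][p] * y[i] for i in range(n_obs)) for p in range(n_src)]
    return solve_linear(KtK, Kty)


# Hypothetical sensitivities for three receptors and two sources.
K = [[1.0, 0.5],
     [0.2, 1.0],
     [0.6, 0.6]]
y = [12.0, 6.0, 8.4]              # synthetic observations from x = [10, 4]
sources = estimate_sources(K, y)  # recovers approximately [10.0, 4.0]
```

With real observations the system is noisy and often underdetermined, so the quality of the transport model behind K largely governs how well the sources can be recovered.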

Although they are not a substitute for ground-based air-quality measurements, satellite-derived data provide important spatial, temporal, and contextual information about the extent, duration, transport paths, and distances of pollution from a source, which is generally not possible with in situ ground-based measurements. For example, Morris et al. (2006) linked increases in surface ozone in Houston to wildfires in Alaska and western Canada, and Heald et al. (2006) traced an increase in springtime surface aerosols in the northwestern United States to anthropogenic sources in Asia. As retrieval algorithms and the spatial and spectral quality of satellite data have improved, remote sensing has provided a means of obtaining relatively consistent estimates of air-pollutant exposure over large areas for health-effects assessments (van Donkelaar et al. 2010; Hystad et al. 2011), which has facilitated large-scale epidemiologic investigations in settings where monitoring data are inadequate to determine spatial contrasts (Crouse et al. 2012). Another important trend is the assimilation of concurrent data from multiple sensors with ground data; that has proved especially useful in improving estimates of ground-level ozone (Fishman et al. 2008).

Example of Using Emerging Science to Address Regulatory Issues and Support Decision-Making: Remote Sensing to Monitor Landfill Gas Emissions

Great progress has been made in reducing or eliminating releases of toxic substances from concentrated sources (also known as point sources), but monitoring and mitigating emissions from so-called area sources has been technically difficult and remains one of the persistent challenges faced by EPA. Recent efforts to use emerging technology in monitoring provide a glimpse on a very broad scale of what might be possible with further advances. EPA’s National Risk Management Research Laboratory used a tunable diode laser to perform optical remote-sensing of fugitive methane, hazardous air pollutants (including mercury), volatile organic compounds, and nonmethane organic compounds emitted from three landfills. With multiple measurements of concentrations along different light paths, the system calculates a mass emission flux for the entire area. What had been thought to be an excessively expensive monitoring challenge is now financially and practically manageable (EPA 2012b).
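The flux calculation can be illustrated with a deliberately simplified sketch. It assumes that horizontal beams are stacked in a vertical plane downwind of the source, that each beam reports a path-integrated concentration representative of a horizontal slab of known height, and that the wind component normal to the plane is uniform. The function name, the geometry, and the numbers are hypothetical, and the sketch is not the algorithm used in the EPA study.

```python
def plane_mass_flux(beams, wind_normal_m_s):
    """Mass flux (g/s) through a vertical measurement plane.

    beams: iterable of (path_integrated_conc_g_m2, slab_height_m), one entry
           per horizontal beam; each beam is taken to represent a horizontal
           slab of the plane with the given height.
    wind_normal_m_s: wind speed component perpendicular to the plane.

    A path-integrated concentration (g/m^2) times slab height (m) gives mass
    per unit downwind length (g/m); multiplying by wind speed (m/s) gives g/s.
    """
    if wind_normal_m_s < 0:
        raise ValueError("wind component must be non-negative")
    return wind_normal_m_s * sum(pic * height for pic, height in beams)


# Hypothetical beams at three heights; concentrations fall off with height.
beams = [(0.5, 2.0), (0.2, 2.0), (0.05, 2.0)]
flux = plane_mass_flux(beams, wind_normal_m_s=3.0)  # about 4.5 g/s
```

The appeal of the optical approach is visible even in this toy version: a handful of beams integrates over the whole plume, whereas point samplers would need to be placed densely enough to avoid missing it.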

Example of Using Emerging Science to Address Regulatory Issues and Support Decision-Making: Multipollutant Analysis Standard-Setting

Regulation in the United States is predicated on single-pollutant standards or control strategies. Improved understanding of health effects of cumulative and mixed exposures calls for new approaches to standard-setting that consider a multipollutant approach. The shift will require understanding of the joint behavior of multiple stressors, the interactions among them, and their contributions to health outcomes.

The air-pollution health community has been examining the science-readiness of a multipollutant regulatory strategy (Dominici et al. 2010; Greenbaum and Shaikh 2010). The challenges, opportunities, and future research needs related to multipollutant approaches for the assessment of health risks associated with exposures to air pollution were evaluated in a public workshop held in 2011 (Johns et al. 2012). The workshop highlighted the need for a transdisciplinary research approach for developing more relevant tools and methods in the fields of exposure science, human and animal toxicology, and air pollution epidemiology. More important, it recommended collaboration among science, engineering, and policy communities to develop practical and implementable approaches that could ultimately inform decision-making (D. Johns, EPA, personal communication, May 9, 2012).

Related efforts to characterize toxicity of mixtures of chemicals in chemical risk assessment are under way. A key challenge is to define the universe of possible combinations of mixtures that are representative of real-world exposures. In a recent analysis, EPA researchers investigated methods from the field of community ecology originally developed to study avian species co-occurrence patterns and adapted them to examine chemical co-occurrence (Tornero-Velez et al. 2012). Their findings showed that chemical co-occurrence was not random but was highly structured and usually resulted in specific predictable combinations. Novel application of tools and approaches from a variety of research disciplines can be used to address the complexity of mixtures, advance the scientific community’s understanding of exposures to the mixtures, and promote the design of relevant experiments and models to assess associated health risks.
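One widely used co-occurrence metric from community ecology is the checkerboard score (C-score), which averages over all pairs of species the number of "checkerboard units" in which one is present and the other absent. The sketch below applies it to chemicals detected in samples. Whether the cited study used this particular metric is not stated here, so its use is an assumption, and the detection data are invented.

```python
from itertools import combinations


def c_score(presence):
    """Checkerboard score for a presence/absence data set.

    presence maps each chemical to the set of sample IDs in which it was
    detected. For a pair (a, b) with r_a and r_b detections and s shared
    samples, the number of checkerboard units is (r_a - s) * (r_b - s).
    The C-score is the mean over all pairs; higher values indicate more
    segregation (chemicals that tend not to occur together).
    """
    pairs = list(combinations(presence, 2))
    if not pairs:
        raise ValueError("need at least two chemicals")
    total = 0
    for a, b in pairs:
        shared = len(presence[a] & presence[b])
        total += (len(presence[a]) - shared) * (len(presence[b]) - shared)
    return total / len(pairs)


# Hypothetical detections: A and B always co-occur; C never occurs with them.
detections = {
    "A": {1, 2},
    "B": {1, 2},
    "C": {3, 4},
}
score = c_score(detections)  # (0 + 4 + 4) / 3
```

In practice the observed score is compared with scores from randomized matrices that preserve detection frequencies (a null model); a score that falls outside the null distribution indicates structured, nonrandom co-occurrence.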

TOOLS AND TECHNOLOGIES TO ADDRESS CHALLENGES RELATED TO WATER QUALITY

As discussed in Chapter 2, there are several important drivers of water quality and water-quality policies for which new technologies and approaches can be instrumental in enhancing data-driven regulations. For the purposes of this chapter, examples of the many areas in which new technologies will impact water quality are divided into the following areas: remote sensing technologies for water-quality monitoring; water modeling; and detecting microorganisms and microbial products in the environment.

Water-Quality Monitoring

Multispectral imagery has been successfully applied to water-quality monitoring for several decades, notably for monitoring surface temperature and concentrations of suspended sediments and algae (see reviews by Mertes 2002; Matthews 2011). Modern multispectral sensors, such as the moderate-resolution imaging spectroradiometer and the European medium-resolution imaging spectrometer sensor, which have moderate (about 250 m) spatial resolution, 10–15 spectral bands, and high sampling frequency, have accelerated progress in remote sensing of suspended sediments, dissolved organic matter, chlorophyll, phycocyanin, and other water-quality indicators that are extensive enough to suit sensor resolution (Bierman et al. 2011). Satellite-based assessments of water quality will probably be increasingly routine, especially with better integration and assimilation of in situ data and multiscale sensor data via empiric and physically based models (Matthews 2011). As mentioned in the section “Air-Quality Monitoring” above, the new tools and technologies are not a substitute for ground-based water-quality measurements, but they provide important spatial and contextual information about the extent, duration, transport paths, and distances of pollution from a source and should be used to enhance the current water-monitoring infrastructure and related exposure assessment efforts.

Water Modeling

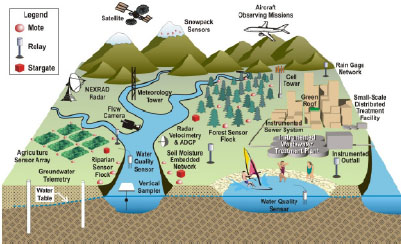

Real-time reporting of water-quality data would complement EPA research programs. Data could be downloaded to a community Website so that other researchers and the general public could understand water-quality and quantity (storm-flow) information better. That type of network would eventually allow analysis of infiltration or inflow problems, including policy options (such as disconnecting storm drains from the sanitary sewer) and the likely effectiveness of infrastructure investment in light of climate change (such as more intense storm events). Figure 3-3 illustrates how a sensor network might be set up.

Spatially detailed high-frequency sensing of water resources that uses an embedded network can provide breakthroughs in water science and engineering by promoting understanding of nonlinearities (the knowledge base to discern mechanisms and basic kinetics of nonlinear water processes) (Ostby 1999; Coppus and Imeson 2002; Nowak et al. 2006); scalability (the ability to scale up complex processes from observations at a point to the catchment basin) (Ridolfi et al. 2003; Sivapalan et al. 2003; Long and Plummer 2004); prediction and forecasting (the capacity to predict events, to model and anticipate outcomes of management actions, and to provide warnings or operational control of adverse water-quantity and water-quality trends or events) (Christensen et al. 2002; Scavia et al. 2003; ASCE 2004; Vandenberghe et al. 2005; Shukla et al. 2006; Hall et al. 2007); and discovery science (the discovery of heretofore unknown and unreported processes) (Jeong et al. 2006; Messner et al. 2006; Loperfido et al. 2009; 2010a,b).

Detecting Microorganisms and Microbial Products in the Environment

Development of detection methods for microbial contamination in water, soil, and air is a critical part of environmental protection. EPA is one of the few federal agencies that oversee a substantial research portfolio that includes new analytic techniques for environmental assessment. Although more modern biochemical methods are available, coliform bacteria and enterococci continue to be used as indicators for the assessment of safe drinking and recreational waters (EPA 1986, 2002, 2005), and cultivation methods for viability remain the gold standard (Messer and Dufour 1998). In recognition of the inadequacy of the bacterial indicator system over the years, research methods have been developed and improved for measuring enteric viruses (Fong and Lipp 2005; Yates et al. 2006; Pepper et al. 2010) and protozoa (Sauch et al. 1985; Rose 1988; Aboytes et al. 2004) and for expanding the understanding of risk (Slifko et al. 1997, 1999; Aboytes et al. 2004). National surveys of groundwater and surface water have directly influenced important rule-making, including the Surface Water Treatment Rule, the Information Collection Rule, the Long Term Enhanced Surface Water Treatment Rule, the Disinfectants and Disinfection Byproducts Rule, the Ground Water Rule, and final rules for the use or disposal of sewage sludge.

Assessment and control of waterborne diseases still rely on the ability to sample and quantify fecal indicator organisms and pathogens as part of the evaluation of water quality. The most recent advances in the detection of microorganisms in water include quantitative polymerase chain reaction (PCR) methods, which can be designed for any microorganism of interest because they are highly specific and quantitative. The PCR methods can produce information relatively fast and, under the Clean Water Act and the Beaches Environmental Assessment and Coastal Health Act, their adoption has moved quickly toward meeting total maximum daily load requirements and beach safety (see the example below on “Beach Safety”).
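Quantification by quantitative PCR conventionally relies on a standard curve: the threshold cycle (Ct) measured for standards of known concentration is regressed on the base-10 logarithm of copy number, and the fitted line is inverted to estimate copies in an unknown sample. The sketch below shows that arithmetic with invented standards; the function names are hypothetical, and practical details such as replicates, inhibition controls, and detection limits are omitted.

```python
import math


def fit_standard_curve(standards):
    """Ordinary least-squares fit of Ct = slope * log10(copies) + intercept.

    standards: list of (copies, ct) pairs from a dilution series.
    Returns (slope, intercept).
    """
    xs = [math.log10(copies) for copies, _ in standards]
    ys = [ct for _, ct in standards]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def amplification_efficiency(slope):
    """Efficiency implied by the slope; 1.0 means perfect doubling per cycle."""
    return 10 ** (-1.0 / slope) - 1.0


def copies_from_ct(ct, slope, intercept):
    """Invert the standard curve to estimate copy number in an unknown."""
    return 10 ** ((ct - intercept) / slope)


# Hypothetical tenfold dilution series (copies per reaction, Ct).
standards = [(1e2, 33.36), (1e3, 30.04), (1e4, 26.72), (1e5, 23.40)]
slope, intercept = fit_standard_curve(standards)
efficiency = amplification_efficiency(slope)          # close to 1.0
unknown = copies_from_ct(28.38, slope, intercept)     # between 1e3 and 1e4 copies
```

A slope near -3.32 corresponds to near-perfect doubling each cycle; slopes that depart substantially from that value usually point to inhibition or poor assay design and undermine the quantitative claim.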

FIGURE 3-3 Schematic of an instrumented watershed in an observatory of the national network. Real-time sensors for meteorology, rainfall, stream velocity, suspended sediment, water quality, soil moisture, groundwater, and snowpack are shown with wireless communications equipment necessary for transmitting the data. Source: WATERS Network 2009.

New approaches to next-generation DNA-sequencing technologies offer the promise of characterizing healthy water by ensuring the absence of harmful biotic organisms, even rare ones (see Appendix C for background information on genomics tools and technologies). Just as the human microbiome studies are examining the diversity and ecology of microorganisms in the intestinal tract, DNA-sequencing methods are being used to explore the water microbiome in polluted, pristine, and unique environments, although finding rare microbial populations that will exhibit genetic characteristics with the potential for harm to humans is difficult. Metagenomics of the wastewater system, and in particular the viral genome, provides insight into the complex world of water microbiotas but is currently used only for exploration. Current efforts are devoted to developing methods and generating large amounts of data (Table 3-2); the methods are able to identify which microorganisms (including potentially pathogenic organisms) are present, but their viability and functional activity are often not known. Finally, genomic data have not been used much to inform microbial risk assessment. In the next decade, environmental microbiome studies and data will need to move toward sophisticated data interpretation and modeling, and substantial investment in bioinformatics will be necessary. With the growing understanding of the ecosystem microbiome and its interaction with human health and the environment, it is becoming evident that the microbiome plays an important role in modulating health risks posed by broader environmental exposures. Understanding such interactions will have important implications for understanding individual and population susceptibility and the observed variability in risks posed by environmental exposures.

Other recent advances that are facilitating the use of molecular tools include new techniques for increasing sample concentration—such as ultrafiltration, continuous filtration, and new types of filters—for improved recovery and automated extraction of nucleic acids with less contamination, less inhibition, and more rapid throughput (Hill et al. 2005; Srinivasan et al. 2011). New quantitative PCR approaches for monitoring the viability of pathogens of concern are of particular interest, and several approaches show some promise. Such dyes as ethidium monoazide and propidium monoazide have been used to distinguish between live cells and heat-killed cells, but the dyes are not able to penetrate apparently killed cells when applied to disinfected treated sewage samples, so the signals that are produced through quantitative PCR methods are comparable with counts made before and after disinfection with or without use of the dyes (Varma et al. 2009; Srinivasan et al. 2011). More work is needed to address the possible presence of viable but nonculturable cells in disinfected effluents. An approach to examining viability associated with bacteria is to use quantitative PCR methods to target the precursors of ribosomal RNA (rRNA). That was done to quantify viable cells of Aeromonas and mycobacteria in water (Cangelosi et al. 2010) and showed promise for both saltwater and freshwater and for post-chlorination monitoring. Those types of methods will require verification in the monitoring of disinfected drinking water and wastewater. There may be a need

| Environment Sampled | Target and Approach | Findings | Reference |
| --- | --- | --- | --- |
| Wastewater biosolids | Bacterial 16S rRNA genes; PCR, pyrosequencing (454 GS-FLX sequencer) | Most of the pathogenic sequences belonged to the genera Clostridium and Mycobacterium | Bibby et al. 2010 |
| Wastewater (activated sludge, influent, and effluent) | Bacterial 16S rRNA gene (hypervariable V4 region); PCR, pyrosequencing (454 GS-FLX sequencer) | Most of the pathogenic sequences belonged to the genera Aeromonas and Clostridium | Ye and Zhang 2011 |
| River sediment | Bacterial antibiotic-resistance genes; MDA, pyrosequencing (454 GS-FLX sequencer) | Large amounts of several classes of resistance genes were identified in bacterial communities exposed to antibiotic | Kristiansson et al. 2011 |
| Reclaimed and potable water | Viral DNA and RNA; tangential flow filtration, DNase treatment, MDA, pyrosequencing (454 GS-FLX and GS20 sequencer) | Over 50% of the viral sequences had no significant similarity to proteins in GenBank; bacteriophages dominated the DNA viral community; the RNA metagenomes contained sequences related to plant viruses and invertebrate picornaviruses | Rosario et al. 2009 |
| Wastewater biosolids | Viral DNA and RNA; DNase and RNase treatment, reverse transcription for RNA, pyrosequencing (454 GS-FLX sequencer); an optimal annotation approach specific for viral pathogen identification is described | Parechovirus, coronavirus, adenovirus, Aichi virus, and herpesvirus were identified | Bibby et al. 2011 |
| Lake water | Viral RNA; tangential flow filtration, DNase and RNase treatment, random amplification (Klenow DNA polymerase), pyrosequencing (454 GS-FLX sequencer) | 66% of the sequences had no significant similarity to known sequences; viral sequences (30 viral families) with significant homology to insect, human, and plant pathogens were present | Djikeng et al. 2009 |

Abbreviations: DNase, deoxyribonuclease; MDA, multiple displacement amplification; PCR, polymerase chain reaction; RNase, ribonuclease; rRNA, ribosomal RNA.

Source: Aw and Rose 2012. Reprinted with permission; copyright 2012, Current Opinion in Biotechnology.

for a method that combines some type of cultivation with quantitative PCR techniques in real time to address viability. The use of molecular tools that can be used to inform decisions for water treatment and public-health protection will still require substantial investment in sample concentration, hazard characterization, quantification, and assessment of viability.
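The quantification step mentioned above can be illustrated with a standard curve, the usual way of converting a quantitative PCR signal into a target concentration. The following is a minimal sketch, not a validated protocol: the dilution series, the quantification-cycle (Cq) values, and all function names are hypothetical and chosen only for illustration. It assumes a linear relationship between Cq and the base-10 logarithm of the starting copy number.

```python
import math


def fit_standard_curve(copies, cq_values):
    """Least-squares fit of Cq = slope * log10(copies) + intercept."""
    xs = [math.log10(c) for c in copies]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(cq_values) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, cq_values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def amplification_efficiency(slope):
    """Efficiency implied by the slope; 1.0 means the target doubles each cycle."""
    return 10 ** (-1.0 / slope) - 1.0


def copies_from_cq(cq, slope, intercept):
    """Invert the standard curve to estimate starting copies for a sample."""
    return 10 ** ((cq - intercept) / slope)


# Hypothetical tenfold dilution series (copies per reaction) and Cq values.
STANDARD_COPIES = [1e1, 1e2, 1e3, 1e4, 1e5]
STANDARD_CQ = [35.00, 31.68, 28.36, 25.04, 21.72]

if __name__ == "__main__":
    slope, intercept = fit_standard_curve(STANDARD_COPIES, STANDARD_CQ)
    print("slope:", round(slope, 3), "intercept:", round(intercept, 3))
    print("efficiency:", round(amplification_efficiency(slope), 3))
    print("copies at Cq 30:", round(copies_from_cq(30.0, slope, intercept), 1))
```

An estimate obtained this way counts target sequences only. It does not indicate whether the organisms that carried them were viable, which is the limitation that the dye-based and precursor-rRNA approaches described above attempt to address.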

Example of Using Emerging Science to Address Regulatory Issues and Support Decision-Making: Beach Safety

Shorelines provide benefits to society as a whole and in particular are directly associated with tourism, which remains one of the largest economic sectors around the world. According to the Natural Resources Defense Council (NRDC 2011), beaches in the United States were placed under advisories or closed 24,091 times in 2010, the second-highest number of advisories and closures in the 21 years since NRDC began reporting. It was suggested that aging and poorly designed sewage-treatment systems and contaminated stormwater were the main causes of pollution that led to fecal-indicator concentrations that exceeded state health and safety standards. There were also more than 9,000 days of Gulf Coast beach notices, advisories, and closures due to the Deepwater Horizon oil-spill disaster in 2010 (NRDC 2011).

As part of an overhaul of the Clean Water Act, the Beaches Environmental Assessment and Coastal Health Act mandated that research be undertaken to understand coastal pollution, address polluted sediments, decrease response time, and improve protection of public health (EPA 2006a); most of the research programs under this act have yet to be realized, and progress in improving public-health protection has been slow. EPA has begun to update water-quality standards, address health studies and swimmer surveys, and advance the use of new genomic technology for the rapid testing of water quality. The development of the first standardized quantitative PCR method for enterococci is being promoted for recreational-water assessment (Wade et al. 2006). Evaluations based on new quantitative PCR methods for indicators in ambient and recreational waters are being published (Byappanahalli et al. 2010; Noble et al. 2010), but there are challenges to using these methods for regulatory purposes because interpretation of the signals may underestimate or overestimate human health risks and could lead to beach closures that cause unnecessary economic losses (Srinivasan et al. 2011). Continued investment in new methods, applications for surveys, and links to health effects and management strategies is necessary.

Wastewater and stormwater are key culprits in water pollution, and further improvement of water safety cannot occur unless point and nonpoint sources of pollution are elucidated. Research on microbial source tracking has advanced the use of molecular tools for investigating the presence of pathogens in impaired waters and for setting total maximum daily load requirements. EPA is taking a leadership role in microbial source-tracking research (EPA 2005). In addition, California has organized one of the largest blind studies, the Global Inter-Laboratory Fecal Source Identification Comparison Study, which involves the evaluation of 39 microbial source-tracking methods by 29 laboratories (Shanks 2011).

To maximize the benefits of clean water, protect the general public, sustain water resources, and restore impaired shorelines, decision-makers will need to rely increasingly on an understanding of the long-term and short-term changes in water quality and aquatic ecosystems. Advanced science and technology are poised to play an increasingly important role in providing forecasts of effects on appropriate temporal and spatial scales. Rapid advances toward safe and sustainable water resources could be made through the promotion of methodologic developments and applications in rapid and predictive monitoring; development of and investment in a safe-waters program that links genomic tools with watershed and beach-shed characterizations; continued microbial characterization of stormwater, combined sewer overflows, and wastewater; and development of and investment in innovative engineering designs to reduce pollution loads.

Example of Using Emerging Science to Address Regulatory Issues and Support Decision-Making: Quantitative Microbial Risk Assessment

Quantitative microbial risk assessment had its beginnings in the 1980s; it is associated with the first publication of dose-response models (Haas 1983) and is now an accepted process for addressing waterborne disease risks and management strategies (Haas et al. 1999; Medema et al. 2003). Although great strides have been made in using quantitative microbial risk assessment in EPA’s Office of Homeland Security (including leading an interagency working group and the exchange of information with CDC), EPA has yet to take a leadership role in developing the necessary databases for use in a national risk assessment of wastewater, stormwater, and recreational water.
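To make the idea of a dose-response model concrete, the sketch below implements two forms that are widely used in the quantitative microbial risk assessment literature: the exponential model and the approximate beta-Poisson model. It is an illustration only. The function names are hypothetical, and the parameter values in the usage section are placeholders rather than fitted values for any real pathogen.

```python
import math


def exponential_dose_response(dose, r):
    """Exponential model: P(infection) = 1 - exp(-r * dose).

    r is the probability that a single ingested organism survives
    and initiates infection.
    """
    return 1.0 - math.exp(-r * dose)


def beta_poisson_dose_response(dose, alpha, n50):
    """Approximate beta-Poisson model.

    P(infection) = 1 - [1 + dose * (2**(1/alpha) - 1) / n50] ** (-alpha),
    where n50 is the median infectious dose and alpha is a shape parameter.
    """
    return 1.0 - (1.0 + dose * (2.0 ** (1.0 / alpha) - 1.0) / n50) ** (-alpha)


def annual_risk(per_event_risk, events_per_year):
    """Risk of at least one infection over independent, identical exposures."""
    return 1.0 - (1.0 - per_event_risk) ** events_per_year


if __name__ == "__main__":
    # Placeholder parameters, NOT values for any specific organism.
    p_exp = exponential_dose_response(dose=10.0, r=0.005)
    p_bp = beta_poisson_dose_response(dose=10.0, alpha=0.3, n50=500.0)
    print("per-event risk (exponential):", p_exp)
    print("per-event risk (beta-Poisson):", p_bp)
    print("annual risk over 20 exposures:", annual_risk(p_exp, 20))
```

In practice the dose would come from an exposure assessment (pathogen concentration multiplied by the volume ingested), which is why the exposure and pathogen-survival databases discussed in this section matter for any national assessment.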

Linking biology, mathematics, health, the environment, and policy will require substantial interdisciplinary research focused on problem-solving and systems thinking. Quantitative microbial risk assessment has been seen as an important framework for pulling science and data together and can lead to innovative work in decision science. According to the Center for Advancing Microbial Risk Assessment, “ultimately, the goal in assessing risks is to develop and implement strategies that can monitor and control the risks (or safety) and allows one to respond to emerging diseases, outbreaks and emergencies that impact the safety of water, food, air, fomites, and in general our outdoor and indoor environments” (CAMRA 2012). The framework is being promoted by the World Health Organization (WHO 2004), and the international need for data, education, and mathematical tools to assist countries around the world with the implementation of quantitative microbial risk assessment strategies is paramount. More recently, Science and Decisions: Advancing Risk Assessment (NRC 2009) called for more integration with the risk-assessment and risk-management paradigm. This approach will provide a pathway to the integration of new tools and science for addressing EPA’s goals of safe and sustainable water.

If a quantitative microbial risk-assessment framework were put into practice by EPA, it would need to incorporate alternative indicators based on genomic approaches, microbial source-tracking, and pathogen-monitoring. Also, the complete human-coupled water cycle would need to be explored, including built and natural systems. Implementation of a quantitative microbial risk-assessment framework would require investment in a health-related water microbiology collaborative research network. The network would bring molecular biologists, ecologists, engineers, and water-quality health and policy experts together to build internal capacity, to develop external partnerships, and to foster national collaboration. Regardless of whether EPA decides to systematically use a quantitative microbial risk-assessment framework, the future of science at the agency would benefit from continuing to build exposure databases and support work on the survival and inactivation of pathogens that can feed into quantitative microbial risk assessment. Agency science would also benefit from new informatics and application tools that are based on quantitative microbial risk assessment models to enhance decision-making to meet safe-water goals.

An example of an area in which EPA may be able to collaborate to more effectively fill information gaps or address funding overlap in a resource-constrained environment is microbiology research. There are other organizations that have microbiology programs, but few address the environment. NIH’s Division of Microbiology and Infectious Diseases supports clinical research and basic science for microbes and infectious disease. NIH has recently partnered with the National Science Foundation and the US Department of Agriculture to fund research on the ecology and evolution of infectious disease. The partnership addresses diseases that have an environmental pathway and can include waterborne diseases, but most of the efforts have been related to cholera and little attention has been given to other groups of pathogens. EPA has not yet played a role in the partnership, but it could contribute to filling a gap in knowledge about wastewater treatment and monitoring as it relates to microbes and environmental and human health.

TOOLS AND TECHNOLOGIES TO ADDRESS CHALLENGES RELATED TO SHIFTING SPATIAL AND TEMPORAL SCALES

Chapter 2 noted that current environmental challenges are expanding in both space and time, and it emphasized that long-term data are needed to characterize such changes, their causes, and the potential implications of different policy options. To address the challenges of increasing spatial and temporal scales for a variety of environmental problems, new approaches, tools, and technologies in such areas as computer science, information technology (IT), and remote sensing will become increasingly important to EPA. The ability to take full advantage of all the new tools and technologies discussed in the preceding sections of this chapter will require EPA to have state-of-the-art IT and informatics resources that can be used to manage, analyze, and model diverse datasets obtained from the vast array of technologies.

Computer Science, Informatics, and Information Technology

The future needs for IT and informatics in support of science in EPA are subject to two principal influences: the future directions of EPA’s mission and its underlying science, and the future directions taken by the IT industry. Science in EPA will increasingly depend on its capability in IT and informatics. IT is concerned with the acquisition, processing, storage, and dissemination of information with a combination of computing and telecommunication (Longley and Shain 1985). The term informatics, as used here, refers to the application of IT in the generation, storage, retrieval, processing, integration, analysis, and interpretation of data obtained in different media and across geographic and disciplinary boundaries that are related to the environment and ecosystem, community and human activities, and human health (see He 2003). Informatics is also concerned with the computational, cognitive, and social aspects of IT. One way in which IT can be used for data acquisition is through public engagement. Taking advantage of expertise outside of EPA (from academia, industry, and other agencies) and considering the general public as a source of new information is a way in which knowledge and resources can be combined in a cost-effective manner. Examples include taking advantage of social media and crowdsourcing. Appendix D provides additional background information on various important and rapidly changing tools and technologies in the field of information technology and informatics.

Example of Using Emerging Science to Address Regulatory Issues and Support Decision-Making: Social Media