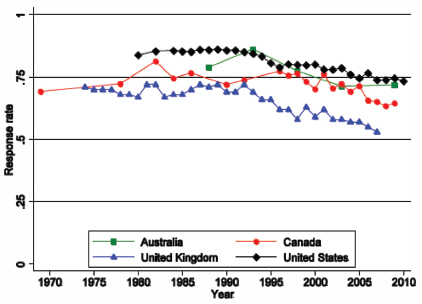

Today, all household surveys, including the Consumer Expenditure Surveys (CE), face well-known challenges. These challenges include maintaining adequate response from increasingly busy and reluctant respondents. In addition, more and more households are non-English speaking, and a growing number of higher-income households have controlled-access residences. Call screening and cell-phone-only households have made telephone contacts on surveys more difficult. Today’s household surveys face confidentiality and privacy concerns, a public growing more suspicious of its government, and competition from an increasing number of private as well as government surveys vying for the public’s attention (Groves, 2006; Groves and Couper, 1998).

In the midst of these challenges for household surveys, the CE surveys stand out as particularly long and arduous. In the Interview survey, recall of many types of expenditures is likely to be imperfect. A typical respondent lacks records or at least the motivation to use them in answering the CE questions. The level of detail that is required in describing each purchase is daunting. In the Diary survey, respondents are asked to record the details of many small purchases in a complicated booklet. These demands can easily result in limited compliance and the omission of expenditures.

Further exacerbating the problem, the CE faces the additional challenge that consumer spending has changed dramatically over the past 30 years, and it continues to change (Fox and Sethuraman, 2006; Kaufman, 2007; Sampson, 2008). When the CE was designed in the 1970s, there was no online shopping or options for electronic banking and bill paying. Over that time, shopping patterns have shifted from individual purchases at a variety of neighborhood stores to collective purchasing at “big box” stores such as Walmart, Target, and Costco that sell everything from meat to shirts, furniture, and motor oil under one roof. The CE surveys are cognitively designed to collect spending information based on the 1970s world of purchasing behaviors, and today’s consumers are unlikely to relate to that.

Underreporting of expenditures is a long-standing problem with the CE as evidenced by a growing deviation from other data sources and by the results of several studies. This underreporting appears to differ sharply across commodities, raising the possibility of differential biases in the Consumer Price Index (CPI) and the picture of the composition of household spending. This is the biggest concern with the CE program. The Panel on Redesigning the BLS Consumer Expenditure Surveys believes that there are a number of issues with the current design and implementation of the CE, and that collectively these problems lead to the underreporting of expenditures. This chapter documents this underreporting and then discusses the issues and concerns that the panel identified in its study of the CE.

With that said, the panel understands that no survey is perfect. In fact all surveys are compromises between the need for specific data, the quality with which those data can be collected, and the burden and costs required to do so. The CE is no exception. It is the panel’s expectation that by examining the issues with the current CE along with some alternative designs, a new and better balance can be found between data requirements, data quality, and burden.

EVIDENCE OF UNDERREPORTING IN THE CE

In many federal surveys, one can assess the quality of data by comparisons with other sources of information. One of the difficulties in evaluating the quality of CE data is that there is no “gold standard” with which to compare the estimates. However, several sources provide insight into data quality. The National Research Council, in its review of the conceptual and statistical issues with the CPI, expressed concern about potential bias in the expenditure estimates from the CE. That report recommended comparison of the CE estimates with those from the Bureau of Economic Analysis’s Personal Consumption Expenditures (PCE):

The panel’s foremost concern is with the extent of bias in the CEX [Consumer Expenditure Surveys] which, in turn affects the accuracy of CPI expenditure category budget shares. A starting point for evaluating household expenditure allocations estimated by the CEX is to compare them against budget shares generated by other sources. The Bureau of Economic Analysis (BEA) produces the most obvious alternative, the per-capita and product accounts (NIPA). (National Research Council, 2002, p. 253)

Comparisons Between the CE and PCE

Compatibility

A long literature has focused on the discrepancy between the CE and PCE data from the National Income and Product Accounts (NIPA) (Attanasio, Battistin, and Leicester, 2006; Branch, 1994; Garner, McClelland, and Passero, 2009; Garner et al., 2006; Gieseman, 1987; Meyer and Sullivan, 2011; Slesnick, 1992). However, in comparing the CE to the PCE data, it is important to recognize conceptual incompatibilities between these data sources. Slesnick (1992, p. 22), when comparing CE and PCE data from 1960 through 1989, concluded that “approximately one-half of the difference between aggregate expenditures reported in the CEX [CE] surveys and the NIPA can be accounted for through definitional differences.” Similarly, the General Accounting Office (1996, p. 15), now the U.S. Government Accountability Office, in a summary of a Bureau of Economic Analysis comparison of the differences in 1992, reported that “more than half was traceable to definitional differences.”

Thus, a key conceptual difference between the CE and PCE is “what is measured.” The CE measures out-of-pocket spending by households, while the PCE definition is wider, including purchases made on behalf of households by institutions. The CE is not intended to capture purchases by households abroad such as those on military bases, whereas the PCE includes these purchases. These differences are important and growing over time. Imputations including those for owner-occupied housing and financial services, but excluding purchases by nonprofit institutions serving households and employer contributions for group health insurance, now account for over 10 percent of the PCE. In-kind social benefits account for nearly another 10 percent. Employer contributions for group health insurance and workers’ compensation account for over 6 percent, while life insurance and pension fund expenses and final consumption expenditures of nonprofits represent almost 4 percent. McCully (2011) reported that in 2009 nearly 30 percent of the PCE was out-of-scope for the CE, up from just over 7 percent in 1959.

Another important conceptual difference between the CE and PCE is the underlying data and how the estimates are constructed. Chapter 3 of this report describes the CE surveys in some detail. In comparison, the PCE aggregates come from data on the production of goods and services, rather than consumption or expenditures by households. The PCE depends on multiple sources, primarily from business records reported on the economic censuses and other Census Bureau surveys. The PCE numbers are the product of substantial estimation and imputation processes that have their own error profiles. Estimates from these business surveys are adjusted using input-output tables to add imports and subtract sales that do not go to domestic households. These totals are then balanced to control totals for income earned, retail sales, and other benchmark data (Bureau of Economic Analysis, 2010, 2011a,b).

One indicator of the potential error in the PCE is the magnitude of the revisions that are made from time to time (Gieseman, 1987; Slesnick, 1992). A recent example is the 2009 PCE revisions, which substantially revised past estimates of several categories. Food at home, one of the largest categories, decreased by over 5 percent after the 2009 revision.1
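The size of the food-at-home revision can be checked directly from the values given in the footnote to this paragraph. The following is a minimal Python sketch of that arithmetic; the constant and function names are illustrative choices, not terms used by BEA or BLS.

```python
# Figures from the report's footnote on the 2009 PCE revision
# (2008 food at home, in millions of dollars).
PRE_REVISION_ALL = 741_189          # published value before the revision
PRE_REVISION_NO_PET_FOOD = 707_553  # comparable value with pet food excluded
POST_REVISION = 669_441             # value after the revision


def percent_drop(before: float, after: float) -> float:
    """Return the decline from `before` to `after` as a percentage of `before`."""
    return (before - after) / before * 100


# Like-for-like comparison: both values exclude pet food.
comparable_drop = percent_drop(PRE_REVISION_NO_PET_FOOD, POST_REVISION)
# Comparison that ignores the change in definition.
naive_drop = percent_drop(PRE_REVISION_ALL, POST_REVISION)

print(f"Comparable drop: {comparable_drop:.1f} percent")
print(f"Drop ignoring the definition change: {naive_drop:.1f} percent")
```

The gap between the two results shows why the like-for-like base matters: comparing the published pre-revision value with the post-revision value would mix a change in definition with a change in the estimate.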

Some authors have argued that despite the incompatibilities between the CE and PCE, the differences between the series should be expected to be relatively constant (Attanasio et al., 2006). While this is a plausible conclusion, a gradual widening of the difference between the sources could still be expected given their growing incompatibility, as reported in McCully (2011) and Moran and McCully (2001).

Comparisons

Gieseman (1987) conducted one of the first evaluations of the current CE, comparing the CE to the PCE for 1980–1984.2 He found that the CE reports were close to the PCE for rent, fuel and utilities, telephone services, furniture, transportation, and personal care services. On the other hand, substantially lower reporting in the CE for food, household furnishings, alcohol, tobacco, clothing, and entertainment was apparent back in 1980–1984.

The current patterns have strong similarities to those from 30 years ago. Garner et al. (2006) reported a long historical series of comparisons for the integrated data that begins in 1984 and goes up through 2002. Some categories compare well. Rent, utilities, and fuels and related items are reported at high and stable levels relative to the PCE. Telephone services, vehicle purchases, and gasoline and motor oil are reported at high levels (compared to the PCE) but have declined somewhat over time. Food at home relative to the PCE is about 0.70, but has remained stable over time. The many remaining categories of expenditures are reported at low levels relative to the PCE, though some small categories such as footwear and vehicle rentals show relative increases.

_____________________

1The 2008 value for food at home was $741,189 (in millions of dollars) prior to revision and $669,441 after, but the new definition excludes pet food. A comparable pre-revision number excluding pet food is $707,553. The drop from $707,553 to $669,441 is 5.4 percent. Appreciation is given to Clinton McCully (BEA) for clarifying this revision.

2Comparisons of consumer expenditure survey data to national income account data go back at least to Houthakker and Taylor (1970). The issues were also addressed in a long series of articles comparing the CPI to the PCE deflators by Bunn and Triplett (1983) and Triplett and Merchant (1973).

Garner et al. (2006) ultimately argued that this comparison should focus on expenditure categories whose definitions are the most comparable between the CE and PCE, noting “a more detailed description of the categories of items from the CE and the PCE is utilized than was used when the historical comparison methodology was developed. Consequently, more comparable product categories are constructed and are included in the final aggregates and ratios used in the new comparison of the two sets of estimates” (Garner et al., 2006, p. 22). The new series provides comparisons every five years from 1992 to 2002 (Garner et al., 2006), and was updated and extended annually through 2007 in Garner, McClelland, and Passero (2009).

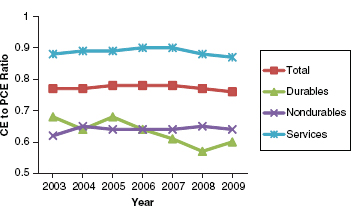

When using comparable categories and when the PCE aggregates are adjusted to reflect differences in population coverage between the two sources, the ratio of total expenditures on the CE to PCE is fairly high but still decreases over time. The ratio for 1992 and 1997 was 0.88, while in 2002 it was 0.84 and by 2007 had fallen to 0.81 (Garner, McClelland, and Passero, 2009). Figure 5-1 shows the time pattern for the ratio of CE to PCE spending for comparable categories over 2003–2009. The above discussion highlights that it is easy to overstate the discrepancy between the CE and the PCE by comparing all categories, rather than restricting the comparison to categories with comparable definitions (Passero, 2011).

Separate Comparison of the Interview Survey Estimates and the Diary Survey Estimates with the PCE

It is also important to look at comparability with the PCE of estimates from the Interview survey and Diary survey separately. Gieseman (1987) reported separate comparisons of the Interview survey and Diary survey estimates to PCE estimates for food because these were the only estimates available from both surveys.3 He found that Interview food at home exceeded Diary food at home by 10 to 20 percentage points, but was still below the PCE. For what was then a much smaller category, food away from home, the Diary aggregate exceeded the Interview aggregate by about 20 percentage points. Again, the CE numbers were considerably lower than the PCE ones.

It is not surprising that the Interview and Diary surveys yield different estimates, given the different approaches to data collection, including a different form of interaction with the respondent household. These differences make it likely that estimates from the two surveys, as currently configured, will differ, as discussed in more detail later in this chapter.

_____________________

3In these early years, BLS published separate tables for Interview and Diary data. In recent years, tables have been published with only integrated data. Consequently, subsequent comparisons of CE to PCE almost exclusively rely on the integrated data that combine Interview survey and Diary survey data. In cases where the expenditure category is available in both surveys, the BLS selects the source for the integrated data that is viewed as most reliable. See Creech and Steinberg (2011) and Steinberg et al. (2010).

FIGURE 5-1 Coverage of comparable spending between the CE and PCE.

NOTE: CE = Consumer Expenditure Surveys, PCE = Personal Consumption Expenditures.

SOURCE: Passero (2011).

Bee, Meyer, and Sullivan (2012) looked further at comparing the estimates from both surveys separately to the PCE. The authors examined estimates for 46 expenditure categories for the period 1986–2010 that are comparable to the PCE for one or both of the CE surveys. Table 5-1 shows the 10 largest expenditure categories for which these separate comparisons can be made, showing ratios of the CE to PCE for these categories. Among these categories, six (imputed rent on owner-occupied nonfarm housing, rent and utilities, food at home, gasoline and other energy goods, communication, and new motor vehicles) are reported on the CE Interview survey at a high rate (relative to the PCE) and have been roughly constant over time. These six are all among the eight overall largest expenditure categories. In 2010, the ratio of CE to PCE exceeded 0.94 for imputed rent, rent and utilities, and new motor vehicles. It exceeded 0.80 for food at home and communication and is just below this number for gasoline and other energy goods. In contrast, no large category of expenditures was reported at a high rate (relative to the PCE) in the Diary survey that was also higher than the equivalent rate calculated from the Interview survey. Reporting of rent and utilities is about 15 percentage points higher in the Interview survey.4 Gasoline and other energy goods are about 5 percentage points higher in the Interview survey and communication is about 10 percentage points higher. The 2010 ratios for food away from home and furniture and furnishings are close to a half for both the Interview and Diary surveys. For clothing and alcohol, the Diary survey ratios are below 0.50, but the Interview survey ratios are even below those for the Diary survey.

TABLE 5-1 CE/PCE Comparisons for the 10 Largest Comparable Categories, 2010

| PCE Category | PCE ($ millions) | Ratio: Diary to PCE | Ratio: Interview to PCE |
|---|---|---|---|
| Imputed rental of owner-occupied nonfarm housing | 1,203,053 | | 1.065 |
| Rent and utilities | 668,759 | 0.797 | 0.946 |
| Food at home | 659,382 | 0.656 | 0.862 |
| Food away from home | 545,579 | 0.519 | 0.506 |
| Gasoline and other energy goods | 354,117 | 0.725 | 0.779 |
| Clothing | 256,672 | 0.487 | 0.317 |
| Communication | 223,385 | 0.686 | 0.800 |
| New motor vehicles | 178,464 | | 0.961 |
| Furniture and furnishings | 140,960 | 0.433 | 0.439 |
| Alcoholic beverages purchased for off-premises consumption | 106,649 | 0.253 | 0.220 |

NOTE: CE = Consumer Expenditure Surveys, PCE = Personal Consumption Expenditures.

SOURCE: Bee, Meyer, and Sullivan (2012).
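The percentage-point gaps quoted in this discussion can be recomputed from the Table 5-1 ratios. The Python sketch below is illustrative only: the dictionary and function names are hypothetical, and the two categories with a single ratio are treated as having an Interview ratio only, which is how the text describes them.

```python
# CE-to-PCE ratios from Table 5-1 (2010), stored as (diary, interview).
# None marks a category with no Diary survey ratio in the table
# (an interpretation based on the surrounding text).
TABLE_5_1 = {
    "Imputed rental of owner-occupied nonfarm housing": (None, 1.065),
    "Rent and utilities": (0.797, 0.946),
    "Food at home": (0.656, 0.862),
    "Food away from home": (0.519, 0.506),
    "Gasoline and other energy goods": (0.725, 0.779),
    "Clothing": (0.487, 0.317),
    "Communication": (0.686, 0.800),
    "New motor vehicles": (None, 0.961),
    "Furniture and furnishings": (0.433, 0.439),
    "Alcoholic beverages purchased for off-premises consumption": (0.253, 0.220),
}


def interview_minus_diary(diary, interview):
    """Gap in percentage points, or None when either ratio is missing."""
    if diary is None or interview is None:
        return None
    return round((interview - diary) * 100, 1)


gaps = {
    category: interview_minus_diary(diary, interview)
    for category, (diary, interview) in TABLE_5_1.items()
}

for category, gap in gaps.items():
    if gap is not None:
        # A positive gap means the Interview ratio is the higher one.
        print(f"{category}: {gap:+.1f} percentage points")
```

A positive value indicates a higher ratio in the Interview survey; negative values, as for clothing and alcoholic beverages, indicate a higher ratio in the Diary survey.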

The panel next looked at smaller expenditure categories that are comparable between the PCE and the CE. Of the 36 such categories, only six in the Interview and five in the Diary have a ratio of at least 0.80 in 2010. In the Diary survey household cleaning products and cable and satellite television and radio services were reported with a high rate (comparable to the PCE). Household cleaning products had a ratio (relative to the PCE) of 1.15 in 2010 in the Diary survey; the ratio has not declined appreciably in the past 20 years. The largest of these categories reported with a high rate (comparable to the PCE) in the Interview survey were motor vehicle accessories and parts, household maintenance, and cable and satellite television and radio services. The remaining categories were reported at low rates (compared to the PCE) in both surveys with ratios below one-half. These include glassware, tableware, and household utensils and sporting equipment. Gambling and alcohol had especially low ratios, below 0.20 and 0.33, respectively, in both surveys in most years.

_____________________

4There is some disagreement about how to interpret the fact that food at home from the CE Interview survey compares more favorably to PCE numbers than does food at home from the CE Diary survey. Some have argued that the CE Interview survey numbers may include nonfood items purchased at a grocery store. Battistin (2003) argued that the higher reporting of food at home for the recall questions in the Interview component is due to overreporting, but Browning, Crossley, and Weber (2003) stated that this is an open question.

Summary of Comparisons with the PCE

The overall pattern indicates that the estimates for larger items from the CE are closer to their comparable estimates from the PCE. The current Interview survey estimates these larger items more closely to the PCE than does the current Diary survey. For the 36 smaller categories, neither the Interview survey nor the Diary survey consistently produces estimates that have a high ratio compared to the PCE. The categories of expenditures that had a low rate (compared to the PCE) tended to be those that involve many small and irregular purchases, categories of goods for specific family members (clothing), and categories for which individuals might want to underestimate purchases (alcohol, tobacco). Large salient purchases (like automobiles), and regular purchases (like rent and utilities) for which the Interview survey was originally designed, seem to be well reported. These patterns have been largely evident since the 1980s or even earlier. However, over the past three decades, there has been a slow decline in the level of reporting of many of the mostly smaller categories of expenditures in both the Interview survey and the Diary survey.

Similar results are reported from Canada. Statistics Canada’s consumption survey was redesigned with both a recall survey and diary, with partial implementation in 2009. The level of expenditures from the diary was found to be 14 percent less than the recall interview for less frequent expenses and 9 percent less for frequent expenditures. Incomplete diaries contributed to the underestimation, given that 20 percent of diary days were “nonresponded” days (Dubreuil et al., 2011).

The panel reiterates that there are many differences between the CE and the PCE, and it does not consider the PCE to be truth. Nevertheless, the most extensive benchmarking of the CE is to the PCE, so these results are informative. Furthermore, when separate comparisons of the Interview survey and the Diary survey to the PCE are available, the comparisons provide an indication of the possible degree of relative underreporting in the two surveys.

Comparisons Between the CE and Other Sources

There have been comparisons of the CE to a number of other sources. Most are summarized on the BLS Comparisons Web page.5 These comparisons include, but are not limited to: utilities compared to the Residential Energy Consumption Survey (RECS); food at home compared to trade publications Supermarket Business and Progressive Grocer; and health expenditures compared to the National Health Expenditure Accounts (NHEA) and the Medical Expenditure Panel Survey (MEPS). Some of the findings are presented below.

The CE’s estimates for utilities are compared to those generated by the RECS. The populations of households from these two surveys are not identical, but fairly consistent. The RECS collects most information on utilities directly from utility companies after obtaining permission from the sampled households. Between 2001 and 2005, the CE estimates of total expenditures for residential energy were between 7 and 9 percent higher than from the RECS. When the energy source was broken down, the CE was higher for electricity and natural gas, while lower for the smaller category of fuel oil and LP gas.

In 2007, the CE’s estimate for total health expenditures was 67 percent of the total out-of-pocket health expenditures estimated from the NHEA. The NHEA is based on a broader population definition than is the CE, and the differences between its estimates and the CE may be affected by the population differences plus the concepts, context, and scope of data collection. When compared to the MEPS, the CE estimates were lower for total health expenditures, with comparison ratios similar to those for the NHEA.

Comparisons were made between total food at home from the CE and grocery trade association data from Supermarket Business and Progressive Grocer. During the 1990s, the CE estimate was consistently between 10 percent and 20 percent higher than the trade association data.

Summary of Comparisons with Other Sources

The panel was not charged with evaluating the error structure of the PCE or other relevant sources of administrative data. However, the above analysis provides important background for making decisions about the CE redesign. It indicates that the concerns about underreporting of expenditures in both the CE Diary and CE Interview surveys are warranted. For many uses of the CE, any underreporting is problematic. However, for the use in calculating CPI budget shares, the differential underreporting that is strongly indicated by these results, and discussed in more detail on p. 105 of Chapter 5, “Disproportionate Nonresponse,” is especially problematic. In principle, an attentive, motivated respondent could report a particular expenditure—a pound of tomatoes for a certain price—concurrently with better accuracy than in a recall survey. This potential is not evident from the estimates of aggregate spending obtained from the current designs of the CE Interview and Diary surveys. The above analysis indicates that there are issues with both the CE Diary and CE Interview surveys, leading to the need for them to be assessed and redesigned. As a result, the panel reached this conclusion:

Conclusion 5-1: Underreporting of expenditures is a major quality problem with the current CE, both for the Diary survey and the Interview survey. Small and irregular purchases, categories of goods for specific family members, and items that may be considered socially undesirable (alcohol and tobacco) appear to suffer from a greater percentage of underreporting than do larger and more regular purchases. The Interview survey, originally designed for these larger categories, appears to suffer less from underreporting than does the Diary survey in the current design of these surveys.

MEASUREMENT DIFFERENCES BETWEEN THE INTERVIEW AND DIARY

Before examining potential sources of response errors in the Interview survey and Diary survey separately, this section considers whether these two independent surveys, as currently designed, are inherently comparable in the information that each collects. In the section above, the panel raised its concern about basic comparability of expenditure categories when comparing to the PCE. Here, the report explores another aspect of comparability.

It is important to remember the purposes for which the two surveys were originally designed. The Diary was designed to gather information on the myriad of frequent, small purchases made on a daily basis. These items include food for home consumption and other grocery items such as household cleaning and paper products. The Diary also is the source of expenditures for some clothing purchases, small appliances, and relatively inexpensive household furnishings, as well as the source of estimates on food away from home. The Interview, on the other hand, was designed to produce estimates for regular monthly expenditures like rent and utilities. It was designed to capture major expenditures, including those for large appliances, vehicles, major auto repair, furniture, and more expensive clothing items. Given the very different purposes of the two surveys, it is not surprising that they have entirely different designs and, hence, different problems.

Differences in Questions, Context, and Mode

A broad base of literature in survey research has identified many factors that can independently affect the accuracy of answers to survey questions. Some of the most important include the following:

- Different question wording is likely to produce different responses (Groves et al., 2004).

- The context in which questions are asked—for example, the purpose of the survey as it is explained to the respondent and the order in which questions are asked—influences what respondents will report (Tourangeau and Smith, 1996; Tourangeau, Rips, and Rasinski, 2000).

- Survey mode influences answers. For example, the literature demonstrates that in-person interviews are more likely to produce socially desirable answers that put the respondent in a more favorable light (Dillman, Smyth, and Christian, 2009).

- For self-administered diaries, the visual layout and design can have a dramatic effect on respondent answers (Christian and Dillman, 2004; Tourangeau, Couper, and Conrad, 2007).

These influences are realized as respondents go through the well-established cognitive process of comprehending the question and concluding what they are being requested to do, retrieving relevant information for formulating an answer, deciding which information is appropriate and adequate, and reporting. It is well documented that errors may occur at each of these stages (Tourangeau, Rips, and Rasinski, 2000).

As noted earlier, Bee, Meyer, and Sullivan (2012) found that the levels of reported expenditures for certain purchases are consistently different in the Interview survey and the Diary survey. Although the Interview survey generally yields larger expenditures, these differences are not consistently in the same direction. For example, food purchased away from home, payments for clothing and shoes, and purchases for alcoholic beverages are greater from the Diary. Expenditures for rent and utilities, food at home, and gasoline and other energy goods are larger from the Interview. Some argue that larger is simply more accurate, but that may not be the case. The panel has not said that either approach or type of question is inherently better or worse. However, it is appropriate to illuminate these differences more closely.

Different questions are asked in the Interview and the Diary surveys, and these different questions are also asked in different survey contexts. To illustrate this, consider the category of food and drink at home to see how each survey collects this information. This is one of the categories for which the Diary was designed.

The Interview survey asks the following questions:

- What has been your or your household’s usual WEEKLY expense for grocery shopping? (Include grocery home-delivery service fees and drinking water delivery fees.)

- About how much of this amount was for nonfood items, such as paper products, detergents, home cleaning supplies, pet foods, and alcoholic beverages?

- Other than your regular grocery shopping already reported, have you or any members of your household purchased any food or nonalcoholic beverages from places such as grocery stores, convenience stores, specialty stores, home delivery, or farmer’s markets? What was your usual WEEKLY expense at these places?

- What has been your or your household’s usual MONTHLY expense for alcohol, including beer and wine to be served at home?

Thus, the Interview survey asks the respondent to estimate “usual” weekly expenditure (at grocery stores and home delivery) and to estimate a second “weekly” amount for nonfood items that is included in the first estimate. The respondent is then asked to estimate a third “weekly” expenditure for food at home purchased at all other places apart from grocery stores. Finally, the respondent is asked to make a fourth estimate, this time for the “monthly” purchase of alcoholic beverages consumed at home. In the Interview survey, the questionnaire does not use the term “food or drink for home consumption” but instead talks about “weekly grocery shopping” with no mention of home consumption.
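The arithmetic implied by these four “global” questions can be sketched as follows. This is a hypothetical illustration in Python, not the procedure BLS uses to process Interview data: the class, the function names, the example amounts, and the conversion to a three-month total (13 weeks, 3 months) are all assumptions made for the example.

```python
from dataclasses import dataclass

# Assumed conversion factors for a three-month reference period.
WEEKS_PER_QUARTER = 13
MONTHS_PER_QUARTER = 3


@dataclass
class GlobalFoodAnswers:
    """Answers to the four Interview questions, in dollars."""
    weekly_grocery: float     # usual weekly grocery shopping expense
    weekly_nonfood: float     # part of weekly_grocery spent on nonfood items
    weekly_other_food: float  # usual weekly food bought at other places
    monthly_alcohol: float    # usual monthly alcohol served at home


def weekly_food_at_home(answers: GlobalFoodAnswers) -> float:
    """Subtract the nonfood share from groceries, then add food bought elsewhere."""
    if answers.weekly_nonfood > answers.weekly_grocery:
        raise ValueError("nonfood amount cannot exceed the grocery amount")
    return (answers.weekly_grocery
            - answers.weekly_nonfood
            + answers.weekly_other_food)


def quarterly_totals(answers: GlobalFoodAnswers) -> dict:
    """Scale the usual weekly and monthly amounts to a three-month total."""
    return {
        "food_at_home": weekly_food_at_home(answers) * WEEKS_PER_QUARTER,
        "alcohol_at_home": answers.monthly_alcohol * MONTHS_PER_QUARTER,
    }


# Made-up example respondent.
example = GlobalFoodAnswers(weekly_grocery=150.0, weekly_nonfood=30.0,
                            weekly_other_food=20.0, monthly_alcohol=40.0)
totals = quarterly_totals(example)
print(totals)
```

The sketch makes one feature of the design visible: an error in any single “usual” estimate is multiplied across the whole reference period, whereas a diary entry affects only the purchase it records.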

In contrast, the Diary is introduced to the respondent as wanting specific expenses as the respondent makes them. Thus, the respondent is asked to individually record each purchase that fits under the category of food and drinks for home consumption. The emphasis here is on specific products and their detailed characteristics, and whether it is purchased for someone not “on your list.” Alcohol is to be included, but the cost for alcohol is also recorded separately. The respondent is not asked to estimate any “weekly” or “monthly” amounts.

In addition, certain of these expenditures may be viewed as socially undesirable (e.g., alcohol use). An extensive literature has shown that socially undesirable behaviors tend to be underreported in the presence of an interviewer and that more accurate data may be obtained in self-administered modes (Kreuter et al., 2011; Tourangeau and Smith, 1996).

Obviously, not all questions about expenditures in the CE are about socially undesirable behaviors, although questions of finance (in particular income) tend to be seen as sensitive by U.S. respondents. However, the presence or absence of an interviewer is another clear difference between the Diary and Interview collections. It is unclear what percentage of CE questions is likely to benefit from self-administration, as opposed to benefiting from having an interviewer available to clarify confusing concepts and provide motivation. The designs proposed in Chapter 6 attempt to address the unresolved questions about the benefits of interviewer- and self-administration in different ways. Ultimately, the panel agrees that more research will be needed to fully determine when and for which respondents it will be possible to gain the benefits of increased disclosure in self-administration while also gaining the benefits of interviewer support.

In sum, the CE Interview and Diary present quite different questions and settings so that different answers are to be expected. The Diary uses an itemization process as expenditures are occurring (using instructions that may or may not be understood). In the example of food at home, the Interview survey uses a “global question” quick-response format that involves addition and subtraction of items to form totals. The Interview survey includes an interviewer whose presence may act as a cheerleader or motivator, but in some cases may reduce disclosure of sensitive information or in some ways license the inference that estimation and satisficing6 are sufficient in order to maintain the speed of the interview. It is not surprising that different amounts are reported in the Interview and Diary in this situation, and that these differences are not always in the same direction.

Error Structure

It was beyond the resources of the panel to examine fully the error structure of the current Interview and Diary surveys. However, as the panel went through the process of considering design alternatives for the CE, there was considerable discussion about the error structure of the current surveys.

One issue of discussion was whether the different collection modes in the CE were more or less likely to produce an asymmetric error structure, and if such were the case, whether that type of structure could contribute to the differential underreporting observed between the Interview and Diary surveys. A collection process with a pronounced asymmetric error structure might be more likely to create an observable bias in the estimates.

The current Diary survey asks the diary-keeper to enter expenditures concurrently using records or short-term recall. Errors of omission (forgetting to record a purchase) are the types of errors most likely to occur. If the diary-keeper does not enter expense items on a daily basis but waits until the end of the recording period, there are likely to be more expense items left off of the diary form (more errors of omission). It is possible that the diary-keeper may recall that a purchase was made but then over- or underestimate the amount spent. This latter type of recall error might be more symmetrical in its structure. In general, most panel members concluded that the current Diary survey had an error structure with asymmetrical properties. This led the panel to look at ways to minimize errors of omission.

_____________________

6In this context, “satisficing” is responding to a survey question with an answer that is “good enough” to move forward to the next question, without necessarily being an accurate or complete response.

The current Interview survey collects expenses retrospectively over a three-month period. The respondent is asked to use records to report expenses, but in reality the survey depends heavily on the respondent’s recall of making specific purchases over a three-month period. In this scenario, errors of omission—failure to recall a purchase or other expense—are likely to be a common type of error. As discussed above, this type of error is likely to have an asymmetrical structure. However, this problem may be less prevalent if respondents estimate expenditures during the recall period rather than trying to reconstruct all purchases as discussed below. Another common type of error is when the respondent recalls that a purchase was made, but he or she has trouble recalling the exact amount of the purchase. This type of error may have a more symmetrical structure if a respondent is as likely to over- or underestimate the amount. Moreover, the current Interview survey uses a bounding interview as its first wave. A major purpose of this bounding interview is to control for asymmetric telescoping errors (erroneously reporting an expenditure that occurred before the reference period) in recall. Some panel members hypothesized that the structure is more likely to be symmetrical in nature, not necessarily subject to bias, although the data are not available to test that conjecture.
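The distinction between the two error types discussed above can be illustrated with a minimal sketch. It assumes, purely for illustration, that each purchase is omitted independently with a fixed probability and that amount errors have zero mean; the function name, the purchase amounts, and the 10 percent omission rate are hypothetical and are not drawn from CE data.

```python
def expected_reported_total(amounts, p_omit=0.0, amount_bias=0.0):
    """Expected reported total for a list of true purchase amounts.

    Assumptions (illustrative only):
    - each purchase is omitted independently with probability p_omit;
    - a reported amount equals the true amount times (1 + error), where the
      error has mean amount_bias (0.0 means symmetric over/underestimates).
    """
    return sum((1.0 - p_omit) * a * (1.0 + amount_bias) for a in amounts)


true_amounts = [12.50, 48.00, 7.25, 130.00]  # hypothetical purchases
true_total = sum(true_amounts)

# Symmetric amount errors only: over- and underestimates cancel in expectation.
symmetric_only = expected_reported_total(true_amounts)

# Errors of omission: every omission pushes the total in the same direction.
with_omission = expected_reported_total(true_amounts, p_omit=0.10)

print(true_total, symmetric_only, with_omission)
```

Under these assumptions, symmetric amount errors add noise but leave the expected total unchanged, whereas omissions can only lower it, which is why an asymmetric error structure is the one more likely to appear as bias in the estimates.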

The current Interview survey also features another type of question whose error structure may be quite different. For certain frequently purchased items (such as gasoline or food at home), the respondent is not asked to recall all purchases over the three months. Instead he or she is asked to “estimate” the usual amount the household spent on the item per month or per week. This type of question is illustrated earlier in this section. The assumption in this type of question is that the household is likely to make many such purchases and that a systematic recall of individual purchases over three months would be very problematic. So the respondent makes an “estimate” of how much the household typically spends on the item. The panel discussed the possible error structure of these types of questions. Some panel members hypothesized that the structure is symmetrical in nature, not necessarily subject to bias. Other panel members held that the panel did not have sufficient information on the structure to draw a conclusion.

Summary of Relative Error in Reporting

Returning to the example, which survey more accurately collects the data on food and drink consumed at home that the CE requires? It depends. If the conclusion is based on the fact that aggregating the Interview estimates more closely approximates the PCE total, the current Interview survey may be more accurate for this expense item. On the other hand, if based on the hypothesized accuracy of a diary response in its report of a particular expenditure, one might conclude that the Diary may be more accurate. Drawing a conclusion as to whether the Interview or Diary collects more accurate data is not possible with the data at hand. The questions are different, the context is different, and the question order is different. The field representative has greater presence in one mode. In addition, each data collection mode is subject to different causes of inaccurate reporting. The Interview relies on estimates often given with little prior thought to the exact question that is going to be asked. At the same time, Diary responses rely on “near daily” compliance to record every single expenditure, both large and small. The modes are subject to different visual layout effects. The panel knows of no research on consumer expenditures that controls for these factors while asking the same consumer expenditure questions. The panel has reached the following conclusion:

Conclusion 5-2: Differences exist between the current Interview and Diary reports of expenditures. Differences in questions, context, and mode are likely to contribute to these differences. The error structures for the two surveys, and for different types of questions in the Interview survey, may be different. Because of these differences, we cannot conclude whether a recall interview or a diary is inherently a better mode for obtaining the most accurate expenditure data across a wide range of items. Both have real drawbacks, and a new design will need to draw from the best (or least problematic) aspects of both methods.

SOURCES OF RESPONSE ERROR IN THE INTERVIEW SURVEY

The CE Interview survey is long and exhausting. A household respondent is expected to complete this interview five times, three months apart. The interviews average 60 minutes but may be shorter or much longer. (Panel members who reported their own expenditures in mock interviews with Census field representatives described interviews that lasted significantly longer.) During the interview, respondents are asked to report as many as 1,000 specific expenditures during the preceding three months. These questions cover the gamut of items for which a household might expend dollars, including health insurance, women’s blouses, children’s toys, men’s socks, toasters, the repair of an air conditioning unit, vehicle cleaning, mortgage interest, electricity, prescription medications, alcohol, gasoline, greeting cards, and parking and tolls, to mention just a few. To put the enormity of the task in perspective, an information booklet is handed to the respondent at the beginning of the interview. This booklet includes 36 pages with 9 to 70 items per page of possible consumer expenditures the respondent is asked to report.

Inaccurate reporting to this gauntlet of questions will occur. The rest of this section highlights some of the potential reasons for these errors.

Motivation in Interview Survey

Respondents in the CE Interview have little apparent motivation to engage in a complex, protracted interview. Once a household member agrees to participate in the CE Interview survey, he or she discovers that the task is cognitively difficult and time-consuming. Some respondents see the reporting of detailed expenditures as an invasion of privacy. Others may fear sharing certain information with a government agency. Some expenditures, such as gambling losses or excessive purchases of alcohol, may be embarrassing to report. Beyond those concerns, the majority of respondents just want the interview to be over as quickly as possible (Mockovak, Edgar, and To, 2010).

The field representatives understand these concerns. When asked about factors that contributed to underreporting on the CE, they said the greatest factor was respondent mental fatigue because the interview is too long. Sixty-two percent rated this factor as a 6 or 7 on a 7-point scale of importance (Mockovak, Edgar, and To, 2010). Field representatives want to do their job: complete the current interview and return to repeat the process four more times. They feel a need to keep the interview short so that it does not end up as a refusal, either immediately or in subsequent waves. This produces a tradeoff between completing the interview and pushing too hard for accurate answers.

There is little doubt that both the respondent and the field representative benefit from keeping the interview short. Encouraging respondents to give more complete answers, for instance by encouraging them to find and consult records or consult with other household members, is likely to slow down the interview process. Placing an emphasis on getting exact amounts is also likely to lengthen the completion process and frustrate respondents.

Some respondents may be initially motivated to report accurately but soon find that they cannot. Accurate recall of the hundreds of items on the CE is very difficult. Even motivated respondents may find they are not able to do this. (See panel members’ reactions to completing the CE in Chapter 4, in the section “Panelists’ Insight as Survey Respondents.”) Under these conditions the motivations of both the respondent and field representatives affect the accurate reporting of expenditures.

Conclusion 5-3: Motivational factors of both the respondent and field representative appear to negatively influence the quality of the CE Interview data. This leads the panel to the judgment that a changed incentive and support structure for both respondents and field representatives will be needed for a future CE redesign to motivate high-quality reporting and reduce fatigue.

Interview Questionnaire Structure

The current CE Interview questionnaire is structured around categories of expense items. The field representative asks first about a fairly broad category of items and then drills down until the question is directed toward a specific detailed item. For example, within clothing purchases the field representative asks, “Did you purchase any pants, jeans, or shorts?” If the answer is “yes,” the questionnaire asks a series of ancillary questions about the purchased item.

- Describe the item.

- Was this purchased for someone inside or outside of your household?

- For whom was this purchased? Enter name of person for whom it was purchased. Enter age/sex categories that apply to the purchase.

- How many did you purchase? Enter number of identical items purchased.

- When did you purchase it?

- How much did it cost?

- Did this include sales tax?

- [if the respondent cannot separate the item from other items] What other clothing is combined with the item? Enter all that apply from a list of 18 clothing categories.

The questionnaire then returns up one level of aggregation to identify other expenditures within that subcategory (“Did you purchase any other pants, jeans, or shorts?”). This questionnaire structure creates the cognitive challenges described below.
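The drill-down loop described above can be sketched as a simple count of questions asked within one subcategory. This is an illustrative model only: the function name is hypothetical, and the count of eight follow-ups simply mirrors the bulleted list above (the last of which is conditional), not the full CE instrument.

```python
FOLLOW_UPS_PER_ITEM = 8  # ancillary questions in the list above; the last is conditional


def questions_asked(items_reported: int, follow_ups: int = FOLLOW_UPS_PER_ITEM) -> int:
    """Count questions asked in one subcategory (e.g., pants, jeans, or shorts).

    Flow modeled: one initial screener; each reported item triggers the
    follow-up questions and then one more "any other ...?" screener.
    The loop ends when a screener is answered "no".
    """
    if items_reported < 0:
        raise ValueError("items_reported must be non-negative")
    return 1 + items_reported * (follow_ups + 1)


for n in range(3):
    print(n, "item(s) reported ->", questions_asked(n), "questions")
```

In this simplified model, answering “no” to the first screener costs one question, while each reported item adds up to nine more, so the length of the interview grows directly with the number of purchases the respondent acknowledges.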

The Structure of the CE Interview Encourages Satisficing and Similar Response Errors

The CE Interview asks a series of global questions that require respondents to think about unnamed specific items (e.g., pants, socks, belt) they have purchased based upon a general stimulus (e.g., clothing). If they answer “yes” to the global question, then they will be asked specific questions about that purchase or purchases. This sequence is repeated dozens of times during each interview and may affect respondent behavior. It seems likely that respondents learn quickly in the first interview, and are reminded in each successive one, that the interview will last longer if they answer “yes” to these screening questions. For example, a respondent would be asked if anyone in the household took any trips during the three-month period. A “yes” answer leads to many questions about specific expenditures made on that trip. After completing that series of specific questions, the respondent is then asked if household members took any other trips. The respondent quickly understands that reporting a second trip would add a number of additional questions and minutes to the interview. This phenomenon is known as “motivated underreporting” and is discussed by Kreuter et al. (2011). A survey of CE field representatives (Mockovak, Edgar, and To, 2010) quantified this problem in the CE. Field representatives were asked how often this phenomenon happens in a CE interview. Fifty percent of field representatives said that it happened frequently or very frequently.

Conclusion 5-4: The current structure of the Interview questionnaire cycles down through global screening questions, and asks multiple additional questions when the respondent answers “yes” to a screening question. As this cycle repeats itself, a respondent “learns” and may be tempted not to report an expenditure in order to avoid further questions.

CE Methods Are Not Well Aligned with Modern Consumption Behavior

The CE Interview questionnaire is cognitively designed to collect spending information in an earlier era when purchases and expenditures were made in quite different ways. The cognitively outdated design of the questionnaire makes it difficult for consumers to respond easily and accurately to the questions. This exacerbates both recall error problems and overall response.

Major changes have occurred in retail markets in the last decade, including a major consolidation of the retail pharmacy and grocery industry. Simultaneously there has been an explosion of loyalty card programs in these same (and other) industries. These days, it is common for households to purchase a variety of types of items in a single large store, such as Costco or Walmart, rather than going separately to a grocery store, butcher shop, clothing store, and hardware store. A single purchase of a group of items and the payment of a “total amount” may make it more difficult for a consumer to later recall details about an individual item than if that item were purchased in a separate transaction. Separate transactions provide a focus on the individual items and may help reinforce the memory of both purchasing the item and the amount paid.

Consumers purchase items through many different methods, including credit card, debit card, check, cash, gift card, payroll deduction, and preauthorized automated payment. Items may be purchased in person, by telephone, by mail, or online. Respondents may remember how much they paid on their credit card bill but be unable to recall the specific items that were purchased. Respondents may not think at all about automatic payments. The combined effects of these increasingly varied ways of making purchases and a rigid interview questionnaire that generally flows by product groupings rather than by shopping trip or payment method make the task of recalling and reporting those expenditures more difficult.

This question structure seems likely to encourage the use of “estimation” rather than the reporting of a specific recall, and ultimately may lead to less accurate reporting of particular expenditures (Beatty, 2010; Peytchev, 2010). Some questions on the Interview survey (such as food at home) specifically ask the respondent for estimates rather than specific recall. Other questions ask for a specific recall, yet it is unclear in these questions how much estimation is also taking place.

Conclusion 5-5: The current design of the CE Interview questionnaire makes the cognitive task of recalling expenditures difficult and encourages estimation.

Some Questions Are Just Difficult to Answer

In the fifth interview, respondents are asked a series of questions about household financial assets:

- On the last day of last month, what was the total balance or market value (including interest earned) of checking accounts, brokerage accounts, and other similar accounts?

- On the last day of last month, what was the total balance or market value (including interest earned) of U.S. savings bonds?

- How does the amount your household had on the last day of last month compare with the amount your household had on the last day of last month one year ago?

These questions and others like them that ask for precise accountings by month are very difficult for respondents to answer. Banks are likely to provide monthly statements for checking accounts, but holders of savings and other asset accounts are generally provided with quarterly statements. A respondent is unlikely to know the market value of those accounts on the last day of the last month unless that day corresponds with a quarterly statement. Think of the frustration of respondents who do not have the information to answer that question accurately and are then asked to compare their estimate with the market value of those same assets one year earlier. Regarding U.S. savings bonds, individuals may monitor the maturity date of those bonds but are very unlikely to observe the growth in value on a monthly basis, nor even know how to do so.

Respondents are asked for the purchase date of most expenditures they recall. Some respondents may be confused about the date on which a particular “expense” occurred. This can be particularly problematic with online or mail purchases. Was it “purchased” when the order was placed, when the item arrived, or when the bill was paid? In the urgency to complete the interview quickly, the CE guidelines on this point may not be explained, understood, or remembered.

Some consumer transactions occur quickly and routinely without the purchaser remembering the cost, even momentarily. Specific purchases and prices that did not mentally register with the respondent cannot be reported later (Bradburn, 2010). Imprinting the “event” in memory or encoding, as psychologists describe it, is less likely to happen with minor, routine purchases. The use of credit and debit cards to pay for groups of varied purchases in large stores seems less likely to result in encoding for specific purchases and prices. In addition, credit or debit cards are increasingly likely to be used for even small routine purchases (lunch, a newspaper, or garage parking), contributing further to the lack of encoding. Automatic deductions of payments from a bank account may also contribute to a lack of encoding. Thus, multiple aspects of contemporary society appear to increase the difficulty of respondents’ ability to report expenditures accurately or at all.

Even if respondents remember how much they paid for a shirt, they may have difficulty knowing whether the amount included sales tax. Additionally, many online purchases are made without the inclusion of state sales tax. The respondent may answer that the amount of an online purchase did not include sales tax, but the CE process will then add tax to that purchase when no tax was actually paid.

Conclusion 5-6: Some questions on the current CE Interview questionnaire are very difficult to answer accurately, even with records.

Interview Survey Recall Period

The CE Interview questionnaire asks respondents to recall most expenditures over the previous three months. The issue of recall, and its effect on reporting accuracy in the CE, was a major topic in the BLS-sponsored CE Methods Workshop in December 2010. Cantor (2010, p. 4) provided a basic summary, saying “longer recall periods lead to more measurement error. For the CEQ [CE Interview], there are two important characteristics related to error. One is whether the expense is reported at all. The second is the detail associated with the event.” A BLS paper at that same workshop expressed concern:

The length of this three-month recall period, combined with the wide range of question types asked, is generally thought to represent a substantial cognitive burden for respondents. Furthermore, there are different approaches to asking about the three-month recall period, which may compound the cognitive burden for respondents. For example, some CEQ [CE Interview] questions ask about cumulative expenses over the entire three-month recall period, other questions ask respondents about total monthly expenditures for the first, second, and third month of the recall period, and still others ask respondents for average weekly expenses over the recall period. (Bureau of Labor Statistics, 2010c, p. 1)

A three-month recall period can be appropriate for major expenditures such as a major appliance or an automobile, or for expenditures that occur on a regular basis such as rent and utility bills. These are the types of purchases for which the CE Interview survey was initially designed (Bureau of Labor Statistics, 2008). However, the survey currently attempts to collect data on expenditures less likely to be remembered. It also asks detailed information about those expenditures that are difficult to recall. A common response error is one of omission—to simply not remember or not report the purchase and/or price of a particular item. These errors appear to be a major factor in the underreporting of expenditures on the CE.

On the other hand, a longer reporting period (be it for recall or concurrent reporting) has some advantage for estimation. Frequent expenditures may be captured well by a short reporting period, while other expenditures are less frequent and will not be reported by all households during a short recall period. A sufficient sample size collected throughout the year will avoid bias in the estimates, but there is likely to be more variability in the estimates with a shorter reporting period if all other factors are equal. If infrequent expenditures are also major expenditures (i.e., easily remembered), then a longer recall period for those items may be reasonable.
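The tradeoff described above can be made concrete with a small worked calculation. The sketch below assumes, for illustration only, that a household's purchases of an item follow a Poisson process with a constant weekly rate and a fixed amount per purchase; the function name and the example figures are hypothetical and are not CE estimates.

```python
import math


def annualized_estimate_moments(rate_per_week, amount, period_weeks, weeks_per_year=52):
    """Mean and standard deviation of one household's annualized spending estimate.

    Illustrative assumptions: the number of purchases in the reporting period
    is Poisson with mean rate_per_week * period_weeks, each purchase costs
    `amount`, and the reported total is scaled up to a full year.
    """
    scale = weeks_per_year / period_weeks
    expected_count = rate_per_week * period_weeks
    mean = scale * amount * expected_count
    sd = scale * amount * math.sqrt(expected_count)  # Poisson variance equals its mean
    return mean, sd


# Hypothetical item bought about twice a year at $500 per purchase.
mean_2wk, sd_2wk = annualized_estimate_moments(1 / 26, 500.0, period_weeks=2)
mean_13wk, sd_13wk = annualized_estimate_moments(1 / 26, 500.0, period_weeks=13)
print(mean_2wk, sd_2wk)
print(mean_13wk, sd_13wk)
```

Under these assumptions both reporting periods give the same expected annual amount, so neither is biased, but the standard deviation shrinks in proportion to the square root of the period length, which is the added variability of shorter reporting periods that the text describes.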

Conclusion 5-7: Three months is long for accurate recall of many items on the CE Interview survey. This situation is exacerbated by the ancillary details that are collected about each recalled expense. Errors of omission are likely to occur, and are a contributing factor to the underreporting of expenditures on this survey. Short recall periods, however, may produce more variability in the estimates and provide difficulties for economic research.

Use of Records in the Interview Survey

Some respondents do not keep records for expenditures, nor do they keep receipts that specify item amounts. If they have records, they may only keep grand totals without breakouts by item. When records are available, they likely are not organized in a way that allows them to be used effectively (or efficiently) during the interview. Field representatives have reported that a respondent occasionally goes in search for a receipt or record, to return sometime later without it.

Respondents who keep electronic records may not have them up to date in their computer when the interview is conducted and therefore cannot use them to provide accurate answers. A respondent’s electronic records are most likely organized differently from how they are requested in the interview. For example, a respondent may have a record of automobile fuel costs, but may not keep it separately for different cars; purchases at Safeway may not be broken down by food and other household items, all being considered “groceries.”

Paper receipts may be problematic as well. Groups of purchases are likely to be combined into one receipt, and that receipt may be difficult to decipher. Coupon discounts, discounts for the use of a particular credit card, or special “in-store” sales may be shown separately on receipts, so even a conscientious respondent may have difficulty understanding what was actually paid for a specific item. The receipt probably does not include rebates that consumers receive at a later point in time. Receipts vary enormously across stores, with abbreviations, special coding, and character limitations affecting how a particular purchase is described. The respondent may not be able to interpret that information, especially as the memory of the specific purchase fades over days and weeks.

BLS conducted a study on use of records for the CE, using data from CE interviews conducted between April 2006 and March 2008. Following each interview, the field representative recorded whether the respondent used records and which types of records were used. Edgar (2010) indicated that the study involved 44,300 interviews with 21,011 unique households. She reported that in 39 percent of interviews, the respondent never or almost never used records, while 31 percent always or almost always used records. Younger respondents were less likely to use records than older respondents. Interviews were longer when records were used and shorter when they were not used. There was a higher level of total expenditures reported when records were used. Those always or almost always using records as a group reported a higher level of expenditures. In summary, Edgar (2010, p. 26) found that the use of records is “related to longer interviews, more reports, and higher reports.”

These patterns appear again in a later (2010) evaluation survey. Field representatives working on the CE surveys were asked how often respondents use records and receipts to help report their expenditures. Thirty-two percent reported that respondents rarely used these records and another 50 percent reported that respondents only sometimes used records. Only 18 percent of field representatives said that respondents often used records. In that same study, 68 percent of field representatives said that respondents never or rarely consulted online or electronic records.7

Geisen, Richards, and Strohm (2011) reported on a study conducted for BLS on the availability of records and response accuracy without those records. They conducted two interviews with the same households (n = 115) within a week of each other. Incentives were given for both interviews. The first interview asked respondents to complete nine sections of the CE Interview survey. Afterward, respondents were asked to gather all of the records they had relevant to that interview, and they were re-interviewed within the week with their gathered records. The second interview focused on matching the original response to available records. They found that respondents had records for only 36 percent of the expenditure items and 41 percent of the income items. Records were most likely to be available for property taxes (59 percent), mortgage payments (59 percent), and subscriptions (53 percent). The most recent pay stub was available only 40 percent of the time. Regarding response accuracy without records, the authors found that the expenditure amount reported in the first interview “matched”8 the amount on the record only 53 percent of the time. The difference varied greatly by expenditure categories, and ranged from an 11 percent underestimate to an 83 percent overestimate.

The increasing use of telephone interviewing in the CE may reduce the respondent’s use of records in responding. In preliminary results from a 2011 survey of field representatives working on the CE survey (Bureau of Labor Statistics, 2011d), 45 percent of field representatives reported that respondents were much less or somewhat less likely to use records when being interviewed over the phone. Only 4 percent of field representatives reported that respondents were much more likely or somewhat more likely to use records in a phone interview. However, 39 percent of field representatives reported that respondents were neither less likely nor more likely to use records on a phone interview.

_____________________

7Internal Bureau of Labor Statistics memo dated November 18, 2010.

8Geisen, Richards, and Strohm (2011) classify a matched response as one in which the initial response was between 90–110 percent of the record amount (for amounts ≤ $200); 95–105 percent of the record amount if the amount was > $200; and 95–105 percent of the record amount for rent, mortgage loan, and income regardless of amount.
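The matching rule that footnote 8 attributes to Geisen, Richards, and Strohm (2011) can be expressed as a short function. This is a sketch of the rule as stated in the footnote, not the authors' own code: the function name and category labels are hypothetical, and it assumes the wider band applies to record amounts of $200 or less.

```python
ALWAYS_TIGHT = {"rent", "mortgage loan", "income"}  # 95-105 percent regardless of amount


def is_match(reported: float, record: float, category: str = "") -> bool:
    """Return True if a reported amount "matches" the amount on the record.

    Bands, as described in footnote 8:
    - within 10 percent of the record for record amounts of $200 or less (assumed);
    - within 5 percent for record amounts over $200;
    - within 5 percent for rent, mortgage loan, and income at any amount.
    """
    if record <= 0:
        raise ValueError("record amount must be positive")
    tight = category in ALWAYS_TIGHT or record > 200
    tolerance = 0.05 if tight else 0.10
    return abs(reported - record) <= tolerance * record


print(is_match(108, 100))          # small purchase, 8 percent high
print(is_match(270, 250))          # larger purchase, 8 percent high
print(is_match(108, 100, "rent"))  # rent uses the tighter band at any amount
```

Applied to each reported and recorded pair, a rule of this kind yields the share of “matched” responses that the study reports.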

Conclusion 5-8: The use of records is extremely important to reporting expenditures and income accurately. The use of records on the current CE is far less than optimal and varies across the population. A redesigned CE would need to include features that maximize the use of records where at all feasible and that work to maximize accuracy of recall when records are unavailable.

Proxy Reporting in the Interview Survey

According to the CE procedures, multiple household members are not interviewed even though most households have more than one person who makes purchases. The field representative attempts to interview the “most knowledgeable” household member. Schaeffer (2010) reported that proxy information reported by one person for another in many types of surveys has been found to be inaccurate. In the CE, the household respondent may be unaware of purchases made by other household members (Bureau of Labor Statistics, 2010b).

The effect of proxy reporting is serious, especially for “personal” purchases such as clothing or for purchases that household members want to keep private. A survey of CE field representatives (Mockovak, Edgar, and To, 2010) quantified this problem. Field representatives reported the issue of the household respondent not knowing about purchases made by others in the consumer unit (household) as one of the two most important reasons for underreporting of expenditures. More than half (53 percent) rated it as 6 or 7 on a 7-point scale of importance. For households with multiple purchasers, 18 percent of field representatives reported that a second person never or almost never participates in the CE survey. An additional 48 percent reported that a second person participates less than half of the time.

Multiple considerations may underlie the inability of one person to report for others. Household members may have separate sources of income. In addition, they may have separate bank accounts and credit cards. While each person may contribute to household expenses, the exact amounts of the other person’s contribution may be unknown; for example, one person buys food while the other pays housing costs. The great variety of arrangements for handling individual and joint income complicates the reporting of consumer expenditures. In addition, field representatives have reported that some household members appear to intentionally withhold information from the household respondent.

Conclusion 5-9: The use of proxy reporting on the CE Interview is problematic, and is a potential cause of underreporting of expenditures.

Telephone Data Collection in the Interview Survey

More than one-third (about 38 percent) of the CE interviews are completed by telephone rather than face-to-face (Safir and Goldenberg, 2008). For in-person interviews, field representatives hand an Information Booklet to respondents to assist them in recalling items that might be forgotten. In telephone interviews, this recall aid is not available. Telephone interviews are shorter and have fewer positive answers to screener questions. They also result in less detail in responding, higher item nonresponse, and more reporting of rounded values. According to the field representative survey, receipts and other records are also less likely to be used by telephone respondents.

Conclusion 5-10: Telephone interviews appear to obtain a lower quality of responses than the face-to-face interviews on the CE, but a substantial part of the CE data is collected over the telephone.

SOURCES OF RESPONSE ERROR IN THE DIARY SURVEY

The Diary survey collects data on expenditures a household makes during a brief period of time (two weeks). It was designed to collect a level of detail unlikely to be recalled accurately during the Interview survey. Cognitively, the Diary survey is very different from the Interview survey, and has its own error profile.

Motivation to Complete the Diary

The current CE Diary is designed to reduce recall problems by emphasizing the recording of expenditures the day they are made. However, respondents in the CE Diary have little apparent motivation to engage in a complex, protracted diary-keeping exercise. Once a household member agrees to participate in the CE Diary survey, he or she discovers that the task can be difficult and time-consuming. Some respondents do not record expenditures each day. Expense items may be reported early in the week, with less enthusiasm later in the week, and even less enthusiasm during the second week. This lapse may be caused by general fatigue with the process and/or the fact that the respondent found the diary form difficult to use. Statistics Canada found the same concern with the diary portion of their consumer survey, reporting that 20 percent of their two-week diary days were “nonrespondent” days (Dubreuil et al., 2010). This lack of motivation of a respondent to stop in the middle of busy daily activities and record an expenditure on the diary form is probably the major cause of underreporting of expenses in the current Diary survey.

Field representatives place mid-week calls to diary-keepers to mitigate this problem—to ask whether the diary-keeper is having any difficulties and to encourage continued reporting through the week. In addition, the diary pick-up at the end of each week provides the field representative an opportunity to explore whether the respondent may have forgotten to record certain expenditures such as those from checks, cash, credit card payments, automatic online payments, or deductions from pay stubs. These mitigation strategies are not uniformly implemented. There are shortcuts allowed in the fielding of the Diary survey; for example, field representatives are permitted to place both one-week diaries at the same time. Thus, no “reinforcing” visits take place in these cases to examine diary entries and to encourage increased compliance during the second week. The panel did not have data on the extent of this practice or on how often the mid-week telephone calls were placed with households.

Conclusion 5-11: A major concern with the Diary survey is that respondents appear to suffer diary fatigue and lack motivation to report expenditures throughout the two-week data collection period and especially to go through the process of recording all items in a large shopping trip.

Diary Structure

The current diary form suffers from a number of cognitive problems that make the diary-keeping process more difficult than it needs to be and thus can contribute to underreporting on those forms.

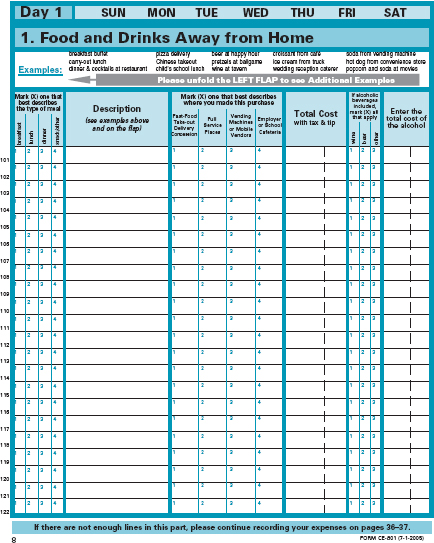

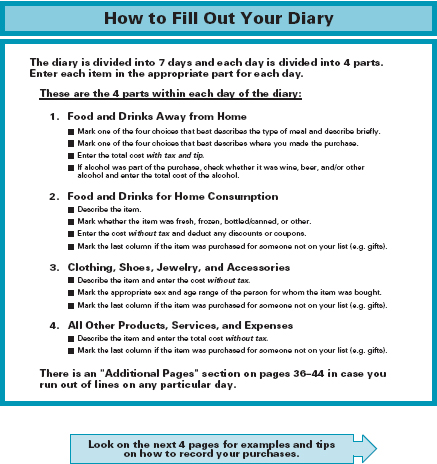

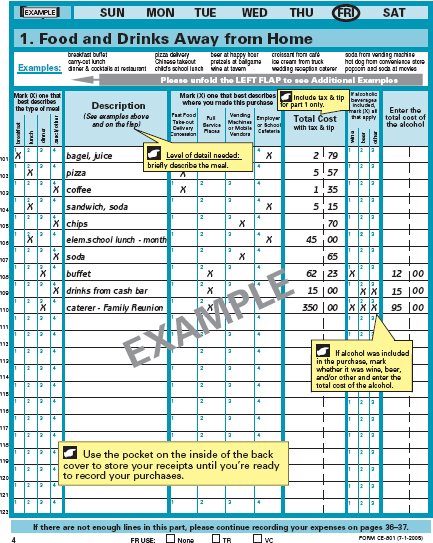

Learning to Complete the Diary May Be Confusing

An initial challenge facing respondents willing to complete the diary is sorting through all the instructions to understand how and where to report expenditures. The diary has 44 numbered pages plus front and back covers, both of which have attached foldout flaps. Fifteen separate pages (including covers and foldout flaps) provide instructions for the diary-keeper on what and how to report, with instructional material scattered among these 15 pages. On the front cover, the field representative identifies the days the diary is supposed to be kept and the first names of the people in the household. The foldout cover flap lists numerous examples for each category of expenditures. Page 1 tells “Why the CE Diary Is Important.” Page 2 describes “How to Fill Out Your Diary” and the four parts. Pages 4 through 7 provide examples of how to fill out each of the four sections. Finally, the back cover provides a pocket and instructions for storing receipts and other expenditure records. It also includes a Daily Reminder list of 18 items with an instruction to ask other members of the household for their expenditures each day. Another foldout flap provides answers to 15 frequently asked questions.

The diary booklet is not organized so as to reveal a natural linear process for becoming acquainted with the recording process. The field representative must flip through the booklet to train the respondent on its use, referring to the 15 individual instructional pages (including foldout flaps) to explain how the diary is designed to be completed. Although each field representative is likely to handle the diary placement somewhat differently, the following may be a typical set of instructions to a potential diary-keeper. Figures 5-2 through 5-5 show four relevant pages from the diary:

- Turn to page 8 (instead of starting at page 1). This page is labeled “Day 1” with a separate heading labeled “1. Food and Drinks Away from Home.” Circle the “day of week” that appears in a third heading.

- Moving to the substance of the page, the field representative might explain the six columns of information that must be completed for each food entry: type of meal; description of meal; where the meal was purchased; total cost; which of three types of alcoholic beverages might have been purchased; and the cost of that alcohol.

- Turn to page 3, to a section on “How to Fill Out Your Diary.” The field representative explains that the food and drinks section previously reviewed was only one of four such tables that have to be filled out each day.

- Turn to page 4. This page displays detailed examples of how the food and drinks might be reported.

- Move back and forth between blank diary pages, examples of filled-out pages, and other sections. These sections include general instructions (page 2), keeping receipts in the back pocket of the diary, a “What Not to Record” section, and, on the back flap of the cover, answers to 15 different questions that the respondent might have.

Conclusion 5-12: A lot of information is conveyed to the diary respondent in a short amount of time. The organization of the diary booklet may result in considerable frustration among some individuals, who feel they cannot master the instructions. They choose instead to collect receipts and leave them for the field representative to enter during the follow-up visit.

Recording Expenditures May Be Problematic

The diary instructions focus on recording expenditures each day during the diary week, separately for different categories. A total of 28 pages of the booklet are laid out by “day” and consist of labeled tables for recording household expenditures made in each of four categories:

- Food and Drinks Away from Home

- Food and Drinks for Home Consumption

- Clothing, Shoes, Jewelry, and Accessories

- All Other Products, Services, and Expenses

Nine additional pages are included for reporting any information that will not fit on the individual day pages.

The diary-keeper is to record each expenditure on the correct page by day and expenditure category. For each item recorded, the form asks for a description of the item, the cost, plus additional information that differs for each of the four expenditure categories. For example, the tables for Clothing, Shoes, Jewelry, and Accessories ask for additional information on the individual for whom the item was purchased: gender, age, and whether the person was a member of the household. The tables for Food and Drinks Away from Home also ask where the item was purchased, whether the item included alcoholic beverages, and a breakout of the cost of any such alcohol.

Research has shown that respondents are influenced by much more than words on how to complete questionnaires; a mostly linear path with guidance from numbers, graphics, and symbols also helps to instruct respondents on how a questionnaire (or diary) is designed to be completed (Dillman, Smyth, and Christian, 2009). The current in-person delivery and retrieval process is designed to compensate for some of these problems. The field representative may look at the receipts collected by the household respondent and ask other questions to find out whether all expenditures have been recorded. Realization at the initial visit that the interviewer will call mid-week and return to pick up the diary at the end of the week would seem to encourage respondents to think about their daily expenditures and be able to recall and report them at the end of the diary week contact. However, this mitigation process is not always followed.

Other general problems can occur with the diary. Some shopping trips require complex reporting of many and varied items on the diary forms. For example, a major grocery-shopping trip may take considerable time and effort to record. Each item purchased must be itemized separately with a description and cost. (The respondent has to figure out whether to record before or after the food is put away.) Receipts are often limited to abbreviations and codes that may not be understandable to the person who made the purchase and/or is completing the diary.

Part of the diary placement visit is for the field representative to “size up” the respondent as to whether he or she understands how to complete the diary and seems committed to do so. If the respondent does not appear to understand the instructions and the use of the daily recording forms, some field representatives will revert to an alternative approach and ask such a respondent to merely keep all of the household receipts for the week’s expenditures in a pocket of the inside back cover. On the next visit a week (or two weeks) later, the field representative and the respondent will go through the receipts and fill in the diary forms together. The panel learned that this approach is likely used for a significant number of households in which the respondent finds keeping the diary too difficult to do.

Conclusion 5-13: It is likely that the current organization of recording expense items by “day of the week” makes it more difficult for some respondents to review their diary entries and assess whether an expenditure has been missed.

Reporting Period for the Diary Survey

The Diary survey has a one-week reporting period, followed immediately by a second wave also consisting of a one-week reporting period. The Diary survey was conceived as a vehicle for collecting smaller and frequently purchased items that were unlikely to be reported accurately over a three-month recall period. However, in practice, the Diary collects a wide variety of expenditure items. Since many types of expenditures are made infrequently, and others are not purchased in the same amount each week, Diary expenditure estimates for these variables are likely to be more variable than those from the Interview survey with its three-month reporting period. For example, Bee, Meyer, and Sullivan (2012) found that for 2010 the weighted average coefficient of variation of spending reports on 35 categories of expenditures common to both the Interview and Diary was nearly 60 percent higher for a typical Diary response than for a typical Interview response.⁹ One reason is that, in 2010, close to 10 percent of weekly diaries that were considered as valid observations reported no in-scope spending at all. (About 75 percent of these reports were out-of-scope because the family was on a trip for the week.) Consequently, a larger number of weekly diaries is required to equal the precision of the quarterly interviews.

_____________________

⁹ Their comparisons adjusted for the different sample sizes of the Diary and Interview surveys. The coefficient of variation is the standard error of a mean or other statistic expressed as a percentage of the statistic.
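As a rough illustration of the precision point above, the short Python sketch below shows how a higher per-response coefficient of variation translates into a larger required number of responses. It rests on simplifying assumptions that are not part of the panel's analysis: responses are treated as independent, so that the coefficient of variation of a mean falls in proportion to the square root of the number of responses, and "nearly 60 percent higher" is rounded to a ratio of 1.6. The function names are hypothetical.

```python
import math


def cv_of_mean(cv_single: float, n: int) -> float:
    """CV of the mean of n independent responses, each with CV cv_single.

    Assumes independence, so the standard error shrinks by sqrt(n).
    """
    if n <= 0:
        raise ValueError("n must be positive")
    return cv_single / math.sqrt(n)


def responses_needed_ratio(cv_ratio: float) -> float:
    """How many times more responses are needed to offset a per-response
    CV that is cv_ratio times larger (under the independence assumption)."""
    return cv_ratio ** 2


# Illustrative ratio only: "nearly 60 percent higher" rounded to 1.6.
diary_to_interview_cv_ratio = 1.6
multiplier = responses_needed_ratio(diary_to_interview_cv_ratio)
print(f"Diary responses needed per Interview response: about {multiplier:.2f}")
```

Under these assumptions, roughly two and a half weekly diaries would be needed for each quarterly interview to reach the same precision for a typical expenditure category. The actual figure depends on the category and on the survey design.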

A related conceptual issue is that the reference period in the current Diary survey may be too short to accurately measure an individual’s normal spending pattern. While these errors may average out in the calculation of means, several important uses of the CE require the measurement of the distribution of spending.

Proxy Reporting in the Diary Survey

Respondents are asked to consult with other members of the household during the week and to report expenditures for all members. The field representative lists the names of the members on the inside cover of the diary. These instructions are aimed at encouraging communication between the person who agrees to complete the diary and others in order to facilitate accurate reporting. As stated earlier, the diary mode does provide more opportunity to confer with other household members over the week than there is within a rushed recall interview. However, there are still issues with proxy reporting in today’s households.

The changing structure of U.S. households, in which the adults are more likely to have separate incomes and expenditure patterns, means that unless deliberate communication occurs, expenditures may be underreported. In addition, household members are not always open with each other about what they have purchased or how much it cost. If a member of the household does not want the person most responsible for completing the diary to know of the expenditure or its cost (e.g., a teenager downloading a new video game, the cost of an anniversary gift, or payment of a parking ticket), the diary will probably miss the expense.

Conclusion 5-14: Although the diary protocol encourages respondents to obtain information and record expenditures by other household members during the two weeks, it is unclear how much of this happens.

Comparison of Response Rates

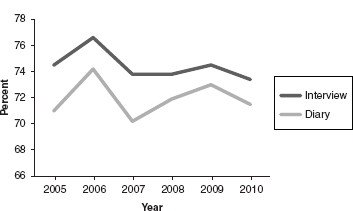

In calculating response rates on the CE Interview survey, BLS uses outcome information from each household for each wave (waves two through five) as independent observations in the survey. For the Diary survey, BLS counts each week of the two weeks of diary reporting by a household as an independent observation. The “CE program defines the response rate as the percent of eligible households that actually are interviewed for each survey” (Johnson-Herring and Krieger, 2008, p. 21). These calculations exclude cases where the household is ineligible.
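The response rate definition quoted above can be sketched in a few lines of Python. This is only an illustration of the stated definition, not BLS's production calculation: the outcome labels (`"interviewed"`, `"nonresponse"`, `"ineligible"`) and the example data are hypothetical, and each entry stands for one household-wave (Interview) or one household-week (Diary), treated as an independent observation.

```python
from collections import Counter


def response_rate(outcomes: list[str]) -> float:
    """Percent of eligible cases that were interviewed.

    Ineligible cases are excluded from the denominator, following the
    definition quoted in the text. Outcome labels are hypothetical.
    """
    counts = Counter(outcomes)
    eligible = counts["interviewed"] + counts["nonresponse"]
    if eligible == 0:
        raise ValueError("no eligible cases")
    return 100.0 * counts["interviewed"] / eligible


# Made-up example: six observations, two of them ineligible.
example = ["interviewed"] * 3 + ["nonresponse"] + ["ineligible"] * 2
print(f"Response rate: {response_rate(example):.1f} percent")
```

Because each wave or week counts separately, a household that responds in some waves but not others contributes to both the numerator and the nonresponse count.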