25- Linking, Finding, and Citing Data in Astronomy

Michael J. Kurtz1

Smithsonian Astrophysical Observatory

My presentation is focused on data citation and attribution issues in the field of astronomy. There are commercial astronomy journals, but most of them are not very important. Basically, the entire system is operated through collaboration between data centers and publishers, where the publishers are the professional societies. I thought I would first share with you some information about a similar workshop held 25 years ago. This Astrophysics Data System (ADS) workshop (1,2) was held in 1987 to discuss issues related to:

1. Data Accessibility

2. Data Format Standards and Quality

3. Data Analysis and Reduction Software

4. User Scenarios

5. Observation Planning and Operations

There was a report from this workshop. One of the points that the report made was the following:

There is an urgent need for a master directory for all NASA space-based observations. It is recommended that the directory should include all past observations and currently planned observations from observatories, and the past and planned observations from ground-based observatories, where possible. NASA and NSF should enter into discussions regarding how this can be accomplished.

If we think about what we are trying to do in this workshop, it is basically similar to this 1987 workshop. If we make a list of all the observations and give them names and addresses, that is essentially putting DOIs and addresses on every piece of data. However, this has never happened. Over the next seven years or so, $25 million to $30 million was spent trying to make this happen. The reason it did not happen was primarily control. None of the archival systems were willing to give up the control necessary to make it happen. Now, it is a quarter of a century later and that still is the case.

The second issue that I would like to talk about is related to the American Astronomical Society’s (AAS) policy for dataset linking. The policy started seven or eight years ago; it is active and people are joining up. The big data centers can create tags or names for their datasets. They are able to do it the way they want. There is no large system telling them how to do it. However, they have to agree that they will be able to resolve these tags to the datasets when asked. This is a great effort and all the big U.S. data centers are part of it, but the Europeans did not join.
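The arrangement described above, in which each data center names its datasets however it likes and only promises to resolve those names on request, can be sketched as a small registry. This is a minimal illustration under stated assumptions and not the AAS system itself: the tag layout `authority/local-id`, the center names, and the URL patterns are all hypothetical.

```python
from typing import Callable, Dict


class TagResolver:
    """Registry that maps an authority prefix to that center's own resolver."""

    def __init__(self) -> None:
        self._resolvers: Dict[str, Callable[[str], str]] = {}

    def register(self, authority: str, resolver: Callable[[str], str]) -> None:
        # Each data center supplies its own function; there is no central
        # rule about what a local identifier looks like.
        self._resolvers[authority] = resolver

    def resolve(self, tag: str) -> str:
        # Hypothetical tag layout: "<authority>/<local identifier>".
        authority, sep, local_id = tag.partition("/")
        if not sep or not local_id:
            raise ValueError(f"malformed tag: {tag!r}")
        if authority not in self._resolvers:
            raise KeyError(f"no data center registered for {authority!r}")
        return self._resolvers[authority](local_id)


resolver = TagResolver()
# Hypothetical centers and URL patterns, for illustration only.
resolver.register("center-a", lambda i: f"https://archive-a.example/data/{i}")
resolver.register("center-b", lambda i: f"https://archive-b.example/obs?id={i.upper()}")

print(resolver.resolve("center-a/obs123"))
```

The only shared contract in this sketch is the `resolve` call; everything about identifier structure stays under the control of the individual center, which mirrors the point about control made earlier.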

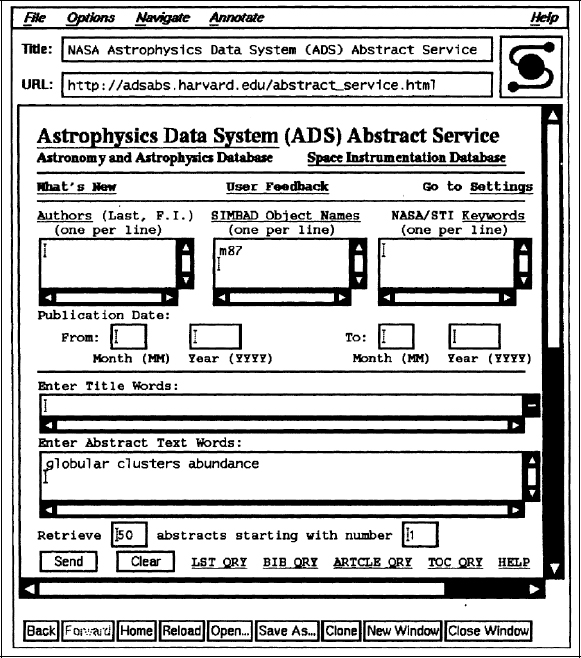

We now will look at data citation and attribution in practice. We have been doing this for a couple of decades now. It pretty much works and it is growing organically. Below is a 17-year-old image. It is the first image of a web browser used in our ADS system.

______________________

1 Presentation slides are available at http://www.sites.nationalacademies.org/PGA/brdi/PGA_064019.

FIGURE 25-1 First web browser used in ADS system.

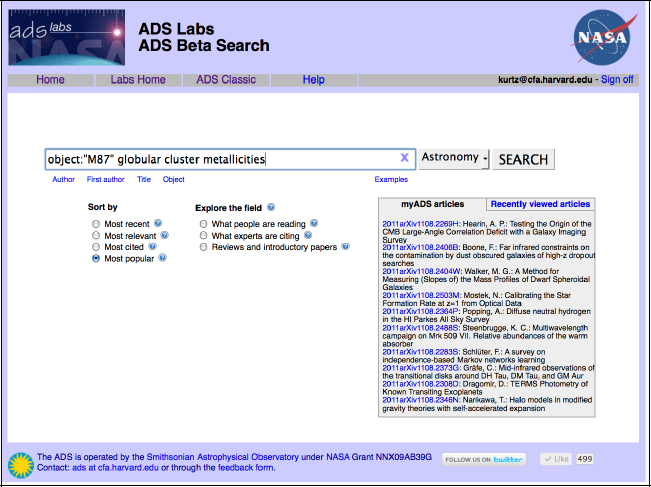

What you can see at the bottom is a complex literature query. To an astronomer, “abundance” means the fraction of different elements in a star. It is also called metallicity. The next image is what it looks like today. It is basically the same query, except that we have changed the word abundance to metallicity.

FIGURE 25-2 Complex literature query.

What happens when you run a query is that a list of popular papers about the metallicity of M87 comes up. We are interested in data so we can ask the system to select only papers with links to data from the space telescope; this yields a list of seven papers concerning the metallicity of the galaxy M87 which have links to on-line data in the HST archive.

We could just as well have chosen any (or all) of about a dozen other archives to obtain original (from the telescope) data, or chosen to retrieve tabular data from any of several dozen papers.
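The selection step described above, narrowing a literature result list to papers that carry links to data in a chosen archive, can be sketched with a few in-memory records. This is a toy illustration only: the `Paper` structure, the field names, and the sample records are invented here and do not reflect the actual ADS data model or query interface.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Paper:
    title: str
    keywords: Set[str]
    # Names of archives that hold data linked from this paper (hypothetical field).
    data_links: Set[str] = field(default_factory=set)


def papers_with_data(papers: List[Paper], keyword: str, archive: str) -> List[Paper]:
    """Keep papers that match the keyword and link to data in the given archive."""
    return [p for p in papers if keyword in p.keywords and archive in p.data_links]


# Invented sample records, for illustration only.
sample = [
    Paper("Paper A", {"M87", "metallicity"}, {"HST"}),
    Paper("Paper B", {"M87", "metallicity"}),
    Paper("Paper C", {"M87", "dynamics"}, {"HST", "Chandra"}),
]

for paper in papers_with_data(sample, "metallicity", "HST"):
    print(paper.title)
```

Choosing a different archive, or a set of archives, only changes the membership test on `data_links`.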

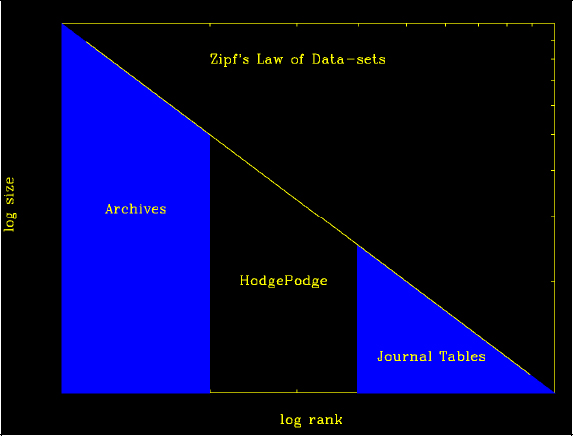

The next image is from Todd Vision of Dryad. It shows the two different kinds of data to which the ADS is linked.

FIGURE 25-3 Zipf’s Law of Datasets

SOURCE: Todd Vision, Dryad.

This figure shows that the highest-ranking sources in terms of size are the archives. The archives hold enormous amounts of data and most of them are very well managed. They have people who curate their data and make links. Some of these archives are better than others but, in general, they are good. On the right-hand side, there are small data tables from journals. There is a system for capturing these tables in numerical form and keeping them online. Most of these functions have been running pretty smoothly for about 20 years. Finally, the middle part is where the problem is. There we find small and medium-size datasets with no home; they are often too big for the journals, but are not part of the established archives.

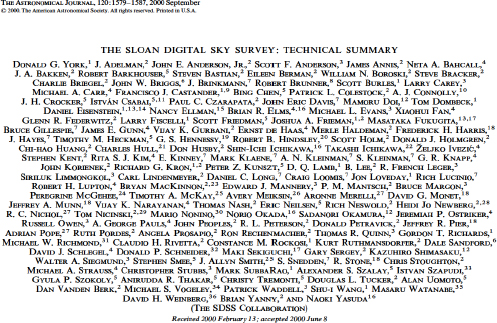

I will conclude by talking about a paper that Margaret Geller and I submitted to the Astronomical Journal last week. I am going to show you the references for a plot that we made in the paper. The first one is from the Sloan Digital Sky Survey (SDSS). It shows that we cited York et al.; however, we did not use the paper, we used the database.

FIGURE 25-4 Sloan Digital Sky Survey notes.

SOURCE: Reproduced by permission of the AAS.

York et al. has about 3,000 citations, but the on-line database has millions of downloads. There need to be ways to measure the impact of things like the SDSS that go beyond citations.

The next paper below is a secondary catalog of clusters created from the SDSS. This is the part we cited but the catalog itself is not in the journal. The catalog is quite large, more than 50,000 clusters, with about a hundred measures per cluster.

FIGURE 25-5 Cluster catalog entries from SDSS DR7.

SOURCE: Reproduced by permission of the AAS.

As the catalog is not in the journal, the question then is, where is it? I did not know, so I queried Google and it showed me that it is on the personal website of the first author. That is where we got it. This is the type of data that is not linked to in the ADS.

So, these are two problems in how astronomers link their data.

References

(1) Astrophysics data system workshop. Workshop report, Annapolis, Maryland, August 18-20, 1987, Pasadena: California Institute of Technology (CALTECH,CIT), Jet Propulsion Laboratory (JPL), 1987, edited by Squibb, Gael F.

(2) Squibb, G. F.; Cheung, C. Y. NASA astrophysics data system (ADS) study. ESO Conference Workshop Proceedings, No. 28, p. 489-496. http://www.adsabs.harvard.edu/abs/1988ESOC…28..489S

DISCUSSION BY WORKSHOP PARTICIPANTS

Moderated by Bonnie Carroll

DR. MINSTER: This comment is for Anita de Waard. You said correctly that commercial software producers do a good job. However, give me any piece of software and I will show you that some of its thousands of files dating back to 1995 cannot be opened and will never open again. On the other hand, my Linux mail from the 1980s is perfectly fine and I can open all my files with no problem.

DR. DE WAARD: You are right. I should not have said “commercial.” I do not have any preference for any kind of software development. My point is not about how the software is being developed, but about the fact that software developers, whether commercial or academic, have an important role to play in the infrastructure of scientific communication.

PARTICIPANT: My question is for Bruce Wilson. The last time I looked at the DataONE project, they were using pieces of software from Mercury to obtain metadata from different sources. Is Mercury now producing outputs that can be used directly as a citation?

DR. WILSON: What I showed with COinS (ContextObjects in Spans; see http://www.ocoins.info/) is embedded in the Mercury results. Mercury is the software that drives the search interface for the ORNL DAAC and about 15 other data centers. It is also being used for search in the DataONE project. So yes, we are getting there in terms of using COinS in the search results. The package itself is also using the Open Archives Initiative Protocol for Metadata Harvesting (OAI-PMH) and we have been extending it to expose OAI-PMH to other harvesters.

PARTICIPANT: Does it produce output that can be used directly as a citation?

DR. WILSON: COinS produces output that can be used directly as a citation in the sense that what we are trying to do is to provide structured metadata in a format that can be used by citation tools.
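As a rough illustration of what “structured metadata in a format that can be used by citation tools” can look like, the sketch below builds a COinS-style HTML span and reads it back. It assumes the common COinS convention of an empty `span` with class `Z3988` whose `title` attribute carries an OpenURL key/encoded-value string, and it assumes Dublin Core style field names. The helper names and the sample record are hypothetical, and this is not the Mercury implementation.

```python
import re
from html import escape, unescape
from urllib.parse import parse_qs, urlencode


def make_coins(metadata: dict) -> str:
    """Encode a flat metadata record as a COinS-style span."""
    pairs = [
        ("ctx_ver", "Z39.88-2004"),
        # Assumption: describe the item with the Dublin Core KEV format.
        ("rft_val_fmt", "info:ofi/fmt:kev:mtx:dc"),
    ]
    pairs += [(f"rft.{key}", value) for key, value in metadata.items()]
    # URL-encode the pairs, then HTML-escape the result for use in an attribute.
    title = escape(urlencode(pairs), quote=True)
    return f'<span class="Z3988" title="{title}"></span>'


def read_coins(span: str) -> dict:
    """Recover the rft.* fields from a span produced by make_coins."""
    match = re.search(r'title="([^"]*)"', span)
    if match is None:
        raise ValueError("no title attribute found")
    fields = parse_qs(unescape(match.group(1)))
    return {k[len("rft."):]: v[0] for k, v in fields.items() if k.startswith("rft.")}


# Hypothetical dataset record, for illustration only.
record = {"title": "Example Dataset", "creator": "Doe, J.", "date": "2011"}
span = make_coins(record)
print(span)
print(read_coins(span))
```

A citation manager that recognizes such spans in a search-results page can pick up the fields without any dataset-specific parsing, which is the sense in which the output is directly usable as a citation.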

PARTICIPANT: My comment is for the commercial publishers. At least in my scientific community, biodiversity, there is a growing understanding that one of the ways to encourage the citation approach is to publish the data and to provide some incentives, something like a data paper. Given the fact that there would not be any operational burden on them, how do you think the commercial publishers will respond to such a call from the community? Would they produce a section in their journals dedicated to data papers where the datasets are described through the offering of metadata? Will the journals be engaged in the peer review and publishing of such data papers? It is important for scientists to publish and make data available in the open public domain, and therefore I think it is important that the commercial publishing community come forward and introduce such sections in their existing journals and publications. Do you think publishers would respond to that? How would this affect their business and operational models?

PARTICIPANT: I think the peer review of datasets is a very difficult task. If we look at Michael Kurtz’s data, for example, we will realize that it would take a lot of work and time to do a good job reviewing the data. Someone needs to do that work and it costs money. This seems like the kind of work that governments usually fund. I do not think it is the publishers’ job. If a journal agrees to do it because it is critical in their field, then that would be great. I do not think there is any blanket statement to be made here except that everybody realizes it is a difficult, time-consuming, and expensive process and that somebody needs to pay for it.

PARTICIPANT: There is a good example from chemistry. There is a leading institute called the Beilstein Institute that does a lot of work in the data curation area.

PARTICIPANT: There is also a commercial version of that. I think that we need either a strong mandate backed with funding from a government organization or a private business model to make sure that the data curation is done professionally and properly.

PARTICIPANT: There is a journal that does that. It is called Earth System Science Data. I think it has been marginally successful. Like any journal, it struggles to get reviewers, but in this particular case, it has been more difficult and challenging.

PARTICIPANT: I should start by saying that I am a total cynic. Yes, we do have this session with a group of stakeholders in the research enterprise, and yes, data citation is the main theme, but I do not think that citation and attribution are going to solve all the important issues across this large spectrum. There needs to be a lot more outreach and interfacing. This is just an observation, not a criticism.

This question is for Bruce Wilson. If I understood correctly, you said that downloads of data did not necessarily correlate with the citations of data. If you look at the literature, however, downloaded papers do actually correlate with the citations of these papers to some degree. It seems to me that there is a difference of views here and I think it would be important to understand why that might be the case. Whether it is because the data are not properly attributed, because there is not enough metadata, or it is a function of different disciplines, it would seem to be an important point to understand.

DR. WILSON: I am not aware of broad studies on these issues. My statement was based on some observations of the roughly 1,000 datasets held by the ORNL. This sample has some limitations, but what we found, through simply going out and asking questions, is that there were cases in which people stopped working with the dataset because it was too hard or because there was a problem with the data. These findings helped us to identify some of these issues and fix them or greatly lower the barrier to the datasets. After fixing some of these issues, the number of downloads increased and early indications suggested that the citation of that data has also gone up. We have seen cases where some of these datasets are now being routinely downloaded and used in the classroom for undergraduate education. That is also another issue, where downloads might be attributed to other kinds of uses of the data. That is why I am interested in the discussion about what are the impacts of the data outside of the scholarly community literature.

DR. KURTZ: Downloads correlate with citations only when the people downloading are researchers, because practitioners do not cite data. Materials that are useful in practice are often never cited. Also, not everybody who downloads a dataset is planning to immediately write a paper. There are many other uses of data. If you look at download statistics in Google Scholar versus citation statistics, there is no correlation whatsoever, but if you look at download statistics for research articles by astrophysicists through ADS, the correlation is perfect. So, it really depends on what the use is.
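The comparison of download and citation statistics mentioned above is typically summarized with a correlation coefficient. The sketch below computes a Pearson coefficient from paired counts; it is a generic illustration, not the analysis behind the remarks here, and the numbers are invented toy values chosen to show the two extremes rather than measurements from ADS or Google Scholar.

```python
from math import sqrt
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equally long sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (spread_x * spread_y)


# Invented toy counts per item, for illustration only.
downloads = [10, 20, 30, 40]
citations_tracking = [1, 2, 3, 4]    # citations rise in step with downloads
citations_unrelated = [3, 1, 4, 2]   # citations show no linear relation

print(pearson(downloads, citations_tracking))
print(pearson(downloads, citations_unrelated))
```

A coefficient near 1 corresponds to the case where downloads and citations move together, and a value near 0 to the case where downloads say little about citations.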

PARTICIPANT: I have two points. First, I am following up on Phil Bourne’s point about whether we are overloading data citation. I think that despite our attempts to keep this meeting within a narrow range, we also wanted to surface the whole set of issues concerning data citation and attribution. Second, I want to emphasize that describing the data for future reuses within the immediate discipline is hard enough. Describing it for future uses in adjacent disciplines and beyond requires much more context. Basically, the farther you want to go from the point of origin, the more interpretation is going to be required.

Potentially, this might be a librarian’s full-time job. Allen Renear and I are among the few people in this room who built courses and educated librarians around data archiving, but I do not see several dozen of our graduates being hired for these jobs. I am not seeing the growth yet. There are real infrastructure and human resource issues here. If the panel could address whom you are hiring to do this job and why, that would be really helpful, too.

DR. CHAVAN: I want to comment on Michael Kurtz’s point that citation is not the only way to measure the usage of data and that several uses of data often do not result in scholarly publications. The way we are addressing this issue in our community is by building what we call a “data usage index.” This is an index with several parameters, of which the download and use of data is one. Several co-authors and I proposed this index in a paper in 2009. The index has gone through community consultations over the past 18 months, and we will be advertising the algorithms. We believe that the algorithm can be modified for different disciplines because data usage patterns differ among disciplines. So, in addition to data citation, we also need to promote and facilitate the creation of other forms of impact measurement.

DR. SMITH: Yesterday, someone talked about the importance of data citation to provide credit to researchers and that professional data centers also care about getting that credit. However, I do not hear that universities and libraries also require credit for the incredibly labor intensive work that they do to get the data managed and archived. It would be useful to discuss whether research universities and libraries also should get such credit.

PARTICIPANT: I think it is important to parse this topic well here. Let us start from a community perspective. In some communities, there is very clear value from having shared data and in this case there is an absolute requirement for standards for data attribution and citation. In other communities, on the other hand, the cost-benefit analysis does not come out so clearly in favor of benefits.

The second point I would like to make is about credit. Some institutions choose to play a role in the community in the provision of data assets that extend beyond the institution. In those cases, it is very clear that those institutions expect to be rewarded for the role they are playing. When it comes to getting credit for having, for example, an institutional repository that serves the need of the researchers in one institution, I would not expect that institution to require credit for that work. This is simply the responsibility of this institution to its stakeholders. That maybe helps to tease out some of the issues in the discussions we have been having over the last couple of days, because when it comes to data attribution and citation, I do not think there is a one-size-fits-all model.

DR. WITT: From a library perspective, I think it is more about supporting an institutional mission than about credit. It is also about relevance. Books and journals are going away and there is more focus now on how libraries are going to deal with data collections. So if we are not an actor in this process, whether through discussing data management plans, building repositories, or creating services to help people find and use data, libraries will lose relevance. As for the workplace and the kind of new organization or infrastructure that the libraries will need, I think that the principles are all there but the technology and the packages that are in place need to evolve to meet the requirements of the new tasks.

Take cataloging, for example. The people who understand the AACR2 (Anglo-American Cataloguing Rules) and how to create records and descriptions will still very much be needed. Such areas are still relevant to the data world. It is not what needs to be done that changes, but how it is done. We can look at the organizational chart of libraries and try to identify the relevant components, whether they are in information literacy outreach, collection development, metadata and technical services, acquisitions, or archives. I currently see many libraries defining new positions related to data service and curation. So maybe we should take a hybrid approach, where a library will have people who do the new data-related work and others who still work on more traditional areas.

PARTICIPANT: At our publishing house, we are hiring knowledge modelers. This category can include different domain specialists, such as economists and technologists. They do modeling, analysis, and visualization.

MR. UHLIR: I just want to point out that the NRC Board on Research Data and Information will be doing a consensus study on the future workforce and educational requirements for digital curation, starting in the fall of 2011. We will be looking at all of these issues.

PARTICIPANT: I think that what will happen in the data citation context is similar in some ways to what happened when we went online and searching online became an important skill. Libraries needed the expertise, so they hired some specialists who did searching for their patrons. In the library schools, they hired experts who did specialized courses on online searching that were very popular.

DR. WILSON: The one thing that I would add from a data center perspective regarding workforce needs is that we are frequently looking for what Mark Parsons has called the data wrangler, which is somebody who has domain expertise, has information science expertise, and understands that what they are going to be doing in ten years may have nothing to do with what they are doing now.
