4

Metrics, Definitions of Success, and Data

The National Nanotechnology Initiative (NNI) aims to understand and control matter at the nanoscale so that industries can be revolutionized and society will benefit. That vision provides a high-level, generalized “definition of success” for the NNI. The substantial complexity, federal investment, and importance of this enterprise, on the basis of its four goals, require careful and regular assessment of its effectiveness. Because it can be argued that federal investments in nanotechnology by many of the participating agencies would have occurred even without the formal establishment of the NNI, a key challenge for the NNI is to identify and possibly quantify the extra value added by its establishment and operation and to determine whether it is meeting its goals.

The NNI is working to accomplish four primary goals:1

1. To advance world-class nanotechnology research and development.

2. To foster the transfer of new technologies into products for commercial and public benefit.

3. To develop and sustain educational resources, a skilled workforce and the supporting infrastructure and tools to advance nanotechnology.

4. To support the responsible development of nanotechnology.

1 See National Nanotechnology Initiative, “NNI Vision, Goals, and Objectives,” available at http://www.nano.gov/about-nni/what/vision-goals, accessed January 23, 2013.

The challenge raises issues that go well beyond the usual assessments of an individual agency or mission. Each agency already has in place processes for relating inputs to outcomes. There appear to be data that, although not routinely cast in this form, would permit each agency to evaluate the effectiveness and efficiency of its individual NNI investments. One example is the NSF website detailing grants funded by the agency; another is the NIH Reporter, which gives similar, although slightly different, details of grants.2 The committee found that those computer-based assessment tools are not adequate for assessing the overall effectiveness of the NNI as a major national multiagency initiative. In particular, the kinds and formats of data collected by the participating agencies are neither mutually compatible nor readily shared among the agencies.

At present, progress toward achieving the four NNI goals is reported by the NNI in largely anecdotal form in the annual NNI supplements to the President’s budget. There, several agencies provide examples of successful projects; some provide numerical data, and some present short summaries without many details. Interagency activities are reported in the same manner. Clearly, this makes it difficult to link what is reported to specific progress toward achieving each of the four goals.

The result is lost opportunities to evolve best practices; to measure the value added by interagency cooperation, planning, and collaboration; to identify and rectify programmatic gaps or redundancies; or to determine whether the levels of investment are adequate to meet the goals. The Nanoscale Science, Engineering, and Technology (NSET) Subcommittee of the National Science and Technology Council’s (NSTC’s) Committee on Technology and the National Nanotechnology Coordination Office (NNCO) could gather and aggregate such information from the agencies and bring the data and associated metrics to bear toward NNI goals in ways that are accessible to the various NNI stakeholders.

In this chapter, the committee identifies some of the shortcomings of the current processes and offers recommendations for improvement. It describes in general terms the role of data and metrics in assessment, identifies some aspects of particular relevance to the NNI, and then briefly reviews other studies of metrics for federal research and development (R&D) programs and suggestions for specific types of data and models appropriate for the NNI. It also discusses some new tools and methods that are becoming available owing to research in the field of metrics and assessment and concludes with a proposed implementation process.

2 See, for example, the NSF and NIH webpages on current funding at http://www.nsf.gov/awardsearch/ or http://projectreporter.nih.gov/reporter.cfm, accessed January 24, 2013.

The key for determining progress toward successful outcomes is to have explicit models for the NNI that link desired goals and specific long-term outcomes to investment (funding and resources), implementation plans, actions throughout the NNI, outputs, and short-term outcomes that can be measured and evaluated. The purpose of measurement and evaluation can be thought of as threefold: to determine whether the plans are being followed, to determine whether the investments and plans should be changed on the basis of outputs and short-term outcomes, and to determine whether the plans are producing the desired outcomes.

The methods, techniques, and potential of nanotechnology pervade the programs of the participating agencies. In many cases—such as the Department of Energy (DOE) Nanoscale Science Research Centers (NSRCs), the National Science Foundation (NSF) Nanotechnology Undergraduate Education (NUE) program and similar programs, and the multiple agency investments in the environmental, health, safety, and societal effects of nanotechnology—the mapping of investments to specific NNI goals is clear and direct. In other cases, however, such mapping is substantially less straightforward. Most often, the nanotechnology funding accounted for under the NNI is not defined by explicit nano-directed programs but is ascribed to nano-related projects in the broad portfolio of existing agency programs (the Type 1 funding described in Chapter 2). That makes it difficult to assess the true effect of NNI investment on outcomes of agency research or to distinguish when nanotechnology has been the driver in the outputs of the agency programs from when it has played a supporting, yet enabling, role. The committee believes that improving how individual agencies determine their share of NNI funding and making this publicly known would substantially enhance the ability to relate NNI-derived funding of projects directly to the overall output and outcomes of the agency research portfolios.

Finding: Data appear to exist that would permit evaluation of the effectiveness and efficiency of each agency’s individual NNI investment. However, at present the kinds and formats of data collected by the participating agencies are neither mutually compatible nor readily shared among the agencies.

Finding: The NSET Subcommittee and the NNCO could gather and aggregate such already existing information across agencies and bring the data and associated metrics to bear to assess progress toward NNI goals.

ESTABLISHING METRICS FOR QUALITY IMPROVEMENT

In its interim report, the committee examined the role of metrics in managing such programs as the NNI.3 It is most important that measurements be made only if actions will be taken as a result. With a materials-manufacturing analogy, the information in Box 4.1 sheds light on the general relationship between making measurements and taking action as a result.

The following excerpts from the interim report highlight some additional thoughts that guided the committee through the process of writing this chapter and defining its recommendations. For the full text of the committee’s interim report, see Appendix E.

This report reflects the committee’s view that measuring something just because it can be measured is not good enough: metrics must be indicators of desired outcomes. There must be a model that accurately relates what is measured to a desired outcome and an equally accurate system to perform the measurement. Having both constitutes a metric. Without both, measurements have little value for program assessment and management. (p. 141)

… progress toward achieving the four NNI goals is reported in largely anecdotal form. Several agencies provide examples of successful projects, some provide numerical data, and some present short summaries without many details. Interagency activities are reported in the same manner. That approach is consistent with how the NNI agencies manage their overall portfolios, how they gather information to report to the president, and what is included in the NNI supplement to the president’s budget. (pp. 152 and 153)

A good metric for output should be an accurate measure of whether the desired outcomes of an activity have been achieved—outcomes that represent the value that the activity was intended to generate. In fact, however, many accepted quantitative metrics are used to measure what can be easily measured, rather than the value created in the course of the activity. (p. 154)

Additional characteristics of a good metric are that the information supporting it are reliably and relatively easily obtainable and that, at the very least, the benefits contributed by the metric to evaluation, strategy, and priority setting justify the cost of obtaining the information. (p. 155)

3 National Research Council, Interim Report for the Triennial Review of the National Nanotechnology Initiative, Phase II, The National Academies Press, Washington, D.C., 2012 (reprinted in Appendix E).

BOX 4.1

Metrics in Industry and in Academia

The relationship between output metrics and desired outcomes for the NNI can be illustrated by analogy with manufacturing, which is predicated on a market, i.e., “customer need.” In manufacturing, a material or product is measured for three reasons: quality control, quality improvement, and establishing that a legal requirement specified in a contract between a supplier and a customer has been met. In the first case, all that is needed is a simple, reliable measurement to identify when it is no longer producing acceptable outcomes; the measurement produces as simple a result as “acceptable/unacceptable,” and the information it provides stays local to provide quality control. In the second case, the measurement is more quantitative, guiding changes to produce better outcomes than previously obtained. In the third case, a supplier agrees to provide the customer a material that has specific properties as measured with specific agreed-on, standardized techniques. In each of those cases, there is an established model that relates a measurement to a desired outcome, and the measurement may be different in each case.

Academia’s answer is to evaluate an individual based on a model of academic success using a set of subjective, qualitative metrics supported by quantitative data on output and subjective evaluation of that data. This combination of subjective evaluations and quantitative output metrics has evolved to support a model of academic success for faculty at different career stages and performance levels, from assistant to full professor.

Dependence on the subjective evaluation of a group of experts chosen for some mix of technical expertise, judgment, and breadth of knowledge of the field is key to this approach. Although the results of the application of qualitative metrics are subjective, such metrics have been demonstrated both to be reasonably reproducible and to successfully encourage desired outcomes.

The definitions of success and associated metrics that have been applied to NNI-funded programs are set by the agencies, and are, therefore, predominantly agency-mission-based, with nanotechnology being secondary. More is needed for assessing the success of the NNI as a whole beyond the success of the individual agencies in fulfilling their missions. As noted in a 2012 Government Accountability Office (GAO) report,4 neither input data nor output data can be readily compared among agencies. The measurement systems are not the same; each agency uses different metrics and processes for quality control of its programs that are based on the agency, its mission, and its historical way of doing things.

4 GAO, Nanotechnology: Improved Performance Information Needed for Environmental, Health, and Safety Research, GAO-12-427, 2012, available at http://www.gao.gov/assets/600/591007.pdf, accessed October 11, 2012.

Establishing metrics for quality improvement, in which the process being improved is the NNI and its R&D system for addressing the four goals (listed on the first page of this chapter) and contractual obligations between the agencies and the societal customer, would be a reasonable next step. For these cases, an effective model would be one that connects what is being measured and evaluated (funding and resources) to the intermediate-term and long-term outcomes for which the customer is paying. Without the establishment of this connection, even accurate metrics will likely provide an incomplete and inaccurate assessment of whether desired outcomes are being met. As noted in the interim report, having both a model and a valid measurement system constitutes a “metric.” Without both, measurements have little value for program assessment and management.

An important characteristic of a good metric is that the necessary supporting information is reliable, rigorously definable, and relatively easily obtainable. In addition, the information generated by the metric should inform decisions that guide program quality. The quest for good metrics is often confined to quantitative metrics. That can lead to collection of output data that are peripheral to the goals and outcomes of the activity. Furthermore, there is general awareness that reliance on quantitative metrics alone may change the behavior of participants in ways that are not necessarily beneficial or helpful in achieving successful outcomes.

The committee believes that effective evaluation must couple judiciously chosen quantitative measures with appropriate qualitative methods. In particular, subjective evaluation by a group of domain experts is key to any overall analysis of the NNI’s effectiveness. It is an accepted component of the agencies’ review panels and reports that serve as input to program management.

Similarly, quantitative and qualitative metrics can and must be applied to assessing the effects of NNI-related activity. The committee recognizes the great difficulty in defining robust models and metrics for a field as diffuse as nanotechnology and for agencies as diverse as the NNI member agencies. Nevertheless, the models and metrics applied must be rigorous and have clearly and publicly defined assumptions, sources, methods, and means for testing whether the models and data are accurate. If the data or analysis methods are inaccurate, incomplete, or not rigorously defined, the resulting evaluation, decision making, and allocation of resources will be compromised.

Although it is exciting, as discussed below, that new methods for gathering, analyzing, and interpreting data are being examined in the scientific community, the committee urges caution in their adoption before thorough evaluation. For example, although the NSF STAR METRICS project5 has many promising characteristics, it also presents grounds for concern. Directly accessing institutional human resources databases to automate data collection on personnel, for example, seems excellent, but the software algorithms used to parse project summaries to identify emerging fields of research may not be ready for application. Implementation of the STAR METRICS approach to define fields and current funding levels without independent validation could thus lead to erroneous conclusions.

5 See Department of Health and Human Services, “What Is STAR METRICS?,” available at http://www.starmetrics.nih.gov/, and Federal Demonstration Partnership, “STAR METRICS,” available at http://nrc59.nas.edu/star_info2.cfm, as well as J. Lane and S. Bertuzzi, “The STAR METRICS Project: Current and Future Uses for S&E Workforce Data,” available at http://www.nsf.gov/sbe/sosp/workforce/lane.pdf; all accessed February 1, 2013.

In summary, the committee suggests that strictly quantitative output metrics are not by themselves definitive in evaluating the success of the NNI mission. Well-crafted qualitative and semiquantitative metrics and their review, supported by rigorously documented quantitative metrics, are more likely to be useful in producing evaluations that measure success and in setting NNI goals and policy.

DEFINITIONS OF SUCCESS AND METRICS FOR THE NNI: BUILT ON DATA AND SCIENCE

In the interim report (see Appendix E), the committee developed definitions of success for the NNI based on the four NNI goals (Box 4.2).

The committee believes that these are appropriate definitions of success for the NNI. The challenge for the NNI is now to develop and make available data that can be (1) used to determine performance with respect to these definitions, (2) analyzed and used for strategic management, and (3) used by domain experts to independently evaluate success based on a combination of qualitative and quantitative metrics that relate to outcomes.

BOX 4.2

Definitions of Success for the NNI Goals

Goal 1: Advance world-class nanotechnology research and development.

• A full spectrum of R&D (fundamental research, use-inspired basic research, application-driven applied research, and technology development) is being supported within the NNI.

• The NNI supports research that crosses disciplinary, institutional, national, agency, and sector (government-university-industry) boundaries to advance nanoscience and nanotechnology.

• The nanoscience and nanotechnology developed within the NNI are comparable to or better than the best in the rest of the world. In other words, NNI-supported research is world class.

• The frontiers of knowledge are being substantially advanced, commensurate with the NNI funding.

• Industrial sector-specific nanotechnology knowledge is used to inform application-driven research investment decisions.

• NNI dollars are spent wisely to advance world-class R&D effectively and efficiently.

Goal 2: Foster the transfer of new technologies into products for commercial and public benefit.

• Vibrant, competitive, and sustainable industry sectors are developed in the United States that use nanotechnology to create new products, skilled, high-paying jobs, and economic growth.

• NNI-supported research is leading to valuable new technology that is being commercialized.

Goal 3: Develop and sustain educational resources, a skilled workforce, and the supporting infrastructure and tools to advance nanotechnology.

• A nanotechnology scientific and technical workforce is being trained and educated, and it contributes effectively to the U.S. economy, with the supply matching the growing demand for U.S.-based skilled nanotechnology workers.1

• Public understanding of, and interest in, nanotechnology and how it may impact our lives is expanded.

• Cohesive and substantial facilities and networks are being built that are of broad relevance to the nanotechnology community, and these facilities foster scientific collaboration.

• The amount and the types of infrastructure for nanotechnology advancement are appropriate for the funding levels.

• The technical needs of NNI stakeholders are met through NNI user facilities.

• Utilization rates for NNI infrastructure are high.

Goal 4: Support the responsible development of nanotechnology.

• Development, updating, and implementation of a coordinated program of environmental, health, and safety (EHS) research lead to development of tools and methods for risk characterization and risk assessment in general (including both hazards and the likelihood of exposure) and support a growing understanding of potential risks of broad classes of nanomaterials.

• Results of EHS research worldwide are public and easily available to researchers and users of nanomaterials.

• Businesses of all sizes are aware of potential risks of nanomaterials and know where to obtain current information about the materials’ properties and best practices for handling them.

• To enable continued innovation, regulatory agencies have sufficient information to assess the risks posed by new nanomaterials.

• The NNI supports research to assess the societal impacts of nanotechnology in parallel with technology development.

• K-12 students are exposed to nanotechnology as part of their education and are aware of the potential applications and opportunities available to those who go into STEM disciplines.

• The general public has access to information about nanotechnology, and a growing proportion is familiar with the fundamental precepts.

• The NNI includes R&D aimed at applying nanotechnology to solve societal challenges such as affordable access to clean water, safe food, and medical care.

1 A “nanotechnology worker” is, for example, a scientist or an engineer (such as a materials scientist, chemist, or physicist) who is trained to work on processes in the 1 to 100 nm range.

DATA SETS ESSENTIAL TO NNI ASSESSMENT

Based on these definitions of success, the committee identified one critical data set and eight additional data sets needed to assess the current state of the NNI and determine progress toward NNI goals.

1. NNI-funded projects, including such information as researcher name and affiliation, funding agency and amount, and abstract. Such an NNI-wide data set, made available publicly by the NNCO with the assistance of each of the participating agencies, will provide a range of indicators related to the definitions of success (for example, the amount of collaboration occurring across agency and organizational boundaries, which can be used to monitor and track interagency, multi-institution, and multidisciplinary activity). It will also allow an assessment of the spectrum of R&D activities that are undertaken, from fundamental to applied, and their relevance to NNI signature initiatives. It will provide funding amounts for individual projects that are now reported for most agencies only in the total annual NNI investment. This is the single most critical set of data to be collected. Once these data are made public, some of the following data sets can be efficiently and effectively collected and analyzed using data-mining techniques. The committee believes that developing and maintaining this NNI portfolio data set is critical for tracking progress and measuring success for the NNI.

2. Published documents arising from NNI activities, including papers, patents, reports, material safety data sheets, and conference summaries. The document-based metrics lag the actual date of the research or discovery and take varied amounts of time to be published, for example, several years in the case of patents. The bibliometric data can provide indicators of the outputs of the NNI’s activities, especially the more fundamental activities. These data are generally available in the public domain and will become readily searchable from Dataset 1. NNI success in acquiring and maintaining such a data set would lead to increases in the number of NNI-driven publications and in the breadth and depth of nanotechnology subjects addressed.

3. Data related to impact, including frequently cited and downloaded papers and patents, invited presentations, special sessions at conferences, and reports in the mass media for comparisons over time and across national boundaries. This data set can provide indicators of the global impact of NNI activities in driving global research directions (for example, as indicated by citations) and industry (for example, as indicated by downloads). Patent citations may be a useful indicator of technology transfer and of the translation of published NNI outputs into potential economic benefit. This data set is also largely available in the public domain and readily searchable by using Dataset 1 as input. NNI success in acquiring and maintaining such a data set would lead to an increase in the number of high-impact papers, presentations, press comments, and so on.

4. Number of students supported. This data set can provide an indicator of the development of an educated workforce (the nanotechnology workforce). These data are available essentially only from the participants in the NNI, and the NNCO would need to invest some effort to collect them. The committee notes, however, that many agencies already collect such data as part of project annual reports. There may be privacy concerns, and these data may be available only for release in aggregate form in the public domain. NNI success in acquiring and maintaining such a data set would lead to an increase in the number of graduates who have key skills relevant to advancing nanotechnology solutions to practical problems.

5. User facility and network use, measured via operational efficiency and effectiveness of key tools, including number of users, types of users, and their tool use. The purpose of this data set is to provide multiple indicators of the effectiveness of co-locating equipment, tools, and experienced personnel in specialized centers to address nanotechnology challenges. The data should provide insights as to best practices for center and network research management when used in conjunction with other information about management practices. Consideration of best practices should also lead to clearly articulated recommendations and guidelines for planning, coordination, and management. Some of the data are available from the centers and networks but require aggregation and analysis into a single data set. There may be difficulties in aggregating such data if definitions used by different management teams vary widely. It is hoped that the NNCO will be able to resolve such issues in collaboration with the participating agencies, but this is not known a priori. NNI success in acquiring and maintaining this data set would lead to increases in the operational efficiency and effectiveness of the NNI centers and networks.

6. Data related to technology transfer, including details of meetings, workshops, and conferences, and sessions in conferences. Other data should include standards development and small-business outreach (Department of Defense Small Business Innovation Research and Small Business Technology Transfer program) activities. The purpose of collecting this data set is to provide indicators of the variety of technology-transfer activities driven by the NNI and information on how NNI stakeholders are touched by these activities. Many of the data are available through the NNCO but only if provided by the participating agencies. Again, there may be difficulties in aggregating the data efficiently and effectively. NNI success in acquiring and maintaining such a data set would lead to increases in the numbers of such activities, of topics covered by the activities, and of people touched by the activities.

7. Data related to education and outreach, including workshops, activities aimed at K-12 students, and museum exhibits. The purpose of collecting this data set is to aggregate data that show the variety and scope of informal education and outreach activities being undertaken by the NNI. Many of the data are available to the NNCO, some on nano.gov, if provided by the participating agencies. There may be difficulties in aggregating the data efficiently. NNI success in acquiring and maintaining such a data set would lead to efficient distribution of educational tools and materials and to sustained or growing numbers of people being exposed to and learning about nanotechnology at all levels.

8. U.S.-based nanotechnology job advertisements, including all direct and indirect jobs pertaining to nanoscale expertise in the government, education, and commercial sectors.6 The purpose of collecting this data set is to provide an indicator of demand for an educated nanotechnology workforce. Ideally, the data would be segregated by whether they are direct or indirect, by region, and by employment sector; at a minimum, the aggregate number should be tracked as a function of time. A substantial set of the data is easily obtained from job-aggregating public websites, such as Indeed.com and SimplyHired.com. The usefulness of such data in tracking nanotechnology jobs and economic growth will require further analysis. NNI success in acquiring and maintaining such a data set would lead to sustained or growing demand for workers in nanotechnology-related positions and businesses.

9. NNI-related communications about environmental, health, safety, and societal implications of nanotechnology, such as National Institute for Occupational Safety and Health guidance regarding nanomaterials in the workplace. The purpose of collecting this data set is to provide indicators of the NNI’s activities and effectiveness in addressing social responsibility issues that arise in the creation and use of nanotechnology. The data are available only by direct input from the NNI participating agencies and the NNCO. NNI success in acquiring and maintaining such a data set would be indicated by an increase with time in the evidence of more people and organizations seeking, receiving, and using such information.
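As an illustration of how Dataset 1 could support the indicators described above, the following is a minimal sketch, not a prescribed design. The record fields mirror those named in item 1 (researcher name and affiliation, funding agency and amount, abstract); the field names, the `ProjectRecord` type, the `collaboration_share_by_year` function, and all sample records are hypothetical and invented for illustration only. It computes, for each fiscal year, the share of projects funded by more than one agency, which is one possible indicator of interagency collaboration that could be tracked over time.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ProjectRecord:
    """One NNI-funded project. Hypothetical schema based on item 1 of the list."""
    project_id: str
    fiscal_year: int
    researchers: tuple  # (name, affiliation) pairs
    agencies: tuple     # names of the funding agencies
    amount_usd: float
    abstract: str


def collaboration_share_by_year(projects):
    """Return {fiscal_year: fraction of projects funded by more than one agency}."""
    totals = defaultdict(int)
    multi_agency = defaultdict(int)
    for project in projects:
        totals[project.fiscal_year] += 1
        if len(set(project.agencies)) > 1:
            multi_agency[project.fiscal_year] += 1
    return {year: multi_agency[year] / totals[year] for year in sorted(totals)}


# Invented sample records; they do not describe real awards.
SAMPLE = [
    ProjectRecord("P-001", 2011, (("Researcher A", "University X"),),
                  ("NSF",), 300000.0, "placeholder abstract"),
    ProjectRecord("P-002", 2011, (("Researcher B", "University Y"),),
                  ("NSF", "DOE"), 450000.0, "placeholder abstract"),
    ProjectRecord("P-003", 2012, (("Researcher C", "Laboratory Z"),),
                  ("DOE", "NIH"), 500000.0, "placeholder abstract"),
    ProjectRecord("P-004", 2012, (("Researcher A", "University X"),),
                  ("NSF", "NIH"), 250000.0, "placeholder abstract"),
]

if __name__ == "__main__":
    for year, share in collaboration_share_by_year(SAMPLE).items():
        print(year, f"{share:.0%}")
```

A count of this kind is only a measurement; in the committee's terms it becomes a metric only when paired with a model that relates it to a desired outcome and with review by domain experts.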

Finding: There are valid, measurable, and relatively transparent indicators of NNI success that are suitable for long-term tracking (in longitudinal studies) to assess impact and progress toward stated goals. Several types of data (for example, as seen in the list above) are useful and relatively easy to obtain; many are in the public domain already. With the help of appropriate models linking inputs, outputs, and outcomes, metrics for assessing success for the NNI can be developed from these data sets by tracking and evaluating them over time.

6 A direct job is work where the job description is directly linked to usage of nanoscale expertise. An indirect job is one that is created due to the existence of one or several direct jobs.

Recommendation 4-1: The nine searchable data sets listed above should be collected annually and made available on the NNI website to allow the NNI’s impacts and successes to be more effectively assessed by internal and external interested parties and used for resource allocation and planning.

Of these data sets, the first is the most critical and of highest priority; knowing nanotechnology research and development projects, people, and organizations funded by the NNI will allow progress to be tracked and greater value to be realized from the collective NNI investment.

OPERATIONAL ISSUES: COLLECTING, TRACKING, AND EVALUATING DATA

The committee notes that it is highly likely that many of the data required for assessment already exist in the participating agencies inasmuch as most of them are widely used by the agencies as indicators of activity and impact. With the exception of creating a publicly available database for NNI R&D projects, this is not a recommendation to create multiple large databases or data sets from scratch: The recommended data sets could be generated by data-mining experts from publicly available information and the NNI R&D projects database. The committee recognizes the variable and potentially significant cost associated with collecting the proposed data sets and leaves it to the NSET Subcommittee and the NNI agencies to identify an efficient and workable manner in which to collect the data, with a priority given to the first data set.

Recommendation 4-2: The NSET Subcommittee and the NNCO should obtain data-mining expertise to undertake the collection and collation of essential data sets, develop tools to analyze the data in accordance with the management and reporting needs of the NNCO and the agencies, and manage the process of making the data sets publicly available.

Once the data are gathered and analyzed, the NSET Subcommittee should engage a team of experts from both inside and outside the federal government to evaluate the validity of the data. To avoid the appearance of conflict, the group should consist predominantly of persons who are not members of the NSET Subcommittee or other NNI management stakeholders. The NSET Subcommittee should evaluate progress on the basis of the data analysis and report the results in the annual budget supplement. Progress will also be assessed by the National Nanotechnology Advisory Panel (NNAP)/President’s Council of Advisors on Science and Technology (PCAST) and the National Research Council (NRC) as part of their periodic reviews.

The field of R&D metrics has benefited in recent years from a great deal of technical and methodologic research by social scientists. That research has the potential to expand the possibilities for assessing the impact and success of the NNI. Advances include the inexorable increases in computing power and database size, norms that encourage the opening of databases to public scrutiny (within the boundaries of privacy concerns), the tendency to make computation and problem-solving an “open-source” and public effort, advances in machine learning and data-mining algorithms, and the entry of private firms into bibliometric and personnel linkage. Taken together, those advances increase the possibility that the problem of metrics for the NNI can be partially solved by taking advantage of research that is going on outside the NNI agencies.

The committee notes that creating perfect data sets to assess and manage the NNI is neither reasonable nor possible. The NNI is a large program; as happens in most large organizations, the data returning to the management teams will often be incomplete, particularly at the beginning. The art of management of large organizations is getting the strategic directions right in the absence of perfect data, and the NNI should be no different in this regard. Any commentary regarding data, metrics, and interpretations should acknowledge the known limitations of the data sets and metrics. Provided that the data sets are made public, the committee expects that their fidelity will improve with time as the agencies and those whose work is included in the data sets identify and correct errors.

Finding: There is a wide variety of objectives, metrics, data formats, and reporting processes in the NNI participating agencies. Various research efforts on metrics are under way; they are evolving quickly, are benefiting from the application of big-data computation to social-science data, and could be applied to the assessment of NNI progress.

Recommendation 4-3: Rather than try to mandate a particular data format, metric, or reporting process, the committee recommends that NNI social-science researchers make their code and processes public and their data available through an application programming interface (API). Open-code development and widespread availability of data through APIs will greatly facilitate database linkage, innovation, and convergence on best practices. Agencies that fund NNI research should support and require infrastructure development in the course of any research on commercialization metrics. The NNCO and NNI agencies should also encourage private firms to make their bibliometric tools and databases public.

With efforts to encourage the diffusion of best practices and their improvement in metrics development, it is hoped that individual agencies will eventually adopt a consistent data standard out of self-interest. Working-group meetings of data professionals in the different agencies would facilitate diffusion of best practices and convergence on standards.

Linking databases requires articulating relationships among a wide variety of “subjects,” such as grants, papers, patents, products, and organizations. One useful way to link such subjects is to identify the people involved unambiguously. For example, they would include the principal investigator and graduate students who are funded on a particular grant, the papers and patents that they produce, and the organizations to which the graduate students disperse when their project is finished. Data on people can be disambiguated from large paper and patent databases or assigned permanently, perhaps with an Open Researcher and Contributor ID (ORCID) number. The ORCID approach aims to solve the name-ambiguity problem in scholarly communications by creating a registry of persistent unique identifiers for individual researchers and an open and transparent linking mechanism between ORCID, other ID schemes, and research objects, such as publications, grants, and patents.7 Use of ORCID is now required for publication in American Physical Society journals. It will not be applied to publications retroactively and so cannot be used for historical study.
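As an illustration of what a persistent identifier offers to anyone assembling such a database, the sketch below checks that an ORCID number is well formed before it is stored. An ORCID number is a 16-character identifier whose last character is a check character computed with the ISO 7064 MOD 11-2 algorithm. The function names are illustrative assumptions, not part of any NNI or agency system, and the check confirms only that an identifier is well formed, not that it is registered to a particular person.

```python
import re

# Four hyphen-separated groups of four; the final character may be a digit or "X".
ORCID_PATTERN = re.compile(r"^[0-9]{4}-[0-9]{4}-[0-9]{4}-[0-9]{3}[0-9X]$")


def orcid_check_character(base_digits: str) -> str:
    """Compute the ISO 7064 MOD 11-2 check character for the first 15 digits."""
    total = 0
    for ch in base_digits:
        total = (total + int(ch)) * 2
    result = (12 - total % 11) % 11
    return "X" if result == 10 else str(result)


def is_valid_orcid(orcid: str) -> bool:
    """Return True if the identifier has the right shape and check character."""
    if not ORCID_PATTERN.match(orcid):
        return False
    compact = orcid.replace("-", "")
    return orcid_check_character(compact[:15]) == compact[15]
```

A check of this kind catches typing and transcription errors at the point of data entry, which matters when records from several agencies are later joined on the identifier.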

Finding: The ability to track people and link them to their work products and organizations would greatly facilitate an assessment of the efficiency and effectiveness of NNI investments.

Recommendation 4-4: NNI agencies should record NNI participants and link them to their work products and organizations by individual grants, using ORCID, and link these data to published paper and patent databases, which over time may be linked to social and economic outcomes.
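The linkage described in this recommendation can be sketched in a few lines, assuming each grant, paper, and patent record carries the person identifiers of its participants. All record layouts, field names, and identifier values below are hypothetical placeholders chosen for illustration; they do not reflect any existing agency database. The sketch also attributes every output of a participant to every grant on which that person appears, which would overstate attribution in practice; a working system would need dates, acknowledgments, or similar evidence to narrow the link.

```python
# Hypothetical records; "ID-A" and the like stand in for persistent person identifiers.
grants = [
    {"grant_id": "G-001", "agency": "NSF", "participants": ["ID-A", "ID-B"]},
    {"grant_id": "G-002", "agency": "NIH", "participants": ["ID-C"]},
]
papers = [
    {"paper_id": "P-10", "authors": ["ID-A"]},
    {"paper_id": "P-11", "authors": ["ID-B", "ID-C"]},
]
patents = [
    {"patent_id": "PT-7", "inventors": ["ID-B"]},
]


def index_by_person(records, id_field, people_field):
    """Map each person identifier to the set of record IDs that name that person."""
    index = {}
    for record in records:
        for person in record[people_field]:
            index.setdefault(person, set()).add(record[id_field])
    return index


def link_outputs_to_grants(grants, papers, patents):
    """For each grant, collect the papers and patents of its participants."""
    papers_by_person = index_by_person(papers, "paper_id", "authors")
    patents_by_person = index_by_person(patents, "patent_id", "inventors")
    linked = {}
    for grant in grants:
        paper_ids, patent_ids = set(), set()
        for person in grant["participants"]:
            paper_ids |= papers_by_person.get(person, set())
            patent_ids |= patents_by_person.get(person, set())
        linked[grant["grant_id"]] = {
            "papers": sorted(paper_ids),
            "patents": sorted(patent_ids),
        }
    return linked
```

Calling `link_outputs_to_grants(grants, papers, patents)` returns one entry per grant listing the associated paper and patent identifiers. The same person-keyed join could be extended to organizations to follow personnel mobility.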

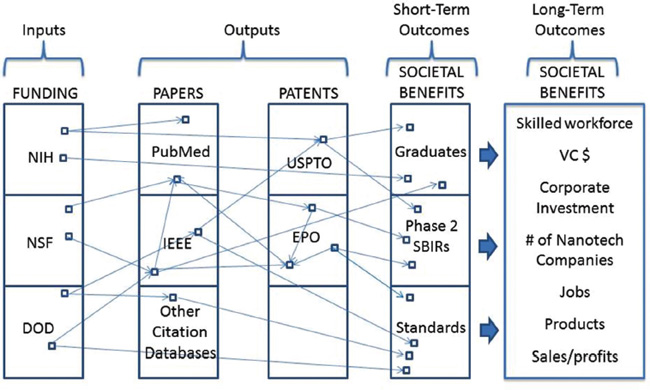

Figure 4.1 illustrates how the inputs of funding and scientific personnel could be traced in their transformation toward economic impact.

7 ORCID, “Our Mission,” available at http://orcid.org/about/what-is-orcid/mission, accessed September 27, 2012.

FIGURE 4.1 An idealized schematic that illustrates potential linkages between databases that would permit the impact of a research investment to be linked to publications and patents, personnel mobility, and social and economic outcomes. EPO, European Patent Office.