Three workshop sessions were devoted to the content of National Patterns. The first, “National Patterns Purposes and Uses,” featured panelists who represented various categories of data users: the legislative and administrative branches of the federal government, policy analysts from organizations concerned with science and engineering policy, academics interested in the science of science policy, and people from the international science and engineering community. The panelists had been asked to address, from their own perspectives, the utility of National Patterns and to indicate how it might better address their current and anticipated future needs.

In the second session, “Advances in International Comparability of National Patterns Data and Reports,” the panelists examined in what ways National Patterns was and was not comparable with reports on R&D from countries that provide substantial support for R&D. Three issues that are often raised in this context are (1) the categorization of R&D funding by socioeconomic objectives, (2) the categorization of R&D by science and engineering fields, and (3) the tabulation of capital expenditures in support of R&D.

The third session, “Reporting of Additional Variables,” addressed the content of National Patterns. While the panelists in the first session were directed to focus their remarks (though not exclusively) on variables already collected in the primary National Center for Science and Engineering Statistics (NCSES) censuses and surveys of R&D, this session looked to other possibilities. The steering committee was interested in whether variables that are not currently available would, if tabulated, be useful to National Patterns users. It was recognized that major changes to existing censuses and surveys, including changes that add to respondent burden, should generally be avoided, but knowing what users need and would like to see in National Patterns could guide longer-term decisions on census and survey content, as well as on access to other sources of such information.

PURPOSES AND USES OF NATIONAL PATTERNS

In the workshop session on this topic, participants heard from five panelists, representing different kinds of users: Kei Koizumi, White House Office of Science and Technology Policy (OSTP); David Mowery, University of California, Berkeley; Martin Grueber, Battelle; Charles Larson, Innovation Research International; and David Goldston, Natural Resources Defense Council.

Kei Koizumi

Kei Koizumi summarized OSTP’s use of National Patterns data. A key theme of his presentation was the need for timely data. As assistant director for federal R&D, he is an extensive user of National Patterns information, and the R&D figures that appear in National Patterns are a vital tool for understanding the current R&D situation. In particular, given President Barack Obama’s goal of investing 3 percent of the nation’s gross domestic product (GDP) in R&D during his administration (Obama, 2009), Koizumi considers the ratio of R&D to GDP the most important statistic in National Patterns reports.
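The ratio Koizumi singles out is simple arithmetic: national R&D expenditure divided by GDP, expressed as a percentage. The sketch below computes that ratio and the additional R&D spending that would be needed to reach the 3 percent goal. The function names and the input figures are hypothetical, chosen only for illustration; they are not values from National Patterns.

```python
def rd_intensity(rd_expenditure: float, gdp: float) -> float:
    """Return R&D expenditure as a percentage of GDP (both in the same currency units)."""
    if gdp <= 0:
        raise ValueError("GDP must be positive")
    return 100.0 * rd_expenditure / gdp


def gap_to_target(rd_expenditure: float, gdp: float, target_pct: float = 3.0) -> float:
    """Return the extra R&D spending needed to reach target_pct of GDP (0 if already met)."""
    required = gdp * target_pct / 100.0
    return max(0.0, required - rd_expenditure)


# Hypothetical inputs in billions of dollars, for illustration only.
rd, gdp = 400.0, 16_000.0
print(f"R&D/GDP: {rd_intensity(rd, gdp):.2f}%")              # R&D/GDP: 2.50%
print(f"Gap to 3%: ${gap_to_target(rd, gdp):.0f} billion")   # Gap to 3%: $80 billion
```

Because the ratio has GDP in the denominator, it can rise even when R&D spending is flat if GDP falls, which is one reason several panelists cautioned against relying on this single indicator.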

Koizumi said that state-level R&D expenditure and funding data are also of great interest, especially to people like him who are concerned with tracking regional economic development and developing regional innovation strategies. Koizumi considers National Patterns a one-of-a-kind product because it combines information across surveys, but OSTP’s use of R&D data from NCSES is not limited to National Patterns. As the need arises, data from the component surveys and censuses are also used for policy-making purposes.

Koizumi’s overall comments suggested that receiving data 3 years after the reference year is frustrating for many users and, therefore, that more timely release of data should be a priority for improvement. Among other improvements, he mentioned that state-level data could be made more useful by publishing additional R&D data at the state level and, when possible, R&D data for areas within states. He also expressed concern that National Patterns does not provide any way of understanding the role played by ARRA (the American Recovery and Reinvestment Act of 2009) investments in R&D.

Koizumi also pointed out three areas in which National Patterns falls short and urged NCSES to explore new ways to address them. First is the consistency between information from the Federal Funds Survey (see Chapter 2) and information reported by various agencies and departments. Second is the categorization of business and federal R&D data by science and engineering fields. Third is the development of variables that measure innovation and of good ways to compare such non-R&D data internationally.

He is looking forward to the information that is, and will be, available from the redesigned Business R&D and Innovation Survey (BRDIS). He concluded his presentation by noting that future additions to the federal government’s Strategy for American Innovation (see National Economic Council, 2011), in the form of administrative policy initiatives, will be better informed if better and more timely data on science, technology, and innovation are available at national and regional levels.

David Mowery

David Mowery discussed the needs of the academic user community for information on R&D. As an economist and historian interested in science and technology issues, Mowery said that he considers National Patterns to be a unique product. It provides a long, stable time series for R&D expenditures and funding that is not available elsewhere, as well as a comprehensive performer/funder matrix that is valuable for understanding changes in the R&D performing and funding sectors. National Patterns also helps broaden the narrow view of the many policy makers who focus entirely on the R&D-to-GDP ratio, he said: its tabulations go beyond this single indicator and provide information on underlying structural changes, such as the rise in the share of academic-funded R&D or the recent decline in R&D performed by large firms (see National Science Foundation and National Center for Science and Engineering Statistics, 2005, Table 7).

However, Mowery said, the publication does not satisfy the need to better understand and track new and emerging areas of R&D and innovation activity, such as photonics and nanotechnology. He also expressed concern about the uncertainty or error in the various survey-based estimates, though he hoped that, because the data are reported at high levels of aggregation (national and state), aggregation reduces some of that noise in relative (percentage) terms.
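Mowery’s hope that aggregation dampens sampling noise can be illustrated under one important assumption: that the errors in the component estimates are independent. In that case variances add, so the standard error of a total grows more slowly than the total itself, and the relative standard error of the aggregate is smaller than that of its parts. The sketch below uses hypothetical state-level estimates; correlated or systematic errors (for example, a shared reporting bias) would not cancel this way.

```python
import math


def relative_se_of_total(estimates: list[float], std_errors: list[float]) -> float:
    """Relative standard error (percent) of a sum of independent estimates.

    Assumes the sampling errors are independent, so variances add:
    SE(total) = sqrt(sum of SE_i squared).
    """
    total = sum(estimates)
    se_total = math.sqrt(sum(se * se for se in std_errors))
    return 100.0 * se_total / total


# Hypothetical example: four equal-sized state estimates, each with a 10 percent relative SE.
states = [100.0, 100.0, 100.0, 100.0]
ses = [10.0, 10.0, 10.0, 10.0]
print(relative_se_of_total(states, ses))  # 5.0
```

With n equal-sized independent components, the relative standard error falls by a factor of the square root of n, which is why national totals are typically more precise, in percentage terms, than state-level figures.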

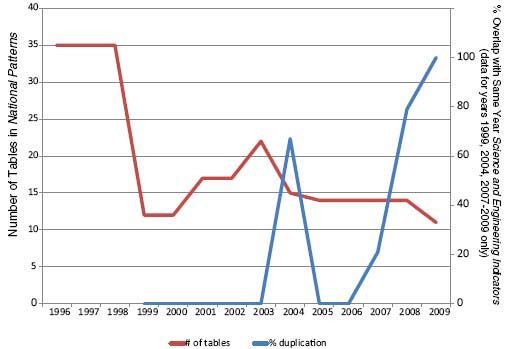

Mowery then raised the issue of content and dissemination. Because the statistical content of National Patterns is restricted solely to R&D expenditures and funding, it is duplicative of much of what appears in the biennial Science and Engineering Indicators (published by the National Science Board): see Figure 3-1. The figure plots two values. First, the number of tables published in National Patterns is plotted, which has decreased

FIGURE 3-1 Duplication of information in National Patterns and Science and Engineering Indicators.

SOURCE: Mowery (2012, Figure 1).

from 35 to 26 from 1996 to 2009. Second, it plots the percentage of tables appearing in National Patterns that also appeared in Science and Engineering Indicators, which has increased from less than 25 percent in 2004 to 100 percent in 2009.

In its first issue in 1994, National Patterns contained tables and charts on R&D resources, which included R&D expenditures, R&D funding, and scientists and engineers involved in R&D. Tables on these same topics were published in 1995 and 1996. The 1997 data update changed course: it contained tables on R&D expenditure and funding and on how the United States performs in R&D relative to the rest of the world. The series returned to its original statistical displays in 1998, but in 1999 it reverted to the 1997 format, which it has kept since. In addition, the number of tables has declined, as less information has been provided in the data updates. Several items that appeared in the 1994-1996 and 1998 volumes are no longer part of the current series: the breakdown of academic R&D and R&D expenditures at university-administered federally funded research and development centers (FFRDCs) by science and engineering fields, disaggregation of industrial R&D by industry type and firm size, and the cost per R&D scientist/engineer by industry and company size. Mowery noted, however, that most of that information is now available in IRIS, SESTAT, and WebCASPAR, NCSES’s online data tools.1 The 1994-1996, 1998, and 2003 data updates also contained interesting analysis and charts. The 2004-2009 data updates did not follow up on the earlier analysis and charts, and analysis of R&D data is now restricted to the Info Briefs.

Mowery said that the first Science and Engineering Indicators was published in 1972. The 1994 data update of National Patterns stated that it complemented Science and Engineering Indicators in the years when Indicators was not published. The 1997 data update made it clear that almost all the information in the report made its way into the following year’s Science and Engineering Indicators. The current situation is less positive, he said: there is close to 100 percent duplication with Science and Engineering Indicators in the years when Indicators is released, which makes the information in National Patterns redundant.

Mowery offered two suggestions to improve the content of National Patterns and make it more complementary to Science and Engineering Indicators. One is that the series return to its original data topics, which included tabulations of data on human capital. The other is to include the breakdown of business R&D by industry type, which is currently published not in National Patterns but as a separate Info Brief. In terms of dissemination policy, Mowery suggested that National Patterns become a biennial publication, released in the years when Science and Engineering Indicators is not published, so that it complements Indicators.

Martin Grueber

Martin Grueber represented users who are familiar with the individual cell entries of National Patterns tabulations and their quality, given that his job is to forecast global R&D funding. Battelle, in collaboration with R&D Magazine, produces forecasts of global R&D funding. The 2012 global R&D funding forecast was Battelle’s 44th and R&D Magazine’s 54th publication of the forecast series.

_________________

1 IRIS is the Industrial Research and Development Information System; SESTAT is the Scientists and Engineers Statistical Data System; WebCASPAR is the Integrated Science and Engineering Resources Data System.

In his presentation, Grueber described details of the forecast calculation process and how National Patterns data are used in arriving at these forecasts. During production of the 2012 forecast, the latest available data update of National Patterns was for 2008. Hence, he had to use 3-year-old data to forecast 4 years ahead. This time lag highlights users’ discomfort with long delays in this annual publication. But Grueber praised the historical corrections in the series, which help improve forecasts. Grueber also noted that the time series from National Patterns helps him validate various homogeneity assumptions, such as the assumption that the ratios of elements of the source/performer matrix are stable over time.
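One way to read the homogeneity assumption Grueber describes: if each cell’s share of the source/performer matrix is stable over time, a forecast of the national total can be distributed across cells using historical shares. The sketch below is a hypothetical illustration of that idea under that stated assumption, not Battelle’s actual forecasting method; the matrix keys, figures, and function names are invented.

```python
Cell = tuple[str, str]  # (funding source, R&D performer)


def cell_shares(matrix: dict[Cell, float]) -> dict[Cell, float]:
    """Share of the grand total held by each (source, performer) cell."""
    total = sum(matrix.values())
    return {cell: value / total for cell, value in matrix.items()}


def allocate_forecast(shares: dict[Cell, float], forecast_total: float) -> dict[Cell, float]:
    """Distribute a forecast total across cells, assuming the shares do not change."""
    return {cell: share * forecast_total for cell, share in shares.items()}


# Hypothetical base-year matrix (dollars in millions), keyed by (source, performer).
base_year = {
    ("federal", "industry"): 30.0,
    ("federal", "academia"): 30.0,
    ("industry", "industry"): 240.0,
}
forecast = allocate_forecast(cell_shares(base_year), forecast_total=330.0)
for cell, value in forecast.items():
    print(cell, round(value, 1))
```

Under this assumption every cell grows at the same rate as the total (here 10 percent), which is exactly why a long, consistent historical series is needed to check whether the shares really are stable.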

Grueber said that he relies not only on data from the National Science Foundation (NSF), but also on science and technology data from the White House OSTP, federal agencies, OECD, the European Union, the International Monetary Fund, various trade and technical associations, the media, and other third-party data providers. In addition, Battelle conducts its own surveys. Other surveys that provide primary data to the forecast process are R&D Magazine reader surveys and the Global Researcher Survey. Battelle also collects company data from annual reports and filings with the Securities and Exchange Commission (SEC). Grueber is also in direct contact with NCSES staff members Mark Boroush and Ray Wolfe, who provide him with insights and verification of some of his assumptions.

Like National Patterns, the forecast publication series contains an R&D funding source and R&D performer matrix: see Table 3-1. Three important elements are missing from the funding source/performer matrix of National Patterns: (1) industry funding to FFRDCs; (2) other government funding, such as state and provincial governments’ contributions to R&D funding to industry, nonprofit organizations, and FFRDCs; and (3) R&D funding by nonprofit organizations to industry and other nonprofit organizations. The first is not reported separately in National Patterns; it is subsumed under federal funding to FFRDCs because nonfederal contributions to FFRDCs are relatively small,2 even though there is sufficient evidence to conclude that this cell is not empty. Grueber pointed out that a nonprofit organization like Battelle is involved in research conducted in FFRDCs that is in turn funded by companies. Thus, there is important work supported by the industrial sector that National Patterns does not recognize.

The second missing element would be of great interest to state economic development offices, since they are keen to understand the R&D performance of their states. Direct state funding for R&D in the private sector (both nonprofit and for-profit entities) has increased over the last decade. Grueber gave the example of Ohio’s “Third Frontier Initiative,” which is a major component of the state’s Office of Technology Investments. It provides funding to technology-based companies, universities, nonprofit research institutions, and other organizations in Ohio to create new technology-based products, companies, industries, and jobs.3 Grueber acknowledged, however, that in the context of national R&D performance, state-level R&D funding for private-sector efforts is likely small, and it is understandable that there is not a separate data series for it in National Patterns.4

_________________

2 In fiscal 2010, NSF’s survey of FFRDCs showed that 97.3 percent of all their funding came from the federal government; less than $450 million came from other sources: see National Science Foundation (2012e, Table 5).

TABLE 3-1 R&D Funding Source and R&D Performer Matrix

| Source / Performer | Federal Gov’t. | FFRDC | Industry | Academia | Nonprofit | Total |
|---|---|---|---|---|---|---|
| Federal Government | $29,152 (-2.51%) | $14,666 (-3.69%) | $37,577 (-2.42%) | $37,440 (0.93%) | $6,817 (-2.29%) | $125,652 (-1.61%) |
| Industry | | $202 (2.20%) | $273,487 (3.37%) | $3,868 (26.49%) | $2,129 (8.89%) | $279,685 (3.75%) |
| Academia | | | | $12,318 (2.85%) | | $12,318 (2.85%) |
| Other Government | | | | $3,817 (2.72%) | | $3,817 (2.72%) |
| Nonprofit | | | | $3,491 (2.70%) | $11,055 (2.70%) | $14,546 (2.70%) |
| Total | $29,152 (-2.51%) | $14,868 (-2.36%) | $311,063 (2.63%) | $60,934 (2.85%) | $20,001 (1.55%) | $436,018 (2.07%) |

NOTE: Rows are R&D funding sources and columns are R&D performers. Dollar amounts are in millions; each is followed by the percentage change reported in the source.

SOURCE: Grueber and Studt (2011, Table 4).
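Because Table 3-1 is a cross-tabulation, its row totals and column totals should each add up to the grand total. The short check below re-adds the dollar figures transcribed from the table (percentages omitted) and reports any total that does not match exactly. The data structure and function name are illustrative only; they are not part of National Patterns or the Battelle forecast.

```python
# Dollar figures (millions) transcribed from Table 3-1, keyed by source, then performer.
MATRIX: dict[str, dict[str, int]] = {
    "Federal Government": {"Federal Gov't.": 29_152, "FFRDC": 14_666, "Industry": 37_577,
                           "Academia": 37_440, "Nonprofit": 6_817},
    "Industry": {"FFRDC": 202, "Industry": 273_487, "Academia": 3_868, "Nonprofit": 2_129},
    "Academia": {"Academia": 12_318},
    "Other Government": {"Academia": 3_817},
    "Nonprofit": {"Academia": 3_491, "Nonprofit": 11_055},
}
ROW_TOTALS = {"Federal Government": 125_652, "Industry": 279_685, "Academia": 12_318,
              "Other Government": 3_817, "Nonprofit": 14_546}
COL_TOTALS = {"Federal Gov't.": 29_152, "FFRDC": 14_868, "Industry": 311_063,
              "Academia": 60_934, "Nonprofit": 20_001}
GRAND_TOTAL = 436_018


def discrepancies(matrix, row_totals, col_totals, grand_total) -> list[tuple[str, int]]:
    """Return (label, computed minus published) for every total that does not match."""
    issues = []
    for source, cells in matrix.items():
        diff = sum(cells.values()) - row_totals[source]
        if diff:
            issues.append((f"row {source}", diff))
    for performer, published in col_totals.items():
        computed = sum(cells.get(performer, 0) for cells in matrix.values())
        if computed != published:
            issues.append((f"column {performer}", computed - published))
    grand = sum(sum(cells.values()) for cells in matrix.values())
    if grand != grand_total:
        issues.append(("grand total", grand - grand_total))
    return issues


for label, diff in discrepancies(MATRIX, ROW_TOTALS, COL_TOTALS, GRAND_TOTAL):
    print(f"{label}: off by {diff:+d}")
```

Only the Industry row, the Industry column, and the grand total differ, each by $1 million, which is the pattern a rounding difference in the shared industry-to-industry cell would produce; the table is otherwise internally consistent.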

The third missing element, funding by nonprofit organizations to industry and other nonprofit organizations, is a serious data gap and a challenge for NCSES: there has not been a survey of the nonprofit sector since 1997. Because NCSES relies on 15-year-old data to calculate estimates for the nonprofit sector, there is a danger of missing the changes that have taken place in this sector, especially for nonprofit FFRDCs. Grueber observed that there has been a substantial flow of dollars from the industrial sector to nonprofit organizations, though this is still a relatively small fraction of total R&D. He pointed out that the dynamics in the funding and performing sectors have changed, and NCSES is missing these changes because it still follows a traditional performer/funder matrix.

Companies often restate their R&D expenditures in their subsequent SEC filings for accounting purposes. Grueber expressed concern that there is currently no mechanism that can help NCSES update its business R&D expenditure and funding time series as companies revise their SEC filings. In contrast, NCSES is able to update federal R&D expenditure and funding time series when similar issues arise. He is looking forward to the changes that will take place in business R&D and academic R&D data when more detailed extensions (beyond traditional science and engineering fields) are included in National Patterns as a result of changes in the BRDIS and Higher Education Research and Development (HERD) surveys. Grueber concluded his presentation by commending BRDIS for starting to look at the structure of foreign investment and investment made by foreign companies in the United States, which in the future might result in a change to the source/performer matrix with the inclusion of a column on “sector abroad.”

Charles Larson

Charles Larson focused on new initiatives for National Patterns that he said could help better direct U.S. innovation and competition policies. His talk was inspired by the Science and Engineering Indicators: 2010 Digest (National Science Board, 2010), which said that the United States holds a preeminent position in science and engineering in the world, but that its edge is slipping, while other countries are increasing their R&D spending in education. Larson referred to the view expressed by Richard N. Foster (formerly of McKinsey and Company) that “we need to learn the lesson of the winners” (see Foster, 1986; Foster and Kaplan, 2000) and gave examples of how institutions and policies contribute to the growth of the nation. The introduction and development of courses on the management of technology and entrepreneurship by U.S. universities has helped the United States maintain its lead in competitiveness, and this strategy has been adopted by other countries.

_________________

3 For more information, see http://development.ohio.gov/bs_thirdfrontier/default.htm [October 2012]. Grueber noted that Battelle receives some funding from this initiative.

4 National Patterns captures the R&D contribution from state and local governments to academic institutions and the business sector. State and local government is formally one of the funding sources tracked in the academic and business R&D surveys, but the numbers are not reported explicitly: see National Science Foundation (2013, Table 3 Notes).

Larson noted that in the world competitiveness rankings produced by the IMD Business School in Switzerland, the United States was the top competitive nation from 1995 to 2011 but came in second to Hong Kong in 2012.5 Institutions and policies that encourage economic freedom and entrepreneurship are the hallmarks of U.S. competitiveness, he said. This point is further reinforced by the economic freedom rankings published by the Heritage Foundation (in partnership with the Wall Street Journal), in which the United States remained in the top five until 2009 but ranked only tenth, for the first time, in 2012.6 Hence, even though the policies and strategies adopted by the United States have helped keep it in the top group, there has been a shift in the order, which is becoming a concern for U.S. policy makers and business leaders. Larson said that in such an environment it is all the more necessary to analyze and publicize information on global competitiveness.

Larson mentioned three reasons cited by the IMD Business School as contributing to U.S. leadership in competitiveness: (1) its unique economic power, (2) the dynamism of its enterprises, and (3) its capacity for innovation.7 The third criterion, the capacity for innovation, is crucial. Larson pointed out that innovation and R&D are different: “R&D converts money into knowledge; innovation converts that knowledge back into money.” In addition, he said, the factors responsible for raising R&D investment are different from those encouraging innovation. He illustrated this by showing 2010 rankings by Booz & Company (see Jaruzelski, Loehr, and Dehoff, 2011) of the largest R&D investors and the most innovative firms in the world: there was no relationship between the two rankings. He said that for innovation to succeed, the management of human resources is the key, along with strategic alignment and a culture that supports innovation.

_________________

5 For more information, see http://www.imd.org/news/IMD-announces-its-2012-World-Competitiveness-Rankings.cfm [October 2012].

6 For more information, see http://www.heritage.org/index [January 2013].

7 For more information, see http://www.imd.org/research/publications/wcy/World-Competitiveness-Yearbook-Results/# [October 2012].

Larson added that government policies have a key role in promoting innovation and economic competitiveness. These policies include funding for science and technology and stimulating interaction on R&D between government laboratories, universities, and businesses. Also, regulations have a great influence on business R&D and its success, he noted, citing an article on “the culture of ‘no’” in some European countries.8 He suggested that NCSES move from reporting only R&D investment to developing additional indicators on success factors in management practices for R&D and innovation. He added that collecting data on the impact of risk, seed capital, and labor regulations on investment in innovation can be of tremendous value to businesses and for informing economic and financial policy decisions.

The recent report, Rising to the National Challenge: U.S. Innovation Policy for the Global Economy (National Research Council, 2012b), proposed a review or renewal of U.S. investments in the “pillars of innovation.” It concluded that the nation’s economic growth and national security depend on renewed investments and sustained policy attention. To achieve these objectives, policy makers require a better understanding of how institutions and laws encourage innovation and competitiveness. National Patterns can act as a key tool in this process.

In conclusion, Larson urged that NCSES consider several specific actions:

• Make National Patterns broader, deeper, and more timely to serve the national interest.

• Additional data are needed on success factors in management practice for R&D and innovation.

• Analyze and publicize the criteria that enable global competitiveness for the benefit of U.S. policy makers and business leaders.

• More data are needed on the factors involved in making R&D more effective in stimulating innovation.

• New data are needed on the impact of risk, seed capital, and labor regulations on investment in innovation.

• New data are needed on measuring return on R&D investment and innovation.

• More data are needed on the numerous factors of R&D and innovation success in a form that can be utilized more easily by business.

_________________

8 See http://www.economist.com/node/21559618; http://www.washingtonpost.com/world/europe/in-france-entrepreneurs-battle-culture-of-no/2012/09/01/58d12e9a-f287-11e1-adc6-87dfa8eff430_story.html [January 2013].

David Goldston

The final speaker of this session was David Goldston, director of government affairs for the Natural Resources Defense Council. From 2001 through 2006, he served as chief of staff for the Committee on Science of the U.S. House of Representatives, the culmination of more than 20 years on Capitol Hill working primarily on science policy and environmental policy. He first commented on some of the points of the previous presenters and then considered the science and technology policy questions that policy makers grapple with.

Responding to Koizumi’s point that policy makers are very much focused on the ratio of R&D to GDP, Goldston expressed concern that this is a myopic view. The meaning of that ratio is not very clear to economists, industrial researchers, or science and technology policy makers. He suggested that National Patterns could be a platform for producing a wider set of science and technology or R&D indicators. He said he agreed with Mowery on two points. First, along with R&D investment, one should look at other factors, including unemployment rates and job creation, to obtain a holistic picture. Second, he agreed on the importance of including new and emerging fields in the current taxonomy of R&D expenditure and funding. He noted that Larson’s suggestions on new variables were interesting but that the data would be difficult to collect.

Goldston said he is concerned about the limitations of the data in National Patterns. The series publishes R&D expenditure and funding by character of work: basic, applied, and development. But academic R&D is not disaggregated by science and engineering fields; therefore, it is hard to understand the amount of R&D investment in interdisciplinary research, transformational or high-risk research, and translational research.

Goldston then turned to major science and technology policy questions that go beyond the utility and limitations of National Patterns. He offered several important questions that cannot be answered with the current data on R&D:

1. Do we know anything about the impacts of different fields so that policy makers know how much to allocate to various fields: for example, what would constitute a balance between the biological and physical sciences?

2. How many scientists and engineers does the United States need? An index that indicated when the United States is facing a shortage or surplus of scientists and engineers would be extremely valuable.

3. What is happening with younger researchers, especially in terms of their ability to get federal funding?

4. What are current salary figures and their effect on career choices and residence choices of foreign students getting a U.S. science or engineering degree?

5. How internationally comparable are the figures on human resources in science and technology given the differences in quality of training?

6. In the field of energy, is there a dearth of innovative ideas, or do bottlenecks exist in the transfer of innovative ideas to the marketplace? Do such bottlenecks arise from the supply side, the demand side, or labor relations?

7. Where is the “valley of death” and what kind of cycle is it currently in?9 What kind of funding or other solutions would there be to bridge it?

In general, Goldston said he is concerned about the ability to answer questions concerning how technology and science education lead to the economic growth of a nation. What is the model of economic growth? It is known that investing in technology and science education raises productivity, but nobody is aware of the exact mechanism. Is this lack of knowledge a shortcoming from the analysis side or the data collection side? Although this question is broader than National Patterns, it is clear that National Patterns has a role to play in answering it.

ADVANCES IN INTERNATIONAL COMPARABILITY OF NATIONAL PATTERNS DATA AND REPORTS

This workshop session featured presentations by Fernando Galindo-Rueda, head of the Science and Technology Indicators Unit at OECD’s Directorate of Science, Technology and Industry, and John Jankowski, director of the R&D statistics program at NCSES. Because Jankowski’s presentation was primarily a response to Galindo-Rueda’s comments on NCSES’s R&D statistics, it is discussed in Chapter 2.

Galindo-Rueda began by reminding participants that the use of NCSES’s R&D statistics is not limited to U.S. policy makers, economists, and science and technology policy analysts. The international science and technology community is very interested in knowing how their respective nations are performing relative to the United States. Moreover, because the United States accounts for a large share of the OECD’s R&D efforts, a comparable and timely U.S. estimate is a necessary input for the entire OECD.

_________________

9 “Valley of death” is a metaphor used to refer to the funding gap faced by innovators and investors. The funding gap occurs in the intermediate stage of the innovation process, between basic research and commercialization: it is a trough between two crests, a slump in the funding flow (Ford, Koutsky, and Spiwak, 2007; also see Branscomb and Auerswald, 2002).

Galindo-Rueda also reminded participants that one of the issues mentioned in the charge to the steering committee for the workshop was to assess the comparability of NCSES’s R&D statistics with those produced by international organizations and agencies, including OECD, UNESCO, and Eurostat. Galindo-Rueda first explained his team’s role in collecting and reporting R&D statistics, focusing on how National Patterns differs from international publications (see Chapter 2). Galindo-Rueda then discussed efforts to achieve greater international comparability. Two of the main current differences in National Patterns with respect to OECD data (which are published for 34 OECD members and 7 non-OECD economies) are the treatment of capital expenditures for R&D and information on R&D funding sources. The OECD’s Frascati Manual (see Chapter 2) recommends the collection and reporting by countries of both current expenditures and capital expenditure related to R&D for a given year. NCSES collects data on current expenditures and a book estimate of capital depreciation.

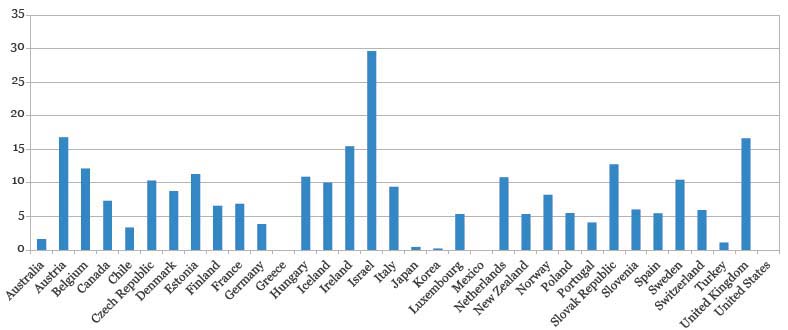

When NCSES submits data on R&D expenditures to OECD, depreciation estimates are removed without a compensating adjustment for capital expenditures. This results in an underestimate of total R&D expenditures for the United States relative to other countries. Galindo-Rueda suggested additional reporting of capital expenditures, which is now feasible for the business sector because NCSES’s BRDIS asks survey respondents for this information. A further step that would add value to National Patterns would be publishing capital expenditures by funding source. On funding sources, Galindo-Rueda reiterated Martin Grueber’s point about the absence of an “abroad sector” in the data, a big information gap in the current series. For example, almost 30 percent of Israel’s national R&D is funded by the rest of the world: see Figure 3-2. Austria, Ireland, and the United Kingdom have 15 percent or more of their R&D funded by other nations. (In the case of Austria, the percentage is large because of major investments by German companies.) This variable has the potential to be a policy-relevant indicator.
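The accounting difference Galindo-Rueda describes can be put as simple arithmetic. A Frascati-style total adds current and capital expenditures; NCSES collects current expenditures plus a book estimate of depreciation; and the series submitted to OECD drops the depreciation without adding capital outlays back. The sketch below uses hypothetical figures to show why the submitted series falls short of the Frascati total, and it also computes the share of R&D funded from abroad, the indicator plotted in Figure 3-2. All class names, function names, and numbers are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class RDSpending:
    """Hypothetical annual R&D figures for one country (any consistent currency unit)."""
    current: float        # current (operating) expenditures on R&D
    capital: float        # capital expenditures on R&D made during the year
    depreciation: float   # book depreciation of R&D capital
    funded_abroad: float  # portion of total funding that comes from the rest of the world


def frascati_total(s: RDSpending) -> float:
    """Current plus capital expenditures, as the Frascati Manual recommends."""
    return s.current + s.capital


def ncses_style_total(s: RDSpending) -> float:
    """Current expenditures plus a depreciation estimate."""
    return s.current + s.depreciation


def submitted_total(s: RDSpending) -> float:
    """Depreciation removed with no capital expenditures added back."""
    return s.current


def share_funded_abroad(s: RDSpending) -> float:
    """Percentage of the Frascati total funded by the rest of the world."""
    return 100.0 * s.funded_abroad / frascati_total(s)


example = RDSpending(current=90.0, capital=10.0, depreciation=8.0, funded_abroad=5.0)
print(frascati_total(example), ncses_style_total(example), submitted_total(example))
print(share_funded_abroad(example))
```

In this made-up example the submitted figure (90) falls short of the Frascati total (100) by exactly the omitted capital expenditures, which is the source of the underestimate described above.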

Another potentially useful indicator would be the R&D investment of domestic U.S. entities in R&D-performing units located abroad. The entities could be companies, other organizations and institutions, or even national governments. Drawing on his experience with R&D expenditure and funding reported by various nations, Galindo-Rueda pointed out that most countries do not provide the performer/funder matrix by character of work (i.e., basic research, applied research, and experimental development, as NCSES does), but they do tend to provide detailed information by type of cost, separating employment costs from other current and capital expenditures.

In addition to data on R&D financial resources, there are other differences between National Patterns and international R&D statistics. There is

FIGURE 3-2 Percentage of gross domestic expenditures on R&D funded by rest of the world, 2009.

NOTES: Data for Australia, Chile, Iceland, Israel, and Switzerland are for 2008. Data are missing for Greece, Mexico, and the United States. Data are derived from OECD’s main science and technology indicators database.

SOURCE: Galindo-Rueda (2012, Figure 3).

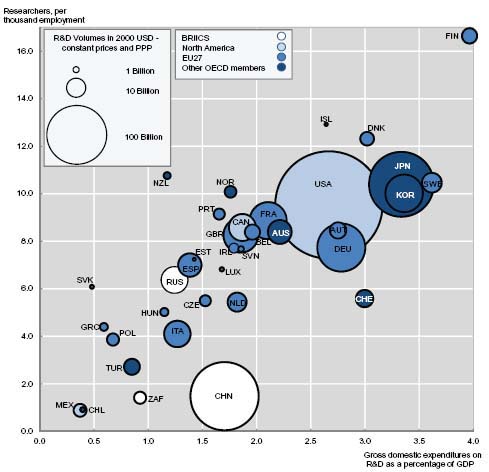

a lack of information on R&D personnel and researchers10 (see Chapter 2), and Galindo-Rueda said he hoped that this issue could be addressed better with the redesigned BRDIS and HERD surveys. Information on human resources in science and technology are used as a normalizing factor and are frequently requested by many users. In the field of bibliometrics, for example, analysts look at the data on researchers to investigate research productivity. OECD integrates information on R&D investment and researchers to produce a range of figures and graphs: for an example, see Figure 3-3. This figure highlights research intensity either as a measure of R&D expenditure relative to GDP or as a measure of researchers relative to population size. The figure shows a close relationship between the two measures, though with some outliers. By combining science and technology variables, one can investigate a number of factors that can aid policy decisions. Galindo-Rueda also highlighted the problems associated with measuring human capital in science and technology in terms of full-time equivalents in comparison with headcounts, as both of these measurements present difficult analytic issues. As Schaaper (2012) notes:

Headcount data are data on the total number of persons who are mainly or partially employed on R&D. Headcount data are the most appropriate measure for collecting additional information about R&D personnel, such as age, gender or national origin. But R&D activity can be a primary activity or secondary activity of R&D personnel. It can also be a significant part-time activity for university teachers or postgraduate students. To take into account such factors[, the] number of persons engaged in R&D is also expressed in full-time equivalents (FTEs). Therefore FTE is the true measure of the volume of R&D activity performed by R&D personnel. Across nations there exists [a] diversity of methods that are used to calculate full-time equivalents of R&D activity and the formula also varies across sectors, which leads to problems in comparisons. But FTE is key to adequately calculating national R&D expenditure as [researchers’] salaries are a significant part of it. National R&D expenditure should only include the proportion of the salaries devoted to R&D as inclusion of salaries based on headcounts would lead to [a] significantly overestimated value of national R&D expenditure.
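The headcount/FTE distinction in the passage above can be made concrete with a short calculation. The Python sketch below uses four hypothetical staff records (the roles echo the quotation; the R&D time shares and salaries are invented) and assumes the simplest FTE rule, summing each person's share of working time devoted to R&D. As Schaaper notes, actual FTE conventions vary across countries and sectors.

```python
from dataclasses import dataclass


@dataclass
class StaffMember:
    role: str
    rd_share: float  # assumed fraction of working time spent on R&D (0 to 1)
    salary: float    # hypothetical annual salary


def headcount(staff: list[StaffMember]) -> int:
    """Everyone mainly or partially employed on R&D counts as one person."""
    return sum(1 for s in staff if s.rd_share > 0)


def full_time_equivalents(staff: list[StaffMember]) -> float:
    """FTEs under the simplest rule: sum of each person's R&D time share."""
    return sum(s.rd_share for s in staff)


def rd_salary_cost(staff: list[StaffMember], use_fte: bool = True) -> float:
    """Salary cost attributed to R&D on an FTE basis or, for contrast, a headcount basis."""
    if use_fte:
        return sum(s.salary * s.rd_share for s in staff)
    return sum(s.salary for s in staff if s.rd_share > 0)


staff = [
    StaffMember("full-time researcher", 1.0, 90_000),
    StaffMember("university teacher", 0.4, 80_000),
    StaffMember("postgraduate student", 0.5, 30_000),
    StaffMember("technician", 0.25, 50_000),
]

print(headcount(staff))                        # 4
print(round(full_time_equivalents(staff), 2))  # 2.15
print(rd_salary_cost(staff), rd_salary_cost(staff, use_fte=False))
```

Counting full salaries for all four people would attribute 250,000 units to R&D instead of 149,500, the kind of overestimate the quotation warns against.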

_________________

10 The category of R&D personnel comprises researchers, technicians and equivalent staff, and other supporting staff. Researchers are professionals engaged in the conception or creation of new knowledge, products, processes, methods, and systems and in the management of such projects. Technicians are staff whose main tasks require technical knowledge and experience; they perform scientific and technical tasks involving the application of concepts and operational methods, normally under the supervision of researchers. Equivalent staff are those who perform the corresponding R&D tasks under the supervision of researchers in the social sciences and humanities. Other supporting staff include skilled and unskilled craftsmen and secretarial and clerical staff participating in R&D projects or directly associated with such projects.

FIGURE 3-3 A comparison of researchers per thousand employed and R&D expenditures as a percentage of GDP: Multiple years.

NOTE: Data are from 2008 for Australia, Canada, Chile, France, Iceland, Korea, South Africa, and Switzerland. Data are from 2007 for Greece, Mexico, New Zealand, and the United States. BRIICS = Brazil, Russia, India, Indonesia, China, and South Africa; EU = European Union; PPP = purchasing power parity.

SOURCE: OECD, main science and technology indicators database, June 2011, see http://dx.doi.org/10.1787/888932485196 [May 2013].

Galindo-Rueda added that NCSES publishes values for gross domestic expenditure on R&D (GERD) in the international comparability table of National Patterns. Most national publications he is aware of contain such a section, drawing on data collected by OECD through official channels from its members and other economies. This section is important to domestic users in all countries, because international benchmarking of R&D efforts has been one of the main purposes of R&D data reporting.

However, he said, users who do not fully appreciate the difference between the core series on R&D expenditures reported in National Patterns and the adjusted GERD series used for international comparisons may be confused. For the United States, GERD values differ marginally from reported total national R&D expenditures: the difference is driven by the omission of capital depreciation costs and a small adjustment to federal R&D in the U.S. GERD estimate. Galindo-Rueda said that NCSES may want to continue publishing an R&D expenditure series that includes capital depreciation costs, to maintain time-series comparability, while reporting an improved GERD estimate, in line with the Frascati Manual guidelines, that uses the newly available data.
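The reconciliation Galindo-Rueda describes can be kept transparent by storing both series side by side. The minimal Python sketch below assumes, for illustration only, that a GERD-style estimate equals the core national total minus the capital depreciation included in it, plus a small federal adjustment; the field names, the sign and size of the adjustment, and the dollar figures are hypothetical and do not reproduce NCSES's actual procedure.

```python
from dataclasses import dataclass


@dataclass
class RDYear:
    year: int
    national_total: float            # core series, includes capital depreciation
    capital_depreciation: float      # depreciation costs included in the core series
    federal_adjustment: float = 0.0  # hypothetical placeholder for the small federal R&D adjustment

    @property
    def gerd_estimate(self) -> float:
        """GERD-style total: drop depreciation, then apply the federal adjustment."""
        return self.national_total - self.capital_depreciation + self.federal_adjustment

    @property
    def gap_percent(self) -> float:
        """Difference between the two series as a percentage of the core total."""
        return 100.0 * (self.national_total - self.gerd_estimate) / self.national_total


# Hypothetical figures in billions of dollars.
series = [
    RDYear(2008, national_total=400.0, capital_depreciation=8.0, federal_adjustment=-1.5),
    RDYear(2009, national_total=404.0, capital_depreciation=8.2, federal_adjustment=-1.4),
]

for y in series:
    print(f"{y.year}: core {y.national_total:.1f}, GERD-style {y.gerd_estimate:.1f}, gap {y.gap_percent:.2f}%")
```

Publishing the core series alongside the adjusted one, as suggested, preserves time-series comparability while making the source of the gap explicit to users.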

As an international user of U.S. R&D statistics, Galindo-Rueda said he looks forward to more timely data from NCSES. The current absence of recent U.S. data makes international analyses incomplete, because one-third of the world’s R&D is performed in the United States (see National Science Board, 2012, Ch. 4, Fig. 4). In his experience, Galindo-Rueda said, European nations report very promptly and, among Asian nations, China provides up-to-date information. In conclusion, Galindo-Rueda stressed that National Patterns is a global statistical public good that is widely used by the international science and technology community. It is a key component of OECD’s R&D statistics and a valuable resource for analysts because it offers a long time series. NCSES’s redesign of two of its major input surveys, and the resulting survey findings, will assist OECD in its review of the Frascati Manual guidelines.

REPORTING OF ADDITIONAL VARIABLES

Although the topic of possible additional variables was appropriately part of several workshop sessions, the steering committee decided to devote a full session to it to stimulate more thought on an issue that is expected to become increasingly important. Adding new variables that reflect changes in the field will help maintain the relevance of National Patterns over time. Kaye Husbands Fealing, of the Committee on National Statistics, National Research Council (NRC), gave the session’s only presentation. Her goal was to examine which variables not currently collected on any of the five censuses or surveys that feed into National Patterns would, if available and tabulated, be useful to National Patterns users. As study director of the NRC Panel on Developing Science, Technology, and Innovation Indicators for the Future,11 which was charged with examining the status of NCSES’s science, technology, and innovation (STI) indicators, Fealing was in a position to describe the statistics that her panel thought would be useful for understanding innovation activities in the United States and worldwide.

_________________

11 This study was also sponsored by NCSES and is being conducted by the NRC’s Committee on National Statistics in collaboration with the Committee on Science, Technology, and Economic Policy; its report is expected in 2013.

Echoing a point made by Charles Larson, Fealing said there is an important difference between R&D statistics and innovation indicators. The statistical tabulations in National Patterns mainly provide information on R&D stocks; in contrast, indicators of innovation activities include measures of R&D flows, science and technology outputs and trade, knowledge networks, and human capital stocks and flows. The hope is to obtain data not only on the current status of R&D but also on trends.

Fealing noted the challenges the panel faced in devising a conceptual framework for scientific discovery and technological innovation. This framework needs to include not only the traditional elements (inputs, outputs, and effects) but also the institutional elements that influence the functioning of the system. The panel was also tasked with recommending to NCSES a framework of priorities to guide the development of STI indicators for such a system. Fealing showed the audience an example of a model of a national innovation system and pointed out the lack of information on linkages or spillover effects. Her experience with the panel has convinced her that data on spillover effects between actors and activities in the system, and on the resulting outputs and outcomes, are particularly useful for informing policy decisions. Another data gap of concern, as noted earlier in the workshop, is subnational STI statistics, such as state and local funding to industrial R&D performers.

In its interim report (National Research Council, 2012a), the panel recommended that NCSES improve existing indicators or develop new ones on:

1. the relationship between labor force mobility and STI activities, by exploring existing longitudinal data from various surveys,

2. innovation and firm size, based on data from the restructured BRDIS,

3. firm dynamism, by matching BRDIS data to surveys from the Census Bureau and Bureau of Labor Statistics, and

4. payments and receipts for R&D services between the United States and other countries, using BRDIS data on firms’ domestic and foreign activities.

The panel also recommended that NCSES host working groups to further develop subnational STI indicators and fund exploratory activities on frontier data extraction and development methods.12 Fealing also stressed the importance of the timeliness and reliability of National Patterns data. She pointed out that timeliness is another aspect of data quality and is very important to users of STI data and statistics.

HOW TO IMPROVE NATIONAL PATTERNS: DISCUSSION

One clear impression from these sessions is that the National Patterns user community is heterogeneous in the specific areas users would like these reports to address, so satisfying all users would be a challenge. Yet the current National Patterns reports have clearly succeeded in meeting user needs by providing quality information, including considerable detail where data quality justifies it. Proposals for improving National Patterns ranged widely, from a focus on a single indicator (the ratio of R&D expenditure to GDP) to broad questions about how investment in STI leads to economic prosperity.

One issue mentioned by virtually all the participants is the need for timely publication of the series. Fealing made the interesting observation that one need not consider timeliness and quality in opposition: rather, one can consider timeliness as one of several attributes of quality data.

As for content, each presenter had a different wish list, including more information on human capital, better innovation indicators, and more detailed analysis of the data rather than simple reporting of R&D figures. Possibly the most important lesson from these three sessions is that National Patterns would benefit from a way of keeping abreast of users’ needs and wishes so that it can be modified over time to remain as relevant as possible to users.

In response to Mowery’s call for less duplication, Koizumi said that the duplication of topics and tables is inherent because NCSES produces separate tables and reports based on estimates from each survey that feeds into the series. The agency also conducts surveys of graduate students, postdoctoral researchers, and nonfaculty researchers in science and engineering. As noted in the sessions, National Patterns contained more comprehensive information in the 1990s; the topics included in the publication were subsequently narrowed to R&D expenditure and funding only. Jankowski said that this was done because the agency determined that science and engineering topics had more in common with topics covered in the series. This is a common conundrum for federal statistical agencies: reducing statistical output to avoid duplication can lead to user dissatisfaction. Many users preferred National Patterns to Science and Engineering Indicators in the 1990s, but preferences have shifted over the last decade.

_________________

12 Frontier tools and methods are those used to extract data and develop new datasets. Examples include nowcasting, netometrics, CiteSeerX, Eigenfactor, and Academia.edu.

Regarding timeliness, Karen Kafadar asked whether NCSES could produce flash or preliminary estimates with uncertainty bounds. Koizumi said that he would welcome earlier estimates of the R&D-to-GDP ratio and added that policy makers are used to revisions in the unemployment and jobs figures released by the Bureau of Labor Statistics. Therefore, he said, he does not think flash estimates of aggregate R&D figures would lead to confusion. Such flash estimates could also be produced at a highly aggregate level to reduce the impact of sampling error, though that could frustrate or confuse state and local data users who are interested in understanding the R&D performance of states. William Bonvillian stressed the importance of flash estimates in times of serious cuts in R&D budgets. For example, science and technology policy analysts in government, the private sector, and academia need to know how the current plans for sequestration may be affecting national R&D performance.

Goldston repeated his warning that there is a trade-off: preliminary estimates might reduce the credibility of the revised figures, which are produced after some delay but are more reliable. He agreed that timely data are always useful, but if the data are not sufficiently credible, they could cause a bigger problem than a lack of timeliness. In addition, Joel Horowitz noted that standard errors, which would be the available metric of data quality, are insufficient as the only indicator of accuracy, since they ignore any systematic errors that might be present in the data. He said that a firm’s reporting of R&D figures in survey questionnaires is influenced by tax laws, so a firm may have an incentive to misreport. Christopher Hill agreed that further investigation is needed into systematic errors in NCSES’s surveys and how those errors affect the quality of final estimates.
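Horowitz’s point can be illustrated with a few lines of arithmetic. In the Python sketch below, all figures are hypothetical: a flash estimate carries a small sampling standard error but also an assumed systematic overstatement (for instance, from tax-motivated misreporting). A 95 percent interval built from the standard error alone excludes the true value, whereas the root-mean-square error, which combines sampling variance and squared bias, reflects the larger total error. In practice the bias is unknown, so any bias term used this way would itself be an assumption.

```python
import math


def sampling_interval(estimate: float, standard_error: float, z: float = 1.96) -> tuple[float, float]:
    """Normal-approximation interval that reflects sampling error only."""
    return estimate - z * standard_error, estimate + z * standard_error


def root_mean_square_error(standard_error: float, bias: float) -> float:
    """Total error combining sampling variance and systematic bias: sqrt(SE^2 + bias^2)."""
    return math.sqrt(standard_error ** 2 + bias ** 2)


# Hypothetical values, in billions of dollars.
true_total = 400.0
systematic_bias = 12.0  # assumed net overreporting by respondents
standard_error = 3.0    # sampling error reported with the flash estimate
flash_estimate = true_total + systematic_bias

low, high = sampling_interval(flash_estimate, standard_error)
print(f"flash estimate {flash_estimate:.1f}, 95% interval ({low:.2f}, {high:.2f})")
print(f"interval covers true total: {low <= true_total <= high}")
print(f"root-mean-square error: {root_mean_square_error(standard_error, systematic_bias):.2f}")
```

With these numbers the standard error (3.0) understates the root-mean-square error (about 12.4) roughly fourfold, which is why uncertainty bounds based only on sampling error could mislead users of preliminary estimates.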

Grueber suggested that, in a way, Battelle’s R&D forecast is a preliminary estimate of R&D performance. The accuracy of a forecast model can be judged by how close its forecasts come to actual values. Grueber’s comparison of his 2011 forecast with Battelle’s 2011 data showed a difference of $15 billion. The difference was attributed mainly to restatements by respondents (firms performing R&D) and to updates of the National Patterns data series. It is his understanding that restatements sometimes result from changes in accounting practices. Forecasting R&D performance is therefore tricky, because users expect forecasts to be very close to actual R&D figures and may not understand the various reasons for differences. The question is how large a difference users will tolerate. This issue will concern NCSES if the agency decides to produce preliminary or flash estimates.

The last two presentations in this session took a very broad view of the STI system. Larson stressed that data analysis should take priority over the current tabular presentation of data. Through its Info Briefs, NCSES produces data updates and some analysis, but these are not sufficient to answer the big-picture questions raised by Larson and Goldston. R&D data users focus not only on magnitudes; they also want to understand structural changes, if any, and the channels through which science and technology investments lead to the development of new goods, create more jobs, and otherwise affect the economy. Even though NCSES tries to satisfy, as far as possible, the varied data needs of its broad user community, Hill said it is important to keep in mind that NCSES is a statistical agency, not an analytical agency, and that the nature of the agency determines the kinds of data products it produces. As a federal statistical agency, NCSES designs and conducts surveys to collect data relevant to various policy questions. Hill added that the agency’s analytical program needs to be given due weight so that its data products are in line with the user community’s demands.

National Patterns contains marginal totals that have been broadly useful to a variety of users for a long time. In its interim report (National Research Council, 2012a), the Panel on Developing Science, Technology, and Innovation Indicators for the Future encouraged NCSES to take steps toward developing rich, detailed databases that would support regression models, microsimulation models, and other detailed analyses to answer specific questions. Among the key drivers of that panel’s recommendations are the policy questions raised by Goldston. However, Hill warned, detailed databases contain respondent-level information,13 and agencies need to be careful about confidentiality issues.

Drilling down to lower levels of geography also raises additional problems, such as the treatment of interorganizational transfers of R&D funds, as when a firm in one state transfers a major part of an R&D grant to its subsidiary located in another state. When responding to BRDIS, will the firm assign the whole amount to the first location or report the actual split among locations? A similar problem arises with multinational firms such as IBM, whose R&D operations are not restricted to a single country. The updated BRDIS contains questions that try to address these two problems (see Chapter 2). Fealing noted that this is a knotty problem: demand for microlevel data is increasing, while statistical agencies need to follow their established procedures and categories. Hill expressed concern about whether microlevel data will provide answers to difficult questions and reiterated the need to begin with good questions.

_________________

13 The Public Use Microdata Sample (PUMS) of the Census Bureau is an example of a database that contains respondent-level information. Depending on the variable, the respondent might be a household or an individual member of a household.

A question of interest to many researchers is the effect of R&D expenditure at both the regional and national levels. Fealing said that R&D expenditure and funding measure the inputs and outputs of the science and engineering enterprise and that measuring the effects and spillovers of R&D is the next research frontier. Stephanie Shipp added that statistical agencies design surveys to answer specific policy questions, which is a retrospective approach. She suggested that NCSES take a more proactive approach by considering administrative records and unstructured data. John Gawalt, Jankowski, and Fealing updated the workshop participants on the progress NCSES has made on its administrative records project (see Chapter 4).

NCSES faces opportunities and challenges in a world that increasingly demands more detailed information. These demands will lead to more pressure on the agency to update its surveys to gather hitherto uncollected information and present the data (tabular and micro) in more informative and usable formats.