KEY SPEAKER THEMES

Nelson

- Accountable care presents a promising opportunity for meaningful health system reform and innovation.

- New delivery models, such as accountable care organizations, require a core set of metrics to gauge progress and value.

- The assessment of value should include measures of health outcomes and patient experience in relationship to per capita costs.

Stiefel

- There are myriad challenges in implementing measurement of the three-part aim.

- Each of the three domains of the three-part aim (population health, care experience, and cost) requires its own metric adjustments to account for inherent measurement complications.

Jones

- Measures are critical to building out a high-quality data system because they make it possible to identify the specific data elements the system needs to capture.

- On a larger scale, core measure sets can drive data capture that promotes progress toward a learning health system as a whole.

- Implementation is crucial to realizing the benefits of health information technology and to producing consistent, accurate, and interoperable data.

Implementing core metrics presents broad opportunities for improvement, but it also involves numerous challenges. This chapter summarizes three presentations that focused on core measure sets in use, with particular emphasis on the diversity of current measure sets, the need to tailor metrics to their use, and the multiple supports necessary for metrics implementation. Eugene Nelson, professor of community and family medicine and professor at the Dartmouth Institute, both at Dartmouth College, discussed measuring aspects of the three-part aim in an accountable care environment. Matthew Stiefel, senior director for care and service quality at Kaiser Permanente, shared his perspective on the implementation of core metrics for measuring the three-part aim and the challenges inherent to that process. Craig Jones, executive director of the Vermont Blueprint for Health, concluded the panel’s presentations with a case example of the Blueprint’s experience with metrics and their integral role in building both a high-functioning digital infrastructure and a learning health system as a whole.

ACCOUNTABLE CARE AND MEASURING THE THREE-PART AIM

Eugene Nelson began his comments by adapting a quote from Wayne Gretzky: The secret to success is skating to where the puck is going to be. In other words, Nelson said, goals should be set based not on the current status of health care in the United States but on where it will be in 2015 and what value will look like then to patients, consumers, and communities. Nelson explained that the focus by 2015 will be on accountable care, as 25 million to 31 million Americans already receive health care from organizations recognized as accountable care organizations (ACOs) (Gandhi and Weil, 2012). Accountable care offers a promising chance for meaningful reform and innovation if the measures of system progress that are an inherent part of accountable care can promote rapid innovation and patient-centered, value-based approaches.

Citing the Dartmouth Spine Center as an example of accountable care, Nelson provided details concerning this delivery model. The accountable care process requires using patient-reported health outcomes, engaging patients in care decision making, and employing data to inform and improve care processes continuously. By incorporating all of the necessary resources for treating back pain, including specialists and physical therapists, into one central clinical microsystem, the Dartmouth Spine Center is able to provide better care in real time and to foster better research over time. Its information system allows for extensive use of patient-reported outcomes data in orienting new patients to the treatment process, after which an initial work-up is completed, and a plan of care is developed. That plan can direct patients to acute or chronic care management, functional restoration, or even palliative care. Through continuous tracking of patient status and progress, the information collected informs the plan of care moving forward and also is fed back to an improvement registry for public reporting and research. This information allows for monitoring of functional status, disease burden, pain levels, and actual patient experience, along with prior history and risk status. The data provided by this system, Nelson said, are actionable, valued by clinicians, and allow for predictive modeling for patients embarking on the care-decision process.

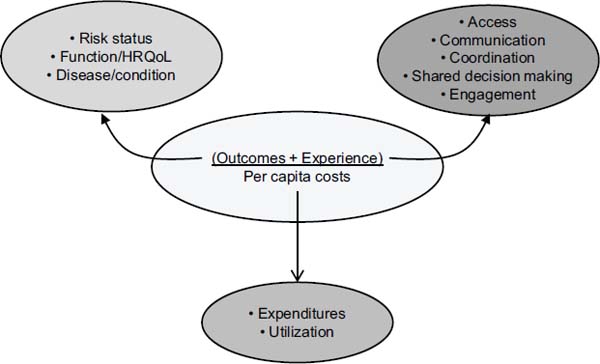

Nelson then described several examples of core measures in the accountable care setting. The CQO Roundtable, which includes such organizations as Intermountain Healthcare, Virginia Mason Medical Center, and Kaiser Permanente, has suggested parsimonious and balanced measures that emphasize the measurement of what matters. Another multi-stakeholder group, informally called the Gretsky Group, has put forth example core metrics for accountable care that are longitudinal and cross-cutting, that measure value, and that support the three-part aim. Nelson concluded by outlining the value equation for a core set, which would consider patient experience in organizations and health outcomes in relationship to per capita costs, and he highlighted several areas for progress along each dimension. (See Figure 4-1 for additional details on this calculation.)

GENERAL THEMES FOR IMPLEMENTATION

In his discussion of how metrics are implemented, Matthew Stiefel paid particular attention to the challenges confronted in measuring the three-part aim. In addition to overall measurement challenges, he highlighted specific challenges in assessing population health, care experience, and cost. Overall challenges include defining a population, embedding metrics into a learning system, and combining metrics into a single measure of overall

FIGURE 4-1 Value equation for the three-part aim, reflecting the multiple considerations for each aim.

SOURCE: Nelson, 2012.

value. Measurement of population health is complicated by the connection of health determinants to health outcomes, and care experience is difficult to measure because of the complexity of clinical care. The measurement of cost is also challenging because its definition depends on the varying perspectives on cost.

Further examining the challenges of population health measurement, Stiefel emphasized the merits of viewing total population from a geopolitical perspective. Each geopolitical area contains myriad subpopulations, but overall progress toward the three-part aim requires a broader, higher-level definition of the population. Moreover, he underscored the complex relationships between health determinants and health outcomes, highlighting the need to connect the upstream and individual factors that influence health with the downstream outcomes.

At Kaiser Permanente, Stiefel continued, care experience metrics have been streamlined in accordance with the six domains of care quality defined by the Institute of Medicine: safety, effectiveness, timeliness, patient-centeredness, equitability, and efficiency. Composite measures have greatly facilitated the ability to drill down from broad regional metrics to more localized outcomes. He also explained that cost metrics vary according to the perspective of the investigating party. Cost to a supplier is simply the cost of production, but cost to health plans and insurance companies is the cost of production plus a provider’s overhead margin. That cost plus the health insurer’s margin is the cost to purchasers and consumers. Each different frame on cost yields a different answer, complicating the overall measure of cost.
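The layering of cost perspectives that Stiefel described can be illustrated with a small arithmetic sketch. The dollar amounts and the function name `cost_by_perspective` are hypothetical, chosen only to show how each perspective adds a margin to the one before it; they are not figures from the presentation.

```python
def cost_by_perspective(production_cost, provider_margin, insurer_margin):
    """Return the cost of one service as seen from each perspective.

    All inputs are illustrative dollar amounts (assumptions, not source data).
    """
    supplier = production_cost                 # cost to the supplier
    health_plan = supplier + provider_margin   # cost to health plans and insurers
    purchaser = health_plan + insurer_margin   # cost to purchasers and consumers
    return {
        "supplier": supplier,
        "health_plan": health_plan,
        "purchaser": purchaser,
    }


costs = cost_by_perspective(
    production_cost=100.0, provider_margin=20.0, insurer_margin=15.0
)
for perspective, amount in costs.items():
    print(f"{perspective}: {amount:.2f}")
```

The same service yields three different cost figures, which is why a cost metric needs to state whose perspective it reflects before results can be compared.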

Stiefel’s final comments focused on the measurement of value and its reliance on the relationships between population health, care experience, and per capita costs. Cost-effectiveness, efficiency, and overall effectiveness overlay those three domains of value, each influencing the ultimate measure of the three-part aim. Stiefel concluded by highlighting a compilation of potential three-part aim measures (see Table 4-1).

TABLE 4-1 Potential Three-Part Aim Measures

| Dimension | Measure |
| --- | --- |
| Population Health | 1. Health Outcomes: 2. Disease Burden: Incidence (yearly rate of onset, average age of onset) and/or prevalence of major chronic conditions 3. Behavioral and Physiological Factors: Behavioral factors include smoking, alcohol, physical activity, and diet. Physiological factors include blood pressure, body mass index, cholesterol, and blood glucose. (Possible measure: a composite Health Risk Appraisal score) |
| Experience of Care | 1. Standard questions from patient surveys, for example: 2. Set of measures based on key dimensions (e.g., Institute of Medicine Quality Chasm aims: safe, effective, timely, efficient, equitable, and patient-centered) |
| Per Capita Cost | 1. Total cost per member of the population per month 2. Hospital and ED utilization rate and/or cost |

NOTE: CDC HRQOL-4 = Centers for Disease Control and Prevention health-related quality of life-4; ED = emergency department; PROMIS Global-10 = Patient-Reported Outcomes Measurement Information System®; VR-12 = Veterans RAND 12-Item Health Survey.

SOURCE: Stiefel and Nolan, 2012.

VERMONT BLUEPRINT FOR HEALTH: CORE METRICS TO GUIDE THE DIGITAL INFRASTRUCTURE

In his presentation on the Vermont Blueprint for Health and the implementation challenges it has faced, Craig Jones first gave an overview of the Blueprint’s projects and goals. Initiatives currently under way include advanced primary care practices, community health teams, payment reforms, digital infrastructure investments, community self-management programs, and learning health system activities. Specifically, Jones underscored the Blueprint’s continuously learning, community-building directive: generating a foundation of medical homes and community health teams that can support coordinated care and linkages with a broad range of services; instituting multi-insurer payment reform that supports this foundation of delivery services; constructing a health information infrastructure that includes a variety of sources; and incorporating an evaluation system that uses routinely collected data to support services, guide quality improvement, and determine program impact.

Regarding the growing team-based network in Vermont, Jones emphasized the critical nature of shared goals, clear roles, mutual trust, effective communication, and measurable processes and outcomes as the core principles of team-based care. He noted that this has required a change in culture as a wider variety of health and social service professionals begin to work together in a team to improve health. The culture has continued to evolve as the teams work together over longer time periods.

However, even as these principles continue to take hold among the growing team networks in Vermont, questions persist concerning the promise of health information technology (health IT) and why that promise has not been realized. In particular, Jones explained, clinicians see funding directed toward health IT but have yet to see it affect the provision of health services or give them the feedback they need. He emphasized the implementation challenges and practical considerations for realizing the benefits of health IT, especially in a fragmented delivery system with many independent providers, multiple electronic medical record systems, several practice management systems, and other differences. The Blueprint has spent considerable effort working throughout the state to address technical limitations and ensure that the data are accurate, trusted, reliable, and actionable for all data systems.

To advance the implementation of health IT, the Blueprint shifted its attention to clinical data capture guided by core metrics for measurement. It started with a core data dictionary, which was compiled from clinician input, national guidelines, and other organizations’ models. The dictionary’s elements were designed to build out the state’s health information network so that data can be aggregated, sorted, manipulated, and used to improve patient care. Core measures make it possible to specify numerators and denominators, which define the data elements to be captured; those elements in turn require structured data capture systems to hold them, ultimately yielding high-quality, trusted, and reliable data.
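The chain Jones described, from a core measure to its numerator and denominator to the structured data elements that must be captured, can be sketched in code. The measure below (the share of patients with diabetes whose most recent HbA1c result is under 8.0), the field names, and the sample records are hypothetical illustrations, not elements of the Blueprint's data dictionary.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PatientRecord:
    # Hypothetical structured data elements a core data dictionary might define.
    patient_id: str
    has_diabetes: bool
    latest_hba1c: Optional[float]  # None when no result was captured


def measure_rate(records):
    """Compute numerator, denominator, and rate for the illustrative measure."""
    # Denominator: every patient who meets the population definition.
    denominator = [r for r in records if r.has_diabetes]
    # Numerator: denominator patients with a captured result under the threshold.
    numerator = [
        r for r in denominator
        if r.latest_hba1c is not None and r.latest_hba1c < 8.0
    ]
    rate = len(numerator) / len(denominator) if denominator else None
    return len(numerator), len(denominator), rate


records = [
    PatientRecord("a", True, 7.2),
    PatientRecord("b", True, 9.1),
    PatientRecord("c", True, None),   # missing result: in denominator only
    PatientRecord("d", False, 5.4),   # outside the denominator
]
num, den, rate = measure_rate(records)
print(num, den, rate)
```

Writing the measure down forces each required field to exist as a structured element. A record with a missing result, like patient "c", lowers the rate instead of disappearing, so gaps in data capture become visible.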

However, without a core set of elements and an incentive for technical vendors to build their systems to capture those core elements, it is difficult to manipulate and transport the data between sources. As a result, the Blueprint is continuing its efforts to refine data-capture systems to increase transferability. Moreover, financial incentives should encourage users, Jones said, to utilize those core measures to build out their datasets and ensure comparability between different sources.

In summary, Jones concluded, with reliable data it is possible to generate actionable knowledge for a learning health system and to measure progress. Core metrics can drive the core data dictionaries to ensure that captured data are useful for multiple purposes, such as clinical care, population health management, reporting, and payment programs. Those data are then available to guide ongoing policy and payment reforms that will influence the care process and generate new metrics data, helping to build a learning health system as a whole.

DISCUSSION

To start the discussion, one participant asked whether health information technology vendors were receiving feedback from users in an appropriate time frame and, if not, how the field could move more quickly to refine systems for data collection. Jones said that there are a few straightforward steps that the field could take to help address this issue. One suggestion he made was for meaningful use dollars or payment reforms to be linked to the tracking, exchange, and use of a core set of metrics, a change that he believes would have a major impact on the marketplace. As an example, he cited the 33 metrics that are now associated with ACOs and the fact that, because of the associated financial incentives, there is now a major effort under way to develop methods for measuring them and for collecting and organizing the necessary data.

In response to a question about how to capture useful data on specialty and condition-specific measures, Nelson said there are now good measures of risk and functional status, such as the Patient-Reported Outcomes Measurement Information System (PROMIS) measure sets, that the field could use. He equated the PROMIS measure sets for health outcomes to the Consumer Assessment of Healthcare Providers and Systems measures for patient experience. He also noted that there are a number of excellent condition-specific measures available, though he added that more global measures would be more useful for patients with multiple chronic problems. Jones suggested that if a composite measure related to outcomes were linked to payment and were of shared interest to specialists and primary care physicians, there would be a rapid change in collaborative behavior. In his opinion, the same domains could apply to specialty and primary care, particularly for chronic conditions.

A participant asked whether work is under way to better capture costs. Jones noted that the Vermont Blueprint is trying to capture total expenditures with an all-payer claims database. This system can capture the complete expenditure picture, he said, and it is providing a look at total cost of care per person. Stiefel added that it is also necessary to look beyond health care costs and to include spending on public health and social services.
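As a minimal sketch of how total cost of care per person might be derived from claims, the example below assumes a simplified claims layout. The tuple format, payer labels, and amounts are hypothetical and do not describe Vermont's actual all-payer claims database. The per-member-per-month figure corresponds to the per capita cost measure listed in Table 4-1.

```python
from collections import defaultdict


def total_cost_per_person(claims):
    """Sum paid amounts across all payers for each person.

    `claims` is an iterable of (person_id, payer, paid_amount) tuples,
    a hypothetical layout chosen for illustration.
    """
    totals = defaultdict(float)
    for person_id, _payer, paid_amount in claims:
        totals[person_id] += paid_amount
    return dict(totals)


def cost_pmpm(claims, member_months):
    """Total paid amount divided by total member-months of enrollment."""
    total = sum(paid for _person, _payer, paid in claims)
    return total / member_months


claims = [
    ("p1", "commercial", 250.0),
    ("p1", "medicaid", 50.0),
    ("p2", "medicare", 900.0),
]
per_person = total_cost_per_person(claims)
pmpm = cost_pmpm(claims, member_months=24)  # two people enrolled 12 months each
print(per_person, pmpm)
```

Pooling claims across payers before summing is what lets one person's spending be seen as a whole. Stiefel's point still applies: spending on public health and social services would sit outside a claims-based total like this one.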

REFERENCES

Gandhi, N., and R. Weil. 2012. The ACO Surprise. New York: Oliver Wyman.

Nelson, E. 2012. Accountable Care and Measuring the 3-Part Aim. Presentation at IOM Workshop on Core Metrics for Better Care, Better Health, and Lower Costs, Irvine, CA.

Stiefel, M., and K. Nolan. 2012. A Guide to Measuring the Triple Aim: Population Health, Experience of Care, and Per Capita Cost. IHI Innovation Series White Paper. Cambridge, MA: Institute for Healthcare Improvement.