KEY SPEAKER THEMES

Larsen

- The Office of the National Coordinator for Health Information Technology, working with federal and non-federal partners, is developing a strategy focused on real-time measurement, as opposed to retrospective measurement, that is linked to decision support and patient dashboards.

- Achieving the full benefits of digitally enabled measurement requires additional actions beyond adopting new technologies, in order to achieve the goal of a culture of care that uses health information technology to enhance care.

Queram

- Sharing transparent clinical-level and provider-level data can lead to significant improvements in the quality of care.

- It is important to develop new approaches for putting data into a context that is useful for patients and that supports consumers as active participants in their health care.

- There are limited resources to support both national and local transparency efforts.

Ferguson

- There are important new local and regional opportunities for collecting real-time data and using it to make inferences for use in real-time decision making.

- Health care delivery organizations create streams of clinical, quality, operational, and administrative data that could interface with electronic health records to enable just-in-time research and learning and could serve as a valuable resource for designing metrics.

Implementing metrics requires a robust data, technical, and social infrastructure. Three workshop speakers explored common themes around the infrastructure needs for advancing measurement (Hillestad et al., 2005). In particular, their presentations focused on the challenges and opportunities for making measurement a routine component of health and health care systems. Kevin Larsen, medical director of meaningful use in the Office of the National Coordinator for Health Information Technology (ONC), discussed the next generation of the digital infrastructure and the opportunities it can afford. Christopher Queram, president and chief executive officer of the Wisconsin Collaborative for Healthcare Quality, described one example of building and using a data collection infrastructure. T. Bruce Ferguson, Jr., inaugural chairman of the Department of Cardiovascular Sciences at East Carolina University, provided a practitioner’s perspective on using metrics within the confines of a real-world health care organization.

INFORMATION TECHNOLOGY-ENABLED QUALITY MEASUREMENT

Kevin Larsen explained that the goal of ONC is to improve the performance of the overall health and health care system, not simply to expand the use of digital tools. Operationalizing this goal means providing tools that providers, organizations, and public health systems can use to drive improvement. At their best, such tools can identify gaps in a patient’s care, allowing providers to make changes to address those specific gaps and to reform care processes in order to improve outcomes for future patients. To further this vision, ONC, the Centers for Medicare & Medicaid Services (CMS), and other federal partners have developed a strategy that focuses on real-time measurement, as opposed to retrospective measurement, and that emphasizes local ownership, benchmarking, links to decision support, and patient engagement tools. Larsen emphasized that ONC’s initiatives are a collaborative effort relying on partnerships with CMS, other agencies of the Department of Health and Human Services (HHS), and many federal partners.

Larsen explained that meaningful use is not just about installing technology for the sake of technology but rather because the technology supports some goal or purpose. ONC’s role in driving meaningful use is to make sure that an electronic health records system is working properly and providing the information needed to support defined goals. Toward that end, its certification process tests the basics of any new electronic health records system against a set of standards so that health care systems can purchase a system with confidence that it does work. He detailed the stages of the meaningful use program and the progression in functionality. Stage 1 involved ensuring that data capture and sharing capabilities met or exceeded a published standard and also ensuring the initial infrastructure was in place. Stage 2, the regulations for which were only recently published, is focused on advancing clinical process. In Stage 3, ONC will help support new models of care, such as accountable care organizations (ACOs) and patient-centered medical homes, that represent the next generation of health care delivery.

One challenge is to take advantage of new digital sources of clinical data for measures. Many current metrics were developed for use with a given set of data sources, such as claims, chart abstraction, and others, yet electronic health records, registries, and other types of records can be used to supplement this information to provide a broader view of care quality and health. There are multiple challenges with today’s measures: Some providers are expected to report on measures unrelated to practice scope, duplicate data are often submitted by multiple providers for the same patients, and data systems are often not interoperable. To address these and other issues, ONC and CMS are building modular measures that can be useful for the clinical care of common conditions and that can be integrated into an electronic health record. Explaining the concept of a modular measure, Larsen noted that it would rely on standardized components, such as the definition of a disease or a population. The advantage of using modular components is that it can allow individuals at the local level to innovate, reuse, and reconfigure their measurement framework, while being assured that they are aligning their work with definitions and technical specifications from other programs. As part of this work, ONC has been building common definitions, which are housed in the Value Set Authority Center at the National Library of Medicine and can be downloaded easily.
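The idea of a measure assembled from standardized, reusable components can be sketched in code. The following is a minimal illustration only, not ONC's or CMS's specification format: the value-set contents, the `Patient` and `Measure` classes, and the helper names are all hypothetical, and the codes are placeholders rather than real clinical codes.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, Tuple

# Hypothetical value sets. In practice a shared definition would be
# downloaded from a common repository; these codes are placeholders.
CONDITION_CODES: FrozenSet[str] = frozenset({"DX-0001", "DX-0002"})
SCREENING_CODES: FrozenSet[str] = frozenset({"PROC-0100"})


@dataclass(frozen=True)
class Patient:
    patient_id: str
    age: int
    codes: FrozenSet[str]  # every code recorded for this patient


Component = Callable[[Patient], bool]


def has_any(value_set: FrozenSet[str]) -> Component:
    """Reusable component: patient has at least one code in the value set."""
    return lambda p: not value_set.isdisjoint(p.codes)


def age_between(low: int, high: int) -> Component:
    """Reusable component: patient age falls in [low, high]."""
    return lambda p: low <= p.age <= high


@dataclass(frozen=True)
class Measure:
    name: str
    denominator: Tuple[Component, ...]  # all must hold to be eligible
    numerator: Tuple[Component, ...]    # all must hold to count as met

    def counts(self, patients):
        """Return (numerator count, denominator count) for a population."""
        eligible = [p for p in patients if all(c(p) for c in self.denominator)]
        met = [p for p in eligible if all(c(p) for c in self.numerator)]
        return len(met), len(eligible)


# A local team composes a measure from the shared components.
screening_measure = Measure(
    name="Screening among adults with the condition (illustrative)",
    denominator=(has_any(CONDITION_CODES), age_between(18, 75)),
    numerator=(has_any(SCREENING_CODES),),
)

patients = [
    Patient("a", 50, frozenset({"DX-0001", "PROC-0100"})),
    Patient("b", 60, frozenset({"DX-0002"})),
    Patient("c", 40, frozenset({"PROC-0100"})),             # no condition
    Patient("d", 80, frozenset({"DX-0001", "PROC-0100"})),  # outside age range
]

numer, denom = screening_measure.counts(patients)
print(f"{screening_measure.name}: {numer}/{denom}")
```

Because the components are defined once and shared, a second measure could reuse `has_any(CONDITION_CODES)` with a different numerator without redefining the population.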

ONC, CMS, and the National Library of Medicine are also moving measures to new standards of representation, Larsen explained, using rich, standardized clinical languages such as SNOMED. This will require a transition for organizations used to claims-based measures, but the result will be a richer, more sophisticated representation of disease. Larsen noted that in developing automated measures, ONC has identified the need to develop a more rigorous testing and standardization process. ONC is also developing a set of standardized transmission formats that enable data to be moved electronically between systems regardless of the design of a specific electronic health record system.

Larsen said that ONC aims to support a range of improvement initiatives with the wider application of electronic health records. He presented an example of a tool, popHealth, that physicians can use for free that lets them see in real time how they are doing on various measures, such as asking their patients about tobacco use. ONC’s aim is to link standardized measures to standardized tools for clinical decision support. For example, a measure about cardiovascular risk reduction linked to data standards that would instantly calculate a Framingham risk score for a patient or provider could lead to a conversation about risk right at the point of care. “The measurement is linked to a tool that helps make decisions, and it becomes part of the quality improvement ecosystem,” Larsen explained.

In closing, Larsen said that the goal is to create for health information technology the equivalent of the Google home page screen. “It’s a white screen with one little line, and you put a search in, and the whole world of information is at your fingertips,” he said. “It all just works. You don’t think about how it works. You don’t know how it works, but it works, and you like it. That’s what we’re trying to achieve.”

WISCONSIN COLLABORATIVE FOR HEALTHCARE QUALITY

Before discussing the experiences of the Wisconsin Collaborative for Healthcare Quality (WCHQ), Christopher Queram commented on the growing number of regional health improvement organizations, including WCHQ, that are working in the areas of accountability, public reporting of comparative performance measures, and performance improvement. He noted that as the work of these organizations has matured, they have been venturing into new areas such as supporting or catalyzing payment reform and engaging consumers in the use of the information that they have been generating. He credited initiatives such as the Robert Wood Johnson Foundation’s Aligning Forces For Quality program, the high-value health care initiative promoted by former HHS Secretary Michael Leavitt, and ONC’s current Beacon Community Programs for the key roles that they have played in catalyzing the development of these multi-stakeholder, largely not-for-profit, and largely private-sector regional health improvement organizations.

Given that context, Queram described WCHQ, which was founded in 2003, as a completely voluntary operation that worked to maintain a distinctive value proposition as its work matured and became more broadly accepted. Today WCHQ represents some 60 percent of Wisconsin’s primary care physicians and includes most of the multi-specialty group practices and integrated health systems in the state as well as many of the state’s small primary care clinics. Several years ago, WCHQ made the explicit decision to align its work around the three-part aim, anticipating the prominence that the three-part aim would take as the centerpiece of the National Quality Strategy. WCHQ’s core competencies revolve around four activities: developing and prioritizing performance measures for assessing the quality and cost of ambulatory care in Wisconsin; collecting, validating, and analyzing both administrative and clinical data; publicly reporting comparative performance results for health care providers, purchasers, and consumers; and sharing the best practices of health care organizations that demonstrate high quality. In its early days, WCHQ worked on developing performance measures, particularly with regard to adapting the Healthcare Effectiveness Data and Information Set (HEDIS) measures to fit a non-enrolled population, but now it focuses on prioritizing measures.

Queram explained that WCHQ acquires data via two routes. Ten of WCHQ’s 21 publicly reporting members calculate measures internally using detailed specifications and then submit aggregate denominator and numerator data for measures to WCHQ’s Web-based reporting tool. These 10 also submit de-identified patient-level data for validation purposes. The other 11 publicly reporting members, as well as the 9 new members scheduled to join in 2013, use repository-based data submission to submit global files of patient demographic, encounter, and clinical data. The repository’s centrally programmed measure specification tool calculates performance results. This Health Insurance Portability and Accountability Act (HIPAA)-compliant data repository is approved as a CMS registry for payment purposes, for meaningful use submission, and for WCHQ public reporting. Queram noted that WCHQ shares basic functionality with the Minnesota Community Measurement system. He also remarked that WCHQ ensures the accuracy of its performance measures through the oversight of a multi-stakeholder audit committee that consists of representatives from WCHQ member organizations, health insurers, and purchasing partners. The audit committee guides the development and revision of WCHQ policies and procedures for data submission, validation, and reporting. The audit process, which includes some random checks, validates numerators and denominators and has a mechanism to resolve issues prior to data reporting.
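The first submission route, in which aggregate numerators and denominators are checked against de-identified patient-level data, can be illustrated with a short sketch. This is an assumption-laden illustration rather than WCHQ's actual tooling: the record layouts, the function names, and the specific checks are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class AggregateSubmission:
    """Hypothetical aggregate record a member might submit for one measure."""
    measure_id: str
    numerator: int
    denominator: int


@dataclass(frozen=True)
class PatientRow:
    """Hypothetical de-identified patient-level row used only for validation."""
    measure_id: str
    in_denominator: bool
    in_numerator: bool


def recompute(measure_id: str, rows: Iterable[PatientRow]) -> Tuple[int, int]:
    """Recount numerator and denominator from patient-level rows."""
    relevant = [r for r in rows if r.measure_id == measure_id and r.in_denominator]
    numerator = sum(1 for r in relevant if r.in_numerator)
    return numerator, len(relevant)


def validate(sub: AggregateSubmission, rows: Iterable[PatientRow]) -> List[str]:
    """Return a list of issues to resolve before reporting (empty if none)."""
    issues = []
    if sub.denominator <= 0:
        issues.append("denominator must be positive")
    if not 0 <= sub.numerator <= sub.denominator:
        issues.append("numerator must lie between 0 and the denominator")
    numer, denom = recompute(sub.measure_id, list(rows))
    if (numer, denom) != (sub.numerator, sub.denominator):
        issues.append(
            f"aggregate {sub.numerator}/{sub.denominator} does not match "
            f"patient-level recount {numer}/{denom}"
        )
    return issues


rows = [
    PatientRow("M1", True, True),
    PatientRow("M1", True, True),
    PatientRow("M1", True, False),
    PatientRow("M1", False, False),  # not eligible, ignored
]

print(validate(AggregateSubmission("M1", 2, 3), rows))  # consistent submission
print(validate(AggregateSubmission("M1", 3, 3), rows))  # flagged for follow-up
```

Returning a list of issues, rather than raising on the first one, mirrors the idea of resolving discrepancies with the submitting organization before results are reported.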

Turning to how WCHQ’s member organizations use these data, Queram used UW Health, the academic practice group for the University of Wisconsin medical school faculty, as an example. UW Health joined WCHQ in 2004 and began sharing enterprise-transparent clinical level reports in 2009, department-transparent provider level reports in 2010,

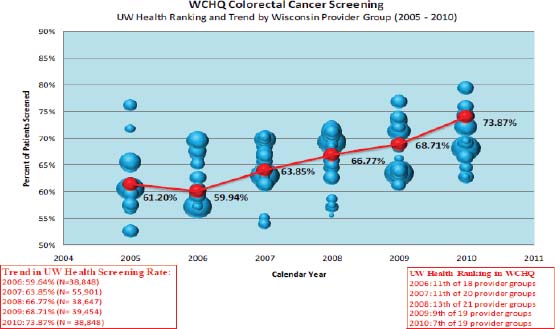

FIGURE 7-1 WCHQ organizational-level report.

SOURCE: Queram, 2012.

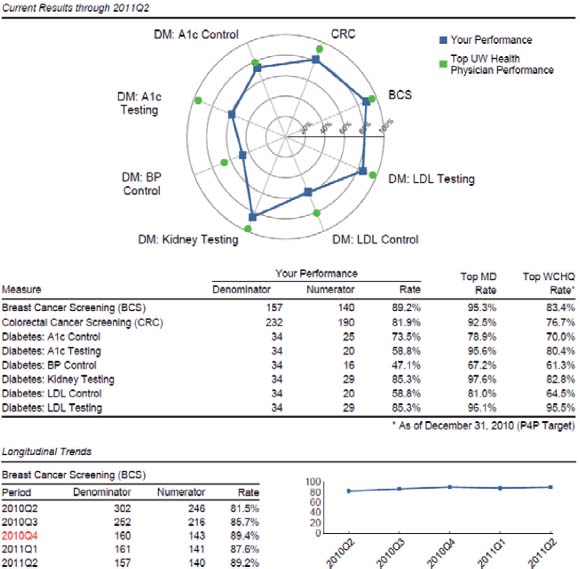

and enterprise-transparent provider reports in 2012. Queram displayed examples of the organizational level (see Figure 7-1) and provider level (see Figure 7-2) reports that WCHQ generates and showed how UW Health uses the data to track colorectal cancer screening rates of its 23 clinics and then by provider at each clinic over time. UW Health has used these data to improve colorectal cancer screening rates across all of its clinics, from 61 percent in 2005 to 79 percent in 2011, which was better than the 61 percent to 73 percent improvement seen across the entire WCHQ population.

WCHQ makes its reports available through its webpage, and Queram noted that most of the visits to the website are by provider organizations that use the reports as benchmarks for their own organizations’ performances. Few consumers have used the site, he added, because the data are available largely in raw form, and WCHQ is now experimenting with a number of different approaches for repurposing and repackaging the data for presentation on a consumer-oriented website. As for the future direction of WCHQ, Queram said that the organization has started developing the mechanisms for reporting at the clinic or practice level and plans to begin public reporting of practice site–level data for all ambulatory care measures in May 2013. This will provide individual and provider organizations with a more granular depiction of performance. He added that one of the major lessons that WCHQ has learned is that there is no substitute for leadership from senior level executives and thought leaders, both for conceptualizing possibilities and managing expectations over time. It was also important to spend time with diverse groups to develop buy-in and to build the social capital needed to develop and grow. Furthermore, WCHQ has developed and published an evidence base, finding a correlation between public reporting and improvement (Smith et al., 2012).

In a final remark, he said that organizations such as WCHQ are learning that they are fragile in terms of their organization and market structure. “There has been a commoditization of performance measurement in the last decade,” he said. “For all of the good intentions and emphasis of these national level activities, it’s crowding out the human and financial capital that’s critical to support local activities.” He implored the workshop attendees to help redress this trend and create a balance that will enable important community-level work to continue.

FIGURE 7-2 WCHQ provider-level report.

SOURCE: Queram, 2012.

BUILDING THE DATA INFRASTRUCTURE IN A HEALTH CARE ENVIRONMENT

Bruce Ferguson began the workshop’s final presentation with a brief comment about the concept of a global outcome score—the proportion of potentially preventable adverse events that are actually prevented with the current level of care—as an actionable metric that can be used to assess the potential effectiveness of different interventions in a real-world setting. This type of score can be used to set target goals, which is where the quality improvement process starts. The success of the global outcome score also highlights the value of local information, and Ferguson reiterated Queram’s final comment about the increasing difficulty in getting the resources needed to collect data at the local level (Eddy et al., 2012).
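The definition of the global outcome score given above, the proportion of potentially preventable adverse events that are actually prevented, reduces to a simple ratio. The sketch below assumes that both event counts have already been estimated by some outside method; the function names and the example figures are hypothetical and are not taken from Eddy et al. (2012).

```python
def global_outcome_score(preventable_events: float, events_prevented: float) -> float:
    """Share of potentially preventable adverse events that were prevented.

    Both inputs are assumed to come from an upstream estimate of how many
    events would occur without care and how many occur with current care.
    """
    if preventable_events <= 0:
        raise ValueError("preventable_events must be positive")
    if not 0 <= events_prevented <= preventable_events:
        raise ValueError("events_prevented must lie between 0 and preventable_events")
    return events_prevented / preventable_events


def additional_events_to_prevent(
    preventable_events: float, events_prevented: float, target_score: float
) -> float:
    """Events still to be prevented to reach a target score (0 if already met)."""
    needed = target_score * preventable_events - events_prevented
    return max(0.0, needed)


# Hypothetical figures for illustration only.
score = global_outcome_score(preventable_events=200, events_prevented=130)
gap = additional_events_to_prevent(200, 130, target_score=0.80)
print(f"score = {score:.2f}, additional events to prevent = {gap:.0f}")
```

Expressing the target as a number of additional events to prevent is one way to turn the score into the kind of concrete goal that a quality improvement process can start from.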

Local centers, Ferguson said, create a data stream, consisting of clinical data, quality data, operational data, and administrative data, that can be augmented by electronic health records. By integrating this data stream with existing electronic health record systems, the data stream becomes the resource for populating the daily dashboard and for monthly and quarterly scorecard data, the tools that an ever-increasing number of academic medical institutions are using to assess their current status and design their futures. This structure also enables just-in-time research and learning, Ferguson said, and therefore can be a valuable resource for designing core metrics.

Ferguson showed an example of how this data stream can be used to catalyze improvements in care. Using an efficiency measure for coronary artery bypass, it was easy to identify one physician who fell into the high-cost, low-quality category and another physician who was in the low-cost, high-quality category. Putting these two physicians together produced improvements in quality quickly. In another example at a regional level, the data stream was used to analyze the cost of care for aortic valve prostheses and showed that the main driver of health care costs was surgical complications.

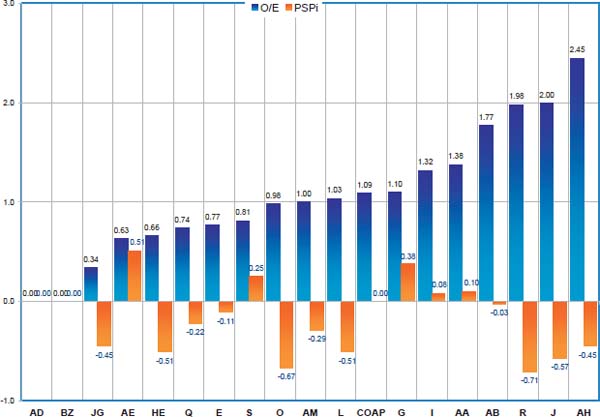

Ferguson also commented that mortality can be a misleading metric if it is not interpreted in the correct way. Two hospitals could have similar mortality rates overall, he said, but a closer look might find that patients who might be expected to have a low risk of mortality are in fact dying at a higher rate than expected. Using observed and predicted mortality rates, Ferguson and his colleagues calculate a patient-specific performance indicator, which evaluates a hospital’s performance by incorporating the predicted risks of the patient with a specific event into a performance indicator module, where a positive score indicates better-than-expected performance and a negative score indicates worse-than-expected performance. Comparing these two methods shows little correlation between the two measures (see Figure 7-3).
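The point that two hospitals with the same overall mortality can differ sharply among low-risk patients can be made concrete with a small example. This sketch is not the patient-specific performance indicator that Ferguson described, whose formula is not given here; it is a simplified stand-in that compares expected and observed deaths within risk strata, using invented data, an assumed risk threshold, and the same sign convention (positive means better than expected).

```python
from collections import defaultdict

LOW_RISK_THRESHOLD = 0.05  # assumed cut-off between low- and high-risk patients


def make_hospital(low_deaths, high_deaths, n_per_group=100):
    """Invented cohort: (predicted_risk, died) pairs for two risk groups."""
    low = [(0.02, i < low_deaths) for i in range(n_per_group)]
    high = [(0.10, i < high_deaths) for i in range(n_per_group)]
    return low + high


def crude_mortality(patients):
    """Overall death rate, ignoring predicted risk."""
    return sum(died for _, died in patients) / len(patients)


def by_stratum(patients):
    """Expected vs. observed deaths per risk stratum.

    expected_minus_observed > 0 means fewer deaths than predicted
    (better than expected); < 0 means more deaths than predicted.
    """
    totals = defaultdict(lambda: {"n": 0, "expected": 0.0, "observed": 0})
    for risk, died in patients:
        stratum = totals["low" if risk < LOW_RISK_THRESHOLD else "high"]
        stratum["n"] += 1
        stratum["expected"] += risk
        stratum["observed"] += int(died)
    for stratum in totals.values():
        stratum["expected_minus_observed"] = stratum["expected"] - stratum["observed"]
    return dict(totals)


hospital_a = make_hospital(low_deaths=2, high_deaths=10)
hospital_b = make_hospital(low_deaths=6, high_deaths=6)

for name, cohort in (("A", hospital_a), ("B", hospital_b)):
    strata = by_stratum(cohort)
    print(
        f"Hospital {name}: crude mortality {crude_mortality(cohort):.3f}, "
        f"low-risk expected-minus-observed "
        f"{strata['low']['expected_minus_observed']:+.1f}"
    )
```

In this invented data both hospitals have the same crude mortality, yet Hospital B loses more low-risk patients than predicted, which only a risk-aware comparison reveals.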

Commenting on the universal patient identifier, Ferguson said that the cost to implement the universal patient identifier ranges from $1.5 billion to $11 billion but that the return on investment from having this identifier in combination with electronic health records would be $10 billion to $20 billion annually as a result of decreasing the inefficiency that now occurs in exchanging health information. He also remarked that technology can help overcome some of the obstacles that are impeding physician adoption of electronic health records. As an example, he cited a new tool he has been involved in developing that could incorporate clinical information directly into the health record with structured data elements instead of free-text notes.

FIGURE 7-3 Comparison of two different mortality measures (the observed versus expected mortality measures [black] and patient-specific performance indicator [gray]), which found little correlation between the two methods.

SOURCE: Ferguson, 2012.

Ferguson concluded by stating that he believes there are important new local and regional opportunities for taking real-time data and making inferences for use in real-time decision making. He added that these technology developments are quite robust as they come down the development pipeline. It will be important, though, to evaluate the realities of the clinical environment in which national core metrics will operate and to evaluate how well the core metrics can be effectively executed in these clinical environments.

During the ensuing discussion, a comment was made that it is important to start thinking now about how to pull clinical data out of registries and combine it with information-rich population data in a unified data stream. Another participant added that there is a real need for tools to turn these data into information that is useful to consumers, not just health care professionals. Larsen noted that he believes the creative marketplace will develop those tools in a cost-effective manner. He added, though, that organizations will have to undergo a culture change in order to recognize the need to make their data transparent to consumers.

A participant described in detail a system that the American College of Surgeons has rolled out across the country. This Rapid Quality Reporting System focuses initially on adherence to the National Quality Forum recommendations regarding post-surgical adjuvant therapy for breast and colorectal cancers. This system allows individual providers, physicians, and nurses to input a parsimonious dataset for each individual cancer patient via a Web-based portal. The data are processed at the college’s Chicago headquarters and are then available for individual hospital sites to track their patients over time so that they are beginning therapy on schedule. Patient data are aggregated at the individual and hospital level. One key question about this system, the participant noted, is how to make this information available to the broader community. Another workshop attendee remarked that this effort shows that it does not need to take years and millions of dollars to create clinical database structures.

A participant who serves as a chief medical officer at a hospital said that standards need to be created to enable data transfer from electronic health records directly into registries such as the one that the American College of Surgeons developed. Such standards would have a significant impact on the burden of inputting data into these systems. Another participant, while recognizing the power of these disease-specific registries to improve care, wondered how it would be possible to connect these registries, given their increasing number. He noted an earlier comment that one hospital has 23 of these registries and has to populate data for each of them manually from the electronic health record.

REFERENCES

Eddy, D. M., J. Adler, and M. Morris. 2012. The “Global Outcomes Score”: A quality measure, based on health outcomes, that compares current care to a target level of care. Health Affairs 31(11):2441–2450.

Ferguson, T. B. 2012. Building the Data Infrastructure in a Health Care Environment. Presentation at IOM Workshop on Core Metrics for Better Care, Better Health, and Lower Costs, Irvine, CA.

Hillestad, R., J. Bigelow, A. Bower, F. Girosi, R. Meili, R. Scoville, and R. Taylor. 2005. Can electronic medical record systems transform health care? Potential health benefits, savings, and costs. Health Affairs 24(5):1103–1117.

Queram, C. J. 2012. Case Examples of Building the Infrastructure: Wisconsin Collaborative for Healthcare Quality. Presentation at IOM Workshop on Core Metrics for Better Care, Better Health, and Lower Costs, Irvine, CA.

Smith, M. A., A. Wright, C. Queram, and G. C. Lamb. 2012. Public reporting helped drive quality improvement in outpatient diabetes care among Wisconsin physician groups. Health Affairs 31(3):570–577.