Design of Materials—Session Summary

MATERIALS GENOMICS PAST AND FUTURE FROM CALPHAD TO FLIGHT

Gregory B. Olson, Walter P. Murphy Professor of Materials Science and Engineering, Northwestern University; Chief Science Officer, QuesTek Innovations LLC

Dr. Olson began his presentation by restating the goals of President Obama’s Materials Genome Initiative, which was announced in June 2011: to expand the system of fundamental materials databases and cut materials development cycles in half or more. He pointed to the study Accelerating Technology Transition: Bridging the Valley of Death for Materials and Processes (National Research Council, 2004), which used the Human Genome project as a model for recommending the construction of a fundamental database for materials, with the White House Office of Science and Technology Policy (OSTP) leading the effort. The purpose of a materials genome project would be to promote science-based engineering in materials instead of empirically based engineering, as is common today. While implementation of this recommendation has begun with the Materials Genome Initiative, Dr. Olson reiterated his support for the NRC’s recommendation and said that he believes that focused support is necessary to make significant progress.

Dr. Olson then described what he believes to be the most significant milestone in materials genomics: the flight test of the QuesTek Ferrium S53 aircraft landing gear. Completed in December 2010, this was the first fully computationally designed and qualified material. The flight test provides a benchmark for the maturity level of the CALPHAD (calculation of phase diagrams) technology, which is described below.

Dr. Olson went on to discuss the materials genome timeline and the CALPHAD process, which has been under development for more than 50 years. The scope of CALPHAD has broadened beyond phase diagrams to include molar volumes, atomic mobilities, and elastic constants. Formerly an empirical science, CALPHAD is increasingly based on density functional theory (DFT) calculations. The CALPHAD community brings together metals and ceramics. There are other communities in fields such as organics that use the same tools and techniques; the concept is to build a strategy to span all classes of materials. In the 1980s researchers successfully applied a CALPHAD-based process to predictively design a set of high-performance steels, which are now commercially available. Researchers then expanded into other metallic systems, followed by demonstration projects in polymers and ceramics. The successes in computational materials design helped the integrated computational materials engineering (ICME) process initiated by the DARPA-AIM program to gain acceptance, leading to a full optimization at the component level to predict design allowables and to put materials into use more rapidly. Full ICME has been demonstrated in metallics only, but Dr. Olson believes there is little question that the process will move to all materials, given the generic character of the CALPHAD-based approach.

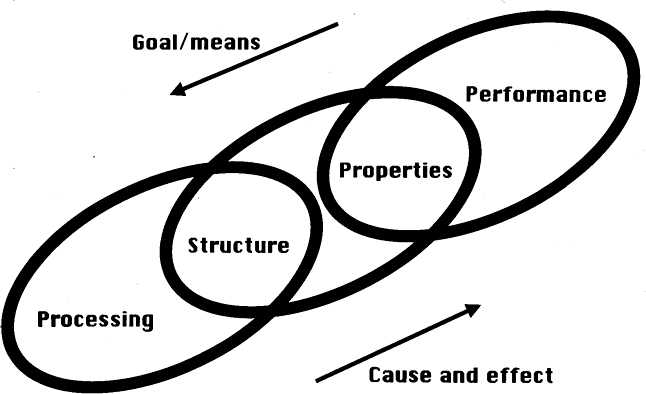

Dr. Olson then described how Cyril Stanley Smith wrote extensively about the concept of multiscale interactive structural hierarchy of materials and how to apply a systems approach to materials. Morris Cohen described this as the reciprocity between the opposite philosophies of science and engineering, in which science, and its deductive cause-and-effect approach, flows in one direction, while engineering, and its inductive goals-means relations, flows in the opposite direction. This is shown schematically in Figure 4.1. The idea of reciprocity forms the backbone of a systems approach to the science-based engineering of materials. Each material design consists of performance metrics that map back to a set of quantitative property objectives that are connected to the structural hierarchy within the material. Process/structure/property relations can be identified and prioritized, and predictive models can be built. In particular, mechanistic models can be deliberately structured to extract maximum value from existing fundamental database systems.

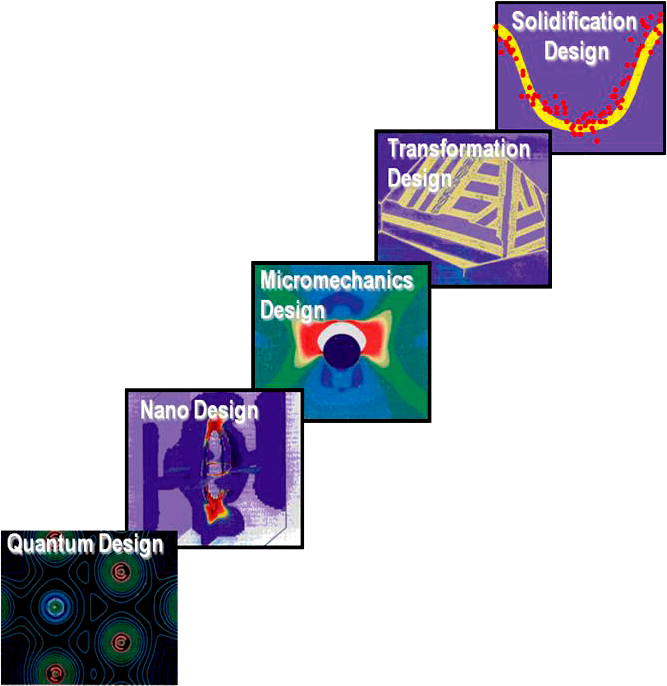

FIGURE 4.1 Cohen’s reciprocity, showing the reciprocity between science and engineering, in which science, and its deductive cause-and-effect approach, flows in one direction, while engineering, and its inductive goals-means relations, flows in the opposite direction. SOURCE: Gregory Olson, Northwestern University, presentation to the Standing Committee on Defense Materials, Manufacturing and Infrastructure on December 5, 2012, slide 7. From G.B. Olson, 1997, Computational design of hierarchically structured materials, Science 277(5330): 1237-1242.

Dr. Olson then explained a resulting hierarchy of design models, shown in Figure 4.2. He pointed out that at the most fundamental level, the maximum impact of the all-electron DFT calculations has been in the prediction of surface thermodynamic quantities, which are the most difficult to measure. The greatest accuracy and control have been in nanoscale precipitation behavior and associated strengthening behavior. Researchers have been able to integrate applied mechanics in simulating fracture and other failure modes. They have been able to include processability in the integration of all the solid state transformation models. Researchers have also integrated solidification behavior, both in the accurate prediction of microsegregation as a function of process scale and with liquid buoyancy models, which can set constraints on macrosegregation phenomena. The model hierarchy integrates materials science, quantum mechanics, and applied mechanics. To get the maximum value out of the supporting database structure, the Thermo-Calc software is the central integrator of those systems. Parametric design (2D mappings) allows one to arrive at unique compositions and process temperatures with specified tolerances.

Dr. Olson then described the first four commercial cybersteels on the market, which include two types of aircraft landing gear steels and two types of case-hardened gear steels for automotive applications. All four of these alloys exploited an optimization of M2C alloy carbides at a particle size of about 3 nm, providing a high level of strengthening efficiency, with 50 percent higher strength at a given carbon content than conventional steels.

FIGURE 4.2 Hierarchy of design models from the smallest length scales with quantum design all the way to solidification models needed in order to accurately predict the behavior of objects such as landing gears. SOURCE: Gregory Olson, Northwestern University, presentation to the Standing Committee on Defense Materials, Manufacturing and Infrastructure on December 5, 2012, slide 9. From G.B. Olson, 2000, Designing a new material world, Science 288(5468): 993-998.

Dr. Olson then discussed the Accelerated Insertion of Materials (AIM) program, an initiative sponsored by DARPA and conducted in collaboration with GE Aircraft Engines and Pratt & Whitney. The metals phase of the project involved a component-level process optimization for aeroturbine disc alloys, and research was able to show there is enough accuracy to predict small spatial variation and micromechanical properties. The difficulty of this project was that the data sets were very small: large enough to determine the first and second moments of yield strength but not the shape of the distribution function. Researchers addressed this by using mechanistic Monte Carlo simulations to predict the shape of the distribution function of yield strength, and they integrated sparse data through linear transformation.
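The idea of combining a simulated distribution shape with sparse measurements can be illustrated with a minimal sketch. Everything below is an assumption made for illustration: the toy strength model, its coefficients, and the five "measured" values are hypothetical and are not taken from the AIM program. The sketch draws a distribution shape from a stand-in mechanistic model, then applies a linear transformation so that the simulated mean and standard deviation (the first and second moments) match the small data set.

```python
import random
import statistics


def simulate_yield_strengths(n, seed=0):
    """Draw n yield strengths (MPa) from a toy mechanistic model.

    Hypothetical model: a base strength plus a precipitate term plus a
    grain-size term. The coefficients are illustrative only.
    """
    rng = random.Random(seed)
    samples = []
    for _ in range(n):
        precip_fraction = rng.gauss(0.45, 0.03)        # illustrative spread
        grain_size_um = rng.lognormvariate(2.3, 0.25)  # roughly 10 micrometers
        strength = 400.0 + 1200.0 * precip_fraction + 750.0 / grain_size_um ** 0.5
        samples.append(strength)
    return samples


def rescale_to_data(simulated, measured):
    """Linear transformation y = a*x + b matching the measured mean and std.

    The shape of the simulated distribution is preserved; only its location
    and scale are tied to the sparse measurements.
    """
    a = statistics.stdev(measured) / statistics.stdev(simulated)
    b = statistics.mean(measured) - a * statistics.mean(simulated)
    return [a * x + b for x in simulated]


# Hypothetical sparse data set: enough for a mean and a standard deviation,
# far too small to resolve the tails of the distribution.
measured = [1010.0, 1035.0, 998.0, 1042.0, 1021.0]

simulated = simulate_yield_strengths(20000)
calibrated = rescale_to_data(simulated, measured)

# A low percentile of the calibrated distribution, as a stand-in for a
# minimum-property estimate.
first_percentile = sorted(calibrated)[int(0.01 * len(calibrated))]
print(f"Estimated 1st-percentile yield strength: {first_percentile:.0f} MPa")
```

The design choice worth noting is that the measurements fix only two numbers (location and scale), while the tail behavior comes entirely from the model, so the estimate is only as good as the mechanistic model behind it.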

Dr. Olson then described the qualification process for S53 and M54, the two landing gear steels developed by QuesTek. It took 8 years from material design to qualification for S53, and QuesTek is on track to get M54 qualified within 5 years. This computationally based qualification is much accelerated compared to the standard 10-20 year development cycle.

Closing the loop with the first workshop topic, additive manufacturing, Dr. Olson then described the DARPA Open Manufacturing project conducted collaboratively with Honeywell. The project focused on controlling the laser-based additive manufacturing of a nickel-based 718+ alloy. This work will enable calibrated design tools to create new alloys optimized for the capabilities of the additive manufacturing process, particularly alloys that can exploit faster solidification. He said that principles established in weld metal design can be adapted to additive manufacturing.

Addressing the second workshop topic, the electromagnetic manipulation of properties, Dr. Olson discussed magnetic field effects on steel. His research looked at high (12-17 T) magnetic field effects on the thermodynamics and kinetics of the martensitic transformations in steels. Dr. Olson and his team have recently exploited that knowledge to expand the performance envelope of secondary hardening gear steels. They would like to reduce the gear weight by half relative to current materials. While strengthening the steel with nanoscale carbides is possible, adding that much carbon (as well as nickel, which is needed for core toughness) results in a transformation temperature that is unacceptably low for conventional processing. However, the desired properties of the steel can be attained with combinations of cryogenic high-magnetic-field processing and multistep tempering.

Dr. Olson then turned to the third workshop topic, a vision for design and databases. One area of opportunity, he said, is in a large-scale public database system, which could reside at the National Institute of Standards and Technology (NIST). He suggested that this database should consist essentially of protodata, that is, data below the assessed genome level: raw information on experimentally measured phase relations, thermochemical measurements, and DFT calculations. That data could be pooled and periodically assessed to generate usable databases with public and private development channels. There are also opportunities at the database level, he said, including the D3D project (which will be described below). There is a public website maintained by the Minerals, Metals, and Materials Society (see http://www.tms.org) that stores 3D microstructural information. The standard database for materials selection is the set of discrete properties of materials searchable by the Ashby materials selection system. The opportunity exists to use the same architecture to search all databases at all levels, to select atoms and components the same way that higher-level systems are selected. Another opportunity is to hand off a material accompanied by its associated information system to adapt to future manufacturing/field experience; this opens up the possibility of moving beyond discrete properties into a system based on constitutive modeling with state variables for the microstructure of the material.

D3D was a QuesTek-led Office of Naval Research/DARPA-sponsored university consortium that brought together a suite of tomographic characterization and simulation tools for multiple scales. The properties of interest were strength, toughness, and fatigue resistance (particularly fatigue nucleation). Most structural component designs are currently strength-driven, but some are fatigue-limited, and quantifying fatigue properties properly takes too much data. The new frontier for this technology is to accurately predict minimum fatigue strength.

Dr. Olson was questioned about the types of models that should be used: data-driven or physics-based. He responded that the most successful projects use physics-based models, taking a mechanistic modeling approach rather than a data-driven one. A data-driven model might be good as an interim measure, he explained, but certain types of problems (such as fatigue) are too difficult to resolve with a data-driven model because such models require too much empirical data and tend to provide only superficial information. Modeling-based approaches can define new experiments and sometimes lead to new discoveries.

Another questioner wanted to know about the use of DFT as the basis for CALPHAD. The questioner pointed out that this was rather unsatisfying, as this type of modeling is of limited accuracy. Dr. Olson responded that these methods are still useful provided uncertainty is quantified. In surface thermodynamics, techniques are almost always DFT-based, and the accuracy has been sufficient to achieve substantial gains in grain boundary cohesion for enhanced stress corrosion resistance.

Another participant stated that in the last 15 years, six NRC reports have discussed the need for federal support for fundamental materials databases, but nothing is really taking place. Dr. Olson responded that the database project is happening, but not with enough force behind it; he would like to see more Human Genome-like energy behind it.

Referring to Cohen’s reciprocity (Figure 4.1), a participant pointed out that it is easier to go from processing to performance than it is from performance to processing. Dr. Olson said that this is universally true: It is easier to move from the science to the engineering than from the engineering to the science. Materials science and engineering is no different from any other branch of technology; the dominance of science over engineering in the study of materials has been a limitation. He recommended “legalization” of engineering at our universities.

When asked how this dovetails with industry, Dr. Olson said it is essential that a material be designed for scale to make sure there will be no degradation in properties when moving up in size. Speaking specifically about costs and his own experience with QuesTek, he said that in the accelerated qualification of the stainless steel, QuesTek was able to share necessary inventory costs with the supplier because there was shared confidence in model accuracy.

SOME STEELS ON THE VERGE OF INDUSTRIALIZATION

John Speer, Professor, Department of Metallurgical and Materials Engineering, Colorado School of Mines

Dr. Speer began his presentation by describing partnerships at the Colorado School of Mines Advanced Steel Processing and Products Research Center (ASPPRC). The School of Mines partners primarily with industry, along with Los Alamos National Laboratory. The school is interested in conducting research that involves students, producers, and users of steel. In the 1990s sponsors were all based in North America; today, the partnerships are global and serve a diverse group of needs and perspectives. The school also performs some DOE- and NSF-sponsored programs.

Dr. Speer explained that he would not be giving a design talk, but rather would be discussing new steels of interest in the industrial realm that might have crossover application in defense. The steels under consideration include steels for automotive structures, pipeline steels, sheet products, plates, bars, heat treating, construction steels, and fire-resistant steels.

Dr. Speer provided an example in the steel manufacturing industry of a company (ArcelorMittal) that produced 172 billion lb of steel in 2011, at a profit of 1.3 ¢/lb. This figure underscores the scale of production and how cost is critical in the steel industry. It is a different paradigm compared to something like additive manufacturing in that steel manufacturing is a large-scale industry with severe cost constraints. Manufacturing issues often control the implementation of steel product research and development. These issues can include facilities, capital requirements, conversion costs, and end-user fabrication requirements (such as forming, joining, and painting).
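The scale implied by these two figures can be made concrete with a short calculation. This is only the arithmetic that follows from the numbers quoted in the talk, taken at face value; the 0.1 ¢/lb cost shift is a hypothetical value chosen to illustrate sensitivity.

```python
# Figures quoted in the presentation (taken at face value).
POUNDS_PRODUCED = 172e9          # lb of steel in 2011
PROFIT_CENTS_PER_LB = 1.3        # cents of profit per lb

# Total profit implied by the two figures, in US dollars.
total_profit_usd = POUNDS_PRODUCED * PROFIT_CENTS_PER_LB / 100.0

# Hypothetical sensitivity: what a 0.1 cent/lb change in cost would mean.
COST_SHIFT_CENTS_PER_LB = 0.1
profit_change_usd = POUNDS_PRODUCED * COST_SHIFT_CENTS_PER_LB / 100.0

print(f"Implied total profit: ${total_profit_usd / 1e9:.2f} billion")
print(f"Effect of a 0.1 cent/lb cost change: ${profit_change_usd / 1e6:.0f} million")
```

At this volume, a cost change of a tenth of a cent per pound is a meaningful fraction of the total margin, which is the sense in which cost constraints dominate the industry.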

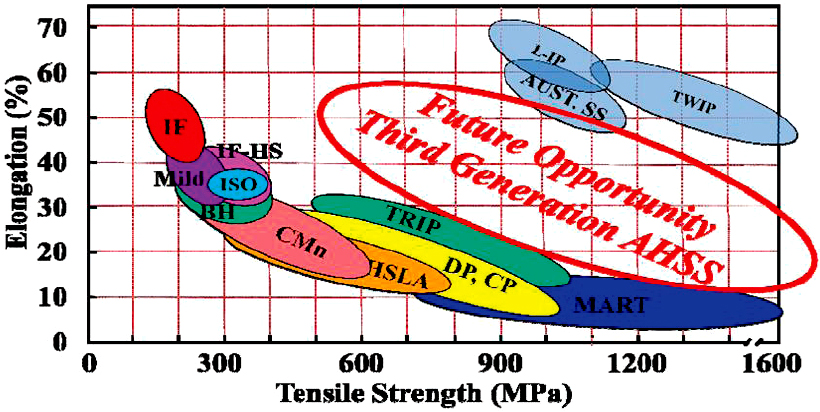

FIGURE 4.3 Elongation versus tensile strength for a variety of steels, showing conventional steels in the lower left, high-cost austenitic steels in the upper right, and the area of opportunity in AHSS between them. SOURCE: John Speer, Colorado School of Mines, presentation to the Standing Committee on Defense Materials, Manufacturing and Infrastructure on December 5, 2012, slide 29, originally from the American Iron and Steel Institute.

Dr. Speer then described the role of automotive “lightweighting” as a research driver in steel. He discussed elongation versus tensile strength (Figure 4.3). The austenitic steels referenced in the upper right corner of the figure are usually not cost-competitive enough to be used on a massive scale, and researchers are seeking materials with better strength properties than the conventional steels included in the lower left of the figure. The gap in the middle is considered to be the materials design space, referred to as third-generation advanced high-strength steels (AHSS), where cost and performance requirements might be achieved. Dr. Speer explained that austenite is a crucial component of the microstructure in third-generation steels, which could be valuable to the defense industry as well as civilian industry, where attention is currently focused. These steels can be processed in a variety of ways to create different microstructures and different strength/formability/toughness properties.

Dr. Speer highlighted several types of advanced high-strength steels under consideration, including quenched and partitioned (Q&P) and medium-manganese (med-Mn) steels. He first described the Q&P process developed at the ASPPRC. First, the steel is heated into the austenitic phase field, and then it is quenched to form martensite. A unique aspect of the processing is that the quench is interrupted at a carefully selected, elevated temperature so that the material contains martensite along with untransformed austenite. There is a driving force for carbon atoms to migrate from the martensite into the austenite; that carbon stabilizes the austenite to room temperature more effectively than if the steel had been quenched directly to room temperature. Effectively, carbon is used as a “cheap” austenite stabilizer. A Chinese company, Baosteel, is the first company to produce Q&P steels commercially (with or without a corrosion-resistant coating); these steels have been in limited production and used in vehicles since January 2012.
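How the choice of interrupted-quench temperature sets the martensite/austenite balance can be sketched with the Koistinen-Marburger relation, a widely used empirical expression for the fraction of martensite formed on cooling below the martensite start temperature. The presentation summary does not state which relation was used, so this is an illustrative assumption; the martensite start temperature below is hypothetical, and the steel is assumed to be fully austenitic before quenching.

```python
import math

# Rate constant commonly quoted for the Koistinen-Marburger relation (per deg C).
KM_RATE_PER_C = 0.011


def martensite_fraction(ms_c, quench_c, rate=KM_RATE_PER_C):
    """Fraction of austenite transformed to martensite at the quench temperature.

    Koistinen-Marburger: f = 1 - exp(-rate * (Ms - T)) for T below Ms,
    and zero at or above Ms.
    """
    if quench_c >= ms_c:
        return 0.0
    return 1.0 - math.exp(-rate * (ms_c - quench_c))


# Hypothetical martensite start temperature for an illustrative alloy (deg C).
MS_C = 350.0

for quench_c in (300.0, 250.0, 200.0, 25.0):
    f_martensite = martensite_fraction(MS_C, quench_c)
    f_austenite = 1.0 - f_martensite
    print(f"Quench to {quench_c:5.0f} C: "
          f"martensite {f_martensite:.2f}, untransformed austenite {f_austenite:.2f}")
```

The sketch covers only the quench step: a higher interrupt temperature leaves more untransformed austenite available for carbon enrichment, but how much of it survives to room temperature depends on the partitioning step, which is not modeled here.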

The second concept Dr. Speer described was med-Mn steel. Manganese is an austenite stabilizer. The addition of manganese changes the microstructure of steel, and researchers at ASPPRC have stabilized 20-40 percent austenite through long-term annealing and the addition of Mn. The microstructure is very interesting, with fine grains (approximately 1 μm grain size). Research indicates that the annealing does not need to be done for weeks at a time; instead, the processing can be done in minutes to obtain Mn partitioning over short length scales.

Dr. Speer’s final example was press-hardenable steels for hot stamping. The hot stamping concept has been around for more than 20 years and is growing in importance in the automotive industry. The concept is as follows: rather than heat treating a formed component to a high-strength martensitic microstructure, a flat piece of sheet material is heated to the austenitic field, then formed and die quenched. In this way, one can make high-strength products of complicated shape. Current research opportunities include increasing the sophistication of the heat treatments and exploring the use of zinc coatings.

In response to questions about cost, Dr. Speer pointed out that because steels are produced in high volume with lean alloy chemistries, small changes in chemical composition can change the cost of production. Thermomechanical processing does not add much to the cost if the capability is already in existence, though it can slow production rates in some applications (for instance, it takes time to cool during rolling, which can influence productivity). He also pointed out that while the automotive industry will always want more performance at a lower cost, the defense industry tends to be performance-driven, with cost constraints that are somewhat less severe.

Dr. Speer was asked about the increasingly complex contours in contemporary automobiles and if that has been made possible by alloy technology. He responded that shape technology has benefitted from improvements in forming and modeling technologies, thus enabling the use of stronger steels. There have been steady improvements in steel performance and corrosion resistance, but those are not the factors that would promote radical changes to body design.

During a discussion of the Q&P process, Dr. Speer clarified that, to date, Q&P has only been applied to sheets, not thick sections. For the Q&P process to be effective with thick section steel, the steel would have to be redesigned to enable a transformation response due to the cooling, and a method for controlling the quenching temperatures in the Q&P process in a thick section would be required.

A participant asked about global activity in steels research and production. Dr. Speer replied that steel production is stable in the United States and Europe. China has increased its steel production from about 100 million tons/year to 700 million tons/year over the past 15 years or so, while the rest of the world has remained stable at something over 600 million tons/year. Research activity is also increasing in the rest of Asia.

A participant pointed out that steel strengths are increasing and asked at what point steel could outperform aluminum. Dr. Speer said there is continued pressure for all materials to achieve higher strengths. There will always be niche applications for different materials, he said. The competition between different materials has been dynamic for many years. For example, aluminum would be considered more competitive in automotive engine blocks and perhaps less so in body structures, but other participants noted that steel is superior in crashworthiness and recycling.

DATA-DRIVEN MATERIALS CODESIGN

Krishna Rajan, Wilkinson Professor of Interdisciplinary Engineering, Department of Materials Science and Engineering, Iowa State University

Dr. Rajan began his presentation by explaining his take-home messages. First, he said that just as biology has collected information about its many important molecules, formed it into massive databases, and appropriated -omics as a tag for some of its component disciplines, materials science should also look to informatics to enable “materials by design.” Informatics involves a statistically based learning process—with physics, chemistry, and materials science all coupled together. Second, he said that his goal is to change materials databases from repositories where one can conduct search and retrieval exercises into laboratories where new and unexpected discoveries can be made. In his presentation, Dr. Rajan explained the co-design process, gave examples of co-design problems currently being addressed by DOD, and ended with a suggestion on how to integrate this information.

Dr. Rajan emphasized the importance of considering design manufacturing issues at the beginning of any materials development, as these issues are the main difference between discovering new materials and designing new materials. He said that a challenging goal in materials design is to discover the links that can help explain materials behavior across critical length scales. This was a focus of the AIM program of DOD over a decade ago; Dr. Rajan believes that AIM was one of the first major efforts to get the materials science and engineering design communities to establish a materials-mechanism-based design strategy for engineering systems. AIM highlighted the need to find ways to cooperatively and simultaneously design an engineering system from both the macro- and microscale. Enabling the inclusion of design manufacturing issues from the beginning of materials development is at the heart of this “co-design” process. Co-design is the cooperative design of materials taking into account, in parallel, the life cycle of materials: extraction, synthesis, processing, manufacturing, and recycling.

Dr. Rajan then went on to describe informatics. Informatics is a strategy to integrate information of all types (including experiments, modeling, data, and theory) in an environment where there is no preexisting model. Informatics in the context of materials design is the computational strategy of integrating information associated with material structure, chemistry, and performance so as to extract patterns of multiscale behavior in materials. The requirements of statistical learning/machine learning guide the experiment. Dr. Rajan referred to this as “omics”: a whole set of length and timescale problems. He described multiscale modeling, which looks at different length scales and timescales, and where the interconnections and linkages between material structure, chemistry, and performance are not known. This becomes a network problem: Connections may be greater at higher length or timescales than at other places in the network, and a design process may be able to “leap” length scales or timescales because of how the information is networked together. Dr. Rajan pointed out that, currently, the primary ways to rapidly accelerate discovery and make unexpected jumps in materials design are (1) unexpected discovery or (2) failures. He pointed out that Nobel prizes that had a materials science component (such as superconducting ceramics, conductive polymers, quasicrystals, and Buckyballs) were outliers, or unexpected discoveries. Engineering failure analysis is another (unfortunate) way to discover material properties that cut across length scales in an accelerated manner. He wondered whether there are other ways to collect information that would lead to faster progress in materials design.

Dr. Rajan then described the genome equations: Each material can be described as a function of different state variables. The critical issue is then determining which variables are important in the engineering environment. (It may not be the materials science variables that guide the materials physics.) The next issue is to classify behavior among the variables. Borrowing a concept known as quantitative structure activity relationship (QSAR) from the biology community, Dr. Rajan suggested applying QSAR to assign a weighting parameter to individual variables, based on observed functionality or toxicity of each variable in question. The idea is not to assume a priori which variables are the most important, but to instead apply a systems biology approach to assess which variables are the most significant.

Dr. Rajan then introduced the “big data” framework. This paradigm is also from the health science and social science communities, where there are large volumes of data. He described four aspects of data, known as the four V’s: volume, velocity, variety, and veracity. While one always tries to increase the volume of existing data, situations in which the volume is small, sparse, distributed, or skewed may require the use of predictions. In the case of the velocity variable, because data are received in real time, inferential reasoning regarding their evolution and the in situ dynamics may be required. With the variety variable, data are in different forms: for instance, numbers, qualitative (such as good/bad), unstructured, or multimedia. The challenge is finding useful applications for the data. With the veracity variable, the question becomes one of quantifying uncertainty: given the data on hand, and knowing the skew, sparseness, and uncertainties, can the model be justified?

Dr. Rajan then described how to “map” the materials genome: Determine the dominant features (“genes”) that govern a material’s characteristics and then reduce the dimensional space without losing the physics. In the reduced space, look for patterns, classifications, organizations, and clusters (“sequencing”). This provides a computational framework for the model.
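The two steps described here, reducing the descriptor space and then looking for clusters in the reduced space, can be sketched with standard tools. The choice of principal component analysis and k-means is an assumption made for illustration; the summary does not say which methods were used, and the data below are synthetic stand-ins for material descriptors.

```python
import numpy as np


def pca_reduce(X, n_components=2):
    """Project standardized descriptors onto their leading principal components."""
    Xc = (X - X.mean(axis=0)) / X.std(axis=0)
    # SVD of the standardized data; rows of Vt are the principal directions.
    U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    scores = Xc @ Vt[:n_components].T
    explained = (S ** 2 / np.sum(S ** 2))[:n_components]
    return scores, Vt[:n_components], explained


def kmeans(points, k, n_iter=50, seed=0):
    """Plain k-means: assign each point to the nearest center, then update centers."""
    rng = np.random.default_rng(seed)
    centers = points[rng.choice(len(points), size=k, replace=False)]
    for _ in range(n_iter):
        dists = np.linalg.norm(points[:, None, :] - centers[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        for j in range(k):
            if np.any(labels == j):
                centers[j] = points[labels == j].mean(axis=0)
    return labels, centers


# Synthetic data: two hypothetical material families, six descriptors each.
rng = np.random.default_rng(1)
family_a = rng.normal(loc=0.0, scale=1.0, size=(30, 6))
family_b = rng.normal(loc=4.0, scale=1.0, size=(30, 6))
X = np.vstack([family_a, family_b])

scores, components, explained = pca_reduce(X, n_components=2)
labels, centers = kmeans(scores, k=2)

print("Variance explained by the two components:", np.round(explained, 2))
print("Descriptor weights on the first component:", np.round(components[0], 2))
print("Cluster sizes:", np.bincount(labels))
```

The weights on the first component play the role of the dominant "genes" in this toy setting: they show which descriptors carry most of the variation, while the cluster labels correspond to the classification step in the reduced space.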

Dr. Rajan then described examples of material co-design using big data. The first example he described was research on a drug delivery/vaccine system using co-design. This 5-year project was a Multidisciplinary University Research Initiative (MURI) with the Office of Naval Research on the vaccine and drug delivery for plague. The development bottleneck was that drug delivery material and vaccine are not typically developed in parallel, which adds delay and inefficiency. The goal of this project was to develop the drug delivery polymer, based on known characteristics of the vaccine, to couple the design of each. The research examined the polymer chemistry via a QSAR analysis. In parallel, vaccinated animals were given full body scans to track information at the cellular level. The data were quantified to a matrix, and image processing techniques were applied to develop descriptor ranking and outlier detection. This information was then put together to design a drug and delivery system that would warn the immune system to respond in a certain way when the drug was administered. The end result was that the materials science community developed connections based on the information gathered that the medical community would not have discovered. This is a good example of the co-design approach; the key was to link all the information together in the absence of valid models.

Dr. Rajan then described a second example of co-design based on the big data approach: high-temperature piezoelectric perovskites. In this case, the goal was to increase the Curie temperature while maintaining a material’s ferroelectric properties. To develop this system, the options were (1) combinatorics: make lots of materials and see what happens or (2) computation: generate the minimum-energy solution (although this assumes the physics of the models is well understood). Dr. Rajan explained that when using co-design with the big data method, the figure of merit is inherent to the process. There must be some scalar relationship(s) between the important properties of the material and some variable. In this case, Dr. Rajan explained that researchers developed a tolerance factor and then examined Curie temperature against the tolerance factor. This information was translated into a unified model to predict new chemistries.
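For readers unfamiliar with the idea of a tolerance factor, the classical Goldschmidt tolerance factor for ABO3 perovskites is a useful reference point. The summary does not specify the form of the tolerance factor the researchers developed, so the sketch below should be read as the textbook descriptor rather than their model. The ionic radii are approximate Shannon values (12-coordinate A site, 6-coordinate B site) included for illustration.

```python
import math

# Approximate ionic radius of the oxide ion, in angstroms (illustrative value).
R_OXYGEN = 1.40


def tolerance_factor(r_a, r_b, r_x=R_OXYGEN):
    """Goldschmidt tolerance factor t = (r_A + r_X) / (sqrt(2) * (r_B + r_X)).

    t = 1 corresponds to ideal geometric packing of the cubic perovskite;
    departures from 1 indicate a size mismatch between the A and B sites.
    """
    return (r_a + r_x) / (math.sqrt(2.0) * (r_b + r_x))


# Approximate (A-site, B-site) ionic radii in angstroms, for illustration only.
APPROX_RADII = {
    "BaTiO3": (1.61, 0.605),
    "SrTiO3": (1.44, 0.605),
    "CaTiO3": (1.34, 0.605),
}

for compound, (r_a, r_b) in APPROX_RADII.items():
    print(f"{compound}: t = {tolerance_factor(r_a, r_b):.3f}")
```

A scalar descriptor of this kind is what makes the approach described above workable: once each chemistry maps to a single number, a property such as Curie temperature can be examined against it across many compounds.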

Dr. Rajan then presented a plot demonstrating the contrast between materials development before and after informatics. The plot indicated that after informatics-based design, there are more materials with higher Curie temperatures.

Informatics tells experimentalists what to develop (validation) and tells the computational community how to refine its models and theories. The point is that accelerated design occurs even with limited information.

Dr. Rajan then briefly mentioned a new program using co-design techniques to develop high-temperature superalloys. The idea is to avoid using rare earth elements and other critical elements. He also touched on a project applying principles of co-design to expand design limits for Ashby maps.

In conclusion, Dr. Rajan briefly described the materials bar code idea, in which a digital bar code is embedded in the material itself to encode information about the material’s properties, modeling, evolution of the material properties, qualification, service, and manufacturing. The bar code can bridge the gap between basic materials science and manufacturing and may provide a framework for integrating materials discovery and manufacturing.

OPEN DISCUSSION: DESIGN OF MATERIALS

Discussion Leader: Haydn Wadley, University Professor and Edgar Starke Professor of Materials Science and Engineering, University of Virginia

Dr. Wadley opened the discussion by asking workshop participants what is important in the materials science field that is currently impossible to do. One participant reframed this question slightly by asking for challenge problems that would further the analytical capabilities in the design of materials. The responses focused on two areas. The first was how to match experimentation to models. One participant pointed out that we have heard about successes only, not failures, but that there are instances where fabrication does not match up with predictive approaches. It was suggested that perhaps there should be some statistics on failures so that any database built would be backed with the most meaningful data possible. Another participant noted that when the Human Genome Initiative started, it generated new experimental protocols to create new data; with the Materials Genome Project, the same type of new experimental protocols may be generated, causing a rapid expansion of fundamental databases. This could be the greatest impact of the project. The second area was related to how one could integrate models so they interact over different length scales and how to do that in a single system. However, other participants pointed out that we do currently have the knowledge needed to decouple the system and process in stages to make the system integration problem tractable.

Dr. Wadley then pointed out that a DFT calculation provides resolution on the order of 0.1 eV. Ambient temperature is on the order of 0.05 eV; he asked if that resolution was good enough. Is the materials science community happy with that as state of the art? Others defended the use of DFT calculations, saying that although improvements could always be made to the fidelity of the DFT calculations, in practice most are measured, not calculated. In general, the use of DFT does not appear to be a showstopper in the design process. There is significant knowledge of the relative uncertainties involved.
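The comparison between DFT resolution and thermal energy can be checked with a short calculation. The sketch below is illustrative only and is not from the discussion: it assumes "ambient" means roughly 300 K, uses the standard value of the Boltzmann constant in eV/K, and introduces the hypothetical helper names `thermal_energy_ev` and `resolution_in_kt`. Under that assumption the thermal energy comes out near 0.026 eV, the same order of magnitude as the figure quoted above, so a 0.1 eV resolution corresponds to a few multiples of the thermal energy.

```python
# Boltzmann constant in eV/K (exact under the 2019 SI redefinition, rounded here).
K_B_EV_PER_K = 8.617333262e-5


def thermal_energy_ev(temperature_k: float) -> float:
    """Return the thermal energy k_B * T in eV for a temperature in kelvin."""
    if temperature_k < 0:
        raise ValueError("temperature must be non-negative")
    return K_B_EV_PER_K * temperature_k


def resolution_in_kt(resolution_ev: float, temperature_k: float) -> float:
    """Express an energy resolution as a multiple of k_B * T."""
    kt = thermal_energy_ev(temperature_k)
    if kt == 0:
        raise ValueError("temperature must be positive to form the ratio")
    return resolution_ev / kt


if __name__ == "__main__":
    ambient_k = 300.0        # assumed ambient temperature, not stated in the source
    dft_resolution_ev = 0.1  # order-of-magnitude figure quoted in the discussion

    kt = thermal_energy_ev(ambient_k)
    ratio = resolution_in_kt(dft_resolution_ev, ambient_k)
    print(f"k_B*T at {ambient_k:.0f} K: {kt:.4f} eV")
    print(f"DFT resolution / k_B*T: {ratio:.1f}")
```

Changing `ambient_k` shows how the ratio moves with temperature; the order-of-magnitude conclusion is unchanged anywhere near room temperature.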

Dr. Wadley then posed a question to the group, putting forth a scenario in which Team A is asked to design a material without any experimentation (only modeling), and Team B is asked to design a material without any modeling (only experimentation). He then asked which of these teams would have won 30 years ago, which would win today, and which would win 30 years from now. This question generated a lot of discussion among the participants. One respondent pointed out that for a material to be accepted for use, experiments are necessary. Where experiments are weak is in the quantification of uncertainty from one step to the next; that is where computational tools are necessary. Dr. Olson’s response was that 30 years ago, materials development was purely empirical. Today it is a mixture of experimentation and modeling. The future will likely be driven by models, with experiments necessary to confirm them. It will be a theory-driven approach, which will change the nature of the experiments. Another participant pointed out that for a material to be accepted and qualified for use, experiments will always be necessary. However, experiments do not quantify uncertainty well; for that, computational tools are necessary.

Another participant noted that finite element modeling tools in the mechanical engineering community would be a good benchmark for the materials science community. Current success should not be considered sufficient, and additional functionality, such as informatics, should be a critical element.

A questioner said that presenters at the workshop described the possibility of using magnetic fields as a state variable, which would require vector thermodynamics, and asked if there have been developments in this area. Dr. Olson replied that a parametric design approach uses vector and matrix representations, extracting scalar parameters to use as design parameters. The questioner pointed out that this approach does not provide any information about grain boundary composition. Dr. Olson responded that other principles would apply if one were trying to design grain boundaries. When designing grain boundaries, one tends to design a “typical” boundary rather than the full distribution function.

Another question focused on process control. Dr. Wadley explained that before the (DARPA) AIM program, a program existed at DARPA called Virtual Integrated Processing (VIP), which developed models and controls concepts for materials processing, and that before that (20 years ago), another program, Intelligent Processing of Materials, developed sensors. The combination of these sensors and predictive models now allows some control of material state trajectory during processing (but probably not of the defects that motivate the need for much experimentation and materials certification). Dr. Olson added that alloys have been developed for the current processing technology, so the opportunity exists to develop more robust materials.

Dr. Wadley left the group with a final thought: With so many opportunities in the design of materials, how do we set priorities for what to pursue?