3

ASSESSMENT DESIGN AND VALIDATION

Measuring science content that is integrated with practices, as envisioned in A Framework for K-12 Science Education: Practices, Crosscutting Concepts, and Core Ideas (National Research Council, 2012a, hereafter referred to as “the framework”) and the Next Generation Science Standards: For States, By States (NGSS Lead States, 2013), will require a careful and thoughtful approach to assessment design. This chapter focuses on strategies for designing and implementing assessment tasks that measure the intended practice skills and content understandings laid out in the Next Generation Science Standards (NGSS) performance expectations.

Some of the initial stages of assessment design have taken place as part of the process of writing the NGSS. For example, the NGSS include progressions for the sequence of learning, performance expectations for each of the core ideas addressed at a given grade level or grade band, and a description of assessable aspects of the three dimensions addressed in the set of performance expectations for that topic. The performance expectations, in particular, provide a foundation for the development of assessment tasks that appropriately integrate content and practice. The NGSS performance expectations also usually include boundary statements that identify limits to the level of understanding or context appropriate for a grade level and clarification statements that offer additional detail and examples. But standards and performance expectations, even as explicated in the NGSS, do not provide the kind of detailed information that is needed to create an assessment.

The design of valid and reliable science assessments hinges on multiple elements that include but are not restricted to what is articulated in disciplinary frameworks and standards (National Research Council, 2001; Mislevy and Haertel, 2006). For example, in the design of assessment items and tasks related to the NGSS performance expectations, one needs to consider (1) the kinds of conceptual models and evidence that are expected of students; (2) grade-level-appropriate contexts for assessing the performance expectations; (3) essential and optional task design features (e.g., computer-based simulations, computer-based animations, paper-pencil writing and drawing) for eliciting students’ ideas about the performance expectation; and (4) the types of evidence that will reveal levels of students’ understandings and skills.

Two prior National Research Council reports have addressed assessment design in depth and offer useful guidance. In this chapter, we draw from Knowing What Students Know (National Research Council, 2001) and Systems for State Science Assessment (National Research Council, 2006) in laying out an approach to assessment design that makes use of the fundamentals of cognitive research and theory and measurement science. We first discuss assessment as a process of reasoning from evidence and then consider two contemporary approaches to assessment development—evidence-centered design and construct modeling—that we think are most appropriate for designing individual assessment tasks and collections of tasks to evaluate students’ competence relative to the NGSS performance expectations.1 We provide examples of each approach to assessment task design. We close the chapter with a discussion of approaches to validating the inferences that can be drawn from assessments that are the product of what we term a principled design process (discussed below).

ASSESSMENT AS A PROCESS OF EVIDENTIARY REASONING

Assessment specialists have found it useful to describe assessment as a process of reasoning from evidence—of using a representative performance or set of performances to make inferences about a wider set of skills or knowledge. The process of collecting evidence to support inferences about what students know and can do

___________

1The word “construct” is generally used to refer to concepts or ideas that cannot be directly observed, such as “liberty.” In the context of educational measurement, it is used more specifically to refer to a particular body of content (knowledge, understanding, or skills) that an assessment is designed to measure. It can be used to refer to a very specific aspect of tested content (e.g., the water cycle) or a much broader area (e.g., mathematics).

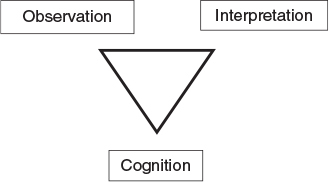

FIGURE 3-1 The three elements involved in conceptualizing assessment as a process of reasoning from evidence.

SOURCE: Adapted from National Research Council (2001, p. 44).

is fundamental to all assessments—from classroom quizzes, standardized achievement tests, or computerized tutoring programs, to the conversations students have with their teachers as they work through an experiment. The Committee on the Cognitive Foundations of Assessment (National Research Council, 2001) portrayed this process of reasoning from evidence in the form of an assessment triangle: see Figure 3-1.

The triangle rests on cognition, defined as a “theory or set of beliefs about how students represent knowledge and develop competence in a subject domain” (National Research Council, 2001, p. 44). In other words, the design of the assessment should begin with specific understanding not only of which knowledge and skills are to be assessed, but also of how understanding and competence develop in the domain of interest. For the NGSS, the cognition to be assessed consists of the practices, the crosscutting concepts, and the disciplinary core ideas as they are integrated in the performance expectations.

A second corner of the triangle is observation of students’ capabilities in the context of specific tasks designed to show what they know and can do. The capabilities must be defined because the design and selection of the tasks need to be tightly linked to the specific inferences about student learning that the assessment is intended to support. It is important to emphasize that although there are various factors that assessments could address, task design should be based on an explicit definition of the precise aspects of cognition the assessment is targeting. For example, assessment tasks that engage students in applying the three-dimensional learning (described in Chapter 2) could possibly yield information about how students use or apply specific practices, crosscutting concepts, disciplinary core ideas, or combinations of these. If the intended constructs are clearly specified, the design of a specific task and its scoring rubric can support clear inferences about students’ capabilities.

The third corner of the triangle is interpretation, meaning the methods and tools used to reason from the observations that have been collected. The method used for a large-scale standardized test might involve a statistical model. For a classroom assessment, it could be a less formal method of drawing conclusions about a student’s understanding on the basis of the teacher’s experiences with the student, or it could provide an interpretive framework to help make sense of different patterns in a student’s contributions to practice and responses to questions.

The three elements are presented in the form of a triangle to emphasize that they are interrelated. In the context of any assessment, each must make sense in terms of the other two for the assessment to produce sound and meaningful results. For example, the questions that shape the nature of the tasks students are asked to perform should emerge logically from a model of the ways learning and understanding develop in the domain being assessed. Interpretation of the evidence produced should, in turn, supply insights into students’ progress that match up with that same model. Thus, designing an assessment is a process in which every decision should be considered in light of each of these three elements.

Construct-Centered Approaches to Assessment Design

Although it is very valuable to conceptualize assessment as a process of reasoning from evidence, the design of an actual assessment is a challenging endeavor that needs to be guided not only by theory and research about cognition, but also by practical prescriptions regarding the processes that lead to a productive and potentially valid assessment for a particular use. As in any design activity, scientific knowledge provides direction and constrains the set of possibilities, but it does not prescribe the exact nature of the design, nor does it preclude ingenuity in achieving a final product. Design is always a complex process that applies theory and research to achieve near-optimal solutions under a series of multiple constraints, some of which are outside the realm of science. For educational assessments, the design is influenced in important ways by such variables as purpose (e.g., to assist learning, to measure individual attainment, or to evaluate a program), the context in which it will be used (for a classroom or on a large scale), and practical constraints (e.g., resources and time).

The tendency in assessment design has been to work from a somewhat “loose” description of what it is that students are supposed to know and be able to do (e.g., standards or a curriculum framework) to the development of tasks or problems for them to answer. Given the complexities of the assessment design process, it is unlikely that such a process can lead to a quality assessment without a great deal of artistry, luck, and trial and error. As a consequence, many assessments fail to adequately represent the cognitive constructs and content to be covered and leave room for considerable ambiguity about the scope of the inferences that can be drawn from task performance. If it is recognized that assessment is an evidentiary reasoning process, then a more systematic process of assessment design can be used. The assessment triangle provides a conceptual mapping of the nature of assessment, but it needs elaboration to be useful for constructing assessment tasks and assembling them into tests. Two groups of researchers have generated frameworks for developing assessments that take into account the logic embedded in the assessment triangle. The evidence-centered design approach has been developed by Mislevy and colleagues (see, e.g., Almond et al., 2002; Mislevy, 2007; Mislevy et al., 2002; Steinberg et al., 2003), and the construct-modeling approach has been developed by Wilson and his colleagues (see, e.g., Wilson, 2005). Both use a construct-centered approach to task development, and both closely follow the evidentiary reasoning logic spelled out by the NRC assessment triangle.

A construct-centered approach differs from more traditional approaches to assessment, which may focus primarily on surface features of tasks, such as how they are presented to students, or the format in which students are asked to respond.2 For instance, multiple-choice items are often considered to be useful only for assessing low-level processes, such as recall of facts, while performance tasks may be viewed as the best way to elicit more complex cognitive processes. However, multiple-choice questions can in fact be designed to tap complex cognitive processes (Wilson, 2009; Briggs et al., 2006). Likewise, performance tasks, which are usually intended to assess higher-level cognitive processes, may inadvertently tap only low-level ones (Baxter and Glaser, 1998; Hamilton et al., 1997; Linn et al., 1991). There are, of course, limitations to the range of constructs that multiple-choice items can assess.

As we noted in Chapter 2, assessment tasks that comprise multiple interrelated questions, or components, will be needed to assess the NGSS performance expectations. Further, a range of item formats, including constructed-response and performance tasks, will be essential for the assessment of three-dimensional learning consonant with the framework and the NGSS. A construct-centered approach focuses on “the knowledge, skills, or other attributes to be assessed” and considers “what behaviors or performances should reveal those constructs and what tasks or situations should elicit those behaviors” (Messick, 1994, p. 16). In a construct-centered approach, the selection and development of assessment tasks, as well as the scoring rubrics and criteria, are guided by the construct to be assessed and the best ways of eliciting evidence about a student’s proficiency with that construct.

___________

2Messick (1994) distinguishes between task-centered performance assessment, which begins with a specific activity that may be valued in its own right (e.g., an artistic performance) or from which one can score particular knowledge or skills, and construct-centered performance assessment, which begins with a particular construct or competency to be measured and creates a task in which it can be revealed.

Both evidence-centered design and construct-modeling approach the process of assessment design and development by:

- analyzing the cognitive domain that is the target of an assessment;

- specifying the constructs to be assessed in language detailed enough to guide task design;

- identifying the inferences that the assessment should support;

- laying out the type of evidence needed to support those inferences;

- designing tasks to collect that evidence, modeling how the evidence can be assembled and used to reach valid conclusions; and

- iterating through the above stages to refine the process, especially as new evidence becomes available.

Both methods are called “principled” approaches to assessment design in that they provide a methodical and systematic approach to designing assessment tasks that elicit student performances that reveal their proficiency. Observation of these performances can support inferences about the constructs being measured. Both are approaches that we judged to be useful for developing assessment tasks that effectively measure content intertwined with practices.

Evidence-Centered Design

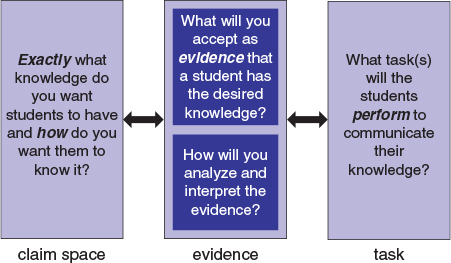

The evidence-centered design approach to assessment development is the product of conceptual and practical work pursued by Mislevy and his colleagues (see, e.g., Almond et al., 2002; Mislevy, 2007; Mislevy and Haertel, 2006; Mislevy et al., 2002; Steinberg et al., 2003). In this approach, designers construct an assessment argument that is a claim about student learning that is supported by evidence relevant to the intended use of the assessment (Huff et al., 2010). The claim should be supported by observable and defensible evidence.

Figure 3-2 shows these three essential components of the overall process. The process starts with defining as precisely as possible the claims that one wants to be able to make about students’ knowledge and the ways in which students are supposed to know and understand some particular aspect of a content domain. Examples might include aspects of force and motion or heat and temperature. The most critical aspect of defining the claims one wants to make for purposes of assessment is to be as precise as possible about the elements that matter and to express them in the form of verbs of cognition (e.g., compare, describe, analyze, compute, elaborate, explain, predict, justify) that are much more precise than high-level superordinate cognitive verbs, such as know and understand. Guiding this process of specifying the claims is theory and research on the nature of domain-specific knowing and learning.

FIGURE 3-2 Simplified representation of three critical components of the evidence-centered design process and their reciprocal relationships.

SOURCE: Pellegrino et al. (2014, fig. 29.2, p. 576). Reprinted with the permission of Cambridge University Press.

Although the claims one wishes to make or verify are about the student, they are linked to the forms of evidence that would provide support for those claims (the warrants in support of each claim). The evidence statements associated with given sets of claims capture the features of work products or performances that would give substance to the claims. This evidence includes which features need to be present and how they are weighted in any evidentiary scheme (i.e., what matters most and what matters least or not at all). For example, if the claim about a student’s knowledge of the laws of motion is that the student can analyze a physical situation in terms of the forces acting on all the bodies, then the evidence might be a diagram of bodies that is drawn with all the forces labeled, including their magnitudes and directions.

The value of the precision that comes from elaborating the claims and evidence statements associated with a domain of knowledge and skill is clear when one turns to the design of the tasks or situations that can provide the requisite evidence. In essence, tasks are not designed or selected until it is clear what forms of evidence are needed to support the range of claims associated with a given assessment situation. The tasks need to provide all the necessary evidence, and they should allow students to “show what they know” in a way that is as unambiguous as possible with respect to what the task performance implies about their knowledge and skill (i.e., the inferences about students’ cognition that are permissible and sustainable from a given set of assessment tasks or items).3
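The relationship among claims, evidence statements, and tasks described above can be sketched as a small data model. The following Python sketch is illustrative only: the class names, the feature descriptions, and the numeric weights are assumptions introduced here, not part of any published evidence-centered design tool. It uses the laws-of-motion example to show how a designer might check that every piece of required evidence is elicited by at least one task.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class EvidenceFeature:
    """One observable feature of a work product (hypothetical structure)."""
    description: str
    weight: float  # illustrative weight: how much this feature matters


@dataclass
class Claim:
    """A claim about a student, phrased with a precise verb of cognition."""
    statement: str
    evidence: list = field(default_factory=list)


@dataclass
class Task:
    """A task, described by the evidence features it is designed to elicit."""
    name: str
    elicits: set = field(default_factory=set)


def uncovered_evidence(claim, tasks):
    """Return the evidence features of a claim that no task elicits."""
    elicited = set().union(*(task.elicits for task in tasks))
    return [f.description for f in claim.evidence if f.description not in elicited]


# Laws-of-motion example; the weights are made up for illustration.
claim = Claim(
    statement="Analyze a physical situation in terms of the forces acting on all bodies",
    evidence=[
        EvidenceFeature("all forces labeled on a diagram", 0.5),
        EvidenceFeature("force magnitudes shown", 0.25),
        EvidenceFeature("force directions shown", 0.25),
    ],
)
tasks = [
    Task(
        name="draw a diagram of the bodies",
        elicits={"all forces labeled on a diagram", "force directions shown"},
    )
]

gaps = uncovered_evidence(claim, tasks)
print(gaps)  # evidence that still needs a task
```

In this sketch the single task leaves one evidence feature uncovered, which signals that another task (or a revised task) is needed before the claim can be supported.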

As noted above, the NGSS have begun the work of defining such claims about student proficiency by developing performance expectations, but it is only a beginning. The next steps are to determine the observations (the forms of evidence in student work) that are needed to support the claims and then to develop the tasks or situations that will elicit the required evidence. This approach goes beyond the typical approach to assessment development, which generally involves simply listing specific content and skills to be covered and asking task developers to produce tasks related to these topics. The evidence-centered design approach looks at the interaction between content and skills to discern, for example, how students reason about a particular content area or construct. Thus, ideally, this approach yields test scores that are very easy to understand because the evidentiary argument is based not on a general claim that the student “knows the content,” but on a comprehensive set of claims that indicate specifically what the student can do within the domain. The claims that are developed through this approach can be guided by the purpose for assessment (e.g., to evaluate a student’s progress during a unit of instruction, to evaluate a student’s level of achievement at the end of a course) and targeted to a particular audience (e.g., students, teachers).

___________

3For more information on this approach, see National Research Council (2003), as well as the references cited above.

Evidence-centered design rests on the understanding that the context and purpose for an educational assessment affect the way students manifest the knowledge and skills to be measured, the conditions under which observations will be made, and the nature of the evidence that will be gathered to support the intended inference. Thus, good assessment tasks cannot be developed in isolation; they must be designed around the intended inferences, the observations, the performances that are needed to support those inferences, the situations that will elicit those performances, and a chain of reasoning that will connect them.

Construct Modeling

Wilson (2005) proposes another approach to assessment development: construct modeling. This approach uses four building blocks to create assessments and has been used for assessments of both science content (Briggs et al., 2006; Claesgens et al., 2009; Wilson and Sloane, 2000) and science practices (Brown et al., 2010), as well as to design and test models of the typical progression of understanding of particular concepts (Black et al., 2011; Wilson, 2009). The building blocks are viewed as a guide to the assessment design process, rather than as step-by-step instructions.

The first building block is specification of the construct, in the form of a construct map. Construct maps consist of working definitions of what is to be measured, arranged in terms of consecutive levels of understanding or complexity.4 The second building block is item design, a description of the possible forms of items and tasks that will be used to elicit evidence about students’ knowledge and understanding as embodied in the constructs. The third building block is the outcome space, a description of the qualitatively different levels of responses to items and tasks that are associated with different levels of the construct. The last building block is the measurement model, the basis on which assessors and users associate scores earned on items and tasks with particular levels of the construct; the measurement model relates the scored responses to the constructs. These building blocks are described in a linear fashion, but they are intended to work as elements of a development cycle, with successive iterations producing better coherence among the blocks.5
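The four building blocks described above can be sketched in code to show how they fit together. Everything in the following Python sketch is hypothetical: the level descriptions, item identifiers, and response categories are placeholders, and the measurement model is a deliberately simple stand-in (the lower median of the scored levels), not the statistical models typically used with construct modeling.

```python
from statistics import median_low

# Building block 1: construct map, ordered from least to most sophisticated.
# The level descriptions are hypothetical placeholders.
CONSTRUCT_MAP = [
    "Level 0: no relevant response",
    "Level 1: describes individual cases only",
    "Level 2: describes a pattern across cases",
    "Level 3: explains the pattern with a model",
]

# Building block 2: item design (hypothetical item identifiers and formats).
ITEMS = {"item_a": "constructed response", "item_b": "multiple choice"}

# Building block 3: outcome space, mapping each response category to a level.
OUTCOME_SPACE = {
    "item_a": {"blank": 0, "lists cases": 1, "names pattern": 2, "uses model": 3},
    "item_b": {"option_1": 1, "option_2": 2, "option_3": 3},
}


def score_responses(responses):
    """Apply the outcome space: turn categorized responses into levels."""
    return {item: OUTCOME_SPACE[item][category] for item, category in responses.items()}


def estimate_level(scored):
    """Building block 4 (stand-in): summarize scored levels by the lower median."""
    return median_low(list(scored.values()))


# One student's categorized responses (made up for illustration).
responses = {"item_a": "names pattern", "item_b": "option_3"}
scored = score_responses(responses)
level = estimate_level(scored)
print(CONSTRUCT_MAP[level])
```

The point of the sketch is the division of labor: the construct map defines the levels, the outcome space ties responses to those levels, and the measurement model summarizes the scored responses, so a change in one block can be checked against the others on each iteration.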

___________

4When the construct is multidimensional, multiple constructs will be developed, one for each outcome dimension.

5For more information on construct modeling, see National Research Council (2003, pp. 89-104), as well as the references cited above.

In the next section, we illustrate the steps one would take in using the two construct-centered approaches to the development of assessment tasks. We first illustrate the evidence-centered design approach using an example developed by researchers at SRI International. We then illustrate the construct-modeling approach using an example from the Berkeley Evaluation and Assessment Research (BEAR) System.

ILLUSTRATIONS OF TASK-DESIGN APPROACHES

In this section, we present illustrations of how evidence-centered design and construct modeling can be used to develop an assessment task. The first example is for students at the middle school level; the second is for elementary school students. In each case, we first describe the underlying design process and then the task.

Evidence-Centered Design—Example 2: Pinball Car Task

Our example of applying evidence-centered design is drawn from work by a group of researchers at SRI International.6 The task is intended for middle school students and was designed to assess students’ knowledge of both science content and practices. The content being assessed is knowledge of forms of energy in the physical sciences, specifically knowledge of potential and kinetic energy and knowledge that objects in motion possess kinetic energy. In the assessment task, students observe the compression of a spring attached to a plunger, the kind of mechanism used to put a ball “in play” in a pinball machine. A student observes that when the plunger is released, it pushes a toy car forward on a racing track. The potential energy in the compressed spring is transformed, on the release of the plunger, into kinetic energy that moves the toy car along the racing track. The student is then asked to plan an investigation to examine how the properties of the compression springs influence the distance the toy car travels on the race track.

Although the task was developed prior to the release of the NGSS, it was designed to be aligned with A Framework for K-12 Science Education: Practices, Crosscutting Concepts, and Core Ideas. The task is related to the crosscutting concept of “energy and matter: flows, cycles and conservation.” The task was designed to be aligned with two scientific practices: planning an investigation and analyzing and interpreting data. The concepts are introduced to students by providing them with opportunities to track changes in energy and matter into, out of, and within systems. The task targets three disciplinary core ideas: definitions of energy, conservation of energy and energy transfer, and the relationship between energy and force.

___________

6Text is adapted from Haertel et al. (2012). Used with permission.

Design of the Task

The task was designed using a “design pattern,” a tool developed to support work at the step of domain modeling in evidence-centered design, which involves the articulation and coordination of claims and evidence statements (see Mislevy et al., 2003). Design patterns help an assessment developer consider the key elements of an assessment argument in narrative form. The subsequent steps in the approach build on the arguments sketched out in domain modeling and represented in the design patterns, including designing tasks to obtain the relevant evidence, scoring performance, and reporting the outcomes. The specific design pattern selected for this task supports the writing of storyboards and items that address scientific reasoning and process skills in planning and conducting experimental investigations. This design pattern could be used to generate task models for groups of tasks for science content strands that are amenable to experimentation.

In the design pattern, the relevant knowledge, skills, and abilities (i.e., the claims about student competence) assessed for this task include the following (Rutstein and Haertel, 2012):

- ability to identify, generate, or evaluate a prediction/hypothesis that is testable with a simple experiment;

- ability to plan and conduct a simple experiment step-by-step given a prediction or hypothesis;

- ability to recognize that at a basic level, an experiment involves manipulating one variable and measuring the effect on (or value of) another variable;

- ability to identify variables of the scientific situation (other than the ones being manipulated or treated as an outcome) that should be controlled (i.e., kept the same) in order to prevent misleading information about the nature of the causal relationship; and

- ability to interpret or appropriately generalize the results of a simple experiment or to formulate conclusions or create models from the results.

Evidence of these knowledge, skills, and abilities will include both observations and work products. The potential observations include the following (Rutstein and Haertel, 2012):

- Generate a prediction/hypothesis that is testable with a simple experiment.

- Provide a “plausibility” (explanation) of plan for repeating an experiment.

- Correctly identify independent and dependent variables.

- Accurately identify variables (other than the treatment variables of interest) that should be controlled or made equivalent (e.g., through random assignment).

- Provide a “plausibility” (explanation) of design for a simple experiment.

- Be able to accurately critique the experimental design, methods, results, and conclusions of others.

- Recognize data patterns from experimental data.

The relevant work products include the following (Rutstein and Haertel, 2012):

- Select, identify, or evaluate an investigable question.

- Complete some phases of experimentation with given information, such as selection levels or determining steps.

- Identify or differentiate variables that do and do not need to be controlled in a given scientific situation.

- Generate an interpretation/explanation/conclusion from a set of experimental results.

The Pinball Car Task7

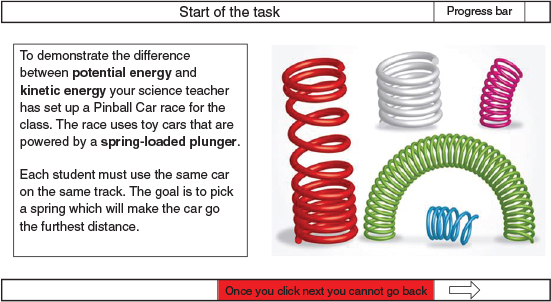

Scene 1: A student poses a hypothesis that can be investigated using the simulation presented in the task. The student is introduced to the task and provided with some background information that is important throughout the task: see Figure 3-3. Science terminology and other words that may be new to the student (highlighted in bold) have a roll-over feature that shows their definition when the student scrolls over the word.

The student selects three of nine compression springs to be used in the pinball plunger and initiates a simulation, which generates a table of data that illustrates how far the race car traveled on the race track using the particular compression springs that were selected. Data representing three trial runs are presented

___________

7This section is largely taken from Haertel et al. (2012, 2013).

FIGURE 3-3 Task introduction.

NOTE: See text for discussion.

SOURCE: Haertel et al. (2012, 2013, Appendix A2). Reprinted with permission from SRI International.

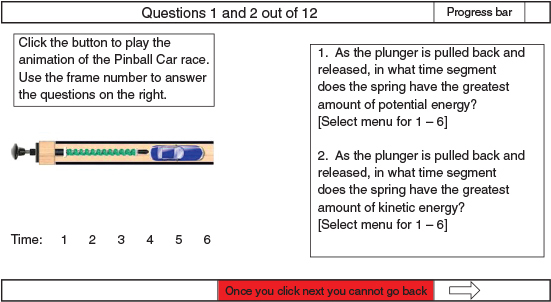

FIGURE 3-4 Animation of a pinball car race.

NOTE: See text for discussion.

SOURCE: Haertel et al. (2012, 2013, Appendix A2). Reprinted with permission from SRI International.

each time the simulation is initiated. The student runs the simulation twice for a total of six trials of data for each of the three springs selected.

Scene 2: The student plays an animation that shows what a pinball car race might look like in the classroom: see Figure 3-4. The student uses the animation and its time code to determine the point at which the spring had the greatest potential and kinetic energy.

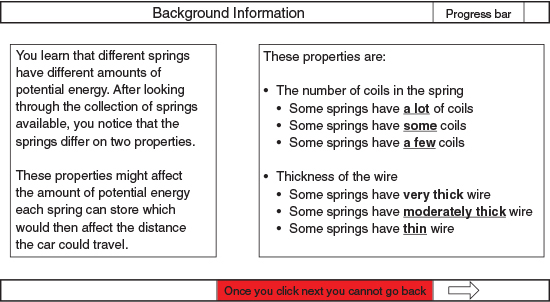

Scene 3: This scene provides students with background information about springs and introduces them to two variables, the number of coils and the thickness of the wire: see Figure 3-5.

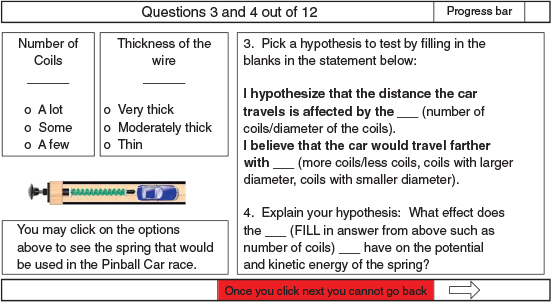

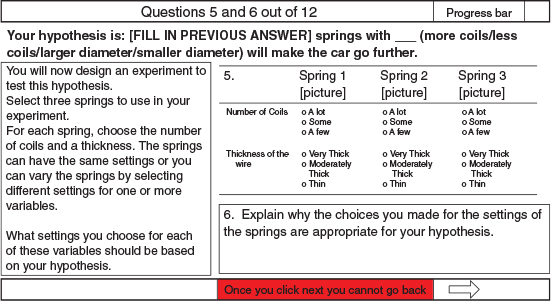

Scene 4: Using the information from Scene 3, the student poses a hypothesis about how these properties might influence the distance the race car travels after the spring plunger is released; see Figure 3-6. The experiment requires that students vary or control each of the properties of the spring.

FIGURE 3-5 Background information.

NOTE: See text for discussion.

SOURCE: Haertel et al. (2012, 2013, Appendix A2). Reprinted with permission from SRI International.

FIGURE 3-6 Picking a hypothesis.

NOTE: See text for discussion.

SOURCE: Haertel et al. (2012, 2013, Appendix A2). Reprinted with permission from SRI International.

FIGURE 3-7 Designing an experiment for the hypothesis.

NOTE: See text for discussion.

SOURCE: Haertel et al. (2012, 2013, Appendix A2). Reprinted with permission from SRI International.

Scene 5: The student decides whether one or both of the properties of the spring will serve as independent variables and whether one or more of the variables will serve as control variables; see Figure 3-7.

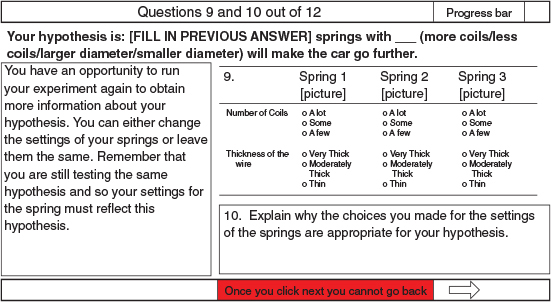

Scene 6: In completing the task, the student decides how many trials of data are needed to produce reliable measurements and whether the properties of the springs need to be varied and additional data collected before the hypothesis can be confirmed or disconfirmed.

Scene 7: Once a student has decided on the levels of the properties of the spring to be tested, the simulation produces a data table, and the student must graph the data and analyze the results.

Scene 8: Based on the results, the student may revise the hypothesis and run the experiment again, changing the settings of the variables to reflect a revision of their model of how the properties of the springs influence the distance the toy car travels: see Figure 3-8.

FIGURE 3-8 Option to rerun the experiment.

NOTE: See text for discussion.

SOURCE: Haertel et al. (2012, 2013, Appendix A2). Reprinted with permission from SRI International.

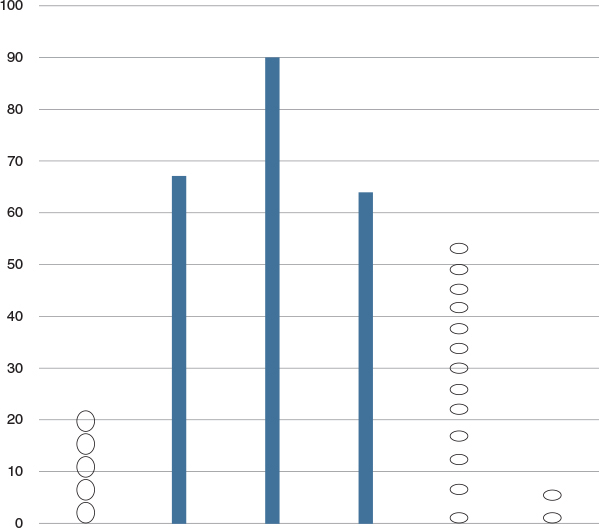

FIGURE 3-9 Results of two experiments.

NOTE: See text for discussion.

SOURCE: Haertel et al. (2012, 2013, Appendix A2). Reprinted with permission from SRI International.

FIGURE 3-10 Use of results from the two experiments.

NOTE: See text for discussion.

SOURCE: Haertel et al. (2012, 2013, Appendix A2). Reprinted with permission from SRI International.

FIGURE 3-11 Final result of the pinball car task.

NOTE: See text for discussion.

SOURCE: Haertel et al. (2012, 2013, Appendix A2). Reprinted with permission from SRI International.

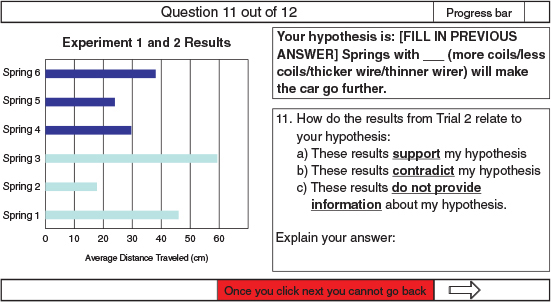

Scene 9: If the student chose to run the experiment a second time, the results of both experiments are now shown on the same bar chart: see Figure 3-9.

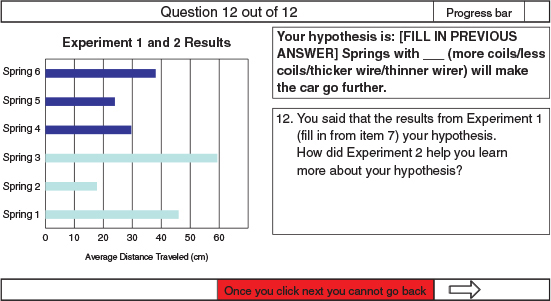

Scene 10: The student is asked how the results of the second experiment relate to her or his hypothesis: see Figure 3-10.

Scene 11: The final scene gives the student the spring characteristics that would lead to the car going the furthest distance and winning the race: see Figure 3-11.

Scoring

The pinball car task was developed as a prototype to demonstrate the use of design patterns in developing technology-enhanced, scenario-based tasks for hard-to-assess concepts. It has been pilot tested but not administered operationally. The developers suggest that the task could be scored in several ways. It could be scored by separately summing the items aligned primarily to content standards and the items aligned primarily to practice standards, thus producing two scores. Or the task could generate an overall score based on the aggregation of all items, which is more in keeping with the idea of three-dimensional science learning in the framework.

Alternatively, the specific strengths and weaknesses in students’ understanding could be inferred from the configurations of their correct and incorrect responses according to some more complex decision rule.
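The scoring options described above can be sketched in a few lines of code. This is only an illustrative sketch, not the developers' scoring procedure: the `ItemResponse` record, the `"content"` and `"practice"` labels, and the item identifiers are all hypothetical, and no particular decision rule for the third option is assumed.

```python
from dataclasses import dataclass


@dataclass
class ItemResponse:
    item_id: str      # hypothetical item identifier
    dimension: str    # hypothetical alignment label: "content" or "practice"
    correct: bool


def subscores(responses):
    """Option 1: sum correct responses separately for each alignment label."""
    totals = {}
    for r in responses:
        totals[r.dimension] = totals.get(r.dimension, 0) + int(r.correct)
    return totals


def overall_score(responses):
    """Option 2: one aggregate score across all items."""
    return sum(int(r.correct) for r in responses)


def response_pattern(responses):
    """Option 3 input: the configuration of correct and incorrect responses,
    which a more complex decision rule (not specified here) would interpret."""
    return {r.item_id: r.correct for r in responses}


responses = [
    ItemResponse("q1", "content", True),
    ItemResponse("q2", "practice", False),
    ItemResponse("q3", "practice", True),
]
print(subscores(responses))
print(overall_score(responses))
print(response_pattern(responses))
```

The first two functions differ only in how the same responses are aggregated; the third keeps the full pattern so that strengths and weaknesses are not lost in a sum.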

Construct Modeling: Measuring Silkworms

In this task, 3rd-grade elementary school students explored the distinction between organismic and population levels of analysis by inventing and revising ways of visualizing the measures of a large sample of silkworm larvae at a particular day of growth. The students were participating in a teacher-researcher partnership aimed at creating a multidimensional learning progression to describe practices and disciplinary ideas that would help young students consider evolutionary models of biological diversity.

The learning progression was centered on student participation in the invention and revision of representations and models of ecosystem functioning, variability, and growth at organismic and population levels (Lehrer and Schauble, 2012). As with other examples in this report, the task was developed prior to the publication of the NGSS, but is aligned with the life sciences progression of the NGSS: see Tables 1-1 and 3-1. The practices listed in the tables were used in the development of core ideas about organism growth. The classroom-embedded task was designed to promote a shift in student thinking from the familiar emphasis on individual organisms to consideration of a population of organisms: to do so, the task promotes the practice of analyzing and interpreting data. Seven dimensions have been developed to specify this multidimensional construct, but the example focuses on just one: reasoning about data representation (Lehrer et al., 2013). Hence, an emerging practice of visualizing data was coordinated with an emerging disciplinary core idea, population growth, and with the crosscutting theme of pattern.

TABLE 3-1 Assessment Targets for Example 3 (Measuring Silkworms) and the Next Generation Science Standards (NGSS) Learning Progressions

| Disciplinary Core Idea from the NGSS | Practices | Performance Expectation | Crosscutting Concept |
| --- | --- | --- | --- |
| LS1.A Structure and function (grades 3-5): Organisms have macroscopic structures that allow for growth. | Asking questions | Observe and analyze the external structures of animals to explain how these structures help the animals meet their needs. | Patterns |
| | Planning and carrying out investigations | | |
| | Analyzing and interpreting data | | |
| LS1.B Growth and development of organisms (grades 3-5): Organisms have unique and diverse life cycles. | Using mathematics | | |
| | Constructing explanations | Gather and use data to explain that young animals and plants grow and change. Not all individuals of the same kind of organism are exactly the same: there is variation. | |
| | Engaging in argument from evidence | | |
| | Communicating information | | |

NOTES: LS1.A and LS1.B refer to the disciplinary core ideas in the framework: see Box 2-1 in Chapter 2.

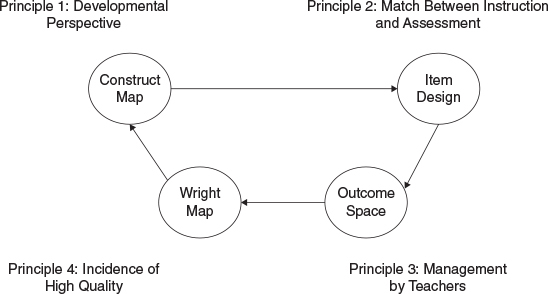

The BEAR Assessment System for Assessment Design

The BEAR Assessment System (BAS) (Wilson, 2005) is a set of practical procedures designed to help one apply the construct-modeling approach. It is based on four principles, each of which has a corresponding element: (1) a developmental perspective, (2) a match between instruction and assessment, (3) management by teachers, and (4) evidence of high quality (see Figure 3-12). These elements function in a cycle, so that information gained from each phase of the process can be used to improve other elements. Current assessment systems rarely allow for this sort of continuous feedback and refinement, but the developers of the BAS believe it is critical (as in any engineering system) to respond to results and developments that could not be anticipated.

FIGURE 3-12 The BEAR system.

SOURCE: Wilson (2009, fig. 2, p. 718). Reprinted with permission from John Wiley & Sons.

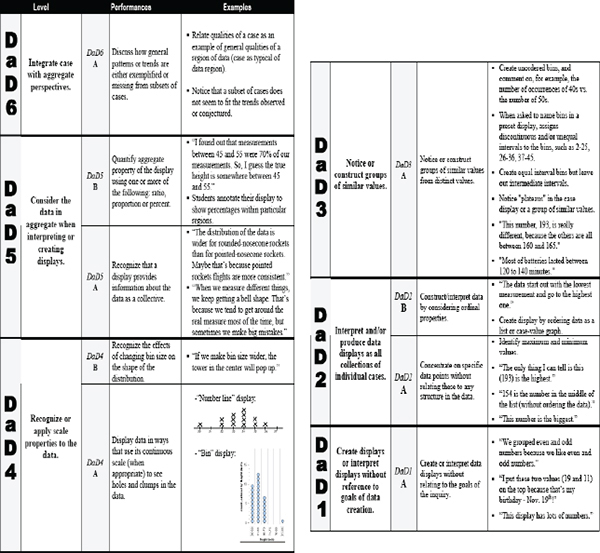

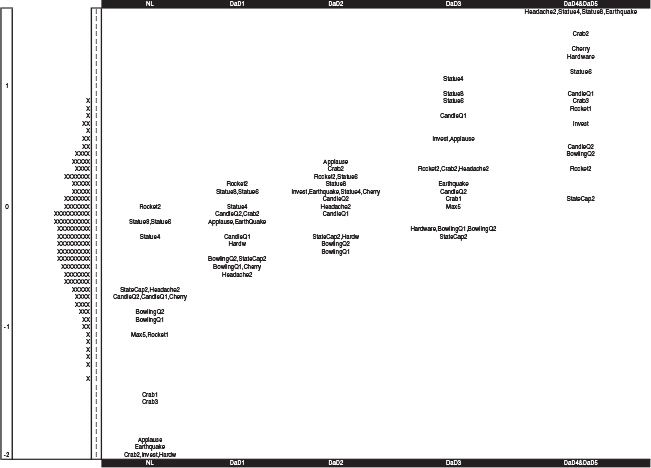

The first element of BAS is the construct map, which defines what is to be assessed. The construct map has been described as a visual metaphor for the ways students’ understanding develops, and, correspondingly, how it is hypothesized that their responses to items might change (Wilson, 2005). Figure 3-13 is an example of a construct map for one aspect of analyzing and interpreting data, data display (abbreviated as “DaD”). The construct map describes significant milestones in children’s reasoning about data representation, presenting them as a progression from a stage in which students focus on individual case values (e.g., the students describe specific data points) to a stage when they are capable of reasoning about patterns of aggregation. The first and third columns of Figure 3-13 display the six levels associated with this construct, with Level 6 being the most sophisticated.

The second BAS element is item design, which specifies how the learning performances described by the construct will be elicited. It is the means by which the match between the curriculum and the assessment is established. Item design can be described as a set of principles that allow one to observe students under a set of standard conditions (Wilson, 2005). Most critical is that the design specifications make it possible to observe each of the levels and sublevels described in the construct map.

The third element, outcome space, is a general guide to the way students’ responses to items developed in relation to a particular construct map will be valued. The more specific guidance developed for a particular item is used as the actual scoring guide, which is designed to ensure that student responses can be interpreted in light of the construct map. The third column of Figure 3-13 is a general scoring guide. The final element of BAS, a Wright map, is a way to apply the measurement model, to collect the data and link it back to the goals for the assessment and the construct maps.8 The system relies on a multidimensional way of organizing statistical evidence of the quality of the assessment, such as its reliability, validity, and fairness. Item-response models show students’ performance on particular elements of the construct map across time; they also allow for comparison within a cohort of students or across cohorts.

___________

8 A Wright map is a figure that shows both student locations and item locations on the same scale. Distances along the scale are interpreted in terms of the probability that a student at a given location succeeds on an item at a given location (see Wilson, 2004, Chapter 5).

The Silkworm Growth Activity

In our example, the classroom activity for assessment was part of a classroom investigation of the nature of growth of silkworm larvae. The silkworm larvae are a model system of metamorphic insect growth. The investigation was motivated by students’ questions and by their decisions about how to measure larvae at different days of growth. The teacher asked students to invent a display that communicated what they noticed about the collection of their measures of larvae length on a particular day of growth.

Inventing a display positioned students to engage spontaneously with the forms of reasoning described by the DaD construct map (see Figure 3-13, above): the potential solutions were expected to range from Levels 1 to 5 of the construct. In this classroom-based example, the item design is quite informal, being simply what the teacher asked the students to do. However, the activity was designed to support the development of the forms of reasoning described by the construct.

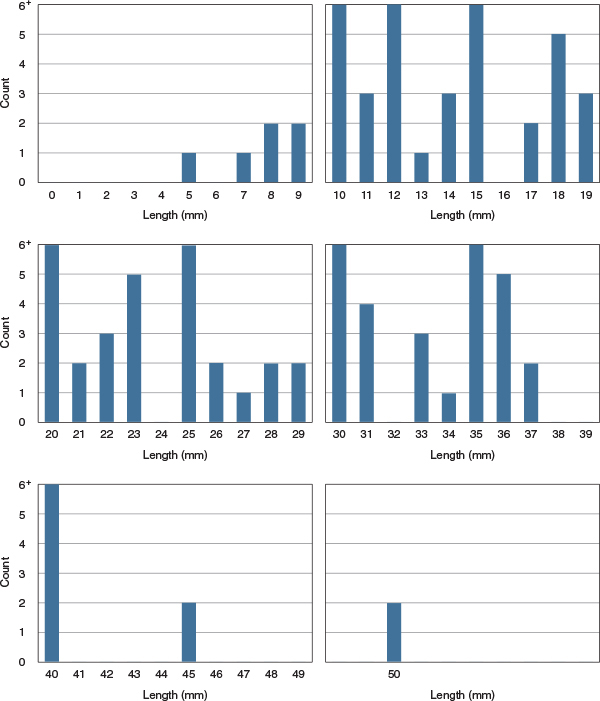

One data display that several groups of students created was a case-value graph that ordered each of 261 measurements of silkworms by magnitude: see Figure 3-14. The resulting display occupied 5 feet of the classroom wall. In this representation, the range of data is visible at a glance, but the icons resembling the larvae and representing each millimeter of length are not uniform. This is an example of student proficiency at Level 2 of the construct map. The second display developed by the student groups used equal-sized intervals to show equivalence among classes of lengths: see Figure 3-15. By counting the number of cases within each interval, the students made a center clump visible. This display makes the shape of the data more visible; however, the use of space was not uniform and produced some misleading impressions about the frequency of longer or shorter larvae. This display represents student proficiency at Level 3 of the construct map.

The third display shows how some students used the measurement scale and counts of cases, but because of difficulties they experienced with arranging the display on paper, they curtailed all counts greater than 6: see Figure 3-16. This display represents student proficiency at Level 4 of the construct map.
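The contrast between ordering individual case values and counting cases within equal-sized intervals can be made concrete with a short sketch. The lengths below are invented for illustration and are not the students' measurements; the function names and the 5 mm interval width are likewise assumptions. Keeping empty intervals in the output is one way to respect the measurement scale, so that gaps in the data remain visible.

```python
from collections import Counter


def case_value_order(lengths_mm):
    """Case-value view: every measurement listed individually, by magnitude."""
    return sorted(lengths_mm)


def interval_counts(lengths_mm, width_mm):
    """Aggregate view: count cases in equal-width intervals.

    Each key is the lower bound of an interval. Empty intervals between the
    smallest and largest occupied ones are kept with a count of 0, so the
    spacing of the keys stays uniform along the measurement scale.
    """
    counts = Counter((length // width_mm) * width_mm for length in lengths_mm)
    lo, hi = min(counts), max(counts)
    return {start: counts.get(start, 0)
            for start in range(lo, hi + width_mm, width_mm)}


# Hypothetical larvae lengths in whole millimeters.
lengths = [12, 15, 15, 17, 18, 18, 19, 21, 30]

print(case_value_order(lengths))
print(interval_counts(lengths, width_mm=5))
```

In the interval view the cluster of cases in the middle interval and the sparse upper tail are visible at a glance, which the ordered list of cases does not show as directly.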

The displays that students developed reveal significant differences in how they thought about and represented their data. Some focused on case values, while others were able to use equivalence and scale to reveal characteristics of the data in aggregate. The construct map helped the teacher appreciate the significance of these differences.

FIGURE 3-14 Facsimile of a portion of a student-created case-value representation of silkworm larvae growth.

SOURCE: Lehrer (2011). Copyright by the author; used with permission.

To help students develop their competence at representing data, the teacher invited them to consider what selected displays show and do not show about the data. The purpose was to convey that all representational choices emphasize certain features of data and obscure others. During this conversation, the students critiqued how space was used in the displays to represent lengths of the larvae and began to appreciate the basis of conventions about display regarding the use of space, a form of meta-representational competence (diSessa, 2004). The teacher also led a conversation about the mathematics of display, including the use of order, count, and interval and measurement scale to create different senses of the shape of the data. (This focus on shape corresponds to the crosscutting theme of pattern in the NGSS.) Without this instructional practice (a well-orchestrated discussion led by the teacher, who was guided by the construct map in interpreting and responding to student contributions), students would be unlikely to discern the bell-like shape that is often characteristic of natural variation.

FIGURE 3-15 Facsimile of student-invented representation of groups of data values for silkworm larvae growth.

NOTE: The original used icons to represent the organisms in each interval.

SOURCE: Lehrer (2011). Copyright by the author; used with permission.

The focus on the shape of the data was a gentle introduction to variability that influenced subsequent student thinking about larval growth. As some students examined Figure 3-16, they noticed that the tails of the distribution were comparatively sparse, especially for the longer silkworm larvae, and they wondered why. They speculated that this shape suggested that the organisms had differential access to resources. They related this possibility to differences in the timing of larval hatching and conjectured that larvae that hatched earlier might have begun eating and growing sooner and therefore acquired an advantage in the competition for food. The introduction of competition into their account of variability and growth was a new form of explanation, one that helped them begin to think beyond individual organisms to the population level. In these classroom discussions, the teacher blends instruction and diagnosis of student thinking for purposes of formative assessment.

Other Constructs and a Learning Progression

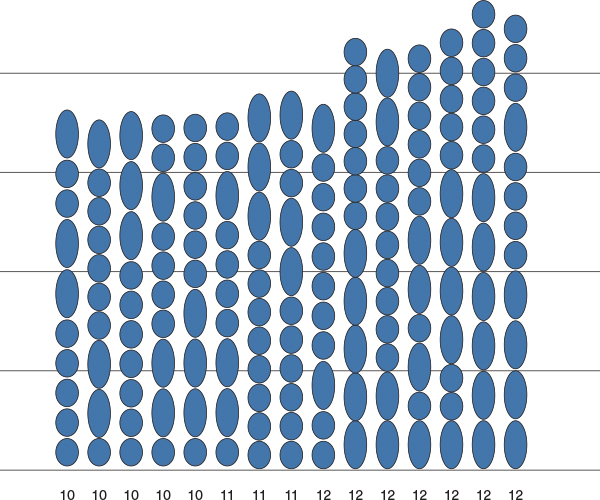

Our example is a classroom-intensive context, and formal statistical modeling of this small sample of particular student responses would not be useful. However, the responses of other students involved in learning about data and statistics by inventing displays, measures, and models of variability (Lehrer et al., 2007, 2011) were plotted using a DaD construct map (see Figure 3-13, above), and the results of the analysis of those data are illustrated in Figure 3-17 (Schwartz et al., 2011). In this figure, the left-hand side shows the units of the scale (in logits9) and also the distributions of the students along the DaD construct. The right-hand side shows the locations of the items associated with the levels of the construct. The first column (labeled “NL”) is a set of responses that are pre-Level 1: they do not yet reach Level 1, but they show some relevance, even if it is just making appropriate reference to the item. These points (locations of the thresholds) are where a student is estimated to have a probability of 0.50 of responding at that level or below. Using this figure, one can then construct bands that correspond to levels of the construct and help visualize relations between item difficulties and the ordered levels of the construct. This is a more focused test of construct validity than traditional measures of item fit, such as the mean square or others (Wilson, 2005).

___________

9 The logit scale is used to locate both examinees and assessment tasks relative to a common, underlying (latent) scale of both student proficiency and task difficulty. The difference in logits between an examinee’s proficiency and a task’s difficulty is equal to the logarithm of the odds of a correct response to that task by that examinee, as determined by a statistical model.

FIGURE 3-17 Wright map of the DaD construct.

SOURCE: Wilson et al. (2013). Copyright by the author; used with permission.
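The logit definition given in the footnote can be turned into a small worked example. If the difference between proficiency and difficulty equals the log of the odds of a correct response, then the probability follows by inverting the log odds. The sketch below assumes a simple dichotomous (correct or incorrect) item; the function names are illustrative, and the model actually fitted to the DaD data may be more elaborate.

```python
import math


def prob_correct(proficiency_logits, difficulty_logits):
    """Probability of a correct response implied by the log-odds definition.

    gap = proficiency - difficulty = log(p / (1 - p)), so
    p = 1 / (1 + exp(-gap)).
    """
    gap = proficiency_logits - difficulty_logits
    return 1.0 / (1.0 + math.exp(-gap))


def logit_gap(p):
    """Inverse direction: the logit difference implied by a probability p."""
    return math.log(p / (1.0 - p))


# A student located exactly at an item's difficulty has even odds.
print(prob_correct(0.5, 0.5))            # 0.5
# One logit above the item's difficulty gives roughly a 73 percent chance.
print(round(prob_correct(1.0, 0.0), 3))  # 0.731
```

This is why a threshold on the map marks the location at which a response probability equals 0.50: at that point the logit difference is zero.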

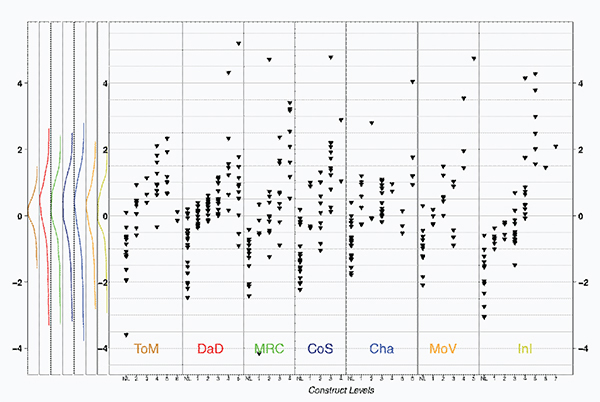

The DaD construct is but one of seven assessed with this sample of students, so BAS was applied to each of the seven constructs: theory of measurement, DaD, meta-representational competence, conceptions of statistics, chance, models of variability, and informal inference (Lehrer et al., 2013); see Figure 3-18.

FIGURE 3-18 Wright map of the seven dimensions assessed for analyzing and interpreting data.

NOTES: Cha = chance, CoS = conceptions of statistics, DaD = data display, InI = informal inference, MoV = models of variability, MRC = meta-representational competence, ToM = theory of measurement. See text for discussion.

SOURCE: Wilson et al. (2013). Copyright by the author; used by permission.

- Theory of measurement maps the degree to which students understand the mathematics of measurement and develop skills in measuring. This construct represents the basic area of knowledge in which the rest of the constructs are played out.

- DaD traces a progression in learning to construct and read graphical representations of the data from an initial emphasis on cases toward reasoning based on properties of the aggregate.

- Meta-representational competence, which is closely related to DaD, proposes keystone performances as students learn to harness varied representations for making claims about data and to consider tradeoffs among representations in light of these claims.

- Conceptions of statistics propose a series of landmarks as students come to first recognize that statistics measure qualities of the distribution, such as center and spread, and then go on to develop understandings of statistics as generalizable and as subject to sample-to-sample variation.

- Chance describes the progression of students’ understanding about how chance and elementary probability operate to produce distributions of outcomes.

- Models of variability refer to the progression of reasoning about employing chance to model a distribution of outcomes produced by a process.

- Informal inference describes a progression in the basis of students’ inferences, beginning with reliance on cases and ultimately culminating in using models of variability to make inferences based on single or multiple samples.

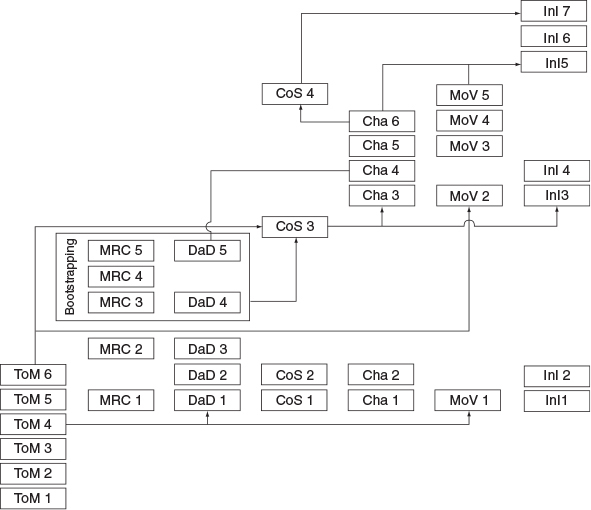

These seven constructs can be plotted as a learning progression that links the theory of measurement, a construct that embodies a core idea, with the other six constructs, which embody practices: see Figure 3-19. In this figure, each vertical set of levels is one of the constructs listed above. In addition to the obvious links between the levels within a construct, this figure shows hypothesized links between specific levels of different constructs. These are interpreted as necessary prerequisites: that is, the hypothesis is that a student needs to know the level at the base of the arrow before he or she can succeed on the level indicated at the point of the arrow. The area labeled as “bootstrapping” is a set of levels that require mutual support. Of course, performance on specific items will involve measurement error, so these links need to be investigated using multiple items within tasks.

FIGURE 3-19 Learning progression for analyzing and interpreting data.

NOTES: Cha = chance, CoS = conceptions of statistics, DaD = data display, InI = informal inference, MoV = models of variability, MRC = meta-representational competence, ToM = theory of measurement. See text for discussion.

Despite all the care that is taken in assessment design to ensure that the developed tasks measure the intended content and skills, it is still necessary to evaluate empirically whether the inferences drawn from the assessment results are valid. Validity refers to the extent to which assessment tasks measure the skills that they are intended to measure (see, e.g., Kane, 2006, 2013; Messick, 1993; National Research Council, 2001, 2006). More formally, “Validity is an integrated evaluative judgment of the degree to which empirical evidence and theoretical rationales support the adequacy and appropriateness of inferences and actions based on the test” (Messick, 1989, p. 13). Validation involves evaluation of the proposed interpretations and uses of the assessment results, using different kinds of evidence: evidence that is rational and empirical and both qualitative and quantitative. For the examples discussed in this report, validation would include analysis of the processes and theory used to design and develop the assessment; evidence that the respondents were indeed thinking in the ways envisaged in that theory; the internal structure of the assessment; the relationships between results and other outcome measures; whether the consequences of using the assessment results were as expected; and other studies designed to examine the extent to which the intended interpretations of assessment results are fair, justifiable, and appropriate for a given purpose (see American Educational Research Association, American Psychological Association, and National Council on Measurement in Education, 1999).

Evidence of validity is typically collected once a preliminary set of tasks and corresponding scoring rubrics have been developed. Traditionally, validity concerns associated with achievement tests have focused on test content, that is, the degree to which the test samples the subject matter domain about which inferences are to be drawn. This sort of validity is confirmed through evaluation of the alignment between the content of the assessment tasks and the subject-matter framework, in this case, the NGSS.

Measurement experts increasingly agree that traditional external forms of validation, which emphasize consistency with other measures, as well as the search for indirect indicators that can show this consistency statistically, should be supplemented with evidence of the cognitive and substantive aspects of validity (Linn et al., 1991; Messick, 1993). That is, the trustworthiness of the interpretation of test scores should rest in part on empirical evidence that the assessment tasks actually reflect the intended cognitive processes. There are few alternative measures that assess the three-dimensional science learning described in the NGSS and hence could be used to evaluate consistency, so the empirical validity evidence will be especially important for the new assessments that states will be developing as part of their implementation of the NGSS.

Examining the processes that students use as they perform an assessment task is one way to evaluate whether the tasks are functioning as intended, another important component of validity. One method for doing this is called protocol analysis (or cognitive labs), in which students are asked to think aloud as they solve problems or to describe retrospectively how they solved the problem (Ericsson and Simon, 1984). Another method is called analysis of reasons, in which students are asked to provide rationales for their responses to the tasks. A third method, analysis of errors, is a process of drawing inferences about students’ processes from incorrect procedures, concepts, or representations of the problems (National Research Council, 2001).

The empirical evidence used to investigate the extent to which the various components of an assessment actually perform together in the way they were designed to is referred to collectively as evidence based on the internal structure of the test (see American Educational Research Association, American Psychological Association, and National Council on Measurement in Education, 1999). For example, in the task on measuring silkworm larvae growth, one form of evidence based on internal structure would be the match between the hypothesized levels of the construct maps and the empirical difficulty order shown in the Wright map in Figure 3-17 above.

One critical aspect of validity is fairness. An assessment is considered fair if test takers can demonstrate their proficiency in the targeted content and skills without other, irrelevant factors interfering with their performance. Many attributes of test items can contribute to what measurement experts refer to as construct-irrelevant variance, which occurs when the test questions require skills that are not the focus of the assessment. For instance, an assessment that is intended to measure a certain science practice may include a lengthy reading passage. Besides assessing skill in the particular practice, the question will also require a certain level of reading skill. Assessment respondents who do not have sufficient reading skills will not be able to accurately demonstrate their proficiency with the targeted science skills. Similarly, respondents who do not have a sufficient command of the language in which an assessment is presented will not be able to demonstrate their proficiency in the science skills that are the focus of the assessment. Attempting to increase fairness can be difficult, however, and can create additional problems. For example, assessment tasks that minimize reliance on language by using online graphic representations may also introduce a new construct-irrelevant issue because students have varying familiarity with these kinds of representations or with the possible ways to interact with them offered by the technology.

Cultural, racial, and gender issues may also pose fairness questions. Test items should be designed so that they do not in some way disadvantage the respondent on the basis of those characteristics, socioeconomic status, or other background characteristics. For example, if a passage uses an example more familiar or accessible to boys than girls (e.g., an example drawn from a sport in which boys are more likely to participate), it may give the boys an unfair advantage. Conversely, the opposite may occur if an example is drawn from cooking (with which girls are more likely to have experience). The same may happen if the material in the task is more familiar to students from a white, Anglo-Saxon background than to students from minority racial and ethnic backgrounds or more familiar to students who live in urban areas than those in rural areas.

It is important to keep in mind that attributes of tasks that may seem unimportant can cause differential performance, often in ways that are unexpected and not predicted by assessment designers. There are processes for bias and sensitivity reviews of assessment tasks that can help identify such problems before the assessment is given (see, e.g., Basterra et al., 2011; Camilli, 2006; Schmeiser and Welch, 2006; Solano-Flores and Li, 2009). Indeed, this process was begun during the development of the NGSS, which included a process to review and refine the performance expectations using this lens (see Appendix 4 of the NGSS). After an assessment has been given, analyses of differential item functioning can help identify problematic questions so that they can be excluded from scoring (see, e.g., Camilli and Shepard, 1994; Holland and Wainer, 1993; Sudweeks and Tolman, 1993).

A particular concern for science assessment is the opportunity to learn—the extent to which students have had adequate instruction in the assessed material to be able to demonstrate proficiency on the targeted content and skills. Inferences based on assessment results cannot be valid if students have not had the opportunity to learn the tested material, and the problem is exacerbated when access to adequate instruction is uneven among schools, districts, and states. This equity issue has particular urgency in the context of a new approach to science education that places many new kinds of expectations on students. The issue was highlighted in A Framework for K-12 Science Education: Practices, Crosscutting Concepts, and Core Ideas (National Research Council, 2012a, p. 280), which noted:

. . . access to high quality education in science and engineering is not equitable across the country; it remains determined in large part by an individual’s socioeconomic class, racial or ethnic group, gender, language background, disability designation, or national origin.

The validity of science assessments designed to evaluate the content and skills depicted in the framework could be undermined simply because students do not have equal access to quality instruction. As noted by Pellegrino (2013), a major challenge in the validation of assessments designed to measure the NGSS performance expectations is the need for such work to be done in instructional settings where students have had adequate opportunity to learn the integrated knowledge envisioned by the framework and the NGSS. We consider this issue in more detail in Chapter 7 in the context of suggestions regarding implementation of next generation science assessments.

CONCLUSION 3-1 Measuring three-dimensional learning as conceptualized in the framework and the Next Generation Science Standards (NGSS) poses a number of conceptual and practical challenges and thus demands a rigorous approach to the process of designing and validating assessments. The endeavor needs to be guided by theory and research about science learning to ensure that the resulting assessment tasks (1) are consistent with the framework and the NGSS, (2) provide information to support the intended inferences, and (3) are valid for the intended use.

RECOMMENDATION 3-1 To ensure that assessments of a given performance expectation in the Next Generation Science Standards provide the evidence necessary to support the intended inference, assessment designers should follow a systematic and principled approach to assessment design, such as evidence-centered design or construct modeling. In so doing, multiple forms of evidence need to be assembled to support the validity argument for an assessment’s intended interpretive use and to ensure equity and fairness.