This chapter reviews the methods used by the US Environmental Protection Agency (EPA) to identify, evaluate, and synthesize the scientific evidence on nonmonotonic dose response (NMDR) curves in its draft State of the Science Evaluation: Nonmonotonic Dose Responses as They Apply to Estrogen, Androgen, and Thyroid Pathways and EPA Testing and Assessment Procedures (the SOTS evaluation; EPA 2013a). To address its first task, to determine whether EPA “fairly and soundly evaluated the weight of evidence” on NMDR curves, the committee first identified the key design elements of a thorough, systematic, and transparent evaluation and synthesis of environmental health data (see Box 2-1).

Those elements are derived from accepted approaches to literature-based evidence synthesis in the clinical sciences, particularly methods for systematic review in clinical medicine (Guyatt et al. 2011; Higgins and Green 2011). The National Research Council (NRC 2011, 2013) has recommended that EPA use similar approaches to support and improve its toxicologic assessments in support of its Integrated Risk Information System program. The methods in clinical medicine are not directly transferable, because environmental health assessments include evidence from multiple lines of research (in vitro, animal, and human studies) whereas clinical-medicine evaluations are based exclusively on studies of humans (Woodruff and Sutton 2011; Birnbaum et al. 2013). However, modified methods have been proposed for evaluating environmental health evidence (e.g., Woodruff and Sutton 2011; Kushman et al. 2013), and efforts are under way in EPA (2013b) and the National Toxicology Program (NTP) Office of Health Assessment and Translation (NTP 2013) to incorporate systematic approaches into their toxicologic assessments. Although the committee recognizes that such a systematic approach has not yet been formally established, EPA has received enough guidance and recommendations from other National Research Council reports (NRC 2009, 2011) to have considered the use of more consistent and transparent approaches similar to the design elements shown in Box 2-1 to develop the SOTS evaluation.

BOX 2-1 Design Elements of a Systematic Review

• Define study question

• Specify methods for collecting and evaluating evidence

o Literature-search strategy

o Study inclusion and exclusion criteria

o Methods for evaluating study quality

o Data presentation

o Methods for analyzing and synthesizing evidence

The committee thus used the following criteria to evaluate how each of the elements in Box 2-1 was addressed in the SOTS evaluation:

• Clarity: Is the SOTS evaluation clear in its description of how each element was addressed?

• Consistency: Is the SOTS evaluation consistent among topics in its application of methods and criteria?

• Appropriate methods: Were the SOTS evaluation’s approaches to evaluating evidence appropriate?

Those criteria were used to examine the assessments that were conducted both within and among the three hormone pathways considered (estrogen, androgen, and thyroid) and to evaluate the different streams of evidence (in vitro studies and in vivo studies of aquatic species and animal models).

EVALUATION OF THE ENVIRONMENTAL PROTECTION AGENCY’S APPROACH

EPA’s strategy for developing the SOTS evaluation was to pose three central scientific questions about NMDR curves with respect to the estrogen, androgen, and thyroid hormone pathways. The agency was faced with the difficult challenge of comprehensively identifying, evaluating, and summarizing the large volume of information required to address those questions. The foreword to the SOTS evaluation indicates that because of time constraints the agency used an expert-driven approach to conduct the evaluation, which involved having different groups evaluate the evidence on the three hormone pathways separately. However, EPA did not establish an analysis plan in advance for the writing groups to follow. Rather, the groups determined independently how to perform their analyses. That led to the lack of an overall plan for the approach, differences in the degree of documentation provided about the literature search and selection process used by the groups, differences in criteria that were used for study selection, and unclear documentation of how study quality was evaluated and of how conclusions were drawn. The lack of transparency and the inconsistencies raise questions about the quality of the approaches used.

Lack of Transparency. There was a lack of transparency at key steps of the SOTS evaluation. Prominent examples include the lack of documentation of the literature-search methods used for the estrogen and androgen sections, the lack of explicit criteria for evaluating study quality, the lack of clarity of the weight-of-evidence (WOE) methods, and the inadequate explanation of the process used to identify which studies carry the most weight (and why). Furthermore, the methods of data synthesis and weighing of evidence to identify conditions under which NMDR curves occur were not presented transparently. Thus, it was unclear how the authors concluded that NMDR curves were found more often in in vitro studies, at high doses, and for exposures of short duration.

Inconsistency. Inconsistencies were found in the methods used to identify studies for consideration; in the study inclusion and exclusion criteria and their application; in the criteria for evaluating study quality; in how data were presented, weighed, and analyzed; and in how the key data for each section were summarized. Those inconsistencies resulted largely from not having established a protocol for the writing groups to follow or, if a protocol was established, from failure to adhere to it rigorously for each of the three different hormone modalities. In the absence of a clear framework and its consistent application, the separate writing groups inevitably used different methods of review; this calls into question whether a more systematic approach would have led to the same conclusions.

The sections below review specific aspects of the SOTS evaluation that led the committee to draw those overarching conclusions.

Protocol

EPA decided to use independent groups to draft the SOTS evaluation, but there was no protocol for performing the evaluations to ensure that the different groups followed the same methods to reach conclusions. Instead, each group was allowed to perform its analysis on the basis of expert judgment. Documentation of the methods used by each group was difficult to find and in most cases did not appear to be provided.

Stipulating the methods ahead of an assessment and then applying them consistently in the various sections is a means of reducing author bias and of providing transparency. Several National Research Council reports have highlighted the importance of planning and scoping. For example, Science and Decisions: Advancing Risk Assessment noted that “increased emphasis on planning and scoping and on problem formulation has been shown to lead to risk assessments that are more useful and better accepted by decision-makers” (NRC 2009, p. 6). Chapter 7 of the 2011 report on formaldehyde called for EPA to “ensure standardization of review and evaluation approaches among contributors and teams of contributors; for example, include standard approaches for reviews of various types of studies to ensure uniformity” (NRC 2011, p. 164).

Study Questions

Defining the study questions determines the structure and scope of any assessment (IOM 2011). The committee found the three central scientific questions of the SOTS evaluation (Box 2-2) to be clear and reasonable. Question 1 is framed broadly and is open-ended with respect to determining the “conditions” under which NMDR curves might occur. The scope of the question suggested to the committee that EPA would evaluate all relevant streams of evidence to answer it. However, the SOTS evaluation was restricted to in vitro studies and in vivo studies of aquatic species and animal models. Because of inadequate resources, evidence from epidemiologic or other types of human studies is not considered beyond reference to reviews conducted by other groups (e.g., Vandenberg et al. 2012; EFSA 2013). Furthermore, the scope of conditions being considered was not specified. It appears that the evidence on the estrogen and androgen hormone pathways was restricted to chemicals that have narrowly defined modes of action (discussed below under “Study-Selection Criteria”); this would limit the mechanistic conditions under which an NMDR curve might occur. There is an important incompatibility between the broad question posed by EPA and the narrow array of data considered to answer it. That is problematic because the answers to questions about the adequacy of EPA’s toxicity-testing strategies and risk-assessment practices (Questions 2 and 3) depend critically on the scope of the answer to Question 1.

The SOTS evaluation also uses several definitions that are important in determining the scope of the answers to the questions posed. The committee offers several observations about three key definitions:

BOX 2-2 Three Central Scientific Questions to be Addressed in the SOTS Evaluation (EPA 2013a)

• Question 1: Do [NMDRs] exist for chemicals and if so under what conditions do they occur?

• Question 2: Do NMDRs capture adverse effects that are not captured using [EPA’s] current chemical testing strategies (i.e., false negatives)?

• Question 3: Do NMDRs provide key information that would alter EPA’s current weight of evidence conclusions and risk assessment determinations, either qualitatively or quantitatively?

• Low-dose effect is defined in the SOTS evaluation as “a biological change occurring in the range of typical human exposures or at doses lower than those typically used in standard testing protocols”, which is the definition used by NTP (2001). That definition is vague and confuses two concepts—“low dose” and “low effect”—both of which are important in understanding endocrine disruptors and for identification of NMDR curves. Just the low-dose part of the definition is nonspecific: the range of doses reported as “low dose” in the literature and those used by NTP and relevant human exposures can differ by orders of magnitude (Teeguarden and Hanson-Drury 2013). Being clear about what is meant by low dose is thus critical to study evaluation and interpretation. The definition of low effect clearly depends on the end points being measured, and could be an issue at any dose, including those in the range used by NTP in animal bioassays. Thus, the committee recommends that EPA’s definitions be clear and specific to ensure their consistent application throughout the SOTS evaluation.

• Resilience is described in the SOTS evaluation as the ability of cells and tissues to adapt to maintain homeostasis. The concept of resilience, or adaptation, in a toxicologic context has varied definitions and is a controversial topic that requires careful consideration, but the overview in the SOTS evaluation (p. 30, Figure 2.2) is brief and insufficiently supported, with only a single reference (Andersen et al. 2005). The figure presented from that reference was adapted in a National Research Council report (NRC 2007) to address an important limitation of the resilience concept: that it might not apply in all cases and situations. The caption of the revised figure states that “when perturbations are sufficiently large or when the host is unable to adapt because of underlying nutritional, genetic, disease, or life-stage status, biologic function is compromised, and this leads to toxicity and disease” (NRC 2007, p. 49). The human population is composed of individuals in various states of disease and adaptation. Adaptation may occur in healthy adults who are exposed to a single chemical; but if there are multiple chemical exposures or exposures occur during critical periods of development, there could be little or no ability to adapt or change (Woodruff et al. 2008). Thus, there is particular concern about how the concept of resilience might have been used to evaluate data resulting from studies in which exposure occurred during development. It is also unclear how consideration of resilience might have affected study selection. The revised SOTS evaluation should either elaborate on this concept and specify how it was used in the analysis or omit it, particularly if it was provided simply for reference but did not affect study selection.

• Adverse effect is defined in the SOTS evaluation as “a measured endpoint that displays a change in morphology, physiology, growth, development, reproduction, or life span of a cell or organism, system, or population that results in an impairment of functional capacity, an impairment of the capacity to compensate for additional stress, or an increase in susceptibility to other influences” (adopted from Keller et al. 2012). The SOTS evaluation indicates that this definition is preferable to the one used by the agency for health-assessment purposes¹ because it is a more “systems biology oriented description of adversity” and allows for consideration of mode of action, toxicity pathways, adverse-outcome pathways, and adaptive capability. Better justification for using the definition is needed, especially because the SOTS evaluation is intended to inform decisions about risk-assessment practices; explicit consideration should be given to the implications of this definition, in contrast with the definition used by EPA risk assessors, for health end-point selection, study selection and weighting, and related decisions.

Literature-Search Strategy

A literature-search strategy is designed to identify the universe of potentially relevant studies once a study question is specified. Information about the strategy and results should be provided in sufficient detail to ensure that each database search is replicable. For example, the databases should be specified, the search terms and strings listed, the dates on which the searches were conducted identified, and a summary of the search results provided. Any examination of the literature that is not thorough and systematic runs a risk of assembling an unrepresentative selection of publications and could lead to erroneous conclusions.
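As an illustration of the level of detail described above, the following minimal sketch records the four items named in the paragraph (database, search string, search date, and number of results) for a single search. The `SearchRecord` class, its field names, and the example values are hypothetical and are not drawn from the SOTS evaluation or from any EPA or NTP protocol.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class SearchRecord:
    """One database search, documented so that it can be rerun (hypothetical schema)."""
    database: str        # name of the bibliographic database searched
    search_string: str   # exact query as submitted
    run_on: date         # date on which the search was conducted
    hits: int            # number of records returned

    def missing_fields(self) -> List[str]:
        """Return the names of items that were left undocumented."""
        missing = []
        if not self.database.strip():
            missing.append("database")
        if not self.search_string.strip():
            missing.append("search_string")
        if self.hits < 0:
            missing.append("hits")
        return missing


# Illustrative values only; not taken from the SOTS evaluation.
record = SearchRecord(
    database="PubMed",
    search_string='(thyroid) AND ("dose response")',
    run_on=date(2013, 1, 15),
    hits=412,
)
print(record.missing_fields())  # an empty list means every item is documented
```

A record of this kind for each search would allow a reader to repeat the search and compare the results.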

The independent writing groups of the SOTS evaluation conducted their literature searches differently. In most cases, no documentation of the search strategies was provided. An expert-based approach to literature selection and analysis appears to have been used for the estrogen and androgen sections. Section 4.2.1 (“Literature Search and Selection Strategy for Estrogen and Androgen Pathways”) does a reasonable job of describing the complexities of the literature but says only in general terms that a “large database of journal articles and other reports were examined” and does not adequately describe how studies were identified and selected. In the androgen section, Table 4.3 presents 29 studies that were used to evaluate the androgen hormone pathway, but the committee could not find any description of a search strategy that led to their selection. Similarly, adequate documentation was not provided in the thyroid section on aquatic species (Section 4.1.4). In contrast, the search strategy is presented with reasonable completeness in the thyroid section on mammalian models (Section 4.2.4.2 and Appendix C). Failure to establish a clear method of literature identification and evaluation for the groups to follow is responsible for the section-to-section variation and is an important shortcoming of the report. EPA is aware of this problem and has indicated that it is monitoring systematic approaches being developed by the National Toxicology Program and EPA’s Integrated Risk Information System program and will consider them in revisions to the SOTS evaluation (EPA, unpublished material, September 6, 2013).

______________________________

¹ “A biochemical change, functional impairment, or pathologic lesion that affects the performance of the whole organism, or reduces an organism’s ability to respond to an additional environmental challenge” (EPA 2014).

Study-Selection Criteria

In systematic reviews, clearly defined eligibility criteria are used to determine which studies will be included and which will be excluded from evaluation. Explicit and well-defined criteria are fundamental for a rigorous gathering of a defensible set of data for review (Abrami et al. 1988; Meline 2006), and they provide a guide for the standard of research used to evaluate WOE. Selection criteria should be formulated to identify and include as many informative studies as possible. Several approaches are available to guide study selection. One is to develop a population–exposure–comparator–outcome statement to define each of the elements of the studies. That approach is being modified for application to environmental and toxicologic studies by NTP (2013) and others (e.g., Koustas et al. in press). EPA should consider and adopt an approach that clearly lays out its study-selection criteria.
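The role of explicit eligibility criteria can be sketched as follows. In this minimal example, each criterion is stated once, applied to every study in the same order, and the first criterion that a study fails is recorded as the reason for its exclusion. The `Study` fields and the three criteria are placeholders chosen for illustration; they are not criteria used in the SOTS evaluation, and they are not criteria recommended by the committee.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Study:
    study_id: str
    pathway: str          # "estrogen", "androgen", or "thyroid"
    has_control: bool     # concurrent control group reported
    n_dose_groups: int    # treated groups, excluding the control


# Hypothetical criteria, listed in the order in which they are applied.
CRITERIA: List[Tuple[str, Callable[[Study], bool]]] = [
    ("pathway in scope", lambda s: s.pathway in {"estrogen", "androgen", "thyroid"}),
    ("concurrent control reported", lambda s: s.has_control),
    ("at least three dose groups", lambda s: s.n_dose_groups >= 3),
]


def screen(studies: List[Study]) -> Tuple[List[str], Dict[str, str]]:
    """Return included study IDs and, for each excluded study, the first criterion it failed."""
    included: List[str] = []
    excluded: Dict[str, str] = {}
    for study in studies:
        for name, passes in CRITERIA:
            if not passes(study):
                excluded[study.study_id] = name
                break
        else:
            # No criterion failed, so the study is retained.
            included.append(study.study_id)
    return included, excluded


# Made-up studies for illustration.
studies = [
    Study("A", "thyroid", True, 4),
    Study("B", "thyroid", False, 5),
    Study("C", "estrogen", True, 2),
]
included, excluded = screen(studies)
print(included)
print(excluded)
```

Because every exclusion carries a stated reason, a reader can trace how the final set of studies was reached and can test the effect of changing any one criterion.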

In the sections below, the committee considers whether the selection criteria in the SOTS evaluation were clearly presented and whether they would ensure that an appropriate set of studies is considered in the analysis.

General Issues with Study-Selection Criteria in the State-of-the-Science Evaluation

EPA’s SOTS evaluation states that it did not “attempt to design exclusion/inclusion criteria for studies uncovered in all literature searches; for reasons of resource limitations, this was done primarily for the description of the data on the thyroid hormone pathway” (EPA 2013a, p. 27). Thus, the literature search and analysis for thyroid disruptors (Section 4.2.4.2) describe the study-selection criteria and present a decision tree in an appendix (Figure C.1) to illustrate how the criteria were used to filter studies. Although the criteria were clearly laid out for the thyroid section, the committee questions the appropriateness of some of the criteria used to filter the studies (see section “Selection Criteria for Thyroid-Active Chemicals” below).

The committee attempted to identify some of the informal study-selection and study-quality criteria used in the estrogen and androgen sections. Table 2-1 presents a comparison of the study-selection criteria for the three hormonal pathways. For all three pathways, an “ideal” number of doses evaluated, type of chemicals evaluated, and specific restrictions for each of the sections are specified. As illustrated in the table, the criteria varied among sections (and even within sections for different chemicals), and the rationale for their use was only partially explained. For instance, Section 4.1 (“Aquatic Models”) specifies that studies with four doses are preferred but that studies with fewer doses could be used to include additional chemicals and pathways in the analysis. In contrast, in Section 4.2.1 (“Literature Search and Selection Strategy for E and A Pathways”), a minimum of six doses is specified, but in vivo studies that tested fewer doses were allowed for inclusion provided that the studies tested a “broad dose-range” so that studies cited by others as displaying NMDR curves could be included. However, no definition of a broad dose range was provided. Most of the sections restrict the evaluation to subsets of the studies available on the basis of species, route of exposure, or exposure levels. The restrictions do not appear to be consistently applied among sections.

| Section of SOTS Evaluation | SOTS Pages | Study-Selection Criteria: Number of Dose Groups | Study-Selection Criteria: Chemicals and Modes of Action Considered | Study-Selection Criteria: Restrictions and Filters | Study Quality Criteria |
| --- | --- | --- | --- | --- | --- |
| 3. In vitro studiesᵃ | 43-44 | Unable to determine | Unable to determine | Unable to determine | Unable to determine |
| 4.1.1 Aquatic models (HPG axis) | 56-57 | A. ≥4 treatment groups B. <4 groups (included for chemicals or pathways where A not available) | A. Starting list included 28 “model chemicals” listed in Table 4.1 and was reduced to 11 “best-studied” and “illustrative” examples B. Chemicals with adequately described MOA on the HPG axis (e.g., atrazine excluded because of insufficiently characterized MOA); no explanation of “adequately described” | Restrictions: A. “Restricted largely to” fish species B. Time windows B.1. Full life cycles B.2. When B.1 not available, “longer-term experiments during portions of the life-cycle expected to be sensitive to endocrine-active chemicals” | Unable to determine |
| 4.1.1 Aquatic models (Thyroid) | 58 | Unable to determine whether criteria used for aquatic models (HPG axis) were applied to this set of studies | Chemicals with effects on either: A. Sodium-iodide symporter (NIS) B. Thyroid peroxidase (TPO) | Restrictions: A. Fish and amphibians were included B. Time windows: unclear whether restrictions in point B above for aquatic models (HPG axis) were applied to this set of studies | Unable to determine |
| 4.2.1 Mammalian models (estrogen and androgen pathways) | 84 | A. ≥6 groups OR B. ≥4 groups (3 + 1 control), but large range of exposure (“large range” not defined) | No exclusion | Restrictions: A. Oral exposure B. Subcutaneous included if genomic study or if only few or no oral studies for a specific MOA | Unable to determine |
| 4.2.4.2 Mammalian models (thyroid) | 121-122 | ≥3 doses + control | Only one chemical for each of three MOAs described in depth: A. PTU (TPO inhibition) B. Perchlorate (NIS inhibition) C. PHAHs (up-regulation of thyroid hormone metabolism induced by nuclear receptor activation) No exclusions for the broader mammalian thyroid literature | Filters: 1. Minimum of three doses + control 2. Evidence of statistically significant NMDR relationship 3. Absence of observations at lower doses in the study that would have been used to determine the LOEL or LOAEL 4. (a) Absence of other published reports on the chemical in which effects were observed at low levels; (b) absence of other published reports for effects on other end points that would have been used to determine LOEL or NOEL below the doses identified as having an NMDR relationship; (c) absence of study-quality concerns or statistical-power issues that weakened confidence in NMDR observation | Unable to determine |

ᵃ Studies identified by Vandenberg et al. (2012). Description of search strategy and study selection were not provided in the Vandenberg et al. paper.

Abbreviations: HPG, hypothalamic-pituitary-gonadal; LOAEL, lowest observed-adverse-effect level; LOEL, lowest observed-effect level; MOA, mode of action; NIS, sodium-iodide symporter; NMDR, nonmonotonic dose response; NOEL, no-observed-effect-level; PHAHs, polyhalogenated aromatic hydrocarbons; PTU, propylthiouracil; TPO, thyroperoxidase.

In determining the set of chemicals to evaluate for each of the hormonal pathways, it appeared that the SOTS evaluation was not consistently comprehensive in its consideration of modes of action (MOAs). The section on the thyroid pathway provided the clearest description of the MOAs considered. Known mechanisms of thyroid disruption were described (pp. 36 and 119–120 and Figure 2.5), and the search strategy documents that potential NMDR curves with both genomic and nongenomic actions were considered. The thyroid section also included chemicals that have effects both on and outside the hypothalamic–pituitary–thyroid axis. Thus, this section was appropriately inclusive in considering potential MOAs.

In contrast, the scope of the MOAs considered for the estrogen and androgen pathways was unclear. On the basis of the data presented in the two sections, the evaluations appear to focus on studies in which nuclear receptor-mediated activity was observed or presumed. For example, although it is acknowledged in the text that estrogen can act on the cell via multiple mechanisms, only the nuclear receptor-mediated activity is depicted in Figure 2.6, and it appears that this is how the “estrogenic” MOA is primarily defined in the SOTS document. The committee found this to be too limited an interpretation of estrogen activity. For example, effects of estrogen action via the newly identified receptor GPR30 are dismissed in the SOTS evaluation as “difficult to evaluate” because the “responses are often of very low magnitude, and, although statistically significant, have little biological validation” (EPA 2013a, p. 49). Although investigation of estrogenic activity through GPR30 signaling and the physiologic outcomes associated with it continues, this MOA and similar rapid-signaling pathways initiated by estrogen are proving to be biologically significant (Filardo and Thomas 2012). Excluding this MOA not only is inappropriately dismissive but demonstrates how adoption of a narrowly conceived MOA constrains the identification of “under what conditions do [NMDR curves] occur” (part of Question 1). Not understanding the biologic significance of GPR30 signaling is an important and reasonable consideration in addressing Questions 2 and 3 but is too limiting for addressing Question 1. Also missing is discussion of whether studies reporting epigenetic modifications were included or excluded from consideration. Numerous studies have demonstrated that endocrine disruptors, such as bisphenol A, phthalates, vinclozolin, methoxychlor, and dioxins, can produce epigenetic modifications associated with altered behavioral, reproductive, and other neuroendocrine end points (Dolinoy et al. 2007; Prins et al. 2008; Wolstenholme et al. 2011; Guerrero-Bosagna et al. 2012; Tang et al. 2012; Kundakovic et al. 2013; Manikkam et al. 2013; Somm et al. 2013). It is biologically plausible, and there is precedent, for steroid hormones to act through MOAs other than steroid receptor mediation.

The discussion of MOAs relevant to the androgen pathway (p. 36) focuses only on events surrounding androgen-receptor activation (agonism and antagonism) and touches on the effects of disrupting androgen-converting enzymes. However, MOAs and adverse-outcome pathways important in suppressing androgen synthesis (relevant for phthalates) are not discussed although they appear to be considered in subsequent sections analyzing the literature on NMDR curves (pp. 82 ff). In general, the selection of studies for androgenic activity appears to consider all relevant modalities, but the study-selection description lacks the transparency needed to verify that that is the case. Furthermore, in describing the selection strategy for the estrogen and androgen pathways, the SOTS evaluation states that a large database of journal articles and other reports was examined (p. 83), but the database is not identified or described.

Selection Criteria for Thyroid-Active Chemicals

The thyroid section is the only section that explicitly defines the criteria that were used for study selection, which include four filters, as shown in Table 2-1. Generally, systematic-review methods used in clinical medicine (Higgins and Green 2011; IOM 2011) and those being developed for environmental health assessments (NTP 2013) recommend that selection criteria be chosen on the basis of relevance to the question being evaluated rather than only to study-quality issues. Study quality is important and should be evaluated, but after the studies have been selected for analysis. Study inclusion and exclusion criteria that are too strict may lead to the omission of studies that can provide useful information even if they are limited in some way. Accordingly, the committee disagrees with the use of criteria that would exclude studies because of issues related to statistical power (which are encompassed by Filters 2-4). Using statistical significance as an absolute criterion for selecting studies with respect to NMDR curves is not recommended, because it can be influenced by several factors that should be explored before a determination of how informative a study would be in addressing the question (see Chapter 3 for further discussion of this issue). For example, having a small number of data points can limit a study’s power to detect a significant result. In later analyses, it might be possible to combine data from several studies. When several studies that have small numbers of data points and marginally statistically significant results are combined, statistically significant results can be revealed because the statistical power has been increased (Cohn and Becker 2003; Walker et al. 2008; Haidich 2010). In contrast, Filter 2 could also exclude studies that were well powered but failed to detect an NMDR curve. Statistical issues are also pertinent to Filter 3, which restricts consideration to studies that found a lowest observed-effect level or lowest observed-adverse-effect level. The identification of such levels can be heavily influenced by study design, particularly when small numbers of animals are tested. That concern is also relevant to Filters 4 (a) and (b). Issues related to study quality are discussed further below.
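The point about combining small studies can be illustrated with a standard fixed-effect, inverse-variance pooling calculation. The sketch below assumes that all studies estimate the same underlying effect and that each reports an effect estimate with a standard error; the three studies and their values are invented for illustration and do not come from the SOTS evaluation.

```python
import math
from typing import List, Tuple


def z_score(effect: float, se: float) -> float:
    """Ratio of an effect estimate to its standard error."""
    return effect / se


def pool_fixed_effect(estimates: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Inverse-variance weighted mean of (effect, standard error) pairs and its standard error."""
    weights = [1.0 / se ** 2 for _, se in estimates]
    pooled = sum(w * effect for w, (effect, _) in zip(weights, estimates)) / sum(weights)
    pooled_se = math.sqrt(1.0 / sum(weights))
    return pooled, pooled_se


# Three hypothetical small studies: same effect estimate, same large standard error.
studies = [(1.0, 0.6), (1.0, 0.6), (1.0, 0.6)]

individual_z = [z_score(effect, se) for effect, se in studies]
pooled_effect, pooled_se = pool_fixed_effect(studies)
pooled_z = z_score(pooled_effect, pooled_se)

print([round(z, 2) for z in individual_z])  # each is below the two-sided 5% cutoff of 1.96
print(round(pooled_z, 2))                   # the pooled estimate exceeds that cutoff
```

In this example, a filter that required statistical significance within each study would have excluded all three studies, although together they provide evidence of an effect.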

Evaluating Study Quality

The SOTS evaluation used an expert-based approach to evaluate study quality. Although expert judgment clearly is important, the committee could find no clear description of a strategy or criteria for assessing the studies used in the evaluation (see Table 2-1). Lack of such criteria and of their systematic application to the studies raises serious concerns about the ability of the SOTS evaluation to reach conclusions regarding the degree to which NMDR curves are evident in the scientific literature. It also compromises the assessment of whether NMDR curves require changes in EPA chemical-testing and risk-assessment strategies. Another National Research Council committee is providing relevant guidance on study-quality issues, such as risk of bias, to EPA’s Integrated Risk Information System program (NRC in press). Recommendations from that committee could be supplemented with study-quality criteria specific to evaluating the evidence on NMDR curves.

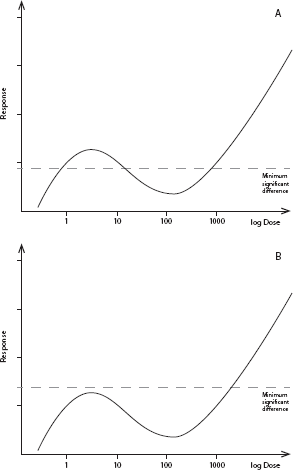

Evaluation of study quality for the SOTS evaluation should include whether a study has the design elements needed to determine the presence or absence of an NMDR curve. Most standard toxicity-testing protocols have low sensitivity and little statistical power for detecting NMDR curves, particularly at the lower end of the dose–response curve, because they typically test only three or four doses. Thus, it is not surprising that most studies in the SOTS evaluation did not find NMDR curves and that reproducibility of the small number of studies that have shown such an effect is low. Figure 2-1 gives a hypothetical example of the relationship between the statistical power of a toxicology experiment and the ability to reveal NMDR curves.
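The dependence of detectability on group size can be made concrete with a rough calculation. The sketch below uses the usual normal approximation for a two-sided comparison of one dose group with the control group, assuming equal group sizes and a known common standard deviation; the function name and the default values of `alpha` and `power` are choices made for illustration, not values taken from the SOTS evaluation.

```python
import math
from statistics import NormalDist


def minimum_significant_difference(sd: float, n_per_group: int,
                                   alpha: float = 0.05, power: float = 0.80) -> float:
    """Approximate smallest true difference from control that is detected
    with the stated power in a two-sided test at level alpha."""
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1.0 - alpha / 2.0)
    z_power = standard_normal.inv_cdf(power)
    # Standard error of a difference between two group means of equal size.
    se_difference = sd * math.sqrt(2.0 / n_per_group)
    return (z_alpha + z_power) * se_difference


# Same response variability, different numbers of animals per dose group.
for n in (5, 8, 20):
    print(n, round(minimum_significant_difference(sd=1.0, n_per_group=n), 2))
```

Under these assumptions the detectable difference shrinks in proportion to the square root of the group size, so a reversal in the dose-response curve that is smaller than this threshold would tend to be classified as not statistically significant in a small study.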

If few studies have the necessary methodologic features to evaluate whether a dose–response curve shows an NMDR relationship, it will be necessary to consider other studies. Such studies may have methodologic challenges, such as low statistical power, that raise questions about how the data should be analyzed and interpreted. For example, how should issues regarding multiple comparisons (such as inflated type I error rate in all tests combined) be handled in evaluating secondary or tertiary hypotheses? How should detection of a quadratic effect leading to an NMDR curve be handled if the original analysis of variance (ANOVA) was not statistically significant? What statistical methods should be used to perform robust analyses of variances in response that might be nonmonotonic but that might be functions of mean response and have outliers?

Those are difficult and controversial questions, and addressing them goes beyond the present committee’s task. In general, the committee supports the conduct of predefined secondary analyses as appropriate in support of evidence synthesis, such as meta-analysis. Criteria will need to be developed for determining appropriate statistical approaches to evaluate NMDR curves. For example, ANOVA is not designed to detect NMDR curves, so criteria for using such other approaches as biologically based dose–response models, polynomial functions, or splines should be considered.
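As one illustration of a polynomial approach, the sketch below computes a planned quadratic trend contrast across five dose groups. It is a minimal example under stated assumptions: the dose groups are taken to be equally spaced on the chosen scale (for example, log dose), the contrast coefficients are the standard orthogonal polynomial coefficients for five levels, and the data are invented for illustration. The function returns a t statistic and its error degrees of freedom; a p-value would come from a t distribution, which the standard library does not provide.

```python
from math import sqrt
from statistics import mean, variance

# Standard orthogonal polynomial coefficients for five equally spaced levels.
LINEAR_5 = (-2, -1, 0, 1, 2)
QUADRATIC_5 = (2, -1, -2, -1, 2)


def contrast_t(groups, coeffs):
    """Return (t statistic, error df) for a planned contrast among group means."""
    means = [mean(g) for g in groups]
    df_error = sum(len(g) - 1 for g in groups)
    # Pooled within-group variance, i.e., the ANOVA mean squared error.
    mse = sum((len(g) - 1) * variance(g) for g in groups) / df_error
    estimate = sum(c * m for c, m in zip(coeffs, means))
    std_error = sqrt(mse * sum(c * c / len(g) for c, g in zip(coeffs, groups)))
    return estimate / std_error, df_error


if __name__ == "__main__":
    # Hypothetical inverted-U responses, four animals per dose group.
    groups = [
        [10, 11, 9, 10],
        [12, 13, 11, 12],
        [14, 15, 13, 14],
        [12, 13, 11, 12],
        [10, 11, 9, 10],
    ]
    print("linear:", contrast_t(groups, LINEAR_5))
    print("quadratic:", contrast_t(groups, QUADRATIC_5))
```

A large quadratic contrast indicates curvature, not nonmonotonicity as such; a response that rises and then plateaus also produces one. Criteria for NMDR curves would therefore need to pair such a test with evidence of an actual change in the direction of the response.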

FIGURE 2-1 Relationship between the statistical power of a toxicologic experiment and the ability to reveal a nonmonotonic dose–response (NMDR) relationship. (A) Hypothetical NMDR curve. The horizontal dashed line depicts the minimum significant difference (MSD), the effect magnitude that can be detected as statistically significantly different from untreated controls a high percentage of the time. Effects below this line will be classified as not statistically significant. The magnitude of an MSD depends on several factors, such as the number of animals per dose group and the inherent variability of the measured response. In this example, the experiment is sufficiently powered to reveal an NMDR curve. (B) Same hypothetical NMDR curve as in (A), but with an underpowered experiment, which results in a larger MSD. In this case, an NMDR curve will tend to be overlooked because the nonmonotonicity will be classified as “background fluctuation” with no statistical significance.
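The dependence of the MSD on group size and variability can be approximated with a simple calculation. The sketch below is not the method used to draw Figure 2-1. It assumes a two-sided comparison of one dose group with controls, equal group sizes, a common standard deviation, and a normal approximation that ignores small-sample and multiple-comparison adjustments; the function name and default values are illustrative.

```python
from math import sqrt
from statistics import NormalDist


def minimum_detectable_difference(sd, n_per_group, alpha=0.05, power=0.8):
    """Approximate difference from control detectable with the given power.

    Normal approximation for a two-sided, two-group comparison with equal
    group sizes and a common standard deviation (all assumptions).
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_power = z.inv_cdf(power)
    return (z_alpha + z_power) * sd * sqrt(2 / n_per_group)


if __name__ == "__main__":
    # Response standard deviation set to 1, so results are in SD units.
    for n in (4, 8, 16, 32):
        print(f"n = {n:>2} per group: detectable difference of about "
              f"{minimum_detectable_difference(1.0, n):.2f} SD")
```

Under these assumptions, quadrupling the number of animals per group halves the detectable difference, so a modest low-dose excursion that falls below the threshold in panel (B) could rise above it in a larger study.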

Data Presentation and Summarization

Consistency of Exposure Descriptions

Given the wide range of chemicals and doses considered in the SOTS evaluation, environmental exposure ranges for each chemical could be provided to give context to the data. That would help in understanding the similarities and differences in findings between the studies described. Some examples of such context are already in the document; doses tested for genistein in the diet are described as including levels found in human diets (p. 97), and figures are used to demonstrate points of inflection in in vitro and in vivo data compared with environmental exposure concentrations (pp. 52-55). Alternative presentations might also be useful. For example, tabulation of the data might allow easier comparisons of the data. However, the presentation should consider the issues related to statistical power as described above.

It would also be helpful to report exposures to each chemical in the same units throughout a given section (for example, either as micrograms per liter or parts per billion). Inconsistency in the units is particularly problematic in the appendixes, where study tables are reproduced directly from publications. It would be helpful to convert standard international units to conventional units (or vice versa) in such tables. Consideration might also be given to creating a single large table for each appendix (expanded from Tables 4.1-4.4).
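The conversions suggested above are mechanical once the molar mass and, for parts-per-billion values, the density of the medium are known. The sketch below is illustrative only: the function names are hypothetical, the density default assumes a dilute aqueous solution, and the molar mass used for thyroxine (about 776.87 g/mol) is an assumed value that should be checked against an authoritative source before use.

```python
def nmol_per_l_to_ug_per_l(nmol_per_l: float, molar_mass_g_per_mol: float) -> float:
    """Convert a molar concentration (nmol/L) to a mass concentration (ug/L)."""
    # nmol/L multiplied by g/mol gives ng/L; divide by 1000 for ug/L.
    return nmol_per_l * molar_mass_g_per_mol / 1000.0


def ug_per_l_to_ppb(ug_per_l: float, density_kg_per_l: float = 1.0) -> float:
    """Convert ug/L to parts per billion by mass (ug/kg) for a given density."""
    return ug_per_l / density_kg_per_l


if __name__ == "__main__":
    t4_molar_mass = 776.87  # g/mol, assumed value for thyroxine
    total_t4_nmol_per_l = 100.0  # hypothetical serum concentration
    ug_per_l = nmol_per_l_to_ug_per_l(total_t4_nmol_per_l, t4_molar_mass)
    print(f"{total_t4_nmol_per_l} nmol/L = {ug_per_l:.1f} ug/L = {ug_per_l / 10:.2f} ug/dL")
    print(f"5 ug/L in water = {ug_per_l_to_ppb(5.0)} ppb")
```

Recording the molar mass and density alongside each converted value would let readers trace a table entry back to the units reported in the original publication.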

The committee recommends that, where it is possible, figures for a given end point be provided to show multiple dose–response relationships on the same scale for ease of comparison (see, for example, NTP 2013).

Consistency of Study Descriptions

Using a consistent format to present data from the individual studies that evaluated NMDR curves would allow multiple authors to contribute while permitting consistency in style and substance; this would make it easier for readers to understand the data and follow the conclusions. Tables 4.1–4.4 in the SOTS evaluation are purported to present the studies that provide evidence of NMDR curves. Some of the variation in how the data were analyzed in the various sections contributes to slightly different formats of the tables. However, the data presented are restricted to study-design elements and provide no information on the specific end points for which EPA asserts that NMDR curves were demonstrated. Such information would make the tables more useful by providing context for the evidence.

The committee found that Appendix A (the section on estrogen disruptors in mammals) provides useful context of the reported NMDR curves. For example, the appendix specifies the total number of end points evaluated in each study, which is then compared with the evidence in other generations in the same study (same doses, sex, and strain). The approach of cross-checking an NMDR relationship in one generation by looking at the same end point in other generations is logical and valid.

In the thyroid section, the study descriptions of thyroid hormones should distinguish whether measurements are of total or of free triiodothyronine (T3) and thyroxine (T4). Changes in total hormone concentrations may be due simply to alterations in binding and may or may not be associated with alterations in the concentrations of the bioactive free hormones.

Summarization of Data

The SOTS evaluation provides detailed descriptions of the studies considered but little or no synthesis or summarization of the data to compare results among studies or to understand how the evidence was weighed to reach conclusions. The thyroid sections (Sections 4.2.4.4 and 4.2.4.5) do the best job of summarizing the overall findings of the studies that demonstrated NMDR curves. This type of data synthesis and interpretation is lacking in the androgen and estrogen sections. It will be important to ensure that other conclusions embedded throughout the evaluation are adequately supported. For example, support is needed for the statement on p. 75 that “this pattern suggests, perhaps, that NMDRs may be more prevalent in shorter-term assays, especially during periods of system disequilibrium.” Another example, on p. 113, inadequately summarizes the evidence on peripubertal exposure to DEHP on male rat reproductive development: “While effects on [preputial separation] and body and reproductive organ weights with an NMDR were observed in the Ge et al. (2007) [B.2.c.3], they were not seen by Noriega et al. (2009) [B.2.c.4] in either of two rat strains studied, and some of the other effects reported in Ge et al. are not consistent with findings from other publications.”

Approach to Synthesizing Evidence

The committee evaluated whether the SOTS evaluation has transparently laid out the process for integrating the evidence from various studies and provided a consistent rationale for WOE from various lines of research to support reasonable conclusions. The introduction to the SOTS evaluation describes the WOE used by EPA to determine hazards and risks associated with chemicals. The approach includes “assembling the relevant data; evaluating that data for quality and relevance; and an integration of the different lines of evidence to support conclusions concerning a property of a substance. The significant issues, strengths, and limitations of the data and the uncertainties that deserve serious consideration are presented, and the major points of interpretation highlighted” (p. 27). However, the SOTS evaluation notes that “for sections of [the] review, judgments of likelihood were based on relevant examples rather than a formal [WOE]” (p. 27). Thus, the committee understands that a formal WOE evaluation was not performed but was nonetheless struck by the lack of transparency in how the authors integrated the evidence in each section. Indeed, although such a synthesis forms the core of what the SOTS evaluation intends to communicate, the balancing and weighing of all the evidence was not described, let alone described in a transparent manner that would allow readers to reach the same conclusion from the same data.

As advocated in previous National Research Council reports (e.g., NRC 2011), presentation of reviewed studies should be standardized in tabular or graphic form to capture the key dimensions of study characteristics, WOE, and utility for addressing the question under consideration. Transparency and clarity in the lines of evidence considered and how it was integrated to draw conclusions would help to minimize unintended or perceived biases on the part of the authors or the readers of the SOTS evaluation. For example, a table could specify by publication the end points evaluated and the dose–response evidence, which would illustrate studies that did and did not show NMDR curves. Alternatively, separate tables could be created for each end point. Either format would put the mass of the evidence in one place and allow readers to see easily the number of end points and the ones that did and did not have NMDR curves. Using figures that provide the same information for each of the studies that are included in the review would be a consistent and clear way to display the data. Such presentations would allow readers to observe the patterns in the data that led to the authors’ conclusions. Examples of approaches that could be used to guide revisions of the SOTS evaluation include those used by NTP’s Center for the Evaluation of Risks to Human Reproduction and those being developed by NTP’s Office of Health Assessment and Translation (NTP 2013), other proposed data review and synthesis tools recently developed (e.g., Woodruff and Sutton 2011), and approaches recommended by other National Research Council committees (NRC in press).
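A standardized evidence table of the kind described above could be backed by a simple record structure. The sketch below is one hypothetical layout, not a format used by EPA or NTP: each record names a publication, an end point, the number of dose groups, and whether an NMDR curve was reported, and a small function tallies the findings by end point. The field names and the example records are placeholders.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    study: str        # publication identifier
    end_point: str    # end point evaluated
    n_doses: int      # number of dose groups, including control
    nmdr: bool        # whether an NMDR curve was reported for this end point


def tally_by_end_point(findings):
    """Count findings with and without a reported NMDR curve for each end point."""
    table = defaultdict(lambda: {"nmdr": 0, "no_nmdr": 0})
    for f in findings:
        table[f.end_point]["nmdr" if f.nmdr else "no_nmdr"] += 1
    return dict(table)


if __name__ == "__main__":
    # Placeholder records; not drawn from any real study.
    findings = [
        Finding("Study A", "organ weight", 4, False),
        Finding("Study A", "age at puberty", 4, True),
        Finding("Study B", "age at puberty", 6, False),
    ]
    for end_point, counts in tally_by_end_point(findings).items():
        print(end_point, counts)
```

Keeping the number of dose groups in each record lets a reader see at a glance whether a negative finding came from a design that could have detected an NMDR curve.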

Statistical methods are available for combining evidence from multiple studies, including meta-analytic approaches (e.g., Greenland and Longnecker 1992; Berlin et al. 1993; Berlin and Colditz 1999; Steenland et al. 2001; Sutton and Higgins 2008; Orsini et al. 2012) and Bayesian approaches (e.g., Sutton and Abrams 2001). Those methods could be adapted to evaluating the evidence on NMDR curves. However, that would need further research and development before implementation, inasmuch as current methods rely on a common point estimate of a single parameter and its standard error from each study and do not have the capacity to explore more complex relationships. Alternatives to conventional meta-analysis include pooling individual participant data from different studies for modeling or performing Bayesian hierarchic modeling. Regardless of how the methods are adapted, assessment of study heterogeneity, full specification of study design, and accessibility to individual participant data will be important for evidence integration and synthesis of NMDR data.
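The limitation noted above is visible in the simplest form of meta-analysis. The sketch below implements conventional fixed-effect, inverse-variance pooling with Cochran's Q as a heterogeneity statistic; it is a generic textbook calculation, not a method proposed in the SOTS evaluation, and the input values are invented. Each study contributes only one estimate (for example, a dose–response slope) and its standard error, so any information about the shape of the curve is lost before pooling begins.

```python
from math import sqrt


def fixed_effect_pool(estimates, std_errors):
    """Inverse-variance pooled estimate, its standard error, and Cochran's Q."""
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * b for w, b in zip(weights, estimates)) / total_weight
    pooled_se = sqrt(1.0 / total_weight)
    # Cochran's Q: weighted squared deviations of study estimates from the pooled value.
    q = sum(w * (b - pooled) ** 2 for w, b in zip(weights, estimates))
    return pooled, pooled_se, q


if __name__ == "__main__":
    # Hypothetical per-study slopes and standard errors.
    slopes = [0.2, 0.4, 0.1]
    std_errors = [0.10, 0.10, 0.20]
    pooled, pooled_se, q = fixed_effect_pool(slopes, std_errors)
    print(f"pooled slope {pooled:.3f} (SE {pooled_se:.3f}), Q = {q:.2f} on {len(slopes) - 1} df")
```

Two studies with opposite slopes over different dose ranges, which might together trace an NMDR curve, would simply register here as heterogeneity. That is one reason pooling individual-level data or hierarchical modeling is raised as an alternative.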

The committee focused on whether EPA fairly and soundly evaluated the evidence from diverse sources (in vivo, animal, mode-of-action, and epidemiologic studies) and whether the evaluation would be accepted as robust, transparent, objective, and repeatable by the scientific community. EPA has made it clear that time and resource constraints led to its decision to use separate, expert-based evaluations to develop the SOTS evaluation of NMDR curves. However, the agency failed to establish (or enforce) a clear set of methods for collecting and analyzing the evidence on NMDR curves to ensure that the groups conducted their assessments in a clear, consistent, and therefore replicable manner. Instead, the groups determined independently how to perform their analyses. Although such an approach might be appropriate as an internal scoping exercise for the agency, the SOTS evaluation is to be its foundational synthesis of the literature on NMDR curves for the estrogen, androgen, and thyroid pathways and addresses biologic responses that are often counterintuitive. The document will probably be a milestone event in the history of EPA’s engagement with endocrine disruptors because it draws conclusions about the existence of NMDR curves and the conditions under which they occur that will be used to inform decisions about the agency’s toxicity-testing strategies and risk-assessment practices. Given its importance and its broad use, the committee judges that the SOTS evaluation should meet a higher standard of evaluation, particularly given the heated controversy surrounding this issue. Methods that provide a more systematic approach and greater transparency are necessary, or it will be too easy to dismiss the analysis as superficial or even biased in the literature selection and evaluation. Although it is clear that the authors spent enormous time and energy in developing the evaluation, it is fundamentally compromised, at least in appearance.

EPA has acknowledged the lack of a consistent and transparent process for data identification, selection, and evaluation and is actively engaged in establishing new procedures on the basis of recommendations from other National Research Council reports (NRC 2009, 2011). An upcoming National Research Council report will address methods specifically for performing evidence evaluations and evidence integration for EPA’s Integrated Risk Information System. EPA has already taken steps to address shortcomings in the current SOTS evaluation by developing a Performance Work Statement for subcontractors to conduct systematic literature searches, data extraction, and evaluation of the evidence on NMDR relationships (EPA, unpublished material, September 6, 2013). However, the results of this activity were not available to the committee, so recommendations are restricted to what was presented in the SOTS evaluation.

EPA’s SOTS evaluation should be revised to provide more systematic and transparent approaches to evaluating the literature on NMDR curves for the three hormone pathways. Guidance for such approaches is available from clinical sciences (e.g., IOM 2011), other National Research Council reports (e.g., NRC 2011), and those being developed at other government agencies (e.g., NTP 2013). Important considerations include the following:

• The mismatch between the breadth of the three central scientific questions of the SOTS evaluation and the narrower scope of the analytic approach used to address them should be rectified by either narrowing the scope of the questions or broadening the scope of the analysis. Specific important issues include these:

o The decision to exclude human studies should be reconsidered, particularly because the analysis will ultimately be used to make decisions about human health risk-assessment practices.

o The MOAs considered for each of the hormonal pathways should be clarified and considered in the context of the breadth of the questions to be answered.

• An analytic plan should be developed and applied consistently to the evidence on the three hormone pathways. Important elements of the plan include predefining and documenting the literature-search strategies and their results, criteria for selecting studies for analysis, criteria for determining study quality, templates for presenting evidence consistently in tabular and graphic form, and approaches to integration of evidence. The following are specific considerations for these elements:

o Improve the justification and context for the definitions of low-dose effect, resilience, and adverse effect used in the SOTS evaluation.

o The methodologic features that would be necessary for a study to be able to detect an NMDR relationship should be established. Ideally, studies would have multiple dose groups that were spaced across a defined exposure domain, including doses below those typically tested. Statistical design, biologic plausibility, and replicability should be factored into interpreting and weighing the evidence from such studies.

o Study exclusion and inclusion criteria should be established. Although statistical significance is an important consideration, it should not be an absolute criterion for including or excluding studies, inasmuch as standard toxicity-testing strategies generally do not have sufficient sensitivity and statistical power to detect NMDR curves.

o Study quality criteria should be established. Statistical criteria should be given particular attention. It will be important to balance study-quality criteria that are based on statistical significance and those based on biologic plausibility.

o Secondary analyses of other studies may be necessary. Current methods for performing post hoc analysis of data and for combining evidence from multiple studies might be adapted for such purposes but will require research and development before implementation. EPA should consider soliciting input from the biostatistics community on the best methods to pursue in the long term and on what measures should be taken to complete the SOTS evaluation.

• Evidence tables and graphic presentations should use consistent units for varied studies (when possible), present multiple dose–response curves on the same scale (when possible) to facilitate comparisons, and provide more context for exposure ranges.

• The evidence on each hormone pathway should be summarized and synthesized to document the key evidence and WOE analysis that led to conclusions.

Abrami, P.C., P.A. Cohen, and S. d’Apollonia. 1988. Implementation problems in meta-analysis. Rev. Educ. Res. 58(2):151-179.

Andersen, M.E., J.E. Dennison, R.S. Thomas, and R.B. Conolly. 2005. New directions in incidence-dose modeling. Trends Biotechnol. 23(3):122-127.

Berlin, J.A., and G.A. Colditz. 1999. The role of meta-analysis in the regulatory process for foods, drugs, and devices. JAMA 281(9):830-834.

Berlin, J.A., M.P. Longnecker, and S. Greenland. 1993. Meta-analysis of epidemiologic dose-response data. Epidemiology 4(3):218-228.

Birnbaum, L.S., K.A. Thayer, J.R. Bucher, and M.S. Wolfe. 2013. Implementing systematic review at the National Toxicology Program: Status and next steps. Environ. Health Perspect. 121(4):A108-A109.

Cohn, L.D., and B.J. Becker. 2003. How meta-analysis increases statistical power. Psychol. Methods 8(3):243-253.

Dolinoy, D.C., D. Huang, and R.L. Jirtle. 2007. Maternal nutrient supplementation counteracts bisphenol A-induced DNA hypomethylation in early development. Proc. Natl. Acad. Sci. USA 104(32):13056-13061.

EFSA (European Food Safety Authority). 2013. Scientific opinion on the hazard assessment of endocrine disruptors: Scientific criteria for identification of endocrine disruptors and appropriateness of existing test methods for assessing effects mediated by these substances on human health and the environment. EFSA J. 11(3):3121.

EPA (U.S. Environmental Protection Agency). 2013a. State of the Science Evaluation: Nonmonotonic Dose Responses as They Apply to Estrogen, Androgen, and Thyroid Pathways and EPA Testing and Assessment Procedures. U.S. Environmental Protection Agency. June 2013 [online]. Available: http://epa.gov/ncct/download_files/edr/NMDR.pdf [accessed Aug. 12, 2013].

EPA (U.S. Environmental Protection Agency). 2013b. Part 1. Status of Implementation of Recommendations. Materials Submitted to the National Research Council, by Integrated Risk Information System Program, U.S. Environmental Protection Agency, January 30, 2013 [online]. Available: http://www.epa.gov/IRIS/irisnrc.htm [accessed Oct. 22, 2013].

EPA (U.S. Environmental Protection Agency). 2014. Integrated Risk Information (IRIS) Glossary [online]. Available: http://ofmpub.epa.gov/sor_internet/registry/termreg/searchandretrieve/glossariesandkeywordlists/search.do?details=&glossaryName=IRIS%20Glossary [accessed Feb. 19, 2014].

Filardo, E.J., and P. Thomas. 2012. Minireview: G protein-coupled estrogen receptor-1, GPER-1: Its mechanism of action and role in female reproductive cancer, renal and vascular physiology. Endocrinology 153(7):2953-2962.

Ge, R.S., G.R. Chen, Q. Dong, B. Akingbemi, C.M. Sottas, M. Santos, S.C. Sealfon, D.J. Bernard, and M.P. Hardy. 2007. Biphasic effects of postnatal exposure to diethylhexylphthalate on the timing of puberty in male rats. J. Androl. 28:513-520 (as cited in EPA 2013a).

Greenland, S., and M.P. Longnecker. 1992. Methods for trend estimation from summarized dose-response data, with application to meta-analysis. Am. J. Epidemiol. 135(11):1301-1309.

Guerrero-Bosagna, C., T.R. Covert, M.M. Haque, M. Settles, E.E. Nilsson, M.D. Anway, and M.K. Skinner. 2012. Epigenetic transgenerational inheritance of vinclozolin induced mouse adult onset disease and associated sperm epigenome biomarkers. Reprod. Toxicol. 34(4):694-707.

Guyatt, G., A.D. Oxman, E.A. Akl, R. Kunz, G. Vist, J. Brozek, S. Norris, Y. Falck-Ytter, P. Glasziou, H. DeBeer, R. Jaeschke, D. Rind, J. Meerpohl, P. Dahm, and H.J. Schünemann. 2011. GRADE guidelines: 1. Introduction-GRADE evidence profiles and summary of findings tables. J. Clin. Epidemiol. 64(4):383-394.

Haidich, A.B. 2010. Meta-analysis in medical research. Hippokratia 14(suppl. 1):29-37.

Higgins, J.P.T., and S. Green, eds. 2011. Cochrane Handbook for Systematic Reviews of Interventions Version 5.1.0, The Cochrane Collaboration [online]. Available: http://handbook.cochrane.org/ [accessed Feb. 7, 2014].

IOM (Institute of Medicine). 2011. Finding What Works in Health Care, Standards for Systematic Reviews. Washington, DC: The National Academies Press.

Keller, D.A., D.R. Juberg, N. Catlin, W.H. Farland, F.G. Hess, D.C. Wolf, and N.G. Doerrer. 2012. Identification and characterization of adverse effects in 21st century toxicology. Toxicol. Sci. 126(2):291-297.

Koustas, E., J. Lam, P. Sutton, P.I. Johnson, D. Atchley, S. Sen, K.A. Robinson, D. Axelrad, and T.J. Woodruff. In press. Applying the navigation guide: Case study #1. The impact of developmental exposure to perfluorooctanoic acid (PFOA) on fetal growth. A systematic review of the non-human evidence. Environ. Health Perspect.

Kundakovic, M., K. Gudsnuk, B. Franks, J. Madrid, R.L. Miller, F.P. Perera, and F.A. Champagne. 2013. Sex-specific epigenetic disruption and behavioral changes following low-dose in utero bisphenol A exposure. Proc. Natl. Acad. Sci. USA 110(24):9956-9961.

Kushman, M.E., A.D. Kraft, K.Z. Guyton, W.A. Chiu, S.L. Makris, and I. Rusyn. 2013. A systematic approach for identifying and presenting mechanistic evidence in human health assessments. Regul. Toxicol. Pharmacol. 67(2):266-277.

Manikkam, M., R. Tracey, C. Guerrero-Bosagna, and M.K. Skinner. 2013. Plastics derived endocrine disruptors (BPA, DEHP and DBP) induce epigenetic transgenerational inheritance of obesity, reproductive disease and sperm epimutations. PLoS One 8(1):e55387.

Meline, T. 2006. Selecting studies for systematic review: Inclusion and exclusion criteria. CICSD 33(Spring):21-27.

Noriega, N.C., K.L. Howdeshell, J. Furr, C.R. Lambright, V.S. Wilson, and L.E. Gray, Jr. 2009. Pubertal administration of DEHP delays puberty, suppresses testosterone production, and inhibits reproductive tract development in male Sprague-Dawley and Long-Evans rats. Toxicol. Sci. 111(1):163-178 (as cited in EPA 2013a).

NRC (National Research Council). 2007. Toxicity Testing in the 21st Century: A Vision and a Strategy. Washington, DC: The National Academies Press.

NRC (National Research Council). 2009. Science and Decisions: Advancing Risk Assessment. Washington, DC: The National Academies Press.

NRC (National Research Council). 2011. Review of the Environmental Protection Agency’s Draft IRIS Assessment of Formaldehyde. Washington, DC: The National Academies Press.

NRC (National Research Council). 2013. Critical Aspects of EPA’s IRIS Assessment of Inorganic Arsenic: Interim Report. Washington, DC: The National Academies Press.

NRC (National Research Council). In press. Review of Revisions to the IRIS Process. Washington, DC: The National Academies Press.

NTP (National Toxicology Program). 2001. National Toxicology Program’s Report of the Endocrine Disruptors Low Dose Peer Review. National Toxicology Program, U.S. Department of Health and Human Services, National Institute of Environmental Health Sciences, National Institutes of Health, Research Triangle Park, NC [online]. Available: http://www.epa.gov/endo/pubs/edmvs/lowdosepeerfinalrpt.pdf (as cited in EPA 2013a).

NTP (National Toxicology Program). 2013. OHAT Implementation of Systematic Review [online]. Available: http://ntp.niehs.nih.gov/go/38673 [accessed Oct. 23, 2013].

Orsini, N., R. Li, A. Wolk, P. Khudyakov, and D. Spiegelman. 2012. Meta-analysis for linear and nonlinear dose response relations: Examples, an evolution of approximations, and software. Am. J. Epidemiol. 175(1):66-73.

Prins, G.S., W.Y. Tang, J. Belmonte, and S.M. Ho. 2008. Developmental exposure to bisphenol A increases prostate cancer susceptibility in adult rats: Epigenetic mode of action is implicated. Fertil. Steril. 89(2 Suppl):e41. doi: 10.1016/j.fertnstert.2007.12.023.

Somm, E., C. Stouder, and A. Paoloni-Giacobino. 2013. Effect of developmental dioxin exposure on methylation and expression of specific imprinted genes in mice. Reprod. Toxicol. 35:150-155.

Steenland, K., A. Mannetje, P. Boffetta, L. Stayner, M. Attfield, J. Chen, M. Dosemeci, N. DeKlerk, E. Hnizdo, R. Koskela, and H. Checkoway. 2001. Pooled exposure-response analyses and risk assessment for lung cancer in 10 cohorts of silica-exposed workers: An IARC multicentre study. Cancer Causes Control 12(9):773-784.

Sutton, A.J., and K.R. Abrams. 2001. Bayesian methods in meta-analysis and evidence synthesis. Stat. Methods Med. Res. 10(4):277-303.

Sutton, A.J., and J.P.T. Higgins. 2008. Recent developments in meta-analysis. Stat. Med. 27:625-650.

Tang, W.Y., L.M. Morey, Y.Y. Cheung, L. Birch, G.S. Prins, and S.M. Ho. 2012. Neonatal exposure to estradiol/bisphenol A alters promoter methylation and expression of Nsbp1 and Hpcal1 genes and transcriptional programs of Dnmt3a/b and Mbd2/4 in the rat prostate gland throughout life. Endocrinology 153(1):42-55.

Teeguarden, J.G., and S. Hanson-Drury. 2013. A systematic review of bisphenol A “low dose” studies in the context of human exposure: A case for establishing standards for reporting “low-dose” effects of chemicals. Food Chem. Toxicol. 62:935-948.

Vandenberg, L.N., T. Colborn, T.B. Hayes, J.J. Heindel, D.R. Jacobs, D.H. Lee, T. Shioda, A.M. Soto, F.S. vom Saal, W.V. Welshons, R.T. Zoeller, and J.P. Myers. 2012. Hormones and endocrine-disrupting chemicals: Low-dose effects and nonmonotonic dose responses. Endocr. Rev. 33(3):378-455.

Walker, E., A.V. Hernandez, and M.W. Kattan. 2008. Meta-analysis: Its strengths and limitations. Cleve. Clin. J. Med. 75(6):431-439.

Wolstenholme, J.T., E.F. Rissman, and J.J. Connelly. 2011. The role of bisphenol A in shaping the brain, epigenome and behavior. Horm. Behav. 59(3):296-305.

Woodruff, T.J., and P. Sutton. 2011. An evidence-based medicine methodology to bridge the gap between clinical and environmental health sciences. Health Aff. 30(5):931-937.

Woodruff, T.J., and P. Sutton. In press. The navigation guide: An improved method for translating environmental health science into better health outcomes. Environ. Health Perspect.

Woodruff, T.J., L. Zeise, D.A. Axelrad, K.Z. Guyton, S. Janssen, M. Miller, G.G. Miller, J.M. Schwartz, G. Alexeeff, H. Anderson, L. Birnbaum, F. Bois, V.J. Cogliano, K. Crofton, S.Y. Euling, P.M. Foster, D.R. Germolec, E. Gray, D.B. Hattis, A.D. Kyle, R.W. Luebke, M.I. Luster, C. Portier, D.C. Rice, G. Solomon, J. Vandenberg, and R.T. Zoeller. 2008. Meeting report: Moving upstream-evaluating adverse upstream end points for improved risk assessment and decision-making. Environ. Health Perspect. 116(11):1568-1575.