Session 2:

Big Data Issues in Materials Research and Development

Session 2 of the workshop focused more specifically on the application of big data to materials research and development. Jim Davis, University of California Los Angeles, had originally been scheduled to present during Session 3, but the workshop participants determined that the content of his talk was well suited to the discussions in Session 2, so his presentation was moved to this session. Thom Mason, Oak Ridge National Laboratory; Chuck Ward, U.S. Air Force; Rusty Irving, GE Global Research; and Dr. Davis made presentations in Session 2.

Thom Mason, Director, Oak Ridge National Laboratory

Dr. Mason began by noting that the title of his talk might be misleading. His presentation focused on scientific data, he said, which includes physics but spans many other fields as well. He explained that Oak Ridge National Laboratory (ORNL) is a DOE laboratory, and its drivers are derived from the DOE mission. Many of these drivers involve large amounts of data and computing. He pointed out that the data requirements vary across scientific disciplines. High-energy physics and cosmology have very high data volumes, on the order of 1 PB per day. Data volumes for neutron sources are somewhat smaller, but they are still bigger than can be comfortably managed. Improvements in imaging systems have resulted in larger volumes of data, the scale of which can overwhelm a smaller research group with access to an ORNL beam line. Dr. Mason said that data come in many varieties (such as imaging data, text, and sensor data), and the content must be combined. He argued that this growth in data is outpacing computing growth.

Dr. Mason also noted, as an aside, that a particular challenge in the materials community is that of provenance. Other disciplines, such as cosmology, have no concept of a sample. In materials, individual researchers invest much time and effort in producing and characterizing a sample, and as a consequence there will be challenges associated with moving to open source data because of the intellectual investment in a particular sample. The variability of samples between the different groups producing them is another challenge.

Dr. Mason stated that ORNL is DOE’s largest science and energy laboratory, and materials science permeates all of ORNL’s focus areas. He described ORNL’s high-performance computing ecosystem, including its flagship system, known as Titan.1 ORNL’s high-performance computing system models and simulates physical, biological, and chemical systems. It processes data, but it generates large volumes of data as well. The primary drivers for the supercomputing power at ORNL are scientific, but ORNL is also exploring industrial applications. Dr. Mason provided three examples:

- General Motors. General Motors sought to understand and develop new thermoelectric materials for higher fuel efficiency.

- BMI. BMI Corporation2 sought to create parts to retrofit onto Class 8 long-haul trucks for improved fuel efficiency and emissions.

- Boeing. Boeing sought to develop and validate computational models for transport airplane design and development.

Dr. Mason then described the Accelerating Data Acquisition, Reduction, and Analysis (ADARA) program at ORNL. ADARA is an experimental data analysis program developed in response to increased data and imaging needs from the Spallation Neutron Source. He pointed out that the Spallation Neutron Source is improving, and data volumes are growing at corresponding rates. In the past, the data volumes were handled via an individual researcher’s analysis code. The general rule of thumb was that one week of data collection equaled one year of graduate student analysis time. Now, however, the data are over a hundred times larger in size, and the old model of analysis has become unsustainable. When Dr. Mason was later asked if ADARA was limited to the Spallation Neutron Source, he clarified by saying that while ADARA is specific to the Spallation Source, the concept is applicable to other neutron and X-ray sources. The code is largely open source, facilitating expansion elsewhere.

_________________

1 More information about Titan can be found at http://www.olcf.ornl.gov/titan/. Accessed March 10, 2014.

2 BMI Corporation is an engineering services firm based in Greenville, South Carolina.

Dr. Mason also described ORNL’s Manufacturing Demonstration Facility (MDF), which includes extensive capabilities for additive manufacturing. ORNL is interested in expanding the palette of materials it uses for additive manufacturing. The laboratory is developing titanium alloys (primarily for the medical device community), high-temperature alloys, and refractory metals (such as tungsten). The processing recipes and real-world performance of these materials are still unknown. Dr. Mason pointed out that ORNL’s high-performance computing capabilities are useful to model the additive manufacturing of complex shapes. He then described several examples from the MDF:

- Additive manufacturing of turbine blades. ORNL and its industrial partner, Morris Technologies, use neutron scattering to measure atomic plane spacing and better understand the link between residual stress and laser additive manufacturing processing. They seek to develop a pedigree that will enable the turbine blades’ use even in situations that could otherwise compromise human or environmental safety.

- Rapid, agile manufacturing. ORNL and its industrial partner, Local Motors, use crowdsourcing to design vehicles for short-run manufacturing. The project focuses on novel material development and additive manufacturing. They are currently creating a process for large-scale (20 feet per side) carbon-reinforced polymer development that combines additive and subtractive techniques.

In the discussion period, Robert Schafrik, GE Aviation, asked about the additive manufacturing processes. He pointed out that the construction of a large structure takes time and will require changes to the additive process. Those changes might affect the properties of the end result and should be accounted for in the models. Dr. Mason pointed out that ORNL is moving toward parallel processing with large component sizes. One promising avenue to improve throughput is to use additive manufacturing for tool and die production, greatly speeding up the development cycle, while employing traditional high-throughput techniques for volume manufacturing.

The discussion briefly turned to access to data. Dr. Mason said that ORNL desires to move to an open source model. Another participant thought that embargo periods might be useful; access to data could be restricted for a period of time, then the data could be sent to a public repository. Another participant discussed the problems associated with a protein crystal structure databank; researchers are required to submit data to that databank. The community is discovering that much of the data is wrong, a sign that experimental constraints may need to be imposed for data consistency. Dr. Mason explained that, for the vast majority of work done at ORNL, the user owns the data but the expectation is that the data will be published. Under those constraints, ORNL does not collect a user fee. A small amount of work conducted at ORNL uses proprietary data, and that work is arranged under a full-cost recovery model.

MATERIALS GENOME INITIATIVE AND BIG DATA

Charles Ward, Lead for Integrated Computational Materials Science and Engineering, Air Force Research Laboratory

Dr. Ward began his presentation by showing that the amount of information related to materials has been increasing dramatically each year; in 2012, over 162,000 journal articles were published in the fields of materials science and engineering. He pointed out that industry has some of the best materials databases in the world. He gave an example of data-driven materials development in a cast and wrought disk alloy, R65, conducted by GE Aviation. Data-driven methods such as these are able to reduce both development time and costs by up to 50 percent.3

Dr. Ward then described the Materials Genome Initiative (MGI), which intends to create a Materials Innovation Infrastructure comprising accessible digital data, computational tools, and experimental tools. More about the MGI can be found in Box 1.

Dr. Ward then defined data and metadata. Data are the result of measurement; data can be physical, consisting of experimental results and uncertainty, or virtual, consisting of simulation results and uncertainty. Metadata are the information that describes the measurement process; metadata can also be physical, consisting of the experimental setup and settings, or virtual, consisting of the explicit underlying model, simulation software, and input parameters.
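As a rough illustration of the distinction Dr. Ward drew, the sketch below pairs a result and its uncertainty (data) with a description of how the result was obtained (metadata). The class names, field names, and numerical values are hypothetical; they are not drawn from any MGI schema or from the presentation.

```python
from dataclasses import dataclass, field
from enum import Enum


class Origin(Enum):
    PHYSICAL = "physical"  # experimental measurement
    VIRTUAL = "virtual"    # simulation result


@dataclass
class Metadata:
    """Describes the measurement process (hypothetical fields)."""
    origin: Origin
    # Physical: experimental setup and settings.
    # Virtual: underlying model, simulation software, input parameters.
    process: dict = field(default_factory=dict)


@dataclass
class DataPoint:
    """A result plus its uncertainty, tied to the metadata that produced it."""
    quantity: str
    value: float
    uncertainty: float
    units: str
    metadata: Metadata


# Invented example values, for illustration only.
experiment = DataPoint(
    quantity="yield_strength", value=950.0, uncertainty=15.0, units="MPa",
    metadata=Metadata(Origin.PHYSICAL, {"setup": "tensile test", "temperature_C": 25}),
)
simulation = DataPoint(
    quantity="yield_strength", value=930.0, uncertainty=40.0, units="MPa",
    metadata=Metadata(Origin.VIRTUAL, {"model": "crystal plasticity", "software": "in-house code"}),
)
```

Keeping the metadata attached to each value is one way to let a later user judge whether an experimental and a simulated result are comparable.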

Dr. Ward presented an example of a traditional approach to materials development that focused on measuring a diffusion coefficient. In the example, a researcher would measure the diffusion coefficient by tracking the root-mean-square displacement of tracer particles, record the values, and publish the results (including metadata in the publication), typically represented as a diffusion coefficient. However, this reporting approach is dependent on a specific model (the diffusion equation). The paper assumes that the data fit the model, and it does not actually report the experimentally measured data.
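To make the model dependence concrete, the sketch below assumes one-dimensional Brownian motion, for which the diffusion equation gives a mean square displacement of 2Dt, and fits a diffusion coefficient D to displacement data. The function names and all numbers are invented for illustration and do not come from the presentation.

```python
def fit_diffusion_coefficient(times, msd):
    """Least-squares slope through the origin for MSD = 2 * D * t (1-D model)."""
    num = sum(t * m for t, m in zip(times, msd))
    den = sum(t * t for t in times)
    return num / den / 2.0


def residuals(times, msd, d):
    """Difference between the measured MSD and the fitted diffusion model."""
    return [m - 2.0 * d * t for t, m in zip(times, msd)]


times = [1.0, 2.0, 3.0, 4.0]           # seconds (invented)
msd_normal = [2.0, 4.0, 6.0, 8.0]      # um^2, consistent with the model
msd_anomalous = [2.0, 2.8, 3.5, 4.0]   # um^2, grows sublinearly in time

d_normal = fit_diffusion_coefficient(times, msd_normal)
d_anomalous = fit_diffusion_coefficient(times, msd_anomalous)

# Both data sets yield a single number for D, but only the residuals
# reveal that the second set does not follow the diffusion equation.
worst_normal = max(abs(r) for r in residuals(times, msd_normal, d_normal))
worst_anomalous = max(abs(r) for r in residuals(times, msd_anomalous, d_anomalous))
```

A paper that reports only the fitted coefficient would present both cases the same way; the mismatch is visible only to someone who has the measured displacements.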

Dr. Ward noted that the crystallography community has moved toward open data sharing; crystallography is one of several disciplines that are leading the way

_________________

3 R. Schafrik, GE Aviation, paper presented at the RTO Applied Vehicle Technology Panel (AVT) Specialists’ Meeting, RTO-MP-AVT-187, on October 12, 2012.

BOX 1

Materials Genome Initiative

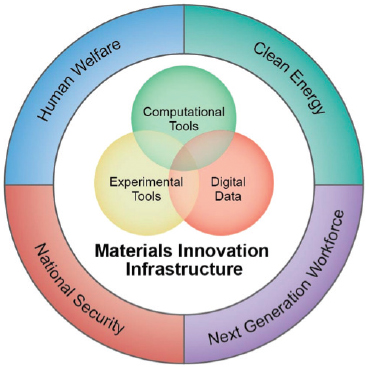

The Materials Genome Initiative (MGI), announced by the White House in June 2011, aims to double the speed at which materials are discovered, developed, and manufactured. MGI seeks to develop a materials innovation infrastructure that includes the following:

- Computational tools for modeling, simulation, design, and exploration.

- Experimental tools for synthesis and processing, characterization and analysis, testing and prototyping, and verification and validation.

- Digital data.

The goal of the MGI is to develop collaborative networks that support the sharing of best practices and data to foster an open environment for materials design and development. Areas of impact include clean energy, human welfare, national security, and the next generation workforce (see Figure B-1).

FIGURE B-1 Conceptual representation of the MGI, showing the overlapping infrastructure requirements and the application areas.

_______________

SOURCE: White House Materials Genome Initiative, http://www.whitehouse.gov/mgi/goals. Accessed February 26, 2014.

in open source data. The Crystallographic Open Database stores over 250,000 compounds and minerals. The International Union of Crystallography has mandated data archiving for its journals; as a result, one database (Crystmet) has over 150,000 entries of metallic and intermetallic structures, and another (Cambridge Structural Database, or CSD) has nearly 700,000 organic and metal-organic structures and almost 100,000 macromolecular structures available in its database. Dr. Ward suggested a next logical step for the materials community would be to capture data pertaining to CALPHAD.4 CALPHAD data also consist of metadata that increase in spatial and temporal complexity compared to crystallographic data.

Dr. Ward then posed a set of challenges in addressing materials data. He noted the following:

- Materials have a pervasive application. As a result, there is no single government funding agency that leads a cohesive materials research and development effort.

- Materials have a nearly infinite scale of design variability. There are few reference data sets, making it difficult to standardize data descriptions.

- Materials are studied and used by many technical disciplines. As a result, the data are widely dispersed, and a disparate vocabulary is used to describe them.

- Materials are often a product differentiator. This means that proprietary protections are often put into place for materials, making data sharing more difficult.

- Materials are a key to economic and national security. As a result, they are subject to export controls, such as the International Traffic in Arms Regulations (ITAR) and Export Administration Regulations (EAR).5

Dr. Ward provided information on the National Institute of Standards and Technology (NIST) workshop on MGI data, held in May 2012. The workshop identified common themes to be addressed for materials data archiving, including the following:

- Materials schema/ontology,

- Data and metadata standards,

- Data repositories/archives,

- Data quality,

- Incentives for data sharing,

- Intellectual property, and

- Tools for finding data.

_________________

4 CALPHAD refers to the CALculation of PHAse Diagrams, a computational method of modeling the thermodynamic properties of materials.

5 For further reading, see, for example, NRC, Export Control Challenges Associated with Homeland Security, Washington, D.C.: The National Academies Press, 2012, and NRC, Beyond “Fortress America”: National Security Controls on Science and Technology in a Globalized World, Washington, D.C.: The National Academies Press, 2009.

Dr. Ward also showed results from an open survey conducted by the Materials Research Society and The Minerals, Metals and Materials Society (TMS) in the summer of 2013. The survey had a large number (675) of respondents. The respondents reported an interest in sharing fairly modest amounts of data overall (about half of the respondents said the data quantity they wish to share would be <1 GB per year). Survey participants also responded that they would be most interested in databases and data mining tools related to a material’s physical and thermal properties. This is actually the most basic level—physical and thermal properties have the least complex data and metadata requirements. Survey respondents noted some impediments to data sharing, particularly with respect to data ownership and intellectual property rules. One slight surprise was that receiving feedback from others was considered a strong motivating factor for sharing data, on a par with increased research visibility.

Dr. Ward then described MGI initiatives with data-intensive elements taking place within the government, including a number of projects at ARL, the Air Force Research Laboratory (AFRL), ONR, DARPA, DOE, and NIST. He pointed out that these efforts use an ICME framework. (For more information about ICME, see Box 2.) The activities are geared to action. Dr. Ward postulated that the greatest challenges facing the government agencies in implementing the MGI are the coordination of effort and the understanding of lessons learned by different agencies.

Dr. Ward stated that open access to research results through open access publishing is growing, and it is creating an entirely new model for publishing. The government is encouraging open access via data management plans required in proposals—both the National Institutes of Health (NIH) and NSF have such requirements—as well as through a White House directive on public access (see Dr. Stebbins’s presentation summary, below, for more information). Some research disciplines, such as crystallography and evolutionary biology, have taken the initiative by adopting their own data archiving policies.

Dr. Ward concluded with the following summary statements:

- Materials data have intrinsic value that can enhance the research and development process.

- Several technical and cultural factors make the capture, archiving, and sharing of materials data difficult.

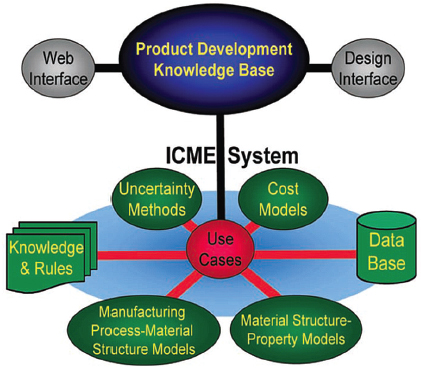

BOX 2

Integrated Computational Materials Engineering

ICME is “the integration of materials information, captured in computational tools, with engineering product performance analysis and manufacturing-process simulation” (NRC, Integrated Computational Materials Engineering, p. 9). It is a process by which materials, manufacturing processes, and component design can be optimized long before the components are fabricated (see Figure B-2). It is considered a promising emerging discipline that is still under development.

FIGURE B-2 An ICME system unifies materials information into a holistic system that is linked by means of a software integration tool to a designer knowledge base containing tools and models from other engineering disciplines.

_______________

SOURCE: NRC, Integrated Computational Materials Engineering: A Transformational Discipline for Improved Competitiveness and National Security. Washington, D.C.: The National Academies Press, 2008.

- The materials community appears willing to take on the challenge of materials data.

- Several MGI and community-led efforts are under way to guide materials data archiving.

- Broad discussion of materials data archiving within the community is gaining momentum and needs to be nurtured.

The question-and-answer period began with a discussion of data ownership. A participant asked about a scenario in which the data belong to whoever pays for them, not to the original data collector, and wondered whether using data without the knowledge of the people who created them could be dangerous. Dr. Ward pointed out that this is part of the normal course of science: One person creates data, another refines it. He gave several examples, such as data related to genomics, in which 500 people might access and analyze the data without having been part of its collection. He also pointed out that the field of evolutionary biology saw a 70 percent increase in data citations after setting up the Dryad program.

A participant asked if these challenges were being addressed by the materials genome community, or if they were merely being listed in the hope that another community would address them. Dr. Ward responded that the path forward is fairly clear, and that MGI hopes to follow the model of the evolutionary biology community in bringing the community together to decide what to do and how to add value.

Another participant pointed out that NSF and the NIH require data management plans in their proposals, and universities now have systems in place to publish data sets and provide them with a digital object identifier, which is citable forever. These might be models for the materials community. A data citation index might also be useful.

GE EFFORTS IN MATERIALS DATA: DEVELOPMENT OF THE ICME-NET

Russell Irving, Services Technology Leader, GE Global Research

Mr. Irving’s talk drew on information from the Metals Affordable Initiative Workshop on Data Management for ICME in June 2011. At that meeting, he listed four key advancements in computer science that should be taken advantage of:

- Cloud computing. This is elastic, use-as-you-go computing.

- Service-oriented architecture. This allows for interoperability and sharing.

- Federated data. This allows the owner of data to share some parts and withhold other parts of databases. Users see data as if they were from one database.

- Business process management. This is modern work flow software.
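The federated data item in the list above can be sketched as follows, assuming each owner declares which fields of its records may be shared. The class names, field names, and records are hypothetical and do not describe any GE system. A query runs against every source and returns one merged result, so the caller sees what looks like a single database.

```python
class DataSource:
    """One owner's database plus the set of fields that owner agrees to share."""

    def __init__(self, owner, records, shared_fields):
        self.owner = owner
        self._records = records
        self._shared = set(shared_fields)

    def query(self, **criteria):
        for rec in self._records:
            # Only shared fields may be used as filters, so withheld values
            # cannot be probed indirectly through the query.
            if all(k in self._shared and rec.get(k) == v for k, v in criteria.items()):
                yield {k: v for k, v in rec.items() if k in self._shared}


class Federation:
    """Presents several sources as if they were one database."""

    def __init__(self, sources):
        self.sources = list(sources)

    def query(self, **criteria):
        return [row for src in self.sources for row in src.query(**criteria)]


# Invented records, for illustration only.
lab_a = DataSource(
    "Lab A",
    [{"alloy": "X1", "hardness_HV": 410, "process_recipe": "proprietary"}],
    shared_fields=["alloy", "hardness_HV"],
)
lab_b = DataSource(
    "Lab B",
    [{"alloy": "X1", "hardness_HV": 395, "supplier": "withheld"}],
    shared_fields=["alloy", "hardness_HV"],
)
rows = Federation([lab_a, lab_b]).query(alloy="X1")
```

Here `rows` holds one entry from each laboratory with only the shared fields, while the recipe and supplier fields stay with their owners.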

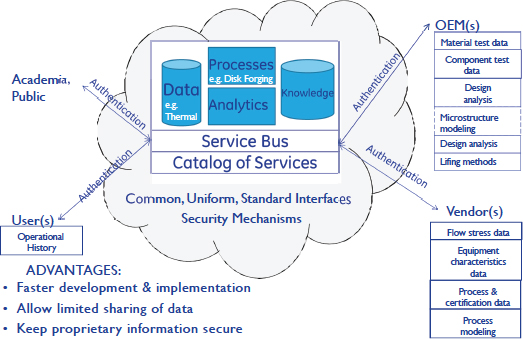

When combined, the four items can provide a collaborative ecosystem for materials development. The outcome at GE is the ICME-Net, which, according to Mr. Irving, fosters collaboration, enables the reuse of common analytics and processes, and builds knowledge accumulation and sharing. The interface to ICME-Net is a web browser providing access to all in GE who are cleared for access. Mr. Irving provided an example of ICME-Net for disk forging, shown in Figure 2.

FIGURE 2 ICME-Net for a disk forging use case. SOURCE: Russell Irving, GE Global Research, presentation to the Metals Affordable Initiative Workshop on Data Management for Integrated Computational Materials Engineering, Slide 6.

Mr. Irving explained that GE had teamed with MIT to develop a collaborative ecosystem for open design and manufacturing; MIT was able to provide the software components needed, and together they built an environment for crowdsourcing military vehicle design. While the project did not move to Phase II, they were able to develop a robust prototype. GE leveraged the GE/MIT collaborative ecosystem platform technology to develop an innovation infrastructure for ICME-Net. The initial vision for ICME-Net consisted of the following goals:

- Enable geographically distributed collaboration on development, testing, and demonstration of ICME technology.

- Rapidly disseminate technology development through a “marketplace” of materials engineering models and processes.

- Enable the construction of large, complex simulations for ICME.

- Attract and sustain an ICME community.

- Provide access to technology and best practices under both open source terms and terms that retain intellectual property.

Mr. Irving then described ICME-Net in more detail. The goal is to grow a collaborative ecosystem. ICME-Net intends to build a marketplace that will subtly motivate the community to participate. This will enhance productivity, reduce development cost and time, curate model development, and make businesses more responsive. Projects consist of both components and services, and users can also be contributors.

Mr. Irving then discussed three use cases for ICME-Net. The first was the ceramic matrix composites project. ICME-Net provides a single location for the storage of experimental data and analysis. Users can decide whether to store a calculated result. The single location provides an auditable trail of every activity; it can be searched to find analysis done on a particular day or by a particular person. Mr. Irving said the interface has some similarities to Facebook, in that users can say whether they “like” a particular service. In the marketplace, therefore, the best services will accumulate the most “likes.” Mr. Irving explained that each element (users, concepts, components, subcomponents, and services) is kept small. Curation and metadata management can be incorporated as the project develops.
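The searchable activity trail and the “like”-based ranking described above might look roughly like the sketch below. The internal design of ICME-Net is not given in this summary, so every class, method, user, and service name here is hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Activity:
    user: str
    day: date
    service: str
    action: str  # e.g. "run" or "like"


class ActivityLog:
    """Append-only record of activity, searchable by person or by day."""

    def __init__(self):
        self._entries = []

    def record(self, user, day, service, action):
        self._entries.append(Activity(user, day, service, action))

    def search(self, user=None, day=None):
        return [e for e in self._entries
                if (user is None or e.user == user) and (day is None or e.day == day)]

    def ranked_services(self):
        """Services ordered by number of likes, most liked first."""
        likes = Counter(e.service for e in self._entries if e.action == "like")
        return [name for name, _ in likes.most_common()]


# Invented activity, for illustration only.
log = ActivityLog()
log.record("avery", date(2014, 2, 5), "creep_fit", "run")
log.record("avery", date(2014, 2, 5), "creep_fit", "like")
log.record("blake", date(2014, 2, 6), "creep_fit", "like")
log.record("blake", date(2014, 2, 6), "grain_size", "like")
```

Because entries are only ever appended, the same log serves both as the audit trail and as the source of the marketplace ranking.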

Next, Mr. Irving described the Materials Applications Engineer for rotors, the second use case. This project results in large data sets, consisting of cut-up and microstructure data, as well as results from forging and heat-treatment models.

The final use case described was in alloy development. Users build small pieces of software to open the files and analyze the data one section at a time. The ICME-Net application contains a graphical user interface to lay out work flows and show linkages. The execution of the analysis is conducted in the cloud, and the curation and metadata management are separated from the data themselves.

Mr. Irving noted that ICME-Net can establish basic services for a particular application within several weeks. It was not originally intended to be used as a productivity tool, though that is now a top benefit. More functionality will continue to be added. He concluded with three main ideas:

- The ICME concept is not new, and there are similar needs in other application areas, such as cyberinfrastructure.

- Additional computing and software can extend the ICME-Net capability to, for example, a supply chain scenario.

- There remain many outstanding issues associated with intellectual property, export control, proper business models, and other policy concerns.

In the discussion session, someone asked who the users of ICME-Net are. Mr. Irving responded that currently the users are all internal to GE, although GE is working to allow vendor access as well. The users are materials scientists with data that need to be analyzed.

SMART MANUFACTURING: ENTERPRISE RIGHT TIME, NETWORKED DATA, INFORMATION, AND ACTION

Jim Davis, Vice Provost, Information Technology, and Chief Technology Officer, University of California Los Angeles

Dr. Davis pointed out that his talk would not focus on “big data” per se. He said his focus is manufacturing—but, as it happens, much is data-oriented in manufacturing right now. He began by defining some of the terms in the title of his talk. He explained that he means for the term “enterprise” to have as broad a scope as necessary, whether that means from factory to supply chain or across units. “Right time” is similar to real time, but it is associated with the window in time for taking action and not the rate of data collection. He stressed that “action” is an important element of smart manufacturing.

Dr. Davis then described the Smart Manufacturing Leadership Coalition (SMLC), which he cofounded in 2006. SMLC uses an industry-driven strategy to make more information available to the manufacturing community. Its current focus is implementation. He explained that the manufacturing community generally wants to share services (or “apps”), not proprietary data. He said that in his role as UCLA’s chief technology officer, he interfaces with the manufacturing operations community, where he sees an emphasis on smart manufacturing, cyberphysical systems, and the Internet of Things (IoT).6 In his role as head of information technology for UCLA, Dr. Davis interfaces with the information technology community, where he sees a focus on enterprise resource planning, big data, cloud computing, and mobility.

_________________

6 IoT is the network of uniquely identifiable physical objects (such as sensors or actuators) embedded throughout a network structure.

Dr. Davis then provided several different examples of smart manufacturing systems and the role of data in those systems:

- Example 1: Smart Manufacturing at General Mills. This is an example of network-based manufacturing. Dr. Davis said that a “material” is a statistical composite of its constituent elements. In this example of General Mills, one of the “materials” is Cheerios. The material for Cheerios is supplied by farms, all of which are subject to varying conditions such as weather and water accessibility. It would be helpful for General Mills to have basic data from each of the many farms in its supply chain, such as lot size and other information about the lot. There are manufacturing constraints as well, as the production facility must comply with cleanliness and contamination standards, and the Food and Drug Administration has tracking and traceability requirements. Dr. Davis explained that General Mills has a “green light” procedure, in which the constituent elements are analyzed for readiness to be put into production; the recipe is confirmed; the material is made; and the product is released upon confirmation that it meets requirements. Dr. Davis noted that while the Cheerios application may not qualify as big data, a lot of supporting data are involved in the manufacture of a Cheerio.

- Example 2: Smart Manufacturing at Praxair. Praxair supports oil and gas production at 40 or so facilities worldwide. Its furnaces must be kept in a certain temperature range. Dr. Davis explained that Praxair is currently very conservative about that range, which leads to waste heat and extra associated expense. Praxair would like to manage its risk differently to realize the potential for significant cost savings. The furnaces cannot have sensors in them, as the environment is too harsh. Instead, Praxair has turned to high-fidelity modeling in production. This project involves data at many different scales.

- Example 3: Smart Manufacturing at General Dynamics. At its Scranton, Pennsylvania, plant, General Dynamics has activities in heating and forging as well as cutting and machining. It is attempting to match the requirements for heating and forging to the output of the cutting and machining operations to better manage its energy usage and production efficiency.

- Example 4: ICME at Caltech’s Materials and Process Simulation Center. This center assesses the manufacturing interface with materials models. The center is often asked about material risk and response and would like to support an operational mode, such as ICME. The center is looking at infrastructure to allow the models to move seamlessly into manufacturing. It is also working with Caltech/JPL on integrated systems design, which looks at how design models can inform the system and how to use models in production.
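The “green light” procedure in Example 1 above can be read as a sequence of gates, each of which must pass before the next step runs. The sketch below is one possible reading under that assumption; the function, the lot fields, and the moisture threshold are hypothetical and do not describe the actual General Mills process.

```python
def green_light(lots, recipe, make, meets_requirements):
    """Run the four gates in order; return (status, product)."""
    # 1. Analyze constituent lots for readiness.
    not_ready = [lot["id"] for lot in lots if not lot["ready"]]
    if not_ready:
        return ("held: lots not ready " + ", ".join(not_ready), None)
    # 2. Confirm the recipe against the ingredients on hand.
    supplied = {lot["ingredient"] for lot in lots}
    missing = sorted(set(recipe) - supplied)
    if missing:
        return ("held: recipe needs " + ", ".join(missing), None)
    # 3. Make the material.
    product = make(lots, recipe)
    # 4. Release only on confirmation that the product meets requirements.
    if not meets_requirements(product):
        return ("held: product failed release check", product)
    return ("released", product)


def make_batch(lots, recipe):
    # Stand-in for production: carry the worst-case lot moisture forward.
    return {"moisture_pct": max(lot["moisture_pct"] for lot in lots)}


def release_check(product):
    # Invented threshold, for illustration only.
    return product["moisture_pct"] <= 12.0


lots = [{"id": "farm-17", "ingredient": "oats", "ready": True, "moisture_pct": 11.5}]
status, product = green_light(lots, ["oats"], make_batch, release_check)
```

Returning a status string at each gate also leaves a simple record of where a batch was held, which fits the tracking and traceability requirements mentioned in the example.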

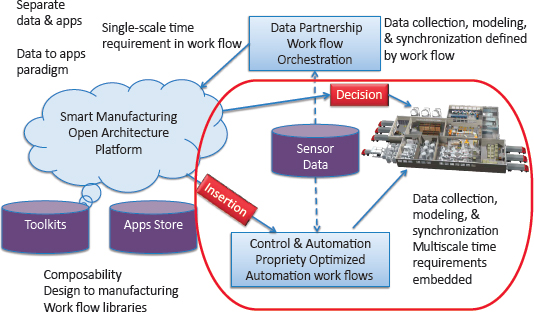

Dr. Davis said that smart manufacturing is based on a testbed approach. He explained that the SMLC’s portfolio of problems includes the following: smart machine operations, high-fidelity modeling, dynamic decisions, enterprise and supply chain decisions, and design and planning. All of these elements are data-intensive in some way, and an infrastructure is needed to manage these data appropriately.

Dr. Davis described smart manufacturing as a multilayered system, and he postulated that improvements can typically be made at points of handoff, or seams. Seams can be between different departments or vendors, between designers and manufacturers, or between business systems and control/automation. At the lowest layer, the microlayer, the focus is on insertion, rapid qualification, ICME, and informing control systems. There is a short time constant associated with these functions. The next layer, the mesolayer, is a much larger space and consists of the operational decisions. The focus is on operational performance and goals, maintenance, dynamic trade-offs, and people. The upper layer, the macrolayer, focuses on supply chain information and transitions to outside the company. Dr. Davis pointed out that there are seams within each layer as well as seams across layers. The time constants are different across the different layers, which creates seams as well. It is important to orchestrate applications—that is, manage the work flow. This construct allows one to manage time (a “window of action”). The work flow can be analyzed and then used to generate projections about the output. It also allows for tracking and traceability.

Dr. Davis then defined smart manufacturing intelligence and work flow. Smart manufacturing intelligence is characterized by

- Applications that can share data, data that can share applications, and applications that can connect to applications to achieve horizontal enterprise views and actions.

- Orchestration of standardized decision work flows based on structured adaptation and autonomy.

- Actionable data, trust, and visibility across the supply chain.

- In-time, in-production qualification of materials, products, and actions.

- In-time, in-production, multidimensional (business, operations, supply chain, customer, maintenance, energy) performance and adaptation.

- Cross-company operational data to improve performance.

- Evolvable design models in manufacturing.

The SMLC also defined work flow, stating that the smart manufacturing work flow enables a dynamic orchestration of manufacturing steps across different time constants and seams, including the supply chain, without losing control of state. It is hosted on an interoperable, accessible, affordable, secure, and reliable hybrid cloud platform and supports commercial products and services, research and development needs, and academic interests.

Dr. Davis explained how smart manufacturing interfaces with the ISA-95 standard.7 He said that the SMLC takes advantage of standards but has not itself been involved in standards development. Of the ISA-95 levels (0 through 4), the coalition is most interested in Level 3, which relates to manufacturing operations management. Level 3 seems to be a growing space with the most interfaces and thus offers the greatest opportunity for improvements in efficiency.

The SMLC looked at the main reasons the coalition stays together: Most SMLC members are interested in issues that extend beyond any one company. These issues are, for example, related to risk and organizational constraints. Data are part of each element.

The SMLC used a market-driven approach to identify its work flow, focusing on work flow as a service. The SMLC used the smartphone model of apps, toolkits, and so on. “App” is used here very broadly as a general-purpose term that allows systems to interface. The app model allows for enhanced flexibility. A work flow schematic is shown in Figure 3.

Dr. Davis elaborated on the market-driven approach. Mapping and interface apps retrieve and manipulate data and map context. The apps should be put somewhere visible, and users should be able to understand how the apps are to be used and how well they perform. The SMLC is also interested in infrastructure that allows for the contribution of apps, broadening the space of innovation. The market is also driving standards-setting. Dr. Davis said this is equivalent to assembling a stack where the lower levels focus on the IoT. The SMLC focuses more on the middle layers, related to smart systems. Big data is the focus of the top of the stack; the amount of data increases as one rises through the stack. Currently, no one wants to share data, but there is a willingness to share apps and their use for different data applications. Dr. Davis pointed out that data valuation is a critical issue; when benefit is derived from the data, understanding the intellectual property becomes important, as does developing a business model to capitalize on that benefit.

Dr. Davis explained that there are many parallels in the health care system. The health care system also has value associated with patient data, as well as derivative intellectual property. One can think of companies as patients.

Dr. Davis noted that the app terminology relates smart manufacturing to smartphones. The chip layer in a smartphone is analogous to the SMLC work-flow-as-a-service layer; carriers match up to large manufacturers because both groups address issues related to matching platforms and compatibility. Also, smartphones have free core apps as well as paid apps, just as in the smart manufacturing realm. One distinction is that the smart manufacturing community is linking the data flow between existing apps. The apps themselves are not new, but the toolkits (work flows assembled to have a certain function) are the most valuable to the manufacturing community.

FIGURE 3 Smart manufacturing work flow. The sections circled in red at the bottom right are related to infrastructure. SOURCE: Jim Davis, University of California, Los Angeles, presentation to the committee on February 5, 2014, Slide 15. Courtesy of Smart Manufacturing Leadership Coalition.

_________________

7 ISA-95 is an international standard for the integration of control systems for manufacturing and processing operations. See http://www.isa-95.com for more information. Accessed February 25, 2014.

Dr. Davis concluded by saying that smart manufacturing cannot be addressed piecemeal. The coalition remains cohesive in its attempt to take a comprehensive view of how to proceed in smart manufacturing, focusing on the architecture to enable and orchestrate the apps while allowing the marketplace to decide the use.
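The toolkit idea from the talk, a work flow assembled from existing apps to serve a certain function, resembles function composition. The sketch below is an illustration only: the `compose` helper and the three small "apps" are hypothetical, and real apps would be interfaced systems rather than Python functions.

```python
from functools import reduce


def compose(*apps):
    """Chain apps left to right into a single callable toolkit."""
    return lambda data: reduce(lambda acc, app: app(acc), apps, data)


# Hypothetical existing apps; each takes a record (dict) and returns a new one.
def read_sensor(record):
    return {**record, "temp_c": float(record["raw"])}


def to_kelvin(record):
    return {**record, "temp_k": record["temp_c"] + 273.15}


def flag_overheat(record):
    # The 373.15 K threshold is an arbitrary value chosen for illustration.
    return {**record, "overheat": record["temp_k"] > 373.15}


# The toolkit is the linked data flow between apps, not any new app.
overheat_toolkit = compose(read_sensor, to_kelvin, flag_overheat)

result = overheat_toolkit({"raw": "120.5"})
print(result["overheat"])
```

None of the three apps is new in this sketch; the value lies in the assembled chain, which mirrors the distinction Dr. Davis drew between apps and toolkits.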

In the discussion period, a participant pointed out that smart manufacturing is customer-driven. In the defense community, the notion of a customer may be less clear. The military is a consumer, but not necessarily a customer. Dr. Davis responded that whereas the focus would have to be less centered on the consumer when applying this to the DOD, the notion of orchestrating and coordinating is still important. Another participant suggested it may be better to consider the defense industrial base as the customer.

Dr. McGrath made some specific points related to Kepler.8 He pointed out that the smart manufacturing work flow is based on Kepler, a platform that has been used for many years for scientific work flows. Kepler currently seems to be working well, he said, but as the system becomes vendor-agnostic and moves into open source, the specific platform may need to be reassessed. He added that Kepler will need to be able to capture metadata.

Dr. Schafrik asked about the differences between small manufacturers and larger ones. Dr. Davis said that the smaller manufacturers do not have the time or money to address the issues related to smart manufacturing. If a smaller manufacturer is given the tools to manage information for multiple customers, it is likely to use them.

A participant asked about access to DOD data. The response was that AFOSR, ONR, DARPA, and others are exploring ways to share data generated under a DOD contract with the broader community.

Another participant asked about validating data, particularly when using advanced manufacturing techniques. Dr. Irving said that there is more emphasis right now on improving advanced manufacturing techniques; once the technique is refined, one can consider which process parameters to capture.

A participant argued that, for the small-scale researcher, data collection is outpacing computing capabilities. In the case of neutron beams, the bottleneck is processing the data rather than gaining access to beam lines. Continued progress is needed to provide new data analysis programs.

A participant pointed out that an industrial manufacturer such as General Mills has commercial supply chains, whereas DOD does not. It can be difficult to keep all points of the DOD supply chain engaged when demand falls off. Another participant noted that an older NRC report commented on the dual-use industrial base (National Research Council, 1999). That report pointed out that, in a downturn, a company that is optimized for the defense industry cannot be readily commercially successful at the same time. There are no technical constraints, but the business models do not match well.

A participant concluded the discussion period by noting that the materials community has needs in all four V’s of big data (volume, velocity, variety, and veracity) but at different levels of urgency. The materials community may not be as limited by computational technology as other fields are, though there are still unresolved issues in how to manage and analyze data.

_________________

8 Kepler is a free, open source, scientific work flow tool. See http://kepler-project.org for more information. Accessed February 25, 2014.