6

Metrics for Achieving Goals and Demonstrating Impact

Counting sounds easy until we actually attempt it, and then we quickly discover that often we cannot recognize what we ought to count. Numbers are no substitute for clear definitions and not everything that can be counted counts.

—William Bruce Cameron, 1958

The value of field stations is widely documented in success stories by leading scientists, anecdotal evidence, and qualitative and semi-quantitative data. But it is difficult to analyze quantitatively the collective contribution of field stations to research, education, and outreach, because of the lack of aggregated empirical evidence. Field stations need common metrics that clearly demonstrate to their parent institutions and to current and future funders the range and magnitude of their impact. The few metrics that are available are haphazardly collected, fragmented, and infrequently shared. This weakens internal assessments and inhibits any synthetic assessment of the collective value of field stations to the scientific community and to broader society. Such information is increasingly important as financial resources shrink or are reallocated to initiatives that funding institutions deem more important. In times of shrinking budgets, demonstrating outcomes and value becomes essential to securing long-term funding.

Key Elements for Developing Metrics

Sound metrics for evaluating program performance and progress include both quantitative and qualitative measures (NRC 2005). Traditional input metrics (number of staff or amount of research funding) and output metrics (number of publications, dissertations, and theses) alone paint an incomplete picture (NRC 2005). Outcome metrics, although more difficult to collect, are also important to assess the overall value of the field stations to science and society. Appropriate metrics are essential for monitoring, assessing, or modifying programmatic and financial strategies of field stations. Some of these key elements are highlighted in Box 6-1.

On the basis of those key categories, field station metrics should be developed to monitor and assess the impact and effectiveness of research, education, outreach, and financial strategies (see Box 6-2 for examples of such metrics). In addition, metrics should be meaningful to current and potential funders and to the

BOX 6-1

Key Elements for Developing Appropriate Metrics

1. Good leadership, governance, and strategic planning

2. Clear strategic plans that identify the core mission and articulate the goals against which progress can be measured

3. Robust business and funding plans to support infrastructure and research goals

4. Straightforward metrics that

a. Encourage strategic assessments but avoid frequent, burdensome reporting

b. Advance progress in research and education, are easily understood and accepted, and promote quality

c. Maintain relevance, reflecting the dynamic and rapid pace of educational and scientific progress and objectives

5. Adequate human and financial resources for developing and applying useful metrics

SOURCE: NRC 2005 (pp. 3-4).

communities that field stations serve. Many field stations document at least one metric well, such as the number of research grants or number of publications, but fail to thoroughly document outputs and outcomes of other important activities, such as training, outreach, and achieving budgetary goals. Although it is essential to have metrics of standard performance, such as publications and grants, field stations also need to develop metrics to assess leadership success. For example, when measuring station director success, institutions should develop metrics to evaluate the leader’s role in the success of the field station in carrying out its mission, not simply the director’s career advancement as a scholar. Many smaller field stations lack the human and fiscal resources necessary for systematic gathering of data to document and archive metrics of performance. Thus, it is even more critical to have strong leadership with clear business and funding plans to enable efficient use of metrics with available resources.

Toward a Common Set of Metrics

The committee attempted to support the anecdotal evidence of the value of field stations to research, education, outreach, and career development with data and information on trends in funding, use, and impact. This proved impossible in the short time available, given the paucity of relevant information. Although each station might collect some data to demonstrate its contribution to research and education, the summative data and information for the broader community of field stations are neither stored nor accessible in any central location that we could identify. This is clearly a serious deficiency. We comment on some of the kinds of data that should be collected and made available so that, in the future, individual field stations and the community of field stations can make

BOX 6-2

Examples of Some Metrics to Assess Field Station Programs

Assessing impact of research

- Number of publications and their citation impact factors

- Number of digital datasets archived, downloaded, and cited

- Number of laws, regulations, and policies that have been influenced by field station research

- Participation in collaborative research and organizational networks

Assessing impact of education

- Alumni success stories

- Long-term tracking of field station students (e.g., graduation and career outcomes)

- Number of students conducting independent research (e.g., in Research Experiences for Undergraduates and Experimental Program to Stimulate Competitive Research)

- Learning-outcomes assessments

Assessing impact of outreach

- Number of organizations that visit the field station (e.g., in summer programs and community organizations and through citizen science)

- Learning-outcomes assessments

- Media reach evaluation

Assessing field station use (research, teaching, and outreach)

- Number of user days and contact hours

- Peak season of use and capacity for facility

Assessing financial stability

- Number and size of grants enabled by field stations

- Amount of recovered overhead

- Revenue income from endowments, gifts, sponsored activities, and user charges

- Operating and maintenance expenses

a more compelling case of their value to science and society.

To make the case for their collective importance as a national and even international resource, field stations would benefit greatly from working together to develop a common set of metrics of performance to document their outputs and outcomes and to allow comparisons among stations. A common system for a wide range of metrics is important, so that evidence and trends of impact can be aggregated and differentiated across the wide range of missions and goals of individual field stations. Sharing the development and collection of metrics would be greatly facilitated if field stations were part of a network. This also would make an assessment of impacts less costly for individual field stations. As outlined in Chapter 1, these metrics, data, and metadata will be an increasingly important

resource for individual field stations, networks of field stations, the nation, and the world in this era of climate change and other environmental and societal pressures, as well as declining funding and increasing demand for accountability. If metrics are to be diagnostic, they will have to be scalable by station size and mission. For example, not all field stations place equal priority on teaching, research, and outreach, and as has been observed, they range greatly in size.
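One simple way to make a metric scalable by station size is to express a raw count as a rate per unit of use. The sketch below is illustrative only: the station names, the numbers, and the choice of user-days as the denominator are assumptions, not data or a method taken from this report.

```python
def per_thousand_user_days(count, user_days):
    """Scale a raw count by station use so stations of different sizes compare."""
    if user_days <= 0:
        raise ValueError("user_days must be positive")
    return 1000 * count / user_days


# Hypothetical stations and values, for illustration only.
stations = {
    "Small Station": {"publications": 12, "user_days": 3_000},
    "Large Station": {"publications": 60, "user_days": 30_000},
}

rates = {
    name: per_thousand_user_days(s["publications"], s["user_days"])
    for name, s in stations.items()
}
```

In this made-up case the larger station produces more publications in total, but the smaller station produces more per 1,000 user-days. Scaling by mission could follow the same pattern, with each station reporting only the metrics that match its priorities.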

There is a need for a centralized capacity to store, manage, and distribute data on metrics. To accomplish those goals on a broad scale, the National Science Foundation (NSF) could support the development and implementation of a centralized database of field station metrics in collaboration with such professional societies as the Organization of Biological Field Stations and the National Association of Marine Laboratories. That would require an investment, but it would provide major benefits in documenting the contributions of individual field stations and of the community of field stations, in evaluating the relative contributions of different field stations, and in identifying potential networking opportunities among facilities.

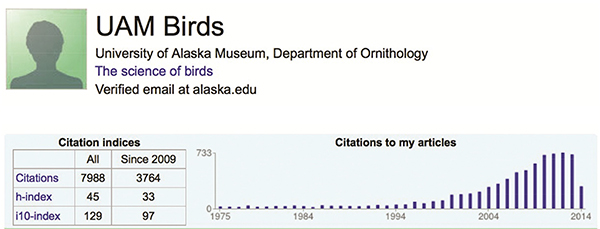

In general, measuring the impact of research at field stations on the scientific enterprise poses a challenge because benefits to society usually are not observed until years after research is completed. A few field stations are documenting metrics of research output: La Selva Biological Station produced over 3,000 publications from 1956 to 2007 (Michener et al. 2009), and the Rocky Mountain Biological Laboratory (RMBL) produces an average of 35 scientific publications per year (Billick and Price 2010); 1,324 publications and 97 dissertations had been based on research conducted at RMBL between its inception in 1928 and 2011 (Inouye 2013). However, tracking publications from field stations can be difficult. Modern data aggregators (e.g., altmetrics39 and Google Scholar) could make publication tracking easier. For example, the University of Alaska Museum of the North recently generated a Google Scholar profile (UAM Birds) to better assess the number and quality of publications supported by the museum’s bird collection. The effort led museum staff to discover that “the body of work supported by the collection is diverse and well cited, with a profile h-index40 of 42, equivalent to an average Nobel laureate in physics” (Winker and Withrow 2014; Figure 6-1). A field station–specific digital object identifier (DOI) would be even more advantageous for publication tracking: each field station could publish a basic description of its location (where it is and general characteristics of the site) and then submit the description to a stable ecological archive to generate a DOI. If each future publication based on research at a particular field station cited this basic description, including the DOI, publications from the field station could be easily

__________________

39http://altmetrics.org/manifesto

40Measure of a “scholar’s” impact based on the number of publications and the number of citations per publication.

FIGURE 6-1. Google Scholar Page of the University of Alaska Museum Bird Collection. The scholar page lists all cited publications (not included here), tracks the number of publications over time, and calculates indices of citation impact.

tracked. That would not allow tracking of past activities, but it would allow tracking of future publications by field stations. The combination of a DOI and modern data aggregators could further facilitate the tracking of future publications on field station research.
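The tracking idea described above can be sketched in a few lines: select the publications whose reference lists include the station's DOI, then compute a citation index over that set. The sketch uses the standard definition of the h-index (the largest h such that h publications have at least h citations each). The `Publication` record, the DOI string, and the citation counts are hypothetical, and the sketch assumes the reference lists have already been obtained from some aggregator.

```python
from dataclasses import dataclass


@dataclass
class Publication:
    title: str
    citations: int          # times this publication has been cited
    cited_dois: frozenset   # DOIs that appear in this publication's references


def station_publications(pubs, station_doi):
    """Keep only publications whose reference list includes the station DOI."""
    return [p for p in pubs if station_doi in p.cited_dois]


def h_index(citation_counts):
    """Largest h such that h publications have at least h citations each."""
    h = 0
    ranked = sorted(citation_counts, reverse=True)
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank
        else:
            break
    return h


# Hypothetical DOI and records, for illustration only.
STATION_DOI = "10.0000/example-station"
records = [
    Publication("Paper A", 10, frozenset({STATION_DOI})),
    Publication("Paper B", 8, frozenset({STATION_DOI, "10.0000/other"})),
    Publication("Paper C", 5, frozenset({STATION_DOI})),
    Publication("Paper D", 4, frozenset({STATION_DOI})),
    Publication("Paper E", 3, frozenset({STATION_DOI})),
    Publication("Paper F", 50, frozenset({"10.0000/other"})),
]

tracked = station_publications(records, STATION_DOI)
station_h = h_index([p.citations for p in tracked])
```

Paper F is highly cited but does not cite the station description, so it is excluded. This illustrates why the approach depends on authors consistently citing the station's DOI.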

DOIs could also be applied to a field station’s raw datasets, metadata, or biological collections. Sharing the data products from field stations broadly would add value to the data and to the field station where the data were collected. A potential metric of the impact and use of data from field stations could be developed by analyzing how often metadata and datasets are downloaded, used, or cited. Outputs such as the number of research publications or frequency of dataset use are important, but they are not good indicators of outcomes or impact. Outcomes also require attention.

Societal impact could be measured by aggregating the number of laws, regulations, policy decisions, or global assessments that have been informed by field station research and data (see, e.g., Table 1-2). Aggregation could be handled by the proposed field station network or a third-party oversight organization. The network could also survey educators in the K-12 and university systems to assess what teachers’ needs are in science, technology, engineering, and mathematics curricula and how field station programs have benefited or could potentially benefit teachers and students who visit. A network could prepare a report on the number of studies conducted or the number of researchers that use field stations each year and share this information with local communities, host institutions, government agencies, and other funders.

A variety of field stations have programs that can serve as models because they provide data repositories, libraries, lists of publications, information about the local climate, species found in the area, and scientists and staff members who work there. For example, the website of Archbold Biological Station includes annual reports, data and metadata, and even a fact sheet describing the location, habitats, climate, and species. The website for Cedar Point Biological Station, a smaller field

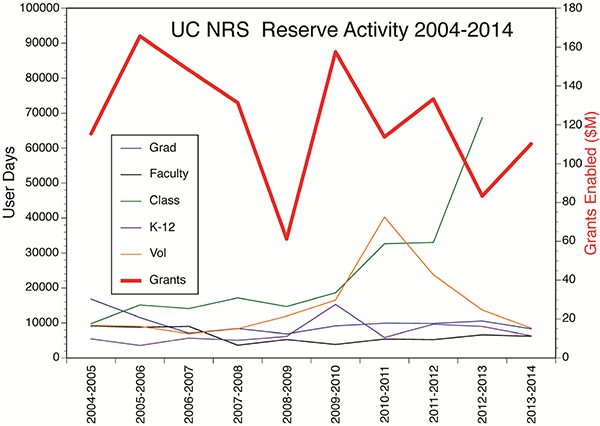

FIGURE 6-2. Aggregated Data on User Activities within the UC Natural Reserve System. User-reported data on field station user-days (1 day and generally 1 night) between 2004 and 2014 in self-defined categories as follows: graduate students (Grad); research faculty (Faculty); university-level students (Class); K-12 students; volunteers (Vol); and the total budgets of research grants that were approved to use one or more of the UC NRS reserves (Grants). Prior to 2012, undergraduate and graduate student user-days were lumped together. Data for this chart were gathered from the Reserve Application Management System: http://rams.ucnrs.org/.

station that is associated with the University of Nebraska, provides information about science camps, facilities, and local natural areas and a link to alumni so that they can stay connected. The University of California Natural Reserve System uses centralized data collection to support its activities and is one of the first such databases41 that makes reserve-related research widely available within a network (Figure 6-2; Box 6-3).

These examples show that it is possible to create simple yet sophisticated systems for collecting data and information that show the value of field stations with respect to meeting their core goals. Private field stations often have a stronger Web presence than many public field stations. One reason may be that private stations need to fund their continued operations through private contributions and user fees and have found that a strong Web presence leads to more financial support. Field stations could learn from the Conservation Measures Partnership, which, with funding from several private foundations interested in improving organizational effectiveness,


BOX 6-3

University of California Natural Reserve System—Collecting and Aggregating Data

The University of California Natural Reserve System (UC NRS) needed a way to track its activities so that it could report quantitative measures of use to campus administrators and to external funders in the private sector and in state and federal agencies. However, gathering data on the 39 field stations within UC NRS across a wide variety of uses for research, education, and public engagement is complex. The UC NRS stations are visited by many different people and provide infrastructure for multiple research projects that can span different time periods and include more than one reserve.

In 2000, the Reserve Application Management System (RAMS)42 was created to address the challenge of gathering data on UC NRS use. RAMS captures information from the users of the field stations by asking them to fill out an application before they are allowed access to the reserves. The application gleans input data on every approved research project for inclusion in the core ecological metadata. RAMS also requires a user’s research permit information and provides a liability waiver form online. Some NRS reserves were slow to adopt RAMS, and so underreporting is an issue. Nonetheless, RAMS enables station managers and UC NRS leadership to aggregate a wide variety of quantitative data about station uses.

For example, from January 2010 to January 2013, 26,600 people spent a total of 84,237 user-days on the 39 reserves, including over 2,500 university-level researchers. More than 150 undergraduate courses, involving 3,900 university students, were offered at one or more NRS reserves. Over 1,700 K-12 students participated in learning on the reserves. Research activity on the reserves resulted in 683 peer-reviewed journal articles, books, and book chapters. Research grants enabled by the reserves totaled $386.4 million. Research projects enabled by more than one reserve accounted for $74.6 million of the total extramural grant funding.

In 2012, RAMS was upgraded to a MySQL relational database. RAMS metadata follow the Morpho format developed by the Knowledge Network for Biocomplexity43 (see Michener and Jones 2012). The data are accessible online44 and include location, temporal span, abstract of research, author, contact information, and funding sources and amounts.
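To illustrate how a relational store of this kind supports aggregation, the sketch below builds a tiny two-table database and sums user-days by category and grant funding across projects. It is not the RAMS schema: the table and column names are hypothetical, loosely based on the fields listed above, the rows are invented, and Python's built-in `sqlite3` module stands in for MySQL so that the example is self-contained.

```python
import sqlite3

# In-memory database; sqlite3 is used here only as a stand-in for MySQL.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE project (              -- hypothetical table, not the RAMS schema
    id             INTEGER PRIMARY KEY,
    reserve        TEXT NOT NULL,   -- location
    abstract       TEXT,
    author         TEXT,
    start_year     INTEGER,         -- temporal span
    end_year       INTEGER,
    funding_source TEXT,
    funding_usd    REAL
);
CREATE TABLE visit (
    project_id    INTEGER REFERENCES project(id),
    user_category TEXT NOT NULL,    -- e.g., Grad, Faculty, Class
    year          INTEGER NOT NULL,
    user_days     INTEGER NOT NULL
);
""")

# Invented rows, for illustration only.
conn.executemany(
    "INSERT INTO project VALUES (?, ?, ?, ?, ?, ?, ?, ?)",
    [
        (1, "Reserve A", "Example abstract", "Researcher 1", 2011, 2013, "Agency X", 100000.0),
        (2, "Reserve B", "Example abstract", "Researcher 2", 2012, 2012, "Foundation Y", 50000.0),
    ],
)
conn.executemany(
    "INSERT INTO visit VALUES (?, ?, ?, ?)",
    [
        (1, "Grad", 2012, 40),
        (1, "Faculty", 2012, 10),
        (2, "Grad", 2012, 15),
        (2, "Class", 2012, 60),
        (1, "Grad", 2013, 20),
    ],
)


def user_days_by_category(conn, year):
    """Total user-days per user category for one year."""
    rows = conn.execute(
        "SELECT user_category, SUM(user_days) FROM visit "
        "WHERE year = ? GROUP BY user_category ORDER BY user_category",
        (year,),
    ).fetchall()
    return dict(rows)


def total_funding(conn):
    """Sum of grant funding across all projects (0 if there are none)."""
    return conn.execute(
        "SELECT COALESCE(SUM(funding_usd), 0) FROM project"
    ).fetchone()[0]
```

Calling `user_days_by_category(conn, 2012)` returns the per-category totals for that year. The same grouping could be extended to reserves or to multi-reserve projects.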

developed a global standard for conservation projects.45

The value of field stations is widely acknowledged but unevenly documented by scientists and station managers in anecdotal evidence and in qualitative and semi-quantitative form. Measures of effectiveness that are aligned with field stations’ science, education, and business plans can lead to improvement in performance and impact but typically are lacking. In the absence of metrics, it is impossible to manage for improved outcomes.


Effective practices involve supporting and training leaders, collection of metrics, and networking among field stations. It is essential that all field stations have effective leadership and a strong support base that includes scientists, donors, and stakeholders that extend beyond the field station. It also is essential that field stations collect data that can be transformed into at least a minimal number of metrics to document their performance and successes. A strong communication program is necessary for both leaders and the institution and should include an effective and current Web presence. Finally, many functions of field stations could be enhanced by the formation of partnerships and networks, both nationally and internationally.

Recommendation: Field stations should work together to develop a common set of metrics of performance and impact. The metrics should be designed so that they can be aggregated for regions and the entire nation. Universities and other host institutions and funding organizations should support the gathering and transparent reporting of field station performance metrics because such information will enhance the stations’ ability to document the contributions of field stations to the nation’s research and education enterprise.

Recommendation: New mechanisms and funding need to be developed to collect, aggregate, and synthesize performance data for field stations, and to translate these data into metrics and information that can be used to document the value of the community of field stations to science and society.