Contemporary Issues for Protecting Patients in Cancer Research: Workshop Summary

In the nearly 40 years since implementation of federal regulations governing the protection of human participants in research, the number of clinical studies has grown exponentially. These studies have become more complex, with multisite trials now common, and there is increasing use of archived biospecimens and related data, including genomics data. In addition, growing emphasis on targeted cancer therapies requires greater collaboration and sharing of research data to ensure that rare patient subsets are adequately represented. Electronic records enable more extensive data collection and mining, but also raise concerns about the potential for inappropriate or unauthorized use of data, bringing patient protections into a new landscape. For example, there is growing interest in developing learning health care systems1 (LHCSs) and there are new funding sources for conducting comparative effectiveness research. There are also long-standing concerns about the processes and forms used to obtain informed consent from patients participating in clinical studies. These changes and challenges raise new ethical and practical questions for the oversight of clinical studies, and for protecting patients and their health information in an efficient manner that does not compromise the progress of biomedical research.

______________

1 Defined as a health care system in which science, informatics, incentives, and culture are aligned for continuous improvement and innovation, with best practices seamlessly embedded in the care process, patients and families active participants in all elements, and new knowledge captured as an integral by-product of the care experience (IOM, 2013).

Recognizing this new landscape, the National Cancer Policy Forum of the Institute of Medicine convened a workshop to explore contemporary issues in human subjects protections2 as they pertain to cancer research, with the goal of identifying potential relevant policy actions. This workshop,3 held in Washington, DC, on February 24 and 25, 2014, brought together clinical researchers, government officials, members of Institutional Review Boards (IRBs), and patient advocates to discuss a wide range of topics, including

- The current regulatory arena and challenges in protecting participants in cancer research

- The patient perspective on current protections for research participants

- New oversight challenges stemming from genetic advances and the use of biospecimens in cancer research

- The evolving context of cancer research and care within LHCSs and multisite trials

- Education and research needs related to improving participant protections in research

This report is a summary of the workshop. A summary of suggestions from individual participants is provided in Box 1. The workshop agenda and statement of task can be found in the Appendix. The speakers’ biographies and presentations (as PDF and audio files) have been archived at http://www.iom.edu/Activities/Disease/NCPF/2014-FEB-24.aspx.

______________

2 “Human subjects protections” is the term used in regulations for the oversight of research involving humans. However, patient advocates at the workshop made an impassioned plea for changing this impersonal terminology. In this report, we often refer to “protections for participants in research.”

3 This workshop was organized by an independent planning committee whose role was limited to the identification of topics and speakers. This workshop summary was prepared by the rapporteurs as a factual summary of the presentations and discussions that took place at the workshop. Statements, recommendations, and opinions expressed are those of individual presenters and participants; are not necessarily endorsed or verified by the Institute of Medicine or the National Cancer Policy Forum; and should not be construed as reflecting any group consensus.

The current oversight of clinical research developed in response to past abuses of participants in clinical trials, such as the Tuskegee trial, in which some patients with syphilis received no treatment for their condition so that doctors could document the natural history of the disease. Awareness of such ethical lapses in the 1960s and 1970s led to the requirement that clinical studies undergo prospective ethical review by an IRB, and that participants give informed consent prior to participating in research.

IRB reviews are tiered according to the perceived risk involved in the study. Detailed review occurs for those studies in which participants would be exposed to the greatest risk, such as the study of experimental cancer drugs with potential serious side effects. Minimal risk studies, such as quality of life studies using questionnaires, can undergo what is known as expedited review, which entails review by the IRB chair or by one or more experienced IRB members designated by the chair, rather than review by the full IRB membership.4 Some studies, such as those involving the study of existing publicly available data or specimens, are considered so low risk that they are exempt from IRB review.5

Regulations governing IRBs for oversight of human research came into effect in 1981 and were further defined by the 1991 Common Rule,6 which specifies a baseline standard of ethics and protections of human research participants that has been adopted by 18 U.S. government agencies that support research. Additional oversight on clinical research was instituted after increasing use of electronic health records (EHRs) led Congress to pass the Health Insurance Portability and Accountability Act (HIPAA) in 1996, with the primary goals of improving the portability of health insurance, facilitating use of EHRs for patients, and protecting the privacy and security of personal health information. The Secretary of the U.S. Department of Health and Human Services (HHS) promulgated the final version of the HIPAA Privacy Rule7 in 2002. The Privacy Rule applies to “covered entities”8 and restricts the use or disclosure of an individual’s Protected Health Information (PHI) unless authorized (given written permission) by the individual, or unless permitted by the Privacy Rule for such things as treatment, payment, and health care operations; public health efforts; law enforcement; product recalls; or judicial or administrative proceedings. No such exemption exists for research (IOM, 2009a).

______________

4 45 CFR 46.110, Categories 1 through 9.

5 45 CFR 46.101, Categories (b)(1) through (b)(6).

6 45 CFR 46.

7 45 CFR 160, 164.

BOX 1

Suggestions Made by Individual Workshop Participants

Overarching Suggestions to Improve Patient Protections in Cancer Research

- Actively engage patients in setting research priorities and in research oversight.

- Conduct research to better understand what protections patients want in clinical studies, and how best to achieve those protections.

- Clarify regulatory language to facilitate greater consistency in interpretations and implementation.

- Use online participant-centric registries in which participants can select the research options.

- Adopt the integration model of research and practice (i.e., a learning health care system [LHCS]).

- Develop an LHCS that is transparent to the patient community by providing information on studies that are being done and how patients and their medical care are affected by those studies.

- Rely less on informed consent for uses of data in an LHCS that are considered routine, and instead pursue other models of patient engagement to enhance protections for research participants.

- Provide educational opportunities to enhance the health and scientific literacy of the public.

Improving Deidentification Procedures and Privacy Protections

- Employ data protections that are proportional to the potential harm and consider the context of the research.

- Develop a national clearinghouse of models, methods, and evaluations of data deidentification.

- Use data deidentification methods that include both a risk estimation and a risk mitigation procedure.

- Discourage potential misuse of health data through laws prohibiting discrimination in health and life insurance and employment, as well as criminal penalties for hacking or stealing data.

Improving Consent Forms and Processes

- Shorten and simplify consent forms.

- Use health literacy principles and lexicons to simplify the language used in consent documents.

- Include a one-page summary of the consent form.

- Use simplified schemas to describe trial arms and key steps in each study, and use pictorials to show how any ancillary/correlative studies (and their additional consents) fit into the trial structure.

- Use a teaching approach rather than a persuasive mode of speaking to patients during the consent process.

- Require confirmation of patient comprehension of consent as an entry criterion for studies.

- Develop and evaluate models of the consent process.

- Develop and disseminate evidence-based best practices for informed consent and incorporate feedback loops to foster improvement.

- Offer support for patients in the event that they decide not to participate in the trial offered.

- Clarify language for consent for future research.

- Incorporate interactive education technologies in the consent process.

Improving the Conduct and Oversight of Multisite Studies

- Standardize the operation of central institutional review boards (IRBs).

- Establish metrics to assess the quality of IRB review.

- Enroll patients in screening protocols that allow investigators to molecularly profile a patient’s cancer and then match them to interventional trials.

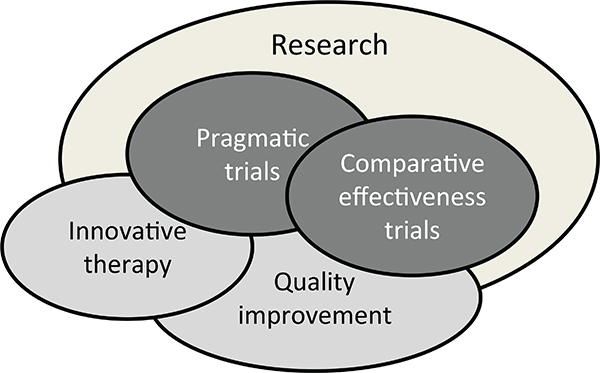

Alice Leiter, director of Health Information Technology Policy, National Partnership for Women & Families (formerly policy counsel, Health Privacy Project, Center for Democracy & Technology), said that under HIPAA, health care operations include “conducting quality assessment and improvement activities, including outcomes evaluation and development of clinical guidelines, provided that obtaining generalizable knowledge is not the primary purpose of any studies resulting from such activities.” Health care operations also include “population-based activities related to improving health or reducing health care costs and protocol development.” Research, in contrast, is defined by both HIPAA and the Common Rule as a “systematic investigation, including research development, testing, and evaluation, designed to develop or contribute to generalizable knowledge.”

Authorization to use or disclose PHI must specify in writing how, why, and to whom the health information can be used or disclosed. This authorization is distinct from, and in addition to, the traditional and well-established informed consent for research specified in the Common Rule. When patients sign the latter, they give consent to participate in a specific clinical study, after they have been informed of the specific potential risks and benefits of that research. Some informed consents also give permission for future research uses of patients’ health data or biospecimens, such as blood or tissue.

However, Melissa Bianchi, partner at Hogan Lovells US LLP, said that “research authorizations could not authorize future unspecified research.” Future research was initially not considered specific enough to comply with the patient authorization requirements of the Privacy Rule (IOM, 2009a). Consequently, information and biospecimens collected for one research study could not be used in another study without reauthorization from the original participants in the first research study, unless a waiver of authorization was granted for a subsequent study by an IRB or Privacy Board. The waiver could be granted if the board determined that the new research would pose minimal risk to patient confidentiality and safety, and that the research could not practically be done without the waiver. But no guidance was provided as to what factors should be considered in determining whether those criteria are met (IOM, 2009a).

______________

8 Defined as health plans, health care clearinghouses, and health care providers who transmit information in electronic form in connection with transactions for which HHS has adopted standards under HIPAA (45 C.F.R. § 164.510).

SHORTCOMINGS OF CURRENT REGULATIONS AND GUIDANCES

Several workshop participants discussed the perceived shortcomings of current regulations and guidances related to protection of participants in research. Some of that discussion focused on whether there was an appropriate balance when considering the potential risks and benefits of clinical research in oversight. When cancer patients have exhausted all available therapies, a clinical trial may be the best option for some patients, in spite of the uncertainty regarding the potential benefit of an experimental therapy. John Mendelsohn, Director of the Khalifa Institute for Personalized Cancer Therapy at the MD Anderson Cancer Center, said, “Our goal is to avoid unjustifiable risks, but to allow for uncertainty in order to let patients have access to what they need and what they might benefit from in treating their cancer. All of us need to do a better job at this and recognize there is no perfection. Perfection is often the enemy of the excellent.”

Deborah Collyar, founder and president, Patient Advocates in Research, was particularly critical of what she called an evolution of patient consent from a document aimed mainly at protecting patients to one aimed at protecting institutions and thus viewing patients as liabilities. “We have to stop saying that informed consents are for patients and then create them for the institutions that are managing risks. It is okay to put in risk management if we need to, but please don’t pretend that is for the patient’s benefit. Risk management of the institution has to be separate from informed consent.”

Collyar also noted that current regulations were developed in reaction to what had happened to research subjects in the past. “It hasn’t been strategic because we wanted to put together a whole research program that made sense. Instead it has been reactive,” she said, noting that the concerns that led to the regulations were valid but “it has created a fearful environment that everybody focuses on because you don’t want to get your hands slapped or don’t want to get shut down. On the other end, people [who participate in research] want individual results and in a way that they can understand them. They want data shared and the regulations we have actually thwart that process.” Susan Ellenberg, professor of biostatistics and associate dean for clinical research, University of Pennsylvania School of Medicine, added, “it is difficult to pull back on oversight mechanisms because most have been put in place because something awful happened and HHS or Congress had the view that this is never going to be allowed to happen again.” One conferee suggested that patient advocacy groups are in the best position to influence IRBs and regulators “so they have the courage to accept more risk.”

Other participants pointed out that risk varies depending on context, which is not adequately considered in current regulations and guidances. Ruth Faden, director of the Johns Hopkins Berman Institute of Bioethics, noted that health care systems vary tremendously in terms of transparency, accountability, and the extent to which patients are engaged.

Suanna Bruinooge, director of research policy, American Society of Clinical Oncology, added that IRB review should consider the context of the particular disease being studied. Many cancers are deadly and have no effective treatments when they are in advanced stages; as such, cancer research tends to be more integrated into the treatment setting. Such integration makes it difficult to apply current oversight, which makes strong divisions between research and clinical care, several participants noted. (See the section “The Changing Context of Research and Care.”) Patricia Ganz, distinguished university professor at the Fielding School of Public Health and director, Cancer Prevention & Control Research at the Jonsson Comprehensive Cancer Center, University of California, Los Angeles, noted, “Often the best treatment for a patient is a clinical trial in oncology because we don’t have enough information about so many different kinds of cancers and the genetic tests we now have to explore tumors. We want to have every patient potentially be a research participant.”

Deidentified information does not qualify as protected health information. Therefore it is not protected under the Privacy Rule and can be disclosed to researchers at any time (IOM, 2009a). The Privacy Rule offers two methods to deidentify personal health information. Under the statistical method, a statistician or person with appropriate training verifies that enough identifiers have been removed that the risk of identification of the individual is very small. Alternatively, data are considered deidentified if 18 specified personal identifiers are removed from the data (IOM, 2009a) (see Box 2).

However, there has been some confusion as to whether adequate procedures are being used to meet the Privacy Rule’s stipulations for statistical deidentification methods. In 2013, HHS issued a more specific guidance on deidentification that clarifies much of that confusion, Bianchi noted.

However, others at the workshop noted that although deidentification is an important data protection tool, it is not infallible. Brad Malin, associate professor of biomedical informatics and vice chair for research at the Vanderbilt University School of Medicine, said, “Given enough effort, time, and incentives, you can break into any system.” He pointed out several published cases in which researchers had reidentified patients using public databases (Sweeney, 1997). He added that data have been reidentified not just as an academic exercise “by a single computer scientist spending lots of time and graduate students’ capabilities,” but by people with little expertise in this area. Despite this, he said, “To the best of my knowledge there are no lawsuits that show deidentified information is leading to actual reidentifications and exploitation of individuals. But absence of proof is not the same as proof of absence.” He also noted that unique identifiers may not be accessible to the public in any known resource, and not all identifiers are unique or reproducible. “You have to recognize that your adversaries usually have incomplete knowledge about the world,” he said.

He also described efforts he and others have made to improve data protections, including automated data scrubbing systems that rely on machine learning and remove or provide substitutions for key identifiers. These systems are 90 to 99 percent effective in protecting health data, he said (Aberdeen et al., 2010; Carrell et al., 2013; Deleger et al., 2013). The data scrubbing should satisfy the HIPAA stipulation that “the risk is very small that the information could be used alone or in combination with other reasonably available information, by the anticipated recipient to identify the subject of the information.”

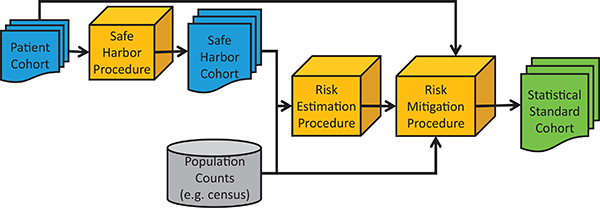

Malin suggested researchers use a risk-based deidentification model (see Figure 1) in which health data stripped of the 18 identifiers specified by the Privacy Rule is subjected to both a risk estimation and risk mitigation procedure (Benitez et al., 2010; Malin et al., 2011; Xia et al., 2013). Alternatively researchers can employ risk models that assess if the number of identifiers they scrub from their data makes their health information more or less protected than if they scrubbed it of the 18 standard identifiers (Malin et al., 2011). Malin added that the term “Big Data” does not mean the end of privacy and can actually mean “Big Privacy” because most studies showing that privacy breaches are possible assume an extremely strong adversary perusing a small specific subset of subjects. But in the current era of Big Data, cohorts are rarely that small. “In the real world, people have much limited identifiability and you can actually support the types of studies you want to support with almost perfect accuracy and still have the protection guarantees that you anticipated,” he said.

Although deidentification is not a panacea and there is always a risk of reidentification, risk exists in any security setting, Malin pointed out, so one must determine an appropriate level of risk and ensure accountability that this level is achieved. Data use agreements should indicate the potential for that risk of privacy breach, he added, and stressed that risk is proportional to the anticipated recipient’s trustworthiness, with a vetted investigator having more trustworthiness than the public at large. One study he conducted reviewed all actual reidentification attempts through 2010 and found only one case with health data subjected to deidentification of the 18 required identifiers, for a reidentification success likelihood of 0.00013 (El Emam et al., 2011). Malin concluded his presentation by noting the need for a national clearinghouse of models, methods, and evaluations of data deidentification. He also said that data protections should be proportional to the potential harm and should consider the context of the research.

BOX 2

HIPAA “Safe Harbor” Deidentification Method

The Health Insurance Portability and Accountability Act (HIPAA) “Safe Harbor” Deidentification of Medical Record Information requires that each of the following identifiers of the individual or of relatives, employers, or household members of the individual must be removed from medical record information in order for the records to be considered deidentified:

- Names;

- All geographical subdivisions smaller than a state, including street address, city, county, precinct, ZIP code, and their equivalent geocodes, except for the initial three digits of a ZIP code, if according to the current publicly available data from the Bureau of the Census: (a) the geographic unit formed by combining all ZIP codes with the same three initial digits contains more than 20,000 people; and (b) the initial three digits of a ZIP code for all such geographic units containing 20,000 or fewer people is changed to 000.

- All elements of dates (except year) for dates directly related to an individual, including birth date, admission date, discharge date, date of death; and all ages over 89 and all elements of dates (including year) indicative of such age, except that such ages and elements may be aggregated into a single category of age 90 or older;

- Phone numbers;

- Fax numbers;

- Electronic mail addresses;

- Social Security numbers;

- Medical record numbers;

- Health plan beneficiary numbers;

- Account numbers;

- Certificate/license numbers;

- Vehicle identifiers and serial numbers, including license plate numbers;

- Device identifiers and serial numbers;

- Web Universal Resource Locators;

- Internet Protocol address numbers;

- Biometric identifiers, including finger and voice prints;

- Full-face photographic images and any comparable images; and

- Any other unique identifying number, characteristic, or code (this does not mean the unique code assigned by the investigator to code the data).

SOURCES: 45 C.F.R. § 164.514(b); IOM, 2009a.
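A few of the Safe Harbor rules listed in Box 2 are mechanical enough to illustrate in code. The following Python sketch is only an illustration under stated assumptions, not a compliant deidentification tool: the function names are hypothetical, the set of sparsely populated three-digit ZIP prefixes is a placeholder that would have to be built from current Census Bureau data, and the sketch covers only ZIP codes, dates, and ages, not the other identifiers in the list.

```python
from datetime import date

# Placeholder values only (an assumption for illustration): a real list of
# 3-digit ZIP prefixes covering 20,000 or fewer people must be derived
# from current Census Bureau data.
SPARSE_ZIP3 = {"036", "059"}


def safe_harbor_zip(zip_code: str, sparse_prefixes=SPARSE_ZIP3) -> str:
    """Keep the first three ZIP digits, or return 000 for sparse areas."""
    prefix = zip_code.strip()[:3]
    return "000" if prefix in sparse_prefixes else prefix


def safe_harbor_date(d: date) -> int:
    """Drop month and day and keep only the year.

    Not handled here: years that would reveal an age over 89 must
    also be removed or aggregated.
    """
    return d.year


def safe_harbor_age(age: int) -> str:
    """Aggregate all ages over 89 into a single '90 or older' category."""
    return "90+" if age > 89 else str(age)
```

For example, `safe_harbor_zip("37232")` keeps only the prefix `"372"`, while a ZIP code whose prefix is in the sparse set is reported as `"000"`.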

FIGURE 1 A risk-based deidentification model. Health data stripped of the 18 identifiers specified by the HIPAA Privacy Rule are subjected to both a risk estimation and risk mitigation procedure.

SOURCES: Benitez et al., 2010; Malin et al., 2011; Xia et al., 2013. Reprinted with permission from the Journal of the American Medical Informatics Association.

Stephen Joffe, Emanuel and Robert Hart associate professor and director, Penn Fellowship in Advanced Biomedical Ethics in the Department of Medical Ethics and Health Policy at the University of Pennsylvania Perelman School of Medicine, pointed out the necessity for having dates in patient data for cancer research in order to assess how much a specific treatment delays the progression of cancer and other key indicators. He asked how Malin’s data scrubbing systems addressed this need for dates. Malin responded that in a manner acceptable to HIPAA, he substituted random dates for when a patient received cancer treatment and when their cancer progressed that still preserved the amount of time between the two. “This way you can look at what happens to a patient over time, but you cannot do an aligned epidemiological study across the entire population. That’s why it depends on what you are using the information for. You need to know this before you redact some dates because you want to make sure you keep the data in a useful form. If what you are doing is an epidemiologic study, dates become extremely important and you want to retain them,” Malin said.
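The date substitution Malin described can be illustrated with a short Python sketch. This is one possible reading of the idea, not his actual system: it assumes that every date for a given patient is moved by the same random offset, which preserves the time between treatment and progression. The function name, the one-year shift window, and the sample dates are all hypothetical, and the sketch by itself does not establish compliance with HIPAA.

```python
import random
from datetime import date, timedelta


def shift_patient_dates(events, max_shift_days=365, rng=None):
    """Shift all dates for ONE patient by a single random offset.

    Because the same offset is applied to every event, the intervals
    between events are unchanged, while the calendar dates are not.
    """
    rng = rng or random.Random()
    offset = timedelta(days=rng.randint(-max_shift_days, max_shift_days))
    return {name: d + offset for name, d in events.items()}


# Hypothetical patient timeline, for illustration only.
original = {
    "treatment": date(2012, 3, 1),
    "progression": date(2012, 11, 15),
}
shifted = shift_patient_dates(original, rng=random.Random(0))

# The time from treatment to progression survives the shift.
interval_before = original["progression"] - original["treatment"]
interval_after = shifted["progression"] - shifted["treatment"]
```

Because each patient would receive a different offset, shifted dates can no longer be lined up on a common calendar across patients, which matches Malin’s caveat about population-wide epidemiological studies.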

Malin also pointed out that the 18 identifiers specified in the Privacy Rule for deidentification were based on what could be used to reidentify data in the 1990s. “Those 18 were probably sufficient at that time, but whether it will be 5 years from now I do not know. The data are not just getting bigger, but the dimensionality of the data is increasing. As more dimensions are added, the data become more identifiable. But as more people are added, the data become less identifiable. If the rate of the dimensions is growing at a faster pace than the number of people in your studies, you might have a problem,” Malin stressed.
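Malin’s point about dimensionality can be made concrete with a toy calculation. The Python sketch below is an illustration only, not one of the published risk models cited in this summary: it measures the fraction of records that are unique on a chosen set of fields, a simple proxy for identifiability. The field names and the four records are invented for the example.

```python
from collections import Counter


def unique_fraction(records, fields):
    """Fraction of records whose combination of `fields` values is unique."""
    keys = [tuple(r[f] for f in fields) for r in records]
    counts = Counter(keys)
    return sum(1 for k in keys if counts[k] == 1) / len(keys)


# Invented records, for illustration only.
records = [
    {"zip3": "372", "birth_year": 1950, "sex": "F"},
    {"zip3": "372", "birth_year": 1950, "sex": "M"},
    {"zip3": "372", "birth_year": 1961, "sex": "F"},
    {"zip3": "191", "birth_year": 1961, "sex": "F"},
]

one_field = unique_fraction(records, ["zip3"])                        # 0.25
two_fields = unique_fraction(records, ["zip3", "birth_year"])         # 0.5
three_fields = unique_fraction(records, ["zip3", "birth_year", "sex"])  # 1.0
```

In this toy dataset, each added field makes more records unique. Adding more people who share the same field values would push the fraction back down, which is the tradeoff Malin described.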

Collyar noted that many patients actually wish to have their data reidentified in order to take advantage of innovative treatments indicated by new molecular signatures that could be assessed in their stored biospecimens or data. “Deidentification is great for discovery research, but when the new molecular signatures come out, patients want to be able to use their sample or data to find out if they have [relevant markers],” she said.

Impediment to Quality Improvement and Learning

Several participants were critical of the distinction that current regulations make between quality improvement activities and research. Leiter pointed out that two quality improvement efforts using the same data and addressing the same questions will be treated as health care operations if the institutions only use the results internally, but as research if the institutions share the results with others so that learning may occur. “It creates a real roadblock to the learning health care system because you have these disincentives, while not increasing the protection to the patient or to a human subject by setting up this distinction,” she stressed. She noted that the Health IT [Information Technology] Policy Committee9 suggested that rules be aligned better so that even if the intent is to share results for generalizable knowledge, use of clinical data to evaluate safety, quality, and efficacy should be treated like health care operations as long as the provider entity maintains oversight and control over data use decisions (Comments on the ANPRM, 2012).

Richard Schilsky, chief medical officer for the American Society of Clinical Oncology, noted that if one is collecting data for health care operations and has no prespecified hypotheses or research plan, but rather is just observing trends that emerge in a dataset collected for another purpose, most people would not consider that to be a systematic investigation that falls under the research definition of HIPAA and the Common Rule. Leiter agreed but noted that some clinicians are still not 100 percent sure that such investigations are not systematic research. As such, they tend to err on the side of caution and either forgo the investigation or seek patient consent and IRB review of it. “There is a fear climate that you are going to be wrong given that it is so easy to make the argument on the side that you are doing systematic research,” she said.

______________

9 The Health IT Policy Committee makes recommendations to the National Coordinator for Health IT on a policy framework for the development and adoption of a nationwide health information infrastructure, including standards for the exchange of patient medical information. The American Recovery and Reinvestment Act of 2009 (ARRA) provides that the Health IT Policy Committee shall at least make recommendations on the areas in which standards, implementation specifications, and certifications criteria are needed in eight specific areas (see http://www.healthit.gov/FACAS/health-it-policy-committee) (accessed June 16, 2014).

Several patient advocates at the workshop also pointed out that current regulations thwart the data sharing that patients favor, to enable learning from their own health care experiences.

Collyar pointed out another problem with current regulations and guidances: they contain vague language that is interpreted differently by IRBs. Greater consistency in interpretations and implementation is needed, she suggested. “People don’t have equal access [to clinical trials] across the country, based on what their IRBs allow. How is it ethical for somebody in Nebraska not to be able to have the same thing that is available to them in Houston?” Collyar asked.

Others argued that regulations and guidances are not sufficient if they conflict with the existing incentives in the system. Regulations and incentives should ideally be aligned to encourage the most ethical conduct. Speaking in regard to the oversight of patient consent and authorization, Jeffrey Botkin, associate vice president for research integrity at the University of Utah, and chair of the Secretary’s Advisory Committee on Human Research Protections, stressed that “Incentives are too strong in favor of greater complexity [of consent forms]. I am skeptical that fundamental change will happen without changing those incentives. Significant progress will only be made if incentives change. New regulations or guidance from HHS will be essential to change incentives.” He suggested that an Office for Human Research Protections (OHRP) guidance could require ensuring that patients understand what has been said to them as part of the consent process, as it is for the consent process specified by the 2000 World Medical Association Declaration of Helsinki. Such a guidance might be an appropriate way to leverage changes in IRB standards for informed consent. “Often the IRBs are afraid of negative findings by OHRP so they will pay close attention to OHRP guidances,” he said.

Lack of Harmonization with International Standards

Some participants called for harmonization of U.S. regulations and guidances with international standards because of the increasing international scope of biomedical research. “When coming up with standards, we need to include our international colleagues,” said Jeffrey Peppercorn, associate professor of medicine in the Division of Medical Oncology at Duke University Medical Center. Ellen Wright Clayton noted that universal standards will be difficult to devise, given the wide range of views on patient privacy just within Europe. “It is going to be a real challenge because there are different regulatory regimes and different cultural settings. We also need to realize that what the European Union laws say and what some European countries do couldn’t be more different,” she said.

A large portion of the workshop was devoted to discussing the current shortcomings in patient informed consent for participation in clinical studies. Many workshop participants spoke about long-standing concerns that most patient consent forms for research are too long and complex, with formatting that is too dense, and filled with technical terms patients do not understand. Consequently, many patients do not gain the essential information necessary for informed consent.

One study found that 63 percent of individuals tested did not recognize the potential for incremental risk posed by a clinical trial, and 70 percent did not understand the unproven nature of the treatment being evaluated in the study (Joffe, 2001). Another study concluded that oncology consent documents are “often so long that the average patient is unlikely to read the document and/or are written in language that is likely to be too complex for them to understand” (Sharp, 2004, p. 573). The Agency for Healthcare Research and Quality (AHRQ) has stated that “[Informed consent] documents are long and written at a reading level beyond the capacity of most potential subjects” (AHRQ, 2009, p. 1). Despite the awareness that consent forms are too long, the median number of pages for informed consent forms increased to 11 between 2000 and 2005 (Beardsley et al., 2007). A National Cancer Institute (NCI) audit found that the median page number for the consent forms used in 97 NCI-sponsored Phase III cancer clinical trials was 16, reported Mary McCabe, chair of the Ethics Committee and director of the Survivorship Program at Memorial Sloan Kettering Cancer Center.

Leiter pointed out that patient authorization forms tend to either be so short and understandable that they are too general and do not provide meaningful protections, “or they are incredibly meaningful because they explain absolutely everything, but then you get the 22-page, 10-point font authorization form that gets put immediately in the trash after it is signed, or it is just signed without being read because it is too long.”

McCabe and Botkin offered several reasons for the length and complexity of consent forms, including

- Research sponsors, investigators, and IRBs want to provide comprehensive medical information and avoid legal liability. They all seek to be accurate by using technical language.

- A longer and more complex form is easier to write than a shorter, easier to read form, which requires communications expertise. “Simply barking at investigators to do a better job writing the consent forms is not an effective solution and instead institutions should provide people who can help individuals write in a more appropriate way,” Botkin said.

- There are no regulatory requirements that forms be shorter and simpler or that participants understand the key information conveyed.

- IRBs add too many unnecessary details to forms to cover institution-specific information. The institutional boilerplate they use to provide that information is usually written in incomprehensible “legalese.”

- A lack of comprehension does not appear to reduce recruitment to trials. Patients are embarrassed to ask questions and reveal what they do not know, and often instead trust their practitioners to make the decisions about research participation that will be in their best interest.

Recognizing the shortcomings of consent forms, NCI started an initiative in 2011 to write an improved informed consent template that was first used in NCI-sponsored clinical trials in 2013. The new template has several changes over the older template provided by the Institute, including

- Use of a study title that a lay person can understand.

- Risks of the study are written from the patient perspective. They are listed according to how common or serious they are, and presented in an easy-to-read table format. Instead of describing risks as percentages, the new format describes them as “X out of 100.”

- Length limits stated for each section. Anything that is optional, such as correlative studies, is not described in the main body of the consent document.

- Information included about mandatory specimen collection and optional studies, including future studies in which patients can participate.

- Standard of care is described prior to describing the care that would be received in the clinical trial.

But Laura Cleveland, patient advocate, Alliance for Clinical Trials in Oncology, and member of the NCI Central Institutional Review Board (NCI CIRB), noted that despite those improvements, the new NCI template is still too long and overwhelming for patients, and has a reading level of a college sophomore according to her Flesch-Kincaid analysis. The average U.S. reading level in English is about eighth or ninth grade, said Michael Paasche-Orlow, associate professor of medicine, Boston University, with only about half the population being health literate. The elderly, minorities, immigrants, and those with chronic diseases tend to be less health literate, his research found (Paasche-Orlow et al., 2005).

“There are very large ethnic, racial, and economic gaps in literacy. The whole conversation we are having here really has another whole social justice lens to it, which is about what does it mean that we have a 20-page consent form—what is that going to do in society,” he said. One study mentioned by Paasche-Orlow found that African Americans received less information about clinical trials than whites (Penner et al., 2013). Another participant added that clinicians are often less inclined to discuss a clinical trial with minority, low-income participants with a minimum of education because they assume they will not understand the consent form. “It’s a factor that plays into health disparities and a real disparity in access to trials,” she said.

Another study by Paasche-Orlow found that after HIPAA was instituted, the average Flesch-Kincaid reading level of academic institutions’ template consent forms for research was greater than 11th grade, and that among institutions that had grade-level reading standards, only 6 percent of their templates met that standard, with the mean being 4.2 grade levels above the standard (Paasche-Orlow et al., 2013). He strongly suggested shortening and simplifying consent forms, noting that the people reading them “are physically and emotionally not at their best.”

Cleveland suggested using health lexicons to simplify the language used in consent documents and determining their reading levels by using the tools offered by various word processing programs. She also suggested including a one-page summary of the consent form for a potential cancer clinical trial participant. This summary could concisely state what a clinical trial is; what the specifics of the trial under consideration are; why it is being done; and how it will be different from receiving usual care, as well as the costs involved. Practical helpful information could also be provided, such as what participants should bring to appointments and where they should park when being treated.
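As an illustration of how a reading-level check of this kind works, the sketch below computes the Flesch-Kincaid grade level of a passage using the standard formula, 0.39 × (words per sentence) + 11.8 × (syllables per word) − 15.59. It is a minimal sketch, not the tool any speaker used: the function names are hypothetical, and the syllable counter is a crude vowel-group heuristic assumed here for simplicity, so scores may differ somewhat from those reported by word processing programs.

```python
import re


def count_syllables(word: str) -> int:
    """Rough syllable estimate (an assumption of this sketch):
    count runs of vowels, then drop a likely silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_kincaid_grade(text: str) -> float:
    """Return the Flesch-Kincaid grade level of `text`."""
    # Split on sentence-ending punctuation; ignore empty fragments.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (0.39 * len(words) / len(sentences)
            + 11.8 * syllables / len(words)
            - 15.59)


if __name__ == "__main__":
    plain = "You can stop at any time. We will still care for you."
    dense = ("Participation necessitates comprehensive pharmacokinetic "
             "evaluation and longitudinal biospecimen acquisition.")
    print(f"plain wording: grade {flesch_kincaid_grade(plain):.1f}")
    print(f"dense wording: grade {flesch_kincaid_grade(dense):.1f}")
```

A consent template could be scored section by section in this way and compared against an institution's target, such as the eighth- or ninth-grade level cited above, before the form goes to an IRB.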

Several speakers noted that the consent form is only one component of informed consent, and that the consent process is equally if not more important. Botkin pointed out that the current consent process does not usually ascertain whether participants understand key information elements before a decision is made. “Rather than looking at whether the form is signed and has the right date on it, shouldn’t we also look and see if the institution is assessing understanding of the form?” McCabe added.

Paasche-Orlow noted a frequent lack of shared meaning between researcher and patient. For example, though disclosure of investigators’ ties to the drug industry is considered one of the main mechanisms to reveal potential conflicts of interest, he found patients often were impressed by such disclosures, with some responding with such comments as, “I did not realize he was such a big shot. I should be in this study because he is such a big shot.” Paasche-Orlow stressed that “If you do not check, you do not know what the person understands.”

Terrence Albrecht, associate center director, Barbara Ann Karmanos Cancer Institute, concurred, noting that her research on clinical interactions among patients, family members, and oncologists has shown that the participants tend to agree on the topics discussed, but disagree about the details. One of her studies found that patients often have misconceptions about whether they were offered the opportunity to participate in a clinical trial. Thirty-nine percent of patients who only discussed a trial with their practitioners, but were not formally offered participation, erroneously said they had been offered participation in a trial, whereas 14 percent of patients clearly offered the opportunity to participate in a trial reported they were not offered that option, including some who consented to the trial.

Cleveland added, “To many patients, consent for participation in a clinical study means ‘If I want a medical procedure I have to sign this form.’” She stressed, “We have to protect people in a way that they are going to be able to understand and process information to make a decision that is right for them. We need our health care professionals who are talking to people about clinical trials to have good communication skills.”

Paasche-Orlow suggested that clinicians use a teaching approach instead of using a persuasive mode of speaking to patients during the consent process (see Box 3). “We need to flip the default toward taking responsibility to confirm comprehension,” he said. Albrecht added that the consenting process often tends to be more of a monologue than a dialogue. She noted a conversation occurs when all participants have equal rights to speak, but stressed that often the balance of a consenting conversation is tipped to the rights of patients to be informed about research with little opportunity for them to engage in a dialogue with their practitioners.

BOX 3

Teach to goal is a learning mechanism to confirm comprehension. It involves teaching followed by assessing the person’s comprehension and then continued focused teaching until the person exhibits mastery of what is being taught. When this approach is taken in the consenting process, the medical professional starts with phrases such as “I want to make sure we have the same understanding about this research,” or “It’s my job to explain things clearly. To make sure I did this I would like to hear your understanding of the research project.”

The purpose of teach to goal is to check comprehension, not memory of the educational material. Consequently the medical professional should allow the potential research subject to consult the consent document when answering questions, which are aimed at confirming understanding of all the important elements of the study. The medical professional should also listen for simple parroting, and if a potential subject uses technical terms, ask them to explain further. Paasche-Orlow suggested asking open-ended questions such as “Tell me in your own words about the goal of this research and what will happen to you if you agree to be in this study” or “What do you expect to gain by taking part in this research?”

Any misinformation should be corrected until potential research subjects indicate that they have understood by correctly answering all the questions. Medical professionals need to make clear that the need to repeat information is due to their failure to clearly convey the information rather than the “fault” of the potential subject. For example, one could say, “Let’s talk about the purpose of the study again because I think I have not explained the project clearly.”

SOURCE: Paasche-Orlow presentation on February 24, 2014.

Paasche-Orlow agreed and pointed out that the current standard is that if the patients want to know more, they have to ask questions, but usually such questions are not entertained until after a 45-minute monologue on the part of the clinician. “That is not an honest play at interaction,” he said. He suggested asking patients open-ended questions, such as “Tell me in your own words, what risks would you be taking and what do you expect to gain by participating in this study?” and then correcting any misinformation they have. “You have to make the consent discussion an interactive experience,” he said and suggested requiring confirmation of comprehension as entry criteria for studies.

Cleveland also suggested checking for patient understanding by asking a simple question, such as “Tell me what you understand about this,” which could be part of the clinical trial protocol template. Taylor noted that another advantage of asking patients such questions after a consent discussion is that they give practitioners feedback as to how well they are conducting the discussion, which could lead to improved consent discussions in the future. Paasche-Orlow concurred, noting in his presentation that “Right now people get very little feedback about their own skill level—what they are doing well, and what they are not doing well and need to improve.”

Several speakers said that there is a need to enhance practitioner knowledge, skills, and behavior in consent processes because many do not have the appropriate skills and expertise, or do not take the time to do it properly, and may be insensitive to the stressful circumstances in health care environments. “No matter how cool a customer, no matter how deep their education and how much they have studied their disease, they come in emotionally impaired—they are thinking about their families, themselves, and if they are going to leave a legacy. We have to address them as such,” Cleveland said. She noted that “the sickest people tend to be the most vulnerable,” and make privacy of their health information a low priority compared to using it to find a cure for their disease. “They want you to take everything and do anything with it in order to help them,” she said. “So there does need to be something in place to protect people when they are most vulnerable. The challenge is to put protections in place that are not paternalistic and are designed with the patient’s engagement,” she added.

Patients also often have little experience with research, Botkin noted, and the assumption that if you give people information they will make logical decisions in their own best interest “is a shallow, inaccurate description of how people live their lives, particularly in the context of serious illness.”

Cancer and other life-threatening ailments can provoke fear and anxiety that can make patients more passive and more dependent on and trusting of their care providers to make their medical decisions, including whether to enroll in a trial, Botkin said.

Albrecht suggested that in addition to adhering to a standard consent checklist of what to discuss during the consent process, there should be adherence to discussion points that offer support for patients if they decide not to participate in the trial offered. Such support would include reassuring patients that their doctor will continue to see them, that they will have support to address side effects, and that if they are not doing well on the study, their care will be reevaluated and a new care plan drawn up.

Although several speakers made suggestions for improving both consent forms and the consent process, Botkin noted that which ones might work best is unknown because insufficient research has been done to address this question. “It is not evidence-based as it is currently conducted,” he said. But more important, according to Botkin, is changing the incentives of stakeholders in the consent process. New ideas and data will not change these incentives, he said, and instead suggested new regulations or guidances from HHS that will change those incentives that have made consent forms and processes too complicated. In that regard, the Secretary’s Advisory Committee on Human Research Protections (SACHRP) made specific recommendations in 2013 for simplifying informed consent by reducing the number of required elements and increasing the number of optional elements (SACHRP, 2013) (see Table 1). Botkin also suggested HHS consider requiring that part of informed consent include ensuring subjects have understood the information in order to leverage some of the changes needed in the process. “My hope is to encourage a SACHRP initiative in this particular domain and we have had support from OHRP and the Assistant Secretary of Health for making this effort,” Botkin said.

Speaking from the patient perspective, Collyar stressed that whatever changes are made to the consenting process, a key component should be partnership with and respect and trust of patients. She also stressed that patients just want to have the essential information they need to make their decisions. “They don’t want to have to learn a whole new field themselves. No patient says ‘yes, I’m excited about becoming health literate.’”

Collyar agreed with the SACHRP recommendations and also suggested building multiple models of the consent process and evaluating and learning from them, as well as incorporating feedback loops so the consent process continues to improve. She also suggested that consent processes should continually adapt to new technologies as they become available.

TABLE 1 Elements of Informed Consent

| Current Elements of Informed Consent (45 CFR 46.116) | Revisions Proposed by SACHRP (2013) |
| --- | --- |
| Required Elements | Required Elements |
| Optional Elements | Optional Elements |

NOTE: SACHRP = Secretary’s Advisory Committee on Human Research Protections.

SOURCES: HHS, 2013; Botkin presentation on February 25, 2014; http://www.hhs.gov/ohrp/sachrp/commsec/attachmentd-sec.letter19.pdf (accessed June 16, 2014).

Several participants suggested that a number of new technologies could improve the consent process, including interactive computer programs and audiovisuals that educate the patient about the trial; tablets that patients can use to fill out forms in the waiting room; and greater involvement of research nurses during the entire consenting process. Paasche-Orlow demonstrated a computer program he developed that uses embodied interactive conversational agents to emulate face-to-face communication. In the example he showed, a cartoon version of a person appears on the computer screen and talks about how consent forms can be long and complicated “and my job is to help you understand as much as possible.” The conversational agent then asks the patient questions, such as if they have ever been in a research study before, and responds with empathy when needed. For example, if the patient says their previous experience with a research study was bad, the agent responds, “I’m sorry to hear that. If you choose to be in any more research studies, I certainly hope you have a better experience.”

The computer avatar educates the patient by explaining potential side effects with a visual that makes it easy to see what portion of participants are likely to experience those side effects. It also acts as an advocate for the potential participant, stating, “You mentioned before that you had been in an earlier study and were not satisfied. You know if you choose to join this study and then found you were not happy, you could withdraw. If you tell the research team, they will help you leave the study in a way that is safe for you.”

Paasche-Orlow noted that even the elderly who have little experience with such computer programs respond favorably, waving back at the avatar and easily manipulating the touch screen provided for their responses. He said his studies indicate that in some ways, the computer avatar performs better, on average, than the physicians discussing consent with patients because it remembers the personal information the potential participant provides and restates that information to personalize the process. In the real world, “these are often hour-long consent conversations and the research staff frequently follows a script, and although they have many opportunities to personalize and empathize, they choose not to exercise those options,” he said. For example, when taking a family history, the physician may note that the patient’s father died of cancer without making any empathetic comment about it. “It is very strange and has something to do with the culture of medicine in general. I think we can do better,” he said. He noted that the degree of empathy expressed by the computer conversational agent can vary. His studies show that patients with depression or lower literacy spend more time asking more questions if the computer program is altered so that the avatar expresses more empathy.

Michaele Christian, former associate director of the Cancer Therapy Evaluation Program in the Division of Cancer Treatment and Diagnosis at NCI, suggested using audiovisuals to reduce the amount of time potential participants need to spend reading materials related to the study. Albrecht responded that some investigators, such as Neal Meropol at Cleveland Clinic, are experimenting with videos that patients can watch prior to meeting with their physician to discuss a clinical trial. “It is a preparatory aid tailored around the basic concerns and values of the patient,” she said.

Sharon Terry, president and chief executive officer of the Genetic Alliance, suggested using computer tablets on which potential research participants fill out their family and personal medical history in the waiting room. That information could be reviewed by a computer program that finds appropriate clinical trial matches for them. The patients could indicate their initial interest in a study on the tablet so that a clinician or clinical trial recruiter can then speak with the patient about that option. A similar tool for alerting physicians to inherited disorders and greater risk of preterm birth in their obstetric patients is currently undergoing testing. “This type of tool could aid community-based hospitals and clinics with more limited resources than major academic medical centers and so could be particularly important for increasing the diversity of the participants in clinical trials,” Terry said.

Albrecht noted a pilot study that she conducted in which the research nurse screens potential participants before they meet with the doctor, but then continues to be present when the doctor meets with the patient to discuss the clinical trial. “They sit in the background, but they have the imprimatur of the physician,” she noted, and they provide more details about the study once the physician leaves. This shortens the amount of time the physicians spend consenting patients and “that research nurse becomes a lifeline for that patient going forward throughout the rest of the decision process and into treatment.” But she noted that more incentives and system-wide changes need to be provided for such a supportive consenting process. Collyar suggested the development of a smartphone app that would make it easier to find and reconsent patients because cell phone numbers, unlike landlines, tend to stay constant even if patients move.

As McCabe said, “There is a whole new landscape out there and we really ought to move beyond that paper consent form and thinking about it in the usual way.”

Leiter pointed out that although informed consent is important, it alone cannot ensure adequate protection of participants in clinical research. She suggested relying less on consent, especially for uses of data in an LHCS, and instead pursuing other models of patient engagement, such as seeking patient input into research, having greater transparency on the research uses of data, and imposing requirements to share results with patients. Terry concurred, saying, “Consent cannot bear the burden of the research industry. It is only part of the picture and we need to go to Fair Information Practice Principles more robustly” (see Box 4).

Clayton cautioned that even if people want their health data shared, “people should be protected from unwarranted deidentification of all research data. The [key consideration] is not so much what they give consent to, but rather what is our oversight, accountability, transparency, limitations on downstream uses, etc. We should be paying much more attention to that.” Leiter pointed out that federated, decentralized research, in which data remain within the originating institution, offers more data protections than if data are collected in a big centralized location. “If we think about ways to protect data, we provide some way to incentivize keeping the data where it is to have the least exposure possible,” she said. “There are lots of other ways besides consent to layer on protection and lots of different tools to employ, and they too often get ignored in policy and legal discussions about data protection.”

The Health Information Technology for Economic and Clinical Health (HITECH) Act of 2009 called for numerous changes to the Privacy Rule, and in 2013, HHS announced a number of changes to the Rule and guidance (HHS, 2013). HHS specified that a HIPAA authorization can permit future research if the authorization adequately describes the future research such that it would be reasonable for an individual to expect that his or her protected health information could be used or disclosed for that purpose. Compound authorizations for research purposes are also now permitted as long as certain conditions are met. For example, the patient could simultaneously authorize the use of his or her health information for a specific current research project as well as for a biospecimen bank that distributes tissue samples and/or data for future research projects.

“This has made a big difference in terms of our ability to go forward and create forms that allow future research, but it is hard to know how to write these authorizations so that they adequately describe the future research so it would be reasonable for an individual to expect that their information is going to be used for it,” Bianchi said. “It has removed some of the roadblocks to researchers, but it is not clear how far it is going to get us,” she added, noting that it is not yet clear if current ongoing studies can be grandfathered because they described potential future research in the informed consent process.

Another issue that has affected biomedical research is the question regarding what constitutes sale of PHI, which is not allowed under HIPAA. Past language addressing this issue was quite vague, and researchers were concerned that payments they received to cover the cost of data transfer would be interpreted as sale of PHI. HHS now specifies that a reasonable fee to cover the cost to prepare and transmit data is allowable.

BOX 4

Fair Information Practice Principles

The Fair Information Practice Principles (FIPPs) are a widely accepted framework at the core of the Privacy Act of 1974. They have become the basis of related laws and regulations adopted by many federal and state agencies. The FIPPs are not precise legal requirements, but principles for balancing the need for privacy with other interests in an era of computerized information. The FIPPs were first articulated in a comprehensive manner in the former U.S. Department of Health, Education, and Welfare’s 1973 report titled “Records, Computers, and the Rights of Citizens” (HEW, 1973). The eight FIPPs, as stated in the Nationwide Privacy and Security Framework for Electronic Exchange of Individually Identifiable Health Information, are

Individual Access: Individuals should be provided with a simple and timely means to access and obtain their individually identifiable health information in a readable form and format.

Correction: Individuals should be provided with a timely means to dispute the accuracy or integrity of their individually identifiable health information, and to have erroneous information corrected or to have a dispute documented if their requests are denied.

Openness and Transparency: There should be openness and transparency about policies, procedures, and technologies that directly affect individuals and/or their individually identifiable health information.

Individual Choice: Individuals should be provided a reasonable opportunity and capability to make informed decisions about the collection, use, and disclosure of their individually identifiable health information.

Collection, Use, and Disclosure Limitation: Individually identifiable health information should be collected, used, and/or disclosed only to the extent necessary to accomplish a specified purpose(s) and never to discriminate inappropriately.

Data Quality and Integrity: Persons and entities should take reasonable steps to ensure that individually identifiable health information is complete, accurate, and up-to-date to the extent necessary for the person’s or entity’s intended purposes and has not been altered or destroyed in an unauthorized manner.

Safeguards: Individually identifiable health information should be protected with reasonable administrative, technical, and physical safeguards to ensure its confidentiality, integrity, and availability and to prevent unauthorized or inappropriate access, use, or disclosure.

Accountability: These principles should be implemented and adherence ensured through appropriate monitoring and other means, and methods should be in place to report and mitigate non-adherence and breaches.

SOURCES: HEW, 1973; ONCHIT, 2008; http://itlaw.wikia.com/wiki/Fair_Information_Practice_Principles (accessed June 16, 2014).

ADVANCE NOTICE OF PROPOSED RULEMAKING

Holly Taylor, associate professor of health policy and management, Johns Hopkins Bloomberg School of Public Health and core faculty, Johns Hopkins Berman Institute of Bioethics, provided an overview of the recent Advance Notice of Proposed Rulemaking (ANPRM) for the Common Rule that HHS put forth in July 2011, with the aim of clarifying regulatory ambiguity and relieving perceived impediments to clinical research. Subtitled “Human Subjects Research Protections: Enhancing Protections for Research Subjects and Reducing Burden, Delay, and Ambiguity for Investigators,” this document made several suggestions related to protecting research subjects from informational risk, aligning IRB reviews to degree of risk, using one IRB for multisite studies, harmonizing human subject protections regulations, and improving consent forms and processes.

The ANPRM proposed that the HIPAA regulations be adopted as the universal standard for privacy protection in research, and that collection of data for research purposes, even if it does not have identifiers, should require patient consent. The ANPRM proposed requiring broad consent for future research use of archived biospecimens. This would be a change from current rules, which allow research without consent when biospecimens are deidentified. The proposed broad consent would enable research on archived biospecimens, even with identifiable samples, without the time and expense of reconsenting patients for additional studies. But Ellen Wright Clayton, Craig Weaver professor of pediatrics and professor of law, Vanderbilt Center for Biomedical Ethics and Society, noted that such consent for future research does not apply if research results will be returned to participants. In that case, patients would first need to be recontacted and reconsented for that particular study.

The lack of clarity in distinguishing research and quality improvement activities was also recognized by the ANPRM, which states “The Common Rule has been criticized for inappropriately being applied to and inhibiting research in certain activities including quality improvement, public health activities, program evaluation studies regarding quality improvement. These activities are in many instances conducted by health care and other organizations under clear authority to change internal operative procedures to increase safety or otherwise improve performance, often without the consent of staff or clients, followed by monitoring or evaluation of the effects,” Menikoff reported. He added, “It is far from clear that the Common Rule was intended to apply to such activities nor that having it apply produces any meaningful benefits to the public. It might have a chilling effect on the ability to learn from and conduct important types of innovation.”

Taylor said that other proposed changes would aim to better calibrate IRB review to the level of risk. For example, one suggestion was to revise and simplify the review approach for expedited review by eliminating the requirement for continuing review. The ANPRM also suggested replacing “exempt research” with “excused research.” This category of research would require Principal Investigators to report to an IRB, which would audit such requests, with the assumption that as soon as a report is filed, the investigator could move forward with the research. Another key ANPRM proposal was the use of a single IRB of record for domestic multisite studies. This was in response to the recognition that multiple IRB reviews fail to improve human subject research protections, while lengthening the time of review and diverting valuable resources.

The ANPRM also called for improving consent forms and the consent process. Jerry Menikoff, director of the HHS Office for Human Research Protections, noted, “We’re all recognizing that consent forms have reached a point where they’re just getting longer and have more boilerplate [content] so you end up burying a lot of the key details that a person should actually be thinking about when they enroll in a research study. One of the purposes of ANPRM was to change the rules so that there would be greater authority to go from a 20-page consent form to a 3-page consent form that puts the boilerplate [material] somewhere else and is more to the point on what’s going to happen in the clinical trial and what the patient should be thinking about in terms of his or her best interests in enrolling.” (See the section on consent forms.)

Taylor said the ANPRM also called for an improved and more systematic approach to collecting and analyzing data on unanticipated problems and adverse events, and for better harmonization of regulations and agency guidance on human subjects protections. Finally, the ANPRM proposed that federal regulatory protections be extended to all research with human participants regardless of funding source, as long as this research is conducted at institutions in the United States that receive some federal funding from an agency that has adopted the Common Rule.

The ANPRM generated more than 1,000 comments. A joint response by the American Association for Cancer Research, the American Society of Clinical Oncology, and the Association of American Cancer Institutes indicated general support of the ANPRM, but requested better delineation of the responsibilities of the IRB of record, and reconsideration of the consent requirements for deidentified data. These organizations disagreed with the adoption of HIPAA as the universal standard for privacy protection. A committee of the National Research Council’s Board on Behavioral, Cognitive, and Sensory Sciences also concluded that HIPAA should not be adopted as the universal standard, and stated that the proposed requirement for consent for use of deidentified data should be reconsidered (NRC, 2014). This committee also called for criteria to define what is and is not considered human subjects research. They also endorsed the new category of excused research, but noted the need to operationalize some of the procedures for that type of research.

The ANPRM has been closed for public commentary since October 2011. If revised regulations are ultimately developed and implemented, they would have to be endorsed by all the federal agencies that currently use the Common Rule. Taylor noted that it took 10 years to get such approval for the current Common Rule.

PATIENT PERSPECTIVES ON RESEARCH PROTECTIONS

Patients have a wide range of views on sharing their data for health research, Terry noted. One study found that about one quarter of survey respondents who expressed an opinion would be willing to share their data without consent if their identity is not revealed and the study is supervised by an IRB (20 percent of those surveyed said they were not sure). About half of respondents said they would want each study seeking to use their data to contact them in advance and seek specific consent each time (IOM, 2009a). “There’s really a wide spectrum of patient views on this. One size does not fit all,” Terry noted.

Clayton agreed, noting that a systematic review found substantial variability in participants’ stated desires for control over research use of their health information and their willingness to accept broad consent for use of their biospecimens in studies (Brothers et al., 2011). She also pointed out, “A lot of this research is affected by how you ask the question. If you ask them, are they worried about their information not being private and the research being risky, then you get the answer that they think this research is risky. If you ask them whether they think this research is worthwhile and a biobank is good for an institution to have, the answer is emphatically yes,” she said.

Clayton noted that 88 percent of patients surveyed at Vanderbilt about sharing their deidentified health data were either neutral or stated that data sharing made them more amenable to participating in research. A similar percentage of patients in Group Health Cooperative reconsented to data sharing. In a study Peppercorn conducted, about three quarters of participants agreed to having their DNA and biospecimens stored for potential use in future unspecified studies related to cancer or other diseases (Ludman et al., 2010). “Patients basically are altruistic and want to help in clinical trials,” Cleveland stressed.

Taylor pointed out that many patients are willingly providing their health information on Facebook and other social media sites, which could provide investigators with a public resource for study recruitment. “There is some interesting debate in the literature about what is an ethical use of something like Facebook to try and track or retain [research] subjects,” she said. Leiter noted the increasing willingness of the younger generation to share personal information, including health information, on Internet forums, but noted a “fundamental lack of knowledge as to how unprotected those data are.” Health data uploaded onto the Internet have no legal protections, she said. “With the evolving tolerance for sharing information, there needs to be an understanding or something formulated so that health data are not as exposed as they are right now, even with people more willing to share it,” she added.

Bianchi agreed there is a lack of protection for health data in settings outside of those stipulated by HIPAA, including health data that patients upload to the Internet. She noted that the European Union has a “right to be forgotten” regulation that requires Internet servers and websites to erase individuals’ personal information that has been made public without legal justification (Data Guidance: Global Guidance in One, 2013). “Don’t we have a right to be forgotten too?” she asked. Malin added that studies by Alessandro Acquisti at Carnegie Mellon show that when people eagerly embrace new technologies or platforms, such as Facebook, they tend to make their personal information public using them, but then at a later point in time, “there is a lot of remorse that kicks in and they want to take that information offline. Just because people right now may feel comfortable putting information up online, they may not later when they try to get a job.” Gail Jarvik, head and professor, Division of Medical Genetics, University of Washington School of Medicine, noted that studies show the elderly tend not to be as concerned about the privacy of their health information. “By the time you are 90, you have gotten the diseases you are going to get and there is not a secret in your genome hiding anymore so there is less of a concern,” she said.

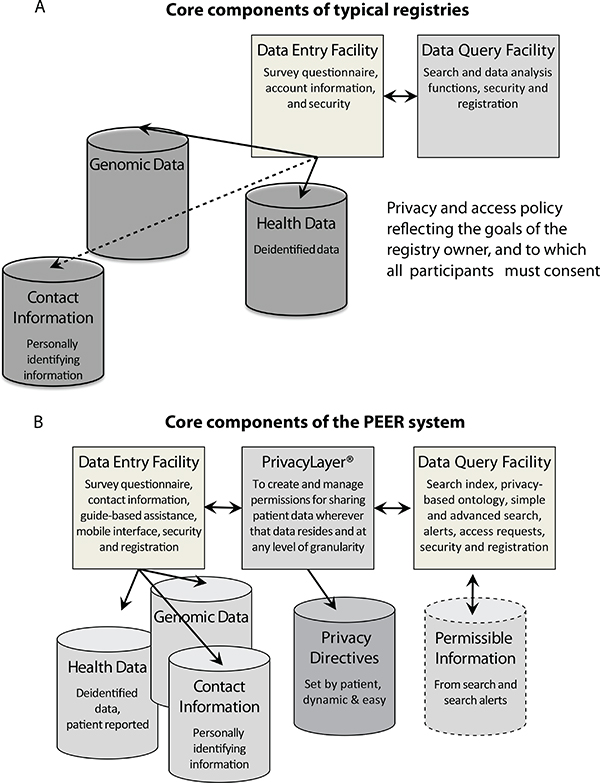

Terry suggested using online participant-centric registries with which participants can interact and change the research options that can be pursued with their data or biospecimens. She said many registries are currently centered on investigators, institutions, research sponsors, etc., rather than on the patient. She presented a model for such a registry called Platform for Engaging Everyone Responsibly or PEER (see Box 5). According to Terry, PEER empowers participants to create and manage access to all of their information by moving participant contact details into the registry and giving them the ability to manage the right to access their health data (see Figure 2). “This is our vision of the future—that individuals will always be in the center of their data,” Terry said. She added that instead of individuals having the onerous task of finding a trial in which they can participate through clinicaltrials.gov, the trial can find the individuals, as long as they have provided enough of their health data on PEER.

Terry said that PEER not only meets the privacy standards of HIPAA, but exceeds them. By providing participants with easy access to information about clinical trials that are investigating treatment alternatives, PEER is doing the case management that is included in the HIPAA definition of “health care operations” that enables use or disclosure of protected health information. PEER affords individual participants a level of privacy protection that is beyond HIPAA, according to Terry, because it holds no personal information other than that which the participant or their representative explicitly provides, or requests that a third party provide, and the participant maintains continuous control over the use of his or her information. PEER has been deployed by a number of organizations and institutions, including various disease organizations and universities that are part of the National Patient-Centered Clinical Research Network of the Patient-focused Drug Development Initiative of the Food and Drug Administration.

Botkin suggested that, instead of reconsenting all participants for each future research project, investigators could poll a focus group of study participants about their willingness to have their data shared in the newer study. He suspected that the majority of people in the focus group would want to have their data reused, and such a finding could be used as leverage to have the study approved by an IRB.

Peppercorn suggested that potential misuse of health data could be discouraged through laws prohibiting discrimination in health and life insurance and employment, as well as by imposing criminal penalties for hacking or stealing data. He noted that the law has had trouble in the past keeping up with technological advances in cyberspace. “Let’s get ahead of it and prohibit things we don’t want done,” he said. Collyar concurred and emphasized the importance of criminal penalties for inappropriate use of data, because people cannot be kept from illicitly collecting data.

BOX 5

Platform for Engaging Everyone Responsibly (PEER)

PEER is an online participant-centric registry with which participants can interact and change the research options that can be pursued with their data or biospecimens. A number of access controls on PEER enable participants to specify, in a granular manner, medical researchers, organizations, data analysis platforms, and others that can and cannot use their health information and for what purpose. These controls, which can be operated independently of each other, include:

- Discovery, which controls who can discover their information

- Contact information, which determines who can access their contact information

- Export, which controls who can export deidentified information from PEER

Participants have the option to edit their responses to these controls from a computer or smartphone, as well as to indicate if they want more information about a specific study or organization before deciding how it can use their health data. They can also access knowledgeable and trusted guides from their particular community, including patient advocates and activists, who describe in videos the different privacy settings and their ideas about sharing information, and also make recommendations on how to choose privacy settings based on the degree of privacy participants might want. The platform also sends participants notifications of data-sharing opportunities for which they can consent, deny, or delay making a decision.

PEER can be embedded in any website, such as those provided by disease foundations or cancer centers, and can be easily customized. This helps build trust for the platform on the local level at which participants would interact, according to Sharon Terry. One group that used PEER on their website, for example, changed the working language to make it more relevant to their community.

SOURCES: Terry presentation on February 25, 2014; Genetic Alliance (http://www.geneticalliance.org/programs/biotrust/peer; accessed June 16, 2014).

FIGURE 2 A. Typical registry architecture. Usual core components of registries include: functionality for data entry, a database of deidentified data, and facility for inquiry and/or analysis of that data based on the privacy and access policy of the registry owner, to which all participants must consent in advance. Contact information for individual participants—if available—is most often loosely coupled, and outside the registry. B. Core component of the Platform for Engaging Everyone Responsibly (PEER). PEER is participant-centric with privacy controls from Private Access that empower participants to create and manage access to all of their information. The right to access contact details in the registry is separately managed by each participant.

SOURCE: Terry presentation on February 24, 2014.

ETHICAL CHALLENGES OF GENETIC ADVANCES

Angela Bradbury, assistant professor of medicine, Perelman School of Medicine at the University of Pennsylvania, opened the session on ethical challenges of genome-based cancer research by noting that recent technological advances enable researchers to easily and inexpensively determine the entire sequence of a person’s DNA (genome). In this era of the “$1,000 genome,” there is renewed interest in developing precision medicine (also called personalized medicine), whereby clinicians can use genetic findings in a person’s blood or tumor sample to attempt to determine the most suitable prevention efforts and/or treatments for certain cancers. Variants of BRCA, HER2, BRAF, and other genes are already being used in clinical oncology to select treatment, she noted (Burke and Psaty, 2007; Schwartz et al., 2008).