5

Hazards, Land Use, and Environmental Change

ESSAY: A FRACTION OF THE EARTH'S SURFACE

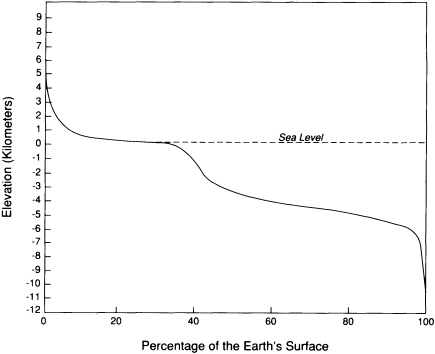

The surface is the interface between the Sun-powered processes dominated by erosion and deposition and the tectonic processes driven by the Earth's internal energy. Most of the surface lies at two general levels: nearly 60 percent is between 1 and 5 km below sea level, and about 25 percent lies within a kilometer above or below sea level (Figure 5.1). The remaining 15 percent of the surface is concentrated in tectonically active mountain belts, continental slopes, and oceanic trenches.

The part of the surface most heavily populated is usually less than 1 km above sea level; most activities are concentrated in areas not far above sea level. Mountainous areas usually are sparsely populated because they are less favorable to agriculture, construction, and transportation and are more susceptible to hazards. Such areas tend to be used for specialized agricultural activities, mining, and recreation. In places where large populations concentrate in or close to structurally active mountainous regions—for example, those in Armenia, Chile, Nepal, and California—the residents are faced with special problems in developing the land resource because of the risk of seismic, volcanic, and landslide hazards.

Just at sea level—within the zone affected by tidal and storm-generated oscillations—spreads the biologically diverse region of environmentally sensitive terrains: marshes, swamps, tidal flats, and fens. Historically, such expanses were considered wastelands or were drained, filled, or surrounded by sea walls to increase their agricultural worth. Research conducted within the past 50 years has disclosed the inadvisability of such projects because they can disturb the natural breeding habitats of wildlife and the cleansing functions of the world's wetlands. Close study shows that wetlands constitute whole ecosystems supporting vast populations, not only of waterfowl, reptiles, and amphibians but also microscopic creatures. Coastal wetlands abound in unfamiliar fungal and bacterial species that perform the invaluable tasks of isolating and neutralizing the toxic compounds flushed through the system in the hydrologic cycle.

The shallow-water area of the continental shelf is recognized as essential to fisheries and as a source of significant oil and gas production. The most recent flooding of the continental shelves by ocean waters took place within the past 10,000 years, and the nature of this geologically transient environment is as yet poorly understood. Research techniques such as advanced side-scanning sonar systems are aiding in the study of the continental shelves. If sea levels rise more rapidly over the next century, interest in the continental shelf environment will intensify, and the use of land surfaces below sea level may extend beyond the Netherlands, where it is now focused.

FIGURE 5.1 Curve showing the cumulative percentage of the area of the Earth that is higher than any particular elevation. Most of the surface lies either between 3 and 5 km below sea level or within 1 km above sea level. Human activities are concentrated in the areas that are less than 1 km above sea level.

Great attention is given to sudden landform changes such as abrupt changes of elevation during earthquakes, yet more money is spent yearly in attempts to retard the slow changes of landform development than on mitigation of the effects of sudden change. The incremental changes produced by erosion and deposition lead to soil loss; silting of reservoirs; and destructive transformations of hillslopes, rivers, and coastlines. Such evolution is natural and often inevitable. The activities of humankind have commonly accelerated the transformations, catapulting natural systems over thresholds and producing immediate environmental threats. The geomorphological processes affected by humans cover a staggering range of scale. Through impacts that range upward from local denudation caused by livestock overgrazing, a significant component of the process of regional desertification, humankind has evolved into a major geomorphological agent. Proper understanding of progressive geomorphological changes can forestall precipitous transformations and prevent the loss of landform stability.

Landforms are not random features; they are the consequences of the interplay of constructive and destructive geological and hydrologic forces, and they require careful study before they can safely be modified artificially. Our baselines for understanding processes at the surface are disturbingly short, although existing landscapes provide important information about the magnitudes and return frequencies for many natural processes. Only in the past century have detailed observations been made concerning such features as flood intensities and frequencies, debris-flow distribution, subsidence, and landslides. Thus, predictions are based on recent to current conditions that are known to have been altered by human actions. Predictions of longer-term events, such as 1,000-year floods, are very unreliable because they must be based on models with uncertain numerical characteristics.
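The difficulty of calibrating rare events against a short observational record can be illustrated with the standard return-period relation, which the text does not spell out. The sketch below assumes that a T-year event has an annual exceedance probability of 1/T and that years are independent and statistically stationary. Both are simplifying assumptions, and the function name is illustrative.

```python
def prob_at_least_one(return_period_years: float, record_years: int) -> float:
    """Chance that a record of `record_years` contains at least one event
    with the given return period, assuming independent, stationary years."""
    annual_probability = 1.0 / return_period_years
    # Complement of "no exceedance in any single year of the record".
    return 1.0 - (1.0 - annual_probability) ** record_years


if __name__ == "__main__":
    # A century of detailed observations, as described in the text.
    for return_period in (10, 100, 1000):
        chance = prob_at_least_one(return_period, 100)
        print(f"{return_period:>5}-year event: {chance:.3f}")
```

Under these assumptions, a 100-year record has roughly a 63 percent chance of containing a 100-year flood but less than a 10 percent chance of containing a 1,000-year flood, so estimates for the rarer event must lean on extrapolation rather than observation.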

Landforms are sensitive to climatic change because the operating rates of the processes that mold landforms vary dramatically with climate. Fluvial processes dominate in sculpting landforms in semiarid regions, while wind processes are more significant in arid regions. Under very wet climatic conditions, landslides and downhill movements of surface rocks are dominant. Each of these geomorphic processes leaves distinctive evidence in the geological record. When climate changes, the dominant land-forming processes change in response. Threshold values of rainfall and temperature can be defined at which a change from the dominance of one land-forming process to another is likely to occur. It is therefore possible to forecast how agricultural regions might shift size and location in response to global warming and related changes in rainfall.

On an even longer time scale, low-lying coastal areas are subject to episodic flooding as sea level oscillates. Most remarkable are the paleo-landscapes locally exposed by erosion beneath extensive blankets of sedimentary rocks that have been deposited on the continents during flooding episodes. Glacial valleys 450 million years old are evident at central Saharan sites now occupied by desert wadis, a 170-million-year-old sea stack lies fallen on a modern beach in Scotland, and a tropical beach 450 million years old can be visited on the outskirts of Quebec City. The long-term durability of low-lying continental surfaces (less than 1 km above sea level and less than 0.2 km below sea level) that is demonstrated by landscape exhumation can also be seen on much shorter time scales in the slow rates of erosion that characterize such flat areas.

The occurrence of these extensive areas of low relief, coupled with a suitable climate, makes regions such as the American Midwest prime land resources. The lush agricultural production of such regions can be maintained only if the landscape is treated with the same conservation ethic that inspires reverence for parks and wilderness areas. Prevention of soil erosion and respect for natural ecological balances in low-lying, low-relief regions can ensure their productivity far into the future. Geological characterization forms an essential basis for planned preservation and maintenance.

Human society exists in the biosphere, which thrives at the boundary layer between the solid-earth and its fluid envelopes, perched between the two engines of mantle convection and solar energy that drive geological processes. The biosphere, composed of chemical elements that are cycled among the reservoirs of the atmosphere, hydrosphere, crust, and mantle, also contributes to cycles of rapid chemical turnover and thus functions as a part of the geological processes. The more vigorous manifestations of those processes, however, regularly destroy the parts of the biosphere—and its human constructions—located in their paths. As society has increased its utilization of earth resources and expanded its population and area of colonization, the frequency of its encounters with vigorous geological processes has increased. Society has adopted a term, geological hazards, for these perfectly normal processes that began occurring long before humans arrived on the scene.

Many of the most tragic episodes in the natural history of humans have been related to geological hazards such as disastrous floods, earthquakes, sea waves, landslides, and volcanic eruptions. The fact is that most geological hazards can be avoided or mitigated through proper land-use planning, engineered design and construction practices, building of containment facilities such as dams, use of preventive measures such as stabilization of landslides, and development of effective prediction and public warning systems. In many parts of the world such measures have already significantly reduced human suffering from geological hazards, although major challenges remain. To further mitigate these hardships, it is essential that a better fundamental understanding of each hazard-causing geological phenomenon be gained and widely disseminated.

Before the development of agriculture, the effects of human beings on the Earth were comparable to the effects of other species. But the onset of crop cultivation and animal domestication, with the subsequent growth of urban civilizations, introduced a new set of forces. Today, humans are changing basic earth processes in unprecedented ways and to unfamiliar degrees. At present, every person in the United States is responsible on average for the consumption of 16 metric tonnes—about 35,000 lb—of minerals and fossil fuels each year. This use does not include the tremendous volume of material moved during the construction of homes, parking lots, office buildings, factories, dams, highways, and other structures. On a worldwide basis, the human population uses nearly 50 billion metric tonnes of earth materials each year. This amount is more than three times the quantity of sediment transported to the sea by all the rivers of the world. Clearly, human beings have become a geological agent that must be taken into account in considering the workings of the earth system.
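The figures quoted above can be checked with a few lines of arithmetic. The sketch below uses only the numbers given in the text plus the standard conversion of 2.20462 lb per kilogram. The variable and function names are illustrative, and the river-sediment value is only the upper bound implied by the phrase "more than three times", not a measured quantity.

```python
LB_PER_KG = 2.20462  # standard pounds-per-kilogram conversion


def tonnes_to_pounds(metric_tonnes: float) -> float:
    """Convert metric tonnes (1,000 kg each) to pounds."""
    return metric_tonnes * 1000.0 * LB_PER_KG


# Per capita U.S. consumption of minerals and fossil fuels, from the text.
per_capita_lb = tonnes_to_pounds(16)

# Worldwide use of earth materials, from the text.
world_use_tonnes = 50e9

# If world use is more than three times the river sediment flux, the flux
# must be smaller than one third of world use.
implied_river_sediment_max_tonnes = world_use_tonnes / 3.0

if __name__ == "__main__":
    print(f"16 tonnes is about {per_capita_lb:,.0f} lb")
    print(f"Implied river sediment flux is below "
          f"{implied_river_sediment_max_tonnes:.2e} tonnes per year")
```

The conversion gives a little over 35,000 lb, consistent with the rounded figure in the text, and the comparison implies a worldwide river sediment flux of less than about 17 billion metric tonnes per year.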

The various materials moved by human society are perturbing not only the physical cycles of the Earth, by increasing mass transfer, but also the chemical aspects. The biogeochemical and geochemical cycles that convert elements into living creatures, and into ore deposits and other geological concentrates, now have new aspects. The chemicals generated by manufacturing and the disposal of materials, including toxic compounds, occur in concentrations and combinations never before involved in natural systems. The consequences of such contaminations are poorly understood.

Some earth systems operating at the boundaries of the geosphere, hydrosphere, atmosphere, and biosphere are very fragile, and every human effort toward survival or improvement of the human condition necessarily results in repercussions on those systems. We dispose of our wastes in the same sedimentary basins that supply us with the bulk of our groundwater, energy, and mineral resources. Through our social, industrial, and agricultural activities, we are changing the composition of the atmosphere, with potentially serious effects on climate and terrestrial and marine ecosystems. The human population is expanding into less habitable parts of the world—steeper mountainsides, more ephemeral deltaic and barrier islands—which increases vulnerability to natural hazards and strains the biological and geological systems that sustain life. In this sense, geological conditions control the quality of human life.

People all over the Earth are constantly moving from less economically viable rural areas to the crowded cities in hopes of achieving a better livelihood. Wastes produced in and around the cities further compromise the quality of surrounding lands, and consequently there is a strong need for urban renewal or recycling of crowded urban spaces. As urban living grows more crowded and dense, the need to reuse land space increases. Buildings and other structures for human habitation, transport, or manufacturing are made taller and heavier. They may be founded on sites that are less than optimal for resisting the physical stress induced by their presence. Geology is the main interconnecting element between the craft of civil engineering and the intricacies of nature. It is being used in efforts addressing water quality, resource supply, waste isolation, and disaster mitigation to accommodate and reduce the adverse effects of societal growth.

If present trends continue, the integrity of the more fragile systems on which human society is built cannot be assured. The time scale on which these systems might break down may be decades or it may be centuries. Human beings are unique among the influences on the Earth: we have the ability to foresee possible consequences of our activities, to devise alternative courses, to weigh the pros and cons of these alternatives, to make decisions, and then to behave accordingly.

Understanding the natural systems acting at the land surface presents a major scientific challenge because of the enormous social implications of those systems. Land resources, as basic elements of the global ecosystem, profoundly affect the lives of every human being on the face of the Earth. The loss of topsoil and forests, the loss of life and property caused by human-induced geomorphic change, and the pollution of air, soil, and water all result in growing consequences for both national and international welfare and security.

GEOMORPHIC HAZARDS

Landforms are continually changing, but except for a few spectacular instances their change is so gradual that it is scarcely noted. As population increases, more people are exposed to the effects of processes that have been going on for hundreds of millions of years. In many cases the increase in human population contributes to instability of the physical landscape. When human populations are threatened by geomorphic processes, those processes become geomorphic hazards. Great attention is given to hazards representing abrupt changes, such as earthquakes and volcanic eruptions, but more damage is caused and more money has to be spent annually in attempts to retard ongoing hazards such as landslides, debris flows, and the normal slow progression of erosion and redeposition that leads to soil loss; reservoir infilling; and river, coastline, and hillslope changes.

The surface of the land is shaped by internal forces—folding and faulting with consequent elevation or subsidence—and by erosion—wind and water weathering driven by solar energy and gravity. Wind and water accomplish erosion by forcibly loosening, removing, and transporting solid material. That eroded material becomes the sediment deposited elsewhere. Erosion and sediment production result from the exposure of earth materials and from variations of climate, vegetation, and topographic relief. For materials of similar strength, natural sediment production reaches a maximum at about 33 cm of rainfall per year. Below that amount, less runoff causes less removal of material; above that amount, increased vegetation protects the soil so the amount of erosion and sediment production decreases. Modern erosion rates can be very high, because both urban and agricultural development require removal of the original vegetation.
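The competing effects described above, runoff that grows with rainfall and vegetation cover that increasingly protects the soil, can be sketched with a toy model. The functional form below is purely hypothetical and is not the empirical relation behind the 33-cm figure. It multiplies a runoff factor proportional to rainfall by an exponentially decaying bare-ground factor, and the decay scale is set to 33 cm so that the peak falls where the text places it.

```python
import math


def toy_sediment_yield(rain_cm: float, peak_cm: float = 33.0) -> float:
    """Relative sediment yield in arbitrary units (hypothetical toy model).

    The runoff factor rises linearly with rainfall, while the bare-ground
    factor falls as vegetation thickens. Their product peaks at `peak_cm`.
    """
    runoff_factor = rain_cm
    bare_ground_factor = math.exp(-rain_cm / peak_cm)
    return runoff_factor * bare_ground_factor


def rainfall_of_peak_yield(max_cm: int = 150) -> int:
    """Scan whole-centimeter rainfall values and return the one with top yield."""
    return max(range(1, max_cm + 1), key=toy_sediment_yield)


if __name__ == "__main__":
    for rain in (10, 33, 100):
        print(f"{rain:>3} cm/yr -> relative yield {toy_sediment_yield(rain):.2f}")
    print("Peak at", rainfall_of_peak_yield(), "cm/yr")
```

The sketch reproduces only the qualitative shape of the relationship: yield is low in very dry climates, highest near the chosen peak, and lower again where vegetation is dense.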

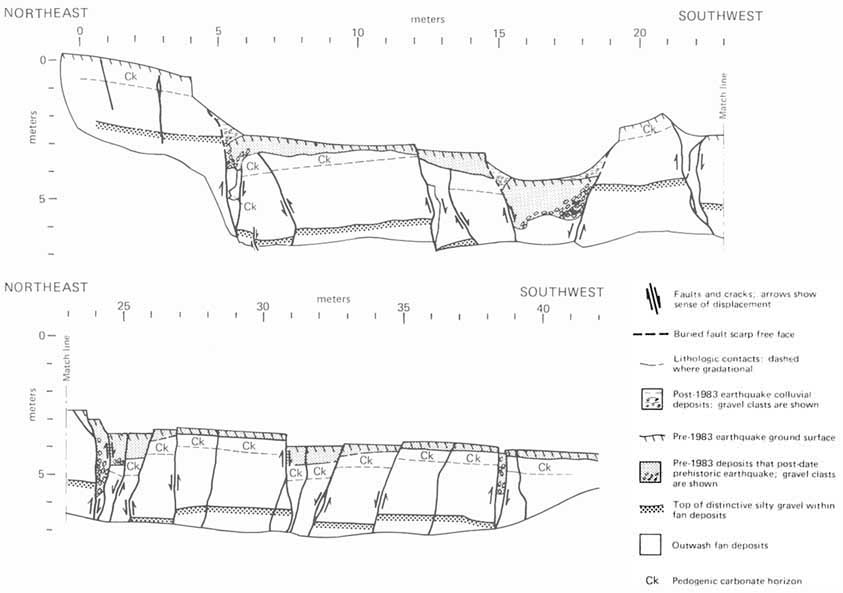

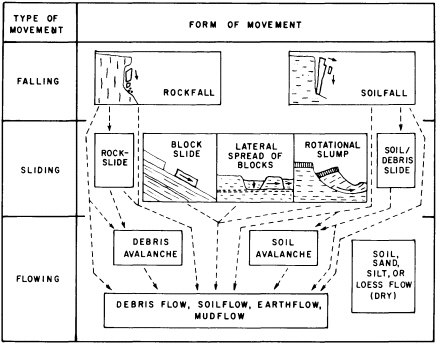

Geomorphic hazards may involve a slow progressive change in a landform (Figure 5.2) that, although in no sense catastrophic, can become a significant hazard involving costly preventive and corrective measures. There are three types of geomorphic hazards that combine different spans of time, different degrees of damage, and different energy expenditures. The most obvious type is a sudden event that produces an abrupt change—a landslide caused by monsoonal rains, an earthquake, or human activity such as removal of toe support from a stable slope (Figure 5.3). A second type is progressive change that leads to an abrupt result, typified by weathering breakdown of soil or rock that initiates slope failure, gullying of a steepening alluvial fan, meander growth and cutoff, or stream channel shifting. The final type of progressive change gradually produces a slowly developing geomorphic hazard—for example, gradual hillslope erosion, gradual meander shift, channel incision, or channel enlargement. Misidentifying or wrongly estimating the pace of progressive changes may result in incurring pointless protective or remedial costs. Accurate identification of impending problems attributable to slow progressive change can aid in the choice of remedial action and in prudent allocation of money toward engineered hazard prevention. Geomorphologists have quantified ways of identifying potential hazard sites that suggest future change. When historical information is available, it may be possible to make a crude estimate of the timing of landform failure. But, usually, too many influences operating on too fine a scale make it difficult to predict when a failure will occur.

FIGURE 5.2 Principal forms of mass movement, as correlated with dominant mechanisms of falling, sliding, and flowing. Each block represents a form that can characterize single or multiple events of ground failure; in many occurrences one form can give way to another, as indicated by dashed lines and arrows. Blocks in center and at left represent failure in bedrock, those in center and at right failure in surficial deposits. After R. H. Jahns, NRC, 1978, in Geophysical Predictions.

Increased erosion of productive agricultural soils has grown into a serious problem, both for the farmer and for those downstream who must cope with siltation. Soil erosion is accelerated by a variety of agricultural practices, including cultivating slopes at too steep an angle and irrigating with too much water or water under pressure. Under certain circumstances, erosion can proceed so rapidly and over so wide an area that remote sensing techniques may be the best way to monitor it. In situations that require estimates of slow erosion rates from significant topographic features, radioactive isotopic analysis can help in establishing chronologies of erosion surfaces and stratigraphic horizons.

Historically, the greatest amount of erosion in the eastern United States and resulting sediment transport by streams probably occurred in the eighteenth century as agriculture first became widespread. Estuaries became severely silted at that time. In the nineteenth century the western United States experienced a similar development; the process was documented in the Colorado River Basin during the 1880s as extensive channel incision developed in tributary valleys and created the characteristic ar-

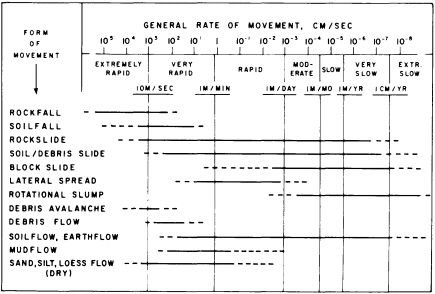

FIGURE 5.3 Ranges in general rates of movement for landslides and related features. Dashed parts of horizontal bars represent relatively uncommon or poorly documented occurrences. After R. H. Jahns, NRC, 1978, in Geophysical Predictions.

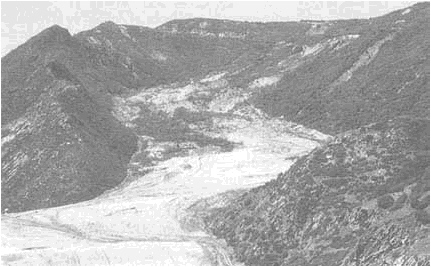

FIGURE 5.4 April 1983 Thistle, Utah, landslide. The landslide flowed down the valley damming Spanish Fork Canyon. Photograph courtesy of Gerald F. Wieczorek, U.S. Geological Survey.

royos. Sediment samples taken since 1930 show a significant decrease in sediment loads, suggesting that the incised channels have reached a new state of relative stability. This new equilibrium may result from a combination of conditions: less material is being eroded, and more of the sediment that is produced is being held up in the valleys, where it is deposited in newly developing floodplains. This trend has been enhanced by dam construction. The reservoirs extending behind the dams are clogging up with sediment, drawing attention to the long-term obsolescence of such facilities. In time the renewable resource of water for hydropower and agriculture may become severely compromised. On the lower Mississippi River, a 50 percent decrease in sediment transport has been associated with dam construction upstream on the Missouri River and bank stabilization elsewhere (see Figure 1.11). The Mississippi delta is being modified as the rate of sediment delivery decreases, and the natural slow subsidence due to basin deformation and sediment compaction outruns sediment accumulation, thereby permitting the sea to encroach onto the land.

Landslides and Debris Flows

In the 1970s, landslides—all categories of gravity-related slope failures in earth materials—caused nearly 600 deaths per year worldwide. About 90 percent of the deaths occurred in the circum-Pacific countries. Annual landslide losses in the United States, Japan, Italy, and India have been estimated at $1 billion or more for each country.

Landslide costs include direct and indirect losses affecting both public and private property (Figure 5.4). Direct costs can be defined as the costs of replacement, repair, or maintenance of damaged property or installations. An example of direct costs resulting from a single major event is the $200-million loss attributed to the 21-million-m³ landslide and debris flow at Thistle, Utah, in 1983. The slide severed three major transportation arteries (U.S. highways 6 and 89 and the main line of the Denver and Rio Grande Western Railroad), and the lake it impounded by damming the Spanish Fork River inundated the town of Thistle, resulting in the destruction of businesses, homes, and railway switching yards. The indirect costs involved the cutoff of eastbound coal shipments along the railroad line. In 1983 oil was expensive and coal was crucial for generating electricity. With supplies from the west severed, eastern coal normally exported to Europe had to be rerouted. European industry, in turn, had to adjust to lowered supply. Ultimately, the landslide affected the international balance of payments.

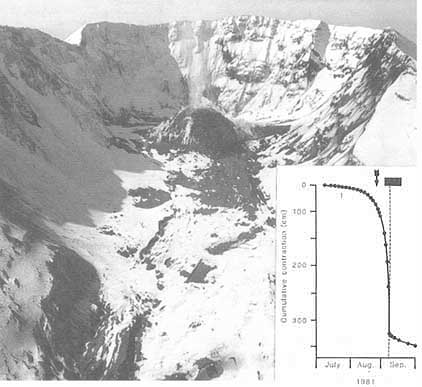

Destructive landslides have been noted in European and Asian records for over three millennia. The oldest recorded landslides occurred in Hunan Province in central China 3,700 years ago, when earthquake-induced landslides dammed the Yi and Lo rivers. Since then, slope failures have caused untold numbers of casualties and huge economic losses. In many countries, expenses related to landslides are immense and apparently growing. In addition to killing people, slope failures destroy or damage residential and industrial developments as well as agricultural and forest lands, and they eventually degrade the quality of water in rivers and streams. Landslides are often associated with other events: freeze-thaw episodes, torrential rains, floods, earthquakes, or volcanic activity. The bulging of the surface of Mount St. Helens over a rising magma body

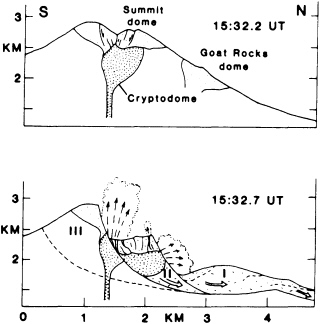

FIGURE 5.5 Sections through Mount St. Helens showing early development of rockslide-avalanche into three blocks (I, II, III) and explosions emitted primarily from block II; fine stipple shows preexisting domes and coarse stipple shows the 1980 cryptodome. After J. G. Moore and C. J Rice, NRC, 1984, in Explosive Volcanism: Inception, Evolution, and Hazards.

led to a massive air-blast landslide—2.8 km3 of rock, the largest slide in recorded history. Loosened material slipped off the side of the growing dome, unroofing the magma and permitting it to degas in a spectacular and locally disastrous eruption (Figure 5.5). The volcanic ash, dust, and pumice, mixed with rain and snowmelt, caused widespread debris flows in local valleys. A minor volcanic event high on the slopes of Nevado del Ruiz volcano in Colombia in 1985 melted enough glacial snow and ice to produce a debris flow that killed 25,000 people in the valley below. An earthquake off the coast of Peru in 1970 initiated a rockfall on Mt. Huascaran in the high Andes. The rockfall turned into a debris avalanche that moved at speeds approaching 300 km per hour and killed more than 20,000 people in the towns of Yungay and Ranrahirca.

Very large slides also are found on slopes below sea level. In the area around the Hawaiian Islands, recently discovered slide debris covers about 15,000 km² and contains single blocks more than a kilometer thick that slid as much as 235 km away from the shallower water. A major problem in the Gulf of Mexico is slumping, which can disrupt seafloor pipelines and the foundations for drilling platforms worth hundreds of millions of dollars. In Hawaii the debris is well-consolidated basaltic lava, while in the Gulf of Mexico it is unconsolidated, or at best semiconsolidated, clastic sediments. In the geological record, boundaries of rock masses representing such major slides could very well be confused with the effects of tectonic faults; indeed, distinctions between the largest landslides and gravity-driven faults may be in the eye of the beholder.

Despite improvements in recognition, prediction, mitigative measures, and warning systems, worldwide landslide losses—of lives and property—are increasing, and the trend is expected to continue into the twenty-first century. Some of the causes for this increase are continued deforestation, possible increased regional precipitation due to short-term changing climate patterns, and, most important, increased human population.

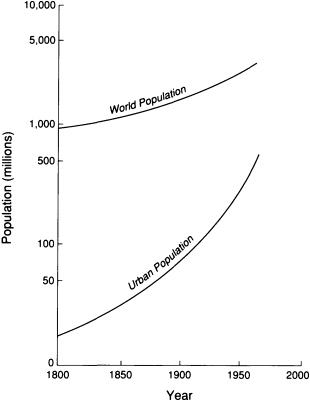

FIGURE 5.6 Urbanization compared with world population growth since 1800. Modified after Davis, 1965, Scientific American.

Demographic projections estimate that by 2025 the world's population will number more than 8 billion people. The urban population will increase to 5.1 billion, more than the total number of humans alive today (Figure 5.6). In the United States the land areas of the 142 cities with populations greater than 100,000 increased by 19 percent in the 15-year period from 1970 to 1985. By the year 2000, 363,000 km² in the conterminous United States will have been paved or built on. This is an area about the size of the state of Montana. Accommodation of this population pressure will call for large volumes of geological materials in the construction of buildings, transportation routes, mines and quarries, dams and reservoirs, canals, and communication systems. All of these activities can contribute to the increase of damaging slope failures. In other countries, particularly developing nations, the urbanization pattern is being repeated but often without adequate land planning, zoning, or engineering. Not only do development projects draw people, but the projects themselves as well as the people who settle the surrounding area often occupy just those hillside slopes that are susceptible to sliding. At present, there is no organized program to provide the geological studies that could prevent the worst scenarios posed by this threat.

To reduce landslide losses, research efforts should encompass more than investigations of physical processes in hazardous areas aimed at understanding the nature of slope movement. Earth scientists also need to perfect methods for identifying areas at risk and for mitigating contributory factors. These goals are attainable. Scientists can predict areas at risk and advise means to avoid or moderate danger, but much of the research needed has yet to be done.

For the past half century, geologists have relied primarily on aerial photography and field studies, ideally in combination, for identification of vulnerable slopes and recognition of landslides. In recent years, multispectral satellite coverage has become available for much of the world, adding a tool that can provide images in black and white or color as well as in spectral bands spanning red, green, and near-infrared wavelengths. The coverage, scale, and quality of multispectral imagery are expected to improve considerably within the next decades and to provide valuable information that can lead to improved identification of landslide-prone locations.

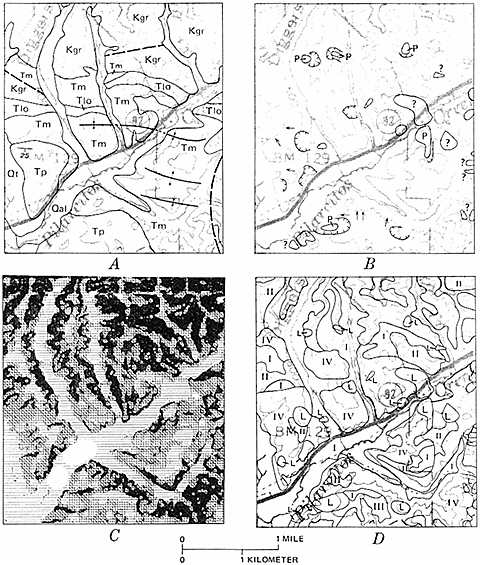

The information gathered from satellite reconnaissance can contribute to the growing store made use of in geographic information systems, which are digital systems of mapping spatial distribution that can be applied to the preparation of landslide susceptibility maps (Figure 5.7). These modern data-handling systems facilitate both pattern recognition and model building. Patterns and models that suggest landslide susceptibility can be tested, revised and improved, and then tested again against large numbers of observations.

As a result of these gains in knowledge, progress has been made in determining appropriate types of landslide mitigation. The most traditional mitigation technique is avoidance: keep away from areas at risk. When occupation of a site warrants risk, engineered control structures may be required, including surface water diversion and subsurface water drains, the construction of restraining structures such as walls and buttresses, and devices such as rock bolts. The establishment and enforcement of site grading codes calling for appropriate slope stabilization instituted in 1952 by Los Angeles County have worked well. Cut-and-fill grading techniques involving the removal of material from the slope head, regrading of uneven slopes, and hillslope benching are all of proven value. Consideration of such factors has had a major effect on reducing landslide losses in the United States, Canada, the European nations, the former Soviet Union, Japan, China, and other countries. Landslide research today is focused not so much on locating where landslides are and what hazards they represent as on figuring out how to cope with the potential hazard that they represent. This is an area of close cooperation between solid-earth scientists and geotechnical engineers.

Mitigation has also benefitted from substantial progress in the development of physical warning systems for impending landslides. Significant improvement will result as better instrumentation and communication systems are developed. Of particular importance will be continuing advances in computer technology and satellite communications. Hazard-interaction problems require a shift in perspective from the incrementalism of individual hazards to a broader systems approach. Earth scientists, engineers, land-use planners, and public officials are becoming aware of interactive natural hazards that occur simultaneously or in sequence and that produce cumulative effects that differ from those of their component hazards acting separately. In the case of landslides, research is particularly needed on cause-and-effect relationships with other geological hazards. For example, in the 1991 Mount Pinatubo (Philippines) eruption, the thick accumulating ash-fall and ash-flow deposits proved particularly liable to generate landslides and debris flows during typhoons. Research on the social aspects of such relationships in terms of warning systems and emergency services is necessary. At what point should people evacuate and abandon their little piece of the Earth?

FIGURE 5.7 Landslide susceptibility map (D) produced using geographic information systems methods of a part of San Mateo County, California, near the town of La Honda. A landslide inventory map (B) was compared with a geological map (A) and a slope map (C) to determine the percentage of each geological unit that has failed by landsliding in the past, and the slope important for the failure. These data formed the matrix for the susceptibility analysis (D). The higher the roman numeral, the more susceptible the slope is to landsliding in the future. Landslide deposits (L) are shown as a separate category (highest). This map was used by San Mateo County to reduce potential development in landslide-prone areas and to require detailed geological studies to determine the safety of building sites. Figure from Earl Brabb, U.S. Geological Survey.
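The overlay analysis described in the Figure 5.7 caption can be sketched in a few lines. The example below is a minimal illustration, not the method actually used for the San Mateo County map. It assumes three co-registered raster grids (geological unit, slope class, and a landslide inventory flag), and the tiny grids, unit labels, and slope classes are invented for demonstration.

```python
from collections import defaultdict

# Hypothetical 3 x 3 co-registered grids (one value per map cell).
geology = [["A", "A", "B"],
           ["A", "B", "B"],
           ["C", "C", "B"]]
slope = [["gentle", "steep", "steep"],
         ["gentle", "steep", "steep"],
         ["gentle", "gentle", "gentle"]]
slides = [[0, 1, 0],   # 1 marks a cell mapped as a past landslide
          [0, 1, 1],
          [0, 0, 0]]


def failure_matrix(geology, slope, slides):
    """Fraction of cells that have failed for each (unit, slope class) pair."""
    total = defaultdict(int)
    failed = defaultdict(int)
    for g_row, s_row, l_row in zip(geology, slope, slides):
        for unit, slope_class, slid in zip(g_row, s_row, l_row):
            key = (unit, slope_class)
            total[key] += 1
            if slid:
                failed[key] += 1
    return {key: failed[key] / total[key] for key in total}


def susceptibility_map(geology, slope, matrix):
    """Assign every cell the past failure fraction of its (unit, slope) class."""
    return [[matrix[(unit, slope_class)]
             for unit, slope_class in zip(g_row, s_row)]
            for g_row, s_row in zip(geology, slope)]


matrix = failure_matrix(geology, slope, slides)
susceptibility = susceptibility_map(geology, slope, matrix)

if __name__ == "__main__":
    for key, fraction in sorted(matrix.items()):
        print(key, f"{fraction:.2f}")
```

In a real application the fractions would be grouped into ranked susceptibility classes, and cells already mapped as landslide deposits would be kept as a separate, highest category, as the caption describes.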

Human intervention can reduce landslide risk by influencing some contributory causes. Projects that undermine slopes in marginal equilibrium or destabilize susceptible areas by quick drawdown of reservoirs can be avoided. Among projects that can lay the groundwork for disastrous landslides are road building, mining, fluid injection, and building construction that entails clearing vegetation. Planning and designing such projects with the local landslide potential in mind is absolutely essential. While these activities may not individually cause a landslide, they can increase the likelihood of slope failure as preconditions to which cloudbursts or earthquakes are added. Wherever hillsides receive precipitation over days and weeks, the pore-water pressure can build in rock fractures and decrease bulk shear strength, which can then induce displacements under less force than would be needed to shear a drier material. A proven mitigation technique in such cases is for geologists to locate the water surface in fractured rocks and drain off destabilizing water by drilling horizontal wells.

Then there are the regional-scale contributory causes of increased landslide susceptibility such as deforestation. According to the World Resources Institute, approximately 109,000 km² of tropical forest is being destroyed annually—an area the size of Ohio. Removal of the forest cover increases flooding, erosion, and landslide activity. This deforestation is causing serious landslide problems in many developing countries.

Over a period of about 3 years in the 1980s, during the course of an El Niño episode, regional weather changes in the western United States resulted in much heavier than average precipitation in mountainous areas. That increased precipitation caused a tremendous increase in landslide activity in California, Nevada, Utah, Colorado, Washington, and Oregon. Scientists are coming to understand such cycles through integration of collected data with information found in the historical and geological records. Cycles such as El Niño form the background variation of the climate pattern. But earth scientists do not know what to expect with additional perturbation from a changing greenhouse effect. Will the predicted temperature increase cause a decrease in precipitation, as occurred in mid-America during the summer of 1988? Will it increase storm activity throughout the mid-latitudes? Will it disrupt global climatic patterns, resulting in droughts in some areas and increased precipitation in others? Documented cause-and-effect sequences such as those related to El Niño episodes suggest that if areas prone to landslides are subjected to heavier than normal precipitation, susceptible slopes are likely to fail.

Land Subsidence

Land subsidence can currently be observed in at least 45 states; it is estimated to cost the nation more than $125 million annually. Subsidence can have human-induced or natural causes; both are costly. In the United States at least 44,000 km² of land has been affected by subsidence attributed to human activity, and the figure is probably higher. As for natural subsidence, one event—the 1964 Alaskan earthquake—caused an area of more than 150,000 km² to subside as much as 2.3 m. This event was extreme but not atypical of past or probable future disturbances.

The causes of subsidence are various but well known, and the hazard presented is well recognized; however, the indications of specific imminent danger and the possible cures or preventives are not clear. Subsidence can be induced by withdrawing subsurface support—by removing water, hydrocarbons, or rock without a compensating replacement. In many instances, oil or water is removed from porous host sediments that compact as the interstitial fluid is removed. In such cases, collapse is slow and gentle. More dangerous are situations that leave voids—withdrawal of water from cavernous limestone or mining of coal, salt, or metals. These voids can collapse gradually or suddenly. Not all subsidence is unexpected—ground over longwall coal mines is supposed to subside gradually to a new elevation that is both safe and stable. The ground surface above subsiding land is not a good place for a shopping center or school building, but it may be quite suitable for crops or recreation.

Natural subsidence occurs for several reasons. A basin surface may warp downward in response to recent sediment loading or by dewatering and compaction of sediments; both processes presently affect the Mississippi River delta. Tracts may subside because of folding or faulting, as in the Alaskan example above. Regions such as the Texas Gulf coast are triply vulnerable because they overlie a downward-flexing part of the crust; are above a thick pile—up to 10 km deep—of compacting sediments; and are being mined for groundwater and petroleum, which accelerates deflation of the sedimentary pile.

The primary cause of the most common, and most dangerous, subsidence in the United States is groundwater extraction through water supply wells. In California's San Joaquin Valley, 13,500 km² of land surface has sunk as much as 9 m in the past 50 years because of removal of groundwater for irrigation. The danger, of course, is in more populated areas, especially those close to sea level. Some cities that are already struggling because of groundwater extraction include Houston-Galveston, Texas; Sacramento and Santa Clara, California; and Baton Rouge and New Orleans, Louisiana. The problem of induced subsidence is international and also threatens London, Bangkok, Mexico City, and Venice. If sea level continues to rise, the cities that are literally on the edge now will be fighting to stay above water.

Sinkholes, another common source of land collapse, can occur unexpectedly on a more local scale than wholesale subsidence. Sinkhole collapse generally results from the slumping of poorly consolidated surficial material into underground caverns.

Collapse over underground caverns can usually be attributed to a recent lowering of the groundwater level and consequent loss of pore-water pressure. As long as a cavern is filled with water, the material covering it receives enough support to stay in place. Actual collapse of bedrock caverns is less common. Sinkhole collapses are rapid but very local and can be either natural or human induced. Similar events result from the dewatering of abandoned mines that penetrate close to the surface. Ground-penetrating radar is used to identify shallow caverns, and seismic tomography has promise for evaluating the stability of mine pillars—volumes of unmined rock left to support the roof over adjacent mined areas.

Subsidence in one region, as a consequence of loading by former ice sheets, may be accompanied by compensating elevation in another region. The most recent glaciation of North America isostatically depressed the parts of the continent covered by ice—4.0 km thick in places—and caused upward bulging of land along the margins of the depression. Following deglaciation, the process reversed, and today the area once covered by ice is slowly rising at rates as large as about 3 mm/year as the glacial forebulge subsides. This process is tilting the Great Lakes region, and in a few thousand years it will divert Great Lakes drainage from the Niagara River through Chicago to the Illinois River, drying up Niagara Falls. In the meantime, some of this uplift may play a part in inducing small-magnitude earthquakes in the north central and New England states.

Floods

Any relatively low-lying area is subject to flood. Hurricanes and typhoons, tidal surges, and tsunamis can deliver too much water from the direction of the ocean. Snowmelt and ice dams, cloudbursts, and prolonged rainstorms can deliver too much water from inland areas. Some of the most frightening floods descend on mountain settlements when their watersheds receive cloudburst rain, and flash floods completely scour out valleys. In canyons of the mountainous western United States, warning signs read, "In case of flash flood, climb straight up." In the United States, rainstorms and their accompanying flooding and debris flows accounted for 337 of the 531 federally declared disaster areas from 1965 to 1985. Human activities also cause or contribute to flooding—one of the most tragic floods struck Johnstown, Pennsylvania, in 1889 when a dam failed and 2,200 people were killed. Urbanization augments flooding. Studies revealed that in Houston, Texas, the creation of impervious surfaces increased the magnitude of the 2-year flood by nine times. Paving and stream channelization remove water quickly from one area, but they deliver more water more quickly to other areas. Factions argue about responsibility for the flood that struck Rapid City, South Dakota, in June 1972. That flood, which killed 245 people and caused $200 million in damage, followed an exceptional rainfall that was preceded by cloud-seeding efforts.

Floods can be terrible, but they are also accepted as few other hazards are. Humans have come to realize that access to transportation, fresh water, and rich alluvial soils is the reward for surviving floods. Long experience with floods has led to flood management practices. Because different management theories evolved in different environments, policy makers cannot, and perhaps should not, agree on standardized practices. Methods of management include land-use regulations; structural measures such as dams, levees, and floodwalls; land-treatment measures such as reforestation or terracing of stream banks; zoning ordinances and building codes; and warning systems.

Floods are not discussed at length here because they are considered in the 1991 National Research Council (NRC) report Opportunities in the Hydrologic Sciences, in which specific research activities such as short-term forecasting are identified. In that report emphasis is placed on the responsibility for reduction of flood loss that lies with public policy makers who can regulate development in flood plains. Estimating the risk in flood plains depends on knowing the probability of a repetition of events of a particular magnitude. In areas such as the western states where the historical record is short, this is not easy. Quaternary geologists are able to use the geological record to estimate both the timing and the scale of events in an area prior to written history.
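The link between the probability of repetition and flood-plain risk can be made concrete with a short calculation. The sketch below is not taken from the NRC report; it assumes that flood years are independent and that the annual exceedance probability stays constant, and it uses the common Weibull plotting position to turn a short record of annual peak flows into rough return periods. All function names and numbers are hypothetical.

```python
def prob_at_least_one(return_period_years, horizon_years):
    """Chance of at least one flood of the given return period within the horizon.

    Assumes independent years with a constant annual exceedance probability.
    """
    annual_p = 1.0 / return_period_years
    return 1.0 - (1.0 - annual_p) ** horizon_years

def empirical_return_periods(annual_peaks):
    """Rank annual peak flows and assign return periods T = (n + 1) / rank."""
    n = len(annual_peaks)
    ranked = sorted(annual_peaks, reverse=True)
    return [(peak, (n + 1) / rank) for rank, peak in enumerate(ranked, start=1)]

# Hypothetical case: a "100-year" flood over a 30-year planning horizon.
print(round(prob_at_least_one(100, 30), 3))

# Hypothetical short record of annual peak discharges (arbitrary units).
for peak, period in empirical_return_periods([120, 340, 210, 95, 180]):
    print(peak, round(period, 1))
```

With only a few years of record, the largest return period the ranking can assign is n + 1 years, which is why a short historical record constrains rare events so poorly and why the geological record is needed to extend it.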

Coastal Fluctuation

The coastline is a major battleground in the competition between the forces of deposition and erosion. It is also a region of concentrated human activities. Thus, coastal processes constantly affect populations and have long been the subject of conjecture. The causes of coastline fluctuations are numerous; they result from both internal and external earth processes.

One major factor is the uplift or subsidence of the coastal regions caused by tectonic forces. Most of the west coast of the United States is currently rising and has been for the past half-million years.

Uplift stages are typically marked by successively elevated terraces. Each terrace was once a wave-cut bench below a sea cliff, indicating a brief pause for a steadily rising coastline. The coastal terrain is riddled with landslides, an outcome accentuated by occasional earthquake shaking and by the infrequent cloudbursts. The problem is apparent, but identification of the most vulnerable slopes requires the careful scrutiny of engineering geologists.

The present site of the southeastern seashore of the United States stood well above sea level during the most recent continental glaciations. Rivers entering the sea did so farther to the east, through valleys incised into the coastal plain. As the ice sheets melted, the sea level rose and flooded the valleys, forming estuaries such as the Chesapeake Bay. The Patapsco, Potomac, and James rivers were all once tributaries of a Susquehanna River that flowed out past the present bay and formed a delta on what is now the continental shelf. As drastic as such changes might seem, they are relatively recent, having occurred over the past 10,000 years, and have cycled back and forth as ice sheets waxed and waned repeatedly over the past few hundreds of thousands of years.

Within the past 10,000 years of high sea level stand, currents and wave action along the shores have piled up barrier beaches such as those near Cape Hatteras, protecting shallow lagoons on the landward side. These are ephemeral and fragile creations, even without human dredging, building, and destruction of the vegetative cover. The lagoons and barrier islands record a complex history of deposition, erosion, and redeposition. A 1-m variation in sea level will change the sea-land interface substantially. In areas such as the Texas Gulf coast, where a thick section of sediments is gradually consolidating, scientists anticipate that shoreline features will be severely affected, probably with major losses of valuable property (Figure 5.8). Proposals to recover energy from geopressured fluids from southeast Texas aquifers would almost surely result in further subsidence of the surface and encroachment of the gulf on present-day shores.

As rivers erode the land, deltaic and estuarine environments become the first sites of major sediment deposition—except for constructed reservoirs and rare natural lakes. These brackish tidal waters are nursery grounds for many marine organisms as well as invitations for the establishment of human populations because of access to those nursery grounds, to transportation routes, and to freshwater sources just up river. Many, if not most, of the major estuaries of the country have become contaminated by natural or human-introduced pollutants. Boston Harbor is, unfortunately, an example of a contaminated estuary. The fish populations have declined to the point of disappearance, and the beaches are nearly deserted throughout the summer because of pollution. Chesapeake Bay pollution, first detected by the Public Health Service before World War I, has contributed to a decline of striped bass. Pollution of estuaries has an extremely deleterious effect on fish spawning, and encroachment often leads the way in destroying the estuarine habitat, the eventual result being diminution of potential food supplies.

Deltas, barrier islands, lagoons, tidal flats, and estuaries support a number of dynamic physical, chemical, and biological processes that operate in complex association. Although scientific understanding encourages informed management, only a few of the world's populated coastal environments have been studied in the required detail. The United States has engaged in a major effort in coastal-zone planning, but there has been no systematic effort at data collection to determine either baseline information or trends in essentials such as estuarine quality. Study of estuaries and other coastal environments should prove most productive when approached by interdisciplinary teams that can appreciate the complexities of the physical, chemical, and biological processes. The sensitivity and significance of coastal processes warrant a systematic integration of biological, sedimentological, hydrological, and geochemical data collection and analysis. Such an effort should result in a product that will inform decision makers in the near future.

TECTONIC HAZARDS

Earthquakes

Hazards such as landslides, subsidence, and floods are often exacerbated by human activities. But they are also often triggered by violent tectonic upheavals—earthquakes or volcanoes, or by the former's menacing effects, tsunamis. There is nothing that society can do at present to prevent tectonic upheavals, but there are methods to monitor, predict, mitigate, and avoid potential tectonic disasters (Figure 5.9). In the United States, progress in these strategies has involved many geologists over the past 20 years because of the need to protect population centers from threats such as the 1980 Mount St. Helens eruption and the 1989 Loma Prieta earthquake. Scientists and policy makers know that major natural disasters can be forestalled only by continuing research into the nature of such upheavals; responsible planning of engineered works and land-use facilities in areas at risk; and methods for warning, evacuating, and providing emergency relief. Only cautious, realistic planning can prevent overwhelming tragedies such as when hundreds of thousands of people were killed by the 1976 earthquake in Tangshan, China, or 3,000 were killed in the Philippines by a tsunami only 3 weeks later. The more knowledge that scientists gain about the causes and effects of earthquakes, the more able they will be to predict and plan for all these potential disasters. For example, the seismological techniques used to monitor earthquake zones can be used to monitor potential volcanic activity and potential tsunami threat. And assessment of earthquake, volcano, and tsunami hazard potential can help planners predict dangers from the associated landslides and floods.

FIGURE 5.8 Impact of coastal erosion from Hurricane Andrew on Raccoon Island, Isles Dernieres, Louisiana. The top photograph was taken on July 9, 1992; the bottom photograph on August 30, 1992. Photographs courtesy of Jeff Williams, U.S. Geological Survey.

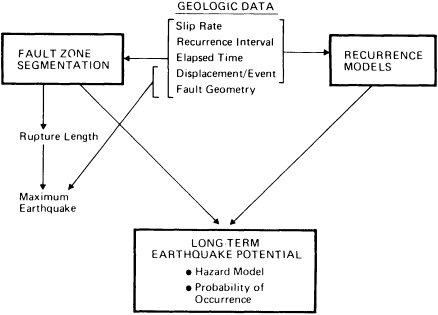

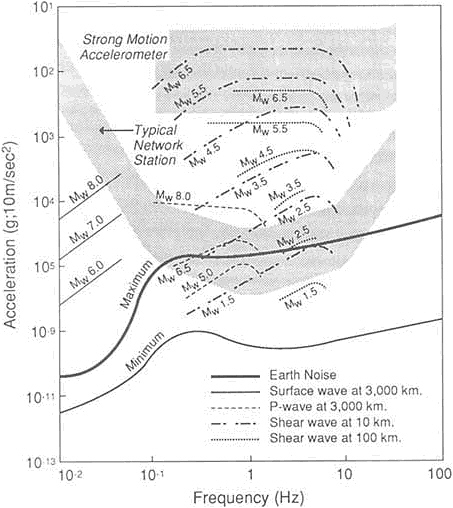

Earthquake hazard evaluation involves determinations of the specific location, frequency of occurrence, and intensity of energy release—which, in turn, require characterization of the space, time, and size distribution of the earthquakes that give rise to the hazard (Figure 5.10). Decision making in the face of serious earthquake threat requires that the hazards and risks be neither overestimated nor underestimated because of the great consequences for life, safety, and economic security. Administrators cannot expect citizens to abandon their daily lives for anything but imminent danger. Therefore, earth scientists have an obligation to acquire relevant data and pursue research aimed at reliable depiction of an earthquake threat.

Quantitative experience from many earthquakes

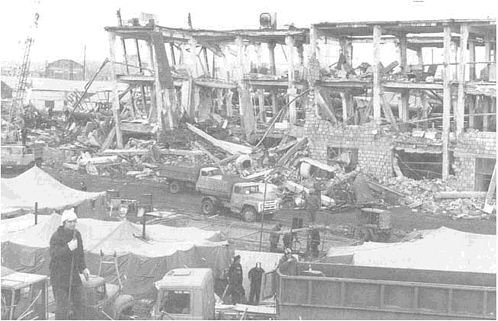

FIGURE 5.9 Earthquake damage to a precasting plant in Axtoran (near Leninakan), Ukraine. This plant made building components for the precast, reinforced-concrete buildings in Leiniakan. Note the collapsed precast-prestressed concrete trusses. Photograph by Fred Krimgold; reproduced from Earthquakes and Volcanoes 21(2), p. 73.

provides an accurate and useful understanding of an earthquake's seismic hazard parameters, the nature of the earthquake source, the maximum size of the resulting seismic waves, the qualitative characteristics of those seismic waves, and the potential effect along the surface in response to the wave's energy. The study of these parameters involves diverse disciplines, ranging from sedimentology and seismology to geotechnical and civil engineering. To arrive at a realistic picture of the damage that may result from an earthquake, decision makers must apply scientific knowledge of the seismic hazard to the specific characteristics of engineered works. Only then can they estimate the seismic risk—the threat that earthquakes present to human lives and property.

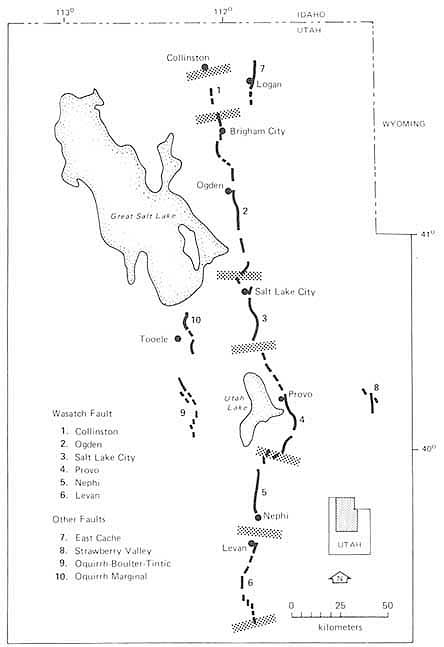

FIGURE 5.10 Relationship between geological data and aspects of seismic hazard evaluation. After D. P. Schwartz and K. J. Coppersmith, NRC, 1986, in Active Tectonics.

Great concentrations of population have settled close to major plate boundaries. Of those cities whose populations are projected to exceed 2 million by the year 2000, 40 percent are within the 200-km earthquake shock radius of a plate boundary zone. While most deadly earthquakes are related to plate boundaries, those boundaries are not always sharp, and the geometry of subduction-zone dip amplifies the breadth of potential damage. Studies of seismicity and active deformation have shown that the regions affected by plate interactions can be large. For example, the entire belt of mountains formed by the Indian-Asian collision stretches over an area of more than 6 million km². And the effects of the diffuse plate boundary in the western United States extend over 1,000 km into the continent to the active Wasatch Fault (Figure 5.11), which passes through Salt Lake City. The distribution of earthquakes in the United States reveals that broad zones can be involved in plate boundary deformation. In the western states occupying these zones, many faults underlie urban areas, where earthquakes of magnitude 6 or larger pose serious threats. Some of these dormant faults are concealed by basin sediments or overlying rock that responds to tectonic forces by folding rather than faulting; such landscapes may provide few visible geomorphic clues about potential hazard. And while these faults seem to approach the surface at high angles, geophysical surveys find correlations with regionally extensive reflection surfaces that are nearly horizontal and relatively deep within the crust. Analysis of geophysical data suggests that the faults are curvilinear in cross section, with one arm angling toward the surface and the other laterally underlying extensive areas. These listric faults may act as earthquake sources, although no significant earthquakes have so far been demonstrated to have occurred on the flat-lying segments of such faults.

With the continuing development and empirical refinement of plate tectonic theory, a first approximation of potential earthquake source location can be estimated reliably from the regional tectonic setting. Recent studies have shown that the total seismic energy released in plate boundary regions is more than 99 percent of the total worldwide seismic energy release. The problem is that most plate boundary regions have earthquakes quite frequently, which relieves stress incrementally, while the 1 percent of seismic energy released in intraplate events occurs only occasionally. Those singular events may therefore be extremely violent spasms. Nevertheless, the basic plate tectonic setting provides invaluable clues. One example is the Cascadia subduction zone in the Pacific Northwest, which marks the boundary between the Juan de Fuca and North American plates. Historically, this part of the plate interface zone has been seismically quiescent. But the possibility of powerful earthquakes occurring along this zone, which includes the cities of Vancouver, Seattle, and Portland, is suggested on the basis of its plate tectonic setting as well as neotectonic geological studies that indicate the occurrence of major subduction-zone earthquakes here within the recent prehistorical past. Earthquakes comparable in size to the 1964 Alaskan earthquake—which devastated Anchorage—may well occur along this coastline of the Pacific Northwest.
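The contrast between frequent small releases and rare large ones is easier to appreciate with numbers. The text gives no formula, so the sketch below assumes the widely used Gutenberg-Richter energy relation, log10 E = 1.5 M + 4.8 with E in joules; the function names are hypothetical and the result is an order-of-magnitude illustration only.

```python
def radiated_energy_joules(magnitude):
    """Approximate radiated seismic energy, assuming log10(E) = 1.5 * M + 4.8."""
    return 10 ** (1.5 * magnitude + 4.8)

def equivalent_event_count(large_magnitude, small_magnitude):
    """Number of smaller events needed to match the energy of one larger event."""
    return radiated_energy_joules(large_magnitude) / radiated_energy_joules(small_magnitude)

# How many magnitude 6 earthquakes carry the energy of a single magnitude 8?
print(round(equivalent_event_count(8.0, 6.0)))
```

Under this assumed relation each whole unit of magnitude corresponds to roughly a 32-fold increase in energy, so it takes a very large number of moderate earthquakes to release what a single great earthquake does.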

Intraplate earthquakes can pose a threat comparable to plate boundary events, as shown by the three very large earthquakes that rocked the New Madrid, Missouri, region in late 1811 and early 1812. Those earthquakes drained swamps, altered reaches of the Mississippi River, and created new lakes in parts of Tennessee. Chimneys toppled and stone walls cracked in St. Louis, Louisville, and even Cincinnati; church bells rang in Washington, D.C. Another major destructive intraplate event struck Charleston, South Carolina, in 1886. The considerable increase in population density throughout the eastern United States since these great intraplate earthquakes guarantees that comparable events would devastate communities in the eastern two-thirds of the country.

FIGURE 5.11 Segmentation model for the Wasatch Fault zone, Utah; stippled bands define segment boundaries. After D. P. Schwartz and K. J. Coppersmith, NRC, 1986, in Active Tectonics.

A valuable tool for identification of earthquake sources is the study of earthquakes described in the historical record. Modern seismometers have been useful only since the end of the nineteenth century, but thousands of earthquakes that predate such instrumentation are well documented in the historical literature. Systematic assessment of historical descriptions provides estimates of the sizes and locations of earthquakes in China, the Mediterranean, and the Middle East. Earthquakes have been cataloged in these regions of the world for a few thousand years—intervals long enough to embrace rare larger events that recent instrumental data often do not include. Clearly, these historical events can indicate earthquake sources that might not otherwise be evident. The extensive historical catalogs may also provide indications of short-term fluctuations in the locations, sizes, and rates of earthquake activity that have not left evidence in the much longer geological record. The Chinese historical record bears witness to apparent spatial and temporal cycles having periods of a few hundred years. Such seismic cycles may also occur in Japan with regular intervals that range from 100 to 200 years. But there are no cases of instrumentally recorded data covering periods long enough to provide any real understanding of seismic cycles—or even to offer any certainty in predicting earthquake recurrence.

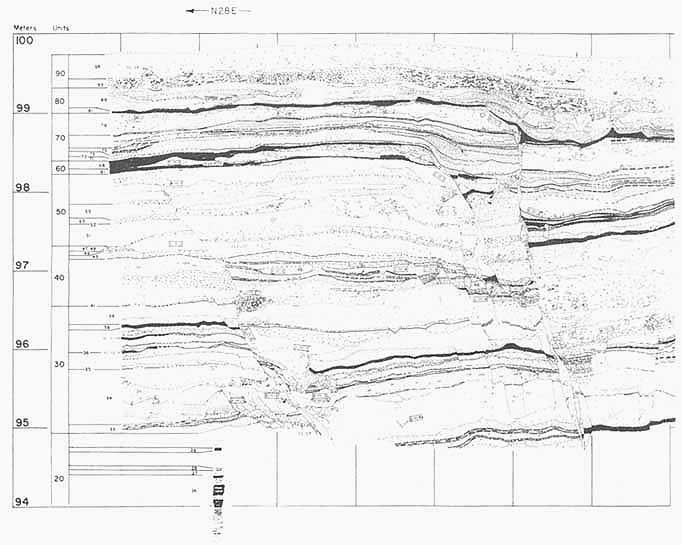

FIGURE 5.12 San Andreas Fault exposed in a trench at Pallett Creek, California. Black horizons are datable peat layers, which are progressively offset by greater amounts at greater depths (greater ages), the cumulative effect of earthquake faulting. From K. E. Sieh, 1978, Journal of Geophysical Research 83, pp. 3907-3939.

Major advances have been made in identifying prehistorical earthquake sources on the basis of geological field studies. Displacements along active faults can be established by combining the historical record with stratigraphy, geomorphic analyses, and age-determination techniques in the new subdiscipline of paleoseismology. A good example of how the new techniques have given insight into the long-term seismic record is provided by studies of that segment of the San Andreas Fault in California that broke in 1857 (Figure 5.12). The segment currently is seismologically very quiet, but paleoseismological evidence indicates repeated large prehistorical displacements, which presumably generated large earthquakes. The average intervals between these large paleoearthquakes taken together with the time of the latest event in 1857 are used by geologists to make statistical extrapolations as to how likely similar events may be in the near future.
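One way to picture such a statistical extrapolation is sketched below. It is not the method of any particular study: it compares a memoryless Poisson estimate, which uses only the average interval, with a simple renewal estimate that also uses the time elapsed since the latest event. The renewal model assumes that intervals between large earthquakes are normally distributed, and the mean, standard deviation, and elapsed time are hypothetical values chosen for illustration.

```python
import math

def poisson_probability(mean_interval_yr, window_yr):
    """Chance of at least one event in the window, ignoring elapsed time."""
    return 1.0 - math.exp(-window_yr / mean_interval_yr)

def normal_cdf(t, mean, sd):
    """Cumulative probability that an interval is shorter than t."""
    return 0.5 * (1.0 + math.erf((t - mean) / (sd * math.sqrt(2.0))))

def conditional_probability(mean_interval_yr, sd_yr, elapsed_yr, window_yr):
    """Chance of an event in the next window, given none since the last one.

    Assumes normally distributed recurrence intervals (an illustrative choice).
    """
    cdf_now = normal_cdf(elapsed_yr, mean_interval_yr, sd_yr)
    cdf_later = normal_cdf(elapsed_yr + window_yr, mean_interval_yr, sd_yr)
    survived = 1.0 - cdf_now
    if survived <= 0.0:
        return 1.0  # the interval is already longer than the model allows
    return (cdf_later - cdf_now) / survived

# Hypothetical inputs: 150-year mean interval, 50-year spread,
# 136 years since the latest event, and a 30-year outlook.
print(round(poisson_probability(150.0, 30.0), 3))
print(round(conditional_probability(150.0, 50.0, 136.0, 30.0), 3))
```

The two estimates differ because the renewal model lets the probability grow as the quiet period lengthens, which is the reason the date of the latest event matters as much as the average interval.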

Relatively large earthquakes are sometimes caused by ruptures on faults that do not break through the surface, as illustrated by the Coalinga and Whittier Narrows earthquakes in California. Investigation at such localities, however, shows that these events do in fact leave their marks in the geological record. Measurement of changes in the shape of the surface provides geodetic evidence of continuing folding, such as slope steepening, and exposure of cross sections can reveal evidence of strong ground shaking, such as liquefaction—the transformation of a saturated soil into a fluid mass—and of continuing, long-term, centimeter-scale deformation within deposits overlying the faults. Detailed investigations using field geology techniques are essential for locating buried seismogenic faults.

The largest earthquake that a particular seismic source is capable of generating occurs very infrequently, so that the historical record of seismicity at any locality probably does not include the largest earthquake that ever occurred at that locality during the past few thousand or tens of thousands of years. Consequently, estimates of maximum magnitude, or energy release, for seismic sources are based on the geological record. During individual earthquakes, faults typically do not rupture over the entire fault length; instead they slip over only a few segments of the entire length. Total rupture lengths increase with earthquake magnitude. Similarly, fault rupture area—the size of the plane that has ripped loose—increases with magnitude. The lengths and areas of old ruptured segments (see Figure 5.10) place constraints on the maximum sizes of earthquakes that might reasonably be expected at that location. Current work is aimed at identifying physical, geometric, and fault behavioral characteristics that are diagnostic of individual fault segments. Detailed geological studies along the length of whole fault systems help to identify segments at risk of future ruptures. The problem comes in estimating these parameters for a given fault before the occurrence of the maximum event. A wide variety of geological studies—geophysical, seismological, and geomorphic—aid in the estimation of parameters related to magnitude. Methods used to gather information include interpretation of geometric constraints determined from geophysical analysis, investigation of fault-scarp dimensions as paleoseismic indicators, and integration of stratigraphic relationships in exploratory trenches excavated across the fault (Figures 5.12 and 5.13). All of these approaches may, in combination, provide a clearer picture of the sizes and dates of paleoearthquakes exhibited in the geological record and help to predict whether a large earthquake is imminent.
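The way rupture dimensions constrain earthquake size can be illustrated with the standard definitions of seismic moment and moment magnitude, which the text does not spell out. In the sketch below the moment is the product of rock rigidity, rupture area, and average slip, and the magnitude follows from Mw = (2/3)(log10 M0 - 9.1) with M0 in newton-meters. The rigidity of 3 x 10^10 Pa is a typical assumed crustal value, and the segment dimensions are hypothetical.

```python
import math

SHEAR_MODULUS_PA = 3.0e10  # assumed typical crustal rigidity

def seismic_moment(length_km, width_km, mean_slip_m, mu=SHEAR_MODULUS_PA):
    """Seismic moment in newton-meters: rigidity x rupture area x average slip."""
    area_m2 = (length_km * 1.0e3) * (width_km * 1.0e3)
    return mu * area_m2 * mean_slip_m

def moment_magnitude(moment_nm):
    """Moment magnitude from seismic moment given in newton-meters."""
    return (2.0 / 3.0) * (math.log10(moment_nm) - 9.1)

# Hypothetical segment: 100 km long, 15 km deep, 3 m of average slip.
single_segment = moment_magnitude(seismic_moment(100.0, 15.0, 3.0))
# The same slip over two such segments rupturing together.
two_segments = moment_magnitude(seismic_moment(200.0, 15.0, 3.0))
print(round(single_segment, 2), round(two_segments, 2))
```

Doubling the rupture length at fixed slip raises the magnitude by only about 0.2 units, which is why mapped segment lengths and paleoseismic slip estimates together bound the maximum event fairly tightly.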

The first known instrument for earthquake detection was invented nearly nineteen hundred years ago by a Chinese mathematician and astronomer. It was designed with eight dragons poised around the circumference of a hollow globe with unattached balls perched in their mouths and eight openmouthed toads waiting below them. An earthquake of even the slightest magnitude would allow a ball to fall from the mouth of a dragon into the mouth of a toad, setting off an alarm. It was thought that the earthquake would have originated in the direction of the empty-mouthed dragon. For two millennia after this invention, however, earthquake detection remained an elusive goal for researchers in Asia and Europe.

In the late nineteenth century, advances in both theory and engineering led to the development of the first seismometers—instruments that could reliably detect and record earth tremors. Modern seismometers continually monitor the Earth's vibrations and, being rigged with magnification equipment, produce a sensitive record of those vibrations. When an earthquake hits, anywhere on the globe, seismometers distributed over the surface fluctuate according to their reception of body waves and surface waves. There are two types of body waves: compressional—similar to the push and pull of a punch—and shear—similar to the writhing of a whiplash; both of these travel through the Earth. Surface waves travel in a complex array and cause most of the damage. To locate the source of the earthquake, both its focus deep within the lithosphere and its epicenter on the surface above the focus, seismologists need three seismological readings of body wave onset. They calculate the difference in arrival times between the compressional waves and the shear waves, which indicates the distance through the Earth from the seismometer to the focus; they can then pinpoint the fault rupture through triangulation. The techniques developed for recording natural earth shaking have produced a completely independent field of inquiry; seismic technology is a geophysical technique that induces mechanical shock-waves in the Earth, records their reflections, and produces images from the reflections that enhance understanding of the interior structure. The technology has come full circle as an essential tool for studying earthquake characteristics.
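The location procedure described above can be sketched as follows. The wave speeds are assumed round numbers for crustal rock rather than values from the text, the station coordinates are hypothetical, and the sketch treats the Earth as flat and the focus as shallow so that the distance to the focus approximates the distance to the epicenter. Strictly speaking the geometric step is trilateration, since it intersects circles of known radius.

```python
import math

VP_KM_S = 6.0  # assumed compressional-wave speed
VS_KM_S = 3.5  # assumed shear-wave speed

def distance_from_sp_lag(lag_s, vp=VP_KM_S, vs=VS_KM_S):
    """Distance in km implied by the delay between P and S arrivals."""
    return lag_s * vp * vs / (vp - vs)

def locate_epicenter(stations, distances):
    """Intersect three circles centered on stations (x, y in km).

    Subtracting the first circle equation from the other two leaves a
    2 x 2 linear system, solved here with Cramer's rule.
    """
    (x1, y1), (x2, y2), (x3, y3) = stations
    d1, d2, d3 = distances
    a11, a12 = 2.0 * (x2 - x1), 2.0 * (y2 - y1)
    a21, a22 = 2.0 * (x3 - x1), 2.0 * (y3 - y1)
    b1 = d1**2 - d2**2 + x2**2 - x1**2 + y2**2 - y1**2
    b2 = d1**2 - d3**2 + x3**2 - x1**2 + y3**2 - y1**2
    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("stations are collinear; the location is not unique")
    return (b1 * a22 - a12 * b2) / det, (a11 * b2 - a21 * b1) / det

# Hypothetical network and a synthetic event at (30, 40) km.
stations = [(0.0, 0.0), (100.0, 0.0), (0.0, 100.0)]
true_xy = (30.0, 40.0)
slowness_gap = (VP_KM_S - VS_KM_S) / (VP_KM_S * VS_KM_S)  # seconds of lag per km
lags = [math.hypot(true_xy[0] - sx, true_xy[1] - sy) * slowness_gap
        for sx, sy in stations]

distances = [distance_from_sp_lag(lag) for lag in lags]
print(locate_epicenter(stations, distances))
```

With real data the three circles rarely meet at a single point, so in practice more stations and a least-squares fit would be needed; this sketch shows only the geometry of the idea.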

Recent advances in geophysical techniques are providing better descriptions of the three-dimensional geometry of earthquake sources. Deep crustal images—such as those constructed from data produced by the Consortium on Continental Reflection Profiling, or COCORP—can reveal the regional scale of low-angle faults as well as previously unsuspected local fault geometries. Seismic tomography—comparable to the remarkable CAT-scan images used for medical investigations—contributes dense numbers of consecutive slices through the Earth and promises the ability to detect ancient lesions and scars in crustal structure with a level of detail previously thought to be unattainable.

FIGURE 5.14 Expected accelerations produced by earthquakes of a range of magnitudes as a function of frequency. The ranges of operation of the typical existing California network station and typical strong motion station are indicated by the stippled areas. The curves marked -120 and -160 dB indicate constant power spectral levels of acceleration of 10⁻¹² and 10⁻¹⁶ (m s⁻²)²/Hz, respectively. Note that many earthquake motions are not within the range of existing instruments in the networks. From NRC, 1991, Real-Time Earthquake Monitoring.

Earth scientists have also improved their ability to use mathematical inversion techniques. Inversion analyzes the effects to gain knowledge about the nature of the cause. Seismic inversion, using the data from seismometers, studies patterns produced by an earthquake's body and surface waves and determines the type of earthquake that could generate them. Geodetic inversion measures the physical changes on the surface produced by an earthquake and characterizes the sort of earthquake that would be capable of making those changes. Successful seismic and geodetic inversions may construct remarkable pictures of the fault rupture surface at depth and the associated complexities in both fault geometry and the dynamic rupture process. Powerful examples of the application of inversion techniques can be found in the studies of recent earthquakes at Imperial Valley and Coalinga, California, and the earthquake at Borah Peak, Idaho.
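The idea of working backward from effect to cause can be sketched with a deliberately small example. The Python fragment below assumes a linear forward model in which the surface displacement at each survey benchmark is proportional to the slip on a single fault, and it recovers the slip by least squares. The sensitivity coefficients and the "observed" displacements are invented for illustration; actual geodetic inversions solve for many parameters with physically derived forward models.

```python
def forward(slip_m, sensitivities):
    """Predicted surface displacement (m) at each benchmark for a given fault slip."""
    return [g * slip_m for g in sensitivities]


def invert_slip(observed, sensitivities):
    """Least-squares slip estimate for the linear model observed ~ g * slip."""
    numerator = sum(g * d for g, d in zip(sensitivities, observed))
    denominator = sum(g * g for g in sensitivities)
    return numerator / denominator


# Hypothetical sensitivities: displacement at each benchmark per metre of slip.
g = [0.30, 0.22, 0.10, 0.05]
# Made-up measurements (m): roughly 2 m of slip plus measurement noise.
observed = [0.61, 0.43, 0.21, 0.09]

slip = invert_slip(observed, g)
# Residuals show how well the recovered cause explains the observed effects.
residuals = [d - p for d, p in zip(observed, forward(slip, g))]
```

The residuals are as informative as the estimate itself: large or patterned residuals suggest that the assumed fault geometry is too simple.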

During the past 20 years efforts to obtain records of strong ground motion have increased, and the data bank has grown with each successive earthquake. The data have been recorded, for the most part, on networks of relatively simple accelerometers with minimal magnification capabilities. These records have been invaluable to the engineering community in designing structures to withstand earthquakes. Within the past few years a new generation of instruments has been developed, and they are now being deployed to supplement the older instruments (Figure 5.14). This new generation of instruments can digitally record a wide spectrum of frequencies over a wide dynamic range. The new data so gathered permit the analysis not only of strong ground motion, as did the older generation of instruments, but also details of the faulting process. Such instruments, and the data recorded by them, are revolutionizing our understanding of rupture mechanics.

Theoretical models have been developed for predicting the shapes of curves that represent earthquake ground-motion intensity, called seismic wave spectra. Although there is still some controversy over the physical interpretation of the models, different investigators have made predictions that are in general agreement with observed records, using data recorded in regions such as western North America where substantial numbers of strong-motion records are available. With that agreement as encouragement, theoretical models have been proposed that make ground-motion estimates for intraplate regions where strong-motion records are sparse to nonexistent. Research using accelerograph records is supplemented by the study of data from seismometers located at great distances from an earthquake epicenter, called teleseismic data.

Techniques have been developed for simulation of near-source ground motion. These techniques are still the subject of some controversy, but they are being improved and have been used for the design evaluation of such critical facilities as nuclear power plants and nuclear waste repositories. Such simulations are routinely used to estimate the damage potential of postulated subduction-related earthquakes in the Pacific Northwest.

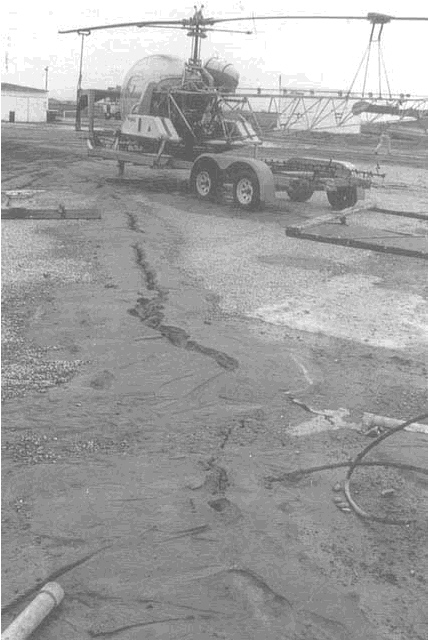

Several recent earthquakes have emphasized the critical importance of soil conditions to earthquake ground motions at a particular site. On September 19, 1985, a strong earthquake ruptured a fault along the Pacific coast of Mexico, west of Mexico City. Resonance of seismic wave energy within the lake sediments beneath Mexico City caused amplified ground motion and resulted in tremendous damage to high-rise buildings in the city even though it is located more than 350 km from the earthquake's epicenter. On October 17, 1989, the damage to buildings in San Francisco and Oakland, located more than 75 km from the epicenter of the Loma Prieta earthquake, occurred almost exclusively at locations underlain by unconsolidated man-made fill or soft sedimentary deposits. Saturated soils that are supporting heavy loads may transform into a fluid slurry when given a jolt—the process of liquefaction (Figure 5.15). Soil structure disruption from this process is identifiable in the geological record as a sign of past earthquake activity. Ironically, in both Mexico City and San Francisco, the susceptibility was known: the problems had been clearly identified in advance and were accurately shown on seismic hazard maps. Photographs taken in the Marina District following the 1906 San Francisco earthquake show evidence of liquefaction-induced soil failures identical to those revealed in photographs taken in 1989. These regrettable facts underscore the futility of hazard research and accurate risk assessment if communities are incompletely informed or choose to ignore known geological threats.
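The sensitivity of shaking to soil conditions can be illustrated with the standard quarter-wavelength estimate for a uniform soft layer over stiff rock, in which the fundamental period is four times the layer thickness divided by the shear-wave speed. The Python sketch below applies that estimate; the thicknesses and velocities are illustrative values, not measurements from Mexico City or San Francisco, and the function name is hypothetical.

```python
def fundamental_period(thickness_m, shear_velocity_ms):
    """Fundamental resonance period (s) of a uniform soil layer over stiff rock.

    Uses the quarter-wavelength estimate T = 4 * H / Vs.
    """
    if thickness_m <= 0 or shear_velocity_ms <= 0:
        raise ValueError("thickness and velocity must be positive")
    return 4.0 * thickness_m / shear_velocity_ms


# Illustrative values only: a soft clay layer versus a stiff soil of equal thickness.
soft_clay_period = fundamental_period(40.0, 80.0)    # 2.0 s
stiff_soil_period = fundamental_period(40.0, 400.0)  # 0.4 s
```

Thick, soft deposits therefore resonate at long periods, and structures whose own natural period is close to that of the site are the most strongly shaken.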

A fundamental goal of seismic hazard research and risk assessment is accurate prediction of potentially damaging ground motion. Seismic hazard is assessed using deterministic and probabilistic statistical analyses, and a dynamical systems approach is developing rapidly. The deterministic approach concentrates on the maximum earthquake that a given seismic source is believed capable of producing during a specified time period. Assuming the maximum earthquake will occur, this approach determines ground motions that can be empirically associated with a known fault of specific seismogenic characteristics. Deterministic seismic hazard analyses are useful for engineering applications at critical facilities, such as dams and nuclear power plants, where there is a need to develop a conservative seismic performance design and to compare the results with those of other approaches.

Probabilistic seismic hazard analyses that attempt to incorporate a more complete picture of the seismic environment affecting the site of interest are now in routine use for virtually all types of structures. This approach delineates the individual uncertainties associated with all aspects of the site and incorporates these uncertainties into the analysis. Key parameters, such as maximum magnitude, are expressed as probabilistic distributions in time and space; the final results are expressed as the probability of exceeding various levels of ground motion at the site. At this point an informed decision can be made about appropriate design requirements for the particular facility. An acknowledged disadvantage of the probabilistic approach is the need to estimate the frequency of occurrence of various levels of earthquake magnitude rather than to merely assume that the maximum earthquake will occur during the lifetime of the engineered works.
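A minimal numerical sketch may help show how such a result is assembled. The Python fragment below assumes that earthquakes occur as a Poisson process and that each source contributes an annual rate multiplied by the chance that its shaking exceeds a chosen level at the site. The scenario list is entirely hypothetical, and a real analysis would integrate over continuous distributions of magnitude, distance, and ground-motion variability.

```python
import math


def annual_exceedance_rate(scenarios):
    """Sum over sources of (annual rate) x (chance the shaking exceeds the target level)."""
    return sum(rate * p_exceed for rate, p_exceed in scenarios)


def probability_of_exceedance(annual_rate, years):
    """Poisson probability of at least one exceedance during the exposure period."""
    return 1.0 - math.exp(-annual_rate * years)


# Hypothetical scenarios for one site and one ground-motion level:
# (annual rate of the earthquake, probability that it exceeds the level at the site)
scenarios = [(0.05, 0.02), (0.01, 0.20), (0.002, 0.70)]

rate = annual_exceedance_rate(scenarios)      # about 0.0044 per year
p50 = probability_of_exceedance(rate, 50)     # about 0.20 over 50 years
```

Repeating the calculation for a series of ground-motion levels yields a hazard curve, from which a designer can read the level associated with an acceptable probability.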

Since the 1970s, attempts at short-term deterministic prediction have been augmented by probabilistic forecasts as a pragmatic means of quantifying seismic risk in a socially useful manner. Along the San Andreas Fault in California, for example, 30-year forecasts of earthquake activity form the basis for earthquake hazard zonation and mitigation activities on both the state and local levels. The 1980 forecast for a 2 to 5 percent per year probability of a great earthquake along the fault in southern California led directly to a decade-long program of structural retrofitting or removal of the entire class of buildings with greatest life safety risk in Los Angeles. Cooperation between providers and consumers of earthquake predictions proves to be highly desirable when information flows in both directions. The earthquake prediction experiment at Parkfield changed from a purely scientific investigation to an operational short-term prediction program through the direct participation of the emergency response community in planning and funding the experiment.
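To relate an annual probability to a multiyear forecast window, one simple approach is to treat each year as independent with the same probability. That is an assumption made here for illustration, since published forecasts may use time-dependent models, and the function name is hypothetical.

```python
def probability_over_period(annual_probability, years):
    """Chance of at least one event in the period, assuming independent, identical years."""
    if not 0.0 <= annual_probability <= 1.0:
        raise ValueError("annual_probability must lie between 0 and 1")
    return 1.0 - (1.0 - annual_probability) ** years


low = probability_over_period(0.02, 30)   # about 0.45
high = probability_over_period(0.05, 30)  # about 0.79
```

Under this assumption, a 2 to 5 percent annual probability corresponds to roughly a 45 to 79 percent chance over 30 years, which helps explain why a seemingly small annual figure can justify a sustained retrofitting program.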

FIGURE 5.15 Sand boils resulting from liquefaction during the 1989 Loma Prieta earthquake near Santa Cruz, California. Photograph courtesy of Gerald F. Wieczorek, U.S. Geological Survey.

As a consequence of emphasizing the inherently statistical nature of prediction, our understanding of the prospects of damaging earthquakes has been revolutionized. Through the development of a framework encompassing all that is known about a particular region, from the historical and instrumental record to its plate tectonic setting and the short-term influence of specific geophysical events, seismologists can work toward making useful predictions. For example, a decade ago the prospect for the generation of great earthquakes in the Cascadia subduction zone off the Pacific Northwest coast was a hotly debated subject. Evidence for prehistorical large-magnitude events found by paleoseismologists has redefined the subject of debate from the likelihood of a future event to its timing and severity.

Society is increasingly dependent on location-specific scientific information, expressed quantitatively and qualitatively, for decision-making purposes. Earthquake prediction information must be communicated accurately and articulately in order to avoid panic while preparing for highly probable potential crises. Whether long-term or short-term predictions, such messages should provide maps of earthquake damage potential, including such near-instantaneous changes as landsliding and liquefaction. Within this framework, short-term predictions can provide crucial information, provided the users are properly prepared to receive it; preparation must be made through public education programs. California has begun to issue short-term earthquake advisories based on probabilistic models of foreshock activity. These low-probability advisories (a 2 to 5 percent chance of a larger event in a 3-day window) have proved their worth by spurring individuals and institutions toward earthquake mitigation actions they had previously ignored. More precise short-term predictions may, some day, again revolutionize the technical ability to give earthquake warnings. Even if and when this goal is achieved, the translation of scientific knowledge into planning, preparation, and action will remain the most important task for scientists and public officials alike.

Modern computer-networking capabilities promise a new form of hazard mitigation involving real-time seismology that can immediately identify the most severely damaged areas and assign emergency relief priorities. It is now technically possible to determine earthquake source parameters such as size, depth, and direction of rupture propagation immediately after a large earthquake. Large-scale deployment of the new generation of broadband seismic instruments with satellite or other telemetry capabilities is making this a reality. The intensity distribution of earthquake ground shaking often exhibits a very irregular spatial pattern because of variations in the crustal structure near the epicenter, in site response, and in the force mechanisms—such as strike-slip, along the San Andreas Fault, or dip-slip, in western Mexico. When seismic networks are supplemented by portable instruments, generic path effects and site responses in an earthquake-prone region can be defined. It then becomes possible to quickly determine the spatial distribution of ground shaking and the damage exposure in an entire epicentral region.

Instantaneous communication capabilities offer further opportunities to mitigate earthquake damage. In cases where the earthquake is centered some distance away, it is possible to warn a region of imminent strong shaking as much as several tens of seconds before the onset of damaging shaking because of the relatively slow speed of seismic waves. Endangered regions could respond to the warnings in time to shut down delicate computer systems, isolate electric power grids and avoid widespread blackouts, protect hazardous chemical systems and offshore oil facilities, and safeguard nuclear power plants and national defense facilities.
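The available warning time can be estimated with simple arithmetic. The Python sketch below assumes that the alert itself travels effectively instantaneously, that the damaging shear waves travel at an assumed average of 3.5 km/s, and that detection and processing consume a fixed delay; all three values are illustrative assumptions, and the function name is hypothetical.

```python
def warning_time_s(distance_km, s_wave_speed_kms=3.5, alert_delay_s=10.0):
    """Seconds between receipt of an alert and arrival of the shear waves.

    Returns 0.0 when the site is so close that shaking arrives before the alert.
    """
    travel_time_s = distance_km / s_wave_speed_kms
    return max(0.0, travel_time_s - alert_delay_s)


far_site = warning_time_s(350.0)   # 90 s for a site 350 km away
near_site = warning_time_s(75.0)   # roughly 11 s for a site 75 km away
too_close = warning_time_s(20.0)   # 0.0 s: shaking arrives before the alert
```

The calculation also shows the limit of the concept: sites nearest the rupture, where shaking is usually strongest, receive little or no warning.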

A simple warning system using this concept has already been incorporated into the Japanese railroad system. A similar strategy is being implemented in the form of a tsunami warning system that will alert areas around the Pacific when any large submarine earthquake occurs in the Pacific basin. Real-time seismic and geodetic systems are also critical components of volcano monitoring systems that can provide fairly short-term warning of impending explosive eruptions.

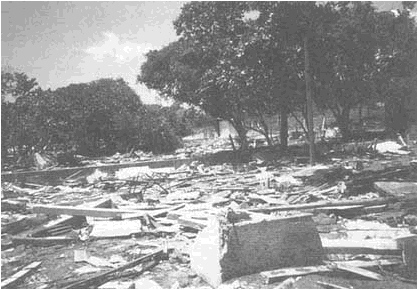

Tsunami Hazards

A tsunami along several hundred kilometers of the coast of Nicaragua on September 2, 1992, resulted in over 100 deaths as a 25-foot wave inundated coastal areas (Figure 5.16), with run-up reaching as far as 1,000 m inland in places. Tsunamis are large ocean waves most commonly generated by the uplift or depression of sizable areas of the ocean floor during large subduction-zone earthquakes; significant tsunamis have also resulted from volcanic eruptions and large landslides or submarine slides. Like earthquakes, great sea waves are of little consequence in remote regions. In the open ocean they are hardly noticed, but as they approach the shore and move into shallower water, the waves increase in amplitude by an amount that depends on the nature of the local submarine topography. The resulting tsunami hazard in many coastal areas is far greater than is often appreciated. For example, during the great Alaskan earthquake of 1964, the loss of life from the tsunami generated in the offshore area was more than 15 times as great as the loss of life directly attributable to earthquake shaking; much of it occurred far from Alaska. Indeed, during the past 50 years, significantly more people have been killed in the United States by tsunamis than by other effects of earthquakes, although these statistics could change radically overnight with a major earthquake in a metropolitan area.
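The growth of a tsunami in shallow water can be illustrated with two idealized relations that are not part of the original text: the long-wave speed, equal to the square root of gravity times water depth, and Green's law, in which amplitude scales with depth to the minus one-quarter power. The Python sketch below applies both. It ignores breaking, friction, refraction, and the focusing effects of local topography, and the depths and amplitudes are illustrative.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def wave_speed(depth_m):
    """Long-wave (shallow-water) speed in m/s for a given water depth."""
    return math.sqrt(G * depth_m)


def shoaled_amplitude(amplitude_m, depth_from_m, depth_to_m):
    """Amplitude after moving between depths, using Green's law (depth ratio ** 0.25)."""
    return amplitude_m * (depth_from_m / depth_to_m) ** 0.25


open_ocean_speed = wave_speed(4000.0)                    # about 198 m/s
nearshore_speed = wave_speed(10.0)                       # about 9.9 m/s
nearshore_amp = shoaled_amplitude(0.5, 4000.0, 10.0)     # about 2.2 m
```

In this idealization a half-metre wave in 4,000 m of water, barely noticeable at sea, slows by a factor of 20 and more than quadruples in amplitude by the time it reaches a depth of 10 m.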

The areas of the United States most affected by tsunamis are Alaska, Hawaii, and the Pacific Northwest. Hilo, Hawaii, has been hit repeatedly by tsunamis originating as far away as southern Chile, and the same Chilean earthquake that produced tsunami devastation in Hilo in 1960 caused 200 deaths in far more distant Japan. Very recent geological field studies suggest that large prehistorical tsunamis occurred along the Oregon-Washington-British Columbia coast, probably generated by

FIGURE 5.16 Damage resulting from the September 2, 1992, tsunami that struck Nicaragua. Photograph courtesy of Mehmet Celebi, U.S. Geological Survey.