The Threat of Climate Change

Daniel J. Evans

While Operation Desert Storm grabbed everyone's attention, another potential crisis was forgotten. The combined pressures of population growth and pollution are threatening our planet's resources and could change our climate.

We must now pay more attention to this other threat, which has the potential to cause environmental damage far more widespread than that in the Gulf.

In 1989, Congress asked the National Academy of Sciences, the National Academy of Engineering, and the Institute of Medicine to evaluate both the scientific facts and policy implications of global climatic change. I chaired a group of 40 distinguished scientists, economists and policy-makers that has just completed this study.

We found that amounts of greenhouse gases, which trap heat and help warm our Earth, are increasing rapidly. These gases come from burning fossil fuels, harvesting our forests, and the escape of modern chemicals into our atmosphere. Human activity soon will push concentrations of these gases to levels unprecedented in human history.

Virtually all scientists agree on these facts. Wide differences begin to appear when scientists project what will happen to the Earth's climate as a result of these added greenhouse gases. Computer models concur that a doubling of these gases will cause global warming. Yet the projected amount of warming varies from 2 degrees to 9 degrees Fahrenheit. Some scientists disagree, saying there is little evidence that any significant global warming will occur.

Policy-makers have been left wondering how to proceed. After all, a temperature increase of 9 degrees is much more serious than a 2-degree rise, or no rise at all. Yet, despite this uncertainty, the rationale for taking action is compelling. The situation is much like facing a potential earthquake or fire. One does not know when a disaster will occur, or how damaging it will be. A prudent person buys insurance.

We discovered an array of such insurance measures that could substantially reduce pollutants in the atmosphere. Many of these measures cost little; some actually save money. Collectively they represent an insurance policy for the planet that we all should be eager to buy.

First, we should ensure that consumers pay the full environmental costs of various energy sources. The marketplace can lead us to the least polluting energy sources, if we let it. Research needs to be speeded up on alternative energy sources and also on a new generation of safe, economical nuclear power plants. If the intensity of global warming increases, nuclear energy may be an important alternative to coal- and oil-fired power units.

The main component of a planetary insurance policy should be improved energy efficiency. Everything from better home insulation to more efficient light bulbs and refrigerators will reduce energy use. Best of all, the value of the energy saved will pay for most of the improvements.

Many of these measures have multiple benefits. They not only diminish the threat of global warming but also reduce acid rain, smog, and pollution of our air and water. Perhaps the best example of a double benefit is stopping the use of chlorofluorocarbons, which damage the Earth's protective ozone layer and contribute substantially to global warming.

Our committee identified a series of low-cost proposals that could reduce U.S. emissions of global warming gases collectively by an impressive 40 percent of 1990 levels. Adopting these ideas at even a quarter of proposed levels would cut our current pollution load by 10 percent.

Greenhouse warming is potentially a global problem. Even if the United States adapts to climate changes, other nations could face catastrophe. It is vital to share new technologies and improve energy efficiency abroad. Otherwise, our own efforts may be overwhelmed by growing industrialization in the developing world.

There is a lot of talk about a new world order. Here is a pretty good place to start. Helping others to grow in an environmentally sound way contributes to world stability while avoiding climatic disaster.

No one can predict yet how significant global warming will be, but we need not wait for a clearer signal. Adopting the insurance policies outlined here will produce much benefit for little cost. The real bonus is that they also will make us more efficient and competitive, and create a healthier environment for our children.

April 14, 1991

Daniel J. Evans, a registered engineer and chairman of a Seattle consulting firm, is the former governor and U.S. senator from the state of Washington.

* * *

Designing a Cure for Greenhouse Warming

Thomas H. Lee

Recently some scientists have proposed ingenious — some would say reckless — techniques to bring global warming under control and prevent the Earth from overheating.

According to one suggestion, more than 50,000 large mirrors would be launched into orbit to reflect sunlight before it heats our atmosphere. A similar proposal would send billions of shiny balloons aloft.

Another idea is to deliberately release huge amounts of dust into the atmosphere to block sunlight. Then there is the possibility of releasing sulfur dioxide above the oceans to stimulate cloud formation, still another way of blocking sunlight. According to one estimate, increasing the coverage of marine stratocumulus clouds by just 4 percent would offset the effect of a worldwide doubling of carbon dioxide (CO2) emissions.

Yet another approach is to fertilize the oceans with iron to stimulate the growth of aquatic plants and hasten the absorption of CO2 by the oceans.

Some of these proposals have an aura of science fiction, and one need not be an engineering whiz to fear they might cause inadvertent environmental problems worse than those they solve. Technological fixes also might lull society into complacency about global warming.

Even though there is evidence of global warming in the last century, whether it is due to greenhouse effects still is uncertain. But because the potential repercussions may be severe, we need to examine all mitigation options, including "geoengineering ideas" like these. I chaired a panel of the National Academy of Sciences, National Academy of Engineering and Institute of Medicine that has just completed an exhaustive study of ways of mitigating global warming. Our conclusion was that these options warrant further study, although none are close to being ready for actual implementation. But we also found that there are other options that are less costly, less exotic, and perhaps even boring by comparison – but that can work.

For example, if homeowners replaced just three incandescent light bulbs in each household with high-efficiency fluorescent tubes and purchased more efficient refrigerators and water heaters, they could reduce residential electricity demand substantially. If everyone also used a more efficient car, the United States might reduce its emissions of greenhouse gases by as much as 15 percent. Even though the financial paybacks of these improvements are very attractive, implementation might require creative policies.

If companies switched to substitutes for the chlorofluorocarbons (CFCs) that deplete the ozone layer, the amount of potential global warming would ease by a similar magnitude.

Though no single silver bullet exists, it is possible to reduce U.S. emissions of greenhouse gases substantially in a number of ways. Our panel divided these options into two categories. The first group includes those available at little or no cost. Several, such as improving the energy efficiency of homes and commercial buildings, actually provide cost savings. The list also includes making power plants more efficient and collecting gas from landfills.

The second category includes options that may cost more or have implementation obstacles. For example, increasing the use of natural gas for electricity generation can offer both savings and a reduction in CO2 emissions. But concern about the availability of natural gas is an obstacle. Transportation options such as improving mass transit, parking management or vehicle efficiency beyond certain levels require changes in lifestyle, which may be difficult.

The cost of these options varies, from less than one dollar for every ton of CO2 emission reduced to more than $500 per ton. Measuring costs solely in terms of avoided emissions, of course, leaves out other critical factors. For example, eliminating CFCs not only eases global warming but also protects the ozone layer.

So the equation is complicated. But after toting up all of the options, we concluded that the United States can reduce its greenhouse gas emissions by between 10 percent and 40 percent of current levels. This is a very impressive amount, although emissions in other parts of the world also must be controlled.

In other words, rather than just worrying about global warming, we have the potential to mitigate it at relatively low cost. And we may not need exotic technological fixes. Mirrors in the sky may be unnecessary if we have the vision to act here on Earth.

July 21, 1991

Thomas H. Lee is president of the Center for Quality Management and professor emeritus at the Massachusetts Institute of Technology.

* * *

Aquatic Ecosystems on the Critical List

John J. Berger

From San Francisco Bay to Lake Apopka in Florida, many of the nation's aquatic ecosystems are on the critical list.

These rivers, lakes, streams, estuaries and wetlands have been dredged, channelized, diked, dammed, massively diverted, silted in and contaminated. Left in shocking condition, they are losing native plant and animal species as well as their capacity to perform such life-sustaining ecological functions as absorbing wastes, purifying water and producing oxygen. Currently, the nation is destroying 290,000 acres of its wetlands every year, not to mention other resource losses.

Confronted by increasing population pressures and relentlessly growing demands on water resources, our aquatic ecosystems urgently need restoration.

An expert committee of the National Research Council concluded recently that it is indeed possible to repair damaged aquatic ecosystems to a close approximation of the condition they were in before they were disturbed. The committee recommended a coordinated national program of aquatic ecosystem restoration to rehabilitate 10 million acres of wetlands, 2 million acres of lakes, and 400,000 miles of rivers and streams.

The newly emerging science of restoration ecology makes such an ambitious goal increasingly realistic by providing tools to improve the condition of drained bottomland hardwood wetlands, channelized rivers, fishless streams and dying lakes. Specific resources that might benefit from restoration include the Chesapeake Bay, the Great Lakes and Midwest "prairie potholes."

Restoring these areas would not only help the environment but would provide much-needed jobs for those involved in the effort, as well as providing potable water, fishing, swimming, and other recreation and tourism. Other benefits include improved flood control, water quality and ground-

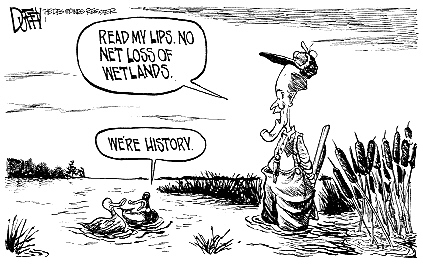

Drawing by Brian Duffy

The Des Moines Register, Iowa

water supplies, and increased numbers of fish and waterfowl. Restoration efforts also can provide a sense of empowerment for community groups that participate.

A significant message of the Research Council report is that when people manage natural resources and plan their restoration, they need to consider the long-term, large-scale interactions among rivers, lakes, streams, wetlands and groundwater, and the impacts of land-use practices on water systems.

Current restoration efforts, although worthwhile, have tended to be narrow in scope and uncoordinated on a regional basis. Many government agencies have limited jurisdiction and are only able to manage water quality or water quantity. Restoring an entire ecosystem, however, requires a much broader geographic perspective and a long-term approach that takes full account of the relationship between interconnected water resources and between those resources and their surrounding lands.

With myriad social needs tragically unmet, can we afford to pay for a national environmental restoration program?

The question really should be, Can we afford not to? Environmental damage that is not repaired promptly often becomes tremendously expensive or impossible to repair later.

It is unconscionable to pass on these costs to future generations or to foreclose future options for them. Once environmental degradation gets to a certain point, nature may be unable to repair the damage on a meaningful time scale. Endangered species cannot wait for help in the long-term future. If we do not act to restore their habitats now, they will be gone from the Earth forever.

Public and private spending on the environment for all purposes is a mere 1.6 percent of the gross national product, and a tiny fraction of that amount is being spent today on ecological restoration of aquatic ecosystems. Additional money for restoration must be found. Such a program could be part of the nation's economic recovery effort.

With national attention now focused on ways of revving up the economy, we must be wary of quick economic fixes that ignore vital environmental restoration needs. Narrowing the legal definition of "wetland" without careful scientific planning, for example, could cause unintended harm. We should be moving instead to restore degraded resources so we achieve a net gain rather than just the "no net loss" that President Bush has proposed for wetlands.

In addition to all of its domestic dividends, a bold national environmental restoration program could confirm the United States as a world environmental leader. It also could give us a head start on what ultimately will be a vast enterprise in the next century: global environmental restoration. Desirable as it is, environmental protection is not enough. We also must fix the environmental harm we have done.

February 9, 1992

John J. Berger, professor of environmental policy at the School of Public Affairs, University of Maryland, College Park, served as special consultant to the National Research Council's Committee on Restoration of Aquatic Ecosystems.

* * *

Science and the National Parks

Paul G. Risser

They are some of our country's brightest jewels, but Yellowstone, the Grand Canyon, Acadia and other national parks have a serious problem. The parks face growing pressures, from haze that obscures scenic views to a spiraling number of visitors. Yet those managing the parks are failing to take full advantage of one of their best tools for overcoming these problems — namely, science.

When oil spilled onto Alaska's Kenai Fjords and Katmai National Parks after the 1989 Exxon Valdez accident, a lack of data about pre-spill conditions made it impossible for park officials to fully assess the damage. A similar lack of understanding led to concession stands and other facilities being built in inappropriate places in Yellowstone and Yosemite, and to park boundaries failing to encompass complete ecosystems in Everglades National Park.

There are some research success stories. In Sequoia and Kings Canyon National Parks, managers believed for years that they should always try to prevent fires. Research showed that occasional fires are actually required for new trees to germinate; the policy has now been changed. In Great Smoky Mountains National Park, studies showing a decline in the black bear population led officials to uncover illegal hunting.

Scientific research is essential if the park system is to continue serving more than 250 million visitors annually while preserving precious resources for future generations.

Nearly a dozen independent reviews over the past 30 years have found the Park Service's research effort to be poorly organized and inadequate. The Service devotes much less of its budget and personnel to scientific research than do other agencies that administer federal land. The national parks could be producing some of our best research on the environment, geology, archaeology and a host of other fields. Instead, their scientific contributions are sporadic.

The Park Service has failed to respond adequately to these repeated criticisms. Its scientific program remains plagued by inadequate resources, a vague mandate and a lack of independence even as the need for research has intensified. Air pollution is now obscuring scenery at the Grand Canyon, Shenandoah and other parks. Exotic plants and animals are invading park boundaries. Park managers must cope with critical changes in ground and surface water, increasing stream sedimentation and threats to wildlife populations. The crush of visitors at some of the parks is straining resources to the breaking point.

The park system currently conducts research both on its own and in cooperation with universities. It manages this research effort out of its Washington office, its 10 regional offices and some individual parks. Instead of having a single science program with a coordinated focus, it has 10 separate programs of uneven quality. The Service has identified $250 million to $300 million in unmet research needs, yet its research spending in fiscal year 1992 totaled only $29 million.

Still, the main problem is not money, although more money is needed. Rather, it lies in the very culture of the Park Service, which treats science as a secondary concern. Glacier National Park, the Grand Tetons and other treasures do not exist solely to impress tourists. They also provide an unparalleled source of untouched natural settings to study evolutionary adaptation, ecosystem dynamics and other natural processes. The parks can teach us about natural and human history, and deepen our understanding of regional and global environmental changes.

What can be done to set things right? I chaired a National Research Council committee that recently studied the situation. We urged Congress to take the lead by issuing an explicit mandate clarifying the Park Service's research mission. The Service, meanwhile, should act swiftly to enhance the program's credibility and quality. It needs to reach out to the broader scientific community by establishing an independent science advisory board and a competitive grants program. It also should recruit a chief scientist of high scientific stature.

The leadership of the Park Service has said it generally agrees with our recommendations, but it must move decisively to show that a new era truly is dawning.

Incremental change is not sufficient. What's needed is nothing less than a metamorphosis in the Park Service, one that integrates science with day-to-day park management. Our national parks are too valuable an inheritance, and the problems they face too complex, to continue managing them with sketchy information and good intentions.

September 13, 1992

Paul G. Risser is vice president and provost at the University of New Mexico, Albuquerque.

* * *

Assessing the Threat of Toxic Waste Sites

Anthony B. Miller

If you live in the United States, there's roughly a one-in-six chance that your home is located within four miles of a chemical dump or other potentially hazardous waste site. Given our unpleasant memories of Love Canal and other incidents, it's reasonable to ask which of these more than 31,000 sites truly pose a threat.

Unfortunately, more than a decade after Congress established the Superfund program, we still cannot answer that question. A committee that I chaired for the National Research Council reported recently that the federal government has no comprehensive inventory of waste sites, no program for discovering new sites, insufficient data for determining safe exposure levels, and an inadequate system for identifying sites that require immediate action to protect public health.

The Environmental Protection Agency (EPA) has conducted preliminary investigations of 27,000 of the reported sites. About 9,000 of these have been studied more extensively, and 1,200 have been placed on the National Priorities List for eventual cleanup. Yet the methods used to assess the public health danger at these sites are questionable, and it is far from clear how much the assessments have benefited nearby residents.

Opinion polls show the public believes that hazardous wastes constitute a serious threat, but many scientists and administrators in the field disagree. Our committee, which included experts in toxicology, exposure assessment and other fields, found the available evidence too skimpy to confirm or refute either view.

More than 5 billion metric tons of hazardous waste are produced each year in the United States. There's no question that substances toxic to humans and several animal species abound in hazardous waste sites. It's a big step, however, to say that most, or many, or even a substantial fraction of the sites pose a threat to nearby residents. Residential proximity does not necessarily mean that exposures and health risks are occurring, although the potential for exposure obviously is increased.

Epidemiologic studies of hazardous waste sites have complex technical limitations. However, increased rates of such conditions as birth defects, spontaneous abortions, cardiac anomalies, fatigue, and neurologic impairment have been tied to exposures among some nearby residents.

It is less clear whether exposure to the wastes can be blamed for medical problems where there is a long delay between exposure and disease. However, some studies have detected excesses of cancer in residents exposed to compounds found at some hazardous waste sites.

As for which sites are a problem or how close people have to live to be affected, much remains uncertain. Without clear answers, the only prudent course is to err on the side of public safety, just as we do in designing bridges or buildings. In evaluating the potential danger of a dump, officials should apply a large margin of safety.

Everyone would benefit, however, from reducing the uncertainty about hazardous wastes generally and about specific sites. Of the $4.2 billion spent annually on hazardous waste sites in the United States, less than 1 percent goes to studying health risks.

The scientific basis for evaluating Superfund sites must be improved. Expanded studies are needed — and soon. As toxic wastes disperse, more people will be exposed and it will become increasingly difficult to design studies that compare the health of exposed and unexposed populations. One kind of research that is especially important is identifying biologic markers that indicate whether someone has been exposed to toxic chemicals.

More broadly, the federal government should establish an aggressive program to discover hazardous waste sites. It needs to revamp its methods for evaluating known sites for population exposures, health effects and the need for cleanup measures. Washington also should expand technical assistance to state hazardous waste programs and increase support for university research in "environmental epidemiology."

After spending billions of dollars during the past decade to study and manage hazardous waste sites, the American people are entitled to firmer information. The only way to end the uncertainty over whether, or how much, sites endanger the public is to perform the necessary studies. We should strive to clear up this scientific mystery even as we clear up the wastes themselves. With more than 40 million people living near the sites, the public needs answers.

January 12, 1992

Anthony B. Miller is a professor in the Department of Preventive Medicine and Biostatistics at the University of Toronto.

* * *

Protecting Our Nervous System from Toxic Chemicals

Philip J. Landrigan

As you work in your office or walk around your house today you may be exposed to chemical substances that, under certain circumstances, can injure the human nervous system.

Millions of workers are exposed every day to organic solvents. More than 4 million American children have excessive exposure to lead. Other Americans are exposed to pesticides, ethanol, illicit drugs, tranquilizers and other substances with a potential for harm.

Of the more than 70,000 chemical substances used commercially in the United States, fewer than 10 percent have been tested for neurotoxicity. Only a handful have been evaluated thoroughly. Which of these substances truly pose a danger to our nervous systems? We don't know. By using a better scientific system, we could find out more.

Although most substances in our environment are benign, some have been shown to cause neurological illnesses in both adults and children. Examples include:

- Acute lead poisoning in children who ate chips of lead-based paint.
- Severe disfigurement and mental retardation in residents of Minamata, Japan, who were exposed to mercury released into a bay by a plastics manufacturer.
- Tremors, motor disturbances, anxiety and diminished coordination among industrial workers in Hopewell, Virginia, who helped manufacture the pesticide kepone.
- Acute neurologic poisoning by the pesticide aldicarb among California residents who ate contaminated watermelons.

Chronic exposure to some environmental substances also has been shown to cause neurological disease. A syndrome resembling Parkinson's disease develops in people exposed to excessive levels of the metal manganese, as well as in young adults who used a synthetic heroin substitute contaminated with MPTP. Chronic exposure to lead can cause irreversible declines in children's intelligence and learning, as well as behavior problems. Prolonged exposure to some solvents can produce dementia.

Still unresolved is the question of whether exposure to neurotoxins can result in Alzheimer's disease, parkinsonism or other chronic degenerative disorders of the nervous system. Available evidence suggests that toxic environmental substances may contribute to the incidence of these diseases. The problem is complicated by long latency periods.

A committee of the National Research Council, which I chaired, reported recently that most of the chemical substances to which Americans are exposed in their homes, jobs and hobbies never have been evaluated for possible toxic effects on the nervous system. Accordingly, we called on the federal government to adopt a more effective approach for assessing neurotoxicity to protect the public.

The Environmental Protection Agency (EPA) has the primary federal responsibility for regulating the entry of new chemical substances into the marketplace. Until now, EPA scientists have evaluated neurotoxicity mainly by examining the chemical structure of new molecules. For the most part, they have not done direct testing of toxicity nor have they required chemical manufacturers to do so.

This approach is too roundabout and may fail to identify neurotoxins. EPA should be testing chemicals directly to see whether they are toxic to the nervous system. Proven methods exist to do this.

The agency should adopt a three-tiered testing approach to identify neurotoxic hazards, assess the doses that cause harmful effects, and determine how toxicity occurs. It also should monitor a broader range of chemicals and carry out long-term monitoring on high-risk populations, such as certain workers. Physicians across the country need data to help identify neurologic illnesses linked to toxic pollutants.

Chronic neurological disease has the potential to sap the vitality of American society. Some historians speculate that chronic lead poisoning 2,000 years ago was one of many factors leading to the demise of the Roman Empire. Today we live in a society that has synthesized thousands of new chemical substances. Most have enhanced our lives, but a few may cause ill effects such as blindness, coma and dementia.

The danger goes beyond possible neurological disorders. Other Research Council committees have reported that environmental substances may harm the immune system, the lungs and the reproductive organs. There is no escaping the world around us, but we can be much more vigilant about the substances that can harm us. First, however, we must start doing a better job of identifying which ones they are.

March 22, 1992

Philip J. Landrigan is Ethel H. Wise Professor and chair of the department of community medicine at Mount Sinai School of Medicine in New York.

* * *

Indoor Radon: Hype Versus Help

Anthony V. Nero Jr.

A public service advertisement on television shows people turning into skeletons after being exposed to radon gas in their homes. Print ads proclaim that living in a house with radon is like "exposing your family to hundreds of chest X-rays yearly."

The ads were produced by the Environmental Protection Agency (EPA) and the Ad Council with the best of intentions. High levels of radon pose serious risks of lung cancer. Yet the ads, which aired until recently, alarmed millions of people unnecessarily while inadequately helping those who truly are at risk. The same is true of the federal program behind them.

Radon is produced by the radioactive decay of radium, which exists in small amounts in all soil and rock. Radon decays to isotopes that can deposit in the lungs, causing cancer. Tens of thousands of families are exposed to greater radiation doses than allowed for workers in uranium mines. The increased risk of death is estimated to exceed one in a hundred, or one in ten for smokers.

Most people, however, face a much smaller risk. What the EPA should be doing is vigorously helping those in danger while not scaring everyone else. Instead, it has promoted a mythical picture of indoor radon that substantially exaggerates both the prevalence of homes having high concentrations and the size of the associated risks.

EPA recommends that every home be monitored and remedial action be taken in homes with radon levels above 4 picocuries per liter of air. About 6 or 7 percent of U.S. houses exceed this level. The agency has recommended the use of short-term tests that greatly inflate these percentages, to as high as 30 percent of homes, and then publicized the misleading results.

The exaggeration occurs because indoor radon concentrations fluctuate day to day and week to week. Monitoring kits placed for only a few days fail to show the true average. Also, until recently homeowners were advised to place the kits in the basement, where levels tend to be substantially higher than in upstairs living areas.
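The sampling problem can be illustrated with a toy calculation. The sketch below is purely hypothetical: it assumes a single house whose daily radon level follows a smooth seasonal cycle averaging 3 picocuries per liter and swinging 2 picocuries per liter either way, with no day-to-day noise and no basement effect. The function names and numbers are illustrative assumptions, not measurements. It compares the year-long average with every possible 3-day test, using the 4 picocurie-per-liter action level cited above.

```python
import math

ACTION_LEVEL = 4.0  # picocuries per liter, the EPA action level cited in the text


def daily_concentrations(mean=3.0, swing=2.0, days=365):
    """Hypothetical daily radon levels (pCi/L) following a smooth seasonal cycle."""
    return [mean + swing * math.sin(2 * math.pi * d / days) for d in range(days)]


def short_term_results(levels, window_days):
    """Average over every run of consecutive days, as a short-term test kit would report it."""
    return [
        sum(levels[start:start + window_days]) / window_days
        for start in range(len(levels) - window_days + 1)
    ]


def fraction_above(values, threshold=ACTION_LEVEL):
    """Share of results that exceed the threshold."""
    return sum(v > threshold for v in values) / len(values)


levels = daily_concentrations()
annual_mean = sum(levels) / len(levels)       # what a year-long detector would report
tests = short_term_results(levels, window_days=3)

print(f"Year-long average: {annual_mean:.2f} pCi/L")
print(f"Share of 3-day tests above {ACTION_LEVEL}: {fraction_above(tests):.0%}")
```

Although this hypothetical house never averages above the action level over a year, roughly a third of the possible 3-day tests would flag it. Real indoor radon also varies with weather, ventilation and floor level, so errors in an actual home could be larger or smaller.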

EPA said the higher levels cause a lung cancer risk equivalent to smoking a half-pack of cigarettes per day, and tried to goad parents into action by saying children suffer even greater risks. Substantial criticism by the scientific community caused it to back off both claims.

In trying to help the public, the agency's staff may feel that exaggerating the situation errs in the right direction. But the approach is backfiring. Americans just don't buy the emergency tone anymore. Even people who really are at risk dismiss the danger.

The United States needs a more sensible and effective control strategy. The essential components are clear: Inform the public reliably. Focus on finding and fixing homes where radon levels are unequivocally high. Examine seriously what, if anything, should be done for the majority of homes with low or moderate levels.

An essential requirement is to adopt a monitoring protocol in which radon detectors are left in a home's main living area for a full year, or long enough to provide an accurate estimate. EPA should intensify its efforts to identify which specific areas of the country have the highest radon levels. Then it should encourage monitoring of every home in these areas, followed by remedial measures where necessary. The usual remedy, at a cost of $1,000 or so per home, is to install a venting system that draws radon-bearing air out from beneath the house before it enters. A moderate but focused program, devoting perhaps $500 million to the 100,000 "hottest" homes, would be much more effective than the unfocused multi-billion dollar programs now being promoted by EPA and Congress.

Real estate transactions in high-radon areas may need to include effective radon testing or remediation, much as many states now handle termite problems. The costs could be distributed widely through new insurance mechanisms. Special building codes also may be required in these areas.

More broadly, EPA must overcome its reluctance to include the scientific community in decisions involving radon. Scientists now must publicly confront the agency for lapses that would never have occurred had they been involved. It's time to stop crying wolf about radon and start getting serious.

November 1, 1992

Anthony V. Nero Jr. is a senior scientist in the indoor environment program at the University of California's Lawrence Berkeley Laboratory. This article is adapted from a longer version in the Fall 1992 edition of Issues in Science and Technology.

* * *

Deciding Who Gets Western Water

A. Dan Tarlock

Prolonged drought is a fact of life in the West, and every day of sunshine illuminates the need to allocate our shrinking water supplies more equitably and efficiently.

The federal government once could build enough new reservoirs to meet the demands of growing cities and the environment while still assuring supplies for agriculture. But the current system is straining to meet the water needs of homeowners in California, fishermen in the Northwest, farmers in Colorado and millions of others.

Irrigated agriculture, long the biggest water user in the West, clearly must relinquish some of the water obtained historically through the doctrine of "prior appropriation." But how?

The best way is through voluntary water transfers, such as an alfalfa farmer agreeing to sell water rights to an expanding suburb. Transferring water from willing sellers to willing buyers has the greatest potential to reallocate supplies fairly and efficiently.

However, there's a problem. A water transfer may make both the seller and buyer happy but harm others. The farmer's neighbors, for instance, might have to pay a larger share of maintaining the irrigation system. The town could lose part of its tax base. The local farm machinery store would have one less customer. Fish habitats and other aquatic ecosystems downstream might disappear. These and other "third parties" may have their lives turned upside down even though they were not involved directly in the transfer.

If water transfers are to succeed in easing growing shortages, Western states must find a better way to protect the interests of third parties such as rural communities and American Indians, as well as of "unrepresented" public interests like the environment. I chaired a National Research Council committee that recently studied the situation. We saw an urgent need for new procedures and laws to promote equity and efficiency. A broader group of participants needs to be included when water transfers are negotiated.

Both urban water suppliers and large segments of the environmental community have embraced the concept of water marketing. Along with many farmers, they see markets as more effective than government subsidies or regulation at moving scarce resources from lower- to higher-value uses. Markets respect property rights and allow current owners to set the timetable and receive fair compensation.

The concept already has been proven effective in many instances. Most transfers to date have been from farms to cities, but they also can go to support ecological systems or recreational uses. Transfers from an irrigation district east of Reno, Nevada, for example, are being used to restore a wildlife refuge.

Water markets differ from conventional markets in important ways. For one thing, water has been publicly subsidized for decades. Large amounts of it currently are held by public and private entities. And we have a long tradition of water supporting a variety of public needs. Selling water rights is not like selling a car or home; the transaction can impose significant costs on others. In some Hispanic villages in northern New Mexico, for example, the entire social system is tied to communally organized irrigation canals.

The ultimate goal of water policies should not be simply to promote transfers but to accomplish better water management generally. This requires that all of the relevant third parties be brought into the deliberations. Although this broad participation may complicate the process during the short term — and increase costs — it helps avoid lawsuits and other problems down the road.

Token gestures to include community groups and others in discussions over water transfers are not enough. Our committee called for third parties to be given legally cognizable interests. Some states have begun to develop new processes to evaluate transfers and accommodate the diverse economic and cultural values associated with water use. But more action is needed, not only by states but on the federal and tribal levels as well. Special attention must be paid to protecting both the regions where water originates and non-consumptive "in-stream" uses such as those involving wetlands or recreation.

Arguments over water rights can be fierce. Voluntary water transfers transacted through the marketplace hold out the promise of bringing adversaries together with deals that are both fair and sensible. For transfers to work effectively, however, everyone with an interest must be seated at the bargaining table.

April 12, 1992

A. Dan Tarlock is professor of law at Chicago-Kent College of Law in Chicago.