A FAMILY AFFAIR

The Top Quark And The Higgs Particle

by T. A. Heppenheimer

There was a time, just before World War II, when physicists had reason to believe that they had penetrated the deepest issues in their field. Werner Heisenberg, Erwin Schroedinger, Max Born, and Niels Bohr had taken the lead in inventing quantum mechanics, offering insights so powerful that graduate students were solving problems that had bedeviled savants for decades. Studies of atoms held the focus of concern, and here too there was fundamental advance.

People knew that an atom consisted of a nucleus surrounded by electrons. By elaborating this concept, Bohr had given a theoretical explanation for the regularities seen within the periodic table of elements, the foundation of chemistry. The nucleus, for its part, consisted of protons and neutrons. In addition, Britain's P. A. M. Dirac had recently given a powerful theory that described the electron.

The electron has a negative electric charge, and Dirac's theory predicted that there should also be positively charged electrons. In 1932 Carl Anderson, an American, found them in cosmic rays; these particles became known as positrons. Here was a genuine advance, for this represented the first prediction of a new particle's existence, entirely from theory. The general view at the time was that a Dirac-like theory would soon come forth to describe the proton and neutron. At that point, physicists would have dug down to bedrock in their search for nature's ultimate secrets.

Today, after six decades and many more disappointed hopes, the field of physics is in a rather similar situation. Once again we have a set of powerful theories—the Standard Model, which offers great predictive power. Indeed, it not only accounts for all physical experiments performed to date, it has even shown its power by once again successfully predicting the existence of new particles. Yet today's researchers are not satisfied. Important features of the Standard Model remain unconfirmed; not all of its predictions have yet been borne out. Furthermore, even if one accepts it without reservation, it raises a number of new questions, which lie within the reach of experiments. In pursuing these matters, today's physicists are setting an agenda for the coming century.

The road to the Standard Model has not been smooth. It began just after the war, as the federal government allocated funds for construction of an increasingly powerful series of particle accelerators. In studies of the atom's nucleus, they quickly replaced the older technique of relying on observations of cosmic rays. The new accelerators produced beams of particles, such as electrons or protons, that were particularly intense. They also offered high energy, and experimenters could control this energy, turning it up and down.

The standard technique was to direct such a beam onto a target made of some material and observe the debris that came out as particles within that beam "split the atom" or, more properly, shattered some of the target's nuclei. New types of particles might lie within those sprays of nuclear debris, and experimenters were not disappointed. Indeed, during the years after 1950, they found themselves with an embarrassment of riches. There appeared to be not one or two but rather dozens of new particles. So far as anyone could tell, they might all be as fundamental as the proton or the electron.

These new particles generally were very short lived. Unlike electrons and even positrons, they did not form well-defined tracks within a detector. Instead, they decayed into other particles, in as little as 10⁻¹⁶ seconds. Still, it was quite possible to describe them and particularly to note the energy required for their formation. As one proceeded with an experiment, gradually increasing the energy in an accelerator's beams, there would be characteristic values of energy where the incidence of these decay products would markedly increase. That meant that new types of particles were forming at those specific energies, even if they had very short lifetimes.

During the 1950s, these experimental results put physics in a quandary. Its leaders had sought to define theoretical physics as the deepest of the sciences, able to make fundamental and far-reaching predictions on the basis of pure thought. But the work of the accelerator labs was turning particle physics into merely a descriptive enterprise. Like botanists who catalog new species of plants without knowing how they had evolved, physicists could do little more than describe the new particles they were discovering. No theory had predicted their existence; indeed, their existence had come as a major surprise. The bright hope of the 1930s appeared premature, if not completely out of reach. Clearly, if physicists indeed were to get down to bedrock, they would have to do a lot more digging.

The first important step came after 1960. By then the roster of new particles was sufficiently numerous to bear some resemblance to the roster of chemical elements, for both numbered in the dozens. A century earlier, Russia's Dmitri Mendeleev had brought order to chemistry by organizing the known elements into his periodic table. This had proven to be more than an exercise in taxonomy; it had pointed toward an underlying order, for the periodicity in this table demanded an explanation based on fundamental principles. Similarly, in 1960, physicists faced the issue of building a counterpart to the periodic table, able to describe their particles in terms of their own underlying principles.

Two theorists, Murray Gell-Mann at the California Institute of Technology and Yuval Ne'eman, an Israeli, emerged as the Mendeleevs of particle physics. Working independently, both of them showed that one could produce a periodic table of the particles by relying on a structure taken from abstract algebra, the group SU(3). This not only organized the known particles, it also brought the prediction of a new one, the omega-minus. In a spectacular confirmation of these ideas, researchers at Brookhaven National Laboratory, in 1964, found the omega-minus. This showed that there indeed was a pattern and order within the system of known particles. Their properties were not mutually independent; instead they were linked.

But the work of Gell-Mann and Ne'eman resembled that of Mendeleev in another way, which showed that much work still lay ahead. These physicists' use of SU(3), like Mendeleev's periodic table, gave order and predictability, without disclosing the underlying source of the order. For Mendeleev the source had proved to lie in the structure of electron orbits within atoms. For physicists in the 1960s, the order that showed itself in SU(3) suggested strongly that the particles possessed some kind of deeper physical structure, able to stand as this underlying source. In seeking this structure, Gell-Mann again came to the forefront along with George Zweig at the European Center for Nuclear Research (CERN).

In developing his theory, Gell-Mann had considered whether he might be able to describe the particles as being built up from more fundamental units. He found that this was possible—and immediately rejected the idea, for the new particles, the building blocks, would have fractional electric charges such as 1/3 and 2/3. No one had ever seen such particles; all the known ones had charges of either 0 or 1. But in 1963 he decided that this might not be a problem after all. These fractionally charged entities might be trapped inside the particles they formed, trapped so strongly that they could never be seen.

Gell-Mann called them quarks. He invented the term out of whimsy, as a reaction against pretentious terminology, but soon found that James Joyce had used this word in Finnegans Wake.

Three quarks for Muster Mark!

Sure he hasn't got much of a bark.

And sure any he has it's all beside the mark.

Gell-Mann's theory indeed called for three quarks, which should combine to yield all the particles organized under SU(3). Still, a major problem lay in his suggestion that they could not be seen, even in principle. Such a viewpoint smacked of theology rather than physics, for while theologians indeed begin by asserting that one cannot see the matters that concern them, physics had always been an experimental science par excellence. The quark concept offered a compelling theory, but to win widespread acceptance it would have to pass the test of observation and experiment.

The work that would provide this confirmation got under way during 1967 at the Stanford Linear Accelerator Center (SLAC). It involved scattering electrons off protons, at increasingly high energies. Initially, at modest energies, the electrons simply bounced away, somewhat like bullets recoiling from impacts with a cannonball. But as the energies increased, the data took on new forms. Now it indicated that the electrons were no longer ricocheting in a simple fashion, scattering off the protons as a whole. Instead, they were deflecting off tiny constituent objects that lay within each proton. This was understandable if those objects were quarks, and with this Gell-Mann's theory received a major boost.

While this work was progressing, other theorists were making headway as well. The studies of SU(3), and of quarks, had involved particles that feel the strong force. This is one of the fundamental forces of physics; it holds atomic nuclei together. Another force, equally fundamental, is the weak force, which mediates the production of energy in the sun. Here the road to understanding lay in unification, in creating a theory that would join the weak force to that of electromagnetism.

Unification was an old theme in physics. Scotland's James Clerk Maxwell had pursued it a century earlier, giving equations that unified the electric and magnetic fields. Maxwell's equations had then gone on to achieve the status of a cornerstone of physics. Of course, Maxwell had known nothing of subsequent discoveries: the electron, Einstein's relativity theory, quantum mechanics. Hence, an important project for physicists had featured creation of a theory that indeed would accommodate these findings; Dirac's work had stood as a major contribution. The full theory, known as quantum electrodynamics, reached fruition around 1950.

With this achievement in hand, theorists turned to the weak force. The view was that at sufficiently high energies it too would unify, joining with the electromagnetic force to yield a single "electroweak" force. The separate nature of these forces then would become apparent only at lower energies. It took over a decade before a suitable electroweak theory was in hand, but in 1971 this effort also achieved success. The main contributors were Steven Weinberg, Sheldon Glashow, Abdus Salam, and Gerard 't Hooft.

Hence, by 1972, particle physics had turned around. A decade earlier it had been experiment rich and theory poor, beset by a plethora of newly discovered particles that resisted understanding. In 1972 the field was theory rich and experiment poor. Theories were in hand for quarks, the strong force, and the electroweak force. The problem now was to test them by conducting appropriate experiments. If these theories passed those tests, they could offer a new set of foundations for theoretical physics.

Electroweak theory was the first to face this challenge. It predicted the existence of a new particle, the Z, that could give rise to characteristic events that might occur within a particle accelerator. For instance, a single electron might materialize in a detector, with no other particle in view. The Z itself would remain unseen in such an encounter, but the electron could show its presence. During 1973 and 1974, groups working at CERN in Switzerland and at Fermilab near Chicago both announced success in observing such events. Here was solid evidence both for the Z and for the electroweak theory.

In November 1974 it was the turn of the quark theorists. Gell-Mann's original theory, in 1963, had postulated three types of quark: up, down, and strange. The up and down quarks sufficed to build protons and neutrons, the stuff of atomic nuclei and hence of the world of matter that we live in. The third or strange quark had its own roles. In combination with up or down quarks or with other strange quarks, it yielded the so-called strange particles. These had been seen in cosmic-ray studies during the postwar years, receiving that adjective because they had unusually long lifetimes before they decayed. Such particles could be seen in accelerators as well; the omega-minus, which had clinched Gell-Mann's early work with SU(3), was made up of three strange quarks.

However, this was not the end of the matter. Working from theory, Sheldon Glashow proposed in 1964 that there should be a fourth quark, which he called "charm." He declared that these four quarks should group into two families. Up and down would form the first, charm and strange the second.

In 1974 it was as true as ever that one could not see any type of quark in isolation, by knocking it out of a particle. In Glashow's words, "You can't even pull one out with a quarkscrew." But the theory predicted that charmed quarks should join with others to form new types of particles, having observable characteristics. At Brookhaven and SLAC, groups headed by Samuel Ting and Burton Richter, respectively, went on to find them.

The two labs had very different equipment. Brookhaven relied on a 1960-vintage accelerator, the Alternating Gradient Synchrotron, which was not well suited to the task of finding particles with charm. Indeed, Ting would work with it for months before he was sure what he had found. The accelerator at SLAC was much newer and far more capable; if Richter knew the energy of these particles, he could discover them in a day. Ting knew this, and, as his data took form during the summer and fall of 1974, he went to considerable effort to prevent a leak of information that might allow his rival to beat him to the discovery.

Ting was not looking for charm; rather, he was looking for new particles of a different type, and he hoped to find them by searching through a broad energy range, exploring this range using the Brookhaven accelerator. He was working with energies measured in billions of electron volts, or GeV in physicists' notation. (One GeV corresponds to slightly more than the energy required to form a proton or a neutron.) At an energy of 3.1 GeV he found a sharp peak in the data. Here was clear and dramatic evidence for a new particle, standing out as sharply as a bright spectral line.

Fortunately for Ting, Richter was working in a different energy range. But late in October, in reviewing recent data, several SLAC physicists noted some curious features in results taken near 3.1 GeV. To go back to that energy was no simple matter of turning a knob; it meant shutting down the accelerator and resetting the magnets along with an injection beam. Nevertheless, Richter's colleagues did just this and were quickly rewarded with the same high and narrow peak in the data that Ting had seen. Indeed, it was so narrow that they missed it the first time around. Both Ting and Richter could claim to have made this discovery, independently and nearly simultaneously. Ting called it the J particle. Richter named it the psi, and most other physicists, who did not care to take sides, called it the J/psi.

One question remained: What was it? Glashow quickly declared that it was indeed a type of charmed particle, which he called "charmonium." Here was a decisive confirmation of the quark theory, showing that this theory was sufficiently powerful to predict the existence of a new particle. Indeed, it could predict a whole new class of particles, containing one or more charmed quarks and assembled along the lines of the strange particles in Gell-Mann's SU(3) theory.

Nevertheless, this triumph raised a new question. It now was clear that nature indeed had created two families of quarks: The up and down in the first, strange and charm in the second. But if there were two families, might there not be three? Four? An arbitrarily large number? No known result constrained these possibilities; theory offered no guidance, while experimental findings were similarly unhelpful. It was all too reminiscent of the situation prior to World War II, when physicists seemed to stand on the verge of complete understanding—only to see these prospects wash away in a flood of unpredicted and unexpected new particles.

In the ongoing dialogue between theory and experiment, the theorists certainly had had their say. Their work now stood as well confirmed, capable of accounting for all experimental observations, contradicted by none. Amid this success, people took to referring to this body of theory as the Standard Model. Still, the experimental work had hardly begun.

The immediate agenda lay in search for direct observation of the heavy particles predicted by the electroweak theory. There were three of them, denoted Z, W+, and W−, and they supposedly came into play in reactions mediated by the weak force. The work at CERN and Fermilab, in 1973 and 1974, had found evidence for the Z that was convincing but indirect; it was as if you would infer that a burglar had been in your house by finding the window open and your credit cards missing. The new effort sought the equivalent of catching the burglar red-handed.

These particles resisted discovery because they are very massive. Einstein's famous formula, E = mc², shows that energy and mass are really two different forms of the same thing; hence, a unit of mass can also be regarded as a unit of energy. Preliminary experiments gave data that could predict the masses of the W and Z particles, from physical theory. The predicted masses were 79.5 and 90 GeV, respectively, nearly a hundred times the mass of a proton.
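The comparison with the proton can be checked with a line or two of arithmetic. The sketch below assumes a proton rest energy of about 0.938 GeV, a standard value that does not appear in the text; the function name is illustrative only.

```python
# Proton rest energy in GeV. Standard value, assumed here; not quoted in the text.
PROTON_MASS_GEV = 0.938


def in_proton_masses(mass_gev):
    """Express a particle mass (given in GeV) as a multiple of the proton mass."""
    return mass_gev / PROTON_MASS_GEV


# Predicted masses quoted in the text.
for name, mass_gev in (("W", 79.5), ("Z", 90.0)):
    print(f"{name}: {mass_gev} GeV is about {in_proton_masses(mass_gev):.0f} proton masses")
```

On these assumptions the W and Z come out at several dozen proton masses each, approaching a hundred for the Z.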

To search for these particles, and to study physics at these energies, physicists needed new tools. Conventional particle accelerators produced energetic beams and slammed them into fixed targets. These beams' energies had risen markedly through the postwar decades. In 1952 the Cosmotron, at Brookhaven, had been the first machine to achieve multiple GeV. Twenty years later the accelerator at Fermilab could put 200 GeV on target. Yet in searching for the W and Z particles, even this was not enough. The reason was that as the energy went up, the efficiency of the particle production went down. Less and less of the energy in the beam's particles was available to create new particles, for most of it was wasted in collisional debris.

Fortunately, there was an alternative. Instead of striking a fixed target, an accelerator might produce colliding beams. It would produce two beams, circulating in opposite directions and interpenetrating each other at selected locations. Most of the beams' particles would fly past each other and miss, but if the beams were both intense and narrowly focused, a useful number of these particles would collide head on. All their energy would then be available to drive reactions and to create new particles.
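The difference between the two arrangements can be illustrated with standard relativistic kinematics. The sketch below is only an estimate under stated assumptions: the target is a single free proton at rest, the quoted beam energy is treated as the proton's total energy, and the proton rest energy is taken as 0.938 GeV (a value not given in the text). The function names are illustrative.

```python
import math

# Proton rest energy in GeV. Standard value, assumed here; not quoted in the text.
PROTON_MASS_GEV = 0.938


def fixed_target_available_energy(beam_energy_gev, mass_gev=PROTON_MASS_GEV):
    """Energy available for making new particles when a beam proton of total
    energy E strikes a proton at rest (units with c = 1):
        s = 2*E*m + 2*m**2, available energy = sqrt(s)
    """
    s = 2.0 * beam_energy_gev * mass_gev + 2.0 * mass_gev ** 2
    return math.sqrt(s)


def collider_available_energy(beam_energy_gev):
    """Energy available when two beams of equal energy meet head on."""
    return 2.0 * beam_energy_gev


# Beam energies quoted in the text.
for energy in (200.0, 400.0):
    available = fixed_target_available_energy(energy)
    print(f"fixed target, {energy:.0f} GeV beam: about {available:.1f} GeV available")

print(f"collider, 270 GeV per beam: {collider_available_energy(270.0):.0f} GeV available")
```

Because the available energy in the fixed-target case grows only as the square root of the beam energy, quadrupling the beam energy merely doubles it. In a head-on collision the two beam energies simply add.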

It helped that this colliding beam approach fitted in neatly with the engineering design of accelerators. The big ones, as at Fermilab, featured long strings of powerful magnets set end to end like a train of railroad cars, with the magnets curving gently to form a ring. The magnets stored the beam, sending it repeatedly through stations that added energy to the beam. This architecture lent itself readily to arrangements in which two such beams could counterrotate within the same ring, flying in opposite directions. Such an experiment would amount to running two Indianapolis 500 races simultaneously, one with cars circling the track clockwise and the other counterclockwise. In the collisions of particles within such demolition derbies, physicists now hoped to make their finding.

At SLAC, Burton Richter was a particular advocate of this approach. He had built SPEAR, the Stanford Positron-Electron Accelerating Ring, and had used it in finding his psi particle, which contained charm. The nature of SPEAR testified to further advances in physics, for it collided beams of electrons and positrons. The positron, far from being a curiosity as in the days of Carl Anderson, now was a mainstay of physics research. Indeed, in SPEAR the positrons were so numerous that they formed beams and served to discover particles such as the psi, which were newer still.

For higher energies yet, physicists could use antiprotons. Antiprotons, like positrons, are a form of antimatter. These particles have all the properties of their conventional counterparts with one exception: They have opposite electric charges. The positron, for one, has the same mass and spin as the electron; but whereas the electron carries negative charge, that of the positron is positive. Similarly, the antiproton has the same mass and spin as the proton, but the proton carries positive charge, whereas that of the antiproton is negative. Emilio Segre and Owen Chamberlain had discovered the antiproton in 1955, using the Bevatron accelerator at the University of California at Berkeley; it had been one of the prizes of the new era of particle physics. Like the positron, the antiproton too had graduated to become a tool of research. A beam of antiprotons, colliding head on with one of protons, could offer energies sufficient to create W and Z particles.

The Fermilab accelerator had been using protons since day one; it was a natural candidate for conversion. However, rather than carry through a straightforward modification, the lab's directors proceeded with a far-reaching upgrade that would feature an entirely new ring of magnets. Rather than shooting a 200 GeV beam at a fixed target, the new system would produce beams with energies of 1000 GeV, a trillion electron volts, or TeV. Two such beams, countercirculating, would collide head on to produce a total energy of 2 TeV. Still, this project was not due for completion until 1985, which left European researchers with an opportunity.

The opportunity lay with an existing accelerator at CERN, the Super Proton Synchrotron (SPS). In 1976 it was operating as a more powerful counterpart of the Fermilab system, directing proton beams at fixed targets, with energy as great as 400 GeV. During that year, several physicists proposed to convert it to a colliding beam operation by making provision for a countercirculating beam of antiprotons. This conversion was more straightforward than the Fermilab upgrade, but it nevertheless took several years.

Finally, in July 1981 the machine was ready. It produced peak energies of 270 GeV in each beam, or 540 GeV in collisions. Initial operation involved low beam intensities, and these runs failed to produce W or Z particles. That was not surprising; the theory predicted that they would be rare, and the experimenters had only an approximate idea of the energy levels that would produce them. Until they zeroed in on the right energies, these particles would be rarer still.

Carlo Rubbia of Harvard University, who had proposed converting the SPS in 1976, now led the experimenters. They boosted the beam intensity by an order of magnitude, greatly increasing the collision rate, and proceeded with their search. In 1983 they gained full success, detecting the W+ and W− as well as the Z. Subsequent work pinned down their masses: 80.15 GeV for the W particles, 91.187 GeV for the Z. This showed that in an important respect the electroweak theory was stronger than the quark theory. Quark theorists, such as Glashow, had predicted the existence of charm but had not been able to predict the mass of charmed particles. By contrast, electroweak theory had predicted masses as well as existence and with remarkably good accuracy.

Meanwhile, the matter of quark families was coming to the fore. In 1977 Leon Lederman, working at Fermilab, discovered a member of the third family, which received the name of the bottom quark. Its mass proved to be 4.7 GeV, which confirmed a pattern. Quarks of the second family, the charm and strange, had proven to be considerably more massive than their counterparts in the first family. Third-family quarks were heavier still. Indeed, subsequent work showed that the partner of the bottom quark—known quite logically as the top quark—certainly merited its name. Its mass indeed was at the top; searches up to 91 GeV failed to find it. That meant it would be even heavier than the W or Z particles, the heaviest yet found.

There still remained the question of how many such quark families existed in nature. In a virtuoso feat, investigators at SLAC and CERN proceeded to find a direct answer through studies of the Z. In doing so, they introduced a new concept: That studies of specific particles could shed light on fundamental problems that did not involve these particles directly. The multiplicity of families stood as an issue in quark theory; it did not appear within electroweak theory, which represented a separate

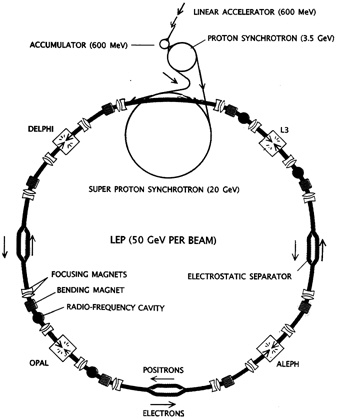

FIGURE 8.1 Bird's-eye view of LEP storage ring shows how electron and positron bunches are accelerated by a series of smaller machines. Four bunches each of electrons and positrons circulate in opposite directions and collide inside the four gigantic detectors: Aleph, Delphi, L3, and Opal. The bunches also cross paths at four intermediate sites, where they are prevented from colliding by electrostatic separator plates. (From "The LEP Collider" by Stephen Myers and Emilio Picasso. Copyright © 1990 by Scientific American, Inc. All rights reserved.)

and independent body of physical law. Yet the Z particle, featured in electroweak theory, proved to offer the key to the problem of quark families.

The reason lay in the uncertainty principle of quantum mechanics. This principle states that when a particle exists for a vanishingly short time (for the Z, some 10⁻²⁵ seconds), there is a corresponding uncertainty in measuring its energy and therefore its mass. One might seek to determine the mass of a type of particle by making repeated measurements with great accuracy, but this principle would defeat the attempt. Any individual measurement might indeed show high precision, but no two measurements would agree. Different observations would show different values for the particle mass, with the ensemble of these values showing a bell-shaped curve, a distribution common in the field of statistics.
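The size of this spread can be estimated from the lifetime. The sketch below uses the usual order-of-magnitude relation between the width of a resonance and its lifetime, width ≈ ħ / lifetime, with ħ taken as about 6.582 × 10⁻²⁵ GeV·s. Both the relation and the constant are standard physics rather than anything stated in the text, and the function names are illustrative.

```python
# Reduced Planck constant in GeV * seconds. Standard value, assumed here.
HBAR_GEV_S = 6.582e-25


def width_from_lifetime(lifetime_s):
    """Approximate energy spread (GeV) of a particle that lives for lifetime_s seconds."""
    return HBAR_GEV_S / lifetime_s


def lifetime_from_width(width_gev):
    """Approximate lifetime (seconds) of a particle whose energy spread is width_gev."""
    return HBAR_GEV_S / width_gev


# The text's round figure for the Z lifetime.
print(f"lifetime 1e-25 s -> spread of roughly {width_from_lifetime(1e-25):.1f} GeV")

# Going the other way, for an assumed spread of 2.5 GeV (not a figure from the text).
print(f"spread 2.5 GeV -> lifetime of roughly {lifetime_from_width(2.5):.1e} s")
```

Either way, a lifetime of order 10⁻²⁵ seconds goes with a spread of a few GeV, a few percent of the Z mass, which is why repeated measurements scatter noticeably.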

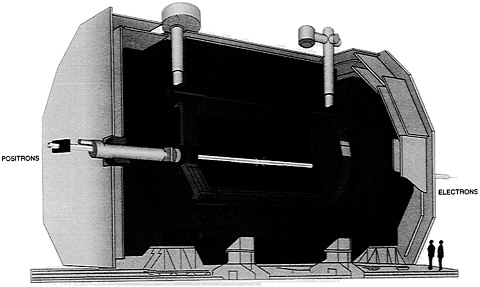

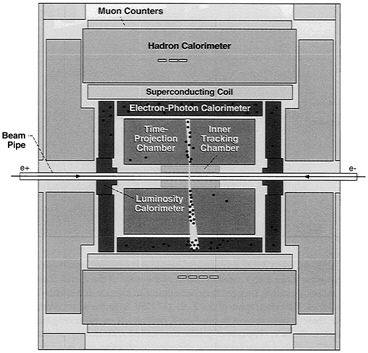

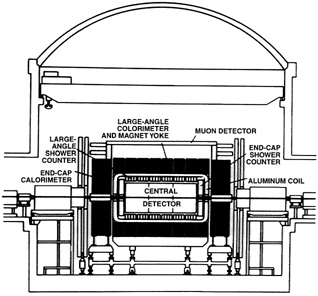

FIGURE 8.2 Graphic presentation of events allows physicists to visualize the trajectories and energies of particles within the structure of the detector (in this case, the Aleph experiment). The diagram above shows the components of Aleph. (Reprinted, with permission, from Breuker et al., 1991. Copyright © 1991 by Scientific American, Inc. All rights reserved.)

At SLAC and CERN, five groups carried out a related investigation. Rather than make direct measurements of the mass of the Z and observe the statistical scatter, they set their accelerator to operate at a succession of energies. The cited mass of the Z, 91.187 GeV, in fact was an average value; these groups sought to produce Z particles at energies ranging from 88 to 95 GeV. The rate of production varied with energy, peaking at 91.187; a plot of these variations, showing production rate as a function of energy, formed its own bell-shaped curve.

What did that have to do with the number of quark families? The width of this curve, and particularly the height of its peak, were related to the lifetime of the Z. That lifetime, in turn, depended on the number of different ways a Z could decay. Each such decay mode furnished a channel whereby the process of decay would create other particles. More channels meant a lower peak; fewer channels meant a higher peak. And the number of channels gave a direct determination of the number of quark families.
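The link between decay channels and peak height can be made concrete with a rough sketch. It assumes a simple Breit-Wigner resonance shape and approximate partial widths that do not come from the text: about 1.744 GeV for decays to hadrons, 0.084 GeV for each charged-lepton pair, and 0.167 GeV for the additional unseen channel that each family is assumed to contribute. Only the Z mass is taken from the text, and the function names are illustrative.

```python
M_Z_GEV = 91.187          # Z mass, as quoted in the text

# Approximate partial widths in GeV. Assumed values, not from the text.
GAMMA_HADRONS = 1.744     # all decays to hadrons
GAMMA_LEPTON_PAIR = 0.084 # each charged-lepton pair (three kinds assumed)
GAMMA_PER_FAMILY = 0.167  # unseen channel assumed to be added by each family


def total_width(n_families):
    """Total Z width (GeV) if n_families families each open one unseen channel."""
    visible = GAMMA_HADRONS + 3 * GAMMA_LEPTON_PAIR
    return visible + n_families * GAMMA_PER_FAMILY


def relative_rate(energy_gev, n_families):
    """Relative rate of hadron production at a given collision energy,
    using a simple (non-relativistic) Breit-Wigner shape. Arbitrary units."""
    gamma = total_width(n_families)
    numerator = GAMMA_LEPTON_PAIR * GAMMA_HADRONS
    return numerator / ((energy_gev - M_Z_GEV) ** 2 + gamma ** 2 / 4.0)


reference_peak = relative_rate(M_Z_GEV, 3)
for n in (2, 3, 4):
    peak = relative_rate(M_Z_GEV, n)
    print(f"{n} families: width {total_width(n):.3f} GeV, "
          f"peak height {peak / reference_peak:.2f} relative to 3 families")
```

In this simplified picture each added family broadens the curve and lowers its peak by roughly a tenth, a difference large enough for a careful scan across the resonance to tell two, three, and four families apart.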

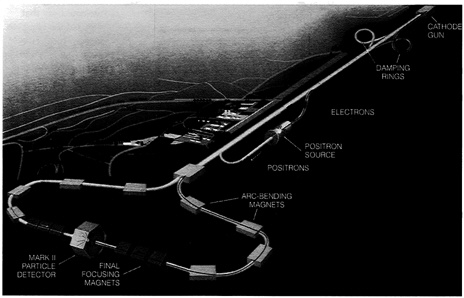

The work featured two new accelerators, the Stanford Linear Collider at SLAC and the Large Electron-Positron Collider (LEP) at CERN (see Figures 8.1, 8.2, and 8.3). The SLAC machine, an upgrade of the existing system, raised the collisional energy to 100 GeV, working with colliding beams of electrons and positrons (see Figure 8.4). However, the LEP was the workhorse of the effort, producing Zs at a rate over a hundred times greater. During its construction, LEP had been the largest civil engineering project in Europe. It took form as a ring of magnets some 27 kilometers in circumference, and its beams had the intensity needed to make LEP a true Z factory. During the main experimental run, which lasted 4 months, LEP produced some 100,000 Zs.

FIGURE 8.3 LEP detector at CERN. Beams of particles fly in from left and right, with their collisions taking place within the detector itself. Shielding screens out less-penetrating particles, while penetrating ones, such as muons, reach the detector periphery.

Late in 1989 the directors of the five experimental groups pooled their data and announced the result: The number of quark families was 3.09 ± 0.09. Because that number had to be an integer, it actually was exactly 3 (see Figure 8.5). The third family, consisting of the bottom and the still-unseen top quark, would complete the roster.

With this new result now in hand, one might think that there is little left today for particle physicists, at a fundamental level. One more round of experiments might find the top quark, completing the list of elementary particles, and then everyone can go home. Such a view amounts to declaring that after 60 years we once again are where we thought we were in the time of Dirac, ready to wrap everything up with one more burst of creativity. In fact, a most important topic remains for study, one whose investigation has barely begun.

This is the Higgs particle, a theoretical concept proposed in 1964 by Peter W. Higgs of the University of Edinburgh. It has potentially far-reaching significance, for many theorists believe that in this particle lies the origin of mass. This origin, which would account for the masses of elementary particles, stands as a very deep issue. The Standard Model has little to say on the subject. It asserts that some particles are massless, such as the photon, which carries light. It states that the electron and positron are equal in mass. But it gives no account of the masses of quarks.

FIGURE 8.4 Stanford Linear Collider speeds positrons and electrons along a 3-kilometer straightaway. The injector (top right) shoots electrons into a damping ring, which condenses them for later focusing. One bunch then enters the straightaway behind a bunch of positrons. The two bunches accelerate in tandem before entering separate arcs that focus and direct them to collision in the Mark II detector (bottom left). Meanwhile, the second bunch of electrons slams into a target, producing positrons (center). The positrons are returned to the front and are damped and stored. (From "The Number of Families of Matter" by Gary J. Feldman and Jack Steinberger. Copyright © 1991 by Scientific American, Inc. All rights reserved.)

FIGURE 8.5 Evidence for three families of quarks. Predicted curves for different numbers of families differ noticeably. The data points fall neatly on the three-family curve.

The concept of Higgs particles holds that they permeate empty space, like raindrops in the air during a thunderstorm. Other particles then gain mass by absorbing them; we could say that they eat the Higgs to gain weight. However, that is not to say that we understand this procedure, not by a long shot. "That hasn't explained anything," notes Kevin Einsweiler of Lawrence Berkeley National Laboratory. "There's no deep understanding contained in that. For instance, the top quark gets its mass because it couples very strongly to the Higgs. But that's not an explanation; it's a description of a mechanism of how it happens in the theory. The theory says nothing about what these couplings are or why the couplings of the top to the Higgs should be extremely large."

Hence, the concept of the Higgs particle offers a set of ideas that might lead to a true theory of mass, but that could easily be taken as a matter for some future day. In fact, the Higgs particle is central to electroweak theory. A key attribute of that theory is its ability to make precise calculations based on only a few parameters, calculating experimentally observable quantities to any desired accuracy. As Gerard 't Hooft first showed in 1971, it is the Higgs particle that gives electroweak theory this power. In the absence of the Higgs, for some problems it would give absurd results. Martinus Veltman, who worked with 't Hooft, gives an example: The scattering of one W particle off another. Veltman notes that, if one deletes the Higgs from the theory, at high energies the probability of the scattering could be calculated as greater than 1. "Such a result is clearly nonsense," he writes. "The statement is analogous to saying that even if a dart thrower is aiming in the opposite direction from a target, she will still score a bull's-eye."

Nevertheless, the Higgs particle quickly leads to difficulties. Gravity should couple with the Higgs particles that pervade free space, and this would cause the entire universe to curl up into something the size of a football. Needless to say, this is contrary to observation. To get around this, some physicists assume that in the absence of the Higgs the universe would curl up in the opposite way, again to the size of a football. The Higgs-induced curvature then cancels the "natural" curvature, yielding a universe that extends, as we see it, for billions of light-years.

This means that the Higgs concept is intimately tied up with cosmology, with the structure of the universe. It also leads to problems in cosmology for which the answers amount to little more than speculation. Nor are these issues trivial. Joseph Polchinski of the University of Texas, who has worked on closely related matters, notes that "there have been a lot of reasons offered" as to why the universe is not football size, "and most of them are obviously wrong. It's a very hard problem. It requires something new in physics." Steven Weinberg adds that this "may be the deepest problem we have."

The Higgs particle thus enters directly into a number of very significant issues: The physical basis for electroweak theory, the origin of mass, the large-scale structure of the universe. No one has seen a Higgs, and an obvious problem would then be for experimenters to find it. At first blush this appears possible, for although the mass of the Higgs is ill defined, a variety of arguments lead to the conclusion that it should lie between 60 and 1000 GeV. That is not so much greater than the mass of the W and Z particles. And with the upgraded accelerator at Fermilab, the Tevatron, now reaching 1800 GeV in proton-antiproton collisions, the way would appear open to a direct attack on the problem.

However, other issues come into play, effectively ruling out the likelihood of a successful search with the present generation of accelerators. These are all of the colliding beam type, which give the most energy, and they come in two versions. There are positron-electron colliders, such as LEP; there also are proton-antiproton machines, such as Tevatron.

LEP today offers collisions up to 110 GeV and is to be upgraded to reach 200. But this type of machine loses efficiency at very high energy due to the problems of synchrotron radiation. That is a type of radiation emitted by electrons or positrons, as they whirl in a circle. One eases this problem by making the circle quite large; that is why LEP has a diameter greater than 8 kilometers. There nevertheless are limits to the amount of real estate that can be dedicated for the use of such a facility; there also are limits to the number of magnets that even a major project can afford.

LEP presses these limits, making it unlikely that a larger electron-positron collider will be built, at least in the near future. And if one were to try to raise the energy of LEP to 1000 GeV, one would find that energy drained off by synchrotron radiation.
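The steep rise of synchrotron losses can be made concrete with a rough calculation. The sketch below uses the standard textbook estimate for the energy an electron radiates per turn, roughly 8.85 × 10^-5 · E^4 / ρ GeV, with the beam energy E in GeV and the bending radius ρ in meters. The function name, the treatment of the ring as a uniform circle of 27-kilometer circumference (the true magnet bending radius is smaller, so real losses would be larger), and the equal split of collision energy between the two beams are illustrative assumptions, not figures from the text.

```python
import math

# Radiation constant for electrons, in m / GeV^3 (standard textbook value).
C_GAMMA_ELECTRON = 8.846e-5
ELECTRON_MASS_GEV = 0.000511
PROTON_MASS_GEV = 0.938272


def energy_loss_per_turn_gev(beam_energy_gev, bending_radius_m,
                             mass_gev=ELECTRON_MASS_GEV):
    """Approximate synchrotron energy loss per turn, in GeV.

    The loss scales as E^4 / (m^4 * radius); the constant is quoted for
    electrons, so other particles are rescaled by (m_e / m)^4.
    """
    mass_scaling = (ELECTRON_MASS_GEV / mass_gev) ** 4
    return (C_GAMMA_ELECTRON * beam_energy_gev ** 4
            / bending_radius_m * mass_scaling)


# Assumption: treat the ring as a perfect circle of 27 km circumference.
RING_RADIUS_M = 27_000 / (2 * math.pi)

if __name__ == "__main__":
    for collision_energy_gev in (110, 200, 1000):
        beam_energy_gev = collision_energy_gev / 2  # two equal beams
        loss = energy_loss_per_turn_gev(beam_energy_gev, RING_RADIUS_M)
        print(f"{collision_energy_gev:>5} GeV collisions: "
              f"about {loss:.3g} GeV lost per turn per electron")
```

Under these assumptions the loss per turn at 1000 GeV exceeds the 500 GeV carried by each electron, which is the sense in which the energy would be drained off. Passing `PROTON_MASS_GEV` as `mass_gev` shows the loss falling by a factor of roughly 10^13 for a particle as heavy as the proton.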

One turns then to the alternative, in which the colliding particle beams feature protons and antiprotons. These particles are much heavier than electrons and positrons, and their high mass suppresses synchrotron radiation. However, at very high energies another problem arises. The colliding particles do not behave like single objects, miniature cannonballs if you will. Instead they behave as if they were charges of buckshot. A proton is made not only of quarks but also gluons, additional particles that hold the quarks together, and at very high energies this composite structure becomes apparent. A proton and antiproton then might meet head on and simply pass through each other, with all their quarks and gluons mutually missing one another in their passage.

This means that, when such a particle collision indeed takes place, it generally involves no more than two quarks or gluons out of the entire ensemble. If one wishes to achieve an honest 1 or 2 TeV in a particle collision, the actual energy of the colliding proton and antiproton must be vastly higher, because each quark or gluon holds only a small fraction of the whole. Indeed, the total energy, within the colliding beams, would be as great as 40 TeV, 20 in each. No present-day accelerator can remotely approach this goal.
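The arithmetic behind these figures can be sketched in a few lines. In the usual parton picture, the energy available when two constituents collide is the total collision energy multiplied by the square root of the product of the momentum fractions they carry. The function name and the sample fractions of 2.5 and 5 percent are assumptions chosen to reproduce the 1 to 2 TeV range quoted above; real fractions vary from one collision to the next.

```python
import math


def constituent_collision_energy(total_energy, fraction_a, fraction_b):
    """Energy available when two constituents collide head on.

    total_energy is the combined energy of the two beams; fraction_a and
    fraction_b are the shares of each proton's (or antiproton's) momentum
    carried by the quark or gluon that actually takes part.
    """
    return math.sqrt(fraction_a * fraction_b) * total_energy


if __name__ == "__main__":
    # Hypothetical momentum fractions, for illustration only.
    for fraction in (0.025, 0.05):
        for name, total_tev in (("Tevatron", 1.8), ("40 TeV machine", 40.0)):
            energy = constituent_collision_energy(total_tev, fraction, fraction)
            print(f"{name}, fraction {fraction}: about {energy:.3g} TeV")
```

With the same assumed fractions, the 1.8 TeV of the Tevatron yields constituent collisions well below 0.1 TeV, which suggests why a far larger machine is needed to explore the 1 TeV range.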

With this, one enters a realm of particle accelerators that exist only on paper. For the past decade, the American physics community has set its hopes on a true 40 TeV machine, the Superconducting Supercollider. As of October 1993, it was under construction near Waxahachie, Texas. Builders were digging an underground tunnel of 87-kilometer circumference that was to hold its magnet ring. But during that month, the roof fell in. This does not mean that the tunnel collapsed. Rather, Congress voted to cut off funding.

As a result, physicists today are not entirely clear what they will do next. CERN, ever helpful, has proposed to pick up the falling torch by building an entirely new magnet ring within the existing LEP tunnel of 27 kilometers. The Europeans then would take the lead with an entirely new accelerator, the Large Hadron Collider (LHC). It would collide beams of protons, which belong to the class of particles that physicists call hadrons. However, being smaller than the Supercollider, it also would achieve less energy: 15 TeV. It then could find the Higgs only if that particle's mass were under 600 GeV.

But with this, high-energy physics faces a different type of collision, as experimenters' plans collide with the restrictions of government budgets. The LHC is budgeted at $1.4 billion, compared with $11 billion for the late lamented Supercollider. This lower cost results from its use of the existing LEP tunnel, along with a need for fewer magnets. Still, even before the Americans dropped the Supercollider, Europeans had repeatedly put off approving the LHC as a formal project. With no one now spurring them to compete in seeking these new frontiers, they could well offer further delays.

Moreover, Rome was not built in a day, and neither are major accelerators. Optimists hope that LHC will win approval in 1994, but even if it does, it would start operating no sooner than 2002. Even then, it would be sheer folly for physicists to try to find the Higgs particle by searching blindly in the energy range of 60 to 1000 GeV; that simply is too large an extent. As was true when Carlo Rubbia was searching for the W and Z particles in the early 1980s, it will help greatly if physicists can begin with a reasonably clear idea of the specific energy that will create the Higgs.

Fortunately, such a prediction is achievable—if experimenters first succeed in discovering the top quark. The reason is that the theory offers a set of mathematical relations that link the masses of the W, Z, top, and Higgs. Two of these masses are presently in hand, for the W and Z, with the W mass being steadily sharpened in ongoing experiments. A well-defined mass for the top will then pin down the Higgs mass with good precision. In this fashion, recent history may repeat. Just as detailed studies of the Z settled the very different question of the number of quark families, so discovery of the top quark can open the way to finding the Higgs.

Indeed, searches for the top have been a specialty at Fermilab, and these searches are continuing. During 1989, researchers at that lab competed with colleagues at CERN in pursuing this discovery. Neither group succeeded, but they showed that the top would have a mass of at least 91 GeV. This prediction drew on measurements of the W mass, made at both laboratories. Then in 1991 new results from the LEP at CERN offered an estimate for the mass: 140 ± 30 GeV. This sparked a new search at Fermilab, beginning in 1992. So far, this effort has not succeeded.

Still, the work continues. John Huth, an experimental leader at Fermilab, states that in 1994 or 1995 his lab will be able to extend the search to energies of 170 to 180 GeV. Further improvements in the accelerator system, which could enter service later in this decade, would increase their limit to 240 to 250 GeV. Huth notes that an upper limit for the mass of the top, at the 95 percent confidence level, is 225 GeV, according to inferences from theory. Such an estimate does not guarantee success, of course, but it encourages physicists to continue to try.

To discover this quark demands highly sophisticated detectors, and these have kept pace with developments in the design of the accelerators themselves. As recently as 1974, when researchers at Brookhaven were working to discover the charm quark, their detector was the bubble chamber. It was quite advanced for its day and sufficiently important to win a Nobel prize for its inventor, Donald Glaser of the University of California at Berkeley. Still, it had some rather noteworthy limitations.

The Brookhaven chamber featured a large tank of liquid hydrogen that acted as a photographic emulsion that was both three-dimensional and reusable. When charged particles passed through this liquid—electrons, protons, particles formed from quarks—they produced lines of tiny bubbles to mark their paths. Cameras would take photos from several angles; then their film would advance for the next shot while the chamber reset itself and absorbed the bubbles.

The system could generate photos by the tens of thousands, and people had to go through all of them to look for interesting events. Lowly graduate students often handled this tedious work, but at times they were in short supply. When that happened, the job often fell to local housewives who needed the extra income. At Brookhaven the woman who supervised this work was a former YMCA cashier; she had gained her post because she had a talent for telling her co-workers what the physicists were looking for. When the lab recorded its first evidence for the charm quark in May 1974, the data came from a former switchboard operator. It was on frame 6967 of roll 27.

The bubble chamber was highly useful and featured strongly in the key discoveries of the era. However, it was quite unable to keep up with the floods of data that increasingly capable accelerators would generate. The answer lay in electronic detectors, massive arrays of circuitry that could cope with almost any number of particle collisions. The first of them went into operation at CERN and proved essential to Carlo Rubbia's discovery of the W and Z in 1983. They had the highly useful feature of generating their data in the direct form of electrical signals, rather than as photographs. Such signals lent themselves to computer processing. The computers then could ease the task of searching for new particles by rejecting many of the data sets that were entirely devoid of interest.

In turn, these computers have had no shortage of data. At Fermilab

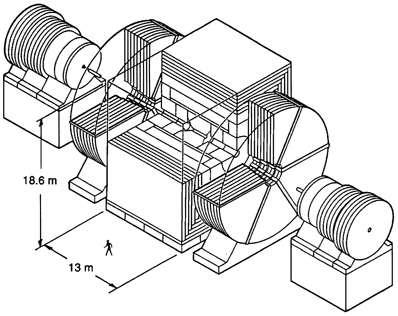

FIGURE 8.6 Main detector at CERN, 1982. Side view of the UA1 detector shows it in place in the SPS beam line. Experimental components for various purposes, including the search for intermediate vector bosons, are labeled. (From ''The Search for Intermediate Vector Bosons" by David B. Clive, Carlo Rubbia, and Simon van der Meer. Copyright © 1982 by Scientific American, Inc. All rights reserved.)

the CDF detector can track the individual particles produced in each collision. CDF generates over 10,000 bits of information regarding each such particle. A typical collision produces 30 or more of them, and the Fermilab accelerator can generate over 100,000 collisions per second, with CDF faithfully keeping pace.
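Multiplying these figures together gives a feel for the resulting data stream. The short calculation below treats the quoted values as round lower bounds; the constant and function names are illustrative.

```python
BITS_PER_PARTICLE = 10_000        # "over 10,000 bits" per tracked particle
PARTICLES_PER_COLLISION = 30      # "30 or more" particles per collision
COLLISIONS_PER_SECOND = 100_000   # "over 100,000 collisions per second"


def raw_data_rate_bits_per_second(bits_per_particle, particles_per_collision,
                                  collisions_per_second):
    """Lower-bound estimate of the raw detector data rate."""
    return bits_per_particle * particles_per_collision * collisions_per_second


if __name__ == "__main__":
    rate = raw_data_rate_bits_per_second(
        BITS_PER_PARTICLE, PARTICLES_PER_COLLISION, COLLISIONS_PER_SECOND)
    print(f"At least {rate:.1e} bits per second "
          f"({rate / 8 / 1e9:.2f} gigabytes per second)")
```

On these numbers the detector faces at least 30 billion bits each second, which is why the computers' ability to reject uninteresting events matters so much.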

Further, with accelerators offering increasingly high energies, the sheer size and weight of detectors are also keeping pace. The CERN detector of 1983 came to 2000 tons (see Figure 8.6). Today's CDF weighs in at 5000 tons, while the main detector for the Supercollider was to reach 30,000. That equals the weight of a battleship, and the comparison is not accidental. Much of a battleship's weight comes from heavy armor plate. Similar armor plate, of iron, lead, and even uranium, guards the outer regions of a detector. It screens out less-penetrating particles, such as protons, making it easier to detect the highly penetrating ones that spray from collisions. But as these collisions rise in energy, in successively larger accelerators, the energy of collisional debris also rises. Hence, detectors need more and more shielding to accomplish this screening.

In addition, increasing collisional energy makes it desirable to increase the volume of a detector. The CERN accelerator of 1983, at 540 GeV, called for a detector measuring 5 by 10 meters. For the Supercollider, operating at 40,000 GeV, one proposed detector was to have the dimensions of 18 by 30 meters (see Figure 8.7). Its need for shielding and its weight would have grown proportionately.

Still, if all goes well, there will be some very interesting experiments in the next decade or two. Fermilab, with the world's most powerful operating accelerator, could find the top quark and pin down its mass. That would permit a clear prediction of the mass of the Higgs. In turn, this mass might prove to lie within the energy range accessible to the LHC, spurring its construction. Discovery of the Higgs, along with detailed studies of this particle, then could shed light on the origin of mass.

None of this is guaranteed, to be sure. Diligent search might fail to find the top quark, and this would be a disaster for the Standard Model. The Higgs mass might turn out to be too high for the LHC. Even if it lies within its range, it nevertheless might evade detection. That would pose fundamental problems for the basis of electroweak theory.

FIGURE 8.7 Perspective drawing of a detector for the SSC. (Courtesy of SSC Central Design Group.)

And even if all goes well, if, in the year 2005 or thereabouts, we have the top quark and the Higgs safely in hand, physicists will still face a host of issues. The question of the size of the universe, and why it is not curled up into the size of a football, is only one of them.

"I do not know what I may seem to others," wrote Isaac Newton. "But, as to myself, I seem to have been only as a boy playing on the seashore, and diverting myself in now and then finding a smoother pebble or a prettier shell than ordinary, whilst the great ocean of truth lay all undiscovered before me." In this spirit, rather than with the hope of final and ultimate insight, physicists may welcome new accelerators such as the LHC, as they usher in the agenda of the next century. Our researchers may divert themselves with the pretty shells of the top quark and even the Higgs, but Newton's ocean still conceals many of the truly deep issues: The origin of mass; the origin of the universe; the character of a truly ultimate theory of nature, if indeed a theory exists. For all our hope and all our work to date, we still must stand with Paul: "We know in part, and we prophesy in part. … Now we see through a glass, darkly."

BIBLIOGRAPHY

Breuker, H., H. Drevermann, C. Grab, A. A. Rademakers, and H. Stone. 1991. Tracking and imaging elementary particles. Scientific American (Aug.):58–63.

Cline, D. B., C. Rubbia, and S. van der Meer. 1982. The search for intermediate vector bosons. Scientific American (March):48–59.

Crease, R. P., and C. C. Mann. 1986. The Second Creation. Macmillan, New York.

Feldman, G. J., and J. Steinberger. 1991. The number of families of matter. Scientific American (Feb.):70–75.

Fisher, A. 1979. Grand Unification: An elusive grail. Mosaic (Sept.):3–12.

Huth, J. 1992. The search for the top quark. American Scientist (Sept./Oct.):430–443.

Lederman, L. M. 1990. The Tevatron. Scientific American (March):48–55.

Myers, S., and E. Picasso. 1990. The LEP collider. Scientific American (July):54–61.

National Research Council. 1986. Physics Through the 1990s: Elementary Particle Physics. National Academy Press, Washington, D.C.

Pagels, H. R. 1985. Perfect Symmetry. Simon & Schuster, New York.

Rees, J. R. 1989. The Stanford linear collider. Scientific American (Oct.):58–65.

Riordan, M. 1987. The Hunting of the Quark. Simon & Schuster, New York.

Schwarzschild, B. M. 1993. Decision approaching on CERN's proposed Large Hadron Collider. Physics Today (February):17–20.

Trefil, J. S. 1980. From Atoms to Quarks. Scribners, New York.

Veltman, M. J. G. 1986. The Higgs Boson. Scientific American (Nov.):76–84.