3

Understanding R&D within the Nonprofit Sector

This chapter brings together voices from the nonprofit sector to add insight and specifics to the more general portrait of the sector presented in Chapter 2. The great diversity within the sector, and its unique way of thinking about and performing research and development (R&D), became clearer at the workshop through these presentations. The presentations and subsequent discussion among workshop participants identified five key challenges that many participants said the National Science Foundation (NSF) will need to face as it designs the nonprofit R&D survey. These challenges are discussed in detail later in the chapter.

DEFINITION OF RESEARCH AND DEVELOPMENT USED BY NCSES

The National Center for Science and Engineering Statistics (NCSES) of NSF uses a specific definition of research and development in its surveys that produce data for the National Patterns of R&D Resources:

R&D is planned creative work aimed at discovering new knowledge or developing new and significantly improved goods and services. This includes a) activities aimed at acquiring new knowledge or understanding without specific immediate commercial applications or uses (basic research); b) activities aimed at solving a specific problem or meeting a specific commercial objective (applied research); and c) systematic use of research and practical experience to produce new or significantly improved goods, services, or processes (development).

The definition of R&D used by NSF1 is consistent with the definition provided by the Frascati Manual 2002, an internationally recognized methodology for collecting and using R&D statistics.

The steering committee did not give this definition of R&D to the representatives of organizations from the nonprofit sector and then ask whether their activities fit under the definition. Instead, the committee’s approach was less prescriptive: it asked the representatives to describe their organizations, the activities they were engaged in that might be considered R&D, and the language they used to describe these activities.

VOICES FROM THE NONPROFIT SECTOR

Leaders from six different nonprofits—the American Cancer Society (ACS), Lutheran Social Service of Minnesota, LeadingAge, Hillside Family of Agencies, Prince William Regional Beekeepers Association, and Mote Marine Laboratory—presented views of R&D at their organizations. These six exemplars covered the range of organizational sizes, focuses, and structures seen among the diverse nonprofit sector. Susan Raymond began by describing the session’s purpose to explore the kinds of activities that constitute R&D in the nonprofit sector and the language used within the sector to describe these activities. The presenters described the types of R&D activities within their organizations, and how these activities are organized, funded, and accounted for. The presenters also described how their organizations think about research, what language they use to describe it, and whether they would be able to answer questions about the resources and staff time allocated to that research. Finally, the presenters offered suggestions for ways to word and improve the survey.

American Cancer Society

ACS is a large nonprofit organization, headquartered in Atlanta, Georgia. Regional and local offices support 11 different divisions. Daniel Heist, volunteer and board member of ACS, along with Catherine Mickle, chief financial officer, presented information about ACS. Heist began his presentation with the ACS mission statement:

As a nationwide, voluntary community health organization, the American Cancer Society is dedicated to eliminating cancer as a major public health problem by preventing cancer, saving lives, and diminishing suffering from cancer through research, education, advocacy, and service.2

______________

1The definitional text provided to respondents on the 1996–1997 NSF Nonprofit R&D Survey is discussed in more detail in Chapter 5 and provided in Box 5-1.

In Heist’s view, the mission statement is particularly important from a fiduciary perspective because it guides their work. By focusing on this mission, the board and staff of ACS have worked together to identify and approve seven priority areas: lung cancer and tobacco control; nutrition and physical activity; colorectal cancer; breast cancer; cancer treatment and patient care; access to care–public policy; and global health. ACS ensures research dollars are directly tied to these priority areas in order to drive the greatest impact.

ACS conducts both intramural and extramural research programs, but research itself is not a priority area; rather, it is a functional area. Catherine Mickle described it as “a tool to drive us to the desired outcomes in these particular areas.” However, she also shared that the ACS board has debated many times over the years whether research should be a focus area rather than a means to an end. Its extramural research program (more than $100 million) funds research housed at universities, hospitals, and other similar facilities. Mickle said ACS additionally engages in activities that could be categorized as research in its “cancer control efforts.” The main focus of this presentation, ACS’ intramural research program, fits well within the NSF definition of research, she stated.

The ACS intramural research program itself is guided by a set of articulated priorities linked to the overall mission of the organization (stated by Heist above). The research efforts are targeted toward areas where they believe they will have the greatest impact, such as contributing to the science about common cancers and known and emerging risk factors. Some research targets policy, community, and behavioral interventions, where known causes of cancer, such as smoking, exist. ACS conducts research on access to care and quality of care, as well as the psychosocial and support needs of patients and caregivers. Global tobacco control and the international cancer burden are growing areas of research. Finally, ACS also devotes research efforts toward evaluating the effectiveness of its own policies and programs.

______________

2The American Cancer Society mission statement: http://www.cancer.org/aboutus/whoweare/acsmissionstatements [December 2014].

These research priorities are housed within five different intramural research program areas or departments: surveillance and health services; economic and health policy; statistics and evaluation; behavioral; and epidemiology. A management team coordinates these departments. Altogether, ACS spent more than $21 million in 2013 on the intramural research program, including management costs. Approximately $3 million was directed toward surveillance and health services; $1.5 million each toward economic and health policy, statistics and evaluation, and behavioral research; and $6 million on epidemiology research. One-third, or $7 million, of the total amount was spent on an ACS cancer prevention study project. Overall, 86 highly trained staff work to manage and conduct this intramural research program.
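The spending figures above can be tallied as a quick consistency check. The Python sketch below sums the itemized amounts and compares them with the reported total. It assumes, although the source does not say so explicitly, that the six listed amounts do not overlap. All figures are the approximate values given in the text, in millions of dollars, and the variable names are illustrative.

```python
# Approximate 2013 ACS intramural research spending, in millions of dollars,
# as reported in the presentation summary.
# ASSUMPTION: the six listed amounts are treated as non-overlapping.
reported_spending = {
    "surveillance and health services": 3.0,
    "economic and health policy": 1.5,
    "statistics and evaluation": 1.5,
    "behavioral research": 1.5,
    "epidemiology": 6.0,
    "cancer prevention study project": 7.0,
}

# The text says "more than $21 million", so 21.0 is only a lower bound.
reported_total_floor = 21.0

# Sum of the amounts that the text itemizes.
itemized_total = sum(reported_spending.values())

# Minimum amount of the reported total that is not itemized in the text.
unitemized_floor = reported_total_floor - itemized_total

# Share of the (lower-bound) total spent on the cancer prevention study project.
cps_share = reported_spending["cancer prevention study project"] / reported_total_floor

print(f"Itemized total: ${itemized_total:.1f} million")
print(f"Unitemized (at least): ${unitemized_floor:.1f} million")
print(f"Cancer prevention study share: {cps_share:.0%}")
```

Under these assumptions the itemized amounts come to $20.5 million, leaving at least $0.5 million of the reported total unitemized. The text indicates only that the total includes management costs.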

Mickle provided two examples of products that have resulted from ACS intramural research. For the first, the ACS surveillance and health services research department developed current incidence and mortality rates for various forms of cancer by gender. The accompanying publication, Cancer Facts and Figures (American Cancer Society, 2014), is widely used across the health care community, according to Mickle. In addition, the surveillance team reports on actual incidence by state and develops projections of future incidence and mortality.

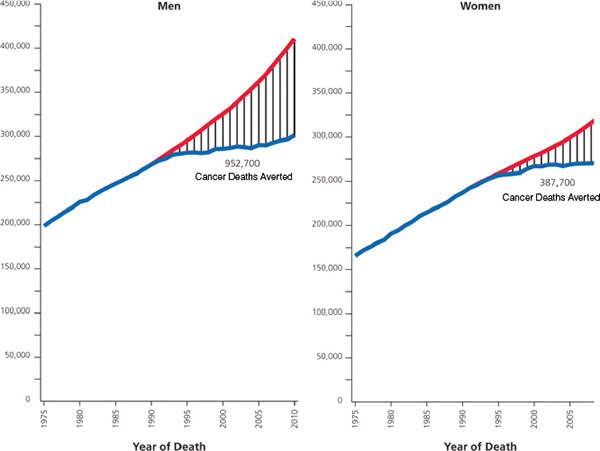

A second example shows cancer deaths averted through known interventions by gender, as shown in Figure 3-1. The graph shows a compari-

FIGURE 3-1 Total number of cancer deaths averted by declines in cancer death rates from 1991 to 2010 in men and from 1992 to 2010 in women.

SOURCE: Mickle and Heist (2014).

son between actual deaths from cancer and projections of the number of deaths that would have occurred if the cancer community had not intervened with proven ways to prevent cancer. The graph, prepared jointly by the epidemiology and surveillance teams, is “an important example to show how we’re using our intramural research efforts to coordinate and drive change, and perhaps in some circumstances our way of delivering our products and service in support of our mission,” she said, adding ACS seeks to increase “most lives saved” in the shortest period of time.

Other examples of ACS’ intramural research include work on tobacco tax policy, which includes analysis of trade policies, and tobacco control, as well as nutrition and physical activity and their direct linkages with cancer. The statistics and evaluation department serves as the internal analysis group. They assist with planning, as well as study and survey design. The behavioral research group addresses issues around survivorship, quality of life, health equity, and tobacco cessation.

Half of all of ACS’ intramural research staff works in the epidemiology department and focuses on cancer prevention. Heist described four significant areas where ACS research has had an impact. The first of these was the Hammond Horn study, which led to the 1964 Surgeon General report on the impact of tobacco. Next were the longitudinal Cancer Prevention Studies (CPS) 1 and 2, in which volunteers were interviewed about a range of factors and behaviors, and followed over time. CPS 1 was conducted from 1959 to 1972 and helped in showing the harmful effects of secondhand smoke and the ineffectiveness of low-tar nicotine cigarettes. CPS 2, initiated in 1982 and still ongoing, has been useful thus far in identifying a link between obesity and cancer, as well as other nutritional and physical activity factors. Finally, the fourth area is CPS 3, which began in 2006. This study, involving more than 300,000 participants, extends beyond interviews to involve the collection of blood samples. Heist estimates that approximately $37 million has been spent on CPS 3 to date, in part because of the costs of adequately controlling collected specimens, and stated that it could cost $125 million or more over the life of the project. Despite these costs, however, Heist stated that ACS feels that this investment is worthwhile for its potential impact. In Heist’s words, “saving lives is what drives our program and what we’re doing.”

Lutheran Social Service of Minnesota

Lutheran Social Service of Minnesota (LSSM) is a nonprofit organization with more than 2,300 employees who provide a broad array of community services across their state. Jodi Harpstead, chief executive officer of LSSM, described their work and how they approach and use research. According to Harpstead, LSSM is one of the oldest and largest social service providers in the state of Minnesota. Serving 1 of every 65 Minnesotans, LSSM operates 23 different lines of service in every county. The mission statement for LSSM is

Lutheran Social Service of Minnesota expresses the love of Christ for all people through service that inspires hope, changes lives, and builds community.3

The organization is also part of a parent organization, Lutheran Services in America (LSA), which comprises more than 300 organizations across all 50 states and the Caribbean. Altogether, LSA accounts for $21 billion in human services across the country and serves 1 in every 50 Americans. State-level organizations within the LSA umbrella vary in size, primary funding source, and breadth of services offered. However, most of the services that LSA offers target older adults and people with disabilities who will need support in their communities for the rest of their lives. Such services address ongoing needs and generally not problems that can be “definitely solved,” and thus are often of less interest to, and attract less funding from, social philanthropists, Harpstead said.

LSSM receives 84 percent of its revenue from government sources; philanthropy accounts for 9 percent, with the remainder coming from client fees. LSSM takes pride in being a careful steward of its resources, and, according to a study conducted 10 years ago, has a reputation for being trustworthy, Harpstead said. Despite this reputation, Harpstead stated that she is experiencing pressures to demonstrate through research the results of her organization’s activities. One barrier to conducting this research, however, is the need for resources to implement it. As CEO, she must consider how to allocate resources in ways that will help both with fulfilling their mission and attracting more resources. For LSSM, reputation and the “politics of social policy” have had a much greater effect on revenue than have the results from research regarding program effectiveness.

Harpstead also shared that measuring the impact of the organization has inherent challenges. In her words, “How do you measure racism? How do we account for the shift of the global economy and factory jobs from Minnesota to China while trying to prove the value of our efforts to provide employment and housing services? How do you measure the effectiveness of our financial counselors when there is a multi-billion dollar for-profit industry devoting itself to convincing people to take out easy payday loans to support their families?” Nevertheless, LSSM conducts quarterly research to measure client outcomes and key performance indicators.

______________

3The Lutheran Social Service of Minnesota’s mission statement: http://www.lssmn.org/About-Us/ [December 2014].

In addition to the internal research used in evaluating the services provided, LSSM makes use of other research sources, such as research conducted by the University of Minnesota and a local foundation that conducts social services research. In addition, LSA convenes its member organizations annually to network and discuss best practices. Furthermore, LSSM is a member of other national groups and associations that also share important information and research results. The accreditation process involved in maintaining membership in one or more of these groups also serves as a way that LSSM studies and documents its own work. LSSM also makes use of national-level data from organizations, such as the Corporation for National and Community Service, Lutheran Immigration and Refugee Services, and National Adoption Association. Harpstead stated, “I hope this does not leave the impression that because we are not funding millions of dollars of research inside and outside our organization that our work is not informed by research. There is a lot of third-party work . . . and other ways for us to get a hold of good research information that informs our work design and program implementation.”

LSSM recently contracted an outside firm to conduct literature reviews and interviews and to analyze state-level data in order to document how “life is currently” for people with disabilities and then to design how it ought to be. This work was represented in a graphic used by the state to plan for people with disabilities. Similar work has focused on older adults, as well as homeless youth. LSSM also works as part of a coalition to help transform how people with disabilities live in Minnesota. For example, they are working to help people move from group homes into their own apartments, and out of sheltered workshops and into paid employment with good wages and benefits. Harpstead commented, “I emphasize this piece a lot because it may not be what you in the room might have thought of as research or even think of now as research, but it has prompted an amazing social change across the State of Minnesota that is really affecting the lives of how people live and work in the state. It was started with a whole lot of research and now we are transforming our services as a result.”

Harpstead shared other ways that research occurs at and for LSSM. LSSM participates in a number of pilot studies, such as helping caregiving spouses use iPads to document isolation and depression. An individual doing a fellowship with LSSM completed a study of accountable care organizations (ACOs) across six states. LSSM plans to measure health care metrics as they create an ACO in Minnesota with multiple disability service providers. Other research activities include data mining of information collected through call centers, peer quality assessments of group homes, and certain mental health counseling activities. These data are primarily used for program evaluation.

Although LSSM gathers, conducts, and uses research in a variety of ways, Harpstead stated the nonprofit does not have a research department or any line in the general ledger for R&D. Instead, senior directors at LSSM are expected to carry out and/or find the research results they need from other sources in order to ensure continued best practices. Harpstead noted revenue directed toward R&D could be modeled or estimated, and she said LSSM likely devotes 0.5 percent of its revenue to research.

The language that LSSM would use to describe its research efforts includes data-driven design, data mining, and program evaluation. As Harpstead said, “We have never called it R&D until we were invited to this conference.” Overall, Harpstead stated that her organization is considering how it could do more and “make a difference in our ability to fulfill our mission and improve services for people in Minnesota.”

LeadingAge

Robyn Stone, senior vice president for research at LeadingAge and executive director of the LeadingAge Center for Applied Research (CFAR), presented her views on R&D at LeadingAge. LeadingAge, formerly called the American Association of Homes and Services for the Aging, changed its name several years ago to reflect its expanded mission:

The mission of LeadingAge is to expand the world of possibilities for aging.4

According to Stone, the organization represents an array of services among 6,000 members, including nursing homes, assisted living, adult day home, community-based services, and many low-income senior housing providers. CFAR “brings a breadth of knowledge and experience to a wide variety of research areas. The center has earned a national reputation for its ability to translate research findings into real-world policies and practices that improve the lives of older Americans and their caregivers” (LeadingAge, n.d.).

Stone noted the emphasis on applied research as evident in the mission statement was something she enacted in her role as executive director of CFAR when she came to LeadingAge 15 years ago. The prior LeadingAge executive president valued the personal stories of their members and did not share an interest in data and research, but Stone stated that “evidence-based data is what helps us to move forward in terms of development and best practice . . . CFAR is really about bridging the worlds of policy, practice and research.” She added her experience as a trained researcher involved in governmental intramural research and research in the private sector informed her commitment to seeing and initiating the opportunity for LeadingAge to serve as a natural laboratory.

______________

4The mission statement for LeadingAge: http://www.leadingage.org/About_LeadingAge.aspx [December 2014].

Research is conducted at LeadingAge in CFAR, but clinical, applied, and internal research also happens at the provider level among many members. Some member providers also partner with academic health centers, she noted, which raises the possibility of double-counting these activities through NSF’s survey of R&D in higher education.

LeadingAge has 7 to 15 staff members and a $5 million budget. The budget also includes an additional internal source of funds of $500,000. LeadingAge pays salaries for the positions of the executive director, administrative staff, and a portion of some researchers’ time. Members have also contributed approximately $500,000 toward an innovation fund. Most of LeadingAge’s funding comes from federal contracts and grants with multiple agencies and various private foundations, sources that change over time.

Stone highlighted one project to illustrate what R&D looks like at LeadingAge. Over the past 10 years, LeadingAge has worked to develop a new model of housing and services for low-income seniors. The project originated from a desire among members to measure whether an enriched service portfolio was making a difference in resident outcomes. They also wanted to know whether they were saving Medicaid and Medicare dollars, and/or whether they were stopping evictions to better maintain properties. The research began with case studies and a literature review. Later, working with the U.S. Department of Health and Human Services (DHHS), they convened expert panels and workshops around the country to identify key issues. Ultimately, LeadingAge partnered with a research-contracting firm to create a database of information regarding low-income seniors, matching administrative data from the DHHS with Medicare and Medicaid claims from DHHS’ Centers for Medicare & Medicaid Services (CMS) for 12 jurisdictions around the country. These data enable LeadingAge to report and compare this population to others living in the community.

The data have indicated that this subpopulation—living in low-income, publicly subsidized senior housing—is sicker and has higher needs than many other segments of the population, including peers living in the surrounding communities. This knowledge has led to the focus on housing services, keeping people in their communities as long as possible, preventing evictions, avoiding movement into nursing homes, and avoiding costly hospital admissions—ultimately producing Medicare and Medicaid savings. LeadingAge used the research findings to develop a model of housing that centers on a service coordinator along with a wellness nurse.

This model is being tested in 80 housing properties across Vermont as part of a statewide Medicare coordinated care payment demonstration project. Each housing provider receives a per-person-per-month Medicare payment for service coordination, representing the first time that Medicare has paid for services in housing. LeadingAge and RTI International have partnered to conduct an evaluation of this service coordinator model. Initial results after 1 year of the program show reductions in the rate of growth in Medicare costs when compared with a control group with similar demographic characteristics.

Stone indicated that they also have ongoing development projects, including a learning collaborative of 12 housing providers around the United States that partner with health care and social services of various sizes and types. Over the past 18 months, this group has engaged in data sharing, problem sharing and solving, and development of resident assessment tools for housing providers to use. In addition, LeadingAge helped this collaborative implement three new evidence-based practices focused on reducing depression, managing chronic disease self-care, and preventing falls.

Another initiative, recently funded by AARP, involves the creation of a toolkit to help housing providers partner with health care providers in their local communities to jointly achieve better health care outcomes and cost savings to the Medicare and Medicaid programs. LeadingAge’s Center for Housing Services conducts both qualitative and quantitative research. It publishes its work but also ensures that findings are accessible through trade publications, its website, and conference presentations. Other initiatives focus on CQI (continuous quality improvement) in nursing homes, the future of the geriatric workforce, and the exploration of a model of social investment bonds to support housing and services. For the latter project, LeadingAge is also working to initiate a research evaluation component to determine return on investment and any cost savings to Medicare and Medicaid.

According to Stone, “One of the things that nonprofits can do is to help solve some of these problems. While our association and our members are concerned about the bottom line, we are not as constrained by the profit motive as, for example, are our peers at the American Health Care Association, which represents primarily for-profit nursing homes. We are actually able to stretch out a little bit more and look at more of the innovation out there. I think that is what nonprofits can bring.” However, she noted that nonprofits often lack the research expertise, as well as adequate funding to conduct rigorous research, which can be quite costly to do. Adding to this problem is diminishing federal funds for this work, she said, and a desire by foundations to fund programs rather than research.

Stone ended by noting this lack of appetite for research funding means that research work is often couched in program development work.

Prince William Regional Beekeepers Association

Karla Eisen discussed the nature of R&D for the Prince William Regional Beekeepers Association (PWRBA), and how its R&D activities changed its culture and practices. PWRBA has 125 volunteer members and is a member of a state beekeeping association, composed of regional associations. The association strives to

- provide a forum for the exchange of ideas and views of mutual interest to beekeepers;

- provide education on the practical aspects of beekeeping and encourage the use of better and more productive methods in the apiary;

- foster cooperation between members of the association;

- promote understanding and cooperation between the association and the community with regard to beekeeping; and

- promote the use of hive and honey products.5

PWRBA operates with a budget of less than $5,000, with the exception of two grants. The grants funded research and the subsequent implementation of beekeeping practices that had become, said Eisen, “a lost art.” According to Eisen, over the past 25 years, beekeeping has become dependent on a commercial and agricultural model that produces boxes of packaged bees with which to start new colonies. These bees, including a queen bee, are packaged in the southern United States and shipped north, where the weather may be excessively cold, snowy, or rainy. The ability to develop new colonies has always been integral to the beekeeping process, but has become even more important in recent years with the spread of Colony Collapse Disorder. Eisen described how PWRBA has worked to change the existing model of starting new colonies to something that is more sustainable. The members learned to develop nucleus colonies—miniature hives that they made themselves. The organization also learned how to raise queen bees to distribute to its members. It did research to determine whether this approach was more effective than importing packaged bees from warmer climates.

This project originated at a state regional meeting when concerns were raised about bees dying in large numbers, coupled with the risks associated with importing Africanized bees. Research showed that large proportions of the bees coming to the Northern Virginia area were from Africanized bee areas. According to Eisen, “We had a vision. We wanted to develop a locally available and sustainable source of bees. We wanted to learn to make our own bees. We wanted to do education, training, and mentoring. We wanted to promote what is called Integrated Pest Management. We wanted to do outreach and education to the community. And most importantly, we wanted to just change the way we conducted business. We wanted to reduce our dependence on importing these packaged bees.”

______________

5Prince William Regional Beekeepers Association’s website: http://pwrbeekeepers.com/ [December 2014].

In 2009, PWRBA applied successfully for a $15,000 Sustainable Agricultural Research and Education (SARE) grant6 from the U.S. Department of Agriculture (USDA) to conduct research on developing nucleus colonies. The SARE Grant Program targets farmers and producers, and requires that grant recipients conduct research, followed by outreach and education to the community. The research involves developing a hypothesis, and collecting, analyzing, and presenting the data. SARE itself also supports dissemination and outreach through its online database of projects.

In 2012, PWRBA applied for a second grant, this one through the USDA Specialty Crop Block Grant Program. This program is designed to enhance the competitiveness of specialty crops. PWRBA was awarded a grant to study and learn queen bee rearing. In Eisen’s view, “Those are development funds. They ask you for performance measures. You have to speak that language. It is not research, but clearly provides funds for development activities. These grants do not go to individual farmers, but only to associations.”

These projects targeted production of a product, locally raised nucleus hives or “nucs,” to be distributed to the students that PWRBA teaches each year as well as existing beekeepers in the region. In doing so, Eisen and her colleagues focused their efforts toward meeting the distribution and training goal, with the secondary goal of finding out if their methods would produce stronger bees. PWRBA proceeded in several steps:

- Beginning with a year-long pilot project to plan and educate, prior to implementation.

- Conducting an experiment comparing colonies started from packaged bees to those started from nucs.

- Raising queens and tracking their performance to identify the best breeders.

- Conducting education and outreach programs.

______________

6The Sustainable Agricultural Research and Education (SARE) Program within the U.S. Department of Agriculture awards grants with the mission “to advance—to the whole of American agriculture—innovations that improve profitability, stewardship and quality of life by investing in groundbreaking research and education.”

“Experiment” was the word chosen to communicate about the research to the individuals who would be raising and tracking their colonies. Organizing the experiment into three different groups further facilitated comparing different sources of queen bees.

Data collection involved capturing information about the weather, flower-bloom, indicators of hive health and productivity, and interventions by the beekeepers. The beekeepers reported their data monthly over a year via SurveyMonkey. The individual beekeepers also gave summaries and recommendations based on their data and experiences. At the same time, PWRBA conducted numerous trainings and educational programs.

Eisen offered her perspective on the nature of this work in the following manner: “I was always very clear to call it citizen science, and I still call it citizen science. But even in our little baby research project, we did collect data. We did have descriptive statistics. This grant was $15,000. That was like a million dollars to our little beekeeping association. It was a lot of money to spend. I do think that is an issue for small nonprofits.” She added she was aware that the research lacked full scientific rigor, and involved many variables and experience levels. For example, weather and location varied among the colonies. Despite these limitations, however, their results are being replicated by other beekeeping organizations doing similar work in many areas of the country.

Results from their work indicated that the colonies started from nucs had a much better survival rate, and following these colonies for an additional year revealed an even larger difference favoring nucs over packaged bees (see Table 3-1). This work also led to increased knowledge and an ability to increase the production of colonies. In 1 year, PWRBA was able to quadruple the number of nucs it produced. Within 3 years they were able to produce enough nucs to support the entire student class and many existing beekeepers as well as to produce queen bees.

This project “has completely changed the way that we operate,” stated Eisen. PWRBA has eliminated the use of packaged bees completely and provides locally produced mini-hives to beekeepers instead. It has helped others learn to produce their own queens. It has continued its efforts in education and outreach, seeking additional funding for those efforts. The Southern SARE mobile display now highlights beekeeping with nucleus colonies as part of sustainable agriculture. Eisen herself shared her knowledge of raising queens with the White House beekeeper.

Eisen concluded her remarks by sharing her views on whether the term “research and development” would resonate with her organization. “I would have to give a resounding no to that,” she said. As someone who works with others in agriculture, she noted that they identify with the terms “testing” or “experimenting” much more than with the word “research.” Eisen believes that her organization did development work; however, she observed, even in the crop specialty block grant program, the words “development” and “research” are not used. When she polled 30 members of her organization on what words they would use to describe this work, only two individuals, the team leaders, responded. They offered that they collected data, had a hypothesis, had results, published a report, created new knowledge, and enabled product delivery. Eisen concluded that there are many other organizations like her own within the agriculture community.

TABLE 3-1 One- and Two-Year Hive Survival, by Source of Starter Hive

| Group | Package-Started Hives (Number Started = 22): Number Survived Sept | Package-Started Hives: Number Survived Oct | Nuc-Started Hives (Number Started = 23): Number Survived Sept | Nuc-Started Hives: Number Survived Oct |
| --- | --- | --- | --- | --- |
| A | 8 | 4 | 7 | 6 |
| B | 4 | 2 | 7 | 6 |
| C | 3 | 0 | 5 | 2 |
| Total | 15 | 6 | 19 | 14 |
| Survival Rate | 68% | 40% | 83% | 74% |

SOURCE: Eisen (2014).
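The survival rates in Table 3-1 can be reproduced from the counts, which helps make the denominators explicit. The minimal sketch below assumes the first column for each hive source is the first-year count and the second is the second-year count; the function and variable names are illustrative, and the cumulative two-year rate is a derived figure that does not appear in the table. Under that reading, the table's second-year rates appear to be computed relative to the hives that survived the first year, not relative to the hives originally started.

```python
# Counts transcribed from Table 3-1 (Eisen, 2014); names are illustrative.
started = {"package": 22, "nuc": 23}
survived_first = {"package": [8, 4, 3], "nuc": [7, 7, 5]}   # groups A, B, C
survived_second = {"package": [4, 2, 0], "nuc": [6, 6, 2]}  # groups A, B, C


def pct(numerator: int, denominator: int) -> int:
    """Percentage rounded to the nearest whole number."""
    return round(100 * numerator / denominator)


def survival_rates(source: str) -> dict:
    first = sum(survived_first[source])
    second = sum(survived_second[source])
    return {
        # Share of started hives alive at the first count (as in the table).
        "first": pct(first, started[source]),
        # Share of first-count survivors alive at the second count (as in the table).
        "second_given_first": pct(second, first),
        # Share of started hives alive at the second count (derived, not in the table).
        "cumulative": pct(second, started[source]),
    }


for source in ("package", "nuc"):
    print(source, survival_rates(source))
```

Read this way, the cumulative two-year figures are roughly 27 percent for package-started hives and 61 percent for nuc-started hives, which is consistent with the larger second-year difference described in the text.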

Hillside Family of Agencies

Maria Cristalli, chief strategy and quality officer for Hillside Family of Agencies (HFA), offered her perspective on R&D. She began with HFA’s mission statement:

Hillside Family of Agencies provides individualized health, education, and human services in partnership with children, youth, adults, and their families through an integrated system of care.7

______________

7The mission statement for Hillside Family of Agencies: http://www.hillside.com/Generic.aspx?id=142 [December 2014].

HFA is known for providing services to children and families over a 177-year history, but Cristalli said significant changes, such as the Affordable Care Act, affect how the organization now operates. Medicaid dollars from New York State constitute approximately 40 percent of the current budget, and by January 2016, HFA and other traditional providers of children’s behavioral health services will be embracing epic change as the New York State Department of Health intends to have all children’s Medicaid services under managed care. These shifts led HFA’s executive team to extend the organization’s services to adults through an integrated system of care.

HFA operates primarily in central and western New York and Prince George’s County, Maryland, offering a wide array of services. Of HFA’s total budget of $140 million, Cristalli estimated that approximately 1 percent is spent on “what you would characterize as research activities.” HFA has approximately 2,300 staff across various service locations.

HFA’s strategic intent statement, adopted in 2007, states: “Hillside Family of Agencies, in partnership with youth, families, and communities, will be the leader in translating research into effective practice solutions that create value (outcomes/cost.)” When this strategic intent was first launched, staff initially expressed concern that HFA would shift from being service-oriented to being research-oriented, moving away from a focus on helping people. This was not the case, however, according to Cristalli. Instead, she said HFA “wants to be specific and intentional about the services we are providing. The application of the most effective treatments and the measurement of outcomes to inform practice is our organizational goal. It is important that we understand outcomes relative to cost.” The outcomes of interest to HFA focused on enduring changes in the lives of the people it serves.

Cristalli then described the process that HFA developed to achieve the strategic intent. The process begins with deriving value from the data collected, while targeting very clearly defined outcomes. It identified benchmarking as an important process, using data combined with anecdotal stories to determine “best in class” service provision. Next, for certain programs, it planned to use a higher level of data gathered through research, program evaluation, and predictive analytics.

A key step in enacting this vision was bringing research expertise and leadership into the organization. Hiring a research director proved challenging, and HFA learned that few similar nonprofit organizations had internal research departments. Further, it was unsuccessful in identifying someone who could understand and communicate effectively with both researchers and practitioners. Ultimately, Cristalli explained, HFA formalized a contract in 2009 with a department within the School of Social Work at the University of Buffalo to “combine their two strengths, core competencies of practice at Hillside and research at this academic research institution, to create a strategically focused research function at Hillside.”

Since the inception of the partnership, HFA has invested $800,000 in it and continues to renew the arrangement. The model for this research partnership involves staff, parents, and young people, who help to determine projects and research questions. This model of research, the Hillside-UB (HUB) model, has been documented, including in a journal article published in 2012 in Research on Social Work Practice (Dulmus and Cristalli, 2012).

Through the HUB model, researchers examined HFA’s organization and management to determine readiness for change and research. This helped HFA determine a baseline of organizational climate and readiness to implement evidence-based practices across 120 different services. Other capacity-building steps included developing a field unit that included interns as research assistants to doctoral students conducting research, and developing an internal, federally registered Institutional Review Board (IRB) to review projects. The HFA IRB complements the IRB at the University of Buffalo and focuses on benefits and risks for the young people served by HFA. Cristalli shared that as HFA has expanded research partnerships with other institutions, they have come to see themselves as “in a transition from being only a service provider to also being a knowledge purveyor. We are now sharing and disseminating what we learn in the literature through invited book chapters and peer-reviewed publications.”

Cristalli illustrated HFA’s mix of service provision and research by describing the Hillside Work-Scholarship Connection (HW-SC) in Prince George’s County, Maryland, and upstate New York. The program, funded by a variety of foundations and public-private partnerships, targets young people who are at risk of not graduating from high school in the school districts it serves. The services provided to these young people include academic support, job-readiness training, family engagement, year-round enrichment activities, postsecondary support, and youth advocate mentoring. Research has indicated that participation in the program, along with part-time employment, improves the graduation rate from 50 percent (the rate of comparable students in the school district) to 90 percent for HW-SC students employed by an employment partner. According to Cristalli, this equates to an $11 return to the community and investors for every $1 invested. This program has also brought acclaim to HFA. HFA was recently named to the S&I 100 list8 of organizations by the Social Impact Exchange for its use of rigorous evaluation and research and its ability to replicate results.

______________

8The S&I 100 is an index of top nonprofits creating social impact, created by the Social Impact Exchange (http://www.socialimpactexchange.org/exchange/si-100 [December 2014]).

A key element of the HW-SC is the use of predictive analytics to identify the target population. HFA used factors identified through previous research (such as low socioeconomic status, low standardized test scores, failing core courses, suspensions from school, poor attendance, and being over age for their grade) to identify students at risk of not graduating from high school. Research first addressed whether these particular risk factors were in fact meaningful in the districts in which the program would be implemented, and then determined whether they could be used effectively to identify students who would most benefit from the HW-SC service. “We are now looking at full population data to make better decisions about selection of the young people in partnership with the school districts where we are serving. There is just so much of a need and we want to be sure that we target the need appropriately,” Cristalli stated.

Some of the research at HFA has included quasi-experimental design. To ensure best practices, HFA has hired outside evaluators to evaluate the HW-SC several times over the past 10 years. However, the data analytics work to identify the target population of the program has been done internally by HFA’s business intelligence staff, who continue to partner with a researcher at the University of Buffalo. HFA employs five business intelligence staffers and a full-time PhD-level research coordinator. Prior to using this data-driven process to target participants, Cristalli said, HFA merely recruited interested young people and checked their qualifications against the list of risk factors. Now, HFA uses district-level data to select participants.

Data indicate that the identified risk factors do in fact predict the likelihood of graduating from high school. Using these data, HFA has been able to develop a model of probability of graduating with 75 percent accuracy, Cristalli explained. The model showed that the HW-SC Program would be most effective for students with between a 15 and 79 percent likelihood of graduating. Students above that range were predicted not to need the program, and students below that range were predicted to need more intensive services than what the program would offer. This data-driven process has changed the practices of HW-SC, Cristalli shared. It uses full population data and works in partnership with schools to recruit students to increase the impact of its program.
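The targeting rule Cristalli described can be illustrated with a short sketch. It is not HFA's model: the predictive model itself is not described in the workshop, so the sketch assumes a predicted graduation probability is already available for each student, and the function name, band labels, and the treatment of the 15 and 79 percent endpoints as inclusive are all assumptions made for illustration.

```python
from typing import Literal

Band = Literal["more_intensive_services", "hwsc_target", "not_predicted_to_need"]

# Band endpoints quoted in the text; treating them as inclusive is an assumption.
LOWER = 0.15
UPPER = 0.79


def targeting_band(p_graduate: float) -> Band:
    """Map a predicted probability of graduating to a hypothetical targeting band."""
    if not 0.0 <= p_graduate <= 1.0:
        raise ValueError("p_graduate must be a probability between 0 and 1")
    if p_graduate < LOWER:
        # Predicted to need more intensive services than the program offers.
        return "more_intensive_services"
    if p_graduate > UPPER:
        # Predicted to graduate without the program.
        return "not_predicted_to_need"
    return "hwsc_target"


for p in (0.10, 0.50, 0.90):
    print(p, targeting_band(p))
```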

Cristalli closed by reflecting on how HFA would respond to questions about R&D. “When you say research and development . . . we think more about program development. It is more about product or service development. We do not use those terms [research and development] together,” she said. Cristalli added that across the organization, staff are increasing their comfort with and the use of data to make decisions.

Mote Marine Laboratory

Michael Crosby, president and CEO of Mote Marine Laboratory in Florida, presented his perspective on R&D in the nonprofit sector. He began by sharing the history of the organization. According to Crosby, Dr. Genie Clark founded Mote 60 years ago because of her passion for shark research. Partnerships were developed with local shark fishermen. Philanthropy came first from the Vanderbilt Family and later from William Mote.

Mote Marine Laboratory’s main campus is located in Sarasota, Florida, with seven campuses around Florida and the Florida Keys. It is a diverse organization but is “first and foremost a research institution, a comprehensive research institution,” said Crosby. He added that Mote also conducts significant amounts of public education and outreach. Half of the 200 staff is focused on science, approximately 33 of whom hold doctorates. A cadre of volunteers also supports the research efforts. The research began with shark research, but now extends to 24 different research programs, such as coral reef ecology and microbiology, ocean acidification, sea turtle conservation and research, and phytoplankton ecology. The work extends around the world, on six continents.

Mote is guided by a strategic plan and a vision statement. The vision statement is as follows:

Mote Marine Laboratory will expand our leadership in nationally and internationally respected research programs that are relevant to conservation and sustainable use of marine biodiversity, healthy habitats and natural resources. Mote research programs will positively impact a diversity of public policy challenges through strong linkages to public outreach and education.9

In addition to this vision for 2020, Mote’s strategic plan focuses on four main priorities centered around world-class research, translation and transfer of research and technology, and public service. Mote scientists have produced about 3,500 peer-reviewed publications. In addition to this focus on disseminating scientific findings to the research community, Mote also maintains a commitment to translating and transferring research knowledge through an aquarium, which serves as an informal science education center. More than 350,000 people visit the center each year, including 29,000 precollegiate students who visit through structured programs.

______________

9The strategic vision for Mote Marine Laboratory: http://mote.org/about-us/mission-vision [December 2014].

Crosby emphasized that Mote is a private nonprofit organization that does not operate as a part of any governmental agency or university, although it has many partnerships with such entities. Through these partnerships, it also offers connections for undergraduate and graduate students to be engaged in research with Mote scientists. In the past 5 years, more than 100 graduate students have conducted research for theses or dissertations at Mote, with Mote scientists serving as mentors. Recently, the Florida State Legislature appropriated funds to Mote to provide such research experiences to students from local universities. Furthermore, Mote has developed a postdoctoral program aimed at “recruiting the next generation of scientists,” which is funded entirely through philanthropic donations. By 2020, Mote plans to have up to seven of these 2-year fellowships.

Mote has a $20 million annual operating budget, half of which comes from competitive research grants from entities such as the National Institutes of Health, the National Science Foundation, and the U.S. Department of Defense, explained Crosby. The remainder comes from a combination of philanthropy, which includes membership fees, and net positive revenues from the aquarium. Overall, Mote is funded entirely by “soft money,” and staff do not have contracts or tenured positions. According to Crosby, that way of operating “makes us very entrepreneurial. Because we are independent, we have research freedom as well, but it comes at a price. The price is we basically eat what we kill if you will. We have to bring the money in or we cannot provide positions there. Philanthropy is a huge piece of what enables Mote to do what it does.” Mote maintains very little bureaucracy and prides itself on remaining responsive and nimble. For example, when the BP Deepwater Horizon oil spill occurred in 2010, Mote was one of the first environmental responders, because the president of Mote immediately authorized it.

Crosby noted that Mote’s individual proportion of the total R&D budget for “Other nonprofits” in NSF’s 2014 Science and Engineering Indicators report is approximately one-tenth of 1 percent; however, collectively with other large marine research institutions such as the Monterey Bay Aquarium Research Institute and Woods Hole, these institutions can perform a significant amount of R&D with philanthropy playing an increasing role.

CHALLENGES

The presentations from representatives of the six different nonprofit organizations and the discussions that followed shed light on a number of complexities within the nonprofit sector that pose challenges for the design of the NSF Nonprofit R&D Survey, according to Lester Salamon and other participants. They identified five challenge areas, shown in Box 3-1. The remainder of this chapter addresses these challenges in more detail. Chapters 4 and 5 further the discussion by identifying ways, suggested by participants, that NSF could address these challenges in the design of its survey.

BOX 3-1

Key Challenges for the Design of the NSF Nonprofit R&D Survey, as Identified Through Workshop Discussions

- Understanding the diverse and unique nature of R&D in the nonprofit sector.

- Using the correct language for communication about R&D.

- Accounting for the interconnections among nonprofits.

- Identifying the correct respondents.

- Understanding the financial and labor resources within nonprofits.

Understanding the Diverse and Unique Nature of R&D in the Nonprofit Sector

One of the primary reasons the workshop steering committee set up presentations by individuals from nonprofit organizations was to learn about the types of activities that might constitute R&D in this sector. As their representatives reported, the organizations vary in the extent to which research is a distinct activity versus being embedded within their programmatic activities. Raymond described the important “functional role of research” at many of these organizations, regardless of whether a research department, division, or budget line item exists.

Paul David commented on nonprofit R&D within a broader context. He stated that many economists view R&D “primarily as an indicator of investment in inventive activity, which is in turn an input into a larger stream of processes, which come under the heading of innovation.” These innovative processes are key sources of economic growth, and ultimately potential sources for the improvement of human welfare and well-being. David observed that the nonprofit sector is emblematic of the new service sector, an emerging sector of the economy and one that is highly information intensive. As such, its products are not physical, but rather new information services designed to have an impact. Innovations in this sector are placed in a residual category of other products, rather than the technological, physical, and process innovations that lend themselves to patenting. These issues are growing in importance in an economy increasingly centered around digital products and processes, he noted. In David’s view, the present undertaking to produce new baseline measures of R&D in the broader nonprofit sector should be recognized as an important opportunity with valuable long-term “spill-over” benefits of two kinds. It can illuminate the diverse functional roles played by R&D and the modes in which these activities are performed by information-intensive service organizations. Additionally, it can be used to explore and test novel quantitative indicators of aggregate volume, distribution and durability of R&D investments in the growing “new services” sector. The measurement task that NSF is to carry out should be approached with its potential for yielding broader “pilot project” payoffs in mind, said David.

Irwin Feller, professor emeritus of economics at Pennsylvania State University and member of the workshop steering committee, suggested that many of the activities described by the presenters may be included or excluded in the R&D survey depending upon the extent to which NSF is interested in measuring activities around evaluation, applied research, program testing, and data collection. He suggested that data collection in the absence of a hypothesis being tested is not research. Feller stated, “The challenge for NSF, I think, in designing this survey is how tightly they adhere to the existing definition of R&D, or how flexible or accommodating they are in encompassing the multitude of activities that these organizations do.” Ron Fecso, a consultant and member of the workshop steering committee, noted that this poses a difficult issue for NSF to consider, but added that in industry, quality control activities are not considered research. Therefore, he argued, to the extent that program evaluation is for the purpose of ensuring the quality of services and conducting market research, it is not necessarily research. He stated that “really clear definitions as to where that line gets drawn may be very important.” Stone added that excluding program evaluation would result in excluding most nonprofits. Feller indicated his belief that program evaluation should be included because, particularly in social science research, program evaluation constitutes a way to gather data that can be used to test theories.

Stone summarized another issue regarding the nature of R&D, commenting, “I think one of the questions is what you do about translational research. I do not call implementing an evidence-based practice as research, but I do call evaluating the implementation of evidence-based research with good science around it to see whether it worked or did not work as research.” Translational research is critical to nonprofits that tend to frame their activities this way, taking what they learn and using it to change practice or policy, she argued. Stone also raised the question about whether using data in feedback loops or conducting market research would be the type of activities pertinent to the NSF survey of R&D. Finally, she suggested that, from her perspective, the terms “research and development” together constitute a certain type of activity. As she stated, “I think R&D is a specific thing and not research. Development is again something else. R&D is research and development.” Several other participants said all research activities should be counted, even those that lacked quality or rigor.

Salamon summarized his views on this topic by stating, “First of all, I was blown away by these presentations because what I think they demonstrated pretty powerfully is that there is something very important happening in the nonprofit world in conducting systematic data-gathering and research. Whether we come up with the right words for it or not, this trend is moving the sector in the direction of evidence-based decision making. And how fully that counts as ‘research’ in the terms that have been used to define R&D in NSF surveys is worth debating.” But Salamon and several others noted that nonprofits are doing important work worth capturing; he urged the group to find new ways to capture these activities. One participant suggested that nonprofits are qualitatively different from many other sectors, and that these qualitative aspects of their activities should be captured and not just quantified. He added that these differences should inform the survey design, noting that in some cases nonprofit institutions spin off technology into for-profit ventures.

Using the Correct Language for Communication about R&D

A second key challenge raised by many participants was identifying the correct terminology to use to ask nonprofit organizations about their R&D activities. They said the discussions at this workshop make clear that the traditional terminology of R&D does not work in many nonprofit organizations, and the way the nonprofits themselves think about their activities and the words they use for those activities can affect how they respond to survey questions. Among the alternative terms that participants suggested were applied research, evidence-based decision making, translational research, data mining, testing, capturing information, or experimenting.

Harpstead and others suggested that a clear definition at the beginning of the survey of what is meant by “research” would be necessary for organizations such as hers to answer questions about these activities. She added that some of her colleagues at other organizations might consider documenting their annual outcomes as research, whereas, she observed, the planned survey seems to be targeting research that tests hypotheses. In Harpstead’s words, “perhaps you have to start your survey with a clear definition of what you call research and then ask how many of us do that. You get a very different answer than if you say, ‘Do you do research in your nonprofit?’”

Mickle argued that the definition needs to be put in “plain English” for respondents. She added that the breadth of the question and types of activities included could affect how easily she could identify the staff, volunteers, and other resources who do those activities, because they may cut across many areas of her organization. This would require making estimates of what percentage of time various staff members spend on activities that constitute R&D.

One potential way of incorporating language to help communicate the distinctions about the nature of R&D in the nonprofit sector would be to ask respondents a series of questions, suggested Michael Larsen, professor of statistics at George Washington University and member of the workshop steering committee. For example, he noted, the Current Population Survey asks multiple questions, rather than a single question, to determine whether someone is active in the labor force, and if so, unemployed. Similarly, a series of questions may help to tease apart the subtle distinctions between research done for evaluation and research done for other purposes, he stated. Responses to these questions can be used to determine whether particular activities would be counted for the purposes of the survey. According to Larsen, “you might not end up with a single estimate. You might end up with ‘This is the estimate if we are strict. This is the estimate if we include a little bit more.’”
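Larsen's idea of reporting more than one estimate can be sketched in a few lines. The example below is illustrative only: the follow-up questions, field names, and dollar figures are hypothetical and were not proposed at the workshop, and sampling weights are omitted for simplicity. It shows how answers to a series of questions could yield both a strict total (activities that test a hypothesis) and a more inclusive total (adding program evaluation).

```python
from dataclasses import dataclass


@dataclass
class Response:
    org: str                  # hypothetical respondent label
    spending: int             # dollars reported for the activity
    tests_hypothesis: bool    # hypothetical follow-up question 1
    program_evaluation: bool  # hypothetical follow-up question 2


def estimates(responses: list[Response]) -> dict[str, int]:
    """Total reported spending under a strict and a more inclusive definition."""
    strict = sum(r.spending for r in responses if r.tests_hypothesis)
    inclusive = sum(
        r.spending
        for r in responses
        if r.tests_hypothesis or r.program_evaluation
    )
    return {"strict": strict, "inclusive": inclusive}


# Made-up responses, for illustration only.
sample = [
    Response("Org A", 15_000, tests_hypothesis=True, program_evaluation=False),
    Response("Org B", 40_000, tests_hypothesis=False, program_evaluation=True),
    Response("Org C", 5_000, tests_hypothesis=False, program_evaluation=False),
]
print(estimates(sample))
```

Keeping the answers to each question separate, as here, is what allows the definition to be tightened or loosened after data collection instead of being fixed in the wording of a single question.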

Salamon endorsed and expanded upon Larsen’s suggestion of using a series of questions. He suggested beginning with a lead question, and then following up with a series of prompts that include terms such as those listed above. Several participants suggested conducting a pilot study to test these terms. Feller reflected this view by noting, “It is such an important but fluid kind of issue that has such important impact, that it might be worthwhile just to test it.”

Donald Dillman, Regents Professor of Sociology at Washington State University and member of the workshop steering committee, observed that the way that an organization sees its purpose has an important effect on how it will respond to a question. For example, some organizations are highly rewarded for doing R&D and thus may work to include as many of their activities as possible in the survey; the opposite may be true for another organization whose board focuses on service delivery and does not support such activities. Cristalli agreed with Dillman, stating that this phenomenon occurs both among board members and staff members. Furthermore, she added, many traditional funders of nonprofits, including county and state governments, do not pay for research and want to be assured that such activities are not among those for which they provide funds.

Accounting for the Interconnections Among Nonprofits

A third challenge identified by many participants in surveying nonprofits is that many of these organizations are interconnected. This makes it difficult to identify which of them should be made eligible for sampling and leads to potential double-counting of reported R&D. Raymond drew attention to the range of partnerships that exist between organizations. They include partnerships between two or more nonprofits, or they may exist between a nonprofit and a corporation or an academic institution. Stone agreed and noted that nonprofits are more likely to engage in these types of partnerships than are for-profit entities. Nonprofits are more likely to need to be collaborative to pool resources, she said, whereas for-profit organizations are often more protective of their systems. Stone suggested that the survey might need to be able to detect some of these structural relationships.

As illustration, a number of nonprofit organizations are joined together as umbrella groups, consisting of a “lead” organization and many smaller member organizations. R&D activities may take place within some or all of the individual organizations (e.g., the Lutheran Social Service of Minnesota as a part of Lutheran Social Service of America), and the “lead” organization may or may not be aware of that research activity. Alternatively, the R&D is sometimes directed by the “lead” organization itself (LeadingAge), and the individual member organizations may or may not be participants. Harpstead cautioned that surveying individual organizations that are part of an umbrella group could result in counting a collaborative effort occurring within the umbrella group multiple times.

Salamon agreed with Harpstead and suggested that thinking of individual organizations as potential sampling units may not work for this NSF survey. Rather, he said, “maybe there is a way to short circuit it and to use a kind of wholesale approach. That would be, for example, going out to some of these umbrella groups and essentially subcontracting the surveying to them.” In essence, Salamon suggested that the “lead” organization in the umbrella group could survey its members about their research activities and what resources are devoted to them, while adding any research activities carried out by the “lead” organization itself. He argued that this could reduce potential double-counting of research efforts, and data about research efforts could be aggregated across an entire organizational structure (umbrella group).

The interconnections are even broader, several people pointed out. Some nonprofit organizations conduct research in-house through their intramural research programs. Others may provide extramural research funds to other organizations that will conduct the research. Some nonprofits, such as the American Cancer Society, have both types of research within their portfolio. According to Mickle, this issue could be significant for a number of nonprofit organizations.

Stone elaborated on Mickle’s observations, saying that because many nonprofit organizations are funded through grants, donations, and other “soft money,” the amount of and ways in which they are engaging in research will be variable, particularly among individual member organizations that are part of umbrella groups. Some of these organizations have their own research institutes, while others do not. She added that some of this research would be through partnerships with academic institutions.

Several workshop participants expressed serious concerns that the interconnected networks and ways of conducting research within the nonprofit sector could exacerbate the likelihood of double-counting research efforts in the NSF survey. Collaborations, partnerships, and networks increase the risk of double-counting, suggested Salamon. One way that this can occur is when the nonprofit is a research funder, but counts funded research as part of its R&D at the same time that the funded entity also reports this same activity. Different members of an umbrella group may report the same research and the same resources applied to that research. The ways in which government agencies and academic institutions report their R&D activities may result in double-counting, as well. According to Stone, this may be an issue for foundations that fund a great deal of research. As she stated, “their output is our input.” Salamon reminded the group that the survey was intended to focus on the performers of research, rather than the funders. Dillman said careful wording of the survey was needed to avoid confusion on that point.
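The double-counting mechanisms described above, and the performer-based focus Salamon mentioned, can be made concrete with a small sketch. The record layout, organization names, and amounts are hypothetical; the sketch assumes each report identifies both the reporting organization and the organization that performed the work, which an actual survey instrument might or might not capture.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    reporter: str   # organization filling out the survey
    project: str    # hypothetical project identifier
    performer: str  # organization that carried out the research
    amount: int     # dollars reported for the project


def naive_total(reports: list[Report]) -> int:
    """Sum every report, counting funders and performers alike."""
    return sum(r.amount for r in reports)


def performer_total(reports: list[Report]) -> int:
    """Count each project once, and only as reported by its performer."""
    seen: set[tuple[str, str]] = set()
    total = 0
    for r in reports:
        if r.reporter != r.performer:
            continue  # a funder or umbrella lead reporting work done elsewhere
        key = (r.project, r.performer)
        if key in seen:
            continue  # the same performer reported the same project twice
        seen.add(key)
        total += r.amount
    return total


# Made-up reports: a foundation and its grantee both report project P1,
# and an umbrella lead and its member both report project P2.
reports = [
    Report("Foundation F", "P1", "Nonprofit N", 100_000),
    Report("Nonprofit N", "P1", "Nonprofit N", 100_000),
    Report("Umbrella Lead L", "P2", "Member M", 50_000),
    Report("Member M", "P2", "Member M", 50_000),
]
print(naive_total(reports), performer_total(reports))
```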

Salamon indicated that addressing the challenge of the interconnections among the nonprofit sector begins with recognizing that some research is going on and “throwing the net broadly.” Even with this inclusive strategy, he said, a careful sampling plan with weighting is needed. “I do not think the argument that some are doing it intensely and some not so intensely at a particular moment in time is a reason not to go after this broader approach, but rather a reason to be pretty careful about the sampling and data collection strategies,” said Salamon.

Identifying the Correct Respondents

Identifying the correct respondent is a fourth key challenge for the survey, according to many participants. Mickle agreed and said that even within a single organization, responses to questions about R&D will vary depending upon who completes the survey. She explained that the ACS scientific research staff would answer one way and her market research staff another, because each group thinks of research differently. Eisen echoed this notion in her presentation; while her leadership team suggested that the beekeeping organization conducts ongoing research, Eisen disagreed because her standards for research differ from those of her colleagues. Stone expressed similar views.

Raymond emphasized that identifying the correct respondent was a critical issue and that the correct respondent might vary across organizations. In other words, she said, a person’s title may not be the ideal basis by which to select a respondent. Salamon added that a series of questions might be the best approach for this issue as well. As a possible solution, he suggested asking, “‘Who in your organization will know most about the following types of activities?’ as opposed to pre-selecting the individual that we ask to be a respondent. Let the organization determine within its own situation who the person or persons are that know about the things that we are asking about.”

Jeffrey Berry, professor of political science at Tufts University, said finding the right respondent is both challenging and costly, and would likely involve hiring staff to contact potential respondents by telephone. He suggested one possibility for implementing the survey would be “drawing the sample randomly and then arranging for specific people to participate. This would involve seeking an agreement with those respondents and providing them with a person they could come back to and ask questions if they run into trouble filling out the survey. The measurement problems are really very difficult here. This is a preferred alternative to just the random sample and throwing the surveys out in the mail.”

Further discussion of this topic is included in Chapter 5.

Understanding the Financial and Labor Resources within Nonprofits

Several participants pointed out that volunteers are a significant portion of the labor resources used by nonprofit organizations. Although participants did not discuss this topic at great length, determining how to count volunteer hours is a challenge for this survey, whose intent is to measure resources spent on R&D within the nonprofit sector. Salamon identified this issue in his summary remarks, stating, “If we leave out the role of volunteers, we are going to significantly undercount the economic value and implicit cost.” He added that this is an issue across sectors in terms of measuring labor, with a trend within the statistical community toward including “unpaid work . . . putting a value on it and bringing it into economic accounting.” He cited the example of organizations staffed entirely by volunteers, such as the Prince William Regional Beekeepers Association. Similarly, the American Cancer Society uses a large number of volunteers in recruiting participants for a large research study. Mickle said that ACS uses volunteers in many research-related roles, so whether to include this volunteer labor would significantly affect her estimates of labor devoted to research. She added that the donated services of volunteers are critical to ACS’ operations, and it would be important to quantify the value that these volunteers provide.

Counting paid versus unpaid staff is only one of the complexities that relate to how financial and labor resources devoted to R&D are counted in the nonprofit sector. Several participants raised other issues related to funding that may be pertinent to the NSF survey, such as the timing of funding and whether internal funds are available for research. In cases where research funding depends on grants, research activities could vary from year to year, noted Raymond. She also noted that philanthropists who donate funds often expect to see results within a shorter timeframe than many research studies require.

Crosby offered a different perspective on the role of philanthropy for nonprofits. In his view, philanthropy offers greater flexibility and room for risk-taking, which are often not valued or available through other sources. In addition, philanthropy is increasing and offers valuable resources at a time when government funding for scientific activities has been decreasing. Although some have voiced concern that this type of partnership between philanthropy and research may be a shift away from national priorities, in Crosby’s opinion, there is value in challenging existing paradigms and doing innovative research. Nonprofit research institutions are uniquely positioned for “being nimble, being entrepreneurial, for taking risks,” stated Crosby. Finally, he indicated he hoped a revamped survey of R&D in the nonprofit sector would allow for greater visibility for philanthropy and for the nonprofit sector.

CHAPTER SUMMARY

Presenters shared their experiences and perspectives on R&D at their nonprofit organizations. Many workshop participants voiced their opinions that nonprofit organizations are conducting research worthy of being captured in the NSF survey. However, as this chapter illustrates, the complexity of this sector presents a number of conceptual and methodological challenges to address in developing the NSF Nonprofit R&D Survey. Chapters 4 and 5 provide some guidelines suggested by presenters for meeting these challenges and designing the survey.
