5

Toward Efficient and Sustainable Delivery of Interventions

Delivery of evidence-based prevention on a large scale has required the development of measures to monitor implementation, including selection and adoption of evidence-based programs, training and technical assistance, fidelity monitoring, and other factors. Measurement of implementation factors can provide information related to the decision-making process for evidence-based prevention, quality of program or service delivery, sustainability of implementation, and influence of implementation factors on intervention outcomes. In the final panel of the workshop, four presenters talked about lessons learned from implementation monitoring and recommendations for moving the field forward given the need for sustained program quality to improve outcomes.

MEASURING IMPLEMENTATION OF EVIDENCE-BASED PREVENTION: LESSONS FROM COMMUNITIES THAT CARE

The National Research Council (NRC) and Institute of Medicine (IOM) report Preventing Mental, Emotional, and Behavioral Disorders Among Young People (2009) summarized the burgeoning knowledge base for prevention science. It noted that 40 years of prevention science research advances have produced a strong understanding of the epidemiology and etiology of problem behaviors, a wealth of efficacy trials that have tested preventive interventions, and research findings on how to build an effective infrastructure to use prevention science to achieve community impact.

“If only it would be so easy,” said Richard Catalano, the Bartley Dobb Professor for the Study and Prevention of Violence and co-founder of the Social Development Research Group in the School of Social Work at the University of Washington. Despite the research advances that have occurred, prevention approaches that do not work or have not been evaluated are much more widely used than are evidence-based programs (Ringwalt et al., 2009). “The challenge for the 21st century is how can we build a prevention infrastructure to increase the use of tested and effective prevention policies and programs with fidelity and impact at scale,” Catalano said.

A major difficulty in overcoming this challenge is that communities are different from one another, and communities need to decide locally what policies and programs they use. Overcoming this difficulty requires building the capacity of local coalitions to reduce common risk factors for multiple negative outcomes, according to Catalano, which in turn requires several actions:

- Assessing and prioritizing epidemiological levels of risk, protection, and problems

- Choosing proven programs that match local priorities

- Implementing chosen programs with fidelity to those targeted

Catalano used the Communities That Care (CTC) program as an example of this approach. CTC is a proven method to build community commitment and capacity to prevent underage drinking, tobacco use, and delinquent behavior, including violence. Developed in 1988, the program underwent 15 years of implementation and improvement through community input prior to being tested in a randomized controlled trial involving 12 pairs of matched communities across 7 states from Maine to Washington. The positive effects of the program have been independently replicated in a statewide test in Pennsylvania.

CTC has succeeded by building a prevention infrastructure, said Catalano. It first creates citizen coalitions of diverse stakeholders, including key leaders, elected officials, judges, faith leaders, parents, educators, and business leaders. These groups assess and prioritize risk, protection, and behavior problems with a student survey. They address locally prioritized risk with tested and effective prevention programs that are matched to those priorities. Then they support and sustain high-fidelity implementation of these chosen programs.

The trial of CTC provided funding, training, and fidelity and reach monitoring for the selected programs, along with funding for a community coordinator and training. It also provided weekly phone technical assistance and two site visits per year. Assessment surveys are done every 2 years.

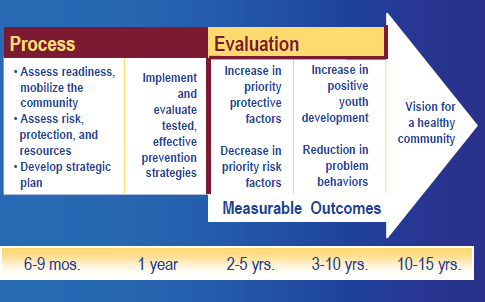

FIGURE 5-1 The Communities That Care timeline. The timeline calls for measurable outcomes 2 to 5 years after the process begins.

SOURCE: Catalano, 2014.

The timeline for implementation encompasses the process, evaluation, and measurable outcomes (see Figure 5-1). Milestones and benchmarks assess the key components of CTC’s strategy, including goals, steps, actions, and conditions needed for CTC implementation to build prevention infrastructure. The milestones and benchmarks are listed in CTC training manuals and discussed in training workshops, incorporated into the community coordinator’s job performance objectives, and reviewed by technical assistance providers and coordinators during weekly phone calls.

As an example of phase 1, readiness for CTC, Catalano cited the milestone of “the community is ready to begin CTC,” with the more specific benchmark “A key leader ‘champion’ has been identified to guide the CTC process.” For phase 5, implementing the Community Action Plan, a milestone is “implementers of evidence-based programs, policies, or practices have the necessary skills, expertise, and resources to implement with fidelity,” with the benchmark “implementers have received needed training and technical assistance.”

The CTC implementation has been maintained with fidelity over time, Catalano noted. The percentage of milestones completed across communities and raters ranged between 83 percent and 91 percent in the fifth year (Fagan et al., 2009).

A second measurement instrument is the CTC youth survey, which assesses young people’s experiences and perspectives in the sixth, eighth, tenth, and twelfth grades. It identifies levels of risk and protective factors for substance use, crime, violence, and depression at the state, district, city, school, or neighborhood levels and provides a foundation for the selection of tested and effective actions.

The selection of programs based on the survey results presents a challenge to measurement strategies, noted Catalano, because it generates the need to evaluate fidelity across a range of programs while continuing to encourage local ownership, high fidelity, and the sustainability of prevention programs. All CTC sites are expected to achieve a high level of fidelity, including

- Adherence—Implementing the core content and components

- Delivery of sessions—Implementing the specified number, length, and frequency of sessions

- Quality of delivery—Ensuring that implementers are prepared, enthusiastic, and skilled

- Participant responsiveness—Ensuring that participants are engaged and retaining material

To measure fidelity, assessment checklists were obtained from developers or created by research staff. The checklists were completed by program staff and coalition members, reviewed locally, and analyzed at the University of Washington. These checklists showed high levels of implementation adherence and participant responsiveness, said Catalano.
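As an illustration only, the four fidelity dimensions listed above could be summarized from completed checklists along the following lines. This is a minimal sketch, not the CTC instrument: the `SessionChecklist` fields, the 1-to-5 rating scale, and the way session count and session length are combined into a single delivery score are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class SessionChecklist:
    """One completed fidelity checklist (hypothetical layout)."""
    core_items_required: int   # core content items specified for the session
    core_items_delivered: int  # items the implementer actually covered
    minutes_planned: int       # specified session length
    minutes_delivered: int     # actual session length
    quality_rating: int        # quality of delivery, assumed 1-5 scale
    engagement_rating: int     # participant responsiveness, assumed 1-5 scale


def summarize(checklists, sessions_planned):
    """Return one score per fidelity dimension, each scaled to 0-1."""
    n = len(checklists)
    adherence = (sum(c.core_items_delivered for c in checklists)
                 / sum(c.core_items_required for c in checklists))
    # Delivery: share of planned sessions held times share of planned time used
    # (an arbitrary way to combine number and length of sessions).
    sessions_share = min(n / sessions_planned, 1.0)
    time_share = min(sum(c.minutes_delivered for c in checklists)
                     / sum(c.minutes_planned for c in checklists), 1.0)
    return {
        "adherence": adherence,
        "delivery": sessions_share * time_share,
        "quality": sum(c.quality_rating for c in checklists) / (5 * n),
        "responsiveness": sum(c.engagement_rating for c in checklists) / (5 * n),
    }


example = [
    SessionChecklist(10, 9, 60, 60, 4, 5),
    SessionChecklist(10, 8, 60, 45, 5, 4),
]
scores = summarize(example, sessions_planned=2)
```

Keeping the four dimensions as separate scores, rather than collapsing them into one number, mirrors the way the dimensions are listed separately in the text.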

Catalano concluded with the following recommended actions:

- Build capacity and provide tools (such as the CTC milestones and benchmarks) to achieve effective prevention infrastructure.

- Build capacity and provide tools to assess and prioritize local risk, protection, and youth outcomes; match priorities to evidence-based programs; and repeat assessment periodically.

- Build capacity and provide tools to ensure program fidelity and engagement of target population.

- Create citizen–advocate–scientists to affect risk, protection, substance use, delinquency, and violence community wide.

CTC has been able to get communities to choose the right programs, implement them with fidelity, and achieve positive outcomes, Catalano said. Furthermore, creating citizen-scientists has built advocacy for evidence-based prevention at the local level. These citizens are in the best position to support evidence-based practices in their communities, the best choices for children and families, and the scaling up of evidence-based prevention.

In response to a question, Catalano pointed to the interplay that can occur between states and local communities, with each interested in what is happening at the other level. For example, communities can be the impetus to get a state involved, or a two-way feedback loop can result in modifications to programs at both levels.

THE STAGES OF IMPLEMENTATION COMPLETION

A growing body of measures targets key aspects of implementation, including organizational culture and climate, organizational readiness, leadership, attitudes toward evidence-based practices, and the use of research evidence. However, there remains a gap in the measurement of the implementation process itself, said Lisa Saldana, senior research scientist at Oregon Social Learning Center. This gap encompasses measures of the implementation process, including the rate of implementation, implementation activities, and patterns of implementation behavior, along with measures of implementation outcomes, including milestones and penetration.

Implementation of evidence-based practices entails extensive planning, training, and quality assurance, Saldana noted. It also requires a complex set of interactions among developers, system leaders, frontline staff, and consumers, who ultimately have to buy in to whether the interventions meet their needs. This is a recursive process of well-defined stages or steps that are not necessarily linear, said Saldana.

Little is known about which methods and interactions are most important for successful implementation. In addition, little is known about whether and how the process influences successful outcomes. “Just because this is the way we have been doing it does not necessarily imply that that is what is necessary,” said Saldana.

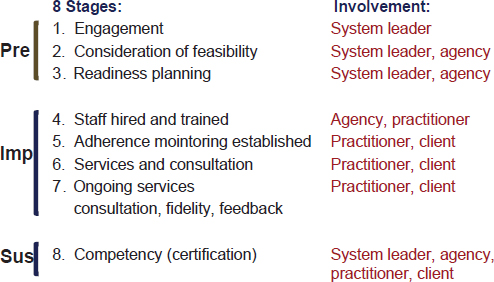

The Stages of Implementation Completion (SIC) was developed as part of an implementation trial focused on the scale-up of the Multidimensional Treatment Foster Care (MTFC) intervention (Chamberlain et al., 2011). Fifty-three sites in California and Ohio involved in youth foster care were observed over the entire implementation process. Some sites dropped off along the way, providing an opportunity to observe the factors that influenced how far they got in the implementation process. A tool was developed to measure the rate and thoroughness of implementation. Eight stages of implementation were measured, from engagement to competency. The tool was not only data driven but date driven, said Saldana, in that activities were tracked along with the dates on which they occurred. The SIC assesses the implementation behavior of agents at different levels. The eight stages are grouped into three phases (pre-implementation, implementation, and sustainment), each involving different levels of agents (see Figure 5-2).

FIGURE 5-2 The eight Stages of Implementation Completion. The stages are divided into pre-implementation, implementation, and sustainment phases.

SOURCE: Saldana et al., 2011.

The SIC yields three overall scores. The first is duration, or the amount of time that it takes to complete a stage. The second is proportion, or the share of recommended activities that are completed. The third is the stage score, which is how far along within the implementation process an agency or organization has progressed.
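The three SIC scores can be made concrete with a short sketch. It is an illustration under stated assumptions, not the published scoring procedure: the `Activity` record, the choice to measure duration from the first to the last completed activity within a stage, and the rule that the stage score is the furthest stage with at least one completed activity are all hypothetical simplifications.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Activity:
    """One recommended implementation activity (hypothetical record)."""
    stage: int                     # stage number, 1 through 8
    completed_on: Optional[date]   # None if the site never completed it


def stage_scores(activities, stage):
    """Return (duration in days, proportion completed) for one stage."""
    in_stage = [a for a in activities if a.stage == stage]
    dates = [a.completed_on for a in in_stage if a.completed_on is not None]
    proportion = len(dates) / len(in_stage) if in_stage else 0.0
    # Assumption: duration runs from the first to the last completed activity.
    duration = (max(dates) - min(dates)).days if dates else None
    return duration, proportion


def final_stage(activities):
    """Furthest stage in which the site completed at least one activity."""
    done = [a.stage for a in activities if a.completed_on is not None]
    return max(done) if done else 0


site = [
    Activity(1, date(2014, 1, 6)),
    Activity(1, date(2014, 1, 20)),
    Activity(1, None),
    Activity(2, date(2014, 2, 3)),
    Activity(2, None),
    Activity(3, None),
]
stage_one = stage_scores(site, 1)   # 14 days, two of three activities done
reached = final_stage(site)         # this site got as far as stage 2
```

Storing a completion date for every activity is what makes both the duration and the proportion recoverable from the same records, which reflects the "date driven" design described for the tool.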

The recursive nature of implementation makes it impossible to look at each stage across the entire SIC, said Saldana. Agencies might be in more than one stage at a time, or they might also be going backward because of feedback from stakeholders. Rasch-based modeling helps account for these difficulties, said Saldana. This modeling has demonstrated reliability across all eight stages. It also has demonstrated face validity while identifying three clusters of sites based on their pre-implementation behavior: those that complete activities relatively quickly, those that are relatively slow, and noncompleters. Finally, it has demonstrated predictive validity in that sites that both took longer to complete each stage and completed fewer activities had a significantly lower hazard of successful program start-up during the study period (Saldana et al., 2011). “You have to hit a little bit of a sweet spot,” said Saldana. “You don’t want to go too fast. You also don’t want to go too slow. You don’t necessarily need to complete all of the activities, but there are some critical key activities that, if you skip, your chances of success are going to be decreased.”

These initial results have been successfully replicated in other sites. In a sample of 75 recent MTFC interventions, sites were successfully clustered, failed sites spent significantly longer in pre-implementation than successful sites, and sites that took longer to complete stages one through three had a significantly lower hazard of successful program start-up (Saldana, 2014).

In response to a question, Saldana noted that the pre-implementation process is also key, in that it predicts competencies that are needed for the sustainment phase. And during the sustainment phase, all levels of agents need to be involved for sustainability within a community, with ongoing dialogue and feedback between programs and policy makers.

Saldana and her colleagues are now trying to adapt the SIC to other programs and service sectors. Will similar utility be found, she asked. Is there a universality in implementation? One finding from a much broader study of interventions in schools, child welfare, juvenile justice, and substance abuse is that there are more similarities than differences in the types of activities they are including in their implementation strategies (Saldana et al., 2014). As a result, a universal SIC was being developed at the time of the workshop, and “preliminary studies of the universal SIC psychometrics are very promising,” according to Saldana. This could lead to a standardized method of measuring implementation processes and milestones across different practices and service sectors, which could make it possible to detect sites that are at risk for implementation failure and evaluate alternative implementation strategies.

The SIC can reliably distinguish poor from good performers, Saldana concluded. It can reliably distinguish between different implementation strategies and provide meaningful prediction of implementation milestones. Results from the SIC also can provide data-driven evidence that can be used to engage in conversations with high-level systems leaders, who also have a powerful influence on implementation. For example, Saldana and her colleagues have been developing an interactive website that can provide high-level systems leaders with information on the value of key elements in the implementation process.

IMPLEMENTING PROGRAMS WITH FIDELITY

When programs that were developed in academia under well-controlled conditions are transferred into the community, they are not necessarily implemented the same way they were while being developed. Marion Forgatch, senior scientist emerita at the Oregon Social Learning Center and executive director of Implementation Sciences International Inc., illustrated this process by discussing implementations of the Parent Management Training–Oregon (PMTO) model, which provides interventions to parents to help protect children and enhance their development (Forgatch and Patterson, 2010). Randomized controlled trials in a variety of sample populations have linked parenting practices not only with positive outcomes for children but also with improved outcomes for the parents.

The program has been implemented in a number of locations through a process known as full transfer, in which control of the program is placed into the hands of the community. As part of a broader infrastructure, a governing authority is tasked with sustaining model fidelity and effective treatment outcomes. This process starts with a visionary leader or group committed to effecting lasting change, said Forgatch. This person or group needs strong social and political capital and the resources to support the necessary structure. A leader also needs longevity; “They are not here today and gone tomorrow,” said Forgatch. “It starts at the top, but it is sustained at the bottom by the families and the practitioners who are satisfied with the methods and the outcomes.”

The full transfer approach has tremendous capacity to improve reach, because each new generation of practitioners who are trained can in turn train other practitioners. For example, from a first generation of 29 practitioners trained in Norway, more than 1,000 have now been trained in an intervention provided to families in clinics. “We spend a lot of time training the first generation,” said Forgatch. “These are the people who carry the program forward, so they have to know the model, and they have to know the procedures really well.” The program has been implemented throughout the United States, in Europe, and in locations in Africa and Latin America.

The implementation process is evaluated through an instrument called the fidelity of implementation (FIMP). This measure is based on direct observation of therapy sessions, with ratings by observers of practitioner adherence to the model and competence. Ratings are in five categories:

- Knowledge—Proficiency in understanding and applying core components

- Structure—Session management, pacing/timing, responsiveness

- Teaching—Promotes mastery, use of role play, problem solving

- Process—Clinical and strategic skills, supportive context for learning

- Overall—Growth, satisfaction, likely return, adjust context, difficulty

Sessions are scored to evaluate training and certification, to track drift across and within generations of practitioners, and to assess mechanisms of change. Only certified therapists are able to score FIMP, which ensures that competent adherence to the model is being properly assessed.

Technology is a central feature of the evaluation. A database and web portal known as FIMP Central hosts videotaped sessions, which coders can view and rate. The ratings are retained on the portal so that supervisors of the coding teams can assess reliability. Online and in-person meetings further enhance the reliability of assessments.

FIMP has several uses, including

- Teaching tool for training and coaching

- Evaluation of training and certification

- Evaluation of drift across generations

- Evaluation of drift within a generation

- Assessment of mechanisms, such as whether fidelity predicts improved parenting and whether fidelity predicts improved parent outcomes

In the last category, for instance, Forgatch cited a recent study showing that fidelity assessed during treatment predicts change in observed parenting before and after treatment and change in child outcomes (Forgatch and Domenech Rodríguez, in press). “We are very pleased with those findings. It said, ‘Yes, our fidelity measure is indeed predicting the mechanism of change in our model.’”

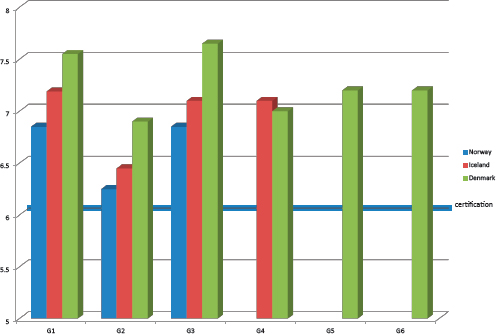

FIGURE 5-3 Implementation fidelity over generations of trainers. Though implementation fidelity declined in the second generation of trainers, it did not drop below the certification level, and fidelity recovered in subsequent generations.

NOTE: X-axis shows trainer generation number, Y-axis depicts implementation fidelity level.

SOURCE: Forgatch, 2014.

FIMP also has been used to study the fidelity of implementation over successive generations of training. These results showed that fidelity does drop in the second generation of training, but it then recovers as successive generations of trainers improve their training skills (see Figure 5-3).

MEASURING IMPLEMENTATION FIDELITY USING COMPUTATIONAL METHODS

In the past, measuring the fidelity of implementation has generally involved direct observation or the observation of recordings, which requires highly trained individuals and can be time consuming and costly. But the fidelity of implementation also can be measured using computational methods, said Carlos Gallo, a research assistant professor in the Department of Psychiatry and Behavioral Sciences at Northwestern University. He provided two examples as proofs of concept: one from a program known as Familias Unidas, and the other from audio recordings of an intervention known as the Good Behavior Game.

Familias Unidas is an evidence-based parent training intervention for Hispanic youth. It is delivered in family visits at home by a school counselor, generally in a bilingual context, where an adolescent or young adult is speaking English, a parent is speaking Spanish, and a school counselor is switching between the two. The goal of the computational analysis is to recognize the type of questions or statements the facilitator is making to the parents to engage them and communicate acceptance, trust, and respect. The evaluation focuses on an aspect of fidelity known as joining. Linguistic structures linked to joining include statements (such as “You like school.”), yes/no questions (such as “Do you like school?”), and open-ended questions (such as “Why do you like school?”). The more open-ended the question, the better the degree of joining.

Gallo and his colleagues wrote a program that scans for these linguistic patterns and then rates each sentence (Gallo et al., 2014). The program can identify different kinds of statements with a high degree of reliability compared with a human rater. A human rater, by contrast, costs approximately $800 per session, which, said Gallo, “becomes prohibitively expensive as local agencies want to pick up on these interventions and carry on the same work carefully.”
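To show what scanning for such patterns might look like in its simplest form, the following sketch sorts English sentences into the three structures named above. It is not the program described by Gallo and colleagues, whose rules are not given here. The word lists, the reliance on a closing question mark, and the English-only handling are assumptions for illustration, and a real bilingual session would need far more.

```python
import re

# Hypothetical word lists; a real system would use a fuller grammar.
WH_WORDS = {"what", "why", "how", "when", "where", "who", "which"}
AUXILIARIES = {"do", "does", "did", "is", "are", "was", "were", "can",
               "could", "will", "would", "have", "has", "should"}


def classify(sentence):
    """Label a sentence by its surface form."""
    words = re.findall(r"[a-z']+", sentence.lower())
    if not words or not sentence.strip().endswith("?"):
        return "statement"
    if words[0] in WH_WORDS:
        return "open-ended question"
    if words[0] in AUXILIARIES:
        return "yes/no question"
    return "other question"


def rate_session(sentences):
    """Count how often each sentence type occurs in a session."""
    counts = {}
    for sentence in sentences:
        label = classify(sentence)
        counts[label] = counts.get(label, 0) + 1
    return counts


session = ["You like school.", "Do you like school?", "Why do you like school?"]
summary = rate_session(session)
```

Because every sentence receives a label, a tally like `summary` covers the whole session rather than a sample of it.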

The second example he described is based on nonlinguistic cues. The Good Behavior Game is a universal classroom behavior management strategy for first grade teachers that has been shown to influence adolescent and young adult drug abuse, sexual risk behavior, delinquency, and suicidal behavior (Kellam et al., 2008). It calls for the intervention to be delivered by the teacher in a neutral tone, not an angry, frustrated, or sad tone. “You can imagine how challenging this can be when you are giving instructions to 40 kids that are running around in a classroom. You need to keep sane and speak neutrally.” Furthermore, speaking neutrally is a key competence in this intervention, though coaches for the intervention find that doing so is challenging.

In a laboratory test, Gallo and his colleagues have taken audio clips spoken by an angry person or a person speaking neutrally and have enabled a computer to learn about features of the frequency domain for these samples. By analyzing patterns in voice frequency, the computer can determine whether something is said in an angry or neutral way. The program is 98 percent accurate in distinguishing a neutral from an angry tone, and 87 percent successful in distinguishing a neutral from an emotional tone. “This is something that we can do in a split second throughout an hour session,” Gallo noted. Teachers then can be asked in the same session when and why an emotion started and how it progressed, rather than trying to remember days after a session was recorded.
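The general idea of learning from frequency-domain features can be sketched with a toy example. Everything in it is an assumption made for illustration: the single feature (spectral centroid), the nearest-average classifier, and above all the synthetic sine waves, which stand in for recordings and are not speech. The toy data simply assumes that the two labels differ in spectral centroid. It does not reproduce the features or the accuracy figures reported above.

```python
import cmath
import math


def spectral_centroid(samples, sample_rate):
    """Magnitude-weighted mean frequency (Hz), computed with a naive DFT."""
    n = len(samples)
    weighted = total = 0.0
    for k in range(1, n // 2):  # skip the DC bin, keep positive frequencies
        coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                    for t, x in enumerate(samples))
        magnitude = abs(coeff)
        weighted += magnitude * k * sample_rate / n
        total += magnitude
    return weighted / total if total else 0.0


def train(labeled_clips, sample_rate):
    """Average the feature per label; labeled_clips holds (label, samples)."""
    sums, counts = {}, {}
    for label, samples in labeled_clips:
        feature = spectral_centroid(samples, sample_rate)
        sums[label] = sums.get(label, 0.0) + feature
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}


def predict(model, samples, sample_rate):
    """Assign the label whose average feature is closest to this clip's."""
    feature = spectral_centroid(samples, sample_rate)
    return min(model, key=lambda label: abs(model[label] - feature))


def tone(freq_hz, sample_rate=800, n=200):
    """Synthetic stand-in for a recording: a pure sine wave."""
    return [math.sin(2 * math.pi * freq_hz * t / sample_rate) for t in range(n)]


RATE = 800
model = train([("neutral", tone(100)), ("neutral", tone(120)),
               ("angry", tone(240)), ("angry", tone(280))], RATE)
low_clip = predict(model, tone(108), RATE)
high_clip = predict(model, tone(260), RATE)
```

A production system would replace the naive DFT with a fast Fourier transform from a numerical library and would use many features over short time windows, which is what would allow tone to be tracked moment by moment through a session.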

Computational linguistics has several other advantages over human raters, Gallo noted. It can scan 100 percent of a session, not just a sample of that session, and it can do so on a much more detailed level. Eventually, such programs could be adapted for tablets or phones, where they could display summary statistics of what happened in a session. They can flag particular moments in a session for review and analysis. Facilitators can receive global assessments of their delivery of an intervention and compare themselves with peers. Such programs also could point to places where teachers or other facilitators are adapting an intervention for a particular circumstance, which could provide valuable lessons. “These facilitators know their community a lot better. They know what are the needs of the target audience.”

These techniques are developing very rapidly, Gallo noted, driven partly by the very wide range of applications for computational linguistics. For example, the programs they have developed could be applied to many other interventions because the linguistic processes they have analyzed occur in many contexts. Furthermore, as several workshop participants observed in the discussion session, resistance to such applications is diminishing as people become more accustomed to being recorded.

REFERENCES

Catalano, R. F. 2014. Measuring implementation of evidence-based prevention to improve impact and sustainability: Lessons from Communities That Care. Presented at Institute of Medicine and National Research Council Workshop on Innovations in Design and Utilization of Measurement Systems to Promote Children’s Cognitive, Affective, and Behavioral Health, Washington, DC.

Chamberlain, P., C. H. Brown, and L. Saldana. 2011. Observational measure of implementation progress in community based settings: The Stages of Implementation Completion (SIC). Implementation Science 6:116.

Fagan, A. A., K. Hanson, J. D. Hawkins, and M. Arthur. 2009. Translational research in action: Implementation of the communities that care prevention system in 12 communities. Journal of Community Psychology 37(7):809-829.

Forgatch, M. S. 2014. Fidelity: Preventing drift. Presented at Institute of Medicine and National Research Council Workshop on Innovations in Design and Utilization of Measurement Systems to Promote Children’s Cognitive, Affective, and Behavioral Health, Washington, DC.

Forgatch, M. S., and M. M. Domenech Rodríguez. in press. Interrupting coercion: The iterative loops among theory, science, and practice. In Oxford Handbook of Coercive Relationship Dynamics, edited by T. J. Dishion and J. J. Snyder. New York: Oxford University Press.

Forgatch, M. S., and G. R. Patterson. 2010. Parent Management Training–Oregon Model: An intervention for antisocial behavior in children and adolescents. In Evidence-Based Psychotherapies for Children and Adolescents, 2nd edition, edited by J. R. Weisz and A. E. Kazdin. New York: Guilford.

Gallo, C. G., H. Pantin, J. Villamar, G. Prado, M. Tapia, M. Ogihara, G. Cruden, and C. H. Brown. 2014. Blending qualitative and computational linguistics methods for fidelity assessment: Experience with the Familias Unidas preventive intervention. Administration and Policy in Mental Health. E-pub ahead of print. http://dx.doi.org/10.1007/s10488-014-0538-4 (accessed August 4, 2015).

Kellam, S. G., C. H. Brown, J. M. Poduska, N. S. Ialongo, W. Wang, P. Toyinbo, and H. C. Wilcox. 2008. Effects of a universal classroom behavior management program in first and second grades on young adult behavioral, psychiatric, and social outcomes. Drug and Alcohol Dependence 95:S5-S28.

NRC (National Research Council) and IOM (Institute of Medicine). 2009. Preventing mental, emotional, and behavioral disorders among young people: Progress and possibilities. Washington, DC: The National Academies Press.

Ringwalt, C., A. A. Vincus, S. Hanley, S. T. Ennett, J. M. Bowling, and L. A. Rohrbach. 2009. The prevalence of evidence-based drug use prevention curricula in U.S. middle schools in 2005. Prevention Science 10(1):33-40.

Saldana, L. 2014. The stages of implementation completion for evidence-based practice: Protocol for a mixed methods study. Implementation Science 9(43):1-11.

Saldana, L., P. Chamberlain, W. Wang, and H. Brown. 2011. Predicting program start-up using the Stages of Implementation Measure. Administration and Policy in Mental Health and Mental Health Services Research 39(6):419-425.

Saldana, L., J. Chapman, H. Schaper, and M. Campbell. 2014. Standardized measurement of implementation: The universal SIC. Presented at the 7th Annual Conference on the Science of Dissemination and Implementation, Bethesda, MD.