This chapter provides an overview of diagnosis in health care, including the committee’s conceptual model of the diagnostic process and a review of clinical reasoning. Diagnosis has important implications for patient care, research, and policy. Diagnosis has been described as both a process and a classification scheme, or a “pre-existing set of categories agreed upon by the medical profession to designate a specific condition” (Jutel, 2009).1 When a diagnosis is accurate and made in a timely manner, a patient has the best opportunity for a positive health outcome because clinical decision making will be tailored to a correct understanding of the patient’s health problem (Holmboe and Durning, 2014). In addition, diagnostic information often influences public policy decisions, such as setting payment policies, allocating resources, and establishing research priorities (Jutel, 2009; Rosenberg, 2002; WHO, 2012).

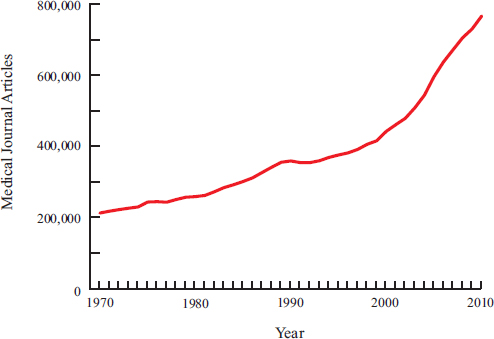

The chapter describes important considerations in the diagnostic process, such as the roles of diagnostic uncertainty and time. It also highlights the mounting complexity of health care, due to the ever-increasing options for diagnostic testing2 and treatment, the rapidly rising levels of biomedical and clinical evidence to inform clinical practice, and the frequent comorbidities among patients due to the aging of the population (IOM, 2008, 2013b). The rising complexity of health care and the sheer volume of advances, coupled with clinician time constraints and cognitive limitations, have outstripped human capacity to apply this new knowledge. To help manage this complexity, the chapter concludes with a discussion of the role of clinical practice guidelines in informing decision making in the diagnostic process.

______________

1 In this report, the committee employs the terminology “the diagnostic process” to convey diagnosis as a process.

2 The committee uses the term “diagnostic testing” to be inclusive of all types of testing, including medical imaging, anatomic pathology, and laboratory medicine, as well as other types of testing, such as mental health assessments, vision and hearing testing, and neurocognitive testing.

OVERVIEW OF THE DIAGNOSTIC PROCESS

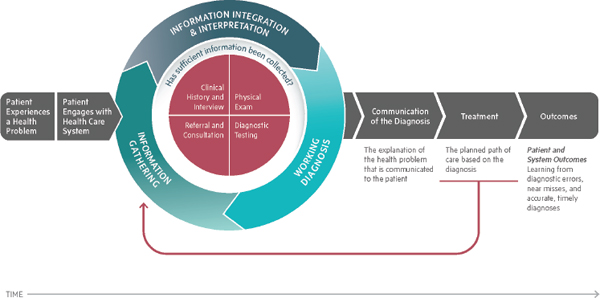

To help frame and organize its work, the committee developed a conceptual model to illustrate the diagnostic process (see Figure 2-1). The committee concluded that the diagnostic process is a complex, patient-centered, collaborative activity that involves information gathering and clinical reasoning with the goal of determining a patient’s health problem. This process occurs over time, within the context of a larger health care work system that influences the diagnostic process (see Box 2-1). The committee’s depiction of the diagnostic process draws on an adaptation of a decision-making model that describes the cyclical process of information gathering, information integration and interpretation, and forming a working diagnosis (Parasuraman et al., 2000; Sarter, 2014).

The diagnostic process proceeds as follows: First, a patient experiences a health problem. The patient is likely the first person to consider his or her symptoms and may choose at this point to engage with the health care system. Once a patient seeks health care, there is an iterative process of information gathering, information integration and interpretation, and determining a working diagnosis. Performing a clinical history and interview, conducting a physical exam, performing diagnostic testing, and referring or consulting with other clinicians are all ways of accumulating information that may be relevant to understanding a patient’s health problem. The information-gathering approaches can be employed at different times, and diagnostic information can be obtained in different orders. The continuous process of information gathering, integration, and interpretation involves hypothesis generation and updating prior probabilities as more information is learned. Communication among health care professionals, the patient, and the patient’s family members is critical in this cycle of information gathering, integration, and interpretation.
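The idea of updating prior probabilities as information accumulates can be illustrated with a small calculation. The following is a minimal sketch, not a method described by the committee: it applies Bayes' rule to a differential diagnosis, assuming the candidate diagnoses are mutually exclusive and exhaustive and that findings are conditionally independent given the diagnosis. The condition names, the function `update_differential`, and all probabilities are hypothetical values chosen only for illustration.

```python
def update_differential(priors, likelihoods):
    """Return posterior probabilities for each candidate diagnosis.

    priors:      dict mapping diagnosis -> probability before the new finding
    likelihoods: dict mapping diagnosis -> P(finding | diagnosis)
    Assumes the diagnoses are mutually exclusive and exhaustive.
    """
    unnormalized = {dx: priors[dx] * likelihoods[dx] for dx in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("The finding is impossible under every hypothesis.")
    return {dx: value / total for dx, value in unnormalized.items()}


# Hypothetical starting differential (illustrative numbers only).
differential = {"condition_a": 0.5, "condition_b": 0.3, "condition_c": 0.2}

# Each entry gives P(finding | diagnosis) for one round of information
# gathering, e.g., a history item followed by a test result.
findings = [
    {"condition_a": 0.2, "condition_b": 0.8, "condition_c": 0.5},
    {"condition_a": 0.1, "condition_b": 0.9, "condition_c": 0.3},
]

for finding in findings:
    differential = update_differential(differential, finding)

for dx, probability in sorted(differential.items(), key=lambda kv: -kv[1]):
    print(f"{dx}: {probability:.3f}")
```

In this sketch the initially less likely `condition_b` becomes the leading hypothesis after two rounds of information, which mirrors the way a working diagnosis is revised as the cycle of gathering, integration, and interpretation repeats. Real clinical reasoning rarely has such precise likelihoods available, so the numbers should be read as an aid to intuition only.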

The working diagnosis may be either a list of potential diagnoses (a differential diagnosis) or a single potential diagnosis. Typically, clinicians will consider more than one diagnostic hypothesis or possibility as an explanation of the patient’s symptoms and will refine this list as further information is obtained in the diagnostic process. The working diagnosis should be shared with the patient, including an explanation of the degree of uncertainty associated with a working diagnosis. Each time there is a

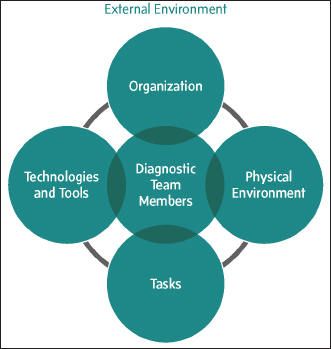

The diagnostic process occurs within a work system that is composed of diagnostic team members, tasks, technologies and tools, organizational factors, the physical environment, and the external environment (see figure on opposite page) (Carayon et al., 2006, 2014; Smith and Sainfort, 1989):

- Diagnostic team members include patients and their families and all health care professionals involved in their care.

- Tasks are goal-oriented actions that occur within the diagnostic process.

- Technologies and tools include health information technology (health IT) used in the diagnostic process.

- Organizational characteristics include culture, rules and procedures, and leadership and management considerations.

- The physical environment includes elements such as layout, distractions, lighting, and noise.

- The external environment includes factors such as the payment and care delivery system, the legal environment, and the reporting environment.

All components of the work system interact, and each component can affect the diagnostic process (e.g., a change in the physical environment may affect the usefulness and accessibility of health IT, and a change in the diagnostic team may affect the assignment of tasks). The work system provides the context in which the diagnostic process occurs (Carayon et al., 2006, 2014). There is a range of settings (i.e., work systems) in which the diagnostic process can occur—for example, outpatient primary or specialty care office settings, emergency departments, inpatient hospital settings, long-term care facilities, and retail clinics. Each of these includes the six components of a work system—diagnostic team members and tasks, technologies and tools, organizational factors, the physical environment, and the external environment—although the nature of the components may differ among and between settings. The six components of the work system and how they are related to diagnosis and diagnostic error are described in detail in Chapters 4–7.

revision to the working diagnosis, this information should be communicated to the patient. As the diagnostic process proceeds, a fairly broad list of potential diagnoses may be narrowed into fewer potential options, a process referred to as diagnostic modification and refinement (Kassirer et al., 2010). As the list becomes narrowed to one or two possibilities, diagnostic refinement of the working diagnosis becomes diagnostic verification, in which the lead diagnosis is checked for its adequacy in explaining the signs and symptoms, its coherency with the patient’s context (physiology, risk factors), and whether a single diagnosis is appropriate. When considering invasive or risky diagnostic testing or treatment options, the

diagnostic verification step is particularly important so that a patient is not exposed to these risks without a reasonable chance that the testing or treatment options will be informative and will likely improve patient outcomes.

Throughout the diagnostic process, there is an ongoing assessment of whether sufficient information has been collected. If the diagnostic team members are not satisfied that the necessary information has been collected to explain the patient’s health problem, or if the available information is not consistent with a diagnosis, then the process of information gathering, information integration and interpretation, and developing a working diagnosis continues. When the diagnostic team members judge that they have arrived at an accurate and timely explanation of the patient’s health problem, they communicate that explanation to the patient as the diagnosis.

It is important to note that clinicians do not need to obtain diagnostic certainty prior to initiating treatment; the goal of information gathering in the diagnostic process is to reduce diagnostic uncertainty enough to make optimal decisions for subsequent care (Kassirer, 1989; see section on diagnostic uncertainty). In addition, the provision of treatment can also inform and refine a working diagnosis, which is indicated by the feedback loop from treatment into the information-gathering step of the diagnostic process. This also illustrates the need for clinicians to diagnose health problems that may arise during treatment.

The committee identified four types of information-gathering activities in the diagnostic process: taking a clinical history and interview; performing a physical exam; obtaining diagnostic testing; and sending a patient for referrals or consultations. The diagnostic process is intended to be broadly applicable, including the provision of mental health care. These information-gathering processes are discussed in further detail below.

Clinical History and Interview

Acquiring a clinical history and interviewing a patient provides important information for determining a diagnosis and also establishes a solid foundation for the relationship between a clinician and the patient. A common maxim in medicine attributed to William Osler is: “Just listen to your patient, he is telling you the diagnosis” (Gandhi, 2000, p. 1087). An appointment begins with an interview of the patient, when a clinician compiles a patient’s medical history or verifies that the details of the patient’s history already contained in the patient’s medical record are accurate. A patient’s clinical history includes documentation of the current concern, past medical history, family history, social history, and other relevant information, such as current medications (prescription and over-the-counter) and dietary supplements.

The process of acquiring a clinical history and interviewing a patient requires effective communication, active listening skills, and tailoring communication to the patient based on the patient’s needs, values, and preferences. The National Institute on Aging, in guidance for conducting a clinical history and interview, suggests that clinicians should avoid interrupting, demonstrate empathy, and establish a rapport with patients (NIA, 2008). Clinicians need to know when to ask more detailed questions and how to create a safe environment for patients to share sensitive information about their health and symptoms. Obtaining a history can be challenging in some cases, for example, when working with older adults with memory loss, with children, or with individuals whose health problems limit communication or reliable self-reporting. In these cases it may be necessary to include family members or caregivers in the history-taking process. The time pressures often involved in clinical appointments also contribute to challenges in the clinical history and interview. Limited time for clinical visits, partially attributed to payment policies (see Chapter 7), may lead to an incomplete picture of a patient’s relevant history and current signs and symptoms.

There are growing concerns that traditional “bedside evaluation” skills (history, interview, and physical exam) have received less attention due to the large growth in diagnostic testing in medicine. Verghese and colleagues noted that these methods were once the primary tools for diagnosis and clinical evaluation, but “the recent explosion of imaging and laboratory testing has inverted the diagnostic paradigm. [Clinicians] often bypass the bedside evaluation for immediate testing” (Verghese et al., 2011, p. 550). The interview has been called a clinician’s most versatile diagnostic and therapeutic tool, and the clinical history provides direction for subsequent information-gathering activities in the diagnostic process (Lichstein, 1990). An accurate history facilitates a more productive and efficient physical exam and the appropriate utilization of diagnostic testing (Lichstein, 1990). Indeed, Kassirer concluded: “Diagnosis remains fundamentally dependent on a personal interaction of a [clinician] with a patient, the sufficiency of communication between them, the accuracy of the patient’s history and physical examination, and the cognitive energy necessary to synthesize a vast array of information” (Kassirer, 2014, p. 12).

Physical Exam

The physical exam is a hands-on observational examination of the patient. First, a clinician observes a patient’s demeanor, complexion, posture, level of distress, and other signs that may contribute to an understanding of the health problem (Davies and Rees, 2010). If the clinician has seen the patient before, these observations can be weighed against previous interactions with the patient. A physical exam may include an analysis of many parts of the body, not just those suspected to be involved in the patient’s current complaint. A careful physical exam can help a clinician refine the next steps in the diagnostic process, can prevent unnecessary diagnostic testing, and can aid in building trust with the patient (Verghese, 2011). There is no universally agreed upon physical examination checklist; myriad versions exist online and in textbooks.

Due to the growing emphasis on diagnostic testing, there are concerns that physical exam skills have been underemphasized in current health care professional education and training (Kassirer, 2014; Kugler and Verghese, 2010). For example, Kugler and Verghese have asserted that there is a high degree of variability in the way that trainees elicit physical signs and that residency programs have not done enough to evaluate and improve physical exam techniques. Physicians at Stanford have developed the “Stanford 25,” a list of physical diagnostic maneuvers that are very technique-dependent (Verghese and Horwitz, 2009). Educators observe students and residents performing these 25 maneuvers to ensure that trainees are able to elicit the physical signs reliably (Stanford Medicine 25 Team, 2015).

Diagnostic Testing

Over the past 100 years, diagnostic testing has become a critical feature of standard medical practice (Berger, 1999; European Society of Radiology, 2010). Diagnostic testing may occur in successive rounds of information gathering, integration, and interpretation, as each round of information refines the working diagnosis. In many cases, diagnostic testing can identify a condition before it is clinically apparent; for example, coronary artery disease can be identified by an imaging study indicating the presence of coronary artery blockage even in the absence of symptoms.

This section focuses primarily on laboratory medicine, anatomic pathology, and medical imaging (see Box 2-2). However, there are many important forms of diagnostic testing that extend beyond these fields, and the committee’s conceptual model is intended to be broadly applicable. Additional forms of diagnostic testing include, for example, screening tools used in making mental health diagnoses (SAMHSA and HRSA, 2015), sleep apnea testing, neurocognitive assessment, and vision and hearing testing.

BOX 2-2

Laboratory Medicine, Anatomic Pathology, and Medical Imaging

Pathology is usually separated into two disciplines: laboratory medicine and anatomic pathology. Laboratory medicine, also referred to as clinical pathology, focuses on the testing of fluid specimens, such as blood or urine. Anatomic pathology addresses the microscopic examination of tissues, cells, or other solid specimens.

Laboratory medicine is a medical subspecialty concerned with the examination of specific analytes in body fluids (e.g., cholesterol in serum, protein in urine, or glucose in cerebrospinal fluid), the specific identification of microorganisms (e.g., disease-causing bacteria in sputum, human immunodeficiency virus in blood, or parasites in stool), the analysis of bone marrow specimens (e.g., the identification of a specific type of leukemia), and the management of transfusion therapy (e.g., cross-matching blood products, or plasmapheresis). Generally, clinical pathologists, except those with blood banking and coagulation expertise, do not interact directly with patients.

Anatomic pathology is a medical subspecialty concerned with the testing of tissue specimens or bodily fluids, typically by specialists referred to as anatomic pathologists, to interpret results and diagnose diseases or health conditions. Some anatomic pathologists perform postmortem examinations (autopsies). Typically, anatomic pathologists do not interact directly with patients, with the notable exception of the performance of fine needle aspiration biopsies.

Laboratory scientists, historically referred to as medical technologists, may contribute to this process by preparing and collecting samples and performing tests. Especially for laboratory medicine, the ordering of diagnostic tests and the interpretation of results are usually performed by the patient’s treating clinician, although pathologists have much to offer in these areas.

It is worth mentioning that with the advent of precision medicine, molecular diagnostic testing is not specifically aligned with either clinical or anatomic pathology (see Box 2-3).

Medical imaging, also known as radiology, is a medical specialty that uses imaging technologies (such as X-ray, ultrasound, computed tomography [CT], magnetic resonance imaging [MRI], and positron emission tomography [PET]) to diagnose diseases and health conditions. For many conditions, it is also used to select and plan treatments, monitor treatment effectiveness, and provide long-term follow-up. Image interpretation is typically performed by radiologists or, for selected tests involving radioactive nuclides, nuclear medicine physicians. Technologists support the process by carrying out the imaging protocols. Most radiologists today have subspecialty training (e.g., in pediatric radiology or neuroradiology), while the remainder (about 18 percent) are generalists (Bluth et al., 2014). Specialists in other clinical disciplines, such as emergency medicine physicians and cardiologists, may be trained and credentialed to perform and interpret certain types of medical imaging. This can include imaging (such as ultrasound) to localize tissue targets during biopsy.

A new subspecialty in radiology is molecular imaging, which involves the use of functional MRI techniques as well as MRI, PET/CT, or PET/MRI with molecular imaging probes. Several new molecular imaging probes have recently been approved for clinical use, and a growing number are entering clinical trials. The field of radiology also includes interventional radiology, which offers image-guided biopsy and diagnostic procedures as well as image-guided, minimally invasive treatments.

Although it was developed specifically for laboratory medicine, the brain-to-brain loop model is useful for describing the general process of diagnostic testing (Lundberg, 1981; Plebani et al., 2011). The model includes nine steps: test selection and ordering, sample collection, patient identification, sample transportation, sample preparation, sample analysis, result reporting, result interpretation, and clinical action (Lundberg, 1981). These steps occur during five phases of diagnostic testing: pre-pre-analytic, pre-analytic, analytic, post-analytic, and post-post-analytic phases. Errors related to diagnostic testing can occur in any of these five phases, but the analytic phase is the least susceptible to errors (Eichbaum et al., 2012; Epner et al., 2013; Laposata, 2010; Nichols and Rauch, 2013; Stratton, 2011) (see Chapter 3).

The pre-pre-analytic phase, which involves clinician test selection and ordering, has been identified as a key point of vulnerability in the work process due to the large number and variety of available tests, which makes it difficult for nonspecialist clinicians to accurately select the correct test or series of tests (Hickner et al., 2014; Laposata and Dighe, 2007). The pre-analytic phase involves sample collection, patient identification, sample transportation, and sample preparation. During the analytic phase, the specimen is tested, examined, or both. Adequate performance in this phase depends on the correct execution of a chemical analysis or morphological examination (Hollensead et al., 2004), and the contribution to diagnostic errors at this step is small. The post-analytic phase includes the generation of results, reporting, interpretation, and follow-up. Ensuring accurate and timely reporting from the laboratory to the ordering clinician and patient is central to this phase. During the post-post-analytic phase, the ordering clinician, sometimes in consultation with pathologists, incorporates the test results into the patient’s clinical context, considers the probability of a particular diagnosis in light of the test results, and considers the harms and benefits of future tests and treatments, given the newly acquired information. Possible factors contributing to failure in this phase include an incorrect interpretation of the test result by the ordering clinician or pathologist and the failure by the ordering clinician to act on the test results: for example, not ordering a follow-up test or not providing treatment consistent with the test results (Hickner et al., 2014; Laposata and Dighe, 2007; Plebani and Lippi, 2011).

The medical imaging work process parallels the work process described for pathology. There is a pre-pre-analytic phase (the selection and ordering of medical imaging), a pre-analytic phase (preparing the patient for imaging), an analytic phase (image acquisition and analysis), a post-analytic phase (the imaging results are interpreted and reported to the ordering clinician or the patient), and a post-post-analytic phase (the integration of results into the patient context and further action). The relevant differences between the medical imaging and pathology processes include the nature of the examination and the methods and technology used to interpret the results.

Laboratory Medicine and Anatomic Pathology

In 2008 a Centers for Disease Control and Prevention (CDC) report described pathology as an “essential element of the health care system,” stating that pathology is “integral to many clinical decisions, providing physicians, nurses, and other health care providers with often pivotal information for the prevention, diagnosis, treatment, and management of disease” (CDC, 2008, p. 19). Primary care clinicians order laboratory tests in slightly less than one third of patient visits (CDC, 2010; Hickner et al., 2014), and direct-to-patient testing is becoming increasingly prevalent (CDC, 2008). There are now thousands of molecular diagnostic tests available, and this number is expected to increase as the mechanisms of disease at the molecular level are better understood (CDC, 2008; Johansen Taber et al., 2014) (see Box 2-3).

The task of selecting the appropriate diagnostic testing is challenging for clinicians, in part because of the sheer volume of choices. For example, Hickner and colleagues (2014) found that primary care clinicians report uncertainty in ordering laboratory medicine tests in approximately 15 percent of diagnostic encounters. Choosing the appropriate test requires understanding the patient’s history and current signs and symptoms, as well as having a sufficient suspicion or pre-test probability of a disease or condition (see section on probabilistic reasoning) (Pauker and Kassirer, 1975, 1980; Sox, 1986). The likelihood of disease is inherently uncertain in this step; for instance, the clinician’s patient population may not reflect epidemiological data, and the patient’s history can be incomplete or otherwise complicated. Advances in molecular diagnostic technologies and new diagnostic tests have introduced another layer of complexity. Many clinicians are struggling to keep up with the growing availability of such tests and have uncertainty about the best application of these tests in screening, diagnosis, and treatment (IOM, 2015a; Johansen Taber et al., 2014).
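The dependence of test interpretation on pre-test probability can be made concrete with Bayes' rule. The sketch below is an illustration under stated assumptions rather than a procedure taken from this report: the function name `post_test_probability` is hypothetical, and the sensitivity, specificity, and pre-test probabilities are invented round numbers, not the characteristics of any real assay.

```python
def post_test_probability(pretest, sensitivity, specificity, positive=True):
    """Probability of disease after a test result, via Bayes' rule.

    pretest:     probability of disease before testing (0 to 1)
    sensitivity: P(positive result | disease present)
    specificity: P(negative result | disease absent)
    positive:    True for a positive result, False for a negative one
    """
    for name, value in (("pretest", pretest),
                        ("sensitivity", sensitivity),
                        ("specificity", specificity)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")

    if positive:
        with_disease = sensitivity * pretest                   # true positives
        without_disease = (1 - specificity) * (1 - pretest)    # false positives
    else:
        with_disease = (1 - sensitivity) * pretest             # false negatives
        without_disease = specificity * (1 - pretest)          # true negatives

    total = with_disease + without_disease
    if total == 0:
        raise ValueError("This result cannot occur with the given inputs.")
    return with_disease / total


# Illustrative assumption: a test with 99.9% sensitivity and specificity.
low_risk = post_test_probability(0.0001, 0.999, 0.999)   # 1 in 10,000 pre-test
high_risk = post_test_probability(0.3, 0.999, 0.999)     # 30% pre-test

print(f"Low-risk patient, positive result:  {low_risk:.1%}")
print(f"High-risk patient, positive result: {high_risk:.1%}")
```

With these assumed values, a positive result in the low-risk patient corresponds to a probability of disease of only about 9 percent, whereas the same result in the high-risk patient corresponds to a probability above 99 percent. The same test result therefore carries very different meaning depending on the clinical context in which the test was ordered.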

Diagnostic tests have “operating parameters,” including sensitivity and specificity, that are particular to the diagnostic test for a specific disorder (see section on probabilistic reasoning). Even if a test is performed correctly, there is a chance for a false positive or false negative result. Test interpretation involves reviewing numerical or qualitative (yes or no) results and combining those results with patient history, symptoms, and pretest disease likelihood. Test interpretation needs to be patient-specific and to consider information learned during the physical exam and the clinical history and interview. Several studies have highlighted test interpretation errors, such as the misinterpretation of a false positive human immunodeficiency virus (HIV) screening test for a low-risk patient as indicative of HIV infection (Gigerenzer, 2013; Kleinman et al., 1998). In addition, test performance may only be characterized in a limited patient population, leading to challenges with generalizability (Whiting et al., 2004).

The laboratories that conduct diagnostic testing are some of the most regulated and inspected areas in health care (see Table 2-1). Some of the relevant entities include The Joint Commission and other accreditors, the federal government, and various other organizations, such as the College of American Pathologists (CAP) and the American Society for Clinical Pathology. There are many ways in which quality is assessed. Examples include proficiency testing of clinical laboratory assays and pathologists (e.g., Pap smear proficiency testing), many of which are regulated under the Clinical Laboratory Improvement Amendments, and inter-laboratory comparison programs (e.g., CAP’s Q-Probes, Q-Monitors, and Q-Tracks programs).

BOX 2-3

The President’s Precision Medicine Initiative highlights the growing interest in taking individual variability into account when defining disease, tailoring treatment, and improving prevention (NIH, 2015). This initiative hinges on recent advances in molecular and cellular biology, which have provided insights into the mechanisms of disease at the molecular level. These advances have contributed to the development of molecular diagnostic testing, which analyzes a patient’s biomarkers in the genome or proteome. Concurrently, the role of pathology has expanded from morphologic observations into comprehensive analyses using combined histological, immunohistochemical, and molecular evaluations.

The use of molecular diagnostics is a rapidly developing area. Molecular diagnostic tests are being developed and used to diagnose and monitor disease, assess risk, inform whether a particular therapy is likely to be effective in a specific patient, and predict a patient’s response to therapy (AvaMedDx, 2013). Molecular diagnostic testing can identify a variety of specific genetic alterations relevant to diagnosis and treatment; molecular diagnostic techniques are also used to detect the genetic material of organisms causing infection. Panels of biomarkers are being developed into molecular diagnostic tests (omics-based tests) that are used to assess risk and inform treatment decisions, such as Oncotype DX and MammaPrint in breast cancer (IOM, 2012).

Molecular diagnostic testing is expected to improve patient management and outcomes. The potential advantages of molecular diagnostics include (1) providing earlier and more accurate diagnostic methods; (2) offering information about disease that will better tailor treatments to patients; (3) reducing the occurrence of side effects from unnecessary treatments; (4) providing better tools for monitoring patients for treatment success or disease recurrence; and (5) improving patient outcomes and quality of life.

However, the translation of molecular diagnostic technologies into clinical practice has been a complex and challenging endeavor. One major challenge is the development and rigorous evaluation of molecular diagnostic tests before their implementation in clinical practice. The development pathway is often time-consuming, expensive, and uncertain. In addition, there are underdeveloped and inconsistent standards of evidence for evaluating the scientific validity of tests and a lack of appropriate study designs and analytical methods for these analyses (IOM, 2007, 2010, 2012). Ensuring that diagnostic tests have adequate analytical and clinical validity is critical to preventing diagnostic errors. For example, in 2005 the Centers for Disease Control and Prevention and the Food and Drug Administration issued a warning about potential diagnostic errors related to false positives caused by contamination in a Lyme disease test (Nelson et al., 2014). As molecular diagnostic testing becomes increasingly complex (such as the movement from single biomarker tests to omics-based tests that rely on high-dimensional data and complex algorithms), there is considerable interest in ensuring their appropriate development and use (IOM, 2012). Molecular diagnostic testing presents many regulatory, clinical practice, and reimbursement challenges; an Institute of Medicine study is looking into these issues and is expected to release a report in 2016 (IOM, 2015b). For example, one regulatory issue is the oversight of laboratory-developed tests, an area that has been met with considerable controversy (see Table 2-1) (Evans and Watson, 2015; Sharfstein, 2015). A clinical practice issue is next generation sequencing, which may frequently identify new genetic variants with unknown implications for health outcomes (ACMG Board of Directors, 2012).

Medical Imaging

Medical imaging plays a critical role in establishing the diagnoses for innumerable conditions and it is used routinely in nearly every branch of medicine. The advancement of imaging technologies has improved the ability of clinicians to detect, diagnose, and treat conditions while also allowing patients to avoid more invasive procedures (European Society of Radiology, 2010; Gunderman, 2005). For many conditions (e.g., brain tumors), imaging is the only noninvasive diagnostic method available. The appropriate choice of imaging modality depends on the disease, organ, and specific clinical questions to be addressed. Computed tomography (CT) and magnetic resonance imaging (MRI) are first-line methods for as-

| Entity | Role in Quality or Oversight |
| --- | --- |
| Centers for Disease Control and Prevention (CDC) | The CDC performs research on laboratory testing processes, including quality improvement studies, and develops technical standards and laboratory practice guidelines (CDC, 2014). The CDC also manages the Clinical Laboratory Improvement Advisory Committee (CLIAC), a body that offers guidance to the federal government on quality improvement in the clinical laboratory and revising Clinical Laboratory Improvement Amendments (CLIA) standards. |
| Centers for Medicare & Medicaid Services (CMS) | CMS regulates laboratories under CLIA (CMS, 2015b). To ensure CLIA compliance, laboratories undergo review of results reporting, laboratory personnel credentialing (i.e., competency assessment), quality control efforts, and procedure documentation. Laboratories are also required to perform proficiency testing (PT), a process in which a laboratory receives an unknown sample to test and report the findings back to the PT program, which evaluates the laboratory’s performance. CMS grants states or accreditation organizations the authority to deem a laboratory as CLIA-compliant. In most cases the laboratory is deemed compliant by virtue of being accredited by the accreditation organization. Accreditation organizations with deeming authority for CLIA include AABB, the American Association for Laboratory Accreditation, the American Society for Histocompatibility and Immunogenetics, COLA, the College of American Pathologists, the Healthcare Facilities Accreditation Program, and The Joint Commission (CMS, 2014). |
| Food and Drug Administration (FDA) | FDA reviews and assesses the safety, efficacy, and intended use of in vitro diagnostic tests (IVDs) (FDA, 2014a). FDA assesses the analytical validity (i.e., analytical specificity and sensitivity, accuracy, and precision) and clinical validity (i.e., the accuracy with which the test identifies, measures, or predicts the presence or absence of a clinical condition or predisposition), and it develops rules and guidance for CLIA complexity categorization. One subset of IVDs, laboratory developed tests (LDTs), has been granted enforcement discretion from FDA; in 2014 FDA stated its intent to begin regulating LDTs (FDA, 2014b). |
| American Academy of Family Physicians (AAFP) | The AAFP offers a number of CMS-approved PT programs (AAFP, 2015). |
| American Society for Clinical Pathology (ASCP) | ASCP certifies medical laboratory professionals. ASCP also manages a CMS-approved PT program for gynecologic cytology (ASCP, 2014). |
| College of American Pathologists (CAP) | CAP accreditation ensures the safety and quality of laboratories and satisfies CLIA requirements. CAP also offers an inter-laboratory peer PT program (CAP, 2013, 2015). This program includes |
| Healthcare Facilities Accreditation Program (HFAP) | HFAP accreditation ensures the safety and quality of laboratories and satisfies CLIA requirements (HFAP, 2015). |
| The Joint Commission | The Joint Commission accreditation ensures the safety and quality of laboratories and satisfies CLIA requirements (The Joint Commission, 2015). |

sessing conditions of the central and peripheral nervous system, while for musculoskeletal and a variety of other conditions, X-ray and ultrasound are often employed first because of their relatively low cost and ready availability, with CT and MRI being reserved as problem-solving modalities. CT procedures are frequently used to assess and diagnose cancer, circulatory system diseases and conditions, inflammatory diseases, and head and internal organ injuries. A majority of MRI procedures are performed on the spine, brain, and musculoskeletal system, although usage for the breast, prostate, abdominal, and pelvic regions is rising (IMV, 2014).

Medical imaging is characterized not just by the increasingly precise anatomic detail it offers but also by an increasing capacity to illuminate biology. For example, magnetic resonance spectroscopic imaging has allowed the assessment of metabolism, and a growing number of other MRI sequences are offering information about functional characteristics, such as blood perfusion or water diffusion. In addition, several new tracers for molecular imaging with PET (typically as PET/CT) have recently been approved for clinical use, and more are undergoing clinical trials, while PET/MRI was recently introduced to the clinical setting. Functional and molecular imaging data may be assessed qualitatively, quantitatively, or both. Although other forms of diagnostic testing can identify a wide array of molecular markers, molecular imaging is unique in its capacity to noninvasively show the locations of molecular processes in patients, and it is expected to play a critical role in advancing precision medicine, particularly for cancers, which often demonstrate both intra- and intertumoral biological heterogeneity (Hricak, 2011).

The growing body of medical knowledge, the variety of imaging options available, and the regular increases in the amounts and kinds of data that can be captured with imaging present tremendous challenges for radiologists, as no individual can be expected to achieve competency in all of the imaging modalities. General radiologists continue to be essential in certain clinical settings, but extended training and sub-specialization are often necessary for optimal, clinically relevant image interpretation, as is involvement in multidisciplinary disease management teams. Furthermore, the use of structured reporting templates tailored to specific examinations can help to increase the clarity, thoroughness, and clinical relevance of image interpretation (Schwartz et al., 2011).

Like other forms of diagnostic testing, medical imaging has limitations. Some studies have found that between 20 and 50 percent of all advanced imaging results fail to provide information that improves patient outcome, although these studies do not account for the value of negative imaging results in influencing decisions about patient management (Hendee et al., 2010). Imaging may fail to provide useful information because of modality sensitivity and specificity parameters; for example, the spatial resolution of an MRI may not be high enough to detect very small abnormalities. Inadequate patient education and preparation for an imaging test can also lead to suboptimal imaging quality that results in diagnostic error.

Perceptual or cognitive errors made by radiologists are a source of diagnostic error (Berlin, 2014; Krupinski et al., 2012). In addition, incomplete or incorrect patient information, as well as insufficient sharing of patient information, may lead to the use of an inadequate imaging protocol, an incorrect interpretation of imaging results, or the selection of an inappropriate imaging test by a referring clinician. Referring clinicians often struggle with selecting the appropriate imaging test, in part because of the large number of available imaging options and gaps in the teaching of radiology in medical schools. Although consensus-based guidelines (e.g., the various “appropriateness criteria” published by the American College of Radiology [ACR]) are available to help select imaging tests for many conditions, these guidelines are often not followed. The use of clinical decision support systems at the point of care as well as direct consultations with radiologists have been proposed by the ACR as methods for improving imaging test selection (Allen and Thorwarth, 2014).

There are several mechanisms for ensuring the quality of medical imaging. The Mammography Quality Standards Act (MQSA)—overseen by the Food and Drug Administration—was the first government-mandated accreditation program for any type of medical facility; it was focused on X-ray imaging for breast cancer. MQSA provides a general framework for ensuring national quality standards in facilities that perform screening mammography (IOM, 2005). MQSA requires all personnel at facilities to meet initial qualifications, to demonstrate continued experience, and to complete continuing education. MQSA addresses protocol selection, image acquisition, interpretation and report generation, and the communication of results and recommendations. In addition, it provides facilities with data on diagnostic performance that can be used for benchmarking, self-monitoring, and improvement. MQSA has decreased the variability in mammography performed across the United States and improved the quality of care (Allen and Thorwarth, 2014). However, the ACR noted that MQSA is complex and specified in great detail, which makes it inflexible, leading to administrative burdens and the need for extensive training of staff for implementation (Allen and Thorwarth, 2014). It also focuses on only one medical imaging modality in one disease area; thus, it does not address newer screening technologies (IOM, 2005). In addition, the Medicare Improvements for Patients and Providers Act (MIPPA)3 requires that private outpatient facilities that perform CT, MRI, breast MRI, nuclear medicine, and PET exams be accredited. The requirements include personnel qualifications, image quality, equipment performance, safety standards, and quality assurance and quality control (ACR, 2015a). There are four CMS-designated accreditation organizations for medical imaging: ACR, the Intersocietal Accreditation Commission, The Joint Commission, and RadSite (CMS, 2015a). 
MIPPA also mandated that, beginning in 2017, ordering clinicians will be required to consult appropriateness criteria to order advanced medical imaging procedures, and the act called for a demonstration project evaluating clinician compliance with appropriateness criteria (Timbie et al., 2014). In addition to these mandated activities, societies such as ACR and the Radiological Society of North America (RSNA) provide quality improvement programs and resources (ACR, 2015b; RSNA, 2015).

______________

3 Public Law 110-275 (July 15, 2008).

Referral and Consultation

Clinicians may refer to or consult with other clinicians (formally or informally) to seek additional expertise about a patient’s health problem. The consult may help to confirm or reject the working diagnosis or may provide information on potential treatment options. If a patient’s health problem is outside a clinician’s area of expertise, he or she can refer the patient to a clinician who holds more suitable expertise. Clinicians can also recommend that the patient seek a second opinion from another clinician to verify their impressions of an uncertain diagnosis or if they believe that this would be helpful to the patient. Many groups raise awareness that patients can obtain a second opinion on their own (AMA, 1996; CMS, 2015c; PAF, 2012). Diagnostic consultations can also be arranged through the use of integrated practice units or diagnostic management teams (Govern, 2013; Porter, 2010; see Chapter 4).

IMPORTANT CONSIDERATIONS IN THE DIAGNOSTIC PROCESS

The committee elaborated on several aspects of the diagnostic process, which are discussed below, including

- diagnostic uncertainty

- time

- population trends

- diverse populations and health disparities

- mental health

Diagnostic Uncertainty

One of the complexities in the diagnostic process is the inherent uncertainty in diagnosis. As noted in the committee’s conceptual model of the diagnostic process, an overarching question throughout the process is whether sufficient information has been collected to make a diagnosis. This does not mean that a diagnosis needs to be absolutely certain in order to initiate treatment. Kassirer concluded that:

Absolute certainty in diagnosis is unattainable, no matter how much information we gather, how many observations we make, or how many tests we perform. A diagnosis is a hypothesis about the nature of a patient’s illness, one that is derived from observations by the use of inference. As the inferential process unfolds, our confidence as [clinicians] in a given diagnosis is enhanced by the gathering of data that either favor it or argue against competing hypotheses. Our task is not to attain certainty, but rather to reduce the level of diagnostic uncertainty enough to make optimal therapeutic decisions. (Kassirer, 1989, p. 1489)

Thus, the probability of disease does not have to be equal to one (diagnostic certainty) in order for treatment to be justified (Pauker and Kassirer, 1980). The decision to begin treatment based on a working diagnosis is informed by: (1) the degree of certainty about the diagnosis; (2) the harms and benefits of treatment; and (3) the harms and benefits of further information-gathering activities, including the impact of delaying treatment.
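The first two considerations can be made concrete with a small calculation. The sketch below is illustrative only and is written in the spirit of the threshold approach cited above: it assumes that the net benefit of treating a patient who has the disease and the net harm of treating a patient who does not can each be expressed as a single number in the same (hypothetical) units. It deliberately leaves out the third consideration, the value of further information gathering. The function names and the numbers are assumptions made for illustration and do not come from the report.

```python
def treatment_threshold(benefit: float, harm: float) -> float:
    """Probability of disease at which treating and not treating break even.

    benefit: net benefit of treating a patient who has the disease
             (hypothetical units)
    harm:    net harm of treating a patient who does not have the disease
             (same hypothetical units)
    """
    if benefit <= 0 or harm < 0:
        raise ValueError("benefit must be positive and harm non-negative")
    # Treating is favored when p * benefit >= (1 - p) * harm,
    # which rearranges to p >= harm / (harm + benefit).
    return harm / (harm + benefit)


def should_treat(p_disease: float, benefit: float, harm: float) -> bool:
    """True when the estimated probability of disease meets the threshold."""
    if not 0.0 <= p_disease <= 1.0:
        raise ValueError("p_disease must be a probability between 0 and 1")
    return p_disease >= treatment_threshold(benefit, harm)


# Hypothetical example: treatment helps a diseased patient nine times as much
# as it harms a non-diseased patient.
threshold = treatment_threshold(benefit=9.0, harm=1.0)
print(f"treatment threshold: {threshold:.2f}")
print("treat at p = 0.30?", should_treat(0.30, benefit=9.0, harm=1.0))
print("treat at p = 0.05?", should_treat(0.05, benefit=9.0, harm=1.0))
```

Under these assumed values the threshold is 0.10, so treatment would be justified at a probability of disease far below one. A larger benefit relative to harm lowers the threshold, and a larger harm raises it.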

The risks associated with diagnostic testing are important considerations when conducting information-gathering activities in the diagnostic process. While underuse of diagnostic testing has been a long-standing concern, overly aggressive diagnostic strategies have recently been recognized for their risks (Zhi et al., 2013) (see Chapter 3). Overuse of diagnostic testing has been partially attributed to clinicians’ fear of missing something important and intolerance of diagnostic uncertainty: “I am far more concerned about doing too little than doing too much. It’s the scan, the test, the operation that I should have done that sticks with me—sometimes for years. . . . By contrast, I can’t remember anyone I sent for an unnecessary CT scan or operated on for questionable reasons a decade ago” (Gawande, 2015). However, there is growing recognition that overly aggressive diagnostic pursuits are putting patients at greater risk for harm, and they are not improving diagnostic certainty (Kassirer, 1989; Welch, 2015).

When considering diagnostic testing options, the harm from the procedure itself needs to be weighed against the potential information that could be gained. For some patients, the risk of invasive diagnostic testing may be inappropriate due to the risk of mortality or morbidity from the test itself (such as cardiac catheterization or invasive biopsies). In addition, the risk for harm needs to take into account the cascade of diagnostic testing and treatment decisions that could stem from a diagnostic test result. Included in these assessments are the potential for false positives and ambiguous or slightly abnormal test results that lead to further diagnostic testing or unnecessary treatment.
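The effect of false positives on what a test result actually tells the clinician can be illustrated with Bayes' rule. The following is a minimal sketch, assuming a test that is fully described by a single sensitivity and a single specificity; the function name and all numbers are hypothetical and are not drawn from the report.

```python
def post_test_probability(pretest: float, sensitivity: float,
                          specificity: float, positive: bool = True) -> float:
    """Probability of disease after a positive or negative test result."""
    for name, value in (("pretest", pretest), ("sensitivity", sensitivity),
                        ("specificity", specificity)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    if positive:
        with_disease = pretest * sensitivity                  # true positives
        without_disease = (1 - pretest) * (1 - specificity)   # false positives
    else:
        with_disease = pretest * (1 - sensitivity)            # false negatives
        without_disease = (1 - pretest) * specificity         # true negatives
    total = with_disease + without_disease
    if total == 0:
        raise ValueError("this result cannot occur with the given inputs")
    return with_disease / total


# Hypothetical test with 90% sensitivity and 90% specificity.
low = post_test_probability(pretest=0.01, sensitivity=0.9, specificity=0.9)
high = post_test_probability(pretest=0.50, sensitivity=0.9, specificity=0.9)
print(f"positive result, pretest 1%:  {low:.3f}")
print(f"positive result, pretest 50%: {high:.3f}")
```

With these assumed values, a positive result in a patient with a 1 percent pretest probability still leaves the probability of disease below 10 percent, so most positive results would be false positives that could trigger further testing. The same positive result at a 50 percent pretest probability raises the probability of disease to 90 percent.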

There are some cases in which treatment is initiated even though there is limited certainty in a working diagnosis. For example, an individual who has been exposed to a tick bite or HIV may be treated with prophylactic antibiotics or antivirals, because the risk of treatment may be felt to be smaller than the risk of harm from tick-borne diseases or HIV infection. Clinicians sometimes employ empiric treatment strategies (the provision of treatment with a very uncertain diagnosis) and use a patient’s response to treatment as an information-gathering activity to help arrive at a working diagnosis. However, it is important to note that response rates to treatment can be highly variable, and the failure to respond to treatment does not necessarily reflect that a diagnosis is incorrect. Nor does improvement in the patient’s condition necessarily validate that the treatment conferred this benefit and, therefore, that the empirically tested diagnosis was in fact correct. A treatment that is beneficial for some patients might not be beneficial for others with the same condition (Kent and Hayward, 2007), hence the interest in precision medicine, which aims to better tailor therapy to maximize efficacy and minimize toxicity (Jameson and Longo, 2015). In addition, there are isolated cases where the morbidity and mortality of a diagnostic procedure and the likelihood of disease are sufficiently high that significant therapy has been given empirically. Moroff and Pauker (1983) described a decision analysis in which a 90-year-old practicing lawyer with a new 1.5 centimeter lung nodule was deemed to have a sufficiently high risk for mortality from lung biopsy and high likelihood of malignancy that the radiation oncologists felt comfortable treating the patient empirically for suspected lung cancer.

Time

Of major importance in the diagnostic process is the element of time. Most diseases evolve over time, and there can be a delay between the onset of disease and the onset of a patient’s symptoms; time can also elapse before a patient’s symptoms are recognized as a specific diagnosis (Zwaan and Singh, 2015). Some diagnoses can be determined in a very short time frame, while months may elapse before other diagnoses can be made. This is partially due to the growing recognition of the variability and complexity of disease presentation. Similar symptoms may be related to a number of different diagnoses, and symptoms may evolve in different ways as a disease progresses; for example, a disease affecting multiple organs may initially involve symptoms or signs from a single organ. The thousands of different diseases and health conditions do not present in thousands of unique ways; there are only a finite number of symptoms with which a patient may present. At the outset, it can be very difficult to determine which particular diagnosis is indicated by a particular combination of symptoms, especially if symptoms are nonspecific, such as fatigue. Diseases may also present atypically, with an unusual and unexpected constellation of symptoms (Emmett, 1998).

Adding to the complexity of the time-dependent nature of the diagnostic process are the numerous settings of care in which diagnosis occurs and the potential involvement of multiple settings of care within a single diagnostic process. Henriksen and Brady noted that this process, for patients, their families, and clinicians alike, can often feel like “a disjointed journey across confusing terrain, aided or impeded by different agents, with no destination in sight and few landmarks along the way” (Henriksen and Brady, 2013, p. ii2).

Some diagnoses may be more important to establish immediately than others. These include diagnoses that can lead to significant patient harm if not recognized, diagnosed, and treated early, such as anthrax, aortic dissection, and pulmonary embolism. Sometimes making a timely diagnosis relies on the fast recognition of symptoms outside of the health care setting (e.g., public awareness of stroke symptoms can help improve the speed of receiving medical help and increase the chances of a better recovery) (National Stroke Association, 2015). In these cases, the benefit of treating the disease promptly can greatly exceed the potential harm from unnecessary treatment. Consequently, the threshold for ordering diagnostic testing or for initiating treatment becomes quite low for such health problems (Pauker and Kassirer, 1975, 1980). In other cases, the potential harm from rapidly and unnecessarily treating a diagnosed condition can lead to a more conservative (or higher-threshold) approach in the diagnostic process.

Population Trends

Population trends, such as the aging of the population, are adding significant complexity to the diagnostic process and require clinicians to consider such complicating factors in diagnosis as comorbidity, polypharmacy and attendant medication side effects, as well as disease and medication interactions (IOM, 2008, 2013b). Diagnosis can be especially challenging in older patients because classic presentations of disease are less common in older adults (Jarrett et al., 1995). For example, infections such as pneumonia or urinary tract infections often do not present in older patients with fever, cough, and pain but rather with symptoms such as lethargy, incontinence, loss of appetite, or disruption of cognitive function (Mouton et al., 2001). Acute myocardial infarction (MI) may present with fatigue and confusion rather than with typical symptoms such as chest pain or radiating arm pain (Bayer et al., 1986; Qureshi et al., 2000; Rich, 2006). Sensory limitations in older adults, such as hearing and vision impairments, can also contribute to challenges in making diagnoses (Campbell et al., 1999). Physical illnesses often present with a change in cognitive status in older individuals without dementia (Mouton et al., 2001). In older adults with mild to moderate dementia, such illnesses can manifest with worsening cognition. Older patients who have multiple comorbidities, medications, or cognitive and functional impairments are more likely to have atypical disease presentations, which may increase the risk of experiencing diagnostic errors (Gray-Miceli, 2008).

Diverse Populations and Health Disparities

Communicating with diverse populations can also contribute to the complexity of the diagnostic process. Language, health literacy, and cultural barriers can affect clinician–patient encounters and increase the potential for challenges in the diagnostic process (Flores, 2006; IOM, 2003; The Joint Commission, 2007). There are indications that biases influence diagnosis; one well-known example is the differential referral of patients for cardiac catheterization by race and gender (Schulman et al., 1999). In addition, women are more likely than men to experience a missed diagnosis of heart attack, a situation that has been partly attributed to real and perceived gender biases, but which may also be the result of physiologic differences, as women have a higher likelihood of presenting with atypical symptoms, including abdominal pain, shortness of breath, and congestive heart failure (Pope et al., 2000).

Mental Health

Mental health diagnoses can be particularly challenging. Mental health diagnoses rely on the Diagnostic and Statistical Manual of Mental Disorders (DSM); each diagnosis in the DSM includes a set of diagnostic criteria that indicate the type and length of symptoms that need to be present, as well as the symptoms, disorders, and conditions that cannot be present, in order to be considered for a particular diagnosis (APA, 2015). Compared to physical diagnoses, many mental health diagnoses rely on patient reports and observation; there are few biological tests that are used in such diagnoses (Pincus, 2014). A key challenge can be distinguishing physical diagnoses from mental health diagnoses; sometimes physical conditions manifest as psychiatric ones, and vice versa (Croskerry, 2003a; Hope et al., 2014; Pincus, 2014; Reeves et al., 2010). In addition, there are concerns about missing psychiatric diagnoses, as well as overtreatment concerns (Bor, 2015; Meyer and Meyer, 2009; Pincus, 2014). For example, clinician biases toward older adults can contribute to missed diagnoses of depression, because it may be perceived that older adults are likely to be depressed, lethargic, or have little interest in interactions. Patients with mental health–related symptoms may also be more vulnerable to diagnostic errors, a situation that is attributed partly to clinician biases; for example, clinicians may disregard symptoms in patients with previous diagnoses of mental illness or substance abuse and attribute new physical symptoms to a psychological cause (Croskerry, 2003a). Individuals with health problems that are difficult to diagnose or those who have chronic pain may also be more likely to receive psychiatric diagnoses erroneously.

CLINICAL REASONING AND DIAGNOSIS

Accurate, timely, and patient-centered diagnosis relies on proficiency in clinical reasoning, which is often regarded as the clinician’s quintessential competency. Clinical reasoning is “the cognitive process that is necessary to evaluate and manage a patient’s medical problems” (Barrows, 1980, p. 19). Understanding the clinical reasoning process and the factors that can impact it is important to improving diagnosis, given that clinical reasoning processes contribute to diagnostic errors (Croskerry, 2003a; Graber, 2005). Health care professionals involved in the diagnostic process have an obligation and ethical responsibility to employ clinical reasoning skills: “As an expanding body of scholarship further elucidates the causes of medical error, including the considerable extent to which medical errors, particularly in diagnostics, may be attributable to cognitive sources, insufficient progress in systematically evaluating and implementing suggested strategies for improving critical thinking skills and medical judgment is of mounting concern” (Stark and Fins, 2014, p. 386). Clinical reasoning occurs within clinicians’ minds (facilitated or impeded by the work system) and involves judgment under uncertainty, with a consideration of possible diagnoses that might explain symptoms and signs, the harms and benefits of diagnostic testing and treatment for each of those diagnoses, and patient preferences and values.

The current understanding of clinical reasoning is based on the dual process theory, a widely accepted paradigm of decision making. The dual process theory integrates analytical and nonanalytical models of decision making (see Box 2-4). Analytical models (slow system 2) involve a conscious, deliberate process guided by critical thinking (Kahneman, 2011). Nonanalytical models (fast system 1) involve unconscious, intuitive, and automatic pattern recognition (Kahneman, 2011).

Fast system 1 (nonanalytical, intuitive) automatic processes require very little working memory capacity. They are often triggered by stimuli or result from overlearned associations or implicitly learned activities.4 Examples of system 1 processes include the ability to recognize human faces (Kanwisher and Yovel, 2006), the diagnosis of Lyme disease from a bull’s-eye rash, or decisions based on heuristics (mental shortcuts), intuition, or repeated experiences.

In contrast, slow system 2 (reflective, analytical) processing places a heavy load on working memory and involves hypothetical and counterfactual reasoning (Evans and Stanovich, 2013; Stanovich and Toplak, 2012). System 2 processing requires individuals to generate mental models of what should or should not happen in particular situations, in order to test possible actions or to explore alternative causes of events (Stanovich, 2009). Hypothetical thinking occurs when one reasons about what should occur if some condition held: For example, if this patient has diabetes, then the blood sugar level should exceed 126 mg/dl after an 8-hour fast, or if prescribed a diabetes medication, the sugar level should improve. Counterfactual reasoning occurs when one thinks about what should occur if the situation differed from how it actually is. The deliberate, conscious, and reflective nature of both hypothetical and counterfactual reasoning illustrates the analytical nature of system 2.

______________

4 The term “system 1” is an oversimplification because it is unlikely there is a single cognitive or neural system responsible for all system 1 cognitive processes.

BOX 2-4

Models of Clinical Reasoning

Analytical models (slow system 2). Hypotheticodeductivism is an analytical reasoning model that describes clinical reasoning as hypothesis testing (Elstein et al., 1978, 1990). The steps involved in hypothesis testing include

- Cue acquisition: Clinicians obtain contextual information by taking a history, performing a physical examination, administering diagnostic tests, or consulting with other clinicians.

- Hypothesis generation (working diagnoses): Clinicians formulate alternative diagnostic possibilities.

- Cue interpretation (diagnostic modification and refinement): Clinicians interpret the consistency of the information with each of the alternative hypotheses under consideration.

- Hypothesis evaluation (diagnostic verification): The data are weighed and combined to evaluate whether one of the working diagnoses can be confirmed. If not, further information gathering, hypothesis generation, interpretation, and evaluation are conducted until verification is achieved (Elstein and Bordage, 1988).

Analytical reasoning models have several additional characteristics. First, the generation of a set of hypotheses that occurs after cue acquisition facilitates the construction of a differential diagnosis, with evidence suggesting that the consideration of potential hypotheses prior to gathering information can improve diagnostic accuracy (Kostopoulou et al., 2015). Second, in order to supplement hypotheses retrieved from memory, some clinicians may employ clinical decision support tools. Third, the evolving list of diagnostic hypotheses determines subsequent information-gathering activities (Kassirer et al., 2010). Fourth, the entire process involves, either explicitly or implicitly, clinicians assigning and updating the probability of each potential diagnosis, given the available data (Kassirer et al., 2010).

These models hold that clinical problem-solving tasks, such as diagnosis, require deliberate, logically sound reasoning by clinicians. Thus, clinical reasoning can be improved by developing critical thinking skills (Papp et al., 2014). They also imply that clinical reasoning uses the presence or absence of specific signs or symptoms as evidence that either confirms or disproves a diagnosis. Studies have shown that clinicians do participate in analytical reasoning (Barrows et al., 1982; Elstein et al., 1978; Neufeld et al., 1981). However, studies also suggest that experience is crucial to the development of expertise and that general problem-solving skills, such as hypothesis testing, cannot account for differences in clinical reasoning skills between experts and novices (Elstein and Schwarz, 2002; Groen and Patel, 1985; Neufeld et al., 1981; Norman, 2005). These findings support a role for nonanalytical models of clinical reasoning and the importance of content knowledge and clinical experience.

Nonanalytical models (fast system 1). Broadly construed through a pattern-recognition framework, nonanalytical models attempt to understand clinical reasoning through human categorization and classification practices. These models suggest that clinicians make diagnoses and choose treatments by matching presenting patients to their mental models of diseases (or information about diseases that is stored in memory). Although the nature of these mental models remains under debate, most assume that they are either exemplars (specific patients seen previously and stored in memory as concrete examples) or prototypes (an abstract disease conceptualization that weighs disease features according to their frequency) (Bordage and Zacks, 1984; Norman, 2005; Rosch and Mervis, 1975; Schmidt et al., 1990; Smith and Medin, 1981, 2002).

Expert pattern matching by experienced clinicians may involve illness scripts, in which elaborated disease knowledge includes enabling conditions or risk factors (e.g., physical contact with the Ebola virus); the pathophysiology of the disease (Ebola virus replication, invasion and destruction of endothelial surfaces); and the signs and symptoms of the disease (bleeding from Ebola) (Boshuizen and Schmidt, 2008). After encountering a patient, a clinician may activate a single illness script or multiple scripts. Illness scripts differ from exemplars and prototypes by having more extensive knowledge stored for each disease. As the diagnostic process evolves, the clinician matches the activated scripts against the presenting signs and symptoms, with the best matching script offered as the most likely diagnosis.

While exemplars, prototypes, and illness scripts are assumed to encode different types of information about disease conditions (that is, actual instances versus typical presentation versus multidimensional information), pattern recognition models assume them to play the same role in diagnosis.
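The explicit side of analytical reasoning, in which a clinician assigns a probability to each potential diagnosis and revises it as data arrive (the fourth characteristic listed in Box 2-4), can be sketched as a Bayesian update over a differential diagnosis. This is an illustration under strong simplifying assumptions: the candidate diagnoses are treated as mutually exclusive and exhaustive, and the prior probabilities and likelihoods are hypothetical numbers chosen for the example rather than clinical estimates.

```python
def update_differential(priors: dict[str, float],
                        likelihoods: dict[str, float]) -> dict[str, float]:
    """Revise the probability of each diagnosis after one new finding.

    priors:      probability of each diagnosis before the finding
    likelihoods: probability of observing the finding under each diagnosis
    """
    if set(priors) != set(likelihoods):
        raise ValueError("priors and likelihoods must cover the same diagnoses")
    # Bayes' rule: posterior is proportional to prior times likelihood.
    unnormalized = {dx: priors[dx] * likelihoods[dx] for dx in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("the finding is impossible under every diagnosis")
    return {dx: weight / total for dx, weight in unnormalized.items()}


# Hypothetical differential for a patient with chest pain.
priors = {"acid reflux": 0.70, "myocardial infarction": 0.10, "other": 0.20}
# Hypothetical probability of a new finding under each diagnosis.
finding = {"acid reflux": 0.10, "myocardial infarction": 0.80, "other": 0.20}

posterior = update_differential(priors, finding)
for diagnosis, probability in sorted(posterior.items(), key=lambda kv: -kv[1]):
    print(f"{diagnosis}: {probability:.2f}")
```

In this made-up example a single finding that is much more likely under myocardial infarction moves it from the least probable to the most probable diagnosis, even though its prior probability was low. Repeating the update as each new finding arrives mirrors the cycle of cue interpretation and hypothesis evaluation described in the box.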

Heuristics—mental shortcuts or cognitive strategies that are automatically and unconsciously employed—are particularly important for decision making (Gigerenzer and Goldstein, 1996). Heuristics can facilitate decision making but can also lead to errors, especially when patients present with atypical symptoms (Cosmides and Tooby, 1996; Gigerenzer, 2000; Kahneman, 2011; Klein, 1998; Lipshitz et al., 2001; McDonald, 1996).

When a heuristic fails, it is referred to as a cognitive bias. Cognitive biases, or predispositions to think in a way that leads to failures in judgment, can also be caused by affect and motivation (Kahneman, 2011). Prolonged learning in a regular and predictable environment increases the successfulness of heuristics, whereas uncertain and unpredictable environments are a chief cause of heuristic failure (Kahneman, 2011; Kahneman and Klein, 2009). There are many heuristics and biases that affect clinical reasoning and decision making (see Table 2-2 for medical and nonmedical examples). Additional examples of heuristics and biases that affect decision making and the potential for diagnostic errors are described below (Croskerry, 2003b):

- The representativeness heuristic answers the question, “how likely is it that this patient has a particular disease?” by assessing how typical the patient’s symptoms are for that disease. If the symptoms are highly typical (e.g., fever and nausea after contact with an individual from West Africa with Ebola virus), then it is likely the patient will be diagnosed as having that condition (e.g., Ebola virus infection). The representativeness bias refers to the tendency to make decisions based on a typical case, even when this may lead to an incorrect judgment. The representativeness bias helps to explain why an incorrect diagnosis (e.g., a patient diagnosed as not having Ebola virus infection) is made when presenting symptoms are atypical (e.g., no fever or nausea after contact with a person from West Africa).

- Base-rate neglect describes the tendency to ignore the prevalence of a disease in determining a diagnosis. For example, a clinician may think the diagnosis is acid reflux because it is a prevalent condition, even though it is actually an MI, which can present with similar symptoms (e.g., chest pain), but is less likely.

- The overconfidence bias reflects the universal tendency to believe that we know more than we do. This bias encourages individuals to diagnose a disease based on incomplete information; too much faith is placed in one’s own opinion rather than in carefully gathered evidence. This bias is especially likely to develop if clinicians do not receive feedback on their diagnostic performance.

- Psych-out errors describe the increased susceptibility of people with mental illnesses to clinician biases and heuristics due to their mental health conditions. Patients with mental health issues may have new physical symptoms that are not considered seriously because their clinicians attribute them to their mental health issues. Patients with physical symptoms that mimic mental illnesses (hypoxia, delirium, metabolic abnormalities, central nervous infections, and head injuries) may also be susceptible to these errors.

TABLE 2-2 Examples of Heuristics and Biases That Influence Decision Making

| Heuristic or Bias | Medical Example | Nonmedical Example |
| --- | --- | --- |
| Anchoring is the tendency to lock onto salient features in the patient’s initial presentation and to fail to adjust this initial impression in the light of later information. | A patient is admitted from the emergency department with a diagnosis of heart failure. The hospitalists who are taking care of the patient do not pay adequate attention to new findings that suggest another diagnosis. | We buy a new car based on excellent reviews and tend to ignore or downplay negative features that are noticed. |
| Affective bias refers to the various ways that our emotions, feelings, and biases affect judgment. | New complaints from patients known to be “frequent flyers” in the emergency department are not taken seriously. | We may have the belief that people who are poorly dressed are not articulate or intelligent. |
| Availability bias refers to our tendency to more easily recall things that we have seen recently or things that are common or that impressed us. | A clinician who just recently read an article on the pain from aortic aneurysm dissection may tend toward diagnosing it in the next few patients he sees who present with nonspecific abdominal pain, even though aortic dissections are rare. | Because of a recent news story on a tourist kidnapping in Country “A,” we change the destination we have chosen for our vacation to Country “B.” |
| Context errors reflect instances where we misinterpret the situation, leading to an erroneous conclusion. | We tend to interpret that a patient presenting with abdominal pain has a problem involving the gastrointestinal tract, when it may be something else entirely: for example, an endocrine, neurologic or vascular problem. | We see a work colleague picking up two kids from an elementary school and assume he or she has children, when they are instead picking up someone else’s children. |
| Search satisficing, also known as premature closure, is the tendency to accept the first answer that comes along that explains the facts at hand, without considering whether there might be a different or better solution. | The emergency department clinician seeing a patient with recent onset of low back pain immediately settles on a diagnosis of lumbar disc disease without considering other possibilities in the differential diagnosis. | We want a plane ticket that costs no more than $1,000 and has no more than one connection. We perform an online search and purchase the first ticket that meets these criteria without looking to see if there is a cheaper flight or one with no connections. |
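To make the definition of base-rate neglect in the list above concrete, the following minimal sketch compares a ranking of candidate diagnoses that uses disease prevalence with one that ignores it. All numbers are invented for illustration and carry no clinical meaning. The three conditions are assumed to be mutually exclusive and exhaustive, and the function name `posterior` is hypothetical.

```python
def posterior(priors, likelihoods):
    """Bayes' rule over mutually exclusive, exhaustive hypotheses."""
    joint = {d: priors[d] * likelihoods[d] for d in priors}
    total = sum(joint.values())
    return {d: p / total for d, p in joint.items()}


# Invented prevalence of each condition among patients with this presentation.
prevalence = {"acid reflux": 0.30, "MI": 0.05, "other": 0.65}
# Invented probability of the observed findings given each condition.
likelihood = {"acid reflux": 0.6, "MI": 0.8, "other": 0.1}

# Reasoning that takes prevalence into account.
with_base_rates = posterior(prevalence, likelihood)

# Base-rate neglect: every condition is treated as if it were equally prevalent.
flat = {d: 1 / len(prevalence) for d in prevalence}
neglecting_base_rates = posterior(flat, likelihood)

for name, result in [("with base rates", with_base_rates),
                     ("neglecting base rates", neglecting_base_rates)]:
    ranked = sorted(result, key=result.get, reverse=True)
    print(name, [(d, round(result[d], 2)) for d in ranked])
```

With these invented inputs, the top-ranked condition changes depending on whether prevalence is used, which is the effect the bias describes. The sketch says nothing about which diagnosis is correct for any individual patient.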

In addition to cognitive biases, research suggests that fallacies in reasoning, ethical violations, and financial and nonfinancial conflicts of interest can influence medical decision making (Seshia et al., 2014a,b). These factors, collectively referred to as “cognitive biases plus,” have been identified as potentially undermining the evidence that informs clinical decision making (Seshia et al., 2014a,b).

The interaction between fast system 1 and slow system 2 remains controversial. Some hold that these processes are constantly occurring in parallel and that any conflicts are resolved as they arise. Others have argued that system 1 processes generate an individual’s default response and that system 2 processes may or may not intervene and override system 1 processing (Evans and Stanovich, 2013; Kahneman, 2011). When system 2 overrides system 1, this can lead to improved decision making, because engaging in analytical reasoning may correct for inaccuracies. It is important to note that slow system 2 processing does not guarantee correct decision making. For instance, clinicians with an inadequate knowledge base may not have the information necessary to make a correct decision. There are some instances when system 1 processing is correct, and the override from system 2 can contribute to incorrect decision making. However, when system 1 overrides system 2 processing, this can also result in irrational decision making.

Intervention by system 2 is likely to occur in novel situations when the task at hand is difficult; when an individual has minimal knowledge or experience (Evans and Stanovich, 2013; Kahneman, 2011); or when an individual deliberately employs strategies to overcome known biases (Croskerry et al., 2013). Monitoring and intervention by system 2 on system 1 is unlikely to catch every failure because it is inefficient and would require sustained vigilance, given that system 1 processing often leads to correct solutions (Kahneman, 2011). Factors that affect working memory can impede the ability of system 2 to monitor and, when necessary, intervene on system 1 processes (Croskerry, 2009b). For example, if clinicians are tired or distracted by elements in the work system, they may fail to recognize when a decision provided by system 1 processing needs to be reconsidered (Croskerry, 2009b).

System 1 and system 2 perform optimally in different types of clinical practice settings. System 1 performs best in highly reliable and predictable environments but falls short in uncertain and irregular settings (Kahneman and Klein, 2009; Stanovich, 2009). System 2 performs best in relaxed and unhurried environments.

Dual Process Theory and Diagnosis

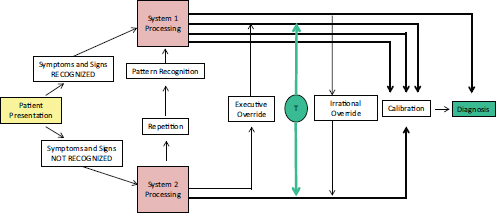

This section applies the dual process theory of clinical reasoning to the diagnostic process (Croskerry, 2009a,b; Norman and Eva, 2010; Pelaccia et al., 2011). Croskerry and colleagues provide a framework for understanding the cognitive activities that occur in clinicians as they iterate through information gathering, information integration and interpretation, and determining a working diagnosis (Croskerry et al., 2013) (see Figure 2-2).

When patients present, clinicians gather information and compare that information with their knowledge about various diseases. This can include comparing a patient’s signs and symptoms with clinicians’ mental models of diseases (or information about diseases that is stored in memory as exemplars, prototypes, or illness scripts; see Box 2-4). This initial pattern matching is an instance of fast system 1 processing. If a sufficiently unique match occurs, then a diagnosis may be made without involvement of slow system 2.

FIGURE 2-2 The dual process model of diagnostic decision making. When a patient presents to a clinician, the initial data include symptoms and signs of disease, which can range from single characteristics of disease to illness scripts. If the symptoms and signs of illness are recognized, system 1 processes are used. If they are not recognized, system 2 processes are used. Repetition of data to system 2 processes may eventually be recognized as a new pattern and subsequently processed through system 1. Multiple arrows stem from system 1 processes to depict intuitive, fast, parallel decision making. Because system 2 processes are slow and serial, only one arrow stems from system 2 processes, depicting analytical decision making. The executive override pathway shows that system 2 surveillance has the potential to overrule system 1 decision making. The irrational override pathway shows the capability for system 1 processes to overrule system 2 analytical decision making. The toggle arrow (T) illustrates how the decision maker may employ both fast system 1 and slow system 2 processes throughout the decision-making process. The manner in which data are processed through system 1 and system 2 determines the calibration of a clinician’s diagnostic performance, or a clinician’s understanding of his/her diagnostic abilities and limitations.

SOURCE: Adapted by permission from BMJ Publishing Group Limited. Cognitive debiasing 1: Origins of bias and theory of debiasing. P. Croskerry, G. Singhal, and S. Mamede. BMJ Quality and Safety 22(Suppl 2):ii58–ii64. 2013.

However, some symptoms or signs may not be recognized or they may trigger mental models for several diseases at once. When this happens, slow system 2 processing may be engaged, and the clinician will continue to gather, integrate, and interpret potentially relevant information until a working diagnosis is generated and communicated to the patient. When this process triggers pattern matches for several mental models of disease, a differential diagnosis is developed. At this point, the diagnostic process shifts to slow system 2 analytical reasoning. Based on their knowledge base, clinicians then use deductive reasoning: If this patient has disease A, what clinical history and physical examination findings might be expected, and does the patient have them? This process is repeated for each condition in the differential diagnosis and may be augmented by additional sources of information, such as diagnostic testing, further history gathering or physical examination, or referral or consultation. The cognitive process of reassessing the probability assigned to each potential diagnosis involves inductive reasoning,5 or going from observed signs and symptoms to the likelihood of each disease to determine which hypothesis is most likely (Goodman, 1999). This can help refine and narrow the differential diagnosis. Further information gathering activities or treatment could provide greater certainty regarding a working diagnosis or suggest that alternative diagnoses be considered. Throughout this process, clinicians need to communicate with patients about the working diagnosis and the degree of certainty involved.

Task complexity and expertise affect which cognitive system is dominantly employed in the diagnostic process. System 1 processing is more likely to be used when patients present with typical signs and symptoms of disease. However, system 2 processing is likely to intervene in situations marked by novelty and difficulty, when patients present with atypical signs and symptoms, or when clinicians lack expertise (Croskerry, 2009b; Evans and Stanovich, 2013). Novice clinicians and medical students are more likely to rely on analytical reasoning throughout the diagnostic process compared to experienced clinicians (Croskerry, 2009b; Elstein and Schwartz, 2002; Kassirer, 2010; Norman, 2005). Expert clinicians possess better developed mental models of diseases, which support more reliable pattern matching (system 1 processes) (Croskerry, 2009b). As a clinician accumulates experience, the repetition of system 2 processing can expand pattern matching possibilities by building and storing in memory mental models for additional diseases that can be triggered by patient signs and symptoms. The ability to create and develop mental models through repetition explains why expert clinicians are more likely to rely on pattern recognition when making diagnoses than are novices. Continuous engagement with disease conditions allows the expert to develop more reliable mental models of disease by retaining more exemplars, creating more nuanced prototypes, or developing more detailed illness scripts.

______________

5 Inductive reasoning involves probabilistic reasoning (see the following section).

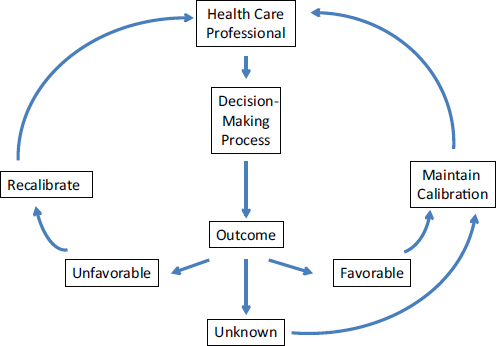

The way in which information is processed through system 1 and system 2 informs a clinician’s subsequent diagnostic performance. Figure 2-3 illustrates the concept of calibration, or the process of a clinician becoming aware of his or her diagnostic abilities and limitations through feedback. Feedback mechanisms, both in educational settings (see Chapter 4) and in learning health care systems (see Chapter 6), allow clinicians to compare their patients’ ultimate diagnoses with the diagnoses that they provided to those patients. Calibration enables clinicians to assess their diagnostic accuracy and improve their future performance.

FIGURE 2-3 Calibration in the diagnostic process. Favorable or unfavorable information about a clinician’s diagnostic performance provides good feedback and improves clinician calibration. When a patient’s diagnostic outcome is unknown, it will be treated as favorable and lead to poor calibration.

SOURCE: Adapted with permission from The feedback sanction. P. Croskerry. Academic Emergency Medicine 7(11):1232–1238, 2000.
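The effect of missing feedback on calibration can be illustrated with a small sketch. It assumes each past diagnosis is recorded as correct (`True`), incorrect (`False`), or without follow-up (`None`), and it compares the accuracy a clinician would perceive when unknown outcomes are counted as favorable with the accuracy among cases where feedback was actually received. The outcome list and the function name are hypothetical; they are not data from the report.

```python
def perceived_accuracy(outcomes, treat_unknown_as_correct):
    """Share of diagnoses counted as correct.

    outcomes: list of True (correct), False (incorrect), or None (no feedback).
    treat_unknown_as_correct: if True, cases without feedback are counted as
        correct; if False, they are excluded from the calculation.
    """
    if treat_unknown_as_correct:
        scored = [True if o is None else o for o in outcomes]
    else:
        scored = [o for o in outcomes if o is not None]
    if not scored:
        raise ValueError("no outcomes available to score")
    return sum(scored) / len(scored)


# Invented record of ten diagnoses: 3 correct, 2 incorrect, 5 with no feedback.
outcomes = [True, False, None, None, True, False, None, True, None, None]

assumed = perceived_accuracy(outcomes, treat_unknown_as_correct=True)
observed = perceived_accuracy(outcomes, treat_unknown_as_correct=False)
print(f"unknown outcomes counted as favorable: {assumed:.2f}")
print(f"only cases with feedback:              {observed:.2f}")
```

In this invented record, counting unknown outcomes as favorable yields a higher perceived accuracy than the cases with feedback support. The size of that gap depends entirely on the assumed data.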

Work system factors influence diagnostic reasoning, including diagnostic team members and tasks, technologies and tools, organizational characteristics, the physical environment, and the external environment. For example, Chapter 6 describes how the physical environment, including lighting, noise, and layout, can influence clinical reasoning. Chapter 5 discusses how health IT can improve or degrade clinical reasoning, depending on the usability of health IT (including clinical decision support), its integration into clinical workflow, and other factors. Box 2-5 describes how certain individual characteristics of diagnostic team members can affect clinical reasoning.

Probabilistic (Bayesian) Reasoning