Computer Security and Privacy: Where Human Factors Meet Engineering

FRANZISKA ROESNER

University of Washington

As the world becomes more computerized and interconnected, computer security and privacy will continue to increase in importance. In this paper I review several computer security and privacy challenges faced by end users of the technologies we build, and considerations for designing and building technologies that better match user expectations with respect to security and privacy. I conclude with a brief discussion of open challenges in computer security and privacy where engineering meets human behavior.

INTRODUCTION

Over the past several decades new technologies have brought benefits to almost all aspects of daily life for people all over the world, transforming how they work, communicate, and interact with each other.

Unfortunately, however, new technologies also bring new and serious security and privacy risks. For example, smartphone malware is on the rise, often tricking unsuspecting users by appearing as compromised versions of familiar, legitimate applications (Ballano 2011); by recent reports, more than 350,000 variants of Android malware have been identified (e.g., Sophos 2014). These malicious applications incur direct and indirect costs by stealing financial and other information or by secretly sending costly premium SMS messages. Privacy is also a growing concern on the web, as advertisers and others secretly track user browsing behaviors, giving rise to ongoing efforts at “do not track” technology and legislation.1

_____________

1 Congress introduced, but did not enact, the Do-Not-Track Online Act of 2013, S.418. Available at https://www.congress.gov/bill/113th-congress/senate-bill/418.

Such concerns cast a shadow on modern and emerging computing platforms that otherwise provide great benefits. The need to address these and other computer-related security and privacy risks will only increase in importance as the world becomes more computerized and interconnected. This paper focuses specifically on computer security and privacy at the intersection of human factors and the engineering of new computer systems.

DESIGNING WITH A “SECURITY MINDSET”

It is vital to approach the engineering design process with a “security mindset” that attempts to anticipate and mitigate unexpected and potentially dangerous ways technologies might be (mis)used. Research on computer security and privacy aims to systematize such efforts by (1) studying and developing cryptographic techniques, (2) analyzing or attempting to attack deployed technologies, (3) measuring deployed technical ecosystems (e.g., the web), (4) studying human factors, and (5) designing and building new technologies. Some of these (e.g., cryptography or usable security) are academic subdisciplines unto themselves, but all work together and inform each other to improve the security and privacy properties of existing and emerging technologies.

Many computer security and privacy challenges arise when the expectations of end users do not match the actual security and privacy properties and behaviors of the technologies they use—for example, when installed applications secretly send premium SMS messages or leak a user’s location to advertisers, or when invisible trackers observe a user’s behavior on the web.

Two general approaches can mitigate these discrepancies. The first helps users form more accurate mental models of the technologies they use (e.g., so that they think twice before installing suspicious-looking applications) by educating them about the risks, by carefully designing the user interfaces (UIs) of app stores, or both. Recent work by Bravo-Lillo and colleagues (2013) on designing security-decision UIs that are harder for users to ignore is a good example of this approach.

The alternative is to (re)design technologies themselves so that they better match the security and privacy properties that users intuitively expect, by “maintaining agreement between a system’s security state and the user’s mental model” (Yee 2004, p. 48).

Both approaches are valuable and complementary. This paper explores the second: designing security and privacy properties in computer systems in a way that goes beyond taking human factors into account to actively remove from users the burden of explicitly managing their security and/or privacy at all. To illustrate the power of this approach, I review research on user-driven access control and then highlight other recent examples.

RETHINKING SMARTPHONE PERMISSIONS WITH USER-DRIVEN ACCESS CONTROL

Consider smartphone operating systems (such as iOS, Android, or Windows Phone), other modern operating systems (such as recent versions of Windows and Mac OS X), and browsers. All of these platforms allow users to install arbitrary applications but limit the capabilities of those applications in an attempt to protect users from potentially malicious or “buggy” applications. Thus, by default, applications cannot access sensitive resources or devices such as the file system, microphone, camera, or GPS. However, to carry out their intended functionality, many applications need access to these resources.

Thus an open question in modern computing platforms in recent years has been: How should untrusted applications be granted permissions to access sensitive resources?

Challenges

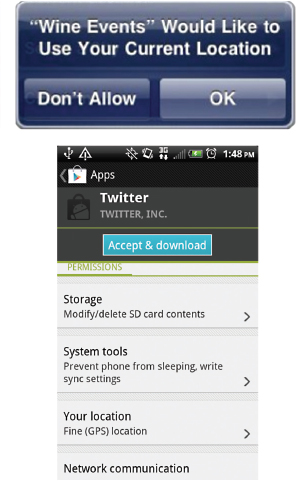

Most current platforms explicitly ask users to make the decision about access. For example, iOS prompts users to decide whether an application may access a sensitive feature such as location, and Android asks users to agree to an install-time manifest of permissions requested by an application2 (Figure 1).

Unfortunately, these permission-granting approaches place too much burden on users. Install-time manifests are often ignored or not understood by users (Felt et al. 2012a), and permission prompts are disruptive to the user’s experience, teaching users to ignore and click through them (Motiee et al. 2010). Users thus unintentionally grant applications too many permissions and become vulnerable to applications that use the permissions in malicious or questionable ways (e.g., secretly sending SMS messages or leaking location information).

In addition to outright malware (Ballano 2011), studies have shown that even legitimate smartphone applications commonly leak or misuse private user data, such as by sending it to advertisers (e.g., Enck et al. 2010).

Toward a New Approach

It may be possible to reduce the frequency and extent of an application’s illegitimate permissions by better communicating application risks to users (e.g., Kelley et al. 2013) or redesigning permission prompts (e.g., Bravo-Lillo et al. 2013). However, my colleagues and I found in a user survey that people have existing expectations about how applications use permissions; many believe, for example, that an application cannot (or at least will not) access a sensitive resource such as the camera unless it is related to the user’s activities within the application (Roesner et al. 2012b). In reality, however, after being granted the permission to access the camera (or another sensitive resource) once, Android and iOS applications can continue to access it in the background without the user’s knowledge.

_____________

2 Android M will use runtime prompts similar to iOS instead of its traditional install-time manifest.

FIGURE 1 Existing permission-granting mechanisms that require the user to make explicit decisions: runtime prompts (as in iOS, above) and install-time manifests (as in Android, below).

Access Based on User Intent

This finding argues for an alternative approach: modifying the system to better match user expectations about permission granting. To that end, we developed user-driven access control (Roesner et al. 2012b) as a new model for granting permissions in modern operating systems.

Rather than asking the user to make explicit permission decisions, user-driven access control grants permissions automatically based on existing user actions within applications. The underlying insight is that users already implicitly indicate the intent to grant a permission through the way they naturally interact with an application. For example, a user who clicks on the “video call” button in a video chat application implicitly indicates the intent to allow the application to access the camera and microphone until the call is terminated.

If the operating system could interpret the user’s permission-granting intent based on this action, then it would not need to additionally prompt the user to make an explicit decision about permissions, and it could limit the application’s access to the period the user intended. The challenge, however, is that the operating system cannot by default interpret the user’s actions in the custom user interfaces (e.g., application-specific buttons) of all possible applications.
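The idea of tying a permission to the duration of a user action can be illustrated with a minimal Python sketch. It is not the implementation from Roesner et al. (2012b); the names `PermissionMonitor` and `user_action` and the resource strings are hypothetical, and the sketch simply assumes the system can tell when the user's action begins and ends.

```python
from collections import Counter
from contextlib import contextmanager


class PermissionMonitor:
    """Hypothetical OS-side monitor that tracks live (app, resource) grants."""

    def __init__(self):
        # Count grants so overlapping actions do not revoke each other early.
        self._active = Counter()

    @contextmanager
    def user_action(self, app, resources):
        """Grant `resources` to `app` only while the user's action lasts."""
        grants = {(app, resource) for resource in resources}
        for grant in grants:
            self._active[grant] += 1
        try:
            yield
        finally:
            # Access ends when the action ends (e.g., the call is terminated).
            for grant in grants:
                self._active[grant] -= 1

    def check(self, app, resource):
        return self._active[(app, resource)] > 0


monitor = PermissionMonitor()
log = [monitor.check("videochat", "camera")]            # before the call
with monitor.user_action("videochat", ["camera", "microphone"]):
    log.append(monitor.check("videochat", "camera"))    # during the call
log.append(monitor.check("videochat", "camera"))        # after the call
```

In this sketch the application never asks for the camera and the user never answers a prompt; the grant exists only between the start and end of the action that implied it.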

Access Control Gadgets

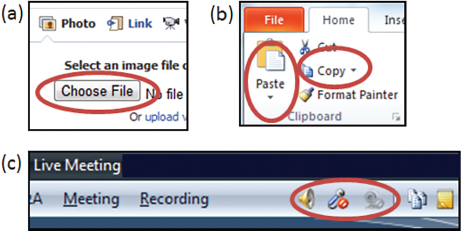

To allow the operating system to interpret permission-granting intent, we developed access control gadgets (ACGs), special, system-controlled user interface elements that grant permissions to the embedding application. For example, in a video chat application, the “video call” button is replaced by a system-controlled ACG. Figure 2 shows additional examples of permission-related UI elements that can be easily replaced by ACGs to enable user-driven access control.

FIGURE 2 Access control gadgets (ACGs) are system-controlled user interface elements that allow the operating system to capture a user’s implicit intent to grant a permission (e.g., to allow an application to access the camera).

The general principle of user-driven access control has been introduced before (as discussed below), but ACGs make it practical and generalizable to multiple sensitive resources and permissions, including those that involve the device’s location and clipboard, files, camera, and microphone. User-driven access control is powerful because it improves users’ security and privacy by changing the system to better match their expectations, rather than the other way around. That is, users’ experience interacting with their applications is unchanged while the underlying permissions granted to applications match the users’ expectations.
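A rough Python sketch of how an ACG might mediate a grant follows. The class and method names are hypothetical. The `from_hardware` flag is an assumption standing in for whatever mechanism a real system would use to tell genuine user input from events synthesized by an application.

```python
class System:
    """Hypothetical trusted OS layer that owns every ACG and all permissions."""

    def __init__(self):
        self._gadgets = {}      # gadget id -> (app, resource)
        self._granted = set()   # (app, resource) pairs currently granted
        self._next_id = 0

    def embed_acg(self, app, resource):
        """An app asks to embed a system-drawn gadget (e.g., a camera button)."""
        gadget_id = self._next_id
        self._next_id += 1
        self._gadgets[gadget_id] = (app, resource)
        return gadget_id

    def deliver_input(self, gadget_id, from_hardware):
        """Called by the system's input path when a gadget receives a click."""
        if not from_hardware:
            return False  # synthetic click: grant nothing
        self._granted.add(self._gadgets[gadget_id])
        return True

    def may_access(self, app, resource):
        return (app, resource) in self._granted


system = System()
button = system.embed_acg("videochat", "camera")
forged = system.deliver_input(button, from_hardware=False)
before = system.may_access("videochat", "camera")
clicked = system.deliver_input(button, from_hardware=True)
after = system.may_access("videochat", "camera")
```

The application chooses where the gadget appears, but only the system decides what a click on it means, which is what lets the click serve as evidence of user intent.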

OTHER EXAMPLES

User-driven access control follows philosophically from Yee (2004) and a number of other works. CapDesk (Miller 2006) and Polaris (Stiegler et al. 2006) were experimental desktop computer systems that applied a similar approach to file system access, giving applications minimal privileges but allowing users to grant applications permission to access individual files via a “powerbox” user interface (essentially a secure file picking dialog). Shirley and Evans (2008) proposed a system (prototyped for file resources) that attempts to infer a user’s access control intent from the history of user behavior. And BLADE (Lu et al. 2010) attempts to infer the authenticity of browser-based file downloads using similar techniques. Related ideas have recently appeared in mainstream commercial systems, including Mac OS X and Windows 8,3 whose file picking designs also share the underlying user-driven access control philosophy.
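The “powerbox” pattern can be sketched in a few lines of Python. This is an illustrative model only, not the CapDesk or Polaris design: the `Powerbox` class and the `word_count_app` function are hypothetical, the user's selection is simulated by a string argument, and Python itself does not enforce the isolation that a real system would provide.

```python
import os
import tempfile


class Powerbox:
    """Hypothetical trusted file picker; in this model only it touches the file system."""

    def __init__(self, root):
        self._root = os.path.realpath(root)

    def pick(self, user_selected_name):
        """Return a read-only handle for the single file the user chose.

        `user_selected_name` stands in for the user's click in the dialog.
        """
        path = os.path.realpath(os.path.join(self._root, user_selected_name))
        if os.path.dirname(path) != self._root:
            raise PermissionError("selection is outside the picker's directory")
        return open(path, "r", encoding="utf-8")


def word_count_app(handle):
    """Untrusted app: in this model it is handed one open file and nothing else."""
    with handle:
        return len(handle.read().split())


with tempfile.TemporaryDirectory() as home:
    for name, text in [("notes.txt", "buy milk and eggs"), ("taxes.txt", "private")]:
        with open(os.path.join(home, name), "w", encoding="utf-8") as f:
            f.write(text)
    # The user picks notes.txt; the app never learns that taxes.txt exists.
    count = word_count_app(Powerbox(home).pick("notes.txt"))
```

The act of choosing a file in the trusted dialog is itself the permission grant, so no separate prompt about file access is needed.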

Note that automatically managing permissions based on a user’s interactions with applications may not always be the most appropriate solution. Felt and colleagues (2012b) recommended combining a user-driven access control approach for some permissions (e.g., file access) with other approaches (e.g., prompts or post facto auditing) that work more naturally for other permissions or contexts. The approach of designing systems to better and more seamlessly meet users’ security and privacy expectations is much more general than the challenges surrounding application permissions discussed above.

Following are a few of the systems that are intentionally designed to better and more automatically match users’ security and privacy expectations. Such efforts are often well complemented by work that attempts to better communicate with or educate users by changing the designs of user interfaces.

Personal Communications

To secure communications between two parties, available tools such as PGP have long faced usability challenges (Whitten and Tygar 1999). Efforts that remove the burden from users while providing stronger security and privacy include Vanish (Geambasu et al. 2009), which supports messages that automatically “disappear” after a period of time; ShadowCrypt (He et al. 2014), which replaces existing user input elements on websites with ones that transparently encrypt and later decrypt user input; and Gyrus (Jang et al. 2014), which ensures that only content that a user has intended to enter into a user input element is what is actually sent over the network (“what you see is what you send”).

_____________

3 Apple posted information in 2011 on the “App sandbox and the Mac app store” (available at https://developer.apple.com/videos/wwdc/2011/), and Windows information on “Accessing files with file pickers” is available at http://msdn.microsoft.com/en-us/library/windows/apps/hh465174.aspx.

Commercially, communication platforms such as email and chat are increasingly moving toward providing transparent end-to-end encryption (e.g., Somogyi 2014), though more work remains to be done (Unger et al. 2015). For example, the security of journalists’ communications with sensitive sources has come into question in recent years and requires a technical effort that provides low-friction security and privacy properties to these communications (McGregor et al. 2015).

User Authentication

Another security challenge faced by many systems is that of user authentication, which is typically handled with passwords or similar approaches, all of which have known usability and/or security issues (Bonneau et al. 2012). One approach to secure a user’s accounts is two-factor authentication, in which a user must provide a second identifying factor in addition to a password (e.g., a code provided by an app on the user’s phone). Two-factor authentication provides improved security and is seeing commercial uptake, but it decreases usability; efforts such as PhoneAuth (Czeskis et al. 2012) aim to balance these factors by using the user’s phone as a second factor only opportunistically when it happens to be available.
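The opportunistic use of a second factor can be sketched as a small login policy. This is not PhoneAuth's protocol: the function names and session labels are hypothetical, and an HMAC over a shared key is used here only as a stand-in for the cryptographic assertion a real system would obtain from the phone.

```python
import hashlib
import hmac
import secrets
from typing import Optional


def phone_sign(phone_key: bytes, challenge: bytes) -> bytes:
    """What the phone would compute if it is nearby and reachable."""
    return hmac.new(phone_key, challenge, hashlib.sha256).digest()


def login(password_ok: bool, phone_key: bytes, challenge: bytes,
          assertion: Optional[bytes]) -> str:
    """Return the kind of session the server is willing to create."""
    if not password_ok:
        return "denied"
    if assertion is None:
        # Phone not available: the user is not blocked, but the server
        # knows that only one factor was presented.
        return "password-only"
    expected = phone_sign(phone_key, challenge)
    if hmac.compare_digest(assertion, expected):
        return "two-factor"
    return "denied"  # an assertion was offered but does not verify


key = secrets.token_bytes(32)
challenge = secrets.token_bytes(16)
with_phone = login(True, key, challenge, phone_sign(key, challenge))
without_phone = login(True, key, challenge, None)
bad_assertion = login(True, key, challenge, b"\x00" * 32)
```

What a "password-only" session may do is a policy decision left open here; the point of the sketch is that the second factor strengthens the login when present without adding a step for the user.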

Online Tracking

As a final example, user expectations about security and privacy do not match the reality of today’s systems with respect to privacy on the web. People’s browsing behaviors are invisibly tracked by third-party advertisers, website analytics engines, and social media sites.

In earlier work we discovered that social media trackers, such as Facebook’s “Like” or Twitter’s “tweet” button, represent a significant fraction of trackers on popular websites (Roesner et al. 2012a). To mitigate the associated privacy concerns, we applied a user-driven access control design philosophy to develop ShareMeNot, which allows tracking only when the user clicks the associated social media button. ShareMeNot’s techniques have been integrated into the Electronic Frontier Foundation’s Privacy Badger tool (https://www.eff.org/privacybadger), which automatically detects and selectively blocks trackers without requiring explicit user input.
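The click-to-enable idea can be modeled as a request filter. The sketch below is a simplification under stated assumptions, not ShareMeNot's or Privacy Badger's actual mechanism: the `WidgetBlocker` class, its methods, and the host `widgets.social.example` are all hypothetical.

```python
from urllib.parse import urlsplit

# Hypothetical list of hosts that serve social media buttons.
SOCIAL_WIDGET_HOSTS = {"widgets.social.example"}


class WidgetBlocker:
    """Blocks third-party social widget requests until the user clicks the button."""

    def __init__(self):
        self._allowed = set()  # (page host, widget host) pairs the user clicked

    def user_clicked(self, page_url, widget_url):
        """Record that the user clicked this widget's button on this page."""
        self._allowed.add(
            (urlsplit(page_url).hostname, urlsplit(widget_url).hostname)
        )

    def should_load(self, page_url, request_url):
        page = urlsplit(page_url).hostname
        target = urlsplit(request_url).hostname
        if target == page or target not in SOCIAL_WIDGET_HOSTS:
            return True  # first-party request, or not a known social widget
        return (page, target) in self._allowed


blocker = WidgetBlocker()
page = "https://news.example/story"
widget = "https://widgets.social.example/like.js"
before_click = blocker.should_load(page, widget)
blocker.user_clicked(page, widget)
after_click = blocker.should_load(page, widget)
other_page = blocker.should_load("https://shop.example/", widget)
```

As with ACGs, the user's click on the button is treated as the signal of intent: the widget's provider learns about the visit only on pages where the user chose to interact with it.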

CHALLENGES FOR THE FUTURE

New technologies are improving and transforming people’s lives, but they also bring with them new and serious security and privacy concerns. Balancing the desired functionality provided by increasingly sophisticated technologies with security, privacy, and usability remains an important challenge.

This paper has illustrated efforts across several contexts to achieve this balance by designing computer systems that remove the burden from the user. However, more work remains to be done in all of these and other domains, particularly in emerging areas that rely on ubiquitous sensors, such as the augmented reality technologies of Google Glass and Microsoft HoloLens.

By understanding and anticipating these challenges early enough, and by applying the right insights and design philosophies, it will be possible to improve the security, privacy, and usability of emerging technologies before they become widespread.

REFERENCES

Ballano M. 2011. Android threats getting steamy. Symantec Official Blog, February 28. Available at http://www.symantec.com/connect/blogs/android-threats-getting-steamy.

Bonneau J, Herley C, van Oorschot PC, Stajano F. 2012. The quest to replace passwords: A framework for comparative evaluation of web authentication schemes. Proceedings of the 2012 IEEE Symposium on Security and Privacy. Washington: IEEE Society.

Bravo-Lillo C, Cranor LF, Downs J, Komanduri S, Reeder RW, Schechter S, Sleeper M. 2013. Your attention please: Designing security-decision UIs to make genuine risks harder to ignore. Proceedings of the Symposium on Usable Privacy and Security (SOUPS), July 24–26, Newcastle, UK.

Czeskis A, Dietz M, Kohno T, Wallach D, Balfanz D. 2012. Strengthening user authentication through opportunistic cryptographic identity assertions. Proceedings of the 19th ACM Conference on Computer and Communications Security, October 16–18, Raleigh, NC.

Enck W, Gilbert P, Chun B, Cox LP, Jung J, McDaniel P, Sheth AN. 2010. TaintDroid: An information-flow tracking system for realtime privacy monitoring on smartphones. Proceedings of the 9th USENIX Conference on Operating System Design and Implementation. Berkeley: USENIX Association.

Felt AP, Ha E, Egelman S, Haney A, Chin E, Wagner D. 2012a. Android permissions: User attention, comprehension, and behavior. Proceedings of the Symposium on Usable Privacy and Security (SOUPS), July 11–13, Washington, DC.

Felt AP, Egelman S, Finifter M, Akhawe D, Wagner D. 2012b. How to ask for permission. Proceedings of the 7th Workshop on Hot Topics in Security (HotSec), August 7, Bellevue, WA.

Geambasu R, Kohno T, Levy A, Levy HM. 2009. Vanish: Increasing data privacy with self-destructing data. Proceedings of the 18th USENIX Security Symposium. Berkeley: USENIX Association.

He W, Akhawe D, Jain S, Shi E, Song D. 2014. ShadowCrypt: Encrypted web applications for everyone. Proceedings of the ACM Conference on Computer and Communications Security. New York: Association for Computing Machinery.

Jang Y, Chung SP, Payne BD, Lee W. 2014. Gyrus: A framework for user-intent monitoring of text-based networked applications. Proceedings of the Network and Distributed System Security Symposium (NDSS), February 23–26, San Diego.

Kelley PG, Cranor LF, Sadeh N. 2013. Privacy as part of the app decision-making process. Proceedings of the SIGCHI Conference on Human Factors in Computing Systems. New York: Association for Computing Machinery.

Lu L, Yegneswaran V, Porras P, Lee W. 2010. BLADE: An attack-agnostic approach for preventing drive-by malware infections. Proceedings of the 17th ACM Conference on Computer and Communications Security. New York: Association for Computing Machinery.

McGregor SE, Charters P, Holliday T, Roesner F. 2015. Investigating the computer security practices and needs of journalists. Proceedings of the 24th USENIX Security Symposium. Berkeley: USENIX Association.

Miller MS. 2006. Robust composition: Towards a unified approach to access control and concurrency control. PhD thesis, Johns Hopkins University.

Motiee S, Hawkey K, Beznosov K. 2010. Do Windows users follow the principle of least privilege? Investigating user account control practices. Proceedings of the 6th Symposium on Usable Privacy and Security (SOUPS). New York: Association for Computing Machinery.

Roesner F, Kohno T, Wetherall D. 2012a. Detecting and defending against third-party tracking on the web. Proceedings of the 9th USENIX Symposium on Networked Systems Design and Implementation, April 25–27, San Jose.

Roesner F, Kohno T, Moshchuk A, Parno B, Wang HJ, Cowan C. 2012b. User-driven access control: Rethinking permission granting in modern operating systems. Proceedings of the IEEE Symposium on Security and Privacy, May 20–23, San Francisco.

Shirley J, Evans D. 2008. The user is not the enemy: Fighting malware by tracking user intentions. Proceedings of the Workshop on New Security Paradigms. New York: Association for Computing Machinery.

Somogyi S. 2014. An update to end-to-end. Google Online Security Blog, December 16. Available at http://googleonlinesecurity.blogspot.com/2014/12/an-update-to-end-to-end.html.

Sophos. 2014. Our top 10 predictions for security threats in 2015 and beyond. Blog, December 11. Available at https://blogs.sophos.com/2014/12/11/our-top-10-predictions-for-security-threats-in-2015-and-beyond/.

Stiegler M, Karp AH, Yee K-P, Close T, Miller MS. 2006. Polaris: Virus-safe computing for Windows XP. Communications of the ACM 49:83–88.

Unger N, Dechand S, Bonneau J, Fahl S, Perl H, Goldberg I, Smith M. 2015. SoK: Secure messaging. Proceedings of the IEEE Symposium on Security and Privacy, May 17–21, San Jose.

Whitten A, Tygar JD. 1999. Why Johnny can’t encrypt: A usability evaluation of PGP 5.0. Proceedings of the USENIX Security Symposium 8:14.

Yee K-P. 2004. Aligning security and usability. IEEE Security and Privacy 2(5):48–55.
