2

An Approach to Policy Evaluation for the National Flood Insurance Program

The Federal Emergency Management Agency (FEMA) was directed by Congress to conduct a study on how changes required by the Biggert-Waters Flood Insurance Reform and Modernization Act of 2012 (BW 2012) affect the affordability of flood insurance premiums. Such a study could support the design of a National Flood Insurance Program (NFIP) affordability framework that includes a financial assistance program, as specified by the Homeowner Flood Insurance Affordability Act of 2014 (HFIAA 2014), Section 9. The committee’s first report (NRC, 2015a) described policy options that might be considered in proposing an affordability framework and also the design decisions that must be made by policy makers to develop an affordability assistance program. The various options can be combined in different ways to formulate a wide range of policy alternatives. This chapter is organized around the need to choose among these alternatives. It has three major sections: elements of a planning process; a discussion of policy modeling, including microsimulation; and an illustrative application of a planning process to evaluate affordability policy options.

ELEMENTS OF A PLANNING PROCESS TO EVALUATE AND COMPARE NFIP POLICY OPTIONS

A structured planning process for conducting the congressionally required affordability study can be organized around a suite of interrelated evaluation elements (Deason et al., 2010; Stokey and Zeckhauser, 1978). These elements are briefly described below and are generally executed in a stepwise fashion, but the process can be iterative to provide opportunities for revisiting and refining steps to formulate a given alternative option.

- Identify problems and opportunities. The evaluation process begins by identifying policy-relevant questions and outcomes. Most of the questions will be of the “what-if” nature, organized around a policy problem to be addressed by as yet unspecified policy options. For example, a what-if question would be the following: For how many policyholders would a particular assistance program eliminate the cost burden of NFIP risk-based premiums under full implementation of BW 2012? In the NFIP context, the metrics used to measure outcomes could include the number and percent of policyholders who are cost burdened by their NFIP premiums, using different measures of cost burden selected by policy makers for comparison.

- Forecast future conditions. With the first element completed, a forecast of the future level of each metric without any policy interventions is prepared. In the context of BW 2012 Section 100236, this future condition would be BW 2012 fully implemented, without an associated affordability policy. This is the baseline for the analysis as discussed in this report.

- Formulate policy options. The next step is formulation of alternative policy options that might affect metrics in the future. Report 1 (Chapters 4, 6, and 7) discussed actions to increase takeup rates and affordability policy designs that might reduce the cost burden of NFIP premiums.

- Predict future conditions with an alternative policy option in place. The analytical and generally quantitative element then follows, wherein models and data are used to predict conditions under the baseline and with the policy options. For example, to assess the effect of a mitigation loan program, the number and percent of policyholders who would be cost burdened under the loan program could be estimated and compared to the number and percent values predicted under the BW 2012 baseline.

- Evaluate and compare policy options (elements 5 and 6). With all predictions made, the results are displayed in ways to allow decision makers to evaluate and compare options and, ultimately, choose a preferred policy option. Ideally, implementation of the chosen option is followed by monitoring of outcomes to ensure that policy-relevant concerns are being addressed; this will mean that important metrics (e.g., number and percent of policyholders who are cost burdened) are measured and tracked to assess the status of a given option.
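The prediction and comparison elements above can be illustrated with a minimal sketch. It assumes, purely for illustration, that cost burden is measured as a premium exceeding a fixed share of household income; the 2 percent threshold, the field names, and the sample records are hypothetical and are not measures or data endorsed in this report.

```python
from dataclasses import dataclass

@dataclass
class Policyholder:
    income: float            # annual household income (hypothetical field)
    baseline_premium: float  # premium under the baseline (BW 2012 fully implemented)
    option_premium: float    # net premium under the policy option being evaluated

def cost_burden_summary(holders, premium_of, threshold=0.02):
    """Return (number, percent) of policyholders whose premium exceeds
    `threshold` times income. The ratio measure is an assumption."""
    burdened = sum(1 for h in holders if premium_of(h) > threshold * h.income)
    return burdened, 100.0 * burdened / len(holders)

# Hypothetical records, for illustration only.
holders = [
    Policyholder(30_000, 1_200, 500),
    Policyholder(50_000, 900, 900),
    Policyholder(80_000, 2_000, 2_000),
    Policyholder(40_000, 700, 700),
]

baseline = cost_burden_summary(holders, lambda h: h.baseline_premium)
option = cost_burden_summary(holders, lambda h: h.option_premium)
print("baseline:", baseline, "with option:", option)
```

Passing the premium accessor as a function keeps the metric definition separate from the policy scenario, so the same metric can be applied to the baseline and to each alternative.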

MODEL DEVELOPMENT FOR EVALUATING AFFORDABILITY POLICY OPTIONS

Policy Modeling: What If?

A common charge to federal agencies from executive and legislative policy makers is to provide quantitative answers to questions about the likely future effects of one or more policy options in a “what-if” framework: If a policy changes in a specified way, what is an agency’s best estimate of not only the total costs to the government compared with current policy 1, 5, or 10 years out, but also who will benefit and who will lose from the change—which population groups, geographic areas, and organizational players? Any such analysis, no matter how simplistic, requires the development, implicitly or explicitly, of a model that makes assumptions and applies them to data to generate estimates.

One definition of a quantitative model is a “mathematical framework representing some aspects of reality at a sufficient level of detail to inform a clinical or policy decision” (Caro et al., 2012). More generally, a model is a communication tool that allows the complexity of a given system to be reduced to its component elements. Models range from simple to highly complex (Box 2-1). Models can be ad hoc—developed for one-time use, often “on the fly”—or they can be formal—developed for longer-term use for repeated evaluations of alternative policy options as they emerge in an area and, hence, requiring extensive documentation of assumptions, inputs, outputs, and modeling processes. Model outputs can range from aggregates for a few categorizations of the population of interest, to detailed disaggregation by areas or population subgroups. Model inputs can similarly pertain to a relatively small number of prespecified aggregations or to large numbers of individual observations that can be reaggregated in different ways. Model operations can be largely deterministic, or they can be probabilistic and include behavioral predictions based on empirical studies. Models can also accomplish future projections by “aging” the initial database and incorporate changes in key parameters due to external forces (e.g., sea-level rise due to climate change).

Prior to the advent of high-speed computers and extensive databases, modeling analysis was limited to simple, deterministic, highly aggregated, ad hoc models that could be computed on the “back of the envelope.” Beginning in the 1960s and 1970s, several types of formal computer modeling techniques and software were developed for longer-term use. Today, ad hoc models developed for specific applications often use software that utilizes tabular spreadsheets.1 Tabular spreadsheets could be considered the

__________________

1 Spreadsheets can also be used to develop some types of formal models.

BOX 2-1a

Types of Policy Analysis Models and Their Applicability to the NFIP

Time-Series Models—Models that range from simple extrapolation of a time series, such as participants in a program (e.g., the number of policyholders) or aggregate annual claims, to complex macroeconomic models that interrelate large numbers of time series with specified assumptions about, for example, the relationship of economic output to various inputs. With their focus on forecasting aggregate quantities based on historical data, simplistic time-series models are too limited for policy modeling of the NFIP, and complex macroeconomic models are not applicable.

Regression Models—Models that include parameter estimates of the relationships of input (right-side) variables to an output (left-side) variable from a regression performed on a database; for example, a regression model might be useful to relate the probability of participating in a flood mitigation program to characteristics of homeowners. Regression models are too limited for most policy modeling, including that required of the NFIP. One such limitation is the ecological fallacy—the logical error of making inferences about individuals (e.g., policyholders) from relationships estimated for groups (e.g., communities). Another limitation is the complexity of modeling many outcomes together. Nonetheless, appropriately specified and estimated regression models can often provide one source of input to another type of model (e.g., a microsimulation model).

Cell-Based Models—Spreadsheet models that perform computations on prespecified “cells.” For example, NFIP policyholders might be classified by premium category prior to BW 2012 (e.g., NFIP full-risk, pre-flood insurance rate map [FIRM] subsidized, grandfathered), elevation, amount of coverage or property value, broad geographic area of property, and other characteristics. The cell-based

functional equivalent of yesterday’s “back of the envelope” calculations, although spreadsheets can also be used in more formal models. Among the available formal modeling techniques are time-series models, regression models, cell-based models, microsimulation models, and general equilibrium models (see Box 2-1). Numerous computing software packages are capable of supporting many of these modeling techniques.

In considering modeling options, FEMA could view its congressional directive (that is, to conduct a study on how BW 2012 would affect the affordability of flood insurance premiums) as limited to requiring the development of one or more ad hoc models for estimating costs and benefits of specific affordability policy options. However, if FEMA views its congressional request in the context of a long history of requests for different kinds

approach could be useful for the NFIP, for example, in assessing the effect of a very specifically targeted policy (e.g., providing affordability assistance based on a simple formula to policyholders who received pre-FIRM subsidies prior to BW 2012). However, a cell-based model would limit the detail of disaggregation of outputs that could be provided to policy makers and would likely require frequent respecification to add, delete, and modify cells as policy options (e.g., assistance targeting) and output needs changed.

Microsimulation Models—Models that operate on microlevel databases of individual records (e.g., policyholders), mimicking how current and alternative program provisions apply to the individual units described in those records. Such models permit detailed disaggregation of outputs to serve policy makers’ diverse needs. Although microsimulation models are often complex (but typically less complex than macroeconomic models) and can be costly to build and maintain, such a model would be highly flexible, well suited, and relevant to the policy modeling needs of the NFIP.

Computable General Equilibrium Models—As their name implies, models that simulate entire economies, which are typically disaggregated into sectors, and are designed to estimate the general equilibrium effects, after several rounds, of major economic policy changes (e.g., changes in taxes). They are not applicable to the NFIP, which pertains to a tiny part of the U.S. economy. Similarly, integrated assessment models, which are used to model the interaction of environmental factors, such as climate change, economic impacts, and large-scale policy responses (Nordhaus and Sztorc, 2013), are much too broad for use for the NFIP.

__________________

a Although Report 2 focuses on the applicability of these techniques for policy modeling of the NFIP, more general discussions of these methods and their strengths and weaknesses for other applications can be found in NRC (1991, 1997) and OASPE (2012).

of analysis (see Appendix B), then it may choose to pursue the development, maintenance, documentation, and regular updating of a formal policy modeling tool that can be used repeatedly to analyze a variety of policy options and the effects of changing external conditions. The task for FEMA then becomes determining which formal modeling tool (or tools) to select for investment given the kinds of policy questions it is likely to be asked.

FEMA’s modeling needs for an NFIP premium affordability study require the ability to estimate the effects of yet-to-be-developed policy options, singly and in combination, that could affect NFIP premium revenues and the affordability of premiums for current individual policyholders and groups of policyholders (defined, for example, by income or wealth, geographic area, and other characteristics) and potential policyholders. Congress and other

stakeholders may want answers to questions that have a specific focus, such as, What are the effects in a particular congressional district for various groups of property owners and where are the effects concentrated? As FEMA’s modeling capacity is developed over time, the agency would be able to predict behavioral effects, such as the propensity for homeowners to newly purchase, increase, decrease, or entirely drop flood insurance coverage in response to changing premiums or assistance in paying premiums or undertaking mitigation. This combination of analytical requirements leads to consideration of microsimulation techniques for assessing NFIP policy options, including options for providing affordability assistance.

Regarding this choice, the committee began with the reality that the effects of BW 2012 (and other legislation and policies) are manifested first at the level of the individual policyholder/property owner. Therefore, the most appropriate and credible analytical approach has to begin at that level, and the only such approach for highly flexible, fine-grained, realistic analysis is microsimulation. In addition, microsimulation is the only approach that readily allows results to be presented for various levels of aggregation, as is typically required by policy makers. Of course, as the report notes, FEMA will need to consider its current directive from Congress and its mission and long-term objectives, as well as time, the availability of resources, and other factors in making a decision on modeling strategies. FEMA may determine that other modeling approaches (e.g., cell-based models) can be useful for some limited questions and purposes. But the committee does recommend microsimulation to FEMA on the assumption that FEMA will continue to be asked for detailed analysis of costs and benefits of various proposals for changes in the NFIP or other policies (e.g., disaster relief or mandatory purchase requirements), so that an investment in microsimulation is well worth it. Moreover, microsimulation analysis would likely be conducted on a sample of households and properties, and the size of the sample deemed adequate for answering the policy questions being raised will feature heavily in determining the cost and time for obtaining needed data and completing cost-benefit analyses of various policy options.

A fully developed microsimulation model can be complex and costly to build and maintain. However, a complete microsimulation model does not need to be built before any analyses can be completed. Rather, construction can begin immediately with separate modules, which, as the available data permit, can be used to answer some important but limited questions. Over time, new modules can be built and linked together to create a more complete model that can be quickly deployed to answer future NFIP policy questions as they arise.

What Is Microsimulation?

The microsimulation modeling approach to produce estimates of the effects of proposed changes in government programs involves obtaining inputs from microlevel databases of individual records, mimicking how current and alternative program provisions apply to the individuals described in those records. For example, in simulating the effects of changes to the Supplemental Nutrition Assistance Program (formerly the Food Stamp Program), microsimulation models process records for families as if they were applying to the local welfare office for benefits, and in simulating the effects of tax law changes, microsimulation models process records for people as if they were filling out their 1040 tax forms.2

Microsimulation models have two essential elements: (1) a microdatabase and (2) a computer program. The database is constructed from administrative or survey data with information on households in the population targeted by the government program. The model’s computer program codes the rules of the government program under both the “baseline” policy, which is typically the current policy, and a “reform” policy, which is a proposed alternative. The computer program also simulates, in the case of a government assistance program, whether a household is eligible for the government program and the benefits for which the household would qualify. In addition, the computer program simulates a household’s behavioral response, determining whether the household will participate in the program. Processing all the households in the database, the model counts participants to estimate the total participation in the program and adds up the assistance provided to estimate total program costs. By performing these operations under both baseline and reform policies and comparing the results, the model estimates the program cost and participation effects of the proposed reform policy option. The model can also estimate the distributional effects of the reform, identifying the population subgroups that gain and lose benefits (Schirm and Zaslavsky, 1997).
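The two essential elements described above, a microdatabase and a program that applies baseline and reform rules to each record, can be sketched in a few lines. This is a minimal illustration under stated assumptions: the record fields, the record weights, the reform rule (half of the premium for households below an income limit), and the participation rule are all hypothetical placeholders, not features of any actual or proposed program.

```python
from dataclasses import dataclass

@dataclass
class Household:
    income: float
    premium: float
    weight: float = 1.0  # number of real households this record represents (assumed)

def baseline_rule(hh):
    """Baseline policy: no assistance is provided."""
    return 0.0

def reform_rule(hh, income_limit=40_000, share=0.5):
    """Hypothetical reform: pay `share` of the premium if income is below the limit."""
    return share * hh.premium if hh.income < income_limit else 0.0

def participates(hh, benefit):
    """Placeholder behavioral response: participate whenever a benefit is available."""
    return benefit > 0

def simulate(database, rule):
    """Apply a program rule to every record and add up participation and cost."""
    participants, cost = 0.0, 0.0
    for hh in database:
        benefit = rule(hh)
        if participates(hh, benefit):
            participants += hh.weight
            cost += hh.weight * benefit
    return {"participants": participants, "cost": cost}

# Hypothetical microdatabase, for illustration only.
database = [
    Household(25_000, 1_000, weight=2.0),
    Household(60_000, 1_500, weight=1.0),
    Household(35_000, 800, weight=3.0),
]

base = simulate(database, baseline_rule)
reform = simulate(database, reform_rule)
effect = {key: reform[key] - base[key] for key in base}
print(effect)
```

Running the same loop under both rules and differencing the totals mirrors the baseline-versus-reform comparison described in the text; distributional effects would follow from grouping the same per-record results by subgroup.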

For the NFIP, a fully developed microsimulation model would likely have a database consisting of current NFIP policyholders and potential policyholders—in other words, both insured and uninsured properties—in areas of flood risk. For each property in the database, the computer simulation program would use location information, property characteristics, and preferred coverage to simulate premiums to be paid under a baseline and a proposed alternative policy option. Information on the assistance program design features, the property, and the property owner will allow the computer program to simulate whether the property owner is eligible for assistance (and the amount of assistance) in paying the premium or for undertaking mitigation. The program would also be able to aggregate the simulated results across properties in the database to estimate outcomes such as the insurance takeup rate, NFIP net revenues, and federal expenditures. Also, effects on subgroups defined by property or policyholder characteristics, including geographic area, premium category prior to BW 2012 (NFIP full-risk, pre-FIRM subsidized [PFS], grandfathered, and preferred risk policy) and household income or wealth could be estimated (see Box 2-2).3

__________________

2 Commercial tax preparation software is a form of microsimulation modeling.

Typically, microsimulation models are developed incrementally, with continuing improvements to both the database and computer simulation program over time. The simulation program is usually modularized, and the simulated results from some modules feed into other modules. Such modularization facilitates the refinement of old modules and the addition of new modules to enhance the model’s simulation capabilities. In addition to simulation modules, the program will have basic tabulation routines that aggregate across individual observations in the database to produce estimated outcomes for the entire NFIP and important subgroups.
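A tabulation routine of the kind mentioned above can be sketched as a weighted sum grouped by a subgroup variable. The record layout, the weights, and the category labels in the sample data are assumptions made for illustration.

```python
from collections import defaultdict

def tabulate(records, group_key, value_key):
    """Weighted total of `value_key` for each value of `group_key`."""
    totals = defaultdict(float)
    for record in records:
        totals[record[group_key]] += record["weight"] * record[value_key]
    return dict(totals)

# Hypothetical simulated output from an upstream premium module.
records = [
    {"category": "PFS", "weight": 2.0, "premium": 1000.0},
    {"category": "grandfathered", "weight": 1.0, "premium": 1500.0},
    {"category": "PFS", "weight": 1.0, "premium": 500.0},
]

by_category = tabulate(records, "category", "premium")
program_total = sum(by_category.values())
print(by_category, program_total)
```

Because the routine takes the grouping variable as a parameter, the same per-record results can be reaggregated by geographic area, income group, or premium category without rerunning the simulation modules.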

For the NFIP, an initial microsimulation database might include only current policyholders. Through time, properties that are not covered might be added to the database. Similarly, the first-generation model might not simulate behavioral responses, assuming, instead, that current policyholders maintain the same level of coverage as before even if the premium were to change substantially. Subsequently, a behavioral response module could be developed that simulates whether a current policyholder increases, decreases, or drops coverage and whether a potential policyholder takes up coverage on a previously uncovered property in response to a change in the premium, considering the current or potential policyholder’s income and other characteristics.4
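Before a behavioral response module exists, the crude sensitivity analysis described in footnote 4 (no response at all versus dropping coverage entirely above some threshold) could look like the following sketch. The threshold value, the use of a proportional premium increase as the trigger, and the sample premiums are hypothetical.

```python
def retained_revenue(policies, drop_threshold=None):
    """Total premium revenue from policies that remain in force.

    policies: list of (old_premium, new_premium) pairs.
    drop_threshold: proportional increase above which the policyholder is
        assumed to drop coverage entirely; None means no behavioral response.
    """
    total = 0.0
    for old, new in policies:
        increase = (new - old) / old
        if drop_threshold is not None and increase > drop_threshold:
            continue  # assumed to drop coverage entirely
        total += new
    return total

# Hypothetical premiums before and after a rate change.
policies = [(500.0, 600.0), (800.0, 2_000.0), (1_000.0, 1_100.0)]

no_response = retained_revenue(policies)
drop_if_doubled = retained_revenue(policies, drop_threshold=1.0)
print(no_response, drop_if_doubled)
```

Comparing the two totals brackets the revenue estimate between the no-response case and one crude drop-out assumption; a later behavioral module would replace the threshold rule with empirically based responses.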

Although the first-generation NFIP microsimulation model might not simulate behavioral responses, it would certainly need a module that estimates a property’s flood risk based on the property’s characteristics, as well as a module that estimates the flood insurance premium based on the NFIP

__________________

3 Output from the microsimulation program could include, for example, premium revenues and the percentage of policyholders who are cost burdened by their NFIP premiums, for not only the entire NFIP, but also the subgroup of policyholders who lost pre-FIRM subsidies due to BW 2012. These outcomes would be estimated under both the baseline condition and the alternative policy option under consideration. The differences between the outcomes—such as the increase or decrease in the percentage of cost-burdened policyholders—show the effects of the alternative policy option.

4 Pending the development of such a behavioral response module, a microsimulation model could have the capability of conducting sensitivity analyses based on certain—probably fairly crude—assumptions, such as no response at all versus no response at all except for dropping coverage entirely if the premium increase exceeds some specified threshold.

BOX 2-2

A Microsimulation Model of the NFIP

A microsimulation model operates on a microlevel database of individual records (e.g., property owners in floodplains) and simulates how current program provisions and alternative policy options affect these individual records.

The essential elements in developing a microsimulation model of the NFIP include the following:

- Construction of a microdatabase of properties, policies, and owners with all the relevant data elements, including hazard maps or other means to estimate flood losses and future claims should floods of different magnitudes occur and cause damage to properties.

- Development of a computer program that can simulate a baseline policy (e.g., BW 2012 as fully implemented) and alternative policy reforms (e.g., an affordability assistance plan). The program would perform all of the necessary calculations to show “what happens” to a property owner or to other entities of interest (an entire community or other relevant subgroups) under the baseline and under alternative options. As it is developed, FEMA’s microsimulation model could incorporate projections of the baseline into the future based on changes in the population (e.g., aging and development) as well as changes in external conditions (e.g., sea-level rise due to climate change).

Microsimulation models are conceptually attractive because they begin at the appropriate decision level of the property and property owner and can account for the diverse circumstances and characteristics of the relevant population. In FEMA’s case, the relevant population may be current NFIP policyholders and potential policyholders in areas of flood risk.

rating tables (or the tables under an alternative plan), the chosen coverage and deductible, and the estimated risk from the risk module.5 Meanwhile, the microsimulation database would need to have all of the data elements required by these modules. These data needs and the gaps in existing data are discussed in detail in Chapter 3.

Of course, the reform options of most immediate interest to FEMA are affordability assistance programs, such as a program that provides premium assistance to policyholders who are cost burdened by NFIP risk-based premiums. If the assistance is paid from general federal revenues, the

__________________

5 The analysis called for by BW 2012 is pushing the NFIP rate-setting practice toward “full-risk” premiums on all insured properties. Therefore, the premium determination module must be able to mimic the process by which premiums are estimated.

BOX 2-3

Projection Capabilities in Microsimulation Models

Because federal, state, and other agencies are often asked to provide estimates of policy effects for future periods, any modeling tool requires a capability for projection of its input database. Projection capabilities in microsimulation models are achieved by two basic techniques: static aging and dynamic aging.

Static microsimulation models project a sample forward for short time periods by reweighting the records in the database (e.g., if new construction in an area is expected to increase at-risk properties by 10 percent over the next 5 years, then the properties in the database in that area are treated as if they each represented 1.1 properties).

Dynamic microsimulation models project a sample forward by dynamic aging (e.g., people aged 50 become 60 in year t + 10). FEMA may not need the added complexities of dynamic aging, because it is not concerned with following the trajectories of individual policyholders. Rather it is concerned with point-in-time estimates for specified periods (e.g., 5 or 10 years into the future). Such estimates can be accomplished by static reweighting techniques.

effect of the assistance program on NFIP premium revenues is limited to whether those who receive assistance change their demand for insurance in response to the lower net premiums they pay, a response that might not be simulated by a first-generation model. Yet, if the assistance program design caps premiums paid to the NFIP, revenues to the program would be reduced. In either case, one or more modules may be needed to determine which policyholders are eligible for assistance, the amount of assistance to be provided, and how the amount of assistance is paid (whether by reducing the premium or from an “outside” source). Additional modules or enhancements to other modules might be required if assistance programs providing mitigation assistance are to be simulated. For example, it could be necessary to simulate how a particular mitigation activity lowers flood risk and, thereby, the flood insurance premium (Box 2-3).
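The static aging technique described in Box 2-3 amounts to rescaling record weights. A minimal sketch follows, using the box's example of 10 percent growth in at-risk properties; the record layout and area labels are assumptions.

```python
def static_age(records, growth_by_area):
    """Return copies of the records with weights scaled by (1 + growth) for
    their area. Areas without a growth entry keep their original weight."""
    return [
        {**r, "weight": r["weight"] * (1.0 + growth_by_area.get(r["area"], 0.0))}
        for r in records
    ]

# Hypothetical database records; each initially represents one property.
records = [
    {"area": "A", "weight": 1.0, "premium": 1000.0},
    {"area": "A", "weight": 1.0, "premium": 1500.0},
    {"area": "B", "weight": 1.0, "premium": 700.0},
]

# Area A is expected to gain 10 percent more at-risk properties.
aged = static_age(records, {"A": 0.10})
projected_properties = sum(r["weight"] for r in aged)
print(projected_properties)
```

Each record in area A now stands for 1.1 properties, while the characteristics of the records themselves are unchanged, which is what distinguishes static reweighting from dynamic aging.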

Moving Forward

FEMA will need to determine what it seeks to accomplish with any modeling it undertakes. At one extreme, it might see its needs met by a model with limited capabilities to answer immediate and specific questions about affordability policy options. For example, FEMA may choose to estimate only the effects of a policy that provides some or all previous recipients of PFS rates with assistance amounts equal to their pre-FIRM subsidies.

In contrast, FEMA might aspire to develop a microsimulation capability for providing rapid responses to a wide array of questions over time, including questions about program design that have not yet been asked (see Table 2-1 in section entitled “Identify Policy-Relevant Questions”).

Models based on microsimulation techniques are conceptually highly attractive because they operate at the appropriate decision level (e.g., household or individual) and take into account the diverse circumstances and characteristics of the relevant population, whether it be low-income families or taxpayers, or, in FEMA’s case, NFIP policyholders and potential policyholders in areas of flood risk. Such models are able to respond to important needs of the policy process for information about the effects of very fine-grained, as well as broader, policy changes, and the effects of policy changes on the NFIP as a whole, as well as important population subgroups.

Building microsimulation models, however, which are necessarily complex to reflect the complexities of government programs and individual circumstances, requires substantial time and resources. There are recognized practices for an agency looking to develop a simulation model to address its needs for evaluating various policy options (NRC, 1991; OASPE, 2012, and the references on pp. 74-75 therein). These include

- setting clear goals and priorities;

- building capacity incrementally through time, especially as new and better data become available;

- focusing on building self-contained modules that can be readily added to or removed from the model;

- designing modules to facilitate documentation and validation and allow for enhancement over time;

- being cognizant of the need to provide for entry and exit points in the model that facilitate linkages with other models, even if at some future date;

- constructing prototypes and establishing milestones throughout the development process to help identify design flaws at an early stage;

- enabling some analysis capabilities before the entire model is completed;

- attaining model accessibility to allow for peer review and other users who are not experts;

- preparing adequate documentation on a timely basis for the model and its components; and

- conducting validation studies of the model and its components, including the assessment of uncertainty through the use of sensitivity analysis and the application of sample reuse techniques to measure variance.
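The last practice above mentions sample reuse techniques for measuring variance. One common technique of this kind is the bootstrap, sketched here for the share of cost-burdened policyholders in a sample. The choice of the bootstrap, the number of replicates, and the sample values are illustrative assumptions, not a method prescribed by the cited sources.

```python
import random
import statistics

def bootstrap_se(values, estimator, replicates=500, seed=0):
    """Bootstrap standard error: resample the records with replacement,
    recompute the estimate each time, and take the spread of the estimates."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    n = len(values)
    estimates = [
        estimator([values[rng.randrange(n)] for _ in range(n)])
        for _ in range(replicates)
    ]
    return statistics.stdev(estimates)

# Hypothetical sample: 1 = cost burdened, 0 = not cost burdened.
burdened = [1, 0, 0, 1, 1, 0, 0, 0, 1, 0]

share = statistics.mean(burdened)
se = bootstrap_se(burdened, statistics.mean)
print(f"share burdened = {share:.2f}, bootstrap SE = {se:.3f}")
```

In a full model the estimator would rerun the relevant simulation modules on each resampled database, so that the reported uncertainty reflects sampling variability in the simulated outcome rather than in a single input variable.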

Following these recognized practices will allow for the development of a well-documented and modularized microsimulation model. In adopting these practices, an agency is required to make clear the assumptions (tested and untested) in the model, the strengths and weaknesses of model components and the underlying data, and the relationships among model components. Such transparency helps develop a short-term and longer-term agenda for research and data acquisition to improve the model, which, in turn, improves the estimates of policy outcomes it provides.

MICROSIMULATION MODELING FOR THE NFIP

The purpose of this section is to illustrate how the evaluation elements might be implemented in a FEMA evaluation of affordability policy options. It is illustrative and not meant to be a recommendation for how

BOX 2-4

North Carolina Proof-of-Concept Pilot Analysis

The North Carolina Floodplain Mapping Program (NCFMP) prepared a report (see note a) that served as a reference for the Committee on the Affordability of National Flood Insurance Premiums. NCFMP conducted analyses, as instructed by the committee, relevant to the committee’s charge and the analyses were considered by the committee in writing Report 2.

The NCFMP work focused on the analytical challenges, data needs, and related data acquisition issues for conducting a national-level flood insurance affordability assessment. NCFMP was selected to work with the committee in this “proof-of-concept” pilot analysis because of the extensive and sophisticated databases and analytical models developed by the state to assess flood risk. By many measures, the NCFMP databases and related methods of analysis are the most advanced in the United States, although they still fall short in some respects of what might be needed eventually by FEMA.

The NCFMP’s report demonstrated an analytical approach and identified data requirements for evaluating different NFIP policy scenarios, with specific attention to policies that would limit premium increases (premium assistance or mitigation grants) for some subset of policyholders. As a part of the study process, this committee provided the scope of work that resulted in the NCFMP report. The NCFMP report, however, is not a committee product but rather a report that the committee references throughout Report 2.

The main objectives of the pilot analyses were to

- test the conceptual logic and computational methods for an affordability analysis and

- identify data needs to perform similar analysis at a nationwide scale.

To accomplish these objectives, NCFMP had three tasks:

- Compile and integrate relevant data.

- Establish a baseline flood insurance portfolio for North Carolina.

- Evaluate alternative NFIP policy options and their impact on affordability.

NCFMP has acquired and developed advanced datasets and tools to support its ongoing and planned initiatives on floodplain mapping. Examples of specialized datasets include building footprints, which have detailed physical building and property information, floodplain mapping, and digital flood elevation data. Other examples include methods for calculating building-level flood damages, mitigation costs, and flood insurance premiums. NCFMP uses these advanced datasets and tools to support management of all regulatory and nonregulatory flood hazards and other risk management data in a database-derived, digital display environment.

These activities conducted by the NCFMP demonstrate that it is possible to acquire additional data for policyholders (beyond the data that FEMA has available) and data for properties that are not insured. The proof-of-concept pilot analysis further demonstrated that it is possible to use such data to simulate the replacement of pre-FIRM subsidized and grandfathered premiums by NFIP risk-based premiums and the targeting and costs of affordability assistance based on a very simple (but not recommended) measure of cost burden.

__________________

a The NCFMP (2015) report is publicly available at http://dels.nas.edu/resources/staticassets/wstb/miscellaneous/wstb-cp.pdf.

FEMA might conduct a particular analysis. In preparing these sections of the chapter, the committee provided specific illustrations for each of the six elements of the planning process that are pertinent to applying a microsimulation approach to the NFIP. The particular illustrations used are based on the committee’s experience in preparing both Report 1 and Report 2, along with insights gained from the North Carolina proof-of-concept pilot analysis (NCFMP, 2015; Box 2-4). As FEMA begins to implement its own analysis, it will have to define the relevant questions, outcomes and metrics to measure outcomes, and alternative policy options to evaluate.

Identify Policy-Relevant Questions

A common charge to federal agencies from executive and legislative policy makers is to provide quantitative answers to questions about the likely future effects of one or more policy options in a “what-if” scenario. For example, if a policy changes, what is an agency’s best estimate of the effects compared with maintaining the current policy 1, 5, and 10 years into the future?[6] To make such evaluations requires defining the objectives by which each option will be evaluated. This is an exercise that begins with the first element in the planning process (identifying problems and opportunities) but can be adjusted and clarified throughout the process. Any such quantitative analysis requires the analyst to understand the policy-relevant questions.

One approach is to identify evaluation objectives that are explicit or implicit in the questions being asked by decisionmakers. For the NFIP, this refers to the leading question posed by BW 2012 Section 100236, which is generally how to provide assistance (or make other reforms) that reduce the cost burden of an NFIP policy on owners of properties in flood-prone areas, as the legislation moves the NFIP toward risk-based pricing. This general concern can lead to a large number of more detailed questions as shown in Table 2-1. Table 2-1 includes illustrative examples of the questions posed during the course of the study by guest speakers, iterative discussions with FEMA, and other sources such as studies and reports from the Government Accountability Office (GAO) and the Congressional Research Service (see Appendix B). Questions can be either descriptive or of the “if-then” type. Such questions can give some guidance as to what kind of analyses might be needed to answer the questions being asked.

To conduct the required affordability analysis, FEMA will need to narrow the many possible descriptive and “if-then” questions to a more limited number of questions that can focus the analysis of alternative policy options and be used to define metrics for measuring the most critical program outcomes. These metrics will then serve as the basis for estimating the effects of each alternative option relative to the baseline and for comparing the alternatives against each other according to the policy objectives embodied in the metrics. With reference to Table 2-1, and keeping the provisions of BW 2012 (including Section 100236) in mind, one possible set of questions following from Report 1 might be the following:

- Does an assistance program reduce the number of policyholders who are cost burdened and the degree to which they are cost burdened (relative to BW 2012)? (Report 1, Chapter 6)

- Is an assistance program consistent with actuarial pricing principles, including NFIP revenues that cover claims and expenses through time, and does it provide transparency of grandfathering, discounts, and subsidies, and minimize cross subsidies? (Report 1, Chapters 2 and 3)

- What is the effect of an assistance program on takeup rates, including compliance with mandatory purchase and securing increased purchase by property owners who currently do not choose to purchase insurance? (Report 1, Chapters 2 and 4)

- What are the costs to the federal treasury of an assistance program? (Report 1, Chapter 6)[7]

__________________

6 This also means that objectives, or at least the emphasis on particular objectives, may vary over time.

TABLE 2-1 Examples of Affordability Specific Questions

| Descriptive Questions | Characteristics of the flood insurance program, as it existed before BW 2012, or as it is expected to exist after implementing BW 2012. |
| If-Then Questions | The effects of an alternative policy option relative to the baseline of BW 2012. (These effects may or may not include behavioral responses, depending on the analytical capabilities of the microsimulation model.) |

For purposes of evaluating alternative policy options, questions such as these can be used to define metrics, so that the effect of a policy option (relative to the baseline) on each metric can be simulated. The metrics chosen should be logically connected to the policy questions and objectives and easily understood by decision makers and stakeholders. As an example of such an approach, NCFMP used the questions and associated metrics shown in Table 2-2 to structure and conduct the proof-of-concept pilot analysis.

This table does not illustrate a complete or ideal set of outcome metrics; it is presented only as an illustration from the proof-of-concept analysis. In fact, data gaps, which are discussed in detail in Chapter 3, may limit what outcomes can be predicted. For example, one of the long-standing concerns of Congress has been the takeup rate of flood insurance and how that takeup rate might be affected by higher premiums. No simulation to answer that question was done in the proof-of-concept analysis, because there was no behavioral response equation that could be used to predict the effect of higher premiums on takeup rate. Another challenge may be defining a measurable metric for a qualitative concern (see Box 2-5).

Specify Future Baseline Conditions

An analysis of flood insurance affordability policy options must define a baseline against which the effects of alternative affordability policy options can be evaluated. The BW 2012, Section 100236, language suggests that the baseline is a situation where BW 2012 is in full effect. Adopting this baseline would require specifying what full implementation means in terms of rates for various classes of policyholders. This may not always be clear. For example, BW 2012 directs FEMA to evaluate the purchase of private reinsurance. The outcome of such an evaluation is not yet certain. As a result, one possible future condition is that there is a new load on all flood insurance premiums for reinsurance. Another is that the decision is for FEMA not to purchase reinsurance, but to continue to borrow from the federal treasury when necessary. This uncertainty by itself suggests that two different baselines are possible.

__________________

7 These questions are related to (but do not replace) the six decision questions that policy makers must consider when designing affordability policy options (Report 1, Chapter 6). Potential answers to some of those six questions will undoubtedly be informed by descriptive and simulation analyses (showing, for example, the numbers and characteristics of policyholders who are cost burdened under BW 2012).

TABLE 2-2 Illustrative Evaluation Questions and Associated Metrics

| Evaluation Question | Metric Description |
| How cost burdened are policyholders? | Number and percentage of all policyholders who will be cost burdened based on the definition chosen by policymakers.[a] |
| | Number and percentage of policyholders who previously paid pre-FIRM subsidized rates who will be cost burdened. |
| | Number and percentage of current policyholders who would lose grandfathered rates who will become cost burdened. |
| | Number of property owners in 500-year floodplains, who do not have a policy, and for whom purchase of an NFIP risk-based policy will create a cost burden. |
| Does NFIP pricing follow actuarial principles regarding net revenues and cross subsidies? | Expected NFIP premiums minus the sum of expected claims and expenses. Percent of all revenue from explicit across-the-board loadings to compensate for forgone revenue. |
| What is the effect on federal treasury spending? | Expenditures made for a premium assistance program. Expected spending for post-flood disaster aid. |

a In the North Carolina proof-of-concept analysis, cost burden was defined as when premiums exceeded 1 percent of flood insurance coverage. This measure was used because data to calculate this measure were readily available. This cost-burden measure was also discussed in Report 1 since the idea that premiums exceeding 1 percent of coverage are excessive was suggested in HFIAA 2014. The committee does not endorse this as a measure of cost burden. For further discussion, see Chapter 4 of this current report.

There are other future uncertainties independent of BW 2012 that can affect baseline conditions.[8] For instance, the baseline takeup rate for flood insurance policies will be influenced by many factors: the amount of marketing of insurance policies by FEMA, general economic conditions, the occurrence of storms, and so forth can all affect takeup rates. Further, there is increasing interest in the private sector becoming more involved in underwriting flood insurance. New technologies and a better understanding of flood risks may have increased that interest (GAO, 2014a). Other examples of different baseline conditions include projections of changes in population density in flood-prone areas, price and extent of private-sector flood insurance offerings, and effects of changes in flood risk related to climate change.

__________________

8 The number of drivers of future conditions may be dictated by the time horizon or the nature of the questions. For example, illustrating sea-level and climate change effects 30 years into the future is a different projection requirement from projecting private flood insurance policies in force in the next 5 years.

BOX 2-5

Illustrating the Challenge of Defining a Metric: Community Resiliency

Resiliency has been defined as “the capacity of a system to absorb change and disturbances, and still retain its basic structure and function—its identity” (Walker and Salt, 2006).

A resilient community is one which has the capacity to “absorb change and disturbances,” returning quickly to full function. One test of community resiliency is its ability to recover from a major flood. A concern that may be expressed by policy makers is what impact BW 2012 would have on a community’s ability to recover from a flood.

The disruptions most relevant to NFIP flood insurance are direct damages to property and its contents. Following a flood, property owners bear the responsibility for repair or replacement of damaged buildings. Residential structures may be damaged or destroyed, relocating population and disrupting community cohesion. In some cases, property owners may have the financial resources (either available funds or borrowing capacity) to move quickly to restore properties to pre-flood conditions. However, many if not most property owners are not in a position to finance major, unanticipated repairs, let alone complete reconstruction.

The other means of dealing with flood damage are the following:

- Abandon the property, either in full or in part.

- Use post-flood disaster assistance (in the form of grants or low-interest loans) and other funds as needed to make needed repairs or replacements.

- In the case of properties covered by flood insurance, use insurance proceeds and other funds as needed to make needed repairs or replacements.

The first option is, of course, the antithesis of resiliency. If this is the result for some number of properties throughout a community, then the structure and the function of the community are lost or, at best, seriously damaged.

Although some states can provide a limited amount of post-flood assistance, the major programs of this kind are operated by the federal government, principally FEMA, the Department of Housing and Urban Development (such as the community development block grant [CDBG] program), and the Small Business Administration (SBA). A 2012 paper (Kousky and Shabman, 2012) analyzes the aid households can expect to receive from these programs and finds that it is much less than many may anticipate. Federal assistance is only available in the case of a federal disaster declaration, which does not occur for all floods. FEMA grants to individuals through the Individual Assistance program are also only authorized in a subset of declarations; GAO (2012) found that, for declarations issued between 2004 and 2011, only 45 percent authorized Individual Assistance. Furthermore, the amount of this assistance is quite limited: it is capped at a bit more than $30,000 per property (this number is indexed to inflation), and the average payout is only $4,000 (McCarthy, 2010). Low-interest loans from the SBA may be available; these must be repaid, although they can help provide liquidity to homeowners. Individuals may receive grants through their state or local government funded by a CDBG, but that is highly uncertain. Local governments have enormous flexibility in how they use these funds and only in a few instances have they been used to make large grants to households simply for repair. Kousky and Shabman (2012) also noted federal disaster aid might not be disbursed for many months after the event.[a]

For any significant damage, it would appear that the property owner must bear the bulk of the financial responsibility. Clearly some may be unable to do so. Insurance can thus be resiliency enhancing in that it can make the funds needed for rebuilding available to disaster victims. In summary, reliance on disaster aid seems likely to produce only partial recovery and that only after some delay. For both reasons, some community resiliency is lost.

In a policy simulation, the best metric for representing community resilience may simply be the takeup rate (expressed as a percent of properties) of flood insurance. It is a metric that can be affected by a policy change and is measurable, at least in principle. And it has a logical connection to the basic concept to be represented. Communities with high takeup rates can be expected to be more resilient than those that rely on self-funding and government assistance. High takeup rates will be associated with not only more complete recovery of community structure and function, but also more timely recovery.

__________________

a There are also several programs post-disaster to fund investments in hazard mitigation, such as the Hazard Mitigation Grant Program, the Increased Cost of Compliance coverage of the NFIP, and at times CDBGs. This discussion, however, was about funding simply repair, and not investments in mitigation.

The baseline can be defined on the assumption that fully implemented BW 2012 does not trigger behavioral responses by floodplain property owners and occupants. This may not be the most likely outcome through time, however. Most obviously, increasing premiums might change the number of policies in force. If this possibility is to be included in the baseline, then a prediction equation will be needed to relate policies in force to changes in the cost of premiums attributable to BW 2012 (see Box 2-6).

BOX 2-6

Premiums, Insurance Purchase, and Mitigation

The call for an affordability framework in HFIAA 2014 reflected a congressional interest in whether higher premiums might result in reduced purchase of flood insurance[a] and, conversely, whether a premium assistance program might maintain purchase by those who might drop coverage or encourage purchase by those who never had coverage before. BW 2012, as well as HFIAA 2014, reflected congressional intent that FEMA encourage property owners to implement mitigation actions, including but not limited to structure elevation, that FEMA would credit toward premium reductions.

The cost of flood insurance is the premium paid when the policy is purchased. The benefit is the promise of compensation in the form of a claims payment, bounded by the chosen deductible and coverage amount. Each property owner must decide how much insurance coverage to purchase or maintain so that the perceived expected benefit justifies the cost. In many cases, the outcome of that decision is to purchase no insurance at all.

Many factors, other than premiums, affect the insurance purchase decision. Benefits are evaluated by property owners based on their estimates of the probability of flooding and the estimated loss should flooding occur. Those estimates may differ substantially from the FEMA-estimated probability and loss. Other factors affecting purchase may include

- expectations for disaster aid,

- income available to pay the premium in consideration of other expenses,

- mandatory purchase requirement, and

- risk attitudes.

These factors all need to be considered when trying to isolate the effect of premiums on the insurance purchase decision. Despite the interest in the effect of premiums on takeup, a review of the literature in Report 1, Chapter 4, concluded that any prediction of the effect of premium levels on the decision to buy insurance would be accompanied by substantial uncertainty. For this reason, the North Carolina report did not simulate changes in takeup rate or in mitigation adoption due to changes in premiums.

However, absent a reliable prediction model, an alternative is to have the baseline assume that BW 2012 will not affect policies in force and then recognize that possibility as part of a qualitative discussion of the analytical results. The choice of future baseline conditions is a judgment for the analysts and may play an important role in analyzing alternative policy options for addressing affordability issues.9

__________________

9 Given that projections will be highly speculative, FEMA analysts may want to consider more than one baseline, with a “base baseline” being no change and one or more alternative baselines allowing for changes (e.g., in the takeup rate).

The literature reviewed suggests that premium price elasticity of demand for insurance—the sensitivity of the quantity demanded to changes in the price—is quite inelastic. This means that a 1 percent increase in price will bring about a reduction in policies in force of less than 1 percent, perhaps significantly less than one-half percent. This conclusion, however, cannot be made with confidence, so for the purposes of microsimulation, further review of the literature, or perhaps new empirical studies, may be needed on the decision processes.

There are additional complicating factors. For one, the actual price elasticity may differ from one location to another. For example, policyholders in coastal high-risk zones such as areas in the special flood hazard area that are subject to additional hazards due to storm induced wave action may be less sensitive to changes in premium levels, due to a greater sense of risk. Policyholders in multiunit buildings may be more sensitive, due to a lower perception of risk. In addition, some of the price changes that may be considered are larger than what has been observed in the past. In these cases, it is unclear whether the demand response is reasonably predictable using past data.

Now consider the effect of premium levels on the decision to implement mitigation. All mitigation measures present the same problem: how to justify a capital investment at the present time on the basis of insurance premium reductions expected in the future. This is a benefit-cost problem, although the property owner may not see it as such. A way to proceed is to identify any mitigation measures likely to be feasible for a particular structure, determine the upfront cost and any continuing maintenance cost for each identified measure, and then obtain an insurance premium quotation that may reflect a lower price due to the reduced expected flood losses from undertaking the mitigation measure.
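The benefit-cost comparison described above can be sketched as a net present value calculation. This is only an illustration under stated assumptions: the function name, the constant annual premium saving, the fixed planning horizon, and all dollar figures are hypothetical and do not come from the NCFMP report or from FEMA rating tables.

```python
def mitigation_npv(upfront_cost, annual_maintenance,
                   premium_before, premium_after,
                   years, discount_rate):
    """Net present value of a mitigation measure to a property owner.

    Assumes (hypothetically) that the premium saving and maintenance cost
    are constant each year and are received at the end of each year.
    """
    annual_net_saving = (premium_before - premium_after) - annual_maintenance
    present_value = sum(
        annual_net_saving / (1.0 + discount_rate) ** t
        for t in range(1, years + 1)
    )
    return present_value - upfront_cost


# Hypothetical elevation project: $10,000 upfront, $100 per year upkeep,
# premium quoted at $3,000 before and $1,000 after mitigation.
undiscounted = mitigation_npv(10_000, 100, 3_000, 1_000, years=10, discount_rate=0.0)
discounted = mitigation_npv(10_000, 100, 3_000, 1_000, years=10, discount_rate=0.05)
print(undiscounted, discounted)
```

A positive result suggests the premium reductions justify the investment under the assumed horizon and discount rate; a property owner with a shorter horizon or a higher discount rate may reach the opposite conclusion.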

If insurance purchase and adoption of mitigation are matters of policy concern, then the microsimulation model will need behavioral response modules built on assumed premium price elasticity estimates. The elasticity estimates used under these conditions would be based on the best available information, and the analysis would report the sensitivity of the simulation results to different elasticity assumptions.
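A sensitivity analysis of this kind might look like the following sketch. It uses a simple constant-elasticity approximation (percent change in policies equals elasticity times percent change in price). The elasticity values and the 20 percent price increase are assumptions chosen for illustration only; the starting count is the 2014 policies-in-force figure cited in this box. For large price changes this linear approximation is crude.

```python
def projected_policies(baseline_policies, pct_price_change, elasticity):
    """Project policies in force after a price change.

    Constant-elasticity approximation:
        % change in quantity = elasticity * % change in price
    """
    pct_quantity_change = elasticity * pct_price_change
    return baseline_policies * (1.0 + pct_quantity_change / 100.0)


BASELINE_POLICIES = 5_350_887       # policies in force in 2014
ASSUMED_PRICE_INCREASE_PCT = 20.0   # hypothetical premium increase

# Report results across a range of assumed (inelastic) elasticities.
for elasticity in (-0.1, -0.3, -0.5):
    estimate = projected_policies(BASELINE_POLICIES,
                                  ASSUMED_PRICE_INCREASE_PCT,
                                  elasticity)
    print(f"elasticity {elasticity:+.1f}: about {estimate:,.0f} policies")
```

Reporting the full range, rather than a single point estimate, keeps the uncertainty in the elasticity assumption visible to decision makers.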

__________________

a Policies in force across the nation were 5,646,144 in 2011; 5,620,017 in 2012; 5,568,642 in 2013; and 5,350,887 in 2014. Data source available at http://www.fema.gov/total-policies-force-calendar-year (accessed on October 29, 2015).

Formulate Alternative Policy Options

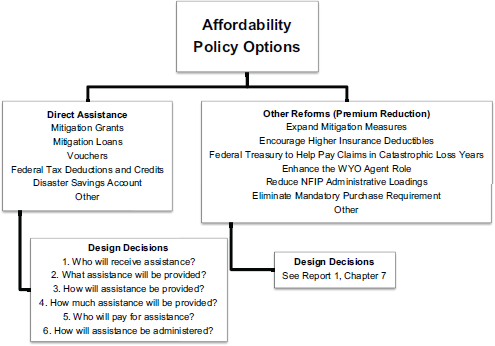

Report 1 (Chapter 6) presented six design decisions (questions) and associated options for designing an assistance program within an affordability framework. These questions are reproduced in Figure 2-1 below. In addition, Report 1 (Chapter 7) described options for providing direct assistance to cost-burdened policyholders, as well as policy options that could reduce premiums for all policyholders.

Affordability policy options can be one or multiple combinations of direct assistance and other reforms (premium reductions) (Figure 2-1). If a

FIGURE 2-1 Affordability Policy Options

SOURCE: Adapted from NRC, 2015a (Report 1, Chapters 6 and 7).

direct assistance program is included, then answers to each of the six design decision questions must be provided to define the specific features of the assistance program. As one example, an alternative option might be limited to allowing flood insurance premiums to be included as a federal income tax deduction, based on specified conditions of the taxpayer. As another example, a cash assistance program (whether for premiums or mitigation) combined with NFIP risk-based premiums will need to specify conditions that can be used to define who is eligible and the amount of assistance received. If other policy reforms are to be included, then their provisions must be completely specified. For example, if the federal treasury is to pay all claims that exceed a specified level in a given year, then that level needs to be specified.

Numerous affordability policy options can be identified early in the evaluation process and then become more refined in their design as the analysis proceeds; additional options may be introduced at any time. Analysis may show that some options are incompatible and cannot be combined in a single affordability policy option.

Conduct Simulations

Microsimulation as an analytical approach can predict how a given policy option might affect an evaluation metric relative to a baseline condition. Recall that the modifier “micro” in microsimulation means that the effects of an alternative policy option are first estimated at the level of the property owner (and the occupant, if not the owner) and then aggregated. If microsimulation is applied to an analysis of NFIP affordability policy options, the database must include data for individual properties and their owners. The properties can be a sample that represents the larger population of interest, up to and including every property in the population. It is understood that data may be sparse at first; that is, the information about the characteristics of properties and owners may be limited. As such, it might not be possible to answer some questions at all, while answers to some other questions are incomplete or otherwise limited.
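The estimate-then-aggregate logic can be sketched as follows. This is a minimal illustration, not the NCFMP implementation: the record fields, the sampling weights, and the dollar values are all hypothetical. Each record carries a weight giving the number of properties in the population that it represents, so a sample can stand in for the full population.

```python
from dataclasses import dataclass


@dataclass
class PropertyRecord:
    property_id: str
    weight: float            # population properties represented by this record
    baseline_premium: float  # annual premium under the baseline, dollars
    option_premium: float    # annual premium under the policy option, dollars


def aggregate_premium_change(records):
    """Estimate the population-wide change in annual premiums paid."""
    total = 0.0
    for rec in records:
        # Micro step: effect of the option on one property.
        change = rec.option_premium - rec.baseline_premium
        # Macro step: scale by the sampling weight and accumulate.
        total += rec.weight * change
    return total


# Hypothetical three-record sample.
sample = [
    PropertyRecord("A", 100.0, 2400.0, 1800.0),
    PropertyRecord("B", 250.0, 900.0, 900.0),
    PropertyRecord("C", 50.0, 3100.0, 2000.0),
]
print(aggregate_premium_change(sample))  # -115000.0
```

With a weight of 1.0 on every record, the same function applies when the database covers every property in the population.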

The effect of data limitations is demonstrated by the experience of the North Carolina proof-of-concept analysis. In that work the question posed was, “How many policyholders will be cost burdened by higher rates?” For any individual property owner, the answer requires a definition of cost burden (see Report 1, Chapter 6). Only then is it possible to develop a description of whether a policyholder is faced with an unaffordable premium increase or not. In the North Carolina study, the available data used to define cost burden were values of the ratio of flood insurance premium to insurance coverage expressed as a percent, and values greater than 1 percent were defined as cost burdensome. This cost-burden measure was chosen because policyholder income and annual housing costs were not available; however, the committee does not recommend this as a measure of cost burden. Although the analysis was not able to use an income-referenced measure of cost burden, the effort to answer this question focuses attention on this most important data gap. One result was to stimulate discussion among the committee on use of assessed property values as a reference for measuring ability to pay higher premiums, in recognition that such data are available in all communities. This possibility is discussed in detail in Chapter 4. Options for filling data gaps are described in Chapter 3.

Generally, the North Carolina analysis had to predict metrics (Table 2-2) for the baseline and then predict the metrics with specified policy options in effect. An example can illustrate. Two of the questions addressed in the North Carolina report were, “How many policyholders will be cost burdened by higher rates?” and “What would be the cost to the federal treasury of a premium assistance program?” Analyzing these questions required first narrowing their focus to a particular subpopulation of policyholders. In this application, the focus was narrowed to policyholders who at the time BW 2012 first went into effect would lose their eligibility for a pre-FIRM subsidized rate or grandfathered rate. This focus then meant that a descriptive tabulation was required to estimate how many policies were grandfathered and how many were paying pre-FIRM subsidized rates prior to BW 2012. In the North Carolina analysis, pre-FIRM subsidized policies were identified in the NFIP database, but an algorithm had to be developed for tabulating which policies were grandfathered.

Then an “if-then” calculation was made for those affected policyholders. Each policyholder’s coverage selections reported in the NFIP database, as well as property characteristics (flood zone, first-floor elevation), were data inputs to the appropriate NFIP rating tables. The result was an estimate of the NFIP risk-based premium for that property. Subtracting the estimated payment made prior to BW 2012[10] from the new premium estimate gave the increased payment to the NFIP for each policyholder. Summing over all affected policies resulted in an estimate of the new premium revenues to the NFIP from BW 2012, specifically from these policyholders. However, which of the policyholders would be cost burdened by the higher rates? Answering this required defining a measure of cost burden (see Chapter 4, section on The Ability to Pay Flood Insurance Premiums) and then tabulating the number of cost-burdened policyholders with BW 2012.

Next, an affordability policy option had to be described. For ease of simulation, and given the available data, that policy was to restore pre-FIRM subsidized (PFS) rates and grandfathered rates to eligible policyholders; any forgone revenue to the NFIP from that restoration would be paid to the NFIP from the federal treasury. Eligibility was defined by two criteria: (1) having a PFS or grandfathered rate prior to BW 2012 and (2) being cost burdened by the NFIP risk-based rate under BW 2012. Based on the predicted NFIP risk-based rate (as the baseline) and the predicted rate paid prior to BW 2012 (the alternative policy option of restoring pre-FIRM subsidies and grandfathering for those eligible), estimates would need to be made of how many policyholders would receive assistance (that is, have their rate discounted) and how much revenue would be provided by the treasury to the NFIP.
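The sequence of calculations described above (premium increase per policy, new NFIP revenue, cost-burden tabulation, and treasury payment under the restoration option) can be sketched in a few lines. This is a hedged illustration only: the record layout, function names, and sample values are hypothetical, the premiums are taken as given inputs rather than derived from NFIP rating tables, and the 1 percent threshold is the pilot's measure, which the committee does not endorse. The final count of policyholders still cost burdened with the option in effect is an added illustrative metric.

```python
from dataclasses import dataclass

# Pilot measure: premium above 1 percent of coverage (not endorsed by the committee).
COST_BURDEN_RATIO = 0.01


@dataclass
class Policy:
    policy_id: str
    coverage: float            # total flood insurance coverage, dollars
    pre_bw_premium: float      # estimated premium paid before BW 2012
    risk_based_premium: float  # estimated NFIP risk-based premium under BW 2012
    had_pfs_or_grandfathered: bool


def is_cost_burdened(premium, coverage):
    return premium > COST_BURDEN_RATIO * coverage


def simulate_restoration(policies):
    """Compare the BW 2012 baseline with the rate-restoration option."""
    result = {
        "new_nfip_revenue": 0.0,         # baseline: added revenue from BW 2012
        "cost_burdened_baseline": 0,     # baseline: affected and cost burdened
        "policies_assisted": 0,          # option: policyholders meeting both criteria
        "treasury_payment": 0.0,         # option: forgone revenue paid by treasury
        "cost_burdened_with_option": 0,  # option: still burdened at restored rate
    }
    for p in policies:
        if not p.had_pfs_or_grandfathered:  # criterion 1
            continue
        increase = p.risk_based_premium - p.pre_bw_premium
        result["new_nfip_revenue"] += increase
        if is_cost_burdened(p.risk_based_premium, p.coverage):  # criterion 2
            result["cost_burdened_baseline"] += 1
            result["policies_assisted"] += 1
            result["treasury_payment"] += increase
            if is_cost_burdened(p.pre_bw_premium, p.coverage):
                result["cost_burdened_with_option"] += 1
    return result


# Hypothetical portfolio of four policies.
portfolio = [
    Policy("P1", 200_000, 1_200, 3_000, True),
    Policy("P2", 250_000, 900, 1_500, True),
    Policy("P3", 100_000, 1_500, 2_600, True),
    Policy("P4", 150_000, 800, 800, False),
]
print(simulate_restoration(portfolio))
```

In this made-up portfolio, two of the three affected policyholders meet both criteria, and one of them remains above the threshold even at the restored rate, which shows why the choice of cost-burden measure matters for how an option is judged.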

Compare and Display Effects of Alternative Policy Options

The estimated effects of an alternative policy option (e.g., an affordability assistance program) are changes in the chosen outcome metrics relative to the specified baseline. The North Carolina study identified, defined, and described a baseline with removal of pre-FIRM subsidized and grandfathered rates under BW 2012 and illustrative alternative affordability policy options (Table 2-3). The analysis was constrained by available data, time for completing the study, and models available to predict metrics that represent selected outcomes under the baseline and alternative policy options. The policy options described are a small subset of the numerous possibilities suggested previously in Figure 2-1.

__________________

10 Although the NFIP policy database included premiums paid, the estimate of pre–BW 2012 premiums based on North Carolina’s own data and the value recorded in the NFIP database frequently disagreed, often substantially. So estimates based on North Carolina’s own data were used for premiums both without and with BW 2012 in effect (see Chapter 3 for further discussion).

The NCFMP databases and models were used to simulate the baseline and alternative policy options. Output results were displayed in tabular form to compare the alternative policy options with the baseline. Three examples of model output results are shown for illustrative purposes below.

EXAMPLE 1: How cost burdened are policyholders by their flood insurance premiums?

| Severity of Cost Burden | Baseline (Number and Percent of Policies) | Alternative Policy Option (Number and Percent of Policies) |
| Not cost burdened | | |
| Cost burdened | | |
| Severely cost burdened | | |
| Total | | |

EXAMPLE 2: Does NFIP pricing follow actuarial principles regarding net revenues and cross-subsidization?

| | Baseline | Alternative Policy Option |
| NFIP net revenue | | |
| Percent of revenues from cross subsidies | | |

EXAMPLE 3: How does an alternative policy option affect federal spending?

| | Baseline | Alternative Policy Option |
| Annual payment to NFIP for forgone revenue | | |
| Annual total payment to eligible policyholders for premium assistance | | |
| Annual total payment to eligible policyholders for mitigation assistance | | |

TABLE 2-3 Illustrations of Baseline Condition and Alternative Policy Options

| Condition or Option | Summary | Description |
| --- | --- | --- |
| Baseline Condition | Immediate NFIP risk-based rates for selected policyholders | All policyholders who were paying pre-FIRM subsidized or grandfathered premiums will now pay NFIP risk-based premiums. The preferred risk policy and specific rate policy rates are unchanged. There is no change in the number of policies in force as a result of BW 2012 implementation. Cost burden was defined for illustrative purposes as premiums exceeding 1 percent of flood insurance coverage. The committee does not endorse this as a measure of cost burden. |
| Alternative Policy Option | Premium assistance so that policyholders pay what was paid before BW 2012 | Provide premium assistance by reducing premiums for those policyholders who meet two eligibility criteria. For policyholders meeting the two eligibility criteria, premiums are restored to the amounts paid prior to BW 2012 (that is, the pre-FIRM subsidized or grandfathered amounts). |
| Alternative Policy Option | Premium assistance so that policyholders pay no more than 1 percent of coverage | Provide premium assistance by reducing premiums for those policyholders who meet two eligibility criteria. For policyholders meeting the two eligibility criteria, premiums are capped at 1 percent of total flood insurance coverage. |
| Alternative Policy Option | Premium or mitigation assistance | Provide premium assistance or a mitigation assistance grant to those policyholders who meet two eligibility criteria. Premium assistance is a payment equal to the difference between the NFIP risk-based rate and the previous pre-FIRM subsidized or grandfathered rate, if a policyholder is eligible. The mitigation assistance grant is the amount required to elevate the property to base flood elevation plus 2 feet, for those property owners who meet the eligibility criteria. |
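The first two alternative policy options in Table 2-3 can be sketched as simple premium rules applied to each policy record, with the difference from the risk-based premium summed as forgone premium revenue. This is only an illustration under stated assumptions: eligibility is taken as a precomputed flag named `eligible` (hypothetical), all field and function names are hypothetical, and the three records are synthetic. The mitigation assistance option is omitted because the cost of elevating a property is not specified in the text.

```python
def restore_pre_bw_premium(policy):
    """Option: eligible policyholders pay the amount paid before BW 2012."""
    if policy["eligible"]:
        return policy["pre_bw_premium"]
    return policy["risk_based_premium"]


def cap_at_percent_of_coverage(policy, cap_percent=1):
    """Option: eligible policyholders pay no more than cap_percent of coverage."""
    if policy["eligible"]:
        cap = policy["coverage"] * cap_percent / 100
        return min(policy["risk_based_premium"], cap)
    return policy["risk_based_premium"]


def forgone_revenue(policies, option):
    """Sum of risk-based premium minus assisted premium across all policies."""
    return sum(p["risk_based_premium"] - option(p) for p in policies)


# Synthetic records for illustration only; "eligible" stands in for the
# two eligibility criteria, which are assumed to be evaluated elsewhere.
policies = [
    {"eligible": True, "coverage": 100_000,
     "risk_based_premium": 3_000, "pre_bw_premium": 1_200},
    {"eligible": False, "coverage": 200_000,
     "risk_based_premium": 4_000, "pre_bw_premium": 2_000},
    {"eligible": True, "coverage": 250_000,
     "risk_based_premium": 2_000, "pre_bw_premium": 1_500},
]

print("Restore pre-BW 2012 premiums:",
      forgone_revenue(policies, restore_pre_bw_premium))
print("Cap at 1 percent of coverage:",
      forgone_revenue(policies, cap_at_percent_of_coverage))
```

Writing each option as a function from a policy record to an assisted premium keeps the options interchangeable, so the same tabulations can be rerun for the baseline and for each alternative.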

FEMA was directed by Congress to conduct a study on how BW 2012 would affect the affordability of flood insurance premiums. Currently, FEMA does not have a modeling approach in place that can answer the kinds of questions that follow from BW 2012. The most promising way forward is to initiate a process for building modeling capacity over time.

Finding 2.1. FEMA’s capability to evaluate affordability policy options is very limited but can be substantially advanced by embracing a microsimulation modeling approach and building the model incrementally through time. This would begin with conceptual microsimulation model design and the writing of computational algorithms for the self-contained modules, as necessary data are identified and data gaps filled.

Finding 2.2. Conducting the initial affordability analysis and building longer-term capacity following a six-element or similarly structured planning and evaluation process can focus the analysis activities on key questions, aid in the identification of the most policy-relevant evaluation outcomes, ensure that policy options and outcome metrics are described in ways that are amenable to empirical representation in a microsimulation model, identify modeling and data needs as well as gaps, and, as a result, expedite the execution and enhance the quality of the initial policy analysis and continuing development of analytical capabilities.
