3

Promising Practices and Ongoing Challenges

Course-based research remains a relatively new practice when used at scale to reach large numbers of students. But examples of course-based research have existed for many years, and several large-scale projects have been developed recently, each of which has provided valuable lessons from which to derive effective practices. (See the boxes throughout this report for details on a dozen such projects across the spectrum of STEM fields.) Course-based research also can build on a rapidly growing knowledge base in the learning sciences.

In the first panel of the convocation, four presenters examined promising practices in course-based research and some of the challenges that remain in learning about effective approaches. As continued research in the learning sciences reveals the best ways to engage undergraduates, these practices can be incorporated into new and expanding programs. Specific issues discussed included the difficulty of agreeing on how to measure student outcomes and of getting faculty to undertake assessments that go beyond self-report data (e.g., Dirks et al., 2014), the alignment of undergraduate research experiences with known learning strategies, and the use of consortia to assess impact and disseminate best practices (see Box 3-1). Several participants pointed out that generating more informative evaluations of the benefits of CREs, beyond student self-reporting, may depend on a change in policy at funding agencies to require such evaluations from the faculty who teach and study these courses with agency support.

Measuring the Outcomes of Course-Based Research

Many courses that incorporate undergraduate research are doing great things, said Marcia Linn, professor of development and cognition in the Graduate School of Education at the University of California, Berkeley. The question is how to capture best practices so as to offer design principles for people who want to start new courses.

In her own teaching, Linn had computer science students in the 1990s working on big and fundamental problems rather than on simple demonstration programs. Early on, she collaborated with computer science faculty as they implemented a “flipped” classroom, where most of the work of the course was done in a laboratory (Linn and Clancy, 1992). This required figuring out how to give the instructor credit for teaching the course, since at the time only lecturing counted as instruction. “We had to go up to the highest levels to get that changed,” she said. Using a case study approach, students were able to deal much more quickly with large and complex programs. “This case study approach is now widely used in introductory computer science,” she said. In computer science, offering lab-centric courses is now the accepted norm.

Linn pointed out that many of the outcome measures used to study the impact of research experiences are based on students’ self-reports rather than on student testing, research presentations, student notebooks, or other external evidence of effectiveness and impact (Linn et al., 2015). Self-report data are suspect, in part, because students often seek to make themselves look accomplished or to report what they think researchers want to hear. More nuanced and valid evidence of outcomes could help instructors refine courses iteratively so that they provide increased benefits to students, she said.

Course-based research experiences tend to be short—usually one semester or less—which can be a disadvantage. But many current undergraduate research experiences, particularly those using an “apprenticeship” model, serve only a selected audience, and these efforts can be difficult to scale up, Linn pointed out. Linn challenged the field to rethink undergraduate research as a continuous process, saying: “How can we promote lifelong learning of science practices by engaging students in research experiences from their first course through to their final capstone course?”

One way to make research experiences a continuous process, Linn proposed, is to start by introducing research dilemmas as case studies in introductory courses. These cases would illustrate the difficulties posed by experimental design problems and by conflicting results, leading students to develop strategies for critiquing experiments and ways to deal with unanticipated consequences. These science practices could be reinforced in subsequent laboratories and through lectures, discussions, and assessments. For example, students could be asked in laboratory assignments to design or critique an experiment.⁷ These experiences could be consolidated in capstone courses, with research projects required for all majors. “Why can’t all lab courses have some of these components?” she asked. “Why can’t we replace cookbook labs with opportunities to deal with uncertainty?”

In designing research experiences, Linn emphasized the importance of knowledge integration as a process that promotes lifelong learning (Linn and Eylon, 2011). “We want students to keep building on their understanding of experimentation.” Students’ ideas about scientific research remain fragmented, even when they learn something about the nature of research in high school. “Students get a glimmer of what it means to do science, they get a glimmer of the frustration or the excitement of a discovery, but they need to integrate those ideas and build a coherent understanding of experimentation.” For example, she quoted a student saying of course-based research, “I honestly expected it to be like my organic chemistry lab. . . . I’m used to ‘here is the procedure, now get to it.’”

Linn suggested looking at a wide range of indicators of learning in courses and projects. This could include challenging students to produce designs for experiments, reviews of primary literature, journal reflections, data collection and analysis, accounts of collaboration practices, and a final poster and/or research presentation. This would allow students to demonstrate their mastery of the scientific approach by asking and responding to questions. Longitudinal indicators of impacts could include determining who succeeds (in terms of both developing identity as a scientist and developing autonomy), tracking engagement in follow-on experiences (such as future courses, internships, or a senior project), and gathering evidence of persistence and success (such as presentations at meetings, publications, graduation, and decisions to attend graduate school or enter a STEM career). Such measures also would be useful to college and university administrators who are looking for additional metrics for program efficacy beyond self-reporting by students.

___________________

7 Immersing students in analyzing seminal research papers in science has been described by one of the invited participants to the convocation, Sally Hoskins from the City University of New York (e.g., Stevens and Hoskins, 2014; Gottesman and Hoskins, 2013; Hoskins et al., 2011; see also Chapter 5).

Linn recently has been working on the development of virtual experiments that use machine learning to score students’ responses. As an example, she demonstrated a virtual experiment on atmospheric warming in which students manipulate levels of greenhouse gases to learn more about climate change (Svihla and Linn, 2011).⁸ Students might, for example, use evidence from virtual experiments to address the question, “Ozone is causing global climate change. Do you agree or disagree?” The online materials developed in the Web-based Inquiry Science Environment (WISE) are in widespread use in precollege schools and some colleges (see WISE.Berkeley.edu). “WISE is open source and free, and it’s very easy to customize,” she said.

Linn concluded with suggestions based on what has worked at her institution. The design of research experiences for undergraduates benefits from trial and refinement, she said. New and more nuanced indicators and assessments can help the designers of course-based research identify ways to improve student experiences (e.g., Dirks et al., 2014). And comparison studies where two or more promising alternatives are implemented could yield valuable design principles such as have been established for computer science (Linn and Clancy, 1992).

Learning about the Epistemology of Science

Troy Sadler, professor of science education at the University of Missouri, and his colleagues have been studying the potential for research experiences to teach students about the epistemology of science: how science operates, the nature of scientific knowledge, and the ways in which scientific ideas build, develop, and change over time. A frequently made assumption is that research programs for students or teachers will lead them to develop more sophisticated ideas about how science operates, and research does demonstrate some learning gains of this type, he said. However, Sadler emphasized that gains of this type are seen only if there is explicit attention to teaching the nature of science. Students who engage in research have demonstrated gains in understanding of the complexity of the scientific research process, the uncertainty of research processes, the significance of validity, the role of collaboration, and the nonlinearity of scientific methods (Richmond and Kurth, 1999; Ryder and Leach, 1999; Bell et al., 2003; Schwartz et al., 2004; Varelas et al., 2005; Hunter et al., 2007; Sadler et al., 2010). These are important gains that help them prepare for more demanding research. However, little evidence exists for the learning of more complex themes, including an appreciation for the different kinds of scientific knowledge (such as the distinction between theories and laws), the tentative yet durable nature of scientific knowledge, the social dimensions of science, and the role of creativity in science (Bell et al., 2003).

___________________

8 The experiment is available at http://wise.berkeley.edu/previewproject.html?projectId=9028.

One interpretation of student learning from research experiences, said Sadler, is that learning gains represent shifts in the “practical epistemologies” of science, that is, students’ ideas about their own science experiences and the ways in which they engaged in the practices of science (Sandoval, 2005). In contrast, the ideas resistant to change correspond to students’ “formal epistemologies” of science, that is, the ideas students hold about how science works beyond their own personal experience. In addition, research experiences vary, and learning gains may be related to these variations, Sadler noted. Mediators associated with learning include the duration of the research experiences, the degree to which students focus on various aspects of epistemology, personal interest in the project, collaboration within the laboratory group, mentoring supports, explicit instructional supports, and the amount and quality of reflection associated with the experience. For example, students can have very different experiences depending on the extent to which they are able to contribute to the development of research questions and make important decisions around analytic procedures. Laboratory constraints may make such involvement difficult or impossible, but when students have greater involvement with the epistemic dimensions of research, not simply the mechanics of data collection, they are more likely to learn about the epistemology of science, Sadler said. Thus it is important that students are not just doing the monotonous tasks involved in most research, but are directly engaged in posing and addressing important questions (Burgin et al., 2012; Burgin and Sadler, 2013; see also Box 3-2).

Providing students with explicit instructional support is another important variable, Sadler commented. For example, Sadler and his colleagues recently conducted a quasi-experimental comparison of students engaged in research experiences who attended seminars focused either on both the nature and the content of science or on the content of science alone. In the former case, much of the nature of science material was drawn from activities developed for K-12 education, such as discussions of historical cases and knowledge of how students understand scientific processes. The instructors also took a structured approach to eliciting student reflection. For example, they provided students with composition books and had them write out answers to questions designed to help them think about the nature of science, after which the students were given feedback on their reflections. In both cases, students showed gains in their knowledge of how science operates, but the students with the extra instructional support demonstrated more sophisticated ideas, even in areas known to be more resistant to change, such as different forms of scientific knowledge (e.g., distinctions between theories and laws), the diversity of scientific approaches, and the social and cultural dimensions of science (Burgin and Sadler, in press).

The mediators of learning can interact among themselves, Sadler noted. For example, mentorship generally enables students to develop more sophisticated ideas about science, but mentorship can be provided by other students through laboratory collaboration, not just by faculty members. Peers, graduate students, and others can help students think through and reflect on their decisions and actions. Mentors also can help students understand the development of research questions and the use of analytic techniques even in circumstances where students have less involvement in these areas. Similarly, explicit instructional support can help make up for a lack of support in other areas.

Sadler suggested several ways to help students learn as research experiences become a bigger part of undergraduate science education:

- Optimize research contexts, including the connections among student interests, research opportunities, and collaborative environments. This requires that a school develop a ‘menu’ of several different options for research experiences.

- Help faculty members and graduate students develop effective mentoring practices (e.g., Pfund et al., 2015).

- Encourage students to reflect on their research and its connections to broader themes in science.

- Balance the requirements and realities of undertaking shared research (e.g., the need for standard protocols) with opportunities for students to engage with epistemic practices such as decision-making, so that they can make significant contributions to the research program as they progress.

- Leverage explicit instructional supports for learning about the nature of science.

- Expand strategies for assessing epistemology-related constructs.

As Sadler pointed out, developing measures of effective learning remains “a huge problem.” Self-report may not reveal the level of understanding of difficult concepts, and the assessment models that do exist are not easy to scale up and disseminate. However, some measures developed for K-12 education could work in higher education. Bringing together different communities and devoting resources to the development of appropriate assessment tools could help to develop and disseminate such measures, promoting their use.

Using Quasi-Experiments to Measure the Outcomes of Course-Based Research

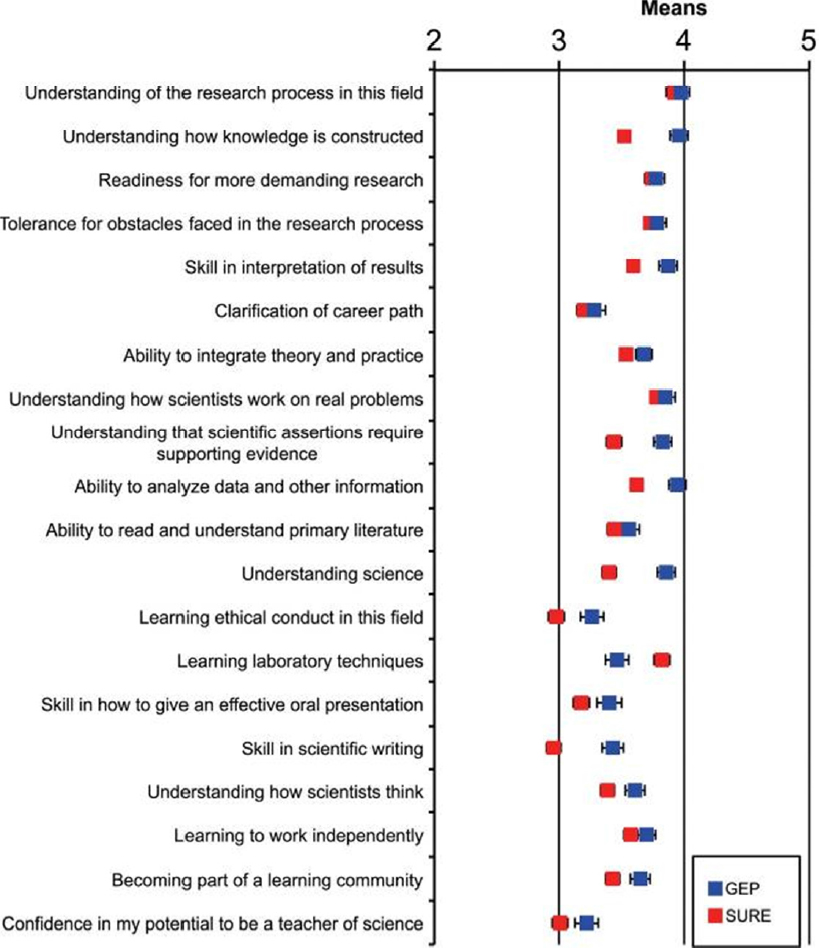

In his commissioned paper and video presentation, David Lopatto, professor of psychology at Grinnell College, observed that undergraduates can realize many benefits by participating in course-based research. According to a survey of undergraduates at four institutions, these benefits generally include the following (Lopatto, 2010):

- Personal development

- Knowledge synthesis

- Data collection and interpretation skills

- Design and hypothesis testing skills

- Information literacy

- Computer skills

- Interaction and communication skills

- Responsibility

- Professional development

Course-based research is a complex package of treatment variables, Lopatto emphasized. This package may produce positive benefits at one site (college or university), but a major question is whether the same package can produce similar benefits at other sites. A program that has common features across several sites can help answer this question (see Box 3-3). This strategy shifts the focus from confounding variables to the robustness of a program’s overall effectiveness. It seeks to determine which treatment variables produce a successful outcome in different environments.

To study the effects of course-based research, Lopatto suggested the use of what Cook and Campbell (1979) identify as quasi-experiments—that is, an experiment that examines “treatments, outcomes measures, and experimental units, but does not use random assignments to create comparisons from which treatment-caused change is inferred. Instead, the comparisons depend on nonequivalent groups that differ from each other in many ways other than the presence of a treatment whose effects are being tested. . . . In a sense, quasi-experiments require making explicit the irrelevant causal forces hidden within the ceteris paribus [other things being equal] of random assignment” (Cook and Campbell, 1979, p. 6).

Lopatto also argued against using dispositional variables as outcomes—that is, human traits, motivations, and other characteristics that suggest the occurrence of structural change in the character of students. Instead, he urged focusing on behavior. STEM education is successful when students are able to behave as scientists in those settings where it is appropriate to do so. For example, he pointed to a separate set of statements made by Smith College alumnae when they were five, ten, and fifteen years out of college that show how difficult it is for some people to maintain a scientific identity as they mature:

“After completing my MS degree, I decided I was tired of working in the sciences and wanted to do something completely different.”

“I was at a wonderful PhD program . . . with the path of many, many career options ahead. . . . But I had been away from my husband for years and wanted to be with him and have children. My science education argued for BOTH cases. . . . I know the biological downsides to waiting to have kids, but I also had an appreciation for the stats: if I left, I’d probably never finish my degree.”

As another example of the importance of relying on behavior rather than dispositions, Lopatto observed that the best way to know whether an undergraduate science major wants to go to graduate school is to ask him or her. “Her future in graduate school is not affected by content or critical thinking skills if she does not intend to submit an application.” As argued by the psychologist Kurt Lewin, behavior depends on what can be termed the psychological field at the time of the behavior, which consists of the influence of the past and the future on the person in the present. Thus, an undergraduate in a course-based research program will respond in a way affected by his or her past experiences, his or her present experiences, and his or her expectations of the future. “If we wish to know what their intentions are and how they will continue in the program, we need to ask,” said Lopatto.

Discussions of assessment usually revolve around three domains of student learning: cognitive, behavioral, and attitudinal, Lopatto said. The cognitive domain includes content learning, the behavioral domain includes demonstrations of skill acquisition, and the attitudinal domain includes how students feel about their education. How much the three domains overlap or correlate remains unknown, said Lopatto, which makes it difficult to predict how measures might correlate in the field.

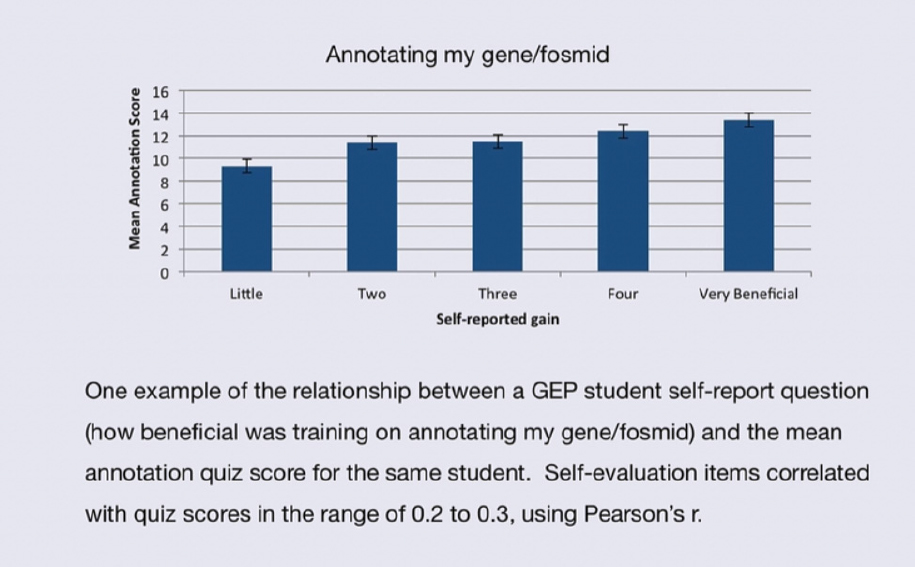

Fortunately, Campbell’s work on quasi-experiments sheds light on how to proceed, Lopatto continued. He advocated the concept of multi-operationalism, the use of more than one outcome measure. If multiple measures tap into the same learning domain, they should correlate. However, the correlation should be modest. A high correlation renders the measures redundant. A zero correlation suggests that the measures are tapping into different learning domains. A modest correlation suggests the two measures are tapping into the same domain, but that each has sources of error. What is important is that the two measures have independent sources of error. For example, in the assessments Lopatto and his colleagues have done of the Genomics Education Partnership at Washington University, a modest correlation exists between how students assess their experiences and their test scores on relevant material (Shaffer et al., 2010; see also Figs. 3-2 and 3-3).
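The reasoning about high, zero, and modest correlations can be illustrated with a minimal sketch. It is not drawn from Lopatto's analyses: the function names `pearson_r` and `interpret`, the cutoffs of 0.2 and 0.8, and the scores for eight hypothetical students are all assumptions made only for illustration. The source does not say what counts as a "modest" correlation.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    dx = [x - mean_x for x in xs]
    dy = [y - mean_y for y in ys]
    cov = sum(a * b for a, b in zip(dx, dy))
    var_x = sum(a * a for a in dx)
    var_y = sum(b * b for b in dy)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a measure has no variance")
    return cov / sqrt(var_x * var_y)


def interpret(r, low=0.2, high=0.8):
    """Label a correlation using ASSUMED cutoffs (not taken from the source)."""
    size = abs(r)
    if size < low:
        return "near zero: measures may tap different learning domains"
    if size > high:
        return "high: measures may be redundant"
    return "modest: plausibly the same domain, each measure with its own error"


# Invented scores for eight hypothetical students (illustration only).
self_report = [3, 4, 2, 5, 4, 3, 5, 2]          # e.g., a 1-5 survey rating
quiz_score = [60, 62, 70, 75, 58, 66, 72, 55]   # e.g., percent correct

r = pearson_r(self_report, quiz_score)
print(f"r = {r:.2f} -> {interpret(r)}")
```

A single correlation cannot show that two measures have independent sources of error. That judgment rests on how the measures were designed, for example pairing a self-report survey with an externally scored test.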

Consortia afford many advantages for doing these kinds of experiments, Lopatto said. A group of sites can agree on program goals, offer a common set of activities, provide common training for instructors or teaching assistants, have a central support site, and use a common set of assessment measures. This approach permits the detection of a successful treatment despite multiple sources of noise, enables the generation of relatively large data sets, tolerates departures from experimental control, and allows for subsequent replication, assuming that new members can join an ongoing consortium.

A Systems Perspective on Best Practices

The mission of the Council on Undergraduate Research, which is a national organization of more than 10,000 individual and 700 institutional members, is to support and promote high-quality undergraduate research and scholarship (e.g., Karukstis and Elgren, 2007; Boyd and Wesemann, 2011).⁹ It has ten discipline-based divisions (arts and humanities, biology, chemistry, geosciences, health sciences, mathematics and computer science, physics and astronomy, psychology, social sciences, and engineering) and two multidisciplinary, administration-based divisions (at-large and undergraduate research program directors). It has grown 40 percent over the last four years, said its executive officer Elizabeth Ambos, and growth has been particularly strong in non-STEM areas.

___________________

9 Additional scholarly papers on course-based research experiences for undergraduates can also be found in the CUR Quarterly, available at http://www.cur.org/publications/curquarterly/.

Ambos described a recent publication, based on work supported by the Council and the National Science Foundation, which explores the prospects for enhancing and expanding undergraduate research from a systems perspective (Malachowski et al., 2015). This study focused on the potential for partnerships within state systems of higher education and public and private consortia to foster the institutionalization of undergraduate research at individual member institutions and across the systems or consortia as a whole. Most campuses in all of the systems and consortia studied had expanded and enhanced their undergraduate research programs. Even more important, all planned to incorporate more undergraduate research directly into the curriculum and at earlier stages.

Major strategies were to establish centralized undergraduate research offices and to create inventories of available opportunities for research, address faculty reward structures, and use the power of convening and messaging among participants. Faculty members were encouraged to align course-based research with their own research, as appropriate. The project also identified roadblocks to increasing undergraduate research, including leadership transitions that resulted in lost momentum, the fact that academic cultures are slow to change, and recent decreases in state funding. For example, Ambos noted, academic leaders tend to turn over much faster than do tenured faculty members, which has “a huge impact on the change process.”

The results of this project have led to several recommendations, noted Ambos. One is to sustain institutional investment in such programs for at least a decade, not just for the more common three- to five-year planning horizon. Another is to invest in teams and use a nested leadership model, where leaders come from different parts of an institution’s instructional and administrative structure, thus helping to align strategic thinking and reward structures. Undergraduate research can be linked with institutional change, said Ambos, which can help avoid the trap of such research becoming an isolated goal. Finally, change can be leveraged through systems and consortia convening, messaging, assessing, building infrastructure, and redistributing resources.

Ambos also said that the value of the nested leadership model extends beyond STEM subjects. “I’m not sure we can engender deep change in the faculty reward system for STEM faculty without having it be an all-university partnership between STEM and non-STEM faculty. Except in the very largest research universities, where reward structures can often be determined within a college basis, almost all other institutions’ reward structures are determined at the institutional level.” The institutions that are moving fastest to incorporate research into undergraduate courses are those where STEM and non-STEM faculty are moving more quickly to rewrite tenure and promotion strategies, are providing more opportunities for adjunct faculty to be engaged, and are aligning hiring practices with milestones for the engagement of students in research.

The Value of Student Reflection

A prominent topic in the discussion following the panel presentations was the value of student reflection when incorporated into course-based research. (Chapter 7 provides a synthesis of the discussions that occurred throughout the convocation.) Sadler noted that in his studies, student reflection is set up to be both multifaceted and structured. For example, when students have an opportunity to talk through what they are doing with their mentors, they can connect their activities to broader themes, he said. Also, instructors can provide students with composition books in which they answer targeted questions geared toward getting them to think about how their work relates to the nature of science. “They put down their ideas, and we’d provide feedback on their reflection,” Sadler said. “For some students in rich laboratory contexts maybe that kind of structured experience wasn’t necessary. . . . [But] we find those journals to be helpful.”

Linn added that her group has found “the value of reflection in student journals or in activities that include specific prompts for reflection to be extremely beneficial.” When students are asked to explain what they have been observing, their learning tends to be more durable, she said. “It’s difficult to have to write a reflection,” she added. “But courses with this type of difficulty, which is hard work, often result in student errors during learning but more durable understanding on subsequent assessments” (Bjork and Linn, 2006). Reading and evaluating students’ reflections can be time-consuming for the instructors of a course, especially for those courses with large enrollments. Using natural language processing and machine learning takes advantage of computers to evaluate student responses—a reasonable strategy especially when these evaluations are used not for grades but to guide further student thinking (Gerard et al., 2015). “The learning is not directly from the feedback but from the revision process to their reflection. [The benefits from] having students reflect is one of the most important findings that we have in the literature in the learning sciences.”

Ryan Kelsey from the Helmsley Charitable Trust noted that his organization has been working with a consortium of engineering schools to incorporate reflection into engineering practices. “It’s everything from the right kinds of prompts all the way up to e-portfolio kinds of approaches. We have schools attempting an entire continuum of different types of approaches.” Such efforts should lead to a more nuanced appreciation of best practices in this area.