CHAPTER FOUR

Attribution of Particular Types of Extreme Events

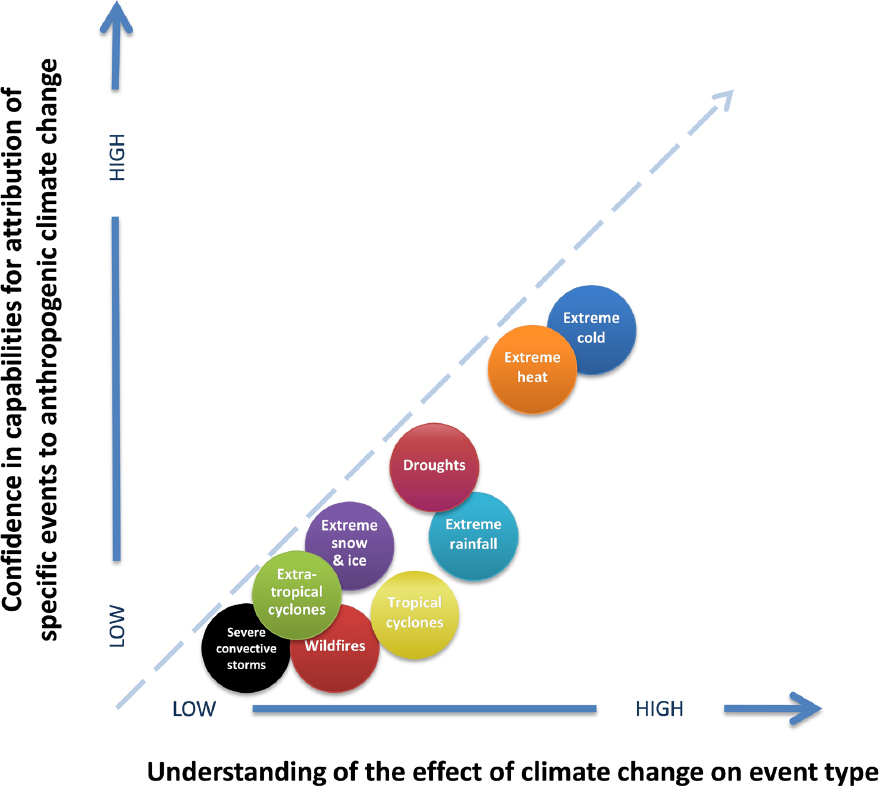

The scientific issues and challenges associated with extreme event attribution vary greatly from one event type to another. This chapter considers event types one at a time, focusing first on issues associated with event definition. Such issues may be conceptual or associated with limitations of the available observations. As background to attribution studies of single events of each type, prior knowledge also is reviewed. This includes research on patterns or trends in historical observations as well as projections of future change using climate models. Though not strictly attribution, this broader context is relevant to the statement of task in that any scientifically responsible attribution statements are informed, necessarily, not just by formal attribution studies but by all aspects of existing scientific understanding of the relationship between the extreme event type in question and climate change. Existing attribution studies on single extreme events also are reviewed as part of this background. The number of studies varies widely; for some event types there are few or even no such studies. For each category, advances that might be possible in the near future are considered.

The event types considered here do not represent all possible event types influenced by climate factors; moreover, some examples are of events defined not solely by atmospheric or meteorological quantities like temperature. The section on extreme precipitation, a meteorological event, considers only precipitation itself, not flooding, as the defining characteristic. The section on drought focuses on meteorological drought (primarily precipitation deficit) and hydrological drought, which are consequences of atmospheric factors. Wildfires are not, strictly speaking, meteorological events at all, but they, like other extreme events discussed here, are of great societal concern, and the likelihood and extent of wildfires can be influenced by climatic factors. These choices about how and whether to include non-meteorological factors in our assessment of attribution are subjective and reflect committee judgment, available literature, and expertise. The committee recognizes that many additional events and other natural hazards that may be affected by climate change (e.g., sea level rise, landslides, and coral bleaching) could also be discussed in the context of event attribution.

EXTREME COLD EVENTS

Event Type Definition

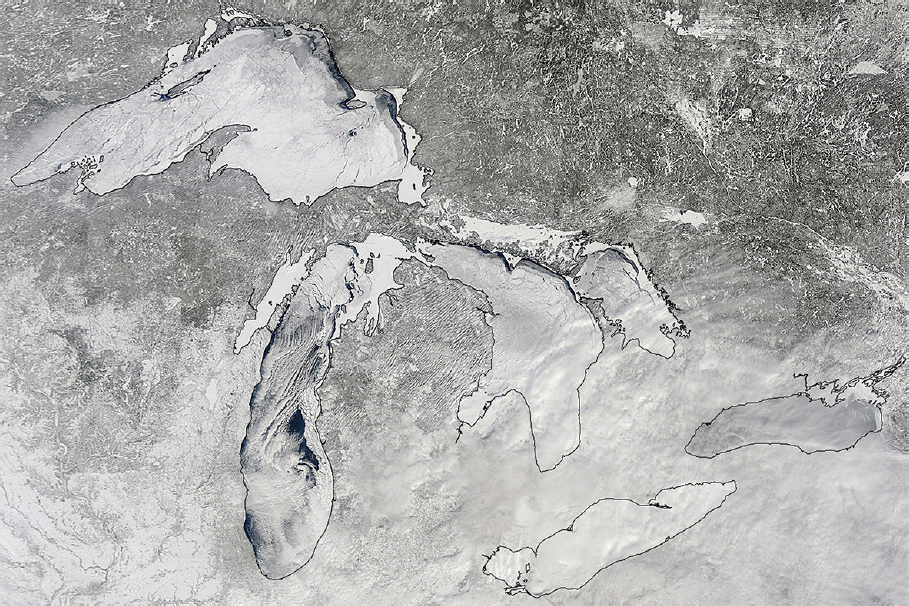

Extreme cold events are generally described in terms of temperature, although wind, snow, and ice can compound the impacts of an extreme cold event (Figure 4.1). The actual temperatures that characterize a cold event vary regionally and seasonally, but the temperatures during such an event will be in the cold tail of the probability distribution of temperatures for a location or region and time of year. The event definitions most often are based on daily temperatures, although multiday or longer averages also have been used. The criteria can be either an absolute temperature threshold (e.g., 0°C, 0°F, –20°C), often arbitrarily chosen, or a percentile value such as the 1st-percentile or 10th-percentile criterion used in the Expert Team on Climate Change Detection and Indices (ETCCDI) ClimDEX database (Sillmann et al., 2013a,b). Duration and intensity are other metrics of an extreme cold event. Metrics of duration can be the length of time (e.g., number of days) that a specified temperature threshold is exceeded or the time for which the multiday average temperature is below a prescribed threshold; intensity, on the other hand, is often measured by the lowest temperature attained. In some instances, the severity of a cold event has been quantified as the product of the event duration and intensity.
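The metrics described above can be illustrated with a minimal Python sketch. It is not taken from any of the cited studies: the names `ColdSpell`, `percentile`, and `find_cold_spells` are hypothetical, the temperature series is invented, and severity is computed as duration times the depth of the coldest day below the threshold, which is only one of several possible readings of "duration times intensity."

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ColdSpell:
    start: int        # index of the first day below the threshold
    duration: int     # number of consecutive days below the threshold
    min_temp: float   # lowest temperature reached (one intensity measure)
    severity: float   # duration x depth below threshold (assumed definition)


def percentile(values: List[float], pct: float) -> float:
    """Empirical percentile with linear interpolation between sorted values."""
    ordered = sorted(values)
    if not ordered:
        raise ValueError("values must not be empty")
    rank = (pct / 100.0) * (len(ordered) - 1)
    lo = int(rank)
    hi = min(lo + 1, len(ordered) - 1)
    return ordered[lo] + (rank - lo) * (ordered[hi] - ordered[lo])


def find_cold_spells(temps: List[float], threshold: float) -> List[ColdSpell]:
    """Group consecutive days with temperature below `threshold` into spells."""
    spells = []
    start = None
    # A trailing +inf sentinel closes a spell that runs to the end of the record.
    for i, t in enumerate(temps + [float("inf")]):
        if t < threshold and start is None:
            start = i
        elif t >= threshold and start is not None:
            days = temps[start:i]
            depth = threshold - min(days)
            spells.append(ColdSpell(start, len(days), min(days), len(days) * depth))
            start = None
    return spells


# Invented daily minimum temperatures (deg C), for illustration only.
daily_tmin = [2.0, -1.0, -6.0, -9.0, -4.0, 1.0, 3.0, -5.5, 0.5, 4.0]
spells = find_cold_spells(daily_tmin, threshold=-3.0)
tenth_percentile = percentile(daily_tmin, 10)
```

An absolute threshold (here –3°C) and a percentile threshold (here the 10th percentile of the sample) can be passed to the same function, which makes it easy to check how sensitive the identified events are to the choice of definition.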

Prior Knowledge and Overview of Attribution Studies

Extreme cold events are driven by a combination of thermodynamics (cold air mass formation) and dynamics (the large-scale circulation, advection). Horton and colleagues (2015) have used self-organizing maps derived from atmospheric reanalyses to show that both factors have played roles in recent changes in extreme cold events. In particular, increasing trends in northerly flow have led to an increasing trend in winter cold extremes over central Asia.

The research to date indicates that extreme cold events are less frequent and less severe than in previous decades, although interannual variability is still large enough to allow extreme cold events such as occurred in North America in 2014 and Europe in 2012. Even over 60-year periods, trends in the coldest temperature of the year are not compellingly positive over Europe and the United States (van Oldenborgh et al., 2015, Figure 4b). The increases in cold extreme daily minimum temperatures (i.e., warming) are generally greater than are the increases in extreme daily maximum temperatures, and there is no indication of increased variability of daily or monthly winter temperatures over the United States (Kunkel et al., 2015; Screen et al., 2015). A similar warming of the coldest temperatures over other land areas of the world emerged from Sillmann and colleagues’ analysis (2013a,b) of the ETCCDI indices for 1948-2005 in 4 different atmospheric reanalyses and 31 Coupled Model Intercomparison Project Phase 5 (CMIP5) models. The tendency for cold extremes to warm by more than hot extremes also is apparent in Collins and colleagues’ (2013) Figures 12.13 and 12.14 as well as the U.S. National Climate Assessment’s Figure 2.20 (Melillo et al., 2014).

The general expectation is that cold events defined relative to fixed temperature thresholds should become less frequent and less severe as the climate warms on the global scale. It is nonetheless possible for them to increase in frequency or intensity regionally for periods of time (e.g., due to increases in the intensity of cold air advection from polar to lower-latitude regions).

Extreme cold events in eastern North America have characterized a few recent winters (2014, 2012), but such events are less frequent and their actual temperatures less extreme in the past few decades than in earlier decades of the 20th century (van Oldenborgh et al., 2015; Wolter et al., 2015). In an analysis of observational data, van Oldenborgh and colleagues (2015) find that the return times of the lowest minimum temperatures of 2014 in the midwestern United States ranged from 6 to 44 years in the present climate, but only from 3 to 7 years in the climate of the 1950s; likewise, return times of the cold winter-averaged temperatures were greater in the present climate than in the 1950s. Cold wave events of 4-day duration had their lowest frequency during the 2001-2010 decade in all eight subregions of the United States examined by Peterson and colleagues (2013b), although the decade of the 1980s had the highest frequencies nationally. Nonetheless, 20-year return values of the daily minimum temperatures warmed over the entire contiguous United States during the 1950-2007 period, by as much as 3° to 4°C in much of the West (Peterson et al., 2013b).
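The notion of a return time used above can be made concrete with a short sketch. The return time of an annual extreme is the reciprocal of its probability of occurrence in any given year, so a warming of the distribution of annual minimum temperatures lengthens the return time of a fixed cold threshold. The example below assumes, purely for simplicity, that annual minima are Gaussian (extreme-value distributions are more commonly fitted in practice), and all numbers are hypothetical rather than taken from van Oldenborgh and colleagues (2015).

```python
from statistics import NormalDist


def return_time_years(threshold: float, mean: float, stdev: float) -> float:
    """Average number of years between annual minima at or below `threshold`.

    Assumes annual minimum temperatures follow a normal distribution, which
    is a simplifying assumption made for this illustration only.
    """
    p_per_year = NormalDist(mean, stdev).cdf(threshold)
    return 1.0 / p_per_year


# Hypothetical annual-minimum climates (deg C): the later one is 2 deg C warmer.
past = return_time_years(threshold=-30.0, mean=-24.0, stdev=4.0)
present = return_time_years(threshold=-30.0, mean=-22.0, stdev=4.0)
print(f"past: {past:.1f} years, present: {present:.1f} years")
```

In this invented setting, a 2°C shift in the mean roughly triples the return time of the same cold threshold, which is the qualitative behavior reported in the observational analyses.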

There is a notable absence of conditional attribution studies pertaining to extreme cold events. Nevertheless, observational studies do provide evidence of a general decrease in the frequency of occurrence of extreme cold temperatures over the past few decades in most land areas of the world (Hartmann et al., 2013; Kharin et al., 2013). Kharin and colleagues show that the trends of extreme cold ETCCDI indices are comparable in atmospheric reanalyses and CMIP5 historical simulations in which external forcing was historical. In this respect, external forcing (including its anthropogenic component) is implicated in the decreasing frequency of observed cold extremes. The reduction of cold extremes has been detected and attributed in extreme seasonal and annual temperatures (Christidis et al., 2012; Stott et al., 2013) as well as in the ETCCDI metrics of cold daily extremes (Morak et al., 2013; Zwiers et al., 2011). Attribution studies by Kharin and colleagues (2013) and others have drawn on comparisons of observational data with climate model simulations driven by natural and anthropogenic forcing.

More recently, Wolter and colleagues (2015) also find decreasing frequencies of extreme cold events (in this case, events affecting the Upper Midwest of the United States) in CMIP5 models and in an ensemble of Community Earth System Model (CESM) simulations driven by historical forcing. The decreased frequency of cold extremes arises primarily from the underlying increase of the mean temperature, not from decreased variability (Screen et al., 2015; Trenary et al., 2015; Wolter et al., 2015). Gao and colleagues (2015) show that decreases in temperature variance account for generally less than 20% of the projected 21st-century decreases in extreme cold temperatures over North America; the mean warming accounts for most of the remainder. The fact that underlying warming has moderated cold extremes also has been shown using daily circulation analogs for the European cold events of 2010 (Cattiaux et al., 2010).
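The distinction between a change in the mean and a change in variability can be sketched as follows. The example assumes Gaussian winter temperatures and uses invented parameter values; it is not a reproduction of the analyses by Gao, Screen, or their colleagues, and the function name `cold_prob` is hypothetical. It evaluates the probability of falling below a fixed cold threshold when only the mean warms, when only the variance shrinks, and when both change.

```python
from statistics import NormalDist


def cold_prob(threshold: float, mean: float, stdev: float) -> float:
    """Probability that a day is colder than `threshold` (Gaussian assumption)."""
    return NormalDist(mean, stdev).cdf(threshold)


THRESHOLD = -15.0                      # fixed cold threshold, deg C (invented)
BASE_MEAN, BASE_SD = -5.0, 5.0         # baseline climate (invented)
WARM_MEAN, REDUCED_SD = -3.0, 4.5      # +2 deg C mean shift, 10% less spread

baseline = cold_prob(THRESHOLD, BASE_MEAN, BASE_SD)
mean_only = cold_prob(THRESHOLD, WARM_MEAN, BASE_SD)      # mean shift alone
var_only = cold_prob(THRESHOLD, BASE_MEAN, REDUCED_SD)    # variance change alone
both = cold_prob(THRESHOLD, WARM_MEAN, REDUCED_SD)        # combined change

for label, p in [("baseline", baseline), ("mean only", mean_only),
                 ("variance only", var_only), ("both", both)]:
    print(f"{label:>13}: {p:.4f}")
```

Because the two effects do not add linearly in probability space, the sketch reports each case separately rather than assigning percentage shares. With these invented values, the mean shift alone reduces the cold-tail probability more than the variance change alone.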

Several recent attribution studies have examined extreme cold events in the context of retreating Arctic sea ice. By prescribing reduced Arctic sea ice cover but historically observed ocean temperatures outside of the Arctic in two different global climate models, Screen and colleagues (2015) find that ice loss is associated with decreased likelihood of extreme cold events (as well as decreased variability of temperature) over nearly the entire Northern Hemisphere land areas. The exception is the central Asian region, where the probability of extreme cold events increases with ice loss, in agreement with earlier studies (Inoue et al., 2012; Kim et al., 2014; Mori et al., 2014). For the rest of the hemisphere, the underlying warming dominates the trend of extreme cold events, implying that thermodynamically induced changes dominate dynamically induced variations, such as those associated with the jet stream. While some studies do point to influences of sea-ice change on large-scale dynamics (Francis and Vavrus, 2015; Jaiser et al., 2013; Kim et al., 2014; Peings and Magnusdottir, 2014), the signals remain embedded in the noise of natural variability (Barnes et al., 2014) and, from the perspective of extreme cold events, are overwhelmed by the underlying warming. Additional attempts to link Arctic warming with an amplified jet stream and cold winters in middle latitudes have been made by Francis and Vavrus (2012, 2015).

On the Horizon

While the observational network is sufficiently dense to capture extreme cold events over most land areas (except possibly Antarctica), there have been few evaluations of the ability of models to simulate the frequency and the intensity of these events. Sillmann and colleagues (2011) and Whan and colleagues (2016) show that some climate models are able to capture the linkage between atmospheric blocking and cold events over Europe and North America, respectively. More comprehensive assessments are needed, however, of models’ ability to simulate cold temperatures for the right reasons. The lowest temperatures are often reached under clear-sky, calm conditions characterized by strong near-surface temperature inversions. Limited vertical resolution is likely to impact model simulation of temperatures in such situations. It also is apparent from the studies cited above that atmospheric blocking events must be well simulated if models are to simulate extreme cold events realistically. Finally, decadal and even longer trends in cold extremes can be impacted by multidecadal variability in the climate system (e.g., the Atlantic Multidecadal Oscillation [AMO] and the Pacific Decadal Oscillation [PDO]), which models must simulate in order to capture the temporal spectrum of extreme cold events.

With regard to a possible role of sea-ice loss and Arctic amplification, mechanistic linkages are still an active area of research. Such linkages may contribute to cold events in some areas, particularly central Asia, but the dynamic mechanisms underlying such linkages need to be established. Hypothesis-driven model experiments are needed to identify any dynamic mechanisms linking Arctic changes with midlatitude extreme events.

Finally, impact-relevant metrics of extreme cold events need to be developed for use in attribution studies. In a climate with polar amplified warming, increased equatorward flow will likely be required if cold air advection is to cause any hypothetical increase of extreme cold events in middle latitudes. In such cases, the extreme cold temperatures will be associated with winds to a greater extent than in the past, which, in turn, will contribute to more extreme windchill values. Metrics such as the windchill index are just starting to be used in cold event attribution studies (Gao et al., 2015).

EXTREME HEAT EVENTS

Event Type Definition

Heat events have been defined over a variety of timescales in the literature, from as little as 1 day to at least 1 year. This report distinguishes between temperature anomalies of short duration (days, heat events) and those of longer duration (weeks and longer, warm anomalies). Because temperature is a continuous variable, the spatial extent of a given heat event or warm anomaly is somewhat subjectively defined and can change through time as the event unfolds. Typically, a latitude-longitude box is used, but sometimes single stations (e.g., King et al., 2015) or political boundaries (e.g., Texas or Korea) are used. While a large majority of studies focus on heat events over land, some (e.g., Funk et al., 2013; Kam et al., 2015) have looked at warm sea surface temperature (SST) anomalies over periods of seasons to years.

The impacts of heat events and warm anomalies (e.g., on human health) can be exacerbated by high dew points, and also by high nighttime temperatures (which, in turn, are more likely if dew points are high; e.g., Gershunov and Guirguis, 2012). Conversely, the amplitudes of the warm anomalies themselves can be increased by land-atmosphere feedbacks if moisture is low; this connection between drought and warm anomalies is covered below in the section on drought. In addition to their direct impacts, warm anomalies over both land and ocean can contribute to other types of extreme events (e.g., droughts or wildfires).

Prior Knowledge and Overview of Attribution Studies

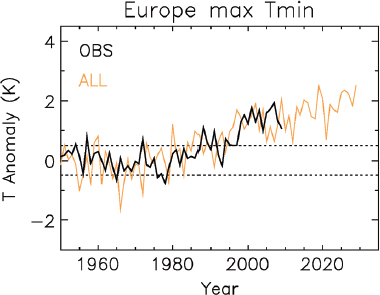

The Intergovernmental Panel on Climate Change (IPCC) Fifth Assessment Report (Hartmann et al., 2013) noted that “a large amount of evidence continues to support the conclusion that most global land areas analyzed have experienced significant warming of both maximum and minimum temperature extremes since about 1950” and concluded that “it is . . . very likely that human influence has contributed to observed global scale changes in the frequency and intensity of daily temperature extremes since the mid-20th century, and likely that human influence has more than doubled the probability of occurrence of heat waves in some locations” (Figure 4.2). They also note that minimum temperatures have increased more than maximum temperatures, and maps of changes show statistically significant increases in two indices of extreme temperatures in almost every land area since 1950: the 90th percentile of daily minimum temperatures and the 90th percentile of daily maximum temperatures. For the region of North and Central America (lumped for purposes of simplicity in a table), they assess changes in heat waves and warm events as “medium confidence: increases in more regions than decreases but 1930s dominates longer-term trends in the USA.” The U.S. National Climate Assessment corroborates and provides additional details: “Heat waves have generally become more frequent across the U.S. in recent decades, with western regions (including Alaska) setting records for numbers of these events in the 2000s. . . . Most other regions in the country had their highest number of short-duration heat waves in the 1930s” (Walsh et al., 2014). Regarding future projections, in the IPCC Fifth Assessment Report, Collins and colleagues (2013) stated that “It is also very likely that heat waves, defined as spells of days with temperature above a threshold determined from historical climatology, will occur with a higher frequency and duration.”

For Northern Hemisphere land areas, numerous studies have examined different aspects of trends in extreme temperatures. Horton and colleagues (2015), for example, relate trends in extreme temperatures to atmospheric circulation changes over the 1979-2013 period, and Abatzoglou and Redmond (2007) explain the asymmetry in seasonal warming (1958-2006) between the eastern and western United States as a consequence of changes in atmospheric circulation. Peterson and colleagues (2013b) note the decadal changes in heat waves in nine U.S. regions, defined as collections of states, for each decade since the 1900s, where a heat event is defined as a 4-day period exceeding the 5-year return period value for the period 1895-2010 (Figure 3.5). The 1930s remains the decade with the most heat waves, a curious fact that may be partly explained by the types of circulation changes noted by Horton and colleagues (2015) and Abatzoglou and Redmond (2007) for more recent periods. They note that even on these spatial scales, natural variability can dominate over anthropogenic warming to date.
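A definition of the kind used by Peterson and colleagues (a 4-day period exceeding a 5-year return value) can be approximated in a few lines. The sketch below is a simplified stand-in, not their procedure: it takes the largest 4-day running mean in each year, estimates the 5-year return value as the empirical 80th percentile of those annual maxima, and counts at most one event per year. All function names and data values are hypothetical.

```python
from statistics import quantiles
from typing import Dict, List


def max_running_mean(daily: List[float], window: int = 4) -> float:
    """Largest mean temperature over any `window` consecutive days."""
    if len(daily) < window:
        raise ValueError("series shorter than window")
    return max(sum(daily[i:i + window]) / window
               for i in range(len(daily) - window + 1))


def five_year_return_value(annual_maxima: List[float]) -> float:
    """Value exceeded in roughly one year out of five (empirical 80th percentile)."""
    return quantiles(annual_maxima, n=5, method="inclusive")[-1]


def events_per_decade(annual_maxima: Dict[int, float],
                      threshold: float) -> Dict[int, int]:
    """Count years per decade whose annual maximum exceeds `threshold`."""
    counts: Dict[int, int] = {}
    for year, value in annual_maxima.items():
        decade = (year // 10) * 10
        counts[decade] = counts.get(decade, 0) + (value > threshold)
    return counts


# Invented annual maxima of the 4-day mean temperature (deg C).
annual_max_4day = {1990 + i: v for i, v in enumerate([30.0, 31.0, 32.0, 33.0, 34.0])}
annual_max_4day.update({2000 + i: v for i, v in enumerate([35.0, 36.0, 37.0, 38.0, 39.0])})

threshold = five_year_return_value(list(annual_max_4day.values()))
decadal_counts = events_per_decade(annual_max_4day, threshold)
```

In practice, `max_running_mean` would be applied to each year of daily station or gridded data to build the dictionary of annual maxima. A fitted extreme-value distribution would usually replace the empirical percentile when the record is short.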

Heat events are arguably the extreme weather events for which attribution studies are most straightforward and have the longest history. Public and scientific interest in extreme event attribution increased rapidly after the 2003 European heat wave, which was associated with tens of thousands of excess deaths and prompted the seminal paper by Stott and colleagues (2004), whose methods form the groundwork for much subsequent work in this field (e.g., fraction of attributable risk). Of the events covered in the annual Explaining Extreme Events special issue of BAMS, heat events or warm anomalies are the largest share (e.g., 8 out of 32 for 2014). This may reflect the greater likelihood of successful attribution of heat waves, compared to other event types, to human-induced climate change using existing models and data (see the discussion of selection bias in Chapter 2).

Most attribution studies of heat events and warm anomalies include an assessment of the trend in the temperature statistic used to define the event and an indication of how extreme the event was in the context of the observed record. Many studies also compare the magnitude with a distribution from long CMIP5 runs: in some cases, from long simulations with constant 19th-century radiative forcing; in some cases, from simulations using observed radiative forcing (i.e., CMIP5-ALL). For example, the European annual mean temperature in 2014 was shown to be far outside the distribution of CMIP5 20th-century simulations even with observed forcing (Kam et al., 2015).

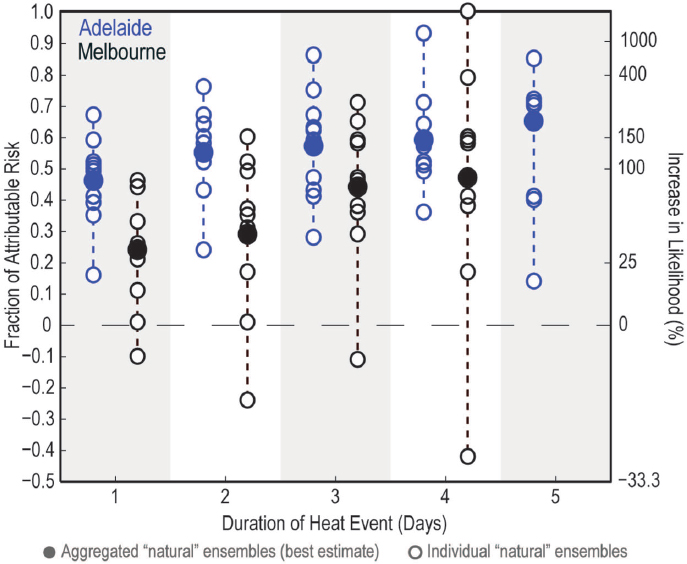

Most recent studies calculate fraction of attributable risk (FAR), and some also estimate the uncertainty in FAR—for instance, by bootstrapping subsets from natural ensembles using 10 general circulation models (GCMs) (King et al., 2015). Some studies also explore how the results depend on event definition: for example, Black and colleagues (2015) examine the January 2014 heat events in Adelaide and Melbourne, Australia, using definitions of heat wave with durations ranging from 1 to 5 days. Some studies also compute return periods for different thresholds (e.g., Christidis et al., 2015) or the risk ratio (RR) (e.g., Hannart et al., 2015a).
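For reference, with p0 the probability of exceeding the event threshold in the counterfactual (natural-forcing) world and p1 the probability in the factual world, FAR = 1 − p0/p1 and RR = p1/p0. The sketch below estimates both from two ensembles and attaches a simple bootstrap range to FAR. It is a hedged illustration: the ensembles are synthetic Gaussian samples, the function names are hypothetical, and the resampling of individual members is a generic bootstrap rather than the model-subset scheme of King and colleagues (2015).

```python
import random
from typing import List, Tuple


def exceedance_prob(sample: List[float], threshold: float) -> float:
    """Fraction of ensemble members at or above the event threshold."""
    return sum(x >= threshold for x in sample) / len(sample)


def far_and_rr(natural: List[float], actual: List[float],
               threshold: float) -> Tuple[float, float]:
    """Return (FAR, RR), where FAR = 1 - p0/p1 and RR = p1/p0."""
    p0 = exceedance_prob(natural, threshold)   # counterfactual world
    p1 = exceedance_prob(actual, threshold)    # factual world
    if p1 == 0:
        raise ValueError("event never occurs in the factual ensemble")
    rr = p1 / p0 if p0 > 0 else float("inf")
    return 1.0 - p0 / p1, rr


def bootstrap_far_interval(natural: List[float], actual: List[float],
                           threshold: float, n_boot: int = 500,
                           seed: int = 0) -> Tuple[float, float]:
    """5th to 95th percentile range of FAR from resampling both ensembles."""
    rng = random.Random(seed)
    fars = []
    for _ in range(n_boot):
        nat = rng.choices(natural, k=len(natural))
        act = rng.choices(actual, k=len(actual))
        p1 = exceedance_prob(act, threshold)
        if p1 > 0:  # skip resamples in which FAR is undefined
            fars.append(1.0 - exceedance_prob(nat, threshold) / p1)
    if not fars:
        raise ValueError("FAR undefined in every resample")
    fars.sort()
    return fars[int(0.05 * (len(fars) - 1))], fars[int(0.95 * (len(fars) - 1))]


# Synthetic seasonal temperature anomalies (deg C); purely illustrative.
rng = random.Random(42)
natural_runs = [rng.gauss(0.0, 1.0) for _ in range(1000)]
actual_runs = [rng.gauss(1.0, 1.0) for _ in range(1000)]

far, rr = far_and_rr(natural_runs, actual_runs, threshold=2.0)
low, high = bootstrap_far_interval(natural_runs, actual_runs, threshold=2.0)
print(f"FAR = {far:.2f} (5-95% range {low:.2f} to {high:.2f}), RR = {rr:.1f}")
```

Repeating the calculation for several thresholds or event durations is a direct way to explore the sensitivity to event definition discussed above.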

A number of studies have used very large ensembles (i.e., bigger than available from CMIP5) from either a global (e.g., Massey et al., 2014; Rupp et al., 2012) or a regional (Black et al., 2015; King et al., 2015; Figure 4.3) atmospheric model. In these studies, changes in FAR, RR, and/or return period are calculated using an approach (see Chapter 3) that estimates the anthropogenic contribution to modern SSTs and subtracts that from the observed SSTs, typically with SST patterns from at least a few global coupled climate models used to estimate the anthropogenic contribution. Other approaches to estimating the counterfactual include using early 20th-century or preindustrial control simulations (e.g., Black et al., 2015).
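The SST-subtraction step can be sketched on a toy grid. The example assumes that each coupled model supplies an all-forcing and a natural-only SST field, that their difference is taken as that model's estimate of the anthropogenic contribution, and that one counterfactual SST field is produced per model pattern. The grids, values, and function names are invented for illustration and do not correspond to any particular study's configuration.

```python
from typing import List

Grid = List[List[float]]  # [lat][lon] sea surface temperature, deg C


def subtract(a: Grid, b: Grid) -> Grid:
    """Element-wise difference a - b of two grids with the same shape."""
    return [[x - y for x, y in zip(row_a, row_b)] for row_a, row_b in zip(a, b)]


def anthropogenic_delta(all_forcing: Grid, natural_only: Grid) -> Grid:
    """One model's estimate of the anthropogenic SST change pattern."""
    return subtract(all_forcing, natural_only)


def counterfactual_ssts(observed: Grid, deltas: List[Grid]) -> List[Grid]:
    """Observed SSTs with each model's anthropogenic pattern removed."""
    return [subtract(observed, delta) for delta in deltas]


# Invented 2 x 2 fields (deg C).
observed_sst = [[28.0, 27.5], [15.0, 14.0]]
model_a_delta = anthropogenic_delta([[28.3, 27.6], [15.4, 14.5]],
                                    [[27.5, 27.0], [15.0, 14.0]])
model_b_delta = anthropogenic_delta([[28.5, 27.8], [15.6, 14.3]],
                                    [[27.5, 27.0], [15.0, 14.0]])

natural_world_ssts = counterfactual_ssts(observed_sst, [model_a_delta, model_b_delta])
```

Each resulting field would then drive its own set of atmosphere-only simulations, so that the spread across model-derived patterns contributes to the uncertainty in the counterfactual.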

On the Horizon

Simulations of heat events and warm anomalies may benefit from improvements in land-surface schemes in global and regional models. Few studies include an evaluation of the models’ ability to simulate the important statistical properties of the event of interest. While Trenberth and colleagues (2015) do not include heat events among their examples of a highly conditioned approach, this approach clearly could be applied to heat events, starting perhaps with one of the most impactful events, like the Russian heat wave of 2010. Heat events and warm anomalies may be the best candidates for assessing the reliability and robustness of attribution methods because the direct thermodynamic effects on this type of extreme event are generally more straightforward than those on, for example, heavy rainfall.

DROUGHTS

Event Type Definition

Droughts are complex phenomena involving various combinations of atmospheric inputs (chiefly precipitation, but also temperature), storage terms like soil moisture and snowpack, and responses of the human and natural system on a variety of timescales. In addition, there are several types of drought (Wilhite and Glantz, 1985); these include meteorological drought (lower than expected precipitation over an extended period); hydrological drought (depletion of surface or subsurface water supply); agricultural drought (aspects of meteorological drought or hydrological drought that have impacts on agriculture, like reduced crop yield); and socioeconomic drought (effects on the supply of economic goods like hydroelectric power). In this report, we focus on meteorological drought and hydrologic drought.

Droughts are driven by multiple factors, including precipitation deficits, feedbacks associated with soil moisture and evapotranspiration, and large-scale dynamics associated with ocean, land, and air temperatures. Droughts can occur across broad regions up to continental scale, but they also can have dramatically different implications for communities that are in close proximity to each other. The same drought can change in location and intensity from month to month in dramatic ways, as can be seen in the maps produced by the National Integrated Drought Information System (NIDIS) and the U.S. Drought Monitor. Furthermore, anthropogenic climate change has been shown to affect drought differently in different seasons and in different regions, particularly in the varied ways that reduced snowpack affects surface flows.

As Redmond (2002) points out, drought may be better defined as “insufficient water to meet needs.” Thus, a holistic view of droughts encompasses both meteorological and hydrologic factors, on the “supply” side, and terrestrial ecosystems, human consumption, and losses, on the “demand” side, as well as infrastructure for water delivery, policies that affect water use, flexibility in addressing local shortfalls, etc. Because event selection for extreme event attribution is often driven by the magnitude of the impacts rather than the magnitude of the atmospheric driver, such considerations can be important in framing a drought attribution study. Similar holistic considerations apply to other extreme event types, to varying degrees.

One reason that attributing both extreme flooding and extreme droughts to anthropogenic climate change is particularly difficult is that changes in the hydrologic cycle are both causes of the event (a climatic driver) and consequences of the event (with water supply availability and flooding being literally “downstream” from the changes in precipitation). Another is that land use decisions and investments in water-related infrastructure for hydroelectric power generation, flood control, and water supply have dramatically changed the natural hydrology within watersheds and have usually decreased—but sometimes increased—the risks associated with extreme events. It is therefore often quite challenging to attribute the impacts of droughts and floods to extreme events in the same way that it is possible to attribute changes in the intensity of precipitation (which is “upstream” from the drought or flood).

As an illustration of the complexity of defining and assessing drought, consider some of the hydrologic contributing factors to drought. Redmond (2002) refers to a “snow drought”: that is, for locations like much of the western United States that receive a majority of precipitation as snowfall and where summer precipitation is typically quite low, a deficit in winter snow can lead to summer drought. Bumbaco and Mote (2010) take the concept further, providing specific examples of when low winter precipitation or, in some cases, high winter or spring temperature ends up producing unusually low snowmelt for the dry summer period. Because there are so few observations, especially long records, of soil moisture, many studies use an index of drought computed from monthly observations of precipitation and/or temperature, like soil moisture computed in a hydrologic model, the Standardized Precipitation Index, or the Palmer Drought Severity Index (Funk et al., 2013). The simplicity of the latter makes it attractive to use in large-scale drought assessments, but that also may bias results, especially in the context of climate change. Thus, assessment of change in drought characteristics should consider including several indices, with specific consideration of their particular limitations (Seneviratne et al., 2012; Sheffield et al., 2012).
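To show what a precipitation-only drought index involves, the sketch below computes a simplified, rank-based standardized precipitation index. It is an assumption-laden stand-in for the operational Standardized Precipitation Index, which is normally obtained by fitting a parametric distribution (commonly a gamma distribution) to accumulated precipitation. Here the empirical non-exceedance probability of each total is mapped to a standard normal quantile instead. The function name and data are hypothetical, and tied values are ranked in their order of appearance.

```python
from statistics import NormalDist
from typing import List


def empirical_spi(precip_totals: List[float]) -> List[float]:
    """Rank-based standardized index: negative values are drier than usual.

    Each total is assigned an empirical non-exceedance probability using the
    Gringorten plotting position, then transformed to a standard normal
    quantile. This replaces the distribution fit of the operational index.
    """
    n = len(precip_totals)
    order = sorted(range(n), key=lambda i: precip_totals[i])
    standard_normal = NormalDist()
    index = [0.0] * n
    for rank, original_position in enumerate(order, start=1):
        prob = (rank - 0.44) / (n + 0.12)
        index[original_position] = standard_normal.inv_cdf(prob)
    return index


# Invented seasonal precipitation totals (mm) for five years.
seasonal_totals = [50.0, 120.0, 80.0, 200.0, 30.0]
spi = empirical_spi(seasonal_totals)
```

Because the index uses precipitation alone, it cannot register the effect of warming on evaporation or snowpack, which is one reason the use of several complementary indices is recommended above.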

Prior Knowledge and Overview of Attribution Studies

The IPCC Special Report on Extremes (Seneviratne et al., 2012) noted that on a global scale, and owing in part to the variety of ways to define drought, there were not enough direct observations of drought-like conditions to conclude that there were robust global trends, but some regions of the world have experienced more intense and longer droughts. The IPCC Fifth Assessment Report (Hartmann et al., 2013) notes that some studies find an increase in the percentage of global land area in drought since 1950, but interannual and decadal-scale variability is high, and the results depend on datasets and methods used. The attribution section assigns low confidence to attributing changes in drought over global land areas since the mid-20th century due to observational uncertainties and, again, high variability (Bindoff et al., 2013). Also, results differ depending on whether drought is defined as a rainfall deficit or by using hydrological variables like evaporation, many of which are affected by warming (see, e.g., Seneviratne et al., 2010). Nevertheless, some regional attribution studies are available. For example, Barnett and Pierce (2009) suggest that human influence has affected the hydrology of the western United States when snowpack and seasonal streamflow are considered. Because temperature plays a role in determining evaporation, snowpack, soil moisture, and (indirectly) streamflow, attribution of hydrological drought may be more robust than attribution of strictly meteorological drought, which is more strongly influenced by precipitation. It also may be the case that attribution for some specific droughts may be more straightforward than reaching broad conclusions about the role of anthropogenic climate change in droughts globally because some of the specific regional factors that cause varying responses of drought to climate may be better understood in particular locations and times than others.

Regarding projections of future drought over the 21st century due to human influence, the IPCC Special Report on Extremes expressed “medium confidence that droughts will intensify in the 21st century in some seasons and areas, due to reduced precipitation and/or increased evapotranspiration. This applies to regions including southern Europe and the Mediterranean region, central Europe, central North America, Central America and Mexico, northeast Brazil, and southern Africa” (Seneviratne et al., 2012). Low confidence was expressed elsewhere due to disagreement between different projections, resulting both from different models and from different indices of drought. Additional uncertainties result from soil moisture limitations on evapotranspiration, the impact of CO2 concentrations on plant transpiration, observational uncertainties relevant to interpretation of historical trends, and process representation in current land models (e.g., Greve et al., 2014; Sheffield et al., 2012; Trenberth et al., 2014).

Many drought-related attribution studies (e.g., Funk et al., 2015; Hoerling et al., 2013; Wilcox et al., 2015) use a similar approach to those for heat: comparing CMIP5 runs from the preindustrial control, natural-only 20th century, and anthropogenic forcings. Some (e.g., Barlow and Hoell, 2015; Hoerling et al., 2013) use SST-conditioned runs: that is, atmosphere-only model simulations using observed SSTs, often compared with a counterfactual to compute FAR. A few also use an approach closer to seasonal forecasting, which somewhat resembles a highly conditioned approach: Hoerling and colleagues (2013) use an 80-member ensemble with the operational Global Forecast System (GFS) model for October 2009-September 2011 to study the Texas drought of 2011, and Funk and colleagues (2015) also use GFS to study the east African drought of 2012.

With both global and regional models, numerous papers have used very large ensembles of simulations generated on the climateprediction.net platform. For example, Bergaoui and colleagues (2015) looked at drought in the Southern Levant (approximately Israel) using the Hadley HadAM3P global model and counterfactual SSTs generated from 11 GCMs; Marthews and colleagues (2015) use the regional model HadRM3P to study drought in east Africa; Rupp and colleagues (2012) use the HadAM3P global model to study heat and drought over Texas.

Other studies using large ensembles do not use climateprediction.net, however. Seager and colleagues (2015) draw on simulations with observed SSTs to April 2014 made by 7 research groups, with a total of 150 GCM simulations. Their focus is more on diagnosing teleconnections to specific SST anomalies, however, than on attribution to human-induced climate change. Shiogama and colleagues (2013b) use a 100-member ensemble of MIROC5 to study drought in the south Amazon region.

As might be expected given the ambiguity of results concerning the trends in fraction of global area affected by drought (Hartmann et al., 2013), attribution studies do not always find strong influence of anthropogenic climate change. Some recent studies of the Colorado River anticipate dramatic impacts on river flows associated with changes in temperature (Vano et al., 2012, 2014). Meanwhile, no anthropogenic contribution was specifically identified in a recent study of eastern Brazil’s recent drought; rather, it was linked to a natural but unusual excursion of the South Atlantic Convergence Zone (Otto et al., 2015c). Several other studies (namely, Barlow and Hoell, 2015; McBride et al., 2015; Wilcox et al., 2015) found uncertain changes in likelihood and strength (see also Herring et al., 2015a, for summary tables). Shiogama and colleagues (2013a) note that their results were sensitive to bias correction.

While most attribution studies of drought focus on precipitation deficits, others have taken a more expansive approach. Funk and colleagues (2015) run a hydrologic model over eastern Africa and discuss changes in soil moisture and evapotranspiration, though they do not conduct attribution on those variables. Marthews and colleagues (2015), also studying east African drought, compute return periods for precipitation, specific humidity, and both shortwave and longwave radiative fluxes.

While drought is acknowledged to be a complex phenomenon due to the many physical processes involved and the broad range of societal factors that influence its occurrence and intensity, some aspects of drought are influenced by temperature in ways that are better understood, and thus more amenable to attribution, than others. In particular, temperature exacerbates hydrological drought in some regions by increasing surface evaporation, so that increasing temperature causes an increasing risk of hydrological drought even if precipitation does not change (e.g., Diffenbaugh et al., 2015; Williams et al., 2015).

On the Horizon

Because drought is caused by multiple factors at different scales and contexts, an area that needs further work is understanding the dominant factors that have historically been causes of drought in specific regions and watersheds. For example, for much of the United States, the drought of record is still the 1930s Dust Bowl era, which, in turn, might have been exceeded by droughts early in the last millennium (e.g., Herweijer et al., 2007). Though there are anthropogenic links to changes in atmospheric circulation patterns (and associated anomalies in precipitation and temperature) in different seasons of the year and in different regions of the globe, the multiple interacting causes of individual droughts are not well understood. It may be possible to disentangle some of these components of drought and perform attribution studies in this context. Other possible future efforts that remain largely unexplored include using a combination of large ensemble and full hydrologic model simulations for attribution and decomposing droughts into circulation components and thermodynamic components (as suggested by Trenberth et al., 2015). Another challenge in the attribution of drought relates to its linkage to climate variability (e.g., SST anomalies in different basins) on seasonal-to-decadal timescales. Given that understanding is lacking on how different climate modes change as a result of anthropogenic climate change, our ability to understand drought response related to changes in climate variability is limited. Because droughts (like many other extremes) can lead to shifts in water management and policy, water managers and policy makers alike often ask the attribution question, alongside more immediate questions like predicting the end of a current drought, and these demands are likely to continue.

The ongoing California drought has been the subject of a large and rapidly growing number of studies, often reaching apparently contradictory conclusions. For example, Cheng and colleagues (2016) distinguish between the response of shallow (<10 cm) and deep (>1 m) soil moisture and estimate little effect of anthropogenic warming on drought risk because of competing influences of rising precipitation and rising temperature. By contrast, Diffenbaugh and colleagues (2015) find that warming alone increases drought risk in California, using a modified drought severity index. It will be an important challenge for future workers to develop a systematic approach to synthesizing all of these different studies.

EXTREME RAINFALL

Event Type Definition

An extreme rainfall event is defined as one in which precipitation over some specified time period exceeds some threshold, either at a point (i.e., as measured by a single rain gauge) or in an average over some spatial region.

In practice, the definition of an extreme rainfall event varies widely. Time periods of interest can vary from hourly to monthly. The choice of threshold also is quite variable. Some studies use fixed absolute thresholds (e.g., 25.4 mm or 1 inch/day), while others use a fixed percentile based on the distribution at a given location in order to capture variations in what “extreme” means in practice in different regions. Some studies do not use thresholds at all. For example, some studies use annual or seasonal maxima (e.g., 24-hour precipitation accumulation). This approach also is used to develop the intensity duration frequency (IDF) curves for extreme precipitation that are used in engineering practice.
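The three styles of event definition described above (fixed absolute threshold, location-specific percentile, and block maxima) can be illustrated with a minimal sketch. The function names, the 95th-percentile default, and the 1 mm wet-day cutoff are assumptions chosen for illustration; they are not taken from any of the studies cited here.

```python
def exceedance_days(daily_mm, threshold_mm=25.4):
    """Count days whose total exceeds a fixed absolute threshold (25.4 mm = 1 inch)."""
    return sum(1 for p in daily_mm if p > threshold_mm)


def percentile_threshold(daily_mm, pct=95.0, wet_day_mm=1.0):
    """Location-specific threshold: the pct-th percentile of wet-day totals.

    The wet-day cutoff (1 mm) is an assumed convention, not one from the text.
    """
    wet = sorted(p for p in daily_mm if p >= wet_day_mm)
    if not wet:
        raise ValueError("no wet days in record")
    # Linear interpolation between neighbouring order statistics.
    rank = (pct / 100.0) * (len(wet) - 1)
    lo = int(rank)
    hi = min(lo + 1, len(wet) - 1)
    return wet[lo] + (rank - lo) * (wet[hi] - wet[lo])


def annual_maxima(records):
    """Block maxima: the largest daily total in each year.

    `records` is an iterable of (year, precipitation_mm) pairs.
    """
    best = {}
    for year, mm in records:
        best[year] = max(mm, best.get(year, mm))
    return dict(sorted(best.items()))
```

A percentile threshold adapts to local climatology, so the same code flags very different absolute amounts in a desert and in a monsoon region, whereas the fixed threshold does not.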

Extreme precipitation can typically be traced to forcing associated with strong vertical motion and significant water vapor (Westra et al., 2014). Extreme precipitation is associated with an array of meteorological processes, including tropical cyclones, extratropical cyclones, monsoons, atmospheric rivers, and localized convection (Kunkel et al., 2013).

Changes in extreme rainfall can be quantified using such empirically defined metrics as trends in the frequency with which some specified threshold is exceeded. Alternatively, statistical methods rooted in extreme value theory (Coles, 2001) can be used, allowing return levels for the most extreme events to be quantified (Kunkel et al., 2013).
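As a rough illustration of how return levels follow from an extreme value fit, the sketch below fits a Gumbel distribution to annual maxima by the method of moments. This is a deliberate simplification: the Gumbel distribution is the zero-shape special case of the generalized extreme value family, and published analyses generally estimate a shape parameter as well, often by maximum likelihood. The function names are hypothetical.

```python
import math
import statistics

EULER_GAMMA = 0.5772156649015329  # Euler-Mascheroni constant


def fit_gumbel_moments(annual_maxima):
    """Method-of-moments estimates of the Gumbel location (mu) and scale (beta)."""
    mean = statistics.mean(annual_maxima)
    std = statistics.stdev(annual_maxima)
    beta = std * math.sqrt(6.0) / math.pi   # variance = (pi * beta)^2 / 6
    mu = mean - EULER_GAMMA * beta          # mean = mu + gamma * beta
    return mu, beta


def return_level(mu, beta, return_period_years):
    """Value exceeded on average once every `return_period_years` years."""
    if return_period_years <= 1:
        raise ValueError("return period must exceed 1 year")
    p_non_exceed = 1.0 - 1.0 / return_period_years
    # Invert the Gumbel CDF F(x) = exp(-exp(-(x - mu) / beta)).
    return mu - beta * math.log(-math.log(p_non_exceed))
```

Fitting the same model to an early and a late portion of a record, and comparing the resulting return levels, is one simple way to express a change in extremes, though sampling uncertainty in short records is large.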

Attribution of regional precipitation extremes is more challenging than that of temperature extremes (Bhend and Whetton, 2013; van Oldenborgh et al., 2013). Numerical models, as a rule, do not simulate precipitation as well as they do temperature because of the smaller spatial and temporal scales of the precipitation field and the strong reliance on parameterizations of convection and other physical processes in all but the highest-resolution models. Kendon and colleagues (2014) argue that convection-permitting models on the order of 1.5 km horizontal resolution are necessary to resolve convective processes associated with certain types of events. The salient lesson is that caution is required when analyzing extreme rainfall in lower-resolution models.

Prior Knowledge and Overview of Attribution Studies

More intense and more frequent extreme precipitation events have long been projected in a warming climate (Hartmann et al., 2013; Hirsch and Archfield, 2015). An array of studies continues to provide strong support for upward trends in the intensity and frequency of extreme precipitation events (Kunkel et al., 2013; Seneviratne et al., 2012). Wuebbles and colleagues (2014) project that such trends will continue and that heavy precipitation in CMIP5 simulations may be underestimated relative to observed trends. Regarding the recent historical record, Hartmann and colleagues (2013) state: “It is likely that since about 1950 the number of heavy precipitation events over land has increased in more regions than it has decreased. Confidence is highest for North America and Europe where there have been likely increases in either the frequency or intensity of heavy precipitation with some seasonal and/or regional variation. It is very likely that there have been trends towards heavier precipitation events in central North America.” With respect to future projections, Kirtman and colleagues (2013) state: “The frequency and intensity of heavy precipitation events over land will likely increase on average in the near term. However, this trend will not be apparent in all regions because of natural variability and possible influences of anthropogenic aerosols.”

Global atmospheric water vapor concentrations are robustly expected to increase with temperature at a rate of around 6-7% per degree Celsius, approximately consistent with the saturation value as determined by the Clausius-Clapeyron relationship, because observed and projected changes in relative humidity are small (e.g., Held and Soden, 2006; Wright et al., 2010). Global mean rainfall values cannot increase at this rate because of global energy budget constraints (e.g., Held and Soden, 2006). Extreme rainfall events are not subject to these constraints, and a simple hypothesis is that the intensity of such events should increase at the rate that water vapor does (Allen and Ingram, 2002). This would be the case if the atmospheric circulation (including the strength of convective updrafts) were to remain constant in amplitude and structure. Dean and colleagues (2013) conclude that moisture availability was 1 to 5% higher for an extreme precipitation event in New Zealand because of anthropogenic greenhouse gases (GHGs). They also conclude that the number of synoptic events with ample moisture for extreme rain events increased. Integrated Water Vapor (IWV) Transport associated with Atmospheric Rivers (ARs) also has been shown to increase using CMIP-5 models under RCP8.5 (Warner et al., 2015). This led to increased mean and extreme winter precipitation along the West Coast of the United States.
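The quoted rate of roughly 6-7% per degree Celsius can be reproduced approximately from an empirical fit to the saturation vapor pressure curve. The sketch below uses the Magnus approximation with commonly quoted coefficients (6.1094 hPa, 17.625, 243.04 °C); these constants and the function names are assumptions introduced here for illustration and do not come from the studies cited above.

```python
import math


def saturation_vapor_pressure_hpa(temp_c):
    """Saturation vapor pressure over water (hPa), Magnus approximation."""
    return 6.1094 * math.exp(17.625 * temp_c / (temp_c + 243.04))


def cc_rate_percent_per_degree(temp_c):
    """Percent increase in saturation vapor pressure for 1 degree C of warming."""
    e0 = saturation_vapor_pressure_hpa(temp_c)
    e1 = saturation_vapor_pressure_hpa(temp_c + 1.0)
    return 100.0 * (e1 / e0 - 1.0)


def scaled_intensity(intensity_mm, warming_c, rate_percent=7.0):
    """Event intensity if it simply tracked water vapor at a fixed rate.

    This encodes the simple hypothesis in the text (unchanged circulation);
    the 7% default is an illustrative choice within the quoted 6-7% range.
    """
    return intensity_mm * (1.0 + rate_percent / 100.0) ** warming_c


for t in (0.0, 15.0, 25.0):
    print(f"{t:4.0f} C: {cc_rate_percent_per_degree(t):.1f}% per degree")
```

The rate declines slowly with temperature, which is one reason the scaling is usually quoted as a range rather than a single number.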

Thus, analysis of trends in extremes sometimes focuses on whether trends in either models or observations are less than, equal to, or greater than that expected from the Clausius-Clapeyron relationship (e.g., Lenderink and Van Meijgaard, 2008; O’Gorman and Schneider, 2009; Singleton and Toumi, 2013). This is useful in that it separates the relatively well-understood role of increasing specific humidity from the much less well-understood role of changes in updraft strength or vertical structure, focusing attention on possible physics behind the latter to the extent it is found to be important.

Consistent with this expectation, Kunkel and colleagues (2013) note that trends in the mean are less than those in the extreme values. Wuebbles and colleagues (2014) summarize key findings using the extreme precipitation index (EPI) and note an upward trend in both the intensity and the frequency of extreme precipitation events in the United States. A number of other studies have noted statistically significant increases in the frequency of occurrence or intensity of extreme precipitation events with durations ranging from hours to several days in various parts of the world (Donat et al., 2013; Krishnamurthy et al., 2009; Mann and Emanuel, 2006; Westra et al., 2013).

Westra and colleagues (2013), using land-based data, find that annual maxima of 1-day precipitation have increased significantly, with a central estimate of roughly 7% per 1-degree C temperature rise. Herring and colleagues (2014) cite a number closer to 5.3% per 1-degree C temperature rise, though within the uncertainty range of Westra and colleagues (2013). Janssen and colleagues (2014) update previous EPI-based studies and evaluate climate model simulations using Representative Concentration Pathways. Their results show increasing trends in extreme precipitation over the continental United States. Zhang and colleagues (2013) conclude that increases in Northern Hemisphere precipitation extremes since 1951 can be partially attributed to human influence on the climate, estimating a sensitivity of 5% per degree C in intensity. Their findings suggest that 1-in-20 year events in the 1950s are trending toward becoming 1-in-15 year events, which translates to a FAR of 25% and a RR of 1.33.
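The conversion from return periods to FAR and RR in the preceding paragraph can be checked with a short calculation. The sketch below assumes the standard definitions RR = p1/p0 and FAR = 1 - p0/p1, where p0 and p1 are the annual exceedance probabilities in the earlier and later climates; the function names are illustrative only.

```python
def risk_ratio(p_factual, p_counterfactual):
    """RR: how many times more likely the event is in the factual climate."""
    return p_factual / p_counterfactual


def fraction_attributable_risk(p_factual, p_counterfactual):
    """FAR = 1 - p0/p1, equivalently 1 - 1/RR."""
    return 1.0 - p_counterfactual / p_factual


# A 1-in-20 year event becoming a 1-in-15 year event.
p0 = 1.0 / 20.0   # annual exceedance probability, earlier climate
p1 = 1.0 / 15.0   # annual exceedance probability, later climate

print(f"RR  = {risk_ratio(p1, p0):.2f}")                   # RR  = 1.33
print(f"FAR = {fraction_attributable_risk(p1, p0):.2f}")   # FAR = 0.25
```

Because FAR = 1 - 1/RR, the two measures carry the same information; RR remains well defined when the event becomes less likely, whereas FAR then turns negative.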

Most approaches to attribution of regional precipitation extremes have utilized ensembles of global models, a specific model in conjunction with a long historical record, or non-parametric statistical analyses of observational climate datasets.

Hoerling and colleagues (2014), using the National Aeronautics and Space Administration’s (NASA’s) Goddard Earth Observing System Model, Version 5 (GEOS-5) simulations, conclude that the extreme 5-day rainfall in northeast Colorado in 2013 could not be conclusively linked to anthropogenic climate change. In fact, they argue that such events may have become less frequent in that region. By contrast, they note that Sillmann and colleagues (2013a,b) show increases in 5-day rainfall intensities for the globe and in the overall averages by the end of the 21st century. The strength of Hoerling and colleagues’ (2014) simulations lies in the 1-degree model simulations available over a significant period of the record (1871-2013), which allow for robust statistical analysis and characterization of the tails of the distribution. Model uncertainty itself is not addressed, however, nor is the dynamic mechanism for the simulated weakening of precipitation extremes in northeast Colorado identified or its robustness assessed.

Knutson and colleagues (2014) analyze seasonal precipitation extremes in the regions of the United States in 2013, using Global Historical Climatology Network data in combination with CMIP5 output to perform attribution to external forcing (natural and anthropogenic combined). They find a role for external forcing in some of the observed extremes and “some suggestion of increased risk attributable to anthropogenic forcing,” but they are not able to clearly distinguish anthropogenic from natural forcing because their study design did not separate these. Otto and colleagues (2015a) use very large ensembles of regional-scale models in a probabilistic event attribution study in the United Kingdom. Their results are somewhat conflicting in terms of whether anthropogenic forcing contributed to extreme summer precipitation events. They find that the risk of an extreme rainfall event doubled in July because of anthropogenic forcing but not in the other summer months. The authors suggest that the Clausius-Clapeyron relationship governs the July results but that unresolved dynamic processes are likely playing some role as well.

On the Horizon

Most of the attribution studies related to precipitation extremes have been conducted with a limited number of models or limited simulation samples. Larger multi-model ensembles would increase confidence. Heterogeneity issues in surface observations need to continue to be addressed also. Though convective parameterization continues to be a challenge of modeling studies addressing precipitation, increasing computer power and model spatial resolution should mitigate this limitation.

As the data record of satellite-based precipitation estimates lengthens, these estimates may become viable for trend detection and attribution studies for extreme precipitation. Satellite-based studies are emerging as particularly useful for assessing regional and global extremes, especially over the oceans and poorly instrumented regions (Lockhoff et al., 2014; Pombo et al., 2015). The Global Precipitation Measurement (GPM) mission and other capabilities will be beneficial in the coming years to decades (Hou et al., 2014).

As stated by Otto and colleagues (2015a), it will be critical that future studies better understand and resolve the multiple meteorological causes of heavy precipitation in order to better grasp causality and attribution. This statement will be relevant to any future attribution studies on extreme rainfall events.

EXTREME SNOW AND ICE STORMS

Event Type Definition

Severe winter weather includes snow and ice (freezing rain) storms, often accompanied by wind. While there are no universal criteria for defining extreme snow or ice storms, the National Weather Service typically issues heavy snow warnings for expected accumulations of 6 inches in 12 hours (or 8 inches in 24 hours) and ice storm warnings for expected ice accumulations of 0.25 inches or more. Impacts of a snow or an ice storm are compounded by wind as well as by the population of the area impacted by the storm. Region-specific impact indices have been developed: for example, the Northeast (U.S.) Snowfall Impact Scale (NESIS), which combines snowfall amounts and the number of people residing in the affected area. The absence of universal metrics for assessing heavy snow and ice events complicates the analysis of trends and attribution studies. In addition, snowfall measurements are known to suffer from heterogeneities, such as gauge undercatch, and data on snow depth are of limited value for determining the snowfall from a single storm, as compaction and drifting are common with winter snow events. Lack of in situ measurements hinders the analysis of extreme snow and ice events in sparsely populated areas.
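For concreteness, the typical warning criteria quoted above can be written as a simple threshold check. This is only a sketch of the numbers given in the text, treating each criterion as "at least" the stated amount; the function names are hypothetical and the code does not represent any operational National Weather Service procedure.

```python
HEAVY_SNOW_12H_IN = 6.0   # inches in 12 hours (from the text)
HEAVY_SNOW_24H_IN = 8.0   # inches in 24 hours (from the text)
ICE_STORM_IN = 0.25       # inches of ice accumulation (from the text)


def meets_heavy_snow_criterion(snow_12h_in, snow_24h_in):
    """True if expected snowfall reaches either typical heavy snow criterion."""
    return snow_12h_in >= HEAVY_SNOW_12H_IN or snow_24h_in >= HEAVY_SNOW_24H_IN


def meets_ice_storm_criterion(ice_in):
    """True if expected ice accumulation reaches the typical ice storm criterion."""
    return ice_in >= ICE_STORM_IN
```

Fixed criteria of this kind describe the hazard only; impact-based indices such as NESIS additionally weight snowfall by the population affected.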

Prior Knowledge and Overview of Attribution Studies

Overall snow cover has decreased in the Northern Hemisphere, due in part to higher temperatures that shorten the time snow is on the ground (Derksen and Brown, 2012). Few studies have addressed trends in heavy snow and ice events, however, especially over regional and larger spatial scales. For the entire Northern Hemisphere, the summary in the “Extratropical Cyclones” section of this chapter shows that there is mixed evidence for trends in the frequency and intensity of cold-season storms, regardless of whether they produce snow and/or freezing rain. Several studies of overall storm frequencies also indicate a northward shift in the primary tracks during winter (Seiler and Zwiers, 2015a,b; Wang et al., 2013). Theory suggests that for the coldest climates, the occurrence of extreme snowfalls should increase with warming due to increasing atmospheric water vapor, while for warmer climates it should decrease due to decreased frequency of subfreezing temperatures, though by less than mean snowfall decreases (O’Gorman, 2014).

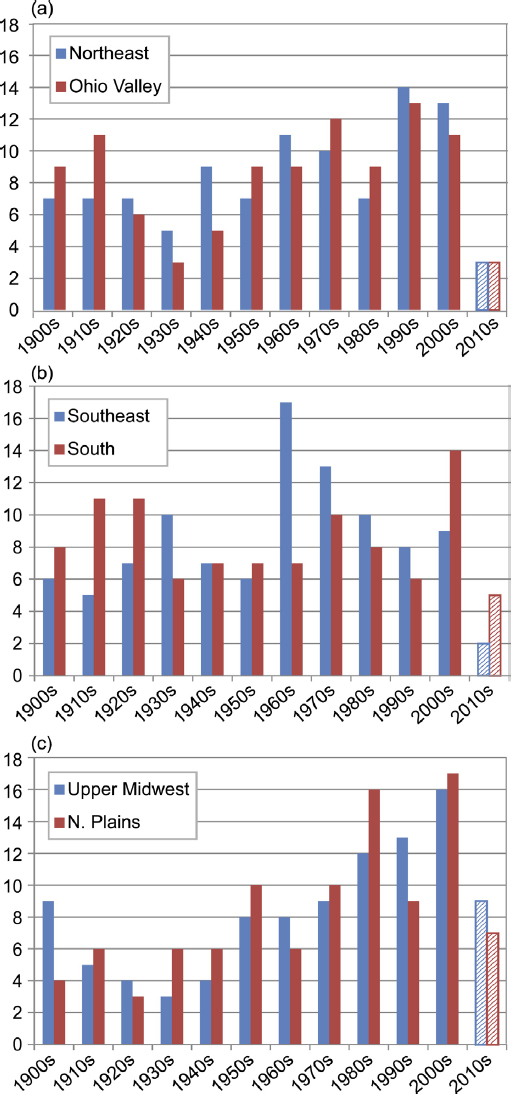

Over the century timescale, data from 1900 to the early 2000s show no significant trend in the percentage of the United States experiencing seasonal snowfall totals in the upper (or lower) 10 percentiles defined from the record as a whole (Kunkel et al., 2009). But when the top 100 snowstorms (defined on the basis of snowfall amount and areal coverage) are evaluated for various regions of the United States, there are substantial increases in the frequencies of occurrence from 1901-1960 to 1961-2013 in the northern regions (Northern Plains, Upper Midwest, Ohio Valley, and Northeast) but not in the southern regions of the United States (Figure 4.4).

To the committee’s knowledge, recent analyses of the frequencies of ice storms in the United States are lacking. Earlier studies of the number of freezing rain days (regardless of amount or intensity) showed no evidence of systematic trends in freezing rain occurrences over the United States during the latter half of the 20th century (Changnon and Karl, 2003; Houston and Changnon, 2006). There are indications of increases in ice storms in the North Atlantic subarctic (Hansen et al., 2014a), however.

In view of the data limitations and the ambiguities in event definition, it is not surprising that there have been few attribution studies of global or regional trends in observations of extreme snow and ice events. Yet, there have been several attribution studies of particular events, conditioned on initial conditions in the atmosphere. Edwards and colleagues (2014) simulate the western South Dakota blizzard of October 2013, finding no difference in accumulated snowfall (snow water equivalent) between preindustrial counterfactual runs and modern-day simulations. Anel and colleagues (2014) use an ensemble of model simulations of recent winters to conclude that heavier-than-normal snowfall seasons in the Spanish Pyrenees are not directly attributable to anthropogenic forcing. Wang and colleagues (2015b) show that Himalayan blizzards such as the October 2014 event have an increased likelihood of occurrence when tropical cyclones from the Bay of Bengal interact with stronger extratropical systems, and they infer an “increased possibility” of such circumstances in the future. In an earlier study conditioned on SSTs, Barsugli and colleagues (1999) find that the major ice storm of 1998 in the northeastern United States and eastern Canada was simulated more accurately when observed El Niño ocean temperature anomalies in the tropical Pacific were prescribed. With the possible exception of a tropical cyclone connection in the study by Wang and colleagues (2015b), none of the event attribution studies point to anthropogenic climate change as a major factor in the heavy snow events. The sample of case studies of extreme snow events examined to date, however, is too small to rule out possible anthropogenic warming effects. While trends in freezing rain events in the northern middle latitudes are prime candidates for effects of anthropogenic warming (Cheng et al., 2011; Klima and Morgan, 2015), systematic analyses of observed trends in freezing rain events have yet to be performed.

On the Horizon

Attribution of extreme snow and ice events shares a challenge with some other extreme event types: the events are strongly governed by the atmospheric circulation, for which externally forced changes are uncertain. For this reason, attribution of extreme snow and ice storm events may benefit from an emphasis on the thermodynamic state during particular events, as argued by Trenberth and colleagues (2015). Conditional attribution studies of snow and ice storms have lagged behind similar studies for other event types.

The databases underlying assessments of heavy snow and icing events have major deficiencies that hinder trend detection as well as attribution studies. It is likely that events are missed and/or their severity is underestimated. The construction of databases suitable for attribution studies merits consideration and action in the observing community.

Finally, recent cold winters and heavy snow events in the northern United States have raised public awareness of this type of event. The number of high-impact events in the northeastern United States, as measured by the population-weighted NESIS index, increased abruptly in the 2006-2015 period. This apparent abrupt increase, as well as the need to distinguish changes in drivers from changes in impacts, makes clarification of the role of anthropogenic climate change in snowstorms affecting the northern United States a high priority.

TROPICAL CYCLONES

Event Type Definition

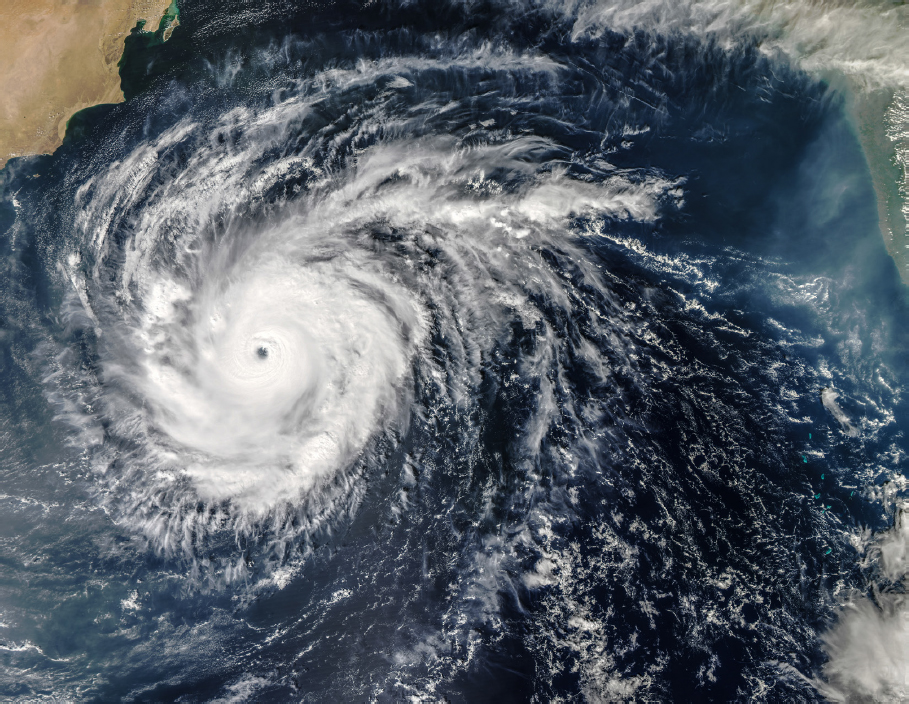

The National Oceanic and Atmospheric Administration (NOAA) defines a tropical cyclone as “a warm-core non-frontal synoptic-scale cyclone, originating over tropical or subtropical waters, with organized deep convection and a closed surface wind circulation about a well-defined center.” In each region of the globe that is prone to tropical cyclones, a Regional Specialized Meteorological Center, under the World Meteorological Organization (WMO), determines when a given system is a tropical cyclone and determines its intensity from available observations.

The intensity of a tropical cyclone is conventionally understood to indicate its maximum sustained wind. This is only a loose guide to the potential severity of a given storm’s impacts, however, as hazards associated with cyclones include both coastal and freshwater flooding as well as winds. A specific tropical cyclone event also might be defined for attribution purposes by storm surge, precipitation, storm size, economic damage, or other variables. For some of these quantities, observations are inadequate.

Maximum sustained wind speed itself is determined largely from satellite images, with in situ observations used where available. Uncertainties are significant (e.g., Knaff et al., 2010; Landsea and Franklin, 2013; Velden et al., 2006; see Figure 4.5) and may be greater for other variables, such as storm surge in regions where automated tide gauges are not available.

Even with good observations, the severity of an event may differ greatly depending on the variable considered. A storm may have weak winds, for example, but still cause a major disaster due to precipitation, storm surge, or high vulnerability. Similarly, attribution studies may reach different conclusions depending on which variable is considered, without necessarily implying any contradiction.

To the committee’s knowledge, purely observation-based methods have not been used to perform event attribution studies on tropical cyclones. Methods that rely on extreme value theory (e.g., van Oldenborgh et al., 2015) are not practical for tropical cyclones. These methods rely on the existence of a continuous time series for the variable of interest, while tropical cyclones are rare events that do not provide such time series. Many studies (as discussed below) look for trends in tropical cyclone statistics, but for the most part these have been inconclusive even on regional or global scales.

Prior Knowledge and Overview of Attribution Studies

Many studies have examined whether long-term trends exist in tropical cyclone statistics. Assessment of these trends is difficult due to the shortness of observational records in many basins; large natural variability, including at low frequencies, which may obscure any longer-term trends; and changes in observing systems and practices over time, which introduce heterogeneities into the observations even in those basins that do have relatively long-term records. Synthesis studies, using specified thresholds of statistical significance against a null hypothesis of zero trend, typically find that long-term trends cannot be clearly detected in tropical cyclone numbers, intensities, or integrated measures of activity (e.g., IPCC, 2014; Knutson et al., 2010; Walsh et al., 2015). An exception may be the frequency of the most intense storms.

Some studies find marginally significant increases in the frequency of category 4 and 5 storms (e.g., Elsner et al., 2008; Emanuel, 2006; Kossin et al., 2013), while others find yet greater significance by, for example, detecting a temporal pattern of increase that more closely matches estimates of GHG-driven change rather than a pure linear trend (Holland and Bruyere, 2014). In some regions, there are clear trends in recent decades; the Atlantic, where data are of highest quality, stands out (e.g., Emanuel, 2006). The attribution of these trends to specific causes remains debated, however, with some attributing them to natural variability and others to reductions in anthropogenic aerosol forcing (Mann and Emanuel, 2006). Kossin and colleagues (2014) find a robust increase—both in the global and hemispheric means and in most individual basins—in the average latitude at which storms reach their maximum intensities.

Little model-based research addresses the question of whether an anthropogenic influence is already present in long-term tropical cyclone statistics. There is, however, a large literature that addresses how tropical cyclones may change in future climates. Some of these studies use the same global climate models as used for overall climate change assessment (e.g., Camargo, 2013), but these are generally viewed as inadequate because their spatial resolutions are too low to produce good simulations of tropical cyclones. The field has advanced greatly in recent years due to the existence of higher-resolution global atmospheric models (e.g., Yoshimura and Sugi, 2005; Zhao et al., 2009) as well as innovative downscaling techniques that combine higher-resolution regional or idealized models of tropical cyclones with global models of climate change (Emanuel, 2006), or statistical refinement techniques to address the limitations on cyclone intensity posed by limited resolution (Zhao and Held, 2010).

Based in large part on these new models, broad consensus has emerged as to the expected future trends and their levels of certainty (e.g., IPCC, 2013; Knutson et al., 2010; Walsh et al., 2015). Tropical cyclones are projected to become more intense as the climate warms. There is considerable confidence in this conclusion, as it is found in a wide range of numerical models and also justified by theoretical understanding, particularly because there is a well-established body of theory for the maximum potential intensity of tropical cyclones (e.g., Bryan and Rotunno, 2009; Emanuel, 1986, 1988; Holland, 1997). The rate of intensification per degree of global mean surface warming remains quantitatively uncertain; however, because maximum potential intensities are projected to rise (e.g., Camargo, 2013), future observations of tropical cyclones with intensities significantly higher than those observed in the past would be consistent with expectations in a warming climate, and attribution studies for such storms would have a firm basis in physical understanding.

The global frequency of tropical cyclone formation is projected to decrease (Camargo et al., 2014; Knutson et al., 2008, 2010; Seneviratne et al., 2012; Walsh et al., 2015), but there is less confidence in this conclusion than in the increase in intensity; some credible models produce increases in frequency (Emanuel, 2013). The uncertainty is still greater in projections of tropical cyclone frequency in individual basins. Changes in the frequency of the most intense storms are related to changes in both the frequency of all storms and the average storm intensity. Thus, they are less certain than the intensity changes alone because reduced frequency and increased intensity have opposing effects; Christensen and colleagues (2013) state that the frequency of the most intense storms “will more likely than not increase substantially in some basins under projected 21st century warming.” Precipitation in tropical cyclones is expected to increase because of the increased water vapor content of the atmosphere, similarly to other extreme precipitation events; Christensen and colleagues (2013) express medium confidence in this projection. While there are only a few projections of changes in storm surge itself, total coastal flood depths, relative to fixed elevations, are confidently projected to increase as a consequence of sea level rise (e.g., Hoffman et al., 2010; Woodruff et al., 2013). Coastal flood risk due to storm surge is projected to increase due to both sea level rise and tropical cyclone intensity change, though the influence of the latter is more model-dependent (e.g., Emanuel, 2008; Lin et al., 2012).

To the committee’s knowledge, attribution studies of single tropical cyclones using large ensemble simulations (without conditioning on event occurrence), for example, as needed to calculate a FAR, have not been performed. Murakami and colleagues (2015), however, executed a study of this kind with a global high-resolution model to perform attribution on a single tropical cyclone season as a whole.

The highly conditioned method has been used in a few recent studies of individual tropical cyclones. Trenberth and Fasullo (2007) and Wang and colleagues (2015a) estimate the role of climate change in the rainfall produced by pairs of individual storms in the United States and Taiwan. Lackmann (2015) simulates Hurricane Sandy (2012) in a high-resolution regional model nested into large-scale climate fields obtained from coupled simulations representing conditions in 1900, 2012, and 2100. Irish and colleagues (2014) consider the influence of anthropogenic climate change on the flooding due to Hurricane Katrina in 2005, including an estimate of the potential anthropogenic influence on the hurricane’s intensity as well as the role of sea level rise in increasing the total water depth relative to a fixed benchmark. All of these studies find modest increases in their respective measures of event intensity due to warming. The highly conditioned approach may be particularly attractive for tropical cyclone studies because large-ensemble approaches have not yet been practical, while a range of tools exists for modeling individual storms and their impacts.

On the Horizon

Though not practical in the past, large-ensemble attribution studies of individual tropical cyclones are becoming technically possible. High-resolution global models now exist that simulate tropical cyclones reasonably well (e.g., Shaevitz et al., 2014) and could be used for this purpose; the challenge is the high computational cost per simulation year as well as the large number of years required for statistical significance. Downscaling methods, whether statistical, dynamic, or hybrid (e.g., Emanuel, 2006), can be much less computationally expensive and could be used today for such studies (e.g., Takayabu et al., 2015). These methods typically require specified SST and so would be conditional on a given SST scenario as well as GHG increases. In addition to these two conditions and to model quality requirements, the lack of consensus on the significance of observed trends in tropical cyclone statistics would pose a challenge to the interpretation of such studies for tropical cyclones. Because one of the difficulties in trend detection studies is the sample size in the presence of large low-frequency natural variability, however, model-based attribution studies would have an advantage to the extent that they could generate larger sample sizes than those available from observations.

EXTRATROPICAL CYCLONES

Event Type Definition

The term “extratropical cyclone” refers to the migratory frontal cyclones of middle and high latitudes, which are embedded within the large-scale westerly flow and thus move from west to east. There is no unique operational definition for the term, though a number of features are commonly agreed to be important. Extratropical cyclones derive their energy from the horizontal temperature contrasts in the extratropical atmosphere, through the process of baroclinic instability, and often contain fronts, though they also may be strengthened by latent heat release. Extratropical cyclones likewise can arise as tropical cyclones lose their axisymmetry and other tropical features in the process of extratropical transition. Studies generally define extratropical cyclone intensities either by minimum surface pressure (converted to sea level) or by maximum lower-tropospheric vorticity.
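The two intensity measures named above, minimum sea-level pressure and maximum lower-tropospheric vorticity, can be illustrated with a minimal sketch. It assumes wind and pressure fields on a uniform Cartesian grid (real analyses work on a sphere and usually filter the fields first), and the function names, grid spacing, and synthetic vortex are hypothetical choices made only for illustration. Positive vorticity is treated as cyclonic, which holds for the Northern Hemisphere.

```python
import numpy as np

def relative_vorticity(u, v, dx, dy):
    """Relative vorticity dv/dx - du/dy on a uniform Cartesian grid.

    u, v   : 2-D wind components (m/s), indexed [y, x]
    dx, dy : grid spacing in metres (assumed uniform)
    """
    dvdx = np.gradient(v, dx, axis=1)
    dudy = np.gradient(u, dy, axis=0)
    return dvdx - dudy

def cyclone_intensity(mslp, u, v, dx, dy):
    """Return both intensity measures for one analysis time."""
    zeta = relative_vorticity(u, v, dx, dy)
    return {"min_mslp": float(mslp.min()), "max_vorticity": float(zeta.max())}

# Synthetic example (hypothetical values): solid-body rotation plus a
# Gaussian pressure low centred on the grid.
spacing = 100e3                                  # 100 km grid, an assumption
y, x = np.mgrid[-5:6, -5:6] * spacing            # coordinates in metres
omega = 5e-5                                     # rotation rate in 1/s
u, v = -omega * y, omega * x                     # vorticity is 2 * omega everywhere
mslp = 1010.0 - 40.0 * np.exp(-(x**2 + y**2) / (2 * (300e3) ** 2))  # hPa

intensity = cyclone_intensity(mslp, u, v, spacing, spacing)
print(intensity)
```

The two measures need not rank storms identically: minimum pressure is sensitive to the background pressure field, whereas vorticity emphasizes smaller scales and therefore depends more strongly on grid resolution.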

The impacts of extratropical cyclones are generally felt through frontal precipitation, storm surges, or windstorms; the latter are often concentrated in so-called sting jets embedded within the synoptic system. Storm surges warrant special treatment because they also depend on tidal variations and on sea-level rise, not just on the storm itself.

Prior Knowledge and Overview of Attribution Studies

Statistics of observed events exhibit pronounced multidecadal variability, often linked with large-scale circulation patterns such as the North Atlantic Oscillation (NAO). Although trends are sometimes reported in the literature, they are highly sensitive to the period chosen and to how the storms are defined. Assessments of historical centennial timescale changes have to be based largely on reanalyses, which may contain long-term heterogeneities (Krueger et al., 2013). As a result, there is no consensus on attributed trends in observations, at least in the Northern Hemisphere. A recent comprehensive review for the North Atlantic and northwest Europe is provided by Feser and colleagues (2015a), and for the U.S. East Coast by Colle and colleagues (2015).

The expected effect of human-induced climate change on extratropical cyclones is unclear because there are competing factors: The reduction in pole-to-equator temperature gradient expected from polar amplification would tend to weaken cyclones, but the increase in moisture would tend to strengthen them, as would the increase in upper tropospheric temperature gradient (O’Gorman, 2010). Although the IPCC Fourth Assessment Report concluded that cyclones would be expected to strengthen, this was based on a study (Lambert and Fyfe, 2006) that used minimum surface pressure as the index; the overall expected decrease in surface pressure at higher latitudes thus induced a trend which was not actually related to cyclone intensity. In the IPCC Fifth Assessment Report, future projections of extratropical cyclones were found to be uncertain (Christensen et al., 2013).

Moreover, the storm track positions could change location in the future. Zappa and colleagues (2013b) find an overall intensification of the wintertime storm track over northern Europe in the CMIP5 models and a weakening of the Mediterranean storm track, but this projection remains uncertain because the relevant physical processes are not yet understood. Seiler and Zwiers (2015a,b) find that explosive cyclones (“rapidly intensifying low pressure systems with severe wind speeds and heavy precipitation”) tend to shift poleward in the Northern Hemisphere, decrease in frequency due to weakening baroclinicity, and increase slightly in intensity. Hoskins and Woollings (2015) discuss the various physical mechanisms that have been proposed for driving anthropogenic circulation changes at midlatitudes and their link to weather extremes, and they conclude that there is substantial uncertainty concerning what can be expected in the future.

Human influence appears to be stronger in the Southern Hemisphere, where it has been exerted through stratospheric ozone depletion. Model-based attribution studies have found an ozone depletion influence on Southern Hemispheric extratropical cyclones and associated extreme precipitation, evident most clearly in a poleward shift in the storm track (Grise et al., 2014; Kang et al., 2013).

Yang and colleagues (2015) use a seasonal prediction system to assess the drivers of the extreme storminess over the central United States and Canada in winter 2013/2014; they find no evidence of a human influence, but they do find a FAR in the range of 33-75% due to the multiyear anomalous tropical Pacific winds.
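For readers who want to relate a FAR range such as 33-75% to event probabilities, the standard definition FAR = 1 − P0/P1 can be sketched as follows, where P1 is the probability of exceeding the event threshold with the factor of interest present and P0 the probability without it. In the study above, the factor is the anomalous tropical Pacific wind forcing, not anthropogenic forcing. The function names and the probabilities used here are hypothetical and are not taken from Yang and colleagues (2015).

```python
import numpy as np

def exceedance_probability(samples, threshold):
    """Fraction of ensemble members at or above the event threshold."""
    samples = np.asarray(samples, dtype=float)
    return float(np.mean(samples >= threshold))

def fraction_attributable_risk(p0, p1):
    """FAR = 1 - p0 / p1.

    p0 : exceedance probability without the factor (counterfactual)
    p1 : exceedance probability with the factor (factual)
    """
    if p1 <= 0:
        raise ValueError("p1 must be positive for FAR to be defined")
    return 1.0 - p0 / p1

# Hypothetical probabilities chosen only to show the arithmetic.
far_low = fraction_attributable_risk(p0=0.02, p1=0.03)   # risk ratio 1.5
far_high = fraction_attributable_risk(p0=0.01, p1=0.04)  # risk ratio 4
print(f"FAR = {far_low:.2f} to {far_high:.2f}")
```

Read this way, a FAR of 33% corresponds to the factor making the event about 1.5 times as likely, and a FAR of 75% to about 4 times as likely.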

Marciano and colleagues (2015) run a weather model to simulate observed individual wintertime extratropical cyclone events along the U.S. East Coast in present-day and projected future thermodynamic environments. They find increases in precipitation, cyclone intensity, and low-level jet strength resulting from the increased latent heating. This was for the future, however; there was no assessment of the human influence so far. For storm surges, the contribution from sea-level rise has been estimated under the highly conditioned assumption of no change in storminess; Lopeman and colleagues (2015) perform such a study for Hurricane Sandy (in 2012; technically an extratropical cyclone at landfall), while Colle and colleagues (2015) discuss longer-term changes in New York City. In both cases, the anthropogenic contribution to past storm surges was estimated to be small but predicted to become a substantial factor (in terms of decreases in return periods) over the course of this century.
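The logic of the highly conditioned storm-surge calculation, in which storminess is held fixed and only mean sea level changes, can be sketched as below. This is an illustration only: the Gumbel distribution for annual-maximum surge, its parameters, and the sea-level-rise values are all assumptions made here and are not taken from Lopeman and colleagues (2015) or Colle and colleagues (2015).

```python
import math

def gumbel_cdf(x, mu, beta):
    """P(annual-maximum surge <= x) under an assumed Gumbel distribution."""
    return math.exp(-math.exp(-(x - mu) / beta))

def return_period(level, mu, beta, sea_level_rise=0.0):
    """Return period (years) of a total water level above a fixed datum.

    Storminess is held fixed: sea-level rise only reduces the surge
    height needed to reach the same total water level.
    """
    p_exceed = 1.0 - gumbel_cdf(level - sea_level_rise, mu, beta)
    return 1.0 / p_exceed

MU, BETA = 1.0, 0.3  # hypothetical Gumbel location and scale, in metres

# Water level with a 100-year return period when sea-level rise is zero.
level_100yr = MU - BETA * math.log(-math.log(0.99))

for slr in (0.0, 0.2, 0.5):  # hypothetical sea-level rise in metres
    years = return_period(level_100yr, MU, BETA, slr)
    print(f"sea-level rise {slr:.1f} m: return period {years:.0f} years")
```

Because the exceedance probability in the tail changes roughly exponentially with the threshold, a modest rise in mean sea level can shorten the return period of a given water level considerably even with no change in the storms themselves.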

Because extratropical cyclones are defined as discrete events rather than extreme values of continuous time series, observation-based methods for attribution using extreme value theory may not apply as straightforwardly to extratropical cyclones as to some other event types. Nevertheless, both van Oldenborgh and colleagues (2015) and Wild and colleagues (2015) use observational analysis to challenge the suggestion (e.g., Huntingford et al., 2014) that the intense storminess over the United Kingdom in winter 2013/2014 was driven by anomalously warm Pacific SSTs, which might have an anthropogenic component.

On the Horizon

Trzeciak and colleagues (2014) suggest that although current global climate models generally underrepresent the intensity of extratropical cyclones due to insufficient latent heat release, once the horizontal resolution is finer than about 100 km they should be adequate, and that the systematic biases will then mainly involve storm track location. Seiler and Zwiers (2015a) found that resolution is not correlated with explosive storm intensity across the CMIP5 ensemble, but they note that competing effects of vertical resolution and model physics inhibit strong interpretation of that result. Horizontal resolution has been found to be important in sensitivity studies with single models (e.g., Jung et al., 2006), and idealized simulations of extratropical cyclones have been shown to be limited by resolution and dissipation at typical climate model resolutions (Polvani et al., 2004). Thus, it may still be the case that resolution is a factor limiting analyses of storm intensity, and that improvements in resolution will be beneficial to future attribution studies. Zappa and colleagues (2013a) showed that the location biases (features simulated with some fidelity but occurring in the wrong location) in CMIP5 models are generally very severe in the North Atlantic. As a result, typically the model biases in storm count at specific locations are several times larger than the change expected under RCP8.5 at the end of the century. Experience with medium-range and seasonal prediction systems has shown that these biases tend to be alleviated with higher spatial resolution, however. Thus, it is currently feasible to run global models with a reasonable representation of extratropical cyclones. The main issue for event attribution, then, is to assess whether simulated anthropogenic changes in the large-scale circulation that affect the storm tracks are credible. Without a robust physical understanding of the processes controlling such changes, or a clear signature in observations, this will be a challenge (Hoskins and Woollings, 2015).

Any attribution of the impacts of extratropical cyclones—frontal precipitation, storm surges, or windstorms—would likely have to downscale the synoptic situation in some credible manner, which for the foreseeable future will require a highly conditioned framework.

WILDFIRES

Event Type Definition

Although wildfires are not meteorological events, their likelihood and extent can be influenced by climatic factors. Wildfires are often large and rapidly spreading fires affecting forests, shrub areas, and/or grasslands. Wildfires occur in many areas of the world, especially those with extensive forests and grasslands (Romero-Lankao et al., 2014). While most wildfires are started by lightning, a substantial number are started by humans, especially near populated areas. The most common metric of wildfires is the area burned, either by a single wildfire or by all wildfires during a fire season in a particular region.

Attribution of wildfire trends and extreme events is complicated by (1) the role of humans in ignitions, fire suppression, and management of forests and other biomes (Gauthier et al., 2015; Lin et al., 2014); (2) the importance of lightning, hence small-scale thunderstorms, in igniting large fire outbreaks; (3) the importance of larger-scale weather in the wildfire spread and growth into major events (specifically, winds and humidity for fire spread, and rain for extinguishing a fire outbreak; Abatzoglou and Kolden, 2011); and (4) the health of the forest (e.g., a white pine bark beetle infestation). Thus, attribution studies need to consider three time/space scales: (1) individual large fires, which are controlled primarily by short-term weather patterns; (2) regional-scale within-season extreme fire periods, which are driven by seasonal weather patterns; and (3) large fire seasons, which are regional-scale events resulting from climate teleconnections associated with persistent blocking ridges that cause extended fire seasons (with delayed season-ending rains). Preseason preconditioning of soils and vegetation can play a role on all three timescales.

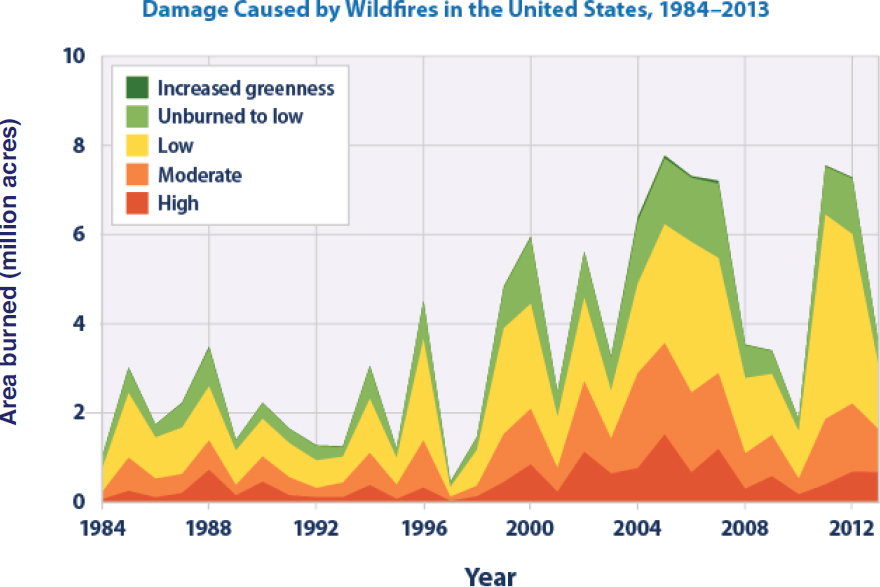

Prior Knowledge and Overview of Attribution Studies

Analysis of wildfire trends and extremes is limited by the availability of consistent data records. For example, fire surveillance methods have improved in recent decades; the area actually burned by a fire can be less than the area within the fire perimeter; and some metrics of fire activity include only large fires. There has been an overall increase in the area burned in the United States over the past several decades (Figure 4.6). The increase is especially apparent in the West. Trends are less apparent in Canada, where the area burned by large fires increased from the 1960s to the 1980s and 1990s, after which there has not been an increase (Krezek-Hanes et al., 2011). Globally, however, fire weather season lengths showed significant increases during 1979-2013 across more than 25% of the Earth’s vegetated surface, resulting in a 19% increase in the global mean fire weather season length (Jolly et al., 2015).

Periods of unstable atmospheric conditions result in high winds, rapid fire growth, extreme fire behavior, and convective storms that provide lightning for ignitions. Because climate models do not explicitly include lightning (or explicit formulations of convective storms), atmospheric stability and rain rate have been used to construct indices of lightning activity derived from model output. In an application of this approach to the output of a set of global climate models, Romps and colleagues (2014) project an increase in lightning strikes over the contiguous United States by 12% (±5%) per °C of global warming, or about 50% over this century.
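The step from a per-degree rate to a century-scale change can be made explicit with a small calculation. The sketch below assumes roughly 4 °C of warming over the century; that figure is an assumption introduced here to show how the two quoted numbers relate, and it is not stated in the text above. Both a linear and a compounded reading of "per °C" are shown, since the text does not specify which applies.

```python
def lightning_change_linear(rate_pct_per_degc, warming_degc):
    """Percent change if the per-degree rate simply adds up."""
    return rate_pct_per_degc * warming_degc

def lightning_change_compound(rate_pct_per_degc, warming_degc):
    """Percent change if the per-degree rate compounds with each degree."""
    return ((1.0 + rate_pct_per_degc / 100.0) ** warming_degc - 1.0) * 100.0

ASSUMED_WARMING_DEGC = 4.0  # illustrative assumption, not from the text

# Central estimate and the two ends of the quoted uncertainty range.
for rate in (7.0, 12.0, 17.0):
    linear = lightning_change_linear(rate, ASSUMED_WARMING_DEGC)
    compound = lightning_change_compound(rate, ASSUMED_WARMING_DEGC)
    print(f"{rate:.0f}% per degC: linear {linear:.0f}%, compound {compound:.0f}%")
```

Under this assumed warming, the central rate of 12% per °C gives a change close to the quoted figure of about 50%, while the uncertainty in the rate translates into a wide range for the century-scale change.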

Wildfires are closely associated with heat and drought, so some of the attribution issues pertaining to extreme wildfires and their likelihoods are covered in the preceding subsections on heat and drought. One of the earliest attribution studies showed that the increase of wildfire burn areas in Canada during 1959-1999 was consistent with anthropogenic summer warming (Gillett et al., 2004).

In addition to the controls by climate and weather (highlighted above), the availability of fuels and hence the state of the vegetation affects individual fires, as well as overall fire season severity. Attribution studies have generally used climate model output in conjunction with vegetation models or with metrics of fire risk derived from model-simulated precipitation and temperature. An example of the latter is a recent study by Yoon and colleagues (2015), who use ensembles of historical and future RCP8.5 simulations by CESM to show that an increase in fire risk in California is attributable to climate change. Beginning in the 1990s, the latter part of the historical simulation, a clear separation emerges between fire risks driven by only natural variability (the counterfactual climate, a long preindustrial simulation) and those driven by anthropogenic climate forcing (Yoon et al., 2015; see Figure 2.2). This result is consistent with the occurrence since 2010 of several of the most severe fire years on record in California.

Similar model-derived results have been obtained for the broader western United States (Luo et al., 2013; Yue et al., 2013), for Alaska (Mann et al., 2012), and for Canada (Flannigan et al., 2015). In the last of these studies, each degree of warming was found to require a precipitation increase of 15% to offset the temperature-driven decrease of the moisture content of fine surface fuels.

On the Horizon