3

Advancing the Hardware Foundation


While it is software that comes to mind most readily when we think about using computers (the interfaces we interact with, the applications we use to perform tasks), hardware is the foundation that makes all computing and communications technology possible. Behind Instagram is a camera; behind your favorite mapping app is a Global Positioning System (GPS) satellite; powering your laptop is a lithium-ion battery; central to your smartphone is its liquid crystal display (LCD) touchscreen. As this handful of examples makes clear, a tremendous number of component technologies make up the devices we depend on every day. In the vast majority of cases, these technologies are rooted in pioneering research conducted or funded by the federal government, which later fed into other research and commercial endeavors.

Current trends suggest a future populated with ever more computing and communications technologies, from self-driving cars to gadgets that are worn on or even embedded inside the body. As we move toward this future, we will continue to depend on fundamental research to overcome the limitations of current technologies and usher in new hardware and computer architectures. This chapter summarizes presentations by Margaret Martonosi, on the evolution of computer architectures that balance capabilities and speed against the limitations of energy and heat, and Thad Starner, on the history and development of wearable computers.

DEVELOPING DISRUPTIVE ARCHITECTURES

As our computers and communication tools evolve, each new device must strike the right balance among capabilities, speed, power, and thermal constraints so that it can deliver greater functionality without overheating or draining its battery too quickly. Exchange between researchers and industry has been crucial to the development of new, disruptive architectures that can overcome previous constraints and enable radically new products.

Margaret Martonosi, a professor of computer science at Princeton University, is known for her work on computer architecture. Coined in a 1964 IBM paper,1 the term computer architecture refers to the field of computer design concerned with balancing competing factors such as computing performance, power needs, cost, and reliability. In particular, Martonosi’s research has focused on power efficiency.

Martonosi described computer architecture as a mediator between computer technology—the technical challenges of building computers—and computing applications—what you can do with those computers once they are built. Today’s technology landscape brings challenges and opportunities in both realms. “Since it’s a very dynamic time for both the application side and the technology side, that makes it a particularly interesting time to be a computer architect,” said Martonosi.

Hitting Inevitable Limits

Current computing applications are dramatically widening the scope of what computers can do. Today’s computers work with large volumes of data distributed across many kinds of devices and are much more communication-intensive than computers of the past. At the same time, new applications and functionalities demand more performance for computation, storage, and communication. For many years, improvements in architecture and technology enabled computers to get smaller and faster without increasing power usage. Unfortunately, although engineers are still making components smaller, they are having difficulty increasing speed or reducing power use: computers are running up against inevitable speed and power limits.

___________________

1 G.M. Amdahl, G.A. Blaauw, and F.P. Brooks, Jr., 1964, Architecture of the IBM System/360, IBM Journal of Research and Development 8(2):87-101.

The transition toward the Internet of Everything raises the stakes on overcoming these challenges. For example, biomedical researchers are exploring multiple promising opportunities to improve people’s health and save lives by embedding sophisticated, computer-enabled medical devices inside the body. But for these devices to become widely applicable, they need computers that neither burn quickly through their batteries nor throw off heat that could harm the patients they are intended to help. Current technologies are not sufficient to realize all of the important computing applications envisioned for the future.

A History of Innovation from the Niche to the Mainstream

Martonosi described the evolution of research and technologies that are reflected in today’s computer architectures. Much of this work, for example, has been informed by DARPA-funded supercomputing research conducted from the 1970s through the 1990s. Although those investments were targeted at niche areas of computer science, experience has shown that sustained government-funded research ultimately trickles into the mainstream; the results of DARPA’s supercomputer research investments are central to the architecture of the computers and smartphones we use today. Martonosi noted, “It’s a real success story of research that was done, viewed as niche, that later we had to pull it out of our pockets and use it in a much more mainstream way.”

Energy use, Martonosi’s focal area, is not a new concern in computer architecture; in fact, power has been a consideration since the very first computers. The developers of ENIAC, the first general-purpose electronic computer, built by John Mauchly and J. Presper Eckert in the mid-1940s, paid careful attention to its power use, which was about 150-175 kilowatts.2 Ever since those early days, whenever a computer design hit its power limit, there was a new technology to switch to: first relays, then vacuum tubes, then bipolar transistors, and then metal-oxide-semiconductor (MOS) transistors. Today, Martonosi said, computer architects are once again facing power limits, but this time there is no ready new technology to switch to that would let computers keep increasing their performance without hitting thermal constraints.

Computer architecture research in the 1990s led to several important developments that made computers more energy efficient. One is dynamic voltage scaling. Stemming from InfoPad, a DARPA-funded project at the University of California, Berkeley,3 this technique lets a processor lower its supply voltage, along with its clock frequency, when full performance is not needed, reducing power use. Dynamic voltage scaling is now standard in virtually every phone and computer. Other technical solutions to power efficiency, also DARPA-funded, include narrow bit-width optimizations and speculation control. These solutions, developed by basic computer science researchers, were also quickly incorporated into product design.
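Why lowering voltage pays off so well can be illustrated with the standard first-order approximation for the switching power of CMOS logic, P = αCV²f (activity factor α, switched capacitance C, supply voltage V, clock frequency f). The sketch below is an illustration under that textbook approximation, not a model of InfoPad or of any real chip; the activity factor, capacitance, workload size, and both operating points are hypothetical values chosen only to make the arithmetic easy to follow.

```python
# First-order dynamic (switching) power model for CMOS logic:
#   P = alpha * C * V^2 * f
# All numeric values below are hypothetical, chosen only for illustration.

def dynamic_power(activity, capacitance_f, voltage_v, frequency_hz):
    """Switching power in watts for a given activity factor, capacitance, voltage, and clock."""
    return activity * capacitance_f * voltage_v ** 2 * frequency_hz


def task_energy(activity, capacitance_f, voltage_v, frequency_hz, cycles):
    """Energy in joules to finish a task that needs `cycles` clock cycles."""
    seconds = cycles / frequency_hz
    return dynamic_power(activity, capacitance_f, voltage_v, frequency_hz) * seconds


ACTIVITY = 0.2        # fraction of the circuit switching each cycle (assumed)
CAPACITANCE = 1e-9    # effective switched capacitance in farads (assumed)
CYCLES = 1e9          # size of the workload in clock cycles (assumed)

# Full speed versus a scaled-down operating point (lower voltage AND lower clock).
full_power = dynamic_power(ACTIVITY, CAPACITANCE, 1.0, 2e9)
scaled_power = dynamic_power(ACTIVITY, CAPACITANCE, 0.7, 1e9)
full_energy = task_energy(ACTIVITY, CAPACITANCE, 1.0, 2e9, CYCLES)
scaled_energy = task_energy(ACTIVITY, CAPACITANCE, 0.7, 1e9, CYCLES)

print(f"power:  {full_power:.3f} W -> {scaled_power:.3f} W")
print(f"energy: {full_energy:.3f} J -> {scaled_energy:.3f} J")
```

Halving the clock by itself would halve power but leave the energy per task unchanged, because the task simply takes twice as long (the frequency cancels out of `task_energy`). In this sketch the energy saving comes entirely from the V² term, which is why the ability to lower the supply voltage is the important part of the technique.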

___________________

2 G. Farrington, 1996, ENIAC: Birth of the Information Age, Popular Science (March):74-76.

3 University of California, Berkeley, “Infopad: Wireless MultiMedia Computing,” http://www.wirelesscommunication.nl/reference/chaptr01/dtmmsyst/infopad/infopad.htm, accessed November 18, 2015.

Power modeling is another innovative idea with roots in basic computer architecture research that took place in the 1990s. For the first time, power models allowed architects to evaluate new ideas for optimizing power usage much earlier in the design process. As a result, computer architects gained influence over design decisions, and power models became critical to understanding the trade-offs between power and capability. Martonosi’s early efforts in power modeling were adopted by the computer industry, specifically IBM, becoming part of mainstream computer design.
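The general idea behind an architecture-level power model can be sketched as simple bookkeeping: count how often each hardware unit is used while a workload runs, multiply by an estimated energy per use, and add a static (leakage) term. The toy version below is an illustration of that idea only, not Martonosi’s model or any tool IBM adopted; the unit names, per-access energies, access counts, runtime, and leakage figure are all hypothetical placeholders. It shows how an architect could compare a design idea against a baseline before any hardware exists.

```python
from dataclasses import dataclass


@dataclass
class Unit:
    """One hardware block in a hypothetical processor design."""
    name: str
    energy_per_access_j: float  # assumed energy per use, in joules
    accesses: int               # usage count, e.g. from a simulated workload (assumed)

    def energy_j(self) -> float:
        return self.energy_per_access_j * self.accesses


def estimate_power(units, runtime_s, leakage_w):
    """Average power in watts: activity-based dynamic energy plus constant leakage."""
    dynamic_j = sum(u.energy_j() for u in units)
    return dynamic_j / runtime_s + leakage_w


def energy_breakdown(units):
    """Fraction of dynamic energy attributed to each unit."""
    total = sum(u.energy_j() for u in units)
    return {u.name: u.energy_j() / total for u in units}


RUNTIME_S = 0.01   # simulated run length (assumed)
LEAKAGE_W = 0.05   # static power (assumed)

baseline = [
    Unit("alu", 5e-12, 40_000_000),
    Unit("l1_cache", 20e-12, 15_000_000),
    Unit("register_file", 2e-12, 80_000_000),
]

# A hypothetical design idea that halves cache accesses, evaluated on paper.
variant = [
    Unit("alu", 5e-12, 40_000_000),
    Unit("l1_cache", 20e-12, 7_500_000),
    Unit("register_file", 2e-12, 80_000_000),
]

base_w = estimate_power(baseline, RUNTIME_S, LEAKAGE_W)
variant_w = estimate_power(variant, RUNTIME_S, LEAKAGE_W)
print(f"baseline: {base_w:.3f} W, variant: {variant_w:.3f} W")
for name, share in energy_breakdown(baseline).items():
    print(f"  {name}: {share:.0%} of dynamic energy")
```

The per-unit breakdown is the part that makes such models useful early in design: it points to where energy goes (here, the hypothetical cache) and therefore which ideas are worth pursuing.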

By the early 2000s, although researchers had been steadily making improvements, they still needed to do more to address power constraints. A big breakthrough came in 2005, when on-chip parallelism reached mainstream processors: placing multiple processor cores on a single silicon chip so that a computer can carry out multiple calculations simultaneously. Because several cores running at moderate clock speeds and voltages can match the throughput of one very fast core on work that can be divided among them, this approach enabled more computation with less power. At this point, parallelism research and power research, once separate areas of computer science, converged, and the joint research led to computer chips that contain many specialized, heterogeneous processors to carry out the many kinds of computations performed by today’s devices.4
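The power argument for on-chip parallelism can be made concrete with a small calculation. The sketch below assumes the first-order CMOS approximation P = αCV²f and uses Amdahl’s law to account for work that cannot be split across cores. The operating points (one core at 2 GHz and 1.0 V versus two cores at 1 GHz and 0.7 V), the activity factor, and the capacitance are hypothetical values, not measurements of any real processor.

```python
# Compare one fast core with two slower, lower-voltage cores.
# Uses the first-order CMOS power approximation P = alpha * C * V^2 * f and
# Amdahl's law for speedup; all operating points are hypothetical.

def core_power(voltage_v, frequency_hz, activity=0.2, capacitance_f=1e-9):
    """Dynamic power of one core in watts (activity and capacitance are assumed values)."""
    return activity * capacitance_f * voltage_v ** 2 * frequency_hz


def chip_power(cores, voltage_v, frequency_hz):
    """Total dynamic power of `cores` identical cores running at the same point."""
    return cores * core_power(voltage_v, frequency_hz)


def throughput(cores, frequency_hz, parallel_fraction=1.0):
    """Work rate (single-core cycles of work per second) under Amdahl's law:
    the serial fraction runs on one core, the parallel fraction is split evenly."""
    return frequency_hz / ((1 - parallel_fraction) + parallel_fraction / cores)


fast_w, fast_rate = chip_power(1, 1.0, 2e9), throughput(1, 2e9)
dual_w, dual_rate = chip_power(2, 0.7, 1e9), throughput(2, 1e9)
dual_rate_90 = throughput(2, 1e9, parallel_fraction=0.9)

print(f"1 core  @ 2 GHz, 1.0 V: {fast_w:.3f} W, {fast_rate:.2e} work/s")
print(f"2 cores @ 1 GHz, 0.7 V: {dual_w:.3f} W, {dual_rate:.2e} work/s")
print(f"2 cores, 90% parallel work: {dual_rate_90:.2e} work/s")
```

With perfectly parallel work, the two slower cores match the fast core’s throughput at roughly half the power. When only 90 percent of the work can be parallelized, the dual-core configuration falls about 9 percent behind, which illustrates why decades of parallelism research on software and coordination between cores mattered once multicore chips arrived.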

Martonosi explained that the progress from one technological advancement to the next has not always been linear. As on-chip parallelism was integrated into more products, starting in the mid-2000s, computer architects reached back into decades of DARPA- and NSF-funded parallelism research to improve capabilities in this area. Several key projects, such as those focused on shared-memory cache coherence, scalable protocols, and the Hydra chip multiprocessor, led directly to technologies now widely used in computer servers, network processors, and smartphones. Martonosi said that parallelism, like the early research on supercomputer architectures, is another area in which early government funding of a niche research area has led directly to technologies that are now in wide use.

A Disruptive Moment

Computer architecture straddles hardware, which allows software to operate, and software, which lets us use computers to perform tasks. Today’s seismic changes in both hardware and software are creating significant changes in computer architecture as well, said Martonosi: “I see this as an interesting, exciting, and disruptive moment in computer architecture.” Devices today are full of specialized processors for audio, video, face recognition, and dozens of other capabilities; these chips are high performing and power efficient but incredibly complex to program. Pointing to a diagram of a modern chip, Martonosi noted, “The manual for this chip is 5,000 pages long.”

___________________

4 J. Li and J.F. Martinez, 2005, Power-performance implications of thread-level parallelism on chip multiprocessors, pp. 124-134 in IEEE International Symposium on Performance Analysis of Systems and Software, 2005, ISPASS 2005, doi:10.1109/ISPASS.2005.1430567.

Martonosi raised the concern that the commercial computing industry is unlikely to address the fundamental challenges facing computer architecture, for several reasons. First, as other presenters noted, most companies’ goals are too short term and modest to pursue solutions to high-level, crosscutting problems. Also, hardware and software are in most cases developed by different companies, so few companies have a financial motivation to take on the burden of research and development in the no-man’s land between them. In addition, individual pieces of software, also designed by different companies, may not work well together when combined on one device (a bit like the Tower of Babel, according to Martonosi). Finally, companies are unlikely to collaborate with their competitors and may not even feel enough market pressure to tackle the problem.

Martonosi said these issues are likely to increase software development costs and decrease software reliability and security. The U.S. military’s reliance on computer systems built from many different software and hardware parts supplied by many different vendors illustrates the importance of taking up this challenge at a broad level. There are multiple areas ripe for research, Martonosi added. For example, there is a need for solutions that can help manage chip heterogeneity and establish a better balance between communication needs, which dominate device use today, and computational needs. Basic research advances in these areas would support computer architects’ important role as mediators between power usage and computation and hopefully usher in a new generation of computers that can be both faster and more energy efficient.

THE WINDING PATH OF WEARABLES

Computers that are small and lightweight enough to be worn on or in the human body, such as the Apple Watch, Fitbit, Google Glass, and others, hold tremendous potential for a variety of uses. But the technology behind today’s wearable computers has followed a circuitous and at times surprising path through government-funded academic research, the experiments of hobbyists and tinkerers, and commercialization by multinational technology companies. Today’s wearables are made possible by myriad component technologies, such as speech recognition, lithium-ion batteries, cloud computing, and innovative architectures that allow computers to be lightweight, low power, and seamlessly integrated into people’s daily lives.

Thad Starner, a professor at the Georgia Institute of Technology’s College of Computing and a pioneer in the field of wearable computers, delivered a presentation on the history of wearables while himself sporting Google Glass, a breakthrough wearable product he helped to develop.

Why Wearables?

While wearable computers perform many of the same functions as a smartphone or traditional computer, they offer a number of additional advantages. One is that performing a quick task using a wearable computer requires significantly less movement and attention than accessing a laptop or phone that might be across the table or in another room.

Perhaps most relevant to today’s busy, multitasking society is that wearing a computer shortens the length of time between intention and action: as soon as you realize a task needs to be done, you can complete it within seconds.

Because wearables are worn close to the body, they can also access health information such as temperature or pulse, making them useful for monitoring fitness activities or providing medical alerts. Starner expects further advances in the medical field. Pacemakers, wearable glucose monitors, wearable insulin pumps, and other such devices, for example, could read signals from a patient’s body and personalize treatment accordingly. Rehabilitation specialists are looking into wearable robotics, a truly cross-disciplinary field, to help patients recover muscle strength or limb movement after an injury. Of course, Starner noted, giving computers such access to our bodies and our health information means that privacy and data protection are crucial whenever wearable computing is discussed or designed.

Starner also pointed out that fashion is likely to be a significant driver in the development of wearables. To some extent, wearables may get the most traction from first being fashionable, then becoming more functional as they spread and evolve. The most exciting aspect of wearable computing, in Starner’s view, is that the industry is really just getting started: “The interaction between man and machine, between the computer and the user, is just getting interesting. I think we’ll see more advances in the next 10 years than in all the previous years combined.”

From Fiction to Fact

While a number of wearable computing technologies are gaining steam on the commercial market, the history of research and innovation leading up to this point has been a somewhat bumpy road. “In the press, people are suddenly discovering wearable computing. . . and the question I get often is, ‘Why now?’ There are actually some very good reasons why we could not do this before,” Starner said.

People have been envisioning wearable computers at least as long as computers have been around. A 1945 issue of Life magazine featured an article titled “As We May Think,” in which computer pioneer Vannevar Bush imagined a futuristic device he postulated would someday assist scientists in their work: a forehead-mounted camera used to record experiments on the go. At the time, computers were the size of an entire room and photographic technology was still far from its current digital form, but that didn’t stop visionaries like Bush from assuming (correctly, in this instance) that wearable computing would eventually become a reality.

Early work on artificial intelligence and virtual reality (such as “Augmenting Human Intellect: A Conceptual Framework” by Douglas Engelbart in 1962 and Ivan Sutherland’s Sword of Damocles head-mounted display in 1968) was also built on the assumption that as computers advanced and connected the world, people would wear them and they would truly become a part of us. However, although computer interfaces did get smaller and more advanced, largely thanks to DARPA-funded research, they first evolved into the personal computer, a separate machine kept apart from the body.

Starner recounted how he has long tinkered with wearable computing. As a student at MIT in the 1990s, he created a wearable computer for taking notes more effectively that included goggles, a keyboard that could be held in one hand, and a 1-pound hard drive stowed in a backpack. Starner’s homegrown device presaged his eventual involvement in developing Google Glass, a cutting-edge commercial product designed to fulfill a similar need, albeit in a format that is more appealing to the general public than Starner’s original bulky apparatus.

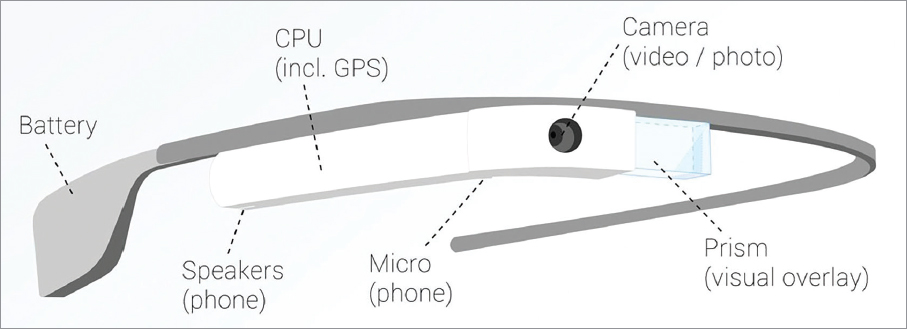

The early 2000s saw a significant push toward mobile wearable computing as smartphones took off and displays grew smaller. A key development on the path to Google Glass was the ability to embed the display inside a glass lens, removing the need for clunky goggles. The resulting technology (see Figure 3.1) allows regular people, not only computer scientists or technology hobbyists, to use this intuitive, seamlessly integrated wearable technology.

Many of the component technologies that enable today’s wearables can be traced to research and development advanced or funded by the federal government. A micro-display pioneered by Hubert Upton at Bell Labs in the 1960s was later further developed by DARPA for use in military helicopters; the U.S. Army also incorporated the microdisplays into wearable computers to increase efficiency and reduce the number of personnel needed for inspecting tanks. NASA, driven by a need for better cameras for its missions, advanced key technologies that were later incorporated into webcams, smartphone cameras, and Google Glass. Sensing, GPS, and speech recognition are other key ingredients of wearable technology that can be traced back to government-funded research.

Although many of the early drivers for wearable technologies were rooted in government applications, wearables have clearly had commercial appeal as well. In 1995, Nintendo combined two microdisplays and sold 1 million units of its Virtual Boy, the first virtual reality consumer product. Finding no existing standard for personal-area networks and unable to deploy wearables for its employees without one, FedEx convened experts to create the standard used today, IEEE 802.15.6.

While wearables might seem to the casual observer to have arrived overnight, Starner stressed that today’s consumer wearables can be traced along a long path from the hobbyist researcher and the federally funded lab to military applications to consumer products. As these devices continue to improve and become an ever more integral part of our lives, he concluded, we owe a great debt to the government-funded research pioneers, from all areas of computer science, who created the technologies that enable present and future wearables.