5

People and Computers


As all of the workshop presenters made clear, computers and communications technologies have had a profound impact on everyday lives and will continue to do so in the foreseeable future. A common thread of the workshop was the constant push and pull between technology and people: the ways people influence technology and, in turn, the ways technology influences people. This chapter focuses on three presentations exploring different aspects of the relationships between humans and technologies: cybersecurity, user-centered design, and social science research.

In his presentation, Stefan Savage shared key challenges and trends in cybersecurity. In the next presentation, Scott Hudson described the emergence and key contributions of user-centered design in computer science. A basic premise of user-centered design is that no matter how innovative or elegant a new program or piece of hardware is, technology sinks or floats based on how useful, and usable, it is. Drawing examples from academic research and commercial products, Hudson described the practice of user-centered design and its crucial role in the success of many of the technologies people depend on today. The third presentation, by Duncan Watts, explored how technology has been informed by social science and has itself opened up vast new opportunities for it. Insights about how people behave and interact with each other are of tremendous value for businesses and governments alike, and Watts described how this research has been fueled by a strong government–academia–industry ecosystem.

SEEKING CYBERSECURITY

While much of the workshop focused on the positive outcomes of the many technological innovations that have been enjoyed over the past few decades, Stefan Savage discussed a darker aspect of this technological growth: cybersecurity. Savage, a computer science professor and researcher at the University of California, San Diego, has spent his career working on cybersecurity topics such as network worms, malware, and wireless security.

Defining “security” as freedom from fear and danger, Savage described how technological changes have brought both new fears and new dangers. He attributed most of today’s security challenges to five major developments: the Internet and its pervasive connectivity, e-commerce, data centralization, mobile technologies, and the emergence of the Internet of Things. In short, Savage said, “We have handed over control of our lives to computers and to the networks that interconnect them.”

What makes cybersecurity such a pernicious problem, he explained, is that it is not merely a technological challenge that can be “fixed.” Because cyberattackers are human and stand to gain financially from these activities, it is actually a socioeconomic problem with adversaries, victims, and defenders. Technology simply provides the tools and setting for these battles to unfold.

Another key challenge, according to Savage, is that “we have no way to evaluate security solutions except by how they fail.” Without a positive measure of success, the cybersecurity field is left in a morass, with many problems but few solutions.

Savage discussed how cybersecurity is also a crosscutting discipline. The challenge for this field has long been how to build security solutions into the entire array of quickly developing technologies and applications, from machine learning algorithms to wearable computers and personal monitors to robots and autonomous vehicles.

Savage traced many of the major components of cybersecurity in use today to 30 years of federally funded academic research. Thanks to government funding, academic researchers not only were able to focus on developing individual security solutions for individual problems but also had the time and funding to incorporate cybersecurity research into larger public policy problems. This government funding has spurred industrywide advances in cybersecurity that extend far beyond the reach of one specific product or technology.

Rather than delving into the long history of computer security, Savage focused on two recent stories that illustrate how government-funded academic cybersecurity research has been essential in creating industrywide standards that protect consumers and businesses. The first story, from the automobile industry, led to safer cars, and the second story, relating to software piracy, helps businesses protect intellectual property.

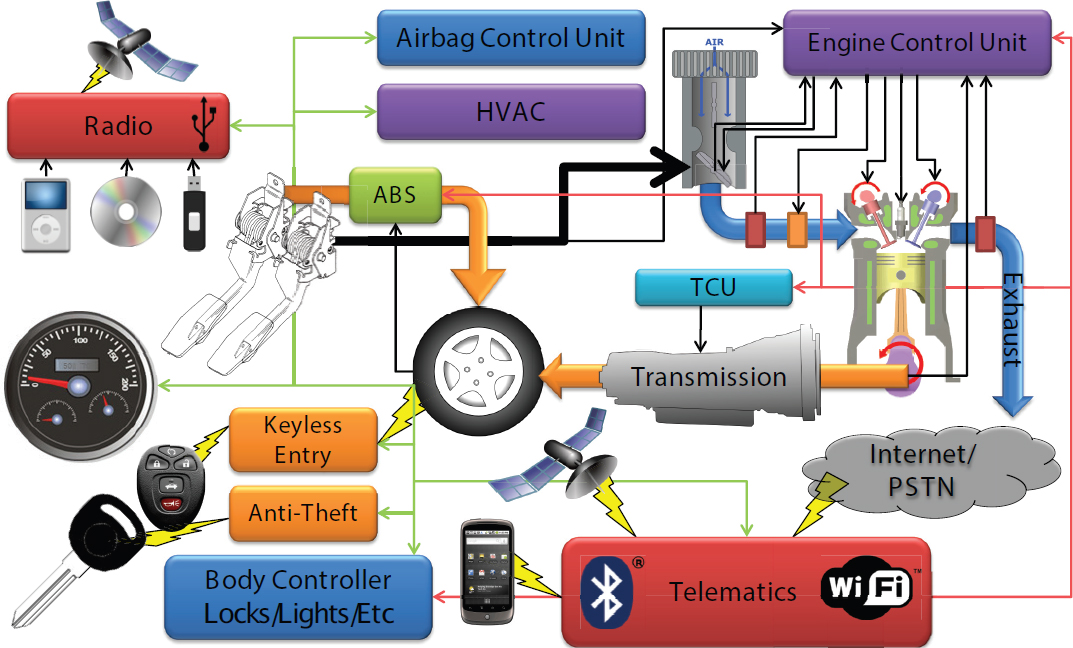

Security in Transportation

Although most people may not be aware of it, Savage said transportation today is “deeply computerized.” A single car, for example, may have up to 30 different computers all networked together, from the radio to the brakes to the air conditioning (see Figure 5.1). Many of these computers are designed to provide functions that humans cannot; for example, adjusting the fuel-oxygen mixture to regulate emissions. Others enhance safety or provide entertainment. The net result is a huge amount of digital information being created and transmitted by incredibly complicated systems; yet, most in-car computer systems are equipped with far fewer security protections than a typical personal computer.

Savage’s research has shown that it is possible to tap into these systems to remotely take over a car. In one experiment, for example, his team was able to deactivate the brakes of a brand-new car, straight off the dealer’s lot, from 1,000 miles away using several undefended virtual access points.

Savage pointed out that the car industry has some “extra-technical” challenges that make incorporating cybersecurity especially difficult. Car manufacturing is a complex process involving numerous third-party suppliers; many of the component computers that wind up in a single car come from different manufacturers and use different programming languages, making it difficult to secure both the component parts and the car as a whole.

In addition, in many cases the systems are so complex that traditional cybersecurity tools do not apply. “If you bought an American car in the last few years, it probably has a phone number and an Internet address,” said Savage. “There are lots of good reasons why it has that, but the side effect is that we now have this kind of systemic risk.”

Cars are just one example of the broader trend toward the “Internet of Things,” in which computers and connectivity are being built into many types of products beyond typical computer products like laptops and smartphones. This trend is especially active in the area of transportation. “There is almost no trip that you take, whether it is up or down a floor or whether it is through the air, that you are not ultimately depending on a computer to do the right thing,” said Savage. Anything with computer control and connectivity is subject to risk, whether it is a car, airplane, train, or refrigerator. Yet the companies that make these products do not feel the same pressure to increase their security as do companies that make traditional computer products; because no one is known to be attacking such products, companies have so far made only modest security investments.

But there is good news. By exposing weaknesses, research by Savage and others has propelled a wholesale overhaul of how the American auto industry designs software. Savage noted that this was not something that would have been advanced by the private sector alone; only academic researchers had the time and long-term vision to unravel these problems, work with regulators and industry leaders, and convince the National Transportation and Safety Board that these were pressing problems requiring industrywide solutions and standards. Even after new security standards and recommendations were in place, Savage said that the auto industry might have been tempted to ignore them, except for the fact that around the same time, Toyota was forced to pay more than $1 billion in fines because of its “unintended acceleration” problems.1 This quantified a previously unquantifiable problem for the auto industry by revealing what a security breach could cost them. In Savage’s view, the prospect of future enormous payouts scared industry into finally adopting the cybersecurity standards that had been developed by academia with government support.

Fighting Spam and Piracy

Whereas cars and other computerized devices are examples of an underappreciated cybersecurity threat, industry and the general public have a much greater awareness of the problems of spam and piracy. The sale of pirated software, in particular, is an active problem and one that the software industry spends hundreds of millions of dollars to stem.

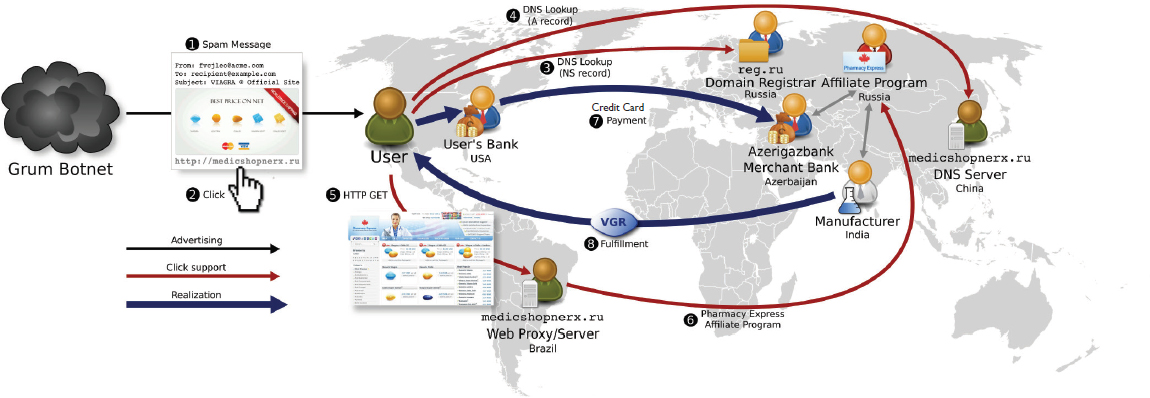

Savage described an innovative approach his team developed to fight Internet spammers selling pirated software. Until recently the traditional approach has been to filter spam e-mails, shut down websites hawking pirated software, and seize any goods. But this approach rarely solves the problem permanently, because the same spammers can easily pop up again using new e-mail and web addresses (see Figure 5.2). Also, the spam system works in large part because there is consumer demand for cheap pirated software. “Up to 40 percent of all revenue from e-mail spam comes from people who go into their Spam folder and click on the items there, because they want those things,” Savage said.

___________________

1 C. Woodyard, 2012, Toyota to pay $1.1B in ‘unintended acceleration’ cases, USA Today, December 26, http://www.usatoday.com/story/money/cars/2012/12/26/toyota-unintended-acceleration-runaway-cars/1792477/.

Given this challenging context, Savage and his team approached the problem from a different angle. Realizing that it’s a game being played for financial gain, they tried to find a way to undermine the finances of the software pirates. Targeting e-mail spam as just one symptom of the larger problem of piracy, the team untangled the complex connections going from the e-mail offer, to the web proxy, to the domain server and several other points along the way until getting to the actual financial processing. In order to undermine spammers’ financial gains, Savage’s team purchased more than 600 items from spam e-mails (using no government money, Savage noted, though the research was otherwise government-funded), and followed the processing chain to determine how the spammers were receiving their money. The study revealed that 95 percent of the money acquired through pirated software spam was going through just three banks.2
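The kind of concentration measurement described above can be sketched in a few lines. The example below is only an illustration, not the team's actual analysis pipeline: the function name `top_bank_share`, the bank labels, and the purchase amounts are all hypothetical, and the figures are made-up toy values rather than data from the study.

```python
from collections import Counter

def top_bank_share(purchases, k=3):
    """Return the fraction of total payment volume handled by the k largest banks.

    `purchases` is an iterable of (bank_name, amount) pairs, one per traced
    purchase. Both the record layout and the function name are hypothetical.
    """
    totals = Counter()
    for bank, amount in purchases:
        totals[bank] += amount          # accumulate volume per acquiring bank

    grand_total = sum(totals.values())
    if grand_total == 0:
        return 0.0                      # no traced payments, so no concentration

    top_volume = sum(amount for _, amount in totals.most_common(k))
    return top_volume / grand_total

# Toy data (invented for illustration only).
toy_purchases = [
    ("bank_a", 40), ("bank_b", 25), ("bank_c", 20),
    ("bank_d", 10), ("bank_e", 5),
]
share = top_bank_share(toy_purchases, k=3)  # 85 of 100 units go through the top three
```

A high share for a small `k` is what makes the payment step a useful choke point: a few accounts carry most of the revenue.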

Once alerted to the penalties of working with spammers, the banks quickly dropped these accounts, leaving spammers with no way to monetize their sales. While switching e-mail addresses or domains is easy, switching banks is far more difficult for spammers, and this strategy has proved effective in shutting down certain types of spammers. As a result, there has been a substantial drop in sales of pirated software as a whole, and the process of targeting spammers’ finances is now used by virtually all companies looking to protect their intellectual property from unauthorized distribution.

___________________

2 C. Kanich, N. Weaver, D. McCoy, T. Halvorson, C. Kreibich, K. Levchenko, V. Paxson, G.M. Voelker, and S. Savage, 2011, Show me the money: Characterizing spam-advertised revenue, pp. 219-234 in Proceedings of the 20th USENIX Conference on Security (SEC’11), USENIX Association, Berkeley, Calif.

In computerized cars and software piracy and on numerous other cybersecurity fronts, Savage said academia has a crucial role to play and that solutions cannot be left to industry or government alone. With government funding, academic researchers have the ideal funding structures and culture to experiment with strategies before their value is obvious. Companies and mission-oriented government agencies, on the other hand, are often crisis-focused and unable to invest in the long-view, experimental solutions cybersecurity requires. This critical, government-funded academic work has exposed weaknesses in consumer products and bolstered the intellectual property rights of businesses, said Savage—and our citizens and our economies are safer because of it.

THE USER-CENTERED DESIGN RENAISSANCE

Scott Hudson, a professor of human–computer interaction at Carnegie Mellon University, presented an overview of the evolution and spread of user-centered design and its impacts on the adoption and success of technology. Although it’s easy to assume this approach was a foregone conclusion, he described how computer scientists spent decades working hard behind the scenes in order to “make it easy to make things easy.”

The enormously successful photo-sharing application Instagram, developed by a team of two, gained 30 million users over a 2-year period before being acquired by Facebook for $1 billion. Although it’s an extreme example, this story illustrates the enormous value of user-centered design in the creation of software products: Ease of use was critical both to the meteoric rise of Instagram among consumers and to the ability of such a small team to develop and quickly deploy such a wildly successful app.

In the early days of the personal computer and the Internet, using software to perform even relatively simple tasks took knowledge and experience. Today, most software is designed to be so intuitive to the user that there is virtually no learning curve involved. But the benefits of user-centered design do not stop with the user: Software developers and the technology industry as a whole have benefitted from this trend as well. Where it used to take experienced developers weeks or months to create the user interface for a new software tool, now nearly anyone can create technology applications quickly, easily, and well, even with minimal technical skills.

Building Toward a Sea Change

Computer scientists were not initially focused on user-centered design. In the 1980s, many academic researchers were working on simplifying computer programming, but from a top-down, systems-level perspective. The user—the person who would ultimately interact with the software to perform tasks—was an afterthought. Early academic research projects such as Tiger3 and ADM4 were intended to simplify user interfaces but were too systems-focused; these and other processes born of academic research were clunky and complicated for nonexperts.

It was from this context that a gradual sea change emerged in the 1980s and 1990s, creating a growing recognition that computer science needed to be user-centered, not systems-centered, in order to succeed. More and more, people began to realize that the solution to clunky, difficult software would come not from a new technology or algorithm, but from a new approach to design altogether.

This new user-centered mindset, although ultimately the key to the success of many technologies and companies, complicated everything. Even compared to the programming required to make a highly complex computer system work, figuring out the user’s needs and preferences is an extremely tricky challenge. “Users are hard to deal with, because you can’t open the user’s head and pour in the right mental model,” said Hudson. Recognizing that creating something that can be used is the fundamental end goal for computers, computer scientists had to come to terms with the fact that you must design for the users you actually have, not the users you wish you had, he added.

Hudson described how the first generation of developer-friendly toolkits for creating user-friendly interfaces emerged from exchange and interplay between academic researchers at places like Carnegie Mellon, Stanford, and MIT and companies such as Sun, Apple, and Microsoft. In the 1990s, three major projects led by Linton at Stanford, Myers at Carnegie Mellon, and Hudson at the University of Arizona and Georgia Tech created functional user interface design toolkits including InterViews and Fresco, Garnet and Amulet, and Artkit and subArctic, respectively. Contributions from these projects, such as concurrency models, resizable icons, and layout abstractions, are evident today in Apple, Android, and Adobe user tools.

Another successful class of tools to come out of that era was graphical user interface builders, programs that allow developers to actually draw the graphical parts of a graphical user interface instead of creating them only with lines of code. These visual tools were a big success and led to what is now a maxim of user-centered design: “Visual things should be expressed visually.” Today, every modern development environment has visual components. Microsoft even used the term “visual” in a series of programming products: Visual Basic, Visual C++, and Visual Studio.

___________________

3 D.J. Kasik, 1982, A user interface management system, Computer Graphics 22(4):113-120.

4 A.J. Schulert, G.T. Rogers, and J.A. Hamilton, 1985, ADM—A dialogue manager, pp. 177-183 in Proceedings of SIGCHI Conference on Human Factors in Computing Systems (CHI ’85), Association for Computing Machinery, New York, N.Y.

A New Way of Creating Technology

Once user-centered design became a more universal and widely accepted part of the technology development process, more innovations followed. “Now we see the fingerprints of this work all over modern interactive systems of all sorts,” reflected Hudson.

One important example of early user-centered design research was Columbia University’s 1997 Touring Machine project, in which researchers experimented with deploying, in real-world situations, mobile computing contraptions that included a computer with wireless Internet access, GPS, and a handheld display and input (Figure 5.3). The machines, despite being impractical, were an essential part of the user-interaction research that laid the groundwork for mobile devices to come. Building and using them helped researchers explore how people might use a mobile device with Internet connectivity, and the project’s devices are seen as important early precursors to the iPhone.

SOURCE: S. Feiner, B. MacIntyre, T. Hollerer, and A. Webster, A touring machine: Prototyping 3D mobile augmented reality systems for exploring the urban environment, pp. 74-81 in First IEEE International Symposium on Wearable Computers, 1997, doi:10.1109/ISWC.1997.629922. Courtesy of Steven K. Feiner.

Another example, from a subarea of user-centered design focused on interaction techniques, is the “pinch” gesture: a smooth interaction for simultaneously translating and scaling text or an image. While it may seem new, this feature actually traces its roots to Myron Krueger’s 1983 Videoplace gaming system. The zoomable interface was invented in 1994, and adding zoom to the pinch gesture allows users to maximize space and readability on the small screens of today’s smartphones and wearable devices.
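As a rough illustration of what “simultaneous translating and scaling” means geometrically, the sketch below derives a uniform scale factor and a translation from the start and end positions of two fingers. It is not taken from any historical system or shipping touch framework. The function names `pinch_transform` and `apply_transform` are hypothetical, and the sketch assumes the fingers do not rotate around each other.

```python
import math

def pinch_transform(p1_start, p2_start, p1_end, p2_end):
    """Return (scale, (tx, ty)) for a two-finger pinch, ignoring rotation.

    Each argument is an (x, y) screen position. A content point q is mapped
    to scale * q + (tx, ty), so the midpoint between the fingers stays under
    the fingers while the content grows or shrinks around it.
    """
    d_start = math.dist(p1_start, p2_start)
    d_end = math.dist(p1_end, p2_end)
    if d_start == 0:
        raise ValueError("start positions must be distinct")

    scale = d_end / d_start  # fingers moving apart -> scale > 1 (zoom in)

    # Midpoints of the finger pair before and after the gesture.
    cx0 = (p1_start[0] + p2_start[0]) / 2
    cy0 = (p1_start[1] + p2_start[1]) / 2
    cx1 = (p1_end[0] + p2_end[0]) / 2
    cy1 = (p1_end[1] + p2_end[1]) / 2

    # Choose the translation so the old midpoint lands on the new midpoint.
    tx = cx1 - scale * cx0
    ty = cy1 - scale * cy0
    return scale, (tx, ty)

def apply_transform(point, scale, translation):
    """Apply the scale-then-translate mapping to a single (x, y) point."""
    return (scale * point[0] + translation[0],
            scale * point[1] + translation[1])

# Fingers start 2 units apart and end 4 units apart, shifted up and right.
scale, translation = pinch_transform((0, 0), (2, 0), (1, 1), (5, 1))
```

In this example the scale is 2.0 and the translation is (1.0, 1.0), so the content under each finger at the start ends up under the same finger at the end.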

In Hudson’s view, these and many other innovations, enabled by the major cultural shift away from systems-focused design and toward the user, were significant drivers behind today’s “There’s an app for that” world. In less than 10 minutes, as opposed to days, weeks, or months not so long ago, a novice can now create a working app and start accumulating users and revenue. “Computing has now spread out into lots of places it hasn’t been before, . . . [so] it has had a tremendous impact that we haven’t seen before,” said Hudson.

Taking a user-centered approach has led computer scientists to change the questions that they ask when conceptualizing a new product, explained Hudson. Instead of wondering if they can build a technology, or how to start building it, following a user-centered approach means asking, “How well does it work with the user?” User-centered design has been revolutionary for many applications and areas of computer science, including wearable computing, context-aware computing, and data visualization.

A general lesson from the story of user-centered design, Hudson explained, is that rather than investing in research to develop only individual technologies, it is important to target work that has an amplifying effect across the broader field. “Even more than lots of individual technologies, things like this—ideas that amplify other ideas and enable other ideas—are really what we should be after,” he said.

While it is impossible to predict at the outset which research project will lead to the next industry-wide innovation, there is still a great deal of room for improvement in user experience and other crosscutting areas of computer science. In the user-centered design space, for example, Hudson said high-performance computing could be made much more accessible with simple, user-friendly tools. Such tools could enable nonscientists, such as small business owners, to learn more from the specialized data they collect.

The mindset change from a top-down, systems view of computer design to a user-centered view has had an enormous impact across multiple technologies and the technology-driven economy in general, mostly by amplifying the impact of technologies and by making them easier to develop. In Hudson’s view, user-centered design is an idea that then inspires other ideas, but there was no direct path or single research project that led to this epiphany. Rather, it took a winding path and even some seeming dead ends to gradually build into a sea change that enabled the flexible, user-friendly tools and technologies we enjoy today.

HARNESSING BIG DATA FOR SOCIAL INSIGHTS

Technological developments over the past several decades have opened up powerful new opportunities for understanding people and societies. New ways of generating, collecting, and analyzing social data have shed new light on economics, politics, sociology, anthropology, and many other areas within the social sciences. Research into the questions posed by these fields touches every aspect of human society, from families and interpersonal relationships to high-stakes topics like presidential elections, international politics, and economic markets.

Government funding has long been central to enabling new insights in these areas. Duncan Watts, a principal researcher at Microsoft Research, offered his views on how the online world has changed social science, the emerging importance of computational science as a way to understand and solve social science research questions, and the role of the government–academia–industry ecosystem in advancing this field. Watts began his research career in mathematics but quickly became fascinated by the dynamics of connections and networks among people and has conducted research at Columbia University, Yahoo! Research, and other organizations. At Microsoft, he studies the social networks that dominate today’s online culture.

From Social Science to Social Media (and Back Again)

Watts described how federal funding has been instrumental in laying the groundwork for a whole new sector of the economy: the online social world. To the casual observer, it is easy to assume that wildly popular social media companies like Facebook and BuzzFeed simply stumbled upon winning formulas for connecting and engaging their users. In reality, Facebook and other social networks are built, in part, on research from the early 2000s examining the drivers of network structure, network growth, and social contagion, while BuzzFeed and other news sites build from research on the nature of social influence to tailor their articles to what readers are most likely to enjoy and share with friends, Watts explained. In addition to underpinning such applications in the for-profit sector, Watts said fundamental research on networks and relationships is also being integrated into basic and translational research in other areas of science, such as medicine, physics, and biology.

Conversely, just as the social sciences helped to fuel the growth of social media, social media are providing new fodder to advance social science research. Now that online social networks are thriving, Watts and other researchers in government, academia, and industry are tapping into this new online world and its data to further study human networks and social problems.

A New Way to Do Research

Social scientists have long studied “off-line” social networks, but in the past there was no easy way to harness large amounts of social and behavioral data, and data collection was often a painstaking and time-consuming process. “If you’re trying to understand how information flows through a society, you need to know what people think, you need to know when they change their minds, you need to know whom they are talking to. This is a tremendous observational challenge when you are talking about millions of people,” said Watts. Now, with people constantly interacting with media and each other through technologies capable of recording and storing their behavior, researchers are making progress far more quickly. “We realized after [the invention of social networks] that this is a tremendous wealth of information that can inform us about social interaction and behavior,” said Watts.

In particular, online surveys and crowdsourcing tools such as Mechanical Turk have made it easier for researchers to connect with research subjects and gather data. For example, researchers can now take advantage of the “bored at work” network—people who are constantly on computers and willing to fill out surveys as a brief distraction from their daily tasks—to quickly and cheaply gather survey data. In addition, the emergence of virtual meetings has made it more feasible for researchers to study group dynamics because it is no longer necessary in every case to gather people together in the same room at the same time.

One of the most powerful aspects of social media for social science is that data can be collected passively, without relying on information that is actively solicited through surveys or focus groups. Because the use of social networks is so widespread and users of these services are generating so much data, social science is increasingly becoming a computational science, in which researchers tap extremely large data sets for insights about people’s behavior. “It’s clear to us working in the field now that social science over the last decade or so is rapidly becoming a computational science,” said Watts, adding that the use of high-performance computing has benefited greatly from the interplay between government-funded academic research and industry data and tools. Watts said, “I think it’s also very clear that both federal funding and support from industry labs have been critical.”

Watts highlighted a handful of examples of how social media and online networks have shed light on human behavior. For example, one NSF-funded study Watts’s group conducted in the mid-2000s, when he was at Columbia University, showed how social media can create a snowball effect in which the perceived popularity of an item influences more people to like it. The study revealed that the more popular a previously unknown song appeared to be (as indicated by how many “likes” it had), the more popular it became, while songs with fewer “likes” were basically ignored.5 So, although we tend to assume that we make our own decisions about our purchases and preferences, we are more subject to others’ tastes than we think. The study provided valuable evidence that the consumer market doesn’t merely reveal preferences but can construct them through a process of social influence.

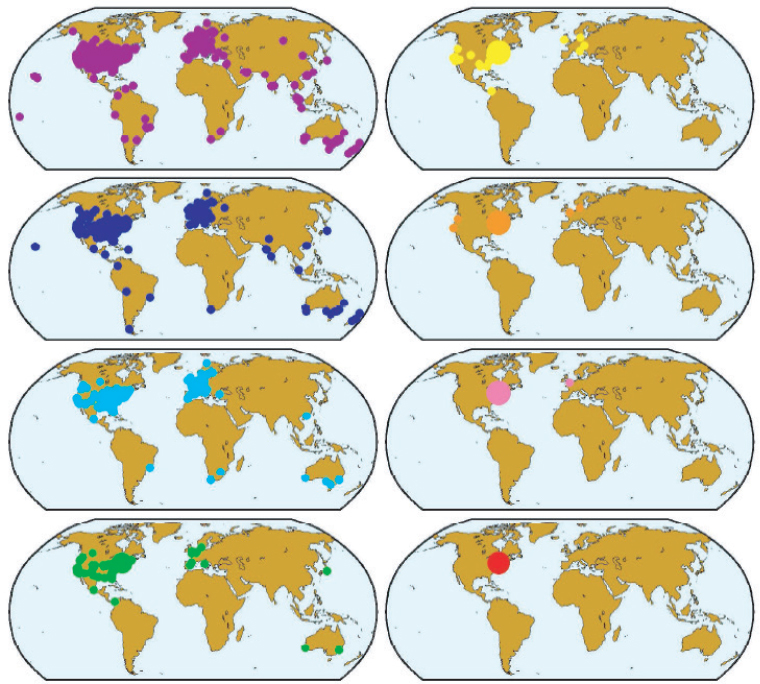

In an earlier study, Watts and his collaborators used e-mail to replicate Stanley Milgram’s famous “small world” experiment, which determined that there is a median of six degrees of separation between people. The NSF-funded study achieved the same results as Milgram’s, revealing that people could reach a stranger, even across the globe, through a chain of 5 to 7 contacts, on average (see Figure 5.4).6
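The idea of “degrees of separation” can be made concrete with a small sketch. The code below is not the method used in either experiment, which tracked messages actually forwarded by participants. Instead it assumes the whole contact network is known and uses breadth-first search to find the shortest possible chain between two people, which is a lower bound on what real participants would achieve. The function name `chain_length` and the toy network are hypothetical.

```python
from collections import deque

def chain_length(contacts, source, target):
    """Return the fewest hops from source to target, or None if unreachable.

    `contacts` maps each person to a list of people they know directly.
    Breadth-first search explores the network one hop at a time, so the
    first time the target is seen, the hop count is minimal.
    """
    if source == target:
        return 0
    seen = {source}
    queue = deque([(source, 0)])
    while queue:
        person, hops = queue.popleft()
        for friend in contacts.get(person, ()):
            if friend == target:
                return hops + 1
            if friend not in seen:
                seen.add(friend)
                queue.append((friend, hops + 1))
    return None  # no chain of acquaintances connects the two people

# Toy network (invented): a - b - c - d, with e known only to a.
toy_contacts = {
    "a": ["b", "e"],
    "b": ["a", "c"],
    "c": ["b", "d"],
    "d": ["c"],
    "e": ["a"],
}
hops_e_to_d = chain_length(toy_contacts, "e", "d")  # e -> a -> b -> c -> d
```

Here the chain from "e" to "d" takes four hops. Participants in the real experiments saw only their own acquaintances, so their chains could be longer than the shortest path.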

___________________

5 M. Salganik, P. Dodds, and D. Watts, 2006, Experimental study of inequality and unpredictability in an artificial cultural market, Science 311:854-856.

6 P. Dodds, R. Muhamad, and D. Watts, 2003, An experimental study of search in global social networks, Science 301(5634):827-829.

SOURCE: Duncan J. Watts, “From Small World Networks to Computational Social Science,” presentation to the workshop, March 5, 2015, http://sites.nationalacademies.org/cs/groups/cstbsite/documents/webpage/cstb_160426.pdf.

A more recent study, which Watts oversaw in his current position at Microsoft Research, tracked information dissemination on Twitter and attempted to quantify and categorize what makes news items “go viral” on social networks.7

Social media hold virtually limitless potential for insights into human behavior; further applications of these data, for example, include crisis mapping, the real-time gathering and analysis of social media information during a political crisis or natural disaster, and digital ethnography, the study of relationships in a digital rather than a physical space. In addition, Watts said smartphones offer fertile ground for research and could be used as “social sensors” or to mine data on productivity. The thousands of data points being generated by smartphones and all of our other interactive technologies hold “profound implications for what we could know about the state of the world, what we could know about the collective mind, what we could do in terms of interventions, peer influence, and collective behavior or crowd computing,” said Watts.

___________________

7 S. Goel, A. Anderson, J. Hofman, and D. Watts, 2015, The structural virality of online diffusion, Management Science 1-17.

A Prolific Data Ecosystem

Social science is an area in which the government–academia–industry ecosystem is particularly evident. Watts pointed out that while most social science research is supported by the government, much of the data used in these studies is generated by companies. The technology industry is also funding and advancing research, not merely providing the data—particularly in the area of computational research in which large data sets are mined for insights. Yahoo! Labs, for example, conducts its own research while also making data sets freely available for academic use.

The marriage of computer science and social science will be crucial to advancing the next generation of social science questions and solutions, Watts said, adding that both federal and industry funding will be critical to this effort. Looking back, Watts admitted that he never would have expected to see an online social network capable of reaching a billion people, yet this has come to pass. As this example shows, it is impossible to predict what will be available 10 or 15 years from now, even for experts embedded in the field.

Although reluctant to make specific predictions given this inherent uncertainty, Watts said a likely key to future social science insights will be an increasing trend toward interdisciplinary work. Social science is interdisciplinary by nature, yet social scientists and computer scientists often work separately in academic departments with little overlap. A greater emphasis on more interdisciplinary, mixed-method research focused on solving problems, not just publishing papers in journals, will be important to keeping social science research moving both forward and sideways into other scientific fields, said Watts.

Finally, Watts noted that the push-and-pull between humans and technologies becomes ever more critical to understand as the computing world moves closer to the human world. As robotic technologies become more integrated into our lives and wearable computers literally become a part of us, new approaches to computer design will be needed in order to fully understand the needs of the user and design the best possible solutions. This is a key area in which interdisciplinary work uniting social science, user-centered design, and computer science will be crucial to advancing effective solutions.