The objective of implementation science is to incorporate new findings into clinical practice. On average, it takes 17 years to convert just 14 percent of original research into benefits for patients (Balas and Boren, 2000), said David Chambers, deputy director for implementation science at the National Cancer Institute. Furthermore, that 17-year figure does not include the time needed to develop and perform the research. This chapter describes the goals and approaches of implementation science and identifies areas where the field may provide benefits to genomic medicine.

THE GOALS OF IMPLEMENTATION SCIENCE1

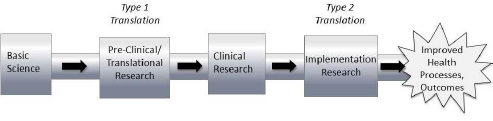

In the progression of discoveries from basic research to clinical research and from clinical research to improved health outcomes and processes, implementation science focuses on the latter half of the process, known as Type 2 translation (see Figure 2-1), said Brian Mittman, a research scientist at Kaiser Permanente Southern California. However, Mittman went on to note that those working in implementation science have tried to minimize use of the word “translation” in describing their work, partly because of confusion about different forms of translational research.

Mittman defined implementation science as “the scientific study of methods to promote the systematic uptake of research findings and other evidence-based practices into routine practice and, hence, to improve the quality and effectiveness of health services. The field of implementation science includes the study of influences on health care professional and organizational behavior.”

SOURCE: Brian Mittman, National Academies of Sciences, Engineering, and Medicine workshop presentation, November 19, 2015.

1 Additional background reading on implementation science is available in Appendix E.

Mittman identified three major aims of implementation science as they pertain to health care:

- To generate reliable strategies for improving health-related processes and outcomes and to facilitate the widespread adoption of these strategies.

- To produce insights and generalizable knowledge regarding implementation processes, barriers, facilitators, and strategies.

- To develop, test, and refine implementation theories and hypotheses, methods, and measures.

“The goal of research in implementation science—and this applies to other complex interventions as well—should not be to determine whether an implementation strategy is effective or not,” Mittman said. Rather the goal should be “to understand how [the strategy] works and to ultimately provide guidance in adapting, modifying and customizing it by [providing] an understanding of its mechanisms of action so [the strategy] can be made to work more effectively.” In some cases, he said, the implementation setting can also be modified to increase the likelihood of success—for example, by strengthening institutional leadership or modifying culture or mindsets.

Work in implementation science is published under a number of different identifiers, including knowledge translation, translational research, research utilization, knowledge utilization, knowledge-to-action, knowledge transfer and exchange, technology transfer, and dissemination research. One controversy within the field, Mittman said, is whether to coalesce around a single set of terms, in part to gain greater legitimacy and support for this work, or to support the existing multitude of terms. One potential problem with the latter approach, he said, is that it might lead to confusion among leaders at health care systems, who may think that they already have implementation science programs underway when in fact they are using the approaches of quality improvement or another related field.

There are key differences between implementation science and such fields as quality improvement, Mittman said. Quality improvement commonly targets specific local quality problems via rapid-cycle iterative improvement. Implementation science generally seeks to develop and rigorously evaluate fixed implementation strategies to address implementation gaps across multiple sites. In this respect, the goal of implementation science is to develop generalizable knowledge. Because of these differences between the two fields, Mittman said, there are opportunities for synergy as well as opportunities, many of them unfortunately missed, for those in both fields to learn from their colleagues. Another important distinction is between implementation science and comparative effectiveness research, specifically their goals, methods, measures, and outcomes. Comparative effectiveness research involves the generation and synthesis of evidence that compares the benefits and harms of alternative methods to prevent, diagnose, treat, and monitor a clinical condition or to improve the delivery of care (IOM, 2009).

One unique aspect of implementation science is its focus on the ways in which interventions can and cannot be replicated across settings, such as integrated health care systems, academic medical centers, and community-based clinics. The findings of implementation science generate “insights into constraints, into some of the core principles that would allow us to select from a mix of implementation interventions, deploy them in different ways, and adapt them,” Mittman said. In complex organizations, function is the underlying need that is addressed by an intervention, whereas form is the way that an intervention is operationalized. “For most of these implementation problems,” Mittman said, “there’s a relatively constant list of functions that need to be fulfilled. But the exact form that those implementation strategies or components would take—the way we provide for patient education support or clinician education, or the way that we achieve conducive professional norms—those strategies are likely to differ across sites.”

INSIGHTS ON THE ADOPTION OF NEW CLINICAL PRACTICES

Implementation science has generated several key insights concerning the adoption of new clinical practices, Mittman said. For example, Damschroder and colleagues published a Consolidated Framework for Implementation Research (CFIR), which offers an overarching classification system to promote implementation theory development and verification (Damschroder et al., 2009). The CFIR is composed of a multitude of factors that have been shown to influence implementation processes and outcomes, including the characteristics of an innovation, the inner setting, the outer setting, the individuals who deliver care or facilitate implementation, and the implementation process. There are instances when an evidence-based, practice-changing strategy will not succeed because the external regulatory, social, or fiscal environment is not conducive to change, Mittman said. “Implementation science is not a matter of focusing on one or two factors but, instead, taking into account the full spectrum of influences on clinical practices and attempting to both understand these influences [and] leverage the influences.”

Clinical practices are highly stable and slow to change, a phenomenon that is sometimes referred to as clinical inertia. In many ways, this stability is highly appropriate, Mittman said. Clinicians are often hesitant to change the way they practice medicine because they are used to contradictory evidence in the literature, he said. But he added that interventions typically are not based on the results of single studies but rather on systematic reviews, clinical practice guidelines, and other stable and strong bodies of evidence. Researchers at the University of California, Los Angeles, developed a multicomponent cancer genetics toolkit that attempted to address many of the constraints that cause clinical inertia (Scheuner et al., 2014). The goal of the toolkit was to facilitate the collection and use of cancer family histories by primary-care clinicians. The toolkit had several distinct components, including a continuing medical education–approved lecture series, patient and clinician information sheets, a reminder embedded in the electronic health record system, patient questionnaires, and a practice feedback report. According to the authors, this multilevel approach resulted in increased clinician knowledge regarding cancer genetics, an increase in cancer family history documentation, and higher rates of patient referrals to genetic counselors.

Another insight from implementation science is that practices and settings are highly heterogeneous. Implementation strategies need to be multifaceted in order to attend to the numerous influences and constraints on clinical practice, Mittman said, and “simple practice change strategies are not sufficient.”

Finally, Mittman pointed out that supportive norms are needed to enable practice change. Professional norms and external expectations, including the expectations of patients, can drive practice changes, just as improvements in knowledge and skills can. “Physicians are well aware of the fact that there are many opportunities to improve care. They have limited time. They allocate their time and attention to what is often referred to as the squeaky wheel. When we have performance measures in place, when we have other strategies that direct the attention of clinicians and staff to specific problems, there’s a great likelihood that they will change.”

APPLYING IMPLEMENTATION SCIENCE TO GENOMICS

The intersection of genomics and implementation science is a particularly ripe area for exploration, Chambers said. There are many open questions with regard to the sustainability of evidence-based practices in a changing context and the adaptability and evolution of evidence-based practices over time. “Most of the scientific community would agree that we certainly have reached a point where there are [genomic] applications that ought to make their way into regular use,” Chambers said. For example, the Evaluation of Genomic Applications in Practice and Prevention (EGAPP) working group, overseen by the Centers for Disease Control and Prevention, has developed a systematic process for assessing evidence regarding the validity and utility of rapidly emerging genetic tests for clinical practice. EGAPP has identified a number of “Tier 1 findings,” which are classified as genomic applications with sufficient evidence to support their use in the clinic (Teutsch et al., 2009). One example of a Tier 1 finding, Chambers said, is screening within the colorectal cancer population for Lynch syndrome. Lynch syndrome is a hereditary predisposition to developing colorectal cancer and certain other malignancies due to a mutation in a mismatch repair gene (EGAPP Working Group, 2009). Clinicians need to be thinking about delivering the initial test for Lynch syndrome to high-risk individuals along with the ongoing screening and monitoring needed to manage someone with high risk, Chambers added. This will require effective coordination of the test and the cascade of actions that should follow in order to optimize care for carriers and their family members.

Similarly, there is a strong evidence base linking specific BRCA1 and BRCA2 mutations to an increased risk for breast cancer and other malignancies (Campeau et al., 2008). Effective implementation requires the identification of mutations at the population level, effective scaling up to family members, and the establishment of screening, monitoring, and preemptive treatment, Chambers said.

Stakeholders may also want to carefully consider the demand for new genetic tests before trying to implement them in clinical care, Chambers said. Demand depends on having informed patients who are able to make and articulate their decisions and also on whether a new test fits into a clinical pathway where testing is the norm, he said.

Available Resources

An increasing number of resources are available for those interested in pursuing implementation science, including training programs, research infrastructures, improved measurement tools, and other tools to link research with practice and policy. Annual conferences on the topic bring together more than 1,000 people, many of whom have committed to implementation science as a career, Chambers said. The journal Implementation Science is an open-access, peer-reviewed online journal which publishes research that is relevant to the scientific study of methods to promote the uptake of research findings into routine health care in clinical, organizational, or policy contexts.

Chambers said that those interested in implementing genomics into clinical practice can learn a great deal from other disciplines that face similar issues. The Global Implementation Society, part of the larger Global Implementation Initiative, was designed to unite implementation experts across fields to define, support, and expand professional roles related to implementation in human service organizations and government systems.

POTENTIAL GAPS IN IMPLEMENTATION RESEARCH

A variety of research gaps exist in the implementation science pipeline. One such gap is root cause analysis, especially as it applies to diagnosing potential problems during the implementation of new clinical practices. As Mittman observed, often “we take an implementation strategy or quality improvement program that has been shown to be effective elsewhere and we apply it to a new problem, which is in some ways similar to taking a medication that has been shown to be effective for reducing severity of headache and applying that to a set of patients with an unrelated condition.” Those integrating new clinical practices need to diagnose the implementation gaps, such as insufficient system support or misaligned financial incentives, before they develop an implementation strategy and begin to evaluate that strategy, said Mittman.

Rigorous randomized controlled trials (RCTs) are generally preferred by academic scientists and peer reviewers. However, in implementation science, “RCTs tend to be very artificial, have very low external validity, and oftentimes not very much value,” Mittman said. “Many of the insights that we need to generate regarding implementation barriers, processes, and strategies are probably more easily obtained from observing and studying natural experiments. We need much more observational implementation research and probably a bit less interventional implementation research,” he added.

Even when large and expensive RCTs are conducted, they may show that an intervention did not succeed in changing clinical practice, Mittman said. Small pilot projects at a single site can often provide important information about implementation barriers that avoids the burden of large and costly studies at multiple sites, he added.

Given the challenges of root cause analysis and study design, the best approach to implementation research is to develop a diverse portfolio of studies, including efficacy-oriented implementation studies that use grant funds to hire staff to provide monitoring and technical assistance, and other effectiveness-oriented studies “where we as the implementation research team [take a step back] and allow the system to essentially do its thing,” Mittman said.

What May Be Learned from Implementation Research

In an effort to identify which health interventions can fit within real-world public health and clinical service systems, the National Institutes of Health (NIH) is conducting dissemination and implementation research.2 Chambers noted that the NIH defines dissemination as “the targeted distribution of information and intervention materials to a specific public health or clinical practice audience” and implementation as “the use of strategies to adopt and integrate evidence-based health interventions and change practice patterns within specific settings” (Lomas, 1993). Much of the current implementation research has focused on the effectiveness of different implementation approaches, methods development, training systems for providers, financing and policy changes, and emerging approaches such as learning collaboratives and the use of technology as a driver of dissemination and implementation.

The NIH has room to improve its methods for dissemination and implementation, Chambers said. “We need to do a better job of more active and more effective dissemination of the information that our science generates,” he said. “And then we need to do a better job of figuring out how we implement change and how we use strategies to adopt and integrate effective health interventions.”

2 For further information about implementation science resources from the NIH, see http://www.fic.nih.gov/researchtopics/pages/implementationscience.aspx (accessed February 22, 2016) or http://cancercontrol.cancer.gov/is/index.html (accessed February 22, 2016).

The core of implementation research consists of implementation strategies, implementation outcomes, and service outcomes, Chambers said. Implementation studies often focus on questions such as:

- Is implementing a particular practice feasible within a given setting?

- Is the practice acceptable to clinicians, to patients, and to systems?

- What are the costs associated with the innovation?

- Can the practice be sustained over time?

- What are the levels of fidelity or quality that are needed to ensure good outcomes?

The NIH has a number of standing program announcements on dissemination and implementation research, which to date have yielded over 140 projects cutting across 16 institutes and centers, Chambers said. The NIH recognized that, across many disciplines, researchers and clinicians were struggling with the challenges of implementation, such as feasibility, provider readiness, sustainability, financing, and quality, he added.

POSSIBLE OBSTACLES TO IMPLEMENTATION

Several obstacles to implementation were described by Mittman, particularly the challenge of handling the vast amount of information available (see Box 2-1). For example, in the past, the volume of clinical knowledge was limited enough that individual physicians could go through a period of training and apprenticeship and obtain most of the knowledge required for the job. Today, however, the body of required knowledge has grown so large that clinicians cannot keep it in their minds, and a much larger spectrum of factors influences clinical practice, Mittman said. “It’s no longer a matter of changing physician practices through continuing medical education; it’s now a matter of trying to influence all of these different factors, levers, or constraints.” As a result of this added complexity, quality improvement strategies that focus on just a few causes of conservative practice and stability typically are not sufficient, Mittman remarked. Instead, large, multifaceted, stakeholder-engaged, partner-oriented strategies are needed to address all of the constraints, he said.

Another obstacle to implementation that Chambers cited is that many innovators do not think carefully about the fit between the things they are developing and the audiences and systems that are required to implement those innovations. These issues need to be considered on the front end of innovation, he said. For example, even if a genomic test identifies the optimal treatment for an illness and can reduce the risk for health problems, the mere existence of a test does not ensure its use. In this instance, if only half of insurers choose to cover that test, only half the systems that incorporate the test train their clinicians to use it, only half the clinicians trained actually use it in practice, and only half of their patients get tested (assuming perfect access, testing, and follow-up), only about 6 percent of patients will benefit. And these assumptions are optimistic, Chambers said. The return on investment could come from tests making their way through this cascade of obstacles into use.
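The arithmetic behind the "about 6 percent" figure can be checked with a short sketch. The stage labels and the function name below are illustrative assumptions, not terms from the workshop; the 0.5 values are the "only half" rates Chambers used in his example, and the calculation assumes the stages are independent so that their rates multiply.

```python
from math import prod

# Illustrative stage labels (hypothetical wording); each 0.5 is the
# "only half" rate from Chambers's example, not measured data.
cascade = {
    "insurers that cover the test": 0.5,
    "covered systems that train their clinicians": 0.5,
    "trained clinicians who use the test": 0.5,
    "patients of those clinicians who get tested": 0.5,
}


def fraction_reached(stage_rates):
    """Multiply per-stage rates, assuming the stages are independent."""
    return prod(stage_rates)


benefit = fraction_reached(cascade.values())
print(f"Share of patients who benefit: {benefit:.2%}")  # 6.25%
```

Under these assumptions, four successive halvings leave 0.5 × 0.5 × 0.5 × 0.5 = 0.0625, or roughly 6 percent. Raising any single stage to full uptake only doubles the result to 12.5 percent, which is one way to see why attention to a single obstacle is unlikely to be enough.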

EVIDENCE GENERATION DURING IMPLEMENTATION

In some cases, a technology or practice is implemented in practice after evidence has been gathered, but in other cases evidence needs to be gathered as a technology is being introduced into care (see Chapter 4). This raises a dilemma, as Mittman pointed out. “What do we do about a clinical practice for which the evidence base is not quite as strong as we would like it to be but it addresses an important clinical question for which we don’t have better alternatives? Should we proceed to begin to implement that practice even though the evidence is not solid, or do we continue to study clinical effectiveness and only after 5 to 10 years of that type of research move into implementation?”

One potential answer to this dilemma is hybrid approaches that combine effectiveness and implementation research. Blended effectiveness–implementation studies may provide benefits such as accelerated translation, more effective implementation strategies, and higher quality information for researchers and decision makers (Curran et al., 2012). In addition, the hybrid studies offer a way for those who are not necessarily interested or do not feel qualified to conduct implementation studies to facilitate the adoption of their work, Mittman said.

Approaches to implementation science have changed over time in ways that are helpful for evidence generation, Chambers said. Innovation is no longer viewed as a strictly linear process progressing from basic research to clinical research and, ultimately, to community practice. Despite widespread recognition of the existence of feedback in an otherwise linear process, it was often assumed that there needed to be a complete evidence base before the innovation could be implemented in practice. However, the evidence base does not need to be optimal prior to starting to implement, he said. The challenge for the future is recognizing that researchers have an opportunity to generate evidence at all stages of implementation, and adapting to incoming evidence in real time, Chambers said.

UPDATING THE THINKING ABOUT IMPLEMENTATION SCIENCE

There are several outdated assumptions about implementation science that could be superseded by new knowledge, Chambers said. First, evidence-based practices are not static and instead are constantly changing, as is the system in which those practices are being implemented. Implementation does not proceed one practice or test at a time, and consumers and patients are not homogeneous. Finally, choosing to deimplement unsuccessful practices or not to implement an evidence-based practice is not necessarily irrational. “There are all sorts of legitimate reasons why things are not implemented, and we need to understand those,” Chambers said. “We need to be thinking about the fit between our testing, the fit between our interventions and where they’re delivered, and how they fit into the workflow as well as the needs of the population that we’re trying to serve.”