2

The Draft National Science Advisory Board for Biosecurity Policy Framework, the Risk and Benefit Assessment, and Insights for the Policy Process

OVERVIEW OF THE NATIONAL SCIENCE ADVISORY BOARD FOR BIOSECURITY DRAFT WORKING PAPER

Samuel Stanley, the chair of the National Science Advisory Board for Biosecurity (NSABB), highlighted the valuable role played by the National Academies of Sciences, Engineering, and Medicine’s first symposium on gain-of-function (GOF) research in the deliberations of the NSABB’s Working Group on GOF Issues (hereafter, NSABB WG), in particular during the development of its Draft Working Paper and recommendations. Dr. Stanley reviewed the activities undertaken by the NSABB since the start of the deliberative process. He reviewed the charge to the NSABB and highlighted the outputs produced to date, including Framework for Conducting Risk and Benefit Assessments of Gain-of-Function Research in May 2015 (NSABB, 2015b) and Working Paper Prepared by the NSABB Working Group on Evaluating the Risks and Benefits of Gain-of-Function Studies to Formulate Policy Recommendations in December 2015 (NSABB, 2015a).

Dr. Stanley introduced the NSABB WG’s Draft Working Paper, noting that it included guiding principles for NSABB deliberations; analysis and interpretation of the formal risk and benefit assessment (RBA); consideration of ethical values and decision-making frameworks; analysis of the current policy landscape and potential policy options; preliminary findings from the NSABB WG’s analyses; draft recommendations for the NSABB’s consideration; and a number of important questions for further consideration. He reviewed key inputs into the work of the NSABB WG.

Dr. Stanley provided some reflections on the RBA prepared by Gryphon Scientific (Gryphon Scientific, 2015), describing it as rigorous and comprehensive and representing a monumental amount of work. The scope of the RBA addressed biosafety risks and biosecurity risks as well as benefits from GOF research. The study had allowed the NSABB to understand the different risks associated with research involving relevant pathogens and certain GOF experiments. It had helped them to identify and distinguish GOF studies that raise significant concerns from those that do not. Dr. Stanley indicated it assisted in identifying and evaluating the potential benefits of GOF studies and in comparing the potential benefits derived from GOF studies to those that may be achieved through alternative approaches.

Drawing on the ethics report prepared by Michael Selgelid (Selgelid, 2015), Dr. Stanley highlighted a number of important values to consider when evaluating research proposals involving GOF studies as well as when establishing mechanisms to review and/or make funding decisions about them. These included both substantive values (such as non-maleficence, beneficence, social justice, respect for persons, scientific freedom, and responsible stewardship) and procedural values (such as public participation and democratic deliberation, accountability, and transparency).

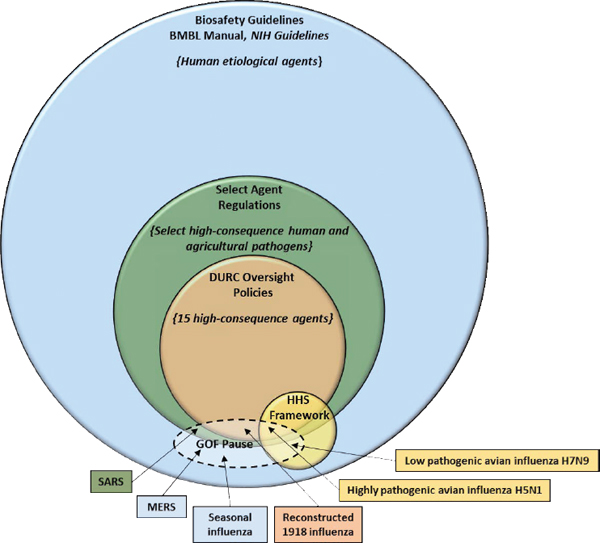

He noted that there are multiple policies and frameworks already in place for managing risks during the research lifecycle (see Figure 2-1). These include reviews of the scientific merit of proposed research; measures for biosafety oversight, such as the Biosafety in Microbiological and Biomedical Laboratories manual (CDC and NIH, 2007) and the NIH Guidelines for Research Involving Recombinant or Synthetic Nucleic Acid Molecules (NIH, 2013); the Federal Select Agent Program; the U.S. government policies for federal and institutional oversight of life sciences dual use research of concern (White House, 2012, 2014b); the Department of Health and Human Services framework for guiding funding decisions about certain GOF studies with highly pathogenic avian influenza (HHS, 2012); and measures that relate to sharing and communicating scientific findings and research products. Dr. Stanley noted that the success of these measures depends on effective compliance and implementation. He noted that there were different levels of oversight depending on what pathogen was involved and what was being done with it.

Dr. Stanley then summarized the key findings and recommendations from the NSABB WG’s Draft Working Paper. The five key findings are:

- There are many types of GOF studies and not all of them have the same level of risks. Only a small subset of GOF studies—GOF studies of concern—entail risks that are potentially significant enough to warrant additional oversight;

- The U.S. government has effective policy frameworks in place for managing risks associated with life sciences research (see Figure 2-1). There are several points throughout the research life cycle where, if the policies are implemented effectively, risks can be managed and oversight of GOF studies could be applied;

- Oversight policies vary in scope and applicability, therefore, current oversight is not sufficient for all GOF studies that raise concern;

- There are life sciences research studies that should not be conducted on ethical or public health grounds if the potential risks associated with the study are not justified by the potential benefits. Decisions about whether GOF research of concern should be permitted will entail an assessment of the potential risks and anticipated benefits associated with the individual experiment in question. The scientific merit of a study is a central consideration during the review of proposed studies but other considerations and values are also important; and

- The biosafety and biosecurity issues associated with GOF studies are similar to those issues associated with all high containment research, but a small subset of GOF studies have the potential to generate strains with high and potentially unknown risks. Managing risks associated with all high containment research requires Federal-level oversight, institutional awareness and compliance, and a commitment by all stakeholders to safety and security. Biosafety and biosecurity are international issues requiring global engagement. (NSABB, 2015a: 3-4)

NOTE: BMBL = Biosafety in Microbiological and Biomedical Laboratories; DURC = dual use research of concern; GOF = gain-of-function; HHS = Department of Health and Human Services; MERS = Middle East respiratory syndrome; NIH = National Institutes of Health; SARS = severe acute respiratory syndrome.

SOURCE: National Science Advisory Board for Biosecurity, 2015a: 27.

The NSABB WG’s Draft Working Paper also includes four recommendations:

- Research proposals involving GOF studies of concern entail the greatest risks and should be reviewed carefully for biosafety and biosecurity implications, as well as potential benefits, prior to determining whether they are acceptable for funding. If funded, such projects should be subject to ongoing oversight at the federal and institutional levels;

- In general, oversight mechanisms for GOF studies of concern should be incorporated into existing policy frameworks. The risks associated with some GOF research of concern can be identified and adequately managed by existing policy frameworks if those policies are implemented properly. However, the level of oversight provided by existing frameworks varies by pathogen. For some pathogens, existing oversight frameworks are robust and additional oversight mechanisms should generally not be required. For other pathogens, existing oversight frameworks are less robust and may require supplementation. All relevant policies should be implemented appropriately and enhanced when necessary to effectively manage risks;

- The risk-benefit profile for GOF studies of concern may change over time and should be re-evaluated periodically to ensure that the risks associated with such research is adequately managed and the benefits are being realized.

- The U.S. government should continue efforts to strengthen biosafety and biosecurity, which will foster a culture of responsibility that will support not only the safe conduct of GOF research of concern but of all research involving pathogens. (NSABB, 2015a: 4-5)

A key issue related to the first finding and recommendation is the question of what constitutes “GOF studies of concern.” As Dr. Stanley explained:

GOF research of concern would be a study that can be anticipated to generate a pathogen that is, one, highly transmissible in a relevant mammalian model, two, highly virulent in a relevant mammalian model, and three, is likely more capable of being spread among human populations than currently circulating strains of the pathogen. The first two characteristics are intended to involve the concept of the threshold. That is, the generated pathogen would need to be highly transmissible and highly virulent. Studies of pathogens with moderate virulence and transmissibility entail risks of course, but in general, those risks can be managed through existing mechanisms. The third criterion is intended to capture the concept of pandemic potential. That is, a pathogen could spread rapidly among human populations, either because there’s no population immunity, no available counter-measures, or for some other reason. (Stanley, 2016)

The question of the appropriate criteria for defining GOF studies of concern was a recurring theme in subsequent discussions.

Dr. Stanley went on to explain that the NSABB WG had also identified a number of principles for guiding funding decisions related to GOF studies of concern (see Box 2-1).

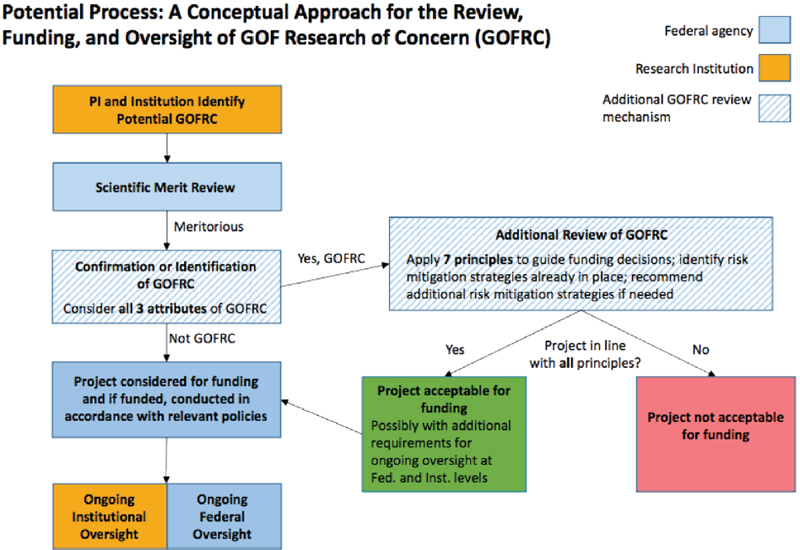

To further assist in determining how such arrangements might function in practice, the NSABB WG had continued to develop the conceptual approach for the review, funding, and oversight of GOF studies of concern, including a new diagram (see Figure 2-2), which Dr. Stanley presented at the symposium. It includes activities to be undertaken at the institutional and federal levels and details what additional steps would be required for GOF studies of concern. He added that, as discussed at the NSABB’s January meeting and in the NSABB WG’s Draft Working Paper, the NSABB had highlighted a number of questions that needed further consideration and input (NSABB, 2015a: 46). He said that the NSABB WG was also considering a new question: “What type of body should be tasked with the high-level review of GOF research of concern. Would a FACA-like1 committee be desirable, or as now envisioned by NSABB, can such reviews be accomplished by federal agencies, or other groups internal to the United States government?”

Dr. Stanley concluded by saying that the NSABB would continue working on its recommendations, with plans for a meeting scheduled for May 24, at which the final report would be discussed and possibly voted on. He encouraged the participants to continue to submit comments to the NSABB and to take an active role in the symposium discussions.

_______________

1 “The Federal Advisory Committee Act [FACA] was enacted in 1972 to ensure that advice by the various advisory committees formed over the years is objective and accessible to the public. The Act formalized a process for establishing, operating, overseeing, and terminating these advisory bodies” (General Services Administration, http://www.gsa.gov/portal/category/21242).

Harvey Fineberg, chair of the Symposium Planning Committee, then moderated an open discussion of the NSABB WG’s Draft Working Paper. In his introductory remarks, Dr. Fineberg highlighted the importance of determining what does, or should, qualify as GOF studies of concern and the subset of research that may warrant additional oversight. He recalled the three characteristics identified by the NSABB WG and stressed the importance of defining the threshold between research of concern and other studies. He commented that the proposed definition did not take into account the starting point of virulence, transmissibility, or resistance of the pathogen. “If you have a very resistant organism that is very virulent if contracted, and all you want to do is to test whether the function of transmissibility could be enhanced, why would that be less of concern than starting with a less virulent, less resistant, less transmissible organism, and trying to produce increased function along all three dimensions?” He suggested that conceptually it might make more sense to think about “zones” of GOF research where concerns arise, because any combination of the three, or any one, two, or three, leads to a zone of concern outside of what the native organism represents (Fineberg, 2016).

NOTE: GOF = gain-of-function; PI = principal investigator.

SOURCE: Stanley, 2016.

Dr. Fineberg commented that the research enterprise is generally positive because it reveals truths of nature. But there could be a class of investigations that provoke scientific, ethical, or social concerns. In such cases, he felt, the burden of proof as to the value of a specific piece of research would move to those wanting to pursue it.

In conclusion, he added to Margaret Hamburg’s comments, noting that, although the NSABB was focused on recommendations for U.S. policy, this was intrinsically a global challenge. He expressed the hope that during the symposium participants would consider the issues related to the development of a global regime to manage this class of research of concern, in addition to and beyond any national regime.

Discussion

Joseph Kanabrocki from the University of Chicago and Kenneth Berns from the University of Florida, co-chairs of the NSABB WG, joined Dr. Stanley on stage for the discussion.

The discussion that followed highlighted several themes from the presentations. The scope of assessing risks and benefits was explored, with questions raised by George Gao from the Chinese Academy of Sciences and the Chinese Center for Disease Control and Prevention about why there was comparatively little focus on loss-of-function experiments, given the difficulty of predicting which method would reduce, or increase, or enhance a virus’s virulence or transmissibility. More broadly, Keiji Fukuda from the World Health Organization noted connections to other issues in which life sciences research lies at the heart of safety or security concerns, such as food security or genetically modified organisms. He highlighted the importance of engaging with access and benefit-sharing regimes.

Alternative approaches to tying increased oversight to funding were discussed. Some participants felt that direct regulatory approaches would be preferable. A review of different policy options from a recently published commentary was presented by Thomas Inglesby from the Center for Health Security, University of Pittsburgh Medical Center (UPMC), that included lifting the moratorium on GOF research; seeking an international consensus; securing national and international agreement to restrict the performance of GOF studies of concern; designating a board; establishing clear red lines for GOF studies of concern; and requiring the purchase by research institutions of specific liability insurance policies (Lipsitch et al., 2016). Megan Palmer from Stanford University reflected that several of the questions identified by the NSABB WG as requiring further consideration corresponded to some of the tasks given to the NSABB by the White House. She also asked the NSABB WG to provide key lessons on the limitations of expertise or limitations in the process that might be fed into broader or future discussions on the oversight of life science research.

The importance of international collaboration was stressed and the potential for those wishing to undertake GOF studies of concern to relocate to less restrictive environments was noted by Abdulaziz Alagaili from King Saud University in Saudi Arabia. He also argued that any oversight frameworks should apply to the private sector as well as academia. Piers Millett from Biosecure suggested that an international component should be a significant aspect of future work, including the allocation of necessary resources; a mandate for long-term, sustained engagement; and genuine two-way conversations (rather than the presentation of a finalized solution). He also suggested that any international discussions should be co-hosted by relevant health and security entities to prevent perceptions of bias.

The outcomes from discussions held in other countries about GOF research were highlighted. Filippa Lentzos from King’s College London, for example, in a comment made via the Web, noted findings from these discussions of the

- Lack of clear and convincing justifications for GOF studies of concern;

- Role of personal or institutional interests in agenda setting;

- Global dimension of GOF research of concern and the need for an international solution;

- Potential for accidents, abuse, and malpractice, and the intricate relationship between trust and accountability;

- Instability of political contexts and changing security environments, and the need for transparency in biodefense-related research; and

- Need for clear red lines on the most dangerous GOF experiments that apply to the public, private, and military sectors.

She also raised the issue of how to ensure that the lay public’s voice is heard and incorporated into the decision-making process around GOF research of concern.

Another participant, Catherine Rhodes from the University of Cambridge, recalled a recent meeting in the United Kingdom in which influenza researchers indicated an interest in developing international approaches to the oversight of relevant research but feared any such process becoming dominated by existing U.S. policy discussions.

On the question of how to define “GOF studies of concern,” some participants objected to requiring that all three of the characteristics recommended by the NSABB WG be met. Dr. Millett felt that the threshold for risk requiring additional oversight had been set too high and that any research that would be expected to produce a pathogen with any two of the characteristics should be considered for additional oversight. In this respect, Marc Lipsitch from Harvard University noted that the original GOF experiments that prompted the international controversy were initially believed to have met only two of these criteria, and eventually met only one. Thus, those experiments would not be subject to any of the oversight provisions under discussion. He and Dr. Inglesby argued that the third criterion was superfluous and that only issues of transmissibility and virulence need be considered. Dr. Lipsitch cited the original White House charge (White House, 2014a), public comments submitted to the NSABB by the Infectious Diseases Society of America (IDSA, 2016), and “common sense” in support of his argument. John Steel from Emory University raised technical questions about how to measure these characteristics; for example, what does “highly” transmissible mean? He also cited the shortcomings of animal models to approximate transmissibility and called for additional guidance on how to make such decisions in practice.
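The competing readings of the definition can be compared side by side. The following Python sketch is only an illustration: the class, field, and function names are hypothetical, and the example profile is a made-up case rather than a description of any actual experiment. It encodes the draft rule (all three characteristics), the any-two alternative suggested by Dr. Millett, and the transmissibility-plus-virulence reading argued by Dr. Lipsitch and Dr. Inglesby.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StudyProfile:
    """Anticipated properties of the pathogen a study would generate (hypothetical model)."""
    highly_transmissible: bool  # criterion 1: in a relevant mammalian model
    highly_virulent: bool       # criterion 2: in a relevant mammalian model
    pandemic_potential: bool    # criterion 3: spreads more readily than circulating strains

    def criteria_met(self) -> int:
        # Count how many of the three characteristics are anticipated.
        return sum((self.highly_transmissible,
                    self.highly_virulent,
                    self.pandemic_potential))


def rule_all_three(study: StudyProfile) -> bool:
    """Draft definition: all three characteristics must be met."""
    return study.criteria_met() == 3


def rule_any_two(study: StudyProfile) -> bool:
    """Alternative: any two characteristics trigger additional oversight."""
    return study.criteria_met() >= 2


def rule_first_two(study: StudyProfile) -> bool:
    """Alternative: only transmissibility and virulence are considered."""
    return study.highly_transmissible and study.highly_virulent


# Hypothetical study: transmissible and virulent, but pandemic potential not shown.
example = StudyProfile(highly_transmissible=True,
                       highly_virulent=True,
                       pandemic_potential=False)

for rule in (rule_all_three, rule_any_two, rule_first_two):
    print(f"{rule.__name__}: {rule(example)}")
```

Under these assumptions the hypothetical study falls outside the draft definition but inside both alternatives, which is the gap several participants pointed to.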

The discussion also included a number of other specific reflections on the NSABB WG’s Draft Working Paper and its recommendations:

- Dr. Inglesby highlighted how important it was that any relevant regulations or other measures governing GOF studies of concern apply anywhere relevant research is being conducted, regardless of whether the laboratory receives federal funds or whether it is found in the public or private sectors.

- The value of the NSABB making its recommendations broad enough to fit GOF studies of concern with any pathogen, rather than just those covered by the moratorium, was noted by Dr. Millett. He also suggested that any characterization of GOF studies of concern should not be based upon taxonomy but instead focus on functional characteristics as contained in the draft definition.

- Dr. Selgelid from Monash University in Australia raised the possibility of making oversight arrangements progress along a spectrum rather than being treated as binary. In such a model, a single risk threshold would not be established (above which research would be governed by specific oversight measures), but rather increasing levels of oversight would apply as the relative risk of the work increases. He also commented that, rather than first making a judgment about the scientific merit of a study and then assessing whether it raised GOF issues of concern, it might be better to include considerations of risk at the earlier stage. If two studies show equal scientific merit and neither is considered of concern, then, other things being equal, would it be better to fund the less risky study if one cannot fund both?

- Questions as to the efficacy of the existing arrangement for addressing biosecurity information risks were raised by Dr. Inglesby and Dr. Millett, who encouraged further reflection on suitable oversight.

- Some participants, such as Dr. Millett, felt that bodies involved with assessing risks and benefits could not be housed within either the health or the security architecture but should be located inside a neutral agency.

- Questions about the interface between the proposed regulatory framework developed by the NSABB WG and existing arrangements for GOF experiments with specific agents, such as the one implemented by the National Institutes of Health (NIH), were raised by Nicholas Evans from the University of Pennsylvania. The ethics report also was discussed, with Dr. Evans suggesting that the scope of ethical issues related to GOF studies of concern was considerably broader than the scope of those included in the consideration of benefits in other areas, such as human subjects research. This, he suggested, would seem to increase the challenges in suitably reflecting potential benefits of GOF studies of concern.

Dr. Fineberg began the period of taking responses from the NSABB members by welcoming comments on any issue but said he hoped that they would reflect in particular on the “core question of what qualifies as being of concern.” He noted that there had been a variety of viewpoints expressed about the necessity of meeting all three criteria, the implications of thresholds versus a spectrum, and the question he had raised earlier of whether the starting point could be enough to make research meeting only one criterion “of concern.” Dr. Kanabrocki responded by clarifying that the NSABB had not changed its thinking, as some had suggested, about the third criterion. The NSABB WG had recognized that there was some misinterpretation of the original language to limit the criteria to only resistance to countermeasures. Instead the intent is to capture the broader question of pandemic potential. Dr. Berns added that he emphasized “what’s important is what you wind up with,” that is, the potential for a pandemic and this led to the question of whether or not there were existing defense mechanisms. He also commented on the difficulty of predicting the consequences of research and the challenges in attempting to quantify such risks. Dr. Stanley commented that the issue was whether the research could create risk significantly above the existing risk for that pathogen. Again, this tied to the questions of natural immunity or countermeasures.

In response to other questions and comments, Drs. Stanley, Kanabrocki, and Berns acknowledged the importance of the issues that had been raised, and they commented that the NSABB had struggled, for example, with the question of whether to offer broad recommendations or the more specific guidance for which some participants were calling. Dr. Berns commented that, even more than the definition, he thought the NSABB WG had struggled with the level at which the decision would be made. Should the final decision be made inside the government or by an outside group, such as a FACA committee? Efficiency might suggest handling the decision inside the government, but the public interest in transparency would argue strongly for a FACA committee. They also noted that, although the NSABB was tasked to make recommendations for the U.S. government, they recognized the importance of the international dimensions of the issue. Dr. Stanley thought all the members of the NSABB, as well as its sponsors, believed that it was necessary to “strive for a global solution here, and that some type of harmonization essentially of these processes would be extraordinarily valuable.” The process was definitely not completed and they welcomed the input the NSABB WG would receive during the 2 days of the symposium.

LESSONS FROM THE RISK AND BENEFIT ASSESSMENT

Charles Haas from Drexel University, a member of the Symposium Planning Committee, introduced the goals of the session. The risk and benefit assessment (RBA) had already been reviewed in detail at the January 2016 NSABB meeting.2 The purpose of the session was therefore to build on those prior discussions to consider how risk assessment more broadly could serve the important roles that the NSABB’s draft findings and recommendations, including its proposed conceptual approach for making decisions about GOF studies of concern, had given to judgments about risks and benefits.

Rocco Casagrande from Gryphon Scientific provided an overview of the RBA (Gryphon Scientific, 2015) as basic background for the session. The purpose of this 8-month study was to provide data on the risks and benefits associated with research on modified strains of influenza viruses and the coronaviruses. The RBA had been divided into three major tasks, each of which required a distinct data collection and analysis approach: a quantitative biosafety risk assessment; a semi-quantitative biosecurity risk assessment; and a qualitative benefit assessment. Dr. Casagrande noted that the RBA was comparative; it determined the change in risk from research on GOF pathogens compared to research on wild type pathogens and identified the benefits to science, public health, and medicine afforded by GOF research compared to alternative research and innovations.

_______________

2 The webcast of those discussions, along with copies of presentation slides and written public comments, are available at http://osp.od.nih.gov/office-biotechnology-activities/event/2016-01-07-130000-2016-01-08-220000/national-science-advisory-board-biosecuritynsabb-meeting.

Dr. Casagrande presented key findings from the RBA. The biosafety risk assessment included a map of the series of events necessary for a laboratory incident to result in a pandemic. The probability of each event resulting in the next necessary event was determined. The RBA established that only a small minority of laboratory incidents with the most contagious influenza viruses would cause a local outbreak, and only a minority of those would lead to a global pandemic.
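The chain-of-events logic described above can be sketched as a product of conditional probabilities. The following Python example is a minimal illustration, not the RBA's actual model: the function name, the step labels, and every numeric value are hypothetical placeholders chosen only to show the arithmetic.

```python
from math import prod


def chain_probability(step_probabilities):
    """Probability that every step in a chain of events occurs.

    Each entry is the conditional probability that one event leads to the
    next necessary event, given that all earlier events already happened.
    """
    steps = list(step_probabilities)
    for p in steps:
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"not a probability: {p}")
    return prod(steps)


# Hypothetical placeholder values (NOT taken from the RBA).
steps = {
    "laboratory incident -> local outbreak": 0.01,
    "local outbreak -> global pandemic": 0.1,
}

p_pandemic = chain_probability(steps.values())
print(f"P(pandemic | incident) = {p_pandemic:.4f}")
```

Because each factor is at most 1, every additional necessary step can only lower the overall probability, which is why a chain of individually unlikely transitions yields a small end-to-end probability.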

The published RBA had identified the pandemic strain of the 1918 H1N1 influenza virus as posing the greatest risk. However, subsequent information made available to Gryphon Scientific at the January NSABB meeting by Dr. Kanta Subbarao from NIH showing a high degree of cross protection afforded by exposure to the 2009 influenza against the 1918 influenza enabled a reassessment. Further analysis determined that the naturally circulating 1957 H2N2 influenza virus became the “riskiest” pandemic strain because its antigenic profiles would cause about 100 times more global cases, although it is only one-tenth as deadly as the 1918 strain.3 As a result, it became the comparator against which other risks should be evaluated. The RBA also determined that the riskiest modified strain was a 1918 H1N1 strain altered to evade residual immunity or to be otherwise more transmissible.
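One way to read this comparison is sketched below in Python, assuming that both ratios are relative to the 1918 strain and that expected deaths scale as cases multiplied by the fatality rate. The variable names are illustrative, and the result is an inference from the two reported ratios, not a figure quoted from the RBA.

```python
from fractions import Fraction

# Ratios reported for 1957 H2N2 relative to 1918 H1N1 (after the reassessment).
relative_cases = Fraction(100)       # about 100 times more global cases
relative_fatality = Fraction(1, 10)  # about one-tenth as deadly

# Assumption: expected deaths = cases x fatality rate, so the ratios multiply.
relative_deaths = relative_cases * relative_fatality
print(f"Relative expected deaths: about {relative_deaths}x")
```

Under that assumption the much larger number of cases outweighs the lower fatality rate, which would be consistent with the 1957 strain becoming the comparator.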

Other key findings from the biosafety assessment included

- Manipulating GOF seasonal influenza strains at the BSL3 level may compensate for the increase in risk posed by the modified strains, largely because the extra system of respiratory protection decreases the risk of a laboratory acquired infection.

- Some of the manipulations that could theoretically increase risk may not be achievable or desirable. For example: (i) a strain that can overcome protective vaccination increases risk only if it can evade vaccine protection via immune modulation, not antigenic change; (ii) the scientific value of increasing the transmissibility of influenza virus beyond that of the most transmissible strains (or final titer beyond 1E8) is questionable and perhaps infeasible; (iii) there is no animal model of transmission for the coronaviruses, so manipulation of this trait is not currently achievable; and (iv) some estimates suggest that severe acute respiratory syndrome coronavirus (SARS-CoV) may already be more transmissible than was estimated in the RBA, in which case further manipulation would not affect risk.

_______________

3 The details of the Gryphon Scientific analysis are available in supplemental material on its website: http://www.gryphonscientific.com/wp-content/uploads/2016/03/Supplementalinfo%E2%80%93Protection-against-Infection-with-1918-H1N1-Pandemic-Strain.pdf. The final version of the report was released in April 2016 (Gryphon Scientific, 2016).

The biosecurity risk assessment had two components: an assessment of the risks from acts targeting a laboratory; and security risks derived from the information generated by the studies. Key findings of the assessment of risks from acts targeting a laboratory included

- The traits that drive risk are similar when considering biosafety and biosecurity because the pathogens are transmissible. How the initial infections were caused is of little consequence once a local outbreak begins.

- Biosecurity events are often predicted to involve the covert infection of the public, so this type of an infection is much more likely to cause a global outbreak. By contrast, laboratory workers benefit from health surveillance and isolation protocols not available to the general public.

- To match the risk posed by biosafety incidents given a historical rate of laboratory acquired infections, a biosecurity event that covertly infects a member of the public must occur only once every 50-200 years. These events include theft of an infected animal, contaminated piece of equipment, or viral stock. Given the frequency with which these biosecurity events have happened, the RBA suggested that biosecurity be given as much consideration as biosafety.

The information biosecurity risk assessment analyzed “the risk that a malicious actor might misuse the information in publications describing GoF research” (Gryphon Scientific, 2015: 212). Key findings included

- Minimal information risk remains for GOF studies in influenza viruses because dual use methods have already been published.

- Significant information risk remains for GOF studies in the coronaviruses, but these studies are hampered by a lack of model systems.

- Information risk could easily be generated by research on other transmissible pathogens.

The benefits assessment identified GOF studies providing critical or unique benefits for both

- Influenza viruses, including studies that enhance viral growth from low titer; lead to evasion of residual or induced immunity; enhance virulence; enhance transmissibility; and lead to evasion of therapeutics in use and in development. And,

- Coronaviruses, including studies that alter host tropism; enhance virulence; and lead to evasion of therapeutics in development.

Dr. Casagrande highlighted a number of lessons learned during the execution of the RBA. He stated that the distinction between seasonal and pandemic flu is artificial because an old seasonal flu strain could become a new pandemic strain (as highlighted by 1957 H2N2 replacing 1918 H1N1 as the riskiest pandemic strain). He noted the lack of data on human reliability in life sciences laboratories in contrast to data from other well-researched sources such as the nuclear, chemical, and transportation industries. Those data show that human error is the most common cause of accidents. To use an example from the life sciences, it is more common for a lab worker not to use a powered air purifying respirator (PAPR) properly than for a PAPR to be defective. He also cited the difficulty posed by having no risk benchmark for work with wild type pathogens and the difficulty posed by restriction of the RBA to influenza and coronaviruses.

The RBA was then applied to a number of specific experiments, including those that

- Include virulence factors from 1918 H1N1 influenza in a 2009 H1N1 strain, which did not increase the probability that an outbreak escapes local control and indicated that global consequences scale linearly with case fatality rate.

- Aim to create antigenically distinct strains of a recently circulating seasonal influenza strain, which resulted in strains having a 2-3-fold increase in risk of escape, capable of inflicting 10 times more global deaths, resulting in a 20-30-fold increase in risk of infection. The meaning of this risk increase is difficult to interpret in the absence of standards for risk tolerance but suggests that more controls and measures should be taken to control infection risk from this modified pathogen than from the wild-type pathogen.
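
The 20-30-fold figure in the second example is consistent with treating overall risk as the product of the probability of escape and the size of the consequences. The sketch below illustrates only that arithmetic; the function name and the simple multiplicative model are assumptions made for illustration, not the RBA's actual methodology.

```python
def relative_risk(escape_fold: float, consequence_fold: float) -> float:
    """Fold change in risk versus the wild type.

    Assumes risk = probability of escape x consequence, which is a
    simplification adopted here only for illustration.
    """
    return escape_fold * consequence_fold


# Fold changes quoted in the text: 2-3x risk of escape, 10x global deaths.
low = relative_risk(2.0, 10.0)
high = relative_risk(3.0, 10.0)
print(f"Combined increase: {low:.0f}- to {high:.0f}-fold")
```

Under this reading, the combined range follows directly from the two component ranges, which is why a benchmark for tolerable risk would still be needed to interpret it.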

Dr. Casagrande also noted that bench researchers may not be familiar enough with the epidemiological properties of pathogens to properly characterize their strains. Guides or tools are needed to easily obtain parameter values for wild type strains and, perhaps, to aid with the calculations.

A series of commentators provided reflections on the RBA. They were asked to consider

- What they know needs to occur, based on their prior experience in the context of policy making, to make use of the Gryphon Scientific analysis and other information.

- The potential value of risk–benefit analysis in making decisions on individual cases of proposed research projects rather than the role of a study intended to cover an entire class (i.e., GOF) of investigation, which was the purpose of the Gryphon Scientific analysis.

Louis (Tony) Cox from Cox Associates highlighted the value of attempts to quantify risk in the RBA (Cox, 2016). Dr. Cox also discussed risk management, or what to do about that risk, especially as it related to determining which proposals to fund. Dr. Cox highlighted the value of clearly defined decision rules and conditional decision rules, detailing the conditions that would need to be met before a proposal might be funded. Dr. Cox reflected that efforts to determine the maximum acceptable risk were not useful approaches in a GOF setting. He argued that both the context and the benefits needed to be taken into account and suggested that attempting to improve the risk–benefit profile may be a more suitable approach. Dr. Cox suggested that “arbitrary coherence”—accepting risks because they are less risky than those we already accept—was also not appropriate in a GOF context. He believed that benchmarks and precedents were not necessarily the most appropriate basis for decision making but supported gathering more information before making funding decisions, including on opportunity costs. He asserted that there is a need to learn from past experience and to make the decision-making process adaptive. Dr. Cox also identified a series of specific proposals for strengthening funding decisions on GOF studies of concern (see Box 2-2).

Kara Morgan from Battelle Memorial Institute reminded participants of the difficulty of decision making on low-probability, high-risk events. Dr. Morgan introduced a number of tools developed to assist in such situations and help match decision-making complexity to potential risk. She discussed three frameworks for decision making, describing the frameworks as part of a continuum, enabling their adaptation to different contexts (see Box 2-3).

Dr. Morgan concluded that decision making is a social process, not an analytical one. There is a need for a process to help move from analysis to a decision. She advised the symposium that decision frameworks, rules, and process were just as important as the analysis.

Adam Finkel from the University of Pennsylvania set out five factors to strengthen risk–benefit analysis that should be integrated into the development of the policy framework for GOF studies of concern (see Box 2-4).

Dr. Finkel noted considerable differences in opinion among different risk estimates of GOF studies of concern. He argued that risk was not a binary state and this provided the potential for a hierarchy of decision rules. He also noted the importance of including justice and equity for those individuals affected by risk. Dr. Finkel commented that it becomes much harder to assess risk when uncertainties exist and they are uncorrelated. He also felt that it was necessary to do a better job of communicating the benefits of GOF research. He called for further efforts to identify where the faults that lead to risk are occurring. He introduced a new study of existing best practices in regulatory excellence based on the concept of “listening, learning, and leading” developed through work in the Canadian energy sector (Coglianese, 2015).

Dr. Finkel discussed the importance of basic laboratory safety. He believed the best way to prevent accidents from infecting the population was to prevent them from infecting laboratory workers. Dr. Finkel concluded by encouraging the use of a more solution-focused risk–benefit analysis—where options are not restricted to a specific limited set of options—but one which focuses on the underlying policy need. He provided examples from sources of drinking water and synthetic biology. He cautioned that uncertainties rarely cancelled each other out in practice.

Discussion

The resulting discussion began by highlighting the importance of having good baseline data against which to measure risk: for example, through a national database or framework of laboratory near misses, accidents, or disclosures, as discussed by James Welch from the Elizabeth R. Griffin Foundation. Panelists noted that the U.S. government had already committed to develop such a database (U.S. Government, 2015: 4).4 The need for additional resources to undertake focused research to fill data gaps was highlighted by Gigi Kwik Gronvall from the UPMC Center for Health Security. Shortages of data on benefits and risks were felt by several participants to apply to infectious disease research and emerging areas of life science research more broadly. The importance of tools to enable scientists to operate safely and securely on an ongoing basis was also noted by Dr. Gronvall. There was also a discussion, prompted by Allison Mistry from Gryphon Scientific, of the need to differentiate between conducting functional changes in wild type as opposed to research backbones or chassis. The value of including comparative risks in different chassis in definitions of GOF and GOF studies of concern was also explored.

Participants also considered the limits of comparing risks and benefits in this type of research. The discussion explored the challenges in suitably reflecting the potential public health benefits of research. Corey Meyer from Gryphon Scientific, who had led the benefits assessment portion of the RBA, made a number of comments. She said that although it may be possible, at least qualitatively, to compare the risks and benefits of research for public health, she was not sure that was true for the benefits of scientific knowledge. She also wanted to underscore that “while the risks of the research are immediate in that they are occurring at the time the research is being conducted, the benefits to public health will be realized in the future. And there is significant uncertainty in how long it will take for those benefits to be realized because translation of basic science research into public health benefits is complex and depends on many other factors.”

Adam Finkel commented that there is a substantial literature on discounting and the time value of benefits on which one could draw. He thought the problem was not intractable and offered the example of climate change research, where he said there is a movement toward lower discount rates. In this area, “the future speaks more loudly than we have allowed in the past.” So that part is not at all intractable. He cited the example from Michael Selgelid’s white paper of benefits in terms of expected lives saved.

_______________

4 The recommendation, “Establish a new voluntary, anonymous, non-punitive incident-reporting system for research laboratories that would ensure the protection of sensitive and private information, as necessary,” is one of the products of the Federal Experts Security Advisory Panel, whose report was made public in October 2015 (U.S. Government, 2015).

Rocco Casagrande commented that the daunting part of assessing future benefits is not how much to discount potential lives saved but how to make an estimate of how likely it is that any scientific discovery will lead to a public health benefit. Tony Cox commented that it also depends on what other research is done. And Kara Morgan noted that even failed experiments may offer useful lessons. John Steel also noted that such research can help ameliorate disease events that happen infrequently but that potentially result in tens of millions of deaths.
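
The two factors raised in this exchange, the discount rate and the likelihood that a discovery translates into a public health benefit, can be combined in a single expected-value calculation. The following is a minimal sketch under stated assumptions: the function name, the standard exponential discounting formula, and every input value are hypothetical and were not presented at the symposium.

```python
def expected_discounted_lives(lives_saved: float, probability: float,
                              discount_rate: float, years: float) -> float:
    """Expected lives saved, weighted by the chance that the research
    translates into a benefit and discounted back to the present."""
    return probability * lives_saved / (1.0 + discount_rate) ** years


# Hypothetical inputs chosen only to show the mechanics: a 5 percent chance
# of saving 10,000 lives, realized 20 years after the research is done.
for rate in (0.07, 0.03, 0.01):
    value = expected_discounted_lives(10_000, 0.05, rate, 20)
    print(f"discount rate {rate:.2f}: {value:.1f} expected lives")
```

Lower discount rates give future benefits more weight, but the result scales just as directly with the translation probability, which is the quantity Dr. Casagrande described as hardest to estimate.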

Some participants, such as Marc Lipsitch, questioned the findings on the unique benefits of certain GOF research, suggesting that the knowledge could have been generated using alternative approaches. In his view, the net contribution of GOF research to knowledge on influenza viruses has been overstated. He also said that the knowledge about mutations and phenotypes identified by Fouchier and Kawaoka had already been identified in previous safe experiments. The confirmation that they were important in the GOF context was new, but he asserted that their utility for public health prediction was so far unproven. “So the net benefit for public health is much smaller than the net knowledge.” Issues around identifying and ensuring sufficient oversight of dual use research more broadly were also discussed, including that, as life science and biotechnology tools are getting more powerful, the potential for their misuse for malign purposes might also increase. On the RBA, some participants, such as Piers Millett, reflected that the process of updating the risk comparator from the 1918 influenza strain to the H2N2 1957 pandemic strain was a practical example of the importance of the inclusion of the concepts of innate or acquired immunity against pathogens in the third set of characteristics proposed by the NSABB to define GOF studies of concern. He also suggested that the RBA was a missed opportunity to explore the international opportunity costs associated with different decisions on GOF studies of concern, from a moratorium on relevant research, through increased oversight, to taking no additional steps.

The shortcoming of existing arrangements in identifying and mitigating biosecurity information risks was noted by some participants, including Victoriya Krakovna from the Future of Life Institute, Dr. Millett, and Megan Palmer. They argued that these assessed risks were only low because the critical information had already been released into the public domain over the last decade. This led to questions about the efficacy of current arrangements to identify potential future biosecurity information risks, such as those for coronaviruses highlighted in the RBA. The value of encouraging comments and reflections on the RBA and associated methodologies from a wider group including different types of expertise was also noted by Megan Palmer.

THE SCIENCE OF SAFETY AND THE SCIENCE OF PUBLIC CONSULTATION

Baruch Fischhoff from Carnegie Mellon University, a member of the Symposium Planning Committee, opened the session by explaining that there had been a successful session at the first Academies GOF symposium, which offered an introduction to the lessons from research into human factors, public consultation, and risk assessment to inform the preparations by NIH and the NSABB for the RBA. This year the planning committee had organized another session focused on the insights that social science research can offer about the design and implementation of federal oversight for GOF studies of concern. The panel included experts in organizational culture, human factors, and public consultation who would offer comments on the NSABB draft recommendations and specific suggestions for the ultimate choices to be made by the U.S. government.

Ruthanne Huising of McGill University introduced the insights about compliance with safety regulations in life science laboratories gained from past research in which she had taken part (see, e.g., Huising and Silbey, 2011). Since 2012 she had also been observing Canadian regulators design new biosafety and security regulations that went into force in December 2015 using an intensive public consultation process.

Dr. Huising discussed behavior and decision making as mediated by social organizations, which can include both formal social structures (such as organizations and families) and what she termed emergent systems of meaning (“culture”), which include norms, values, and assumptions. The incidents that led to the GOF deliberative process had provoked extensive discussions of the existing culture of life sciences laboratories as this affected safety and security. In these and similar discussions, the concept of culture is often treated as both the “problem” (a “lax culture” or “insufficient culture”) and the “solution” (“build a culture of safety,” “change the culture”). Culture, she argued, is often understood as a managerial tool. She described how concepts of culture can be applied to understand how laboratories approach and implement safety provisions.

Dr. Huising described how culture might be shaped through socialization processes. Beginning with graduate training, researchers are observing and learning how successful members of their field think, talk, and act. They learn how competent, respected members of the community behave, potentially through their attention to safety, security, and risk.

Safety cultures can be designed, and Dr. Huising provided examples of the systems used by BP and Dow Chemicals. Such efforts tend to be top down and centralized, she noted. They can be slow to develop and expensive, and they often ignore differences in interests and resources. She suggested that safety cultures can also emerge, resulting in multiple, heterogeneous cultures. Such change often occurs in response to shocks, with new values and norms emerging. This approach can be slow, but it is self-reproducing. Dr. Huising felt this model might be more suitable for the scientific endeavor, in part because it would better reflect the nature of the organizational structure of research laboratories.

The organizational structure of relevant institutions can also impact culture, Dr. Huising noted, with administrative and academic laboratory components operating with different logics. Academic administration is organized in ways that give it considerable similarities to the organization of regulatory and other government agencies. In contrast, the laboratories, at least in theory, operate through collegial governance and a democratic approach to organizing. That said, principal investigators (PIs) have remarkable autonomy in how they organize and run their laboratories. Dr. Huising commented that “Decision making is highly decentralized, and often operates according to verbal agreements. Trust is a very important component in how things get done in these laboratories.” Because the laboratories work on soft money, they are often in flux, with continuously changing resources, members, and activities. She also noted that these different professional bureaucracies have implications for biosafety and biosecurity, in particular by determining responsibility for legal and administrative requirements, allocating authority to enforce those requirements, and facilitating compliance.

Dr. Huising presented key findings from studies of safety culture in biology laboratories. She emphasized that these studies came from BSL2 facilities because of the difficulties in obtaining sustained access to higher containment (BSL3, BSL4) facilities. The findings include:

- Researchers experience compliance requests as intrusions and impediments to their work. They communicate safety as peripheral to their research work and sometimes delegate it to students. They are most likely to incorporate safety features into their practices when they align with efforts to control physical materials.

- Most violations are minor (housekeeping). A small number of laboratories account for the majority of violations.

- Organizations depend on environmental, health, and safety (EHS) staff (such as Biosafety Officers) to ensure compliance.

With regard to the last finding, she noted that the roles of the EHS staff included buffering researchers from record-keeping, inspections, corrections, and helping to maintain compliance. They negotiate increased daily compliance by working in laboratories, generating familiarity, trust, and relationships, which also gave them the ability to anticipate problems and to identify emerging dangers. In many cases, the EHS staff was able to draw on requirements and regulations to increase their resources and authority in relation to faculty, but she commented that these “boots on the ground” were chronically underfunded.

She noted the emergence of a “responsibility movement” in other facets of the life sciences, with examples of good practice in green chemistry, nanotechnology, synthetic biology, and the citizen science movement. Dr. Huising concluded by providing a number of specific recommendations for developing policy options for GOF research (see Box 2-5).

Gavin Huntley-Fenner from Huntley-Fenner Advisors introduced concepts in human factor research relevant to the NSABB WG’s Draft Working Paper and recommendations. Dr. Huntley-Fenner highlighted that, in general, human error has increased proportionally as a contributor to accidents. He recalled that for laboratory biosafety, despite advances in technology, instruments, and personal protective equipment, the World Health Organization had asserted that “human error remains one of the most important factors at the origin of accidents” (WHO, 2006). He noted that there was a lack of data on human reliability in laboratories, and he stressed the importance of collecting more data on safety. He cited the conclusion in the RBA that “The state of knowledge of the rates and consequences of human errors in life science laboratories is too poor to develop robust predictions of the absolute frequency with which laboratory accidents will lead to laboratory acquired infections” (Gryphon Scientific, 2015: 3) to underscore the relevance for GOF policy deliberations.

There are a range of factors that can contribute to the emergence of error, including how physical and cognitive stresses undermine human reliability, according to Dr. Huntley-Fenner. He suggested that the comparative scarcity of accidents might still mask latent risks, with more numerous incidents and errors going unreported. He highlighted research by the Government Accountability Office in 2009 that concluded latent risks still exist in laboratories from underappreciated human error (GAO, 2009). He suggested that human factors research could provide tools for designing, implementing, and maintaining systems in which errors are mitigated when they occur. The benefits of incorporating human factors principles were potentially significant, with Dr. Huntley-Fenner suggesting that they could reduce risk associated with GOF studies of concern substantially. He noted that some simple approaches could yield a significant reduction of errors, such as the development and use of simple checklists, which had a significant impact in reducing surgical errors in hospitals in both developed and resource-poor countries (Haynes et al., 2009).

Progress has been made in other areas to address shortcomings in human factor safety data. For example, Dr. Huntley-Fenner discussed how the National Aeronautics and Space Administration has succeeded in mining data it already had in ways that provided insights into areas where it had less data, which was then used successfully to reduce risk (Chandler et al., 2010). He argued that limited relevant laboratory safety data do exist. For example, a survey of laboratory acquired infections in 68 institutions in Belgium indicated that 95 percent of the incidents involved human error (Willemarck et al., 2012: 14). He suggested that the human factors research community was well positioned to provide relevant data but more work was needed in high-containment laboratories.

Measuring incidents was only one necessary step; controlling incidents was also important, noted Dr. Huntley-Fenner. He highlighted research that showed the success of applying multifaceted controls. He also highlighted the importance of considering context. Guidance from the United Kingdom on human factors that result in noncompliance with standard operating procedures demonstrated that cutting corners was mainly “due to situational and organizational factors. These factors include, for example, time pressure, workload, staffing levels, training, supervision, and availability of resources” (Bates and Holroyd, 2012). Dr. Huntley-Fenner recommended rigorous data collection and sophisticated analytics to reduce risk associated with GOF studies of concern (see Box 2-6). The self-driving Google car was provided as an example of successfully gathering and leveraging data on human decision making and error to build a system that reduces those risks.

Monica Schoch-Spana from the UPMC Center for Health Security outlined four basic considerations for the design of public deliberations.

- Which public(s) to involve in deliberations—In the context of GOF studies of concern, Dr. Schoch-Spana suggested that considering three overlapping categories would be useful: the pure public, or naive citizens; the affected public, or persons or groups whose lives are altered or influenced by a policy decision; and the partisan public, or representatives of groups with vested interests or expertise in the policy matter. She also noted that each of these categories of the public had been engaged in past discussions on GOF studies of concern, with the affected public implied in the RBA, affected publics and the pure public noted in the ethics analysis, and partisan publics reflected in relevant publications and comments.

- What is the purpose for public(s) deliberation—Three distinct aims were highlighted: knowledge exchange, conveying information from policy makers to publics, or transmitting views, opinions, or attitudes from the publics to policy makers; innovation, eliciting rich unpredictable insights that come from crowd-sourcing a problem or from experiential, on-the-ground knowledge; and democratic accountability, ensuring broad representation in a decision about the common good. If the public deliberation on GOF studies of concern was intended for democratic accountability, Dr. Schoch-Spana noted it was necessary to give people the time, information, space, and authority they need to perform that role. Merely bringing “ordinary people” or a cross-section of society together to deliberate does not automatically achieve this aim.

She suggested a series of desirable characteristics for public deliberations on GOF studies of concern, including diversity, balance, civility, accountability, and consent.

- Which process enables the public to fulfill its purpose—The use of three types of processes in the GOF deliberative process was reviewed: communication, a form of transparency through putting out information for the public (for example, press releases, educational websites, and summary reports such as those made available by the first Academies GOF symposium and the NSABB GOF meetings, as well as making the RBA available online); consultation, a means of gathering input, such as through enabling public comments on draft NSABB recommendations and to the U.S. government on future funding and oversight policy; and collaboration, a more deliberative option to exchange ideas and share responsibility for making and implementing policy. To date, she felt that the life sciences and other partisan publics have had strong input but deliberation with the broad public has not yet been explored.

- On what problem will the public(s) deliberate—Dr. Schoch-Spana reviewed good practices in identifying problems, especially where there are conflicting values as to the public good, and for controversial and divisive topics. She used them to identify three questions on which the publics might deliberate for GOF studies of concern: (i) Despite potential contributions to public health, should studies that could produce a pathogen of pandemic potential be performed at all?; (ii) Are finite dollars better spent on experiments to create pathogens with pandemic potential (which produce unique knowledge) or on strengthening the rest of the flu preparedness portfolio?; and (iii) If any, what added steps should trustee institutions (e.g., the U.S. government or research entities) take to strengthen pathogen of pandemic potential biosafety and biosecurity protections and public confidence in them?

Dr. Schoch-Spana also discussed how to operationalize standard elements of deliberation design. She noted that there is no single methodology for public deliberation, but she did describe a number of minimum standards for public deliberation, in particular for inclusivity and diversity, the provision of information, and value-based reasoning. She also discussed methods for measuring the success of the process. Dr. Schoch-Spana concluded that meetings to date have engaged individuals from the life sciences, security, public health, biosafety, risk analysis, and the drug and vaccine industries, but the general public had been largely absent. She identified an unresolved issue of whether more sophisticated, resource-intensive deliberative sessions could be held outside the present circle of vested parties. A number of possible activities for such a process were suggested (see Box 2-7).

In opening the floor for discussion, Baruch Fischhoff commented that the panelists had been encouraged to offer recommendations based on their own professional experience and research. He added that for those in the audience who were not familiar with the social, behavioral, and decision sciences, the panel should have provided some idea of the breadth and depth of the research that is available if one wanted to put the human aspect of this enterprise on a scientific foundation. It also illustrated the mix of methods used in this research: various theories; multiple methods of observation, including direct observation and laboratory and field experiments; traditional and statistical analysis; and various types of data.

Discussion

The resulting discussion further elucidated specific aspects of the presentations. The importance of additional data gathering on accidents and associated human factors research was a repeated theme. Susan Wolf, an NSABB member from the University of Minnesota speaking in her personal capacity, raised operational issues around data collection, data standards, and the development of data collection systems. Gavin Huntley-Fenner commented that the dearth of current data on accidents and human reliability in laboratories does not mean that what people want to know is unknowable. He and others also noted the value as well as the potential challenges in implementing confidential accident reporting. The need to ensure that comprehensive reporting systems for human errors are developed and implemented in a nonpunitive manner was stressed by Kavita Berger from Gryphon Scientific. In response, Dr. Huntley-Fenner noted the importance of even seemingly small things, such as finding language for reporting forms that did not use negative categories (“theft,” “loss”), and designing systems so that there was feedback or other incentives for reporting, such as providing information that could be used to improve safety. Ruthanne Huising said that the new regulatory framework developed in Canada had a nonpunitive reporting system that offered potential lessons about dealing with privacy issues and offering useful feedback.

Given what he saw as the difficulties of implementing a nonpunitive system in high-containment laboratories, Andrew Kilianski, a National Research Council Fellow from the Edgewood Chemical Biological Center Aberdeen Proving Ground, made the specific suggestion to conduct research focused on the possible relationships between human error by graduate students under minimal-containment standards and other indicators of their proficiency. Megan Palmer from Stanford University highlighted the importance of strategic interventions to allow sustained scholarship on the social and behavioral dimensions of research. Monica Schoch-Spana commented that best practices for biosafety and biosecurity have not been captured, synthesized, and disseminated by researchers.

Adam Finkel said he was concerned that there had not been a discussion of a confidential channel for reporting incidents, citing what he thought was becoming a less favorable climate for “whistle-blowers” in many settings. He also stressed the need to consider outside incentives to support a culture, including enforcement. He thought that traditional regulation was probably not appropriate but cited other models, such as third-party audits, that could be considered.

Issues around the enforcement of safety and security regimes were also addressed, with some participants noting that a subsection of accidents and incidents are a result of negligence and malfeasance, requiring some form of censure. These individuals highlighted the importance of access to necessary resources for enforcement.

Opportunities for strengthening safety by designing out the consequences of human error were also noted, for example, by Dr. Finkel. He cited useful precedents from health care settings and commented that he sensed such opportunities were not being widely studied or implemented in laboratory settings. In response, Dr. Huntley-Fenner noted a paradox that, as one designs out the other sources of error, human factors become an increasing portion of whatever error remains. That is not a reason to neglect those helpful improvements, but it is a reminder that human error will always be with us.

Kavita Berger noted past work on behavioral threat assessments and asked whether there were methods that could be applied earlier to detect individuals who posed potential biosecurity threats such as, for example, someone stealing an agent or animals, vandalism, violence, or deliberate misuse.

Participants discussed a multilayered approach as raised by Dr. Schoch-Spana, highlighting the need to ensure that such a system includes public engagement and transparency at all levels. Susan Wolf asked about the potential value of having a formal FACA committee for GOF studies of concern. Dr. Schoch-Spana commented that a FACA committee would satisfy one level of engagement and could be beneficial, but one should think of shared governance across all levels. She cited the systems in place at Duke University and St. Jude Children’s Research Hospital (see next chapter) as examples worth studying for approaches to providing a diversity of views and participants. She commented on the need for more efforts to collect and share best practices about ways to improve biosafety, biosecurity, and what she called “bio-credibility.” The potential additional burden imposed on scientists involved with GOF studies of concern from participating in further public deliberation exercises was raised by Margaret Kosal from the Georgia Institute of Technology. She asked if this was another unfunded mandate to educate a sometimes ignorant public that might be hostile to science for reasons that have not come up in these discussions.

David Drew from the Woodrow Wilson Center introduced himself as a “concerned citizen” who had not been familiar with the GOF controversy before the symposium. He raised the issue of whether the type of public engagement by scientists represented at the symposium was actually a form of “upstream engagement,” which can be interpreted as designed to defuse the public’s concerns without really addressing them. Dr. Schoch-Spana responded that to be effective the public deliberation process needs to be a shared dialogue that leads to mutually agreeable common ground, not just pure persuasion. Silja Vöneky from the University of Freiburg said she appreciated the stress on the value of ensuring that culture change comes from within scientific disciplines. But she also noted other strong incentives for scientists, such as publication, and suggested broader consideration of opportunities to nudge scientists to strengthen their focus on the safety and security implications of their work. Dr. Huising commented that the issue of culture change is sensitive when one is dealing with elites. In this case one was dealing with highly educated elites who are used to substantial autonomy and are not necessarily very open to ideas that are coming from elsewhere. She believed strongly that the ideas about the importance of safety and security in science are going to have to come from some of the best researchers in each discipline. “We need the leaders in these disciplines to model the importance of these values and normative expectations in research. We need the journals to expect it and conferences to highlight it. It will have to be pushed from within to be effective.”